Early science acceleration experiments with GPT-5

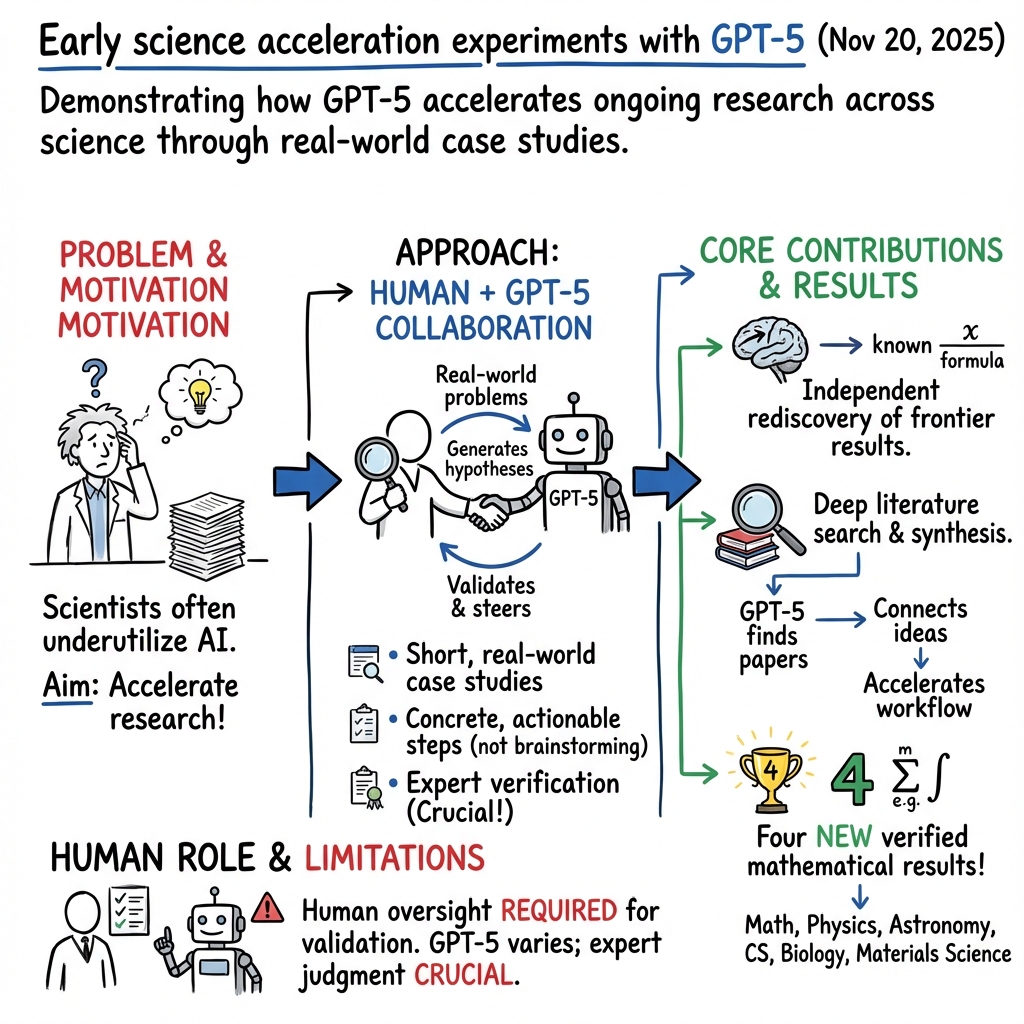

Abstract: AI models like GPT-5 are an increasingly valuable tool for scientists, but many remain unaware of the capabilities of frontier AI. We present a collection of short case studies in which GPT-5 produced new, concrete steps in ongoing research across mathematics, physics, astronomy, computer science, biology, and materials science. In these examples, the authors highlight how AI accelerated their work, and where it fell short; where expert time was saved, and where human input was still key. We document the interactions of the human authors with GPT-5, as guiding examples of fruitful collaboration with AI. Of note, this paper includes four new results in mathematics (carefully verified by the human authors), underscoring how GPT-5 can help human mathematicians settle previously unsolved problems. These contributions are modest in scope but profound in implication, given the rate at which frontier AI is progressing.

Explain it Like I'm 14

Overview

This paper is about testing how a very advanced AI model, called GPT-5, can help real scientists do their work faster and better. The authors collected short, true stories from different fields—like math, physics, astronomy, computer science, biology, and materials science—showing where GPT-5 actually helped move research forward. They also point out where the AI was helpful, where it wasn’t, and how experts worked with it.

What questions did the paper try to answer?

The paper explores a few simple, practical questions:

- Can GPT-5 help scientists discover new ideas or steps in their ongoing research?

- In what kinds of tasks is GPT-5 most useful (and least useful)?

- How can scientists and AI work together effectively—who does what best?

- Can AI find important information in the scientific literature faster than humans?

- Can AI independently “rediscover” tough results that experts already know, showing it really understands the topic?

- Most strikingly: Can AI help make brand-new, correct scientific results—especially in math—when humans check the details?

How did they do it?

The authors ran “case studies,” which are like short, focused experiments with real research problems. Here’s what that looked like:

- Independent rediscovery: They asked GPT-5 to solve problems whose answers experts already knew, without telling the AI those answers. This is like giving someone a puzzle that has a known solution to see if they can get there on their own.

- Deep literature search: They had GPT-5 dig through scientific papers to find useful facts, methods, and references. Think of it as asking a super-fast librarian to find the most relevant “needles in a haystack.”

- Working in tandem: Scientists and GPT-5 worked together, going back and forth. The AI suggested ideas, wrote code, drafted arguments, or proposed experiments, while humans guided it, checked its work, and decided what to use.

- New results with AI: In some cases, GPT-5 proposed steps that led to original, correct results, especially in mathematics. Importantly, human experts carefully verified these results to make sure they were truly right.

Throughout, the authors saved the actual conversations with GPT-5 to show how the collaboration unfolded. Whenever the AI made suggestions (like a proof idea, a data analysis plan, or a way to design an experiment), the humans tested and checked them.

What did they find, and why does it matter?

Here are the main takeaways:

- GPT-5 can speed up real research: It helped experts move faster by suggesting new angles, summarizing or linking papers, drafting technical text, writing starter code, and proposing proof ideas or experiment setups.

- It’s not perfect and needs supervision: The AI sometimes made mistakes, missed context, or suggested ideas that didn’t fully work. Human experts had to steer it, ask the right questions, and verify everything important.

- It can “rediscover” advanced results: GPT-5 could independently re-derive known scientific ideas at the cutting edge, which suggests it can reason through complex problems rather than only repeating facts.

- It can do deep literature work: GPT-5 was useful for finding relevant papers, connecting ideas across fields, and surfacing methods that might otherwise be overlooked.

- New math results: The paper reports four new mathematical results that the AI helped produce. Human mathematicians checked these carefully and confirmed they were correct. While these results are “modest” (not giant breakthroughs), they are real and new—an important milestone.

- Human-AI teamwork works best: The strongest outcomes came from back-and-forth collaboration. Humans provided goals, judgment, and careful checking; the AI provided speed, breadth, and creative suggestions.

Why this matters: If AI can already help produce new, correct results in some areas—and do reliable support work in others—then it can accelerate how quickly science moves forward.

What could this mean for the future?

- Faster discovery: Scientists might spend less time on routine tasks (searching, summarizing, drafting) and more time thinking deeply and making decisions.

- Broader access: Researchers at smaller institutions (or students) could get “expert-level” assistance, helping level the playing field.

- New ways of working: Science may become more iterative and interactive, with human-AI pairs exploring more ideas more quickly.

- Guardrails are essential: Because AI can make confident mistakes, human checking, transparency, and careful evaluation will remain crucial.

- Big potential as models improve: These early successes suggest that future, more capable AI could make even more significant contributions—if used responsibly.

In short, this paper shows that GPT-5 can already act like a powerful research assistant and, in some cases, a creative collaborator. It doesn’t replace scientists, but it can help them think wider, work faster, and sometimes reach new results that matter.

Knowledge Gaps

Below is a concise list of knowledge gaps, limitations, and open questions the paper leaves unresolved. Each point is framed to be actionable for future researchers.

- Methodological transparency: Precise GPT-5 configuration, version, temperature/decoding settings, tool integrations (e.g., retrieval, code execution), and session management are not specified, hindering replication.

- Prompting provenance: Full prompt transcripts, iteration histories, and selection criteria for the shared interactions are not released, limiting reproducibility and auditability.

- Baselines and controls: There are no controlled comparisons against human-only workflows, prior GPT versions, or other models; randomized controlled or blinded studies are absent.

- Quantitative impact: Time saved, success rates, error rates, and quality improvements across domains are not measured with defined metrics or statistical analysis.

- Selection bias: Case studies appear curated and may over-represent positive outcomes; the frequency and nature of failures or neutral results are not systematically reported.

- External validation: Mathematical results are “verified by the human authors” but lack independent verification (e.g., peer review, formal proof checking in Lean/Isabelle) and public reproducibility artifacts.

- Novelty vs. memorization: Claims of “independent rediscovery” are not accompanied by contamination audits or provenance analyses to rule out training-data leakage or memorized content.

- Literature search efficacy: Recall/precision, coverage across subfields, and error (hallucination) rates of GPT-5’s literature search are not quantified or benchmarked.

- Failure-mode taxonomy: While shortcomings are mentioned qualitatively, there is no systematic categorization, frequency analysis, or severity assessment of observed failure modes.

- Generalizability: It is unclear whether observed benefits transfer to diverse institutions, resource settings, languages, and less well-documented scientific areas.

- Workflow standardization: Reusable protocols for human–AI co-reasoning (prompt templates, verification checklists, escalation paths) are not distilled into actionable guidelines.

- Ethical and dual-use considerations: Biology and materials case studies lack structured risk assessments (e.g., dual-use, biosafety), red-teaming results, or safety filter audits.

- Authorship and credit: Policies for attributing AI contributions, responsibility, and accountability in scholarly outputs are not articulated or evaluated.

- Cost and accessibility: Compute/API costs, latency, tool dependencies, and affordability constraints are not documented, impeding assessment of adoption feasibility.

- Robustness to model drift: The stability of results under future GPT-5 updates or across model variants is not examined; version pinning and re-run protocols are absent.

- Longitudinal outcomes: Whether AI-suggested directions yield durable scientific outputs (publications, citations, follow-on results) over time is untracked.

- Cross-model comparisons: The paper focuses on GPT-5 without evaluating whether comparable performance is achievable with other (including open-source) models or multimodal systems.

- Statistical rigor: No pre-registration, sample-size justification, or hypothesis testing is provided; effect sizes and confidence intervals are absent.

- Data/code availability: Replication packages (prompts, logs, scripts, datasets, analysis notebooks) are not provided via public repositories.

- Scope and criteria for “new results”: The criteria used to label contributions as “new” (especially in mathematics) are not formalized, and novelty statements are not rigorously substantiated.

- Interoperability with tools: The extent to which external tools (symbolic math, CAS, simulators, reference managers) were used and their impact on outcomes is unclear.

- Human factors: Cognitive load, trust calibration, oversight burdens, and required expertise for effective AI collaboration are not assessed.

- Security and privacy: Protocols for handling sensitive/unpublished data in interactions with GPT-5, and risks of inadvertent disclosure, are not addressed.

- Benchmarking for AI-assisted discovery: No standardized benchmark tasks or datasets are proposed to evaluate and compare AI’s contribution to scientific discovery across domains.
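
The "Literature search efficacy" gap above asks for recall and precision figures. As a minimal sketch of how such figures could be computed for a single query, assuming a hand-labelled set of relevant papers is available (the function name `precision_recall` and the paper identifiers are hypothetical and do not come from the paper):

```python
def precision_recall(retrieved, relevant):
    """Return (precision, recall, f1) for one literature query.

    retrieved: identifiers the AI search returned (hypothetical data).
    relevant:  identifiers a human expert marked as relevant (assumed gold set).
    """
    retrieved_set = set(retrieved)
    relevant_set = set(relevant)
    hits = retrieved_set & relevant_set

    # Precision: share of returned papers that are actually relevant.
    precision = len(hits) / len(retrieved_set) if retrieved_set else 0.0
    # Recall: share of relevant papers that were actually returned.
    recall = len(hits) / len(relevant_set) if relevant_set else 0.0
    # F1: harmonic mean of the two, defined as 0 when there are no hits.
    f1 = 2 * precision * recall / (precision + recall) if hits else 0.0
    return precision, recall, f1


# Illustrative identifiers only; not taken from the paper.
retrieved = ["paper-A", "paper-B", "paper-C", "paper-D"]
relevant = ["paper-A", "paper-C", "paper-E"]

p, r, f1 = precision_recall(retrieved, relevant)
print(f"precision={p:.2f} recall={r:.2f} f1={f1:.2f}")
# prints: precision=0.50 recall=0.67 f1=0.57
```

Averaging these numbers over many queries and subfields would give the kind of benchmark the bullet describes. The gold set itself still has to come from human experts.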

Practical Applications

Practical Applications Derived from “Early science acceleration experiments with GPT-5”

The paper demonstrates that GPT-5 can independently rediscover frontier results, conduct deep literature searches, co-work effectively with experts, and contribute to new mathematical results. These capacities translate into concrete applications across research-centric sectors and beyond.

Immediate Applications

- Strong literature-review copilot (academia, healthcare/biomed, materials, policy). What it enables: deep, cross-referenced synthesis of prior work; novelty checks; rapid identification of gaps and conflicting findings. Potential tools/workflows: RAG-enhanced search over arXiv/PubMed/Scopus; integration into Zotero/EndNote; “related work” drafting and automated citation graph mapping. Dependencies/assumptions: up-to-date access to paywalled/open corpora; citation accuracy checks; provenance tracking and hallucination guards.

- Novelty and prior-art checker (academia, legal/IP, industry R&D). What it enables: “independent rediscovery” verification—fast detection of whether a claim/result has a precedent in the literature or patents. Potential tools/workflows: semantic similarity + claim extraction + argument matching; prior-art search for patent drafting and due diligence. Dependencies/assumptions: comprehensive corpora (including patents), high-precision claim matching, legal review.

- Research hypothesis generator and prioritizer (biology, materials science, physics). What it enables: propose testable hypotheses from literature gaps; rank by plausibility, novelty, and cost; suggest minimal experiments to discriminate alternatives. Potential tools/workflows: Bayesian scorecards, influence diagrams, power analysis; Jupyter/R pipelines for hypothesis screening. Dependencies/assumptions: domain data availability; human domain review and ethical oversight.

- Experimental design and protocol drafting (wet labs and materials labs). What it enables: step-by-step protocols with parameter ranges, controls, and error modes; safety and compliance checklists. Potential tools/workflows: ELN/LIMS integrations (e.g., Benchling), printable SOPs, checklists for IBC/IRB compliance. Dependencies/assumptions: institution-specific SOPs; biosafety/regulatory constraints; tacit lab know‑how still needed.

- Math and proof assistance with verification hooks (mathematics, CS theory, formal methods). What it enables: propose lemmas, proof sketches, counterexamples; translate to formal proof assistants for checking. Potential tools/workflows: Lean/Isabelle/Coq pipelines; auto-conjecturing + SMT counterexample search; proof diffing and minimal counterexample generation. Dependencies/assumptions: formalization libraries coverage; compute for proof search; expert validation.

- Code and simulation copilot (software, computational science, engineering). What it enables: scaffold simulations, unit tests, and analysis notebooks; refactor legacy scripts; instrument code for reproducibility. Potential tools/workflows: IDE plugins (VS Code, PyCharm), containerized environments, CI checks for numerical stability/statistical validity. Dependencies/assumptions: secure data access; licensing of dependencies; version control hygiene.

- Data analysis and statistical guardrails (all empirical sciences, finance). What it enables: EDA, model selection, diagnostics, power/sampling calculations; warnings for p-hacking and multiple-hypothesis pitfalls. Potential tools/workflows: reproducible pipelines (Snakemake/MLflow), preregistration templates, auto-generated robustness checks. Dependencies/assumptions: clean metadata; governance for access to sensitive data; statistician oversight.

- Cross-disciplinary translation and tutoring (education, interdisciplinary teams). What it enables: convert arguments across subfields (e.g., physics ↔ math formalism), generate didactic explanations and concept bridges. Potential tools/workflows: “explain-mode” within PDFs; jargon translators; curriculum-aligned micro-lessons. Dependencies/assumptions: accurate mapping across vocabularies; continual evaluation for subtle conceptual errors.

- Scientific writing and editing assistant (academia, industry R&D). What it enables: draft sections (methods/related work), produce figure captions, check consistency of notation/units, suggest response-to-reviewers text. Potential tools/workflows: journal-style templates, citation managers, automated checklist compliance (e.g., CONSORT, PRISMA). Dependencies/assumptions: authorship/ethics policies; plagiarism detection and disclosure norms.

- Journal/editorial triage support (publishers, conferences). What it enables: initial screening for scope fit, superficial errors, potential duplications; flag sections needing expert attention. Potential tools/workflows: editorial dashboards; similarity and claim-overlap heatmaps; math/proof sanity checks before full review. Dependencies/assumptions: clear editorial policies for AI assistance; human-in-the-loop decisions.

- Grant and RFP alignment assistant (academia, nonprofits, government programs). What it enables: match project aims to solicitations; draft aims, budgets, milestones; risk and impact framing. Potential tools/workflows: opportunity feeds, compliance checklists, budget sanity check templates. Dependencies/assumptions: funder-specific rules; institutional approvals.

- Team workflow playbooks for “working in tandem with AI” (research ops, education). What it enables: training and SOPs for effective prompting, critique loops, verification steps, and role allocation between humans and AI. Potential tools/workflows: short courses, internal wikis, prompt libraries tied to lab/department needs. Dependencies/assumptions: organizational buy-in; change management.
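
The "statistical guardrails" item above mentions warnings for multiple-hypothesis pitfalls. One common guardrail of that kind is the Benjamini-Hochberg procedure, which controls the false discovery rate. The sketch below is an illustration chosen here, not a method described in the paper, and the p-values are made up:

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return a list of booleans, True where the hypothesis is rejected.

    Implements the Benjamini-Hochberg step-up rule: sort the p-values,
    find the largest rank k with p_(k) <= (k / m) * alpha, and reject
    the k hypotheses with the smallest p-values.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])

    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            max_rank = rank

    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_rank:
            rejected[idx] = True
    return rejected


# Made-up p-values from eight hypothetical tests.
p_values = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205]

naive = sum(p < 0.05 for p in p_values)
corrected = sum(benjamini_hochberg(p_values))
print(f"naive p<0.05: {naive} findings; after correction: {corrected}")
# prints: naive p<0.05: 5 findings; after correction: 2
```

A copilot could run a check like this automatically and flag analyses where the naive and corrected counts differ. Deciding which correction is appropriate would remain a job for the statistician named in the dependencies.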

Long-Term Applications

- Closed-loop, AI-driven “self-driving labs” (materials, chemistry, synthetic biology). What it enables: GPT-5 plans experiments, lab robots execute, sensors feed results back, and the model optimizes hypotheses iteratively. Potential tools/workflows: robotics integration, Bayesian optimization, autonomous safety interlocks, electronic lab notebooks with real-time control. Dependencies/assumptions: robust hardware integration; stringent safety/regulatory approvals; high-fidelity simulators.

- End-to-end theorem discovery with formal certification (mathematics, software verification). What it enables: autonomously discover and formally certify new results; translate conceptual proofs into machine-checked artifacts. Potential tools/workflows: tight coupling with proof assistants, large formal libraries, search over proof spaces with neural guidance. Dependencies/assumptions: expanded formal corpora; compute; community standards for formal publication.

- AI-augmented peer review and reproducibility audits (publishers, funders). What it enables: automated reproduction of analyses; environment recreation; proof and code linting; anomaly detection in reported stats. Potential tools/workflows: container sandboxes, dataset lineage tracking, “repro scorecards” attached to manuscripts. Dependencies/assumptions: open artifacts; legal data-sharing frameworks; reviewer training.

- Dynamic, machine-actionable knowledge graphs of science (publishers, academia, pharma). What it enables: continuously updated claim graphs linking methods, datasets, and results; automated contradiction resolution and confidence scoring. Potential tools/workflows: standardized claim ontologies, provenance and uncertainty tracking, APIs for downstream tools (e.g., trial design, materials screening). Dependencies/assumptions: publisher cooperation; interoperability standards.

- Cross-domain synthesis engines for discovery (energy, climate, astro, biomed). What it enables: generate novel connections across fields (e.g., combinatorial designs ↔ experiment schedules; control theory ↔ cellular circuits), proposing high-leverage research programs. Potential tools/workflows: multi-corpus multimodal RAG (text, code, figures), model ensembles for disciplinary perspective-taking. Dependencies/assumptions: access to heterogeneous data; methods for bias control.

- AI as “principal investigator” under governance (R&D orgs, policy). What it enables: AI shapes research agendas, allocates resources, and runs experiments under human oversight; continuous portfolio optimization. Potential tools/workflows: governance dashboards, audit trails, risk and ethics monitors. Dependencies/assumptions: clear accountability and liability frameworks; cultural acceptance; safety standards.

- Personalized scientific education at scale (education, workforce upskilling). What it enables: adaptive research apprenticeships, automated feedback on research artifacts (proofs, code, methods), and scaffolded “replicate a paper” curricula. Potential tools/workflows: LMS integration, sandboxed compute, skill maps and mastery tracking. Dependencies/assumptions: privacy protections; validated assessment rubrics.

- National/sectoral AI research infrastructure (policy, public–private partnerships). What it enables: shared compute and secure data enclaves for AI-accelerated science; standardized evaluation suites for “time-to-insight” and error rates. Potential tools/workflows: trusted research environments; model cards and evaluation leaderboards tailored to science tasks. Dependencies/assumptions: sustained funding; interagency coordination; cybersecurity.

- Compliance-aware clinical and regulatory synthesis (healthcare, policy). What it enables: end-to-end evidence synthesis for guidelines and regulatory submissions; scenario modeling for policy impacts. Potential tools/workflows: audit-ready documentation, bias/harms analysis, transparent uncertainty quantification. Dependencies/assumptions: regulated data access; model transparency; human oversight by clinicians/regulators.

- Corporate R&D acceleration pipelines (pharma, semiconductors, advanced manufacturing). What it enables: portfolio triage, automated prior-art sweeps, accelerated experiment cycles, and IP drafting. Potential tools/workflows: integration with PLM/LIMS, IP management systems, design of experiments optimizers. Dependencies/assumptions: IP and confidentiality controls; validation and QA processes.

- Scientific risk forecasting and alignment tooling (meta-science, policy). What it enables: early warning for research dead-ends or high-payoff directions; evaluate systemic risks of accelerated discovery. Potential tools/workflows: meta-analytic dashboards, causal modeling of research trajectories, safety case generation. Dependencies/assumptions: high-quality metadata on research outcomes; agreement on risk metrics.
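
The "self-driving labs" item above describes a loop in which a planner proposes an experiment, equipment runs it, and the measurement feeds back into the next proposal. The toy sketch below shows only the shape of that loop. It is a simplification under stated assumptions: a step-halving hill climb stands in for the Bayesian optimization the item mentions, and `run_experiment` is a simulated instrument invented for illustration, not a real lab API.

```python
def run_experiment(temperature):
    """Simulated instrument (hypothetical): the yield peaks at 3.0."""
    return -(temperature - 3.0) ** 2


def closed_loop(measure, start, step=1.0, min_step=1e-3, max_runs=100):
    """Plan, execute, and update until the step size is exhausted.

    measure:  callable that runs one experiment and returns a score.
    start:    initial setting to try.
    max_runs: soft budget, checked before each new round of proposals.
    """
    best_x = start
    best_y = measure(best_x)
    history = [(best_x, best_y)]

    while step >= min_step and len(history) < max_runs:
        improved = False
        # Plan: propose one setting on each side of the current best.
        for candidate in (best_x + step, best_x - step):
            y = measure(candidate)          # Execute the experiment.
            history.append((candidate, y))  # Feed the result back.
            if y > best_y:
                best_x, best_y = candidate, y
                improved = True
                break
        if not improved:
            step /= 2  # Nothing better nearby, so search more finely.
    return best_x, best_y, history


best_x, best_y, history = closed_loop(run_experiment, start=0.0)
print(f"best setting ~ {best_x:.3f} after {len(history)} runs")
```

In a real lab, the `measure` callable would wrap robot and sensor control, and every call would sit behind the safety interlocks and human approvals listed in the dependencies.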

Assumptions across applications: availability of GPT-5-level capabilities; reliable retrieval over comprehensive, licensed corpora; rigorous human oversight and domain verification; integration with existing tools and data governance; adherence to ethical, safety, and authorship norms.

Glossary

- Active character: In TeX, a character whose category code is “active” and thus behaves like a macro. "\catcode`*=\active"

- BibLaTeX: A modern LaTeX system for managing bibliographies and citations. "\addbibresource{bib/references.bib}"

- Catcode: TeX’s “category code” that defines how each character is interpreted (e.g., letter, macro, active). "{\catcode`*=\active\gdef*{\mathclose{}\,\mathopen{}"

- Conjecture: A mathematical statement believed to be true but not yet proven. "\newtheorem{conjecture}{Conjecture}"

- Corollary: A result that follows immediately from a theorem. "\newtheorem{corollary}{Corollary}"

- DeclareNameAlias: A BibLaTeX command to alias one name formatting scheme to another. "\DeclareNameAlias{sortname}{given-family}"

- ExecuteBibliographyOptions: A BibLaTeX command to set global bibliography options. "\ExecuteBibliographyOptions{uniquename=false, uniquelist=false}"

- Frontier AI: Cutting-edge, state-of-the-art artificial intelligence systems. "AI models like GPT-5 are an increasingly valuable tool for scientists, but many remain unaware of the capabilities of frontier AI."

- gdef: A TeX command for globally defining a macro. "{\catcode`*=\active\gdef*{\mathclose{}\,\mathopen{}"

- Lemma: An auxiliary mathematical result used to prove a theorem. "\newtheorem{lemma}{Lemma}"

- Mathcode: TeX’s code that controls a character’s behavior in math mode. "\mathcode`*="8000"

- mdframed: A LaTeX environment for creating framed boxes. "\begin{mdframed}[style=ChatBoxFrame]"

- numberwithin: An amsmath command to number theorem-like environments within another counter (e.g., section). "\numberwithin{theorem}{section}"

- Proposition: A formal mathematical statement, typically less central than a theorem. "\newtheorem{proposition}{Proposition}"

- Remark: An explanatory or contextual note accompanying formal results. "\newtheorem{remark}{Remark}"

- renewcommand: A LaTeX command to redefine an existing command. "\renewcommand{\left}{\mathopen{}\mathclose\bgroup\originalleft}"

- Scientific frontier: The leading edge of research where new discoveries are made. "\chapter{\,Independent rediscovery of known results at the scientific frontier}"

- Subfile: A LaTeX command (from the subfiles package) to include a subdocument. "\subfile{content/01-Bubeck}"

- tcolorbox: A LaTeX environment for creating colored and styled boxes. "\begin{tcolorbox}[breakable,enhanced,flush right,width=0.8\textwidth]"

- Theorem: A major mathematical statement that has been rigorously proven. "\newtheorem{theorem}{Theorem}"
