- The paper presents EvoRec, an efficient framework that uses a Locate-Forget-Update paradigm to adapt LLM recommenders to dynamic user preferences.

- The method locates the model layers most sensitive to preference changes, filters outdated interactions, and updates only 30% of the typical LoRA adapter parameters to maintain recommendation accuracy.

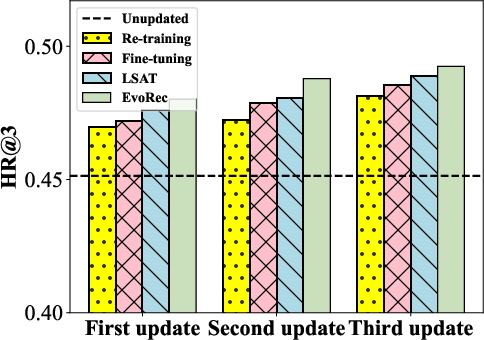

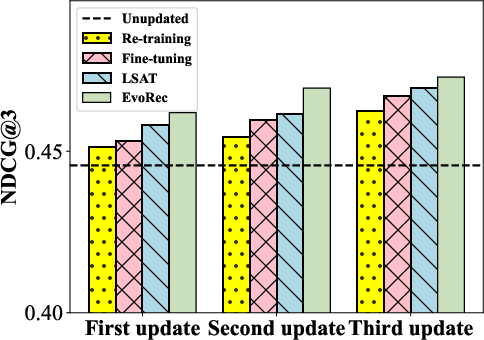

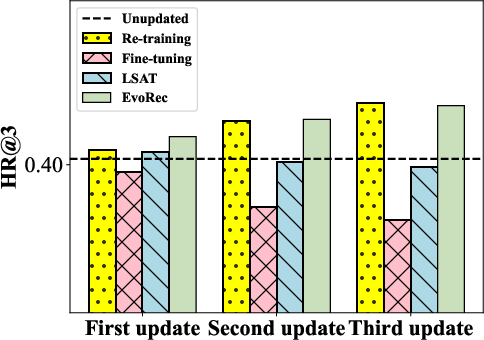

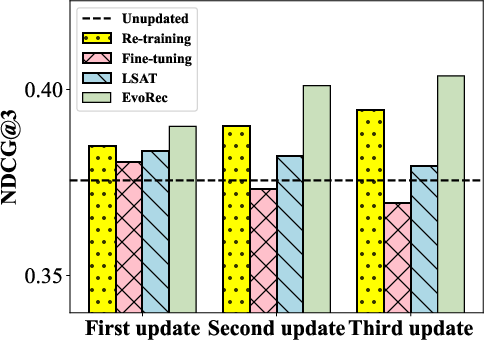

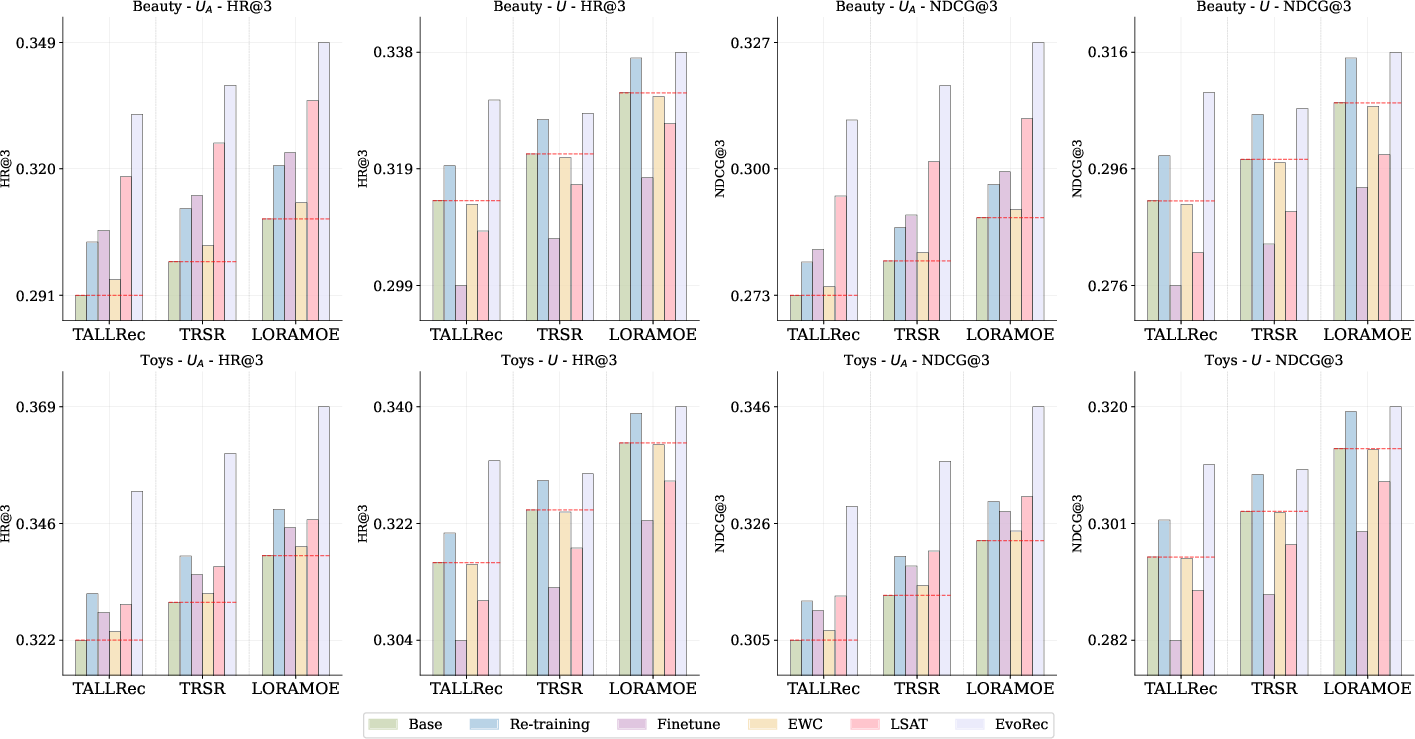

- Empirical results on Amazon Beauty and Toys datasets demonstrate improved HR@3 and NDCG@3 scores while mitigating preference forgetting.

An Efficient LLM-based Evolutional Recommendation with Locate-Forget-Update Paradigm

Introduction

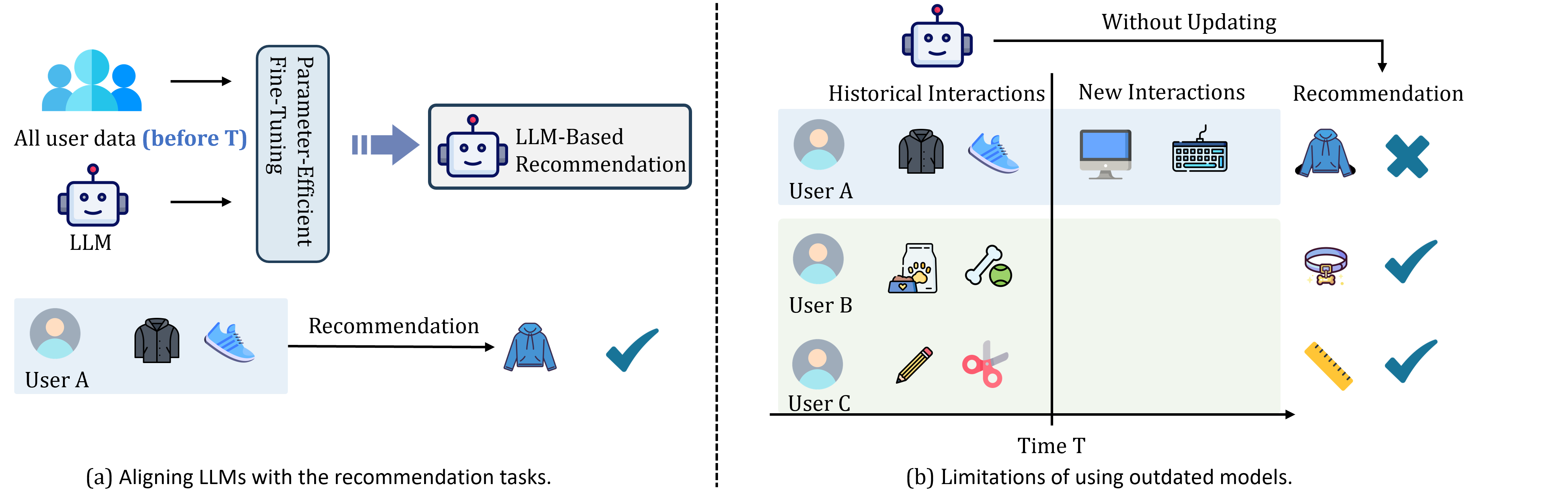

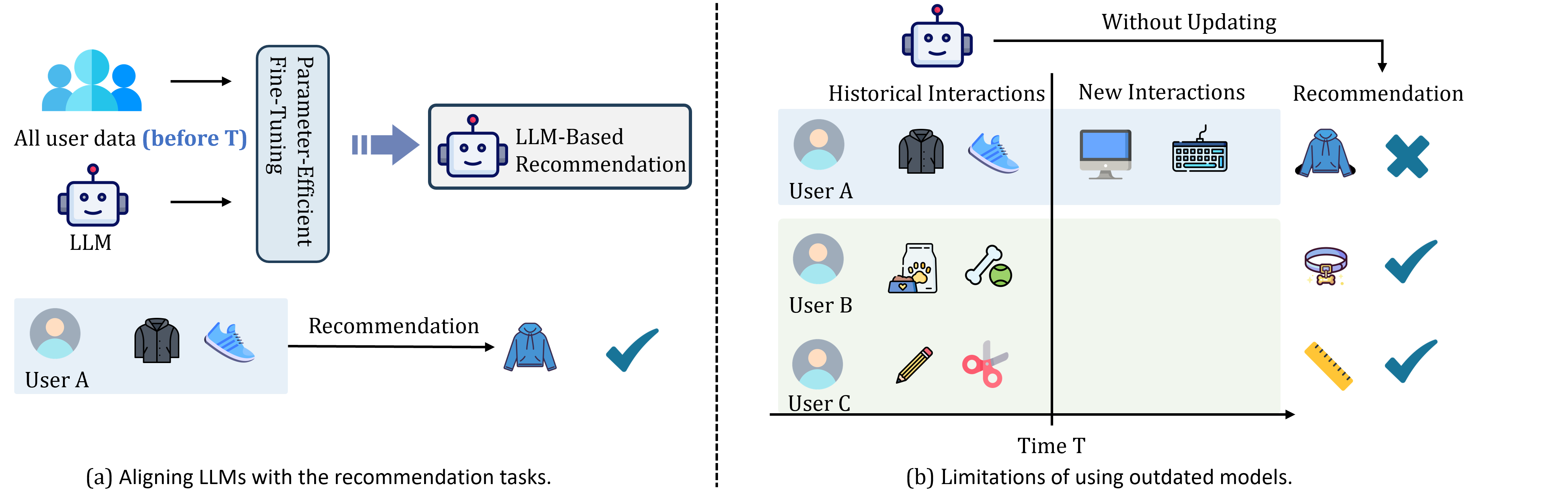

This paper introduces EvoRec, a framework for improving the adaptability of LLM-based recommender systems (LLMRec) in dynamic environments. In modern e-commerce, LLMs have shown significant promise because of their strong understanding of user-item interaction sequences. However, a critical challenge persists: efficiently adjusting these models to align with evolving user preferences, especially when those preferences drift substantially over time (Figure 1).

Figure 1: Illustration of the preference drift challenge in personalized recommendation. As user preferences drift (e.g., User A's preference changes from clothing to electronics), existing LLM-based recommenders struggle to adapt and provide accurate personalization.

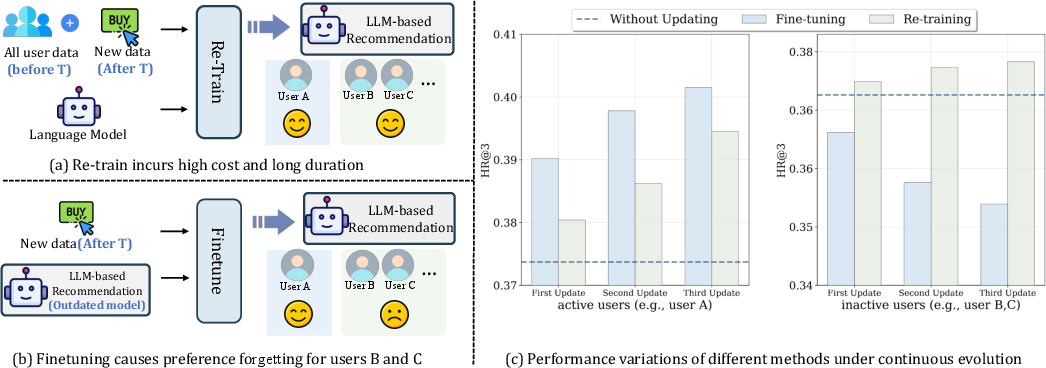

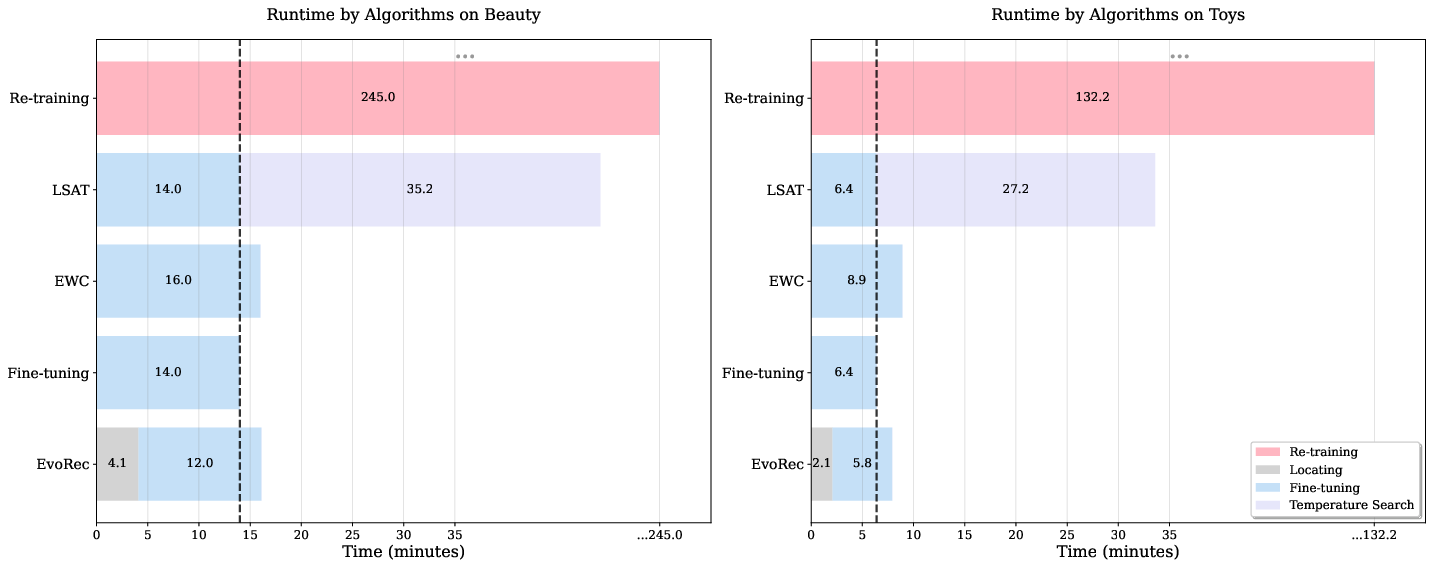

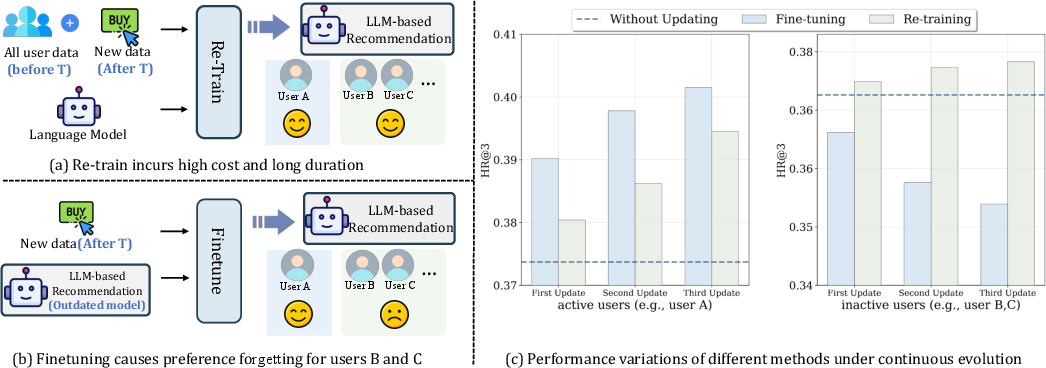

Conventional retraining is prohibitively expensive, while fine-tuning can cause substantial preference forgetting, particularly for users whose interaction histories remain static (Figure 2).

Figure 2: Limitations of two common evolution paradigms. Retraining is prohibitively expensive, while fine-tuning adapts to evolving preferences but degrades performance for stable users due to preference forgetting.

EvoRec Framework

EvoRec addresses these issues with the Locate-Forget-Update paradigm, which targets the inefficiencies of typical LLM adaptation techniques in three steps.

Locate Sensitive Layers

Central to EvoRec is its ability to pinpoint specific layers within the model that are most sensitive to changes in user preferences. By tracking the divergence in hidden states between the original and updated interaction sequences, EvoRec isolates these critical parameters, supporting a focused and cost-effective update process.
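The localization idea can be illustrated with a minimal sketch. It assumes one hidden-state vector per layer for the original and the updated interaction sequence, and it uses cosine distance as the divergence measure. Both choices are assumptions made for illustration, since the summary does not state the exact measure EvoRec uses. The names `cosine_distance`, `locate_sensitive_layers`, and `top_k` are hypothetical.

```python
import math

def cosine_distance(u, v):
    """Return 1 - cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        # Illustrative choice: treat a zero vector as showing no divergence.
        return 0.0
    return 1.0 - dot / (norm_u * norm_v)

def locate_sensitive_layers(old_states, new_states, top_k):
    """Score each layer by hidden-state divergence and return the top_k indices.

    old_states[i] and new_states[i] are the layer-i hidden vectors produced
    for the original and the updated interaction sequence (assumed inputs).
    """
    scores = [cosine_distance(o, n) for o, n in zip(old_states, new_states)]
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:top_k]), scores

# Toy hidden states for a 4-layer model (made-up numbers, not from the paper).
old_states = [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0], [1.0, 0.0]]
new_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [1.0, 0.1]]

layers, scores = locate_sensitive_layers(old_states, new_states, top_k=2)
print(layers)  # [1, 2]: the two layers whose hidden states moved the most
```

In a real model the hidden states would come from forward passes over many users, and the per-layer scores would be aggregated before ranking.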

Forget Outdated Interactions

Before updating the model, EvoRec employs a filtering mechanism to identify and discard outdated user interactions. This step ensures that the model remains attuned to the current user preferences, thereby enhancing the quality of recommendations.
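The summary does not describe how the filter decides that an interaction is outdated, so the following is only a hypothetical heuristic, not EvoRec's mechanism. It keeps the most recent interactions and drops older ones whose category no longer appears in that recent window. The function `forget_outdated`, the `recent_window` parameter, and the category labels are all assumptions introduced for illustration.

```python
def forget_outdated(history, recent_window):
    """Keep recent interactions plus older ones that match current interests.

    history: list of (item_id, category) tuples ordered oldest to newest.
    recent_window: number of most recent interactions treated as current.
    This category-overlap rule is an illustrative stand-in for a real filter.
    """
    if recent_window < 1:
        raise ValueError("recent_window must be at least 1")
    recent = history[-recent_window:]
    older = history[:-recent_window]
    # Categories the user is still engaging with.
    active_categories = {category for _, category in recent}
    kept_older = [(item, cat) for item, cat in older if cat in active_categories]
    return kept_older + recent

# Toy history mirroring a drift from clothing to electronics.
history = [
    ("shirt", "clothing"),
    ("jeans", "clothing"),
    ("usb_cable", "electronics"),
    ("phone", "electronics"),
    ("earbuds", "electronics"),
]

filtered = forget_outdated(history, recent_window=2)
print([item for item, _ in filtered])  # ['usb_cable', 'phone', 'earbuds']
```

The filtered sequence, rather than the full history, would then serve as the training signal for the update step.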

Update Critical Parameters

The final step involves updating only those parameters deemed crucial by the localization process, thereby implementing a refined and efficient adaptation of the LLMRec model. This selective updating process accounts for only 30% of the typical LoRA adapter parameters, underlining its resource efficiency.
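A toy sketch of selective updating, under stated assumptions: parameters are grouped per layer, a plain gradient step stands in for the real optimizer, and the set of trainable layers is given. The layer count, the values, and the helper names `selective_update` and `trainable_fraction` are hypothetical. The 3-of-10 split is chosen only to echo the 30% figure and is not the paper's configuration.

```python
def selective_update(params, grads, trainable_layers, lr):
    """Apply one gradient step to the selected layers; all others stay frozen."""
    updated = {}
    for layer, weights in params.items():
        if layer in trainable_layers:
            updated[layer] = [w - lr * g for w, g in zip(weights, grads[layer])]
        else:
            updated[layer] = list(weights)  # frozen: copied unchanged
    return updated

def trainable_fraction(params, trainable_layers):
    """Share of all parameters that belong to the selected layers."""
    total = sum(len(w) for w in params.values())
    selected = sum(len(w) for layer, w in params.items() if layer in trainable_layers)
    return selected / total

# Ten adapter layers with four parameters each (made-up sizes and values).
params = {i: [1.0] * 4 for i in range(10)}
grads = {i: [0.5] * 4 for i in range(10)}
trainable_layers = {2, 5, 7}  # e.g. the output of a localization step

new_params = selective_update(params, grads, trainable_layers, lr=0.1)
fraction = trainable_fraction(params, trainable_layers)
print(fraction)  # 0.3
```

Freezing the remaining layers is what limits both the compute cost and the risk of overwriting preferences that have not changed.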

Experimental Evaluation

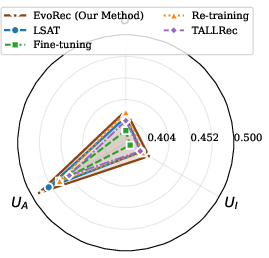

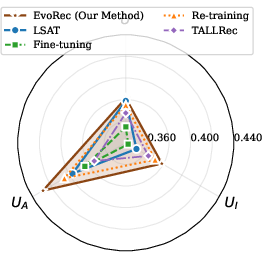

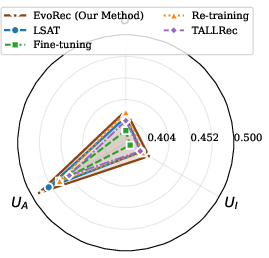

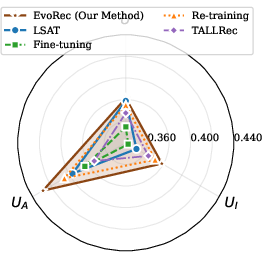

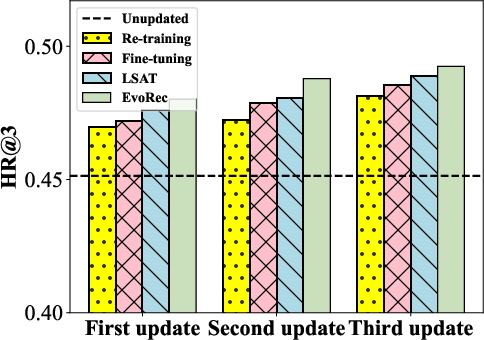

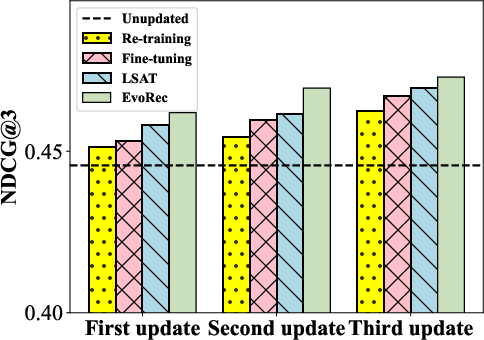

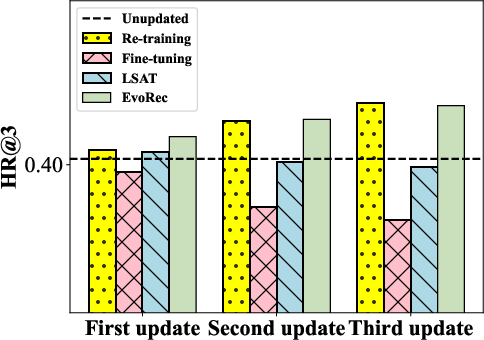

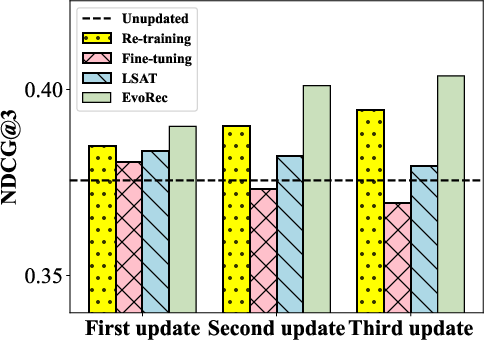

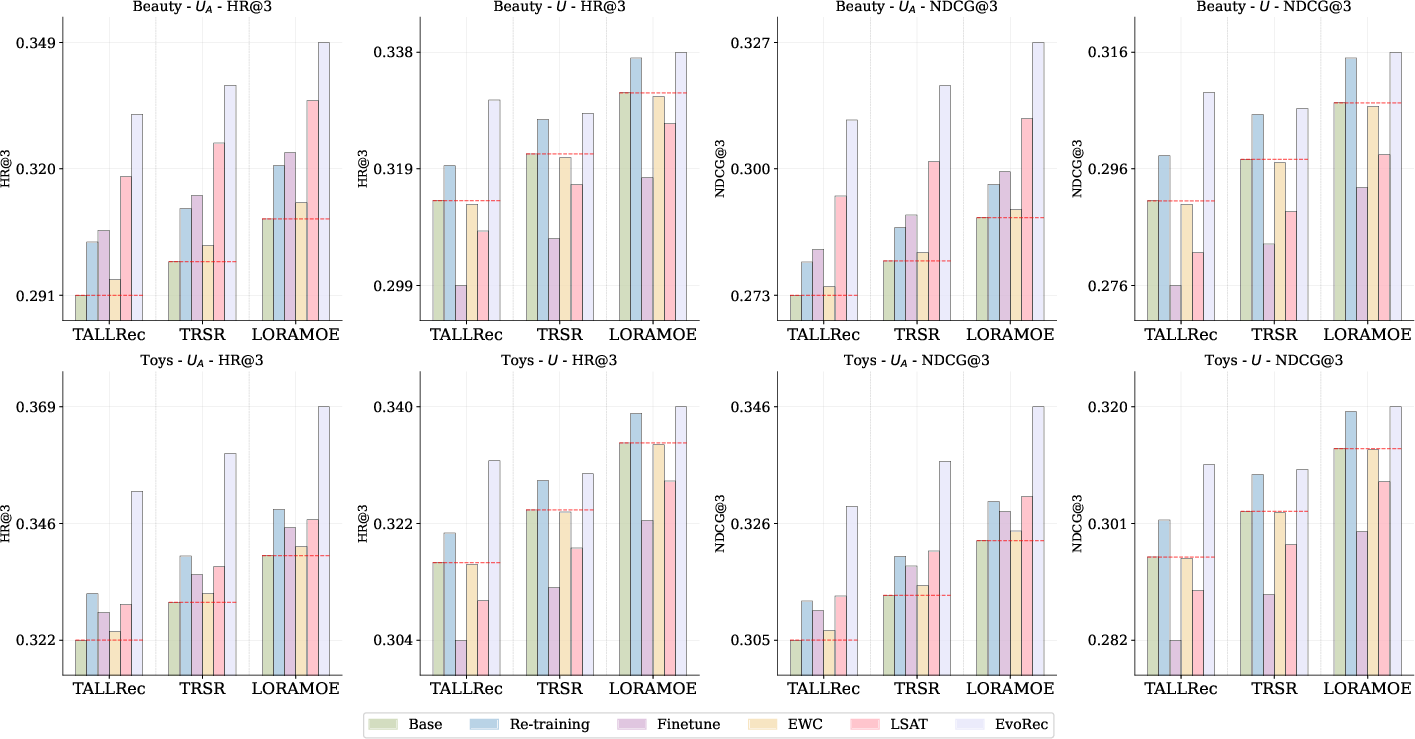

EvoRec was validated through extensive experiments on two datasets: Amazon Beauty and Toys. The results show that EvoRec improves HR@3 and NDCG@3 on the active user set while preserving performance for the remaining users, mitigating preference forgetting more effectively than existing paradigms (Figures 5, 6, 9, 11).
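For readers unfamiliar with the two metrics, the sketch below computes HR@k and NDCG@k for a single user. It assumes the common next-item setting in which each user has exactly one held-out target item. The summary does not confirm the evaluation protocol, so that is an assumption. With a single relevant item the ideal DCG is 1, so NDCG@k reduces to $1/\log_2(\text{rank}+1)$ when the target appears in the top k and to 0 otherwise.

```python
import math

def hit_rate_at_k(ranked_items, target, k):
    """Return 1.0 if the target item appears in the top-k list, else 0.0."""
    return 1.0 if target in ranked_items[:k] else 0.0

def ndcg_at_k(ranked_items, target, k):
    """NDCG@k for a single relevant item: 1 / log2(rank + 1), or 0.0 on a miss."""
    top_k = ranked_items[:k]
    if target not in top_k:
        return 0.0
    rank = top_k.index(target) + 1  # 1-based position of the target
    return 1.0 / math.log2(rank + 1)

# Toy ranking for one user (item names are made up).
ranked = ["a", "b", "c", "d"]

hr_hit = hit_rate_at_k(ranked, "b", k=3)    # 1.0: "b" is in the top 3
ndcg_hit = ndcg_at_k(ranked, "b", k=3)      # 1 / log2(3), about 0.631
hr_miss = hit_rate_at_k(ranked, "d", k=3)   # 0.0: "d" is ranked 4th
ndcg_miss = ndcg_at_k(ranked, "d", k=3)     # 0.0
```

Reported scores are typically the average of these per-user values over the evaluation set.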

Figure 3: HR@3 on Beauty dataset indicating improved recommendation accuracy.

Figure 4: Performance improvement on the U set, measured by the HR@3 metric.

Figure 5: Performance of different evolution algorithms on LLaMA-2-7B-Chat demonstrating competitive gains with EvoRec.

Figure 6: Time analysis of different incremental learning paradigms on Beauty and Toys showing the efficiency of EvoRec.

Implications and Future Directions

By combining localized parameter updates with interaction filtering, EvoRec offers a strong reference point for dynamic LLM-based recommendation systems. The approach holds promise both for more efficient personalization and for lower operational costs.

The implications extend into the field of adaptive AI, where the next development stage involves further automating the parameter localization process and expanding this framework to encompass broader, more complex datasets across various domains.

Conclusion

EvoRec represents a significant advancement in the domain of recommendation systems, addressing critical challenges in scalability, adaptability, and efficiency. By enabling LLMRec to dynamically evolve in response to user preference shifts, EvoRec not only improves recommendation accuracy but also ensures resource-efficient model maintenance, paving the way for future explorations in adaptive and intelligent recommendation solutions.