- The paper introduces a resilience model using the Autonomous Classification Ratio to detect performance degradation and optimize recovery while minimizing CO₂ emissions.

- It compares decision-making policies—including multi-objective optimization, game-theoretic, and reinforcement learning approaches—to balance rapid recovery with energy costs.

- Empirical tests on a collaborative robot show that containerization cuts CO₂ emissions by approximately 50% and that RL-agent policies recover up to 20% faster, at the cost of higher energy demand.

Green Resilience of Cyber-Physical Systems: Detailed Expert Summary

Introduction and Research Context

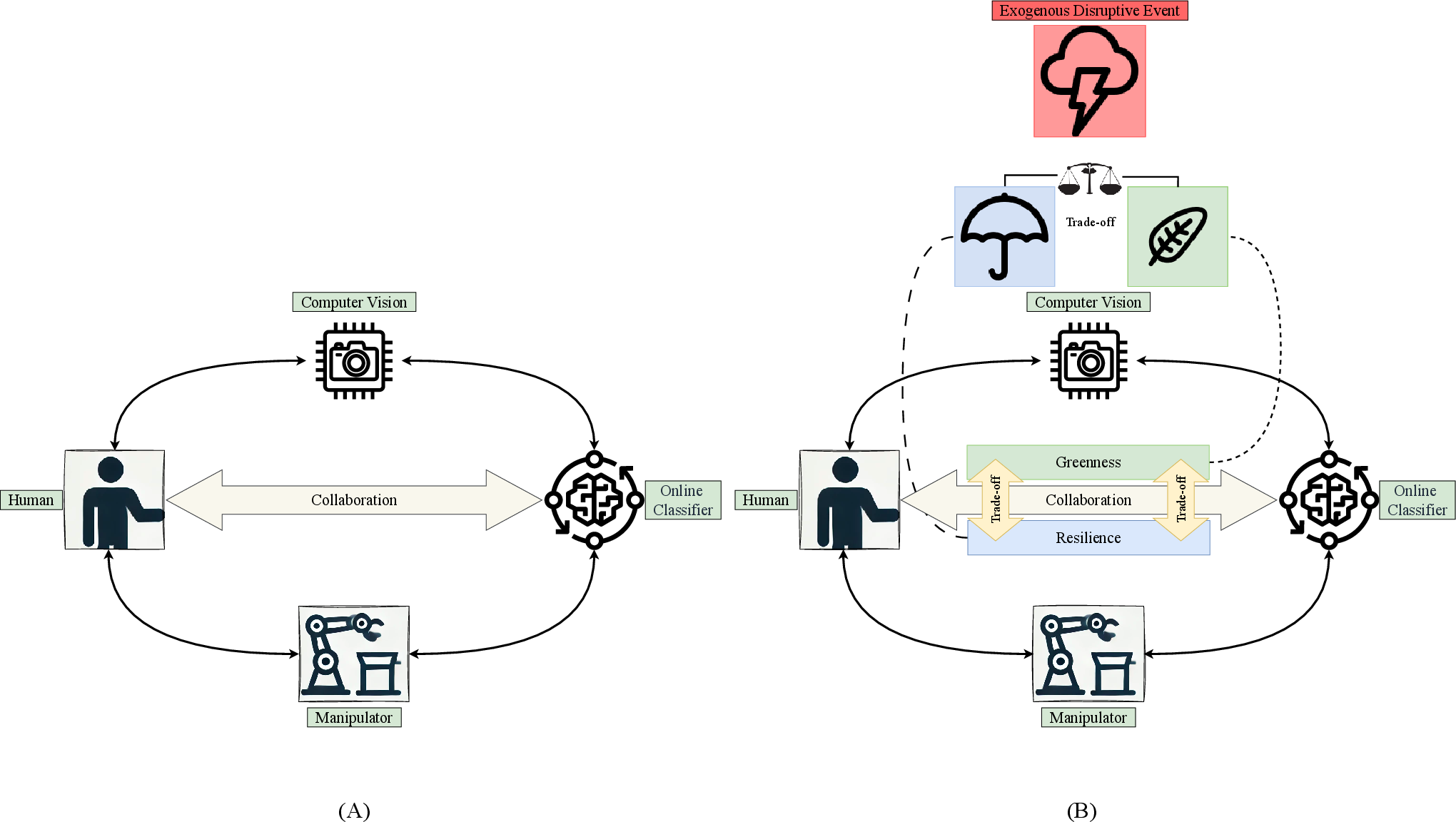

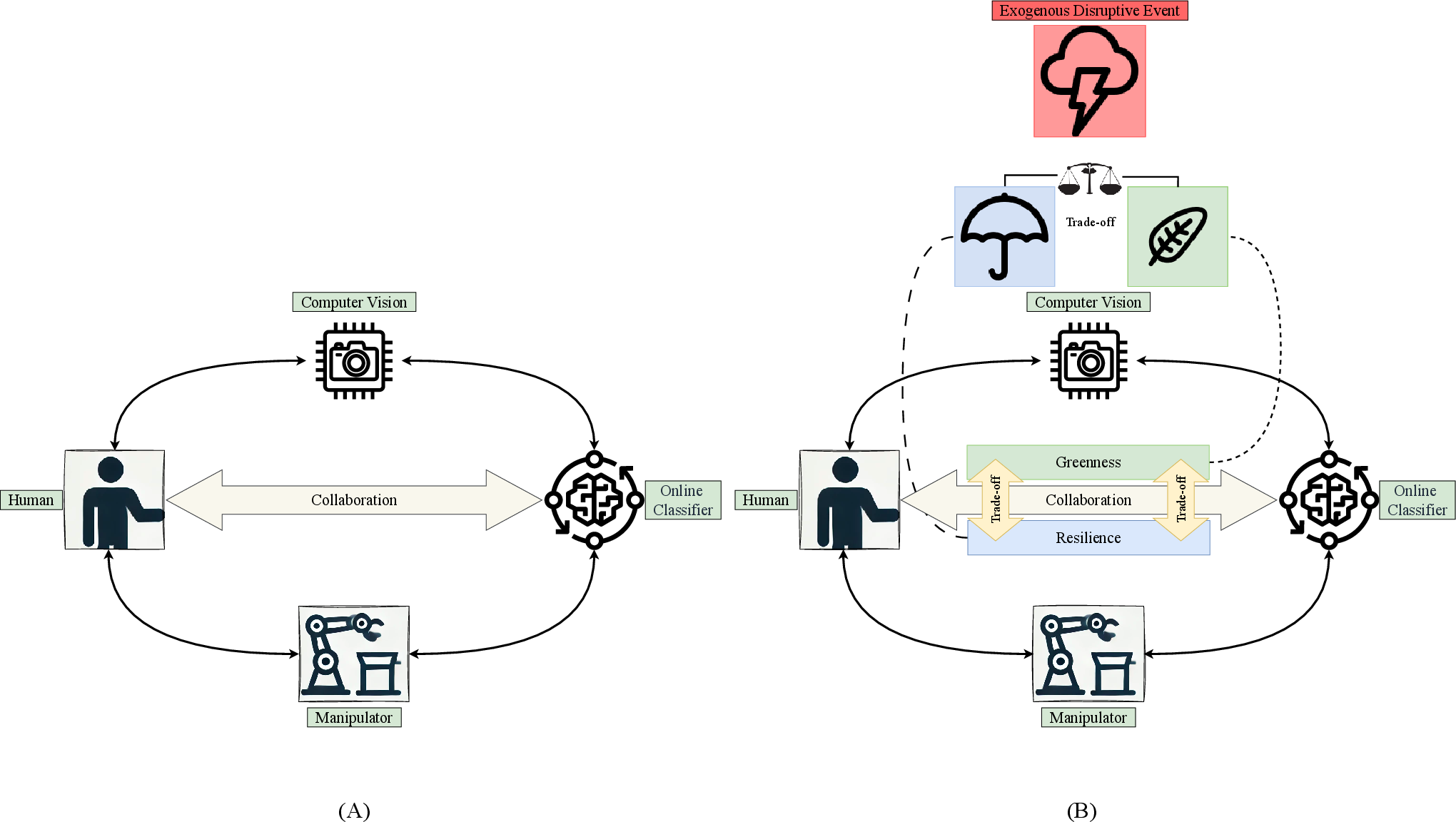

This dissertation addresses resilience and greenness in Online Collaborative Artificial Intelligence Systems (OL-CAIS), a human-centric subclass of Cyber-Physical Systems (CPS) that learn tasks online through human interaction. The work is situated within the paradigm of Industry 4.0, leveraging continual and online learning to maximize system autonomy and minimize reliance on human intervention. The focus is on how OL-CAIS respond to exogenous disruptive events—environmental changes that degrade system performance—and the dual challenge of restoring performance (resilience) without incurring unacceptable energy costs or CO₂ emissions (greenness).

Figure 1: Key research challenges facing industrial OL-CAIS—online learning for steady performance, performance degradation after disruption, and the required balance between resilience and greenness during recovery.

The thesis systematically models OL-CAIS runtime behavior, develops intelligent decision-making frameworks that operationalize the trade-off between resilience and greenness, and confronts the issue of catastrophic forgetting in online learning. The practical context is a collaborative robot (CORAL), used as an experimental testbed for both real-world and simulated evaluations.

Theoretical Frameworks and Methodology

Resilience Modeling

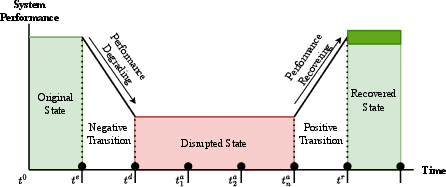

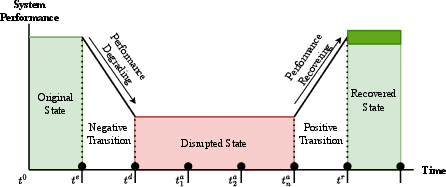

Central to the work is a resilience model that tracks the Autonomous Classification Ratio (ACR), the fraction of actions performed autonomously, over sliding windows of system iterations. Three operational states are defined: steady (autonomous operation), disruptive (decreased ACR and performance), and final (post-disruption, with possible memory degradation). The model is designed for runtime detection of performance degradation and automated classification of system states.
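A minimal sketch of the idea follows, assuming each iteration is recorded as a boolean (autonomous or not). The window size, the 0.8 threshold, and the rule that any above-threshold window after a disruption counts as "final" are illustrative assumptions, not values or rules taken from the thesis.

```python
from collections import deque
from typing import Deque, Iterable, List


def sliding_acr(autonomous_flags: Iterable[bool], window: int) -> List[float]:
    """Return the ACR (share of autonomous actions) for each full sliding window."""
    if window <= 0:
        raise ValueError("window must be positive")
    buf: Deque[bool] = deque(maxlen=window)
    ratios: List[float] = []
    for flag in autonomous_flags:
        buf.append(flag)
        if len(buf) == window:
            ratios.append(sum(buf) / window)
    return ratios


def label_states(ratios: Iterable[float], threshold: float = 0.8) -> List[str]:
    """Label each window as steady, disruptive, or final.

    Assumption: a window below `threshold` is disruptive; once a disruption
    has been seen, any later window at or above the threshold is final.
    """
    labels: List[str] = []
    disrupted = False
    for acr in ratios:
        if acr < threshold:
            disrupted = True
            labels.append("disruptive")
        else:
            labels.append("final" if disrupted else "steady")
    return labels


# Hypothetical trace: autonomous, then a disruption, then recovery.
flags = [True] * 4 + [False] * 4 + [True] * 4
ratios = sliding_acr(flags, window=4)
labels = label_states(ratios)
```

In this toy trace the first window is labeled steady, the windows overlapping the disruption are labeled disruptive, and the last window is labeled final.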

Figure 2: The resilience model, showing performance drops and recoveries as the system responds to disruptive events.

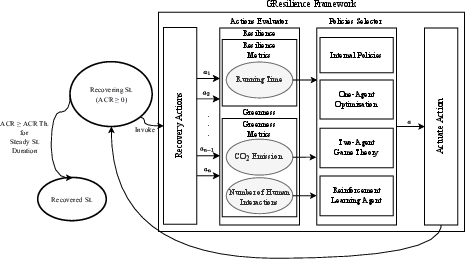

Decision-Making Policies

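Recovery policies are structured within the GResilience framework, encompassing three agent-based approaches: multi-objective optimization, game-theoretic decision-making, and reinforcement learning (the RL-agent).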
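To make the resilience versus greenness trade-off concrete, the sketch below uses weighted-sum scalarization, a standard multi-objective technique. It is not necessarily the formulation used in GResilience. The `Action` type, its normalized scores, and the action names are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Action:
    name: str
    recovery_gain: float  # hypothetical score in [0, 1], higher is better
    co2_cost: float       # hypothetical score in [0, 1], lower is better


def weighted_sum_choice(actions: List[Action], w_resilience: float = 0.5) -> Action:
    """Pick the action maximizing w * recovery_gain - (1 - w) * co2_cost."""
    if not actions:
        raise ValueError("actions must not be empty")
    if not 0.0 <= w_resilience <= 1.0:
        raise ValueError("w_resilience must be in [0, 1]")
    w_green = 1.0 - w_resilience
    return max(
        actions,
        key=lambda a: w_resilience * a.recovery_gain - w_green * a.co2_cost,
    )


# Hypothetical candidate actions with made-up scores.
candidates = [
    Action("retrain_fast", recovery_gain=0.9, co2_cost=0.8),
    Action("ask_human", recovery_gain=0.5, co2_cost=0.1),
]
balanced = weighted_sum_choice(candidates, w_resilience=0.5)
resilience_first = weighted_sum_choice(candidates, w_resilience=0.9)
```

With equal weights the low-emission action wins; shifting the weight toward resilience selects the faster but costlier action, which mirrors the tension the policies have to manage.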

Measurement and Evaluation

A custom measurement framework quantifies:

- Resilience: Recovery speed (disruptive-to-recovered transition time ratio), performance steadiness.

- Greenness: Mean CO₂ emissions, degree of human intervention (autonomy metric).
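The metrics listed above could be computed along these lines. The exact definitions are assumptions made for illustration: recovery speed is approximated as the share of iterations spent in the disruptive state, and human intervention as the share of non-autonomous actions. The thesis may define them differently.

```python
from statistics import mean
from typing import Sequence


def disruptive_time_ratio(labels: Sequence[str]) -> float:
    """Share of iterations labeled 'disruptive' (lower suggests faster recovery)."""
    if not labels:
        raise ValueError("labels must not be empty")
    return sum(1 for s in labels if s == "disruptive") / len(labels)


def mean_co2(emissions_kg: Sequence[float]) -> float:
    """Mean CO2-equivalent emissions per measurement, in kg."""
    return mean(emissions_kg)


def human_intervention_ratio(autonomous_flags: Sequence[bool]) -> float:
    """Share of actions that needed a human (1 minus the autonomy ratio)."""
    if not autonomous_flags:
        raise ValueError("autonomous_flags must not be empty")
    return 1.0 - sum(autonomous_flags) / len(autonomous_flags)
```

For example, a run labeled steady, disruptive, disruptive, final has a disruptive time ratio of 0.5 under this definition.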

The theoretical constructs are implemented in CAIS-DMA: a modular decision-making assistant supporting simulation, monitoring, and online deployment.

Experimental Validation

Real-world and simulated experiments are executed with the CORAL robot for representative disruptive events: hardware failure (lights outage) and adversarial attacks (histogram manipulation of color images). All agent-based policies are benchmarked against baseline internal policies. The empirical findings are summarized under Key Numerical Results and Contradictory Findings.

Catastrophic Forgetting and State Dynamics

The thesis shows that disruptive events cause OL-CAIS classifiers to forget the original environmental conditions, leading to post-recovery performance degradation (catastrophic forgetting). Recovery in the final state requires renewed human interaction and learning. Continuous support via intelligent policies is necessary to maintain steady autonomous performance in environments with frequent or repeated disruptions.

Key Numerical Results and Contradictory Findings

- Recovery duration under RL policies is reduced by up to 20% compared to internal and optimization/game-theoretic approaches, and performance fluctuation ratios decrease by 50–85% compared to baselines.

- Autonomy ratios in recovery states increase by 15–45% under agent policies, with RL-agent consistently highest.

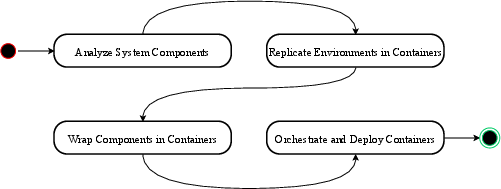

- Containerization reduced measured CO₂e by approximately 50% over bare-metal (0.099 vs. 0.198 kg CO₂e for two-hour continuous classification).

- RL-agent policies achieved their superior resilience at a statistically significant (p < 0.01) cost in mean CO₂ emissions (up to 70% greater than other policy approaches).
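As a quick check of the containerization figures reported above, the relative reduction can be computed directly. The helper name is illustrative; only the two emission values come from the summary.

```python
def percent_reduction(baseline: float, improved: float) -> float:
    """Relative reduction of `improved` versus `baseline`, in percent."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - improved) / baseline * 100.0


# Reported values for two hours of continuous classification (kg CO2e).
bare_metal = 0.198
containerized = 0.099
reduction = percent_reduction(bare_metal, containerized)  # about 50 percent
```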

Practical and Theoretical Implications

The theoretical frameworks and empirical tools (ACR-based resilience modeling, GResilience, CAIS-DMA) operationalize the trade-off between greenness and resilience for human-centric CPS. Practically, the methodology and containerization yield actionable energy and sustainability improvements for industrial robots and OL-CAIS. The findings clarify that aggressive resilience, even with state-of-the-art RL policies, can conflict with greenness goals because of heavier computation. This insight informs future system design: decision-makers must explicitly model and optimize for carbon-aware recovery, not merely rapid restoration.

On the theoretical front, performance evolution modeling and equilibrium-based policies can generalize to broader CPS domains: energy grids, healthcare, and any AI-driven system with adaptive autonomy. Future work could incorporate multi-model state machines for robust forgetting mitigation, and runtime-aware policy containers for continual state re-balancing.

Conclusion

This dissertation provides methodological, empirical, and architectural advances for environmentally responsible, resilient OL-CAIS. Decision-makers are equipped with metrics, models, and agent-based policies that enable informed, runtime-optimal action selection. The work demonstrates that resilience and greenness are often competing objectives; achieving sustainable, resilient CPS requires explicit, agent-based trade-off modeling, containerized resource management, and continual adaptation to disruptive events. The frameworks herein support scalable deployment and evolution of green-resilient industrial CPS.