- The paper demonstrates that few-shot prompting significantly enhances LLM performance, enabling test generation that rivals human-written tests in coverage and defect detection.

- The methodology couples automated test generation with an evaluation pipeline that scores tests using metrics such as mutation score, and it adds explicit class paths to prompts to reduce compilation errors.

- This framework, evaluated on real-world Java projects using the Classes2Test dataset, paves the way for reliable, automated software testing and potential expansion to other languages.

LLMs for Automated Unit Test Generation and Assessment in Java: The AgoneTest Framework

Introduction and Motivation

Unit testing is a fundamental practice in modern software development, ensuring the correctness and reliability of individual components. However, writing these tests manually is labor-intensive and requires both expertise and time. This paper introduces the AgoneTest framework, which uses large language models (LLMs) to automate the generation and assessment of unit tests in Java. AgoneTest does not propose a novel test generation algorithm. Instead, it offers a comprehensive evaluation pipeline designed to benchmark different LLMs and prompting strategies. Central to the framework is the "Classes2Test" dataset, which maps Java classes to their test classes and so enables evaluation of test code at the class level.

AgoneTest Framework Overview

The AgoneTest framework provides an automated infrastructure for evaluating LLM-generated unit tests. Its primary objective is to let developers and researchers systematically compare how well different LLMs generate unit tests. AgoneTest integrates project setup, context extraction, test execution, and quality evaluation through metrics such as mutation score and test smells. The framework operates on open-source Java repositories and supports commonly used Java versions and testing frameworks.

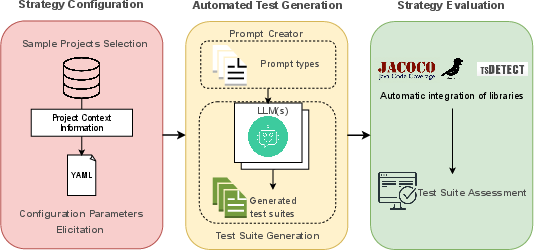

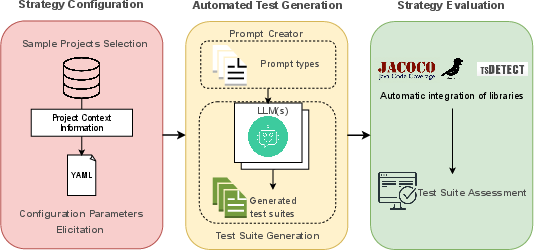

Figure 1: Overview of AgoneTest framework.
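To make the mutation score metric mentioned above concrete, here is a minimal sketch using the standard definition: the fraction of injected mutants that the test suite detects ("kills"). The `MutantStatus` enum and `MutationScore` class are hypothetical names introduced for illustration, not part of AgoneTest, and the choice to count uncovered mutants in the denominator is an assumption that differs between tools.

```java
import java.util.List;

/** Hypothetical outcome of running a test suite against one mutant. */
enum MutantStatus { KILLED, SURVIVED, NO_COVERAGE }

/** Illustrative helper, not the framework's implementation. */
final class MutationScore {
    private MutationScore() {}

    /**
     * Returns killed / total as a fraction in [0, 1].
     * Assumption: uncovered mutants count as not killed; an empty list yields 0.0.
     */
    static double of(List<MutantStatus> outcomes) {
        if (outcomes.isEmpty()) {
            return 0.0;
        }
        long killed = outcomes.stream()
                .filter(status -> status == MutantStatus.KILLED)
                .count();
        return (double) killed / outcomes.size();
    }

    public static void main(String[] args) {
        List<MutantStatus> outcomes = List.of(
                MutantStatus.KILLED, MutantStatus.KILLED,
                MutantStatus.SURVIVED, MutantStatus.NO_COVERAGE);
        System.out.println("mutation score = " + of(outcomes));
    }
}
```

A higher score suggests the tests are more sensitive to small faults in the code under test, which is why it complements plain coverage numbers.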

AgoneTest's architecture can be divided into several key phases:

- Sample Projects Selection: Uses the Classes2Test dataset to automatically select Java repositories for evaluation. The selected repositories compile successfully and are representative of real-world projects.

- Configuration Parameters Elicitation: Extracts project-specific configurations such as Java version and testing framework, essential for prompt creation.

- Automated Test Generation: Implements LLM interactions via the Prompt Creator module, enabling the generation of unit test suites.

- Strategy Evaluation: Applies an automated assessment methodology that evaluates test quality using coverage metrics, mutation score, and test smells.
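The configuration and test generation phases above can be sketched roughly as follows: the elicited Java version and testing framework are folded into a prompt, and optional example tests turn a zero-shot prompt into a few-shot one. This is only an illustration under stated assumptions. `ProjectConfig`, `PromptSketch`, and the prompt wording are hypothetical and are not taken from the paper's Prompt Creator module.

```java
import java.util.List;

/** Hypothetical holder for settings elicited from a project's build files. */
final class ProjectConfig {
    final String javaVersion;
    final String testFramework;

    ProjectConfig(String javaVersion, String testFramework) {
        this.javaVersion = javaVersion;
        this.testFramework = testFramework;
    }
}

/** Illustrative prompt builder; the wording is an assumption, not the paper's prompt. */
final class PromptSketch {
    private PromptSketch() {}

    /** An empty example list produces a zero-shot prompt, otherwise a few-shot prompt. */
    static String build(ProjectConfig config, String focalClassSource, List<String> exampleTests) {
        StringBuilder prompt = new StringBuilder();
        prompt.append("Write a ").append(config.testFramework)
              .append(" test class for the Java ").append(config.javaVersion)
              .append(" class below.\n");
        // Few-shot part: show the model existing tests before the actual task.
        for (int i = 0; i < exampleTests.size(); i++) {
            prompt.append("\nExample test ").append(i + 1).append(":\n")
                  .append(exampleTests.get(i)).append('\n');
        }
        prompt.append("\nClass under test:\n").append(focalClassSource).append('\n');
        return prompt.toString();
    }

    public static void main(String[] args) {
        ProjectConfig config = new ProjectConfig("17", "JUnit 5");
        String focal = "class Counter { int n; void inc() { n++; } }";
        System.out.println(build(config, focal, List.of()));
    }
}
```

Keeping the configuration separate from the prompt text makes it straightforward to run the same class through several prompting strategies and compare the resulting tests.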

Experimental Design and Results

The evaluation conducted with AgoneTest addresses three main research questions, which concern:

- The performance of different LLMs and prompting strategies in generating unit tests.

- The impact of compilation errors on success rates.

- Strategies to improve compilation success rates.

Key Findings

- Performance Comparison: The study highlights the effectiveness of LLMs in matching or exceeding the coverage and defect detection capabilities of human-written tests. Notably, few-shot prompting strategies significantly enhanced the performance of LLMs like llama3.1:70b and gemini-1.5-pro across most quality metrics.

- Compilation Issues: A critical challenge is the large number of compilation errors, caused primarily by missing symbols and incorrect imports. Among the tests that do compile, however, LLM-generated tests perform comparably to human-written ones.

- Enhancements for Compilation Success: The study proposes an enhanced strategy that improves compilation success through explicit specification of class paths in prompts, addressing symbol and reference errors effectively.
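The idea of specifying class paths explicitly in the prompt can be sketched as below. The sketch assumes that "class path" means the fully qualified name of the class under test and of the project types it depends on, which is an interpretation rather than something the summary confirms. `ClassPathHint` and the prompt wording are hypothetical.

```java
import java.util.List;

/** Illustrative only: appends fully qualified names so the model does not have to guess imports. */
final class ClassPathHint {
    private ClassPathHint() {}

    static String augment(String basePrompt, String focalClassFqn, List<String> dependencyFqns) {
        StringBuilder sb = new StringBuilder(basePrompt);
        sb.append("\nThe class under test is ").append(focalClassFqn).append(".\n");
        sb.append("Use exactly these imports for project types:\n");
        sb.append("import ").append(focalClassFqn).append(";\n");
        for (String fqn : dependencyFqns) {
            sb.append("import ").append(fqn).append(";\n");
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        // Package and class names here are made up for the example.
        String prompt = augment(
                "Write a unit test class for the class below.",
                "com.example.billing.InvoiceService",
                List.of("com.example.billing.Invoice"));
        System.out.println(prompt);
    }
}
```

Stating the imports up front targets the two error classes named above, missing symbols and incorrect imports, since both often come from the model inventing a package name.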

Implications and Future Directions

The AgoneTest framework advances the automated evaluation of unit test generation by giving researchers and practitioners a tool for systematic benchmarking of LLMs and prompting strategies. The results obtained with AgoneTest underscore the potential of LLMs for automating software testing, particularly when combined with improved prompting strategies.

Future research directions include extending the framework to support other programming languages and further refining strategies to decrease compilation failures. These enhancements will not only broaden the applicability of AgoneTest but also improve the overall reliability and adoption of LLM-powered test generation methods in real-world software development environments.

Conclusion

AgoneTest provides a systematic approach to evaluating LLM-generated unit tests, with infrastructure for the automated assessment of test quality across multiple metrics. By addressing current challenges such as compilation failures, it lays a foundation for further work on automated software testing.