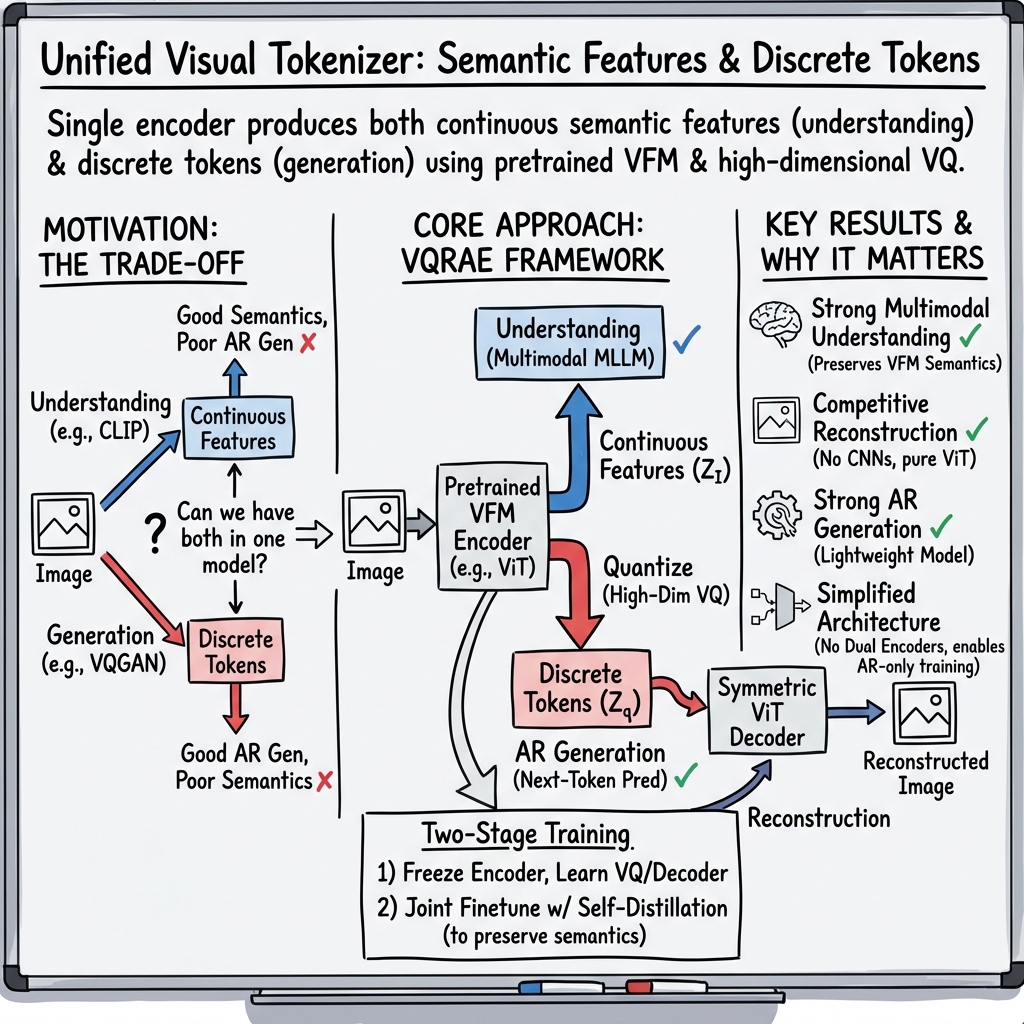

- The paper introduces VQRAE, a unified model leveraging a high-dimensional semantic codebook to integrate visual understanding, generation, and reconstruction.

- It employs a two-stage training strategy with a frozen encoder followed by joint optimization, ensuring robust semantic retention and high-quality image reconstruction.

- Reported benchmark results show VQRAE surpassing dual-encoder models in efficiency and in the depth of interaction between understanding and generation, which the paper presents as a step toward more capable multimodal AI systems.

Overview of VQRAE: Representation Quantization Autoencoders for Multimodal Understanding, Generation, and Reconstruction

The paper "VQRAE: Representation Quantization Autoencoders for Multimodal Understanding, Generation and Reconstruction" (2511.23386) proposes a unified multimodal model that handles visual understanding and visual generation within a single architecture. Its primary contribution is the Vector Quantization Representation Autoencoder (VQRAE), built around a unified tokenizer that produces continuous semantic features for image understanding and discrete tokens for visual generation and reconstruction.
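To make the idea of a tokenizer with two outputs concrete, the following is a minimal sketch and not the paper's implementation. It assumes a nearest-neighbor lookup under squared Euclidean distance, toy 2-dimensional features, and hypothetical names (`TokenizerOutput`, `tokenize`); the distance metric actually used by VQRAE is not stated in this overview.

```python
from dataclasses import dataclass
from typing import List

Vector = List[float]


@dataclass
class TokenizerOutput:
    continuous: List[Vector]  # per-patch semantic features, e.g. for understanding
    token_ids: List[int]      # per-patch codebook indices, e.g. for generation


def squared_distance(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def tokenize(features: List[Vector], codebook: List[Vector]) -> TokenizerOutput:
    """Return the continuous features unchanged plus their nearest codebook index.

    Assumption: nearest-neighbor assignment under squared Euclidean distance.
    """
    token_ids = [
        min(range(len(codebook)), key=lambda k: squared_distance(f, codebook[k]))
        for f in features
    ]
    return TokenizerOutput(continuous=features, token_ids=token_ids)


# Toy data: three "patch" features and a three-entry codebook.
features = [[0.9, 0.1], [0.1, 1.2], [-1.0, 0.0]]
codebook = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
out = tokenize(features, codebook)
print(out.token_ids)  # [0, 1, 2]
```

In this sketch a downstream understanding model would consume `out.continuous`, while an autoregressive generator would be trained on `out.token_ids`.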

Technical Approach

VQRAE Architecture

The VQRAE framework builds on a pretrained vision foundation model (VFM) such as CLIP, adding a symmetric ViT-based decoder and a high-dimensional semantic VQ codebook. Training proceeds in two stages. In the first stage, the encoder remains frozen while the framework learns a semantic VQ codebook under a pixel reconstruction objective. In the second stage, the encoder is optimized jointly, subject to self-distillation constraints and the discrete-token reconstruction objective. According to the paper, this two-stage strategy retains semantic features while delivering high-quality image reconstruction.
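The two-stage schedule can be sketched as a small configuration that records which modules are trainable and which loss terms are active in each stage. This is an illustrative sketch only: the module names, the choice to train the decoder in stage 1, and the loss weights of 1.0 are assumptions, not values from the paper.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

MODULES: Tuple[str, ...] = ("encoder", "codebook", "decoder")


@dataclass
class Stage:
    name: str
    trainable: Tuple[str, ...]      # modules that receive gradient updates
    loss_weights: Dict[str, float]  # hypothetical weights, not from the paper


STAGES: List[Stage] = [
    # Stage 1: encoder frozen; codebook (and, by assumption, decoder) trained
    # with a pixel reconstruction objective.
    Stage("stage1", ("codebook", "decoder"), {"pixel_reconstruction": 1.0}),
    # Stage 2: encoder unfrozen and optimized jointly, with a self-distillation
    # term added alongside reconstruction from discrete tokens.
    Stage(
        "stage2",
        ("encoder", "codebook", "decoder"),
        {"pixel_reconstruction": 1.0, "self_distillation": 1.0},
    ),
]


def requires_grad(stage: Stage) -> Dict[str, bool]:
    """Map each module name to whether it is updated in this stage."""
    return {m: m in stage.trainable for m in MODULES}


def total_loss(stage: Stage, terms: Dict[str, float]) -> float:
    """Weighted sum of the loss terms that are active in this stage."""
    return sum(w * terms[name] for name, w in stage.loss_weights.items())


for stage in STAGES:
    print(stage.name, requires_grad(stage))
```

In a real training loop, the `requires_grad` mapping would be applied to the corresponding model parameters before each stage begins.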

High-Dimensional VQ Codebook

A significant contribution of VQRAE is its exploration of high-dimensional codebooks (around 1536 dimensions), in contrast to previous practice, which typically favors low-dimensional spaces (8-256 dimensions). The high-dimensional semantic codebook achieves nearly 100% utilization and resists the codebook collapse that is common in lower-dimensional techniques, while maintaining strong image reconstruction and generation quality. Previous VQ methods, by contrast, could not maintain this robustness at higher dimensions.
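Codebook utilization, the quantity behind the "nearly 100%" figure, is commonly measured as the fraction of codebook entries that are selected at least once over an evaluation set. The sketch below shows that calculation along with usage perplexity, a related collapse diagnostic. The function names are hypothetical and the paper's exact measurement protocol is not described in this overview.

```python
import math
from collections import Counter
from typing import Sequence


def codebook_utilization(token_ids: Sequence[int], codebook_size: int) -> float:
    """Fraction of codebook entries selected at least once."""
    if any(t < 0 or t >= codebook_size for t in token_ids):
        raise ValueError("token id outside the codebook range")
    return len(set(token_ids)) / codebook_size


def usage_perplexity(token_ids: Sequence[int]) -> float:
    """exp(entropy) of the empirical code distribution.

    Equals the number of codes used when usage is uniform, and approaches 1
    when a single code dominates (a symptom of collapse).
    """
    counts = Counter(token_ids)
    total = len(token_ids)
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    return math.exp(entropy)


ids = [0, 1, 1, 3]  # toy assignments into a 4-entry codebook; entry 2 is unused
print(codebook_utilization(ids, codebook_size=4))  # 0.75
```

A collapsed codebook shows up as utilization far below 1.0 and a perplexity far below the codebook size.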

Experimental Validation

The paper reports competitive results across benchmarks for visual understanding, generation, and reconstruction. VQRAE balances these three tasks within a single unified tokenizer and, according to the paper, surpasses dual-encoder models such as TokenFlow and Janus in efficiency (lower architectural complexity) and in the depth of interaction between understanding and generation. The results indicate improved semantic feature retention without sacrificing fine-grained detail in reconstruction.

Generative Capability

When applied to autoregressive image generation, VQRAE is compatible with existing, highly optimized AI infrastructure, and the paper reports improved generation quality and scaling behavior. Generated samples span a range of styles, subjects, and scenarios, with performance competitive with both diffusion-based and existing autoregressive models.

Implications and Future Directions

VQRAE is a significant step toward integrated multimodal modeling and toward unified models that move seamlessly between understanding and generation. Its successful use of a high-dimensional quantization strategy also opens possibilities for improving model efficiency and capability across diverse domains.

Future research could apply VQRAE’s unified tokenizer to other applications, focusing on synergies between modalities to streamline task integration. Continued progress in model scaling and in encoder-decoder interaction will also be important for realizing the full potential of VQRAE in multimodal AI systems.

Conclusion

Overall, the VQRAE paper contributes a meaningful advancement in the multimodal field by introducing a robust architecture capable of integrating diverse tasks in a unified setting. The use of high-dimensional semantic codebooks and innovative training paradigms validates the capability of VQRAE to maintain high performance across multiple domains, offering valuable insights for future research in multimodal AI.