- The paper presents the first physical ID-transfer attack exploiting the MOT’s association phase using adversarial trajectories.

- It uses gradient descent optimization to derive physical maneuvers that cause identity swaps in both simulated and real-world settings.

- Experimental results reveal high success rates across multiple MOT models, highlighting vulnerabilities in safety-critical systems.

Physical ID-Transfer Attacks Against Multi-Object Tracking via Adversarial Trajectory

Introduction and Motivation

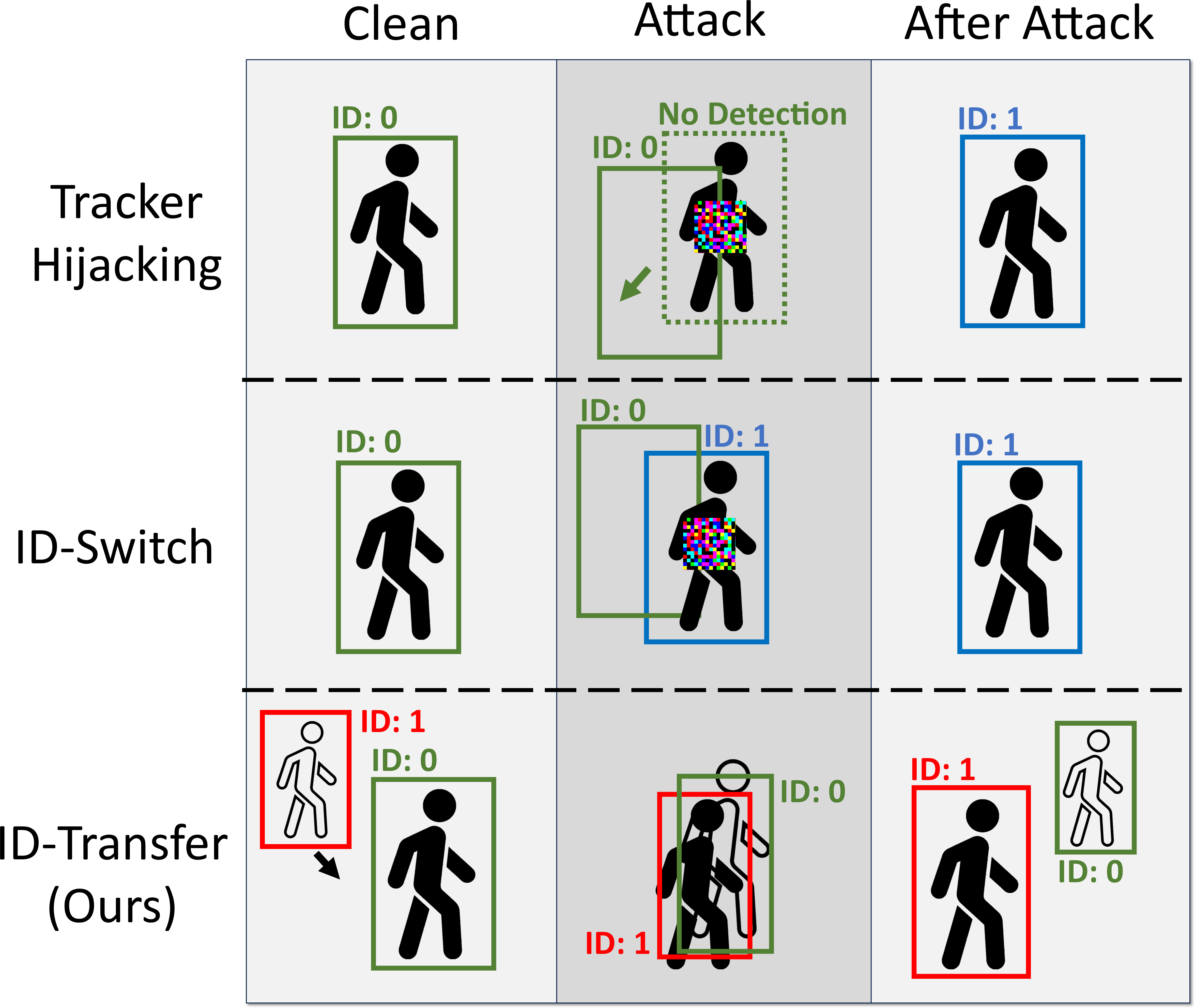

This work investigates a critical vulnerability in state-of-the-art Multi-Object Tracking (MOT) systems deployed in safety-critical domains such as surveillance and autonomous driving (AD). It formalizes and demonstrates Physical ID-Transfer Attacks, in which an attacker physically follows an adversarial trajectory that causes an MOT system to swap the identities (IDs) of the attacker and a target object. The attack does not tamper with the object detection (OD) stage. It operates online and in the physical domain by exploiting the MOT’s association phase and motion prediction models.

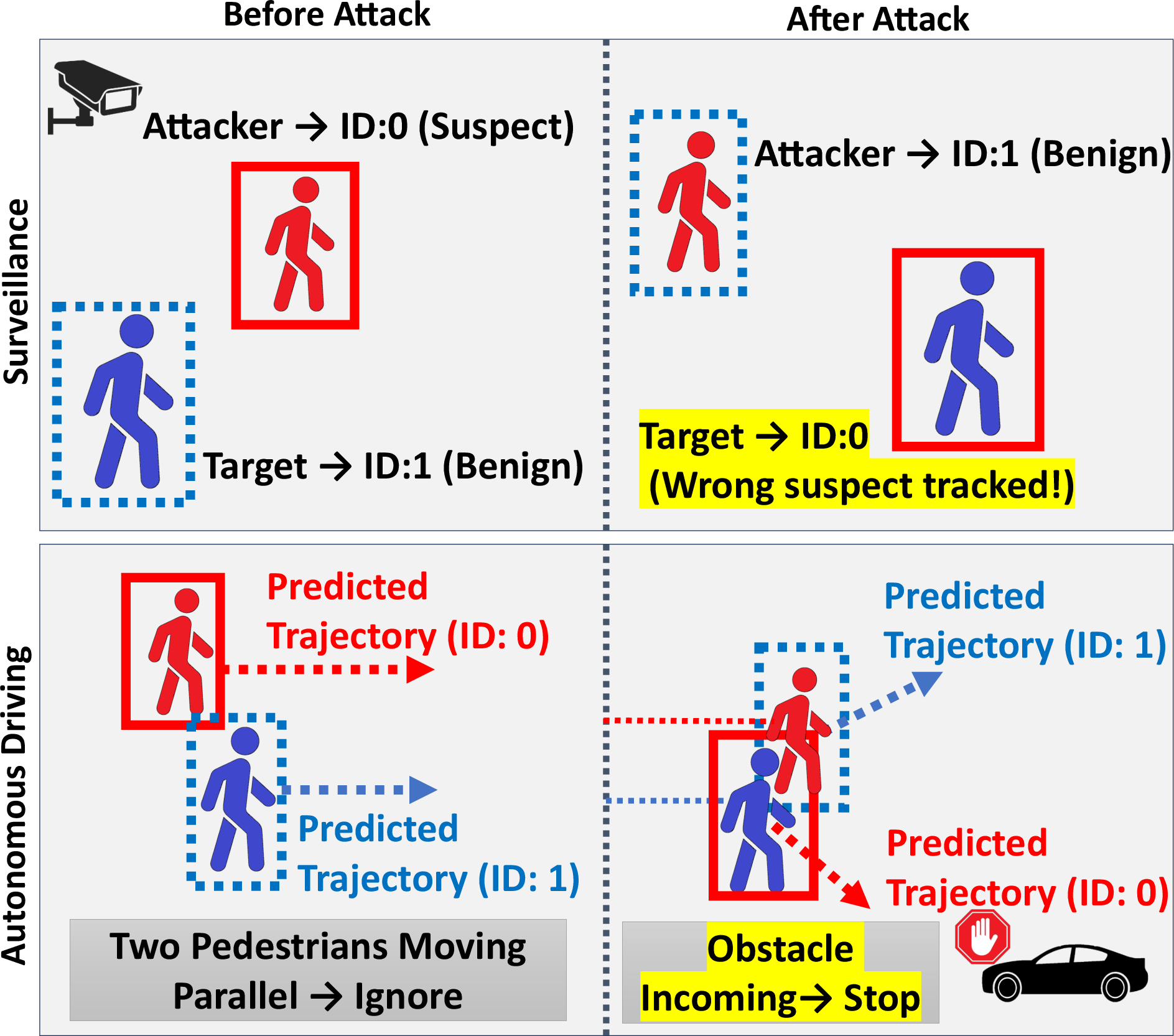

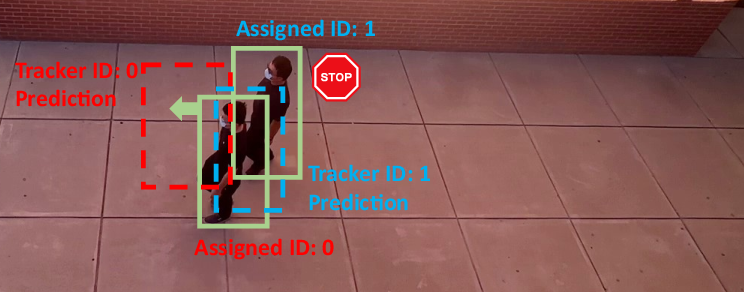

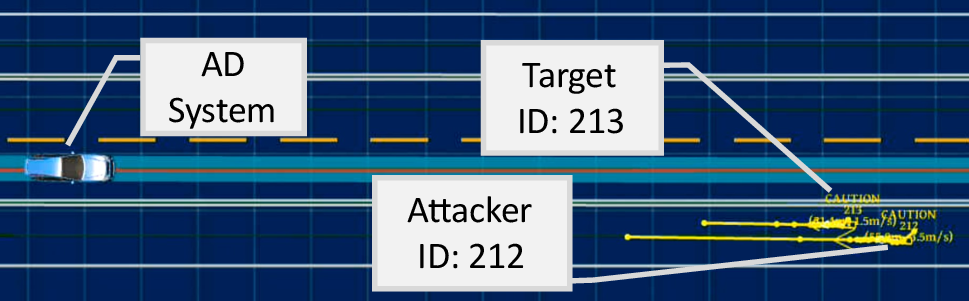

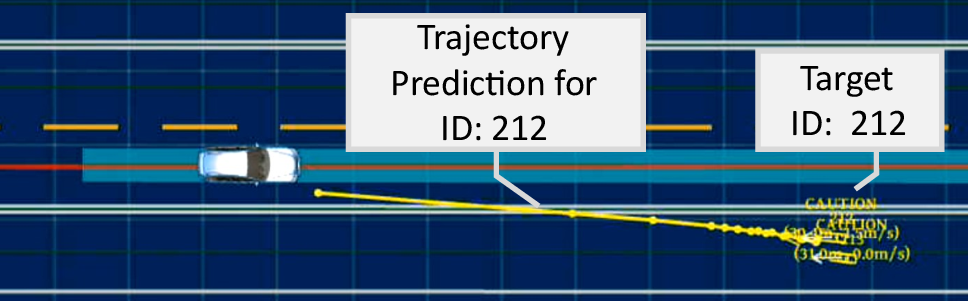

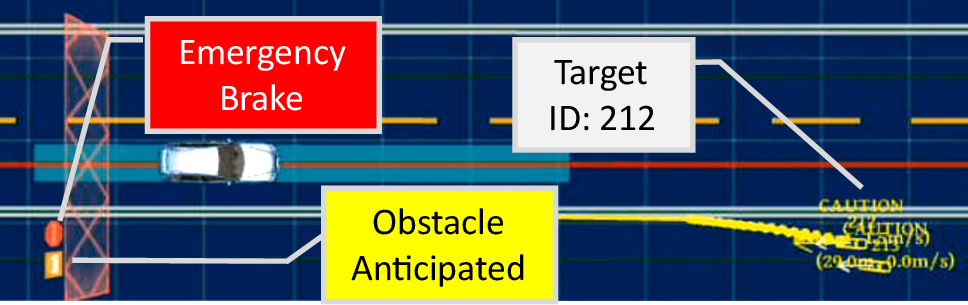

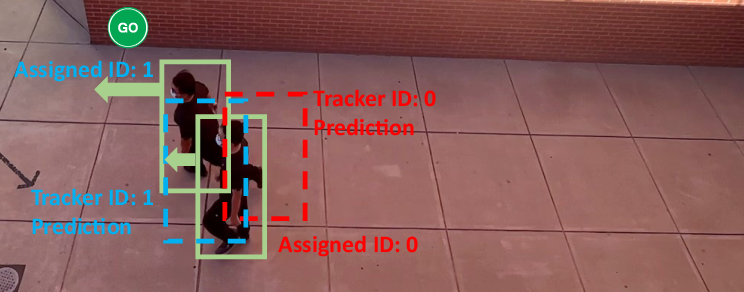

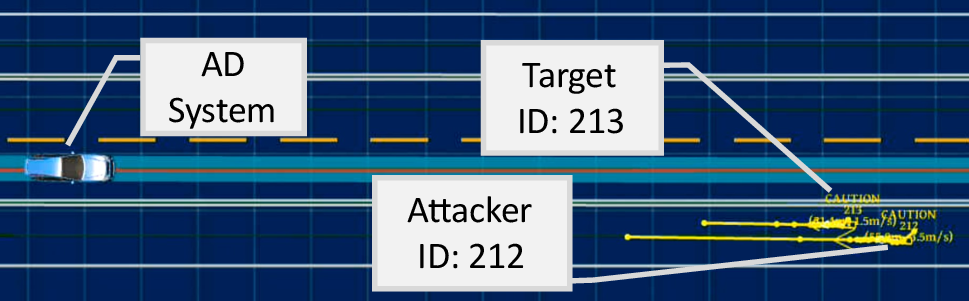

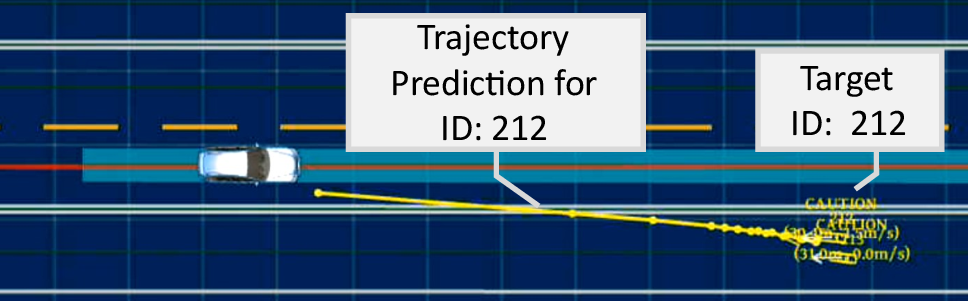

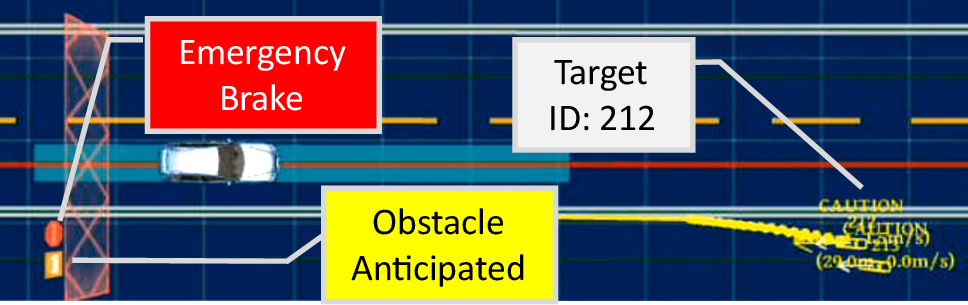

The consequences of ID-Transfer are demonstrated in two settings: surveillance, where persons of interest are misidentified and erroneously tracked, and autonomous driving, where trajectory histories that are inconsistent with ground truth cause trajectory prediction failures in AD systems.

Figure 1: ID-Transfer attack consequences: erroneous target tracking in surveillance and incorrect trajectory prediction in AD due to inconsistent historical data.

Technical Framework and Threat Model

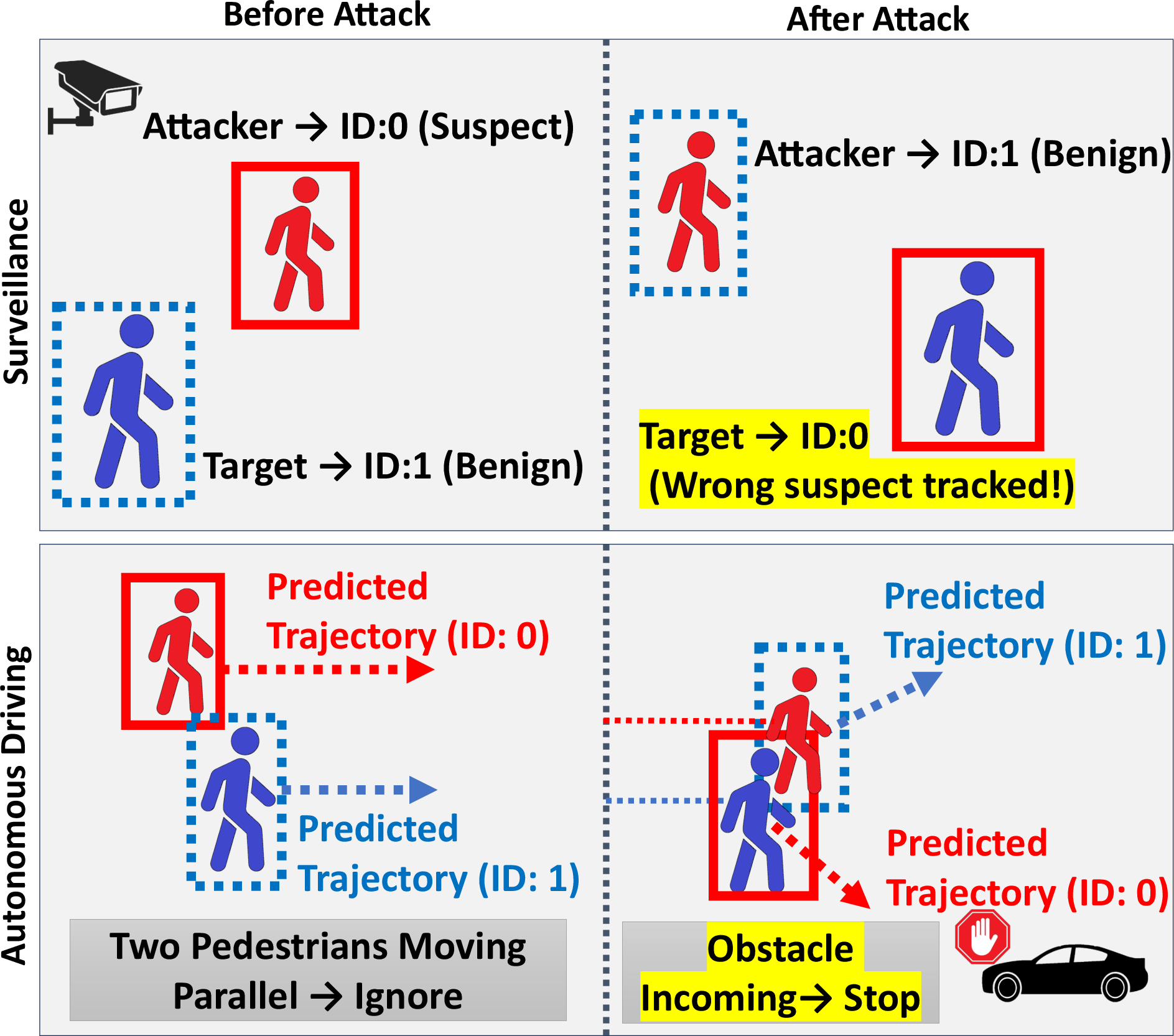

Most modern MOT algorithms follow a classic tracking-by-detection paradigm: OD models identify objects per frame, after which the association phase matches detected bounding boxes to trackers using weighted bipartite graph matching based predominantly on bounding box overlap and predicted motion (Kalman Filters or derivatives).
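
The association step described above can be illustrated with a minimal sketch. It assumes boxes in `(x1, y1, x2, y2)` form and a cost of `1 - IoU`, and it uses a brute-force search over assignments as a stand-in for the Hungarian-style solver a real tracker would use. The function names `iou` and `associate` are hypothetical and not taken from any MOT library.

```python
from itertools import permutations

def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def associate(predicted, detections):
    """Return, for each tracker, the index of its assigned detection.

    Brute-force minimum-cost matching (cost = 1 - IoU). Assumes there are
    at least as many detections as trackers; fine for a handful of boxes.
    """
    best, best_cost = None, float("inf")
    for perm in permutations(range(len(detections)), len(predicted)):
        cost = sum(1.0 - iou(predicted[t], detections[d])
                   for t, d in enumerate(perm))
        if cost < best_cost:
            best, best_cost = perm, cost
    return list(best)

# Two trackers with motion-predicted boxes, two detections in shuffled order.
predicted = [(0, 0, 10, 10), (20, 0, 30, 10)]
detections = [(21, 0, 31, 10), (1, 0, 11, 10)]
assignment = associate(predicted, detections)  # tracker index -> detection index
```

Because the matching depends only on where the predicted and detected boxes fall, anything that shifts those boxes relative to each other can change which pairing has the lowest total cost.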

Figure 2: Standard tracking-by-detection pipeline, from detection to association and continuous object tracking.

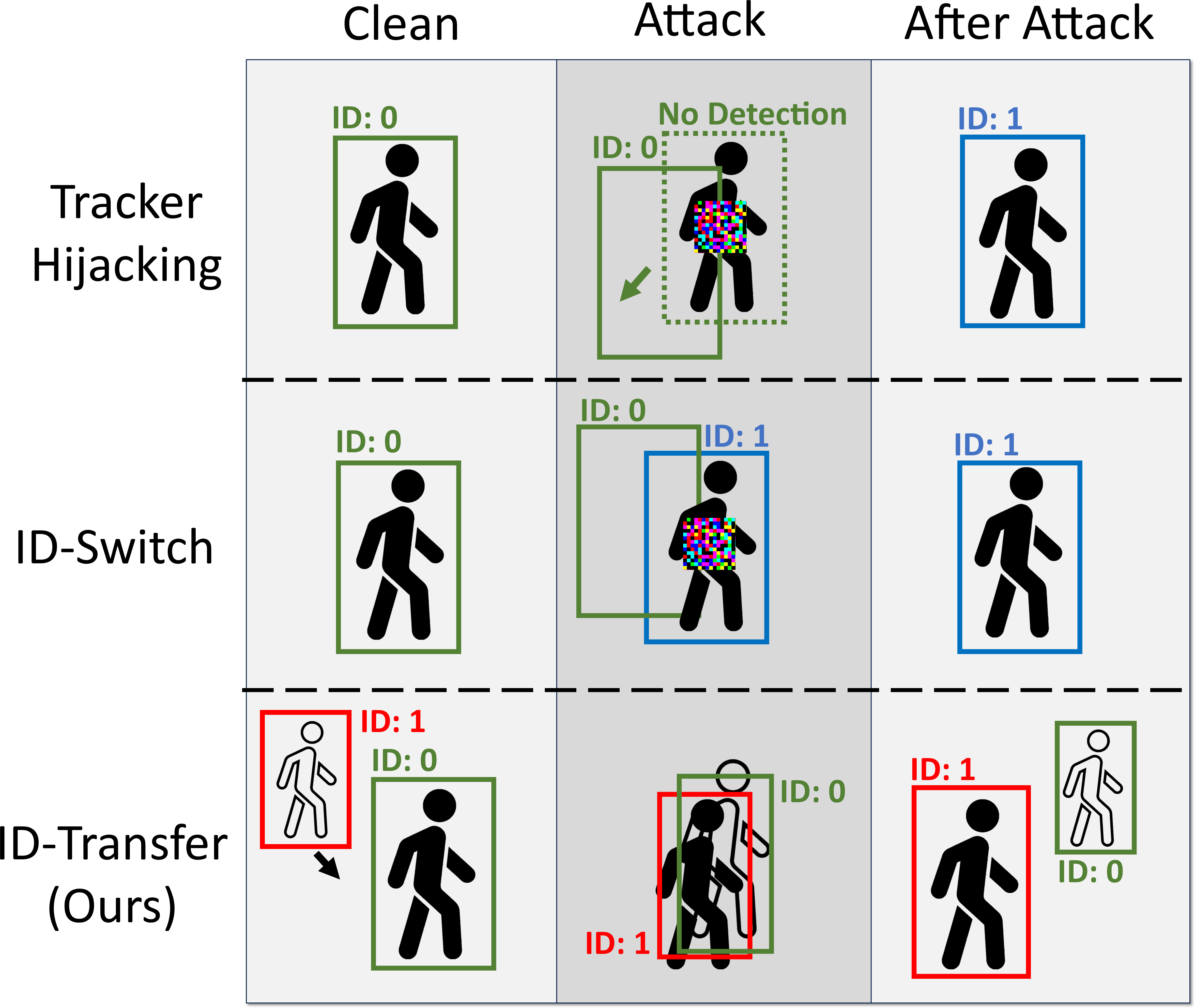

Prior attacks on MOT have primarily focused on digital perturbations to the OD module, requiring strong adversary capabilities (white-box knowledge, access to video frames, etc.) and producing non-robust, offline-only attacks. In contrast, this work presents a method that bypasses digital perturbation entirely, instead optimizing the attacker’s physical trajectory to induce association errors via the predicted object motion.

Figure 3: Comparison: prior attacks manipulate OD modules in the digital space; ID-Transfer attacks operate via adversarial physical trajectories.

The threat model assumes the attacker can observe the MOT sensor layout and target trajectory and control its own movement within reasonable physical constraints, but cannot affect other objects.

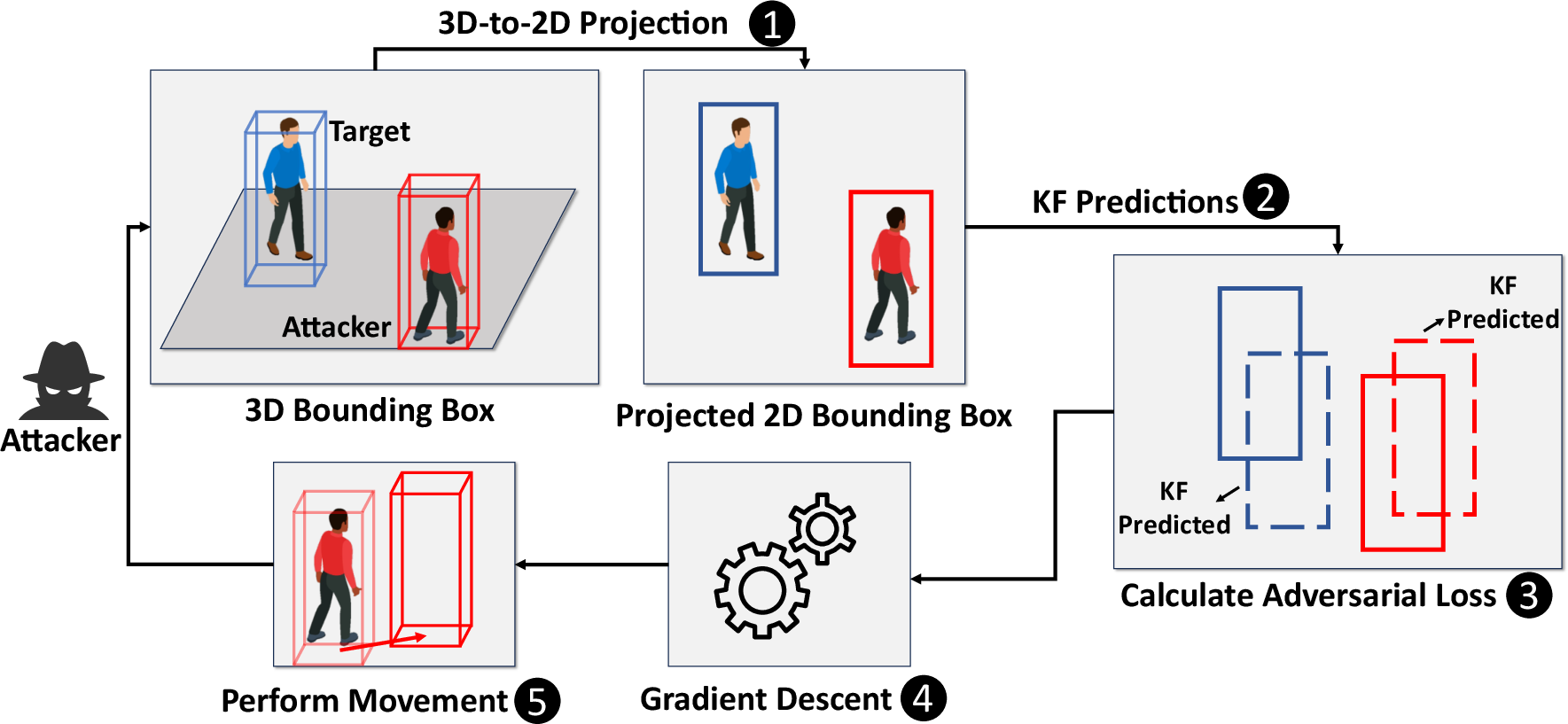

Attack Methodology

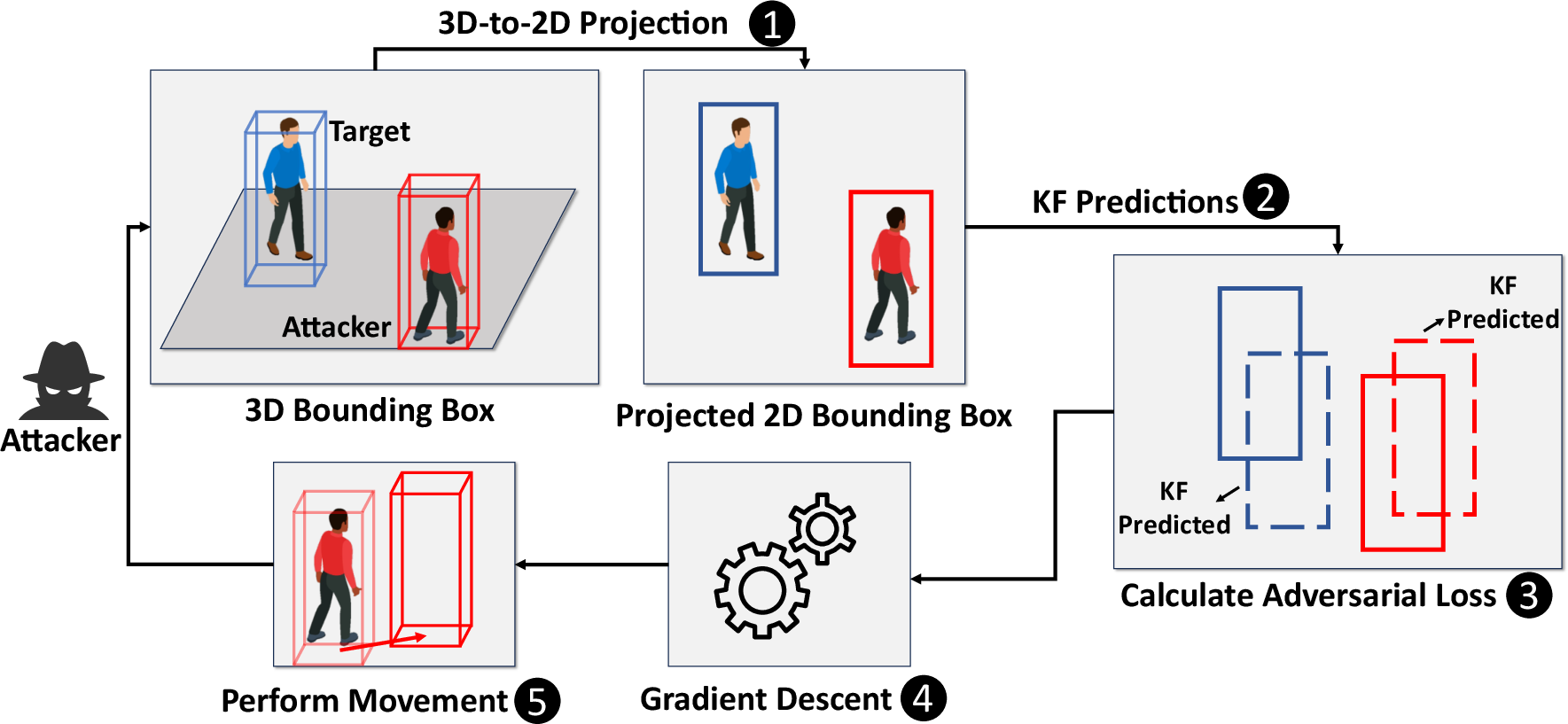

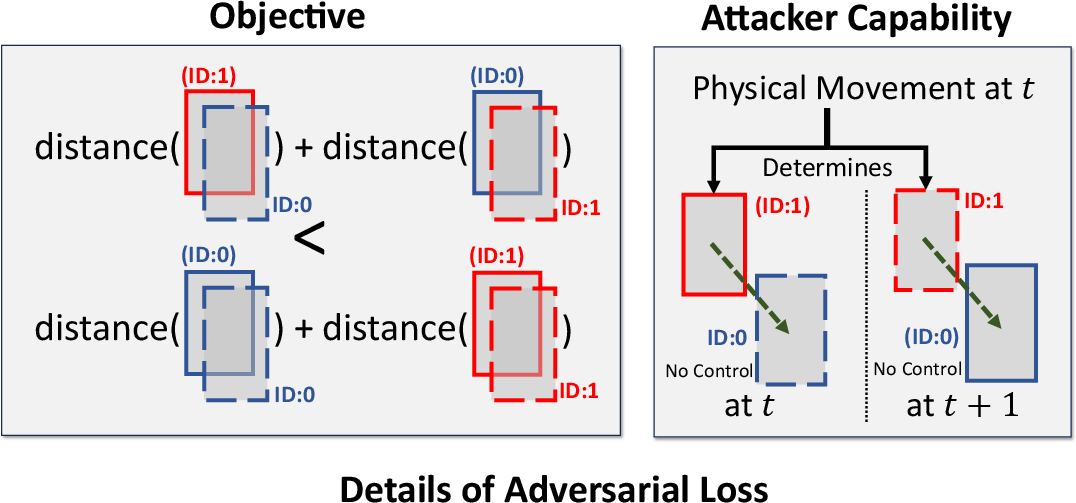

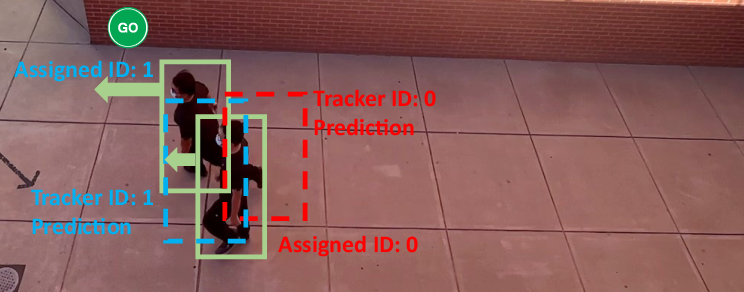

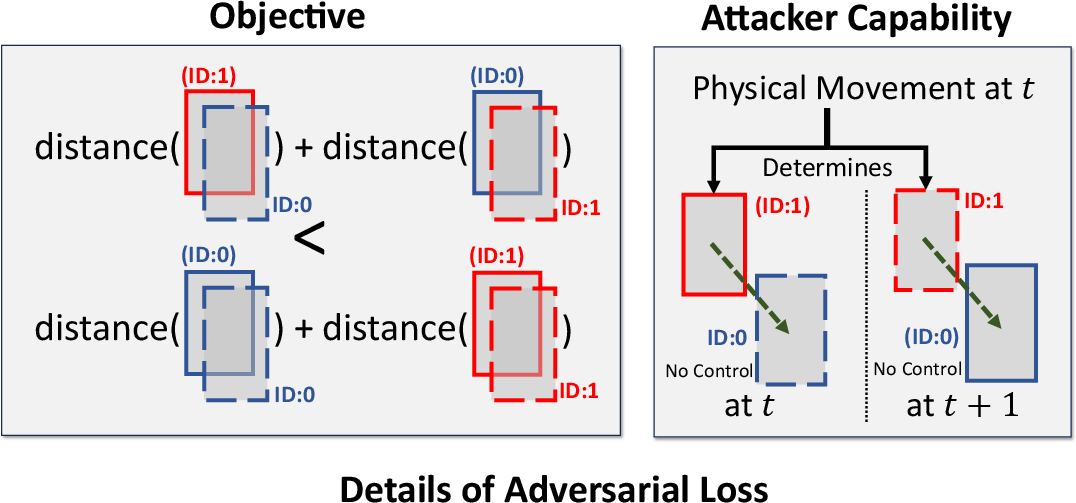

The core insight is that motion-only association in MOT systems, especially those utilizing Kalman Filters, renders them susceptible to adversarial maneuvers that manipulate matching costs in the association phase. The attack is formalized by minimizing the sum of association distances for swapped ID assignments, that is, making the total cost of assigning the attacker to the target’s tracker (and vice versa) lower than the total cost of the correct assignment.
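
One way to write this swap condition is sketched below. The notation is assumed for illustration and is not taken from the paper: $D_A$ and $D_T$ are the detected boxes of the attacker and target, $\hat{B}_A$ and $\hat{B}_T$ are the boxes predicted by their respective trackers, and $c(\cdot,\cdot)$ is the association distance (for example, one minus an IoU-style overlap).

```latex
\underbrace{c(D_A, \hat{B}_T) + c(D_T, \hat{B}_A)}_{\text{swapped assignment}}
\;<\;
\underbrace{c(D_A, \hat{B}_A) + c(D_T, \hat{B}_T)}_{\text{correct assignment}}
```

When this inequality holds for a two-object matching, a minimum-cost bipartite matcher prefers the swapped pairing, which is the ID-Transfer outcome.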

A gradient-descent optimization finds the attacker’s movement at each frame, subject to a physical displacement constraint, using a loss function based on d-IoU (differentiable IoU).

Figure 4: Attack pipeline: attacker iteratively selects physical locations via adversarial loss minimization to induce ID-Transfer.

The loss function links the attacker’s detected box to the target’s predicted box, and the attacker’s own predicted box to the target’s actual detection, producing a trajectory that maximizes ID assignment confusion.
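
The two-term loss and the displacement constraint can be sketched as follows. This is a simplified illustration, not the paper's implementation: it uses plain IoU instead of d-IoU, replaces gradient descent with a small grid search over allowed displacements, and holds the attacker's predicted box fixed within the frame. The names `adversarial_loss`, `best_step`, `max_step`, and `grid` are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0

def shift(box, dx, dy):
    """Translate a box by (dx, dy)."""
    return (box[0] + dx, box[1] + dy, box[2] + dx, box[3] + dy)

def adversarial_loss(att_det, att_pred, tgt_det, tgt_pred):
    """Low when the attacker's detection overlaps the target's prediction
    and the attacker's prediction overlaps the target's detection."""
    return (1.0 - iou(att_det, tgt_pred)) + (1.0 - iou(att_pred, tgt_det))

def best_step(att_box, att_pred, tgt_det, tgt_pred, max_step=2.0, grid=4):
    """Pick the displacement with |dx|, |dy| <= max_step that minimizes the loss.

    Grid search stands in for gradient descent here. Only the first loss term
    changes, because att_pred is treated as fixed for the current frame.
    """
    offsets = [-max_step + 2.0 * max_step * i / grid for i in range(grid + 1)]
    candidates = [(dx, dy) for dx in offsets for dy in offsets]
    return min(candidates,
               key=lambda d: adversarial_loss(shift(att_box, *d),
                                              att_pred, tgt_det, tgt_pred))

att_box, att_pred = (0, 0, 10, 10), (1, 0, 11, 10)
tgt_det, tgt_pred = (6, 0, 16, 10), (5, 0, 15, 10)
step = best_step(att_box, att_pred, tgt_det, tgt_pred)
before = adversarial_loss(att_box, att_pred, tgt_det, tgt_pred)
after = adversarial_loss(shift(att_box, *step), att_pred, tgt_det, tgt_pred)
```

A fuller version would also roll the motion model forward so that each move changes the attacker's future predicted boxes, which is what lets the second loss term be optimized over several frames.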

Figure 5: Adversarial loss: attacker’s movement simultaneously aligns its detected/predicted bounding boxes with those of the target.

Universal Adversarial Maneuvers

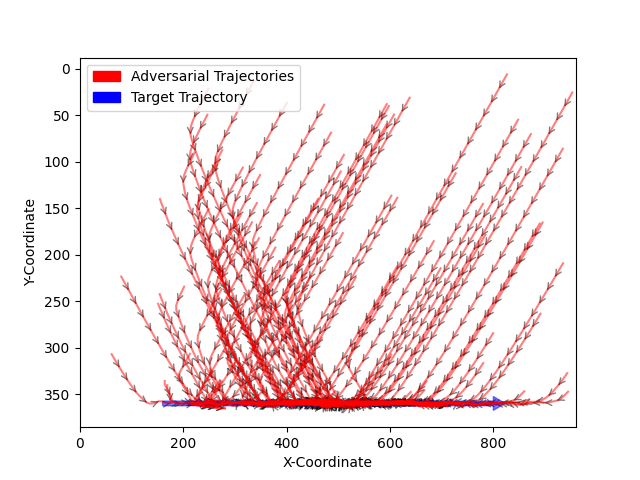

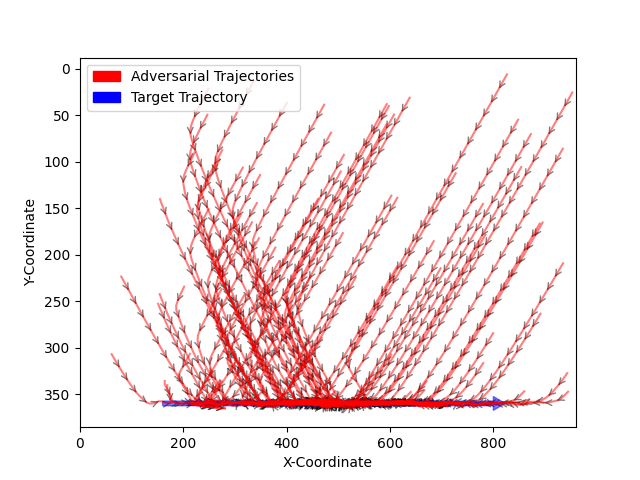

To demonstrate real-world feasibility for human adversaries lacking precise control, the work abstracts optimized physical trajectories into two universal adversarial maneuvers (UAMs):

- Go-and-Stop: The attacker starts behind the target, accelerates to approach it, then abruptly decelerates once close, causing the Kalman filter prediction to overshoot and the IDs to swap.

- Stop-and-Go: The attacker starts ahead of the target, moves towards the target’s future path, then accelerates as the target passes, again inducing a reversal of the ID assignment.

These heuristics were validated both in simulation and physical trials.
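
The overshoot behind Go-and-Stop can be reproduced with a toy one-dimensional constant-velocity Kalman filter. This is a minimal sketch under assumed noise settings (`q`, `r`, and the initial covariance are illustrative values, not parameters of any specific tracker), and the helper names `kf_predict` and `kf_update` are hypothetical.

```python
def kf_predict(x, v, P, q=0.01):
    """Constant-velocity prediction: P' = F P F^T + Q with F = [[1, 1], [0, 1]]."""
    a, b, c, d = P[0][0], P[0][1], P[1][0], P[1][1]
    return x + v, v, [[a + b + c + d + q, b + d], [c + d, d + q]]

def kf_update(x, v, P, z, r=1.0):
    """Position-only measurement update (H = [1, 0])."""
    s = P[0][0] + r                      # innovation variance
    k0, k1 = P[0][0] / s, P[1][0] / s    # Kalman gain
    y = z - x                            # innovation
    x, v = x + k0 * y, v + k1 * y
    P = [[(1 - k0) * P[0][0], (1 - k0) * P[0][1]],
         [P[1][0] - k1 * P[0][0], P[1][1] - k1 * P[0][1]]]
    return x, v, P

# The attacker moves one unit per frame for ten frames ("Go").
x, v, P = 0.0, 0.0, [[1.0, 0.0], [0.0, 10.0]]
for z in range(1, 11):
    x, v, P = kf_predict(x, v, P)
    x, v, P = kf_update(x, v, P, float(z))

# The attacker then stops abruptly ("Stop") and stays at position 10.
predicted_next, _, _ = kf_predict(x, v, P)
actual_next = 10.0
overshoot = predicted_next - actual_next  # positive: prediction runs ahead
```

The filter has learned a velocity close to one unit per frame, so its next predicted box lands roughly one step ahead of where the attacker actually stopped. If the target occupies that region, the attacker's tracker prediction overlaps the target's detection.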

Figure 6: Example of Go-and-Stop UAM when the attacker starts behind the target.

Figure 7: Visualization of adversarial trajectories—bounding box centers showing common patterns for successful attacks.

Experimental Results

Simulation (CARLA)

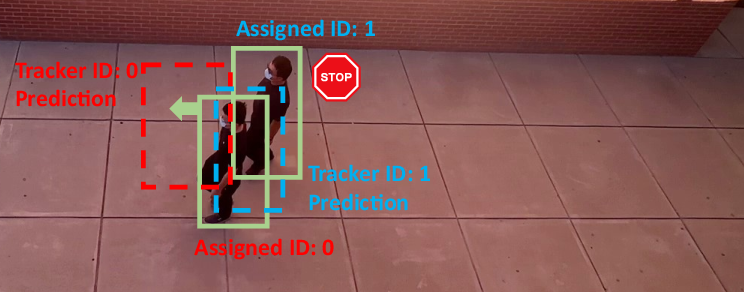

White-box attacks against SORT achieved a 100% success rate. When transferred to ByteTrack, OC-SORT, Deep OC-SORT, BoT-SORT, and StrongSORT, the attacks yielded success rates between 74% and 93% for pedestrian surveillance, with similarly high rates for AD scenarios. For UAMs executed by human adversaries, attack success rates reached 45% in real-world settings.

Real-World Experiments

Human subjects performed UAMs in pedestrian surveillance and AD scenarios (with both similar and distinct attire for attacker and target), achieving substantial attack success rates on most MOT models, especially those lacking robust appearance (ReID) integration.

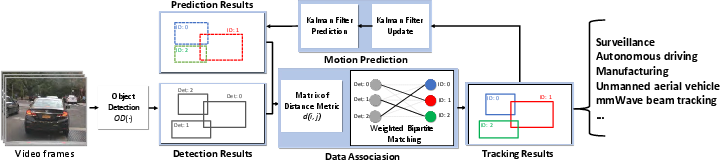

Figure 8: Real-world vehicle surveillance: examples of successful ID-Transfer attacks.

Figure 9: MOT frame before attack; IDs correctly assigned prior to adversarial trajectory.

Impact and Implications

The transferability to black-box MOT models is attributed to shared design principles (linear motion prediction and bipartite matching), suggesting systemic vulnerability across tracking-by-detection MOTs. Appearance-based association (ReID) can mitigate, but does not eliminate, susceptibility—especially when bounding boxes overlap or appearance features are ambiguous.
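
The partial protection offered by appearance features can be illustrated with a fused association cost. The sketch below assumes a simple weighted sum of a motion cost and a cosine distance between appearance embeddings; the weighting, the two-dimensional embeddings, and the motion cost values are made up for illustration and do not reproduce any particular tracker.

```python
import math

def cosine_distance(u, v):
    """1 - cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)

def fused_cost(motion_cost, emb_track, emb_det, weight=0.5):
    """Weighted sum of motion cost and appearance distance (assumed form)."""
    return weight * motion_cost + (1.0 - weight) * cosine_distance(emb_track, emb_det)

# Assume the adversarial trajectory made the swapped pairing cheaper in motion terms.
MOTION_SWAPPED, MOTION_CORRECT = 0.3, 0.5

def pairing_costs(emb_attacker, emb_target):
    """Per-pair fused cost of the swapped and the correct pairing."""
    swapped = fused_cost(MOTION_SWAPPED, emb_attacker, emb_target)
    correct = fused_cost(MOTION_CORRECT, emb_attacker, emb_attacker)
    return swapped, correct

# Distinct appearance: embeddings are orthogonal, so ReID outweighs motion.
swapped_distinct, correct_distinct = pairing_costs((1.0, 0.0), (0.0, 1.0))

# Similar appearance: embeddings nearly coincide, so motion decides again.
swapped_similar, correct_similar = pairing_costs((1.0, 0.10), (1.0, 0.12))
```

With distinct appearance the correct pairing stays cheaper, while with near-identical embeddings the swapped pairing wins, which matches the observation that ReID mitigates but does not eliminate the attack.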

These findings have significant implications:

- Safety-Critical Systems: AD trajectory prediction can be subverted without direct OD attacks, risking improper vehicle planning and control decisions.

- Surveillance: An attacker can evade identification or incriminate benign targets through physical maneuvers.

- MOT Robustness: The reliance on linear motion prediction and insufficiently adaptive association exposes MOT systems to attacks that are physically realizable, even by non-expert adversaries.

Countermeasures and Future Work

Potential defenses include:

- More expressive, adaptive motion prediction models (Extended Kalman Filter, Particle Filter).

- Robust appearance features with improved update mechanisms to minimize cross-contamination.

- Multi-modal association measures combining motion, appearance, and spatial-temporal context.

- Anomaly detection on motion patterns, flagging excessive non-linearity.
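
As an illustration of the last defense in the list, the sketch below flags tracks whose bounding-box centers show an abrupt change in velocity. It is a hypothetical heuristic, not a defense evaluated in the paper: the second-difference measure and the `threshold` value are assumptions, and legitimate hard braking would also trigger it.

```python
def max_acceleration(centers):
    """Largest second-difference magnitude over a sequence of (x, y) centers."""
    worst = 0.0
    for p0, p1, p2 in zip(centers, centers[1:], centers[2:]):
        ax = p2[0] - 2 * p1[0] + p0[0]
        ay = p2[1] - 2 * p1[1] + p0[1]
        worst = max(worst, (ax * ax + ay * ay) ** 0.5)
    return worst

def is_suspicious(centers, threshold=1.5):
    """Flag a track whose motion departs sharply from a straight, steady path."""
    return max_acceleration(centers) > threshold

# Steady walker: one unit per frame along x.
steady = [(float(i), 0.0) for i in range(6)]

# Go-and-Stop pattern: two units per frame, then an abrupt halt.
go_and_stop = [(0.0, 0.0), (2.0, 0.0), (4.0, 0.0), (6.0, 0.0), (6.0, 0.0), (6.0, 0.0)]

steady_flag = is_suspicious(steady)
attack_flag = is_suspicious(go_and_stop)
```

A deployed detector would likely need a threshold scaled to object class, frame rate, and camera geometry, and would treat a flag as a reason to defer or re-verify the association rather than as proof of an attack.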

Further research should aim to realize physical white-box attacks in real systems, evaluate the impact of execution errors, and design comprehensive MOT robustness benchmarks against physical attacks.

Conclusion

This paper presents the first physical, online ID-Transfer attack against MOT systems, fundamentally exploiting the association phase rather than OD. High attack success rates in simulation and physical trials underscore the under-recognized weaknesses of tracking-by-detection MOTs. The demonstrated universal adversarial maneuvers show that even human agents can effectively induce severe tracking errors, necessitating improved defense mechanisms in MOT systems for safety-critical applications.