UTrice: Unifying Primitives in Differentiable Ray Tracing and Rasterization via Triangles for Particle-Based 3D Scenes

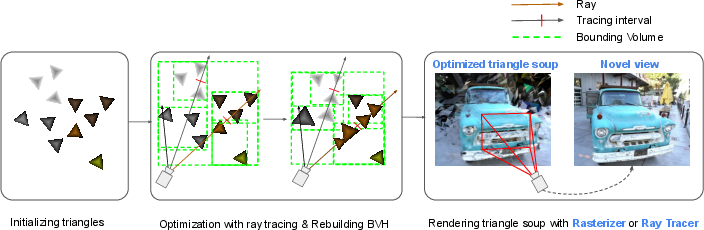

Abstract: Ray tracing 3D Gaussian particles enables realistic effects such as depth of field, refractions, and flexible camera modeling for novel-view synthesis. However, existing methods trace Gaussians through proxy geometry, which requires constructing complex intermediate meshes and performing costly intersection tests. This limitation arises because Gaussian-based particles are not well suited as unified primitives for both ray tracing and rasterization. In this work, we propose a differentiable triangle-based ray tracing pipeline that directly treats triangles as rendering primitives without relying on any proxy geometry. Our results show that the proposed method achieves significantly higher rendering quality than existing ray tracing approaches while maintaining real-time rendering performance. Moreover, our pipeline can directly render triangles optimized by the rasterization-based method Triangle Splatting, thus unifying the primitives used in novel-view synthesis.


Explain it Like I'm 14

UTrice: Using Triangles to Make Real-Time 3D Rendering Better — Explained Simply

Overview

This paper is about making 3D scenes look realistic and render quickly on computers. The authors introduce a method called UTrice that uses tiny triangles (instead of blurry “blobs” called Gaussians) to build and render 3D scenes. Their idea makes it easier to get high-quality visuals and realistic camera effects (like depth of field) while still running fast.

Goals and Questions

The paper tries to answer three simple questions:

- Can we use triangles as the basic building blocks for both main ways of rendering—ray tracing and rasterization—so everything works together smoothly?

- Can triangles help create sharper, more detailed images than the usual “Gaussian splats” used in many recent methods?

- Can this be done fast enough to be useful in real-time or near real-time applications (like games or VR)?

How the Method Works (with analogies)

Think of rendering as shining millions of tiny flashlight beams (rays) into a 3D scene to figure out what color each pixel should be.

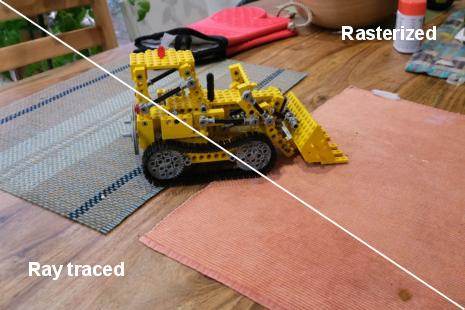

- Ray tracing: Like shining those flashlight beams and seeing which objects the beams hit first. It’s great for realistic effects, like soft blur (depth of field), reflections, and lighting.

- Rasterization: Like flattening 3D triangles onto the screen quickly. It’s super fast and widely used in graphics cards.

Most recent methods use “Gaussian splats,” which are fuzzy blobs in 3D that look smooth but can be tricky to ray trace. To trace Gaussians, earlier work wrapped each blob in a fake shape (like putting a cardboard box around a balloon) to make ray intersections easier. This adds extra work and memory.

UTrice does something simpler:

- It uses triangles directly as the primitive (the basic shape). Triangles are the most common shape in computer graphics and are easy to handle for both ray tracing and rasterization.

- The triangles are initialized from 3D points captured from real photos (using a technique called Structure-from-Motion, which builds a 3D point cloud from many pictures).

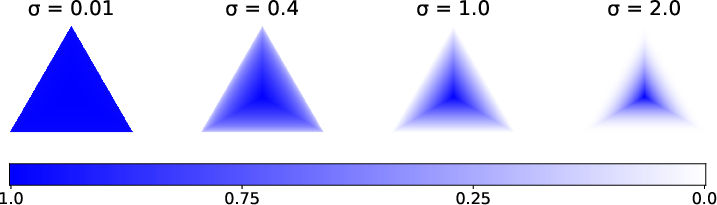

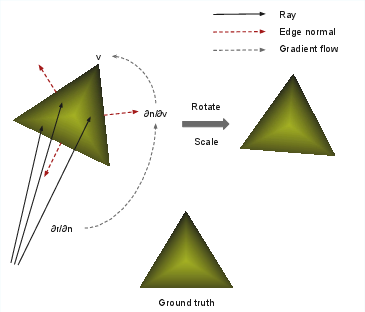

- A special “window function” gives each triangle a smooth, controllable response when a ray hits it. This lets the triangle be “differentiable,” meaning the computer can slightly adjust the triangle’s corners during training to make the final image match real photos better. Think of it like an adjustable sticker: the more you tweak its corners, the better it fits the scene.

- The method uses a smart search tree (a BVH, like a folder-tree that organizes shapes) to quickly find which triangles a ray might hit. Because they use triangles directly, they skip building complicated “proxy geometry” around blobs.

- They blend colors in front-to-back order (like stacking tinted glass layers), and they compute losses (scores) to guide the triangle adjustments during training. This uses PyTorch and custom GPU code to make everything fast.
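To make the "window function" idea a bit more concrete, here is a minimal NumPy sketch that works in 2D coordinates on the triangle's own plane. It assumes the form commonly attributed to Triangle Splatting: take the largest signed distance to the three edges, divide it by the same quantity at the incenter, clamp with ReLU, and raise it to a smoothness power. The exact UTrice formulation (which is defined in world space) may differ, and the names `edge_lines`, `incenter`, `window`, and `sigma` are illustrative, not taken from the paper's code.

```python
import numpy as np

def edge_lines(tri):
    """Outward unit normals n_i and offsets d_i for a counter-clockwise triangle
    (3x2 array), so that L_i(p) = n_i . p + d_i is negative inside the triangle
    and zero on edge i."""
    tri = np.asarray(tri, dtype=float)
    edges = np.roll(tri, -1, axis=0) - tri           # edge i goes from vertex i to i+1
    n = np.stack([edges[:, 1], -edges[:, 0]], axis=1)  # rotate by -90 degrees: outward for CCW
    n /= np.linalg.norm(n, axis=1, keepdims=True)
    d = -np.sum(n * tri, axis=1)
    return n, d

def incenter(tri):
    """Point equidistant from all three edges (weights are opposite side lengths)."""
    tri = np.asarray(tri, dtype=float)
    a = np.linalg.norm(tri[1] - tri[2])
    b = np.linalg.norm(tri[2] - tri[0])
    c = np.linalg.norm(tri[0] - tri[1])
    return (a * tri[0] + b * tri[1] + c * tri[2]) / (a + b + c)

def window(p, tri, sigma=1.0):
    """Assumed window response: 1 at the incenter, 0 on the edges and outside."""
    n, d = edge_lines(tri)
    phi_p = np.max(n @ np.asarray(p, dtype=float) + d)  # max over edges (the non-smooth part)
    phi_s = np.max(n @ incenter(tri) + d)               # equals minus the inradius
    return max(0.0, phi_p / phi_s) ** sigma             # ReLU, then smoothness power

tri = [(0, 0), (4, 0), (0, 3)]
print(window((1, 1), tri, sigma=2.0))    # at the incenter
print(window((1, 0.5), tri, sigma=2.0))  # between the incenter and an edge
print(window((5, 5), tri, sigma=2.0))    # outside the triangle
```

In this sketch a larger `sigma` makes the response fall off faster away from the incenter, while a `sigma` close to zero makes the triangle look nearly uniform inside its edges.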

Importantly, UTrice works with existing triangle-based methods (like Triangle Splatting) and modern ray tracing engines (like OptiX), so it fits into current graphics systems easily.
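The "stacking tinted glass layers" picture of front-to-back blending can be sketched in a few lines of plain Python. This is only an illustration of standard alpha compositing with an early stop once almost no light gets through; the function name `composite_front_to_back` and the threshold value `1e-3` are assumptions, not values from the paper.

```python
def composite_front_to_back(hits, min_transmittance=1e-3):
    """hits: iterable of (distance, alpha, (r, g, b)).
    Returns the blended color and the remaining transmittance."""
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light still passing through
    for _, alpha, rgb in sorted(hits, key=lambda h: h[0]):  # nearest first
        weight = transmittance * alpha
        color = [c + weight * x for c, x in zip(color, rgb)]
        transmittance *= 1.0 - alpha
        if transmittance < min_transmittance:  # hypothetical stopping threshold
            break
    return color, transmittance

# A half-transparent red layer in front of a half-transparent green layer.
hits = [(2.0, 0.5, (0.0, 1.0, 0.0)), (1.0, 0.5, (1.0, 0.0, 0.0))]
print(composite_front_to_back(hits))
```

Each layer contributes its color scaled by its own opacity and by how much light survived the layers in front of it, which is why the nearer red layer dominates here.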

Main Findings and Why They Matter

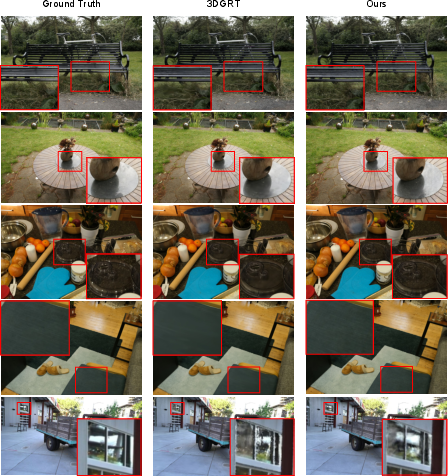

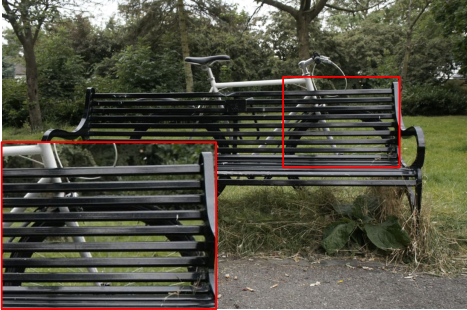

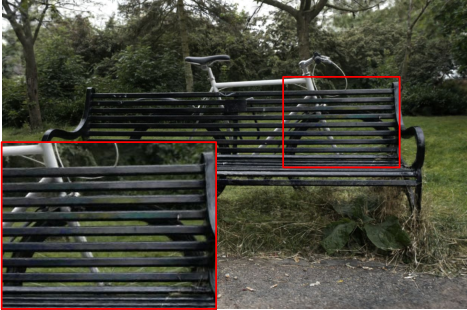

- Sharper, more realistic details: Compared to Gaussian-based ray tracing (3DGRT), UTrice preserves fine textures better and avoids overly smooth or noisy regions. It improves a key perceptual quality score (LPIPS) by around 15%, meaning images look better to people.

- Unified pipeline: The same triangles can be used for both rasterization (fast, widely used) and ray tracing (realistic effects). This avoids converting between different shape types and makes the system simpler.

- Real-time-ish speed: While UTrice is a bit slower than the optimized Gaussian tracer in some tests, it still runs near real-time and can be sped up with more engineering.

- Works with fancy camera effects: Because it traces rays directly, it can naturally handle depth of field, non-standard cameras (like fisheye or LiDAR), and advanced lighting.
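Depth of field falls out of ray tracing because the renderer only needs rays, and a blurry-lens camera is just a different way of generating them. The sketch below uses the standard thin-lens model as an illustration; the summary does not describe how UTrice sets up its depth-of-field rays, so the function name `thin_lens_ray`, its parameters, and the camera convention (camera at the origin looking along +z) are assumptions.

```python
import numpy as np

def thin_lens_ray(pinhole_dir, aperture_radius, focus_distance, rng):
    """Turn a pinhole ray direction into a thin-lens ray (origin, direction).
    Camera space: camera at the origin looking along +z."""
    d = np.asarray(pinhole_dir, dtype=float)
    d = d / np.linalg.norm(d)
    # Point where the pinhole ray crosses the plane of perfect focus z = focus_distance.
    focus_point = d * (focus_distance / d[2])
    # Uniformly sample a point on the lens disk.
    r = aperture_radius * np.sqrt(rng.uniform())
    phi = 2.0 * np.pi * rng.uniform()
    origin = np.array([r * np.cos(phi), r * np.sin(phi), 0.0])
    direction = focus_point - origin
    return origin, direction / np.linalg.norm(direction)

rng = np.random.default_rng(0)
origin, direction = thin_lens_ray([0.2, -0.1, 1.0], aperture_radius=0.3,
                                  focus_distance=5.0, rng=rng)
print(origin, direction)
```

All rays generated for one pixel meet at the focus plane, so objects there stay sharp, while objects nearer or farther are hit at slightly different places and average into blur. Setting `aperture_radius` to zero recovers the ordinary pinhole camera.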

Impact and What’s Next

This research shows that using triangles as the main building blocks can simplify 3D rendering and improve quality, especially for realistic effects and novel view synthesis (creating new views from a few photos). It could help:

- Game engines and VR systems render more lifelike scenes without complex conversions.

- Visual effects teams get both speed and realism in one pipeline.

- Research on non-standard cameras (like LiDAR) by tracing rays directly from those sensors.

Limitations and future work:

- The method can create lots of small, separate triangles (“triangle soup”), which increases memory and can slow training.

- Training currently has some inefficiencies, and extremely skinny or weird triangles can cause issues.

- The authors plan to reduce redundancy, make training faster, and handle extreme triangle shapes more robustly.

In short, UTrice brings triangles back to center stage, making high-quality, realistic 3D rendering simpler and more unified across different techniques.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise list of unresolved issues and opportunities for future work identified in the paper.

- Physical light transport and materials: the pipeline performs front-to-back alpha blending of k-closest intersections with per-triangle SH color; it does not support physically based BRDFs, shadow rays, secondary bounces, or global illumination. How to extend UTrice to full Monte Carlo path tracing with materials, importance sampling, and multiple scattering remains open.

- Shading and re-lighting: using degree-3 spherical harmonics to encode per-triangle color precludes re-lighting and view-/light-dependent effects (specularities, anisotropy). It is unclear how to parameterize and optimize physically meaningful materials (e.g., microfacet BRDFs) and normals per triangle while retaining stability and real-time performance.

- Differentiability of the window function: the triangle window function uses max and ReLU, which are non-smooth and introduce kinks at edges and outside the triangle. The paper does not analyze gradient bias/variance, stability at boundaries, or the effect of the smoothness factor on convergence. A principled schedule for the smoothness factor, or smoother alternatives (e.g., softmax/log-sum-exp), is not explored.

- Robustness to degenerate/extreme triangles: the method currently “lacks a mechanism for handling extreme triangles,” and training can produce NaNs without view-based pruning. What regularization, reparameterization, or topology operations (edge collapse/swap, aspect-ratio constraints) can robustly prevent slivers and degeneracies?

- Primitive count and memory overhead: the “triangle soup” has no connectivity, causing redundant vertex storage and higher VRAM usage. There is no mechanism for vertex sharing, connectivity recovery, remeshing, decimation, or hierarchical LODs. Quantifying and reducing memory footprint and bandwidth costs is an open direction.

- BVH update strategy and profiling: while triangles avoid custom proxy geometry, the paper does not quantify BVH rebuild/refit costs or detail update frequency during training. Evaluating refit vs rebuild, TLAS/BLAS separation, incremental rebuilds, and persistent BVHs—and providing a per-iteration time breakdown—remains to be done.

- k-closest buffer and termination thresholds: the impact of k, buffer size, and transmittance thresholds on quality, bias, and performance is not ablated. Adaptive, per-ray selection of k and principled stopping criteria (with error control) are unexplored.
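For readers unfamiliar with the k-closest buffer mentioned in the last item, the following CPU-side Python sketch shows the bookkeeping such a buffer implies: keep at most `k` hits sorted by distance, inserting each new candidate with one insertion-sort pass. It illustrates the data structure only and is not OptiX code; the function name `insert_hit` and the `(distance, primitive_id)` tuple layout are assumptions.

```python
def insert_hit(buffer, k, hit):
    """Insert hit = (distance, primitive_id) into a distance-sorted list,
    keeping only the k nearest hits. Returns True if the hit was kept."""
    if len(buffer) == k and hit[0] >= buffer[-1][0]:
        return False  # farther than everything already stored
    i = len(buffer)
    buffer.append(hit)
    # Shift farther hits one slot back until the new hit is in place.
    while i > 0 and buffer[i - 1][0] > hit[0]:
        buffer[i] = buffer[i - 1]
        i -= 1
    buffer[i] = hit
    if len(buffer) > k:
        buffer.pop()  # drop the farthest hit
    return True

buffer = []
for distance, prim in [(5.0, 10), (1.0, 11), (4.0, 12), (2.0, 13), (9.0, 14)]:
    insert_hit(buffer, 3, (distance, prim))
print(buffer)
```

The trade-off raised in the bullet is visible here: a larger `k` means more shifting work per candidate hit and more per-ray storage, while a smaller `k` means more hits are discarded and must be recovered by tracing again.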

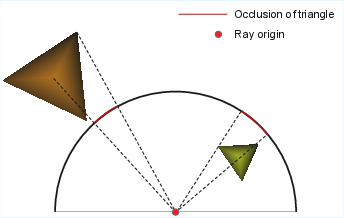

- Densification and pruning design: the proposed world-space “occlusion” metric (angle-based) replacing image-space footprint lacks theoretical justification and extensive ablation across scenes and camera models. Sensitivity to its thresholds and generalization of the criterion to non-pinhole cameras are not evaluated.

- Compatibility with rasterization-based Triangle Splatting: the claim of compatibility via invariance under linear transformations is qualitatively shown but not rigorously analyzed, especially since perspective projection is non-linear. Conditions under which compatibility breaks (e.g., severe lens distortion, extreme FOV) are not studied.

- Camera and sensor models: although the tracer accepts arbitrary rays and shows depth-of-field, no experiments validate non-pinhole models (fisheye, panoramic, rolling shutter) or active sensors (LiDAR with time-of-flight and intensity). How gradients behave and whether the occlusion/footprint metrics still hold under these models is unknown.

- Anti-aliasing and filtering: there is no analysis of aliasing for sub-pixel or minified triangles, nor exploration of analytic filtering (e.g., EWA) or stochastic supersampling to control shimmer and moiré in animation and novel views.

- Geometry accuracy and supervision: the paper reports image-space metrics (PSNR/SSIM/LPIPS) but no geometry metrics (depth/normal/mesh quality) or comparisons against ground-truth geometry. It remains unclear how accurately UTrice recovers scene structure and surface normals.

- Initialization sensitivity: triangles are initialized from SfM points as in 3DTS, but the sensitivity to initialization quality, point density, and noise is not studied. Whether the method can recover from poor or sparse initializations remains open.

- Dynamic/4D scenes: applicability to deforming or dynamic scenes (time-varying triangles, learnable motion) is untested. How to maintain BVH coherence, handle topology changes, and ensure stable gradients over time is unresolved.

- Scaling to very large scenes: evaluation covers a limited subset of Mip-NeRF360 and Tanks and Temples at downsampled resolutions. Out-of-core training/inference, streaming, chunking, and LOD strategies for city-scale scenes are not addressed.

- Fairness and scope of comparisons: comparisons to baselines mix reproduced and reported numbers with different downsampling factors and “quality vs performance” settings. Matched training budgets, uniform preprocessing, and user studies for perceptual quality are missing.

- Performance portability: results are on a single RTX 6000 Ada GPU; there is no evaluation on other GPUs, APIs (DXR/Vulkan), or mobile/embedded devices, nor analysis of performance portability and memory limits.

- Parameterization of color basis: the choice of SH degree (fixed at 3) is not ablated. The trade-offs between SH order, storage cost, high-frequency reflectance (e.g., glints), and stability require systematic study.

- Early termination bias: front-to-back alpha compositing with transmittance thresholds introduces potential bias. Quantifying the induced error and devising error-controlled termination schemes are open topics.

- Reproducibility details: key hyperparameters (e.g., schedules, prune/merge thresholds), BVH update frequency, and implementation choices are either deferred to supplementary material or not fully specified; a complete recipe for reproduction and sensitivity analysis is missing.
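As a starting point for the early-termination item above, the error introduced by stopping at a transmittance threshold can be bounded with a standard telescoping argument. The notation ($c_i$, $\alpha_i$, $T_i$, $\varepsilon$, $c_{\max}$) is introduced here for illustration and is not taken from the paper; the bound covers only threshold-based termination, not the effect of a finite k.

```latex
% Front-to-back compositing over N ordered hits:
C = \sum_{i=1}^{N} c_i \,\alpha_i\, T_i ,
\qquad
T_i = \prod_{j<i} (1 - \alpha_j), \quad T_1 = 1 .

% Each weight telescopes, since \alpha_i T_i = T_i - T_{i+1}:
\sum_{i=m+1}^{N} \alpha_i T_i = T_{m+1} - T_{N+1} \le T_{m+1} .

% Truncating after hit m (partial sum C_m) once T_{m+1} < \varepsilon gives
\lVert C - C_m \rVert
\le c_{\max} \sum_{i=m+1}^{N} \alpha_i T_i
\le c_{\max}\, T_{m+1}
< c_{\max}\, \varepsilon ,
\qquad
c_{\max} = \max_i \lVert c_i \rVert .
```

Under these assumptions the per-pixel error is at most the threshold times the largest color magnitude, so the bias can be controlled directly by the choice of $\varepsilon$.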

Practical Applications

Practical Applications of UTrice

UTrice introduces a differentiable, triangle-based ray tracing pipeline for particle-based 3D scenes that unifies rasterization and ray tracing without proxy geometry. It maintains near real-time performance, improves perceptual quality over Gaussian-based ray tracing (e.g., 3DGRT), and directly supports triangles optimized by rasterization (e.g., 3DTS), enabling realistic effects (depth of field, environment lighting, non‑pinhole camera models) via standard hardware ray tracing APIs (OptiX/DXR). Below are actionable applications, categorized by immediacy, with sectors, potential products/workflows, and feasibility notes.

Immediate Applications

The following can be deployed now with current tooling, given access to modern GPU ray tracing (e.g., NVIDIA RTX/OptiX or DXR) and multi-view scene capture.

- Real-time novel view synthesis with ray-traced effects

- Sector: software/graphics, gaming, AR/VR, film/VFX

- What: Integrate UTrice to render triangle-based radiance fields with physically based ray-traced effects (depth of field, reflections) while preserving fine details better than Gaussian ray tracing.

- Tools/workflows:

- Pipeline: SfM point cloud -> 3DTS training (rasterization) -> UTrice ray-traced rendering

- Engine integrations: OptiX/DXR backends; Unity/Unreal plugin for triangle splat radiance fields; Blender/Omniverse add-on for scene capture and rendering

- Assumptions/dependencies: Requires GPU ray tracing hardware (RTX-class or DXR-capable), sufficient VRAM for triangle “soup” (no mesh connectivity), multi-view image datasets, PyTorch/CUDA for training; current training is ~2× slower than 3DGRT and rendering is ~30% slower than 3DGRT quality mode.

- Photogrammetry and digital twins with realistic camera/lens modeling

- Sector: AEC (architecture, engineering, construction), industrial inspection, cultural heritage

- What: Replace Gaussian-based pipelines with triangle primitives for better edge sharpness and color consistency in virtual walkthroughs and asset inspections; leverage non‑pinhole camera models (panoramic, fisheye) for specialized optical setups.

- Tools/workflows: SfM capture -> triangle-based optimization -> UTrice ray-traced viewer with configurable lens models; export to CAD/BIM or twin platforms

- Assumptions/dependencies: Accurate camera calibration; adequate viewpoints for SfM; GPU ray tracing availability; memory management for large scenes.

- High-fidelity product visualization and interactive catalogues

- Sector: e-commerce, retail marketing

- What: Produce photoreal renderings with depth of field and realistic lighting from captured product scenes, avoiding detail loss typical of Gaussian kernels.

- Tools/workflows: Batch pipeline to auto-train triangle fields from product photos; web viewer backed by server-side GPU ray tracing; precomputed ray-traced assets for AR overlays

- Assumptions/dependencies: Multi-view imagery per product; GPU rendering in the pipeline; server or edge compute for interactive experiences.

- Robotics sensor re-simulation and camera prototyping

- Sector: robotics/autonomous vehicles

- What: Use UTrice’s ray-only input to model non‑pinhole cameras and LiDAR-like ray paths for re-simulation and perception testing in photorealistic scenes (compatible with prior LiDAR-RT concepts).

- Tools/workflows: Scene capture -> triangle-based radiance field -> ray-set import (LiDAR scan patterns, fisheye rigs) -> UTrice simulation harness for benchmarking perception models

- Assumptions/dependencies: Accurate scene capture; ray patterns reflecting real sensor characteristics; GPU ray tracing; triangle count/memory tuned for large environments.

- Educational tooling for differentiable rendering and ray tracing

- Sector: education/academia (graphics/CV courses)

- What: Teaching modules demonstrating differentiable ray tracing with triangle primitives, gradient flow to geometry, and unified rasterization-ray tracing optimization.

- Tools/workflows: Ready-to-use notebooks (PyTorch + OptiX), assignments replicating window function and gradient propagation; visualizations of k-closest intersection ordering

- Assumptions/dependencies: Access to GPU-equipped labs; OptiX/DXR support; simplified datasets.

- VFX/previz pipelines with unified optimization and final rendering

- Sector: film/VFX

- What: Optimize scenes quickly via rasterization (3DTS) for previz; switch to UTrice for final ray-traced looks (DOF, complex camera models) without proxy geometry conversion.

- Tools/workflows: Dailies pipeline using triangle splats; shot-level tuning of smoothness/opacity; ray-traced deliverables for lookdev

- Assumptions/dependencies: Studio RTX infrastructure; I/O tooling around triangle assets; quality controls for triangle densification/pruning.
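Several of the applications above rely on feeding the tracer rays from a non-pinhole camera. As a hedged illustration, the sketch below generates per-pixel ray directions for an equidistant fisheye lens (image radius proportional to the angle from the optical axis). The function name `equidistant_fisheye_rays`, the +z viewing convention, and the choice to fit the field of view to the shorter image side are assumptions; how UTrice ingests such rays is not specified in this summary.

```python
import numpy as np

def equidistant_fisheye_rays(width, height, fov_deg):
    """Unit ray directions of shape (height, width, 3) for an equidistant
    fisheye (r = f * theta), camera looking along +z, plus a validity mask."""
    cx, cy = (width - 1) / 2.0, (height - 1) / 2.0
    max_theta = np.radians(fov_deg) / 2.0
    f = min(cx, cy) / max_theta  # pixels per radian; FOV spans the shorter side
    u, v = np.meshgrid(np.arange(width) - cx, np.arange(height) - cy)
    theta = np.hypot(u, v) / f   # angle from the optical axis
    phi = np.arctan2(v, u)       # angle around the optical axis
    dirs = np.stack([np.sin(theta) * np.cos(phi),
                     np.sin(theta) * np.sin(phi),
                     np.cos(theta)], axis=-1)
    valid = theta <= max_theta   # pixels outside the image circle are invalid
    return dirs, valid

dirs, valid = equidistant_fisheye_rays(5, 5, fov_deg=180.0)
print(dirs[2, 2], dirs[2, 4], valid[0, 0])
```

Because the output is just an array of directions, the same downstream tracing code could in principle be reused for panoramic rigs or LiDAR scan patterns by swapping out this one function.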

Long-Term Applications

These are promising but require further research or engineering optimization (e.g., reducing primitive counts, memory, training/runtime speed, mobile support, and robust handling of “extreme” triangles).

- Mobile and edge deployment for AR/VR and on-device scanning

- Sector: AR/VR, consumer apps

- What: Real-time triangle-based ray-traced rendering on mobile SoCs with hardware ray tracing (future DXR/Vulkan RT on mobile) for in-situ visualization and scanning.

- Tools/products: Mobile SDK leveraging DXR/Vulkan RT; on-device pipeline capture -> train (lightweight) -> render; compression of triangle fields

- Assumptions/dependencies: Mobile RT hardware maturity; memory-efficient triangle representation (connectivity/instancing), faster training; energy-efficient kernels.

- City-scale digital twins and simulation for policy and urban planning

- Sector: public policy, smart cities, transportation

- What: High-fidelity, photoreal city models enabling accurate optical simulation (e.g., glare, visibility) and sensor planning (camera/LiDAR placement), with unified rendering across tools.

- Tools/workflows: Scalable capture -> distributed training; streaming tri‑splat fields; analytic modules for visibility and lighting impact

- Assumptions/dependencies: Scalable BVH for billions of primitives; streaming/LOD strategies; governance for data capture/privacy; multi-GPU/cloud rendering.

- Integrated AAA game asset pipeline with physically based triangle radiance fields

- Sector: gaming

- What: Authoring tools that transform captured environments into optimized triangle splat radiance fields, with final ray-traced effects unified across engine rendering paths.

- Tools/products: Engine plugins, automatic triangle densification/pruning pipelines, hybrid raster + ray tracing; asset QA for perceptual metrics (LPIPS-driven)

- Assumptions/dependencies: Performance parity with engine budgets; robust handling of dynamic scenes; better memory footprint and editor tooling.

- Medical visualization and surgical training with non‑pinhole optics

- Sector: healthcare

- What: Endoscopic/fisheye camera simulation for training and planning in photoreal anatomical models using triangles for sharper features and realistic lens effects.

- Tools/workflows: Procedural scene generation -> ray-set modeling of medical optics -> UTrice renderers integrated into training simulators

- Assumptions/dependencies: Regulatory constraints; validated medical datasets; specialized lens/sensor modeling; deterministic performance guarantees.

- Industrial inspection and energy asset monitoring

- Sector: energy, manufacturing

- What: Digital twin inspections with accurate optical renderings (e.g., reflective surfaces, complex lighting in plants) for remote assessment and training.

- Tools/workflows: Capture pipelines; inspection viewers with ray-traced DOF/reflections to reveal micro-defects; integration with maintenance systems

- Assumptions/dependencies: Scene capture at scale; runtime optimizations; domain-specific visualization and reporting layers.

- Standardization of triangle-based differentiable rendering in research/industry

- Sector: academia/standards

- What: Establish common formats and APIs for triangle radiance fields, unifying rasterization and ray tracing workflows (data representations, window function parameters, SH color models).

- Tools/workflows: Open specs, reference implementations, benchmark suites (SSIM/LPIPS/PSNR + runtime metrics), dataset standards

- Assumptions/dependencies: Community adoption; cross-vendor RT support; reproducible training/evaluation pipelines.

- Real-time sensor re-simulation for autonomous systems at fleet scale

- Sector: robotics/autonomous vehicles

- What: Massive scenario generation and ray-based re-simulation (cameras/LiDAR) for robustness testing and domain randomization using triangle radiance fields.

- Tools/workflows: Cloud-scale rendering farms; scenario authoring; feedback loops with perception models; automatic densification/pruning tuned to sensor regimes

- Assumptions/dependencies: Significant engineering in distributed BVH traversal; memory-efficient representations; integration with ML training pipelines.

- Photoreal telepresence and remote collaboration

- Sector: enterprise collaboration, education

- What: Streaming photoreal environments with accurate lens effects and high-frequency details for immersive teaching and collaboration.

- Tools/products: Real-time streaming of triangle fields; adaptive LOD; lightweight client renderers

- Assumptions/dependencies: Network bandwidth and latency; client hardware with RT; codec support for triangle field transmission; runtime scaling.

Each of these applications leverages UTrice’s core innovations: triangle primitives as a unified, differentiable representation across rasterization and ray tracing; compatibility with complex camera models through ray-defined inputs; reduced BVH/intersection overhead versus proxy geometry; and improved perceptual quality in high-frequency regions. Feasibility is primarily governed by hardware ray tracing availability, scene capture quality, memory/runtime optimization for triangle “soup,” and integration into existing production pipelines.

Glossary

- 3D Gaussian Splatting: A point-based differentiable rendering technique representing scenes with Gaussian particles for real-time novel-view synthesis. "3D Gaussian Splatting~\cite{Kerbl2023-nj} represents scenes with anisotropic Gaussian particles and uses a tile-based rasterizer to optimize their position and appearance, achieving state-of-the-art novel-view synthesis and real-time rendering."

- Acceleration structure (BVH): A hierarchical spatial index used by ray tracers to accelerate intersection queries. "The construction of an OptiX program can be roughly divided into two steps: building the acceleration structure (BVH) and defining the ray tracing behavior through various built-in programs."

- Alpha blending: A compositing operation combining overlapping semi-transparent primitives using per-primitive opacity. "The overlapping N particles are then composited using the following alpha blending equation to obtain the final pixel color."

- Anisotropic Gaussian: A Gaussian distribution with direction-dependent variance used as a rendering kernel. "3D Gaussian Splatting~\cite{Kerbl2023-nj} represents scenes with anisotropic Gaussian particles..."

- Any-hit program: An OptiX shader stage invoked on potential ray-primitive hits, used to control hit processing. "This is achieved by performing an insertion sort within the Any-hit program."

- Bounding Volume Hierarchy (BVH): A tree of nested bounding volumes enabling efficient ray traversal and intersection. "In ray tracing, the most fundamental acceleration data structure is the Bounding Volume Hierarchy (BVH)."

- BVH construction: The process of building the BVH from scene primitives, often a performance bottleneck. "This design substantially reduces the computational cost of BVH construction and intersection testing..."

- BVH traversal: The procedure by which rays walk through the BVH to find intersections. "store up to $k$ ray–particle intersections in front-to-back order during BVH traversal"

- Covariance matrix: A matrix encoding the shape and orientation of a Gaussian kernel in space. "$\Sigma$ is the covariance matrix."

- D-SSIM: A differentiable variant of SSIM used as a perceptual loss in optimization. "We combine the loss with the D-SSIM term \cite{Kerbl2023-nj}..."

- Densification: A training strategy that increases primitive count by subdividing or adding primitives to improve coverage. "Inspired by 2D Gaussian Splatting, they incorporated a distortion regularization term and replaced the heuristic densification strategy with an MCMC-based densification~\cite{3dgsmcmc} approach."

- Depth of field: An optical effect where parts of the scene appear sharp while others are blurred due to finite aperture. "realistic ray tracing effects such as depth of field (right)."

- Differentiable ray tracing: A ray tracing pipeline designed to support gradient backpropagation for optimization. "we propose a differentiable triangle-based ray tracing pipeline that directly treats triangles as rendering primitives..."

- Differentiable triangle: A triangle primitive with smoothly varying response enabling gradient-based optimization. "Triangle Splatting~\cite{3dts} replaces Gaussians with differentiable triangles, better preserving high-frequency details and edge sharpness."

- Environment lighting: Illumination from a surrounding environment map or global lighting field. "compute environmental lighting using Precomputed Radiance Transfer (PRT)..."

- Front-to-back traversal: An ordered processing of intersections from near to far along the ray. "Due to this front-to-back traversal design, they re-derived the gradients of the optimizable parameters."

- GLSL fragment shader: A GPU program run per-pixel in rasterization pipelines to compute color. "a small MLP is executed within a GLSL fragment shader to predict colors..."

- Icosahedron: A 20-faced polyhedron used as proxy geometry to enclose Gaussians. "They enclose each Gaussian particle with proxy geometry (an icosahedron)..."

- Incenter (triangle): The point equidistant from all edges of a triangle, center of the inscribed circle. "where is the incenter of triangle"

- Jacobian: The matrix of first-order partial derivatives describing local linearization of a transformation. "where $J$ is the Jacobian of the affine approximation of the projective transformation..."

- k-closest intersection algorithm: A method to retain the nearest k intersections along a ray for translucent compositing. "For further details on the k-closest intersection algorithm, please refer to~\cite{3dgrt}."

- k-closest semi-transparent particle-tracing algorithm: A particle tracing approach that orders and accumulates the k nearest translucent hits. "it employs a k-closest semi-transparent particle-tracing algorithm to retrieve particles that are ordered along the ray direction."

- k-element buffer: A fixed-size storage used during ray tracing to record up to k intersections. "During ray tracing, a $k$-element buffer is used to record intersection information..."

- Light Transport Equation: The integral equation describing radiance flow in a scene, solved via rendering. "It is employed to perform Monte Carlo integration of the Light Transport Equation~\cite{Kajiya86}..."

- LPIPS: A learned perceptual image patch similarity metric used to assess visual fidelity. "For quantitative evaluation, we report PSNR, SSIM~\cite{ssim}, and LPIPS~\cite{lpips}."

- Markov Chain Monte Carlo (MCMC): A sampling-based optimization method used here for adaptive primitive densification. "replaced the heuristic densification strategy with an MCMC-based densification~\cite{3dgsmcmc} approach."

- Monte Carlo integration: A stochastic numerical method for approximating integrals, commonly used in rendering. "It is employed to perform Monte Carlo integration of the Light Transport Equation..."

- Neural Radiance Fields (NeRF): An implicit neural representation modeling scene radiance as a function of position and view direction. "Neural Radiance Fields (NeRF)~\cite{nerf} utilize an implicit multi-layer perceptron (MLP)..."

- Non-pinhole camera models: Camera models with complex optics beyond simple perspective projection (e.g., fisheye, panoramic). "further extended this method to handle non-pinhole camera models..."

- Occlusion: The blocking of light or visibility by geometry along a viewing ray. "reflects the triangle’s occlusion with respect to the camera."

- Opacity: The per-primitive property controlling transparency in compositing. "The first factor is the opacity, a widely adopted criterion."

- OptiX: NVIDIA’s programmable GPU ray tracing framework used to implement the pipeline. "For GPU-accelerated ray tracing, we adopt the OptiX~\cite{OptiX} framework."

- Pinhole camera model: An idealized camera model using a projection matrix with zero aperture. "we do not project primitives into the image space using the projection matrix of a pinhole camera model."

- Precomputed Radiance Transfer (PRT): A technique to precompute scene response to lighting for efficient relighting. "~\cite{prtgs,tsinghuaprtgs} compute environmental lighting using Precomputed Radiance Transfer (PRT)..."

- Projective transformation: The mapping from 3D to 2D image coordinates via camera projection. "the Jacobian of the affine approximation of the projective transformation..."

- Proxy geometry: Auxiliary mesh used to approximate or bound non-mesh primitives for acceleration structures. "uses icosahedra as proxy geometries to tightly enclose each Gaussian particle when building BVH."

- PSNR: Peak Signal-to-Noise Ratio, a pixel-wise fidelity metric for image reconstruction. "For quantitative evaluation, we report PSNR, SSIM~\cite{ssim}, and LPIPS~\cite{lpips}."

- Rasterization: A rendering approach projecting primitives to image space and shading per-fragment. "Gaussian-based particles are not well suited as unified primitives for both ray tracing and rasterization."

- Ray generation program: An OptiX stage that creates rays and controls the main tracing loop. "within the OptiX Ray generation program, a ray is first emitted..."

- Ray tracing: A physically based rendering technique that simulates light paths via rays. "ray tracing is a widely used rendering technique in computer graphics."

- Ray transmittance: The cumulative transparency along a ray as it passes through semi-transparent primitives. "Then, the colors of these triangles are accumulated along with the ray transmittance."

- ReLU: Rectified Linear Unit, a non-linear function used here in the triangle window response.

- Spherical harmonics: A set of orthogonal basis functions on the sphere used to encode view-dependent color. "we encode the color using spherical harmonics of degree $3$."

- SSIM: Structural Similarity Index Measure, a perceptual image quality metric. "For quantitative evaluation, we report PSNR, SSIM~\cite{ssim}, and LPIPS~\cite{lpips}."

- Structure-from-Motion (SfM): A technique to reconstruct 3D structure and camera poses from images. "We initialize the triangles from the point clouds obtained via Structure-from-Motion (SfM)..."

- Tile-based rasterizer: A GPU rasterization strategy that processes the image in tiles for efficiency. "uses a tile-based rasterizer to optimize their position and appearance..."

- Transmittance: The fraction of light that passes through a semi-transparent primitive. "where is the color of the Gaussian and is the transmittance."

- Triangle Splatting: A rasterization-based method using triangles as primitives for optimization and rendering. "Triangle Splatting(3DTS)~\cite{3dts}, introduced the use of triangles as primitives for optimization."

- Unit normal: A normalized vector perpendicular to a surface or edge, used for inside-outside tests. "where is the unit normal of the edge pointing outward..."

- Window function (triangle): A smooth per-point weighting over the triangle used to enable differentiability. "The function is a window function that produces a smoothly varying response over the triangle plane."

- World space: The global coordinate system in which geometry and rays are defined, as opposed to image space. "Unlike 3DTS~\cite{3dts}, we define our window function in world space..."
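Of the three image metrics listed in this glossary, PSNR is simple enough to state in full. The sketch below uses the standard definition, 10·log10(MAX² / MSE); the function name `psnr` and the assumption that pixel values lie in [0, 1] are illustrative, and the paper's exact evaluation code is not shown in this summary.

```python
import numpy as np

def psnr(image, reference, max_value=1.0):
    """Peak Signal-to-Noise Ratio in dB between two images of equal shape."""
    image = np.asarray(image, dtype=float)
    reference = np.asarray(reference, dtype=float)
    mse = np.mean((image - reference) ** 2)  # mean squared pixel error
    if mse == 0:
        return float("inf")                  # identical images
    return 10.0 * np.log10(max_value ** 2 / mse)

reference = np.zeros((4, 4, 3))
rendered = np.full((4, 4, 3), 0.1)           # uniform error of 0.1 per pixel
print(psnr(rendered, reference))
```

Higher is better for PSNR and SSIM, while lower is better for LPIPS, which is worth keeping in mind when reading the quality comparisons.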
