- The paper introduces a decoupled 3D Gaussian Splatting framework that delays densification to mitigate artifacts from dynamic elements.

- It employs scale-cascaded mask bootstrapping with DINOv2 features to robustly separate static structures from transient objects.

- The method integrates a color affine transformation branch to handle illumination variations, achieving artifact-free, high-fidelity novel views.

RobustSplat++: Decoupling Densification, Dynamics, and Illumination for In-the-Wild 3DGS

Introduction

The RobustSplat++ framework presents an approach for robust 3D Gaussian Splatting (3DGS) in unconstrained scenes, specifically targeting artifact suppression in the presence of transient objects and complex illumination variations. Traditional 3DGS excels in static, controlled settings but degrades substantially when exposed to dynamic distractors or non-uniform scene lighting. This work analyzes the densification process in standard 3DGS and identifies two key failure modes: premature Gaussian growth and unreliable mask supervision. The authors then propose a principled decoupling of 3DGS densification, dynamic content handling, and appearance modeling to achieve consistent, high-quality scene representations in challenging real-world scenarios.

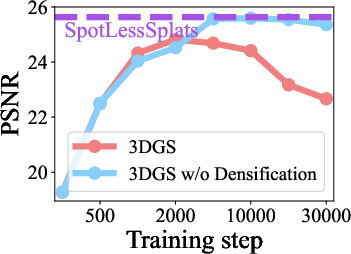

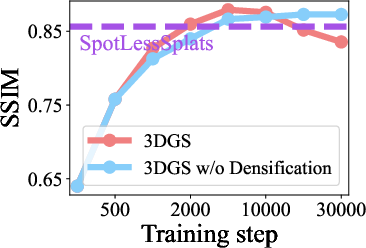

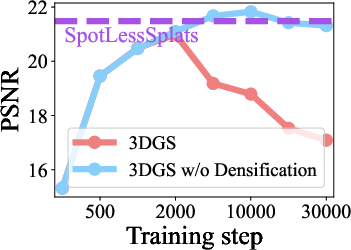

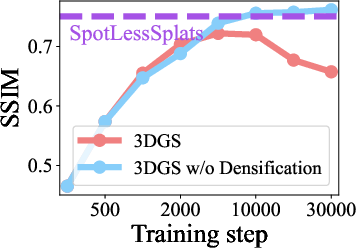

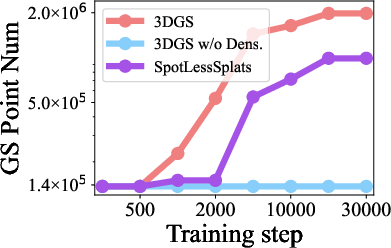

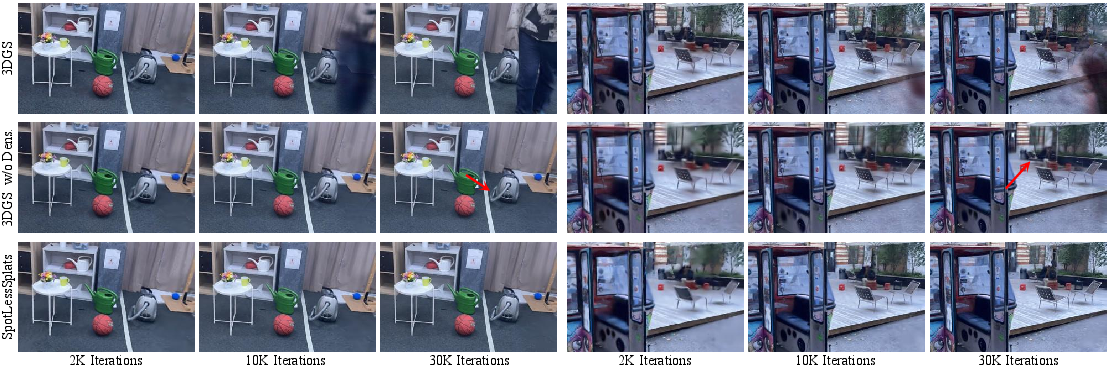

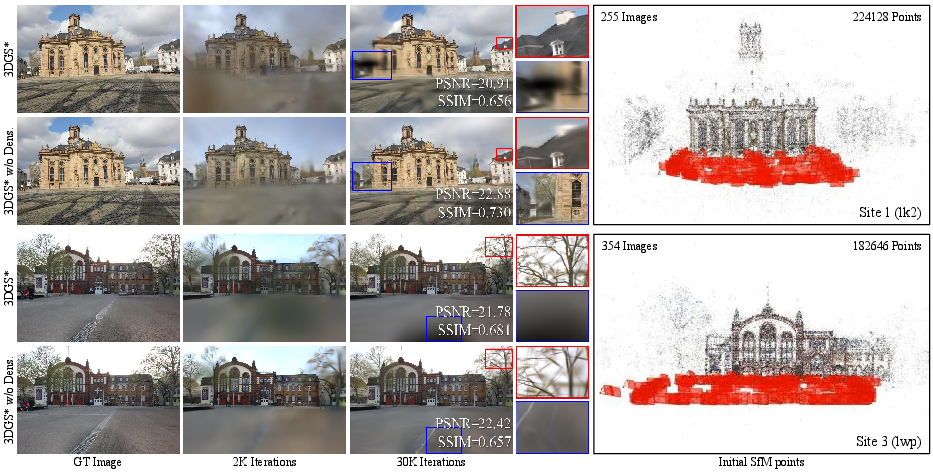

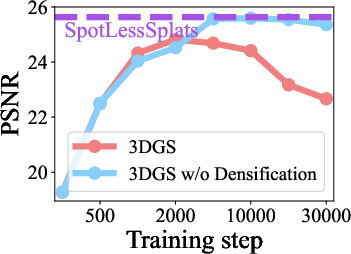

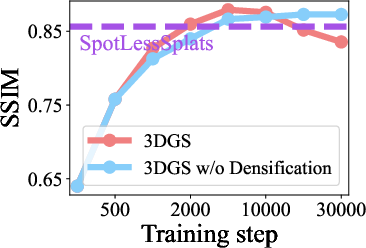

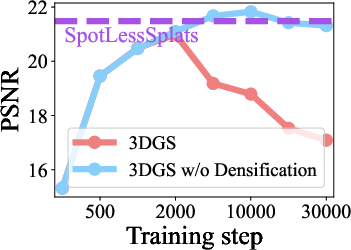

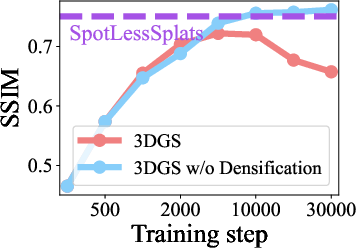

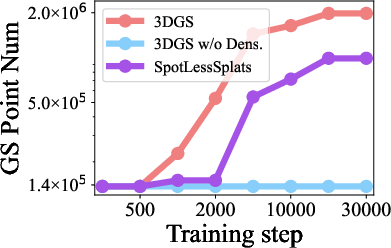

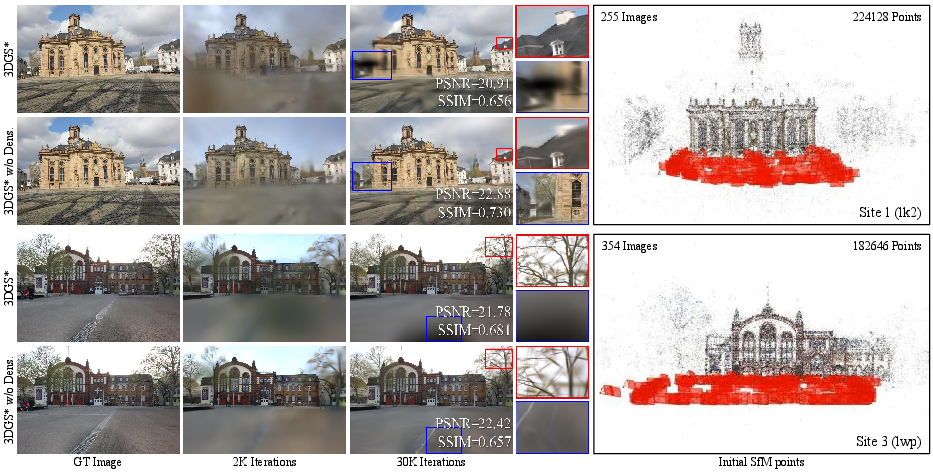

Through experiments and ablations, the paper demonstrates that standard 3DGS densification (the growth and division of Gaussians based on positional gradients) frequently overfits transient distractors. As illustrated in the initial analysis, vanilla 3DGS keeps increasing the number of Gaussians during optimization, which inadvertently models transient objects and induces artifacts when distractors are not filtered out at the mask level.

Figure 1: Analysis of the impact of Gaussian densification on transient object fitting in vanilla 3DGS, showing artifact formation during training.

Disabling densification notably mitigates these artifacts, with results comparable to specialized transient-removal techniques. However, forgoing densification leads to underfit reconstructions, especially in regions with sparse initialization, trading fine detail for smoothness. The critical insight is that Gaussian densification must be delayed until static scene structure has been stably reconstructed, avoiding premature overfitting to moving content.

Decoupling Transient Handling: Mask Bootstrapping and Delayed Growth

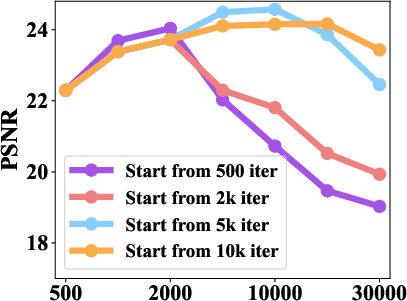

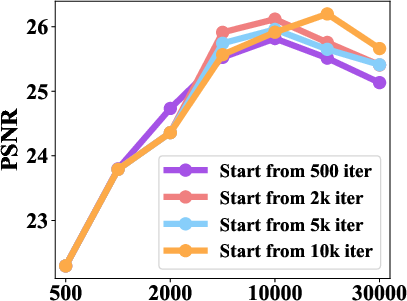

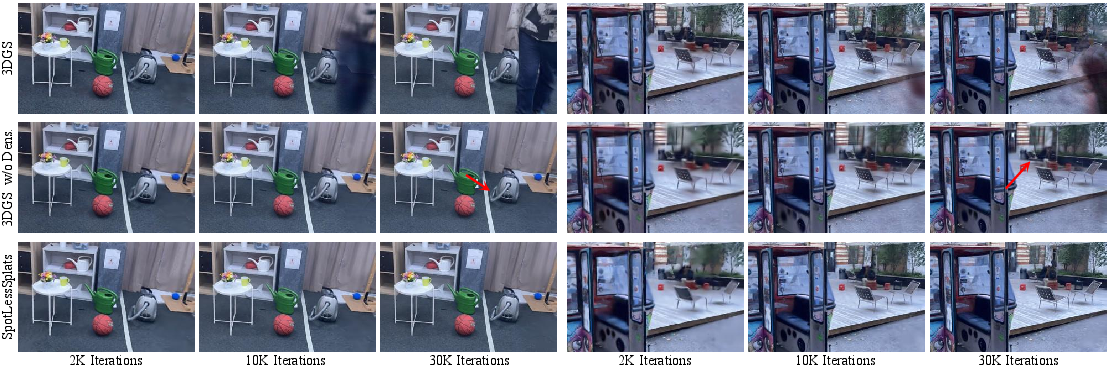

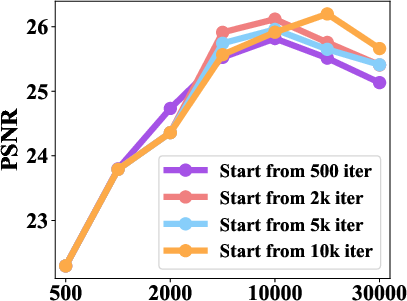

The proposed solution integrates two key components: delayed Gaussian growth and a scale-cascaded mask bootstrapping mechanism. Delayed Gaussian growth prevents early optimization from proliferating Gaussians in dynamic areas, enabling the scene to first stabilize its global structure. This is validated by controlled studies varying the start time of densification, showing significant reductions in photometric error and improved PSNR when growth is postponed and regulated by masking.
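The idea of postponing densification can be illustrated with a small scheduling sketch. This is not the authors' implementation: the function names and all iteration values (`start_iter`, `end_iter`, `interval`) are hypothetical and chosen only to show how a later start shrinks the window in which new Gaussians can be created.

```python
def should_densify(iteration: int, start_iter: int, end_iter: int, interval: int) -> bool:
    """Return True when a densification step is allowed at this iteration.

    All arguments are illustrative; the summarized paper's actual schedule
    is not given in this overview.
    """
    if iteration < start_iter or iteration > end_iter:
        return False
    return (iteration - start_iter) % interval == 0


def densification_steps(total_iters: int, start_iter: int, end_iter: int, interval: int) -> list:
    """List every iteration at which densification would run."""
    return [
        it for it in range(total_iters)
        if should_densify(it, start_iter, end_iter, interval)
    ]


# Hypothetical schedules: an early start versus a delayed start.
early = densification_steps(30000, start_iter=500, end_iter=15000, interval=100)
delayed = densification_steps(30000, start_iter=10000, end_iter=15000, interval=100)

print(f"early start:   first step at {early[0]}, {len(early)} steps")
print(f"delayed start: first step at {delayed[0]}, {len(delayed)} steps")
```

With a delayed start, the Gaussian count stays fixed while the coarse static structure and the transient masks settle, and growth only begins once both are presumably more reliable.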

Figure 2: Effects of the start iteration for Gaussian densification with and without robust transient mask learning.

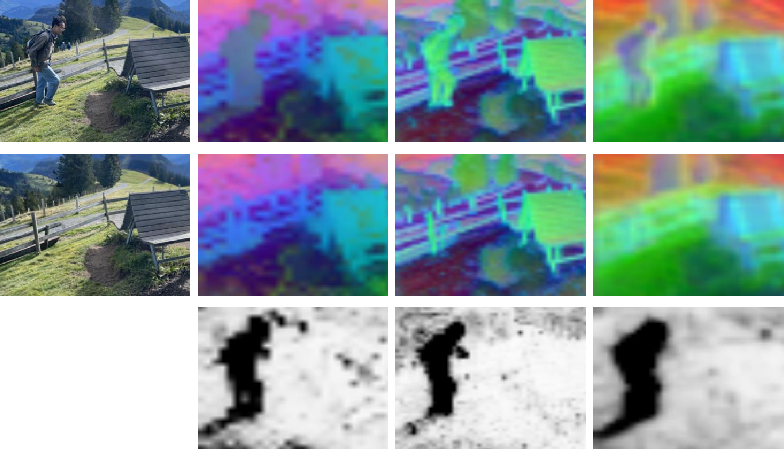

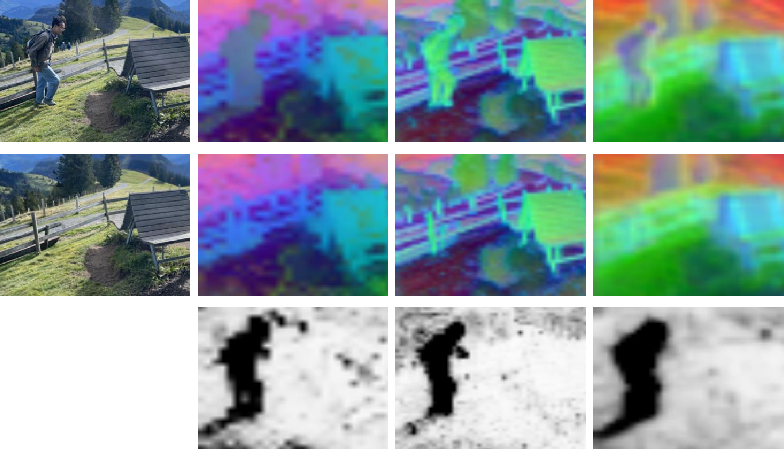

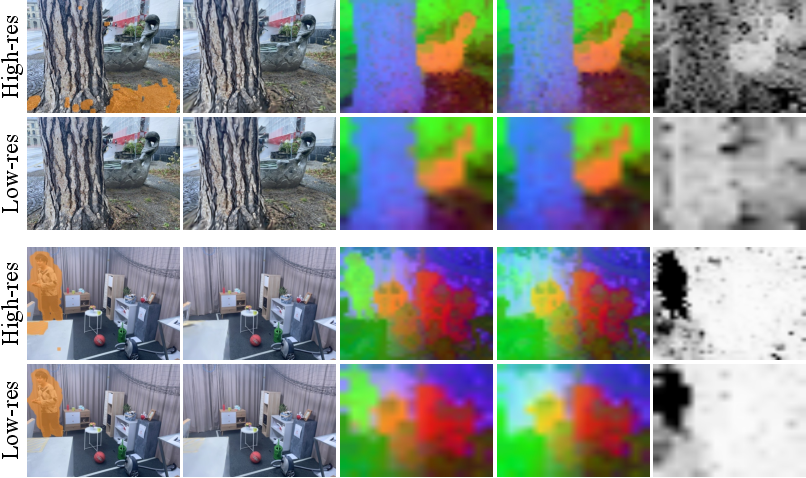

Mask prediction is achieved via an MLP conditioned on DINOv2 image features, providing a computationally efficient and semantically rich signal. Supervisory signals for mask learning are carefully designed, combining image residuals and feature similarity between captured and rendered views. Notably, initial mask bootstrapping is performed at low image resolution, leveraging semantic consistency and noise robustness, before progressing to high-resolution details. This coarse-to-fine supervision supports stable mask estimation and avoids premature exclusion of under-reconstructed static regions.
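A rough sketch of how image residuals and feature similarity could be combined into supervision for the mask predictor is given below. The combination rule and both thresholds (`res_thresh`, `sim_thresh`) are assumptions made for illustration, and random-free toy arrays stand in for real DINOv2 features; the overview does not specify the exact formulation.

```python
import numpy as np


def cosine_similarity_map(feat_a: np.ndarray, feat_b: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Per-patch cosine similarity between two (H, W, C) feature maps."""
    dot = np.sum(feat_a * feat_b, axis=-1)
    norm = np.linalg.norm(feat_a, axis=-1) * np.linalg.norm(feat_b, axis=-1)
    return dot / (norm + eps)


def static_pseudo_labels(
    residual: np.ndarray,      # (H, W) photometric error per patch
    feat_gt: np.ndarray,       # (H, W, C) features of the captured image
    feat_render: np.ndarray,   # (H, W, C) features of the rendered image
    res_thresh: float = 0.1,   # hypothetical threshold
    sim_thresh: float = 0.5,   # hypothetical threshold
) -> np.ndarray:
    """Return 1.0 for patches treated as static, 0.0 for likely transients.

    Assumed rule: a patch is flagged as transient only when the residual is
    high AND the features disagree, so a poorly reconstructed static region
    (high residual, similar features) is kept in the loss.
    """
    sim = cosine_similarity_map(feat_gt, feat_render)
    is_static = (residual < res_thresh) | (sim > sim_thresh)
    return is_static.astype(np.float32)


# Toy example: a 2x2 patch grid with 4-dimensional features.
feat_gt = np.ones((2, 2, 4))
feat_render = feat_gt.copy()
feat_render[0, 0] = -1.0                      # features disagree at patch (0, 0)
residual = np.array([[0.5, 0.5], [0.01, 0.01]])
labels = static_pseudo_labels(residual, feat_gt, feat_render)
print(labels)
```

In this toy case, patch (0, 1) has a large residual but matching features, so it stays labeled static, which mirrors the goal of not excluding under-reconstructed static regions too early.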

Figure 3: PCA visualization of DINOv2, SAM2, and Stable Diffusion features; cosine similarity maps highlight differences in feature alignment between ground-truth and rendered images.

Figure 4: Impact of mask supervisions from different resolutions; low-resolution mask bootstrapping supports accurate early classification of static versus dynamic regions.
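The coarse-to-fine supervision can be sketched as average pooling of the supervision map followed by a switch to full resolution. The pooling factor and the switch iteration below are hypothetical; the sketch only shows why a low-resolution signal is less sensitive to isolated pixel noise.

```python
import numpy as np


def avg_pool(image: np.ndarray, factor: int) -> np.ndarray:
    """Downsample an (H, W) map by averaging non-overlapping factor x factor blocks.

    Rows and columns that do not fill a complete block are cropped.
    """
    h, w = image.shape
    h_crop, w_crop = h - h % factor, w - w % factor
    blocks = image[:h_crop, :w_crop].reshape(
        h_crop // factor, factor, w_crop // factor, factor
    )
    return blocks.mean(axis=(1, 3))


def supervision_factor(iteration: int, switch_iter: int, coarse_factor: int = 4) -> int:
    """Use coarse supervision before switch_iter and full resolution afterwards.

    Both switch_iter and coarse_factor are illustrative assumptions.
    """
    return coarse_factor if iteration < switch_iter else 1


# A residual map with one noisy pixel: pooling dilutes the outlier.
residual = np.zeros((8, 8))
residual[3, 3] = 1.0
coarse = avg_pool(residual, supervision_factor(iteration=100, switch_iter=5000))
fine = avg_pool(residual, supervision_factor(iteration=6000, switch_iter=5000))
print(coarse.max(), fine.max())
```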

Illumination-Robust Splatting through Appearance Modeling

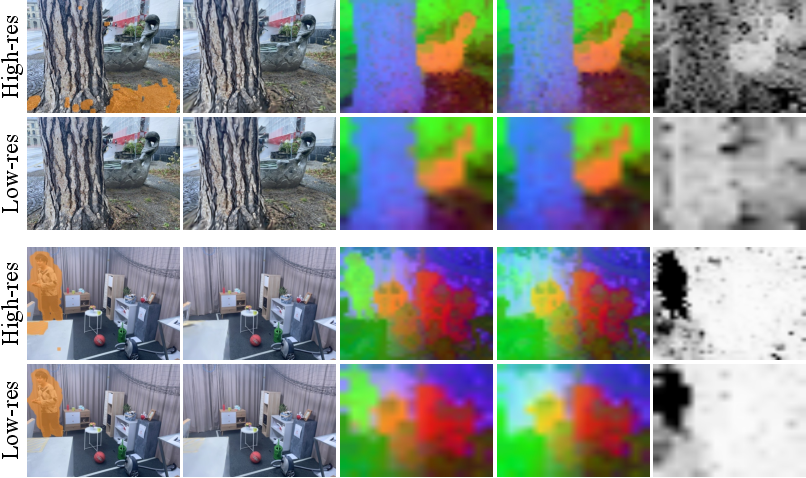

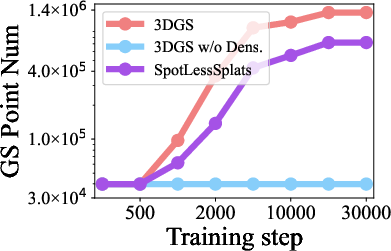

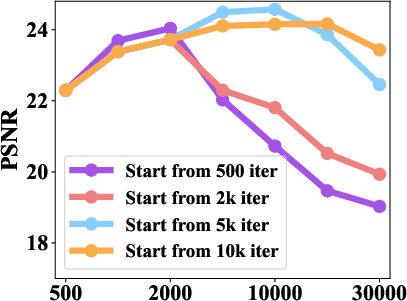

Extending to scenes with illumination variations, RobustSplat++ employs a color affine transformation branch, predicting per-image Gaussian color adjustments using joint 2D-3D embedding conditioning. This framework models global and local appearance shifts, accounting for lighting, reflections, and shadows beyond simple exposure changes. Dense visual environments, such as those in outdoor photo collections, pose additional challenges for appearance modeling, especially when unconstrained by time or weather. The delayed Gaussian growth strategy is shown to synergize with appearance learning: fixing Gaussian count during initial embedding optimization stabilizes color transformation convergence and prevents artifact formation due to misattributed photometric error.
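A minimal sketch of a per-image, per-Gaussian affine color adjustment follows. It assumes a single linear layer in place of whatever network the authors use, and the embedding sizes, the `predict_affine` head, and the residual parameterization (scale predicted as an offset from 1) are illustrative choices, not details from the paper.

```python
import numpy as np


def affine_color(colors: np.ndarray, scale, offset) -> np.ndarray:
    """Apply c' = scale * c + offset to (N, 3) colors and clip to [0, 1]."""
    return np.clip(scale * colors + offset, 0.0, 1.0)


def predict_affine(
    image_embedding: np.ndarray,      # (D_img,) one embedding per training image
    gaussian_embeddings: np.ndarray,  # (N, D_gs) one embedding per Gaussian
    weight: np.ndarray,               # (D_img + D_gs, 6) hypothetical linear head
    bias: np.ndarray,                 # (6,)
):
    """Map the joint 2D-3D embedding to a per-Gaussian (scale, offset) pair."""
    n = gaussian_embeddings.shape[0]
    tiled = np.broadcast_to(image_embedding, (n, image_embedding.shape[0]))
    joint = np.concatenate([tiled, gaussian_embeddings], axis=1)
    out = joint @ weight + bias
    scale = 1.0 + out[:, :3]   # zero output corresponds to the identity transform
    offset = out[:, 3:]
    return scale, offset


# With a zero-initialized head, the transform leaves colors unchanged.
colors = np.array([[0.2, 0.4, 0.6], [0.9, 0.1, 0.5]])
img_emb = np.full(4, 0.3)
gs_emb = np.full((2, 8), -0.2)
weight = np.zeros((12, 6))
bias = np.zeros(6)
scale, offset = predict_affine(img_emb, gs_emb, weight, bias)
adjusted = affine_color(colors, scale, offset)
print(adjusted)
```

Starting from the identity transform is one plausible way to keep early optimization stable: photometric error is first explained by geometry and base color, and the appearance branch only departs from identity as its embeddings are learned.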

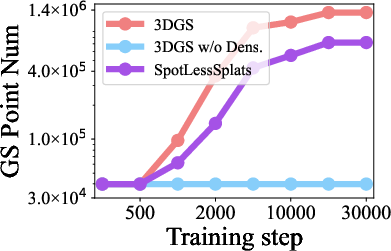

Figure 5: Analysis of Gaussian densification in illumination variation settings; disabling densification enhances global appearance consistency but can limit recovery of high-frequency detail.

Mask optimization is kept robust under appearance change by selecting the supervision (between raw and affine-rendered images) with the least photometric error, further decoupling dynamic and illumination effects.
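The selection step can be sketched as follows, assuming a per-image choice based on mean absolute error. The function name, the L1 metric, and the per-image granularity are assumptions; the overview only states that the supervision with the least photometric error is used.

```python
import numpy as np


def select_supervision(target: np.ndarray, raw_render: np.ndarray, affine_render: np.ndarray):
    """Pick the render with the lower mean absolute error against the target.

    Returns the name of the chosen render and its per-pixel residual, which
    would then feed the mask supervision.
    """
    res_raw = np.abs(target - raw_render)
    res_affine = np.abs(target - affine_render)
    if res_affine.mean() < res_raw.mean():
        return "affine", res_affine
    return "raw", res_raw


# Toy example: the captured image is brighter than the raw render,
# and the affine-adjusted render accounts for most of that shift.
target = np.full((4, 4, 3), 0.8)
raw_render = np.full((4, 4, 3), 0.5)
affine_render = np.full((4, 4, 3), 0.75)
choice, residual = select_supervision(target, raw_render, affine_render)
print(choice, residual.mean())
```

Choosing the lower-error render keeps a global brightness shift from being mistaken for a transient object, since the residual passed to the mask branch is then dominated by content that neither render explains.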

Experimental Validation

Comprehensive evaluation is performed on unconstrained datasets such as NeRF On-the-go, RobustNeRF, Mip-NeRF 360, NeRF-OSR, and PhotoTourism. RobustSplat++ consistently outperforms baselines in both qualitative and quantitative measures across transient and illumination categories. For instance, on NeRF On-the-go, RobustSplat achieves the highest PSNR and SSIM and the lowest LPIPS across varied occlusion scenarios. On the NeRF-OSR and PhotoTourism datasets, RobustSplat++ exhibits superior performance in modeling complex appearance changes, yielding visually consistent and artifact-free novel views.
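For reference, PSNR follows the standard definition 10 · log10(MAX² / MSE). The short sketch below computes it for images with values in [0, 1]; it is a generic implementation of the metric, not the evaluation code used in the paper, and SSIM and LPIPS are omitted because they need additional machinery.

```python
import numpy as np


def psnr(pred: np.ndarray, target: np.ndarray, max_val: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB between two images of equal shape."""
    mse = np.mean((pred.astype(np.float64) - target.astype(np.float64)) ** 2)
    if mse == 0.0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_val ** 2 / mse))


# A uniform error of 0.1 gives an MSE of 0.01, which corresponds to 20 dB.
pred = np.zeros((8, 8, 3))
target = np.full((8, 8, 3), 0.1)
print(psnr(pred, target))
```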

The ablation studies isolate the contribution of each component, verifying that delayed growth and mask bootstrapping independently and jointly enhance reconstruction accuracy. Robustness to hyperparameter variations (e.g., mask regularization decay) is demonstrated, and the learned masks are shown to be sufficiently accurate to enable effective appearance disentanglement.

Implications and Future Directions

Practically, RobustSplat++ extends the applicability of 3D Gaussian Splatting to uncontrolled real-world image collections, advancing robust novel-view synthesis and photorealistic scene reconstruction under dynamic and variable lighting conditions. The analysis highlights the interdependence of densification, dynamic filtering, and appearance learning, suggesting that future scene understanding pipelines must coherently decouple these phases for reliable generalization.

Theoretically, this work raises open questions on the interaction between explicit point-based representations and learned semantic supervision, especially as data scale or spatio-temporal variability increases. Embedding-based appearance modeling currently requires test-time optimization, which may limit real-time deployment. Further research is needed to enable fully generalizable, spatio-temporal dynamic modeling and scalable, adaptive appearance disentanglement.

Conclusion

RobustSplat++ introduces a robust design paradigm for 3DGS optimization in unconstrained scenes, decoupling densification, dynamics, and illumination through delayed Gaussian growth, scale-cascaded mask bootstrapping, and learned appearance modeling. Extensive analysis confirms that premature densification is a major source of artifacts, and the proposed framework addresses these challenges, delivering state-of-the-art performance in both artifact suppression and rendering fidelity. Limitations remain in modeling geometry under spatio-temporal changes and achieving generalized appearance modeling for real-time applications, indicating fertile ground for further inquiry in robust, scalable scene understanding.