Reflection Removal through Efficient Adaptation of Diffusion Transformers

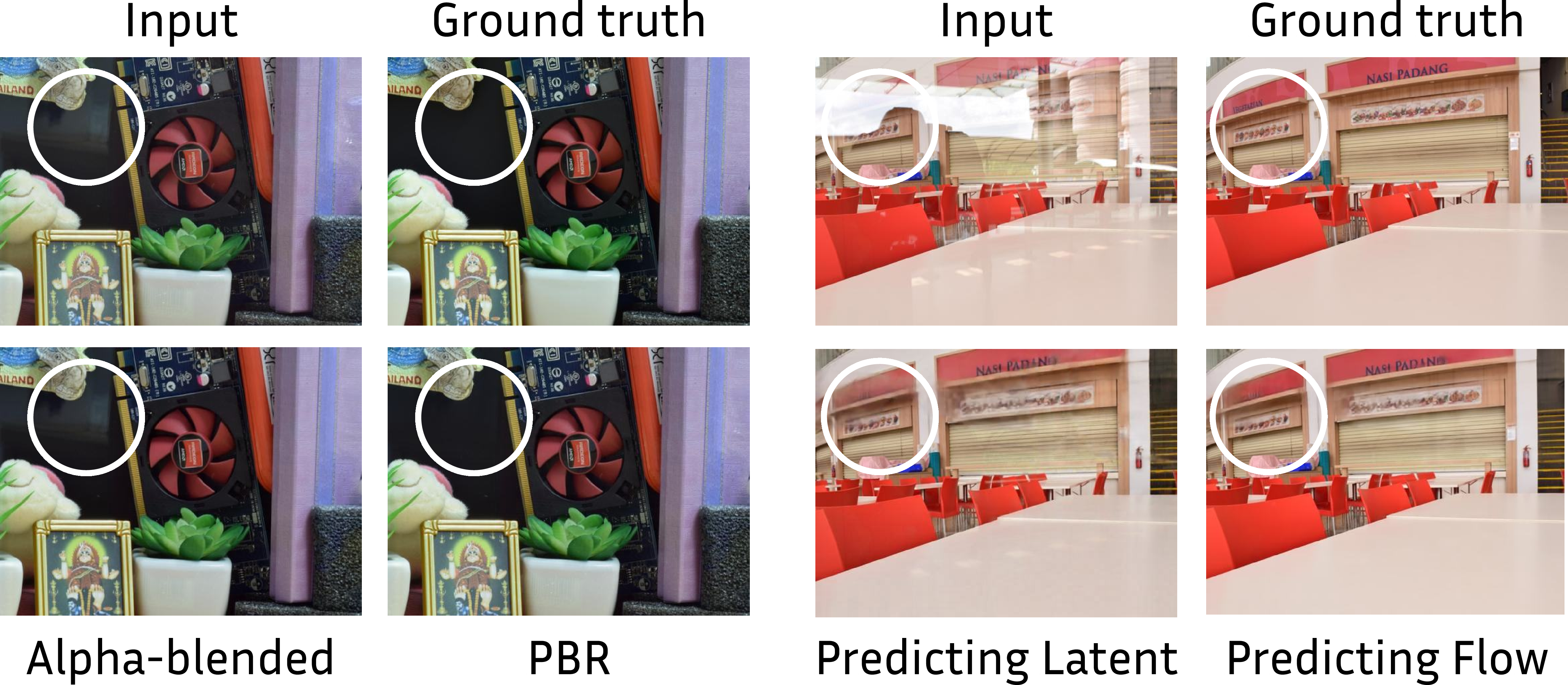

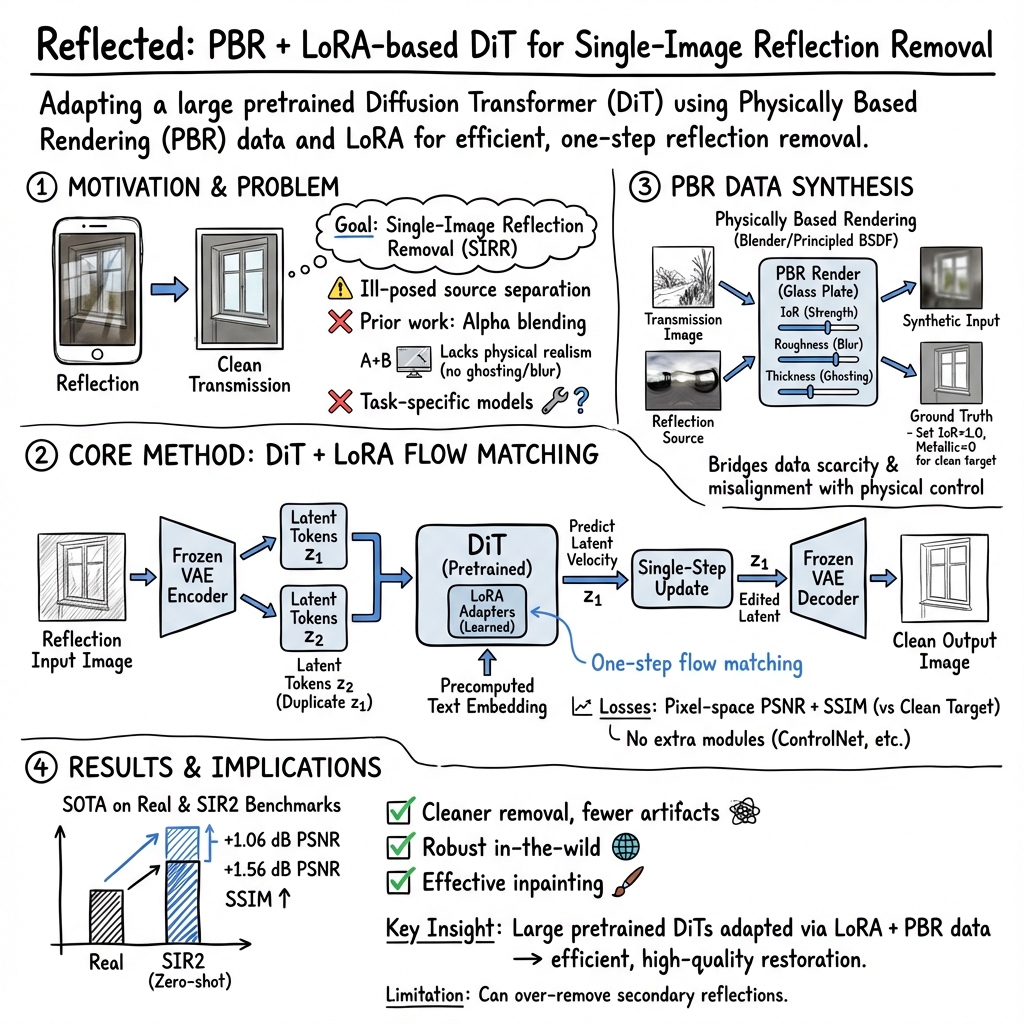

Abstract: We introduce a diffusion-transformer (DiT) framework for single-image reflection removal that leverages the generalization strengths of foundation diffusion models in the restoration setting. Rather than relying on task-specific architectures, we repurpose a pre-trained DiT-based foundation model by conditioning it on reflection-contaminated inputs and guiding it toward clean transmission layers. We systematically analyze existing reflection removal data sources for diversity, scalability, and photorealism. To address the shortage of suitable data, we construct a physically based rendering (PBR) pipeline in Blender, built around the Principled BSDF, to synthesize realistic glass materials and reflection effects. Efficient LoRA-based adaptation of the foundation model, combined with the proposed synthetic data, achieves state-of-the-art performance on in-domain and zero-shot benchmarks. These results demonstrate that pretrained diffusion transformers, when paired with physically grounded data synthesis and efficient adaptation, offer a scalable and high-fidelity solution for reflection removal. Project page: https://hf.co/spaces/huawei-bayerlab/windowseat-reflection-removal-web


Explain it Like I'm 14

What this paper is about

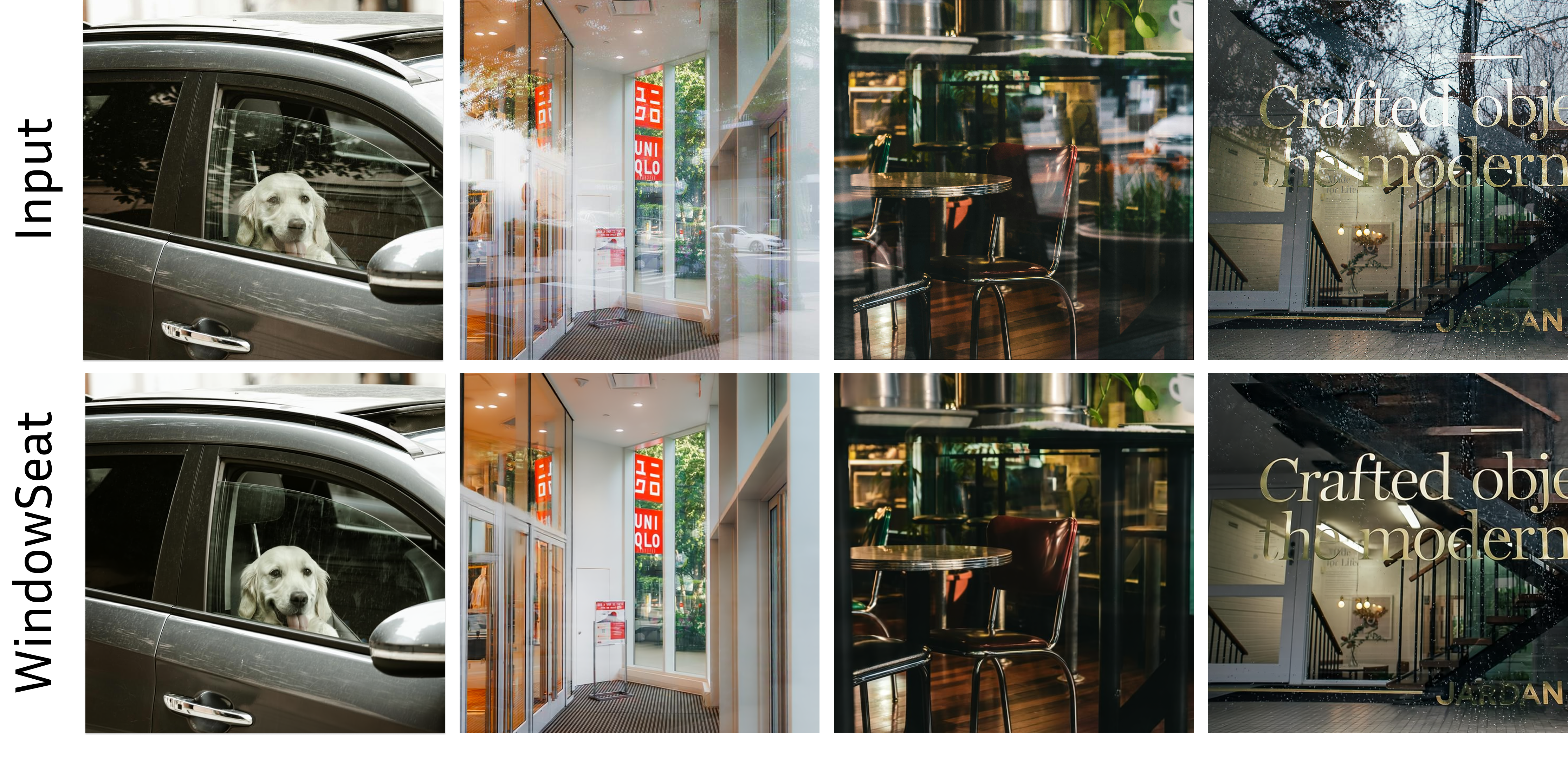

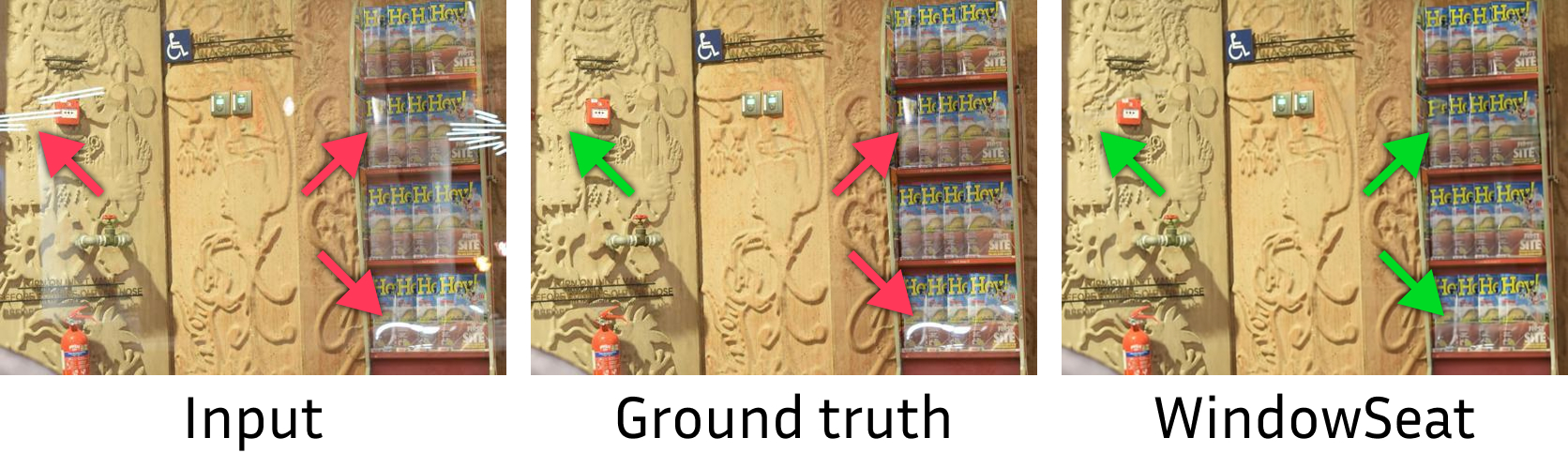

The paper is about getting rid of unwanted reflections in photos taken through glass—like when you take a picture from inside a car or a building and you see your own face or room lights faintly on the window. The authors build a tool called WindowSeat that turns a powerful image‑generation model into a fast “one‑click” reflection remover.

What questions the researchers asked

They focused on three simple questions:

- Can a big, general image model be taught to remove reflections from a single photo quickly and well?

- What kind of training data works best for this—cheap but fake-looking blends, or more realistic, physics‑based simulations of glass?

- What is the simplest, fastest way to “fine‑tune” (teach) a large model so it keeps its skills but learns this new task?

How they did it (in plain language)

Think of their approach as teaching a very skilled digital artist a new trick:

- A large image model as the “artist”: They start with a huge, pre‑trained image model called a diffusion transformer. Normally, this kind of model can create or edit images. The authors reuse it as a smart photo editor that removes reflections.

- Light training, not from scratch: Instead of retraining the whole giant model, they add a small attachment (called LoRA) that nudges the model to learn the “erase reflections” skill. This is like adding a new tool to an artist’s kit rather than retraining them from zero. It’s efficient enough to train in about a day on a single consumer GPU.

- One step, not many: Many diffusion models improve an image in lots of small steps. Here, the model edits the photo in one forward pass, making it fast for practical use.

- Teaching with realistic fake photos: Real training pairs (photo with reflection + the same photo without reflection) are hard to capture perfectly. Instead, they generate super‑realistic training images using a 3D tool (Blender). This method is called Physically Based Rendering (PBR). It simulates how light behaves with real glass—things like:

  - Index of Refraction (how strongly glass bends and reflects light),

  - Roughness (how blurry a reflection looks),

  - Thickness (which can cause “ghosting,” where reflections appear doubled).

  This is much more realistic than simple “alpha blending” (just mixing two pictures with a transparency slider), which can’t create true glass effects like strong highlights or ghosting.

- How the model “sees” images: They use a compressor‑decoder (a VAE) so the model edits a compact “code” of the image rather than the full picture directly. The model learns to predict a small, helpful change to that code—like nudging a slider—to remove reflections. They train it with image quality measures that reward both pixel accuracy and structural clarity.
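The glass parameters in the list above can be made concrete with a little textbook optics. The sketch below is not the paper's renderer; it only uses the standard Fresnel formula for head-on reflectance at an air/glass interface and Schlick's approximation for other angles, and contrasts them with a fixed-weight alpha blend. All function names are illustrative.

```python
import math

def fresnel_normal(ior: float) -> float:
    """Fraction of light reflected at head-on incidence for an air/glass interface."""
    return ((ior - 1.0) / (ior + 1.0)) ** 2

def fresnel_schlick(ior: float, cos_theta: float) -> float:
    """Schlick's approximation; cos_theta is the cosine of the incidence angle."""
    r0 = fresnel_normal(ior)
    return r0 + (1.0 - r0) * (1.0 - cos_theta) ** 5

def alpha_blend(transmission: float, reflection: float, alpha: float) -> float:
    """Naive screen-space mix: one fixed weight, independent of angle or material."""
    return (1.0 - alpha) * transmission + alpha * reflection

if __name__ == "__main__":
    for ior in (1.0, 1.25, 1.5, 1.75):
        head_on = fresnel_normal(ior)
        steep = fresnel_schlick(ior, math.cos(math.radians(80)))
        print(f"IoR={ior:.2f}  head-on={head_on:.4f}  at 80 degrees={steep:.4f}")
```

Two things stand out in this sketch: an IoR of 1.0 gives zero reflectance, and reflectance rises sharply at steep viewing angles, which a single blending weight cannot reproduce.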

What they found and why it’s important

- Cleaner results with fewer artifacts: WindowSeat removes reflections more reliably and leaves fewer weird leftovers compared to other methods. It’s especially good at tough cases with strong reflections or blur.

- Fast and practical: Because it works in one step and only a small add‑on is trained, it is efficient to train and run. The base model is still large, though, so running it directly on phones would likely need a compressed version.

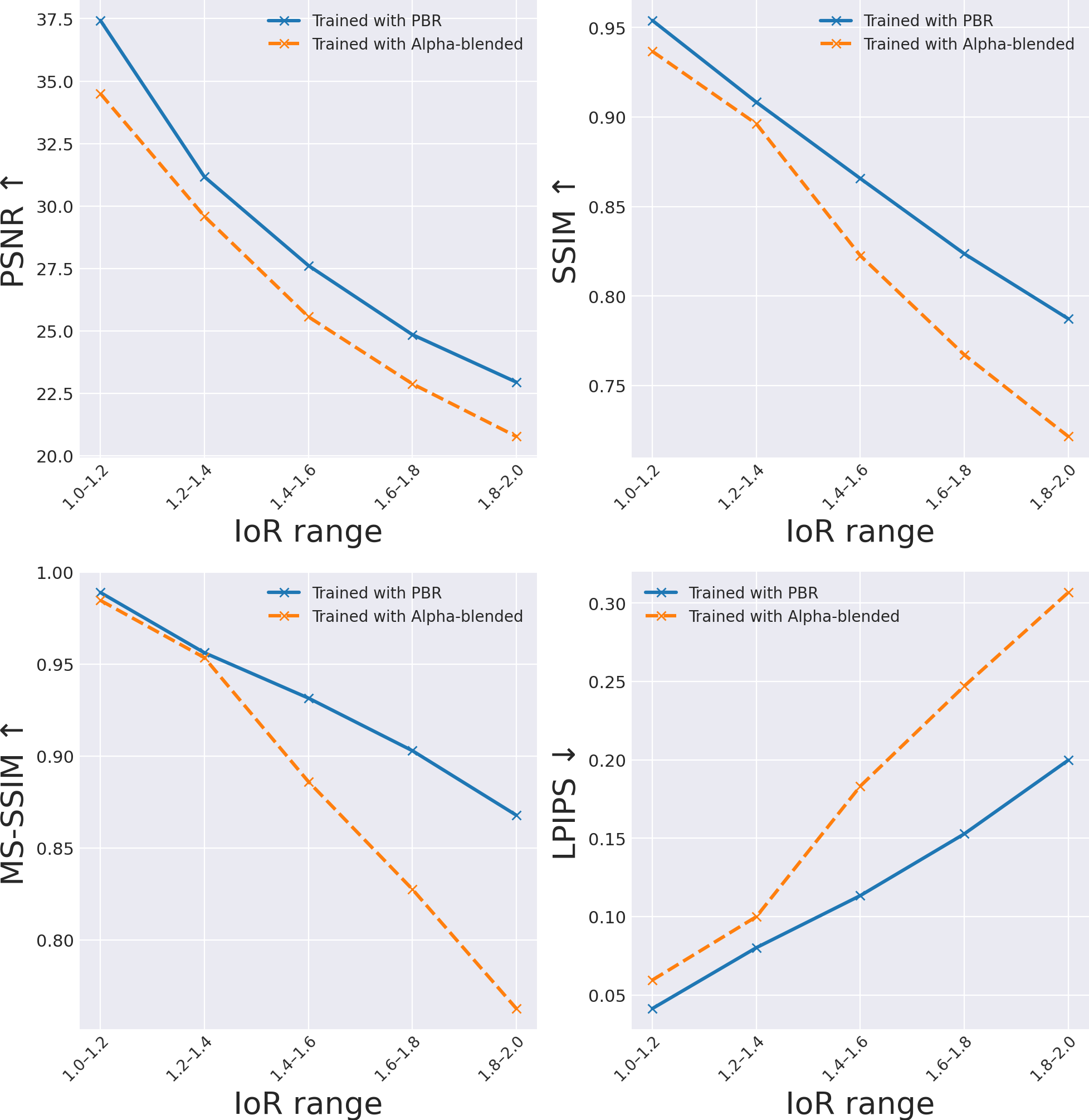

- Realistic training data pays off: Models trained with the physics‑based (PBR) images clearly beat those trained on simple blended images, especially when reflections are strong. In other words, “better fake data” leads to better real‑world performance.

- Simple choices that matter:

  - Feeding the model two copies of the image’s compact code works better than using random noise as the second input.

  - Predicting a “small change” to the image code (instead of the entire edited code outright) gives more accurate results.

  - Using both types of quality checks during training (for pixel accuracy and structure) leads to the best images.

- State‑of‑the‑art performance: On several standard tests and on photos from the real world, the method matches or beats leading systems, producing sharper, cleaner images.
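The data flow behind the findings above (encode the photo, feed two copies of the code, predict a small change, decode) can be sketched with toy stand-ins. Everything here is an assumption made for illustration: `toy_encode`, `toy_decode`, and `toy_velocity` are hypothetical placeholders for the frozen VAE and the adapted DiT, and the update `z + v` assumes one particular sign and scale convention for the predicted change.

```python
import numpy as np

def toy_encode(image: np.ndarray) -> np.ndarray:
    """Stand-in for the frozen VAE encoder: 2x2 average pooling (H and W must be even)."""
    h, w, c = image.shape
    return image.reshape(h // 2, 2, w // 2, 2, c).mean(axis=(1, 3))

def toy_decode(latent: np.ndarray) -> np.ndarray:
    """Stand-in for the frozen VAE decoder: nearest-neighbour 2x upsampling."""
    return latent.repeat(2, axis=0).repeat(2, axis=1)

def toy_velocity(stream_a: np.ndarray, stream_b: np.ndarray) -> np.ndarray:
    """Hypothetical placeholder for the adapted DiT: dims the latent by 10%."""
    return -0.1 * stream_a

def remove_reflection_one_step(image: np.ndarray, velocity_fn) -> np.ndarray:
    z = toy_encode(image)
    # The second input stream is a duplicate of the latent rather than random noise.
    v = velocity_fn(z, z.copy())
    # Single update: the model predicts a change to the latent, not the full latent.
    z_clean = z + v
    return toy_decode(z_clean)

if __name__ == "__main__":
    photo = np.full((4, 6, 3), 0.5)
    print(remove_reflection_one_step(photo, toy_velocity)[0, 0])
```

Because the network only has to predict a change, an all-zero prediction leaves the image untouched, which is a sensible starting point for fine-tuning.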

What this could mean in the real world

- Better everyday photos: Cleaner shots through windows—from cars, planes, museums, or storefronts—without complicated settings or extra hardware.

- Helpful for apps and devices: This can improve phone cameras, home cameras, and any tool that looks through glass.

- A recipe for other tasks: The big idea—reuse a strong general model, fine‑tune it lightly, and train with realistic synthetic data—can be used for other image “cleanup” jobs, like removing glare, raindrops, or smudges.

- Limitations and next steps: Sometimes the model removes reflections that the photographer might want to keep (like faint secondary reflections that add realism). In the future, giving users control over “how much reflection to remove” and extending the method to videos could make it even more useful.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

The following list summarizes what remains missing, uncertain, or unexplored, framed as concrete, actionable directions for future research:

- Training data avoids refractive misalignment by enforcing pixel alignment (IoR=1.0 for GT), leaving robustness to real-world refraction-induced pixel shifts and spatial warping unaddressed; develop datasets and methods that explicitly handle misalignment (e.g., learned alignment, warping-invariant losses, optical flow).

- Physically Based Rendering (PBR) coverage is narrow: IoR (1.25–1.75), roughness (0–0.05), thickness (0–5 cm). Extend to tinted/colored glass, frosted/etched surfaces, curved/laminated/multi-pane (double/triple glazing), wedge angles, wire-reinforced, scratched, dirty, fogged, and wet glass with physically valid models.

- Reflection sources are either HDR panoramas or planar RGB images; add 3D reflective scenes with geometry and depth to simulate realistic parallax, occlusions, and view-dependent effects in reflections.

- Polarization and wavelength-dependent phenomena (e.g., polarization state, chromatic dispersion, birefringence) are not modeled; incorporate spectral and polarization-aware rendering to improve physical fidelity and generalization.

- Self-shadows and interactions between subjects and glass (e.g., proximity-dependent shading, contact reflections) are not simulated; extend the PBR pipeline and training to capture these effects.

- The method is single-image only; temporal consistency for video or burst inputs is not addressed. Design temporally-aware models and datasets with consistent reflection behavior across frames.

- The model outputs only the transmission layer; it does not estimate the reflection layer. Explore joint prediction of transmission and reflection to enable editing, diagnostics, and user control.

- Overshooting removal can erase desired secondary/higher-order reflections (user intent mismatch); add controls to specify removal strength/order (e.g., prompt-based, sliders, or confidence thresholds) and train with intent-aware objectives.

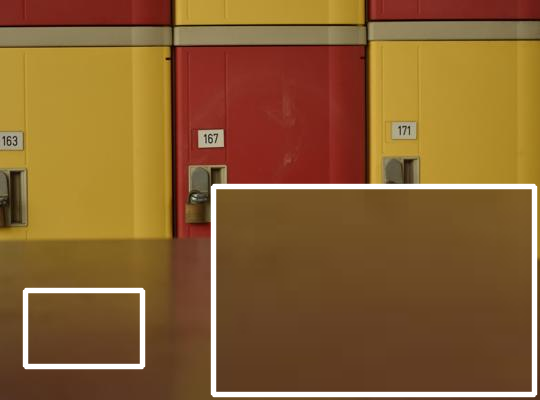

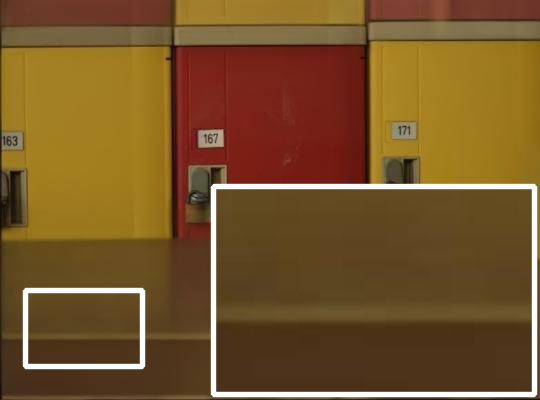

- VAE is fully frozen; assess whether fine-tuning the VAE or upgrading to higher-fidelity autoencoders reduces compression artifacts and improves detail, color accuracy, and texture preservation.

- One-step flow update replaces multi-step diffusion without quantifying the trade-off; rigorously compare one-step vs. few-step/multi-step sampling for difficult cases (e.g., extreme reflections, dense ghosting).

- Training losses are limited to PSNR and SSIM in pixel space; investigate perceptual (e.g., LPIPS/VGG), edge/structure consistency, physics-informed constraints (e.g., Fresnel consistency), adversarial objectives, and latent-space losses aligned with flow matching.

- Tile-based inference (linear blending of overlapping tiles) may introduce seams or boundary artifacts; systematically analyze and optimize tiling (e.g., feathering, Poisson blending, diffusion-based stitching) or enable end-to-end high-resolution inference.

- Inference/runtime and memory on mobile devices are not reported; quantify latency, throughput, energy, and memory under typical smartphone constraints and explore model compression/distillation for on-device deployment.

- 4-bit QLoRA quantization is used for 95.7% of parameters; conduct a systematic study of quantization impacts on fidelity, failure modes, and robustness across scene types and resolutions, including de-quantization strategies for critical layers.

- PBR dataset size (25,000) and diversity may be insufficient for long-tail coverage; scale data, measure distribution coverage, and adopt active data generation to target hard cases (e.g., night neon, extreme highlights, rain).

- Release status of the PBR generator and dataset is unclear; provide open-source tools, parameter distributions, and rendering recipes to enable reproducibility and community extension.

- Evaluation relies on PSNR/SSIM/MS-SSIM/LPIPS; add human perceptual/user studies and task-oriented evaluations (e.g., downstream recognition through glass) to validate practical quality and user satisfaction.

- Benchmark biases and ground-truth misalignment (e.g., Nature) confound comparisons; create new benchmarks with pixel-accurate GT, multiple reflection orders, controlled misalignment, and metadata for glass properties.

- Generalization to non-glass reflective media (e.g., water surfaces, glossy screens, metals) is not studied; test and adapt the method for broader reflective scenarios.

- Robustness to realistic camera conditions (sensor noise, motion blur, high ISO, rolling shutter, tone mapping) is unquantified; simulate camera pipelines and noise models during training and evaluate on mobile captures.

- Text conditioning is mentioned but not detailed; explore prompts for control (e.g., “remove mild reflections”, “preserve highlights”), prompt robustness, and semantic guidance.

- The second latent stream design is only lightly ablated (duplication vs. noise); investigate richer inputs (edges, segmentation, physics priors, reflection cues) and architectures for improved source separation.

- Transmission scene is a textured plane in PBR; simulate full 3D transmission scenes to capture depth-dependent interactions (parallax, occlusions, volumetric effects).

- Ghosting is modeled only via thickness; add models for multi-pane spacing, wedge angles, surface micro-defects, and uneven thickness to reproduce realistic ghost distances and intensities.

- HDR-to-sRGB tone mapping and radiometric consistency are not analyzed; study camera pipeline matching (white balance, gamma, tone curves) to reduce domain gap between rendered and real captures.

- Failure analysis is limited to a single example; build a taxonomy of errors (e.g., over-removal, hallucination, residual reflections, color shifts) and correlate with scene/glass parameters for targeted fixes.

- Safety/ethics implications (e.g., removing forensic cues or intended reflections) are not discussed; define guardrails, confidence measures, and UI affordances to avoid undesired content removal.

- Dependency on the chosen foundation DiT is not explored; ablate across base models and sizes (e.g., DiT variants, editing backbones) to understand portability and performance trade-offs.

- Hyperparameter sensitivity (LoRA rank=128, loss weights λPSNR=0.1, λSSIM=20, training resolution 608/768) is not systematically examined; perform sensitivity analysis to guide stable, efficient adaptation.

- Performance under extreme reflection strengths beyond the chosen IoR range is not quantified; extend tests to higher IoR, roughness, complex lighting, and specular saturation to map failure boundaries.
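Several items above refer to the pixel-space PSNR and SSIM training losses and their weights (λPSNR=0.1, λSSIM=20). The sketch below shows one plausible way to combine them; the exact form used in the paper is not given here, so the sign convention (negative PSNR plus one minus SSIM) is an assumption. It also uses a simplified global SSIM over the whole image, whereas standard SSIM averages over local windows.

```python
import numpy as np

def psnr(pred: np.ndarray, target: np.ndarray, max_val: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB; the small epsilon avoids division by zero."""
    mse = np.mean((pred - target) ** 2)
    return 10.0 * np.log10(max_val ** 2 / (mse + 1e-12))

def global_ssim(pred: np.ndarray, target: np.ndarray, max_val: float = 1.0) -> float:
    """Simplified SSIM computed from whole-image statistics (no sliding window)."""
    c1 = (0.01 * max_val) ** 2
    c2 = (0.03 * max_val) ** 2
    mu_p, mu_t = pred.mean(), target.mean()
    var_p, var_t = pred.var(), target.var()
    cov = np.mean((pred - mu_p) * (target - mu_t))
    numerator = (2 * mu_p * mu_t + c1) * (2 * cov + c2)
    denominator = (mu_p ** 2 + mu_t ** 2 + c1) * (var_p + var_t + c2)
    return numerator / denominator

def restoration_loss(pred, target, lam_psnr: float = 0.1, lam_ssim: float = 20.0) -> float:
    """Assumed combination: reward high PSNR, penalise low SSIM. Lower is better."""
    return -lam_psnr * psnr(pred, target) + lam_ssim * (1.0 - global_ssim(pred, target))

if __name__ == "__main__":
    target = np.linspace(0.0, 1.0, 64).reshape(8, 8)
    print(restoration_loss(0.98 * target + 0.01, target))
    print(restoration_loss(1.0 - target, target))
```

A sensitivity study like the one suggested above would sweep `lam_psnr` and `lam_ssim` and compare the resulting image quality.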

Practical Applications

Immediate Applications

The paper’s one-step DiT-based reflection removal model (WindowSeat), its Blender-based PBR data synthesis pipeline, and its efficient LoRA/QLoRA adaptation recipe enable several deployable use cases today.

- Mobile camera and gallery apps — sector: consumer software, mobile OEMs

- Add a “Remove reflections” toggle in the camera pipeline or a tap-to-fix tool in gallery editors. The one-step, feed-forward design minimizes latency and avoids multi-step sampling.

- Workflow/tools: tiling at 608–768 px resolution; VAE encode → DiT one-step flow update → VAE decode; optional ONNX/TensorRT export; integrate on-device NPU where available.

- Dependencies/assumptions: the base DiT is large (~12.5B params); practical on-device deployment likely needs distillation/quantization and careful memory management; overshooting can remove intentional reflections, so a strength slider/preview is advisable. Apache-2.0 checkpoint exists for immediate trials.

- Photo editing suites and plugins — sector: creative software (e.g., Photoshop, Lightroom, GIMP, Affinity)

- Batch reflection removal for framed photos, window/glass shots, aquarium/museum images; reduces manual clone/heal work.

- Workflow/tools: wrap the model as a plugin or command-line filter; batch process images; include a strength/keep-highlights control.

- Dependencies/assumptions: GPU acceleration or cloud offload recommended; ensure color management matches VAE/DiT preprocessing.

- E-commerce, real estate, and cultural heritage imaging — sector: retail, real estate, museums/archives

- Pre-process product or property photos taken through glass (display cases, shop windows), and digitization of artifacts behind glass.

- Workflow/tools: a server-side microservice/API that accepts images and returns reflection-free versions; integrate into photo pipelines before listing/publishing.

- Dependencies/assumptions: legal/compliance teams should validate any edits applied to documentary assets; overshoot risk in removing secondary reflections.

- Surveillance, dashcams, and storefront security cameras (offline/nearline) — sector: security, automotive

- Improve downstream analytics (OCR, person/vehicle detection) by pre-processing frames to suppress glass reflections from windshields or window-mounted cameras.

- Workflow/tools: frame-by-frame batch inference for investigations or periodic reprocessing; integrate before analytics models.

- Dependencies/assumptions: current model is single-image; for real-time streams and strict latency budgets, hardware optimization or lighter distilled models may be required.

- Robotics data cleaning for mapping and perception (offline) — sector: robotics, industrial automation

- Clean reflections from cameras behind protective covers to improve SLAM/SfM robustness in post-processing.

- Workflow/tools: integrate as a ROS/SLAM preprocessing step in data pipelines prior to reconstruction.

- Dependencies/assumptions: single-image corrections may introduce small inconsistencies frame-to-frame; acceptable for offline pipelines but not yet ideal for real-time.

- Academic replication and extensions of the adaptation recipe — sector: academia, applied ML

- Reuse the LoRA/QLoRA protocol to repurpose DiT image-editors for restoration tasks (deblurring, dehazing, flare/raindrop removal).

- Workflow/tools: PEFT + bitsandbytes; 4-bit QLoRA; PSNR+SSIM losses in pixel space; second latent duplication; flow-parameterized output.

- Dependencies/assumptions: access to a suitable pre-trained DiT (image-editing) backbone and its VAE; license compatibility.

- Physically Based Rendering dataset synthesis for imaging tasks — sector: academia, graphics/Vision R&D

- Use the Blender Principled BSDF pipeline to create controllable, photorealistic datasets for reflection removal and related optical phenomena.

- Workflow/tools: Blender scripting; HDR environment maps and planar RGB sources; vary IoR, thickness (ghosting), roughness; render paired contaminated/clean images.

- Dependencies/assumptions: rendering time/budget; HDRI licensing; data domain alignment (the pipeline currently avoids refractive misalignment by design).

- Cloud/SaaS reflection removal API — sector: developer platforms, marketplaces

- Offer a turnkey API for marketplaces and agencies to clean glass artifacts at scale.

- Workflow/tools: autoscaled GPU inference; tiling; webhooks; basic QC dashboards.

- Dependencies/assumptions: cost/performance trade-offs of large DiT inference; consider usage caps and SLAs.

- Documentation and policy support for edited imagery — sector: policy/compliance, media organizations

- Provide clear metadata flags when reflection removal is applied; internal guidelines for when/where to use automated de-reflection.

- Workflow/tools: add XMP/EXIF tags; generate edit audit logs in pipelines.

- Dependencies/assumptions: organizational policy alignment; end-user disclosure requirements in news/forensics settings.

- Teaching optics and computational photography — sector: education

- Use the PBR pipeline to demonstrate how IoR, roughness, and thickness affect reflections and ghosting; compare alpha blending vs. PBR.

- Workflow/tools: course labs with scripted Blender scenes and calibration charts.

- Dependencies/assumptions: student access to GPUs/Blender; curated HDRI sets.
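Several workflows above rely on tiling at 608–768 px, and the knowledge-gaps list notes that overlapping tiles are blended linearly. A minimal sketch of that idea follows, assuming an `(H, W, C)` float image and a per-tile function `fn` that returns a patch of the same shape. The overlap of 64 px and all function names are illustrative choices, not values from the paper.

```python
import numpy as np

def tile_starts(length: int, tile: int, overlap: int) -> list:
    """Start offsets so tiles of size `tile` cover [0, length) with some overlap."""
    if length <= tile:
        return [0]
    stride = tile - overlap
    starts = list(range(0, length - tile, stride))
    starts.append(length - tile)  # last tile sits flush with the border
    return starts

def linear_ramp(tile: int, overlap: int) -> np.ndarray:
    """1D blending weights that ramp linearly across the overlap region."""
    w = np.ones(tile)
    if overlap > 0:
        ramp = np.linspace(0.0, 1.0, overlap + 2)[1:-1]  # strictly positive
        w[:overlap] = ramp
        w[-overlap:] = ramp[::-1]
    return w

def tiled_apply(image: np.ndarray, fn, tile: int = 768, overlap: int = 64) -> np.ndarray:
    if not 0 <= overlap <= tile // 2:
        raise ValueError("overlap must be between 0 and tile // 2")
    h, w = image.shape[:2]
    th, tw = min(tile, h), min(tile, w)
    wy = linear_ramp(th, min(overlap, th // 2))
    wx = linear_ramp(tw, min(overlap, tw // 2))
    weight = (wy[:, None] * wx[None, :])[..., None]
    out = np.zeros(image.shape, dtype=np.float64)
    norm = np.zeros((h, w, 1), dtype=np.float64)
    for y in tile_starts(h, th, overlap):
        for x in tile_starts(w, tw, overlap):
            patch = fn(image[y:y + th, x:x + tw])
            out[y:y + th, x:x + tw] += weight * patch
            norm[y:y + th, x:x + tw] += weight
    # Dividing by the accumulated weights turns the sum into a weighted average.
    return out / norm
```

In a real pipeline `fn` would wrap the encode, one-step update, and decode stages. Normalising by the accumulated weights means that an identity `fn` reproduces the input exactly, which is a handy sanity check for seams.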

Long-Term Applications

Several higher-impact workflows become feasible with further research on model compression, temporal consistency, and expanded physics coverage.

- Real-time, on-device reflection removal — sector: mobile, AR/VR, automotive

- Integrate into ISP/NPU for live viewfinder and video at 30+ FPS.

- Path to product: knowledge distillation from DiT to compact architectures; int8/FP8 inference; operator fusion; hardware co-design.

- Dependencies/assumptions: accuracy/latency balance on mobile SoCs; thermal/power constraints; robust performance across lighting and glass types.

- Temporally consistent video de-reflection — sector: post-production, streaming, ADAS/robotics

- Extend the model with temporal modules to avoid flicker and maintain detail across frames for film/TV cleanup and ADAS night driving.

- Path to product: temporal consistency losses; recurrent or attention-based video DiTs; streaming tiling.

- Dependencies/assumptions: training data with temporal glass effects; compute budgets for long sequences.

- User-controllable layer separation (how many reflections to remove, preserve highlights) — sector: creative and pro software

- Interactive controls to retain artistic/specular elements, or to output both transmission and reflection layers.

- Path to product: multi-head prediction; disentanglement losses; UI sliders; prompt guidance.

- Dependencies/assumptions: robust disentanglement from single-image cues; UX testing to prevent over-editing.

- Estimating glass/material properties from a single image — sector: AR occlusion, inspection, optics

- Jointly infer IoR, thickness, roughness to assist AR scene understanding through windows or for quality control in manufacturing.

- Path to product: supervised or weakly supervised training with PBR annotations; uncertainty estimates.

- Dependencies/assumptions: identifiability from single-view cues; domain gaps between synthetic and real optics.

- Forensics and compliance “inverse mode” (reflection recovery) — sector: law enforcement, media verification

- Recover/enhance the reflection layer to identify bystanders or photographers (opposite of removal) with audit trails.

- Path to product: train dual-output heads for transmission/reflection; conservative priors to avoid hallucinations; provenance metadata.

- Dependencies/assumptions: legal/ethical review; high risk of spurious content if unconstrained.

- Generalized optics-degradation data factory — sector: vision model training platforms

- Expand the PBR pipeline into an “OpticsBench” service for glare, lens flare, veiling glare, raindrops, refraction, and dirty glass.

- Path to product: parameterized scene generators; measurable realism metrics; dataset versioning.

- Dependencies/assumptions: rendering scalability; careful calibration to real sensors/lenses.

- Domain-specific medical/scientific imaging (e.g., microscope slide glare) — sector: healthcare, life sciences

- Adapt the method to coverslip reflections and specular artifacts in microscopy and lab imaging.

- Path to product: domain PBR with appropriate refractive indices; collaborations for annotated data; rigorous validation.

- Dependencies/assumptions: safety/regulatory constraints; proof of non-degradation of diagnostically relevant features.

- Safety-critical perception under glare (night driving through windshields) — sector: automotive, aerial robotics

- Robust reflection/glare suppression as a preprocessor for detection/tracking pipelines in adverse weather/night conditions.

- Path to product: stress-tested datasets; redundancy with traditional polarizers; fail-safe fallbacks.

- Dependencies/assumptions: certification requirements; adversarial robustness; corner cases (curved, multi-layer laminated glass).

- Standards for AI restoration transparency — sector: policy, standards bodies

- Define watermarking/metadata standards for automated restorations in journalism, insurance claims, and evidence handling.

- Path to product: collaboration with IPTC, EXIF, and the Coalition for Content Provenance and Authenticity (C2PA); toolkits for automatic tagging.

- Dependencies/assumptions: multi-stakeholder alignment; mechanisms to prevent metadata stripping.

- Guidance for capture-time reflection mitigation — sector: HCI, camera UX

- Use fast PBR previews to suggest shooting angles or polarizer use that minimize reflections before capture.

- Path to product: on-device simulation heuristics; AR overlays showing “glare-safe” zones.

- Dependencies/assumptions: fast scene/light estimation; user acceptance of guidance prompts.

- Broad computational photography extensions via the adaptation recipe — sector: imaging software, OEMs

- Apply the same LoRA/QLoRA + flow-matching fine-tuning approach to lens flare removal, raindrop removal, dehazing, low-light enhancement.

- Path to product: task-specific PBR data; multi-task heads; unified image-restoration SDKs.

- Dependencies/assumptions: curated task datasets; avoiding interference between tasks in multi-task models.

Glossary

- AdamW: An optimizer variant with decoupled weight decay commonly used for training deep networks. "AdamW~\cite{Loshchilov2017DecoupledWD} is initialized with a learning rate of "

- Alpha blending: A linear image compositing technique that mixes two images by weighted sums to simulate reflections. "We refer to this approach as alpha blending."

- Anisotropic reflection effects: Direction-dependent reflection phenomena where reflectance varies with orientation on a surface. "simulate light transport between the scene and glass to reproduce anisotropic reflection effects."

- bfloat16: A 16-bit floating-point format with the same 8-bit exponent as FP32 but a shorter mantissa, used to reduce memory while retaining dynamic range. "are kept in bfloat16, while 95.7\% of the total parameters are quantized to 4-bit."

- BRDF: Bidirectional Reflectance Distribution Function; describes how light is reflected at an opaque surface depending on incoming and outgoing directions. "Disney’s BRDF~\cite{Burley2012PhysicallyBasedSA, 2015ExtendingTD}"

- ControlNet: An auxiliary conditioning network for diffusion models that allows guided generation using external inputs. "we do not use ControlNet modules, cross-latent skip connections, losses in latent space, or any VAE fine-tuning."

- Cross-attention: An attention mechanism that relates features across different streams or modalities. "or cross-attention for dual-stream prediction,"

- Cross-latent skip connections: Architectural links that pass information between latent streams to aid multi-stream prediction. "we do not use ControlNet modules, cross-latent skip connections, losses in latent space, or any VAE fine-tuning."

- Diffusion Transformer (DiT): A transformer-based diffusion model architecture operating in latent space for image generation/editing. "We introduce a diffusion-transformer (DiT) framework for single-image reflection removal"

- Environment map: A panoramic image representing scene illumination used to simulate reflections and lighting. "a panoramic high-dynamic-range (HDR) environment map"

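- Flow matching: A generative training objective that learns a velocity field transporting samples from a source distribution to a target distribution; the learned field can be integrated over many small steps or, as in this method, applied in a single step. "Our DiT backbone was pre-trained with a flow matching objective"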

- Foundation model: A large, broadly pretrained model that encodes general visual priors and can be adapted to downstream tasks. "leverage a foundation model that already encodes rich scene understanding"

- Ghosting: Multiple faint, displaced reflections caused by internal glass reflections and thickness. "ghosting is produced by varying the glass thickness."

- Gradient checkpointing: A memory-saving technique that recomputes intermediate activations during backpropagation. "enable gradient checkpointing for the VAE and the DiT."

- HDR: High Dynamic Range imaging representing a wider range of luminance values than standard sRGB. "high-dynamic-range (HDR) environment map"

- Index of Refraction (IoR): A material property indicating how strongly it bends light, influencing reflection strength. "Index of Refraction (IoR) affects reflection strength."

- In-domain: Evaluation data drawn from the same distribution as the training set. "in-domain and zero-shot benchmarks."

- Inpainting: Filling in missing or occluded content in images in a visually coherent way. "consistently yields better inpainting,"

- Lanczos resampling: A high-quality image scaling filter used for resizing with minimal artifacts. "using Lanczos resampling."

- Latent space: A compressed representation space produced by an encoder (e.g., VAE) where models operate. "We perform reflection removal in the latent space of a frozen variational autoencoder (VAE)."

- Light attenuation: Reduction of light intensity as it passes through a medium, affecting transmission color. "Light attenuation through the medium is simulated by assigning a base color to the material,"

- LoRA: Low-Rank Adaptation; a parameter-efficient fine-tuning technique for large transformers. "Efficient LoRA-based adaptation of the foundation model, combined with the proposed synthetic data, achieves state-of-the-art performance"

- LPIPS: Learned Perceptual Image Patch Similarity; a deep-feature-based perceptual similarity metric. "compare MS-SSIM and LPIPS values in Tab.~\ref{tab:offline_evaluation}."

- Microfacet distribution: Statistical model of surface microgeometry that governs specular reflection blur. "the surface roughness controls the microfacet distribution that determines the blur of specular reflections."

- MS-SSIM: Multi-Scale Structural Similarity; an image quality metric computed across multiple scales. "compare MS-SSIM and LPIPS values"

- PEFT: Parameter-Efficient Fine-Tuning; methods/frameworks that adapt large models by training small subsets of parameters. "use PEFT~\cite{peft} to attach bfloat16 LoRA~\cite{hu2022lora} adapters,"

- Physically Based Rendering (PBR): Rendering approach that simulates light transport based on physical principles for realism. "Physically Based Rendering (PBR) pipeline for synthetic data generation."

- Pixel-perfect alignment: Exact correspondence of pixels across image pairs without spatial shifts. "samples without pixel-perfect alignment due to refraction, object, or camera motion"

- Principled BSDF: A physically based shader model (from Disney) that unifies common BRDF/BSDF components. "the Principled BSDF shading model~\cite{Burley2012PhysicallyBasedSA, 2015ExtendingTD}, implemented in Blender~\cite{blender},"

- Prompt conditioning: Guiding model predictions using a text prompt embedding during inference/training. "under prompt conditioning "

- PSNR: Peak Signal-to-Noise Ratio; a reconstruction fidelity metric based on pixel-wise error. "We train the DiT LoRA adapters in pixel space with PSNR and SSIM losses"

- QLoRA: Quantized LoRA; enables low-bit quantization during fine-tuning of large transformers. "such as QLoRA~\cite{qlora, liu2025fluxqlora}."

- Quantization (4-bit): Representing model weights/states with low-bit precision to reduce memory and compute. "are quantized to 4-bit."

- Radiance: Physical quantity measuring light power per unit area per unit solid angle. "HDR sources encode radiance rather than display intensities,"

- Refractive pixel shifts: Spatial displacements in images caused by refraction through glass. "difficult due to refractive pixel shifts, inconsistent illumination, and scene or camera motion."

- Screen-space: Image-plane approximations that ignore full 3D light transport, often used for synthetic mixing. "screen-space mixing models, which lack the physical realism"

- Specular highlights: Bright reflections from smooth, shiny surfaces caused by direct reflection of light sources. "learn the removal of both specular highlights and reflections."

- SSIM: Structural Similarity Index; an image quality metric focusing on structural information. "and report PSNR and SSIM values as provided in the respective papers;"

- Stereo baseline: The distance between two cameras enabling depth perception and multi-view cues. "stereo baseline~\cite{niklaus2021learned},"

- Subsurface scattering: Light scattering within translucent materials that causes soft blurring effects. "such as subsurface scattering, which produces characteristic blurring,"

- Surface roughness: Material parameter controlling microfacet spread that affects reflection blur and scatter. "Roughness controls the degree of scatter and blur."

- Text embedding: Vector representation of text used to condition a model’s predictions. "The text embedding is precomputed in advance."

- Token (latent token): Discrete units (here, latent patches) processed by transformers in sequence form. "two sequences of latent tokens of identical spatial layout:"

- Variational Autoencoder (VAE): A probabilistic encoder–decoder model that learns a latent representation of data. "operate in a compressed latent space in the bottleneck of a VAE~\cite{Kingma2014}."

- Zero-shot: Evaluation on datasets not seen during training, without task-specific fine-tuning. "evaluated in a zero-shot manner"
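The LoRA entry above can be illustrated with a small numerical sketch of the low-rank update: the frozen weight `W` is left untouched and only two thin matrices `A` and `B` are trained. This follows the general LoRA formulation rather than this paper's specific setup. The rank of 128 matches the value quoted earlier, while the layer width of 3072 and the scaling `alpha` are hypothetical.

```python
import numpy as np

def lora_forward(x, W, A, B, alpha: float):
    """y = x W^T + (alpha / r) * x A^T B^T, with W frozen and A, B trainable."""
    r = A.shape[0]
    return x @ W.T + (alpha / r) * (x @ A.T) @ B.T

def lora_param_counts(d_out: int, d_in: int, r: int):
    """Return (parameters in the frozen weight, parameters in the adapter)."""
    return d_out * d_in, r * (d_in + d_out)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.standard_normal((2, 16))
    W = rng.standard_normal((8, 16))   # frozen pre-trained weight
    A = rng.standard_normal((4, 16))   # trainable, random initialisation
    B = np.zeros((8, 4))               # trainable, zero initialisation
    # With B at zero the adapted layer starts out identical to the frozen one.
    print(np.allclose(lora_forward(x, W, A, B, alpha=4.0), x @ W.T))
    full, adapter = lora_param_counts(3072, 3072, 128)
    print(f"adapter holds {adapter / full:.1%} of the layer's parameters")
```

Keeping the adapter this small is what makes it feasible to store the frozen weights in low precision and train only the added matrices.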
