- The paper introduces GDKVM, which leverages linear key-value association and a gated delta rule to efficiently capture spatiotemporal relationships in echocardiography videos.

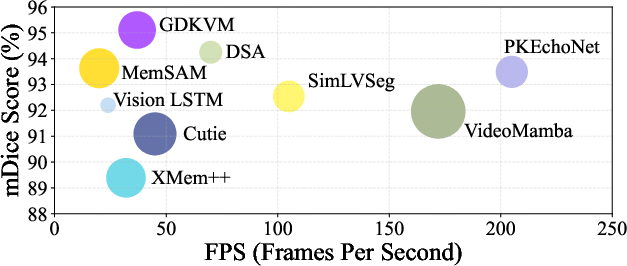

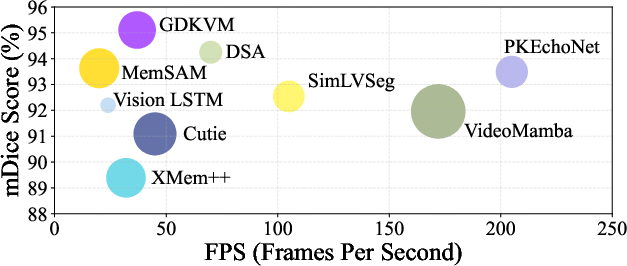

- It achieves high segmentation accuracy (mDice up to 95.11) and real-time processing (37 FPS) on CAMUS and EchoNet-Dynamic, demonstrating strong clinical potential.

- The incorporation of multi-scale Key-Pixel Feature Fusion enhances boundary detection and noise resilience, addressing common issues in ultrasound imaging.

GDKVM: Spatiotemporal Key-Value Memory with Gated Delta Rule for Echocardiography Video Segmentation

Problem Statement and Motivation

Robust segmentation of cardiac structures in echocardiography videos remains a central challenge for quantifying cardiac function and supporting clinical decision-making. The task is complicated by the heavy noise, dynamic anatomical deformation, and low-contrast boundaries typical of ultrasound imaging, all of which impede reliable feature extraction and temporal tracking. CNN-based architectures (e.g., U-Net), Vision Transformers, and space-time memory (STM) networks have yielded advances, but limitations persist: CNNs are inherently local, Transformers are computationally prohibitive for long sequences, and most STM approaches cannot balance global temporal context with spatial detail without resorting to costly memory mechanisms or accumulating errors across frames.

GDKVM Architecture

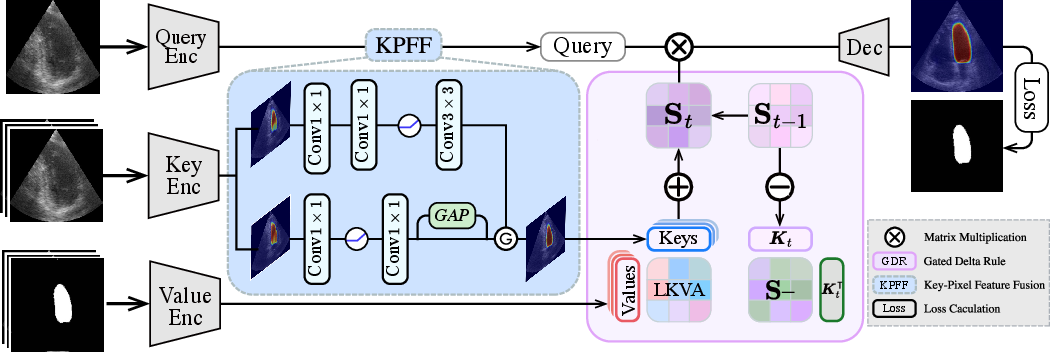

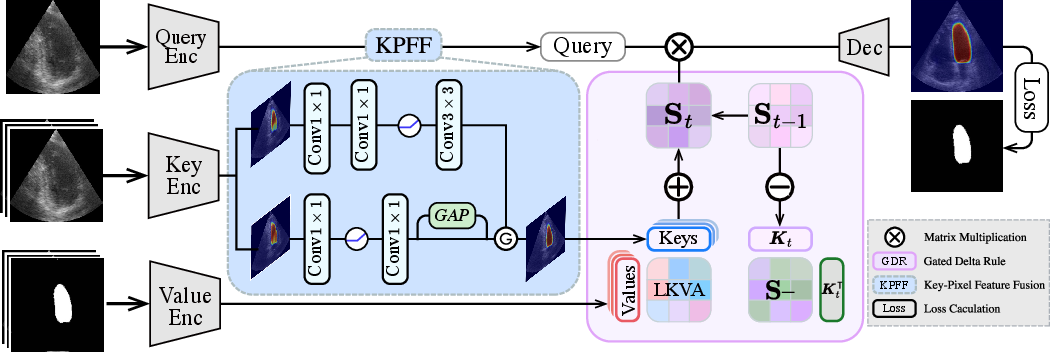

GDKVM addresses these deficits through a novel architecture unifying linear key-value memory representation, dynamic memory management, and rich feature fusion across scales. The model comprises three key innovations:

- Linear Key-Value Association (LKVA): Efficiently models inter-frame spatiotemporal relations as a linear RNN, mapping keys and values into a recurrent state transition matrix, eliminating the quadratic cost associated with conventional attention.

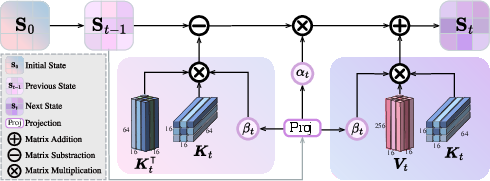

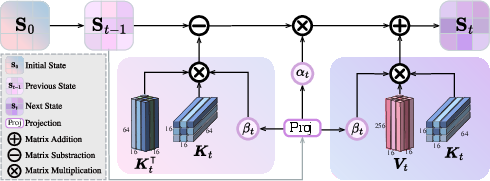

- Gated Delta Rule (GDR): Introduces two data-adaptive gates, $\alpha_t$ for decay and $\beta_t$ for interpolation/updating, which selectively forget or reinforce temporal memory, enabling robust adaptation to rapid cardiac deformations and controlling memory saturation during noisy or long sequences.

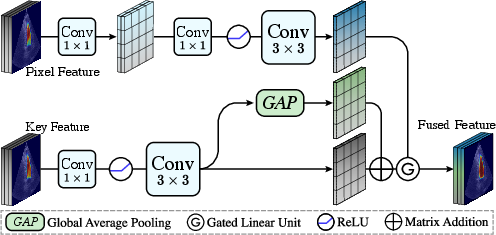

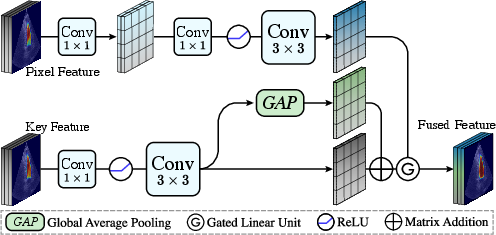

- Key-Pixel Feature Fusion (KPFF): Fuses multi-scale local key features, global feature statistics, and pixel-level representations within a gating framework, amplifying both spatial detail and global consistency to combat image degradation and artifact-induced errors.

Figure 1: GDKVM architecture overview, illustrating the LKVA mechanism, Gated Delta Rule, and KPFF module integration.

The LKVA mechanism reformulates traditional dot-product attention as a recursive update of a hidden state matrix, $S_t = \sum_{i=1}^{t} V_i \phi(K_i)^\top$ (equivalently, $S_t = S_{t-1} + V_t \phi(K_t)^\top$), where $\phi(\cdot)$ denotes an optional feature map for kernelization. Each frame triggers a fixed-size update, so the total cost is linear in sequence length rather than quadratic, which is critical for real-time processing of echocardiography videos.
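The recurrence can be checked numerically against explicit causal attention. The following is a minimal NumPy sketch, not the authors' implementation: the `ELU + 1` feature map, the query readout `S_t phi(Q_t)`, and all function names are assumptions made for illustration, since the summary leaves `phi` and the readout unspecified.

```python
import numpy as np

def phi(x):
    # Assumed feature map: ELU(x) + 1, which keeps features positive.
    return np.where(x > 0, x + 1.0, np.exp(x))

def lkva_recurrent(K, V, Q):
    """K, Q: (T, d_k); V: (T, d_v). Returns per-step readouts and the final state."""
    T, d_k = K.shape
    d_v = V.shape[1]
    S = np.zeros((d_v, d_k))               # state size is independent of T
    out = np.empty((T, d_v))
    for t in range(T):
        S = S + np.outer(V[t], phi(K[t]))  # S_t = S_{t-1} + V_t phi(K_t)^T
        out[t] = S @ phi(Q[t])             # assumed readout with a query
    return out, S

def lkva_quadratic(K, V, Q):
    """Reference: unnormalized causal attention with an explicit T x T score matrix."""
    scores = phi(Q) @ phi(K).T             # scores[t, i] = phi(Q_t) . phi(K_i)
    causal = np.tril(np.ones_like(scores)) # frame t only sees frames i <= t
    return (scores * causal) @ V

rng = np.random.default_rng(0)
K = rng.normal(size=(6, 4))
V = rng.normal(size=(6, 3))
Q = rng.normal(size=(6, 4))
out, S = lkva_recurrent(K, V, Q)
print(np.allclose(out, lkva_quadratic(K, V, Q)))
```

Both functions produce the same readouts, but the recurrent form never materializes the `T x T` score matrix and only carries a `d_v x d_k` state from frame to frame.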

GDR refines this further by decomposing memory update into removal and adaptive replacement:

$$S_t = \alpha_t S_{t-1}\left(I - \beta_t K_t K_t^\top\right) + \beta_t V_t K_t^\top$$

where $\alpha_t$ and $\beta_t$ are learned gates that conditionally control both the decay of memory and the strengthening of current-frame information.
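A small NumPy sketch makes the removal-and-replacement behavior concrete. It is an illustration under stated assumptions rather than the paper's code: the gates are passed in as plain scalars (in the model they are predicted from the data), keys are assumed to be unit-norm, and the names `gdr_step` and `gdr_scan` are hypothetical.

```python
import numpy as np

def gdr_step(S_prev, k, v, alpha, beta):
    """One update: S_t = alpha * S_{t-1} (I - beta k k^T) + beta v k^T.

    S_prev: (d_v, d_k), k: (d_k,), v: (d_v,), alpha and beta: scalars in [0, 1].
    """
    d_k = k.shape[0]
    erase = np.eye(d_k) - beta * np.outer(k, k)  # removes the old value stored under k
    return alpha * (S_prev @ erase) + beta * np.outer(v, k)

def gdr_scan(K, V, alphas, betas):
    """Apply gdr_step over a sequence, starting from an empty memory."""
    S = np.zeros((V.shape[1], K.shape[1]))
    for k, v, a, b in zip(K, V, alphas, betas):
        S = gdr_step(S, k, v, a, b)
    return S

rng = np.random.default_rng(1)
S0 = rng.normal(size=(3, 4))
k = rng.normal(size=4)
k /= np.linalg.norm(k)                           # assumed unit-norm key
v = rng.normal(size=3)

S1 = gdr_step(S0, k, v, alpha=1.0, beta=1.0)
print(np.allclose(S1 @ k, v))                    # full overwrite: reading key k returns v
print(np.allclose(gdr_step(S0, k, v, 1.0, 0.0), S0))  # beta = 0 leaves memory untouched
```

With `alpha = 1` and `beta = 1` the value stored under `k` is fully replaced, while directions orthogonal to `k` are unchanged. Lowering `alpha` uniformly decays the whole memory, and intermediate `beta` values interpolate between the old and new value.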

KPFF employs a gated fusion between local (convolutional), global (GAP-expanded), and pixel features to output robust key-pixel features:

$$F_{\text{fused}} = G \cdot \left(F_K + F_{\text{Global}}\right) + (1 - G) \cdot F_{\text{Pix}}$$

where $G$ is a learned gate, promoting spatiotemporal resilience.
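The fusion formula can be sketched in a few lines of NumPy. Several details here are assumptions, not taken from the paper: the global branch is computed by average-pooling the key features and broadcasting the result back over the spatial grid, the gate is a sigmoid over externally supplied logits (a learned layer would produce them in the model), and the name `kpff_fuse` is hypothetical.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def kpff_fuse(f_key, f_pix, gate_logits):
    """Gated fusion F = G * (F_K + F_Global) + (1 - G) * F_Pix.

    f_key, f_pix, gate_logits: arrays of shape (C, H, W).
    """
    # Assumed global branch: per-channel global average pooling,
    # kept as (C, 1, 1) so it broadcasts back to (C, H, W).
    f_global = f_key.mean(axis=(1, 2), keepdims=True)
    g = sigmoid(gate_logits)                     # gate values in (0, 1)
    return g * (f_key + f_global) + (1.0 - g) * f_pix

rng = np.random.default_rng(2)
f_key = rng.normal(size=(8, 16, 16))
f_pix = rng.normal(size=(8, 16, 16))
fused = kpff_fuse(f_key, f_pix, rng.normal(size=(8, 16, 16)))
print(fused.shape)
```

Where the gate saturates near 1 the output follows the key and global branches, and where it saturates near 0 the output falls back to the pixel-level features, so the gate decides per location which source to trust.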

Figure 2: Gated Delta Rule operation. $\beta_t$ adapts to heart motion, $\alpha_t$ regulates long-term memory decay.

Figure 3: Key-Pixel Feature Fusion mitigates boundary blur and noise by jointly learning across spatial scales.

Experimental Validation

Datasets and Evaluation Metrics

Experimental results are presented on CAMUS and EchoNet-Dynamic, two canonical datasets for cardiac structure tracking. The reported metrics are mDice, mIoU, Hausdorff Distance (HD), Average Surface Distance (ASD), and LVEF estimation agreement (Pearson correlation, bias, std). Training uses common optimizers and augmentations, and the benchmark covers both accuracy and computational efficiency.
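For reference, the two overlap metrics can be computed per mask as below. This is a generic sketch of the standard Dice and IoU definitions on binary masks, not the evaluation script used in the paper. The function name and the `eps` smoothing term are assumptions, and averaging over frames or classes to obtain mDice and mIoU is left out.

```python
import numpy as np

def dice_iou(pred, target, eps=1e-8):
    """Dice and IoU for two binary masks of the same shape."""
    pred = np.asarray(pred).astype(bool)
    target = np.asarray(target).astype(bool)
    inter = np.logical_and(pred, target).sum()
    union = np.logical_or(pred, target).sum()
    dice = (2.0 * inter + eps) / (pred.sum() + target.sum() + eps)
    iou = (inter + eps) / (union + eps)          # eps avoids 0/0 on empty masks
    return dice, iou

# Toy masks: 2 predicted pixels, 1 reference pixel, 1 pixel of overlap.
pred = np.array([[1, 1], [0, 0]])
target = np.array([[1, 0], [0, 0]])
dice, iou = dice_iou(pred, target)
print(round(dice, 3), round(iou, 3))             # 0.667 0.5
```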

Quantitative Results

GDKVM achieves superior segmentation metrics. On CAMUS: mDice 95.11, mIoU 92.97, HD 3.05, ASD 1.98. On EchoNet-Dynamic: mDice 93.46, mIoU 90.86, HD 2.38, ASD 1.36. It runs in real time (37 FPS) with only 35.2M parameters. These results are ahead of or on par with the strongest prior methods, including Vision LSTM, DSA, MemSAM, and VideoMamba. The gains are especially evident in clinical utility: GDKVM delivers the highest agreement in LVEF estimation (Pearson 0.904; bias −0.19%, std 11.3%).
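The LVEF agreement statistics can be reproduced from per-video predictions along the following lines. This sketch assumes that bias and std are the mean and sample standard deviation of the prediction-minus-reference differences (a Bland-Altman style summary), which the summary does not spell out. The function names are hypothetical and the input values are toy numbers, not data from the paper.

```python
import numpy as np

def ejection_fraction(edv, esv):
    """LVEF in percent from end-diastolic and end-systolic volumes."""
    return (edv - esv) / edv * 100.0

def lvef_agreement(pred_ef, ref_ef):
    """Pearson r, bias, and std of differences between predicted and reference LVEF."""
    pred_ef = np.asarray(pred_ef, dtype=float)
    ref_ef = np.asarray(ref_ef, dtype=float)
    diff = pred_ef - ref_ef
    r = np.corrcoef(pred_ef, ref_ef)[0, 1]
    return r, diff.mean(), diff.std(ddof=1)      # ddof=1: sample standard deviation

# Toy example: predictions are the reference values shifted by a constant 2 points.
ref = np.array([50.0, 60.0, 40.0])
pred = ref + 2.0
r, bias, std = lvef_agreement(pred, ref)
print(r, bias, std)
```

A constant offset yields perfect correlation with a nonzero bias, which is why correlation alone is not enough and the bias and spread are reported alongside it.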

Figure 4: Speed (FPS) vs. mDice segmentation accuracy. Larger bubbles indicate more parameters.

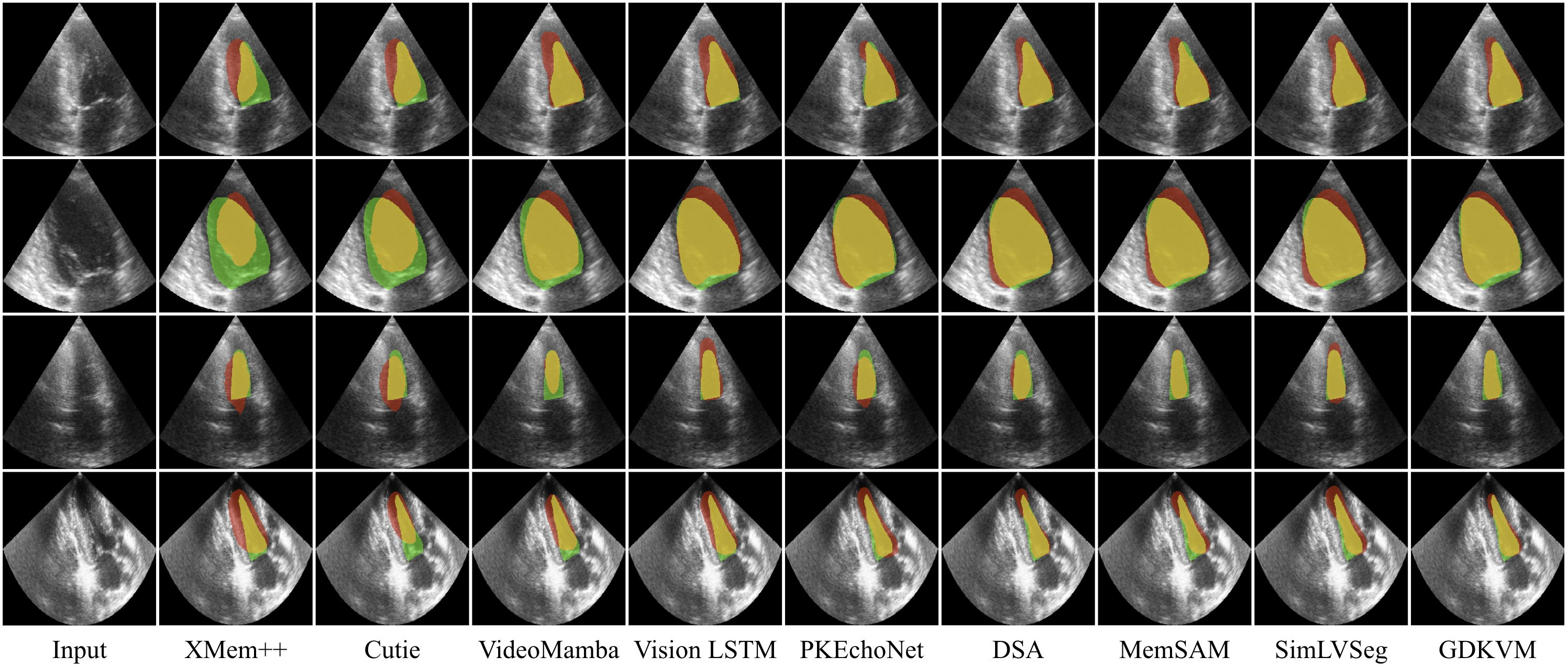

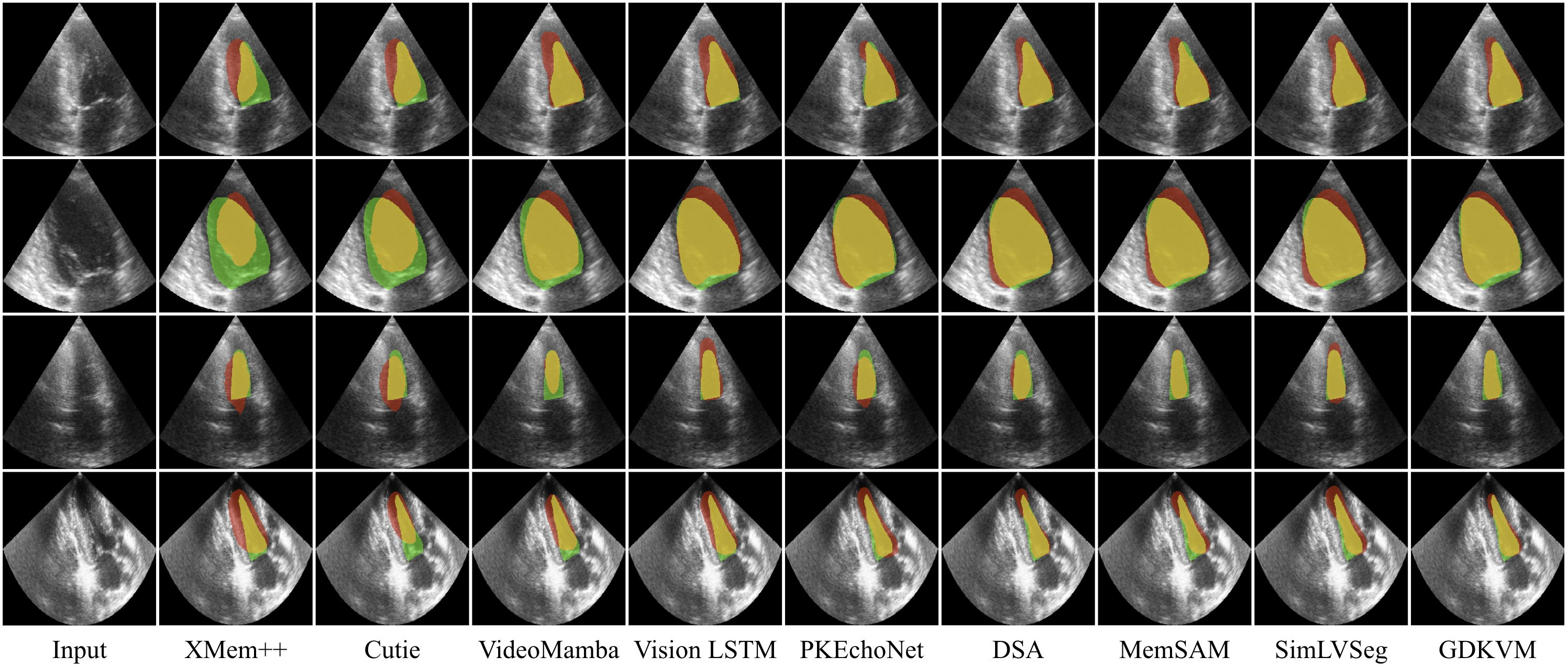

Qualitative Evaluation

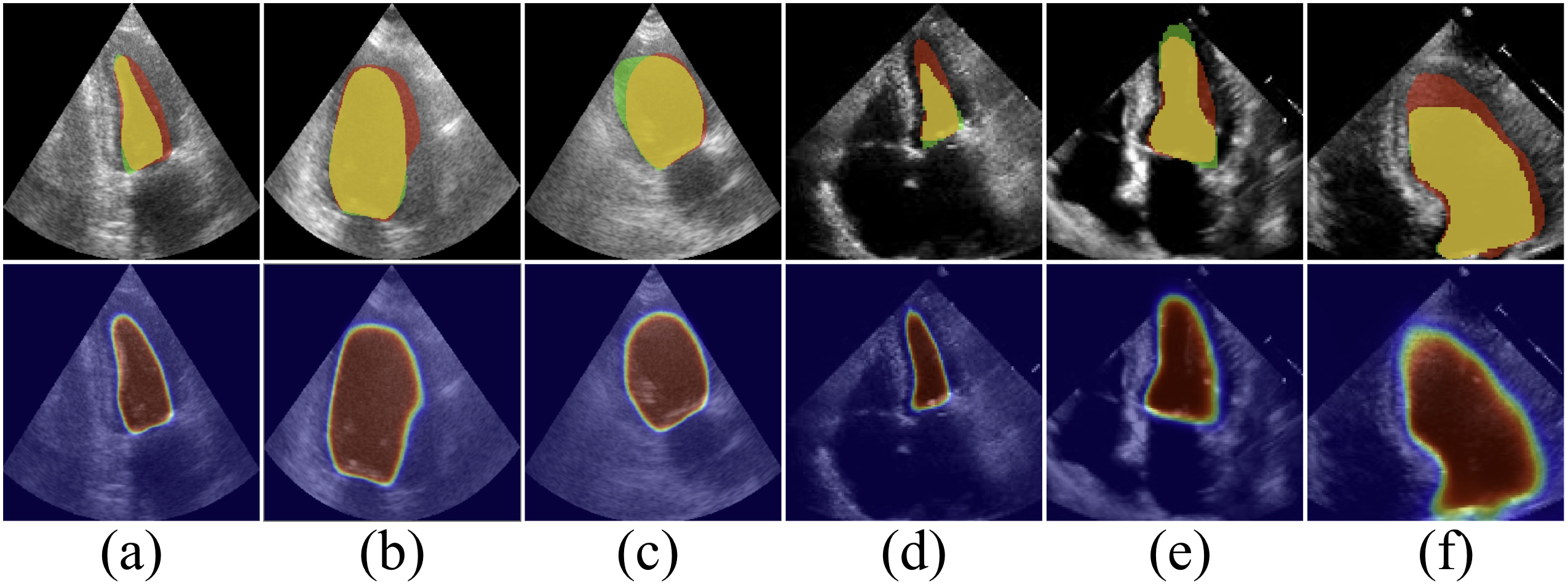

Visual assessment demonstrates that GDKVM masks align more closely with cardiac anatomy, reducing both over- and under-segmentation—problems that plague previous methods, especially on frames with significant deformation or low SNR.

Figure 5: Visual comparison on CAMUS. GDKVM produces fewer artifacts and tracks regional shape changes more precisely than SOTA benchmarks.

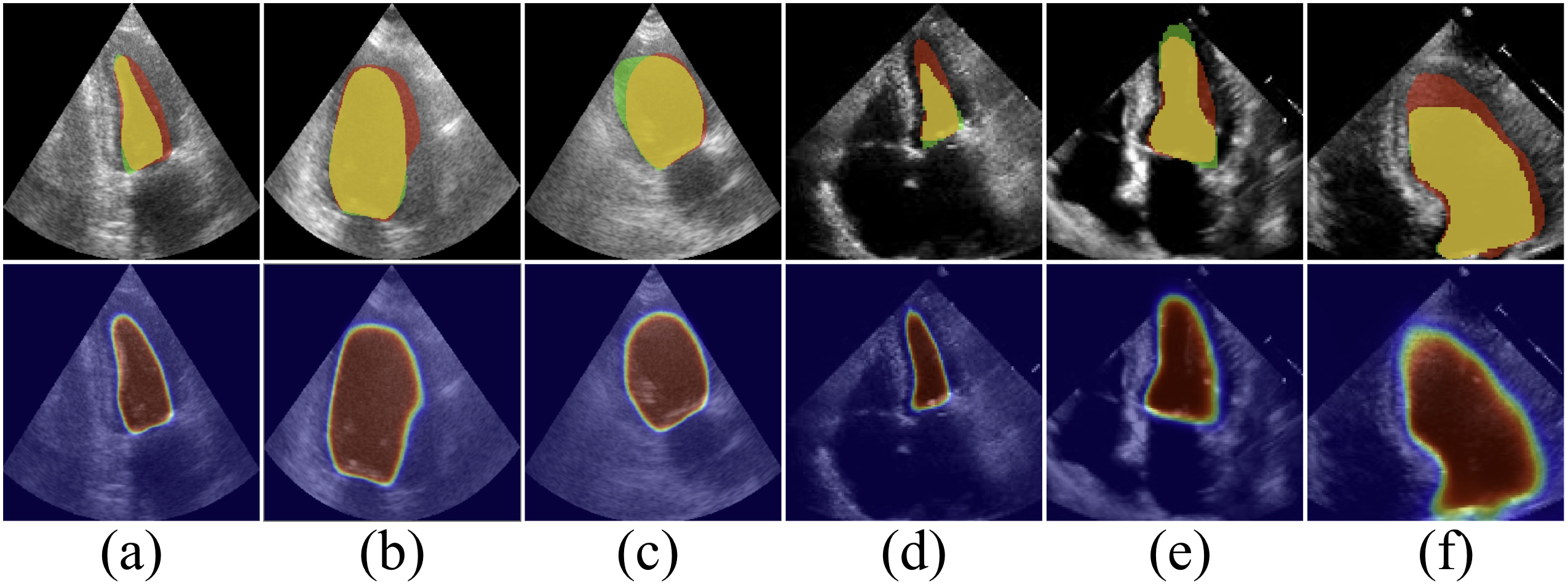

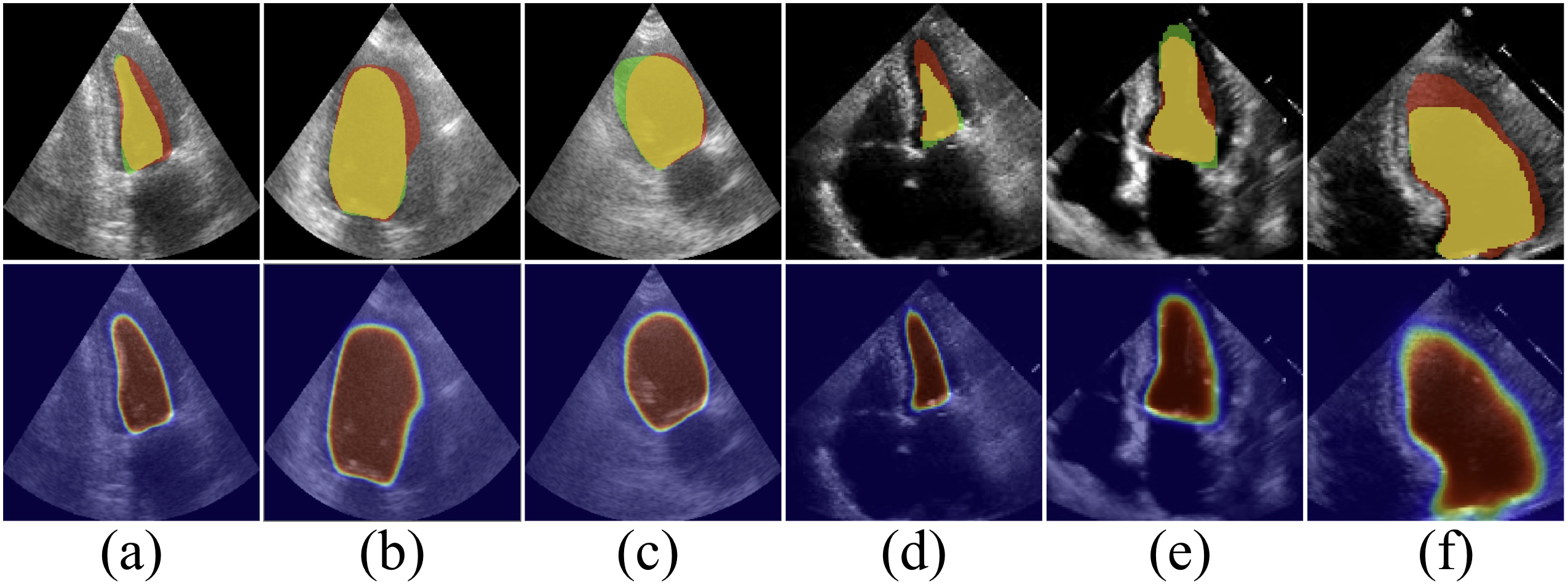

Ablation studies underscore the necessity of both GDR and KPFF. Removing gating (using baseline LKVA only) degrades Dice by nearly 2 points, while disabling KPFF amplifies the model’s vulnerability to image corruption. The gating parameters’ distributions reveal adaptive memory utilization aligned with cardiac phases.

Figure 6: Segmentation confidence heatmaps under different ablation strategies, highlighting GDR/KPFF impact on robustness.

Analysis and Limitations

The memory management strategy (state matrix shape, gating configuration) is well-justified for ultrasound, but further validation on more diverse medical video segmentation tasks, including those with longer-range dependencies and partial supervision, is required. Current square-matrix limitations restrict some forms of hardware parallelism. Some failure cases suggest that adaptive boundary refinement remains an open challenge.

Figure 7: GDKVM failure cases; rare instances of over-confident misalignment, typically in highly ambiguous regions.

Theoretical and Practical Implications

The introduction of the Gated Delta Rule as a general principle unifies efficient linear memory with learned, data-dependent gating, positioning GDKVM as a template for scalable video segmentation architectures in medical imaging and potentially in other domains where recurrent, context-sensitive memory is pivotal. KPFF’s design encourages further research into physiologically-driven fusion strategies, especially where fine-grained spatial cues are essential.

Beyond clinical applications, the architectural insights into learnable, selective memory update and multi-scale fusion can catalyze developments in efficient sequence modeling for other video and time-series tasks, including surveillance, robotics, and multi-modal perception.

Conclusion

GDKVM establishes a new line of research for efficient, robust echocardiography video segmentation by combining linear key-value association, gated memory decay, and multi-scale feature fusion. The architecture surpasses strong benchmarks in both segmentation fidelity and clinical index estimation, while preserving real-time applicability. GDKVM’s framework advocates broader adoption of adaptive gating principles and hybrid feature strategies for scalable video understanding, with clear avenues for extension in both medical and general sequential modeling contexts.

(2512.10252)