The resource theory of causal influence and knowledge of causal influence

Abstract: Understanding and quantifying causal relationships between variables is essential for reasoning about the physical world. In this work, we develop a resource-theoretic framework to do so. Here, we focus on the simplest nontrivial setting -- two variables that are causally ordered, meaning that the first has the potential to influence the second, without hidden confounding. First, we introduce the resource theory that directly quantifies causal influence of a functional dependence in this setting and show that the problem of deciding convertibility of resources and identifying a complete set of monotones has a relatively straightforward solution. Following this, we introduce the resource theory that arises naturally when one has uncertainty about the functional dependence. We describe a linear program for deciding the question of whether one resource (i.e., state of knowledge about the functional dependence) can be converted to another. Then, we focus on the case where the variables are binary. In this case, we identify a triple of monotones that are complete in the sense that they capture the partial order over the set of all resources, and we provide an interpretation of each.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

Overview

This paper is about understanding cause and effect in a simple situation: one thing (call it X) can influence another thing (call it Y), and there are no secret third things messing them both up. The authors build two “resource theories” — a careful way to measure and compare how strong the cause-effect link is, and how useful our knowledge about that link is. They focus on the simplest case to make the ideas clear and then show how to check and compare different situations.

Key Questions

The paper asks two main questions:

- If we know exactly how Y depends on X (like a fixed rule or function), how can we measure “how much” X really influences Y? When can we turn one rule into another using only allowed, safe steps?

- If we don’t know the exact rule, but we have a probability over several possible rules (some chance it’s rule A, some chance it’s rule B, etc.), how can we measure the usefulness of that knowledge? When can we safely turn one “state of knowledge” into another?

Methods and Approach

The authors use three core ideas and explain them with everyday analogies:

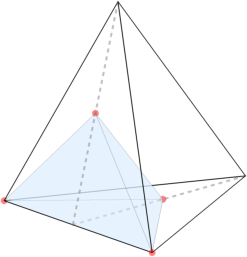

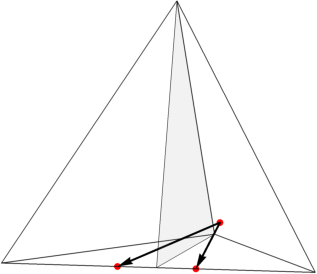

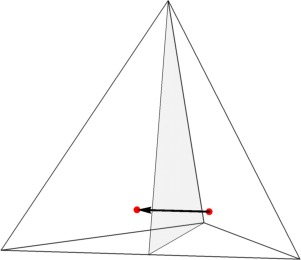

- Causal diagrams: Think of X as a wire going into a box, and Y as a wire coming out. The box is a “function” — a rule that tells Y what to be based on X. This makes cause and effect easy to picture.

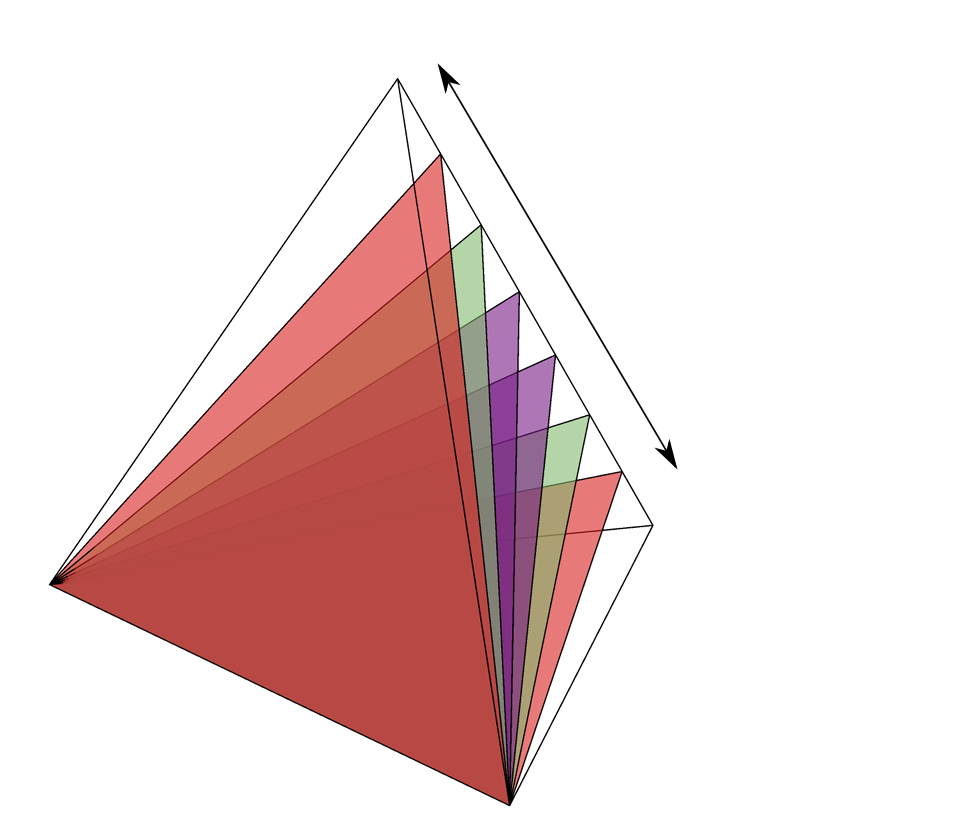

- Resource theory: Imagine abilities as “resources.” Free resources are things you can use for free and that don’t create new cause-effect power out of thin air. Allowed actions (free operations) are like safe tools: you can do pre-processing before the function, and post-processing after it, but you cannot add a new secret link that sneaks influence across the boundary.

- Two scenarios:

- Resource Theory of Causal Influence (RTCaus): The resource is a single, fixed function from X to Y.

- Resource Theory of Knowledge of Causal Influence (RTKnowCaus): The resource is your probability distribution over possible functions (your “state of knowledge” about what rule the world is using).

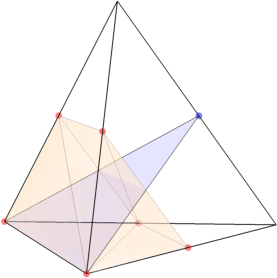

To make things concrete, they use a simple “communication channel” story: Alice sends a bit X through a channel, Bob receives a bit Y. The channel applies one of four simple functions:

- Identity (I): Y = X

- Flip (F): Y = not X

- Reset to 0 (R0): Y = 0, no matter X

- Reset to 1 (R1): Y = 1, no matter X

Sometimes the channel “leaks” a flag to the environment saying which function it used. If Bob learns the flag, he can react perfectly in some cases. If he doesn’t, he only knows the overall input–output statistics, which can hide important differences.

Technical tools explained simply

- Image size: For a function f, its “image” is the set of possible outputs it can produce when you vary inputs. Bigger image means more ways Y can depend on X — more causal influence.

- Linear program: This is a recipe in math that checks if one resource can be turned into another, by solving a set of simple constraints. Think of it like filling in a spreadsheet so all the rules are satisfied; if there’s a solution, conversion is possible.

Main Findings

1) RTCaus: When the function is known

- Free functions are ones that ignore X (resets like R0 or R1). They have zero causal influence because Y never depends on X.

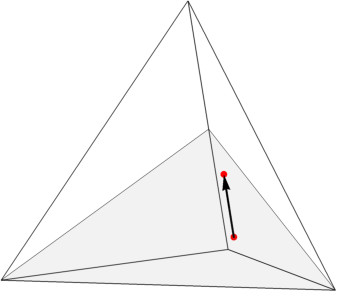

- Allowed actions (free operations) are pre-processing and post-processing that don’t add new cause-effect paths.

- Conversion rule: You can turn function f into function g by allowed actions if and only if the number of distinct outputs f can produce is at least as large as the number g can produce. In symbols, f → g if and only if |Im(f)| ≥ |Im(g)|.

- A simple score (monotone) that completely captures this is M(f) = log2(|Im(f)|). You can think of it as “bits of causal influence.”

- In the binary case (X and Y are bits), I and F have one bit of influence (two possible outputs), while R0 and R1 have zero (only one output).

2) RTKnowCaus: When the function is uncertain

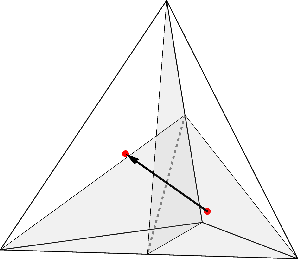

- The resource is now your probability distribution over functions (how likely the channel uses I, F, R0, R1, etc.).

- Allowed actions are now probability distributions over safe operations that also don’t create new cause-effect links.

- Conversion test: The authors show how to decide if one knowledge-state can become another using a linear program.

- Binary case result: Three specific scores (monotones) fully characterize the partial order — they tell you everything about which resources are more or less powerful: 1) Weight on causally connected functions: How much probability is on functions like I and F that actually let X influence Y. 2) Bias (polarization) between I and F: If the channel favors I over F (or vice versa), Bob can often guess X from Y better without learning the flag. 3) Postselection certainty of causal connectivity: Even if your overall guessing chance is the same, sometimes certain outputs guarantee the function was causally connected (e.g., seeing Y = 1 might prove the channel used F). This monotone captures that “certainty after filtering” idea.

These three together give a complete way to compare and classify knowledge about causal influence when X and Y are bits.

Why the “knowledge” view matters

Two different knowledge-states can produce the same observable statistics P(Y|X) but represent very different causal situations:

- 50% I + 50% F versus 50% R0 + 50% R1 both make Y look random regardless of X.

- But in the first case, if you could learn the flag, you could perfectly recover X every time.

- In the second case, even learning the flag doesn’t help: the channel ignored X completely.

- So the probability distribution over functions contains strictly more information about causality than the basic input–output statistics.

Implications and Impact

- Practical inference: This framework helps scientists, engineers, and data analysts compare and manage cause-effect mechanisms in a principled way, not just by looking at averages or correlations.

- Communication with leaks: If a channel leaks a “flag” about which function was used, knowing how much probability sits on causally connected functions, how biased those are, and how certain you can be after seeing specific outputs can guide smarter communication strategies.

- Foundations for bigger systems: While the paper focuses on the simplest case (just two variables, no hidden confounders), the approach lays groundwork for analyzing more complex causal networks in a structured, quantitative way.

- Better decisions under uncertainty: The linear-program test and the complete set of monotones in the binary case show that we can systematically answer “Can we safely convert this knowledge into that knowledge?” — useful in machine learning, physics, and any field where we learn mechanisms from data.

In short, the paper turns “cause-effect strength” and “knowledge about cause-effect” into measurable resources. It shows how to compare them, how to transform them safely, and how to understand what extra knowledge really buys you beyond raw input–output data.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following list highlights what remains missing, uncertain, or unexplored in the paper, stated concretely to guide future research:

- Generalization beyond two-node, no-confounding settings:

- Extend RTCaus and RTKnowCaus from a single causal edge () to arbitrary DAGs with multiple parents/children, path-dependent influences, and network composition.

- Incorporate confounders, mediators, and collider structures to test whether free operations and monotones still capture “no new causal influence” appropriately.

- Address cyclic/feedback structures and dynamic causal processes where acyclicity may fail.

- Choice and justification of free operations:

- Provide axiomatic justification (e.g., physical, operational, categorical) that the chosen free deterministic combs in RTCaus and their probabilistic analogs in RTKnowCaus are the unique or most natural “no-new-influence” operations.

- Explore alternative plausible free sets (e.g., allowing certain side-channels or postselection) and characterize how these change the induced pre-order and monotones.

- Completeness of monotones outside the binary case:

- In RTKnowCaus, the triple of monotones is shown complete only for binary variables; characterize complete monotone families for general finite alphabets (non-binary cardinalities).

- Determine whether a finite complete set of monotones exists for arbitrary alphabets; if not, specify minimal infinite families or functional bases.

- Operational interpretations and task-based benchmarks:

- Provide task-based characterizations (e.g., communication, prediction, control) for each monotone in non-binary settings, analogous to the interpretations given for the binary case.

- Link monotone values to concrete performance bounds in operational tasks (e.g., success probabilities, regret, decision-theoretic utilities), and verify tightness.

- Approximate and noisy convertibility:

- Develop an “ε-convertibility” framework allowing approximation errors in conversions (both RTCaus and RTKnowCaus), along with robust monotones that quantify degradation under noise.

- Analyze continuity, stability, and robustness of monotones with respect to small perturbations in distributions over functions or in observed statistics.

- Computational complexity and scalability:

- For RTKnowCaus, characterize the complexity of the linear program for convertibility (worst-case and average-case), and provide bounds on instance sizes as a function of alphabet cardinalities.

- Derive efficient algorithms, structural decompositions, or symmetry reductions that make convertibility tests tractable in larger alphabets or multi-edge settings.

- Structure of the pre-order and lattice properties:

- Determine whether the induced pre-order in RTKnowCaus (and RTCaus) forms a lattice under natural operations (e.g., join/meet via mixtures or composition), and characterize supremum/infimum constructs.

- Explore the existence of incomparable resources and quantify their prevalence beyond the binary case.

- Catalysis, activation, and asymptotic regimes:

- Investigate catalytic phenomena (resources that enable conversions without being consumed) and activation (combined resources enabling new conversions) in both RTCaus and RTKnowCaus.

- Define asymptotic resource rates (distillation/dilution) under many-copy limits, and establish additivity or superadditivity of monotones under tensor products.

- Composition rules and network-level behavior:

- Formalize how resources (functions or distributions over functions) compose across multiple edges in a DAG, including sequential and parallel composition, and characterize how monotones behave under composition.

- Study how knowledge-of-causal-influence propagates or aggregates in networks (e.g., along chains, forks, colliders), and whether monotones obey data-processing-like inequalities.

- Relationship to Shannon theory and other resource theories:

- Precisely relate RTKnowCaus convertibility and monotones to classical channel capacity, mutual information, and other Shannon-theoretic measures when the flag is unknown.

- Compare RTCaus/RTKnowCaus to resource theories of channels, entanglement, nonlocality, and contextuality; identify formal embeddings or separations and shared structural features.

- Empirical identifiability and estimation:

- Provide procedures to estimate the distribution over functions (response-function distribution) from finite data and interventions, and quantify identifiability conditions and sample complexity.

- Analyze how estimation uncertainty impacts the resource assessment and convertibility decisions (e.g., confidence intervals, robust LP formulations).

- Continuous and large-alphabet variables:

- Extend the framework to continuous variables and large alphabets, including measurability and integration issues for distributions over functions, and corresponding monotone definitions.

- Identify discretization schemes or functional parameterizations that preserve operational meanings while ensuring computational tractability.

- Non-deterministic causal mechanisms:

- The framework models stochasticity via distributions over deterministic functions; investigate direct handling of inherently stochastic causal mechanisms (non-deterministic structural equations) and their relation to the current modeling.

- Assess whether allowing stochastic functions as primitives changes the free operations or requires new monotones.

- Postselection and third monotone interpretation:

- Generalize and formalize the “postselection-based certainty about causal connectivity” interpretation of the third monotone beyond the binary setting, including necessary and sufficient conditions for equality cases.

- Quantify how postselection constraints (e.g., cost, availability) affect resource convertibility and monotone values.

- Uniqueness and characterization of monotones:

- Determine whether the identified monotones (especially in RTKnowCaus) are unique up to order-preserving transformations, and characterize the space of all monotones compatible with the free operations.

- Explore convexity, additivity, and extremality properties of monotones under mixing of resources.

- Higher-order resources:

- The paper defers analysis of partial orders over deterministic combs and distributions over combs; develop the theory for these higher-order resources, including their free operations and monotones.

- Clarify operational tasks where higher-order resources are primary (e.g., adaptive protocols, meta-inference) and connect to their resource characterizations.

- Practical protocols and environment access:

- Model the costs and constraints of extracting the flag variable (environmental information), including noisy or partial access, and integrate these into the resource theory via constrained free operations.

- Provide explicit protocols that transform knowledge resources under realistic limitations (e.g., bounded side-channel capacity, limited interventions).

- Connection to causal discovery and counterfactuals:

- Link resource assessments to causal discovery guarantees (e.g., under what conditions does resource convertibility imply identifiability of mechanisms or counterfactual predictions).

- Formalize how counterfactual tasks map onto resource monotones and free operations, especially when only partial mechanism knowledge is available.

- Experimental validation:

- Propose empirical tests (synthetic or real-world) to evaluate whether resource monotones correlate with task performance in communication or inference scenarios as claimed, and assess deviations in practical settings.

Practical Applications

Practical Applications of “The resource theory of causal influence and knowledge of causal influence”

Below are practice-oriented applications that flow from the paper’s core results: (i) a resource theory for deterministic causal influence between two variables (RTCaus), fully characterized by the image-size monotone M(f)=log2|Im(f)| and a total order; and (ii) a resource theory for knowledge of causal influence (RTKnowCaus) where resources are distributions over functions, with (for binary variables) a complete set of three monotones and a linear program (LP) to decide resource convertibility. The paper also introduces a “flagged channel” perspective clarifying when knowledge about the mechanism itself (beyond P(Y|X)) enables value-of-information decisions.

Immediate Applications

These can be deployed with current methods, especially in binary settings and small finite alphabets.

- Industry (Software/ML): mechanism-aware A/B testing and experimentation

- Use case: Different product mechanisms can produce the same average lift P(Y|X) but differ in causal influence. Use RTKnowCaus monotones to:

- Prioritize variants that preserve causal connectivity (higher “weight on causally connected functions”).

- Decide whether to invest in additional logging/telemetry to disambiguate mechanisms (value-of-information).

- Tools/workflows: Add a module that (i) estimates a distribution over functions consistent with experimental design, (ii) computes the three binary monotones, and (iii) runs the LP to test convertibility under allowed pre/post-process operations.

- Assumptions/dependencies: Two-variable focus; finite alphabets (binary best-supported); no confounding; ability to model pre/post-processing as “free” operations in the business pipeline.

- Communications/Networking: channel coding with partial side information (flagged channels)

- Use case: When a channel’s behavior switches among deterministic modes (identity/flip/reset), use monotones to:

- Quantify how much benefit arises from acquiring partial or full mode information from the environment.

- Decide when to deploy side-channel probing (e.g., pilot signals, feedback) because the knowledge will translate into perfect or near-perfect decoding (high weight on identity/flip and high polarization).

- Tools/workflows: Mechanism-probing procedures; adaptive coding schemes conditioned on estimated mode mixtures; LP-based convertibility checks to see if pre/post-processing can turn one channel characterization into a more usable one.

- Assumptions/dependencies: Mode-switching channels with discrete modes; feasible to extract/estimate mode flags; negligible confounding; stable mechanism during probing.

- Academia (Causal Inference/ML): new evaluation metrics beyond P(Y|X)

- Use case: When two models or interventions induce the same conditional distribution but different distributions over response functions, use the three monotones to refine evaluation:

- Metric 1: weight on causally connected functions (how often X actually influences Y).

- Metric 2: polarization between connected functions (how biased the causal mapping is, impacting guessability from Y).

- Metric 3: postselection-based certainty of causal connectivity (how often one can be sure a specific run had causal connection after observing Y).

- Tools/workflows: Research libraries exposing “mechanism monotones” for binary cases; benchmarks comparing interventions with identical P(Y|X) but different causal mechanisms.

- Assumptions/dependencies: Binary focus for completeness; careful experimental design to learn distributions over functions (e.g., via interventions or instrumental variables).

- Healthcare/Clinical Ops: trial design and biomarker prioritization

- Use case: Two treatments yield the same outcome distribution, but differ in how often the treatment causally influences outcomes and in how reliably that influence can be detected post hoc. Use monotones to:

- Decide whether to collect extra biomarkers or run sub-studies to uncover mechanism mixtures (e.g., identity vs reset-like behavior).

- Prioritize interventions with higher causal connectivity and polarization when actionable bedside decisions rely on quick inference.

- Tools/workflows: Trial planning tools that simulate distributions over functions given plausible biological mechanisms; value-of-information calculators tied to monotones.

- Assumptions/dependencies: Low-confounding settings (or good instruments); variable discretization or binning; clear mapping of clinical mechanisms to deterministic components.

- Robotics/Operations: mode-aware control under mechanism uncertainty

- Use case: Robot dynamics switch across discrete modes (e.g., terrain/contact conditions). RTKnowCaus monotones quantify:

- Whether sensing to identify the active mode materially improves control (high weight on causally connected modes).

- How much can be inferred about prior state/action from observed feedback without extra sensing (polarization).

- Tools/workflows: Mode-identification policies gated by monotone thresholds; LP-based checks to see if permitted pre/post-processing (filters, remappings) convert a harder mechanism mixture into an easier one.

- Assumptions/dependencies: Discrete-mode abstraction; reliable estimation of mixture over deterministic maps; negligible hidden confounding between action and mode.

- Finance/Econometrics: regime detection for decision rules

- Use case: Trading or credit decision policies face regime switches. Two policies can give same average outcomes but differ in causal connectivity. Use monotones to:

- Decide when to invest in regime-sensing signals (e.g., macro tags) based on expected gain from converting knowledge into better actions.

- Tools/workflows: Backtesting engines enhanced with mechanism-metric reporting; LP-based dominance checks among policy mechanisms under allowed transformations.

- Assumptions/dependencies: Discrete regime modeling; capacity to intervene or observe proxies; stable mapping over test windows.

- Policy/Regulation: reporting standards for causal claims

- Use case: Require mechanism-aware reporting when asserting causal impact; equal P(Y|X) is insufficient. Regulators can:

- Recommend disclosure of mechanism uncertainty as distributions over functions and monotones for binary endpoints.

- Encourage value-of-information analysis for proposed monitoring or subgroup analysis.

- Tools/workflows: Guidance templates including monotone summaries; audit checklists to ensure mechanism identification attempts where practical.

- Assumptions/dependencies: Feasible interventions or instruments; clear notion of free transformations relevant to the domain.

- Daily Life/Decision-Making: practical value-of-information heuristics

- Use case: When deciding whether to seek additional information before acting (e.g., testing a device feature, checking a condition), use:

- “Weight on causal connectivity” to judge if additional checking could convert uncertainty into highly reliable decisions.

- “Polarization” to assess whether simple heuristics (e.g., act as if Y≈X) are already good enough without extra info.

- Tools/workflows: Lightweight decision aids or checklists framing decisions in terms of “mechanism-connectivity” and “bias.”

- Assumptions/dependencies: Coarse binary abstractions of actions/outcomes; user can observe a proxy of Y and optionally seek a flag-like cue.

Long-Term Applications

These need further research, scaling beyond two variables, handling confounding, or moving beyond finite/discrete cases.

- General multi-variable causal systems and DAGs (industry/academia)

- Potential: Extend RTCaus/RTKnowCaus from a single edge X→Y to arbitrary DAGs, enabling:

- End-to-end mechanism-aware evaluation of complex systems (software stacks, supply chains, multi-organ physiological systems).

- Network-level value-of-information policies (which sensors/interventions most improve mechanism knowledge globally).

- Tools/products: Libraries for resource orders on DAGs; multi-edge monotones; graph-wide LP/convex programs for convertibility.

- Dependencies: Nontrivial generalization of free operations; efficient characterizations of partial orders; scalability.

- Confounding and partially observed structures

- Potential: Integrate hidden confounders into the resource-theoretic picture (e.g., extend beyond local error-only models).

- Tools/products: Identifiability conditions to reconstruct distributions over functions under confounding; robust monotones.

- Dependencies: New identifiability theory; interventional data; careful modeling of free operations under confounding.

- Continuous variables and stochastic mechanisms

- Potential: Move from finite/binary to continuous outcomes and to non-deterministic structural equations (beyond mixtures of deterministic functions).

- Tools/products: Measure-theoretic generalizations; approximate/relaxed monotones; numerical optimal-transport analogs.

- Dependencies: Advanced functional analysis; tractable approximations; domain-specific discretizations.

- Real-time adaptive control and safe autonomy (robotics/transport)

- Potential: Closed-loop controllers that compute mechanism monotones online to trigger sensing/actions (e.g., determine when to confidently infer past state from current observation without extra sensing).

- Tools/products: Embedded LP/convex solvers; on-board estimators of distributions over functions; certification routines.

- Dependencies: Real-time computation; stable estimation under noise; safety constraints; certifications for autonomy.

- Healthcare policy and adaptive trials

- Potential: Trial platforms that adaptively collect biomarkers or stratify patients based on estimated mechanism monotones, prioritizing causal-connectivity-preserving treatments.

- Tools/products: Trial design optimizers that trade budget against expected mechanism resolution; regulatory frameworks adopting mechanism reporting.

- Dependencies: Ethical/regulatory acceptance; robust estimation of function-distributions; integration with electronic health records.

- Privacy, security, and explainability under “flag leaks”

- Potential: Treat environmental leakage of mechanism flags as a privacy/security channel. Balance utility (better decoding) against leakage risks.

- Tools/products: Policies that cap or audit mechanism leakage; explainability layers that report mechanism certainty without exposing sensitive flags.

- Dependencies: Formal privacy models; field-specific constraints (e.g., medical data regulations).

- Fairness and algorithmic accountability

- Potential: When different mechanisms yield same aggregate outcomes across groups, monotones can reveal disparate causal connectivity or postselection certainties that affect downstream equity.

- Tools/products: Fairness diagnostics that go beyond outcome parity to mechanism parity; compliance dashboards.

- Dependencies: Ability to estimate mechanism distributions by group; careful causal assumptions; stakeholder alignment.

- Dataset shift and robustness in ML

- Potential: Detect shifts not just in P(Y|X) but in distributions over mechanisms; design interventions or data collection to preserve or recover desirable connectivity.

- Tools/products: Shift detectors for mechanism mixtures; retraining curricula that target mechanism monotones.

- Dependencies: Interventional or auxiliary data; scalable estimation of mechanism mixtures in high dimensions.

- Standardization and education

- Potential: Sector-wide standards to report “mechanism resource summaries” (monotones, convertibility claims) alongside conventional metrics.

- Tools/products: Curriculum modules for causal mechanism resource thinking; benchmark datasets with labels at the “function-distribution” level.

- Dependencies: Community adoption; tooling support; demonstrable wins over status quo.

In all cases, feasibility depends on core assumptions highlighted by the paper:

- Theoretical scope: results are exact in the two-variable, no-confounding, finite (binary-complete) setting.

- Identification: estimating a distribution over functions from data often requires interventions or strong instruments; observational data alone may be insufficient.

- Free operations: value propositions assume realistic pre/post-processing analogs in the target domain; misalignment can limit convertibility claims.

- Computation: the linear program is tractable for binary/small alphabets; scaling requires algorithmic advances or approximations.

These applications outline a path from immediate, mechanism-aware metrics and decisions to a more ambitious program: mechanism resource accounting across complex systems, with principled value-of-information planning and governance.

Glossary

- Bipartition: A division of systems into two parts (e.g., sender and receiver) used to analyze cause-effect connections across the split. "All of the processes we consider admit of a bipartition of the involved systems between a sender and a receiver."

- Causal connectivity: The presence of a nontrivial cause-effect relationship between variables. "Bob has certainty that there was causal connectivity in at least some specific rounds"

- Causal inference: The study of learning causal models and relationships from data and interventions. "Progress in causal inference has shown that it is possible to learn about the causal model from observations and interventions"

- Causal-inferential framework: A formalism that combines causal processes with inferential (knowledge) structures. "We represent causal relationships between variables using a graphical framework motivated by process theories and the causal-inferential framework~\cite{omlet}."

- Causal mechanism: The specific functional relationship or process by which one variable influences another. "two different causal mechanisms are consistent with the same observational data."

- Causal structure: The arrangement of variables and directed influences among them, independent of specific parameter values. "A causal structure can be represented as a directed acyclic graph (DAG)"

- Causally disconnected: A situation where the output is independent of the input, indicating no causal influence. "we say that and are causally disconnected."

- Causally ordered: An ordering of variables where earlier ones can influence later ones without hidden confounding. "two variables that are causally ordered, meaning that the first has the potential to influence the second, without hidden confounding."

- Conditional probability distribution: A distribution describing the probabilities of an output given an input. "the best characterization of the communication channel that they can have is the conditional probability distribution "

- Confounder: An unobserved variable that influences multiple observed variables, potentially creating spurious associations. "In this work, we will only study causal structures without confounders."

- Cost-construction: A method to build resource monotones from a function and a set of resources via optimization. "One method for constructing resource monotones is called the cost-construction"

- Counterfactuals: Hypothetical outcomes under alternative interventions or conditions. "This formulation eliminates the ambiguity between causation and inference and permits reasoning about counterfactuals."

- Deterministic comb: A higher-order operation that maps one function (channel) to another via pre- and post-processing. "A deterministic comb is an operation taking a function into another function "

- Directed acyclic graph (DAG): A graph with directed edges and no cycles, used to represent causal structures. "A causal structure can be represented as a directed acyclic graph (DAG) where nodes correspond to random variables"

- Downward closure: The set of all resources reachable from a given resource via free operations. "For any resource , the set of all resources to which it can be converted is called its downward closure."

- Equivalence classes: Sets of resources that can be converted into each other using free operations. "Resources that are mutually interconvertible form equivalence classes."

- Flag variable: An auxiliary variable indicating which specific function/mechanism is applied by a channel on each run. "encoded in what we call a flag variable"

- Free deterministic comb: A comb that does not introduce new causal influence across the bipartition, consisting only of pre- and post-processing. "The free deterministic combs consist of the set wherein there is a pre-processing of the input of and a post-processing of the output of "

- Free operations: Operations allowed at no cost in a resource theory; they cannot increase the resource. "When a free process is used to achieve interconversion of one resource into another, it is referred to as a free operation."

- Free resources: Processes assumed to be freely available, forming the baseline of a resource theory. "free processes, which are assumed to be freely available and constitute the free resources"

- Functional causal model: A causal model specified by a causal structure, deterministic functions for observed variables, and distributions over unobserved variables. "A functional causal model is defined as the specification of a causal structure (represented by a DAG or a string diagram) together with a set of functions for each observed variable and a probability distribution over each unobserved variable."

- Functional dependence: The deterministic mapping from input variables to output variables within a causal mechanism. "the exact functional dependence characterizing their relation is unknown"

- Linear program: An optimization formulation with linear objective and constraints used to decide resource convertibility. "We describe a linear program for deciding the question of whether one resource (i.e., state of knowledge about the functional dependence) can be converted to another."

- Monotone: A real-valued function that does not increase under free operations, used to certify non-convertibility. "A monotone is a real-valued function that is non-increasing under free operations"

- Partial order: An ordering over equivalence classes where some pairs may be incomparable. "The set of these equivalence classes, along with the induced conversion relation between them, forms a partial order."

- Postselection: Conditioning analyses on specific observed outcomes to extract information about mechanisms or connectivity. "the third monotone only quantifies his certainty about the causal connectivity after postselection on some specific value of "

- Pre-order: A reflexive and transitive relation induced by convertibility via free operations. "These relations define a pre-order on the set of resources"

- Process theories: Abstract frameworks where processes (states, channels, etc.) and their compositions are the primary objects. "motivated by process theories~\cite{coecke2018picturing,Selby2021,gogioso2017fantastic}"

- Probability distribution over functions: A distribution that encodes uncertainty over which deterministic function is applied from inputs to outputs. "this dependence is represented by a probability distribution over functions"

- Resource convertibility: The possibility of transforming one resource into another using only free operations. "We show that resource convertibility in this setting can be decided using a linear program."

- Resource monotone: A function used to quantify resources that cannot increase under free operations. "A useful tool in analyzing resource conversion is the notion of a resource monotone."

- Resource Theory of Causal Influence (RTCaus): The resource theory where deterministic functional dependences are the resources. "the Resource Theory of Causal Influence, denoted by "

- Resource Theory of Knowledge of Causal Influence (RTKnowCaus): The resource theory where probability distributions over functions are the resources. "the Resource Theory of Knowledge of Causal Influence, denoted by "

- Shannon theory: The resource theory framework for communication channels when only conditional distributions are known. "the relevant resource theory for the case where Alice and Bob do not have any information about the flag variable is Shannon theory"

- Side-channel: An auxiliary perfect communication channel used to aid characterization of another channel. "perfect communication channel termed a side-channel"

- Stochastic map: A probabilistic mapping from inputs to outputs induced by (possibly random) functions. "the same stochastic map from to "

- String diagram: A graphical representation of processes where wires are variables and boxes are operations or functions. "Equivalently, it can be represented as a string diagram"

- Universal function applier: A process that applies whichever deterministic function is specified by a program input to the data input. "may be termed a universal function applier"

Collections

Sign up for free to add this paper to one or more collections.