- The paper introduces a deep learning benchmark that fuses SAR, PMW, and climate model data to create high-resolution, daily meltwater maps.

- Deep CNN models like UNet-SMP achieved 95% pixelwise accuracy, reducing MAE by 40% and outperforming traditional interpolation methods.

- The approach provides a robust framework for geospatial downscaling, enhancing monitoring of glaciological processes and sea-level rise projections.

MeltwaterBench: Deep Learning for Spatiotemporal Downscaling of Surface Meltwater

Introduction

"MeltwaterBench: Deep learning for spatiotemporal downscaling of surface meltwater" (2512.12142) introduces a benchmark and methodological framework for generating high-resolution, daily maps of surface meltwater over the Helheim Glacier sector, East Greenland, by fusing data from SAR, passive microwave (PMW), and regional climate model outputs. The authors systematically evaluate deep learning and traditional approaches for spatiotemporal downscaling. They target the trade-off between spatial and temporal resolution in meltwater mapping, a gap that limits the quantification of ice-sheet hydrological processes and sea-level rise projections.

Study Area and Data Synthesis

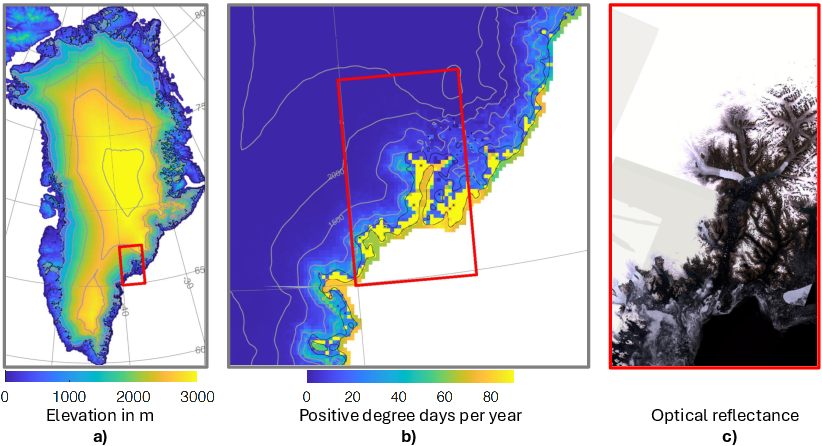

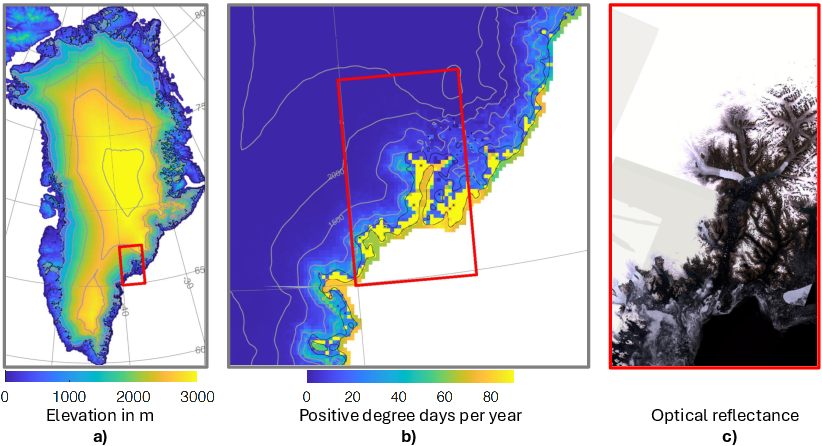

The focus area is the Helheim Glacier and adjacent Sermilik Fjord region, a well-monitored sector with complex topography and high relevance for Greenland Ice Sheet (GrIS) mass loss processes.

Figure 1: Delineation of the study area, surface elevation, melt season air temperature statistics, and Landsat-derived satellite mosaic.

The paper synthesizes core daily inputs at 100 m resolution for the 2017–2023 melt seasons:

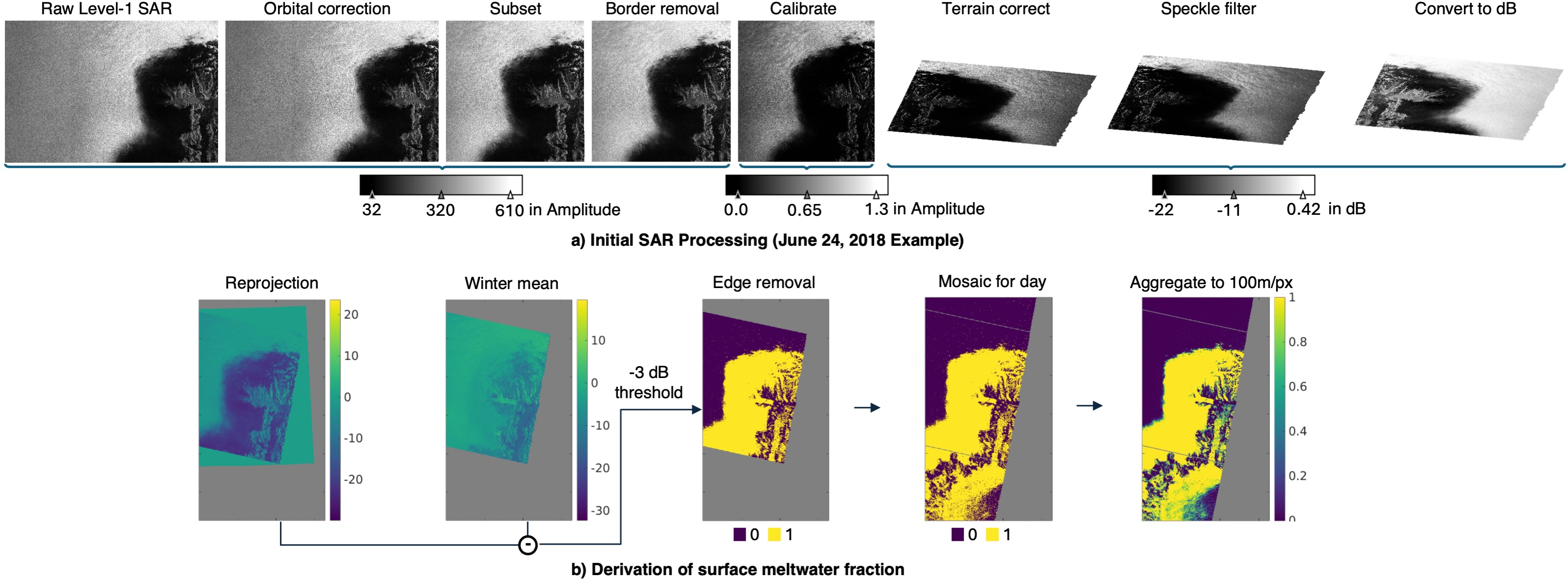

- Sentinel-1 SAR for surface meltwater fraction “ground truth,” mosaicked and aggregated to 100 m via established thresholding of backscatter with respect to winter means.

- PMW (SSMIS) brightness temperature at ∼3–25 km resolution.

- MARv3.14 RCM outputs (notably, liquid water content to 1 m), reprojected to 100 m grids.

- High-resolution DEM and land-ocean masks.

Critical artifacts (SAR swath gaps, atmospheric and sensor-driven anomalies) are systematically masked. The resulting dataset has ~63% invalid pixels per map, which underscores the interpolation challenge.
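The thresholding and aggregation step described above can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the function name `melt_fraction`, the `-3.0` dB threshold, and the block size of 10 fine pixels per 100 m cell are all hypothetical placeholders, and the paper's actual preprocessing involves further calibration and masking steps.

```python
import numpy as np

def melt_fraction(sigma0_db, winter_mean_db, valid, threshold_db=-3.0, block=10):
    """Aggregate a binary melt mask on the fine SAR grid to a coarse melt fraction.

    sigma0_db, winter_mean_db: 2-D backscatter arrays in dB on the fine grid.
    valid: boolean array, False where swath gaps or anomalies were masked.
    threshold_db: assumed backscatter drop (relative to winter mean) that flags melt.
    block: assumed number of fine pixels per coarse pixel along each axis.
    """
    # A pixel is flagged as melting when backscatter falls below its winter mean
    # by more than the threshold.
    melt = (sigma0_db - winter_mean_db) < threshold_db

    # Crop to a multiple of the block size, then view as (rows, block, cols, block).
    h, w = melt.shape
    h, w = h - h % block, w - w % block

    def blocks(a):
        return a[:h, :w].reshape(h // block, block, w // block, block)

    n_valid = blocks(valid).sum(axis=(1, 3))
    n_melt = blocks(melt & valid).sum(axis=(1, 3))

    # Fraction of valid fine pixels that melt; NaN where a coarse cell has no valid pixel.
    frac = np.full(n_valid.shape, np.nan)
    np.divide(n_melt, n_valid, out=frac, where=n_valid > 0)
    return frac
```

Coarse cells without any valid fine pixel stay `NaN`, so downstream code can separate "no melt" from "no observation".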

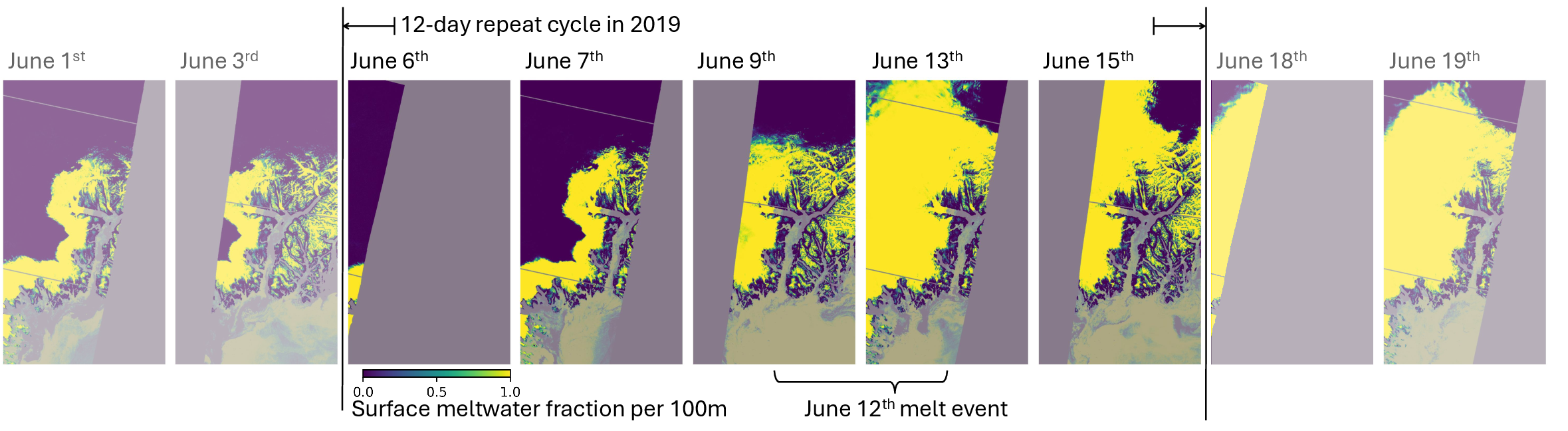

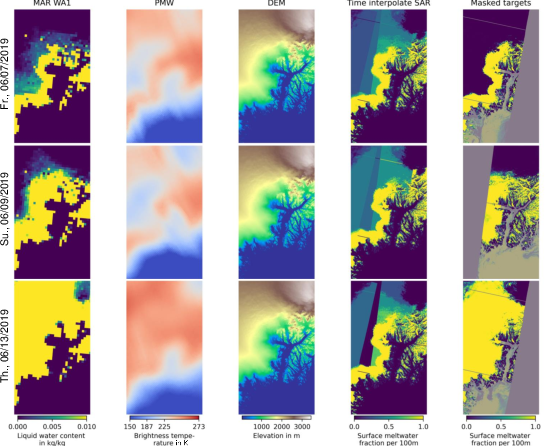

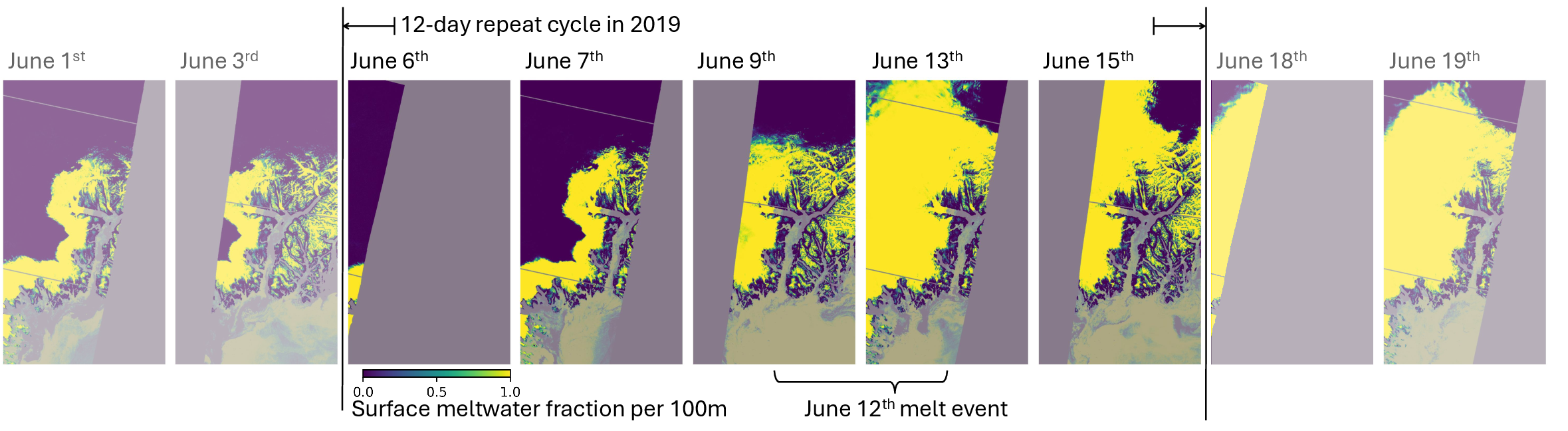

Figure 2: SAR-retrieved surface meltwater fractions during a rapid 2019 melt event, illustrating both physical melt expansion and satellite coverage artifacts.

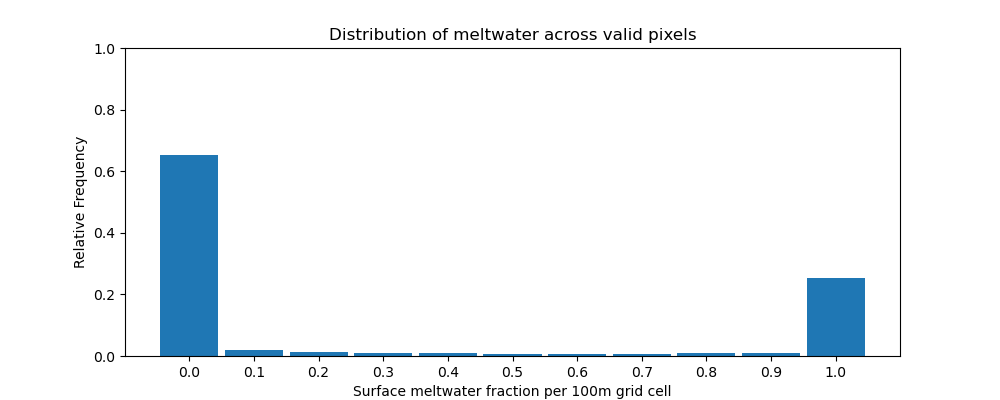

The “MeltwaterBench” dataset is composed of spatiotemporally aligned, multi-source GeoTIFFs and evaluation splits for reproducible ML experiments. The primary prediction target is the daily 100 m SAR-derived map of meltwater fraction, whose distribution is distinctly bimodal and imbalanced and whose spatial and temporal coverage is limited.

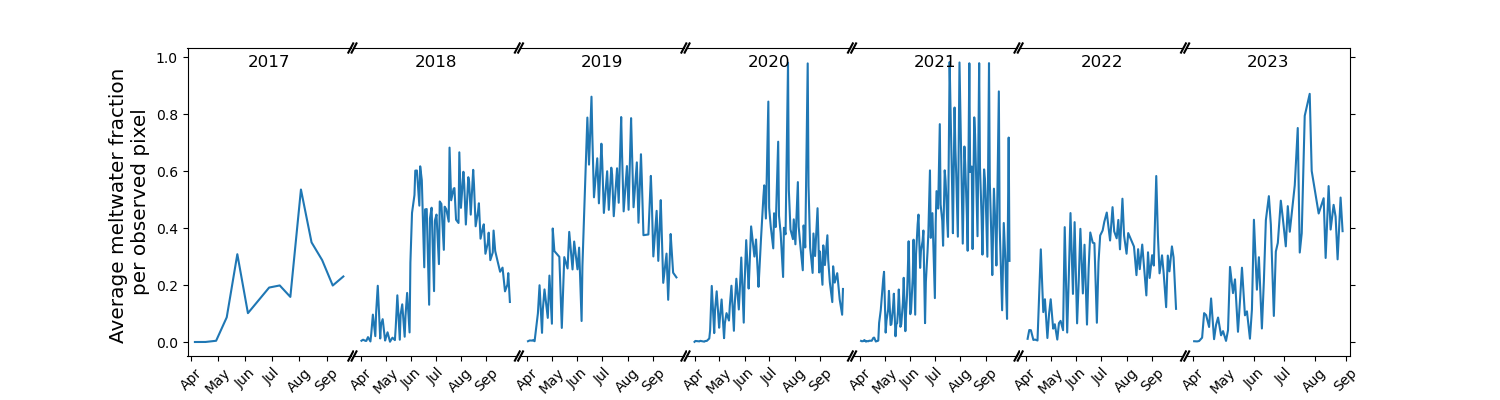

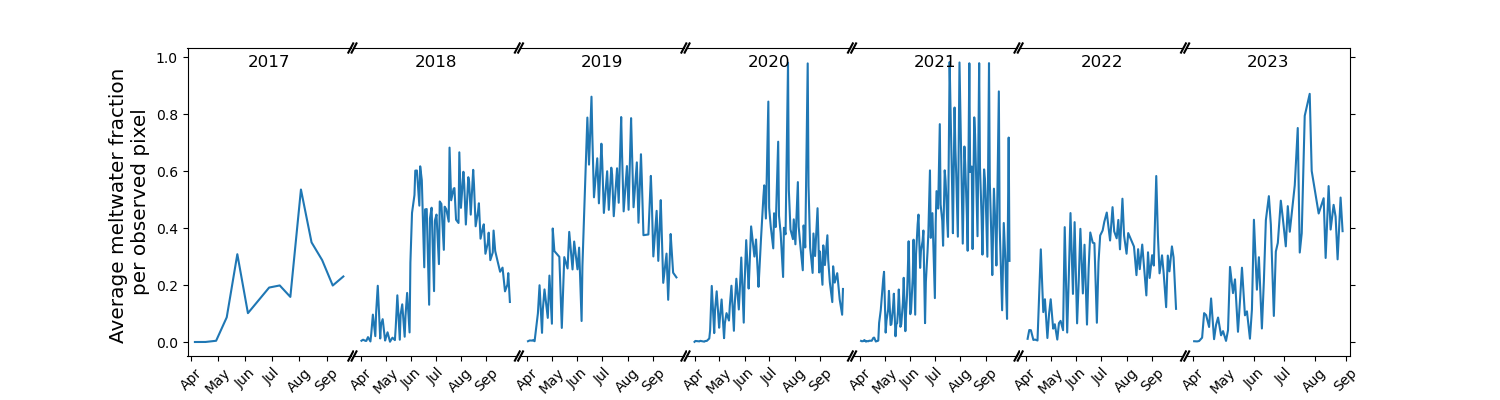

Figure 3: Daily average surface meltwater fraction from SAR, highlighting the temporal sparsity and high event-to-event variability, including prominent melt events.

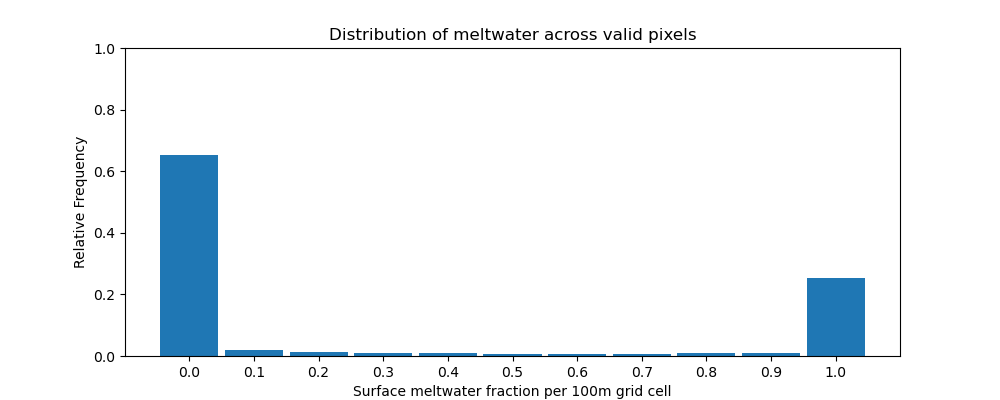

Figure 5: Histogram of surface meltwater target values, emphasizing the predominance of no-melt pixels and informing classification thresholds.

Data Fusion Approach and Deep Learning Models

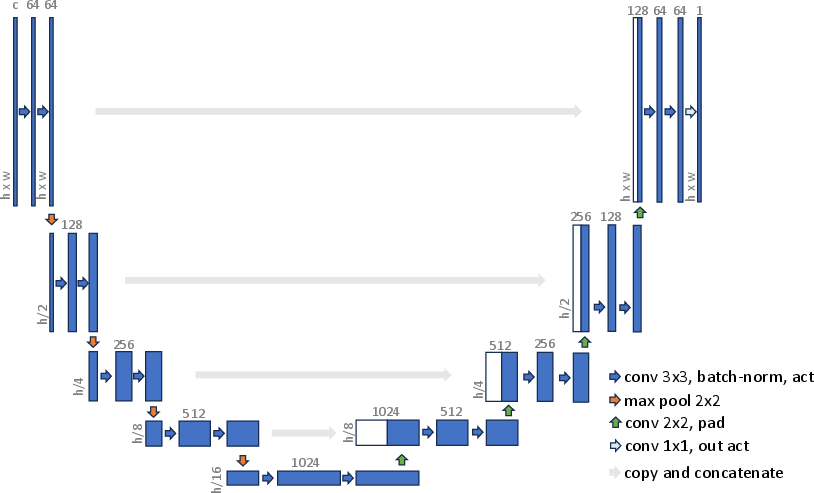

The methodological core consists of training CNN-based architectures to learn a mapping from low-resolution MAR and PMW, static DEM, and SAR-derived running means to high-resolution SAR targets, leveraging both spatial and temporal context.
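One way to assemble such a multichannel input is sketched below. It assumes all fields are already co-registered on the same grid. The 7-day window, the exclusion of the target day from the running mean, the zero-filling of gaps, and the extra "observed" mask channel are illustrative assumptions rather than details confirmed by the paper.

```python
import numpy as np

def sar_running_mean(sar_stack, day, window=7):
    """NaN-aware mean of the SAR maps in the `window` days before `day`.

    sar_stack: array of shape (days, H, W) with NaN where SAR has no valid data.
    The target day itself is excluded so the input does not leak the target.
    """
    past = sar_stack[max(0, day - window):day]
    count = np.sum(~np.isnan(past), axis=0)
    total = np.nansum(past, axis=0)
    mean = np.full(total.shape, np.nan)
    np.divide(total, count, out=mean, where=count > 0)
    return mean

def build_input(mar, pmw, dem, sar_stack, day, window=7):
    """Stack co-registered 2-D fields into a (channels, H, W) float32 array."""
    sar_mean = sar_running_mean(sar_stack, day, window)
    observed = ~np.isnan(sar_mean)
    channels = [
        mar,                               # climate model field, upsampled to the target grid
        pmw,                               # PMW brightness temperature, upsampled
        dem,                               # static elevation
        np.nan_to_num(sar_mean, nan=0.0),  # recent SAR mean with gaps filled by 0
        observed.astype(float),            # 1 where the SAR mean is backed by data
    ]
    return np.stack(channels).astype(np.float32)
```

The mask channel lets a network distinguish a gap filled with zero from an observed melt fraction of zero.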

Evaluation Protocol

Test and validation splits are stratified monthly to maximize representation of melt season heterogeneity, avoiding year-based leakage and supporting robust skill attribution to both interpolation and superresolution.
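A month-stratified split of this kind could look like the sketch below. The split fractions, the random per-day assignment, and the function name `monthly_stratified_split` are assumptions made for illustration; the benchmark ships its own fixed splits, which should be used for any comparison.

```python
import random
from collections import defaultdict
from datetime import date, timedelta

def monthly_stratified_split(days, val_frac=0.1, test_frac=0.1, seed=0):
    """Assign days to train/val/test so every calendar month appears in each split.

    days: iterable of datetime.date objects, possibly spanning several years.
    Days are grouped by calendar month (pooled across years), shuffled with a
    fixed seed, and a fixed fraction of each group goes to val and test.
    """
    rng = random.Random(seed)
    by_month = defaultdict(list)
    for d in days:
        by_month[d.month].append(d)

    split = {"train": [], "val": [], "test": []}
    for month in sorted(by_month):
        group = sorted(by_month[month])
        rng.shuffle(group)
        n_val = round(len(group) * val_frac)
        n_test = round(len(group) * test_frac)
        split["val"] += group[:n_val]
        split["test"] += group[n_val:n_val + n_test]
        split["train"] += group[n_val + n_test:]
    return split

# Usage: one melt season of daily maps.
season = [date(2019, 6, 1) + timedelta(days=i) for i in range(92)]
parts = monthly_stratified_split(season)
```

Because consecutive days are strongly correlated, a stricter variant might hold out contiguous blocks of days within each month instead of individual days.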

Metrics include:

- Pixelwise MAE, MSE, RMSE

- Structural similarity (SSIM)

- Binary classification accuracy, F1, precision, recall at physically motivated thresholds

All computations are restricted to valid land pixels.
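The pixelwise and classification metrics above can be computed on valid land pixels roughly as follows. This is a sketch under stated assumptions: the `0.5` threshold is a placeholder (the paper's physically motivated thresholds are not reproduced here), SSIM is omitted because it needs a windowed implementation, and the function name `masked_metrics` is hypothetical.

```python
import numpy as np

def masked_metrics(pred, target, land, threshold=0.5):
    """Error and binary classification metrics over valid land pixels only.

    pred, target: 2-D arrays of melt fraction; target is NaN where SAR is invalid.
    land: boolean array, True on land pixels.
    threshold: assumed melt/no-melt cutoff applied to both pred and target.
    """
    valid = land & ~np.isnan(target)
    p, t = pred[valid], target[valid]

    err = p - t
    mae = float(np.mean(np.abs(err)))
    mse = float(np.mean(err ** 2))

    # Binarize both maps, then count confusion-matrix entries.
    pb, tb = p >= threshold, t >= threshold
    tp = int(np.sum(pb & tb))
    fp = int(np.sum(pb & ~tb))
    fn = int(np.sum(~pb & tb))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0

    return {
        "mae": mae,
        "mse": mse,
        "rmse": mse ** 0.5,
        "accuracy": float(np.mean(pb == tb)),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }
```

Given the predominance of no-melt pixels, accuracy alone can look favorable for a model that rarely predicts melt, which is why F1, precision, and recall are reported alongside it.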

Empirical Results

Deep learning models, in particular the tuned UNet-SMP (49.6M parameters), deliver 95% pixelwise classification accuracy on SAR-covered areas, outperforming thresholded PMW (72%), direct MAR output (83%), and pure running-mean SAR interpolation (90%). This gain corresponds to a 40% improvement in MAE and substantial gains in SSIM, with the networks correcting coarse-scale biases and capturing sub-kilometer topographic controls on meltwater at high fidelity.

(Figure 14a)

Figure 9: Comparison of model predictions against daily observed SAR-derived meltwater fractions, showcasing superior tracking of high-frequency melt variability with UNet-based models.

Event Reconstruction and Bias Analysis

The UNet reconstructs extreme melt events, such as the June 2019 episode, with high accuracy despite input sparsity. Unlike traditional methods, which oversmooth either spatially or temporally, the UNet leverages all modalities (SAR, PMW, MAR, DEM) to correct systematic biases, particularly the consistent overestimation of melt by MAR and PMW early in the melt season and the underestimation late in the season. The temporal artifacts and interpolation failures seen in non-DL methods are not observed in the UNet product.

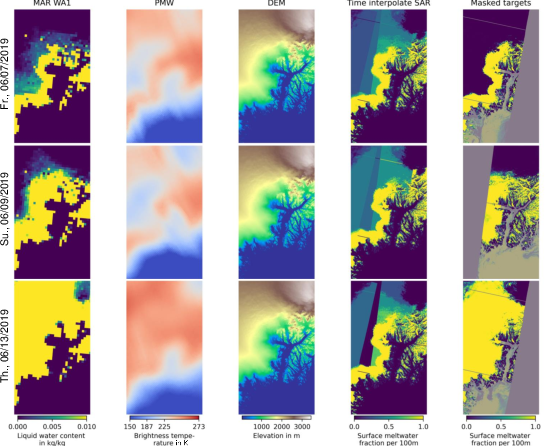

Figure 10: Illustrative data snapshot of input streams and meltwater target on June 12, 2019, showing the multichannel nature of model input and substantial data gaps in SAR coverage during an extreme event.

SAR Preprocessing Robustness

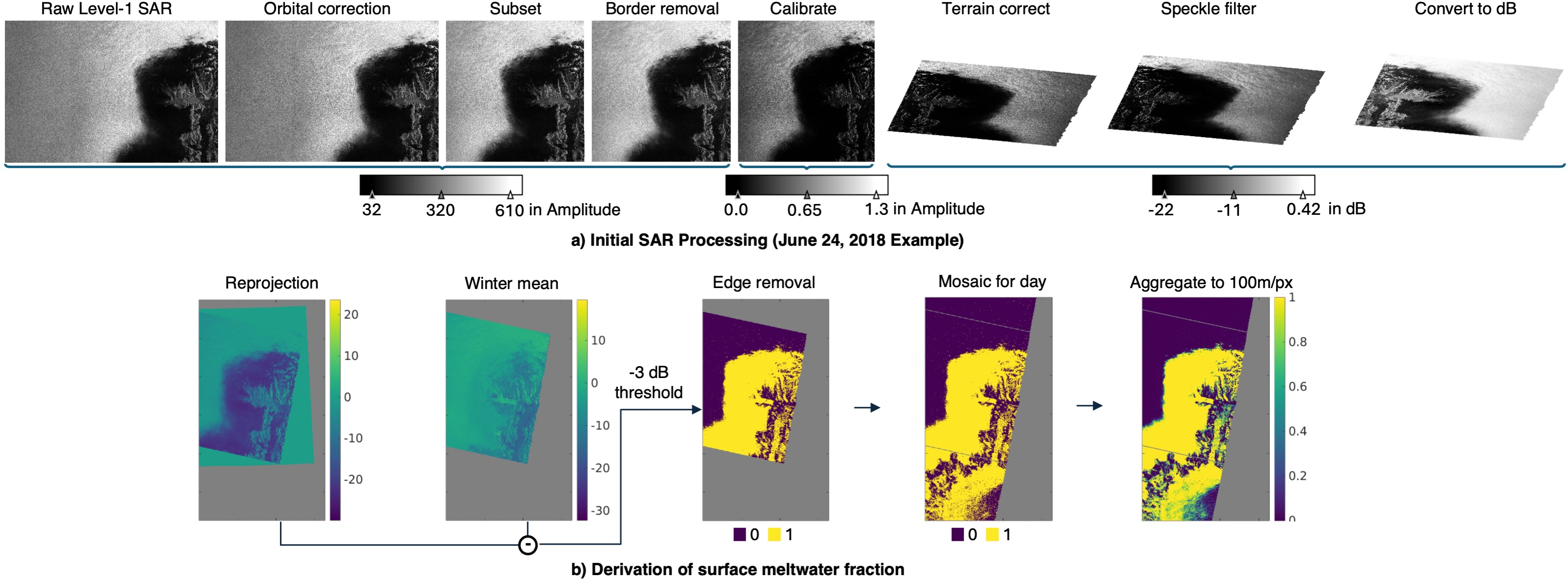

The SAR melt fraction derivation pipeline, which involves orbital correction, radiometric calibration, terrain correction, and stringent masking, is documented in detail and is designed so that mapped fractions align physically with season-long melt patterns. The SAR product remains the limiting “ground truth,” and its own uncertainties are cited as a key physical constraint.

Figure 11: SAR processing workflow from Level-1 to 100 m fractional melt target, emphasizing deterministic, physically justified thresholding steps.

Benchmark Utility and Implications for AI and Earth Science

MeltwaterBench is released as an open, fully annotated testbed for objective comparison of climate downscaling, multimodal superresolution, and physics-constrained ML architectures. It explicitly poses the challenge of fusing and interpolating across modalities that carry large-scale bias, a setting that canonical superresolution benchmarks and even prior downscaling benchmarks do not cover.

Notably, this dataset bridges the methodological gap between traditional empirical-statistical downscaling and deep CNN or generative models, providing physically relevant targets with real-world artifacts. Metrics and protocol standardization facilitate cross-publication comparison, enabling fair attribution of skill to architecture or regularization improvements.

Potential extensions include:

- Domain generalization to other glaciological regions or seasons

- Further leveraging physically constrained or generative methods (e.g., diffusion models) for stochastic event prediction

- Integration as a supervised pretraining or finetuning test case for emergent geospatial foundation models

- Downscaling application to mass loss and runoff projections, with direct impact on sea-level rise assessments

Conclusion

The paper establishes that multimodal deep learning methods can deliver high-fidelity, temporally and spatially dense meltwater maps not obtainable via traditional satellite or climate model data streams alone. The open MeltwaterBench dataset, with its comprehensive preprocessing, evaluation protocol, and highly competitive DL baselines, is expected to serve as a testbed for both theoretical and application-driven research on geospatial downscaling and hydrological prediction frameworks. The implications extend to both practical monitoring and process-oriented glaciology, as well as to the methodological AI subfields advancing multimodal data fusion, generalization under missing data, and physically constrained learning (2512.12142).

References

See (2512.12142) for the full reference and dataset/code access.