- The paper introduces an information-theoretic framework that decomposes and quantifies extended history dependence in stochastic processes.

- It employs minimal models and conditional mutual information to recover true dependency orders beyond the limitations of standard autocorrelation.

- Application to high-resolution fly behavior data reveals scale-invariant non-Markovian dynamics, with non-Markovian information peaking at an intermediate time scale.

Decomposing Non-Markovian History Dependence: An Information-Theoretic Framework

Introduction

The paper "Decomposing Non-Markovian History Dependence" (2512.13933) addresses a fundamental challenge in the analysis of biological and complex systems: the quantification and characterization of non-Markovian dynamics. While physical systems tend to exhibit Markovian behavior under their fundamental laws, biological and other complex systems often display dynamics that depend intricately on past states extending far beyond what Markovian models can capture. The lack of a principled and operational framework for quantifying these historical dependencies motivates the research. The authors propose an information-theoretic formalism to decompose and quantify history dependence in stochastic processes, extend minimal model analysis, and apply the methodology to high-resolution experimental recordings of fly behavior.

Theoretical Framework

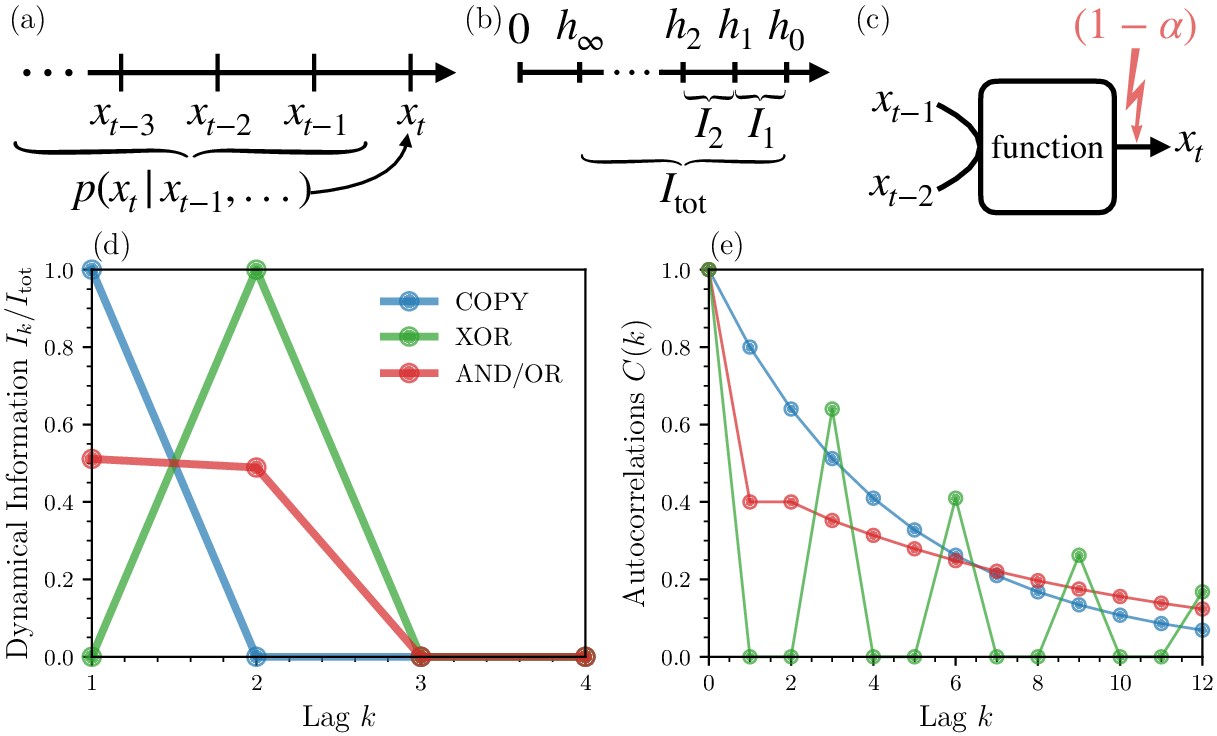

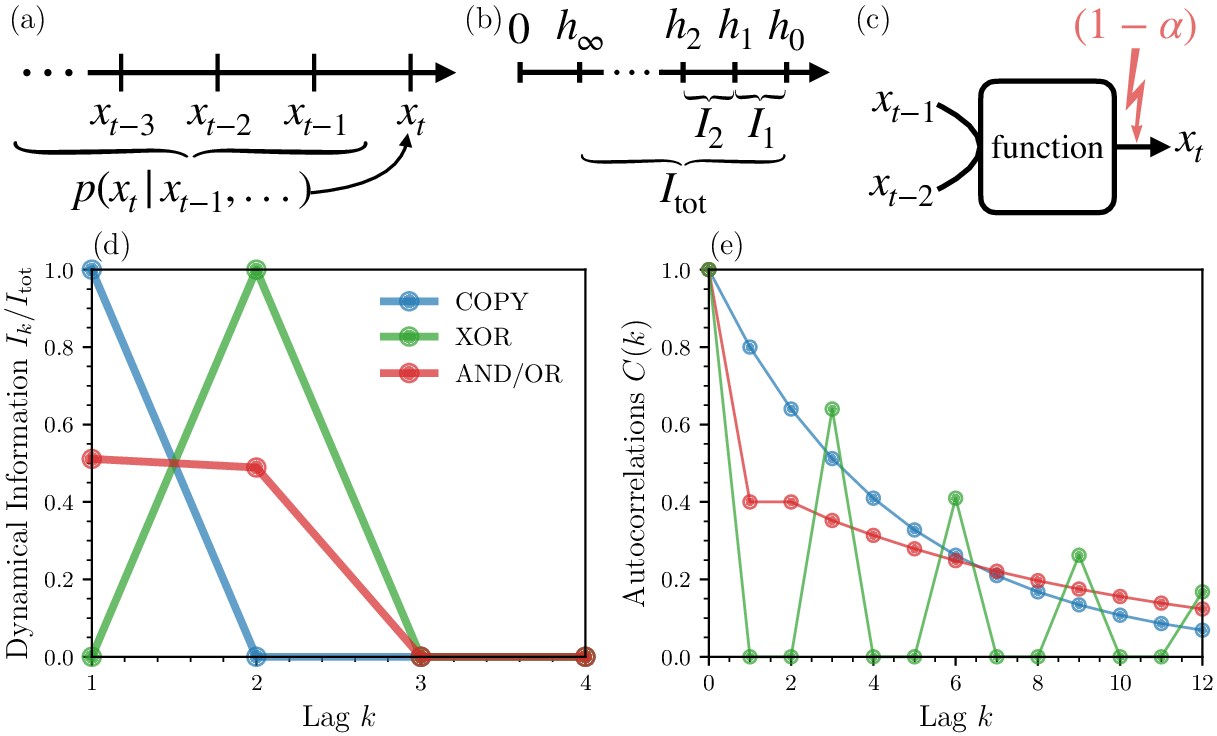

The central contribution is an information-theoretic decomposition of history dependence termed "dynamical information." For a stochastic process whose state $x_t$ evolves in discrete time, the conditional probability $p(x_t \mid x_{t-1}, \ldots)$ may depend on arbitrarily long histories, not just the immediate past. The total dynamical information $I_{\text{tot}}$ is defined as the reduction in uncertainty about $x_t$ obtained from knowing the entire past, given by the difference between the marginal entropy $h_0$ and the entropy rate $h_\infty$:

$$I_{\text{tot}} = h_0 - h_\infty.$$

Between the extremes of no knowledge and complete knowledge of the past, one considers the conditional entropies given the $k$ previous states, $h_k = H[x_t \mid x_{t-1}, \ldots, x_{t-k}]$, which form a non-increasing sequence in $k$. The authors introduce the $k$th-order dynamical information $I_k = h_{k-1} - h_k$, equivalent to the conditional mutual information between $x_t$ and $x_{t-k}$ given the intervening states:

$$I_k = I[x_t; x_{t-k} \mid x_{t-1}, \ldots, x_{t-k+1}],$$

leading to

$$I_{\text{tot}} = \sum_{k=1}^{\infty} I_k.$$
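The sum follows from a telescoping argument. As a brief check that uses only the definitions above (the truncation order $K$ is a symbol introduced here for illustration):

$$\sum_{k=1}^{K} I_k = \sum_{k=1}^{K} \left( h_{k-1} - h_k \right) = h_0 - h_K \;\xrightarrow{K \to \infty}\; h_0 - h_\infty = I_{\text{tot}}.$$

Each term is non-negative because conditioning on an additional past state cannot increase entropy, so $h_k \le h_{k-1}$. For a process of finite order $m$, $h_k = h_m$ for all $k \ge m$, and the sum stops at $k = m$.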

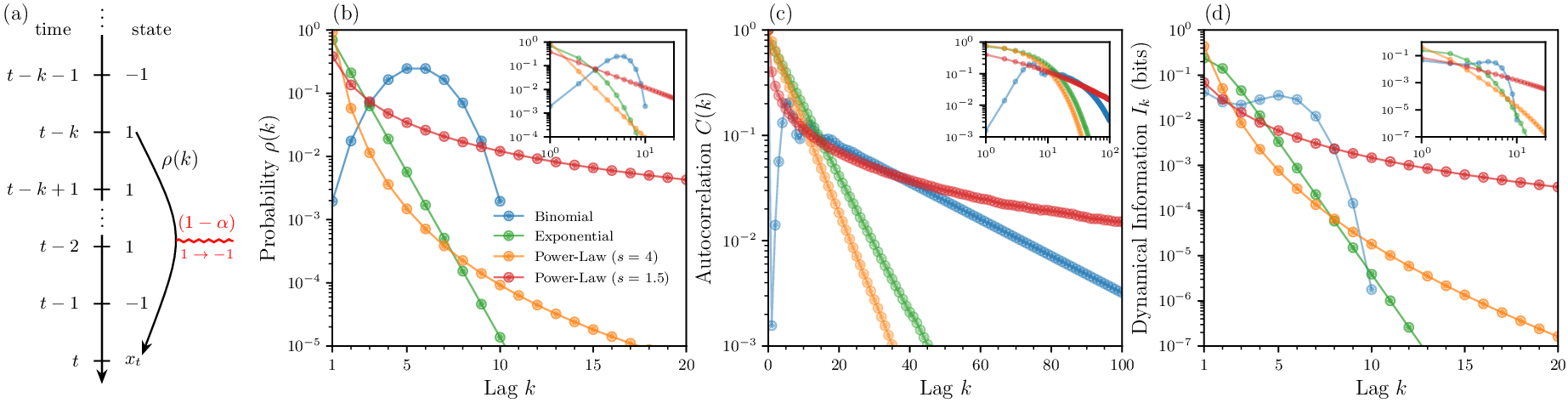

Figure 1: Decomposition of dynamical information in non-Markovian processes, showing the hierarchy of entropy reductions and their relationship to the order of history dependence.

This decomposition attributes non-negative, interpretable information contributions to each order of history dependence, providing a fine-grained, theoretically justified measure of non-Markovianity. The approach stands in contrast to the common use of autocorrelation, which measures only pairwise (two-point) correlations and can therefore obscure or miss higher-order and nonlinear history dependence.
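The definitions above translate directly into a simple plug-in estimator for a discrete sequence. The following is a minimal sketch, not the authors' estimator: it assumes a stationary symbolic sequence long enough that all length-$(k+1)$ words are well sampled, and the function names (`block_entropy`, `conditional_entropies`, `dynamical_information`) are hypothetical. It uses the identity $h_k = H_{k+1} - H_k$, where $H_n$ is the entropy of length-$n$ words.

```python
from collections import Counter
from math import log2


def block_entropy(seq, n):
    """Plug-in Shannon entropy (bits) of the length-n words in seq; 0 for n == 0."""
    if n == 0:
        return 0.0
    counts = Counter(tuple(seq[i:i + n]) for i in range(len(seq) - n + 1))
    total = sum(counts.values())
    return -sum(c / total * log2(c / total) for c in counts.values())


def conditional_entropies(seq, k_max):
    """Return [h_0, ..., h_kmax], using h_k = H_{k+1} - H_k (block-entropy differences)."""
    H = [block_entropy(seq, n) for n in range(k_max + 2)]
    return [H[k + 1] - H[k] for k in range(k_max + 1)]


def dynamical_information(seq, k_max):
    """Return [I_1, ..., I_kmax], with I_k = h_{k-1} - h_k."""
    h = conditional_entropies(seq, k_max)
    return [h[k - 1] - h[k] for k in range(1, k_max + 1)]


if __name__ == "__main__":
    # A strictly alternating sequence is fully determined by one step of history,
    # so I_1 should be close to 1 bit and higher orders close to 0.
    seq = [0, 1] * 500
    print(dynamical_information(seq, 3))
```

Plug-in estimates are biased for finite data, and small negative values of $I_k$ can appear at high orders; this sketch makes no attempt at the bias corrections a real analysis would need.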

Minimal Model Analysis

To demonstrate and validate the framework, the authors analyze minimal models where the state $x_t$ is generated as a noisy logical function (e.g., COPY, AND, OR, XOR) of previous states. In such finite-order dynamics, the decomposition robustly identifies the true order of dependence: $I_k$ vanishes for every $k$ above the true process order, regardless of the autocorrelation structure.

For instance, in the XOR model, second-order dependence is crucial, yet autocorrelation can misleadingly suggest no dependence at that order. The dynamical information decomposition, however, correctly attributes information to $I_2$ (second order) and shows that $I_1$ (first-order, Markovian information) vanishes, revealing the irreducible non-Markovian character of the process.
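The XOR case can be checked numerically. The sketch below assumes one particular parameterization that is not taken from the paper: $x_t = x_{t-1} \oplus x_{t-2}$, with the output flipped independently with probability `eps`. Under that assumption the stationary distribution over consecutive pairs is uniform, so $I_1 = 0$ and $I_2 = 1 - H_b(\varepsilon)$, where $H_b$ is the binary entropy (about 0.53 bits for $\varepsilon = 0.1$). All function names are hypothetical.

```python
import random
from collections import Counter
from math import log2


def simulate_noisy_xor(n_steps, eps, seed=0):
    """Assumed model: x_t = x_{t-1} XOR x_{t-2}, flipped with probability eps."""
    rng = random.Random(seed)
    x = [rng.randint(0, 1), rng.randint(0, 1)]
    for _ in range(n_steps):
        bit = x[-1] ^ x[-2]
        if rng.random() < eps:
            bit ^= 1
        x.append(bit)
    return x


def cond_entropy(seq, k):
    """Plug-in estimate of h_k = H[x_t | x_{t-1}, ..., x_{t-k}] in bits."""
    joint = Counter(tuple(seq[i - k:i + 1]) for i in range(k, len(seq)))
    past = Counter()
    for word, c in joint.items():
        past[word[:-1]] += c
    total = sum(joint.values())
    return -sum(c / total * log2(c / past[w[:-1]]) for w, c in joint.items())


def autocorrelation(seq, lag):
    """Normalized autocovariance of seq at the given lag."""
    mean = sum(seq) / len(seq)
    var = sum((v - mean) ** 2 for v in seq) / len(seq)
    n = len(seq) - lag
    cov = sum((seq[i] - mean) * (seq[i + lag] - mean) for i in range(n)) / n
    return cov / var


eps = 0.1
x = simulate_noisy_xor(100_000, eps)
h = [cond_entropy(x, k) for k in range(4)]
I = [h[k - 1] - h[k] for k in range(1, 4)]  # estimates of I_1, I_2, I_3
exact_I2 = 1 + eps * log2(eps) + (1 - eps) * log2(1 - eps)  # 1 - H_b(eps)

print("I_1, I_2, I_3 estimates:", [round(v, 4) for v in I])
print("exact I_2 under the assumed model:", round(exact_I2, 4))
print("autocorrelation at lags 1, 2:",
      round(autocorrelation(x, 1), 4), round(autocorrelation(x, 2), 4))
```

In this assumed model the autocorrelation at lags 1 and 2 is zero in expectation even though the second-order dependence is strong, which is the mismatch the paragraph above describes.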

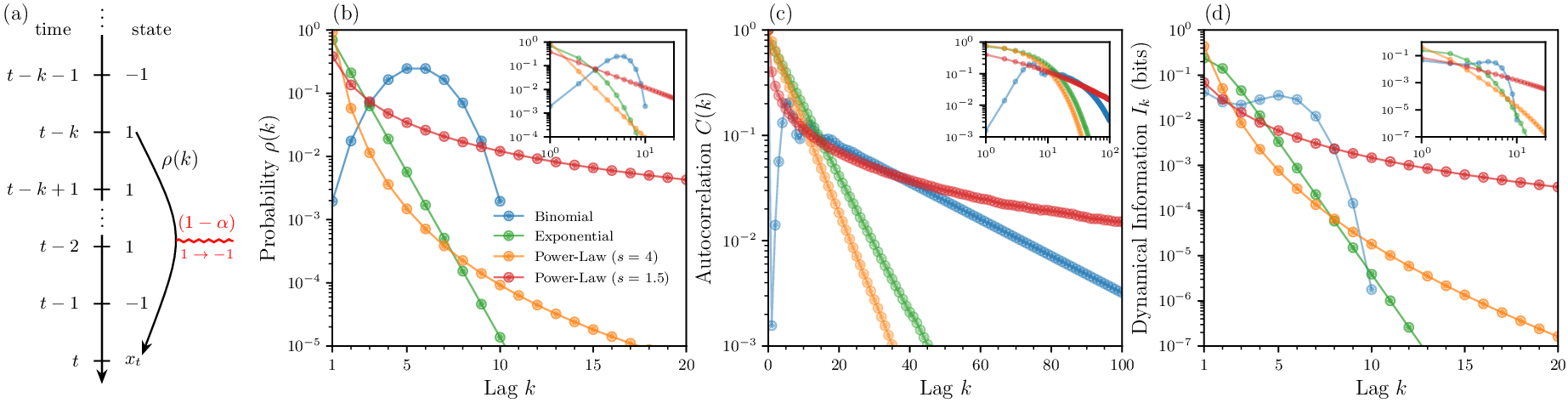

Further, the authors consider stochastic processes where dependencies are distributed across lags according to a specified kernel $\rho(k)$ (e.g., binomial, exponential, power law), which controls the nature and scaling of history dependence.

Figure 2: Comparison of autocorrelation functions and dynamical information $I_k$ across models with binomial, exponential, and power-law history-dependence kernels.

The analysis exposes a key result: autocorrelation can decay exponentially or exhibit power-law scaling regardless of the true dependency structure, and may fail to differentiate between finite-order, exponential, and genuine power-law non-Markovianity. In contrast, the dynamical information reliably tracks the true scaling encoded in $\rho(k)$, scaling as $I_k \sim \rho(k)^2$, even in regimes where autocorrelation analysis would be misleading.
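To make the quadratic relation concrete, here is what it implies for two of the kernel families, taking the stated scaling at face value. The exponent $\alpha$ and decay time $\tau$ are symbols introduced here for illustration and do not come from the paper:

$$\rho(k) \propto k^{-\alpha} \;\Rightarrow\; I_k \sim k^{-2\alpha}, \qquad \rho(k) \propto e^{-k/\tau} \;\Rightarrow\; I_k \sim e^{-2k/\tau}.$$

A power-law kernel thus yields power-law dynamical information with twice the exponent, and an exponential kernel yields exponential decay with half the decay time.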

Application to Fly Behavioral Data

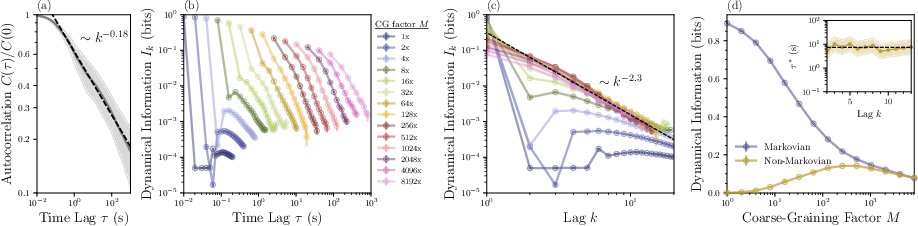

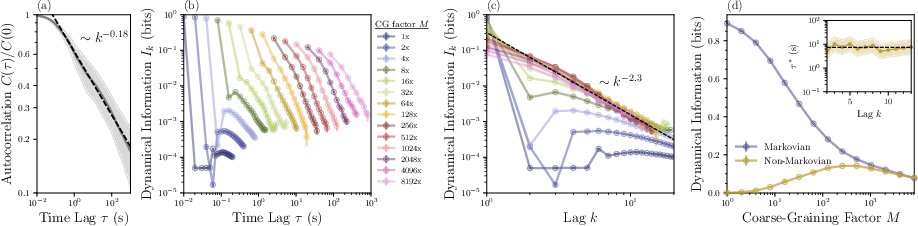

The authors apply their methodology to a publicly available dataset of Drosophila behavior. Starting from high-resolution time series coarse-grained to two activity states, they estimate $I_k$ across lags and time scales, overcoming finite-data challenges via sampling and extrapolation methods. Temporal coarse-graining is introduced by binning time points, which enables measurement of dependencies across time scales from milliseconds to minutes.
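Temporal coarse-graining of a two-state sequence can be sketched as follows. The binning rule is an assumption: this summary does not say how a bin of several time points is reduced to one state, so the sketch uses a majority vote with ties resolved toward the active state. The function name `coarse_grain` and the dyadic bin sizes are likewise hypothetical.

```python
def coarse_grain(seq, bin_size):
    """Reduce a binary sequence to one state per non-overlapping bin.

    Assumed rule (not from the paper): majority vote within each bin,
    with ties resolved toward 1. A trailing partial bin is dropped.
    """
    n_bins = len(seq) // bin_size
    out = []
    for b in range(n_bins):
        window = seq[b * bin_size:(b + 1) * bin_size]
        out.append(1 if 2 * sum(window) >= bin_size else 0)
    return out


if __name__ == "__main__":
    activity = [0, 0, 1, 1, 1, 0, 1, 1, 0]
    print(coarse_grain(activity, 3))  # -> [0, 1, 1]

    # Scanning time scales: each coarse-grained sequence would then be passed
    # to an estimator of I_k to probe dependence at that temporal resolution.
    for bin_size in (1, 2, 4):
        print(bin_size, coarse_grain(activity, bin_size))
```

Each doubling of `bin_size` halves the number of samples, so estimates at coarse scales rest on far less data than those at fine scales.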

Figure 3: Analysis of fly behavioral data: (a) power-law decay of autocorrelations, (b) $I_k$ dependencies across coarse-graining levels, (c) collapse of dynamical information vs. lag, (d) distinct scaling of Markovian and non-Markovian information with coarse-graining.

The analysis reveals that:

- Autocorrelation decays as a power law with exponent $\sim 0.18$, confirming prior observations of long-range temporal structure. On its own, however, the autocorrelation does not determine the underlying order or strength of history dependence.

- Dynamical information $I_k$ exhibits self-similar (power-law) scaling across multiple orders of magnitude in both lag and temporal coarse-graining, with exponents $\gamma \approx 2.3$.

- The total non-Markovian information $I_{>1}$ is non-monotonic with respect to temporal scale, reaching a maximum at an intermediate coarse-graining of $\sim 7$ seconds (comparable to biologically relevant timescales in working memory and burstiness).

- Scaling collapse across temporal resolutions indicates self-similarity and scale invariance in non-Markovian dependencies, a property not observed in autocorrelation.
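A power-law exponent such as $\gamma$ can be read off a log-log plot with a least-squares line. The sketch below shows that simple diagnostic on synthetic values; it is not the fitting procedure used in the paper, and the function name `fit_power_law`, the prefactor, and the lag range are assumptions made for illustration.

```python
from math import exp, log


def fit_power_law(ks, values):
    """Fit values ~ A * k**(-gamma) by least squares in log-log space.

    Returns (gamma, A). All values must be strictly positive.
    """
    xs = [log(k) for k in ks]
    ys = [log(v) for v in values]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return -slope, exp(intercept)


if __name__ == "__main__":
    # Synthetic, noise-free stand-in for I_k; these are not the fly measurements.
    ks = list(range(1, 51))
    synthetic_I = [0.3 * k ** -2.3 for k in ks]
    gamma, prefactor = fit_power_law(ks, synthetic_I)
    print(f"gamma = {gamma:.3f}, prefactor = {prefactor:.3f}")
```

On real estimates, the fit range matters: small-lag points may sit outside the scaling regime, and large-lag points are dominated by estimation noise, so the exponent should be checked for stability as the range is varied.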

Implications and Perspectives

The proposed decomposition supplies a robust, quantitative tool for dissecting history dependence in complex systems beyond the limitations of autocorrelation and finite-order models. In minimal models, it recovers the ground-truth order and strength of dependencies. Empirical application to biological data uncovers scale-invariant patterns of information propagation through history, with a characteristic timescale of maximal non-Markovianity.

The implications are significant:

- Theoretical: The method refines the vocabulary for non-Markovian analysis, distinguishing between mere correlation decay and irreducible, higher-order predictive structure. It situates the dynamical information as an operational measure linked to total correlation growth rate.

- Practical: Improved diagnosis of non-Markovianity in biological, ecological, and neural systems refines models of memory, adaptation, and control, with downstream impact on the formulation of effective theories and inference algorithms.

- Computational: Estimation remains data intensive; future research is needed to develop approximation and bounding techniques for higher-order $I_k$ in both synthetic and real datasets.

As larger datasets become available, the framework stands to drive new insights into memory encoding, temporal hierarchy, and information bottlenecks in living systems. The scale invariance and non-monotonicity of non-Markovian information observed in animal behavior suggest potential universality classes in biological processes and highlight the timescales, such as those related to working memory, where such dependencies are maximized.

Conclusion

The information-theoretic decomposition introduced in this paper offers a principled approach for parsing the intricacies of non-Markovian dynamics, both in theoretical models and empirical biological systems. By exposing the limitations of autocorrelation and providing a hierarchy of dynamical information terms, the framework advances the state of the art in time-series analysis of complex, history-dependent behaviors. Future work will focus on scaling these methods to higher-order dependencies, broadening their application to increasingly rich data, and elucidating the mechanisms by which non-Markovian structure emerges across spatial and temporal scales.