GLM-TTS Technical Report

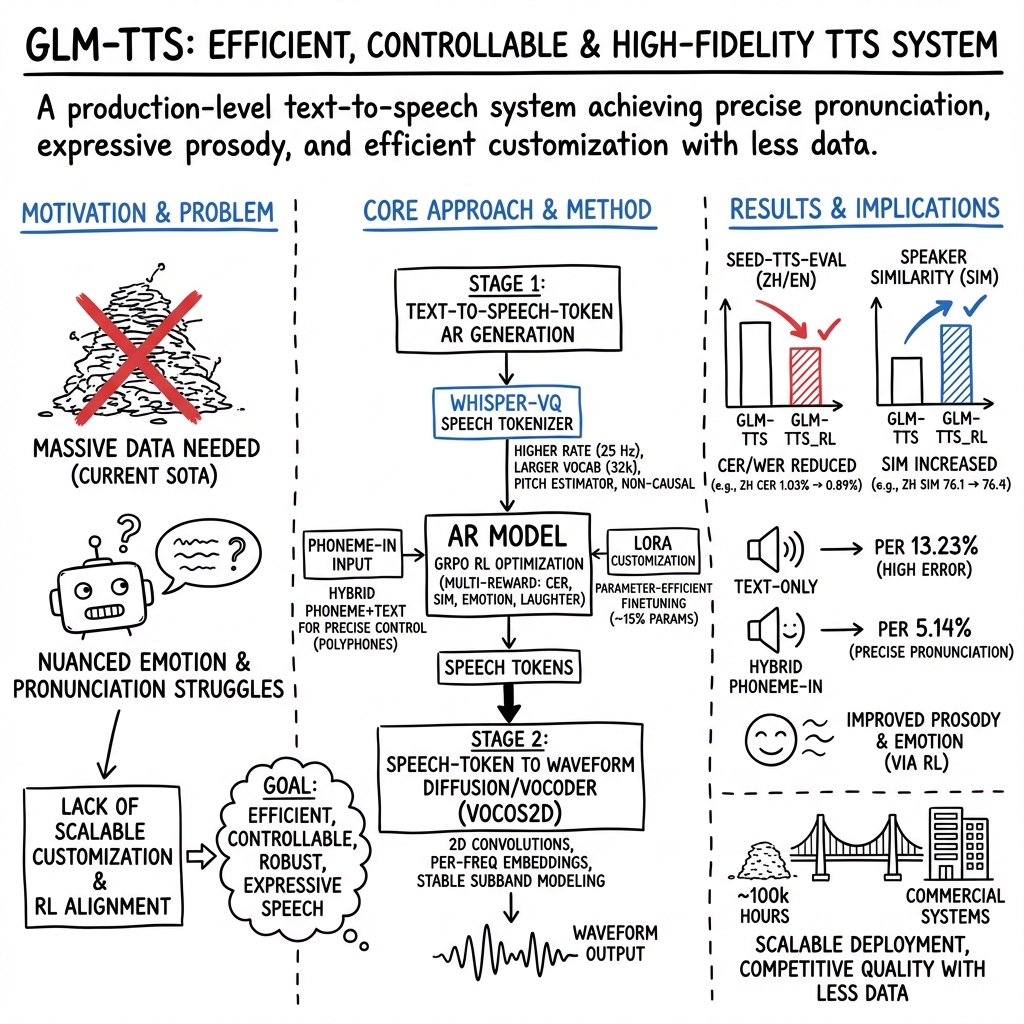

Abstract: This work proposes GLM-TTS, a production-level TTS system designed for efficiency, controllability, and high-fidelity speech generation. GLM-TTS follows a two-stage architecture, consisting of a text-to-token autoregressive model and a token-to-waveform diffusion model. With only 100k hours of training data, GLM-TTS achieves state-of-the-art performance on multiple open-source benchmarks. To meet production requirements, GLM-TTS improves speech quality through an optimized speech tokenizer with fundamental frequency constraints and a GRPO-based multi-reward reinforcement learning framework that jointly optimizes pronunciation, speaker similarity, and expressive prosody. In parallel, the system enables efficient and controllable deployment via parameter-efficient LoRA-based voice customization and a hybrid phoneme-text input scheme that provides precise pronunciation control. Our code is available at https://github.com/zai-org/GLM-TTS. Real-time speech synthesis demos are provided via Z.ai (audio.z.ai) and the Zhipu Qingyan app/web (chatglm.cn).

Explain it Like I'm 14

Overview

This paper introduces GLM-TTS, a powerful system that turns written text into natural-sounding speech. The goal is to make voices that are clear, expressive (they can sound happy, sad, etc.), and easy to control, while keeping the system fast and affordable to use in real products like apps and websites.

What were they trying to figure out?

The researchers focused on common problems that make real-world text-to-speech (TTS) hard:

- How to make high-quality voice clones using less training data and shorter voice samples.

- How to make speech sound more emotional and lifelike without relying on lots of manual labels.

- How to get precise pronunciation for tricky languages (especially Chinese), including rare words and characters with multiple pronunciations.

- How to use reinforcement learning (a way of teaching models with feedback) in TTS without training becoming unstable.

- How to customize premium voices cheaply and quickly without retraining giant models.

How does GLM-TTS work?

The two-stage speaking pipeline

Think of making speech like making music:

- Text-to-token: The system first turns the text into small “building blocks” called tokens (like musical notes or Lego pieces). This step uses an autoregressive model, which predicts the next token based on what it already produced.

- Token-to-wave: A diffusion model then turns these tokens into a real audio waveform. Diffusion is like starting with a noisy sound and gradually polishing it into clean, natural speech.

This split makes the system both controllable and high-quality.
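
To make the two-stage flow concrete, here is a minimal sketch of the control flow only. Every function in it (`toy_text_to_ids`, `toy_next_token`, `toy_tokens_to_wave`) is a hypothetical stand-in, not the GLM-TTS API: stage 1 is an autoregressive loop that keeps predicting the next speech token from everything produced so far, and stage 2 maps the finished token sequence to audio samples.

```python
from typing import Callable, List

def generate_tokens(next_token: Callable[[List[int]], int],
                    prompt: List[int], eos: int, max_len: int) -> List[int]:
    """Stage 1: autoregressive decoding. Each new token depends on all previous ones."""
    context = list(prompt)
    while len(context) < max_len:
        token = next_token(context)
        if token == eos:          # the model signals that the utterance is complete
            break
        context.append(token)
    return context[len(prompt):]  # return only the newly generated speech tokens

def synthesize(text: str,
               text_to_ids: Callable[[str], List[int]],
               next_token: Callable[[List[int]], int],
               tokens_to_wave: Callable[[List[int]], List[float]],
               eos: int = 0, max_len: int = 200) -> List[float]:
    """Stage 1 (text -> speech tokens) followed by stage 2 (tokens -> waveform)."""
    speech_tokens = generate_tokens(next_token, text_to_ids(text), eos, max_len)
    return tokens_to_wave(speech_tokens)

# Toy stand-ins (assumptions for illustration; a real system uses trained models).
def toy_text_to_ids(text: str) -> List[int]:
    return [ord(ch) % 50 + 1 for ch in text]

def toy_next_token(context: List[int]) -> int:
    return len(context) % 5       # returns the end token (0) once the length hits a multiple of 5

def toy_tokens_to_wave(tokens: List[int]) -> List[float]:
    samples_per_token = 4         # each token expands into a fixed number of samples
    return [tok / 10.0 for tok in tokens for _ in range(samples_per_token)]

speech_tokens = generate_tokens(toy_next_token, toy_text_to_ids("hi"), eos=0, max_len=200)
wave = synthesize("hi", toy_text_to_ids, toy_next_token, toy_tokens_to_wave)
```

The useful property of this structure is that the two stages only share the token sequence, so either one can be swapped or tuned without touching the other.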

Better “building blocks” (speech tokenizer)

A tokenizer converts audio to tokens and back. GLM-TTS improves this part so the speech is more accurate and expressive:

- Faster token rate: It creates tokens twice as fast as before, which helps with clear pronunciation at high speaking speeds and improves natural sounds like breathing or laughter.

- Bigger vocabulary: More token types let the system capture finer voice details.

- Pitch estimator: A new module keeps the voice’s pitch (how high or low it sounds) aligned with the reference voice, helping emotion and melody.

- Non-causal design: Removing some “one-direction-only” constraints lets the model look at more context, improving accuracy and naturalness.
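
As a rough illustration of what a higher token rate and a bigger vocabulary mean in numbers, the sketch below counts tokens per utterance and the upper bound on information carried per second. The 25 Hz rate and 32k vocabulary are the figures quoted later in this summary; the 12.5 Hz baseline is only inferred from "twice as fast as before", and treating 32k as exactly 32,768 entries is an assumption.

```python
import math

def token_count(duration_s: float, token_rate_hz: float) -> int:
    """Number of speech tokens needed to cover an utterance of the given length."""
    return math.ceil(duration_s * token_rate_hz)

def max_bitrate_bps(token_rate_hz: float, vocab_size: int) -> float:
    """Upper bound on bits per second: each token can carry at most log2(vocab) bits."""
    return token_rate_hz * math.log2(vocab_size)

# A 10-second clip at the inferred old rate versus the 25 Hz rate.
old_tokens = token_count(10.0, 12.5)   # 125 tokens
new_tokens = token_count(10.0, 25.0)   # 250 tokens

# Assumes "32k" means 32,768 = 2**15 codebook entries.
bound = max_bitrate_bps(25.0, 32768)   # 375.0 bits per second
```

Doubling the rate halves the stretch of audio each token has to describe (40 ms instead of 80 ms), which is one plausible reason fast speech and short events like breaths are captured better.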

A clean, smart data pipeline

Before training, the team carefully cleans and prepares audio data, much like washing and sorting ingredients before cooking:

- Detecting speech parts and cutting out silence.

- Removing background noise and separating speakers in multi-person audio.

- Checking transcriptions with multiple speech recognition models and keeping only audio with very low error rates.

- Adjusting punctuation based on how long characters are spoken, so pauses and commas feel natural.

- Scaling up processing with distributed systems so it’s fast and efficient.
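
The transcription check can be sketched as follows. This is a minimal illustration, assuming each clip has a reference transcript that is compared against the output of several ASR systems, and that a clip is kept only if every system stays under a 5% word error rate (the threshold mentioned elsewhere in this summary). How the actual pipeline combines the ASR outputs is not specified here, so the `keep_sample` rule is an assumption.

```python
from typing import List, Sequence

def edit_distance(ref: Sequence[str], hyp: Sequence[str]) -> int:
    """Levenshtein distance (substitutions, insertions, deletions) between two sequences."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return prev[-1]

def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: word-level edit distance divided by reference length."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference transcript is empty")
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)

def keep_sample(reference: str, hypotheses: List[str], max_wer: float = 0.05) -> bool:
    """Keep a clip only if every ASR hypothesis is below the WER threshold (assumed rule)."""
    return all(wer(reference, hyp) < max_wer for hyp in hypotheses)
```

For Chinese, the same `edit_distance` can be applied to lists of characters instead of words to get a character error rate.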

Teaching with feedback (reinforcement learning)

They use GRPO, a reinforcement learning method, and “grade” the system’s speech on four areas at the same time:

- Pronunciation accuracy: Measured by CER/WER (lower is better).

- Voice similarity: Does the clone sound like the target speaker?

- Emotion: Does the voice sound happy, sad, angry, etc., when it should?

- Laughter: Can it produce natural laughs in the right spots?

They add smart tricks to keep training stable, like dynamic resampling (try different examples when feedback becomes too “same-ish”) and adaptive gradient clipping (limit extreme updates early, loosen them later), which helps avoid “reward hacking” and makes the voice sound more human.
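
The three ideas in this section can be sketched in a few lines. This is an illustrative simplification, not the paper's implementation: the reward weights, the linear schedule, and the 0.1 to 0.3 clip values are all hypothetical. What it does show is the general shape of GRPO-style training: several rewards are fused into one score, each sample is graded relative to the other samples generated for the same prompt, and groups where every sample scores the same are skipped because they carry no learning signal.

```python
import statistics
from typing import Dict, List, Optional

# Hypothetical equal weights; the summary does not give the actual fusion weights.
WEIGHTS = {"cer": 1.0, "sim": 1.0, "emotion": 1.0, "laughter": 1.0}

def fuse_rewards(scores: Dict[str, float], weights: Dict[str, float] = WEIGHTS) -> float:
    """Combine four scores in [0, 1] into one reward. CER is an error, so it is inverted."""
    return (weights["cer"] * (1.0 - scores["cer"])
            + weights["sim"] * scores["sim"]
            + weights["emotion"] * scores["emotion"]
            + weights["laughter"] * scores["laughter"])

def group_advantages(rewards: List[float], eps: float = 1e-8) -> Optional[List[float]]:
    """Group-relative advantage: (reward - group mean) / group std.

    Returns None when all rewards are (nearly) identical; in that case the
    caller should resample a different prompt, which is the dynamic-sampling idea.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    if std < eps:
        return None
    return [(r - mean) / std for r in rewards]

def clip_range(step: int, total_steps: int, start: float = 0.1, end: float = 0.3) -> float:
    """Assumed linear schedule: tight clipping early in training, looser later."""
    frac = min(max(step / total_steps, 0.0), 1.0)
    return start + frac * (end - start)
```

A group such as `[0.0, 1.0]` yields advantages of about `-1.0` and `1.0`, while a group such as `[1.0, 1.0, 1.0]` returns `None` and would be replaced by a fresh sample.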

Easy voice personalization (LoRA)

Customizing a high-quality voice usually means retraining a huge model, which is expensive. LoRA is like adding a small “plug-in” that fine-tunes only part of the model:

- They fine-tune about 15% of the model’s parameters.

- It needs only about 1 hour of single-speaker audio.

- It cuts training costs by around 80% compared to full retraining.

- Results are stable and close to full-model quality.
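
For readers who want to see what the "plug-in" looks like, here is a minimal pure-Python sketch of the standard LoRA formulation for a single linear layer: the frozen weight `W` is left untouched and a low-rank product `B·A`, scaled by `alpha / rank`, is added on top. The matrix sizes and values are toy assumptions, and the sketch makes no claim about which layers or ranks GLM-TTS actually uses.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]

def matvec(m: Matrix, v: Vector) -> Vector:
    """Plain matrix-vector product."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]

def lora_forward(w: Matrix, a: Matrix, b: Matrix, x: Vector, alpha: float) -> Vector:
    """y = W x + (alpha / rank) * B (A x).

    Shapes: W is d_out x d_in (frozen), A is rank x d_in, B is d_out x rank.
    Only A and B are trained.
    """
    rank = len(a)
    base = matvec(w, x)
    update = matvec(b, matvec(a, x))
    scale = alpha / rank
    return [y + scale * u for y, u in zip(base, update)]

def lora_param_fraction(d_in: int, d_out: int, rank: int) -> float:
    """Trainable adapter parameters relative to the frozen weight of one layer."""
    return rank * (d_in + d_out) / (d_in * d_out)

w = [[1.0, 0.0], [0.0, 1.0]]          # frozen base weight (toy identity)
a = [[1.0, 1.0]]                      # rank-1 down-projection
b_zero = [[0.0], [0.0]]               # B starts at zero, so the adapter is a no-op at first
b_trained = [[1.0], [2.0]]            # pretend values after fine-tuning

y_start = lora_forward(w, a, b_zero, [1.0, 2.0], alpha=1.0)     # [1.0, 2.0]
y_tuned = lora_forward(w, a, b_trained, [1.0, 2.0], alpha=1.0)  # [4.0, 8.0]
```

Because `B` starts at zero, fine-tuning begins from exactly the base model's behavior, which is one reason this kind of adaptation tends to be stable.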

Precise pronunciation control (Phoneme-in)

Text alone can be ambiguous, especially in Chinese where a character may have multiple pronunciations. Phonemes are like detailed pronunciation notes. GLM-TTS uses a hybrid input system:

- It keeps text for most words but swaps in phonemes for tricky characters or rare words.

- A dictionary flags words that need special attention.

- During training, the model learns to handle a mix of text and phonemes.

- During use, the system automatically replaces ambiguous parts with exact phonemes, keeping natural rhythm but ensuring accuracy.
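
A minimal sketch of the replacement step is shown below. The lexicon entries, the pinyin-with-tone-number notation, and the `<...>` markup are all assumptions chosen for illustration; the summary does not describe the actual dictionary format or tag syntax. The idea is simply to scan the text, swap flagged words for phoneme tokens, and leave everything else as ordinary characters.

```python
from typing import Dict, List

# Hypothetical lexicon: words flagged as ambiguous, mapped to per-character pinyin.
LEXICON: Dict[str, List[str]] = {
    "银行": ["yin2", "hang2"],    # 行 is read "hang2" here, but "xing2" in other words
    "重庆": ["chong2", "qing4"],  # 重 is read "chong2" here, but "zhong4" in other words
}

def to_hybrid(text: str, lexicon: Dict[str, List[str]]) -> List[str]:
    """Replace flagged words with phoneme tokens; keep all other characters as text."""
    max_len = max(map(len, lexicon), default=0)
    out: List[str] = []
    i = 0
    while i < len(text):
        # Try the longest dictionary match starting at position i.
        for n in range(min(max_len, len(text) - i), 0, -1):
            chunk = text[i:i + n]
            if chunk in lexicon:
                out.extend(f"<{p}>" for p in lexicon[chunk])
                i += n
                break
        else:
            out.append(text[i])   # no match: pass the character through unchanged
            i += 1
    return out

hybrid = to_hybrid("我去银行", LEXICON)
```

Here `hybrid` is `['我', '去', '<yin2>', '<hang2>']`: the two unambiguous characters stay as text while the flagged word is pinned to one reading.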

A new sound maker (Vocos2D vocoder)

The vocoder turns spectrograms (visual-like representations of sound) into actual audio. Vocos2D:

- Uses 2D operations similar to image processing to better handle frequency bands.

- Adds training techniques to make learning stable without forcing the generator to copy random changes.

- Trains on high-quality singing data to broaden pitch and style range.

- Produces cleaner, richer audio than the original Vocos.

What did they find, and why is it important?

Here are the main results and why they matter:

- Strong performance with less data: With about 100k hours of training data (much less than some competitors), GLM-TTS reaches leading results among open-source systems of similar size.

- Better pronunciation and similarity: On a key Chinese test set, the system gets CER around 1.03% and speaker similarity (SIM) around 76.1. After reinforcement learning, CER improves to 0.89% and SIM to 76.4, meaning clearer words and a voice closer to the target.

- Emotion and laughter: The multi-reward learning approach improves emotional expression and realistic laughter, making speech more lifelike.

- Precise pronunciation control: On a tough internal test, using phoneme-in cuts pronunciation errors from about 13.23% to 5.14%, which is a big deal for education and testing.

- Better audio quality: The Vocos2D vocoder beats the older version on multiple metrics, leading to smoother, more pleasant sound.

- Low-cost customization: With LoRA, you can create premium voices using only ~1 hour of audio and much less training time, which makes personalized voices practical for many businesses.
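
To put the error-rate numbers above in perspective, it helps to convert them to relative reductions. The short calculation below uses only the figures quoted in this list; the helper name is arbitrary.

```python
def relative_reduction(before: float, after: float) -> float:
    """Fraction of the original error that was removed."""
    return (before - after) / before

# CER on the Chinese test set: 1.03% before RL, 0.89% after.
cer_gain = round(relative_reduction(1.03, 0.89) * 100, 1)    # 13.6 (percent)

# Pronunciation errors on the hard internal test: 13.23% without phoneme-in, 5.14% with.
per_gain = round(relative_reduction(13.23, 5.14) * 100, 1)   # 61.1 (percent)
```

So reinforcement learning removes roughly one in seven of the remaining character errors, while phoneme-in removes about three in five pronunciation errors on hard cases.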

What’s the impact?

GLM-TTS moves TTS closer to sounding truly human while staying efficient and controllable. This can help:

- Voice assistants sound more natural and expressive.

- Audiobooks, podcasts, and dubbing achieve higher quality and consistent style.

- Educational tools and exams get exact pronunciation, especially for languages with complex writing systems.

- Accessibility tools become more pleasant and trustworthy for everyday use.

- Companies quickly roll out customized voices without huge costs, thanks to LoRA and the hybrid text–phoneme inputs.

In short, GLM-TTS offers a practical, open-source framework that balances quality, control, and cost. It shows how careful design (splitting the task into tokens and audio, cleaning data well, using multi-reward reinforcement learning, and enabling precise pronunciation) can make AI voices sound more real and be easier to use in the real world.

Knowledge Gaps, Limitations, and Open Questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper. Each point is framed so future researchers can directly design studies or experiments to address it.

Data, Coverage, and Reproducibility

- The composition of the 100k-hour proprietary training dataset (speaker demographics, languages, accents, recording conditions, SNR, text domains) is unspecified, limiting reproducibility and bias/fairness analysis.

- No public release (or synthetic proxy) of the data pipeline outputs to enable independent verification of data cleaning, VAD/diarization quality, and WER-based filtering effects.

- Lack of analysis on how aggressive cleaning (source separation, denoising, WER<5% filtering) alters prosody distributions and whether this introduces domain shift when synthesizing “in-the-wild” speech.

- Insufficient coverage and evaluation beyond Chinese and English (e.g., other languages, code-switching, multilingual prompts, cross-lingual cloning), despite claims of dialect data inclusion.

Architecture and Training Details

- The abstract claims a token-to-waveform diffusion stage, but the methods focus on a GAN-based vocoder (Vocos2D); the actual token-to-waveform component (diffusion vs GAN) and training integration are unclear and need explicit specification and ablation.

- Missing details on the text-to-token autoregressive model architecture (layer types, depth, context window, alignment mechanism, decoding strategy, training loss), preventing precise replication.

- No latency/throughput measurements (e.g., real-time factor, memory footprint, GPU requirements) for each stage; deployment efficiency claims are not quantified across hardware tiers (edge vs server).
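
The real-time factor (RTF) mentioned in the last point is straightforward to measure. The sketch below is one hedged way to do it, assuming a hypothetical `synthesize(text)` callable that returns a list of audio samples; it is not tied to the GLM-TTS code base.

```python
import time
from typing import Callable, List

def real_time_factor(processing_s: float, audio_s: float) -> float:
    """RTF = time spent synthesizing / duration of the audio produced.

    Values below 1.0 mean the system generates audio faster than it plays back.
    """
    if audio_s <= 0:
        raise ValueError("audio duration must be positive")
    return processing_s / audio_s

def measure_rtf(synthesize: Callable[[str], List[float]], text: str, sample_rate: int) -> float:
    """Time a single call to a synthesis function and convert it to RTF."""
    start = time.perf_counter()
    wave = synthesize(text)
    elapsed = time.perf_counter() - start
    return real_time_factor(elapsed, len(wave) / sample_rate)

# Example with a stand-in synthesizer that returns one second of silence at 16 kHz.
rtf = measure_rtf(lambda text: [0.0] * 16000, "hello", sample_rate=16000)
```

In practice one would average over many utterances, report each stage separately, and note the hardware, since a single timing on one machine says little about deployment cost.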

Speech Tokenizer (Whisper-VQ Modifications)

- No ablation isolating the impact of: higher token rate (25 Hz), larger vocab (32k), non-causal architecture, and the Pitch Estimator module; it is unclear which change drives gains and at what computational cost.

- Lack of streaming feasibility analysis after removing causality (non-causal conv/attention), including buffering strategies and impact on end-to-end latency.

- Pitch Estimator integration is under-specified (losses, supervision signals, coupling with prosody features); objective pitch accuracy gains are not reported (e.g., F0 RMSE, correlation vs ground truth).
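
The objective pitch metrics named in the last point could be computed along these lines. This is a sketch under stated assumptions: both F0 tracks are already time-aligned frame by frame, values are in Hz, and unvoiced frames are marked with 0. Only frames voiced in both tracks are compared.

```python
import math
from typing import List, Tuple

def f0_metrics(ref_f0: List[float], hyp_f0: List[float]) -> Tuple[float, float]:
    """Return (RMSE in Hz, Pearson correlation) over frames voiced in both tracks."""
    pairs = [(r, h) for r, h in zip(ref_f0, hyp_f0) if r > 0 and h > 0]
    if len(pairs) < 2:
        raise ValueError("need at least two frames voiced in both tracks")
    n = len(pairs)
    rmse = math.sqrt(sum((r - h) ** 2 for r, h in pairs) / n)

    mean_r = sum(r for r, _ in pairs) / n
    mean_h = sum(h for _, h in pairs) / n
    cov = sum((r - mean_r) * (h - mean_h) for r, h in pairs)
    var_r = sum((r - mean_r) ** 2 for r, _ in pairs)
    var_h = sum((h - mean_h) ** 2 for _, h in pairs)
    if var_r == 0 or var_h == 0:
        raise ValueError("correlation is undefined for a constant pitch track")
    return rmse, cov / math.sqrt(var_r * var_h)

# The second frame is unvoiced in the reference, so it is ignored.
rmse, corr = f0_metrics([100.0, 0.0, 200.0, 300.0], [110.0, 120.0, 210.0, 310.0])
```

In this toy example the synthesized pitch is a constant 10 Hz above the reference, so the RMSE is 10 Hz while the correlation is perfect, which shows why reporting both numbers is more informative than either alone.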

Reinforcement Learning (GRPO Alignment)

- Reward model provenance, training, and calibration are not described (CER/SIM/EMO/laughter detectors), making reward reliability and cross-domain validity uncertain.

- Fusion weights and normalization strategies for multi-reward training are not justified or compared; no study on adaptive/learned reward weighting over training.

- RL credit assignment granularity (token- vs utterance-level) and its effect on prosody shaping remain unexplored.

- The tension between dynamic sampling improving CER/SIM but harming EMO is acknowledged but unresolved; no principled method is proposed to mitigate this trade-off.

- Risk of reward hacking is noted, yet no formal detection/mitigation protocol (e.g., adversarial validation, held-out adversarial stress tests) is provided.

- Generalization of RL gains to unseen speakers, languages, long-form content, and noisy prompts is not evaluated.

LoRA-Based Premium Voice Customization

- The “15% parameters” claim lacks architectural specificity (which layers, ranks, adapters, placement); no sensitivity study on LoRA rank/placement and its trade-off with quality and stability.

- No comparison to full fine-tuning on the same speakers with matched data/compute to quantify exact gaps in naturalness, SIM, and prosody.

- Catastrophic forgetting and cross-language transfer during customization (e.g., retaining base multilingual capabilities) are not assessed.

- Robustness of customization to low-quality data (channel noise, reverberation, domain mismatch) and to different emotional styles is not reported.

Phoneme-In Precision Control

- The G2P module’s error characteristics, coverage of polyphone and rare character dictionaries, and maintenance/update process (including conflict resolution in dynamic expansion) are not detailed.

- Impact of hybrid phoneme+text input on naturalness/prosody (MOS, rhythm, stress) is not measured; PER improvements are shown without naturalness trade-off quantification.

- Generalizability beyond Chinese (e.g., English heteronyms, Japanese pitch accent, tonal languages) is not evaluated; no multilingual G2P strategy is discussed.

- Runtime cost and user experience for manual phoneme injection in production (authoring tools, markup standards) are not described.

Vocoder (Vocos2D)

- Comparative analysis vs state-of-the-art diffusion/flow vocoders and neural codecs is missing; current comparisons are limited to Vocos baseline.

- Objective measures of pitch fidelity, phase coherence, and artifacts (e.g., transient smearing, pre-echo) are absent; DA effects on these dimensions are not quantified.

- The impact of mixing singing data on speech-only scenarios (prosody drift, vibrato leakage) is not measured; potential trade-offs are not explored.

- Streaming capability, sampling-rate generalization (e.g., 16 kHz vs 32 kHz), and robustness to conditioning mismatch (mel/token noise) are not reported.

Evaluation and Benchmarks

- Human evaluation protocols (panel size, instructions, inter-rater reliability) for MOS/EMO are under-specified; some emotion results rely on internal tests without public reproducibility.

- Speaker similarity relies on WavLM-large embeddings; sensitivity to the choice of embedding model and bias across genders/accents is not analyzed.

- Hard-case (polyphones, rare words) benchmark is mentioned but not reported in Table 3; a public, reproducible hard-case set and metrics are needed.

- Long-form stability (multi-minute synthesis), consistency of style/emotion over extended segments, and error accumulation are not evaluated.

- Robustness tests (noisy prompts, channel mismatch, background music, overlapping speech) are absent, despite denoising and source separation in the data pipeline.

Deployment, Controls, and Safety

- No quantitative deployment metrics (RTF, CPU/GPU utilization, memory, batching performance) to substantiate “production-level” and “real-time” claims across varied hardware.

- Controls for explicit emotion/style steering (prompting interfaces, controllable prosody parameters) are not specified; RL improves EMO but user-side control mechanisms are unclear.

- Security and misuse safeguards (watermarking, spoof detection, traceability, consent verification for premium voices) are not addressed.

- Privacy implications of proprietary datasets and voice customization (speaker identity leakage, reconstruction risks) are not discussed.

Open Research Directions

- Systematic scaling studies: how performance scales with model size, token rate, vocab size, and data hours (including diminishing returns and compute costs).

- Joint optimization of tokenizer, AR model, and vocoder (end-to-end finetuning or multi-task objectives) to reduce quantization mismatch and improve prosody consistency.

- Principled multi-reward RL frameworks (e.g., inverse RL or preference learning with human pairwise judgments) to reduce reliance on proxy reward models and mitigate hacking.

- Multilingual phoneme+text control frameworks with language-agnostic markup standards and adaptive G2P, evaluated on code-switching and cross-lingual cloning.

Practical Applications

Immediate Applications

Below are concrete, deployable use cases that can be built now from GLM-TTS’s methods and results.

- Premium voice cloning at scale for content production (media, advertising, gaming)

- Sectors: media/entertainment, marketing, gaming, creator economy

- Built on: LoRA-based customization that matches full-model quality by tuning ~15% params using ~1 hour of high-quality single-speaker audio; GRPO-aligned expressivity for emotion and laughter

- Tools/Workflows: brand-voice onboarding kits; “voice store” catalogs; studio plug-ins (e.g., Premiere/Final Cut) with one-click LoRA fine-tuning and A/B emotion takes; campaign QA using SIM metrics

- Assumptions/Dependencies: legally consented training audio; GPU time for LoRA fine-tune (reduced vs full FT); stable inference infra; careful brand safety controls for emotional styles

- Precise pronunciation for education and standardized testing (edtech, assessments)

- Sectors: education, language learning, testing and certification

- Built on: Phoneme-in hybrid input with dynamic dictionaries for polyphones/rare words; G2P-backed inference path; demonstrated PER drop from 13.23% to 5.14% on hard cases

- Tools/Workflows: SSML-like “phoneme tags” or inline annotations; domain dictionaries (medical, legal, STEM); grader voice packs for test readouts

- Assumptions/Dependencies: robust front-end G2P; domain dictionary maintenance; instructor workflows for exceptions; highest benefits in Chinese (English support improving with data)

- High-throughput localization and dubbing with controllable emotion

- Sectors: film/TV, streaming, gaming, corporate training

- Built on: two-stage TTS (text-to-token AR + token-to-waveform vocoder), multi-reward RL (pronunciation, similarity, emotion, laughter), dialect-augmented tokenizer

- Tools/Workflows: batch script generation; emotion presets (happy, sad, angry) with RL-aligned rewards; engineering hooks to constrain laughter/pauses; QC gates using CER/SIM/WER

- Assumptions/Dependencies: target-language dictionaries; alignment with lip-sync tools (third-party); rights for voice cloning of talent

- Accessible reading experiences with natural prosody (accessibility, daily life)

- Sectors: accessibility (WCAG), publishing, productivity

- Built on: higher token rate (25 Hz) and 32k vocab to reduce glitches at fast speech rates; Vocos2D vocoder for high MOS/UTMOS; emotional naturalness for long-form listening

- Tools/Workflows: screen readers, audiobook tools, document-to-voice pipelines; user-tunable prosody profiles (calm/engaging/neutral)

- Assumptions/Dependencies: deployment on-device vs cloud (latency/power); user privacy controls for personal voice use

- Customer service voice agents with brand-consistent tones

- Sectors: finance, e-commerce, telco, travel

- Built on: LoRA premium voices; RL-aligned emotion control to avoid flat or inappropriate affect; tokenizer improvements for high-speed, low-error disclaimers

- Tools/Workflows: IVR voice packs; compliance text modes for disclaimers at higher speech rates; SIM/CER dashboards for brand QA

- Assumptions/Dependencies: call-center stack integration; latency budgets; governance to prevent misuse (e.g., unauthorized clone of agents)

- Multidialect customer outreach and public announcements

- Sectors: government/public sector, logistics, retail

- Built on: tokenizer trained with dialect data; hybrid phoneme+text for hard terms and place names

- Tools/Workflows: local-dialect voice packs; geo-targeted announcement generation; dictionary management console for toponyms and rare terms

- Assumptions/Dependencies: dialect coverage (best in Chinese family per paper); acceptance testing with community reviewers; data refresh for new terms

- Creator tools for short-form video with expressive TTS

- Sectors: social media, creator economy, marketing

- Built on: RL-improved emotion and laughter; Vocos2D for crisp, “studio-like” timbre; fast sampling rate to handle rapid edits

- Tools/Workflows: NLE timelines with emotion keyframes; “punch-in” phoneme overrides on tricky words; preset libraries (comedic, dramatic, tutorial)

- Assumptions/Dependencies: platform policies on synthetic voice disclosure; handling of background music mix (downstream)

- Voice banking for patients and personalized AAC devices

- Sectors: healthcare, assistive tech

- Built on: low-data LoRA customization (~1 hour); prosody-preserving tokenizer and PE module for pitch

- Tools/Workflows: clinical voice-banking kits; patient app to record scripts; SIM-based verification of closeness to original

- Assumptions/Dependencies: clinical consent; quality of home-recorded data; clinician oversight for style boundaries

- Cost-optimized dataset curation for labs and enterprises

- Sectors: academia, R&D, enterprise ML teams

- Built on: end-to-end pipeline (VAD, source separation, diarization, multi-ASR WER filtering, punctuation optimization, gRPC-distributed processing)

- Tools/Workflows: turnkey audio-cleaning microservices; reproducible WER thresholds (<5%); feature extraction jobs for speaker embeddings/tokens

- Assumptions/Dependencies: access to ASR models (Whisper/Paraformer/Reverb); GPU cluster for throughput; dataset licensing

- Synthetic data generation for ASR/NLU robustness

- Sectors: software/ML, speech tech

- Built on: controllable TTS to produce diverse accents, emotions, speeds; Phoneme-in to stress-test rare pronunciations

- Tools/Workflows: curriculum generators; domain-term augmentation; dialed speed/pitch sampling for edge cases

- Assumptions/Dependencies: bias audits to avoid overfitting to synthetic distributions; alignment with target ASR domains

- High-fidelity singing segments and jingles

- Sectors: advertising, music production, streaming

- Built on: Vocos2D trained with singing data; pitch estimator for prosody alignment

- Tools/Workflows: jingle and tag-line generation; melody-aware prompt workflows; quick “scratch track” creation

- Assumptions/Dependencies: melody and rhythm conditioning (external); rights for melodic content; evaluation by music producers

- On-prem TTS microservice for privacy-sensitive sectors

- Sectors: finance, healthcare, public sector

- Built on: open-source code/models; gRPC-based scalable pipeline; LoRA custom voices within private perimeter

- Tools/Workflows: containerized deployment; role-based access to voice assets; internal evaluation harness (CER/SIM)

- Assumptions/Dependencies: DevOps maturity; GPU availability; policy for voice IP and staff consent

Long-Term Applications

These use cases are feasible but may require further research, scaling, or integration beyond the current report.

- Fully multimodal conversational agents with nuanced paralinguistics

- Sectors: virtual assistants, customer experience, robotics

- Built on: GRPO multi-reward alignment (CER/SIM/Emotion/Laughter) extended to real-time dialogue; broader paralinguistic controls (sighs, hesitations)

- Tools/Workflows: preference-learning loops with human-in-the-loop raters; session-level style memory

- Assumptions/Dependencies: richer reward models and guardrails; low-latency streaming token-to-wave synthesis

- Cross-lingual, code-switching voice cloning with consistent identity

- Sectors: global media, education, customer support

- Built on: expanded multilingual training; Phoneme-in dictionaries for multiple scripts; PE-guided prosody transfer

- Tools/Workflows: bilingual/cross-script termbanks; voice identity preservation metrics across languages

- Assumptions/Dependencies: large, balanced multilingual corpora; evaluation standards for cross-lingual SIM

- Lip-sync-accurate dubbing with phoneme/prosody constraints

- Sectors: film/TV, localization

- Built on: hybrid phoneme+text and duration control combined with video alignment models

- Tools/Workflows: timeline-constrained generation; automatic retiming to hit viseme targets

- Assumptions/Dependencies: tight coupling with lip-reading/viseme predictors; fine-grained duration controls within TTS

- Safety tooling: watermarking, provenance, and clone detection for voices

- Sectors: policy, platform trust & safety, legal-tech

- Built on: consistent tokenization/vocoder signatures; RL penalties for removing watermarks

- Tools/Workflows: “consent ledger” for voice assets; detectors that flag synthetic or cloned speech in the wild

- Assumptions/Dependencies: community-agreed watermark standards; adversarial robustness; regulatory adoption

- Personal on-device TTS with fast personalization

- Sectors: mobile, IoT, automotive

- Built on: parameter-efficient adaptation (LoRA or adapters) and compact model distillation

- Tools/Workflows: on-device fine-tune from short scripts; edge inference with emotion presets

- Assumptions/Dependencies: model compression/quantization; privacy-preserving training; hardware acceleration

- Therapeutic and empathetic digital companions

- Sectors: digital health, mental wellness

- Built on: GRPO-aligned prosody controls extended to empathy cues; paralinguistic modeling (laughter, warmth)

- Tools/Workflows: clinician-supervised style templates; session-aware prosody modulation

- Assumptions/Dependencies: clinical validation; ethical guidelines; risk/abuse mitigation

- Fairness and dialect equity audits for TTS

- Sectors: academia, policy, public sector

- Built on: dialect-augmented tokenizer/datasets; standardized benchmarks for dialect intelligibility and respectfulness

- Tools/Workflows: continuous evaluation across dialects; participatory data collection frameworks

- Assumptions/Dependencies: representative datasets; community governance; multilingual Phoneme-in expansions

- Autonomous content creation pipelines with human preference RL

- Sectors: media ops, marketing ops

- Built on: GRPO with dynamic sampling/clipping scaled to human feedback on style/brand-fit

- Tools/Workflows: feedback orchestration (rater UI); continuous post-training of style-specific heads

- Assumptions/Dependencies: scalable, reliable reward models (emotion, laughter, brand tone); prevention of reward hacking

- Voice privacy and anonymization through controllable prosody transfer

- Sectors: privacy tech, research, healthcare

- Built on: pitch/prosody disentanglement (via PE and tokenization); targeted style transfer without identity leakage

- Tools/Workflows: anonymization sliders (pitch, timbre, rhythm); reversible transformations with user keys

- Assumptions/Dependencies: rigorous identity leakage audits; legal frameworks for reversible anonymization

- High-fidelity singing TTS and virtual vocalists

- Sectors: music tech, entertainment

- Built on: Vocos2D with extended singing datasets; pitch-conditioned prosody controls

- Tools/Workflows: DAW integrations; melody/lyrics conditioning APIs; timbre marketplace

- Assumptions/Dependencies: music-theory-aligned conditioning; rights management; producer adoption

- Synthetic data factories for robust speech/NLP systems

- Sectors: ML tooling, enterprise AI

- Built on: controllable generation across accents/emotions/speeds/domains; dataset curation pipeline (VAD→ASR WER filter→features)

- Tools/Workflows: closed-loop training with active learning; domain-shift generators; bias monitors

- Assumptions/Dependencies: careful validation to prevent overfitting to synthetic distributions; licensing for downstream use

- Standards and regulation for synthetic voice disclosure and labeling

- Sectors: policy, platforms, journalism

- Built on: measurable metrics (CER, SIM, EMO) as part of compliance attestations; watermarking and consent records

- Tools/Workflows: disclosure APIs; platform-side auto-labeling of synthetic content

- Assumptions/Dependencies: multi-stakeholder agreement; international harmonization; enforcement mechanisms

Notes on feasibility across applications:

- Performance is strongest in Chinese under current training balance; English and other languages would benefit from more data.

- Phoneme-in requires robust, domain-specific G2P and ongoing dictionary management.

- RL reward quality (emotion, laughter) hinges on reliable detectors; mitigate reward hacking with careful clipping/dynamic sampling settings as demonstrated.

- LoRA fine-tuning depends on clean, consented audio; results degrade with noisy or mismatched data.

- Vocos2D quality assumes access to high-quality spectrograms and training with diverse vocal conditions; singing quality improves with more curated singing data.

- Real-time/edge deployment requires further compression and low-latency optimization beyond the report.

Glossary

- Adaptive gradient clipping: A training technique that dynamically limits gradient magnitude to stabilize reinforcement learning and prevent exploitation of rewards. "we adopt an adaptive gradient clipping scheme, setting ε_high and ε_low as dynamic values that adjust according to training steps."

- Attention-based sequence-to-sequence models: Neural architectures that map input sequences to output sequences using attention mechanisms (e.g., Tacotron) for TTS. "attention-based sequence-to-sequence models (e.g., Tacotron (Wang et al., 2017), Transformer-TTS (Li et al., 2019))"

- Automatic Speech Recognition (ASR): Systems that convert spoken audio into text. "Open-source Automatic Speech Recognition (ASR) models including Paraformer (Gao et al., 2023b) and SenseVoice (An et al., 2024) are used for transcription."

- Autoregressive (AR): A modeling paradigm where each output token is generated conditioned on previous tokens. "auto-regressive (AR) zero-shot TTS based on neural codec"

- Block attention: A constrained attention mechanism operating over blocks; its removal lifts sequential constraints. "The block attention structure was removed"

- Causal convolution: Convolution that ensures outputs depend only on past inputs, used for sequential modeling. "causal convolution has been replaced with standard convolution"

- Character Error Rate (CER): The fraction of character-level transcription errors; a lower value indicates better pronunciation accuracy. "Character Error Rate (CER) for pronunciation accuracy (lower is better)"

- Cosine similarity: A metric measuring similarity between embeddings via the cosine of the angle between vectors. "measured by calculating the cosine similarity between speaker embeddings extracted using fine-tuned WavLM-large (Chen et al., 2022)."

- ConvNeXt: A modern convolutional network architecture adapted here for 2D vocoder blocks. "The Vocos2D backbone block adapts the original ConvNeXt design"

- DAPO: An open-source LLM RL system whose techniques (e.g., Clip-Higher, Dynamic Sampling) are adopted. "We adopt two techniques from DAPO (Yu et al., 2025): Clip-Higher and Dynamic Sampling."

- Depth-wise convolution: Convolution applied independently per channel/band, enabling efficient sub-band processing. "DwConv denotes depth-wise convolution."

- DiT-style residual connections: Residual design patterns inspired by Diffusion Transformers to enhance vocoder modeling. "DiT-style residual connections"

- Diffusion-based non-autoregressive (NAR) paradigms: Generative methods using diffusion processes without sequential token dependence. "flow-matching or diffusion-based non-autoregressive (NAR) paradigms"

- Discriminator augmentation (DA): Differentiable transformations applied only to the discriminator to improve GAN training stability. "DA improves training stability by applying differentiable transformations only to the discriminator"

- Dynamic sampling: A training strategy that resamples batches to avoid homogeneous rewards and mitigate gradient vanishing. "we implement a dynamic sampling strategy to mitigate potential gradient vanishing within a batch."

- Flow-matching: A training approach aligning probability flows, used as an alternative to diffusion in generative models. "flow-matching or diffusion-based non-autoregressive (NAR) paradigms"

- Forced alignment: Aligning text and audio to obtain precise timing for each character/phoneme. "we perform text-speech forced alignment (Kürzinger et al., 2020)"

- Fundamental frequency constraints: Constraints related to pitch (F0) used to improve tokenizer modeling and speech quality. "optimized speech tokenizer with fundamental frequency constraints"

- GAN (Generative Adversarial Network): A generative framework with a generator and discriminator; here used for vocoders. "The original Vocos (Siuzdak, 2024), a GAN-based vocoder"

- Grapheme-to-Phoneme (G2P): Conversion from written characters to phonetic representations. "Grapheme-to-Phoneme (G2P) module"

- GRPO (Group Relative Policy Optimization): A reinforcement learning algorithm used to optimize TTS with multiple rewards. "Adopting a GRPO-based RL framework, we fuse four critical rewards"

- Hinge loss: A loss function commonly used in discriminators to enforce margin-based separation. "using hinge loss (Zhang et al., 2019; Pan et al., 2023)"

- Hybrid Phoneme + Text input: An input scheme mixing phoneme and text to control pronunciation precisely. "A 'Hybrid Phoneme + Text' input scheme"

- In-context learning (ICL): The ability of a model to adapt behavior based on context provided at inference without fine-tuning. "in-context learning (ICL) zero-shot voice cloning"

- LLM (Large Language Model): A Transformer-based model trained on massive text corpora, here extended to speech tokens. "Transformer-based (Vaswani et al., 2023) LLM-driven paradigms"

- LoRA (Low-Rank Adaptation): A parameter-efficient fine-tuning technique using low-rank adapters. "we optimize the LoRA (Low-Rank Adaptation) fine-tuning paradigm"

- Mel-Band Roformer: A model variant targeting source separation using mel-band representations. "Mel-Band Roformer model (an improved variant of the RoFormer model (Wang et al., 2023b))"

- Mel spectrogram: A time-frequency representation on the mel scale used as vocoder conditioning. "input Mel spectrogram X_in"

- MOS (Mean Opinion Score): Subjective human rating of audio quality. "complemented by subjective MOS evaluations."

- Multi-period discriminator (MPD): A GAN discriminator analyzing waveform periodicities; removed here for better performance. "removing the multi-period discriminator, as it degraded performance"

- Multi-resolution discriminator (MRD): A discriminator operating at multiple spectrogram resolutions to assess audio realism. "multi-resolution discriminator (Jang et al., 2021)"

- NISQA: A model-based metric for assessing speech quality without reference signals. "Objective metrics include UTMOS (Saeki et al., 2022), NISQA (Mittag et al., 2021)"

- Non-autoregressive (NAR): Models that generate outputs without conditioning on previously generated tokens. "flow-matching or diffusion-based non-autoregressive (NAR) paradigms"

- Non-causal architecture: Architectures without causal constraints, allowing bidirectional context and improved accuracy. "Adoption of Non-Causal Architecture."

- Paralinguistic features: Non-verbal vocal cues like laughter and breathing contributing to expressiveness. "paralinguistic features such as laughter and breathing sounds."

- Phoneme Error Rate (PER): Error rate measuring mispronunciations at the phoneme level. "yields a Phoneme Error Rate (PER) of 13.23%."

- Pitch Estimator (PE): A module that estimates pitch to improve prosody modeling and alignment. "Introduction of Pitch Estimator (PE) Module."

- Point-wise convolution: Convolution with 1x1 kernels that mixes information across channels at each position. "PwConv denotes point-wise convolution"

- Prosody: The rhythm, stress, and intonation of speech impacting naturalness. "improving the prosody alignment between the cloned TTS output and reference (prompt) audio."

- pyannote.audio: A toolkit/model for speaker diarization and audio processing. "We leverage the pyannote.audio model (Bredin et al., 2019) to implement multi-speaker separation"

- PQ (Meta AudioBox Aesthetics production quality score): An automatic audio aesthetics/quality metric from Meta’s AudioBox. "the production quality (PQ) score from Meta AudioBox Aesthetics (Tjandra et al., 2025)"

- Reinforcement learning (RL): Optimization via reward-driven trial-and-error to align outputs with preferences. "reinforcement learning (RL), while promising for aligning speech outputs with human preferences"

- Reward hacking: A failure mode where models exploit reward definitions to achieve high scores without desired behavior. "This resolves the reward hacking and training instability issues plaguing prior RL-based TTS"

- SIM (Speaker Similarity): A metric quantifying similarity between generated and target speaker voices. "Speaker Similarity (SIM, higher is better)"

- Source separation: The process of isolating speech from background sounds. "Source Separation and Denoising."

- Speaker diarization: Assigning speech segments to speakers in multi-speaker audio. "Speaker Diarization and Concatenation."

- Spectrogram: A time-frequency representation used for conditioning and discriminator inputs. "allowing direct incorporation of the input spectrogram condition."

- Speech tokenizer: A model that converts continuous speech into discrete tokens for LLM-style modeling. "GLM-TTS introduces a series of optimizations to the Whisper-VQ speech tokenizer"

- Tacotron: An attention-based seq2seq TTS model that maps text to acoustic features. "Tacotron (Wang et al., 2017)"

- Text-to-token autoregressive model: The stage that generates discrete speech tokens from text sequentially. "text-to-token autoregressive model"

- Token rate: The frequency (Hz) at which discrete speech tokens are generated. "The token rate is doubled from 12.5Hz to 25Hz"

- Token-to-waveform diffusion model: The stage that generates audio waveforms from speech tokens using diffusion. "token-to-waveform diffusion model"

- Transformer: A neural architecture based on attention mechanisms widely used in language and speech tasks. "Transformer-based (Vaswani et al., 2023)"

- UTMOS: A learned objective metric predicting MOS-like scores for speech quality. "Objective metrics include UTMOS (Saeki et al., 2022)"

- Voice Activity Detection (VAD): Detecting segments containing speech to segment and clean audio. "Voice Activity Detection (VAD) technology (Bredin et al., 2019) is applied to segment valid speech fragments"

- Vocoder: A model that converts intermediate acoustic representations (e.g., spectrograms) into waveforms. "a GAN-based vocoder"

- Vocos: A Fourier-based neural vocoder architecture preceding Vocos2D. "The original Vocos (Siuzdak, 2024)"

- Vocos2D: A redesigned vocoder using 2D convolutions and DiT-style connections for sub-band modeling. "A novel Vocos2D vocoder replaces 1D convolutions with 2D operations and DiT-style residual connections"

- Vocabulary pruning: Reducing tokenizer vocabulary to improve alignment and generation stability. "Vocabulary Pruning for Alignment Stability."

- WavLM-large: A large self-supervised speech model used to extract speaker embeddings. "speaker embeddings extracted using fine-tuned WavLM-large (Chen et al., 2022)"

- Word Error Rate (WER): The fraction of word-level transcription errors used to assess recognition accuracy. "Word Error Rate (WER) for pronunciation accuracy (lower is better)"

- Whisper: An open-source multilingual ASR model from OpenAI, used to transcribe speech into text. "Open-source ASR models including Whisper (Radford et al., 2022)"

- Whisper-VQ speech tokenizer: A speech tokenizer variant based on Whisper with vector quantization. "GLM-TTS introduces a series of optimizations to the Whisper-VQ speech tokenizer"

- Zero-shot voice cloning: Reproducing a previously unseen speaker's voice from a short prompt recording, without speaker-specific training. "in-context learning (ICL) zero-shot voice cloning"
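
The three objective metrics defined in this glossary (CER, WER, and cosine similarity for SIM) can be illustrated with a minimal sketch. It assumes the error rates are computed as edit distance divided by reference length, which is the usual convention; the ASR transcription step, any text normalization, and the WavLM-based speaker embeddings are not shown, and the function names and toy inputs below are hypothetical rather than taken from the paper.

```python
import math


def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences (substitutions, insertions, deletions)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion of a reference unit
                            curr[j - 1] + 1,      # insertion of a hypothesis unit
                            prev[j - 1] + cost))  # substitution or exact match
        prev = curr
    return prev[-1]


def error_rate(ref_units, hyp_units):
    """Edit distance normalized by reference length (can exceed 1.0)."""
    if not ref_units:
        raise ValueError("reference must be non-empty")
    return edit_distance(ref_units, hyp_units) / len(ref_units)


def cer(reference, hypothesis):
    """Character Error Rate: units are individual characters."""
    return error_rate(list(reference), list(hypothesis))


def wer(reference, hypothesis):
    """Word Error Rate: units are whitespace-separated words."""
    return error_rate(reference.split(), hypothesis.split())


def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    # Toy strings stand in for a reference text and an ASR transcript of synthesized audio.
    print(cer("abcd", "abxd"))
    print(wer("the cat sat", "the cat sat down"))
    # Toy vectors stand in for speaker embeddings.
    print(cosine_similarity([1.0, 2.0], [2.0, 4.0]))
```

Lower CER and WER indicate fewer transcription mismatches, while a cosine similarity closer to 1 indicates that two speaker embeddings point in nearly the same direction.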

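The GRPO and dynamic sampling entries above can also be sketched in a few lines. This assumes the standard GRPO formulation, in which each sampled output's reward is normalized by the mean and standard deviation of its own group, and it interprets dynamic sampling as dropping groups whose rewards are all identical, since those yield zero advantages and hence no gradient. The scalar rewards here are taken as already combined; how GLM-TTS fuses its multiple rewards is not shown, and all names and values below are hypothetical.

```python
import statistics


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against its group's mean and standard deviation.

    Assumption: population standard deviation is used; implementations may differ.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


def has_learning_signal(rewards, tol=1e-6):
    """A group is informative only if its rewards are not all (nearly) equal."""
    return max(rewards) - min(rewards) > tol


def filter_groups(groups):
    """Keep only prompts whose sampled outputs received differing rewards.

    In a full training loop the dropped prompts would be replaced by
    freshly sampled ones until the batch is full; that loop is omitted here.
    """
    return {prompt: r for prompt, r in groups.items() if has_learning_signal(r)}


if __name__ == "__main__":
    # Hypothetical prompts, each with rewards for a group of sampled outputs.
    groups = {
        "prompt_a": [1.0, 0.0, 0.5, 0.5],  # mixed rewards: informative
        "prompt_b": [0.5, 0.5, 0.5, 0.5],  # homogeneous rewards: no gradient
    }
    kept = filter_groups(groups)
    for prompt, rewards in kept.items():
        print(prompt, group_relative_advantages(rewards))
```

Because advantages are centered within each group, they sum to approximately zero, so a group with identical rewards contributes nothing to the policy update; filtering such groups is what mitigates the gradient vanishing the glossary entry refers to.
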