Is Nano Banana Pro a Low-Level Vision All-Rounder? A Comprehensive Evaluation on 14 Tasks and 40 Datasets

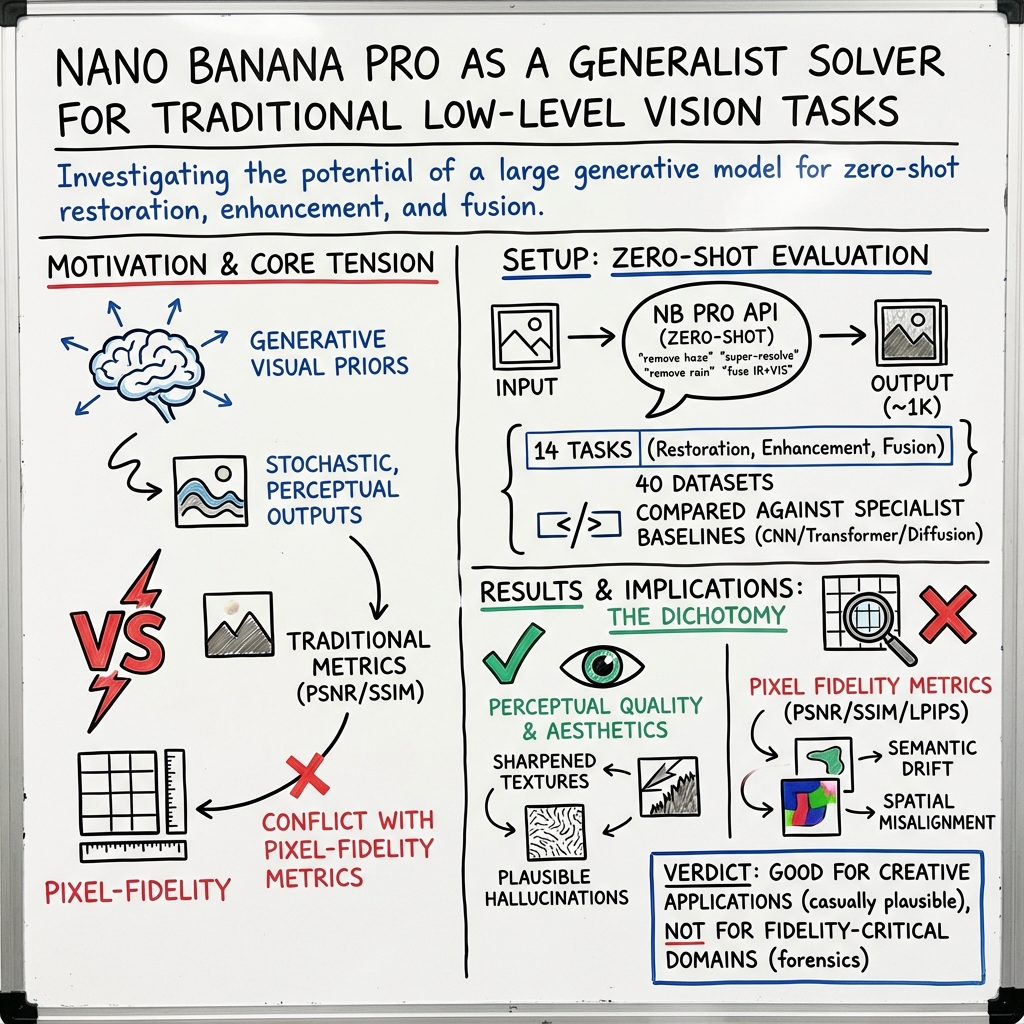

Abstract: The rapid evolution of text-to-image generation models has revolutionized visual content creation. While commercial products like Nano Banana Pro have garnered significant attention, their potential as generalist solvers for traditional low-level vision challenges remains largely underexplored. In this study, we investigate the critical question: Is Nano Banana Pro a Low-Level Vision All-Rounder? We conducted a comprehensive zero-shot evaluation across 14 distinct low-level tasks spanning 40 diverse datasets. By utilizing simple textual prompts without fine-tuning, we benchmarked Nano Banana Pro against state-of-the-art specialist models. Our extensive analysis reveals a distinct performance dichotomy: while \textbf{Nano Banana Pro demonstrates superior subjective visual quality}, often hallucinating plausible high-frequency details that surpass specialist models, it lags behind in traditional reference-based quantitative metrics. We attribute this discrepancy to the inherent stochasticity of generative models, which struggle to maintain the strict pixel-level consistency required by conventional metrics. This report identifies Nano Banana Pro as a capable zero-shot contender for low-level vision tasks, while highlighting that achieving the high fidelity of domain specialists remains a significant hurdle.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

Overview

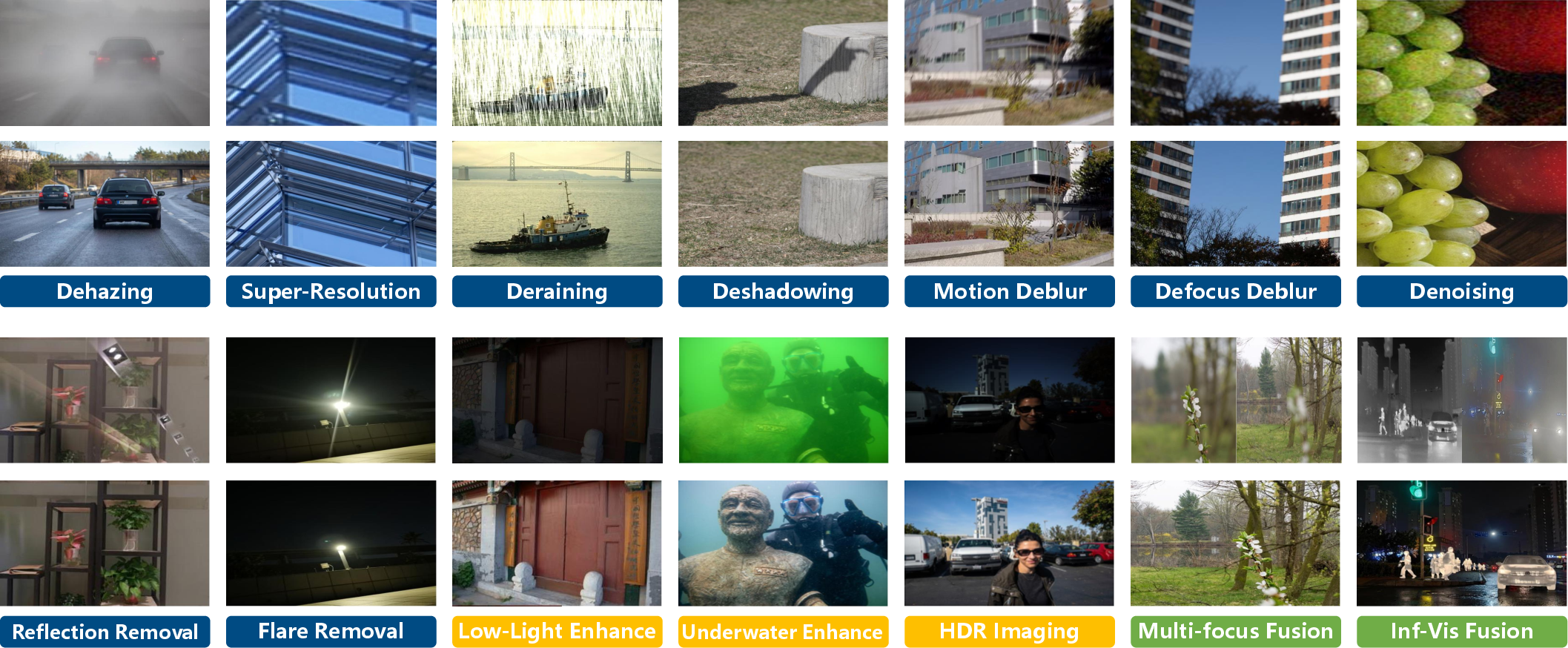

This paper asks a simple question: can a powerful image generator called “Nano Banana Pro” fix and improve everyday photos as well as the best specialized tools? The authors test it on 14 different “low-level vision” tasks (like clearing fog, removing rain, sharpening, and fixing shadows) using 40 datasets. They use only simple text prompts (no training or fine-tuning) to see how well the model performs without extra help.

Objectives

The paper focuses on three easy-to-understand goals:

- Can a creative image generator, used with plain text prompts, solve classic photo-fixing problems without special training?

- How does it compare to expert models that were built specifically for each task?

- Why do results that “look great” to people sometimes score worse on traditional numerical tests?

Methods and Approach

Think of low-level vision tasks as chores for cleaning up photos:

- Restoration: fixing damage (e.g., removing fog or rain, sharpening a blurry photo).

- Enhancement: making photos look better (e.g., improving contrast or colors).

- Fusion: combining information from multiple images to get a clearer result.

What the authors did:

- Zero-shot testing: They didn’t teach the model anything new. Instead, they gave it a simple instruction like “Please remove the rain while keeping colors the same,” and let it try.

- Compared to specialist tools: They checked Nano Banana Pro against top models designed for each specific job.

- Measured in two ways:

- Pixel-level metrics (PSNR, SSIM): Like comparing your cleaned photo to a perfect reference picture, pixel by pixel. Higher scores mean the output is closer to the original.

- Perceptual/no-reference metrics (NIQE, NIMA, MUSIQ): These estimate how natural, pleasing, or high-quality the image looks to humans, even if it’s not exactly the same as the original.

Analogy:

- Pixel-level metrics are like grading by how closely you copied a drawing, line for line.

- Perceptual metrics are like judging how good the drawing looks overall, even if you changed some lines to make it prettier.

Main Findings

Here are the main results, explained simply:

- Looks good, but not pixel-perfect:

- Nano Banana Pro often makes images that look sharp, clean, and aesthetically pleasing. It can “guess” and fill in missing details in a convincing way.

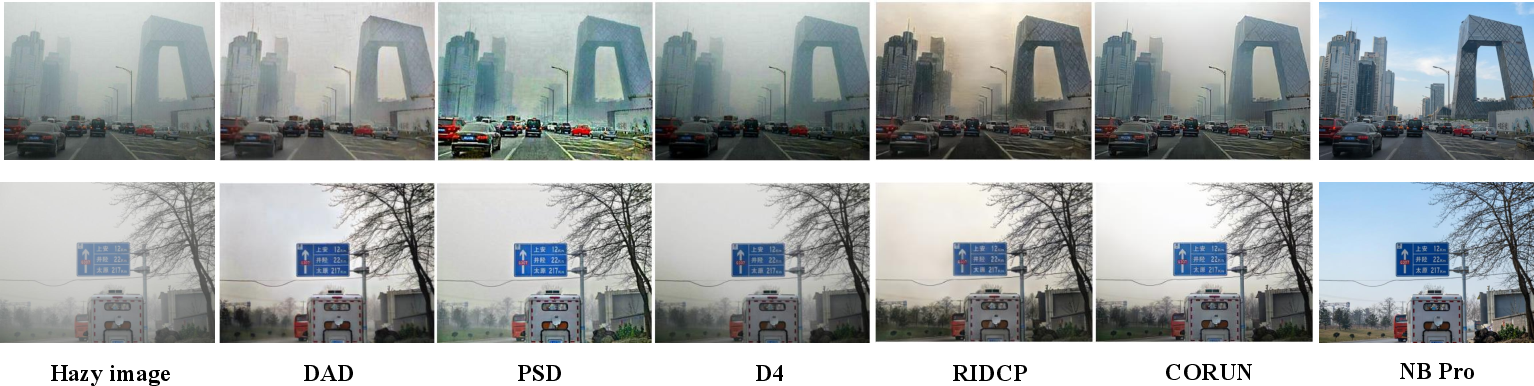

- However, it usually scores worse on the pixel-accuracy tests (PSNR/SSIM) than specialist models. This happened across tasks like dehazing (fog removal), super-resolution (making images larger and sharper), deraining (removing rain), and shadow removal.

- Why the mismatch?

- As a generative model, it aims for plausible, nice-looking results rather than copying the exact original pixels. This can cause small differences that lower numerical scores, even if the image looks better.

- Specific observations from tasks:

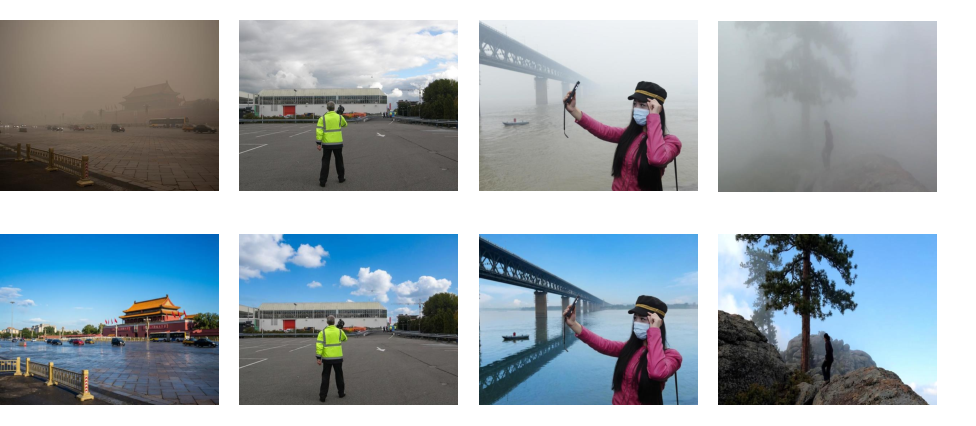

- Dehazing: It can make foggy scenes look clearer and more detailed, but sometimes pushes colors too far (e.g., turning neutral skies into overly blue ones), which hurts realism.

- Super-resolution: It makes textures look sharp and clean, and often improves how natural the image feels (great NIQE scores). But it can invent details (hallucinations), slightly change the field of view (add content at the edges), or misread text—so it’s not faithful to the original.

- Deraining: It struggles on strict metrics versus top specialized models. Under light rain, it does better. Under heavy rain, it may shift colors or lose tiny details. It sometimes removes haze along with rain, which looks cleaner but doesn’t match the ground truth.

- Shadow removal: It can produce visually pleasing results, but again falls far behind on pixel-accuracy compared to state-of-the-art methods.

- Prompting matters:

- The tests used simple, fixed prompts. With more careful prompt design or multiple tries, results might improve—but the study kept things basic to be fair and consistent.

Why This Is Important

- It shows that modern generative AI can be surprisingly good at photo cleanup without special training—especially in making images look attractive and clean.

- It also warns that “looking good” isn’t the same as “being accurate.” For tasks where exact details matter (like medical imaging, scientific analysis, or evidence), generative models can change or invent content.

- It highlights a big challenge: our current evaluation metrics might not fully capture what people care about (visual quality), and we need to balance that with truthfulness to the original image.

Implications and Impact

- Good for creative and everyday use:

- Nano Banana Pro is promising for casual photo enhancement, artistic upscaling, and making old photos look nicer—places where visual appeal matters more than exact replication.

- Not yet a complete replacement for specialists:

- For high-stakes or precision tasks, specialist models trained for specific degradations still win on accuracy.

- Future directions:

- Combine the strengths of generative models (great visuals) with physical or task-specific constraints (keeping colors and structures faithful).

- Develop better, perception-aligned metrics that judge both how good an image looks and how true it stays to the original.

- Explore smarter prompt engineering and hybrid systems to reduce hallucinations and color shifts.

In short: Nano Banana Pro is a strong zero-shot contender that makes photos look great, but it’s not yet the all-around champion for faithful, pixel-precise restoration. The path forward is to blend creativity with correctness.

Glossary

- Atmospheric scattering laws: Physical formulations that model how light is scattered by particles in the atmosphere, used to constrain dehazing. "task-specific physical constraints (e.g., atmospheric scattering laws)"

- Bicubic downsampling: A resampling operation using bicubic interpolation to reduce image resolution, commonly used to synthesize degradation in super-resolution. "simple bicubic downsampling"

- BRISQUE: A blind no-reference image quality metric assessing statistical naturalness; lower values indicate more natural-looking images. "It also demonstrates favorable FADE and BRISQUE scores on the RTTS dataset."

- Camera response functions: Nonlinear mappings from scene radiance to pixel intensities specific to camera hardware and processing pipelines. "varying camera response functions"

- CLIPIQA: A CLIP-based no-reference image quality assessment metric that predicts perceptual quality from learned multimodal features. "No-Reference perceptual metrics (NIQE, MUSIQ, CLIPIQA)"

- Dark Channel Prior (DCP): A hand-crafted dehazing prior based on the observation that at least one color channel has low intensity in haze-free patches. "Dark Channel Prior (DCP)"

- Denoising Diffusion Probabilistic Models (DDPMs): Generative models that iteratively denoise data from noise, achieving high-fidelity synthesis through diffusion processes. "Denoising Diffusion Probabilistic Models (DDPMs) have emerged as the new state-of-the-art."

- FADE: A no-reference dehazing quality metric related to haze density and visibility restoration. "FADE , BRISQUE and NIMA"

- Field-of-View (FOV) expansion: An artifact where generated outputs hallucinate content beyond original image boundaries, altering spatial extent. "unintended Field-of-View (FOV) expansion"

- Generative Adversarial Networks (GANs): A class of generative models that pit a generator against a discriminator to synthesize realistic images. "Generative Adversarial Networks (GANs)"

- LPIPS: Learned Perceptual Image Patch Similarity; a deep feature–based metric for perceptual similarity between images. "LPIPS to assess perceptual similarity."

- MUSIQ: A learned no-reference image quality metric that estimates perceptual and aesthetic quality across scales. "No-Reference perceptual metrics (NIQE, MUSIQ, CLIPIQA)"

- NIQE: Naturalness Image Quality Evaluator; a no-reference metric quantifying naturalness statistics of images (lower is better). "No-Reference perceptual metrics (NIQE, MUSIQ, CLIPIQA)"

- No-Reference metrics: Image quality metrics that do not require ground-truth references and estimate perceptual quality from the test image alone. "No-Reference (NR) metrics NIQE, MUSIQ, and CLIPIQA"

- Non-Local Prior (NLP): A dehazing prior leveraging non-local self-similarity and statistical structures across the image. "Non-Local Prior (NLP)"

- NIMA: Neural Image Assessment; a learned metric that predicts human aesthetic quality of images. "achieving top-tier NIMA scores on both benchmarks"

- Perception-Distortion trade-off: The tension between improving perceptual quality and maintaining pixel-level fidelity to a reference. "This observation underscores the prevalent perception-distortion trade-off in image restoration tasks."

- Pixel-wise optimization: Training strategies that minimize per-pixel losses (e.g., MSE), emphasizing exact reconstruction over perceptual realism. "pixel-wise optimization (e.g., MSE loss)"

- PSNR: Peak Signal-to-Noise Ratio; a full-reference fidelity metric measuring the reconstruction error relative to a ground-truth. "PSNR and SSIM to evaluate signal fidelity"

- Real-World Image Super-Resolution (Real-ISR): Super-resolution under complex, unknown real degradations beyond simple synthetic downsampling. "Real-World Image Super-Resolution (Real-ISR) aims to restore high-fidelity, high-resolution content from low-resolution inputs"

- Reference-based metrics: Full-reference metrics computed against ground-truth images to quantify pixel-level fidelity. "reference-based metrics (e.g., PSNR, SSIM)"

- Semantic-driven priors: Generative priors that leverage semantic knowledge to infer plausible content in degraded regions. "demonstrating the potential of semantic-driven priors in tackling highly ambiguous degradations"

- SSIM: Structural Similarity Index Measure; a full-reference metric assessing structural similarity between images. "PSNR and SSIM to evaluate signal fidelity"

- Stable Diffusion: A large-scale text-to-image diffusion model providing strong generative priors for restoration tasks. "pre-trained text-to-image models (e.g., Stable Diffusion)"

- Stochasticity of generative models: The inherent randomness in generative sampling that can cause variability and pixel-level misalignment. "the inherent stochasticity of generative models"

- Text-to-Image (T2I): Models that generate images conditioned on textual prompts. "Text-to-Image (T2I) models"

- Transformer-based methods: Vision restoration/enhancement approaches using Transformer architectures for long-range dependencies. "Transformer-based methods"

- Zero-shot evaluation: Assessing a model on tasks without task-specific training or fine-tuning. "a comprehensive zero-shot evaluation across 14 distinct low-level tasks"

Collections

Sign up for free to add this paper to one or more collections.