Don't Guess, Escalate: Towards Explainable Uncertainty-Calibrated AI Forensic Agents

Abstract: AI is reshaping the landscape of multimedia forensics. We propose AI forensic agents: reliable orchestrators that select and combine forensic detectors, identify provenance and context, and provide uncertainty-aware assessments. We highlight pitfalls in current solutions and introduce a unified framework to improve the authenticity verification process.

Summary

- The paper proposes an orchestrated forensic agent that integrates heterogeneous detectors with uncertainty calibration for enhanced evidentiary reliability.

- It employs adaptive tool selection and selective escalation to avoid overconfident misclassification in evolving AI-generated media.

- The framework delivers transparent, evidence-grounded explanations with full logging for improved accountability and auditability in forensic analysis.

Explainable Uncertainty-Calibrated AI Forensic Agents: An Orchestrated Approach

Introduction: Limitations of Standalone Forensic Detectors

The rapid advance of generative AI has made it much harder to verify the authenticity and provenance of multimedia content. Contemporary GANs, diffusion models, and neural codecs produce synthetic images, videos, and voices that are increasingly indistinguishable from genuine content. Traditional forensic approaches rely on handcrafted, modality-specific artifacts such as sensor fingerprints, compression traces, and noise inconsistencies, so they cover only part of this landscape. Both these methods and the latest deep learning-based detectors tend to fail when confronted with evolving generation techniques, continuous distribution shifts, and adversarial manipulations.

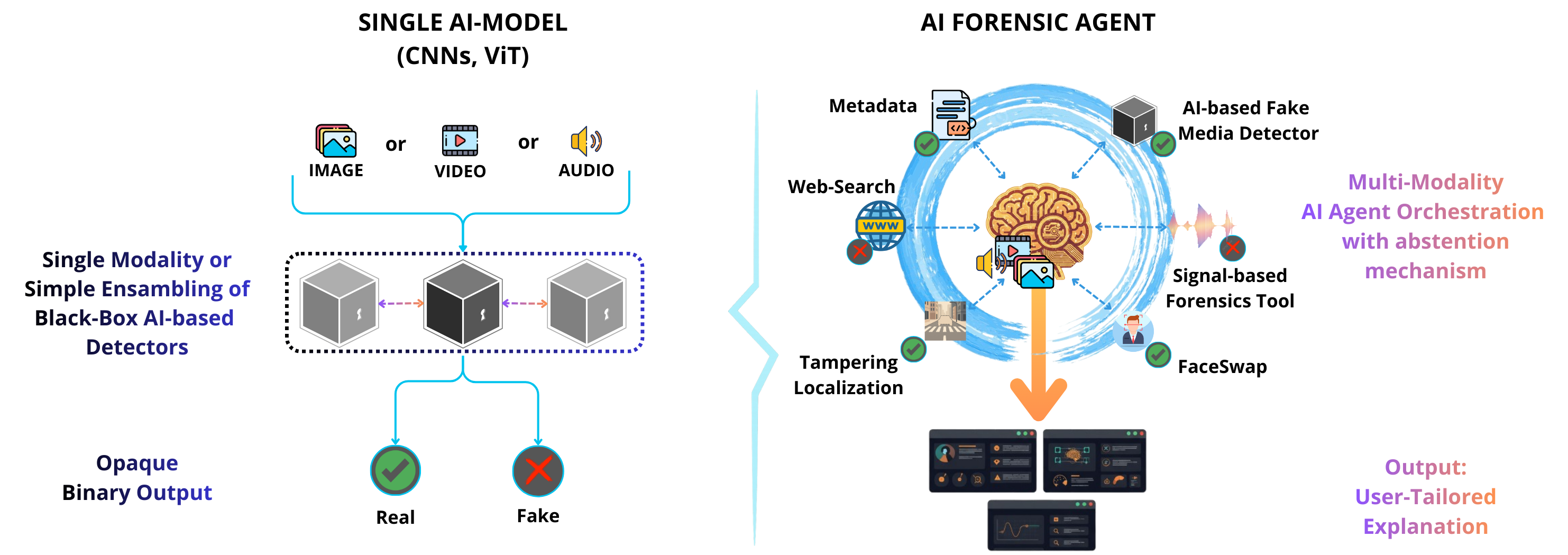

Ensembles of detectors have been explored, but they typically combine outputs through opaque heuristics, without transparency or calibrated uncertainty. This undermines reliability and explainability, especially in adversarial settings where attackers exploit blind spots or distribution shifts. The paper therefore argues for a shift from monolithic, single-purpose detectors toward orchestrated, adaptive, agent-based forensic systems.

The Diversity of Forensic Traces in AI-Generated Media

The synthetic media landscape is dominated by sophisticated GANs, such as StyleGAN, GigaGAN, and their descendants, and by U-Net-based or Transformer-based diffusion models. While early generation methods produced semantic inconsistencies and visible artifacts, state-of-the-art models now routinely yield photorealistic outputs. Forensic cues in such media fall into two groups: some generators leave low-level statistical or spectral signatures, while others introduce high-level semantic anomalies. No single feature level provides full coverage, as the characteristic failures of unimodal or artifact-specific detectors show.

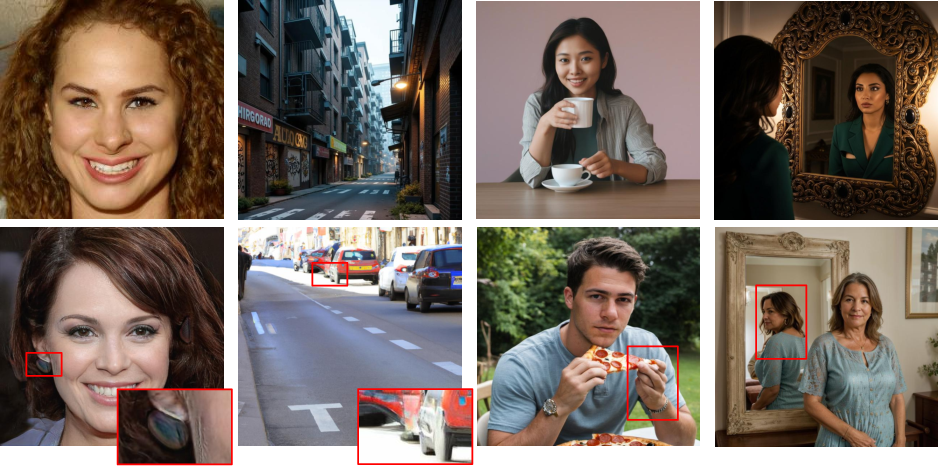

Figure 1: Examples of AI-generated images exhibiting near-photorealistic quality (top) and, conversely, images with obvious visual artifacts (bottom).

Consequently, effective forensic analysis must integrate information from both low-level traces (e.g., frequency-based anomalies) and high-level semantic errors, as well as metadata and contextual correlations. The emergence of sophisticated multimodal techniques, passive and active provenance methods, and generator-specific fingerprints underscores the need to orchestrate these diverse evidence sources.

From Single Detectors to Orchestrated Forensic Agents

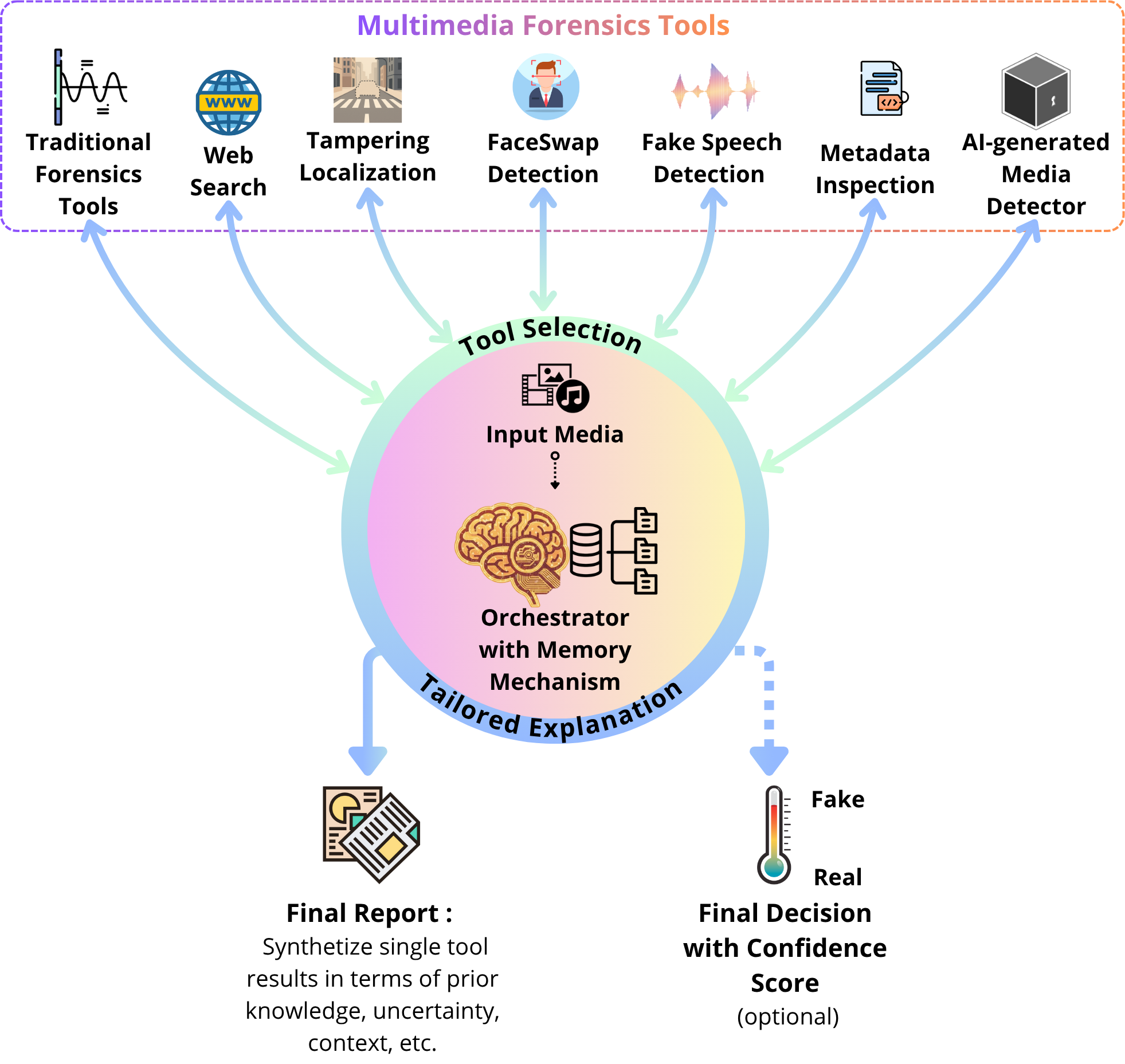

The authors advocate for the explicit transition from single or weakly fused detector systems to agent-based orchestrated frameworks. In this scheme, an AI forensic orchestrator is positioned as an autonomous agent that dynamically selects and coordinates specialized tools (e.g., fingerprint matchers, semantic consistency checkers, metadata analyzers, web-based provenance engines) based on the input scenario. This enables not only adaptive integration of new detectors but also context-aware calibration of evidence aggregation. The orchestrator is capable of explicit reasoning, adaptive uncertainty quantification, and abstention in the face of conflicting or insufficient evidence.
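The tool-selection step described above can be sketched as follows. This is a minimal illustration, not the paper's implementation: the `ForensicTool` record, the `select_tools` function, the registry entries, and the stub scores are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class ForensicTool:
    """Hypothetical description of one forensic module the orchestrator can call."""
    name: str
    modalities: frozenset         # media types the tool accepts
    needs_metadata: bool          # True if the tool is useless without metadata
    run: Callable[[dict], float]  # score in [0, 1]; higher means "more likely synthetic"


def select_tools(item: dict, registry: List[ForensicTool]) -> List[ForensicTool]:
    """Keep only the tools that are applicable to this input."""
    chosen = []
    for tool in registry:
        if item["modality"] not in tool.modalities:
            continue  # wrong media type
        if tool.needs_metadata and not item.get("metadata"):
            continue  # nothing for this tool to inspect
        chosen.append(tool)
    return chosen


# Stub detectors with fixed scores stand in for real modules (assumption).
REGISTRY = [
    ForensicTool("fingerprint_matcher", frozenset({"image"}), False, lambda item: 0.9),
    ForensicTool("semantic_checker", frozenset({"image", "video"}), False, lambda item: 0.7),
    ForensicTool("metadata_analyzer", frozenset({"image", "video", "audio"}), True, lambda item: 0.2),
]

item = {"modality": "image", "metadata": None}  # an image whose metadata was stripped
selected = select_tools(item, REGISTRY)
scores = {tool.name: tool.run(item) for tool in selected}
print(scores)
```

Because the registry is plain data, adding a new detector means appending one entry rather than retraining a fused model, which is the kind of adaptive integration the text describes.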

Figure 2: Transition from traditional opaque model ensembles to the AI forensic agent paradigm that dynamically fuses the outputs of heterogeneous forensic modules.

Such an architecture results in transparent, evidence-grounded reports rather than opaque integrity scores. The orchestrator can log which tools were utilized, the sequence of their invocation, and how their outputs influenced the final decision, yielding heightened provenance, accountability, and auditability.
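One way to make such a log tamper-evident is to chain entries by hash, so that each record commits to the one before it. The sketch below is an assumption about how this could be done, not something the paper specifies; `AuditLog`, its field names, and the sample tool outputs are hypothetical.

```python
import hashlib
import json
from typing import List


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only record of tool invocations, linked by hashes."""

    GENESIS = "0" * 64  # placeholder hash preceding the first entry

    def __init__(self) -> None:
        self.entries: List[dict] = []

    def record(self, tool: str, output: dict, note: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "step": len(self.entries) + 1,  # invocation order
            "tool": tool,
            "output": output,
            "note": note,                   # how the output influenced the decision
            "prev_hash": prev_hash,
        }
        entry = dict(body, hash=_digest(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain is unbroken."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("fingerprint_matcher", {"score": 0.9}, "strong generator fingerprint match")
log.record("semantic_checker", {"score": 0.7}, "minor lighting inconsistency")
print(log.verify())
```

Editing any earlier entry changes its hash and breaks the link stored in the next entry, so `verify()` fails and the alteration is detectable on review.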

Figure 3: Abstract architecture for orchestrated AI forensic agents, showing selective invocation and integration of multiple detection and provenance modules.

Uncertainty Quantification and Selective Escalation

A central claim of the paper is that uncertainty handling is fundamentally inadequate in current multimedia forensics. Existing detectors seldom provide calibrated confidence and do not abstain from delivering potentially erroneous binary decisions. The orchestrated agent framework remedies this by enabling selective prediction and explicit abstention: when detectors disagree or cumulative evidence does not meet a reliability threshold, the system 'escalates' instead of guessing.
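The escalation rule in this paragraph can be sketched as a small decision function. The thresholds, the use of a mean as the aggregate, and the spread-based disagreement test are assumptions made for the example; the paper does not fix these choices.

```python
from statistics import mean
from typing import Dict, Tuple


def decide(
    scores: Dict[str, float],
    fake_threshold: float = 0.9,   # assumed: aggregate needed to call "synthetic"
    real_threshold: float = 0.1,   # assumed: aggregate needed to call "authentic"
    max_spread: float = 0.3,       # assumed: largest tolerated detector disagreement
) -> Tuple[str, str]:
    """Return (decision, reason). `scores` maps tool names to calibrated
    probabilities that the item is synthetic."""
    if not scores:
        return "escalate", "no applicable detector produced a score"

    values = list(scores.values())
    spread = max(values) - min(values)
    if spread > max_spread:
        return "escalate", f"detectors disagree (spread {spread:.2f})"

    aggregate = mean(values)
    if aggregate >= fake_threshold:
        return "synthetic", f"aggregate {aggregate:.2f} meets fake threshold"
    if aggregate <= real_threshold:
        return "authentic", f"aggregate {aggregate:.2f} meets real threshold"
    return "escalate", f"aggregate {aggregate:.2f} is below the reliability threshold"


print(decide({"fingerprint_matcher": 0.95, "semantic_checker": 0.92}))
print(decide({"fingerprint_matcher": 0.95, "semantic_checker": 0.20}))
print(decide({"fingerprint_matcher": 0.60, "semantic_checker": 0.55}))
```

The second and third calls illustrate the two escalation paths named in the text: disagreement between detectors, and agreement that is too weak to support a verdict.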

Uncertainty aggregation incorporates calibration techniques drawn from mature domains (e.g., temperature scaling, deep ensembles, Bayesian inference), probabilistic fusion across heterogeneous cues, and principled scoring rules for reliability evaluation. Selective abstention (reject-option classification or conformal prediction) further reduces overconfident misjudgments—a critical requirement for forensic applications with legal, journalistic, or societal ramifications.
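Three of the ingredients named above can be illustrated in a few lines: temperature scaling for calibration, the Brier score as a proper scoring rule, and a simple probabilistic fusion. This is a sketch under stated assumptions: the validation logits and labels are made up, the temperature is found by grid search rather than gradient descent, and the fusion rule assumes conditionally independent detectors with a uniform prior, which real detectors rarely satisfy.

```python
import math
from typing import List


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def nll(logits: List[float], labels: List[int], temperature: float) -> float:
    """Mean negative log-likelihood of binary labels after dividing logits by T."""
    eps = 1e-12
    total = 0.0
    for z, y in zip(logits, labels):
        p = sigmoid(z / temperature)
        total -= y * math.log(p + eps) + (1 - y) * math.log(1.0 - p + eps)
    return total / len(logits)


def fit_temperature(logits: List[float], labels: List[int]) -> float:
    """Grid search over T in (0, 10] for the value minimizing held-out NLL."""
    candidates = [0.1 * k for k in range(1, 101)]
    return min(candidates, key=lambda t: nll(logits, labels, t))


def brier_score(probs: List[float], labels: List[int]) -> float:
    """Mean squared error between predicted probabilities and outcomes."""
    return sum((p - y) ** 2 for p, y in zip(probs, labels)) / len(probs)


def fuse_independent(probs: List[float], eps: float = 1e-6) -> float:
    """Sum log-odds across detectors (independence and uniform prior assumed)."""
    log_odds = 0.0
    for p in probs:
        p = min(max(p, eps), 1.0 - eps)  # keep the logarithm finite
        log_odds += math.log(p / (1.0 - p))
    return sigmoid(log_odds)


# Made-up held-out set: an overconfident detector that is wrong on two items.
val_logits = [4.0, 3.5, -4.0, 3.0, -3.5, 4.5, -3.0, 3.8]
val_labels = [1, 1, 0, 0, 0, 1, 1, 1]

T = fit_temperature(val_logits, val_labels)
raw = [sigmoid(z) for z in val_logits]
calibrated = [sigmoid(z / T) for z in val_logits]
print("temperature:", round(T, 1))
print("Brier before:", round(brier_score(raw, val_labels), 3))
print("Brier after: ", round(brier_score(calibrated, val_labels), 3))
```

A fitted temperature above 1 softens the detector's probabilities, which is the expected correction for an overconfident model; calibrated scores like these are what a fusion or abstention step would consume.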

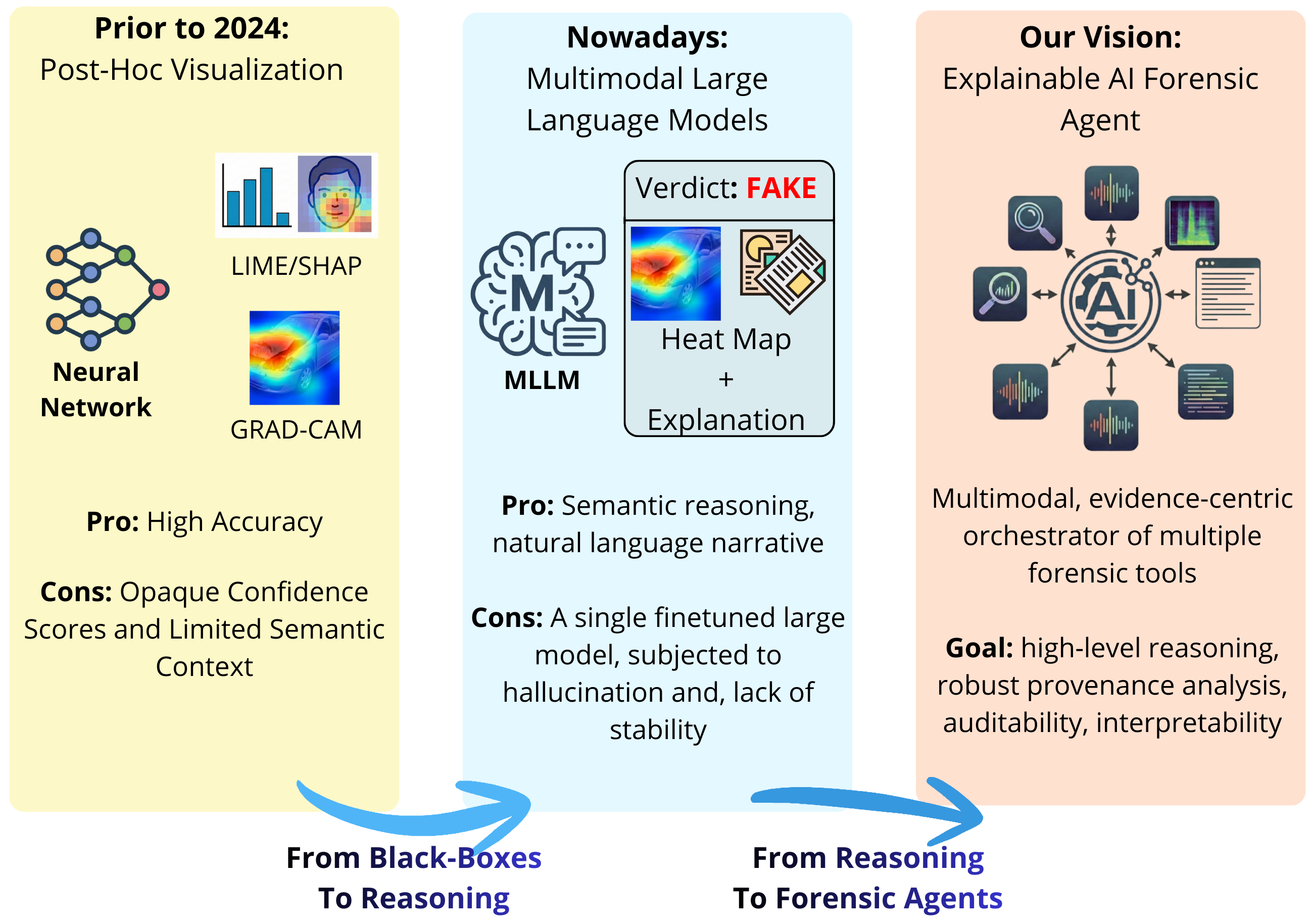

Explainability, Interpretability, and Provenance

The evolution of explainable AI in digital forensics is described as a transition from purely post-hoc heatmap visualizations (e.g., Grad-CAM, LIME, SHAP) to chain-of-thought, generative, and evidence-linked explanations enabled by modern multimodal large language models (MLLMs). Post-hoc visual explanations have proven limited, especially for non-local, distributional forensic cues. The orchestrator can instead structure explanations around the underlying signals detected, the measurements used, and the rationale for their fusion.

Figure 4: The progression of explainability, from black-box visualizations (left), to semantic reasoning via MLLMs (center), to orchestrated evidence-centric explanations (right).

Moreover, the orchestrated agent architecture is well suited for robust provenance analysis. The ability to dynamically invoke both passive (e.g., artifact detection) and active (e.g., watermark, C2PA) provenance tools enables richer tracking and reconstruction of the content’s lifecycle. Chain-of-custody and full analytic transparency are maintained, and explanations can be tailored to stakeholders across technical and legal domains.
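A minimal sketch of how an orchestrator might combine the two kinds of provenance tools is shown below. The ordering (active check first, passive analysis as a fallback) is an assumption for illustration, and both checker functions are hypothetical stand-ins that read precomputed fields from a dictionary; they do not call any real watermark or C2PA library.

```python
from typing import List, Optional


def check_active_provenance(item: dict) -> Optional[dict]:
    """Hypothetical stand-in for a manifest or watermark verifier.
    Returns None when the item carries no active provenance signal."""
    manifest = item.get("manifest")
    if manifest is None:
        return None
    return {
        "source": "active",
        "signer": manifest.get("signer"),
        "valid": bool(manifest.get("signature_ok")),
    }


def check_passive_provenance(item: dict) -> dict:
    """Hypothetical stand-in for artifact-based analysis."""
    return {"source": "passive", "artifact_score": item.get("artifact_score")}


def trace_provenance(item: dict) -> List[dict]:
    """Collect provenance findings in the order they were obtained."""
    findings = []
    active = check_active_provenance(item)
    if active is not None:
        findings.append(active)
    # Fall back to passive analysis when the active signal is absent or invalid.
    if active is None or not active["valid"]:
        findings.append(check_passive_provenance(item))
    return findings


signed = {"manifest": {"signer": "example-camera", "signature_ok": True}}
stripped = {"artifact_score": 0.8}
print(trace_provenance(signed))
print(trace_provenance(stripped))
```

Returning the findings as an ordered list keeps the sequence of checks visible, so it can be recorded alongside the rest of the analysis.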

Practical and Theoretical Implications

This orchestrated approach has strong implications for both the operational deployment and the theoretical development of AI-driven forensic systems:

- Practical robustness: Modular orchestration, adaptive selection of detectors, and selective abstention are critical for real-world deployment—particularly in adversarial, distribution-shifting environments.

- Accountability and auditability: Fine-grained logging, explainability, and traceability directly support legal admissibility and investigative reproducibility.

- Theoretical generalization: Bayesian and frequentist approaches to uncertainty fusion and selective abstention are meant to reduce reliance on the training distribution and to push toward domain-agnostic forensics that remains useful as generators evolve.
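The selective abstention named in the list above can be sketched with split conformal prediction, one of the frequentist options the summary mentions. This is an illustrative sketch, not the paper's procedure: the calibration probabilities and labels are made up, the miscoverage level `alpha` is an arbitrary choice, and the coverage guarantee only holds when calibration and test data are exchangeable, which distribution shift can violate.

```python
import math
from typing import List, Set


def conformal_quantile(cal_probs: List[float], cal_labels: List[int], alpha: float) -> float:
    """Threshold on nonconformity, where nonconformity is
    1 minus the probability assigned to the true label."""
    scores = sorted(
        1.0 - (p if y == 1 else 1.0 - p) for p, y in zip(cal_probs, cal_labels)
    )
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))  # finite-sample corrected rank
    if k > n:
        return 1.0  # too few calibration points: every label stays in the set
    return scores[k - 1]


def prediction_set(p_fake: float, qhat: float) -> Set[str]:
    """All labels whose nonconformity does not exceed the threshold."""
    labels = set()
    if 1.0 - p_fake <= qhat:
        labels.add("synthetic")
    if p_fake <= qhat:  # nonconformity of "authentic" is 1 - (1 - p_fake)
        labels.add("authentic")
    return labels


def decide(p_fake: float, qhat: float) -> str:
    """Commit only when exactly one label survives; otherwise escalate."""
    labels = prediction_set(p_fake, qhat)
    return next(iter(labels)) if len(labels) == 1 else "escalate"


# Made-up calibration set: predicted probability of "synthetic" and true label.
cal_probs = [0.95, 0.90, 0.85, 0.10, 0.05, 0.20, 0.70, 0.30, 0.92]
cal_labels = [1, 1, 1, 0, 0, 0, 1, 0, 1]

qhat = conformal_quantile(cal_probs, cal_labels, alpha=0.2)
for p in (0.95, 0.50, 0.05):
    print(p, decide(p, qhat))
```

Both an empty set and a two-label set lead to escalation here: the first means no label conforms to the calibration data, the second means the evidence cannot separate the labels.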

Conclusion

The paper "Don't Guess, Escalate: Towards Explainable Uncertainty-Calibrated AI Forensic Agents" (2512.16614) proposes rethinking multimedia forensics by moving from isolated, monolithic classifiers to orchestrated, uncertainty-calibrated, explainable AI agents. By explicitly quantifying uncertainty, integrating heterogeneous evidence, abstaining on principled grounds, and providing evidence-linked explanations, this paradigm targets key methodological and operational weaknesses in current practice. The framework is intended to improve operational reliability, interpretability, and trust, all of which matter for forensic systems whose findings inform media and public discourse. Agent-based orchestrators are expected to support future developments not only in multimedia forensics but in any AI application that demands robust, explainable, and trustworthy decision-making.
