- The paper presents a novel diffusion-based fusion framework that unifies single-frame estimation and cross-frame tracking for deformable linear objects.

- It leverages a dual-branch architecture and graph-convolutional DDPM to mitigate occlusion challenges while ensuring both global coherence and local precision.

- Experimental results demonstrate robust zero-shot sim-to-real transfer and show the method outperforming baselines at occlusion levels of up to 50%, including in complex manipulation tasks.

Introduction

"UniStateDLO: Unified Generative State Estimation and Tracking of Deformable Linear Objects Under Occlusion for Constrained Manipulation" (2512.17764) proposes a unified deep learning framework for deformable linear object (DLO) perception, focusing on robust 3D state estimation and tracking in environments with severe occlusion. The framework addresses the limitations of previous vision-based methods, which are brittle under occlusion, lack temporal smoothness, and require laborious manual parameter tuning. The core contribution is a conditional generative formulation of both state estimation and tracking, which uses diffusion models to achieve high accuracy and robustness without explicit priors or real-world supervision.

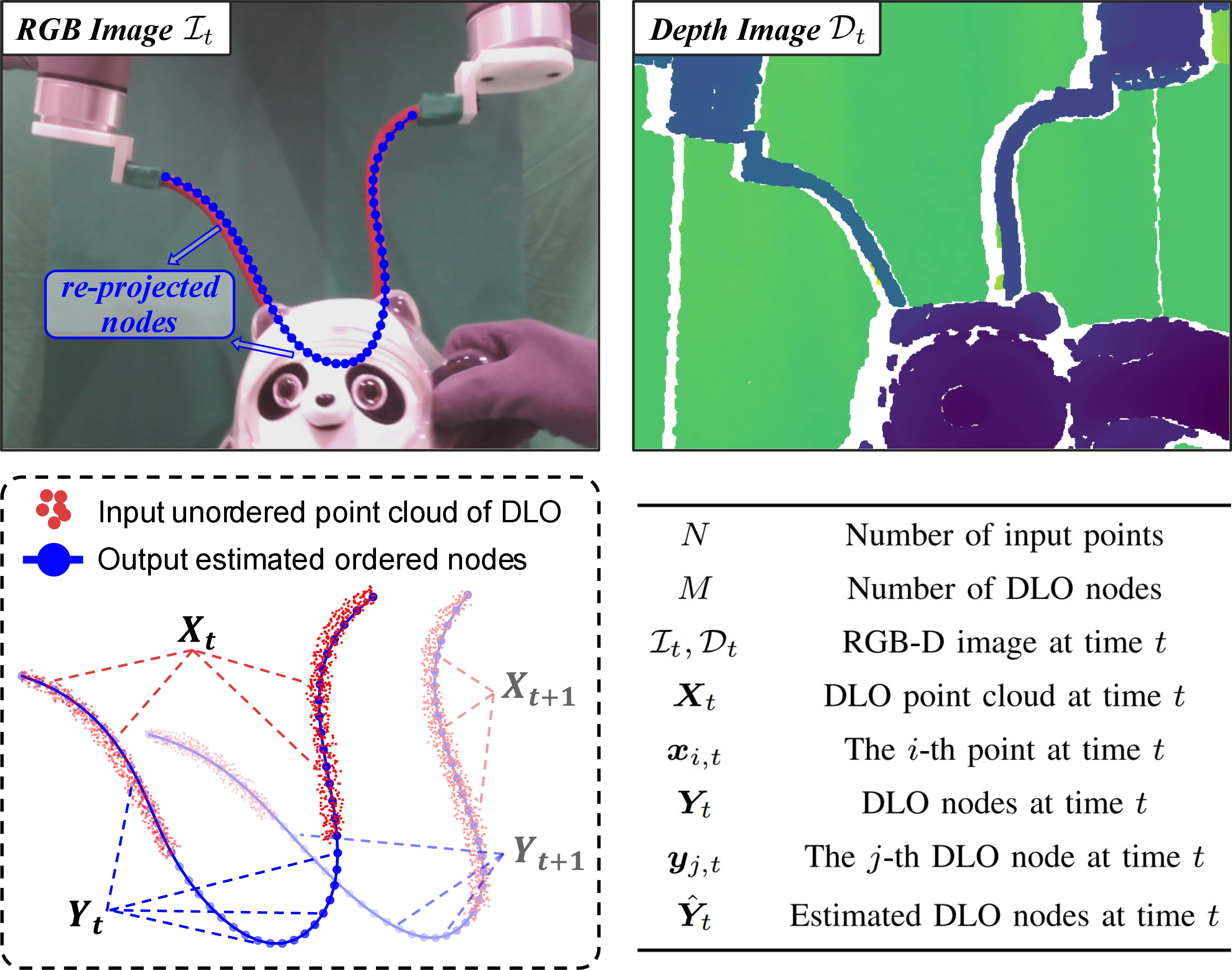

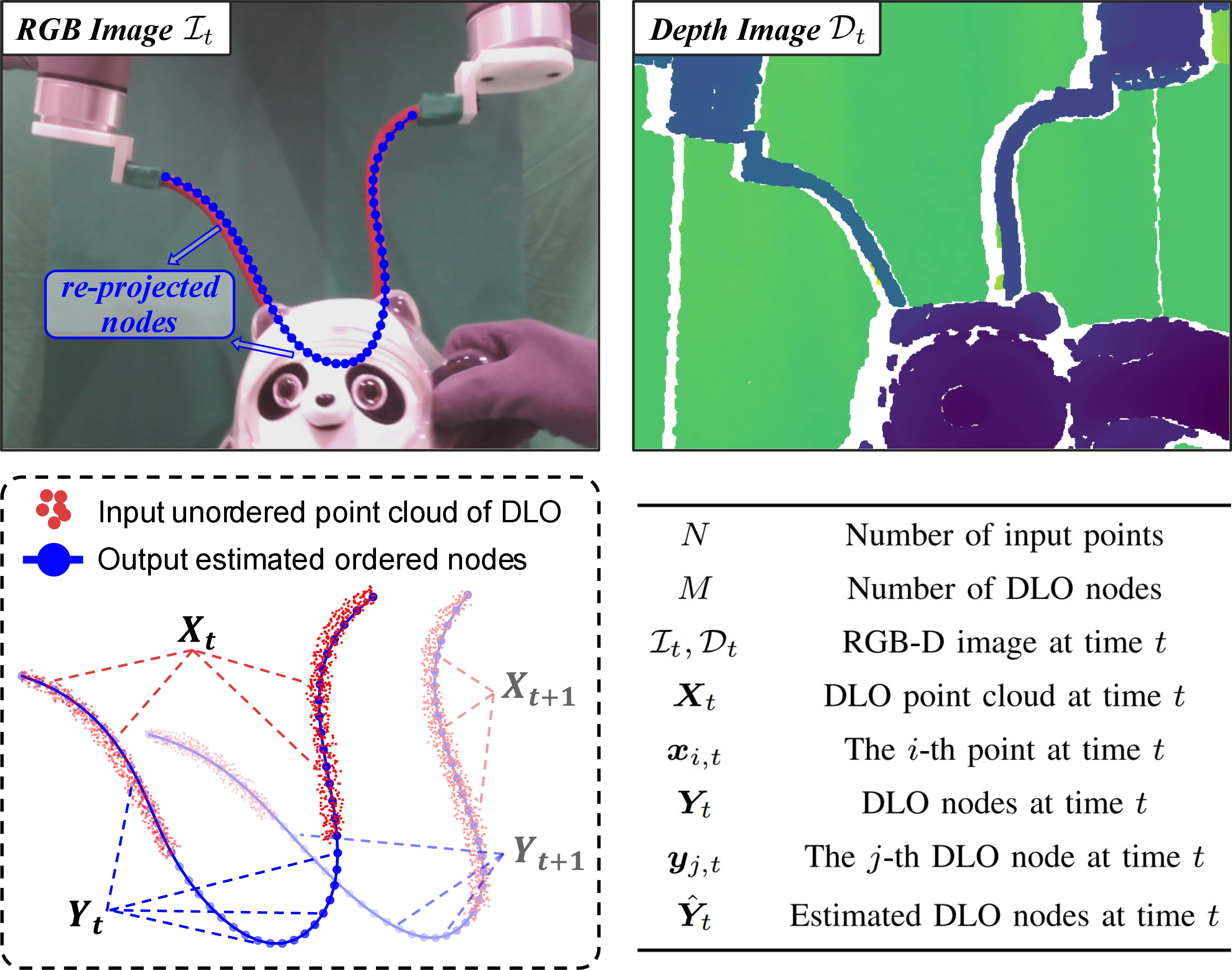

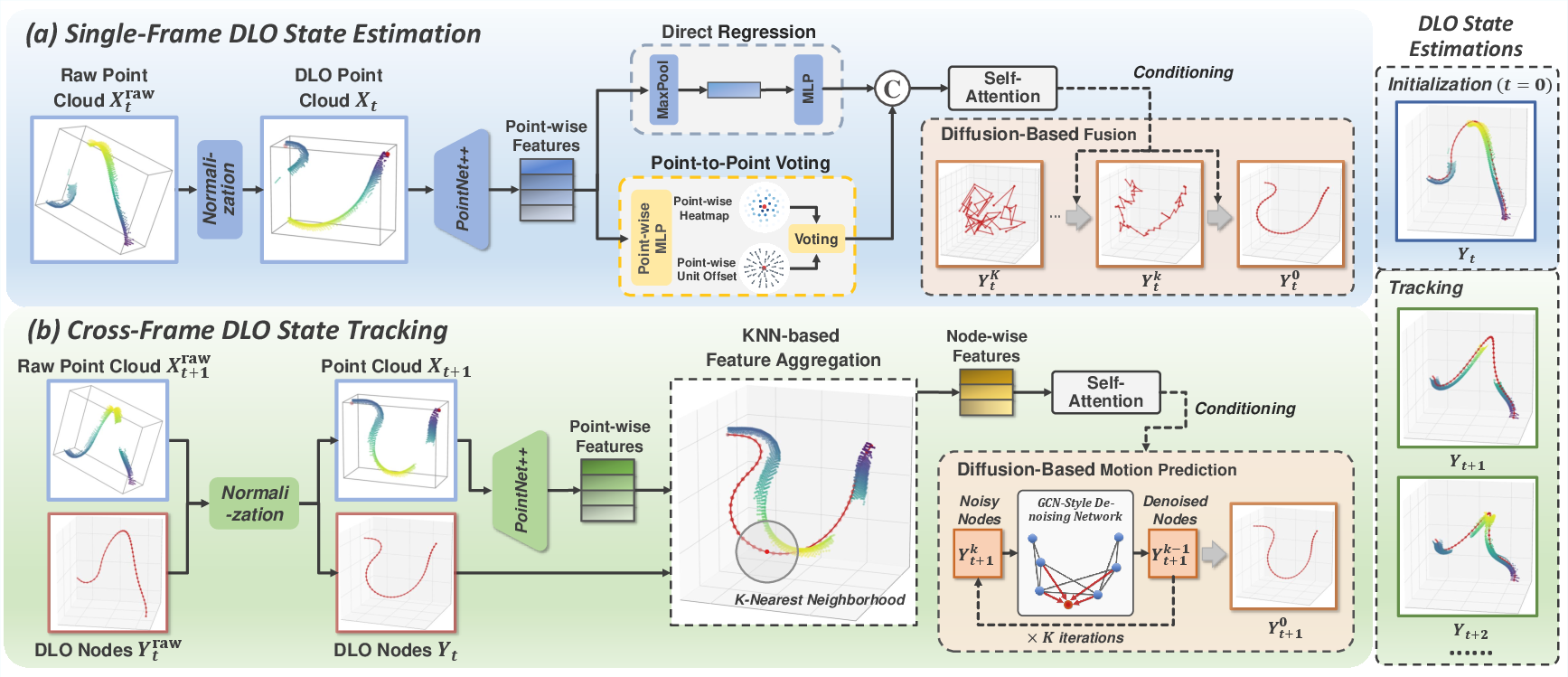

DLO perception is defined as estimating an ordered sequence of M 3D nodes Yt from partial, noisy point clouds Xt derived from RGB-D observations at time t, under diverse occlusion sources including environmental clutter, self-intersection, and sensor noise. The pipeline (Figure 1) comprises:

- Single-frame state estimation for robust initialization, operating independently per frame.

- Cross-frame state tracking for temporal smoothness and topological consistency, propagating priors through per-node, local feature aggregation.

Figure 2: The DLO perception scenario and state definition, illustrating recovery of a sequential 3D node chain from partial observations.

Figure 1: Schematic of UniStateDLO, unifying initialization and sequential tracking via complementary branches and diffusion-based generative fusion modules.

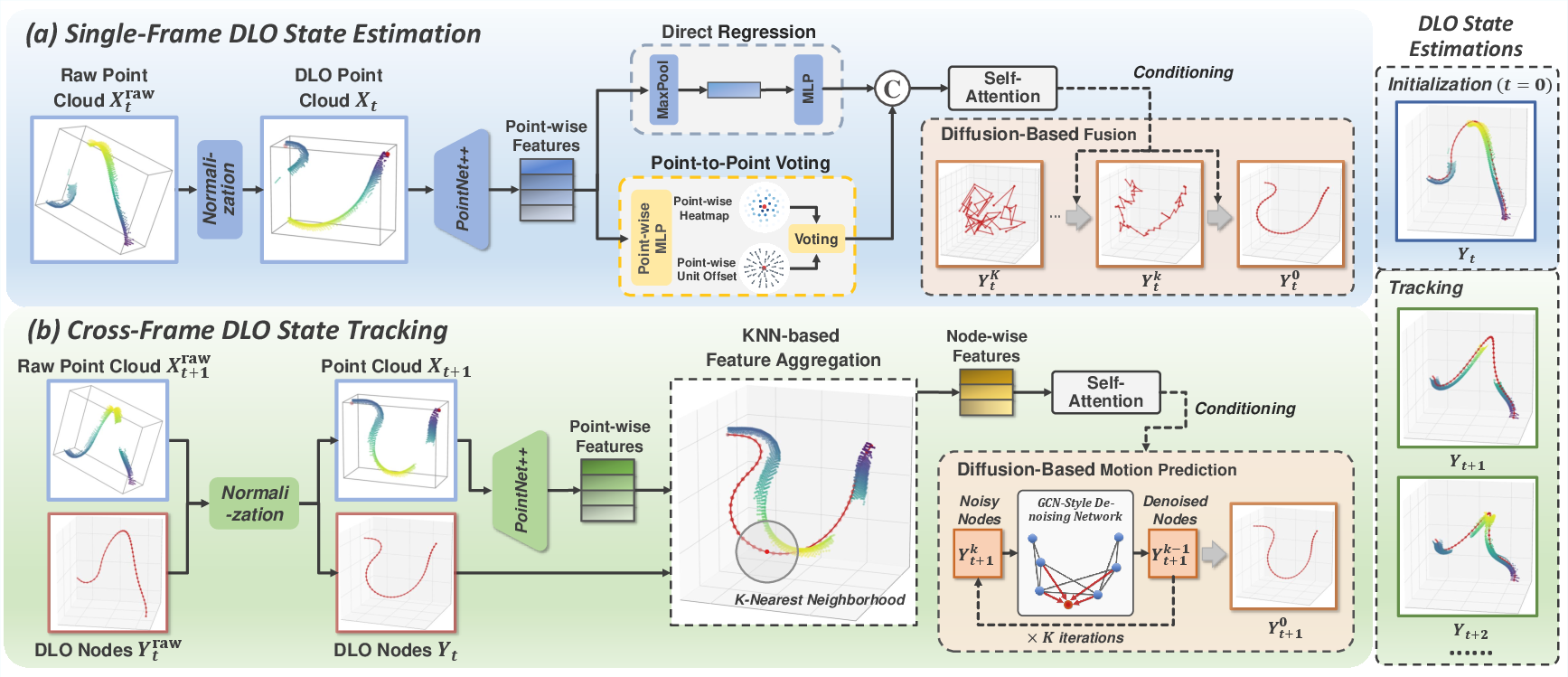

Single-Frame State Estimation: Dual-Branch Fusion

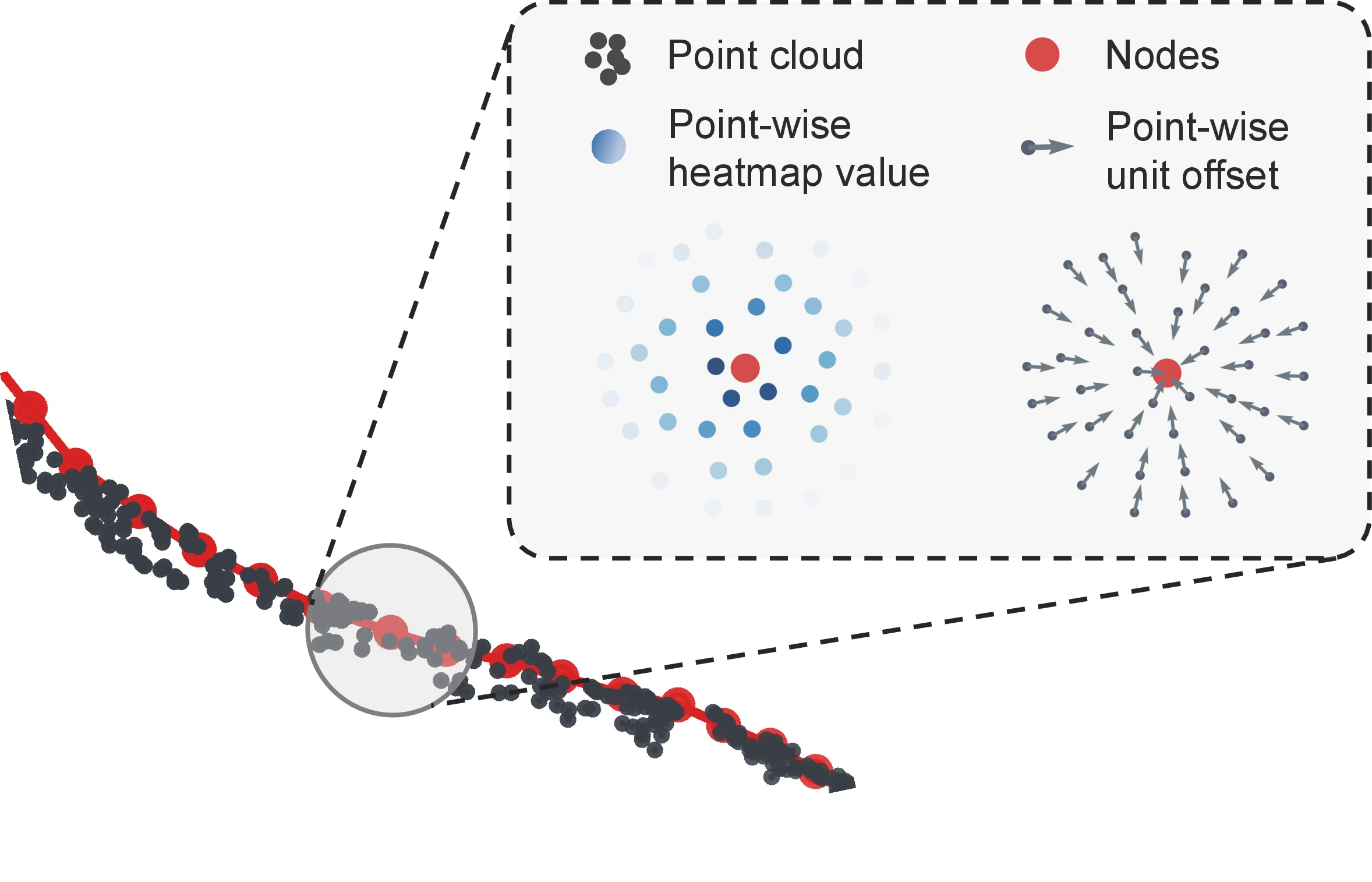

The single-frame estimation module decomposes the perception task into two parallel branches that share a PointNet++ backbone, a regression branch and a voting branch, each producing a coarse estimate of the node sequence.

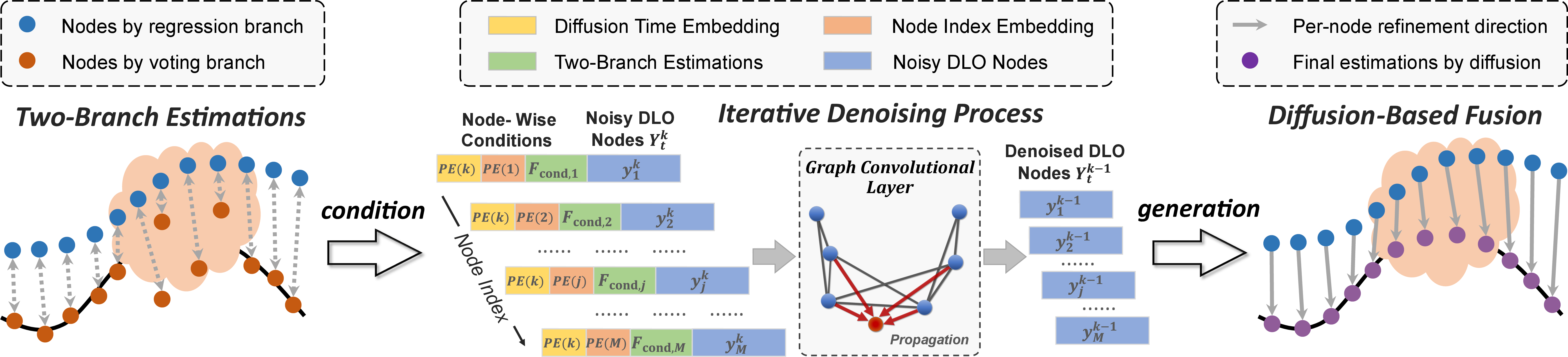

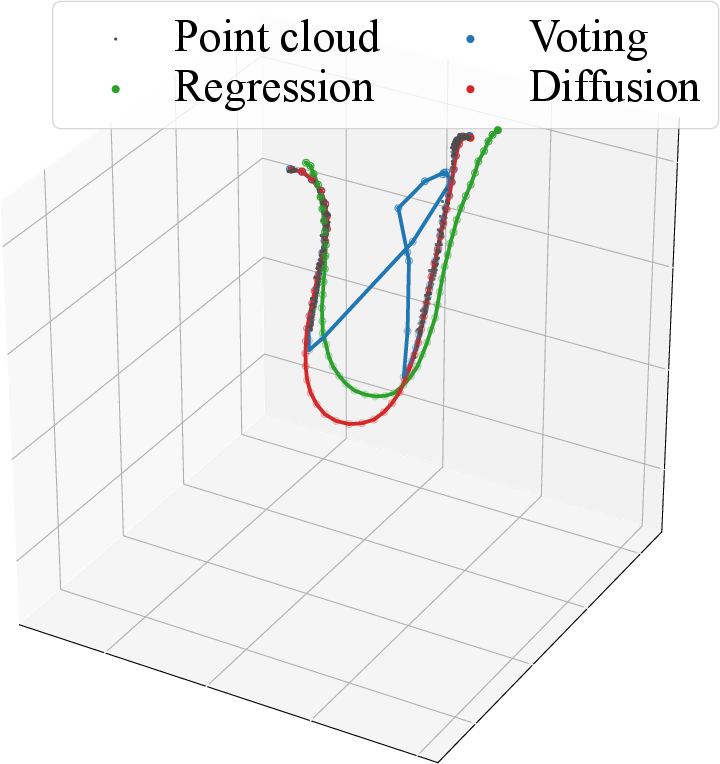

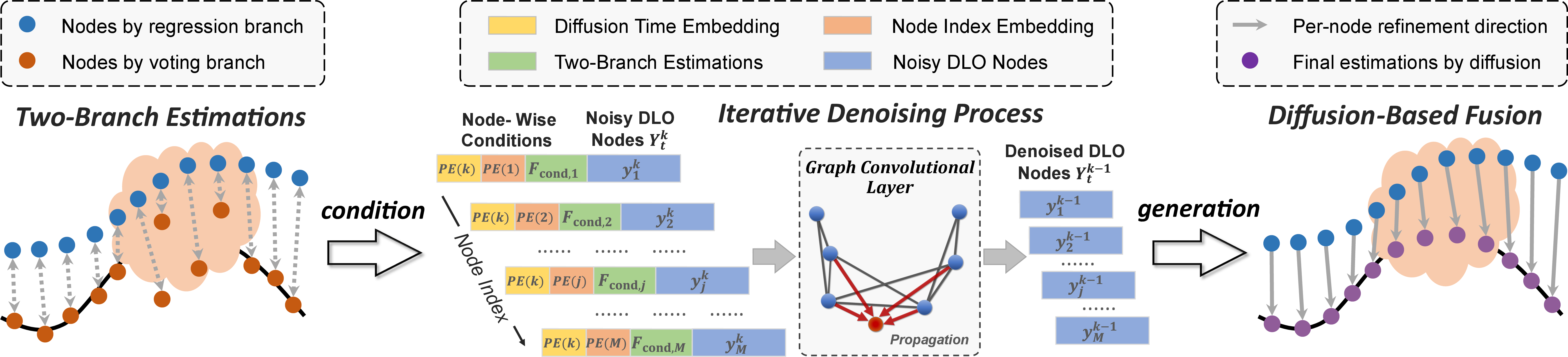

Diffusion-based fusion (Figure 4) then treats both branches' coarse predictions as conditional inputs to a node-wise, graph-convolutional DDPM. This generative model learns to reconstruct both visible and occluded node sequences, outperforming deterministic or registration-based fusions in both accuracy and generalization.

Figure 4: Diffusion-based fusion module demonstrating optimal combination of globally coherent and locally precise node estimations via graph convolutions on conditional inputs.
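To make the conditional sampling idea concrete, the following is a minimal sketch of DDPM ancestral sampling over node coordinates, conditioned on two coarse predictions. It is not the paper's network: `toy_denoiser` and `chain_smooth` are hypothetical stand-ins for the learned graph-convolutional noise predictor, and the linear noise schedule, step count, and function names are assumptions chosen for illustration.

```python
import numpy as np

def chain_smooth(nodes):
    """Average each node with its chain neighbours (a crude stand-in for a graph convolution)."""
    padded = np.concatenate([nodes[:1], nodes, nodes[-1:]], axis=0)
    return (padded[:-2] + padded[1:-1] + padded[2:]) / 3.0

def toy_denoiser(x_t, t, cond_a, cond_b, alpha_bar):
    """Hypothetical noise predictor (NOT the learned model).

    It assumes the clean chain is the smoothed mean of the two coarse
    predictions and returns the noise implied by that assumption.
    """
    x0_hat = chain_smooth(0.5 * (cond_a + cond_b))
    return (x_t - np.sqrt(alpha_bar[t]) * x0_hat) / np.sqrt(1.0 - alpha_bar[t])

def ddpm_fuse(cond_a, cond_b, num_steps=50, seed=0):
    """Standard DDPM reverse process with variance beta_t, conditioned on two (M, 3) inputs."""
    rng = np.random.default_rng(seed)
    betas = np.linspace(1e-4, 0.02, num_steps)   # assumed linear schedule
    alphas = 1.0 - betas
    alpha_bar = np.cumprod(alphas)
    x = rng.standard_normal(cond_a.shape)        # start from Gaussian noise
    for t in reversed(range(num_steps)):
        eps = toy_denoiser(x, t, cond_a, cond_b, alpha_bar)
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bar[t]) * eps) / np.sqrt(alphas[t])
        noise = rng.standard_normal(x.shape) if t > 0 else 0.0  # no noise on the last step
        x = mean + np.sqrt(betas[t]) * noise
    return x
```

In a real system the denoiser would be a trained network that takes both branch outputs as conditioning; the sampling loop itself would keep the same shape.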

Cross-Frame State Tracking: Node-Conditioned Generative Prediction

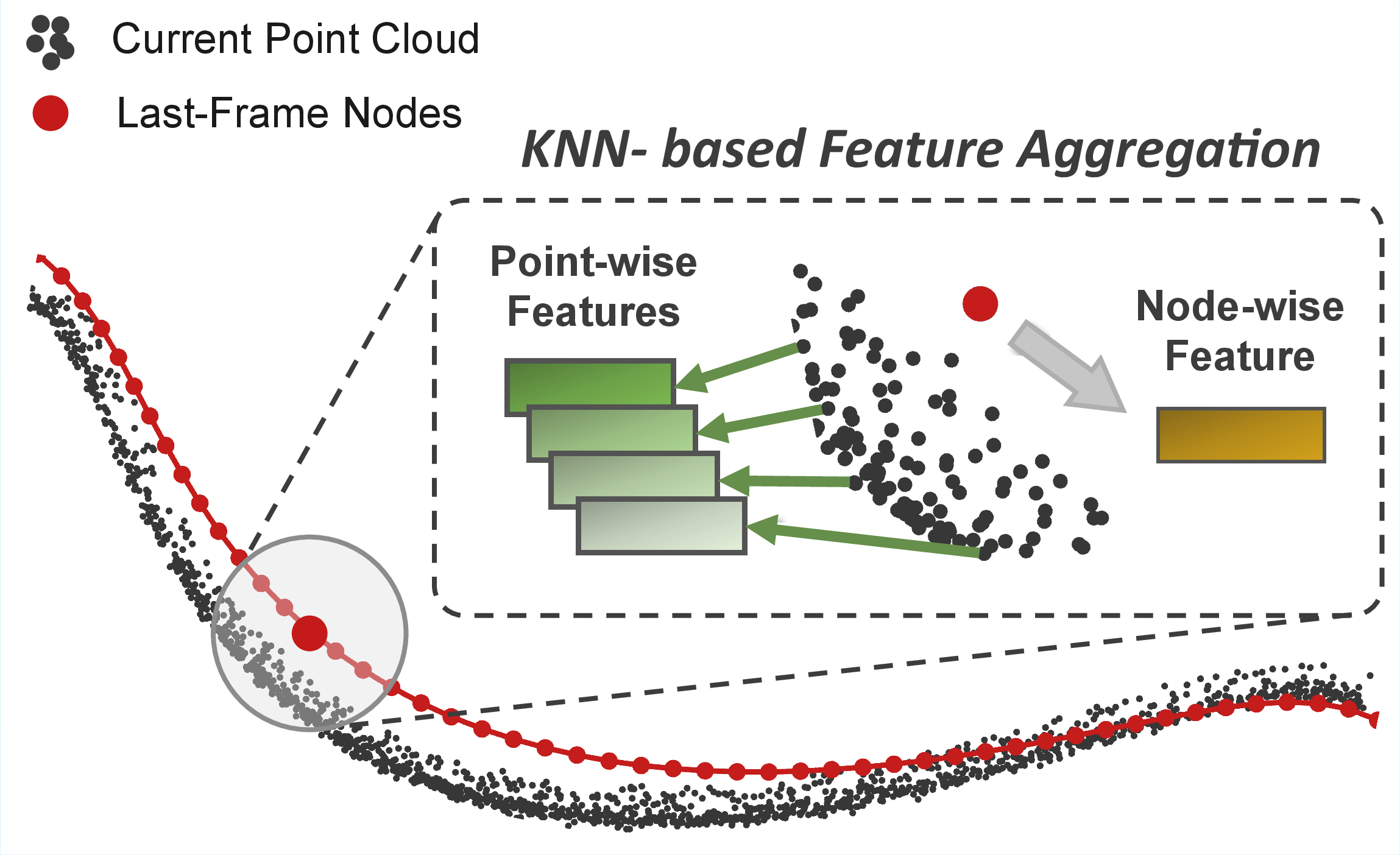

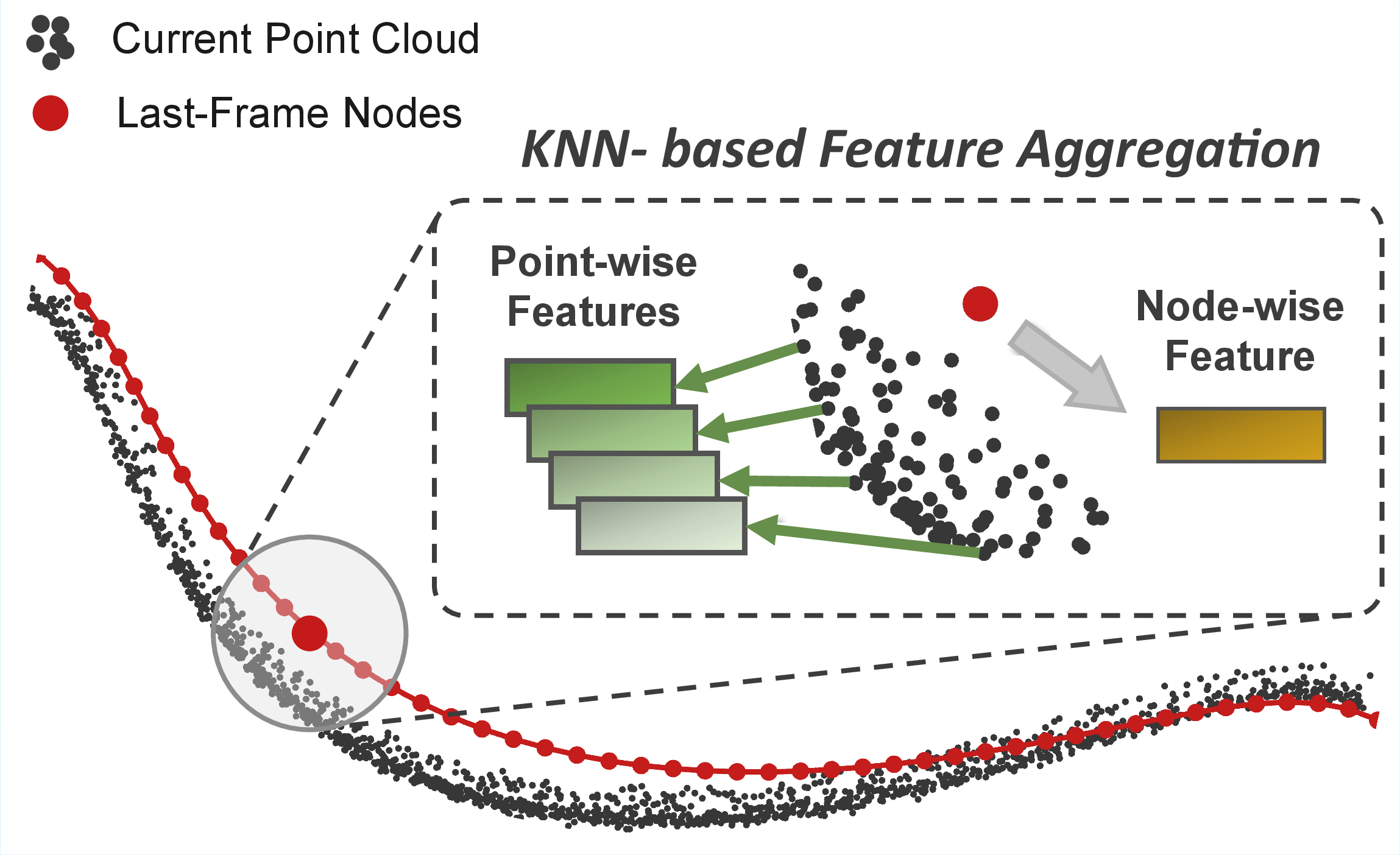

For sequential tracking of DLO deformation, UniStateDLO aggregates local point cloud features around the previous frame's nodes, which serve as centroids for a KNN set abstraction (Figure 5), then augments them with global context via multi-head self-attention (MHSA). The diffusion-based tracking model predicts inter-frame node displacements, enforcing spatial coherence through graph convolutional network (GCN) layers as well as temporal coherence.

Figure 5: Architecture of KNN-based feature aggregation: prior node locations serve as centroids for localized context, enabling temporally consistent tracking.
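The grouping step can be sketched in a few lines of NumPy. This is an illustrative approximation only: a PointNet++-style set abstraction would apply a shared MLP before pooling, which is omitted here, and the function name, the default `k`, and the use of max pooling are assumptions rather than details taken from the paper.

```python
import numpy as np

def knn_aggregate(points, feats, prev_nodes, k=16):
    """Pool features of the k nearest points around each previous-frame node.

    points: (N, 3) observed cloud, feats: (N, C) per-point features,
    prev_nodes: (M, 3) node positions from the previous frame.
    Returns an (M, 3 + C) array of per-node descriptors.
    """
    # Squared distances between every node and every point, shape (M, N).
    d2 = ((prev_nodes[:, None, :] - points[None, :, :]) ** 2).sum(axis=-1)
    k = min(k, points.shape[0])
    # Indices of the k nearest points per node (unordered within the k).
    idx = np.argpartition(d2, k - 1, axis=1)[:, :k]
    # Express neighbours relative to their node so the descriptor is translation-invariant.
    rel = points[idx] - prev_nodes[:, None, :]
    grouped = np.concatenate([rel, feats[idx]], axis=-1)   # (M, k, 3 + C)
    # Order-invariant pooling over the neighbourhood.
    return grouped.max(axis=1)
```

Because the centroids come from the previous frame's estimate, each node only looks at the part of the cloud near where it was last seen, which is what ties the descriptor to a specific node across frames.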

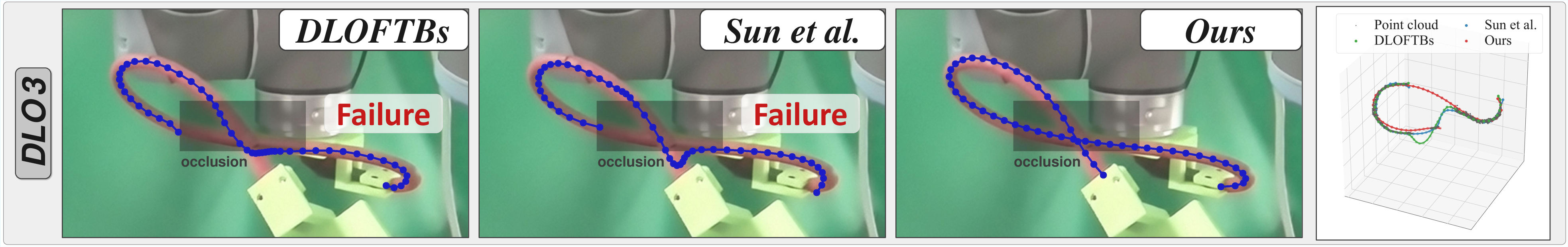

This formulation supports re-initialization after tracking failures caused by extreme, prolonged occlusion: the system can fall back to single-frame estimation to recover.
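The fallback behavior amounts to a small control loop, sketched below. The summary does not say how a tracking failure is detected, so `is_lost` is a hypothetical caller-supplied test, and `estimate` and `track` are placeholder callables standing in for the two modules.

```python
def run_perception(frames, estimate, track, is_lost):
    """Track frame to frame, re-initializing with single-frame estimation when needed.

    estimate(cloud) -> state, track(prev_state, cloud) -> state and
    is_lost(state, cloud) -> bool are hypothetical callables supplied by the caller.
    Returns the per-frame states and which mode produced each one.
    """
    state, states, modes = None, [], []
    for cloud in frames:
        if state is not None:
            candidate = track(state, cloud)
            if not is_lost(candidate, cloud):
                state = candidate
                states.append(state)
                modes.append("track")
                continue
        # First frame, or tracking judged unreliable: fall back to single-frame estimation.
        state = estimate(cloud)
        states.append(state)
        modes.append("estimate")
    return states, modes
```

Keeping the failure test as a separate callable means the re-initialization policy can change without touching either perception module.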

Data Normalization and Synthetic Training

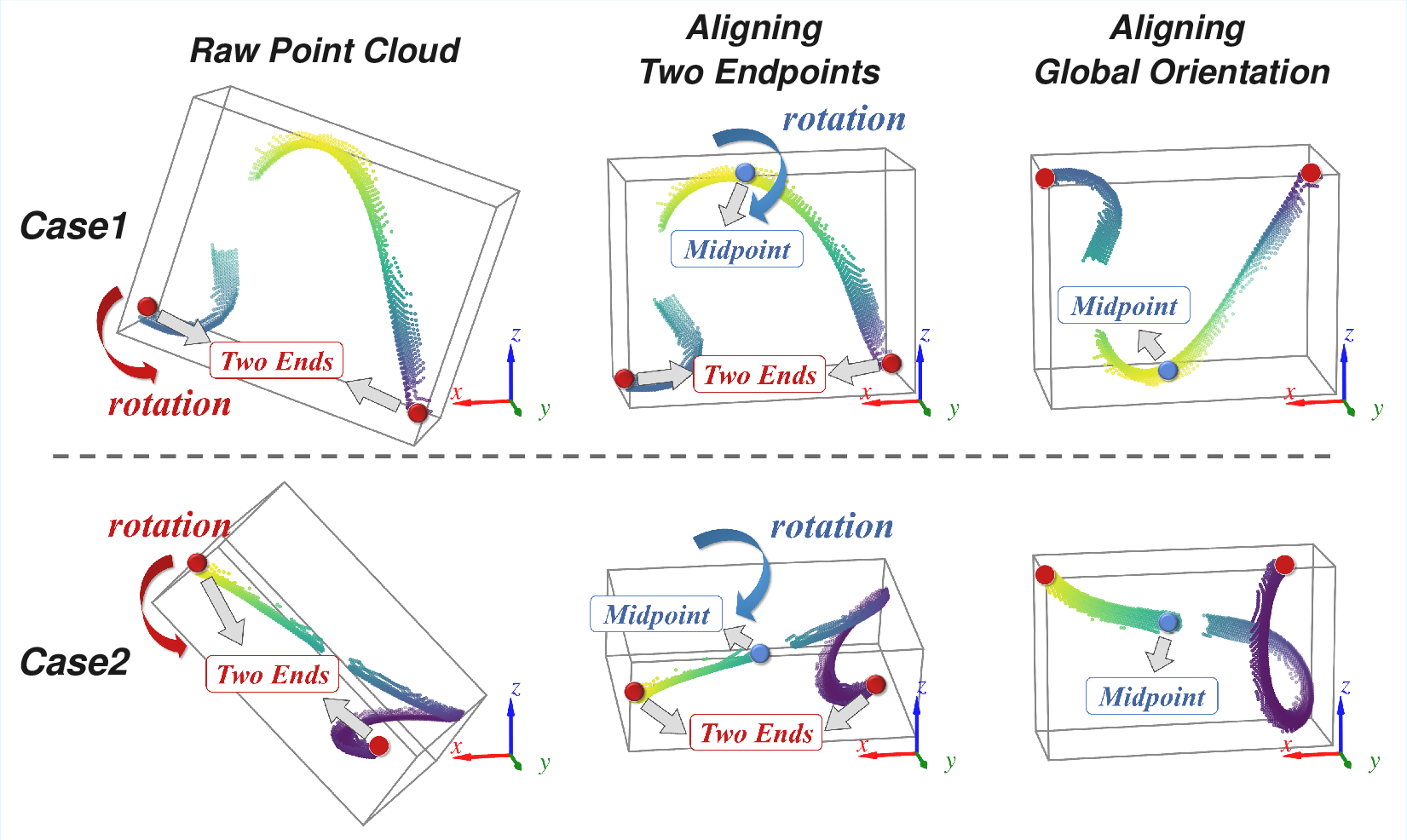

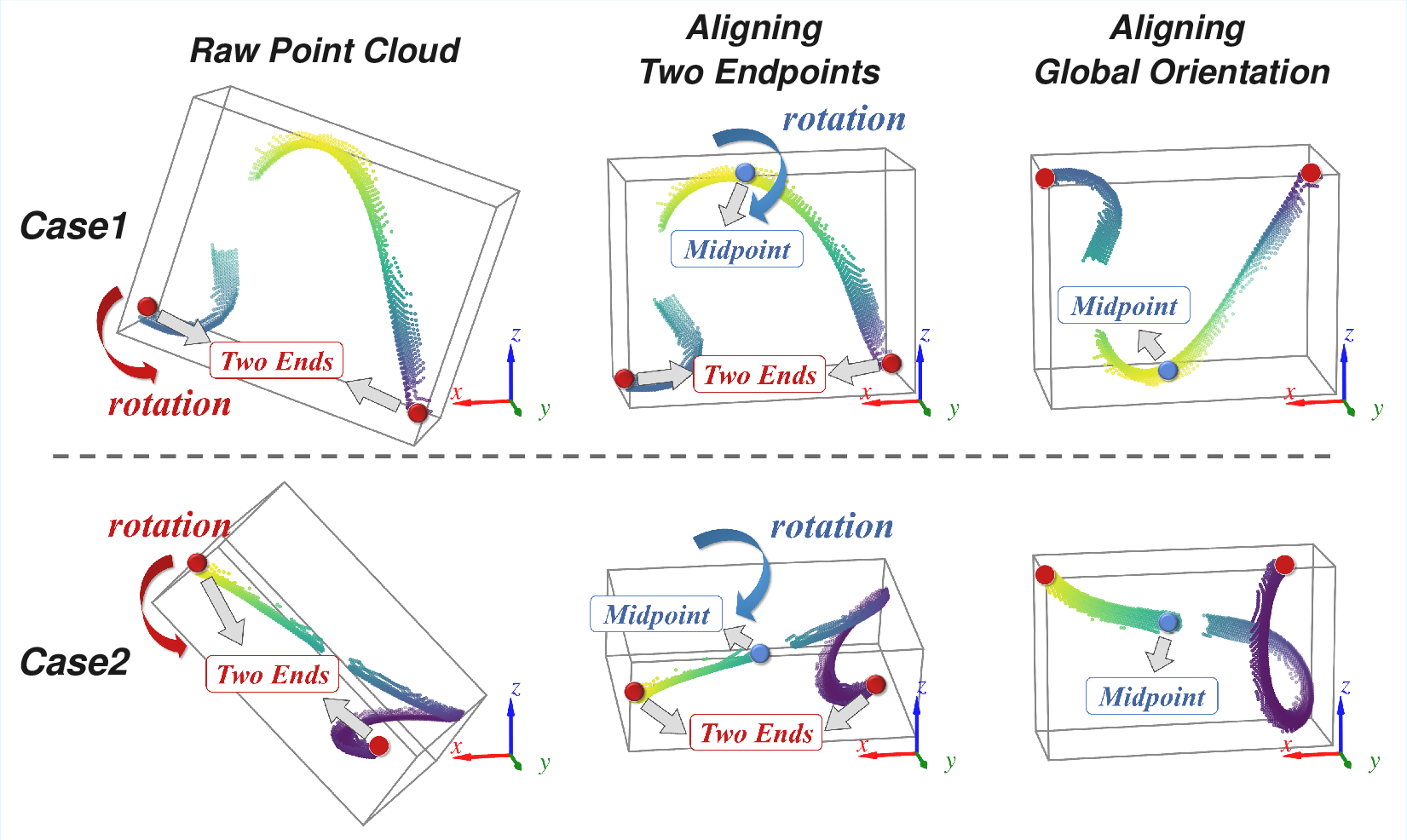

A canonical normalization scheme aligns endpoints and midpoints of point clouds (Figure 6), controlling for arbitrary orientation and scale variability. This standardization increases robustness and generalization, especially in sim-to-real transfer.

Figure 6: Canonical normalization process: endpoints are aligned to coordinate axes and midpoints, followed by scale adjustment and centering.
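One plausible reading of this normalization is sketched below: center the cloud on the midpoint of the two endpoints, rotate so the endpoint-to-endpoint direction lies along the x-axis, and scale the cloud into the unit ball. The exact axes, scale convention, and how endpoints are obtained are not given in this summary, so all of these choices, along with the function names, are assumptions. The sketch also assumes the two endpoints are distinct.

```python
import numpy as np

def rotation_to_x(u):
    """Rotation matrix that maps direction u onto the +x axis (Rodrigues form)."""
    u = u / np.linalg.norm(u)
    ex = np.array([1.0, 0.0, 0.0])
    c = float(u @ ex)
    if np.isclose(c, -1.0):
        # u points along -x: rotate 180 degrees about z.
        return np.diag([-1.0, -1.0, 1.0])
    v = np.cross(u, ex)
    vx = np.array([[0.0, -v[2], v[1]],
                   [v[2], 0.0, -v[0]],
                   [-v[1], v[0], 0.0]])
    return np.eye(3) + vx + vx @ vx / (1.0 + c)

def canonicalize(points, end_a, end_b):
    """Map an (N, 3) cloud into an assumed canonical frame; also return the transform."""
    center = 0.5 * (end_a + end_b)           # midpoint of the endpoints becomes the origin
    R = rotation_to_x(end_b - end_a)         # endpoint axis becomes the x-axis
    rotated = (points - center) @ R.T
    scale = np.linalg.norm(rotated, axis=1).max()   # fit the cloud in the unit ball
    return rotated / scale, (center, R, scale)

def decanonicalize(nodes, params):
    """Invert canonicalize, e.g. to express predicted nodes in the camera frame."""
    center, R, scale = params
    return (nodes * scale) @ R + center
```

Returning the transform parameters alongside the normalized cloud lets predictions made in the canonical frame be mapped back to the original coordinates.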

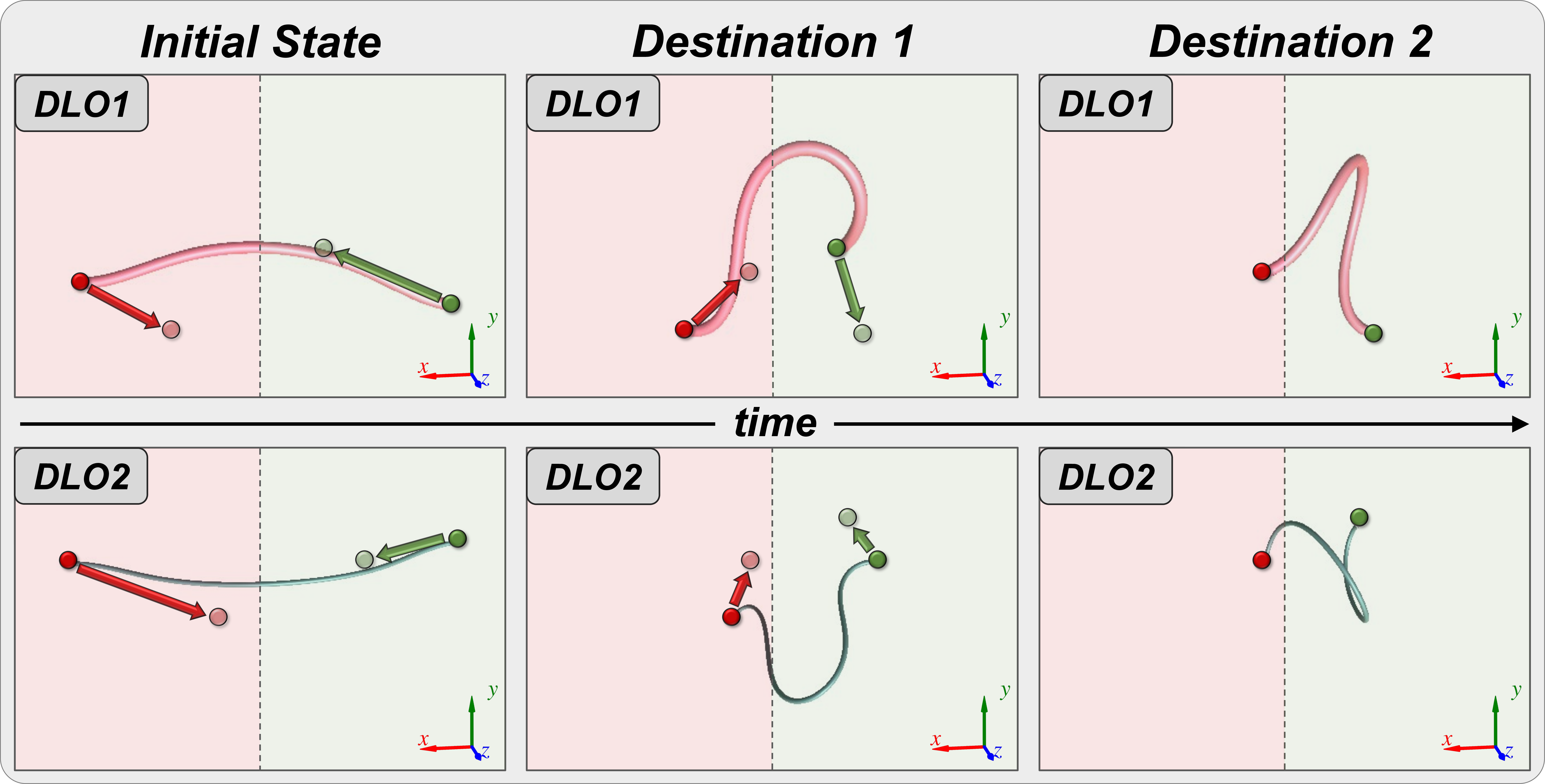

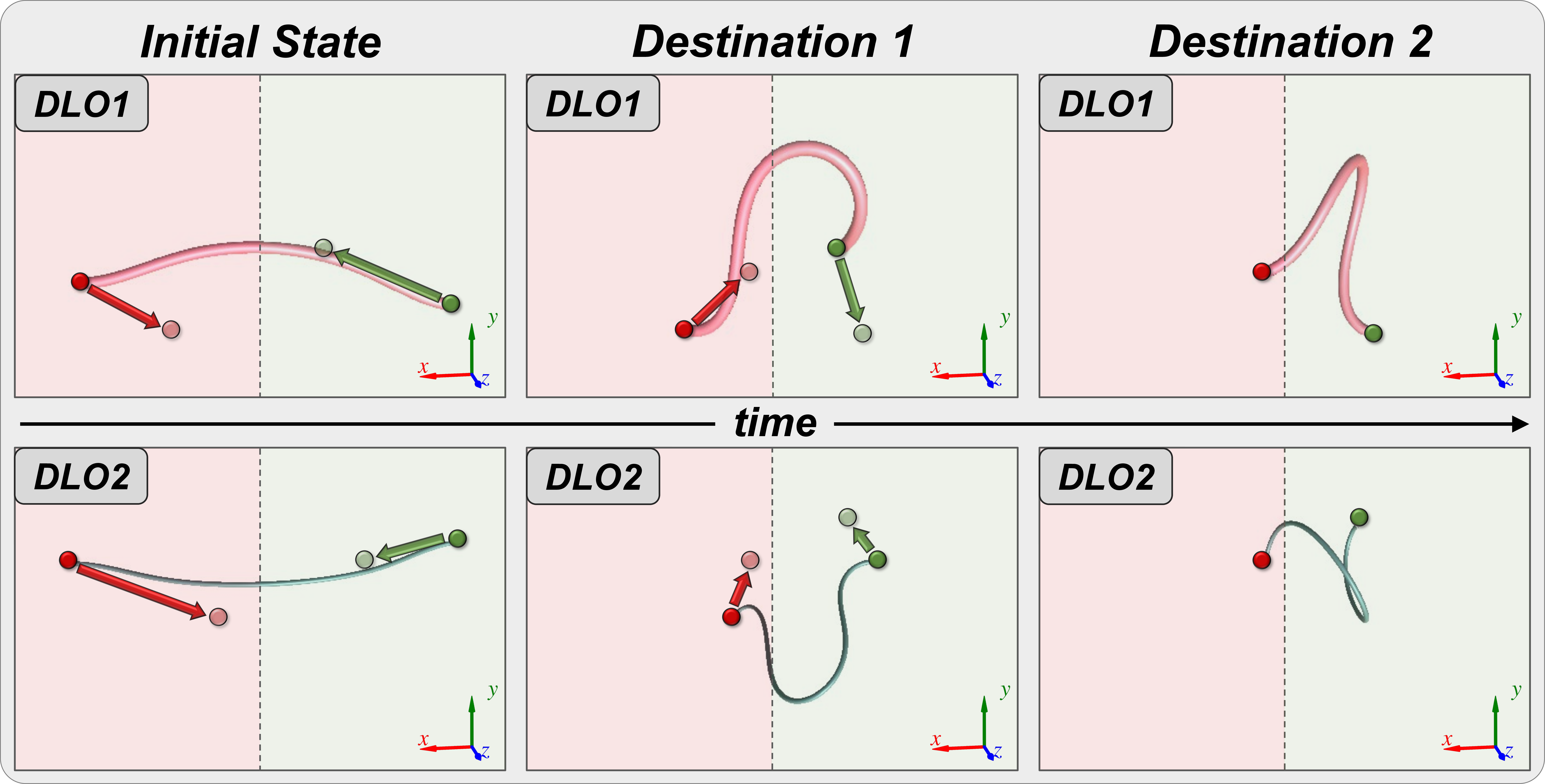

Training is conducted exclusively on a procedurally generated dataset (Figure 7) featuring diverse DLO physical properties, endpoint-driven randomized manipulation trajectories, and large-scale occlusion masking. Zero-shot sim-to-real generalization is demonstrated without any fine-tuning.

Figure 7: Synthetic data collection methodology: endpoints are manipulated through random targets and motions, enabling broad coverage of feasible DLO configurations.
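As a rough illustration of occlusion masking on synthetic data, the sketch below removes a contiguous stretch of the object from a simulated point cloud. It assumes the simulator provides a normalized arc-length parameter in [0, 1] for every point; that parameter, the single-window masking strategy, and the function name are assumptions, not the paper's documented procedure.

```python
import numpy as np

def mask_occlusion(points, arc_param, ratio, rng):
    """Drop the points whose arc-length parameter falls in a random window.

    points: (N, 3) simulated cloud, arc_param: (N,) values in [0, 1] giving each
    point's position along the object (assumed available from the simulator),
    ratio: fraction of the object's length to hide, rng: numpy Generator.
    Returns the visible points and the boolean keep-mask.
    """
    start = rng.uniform(0.0, 1.0 - ratio)            # window start along the object
    keep = (arc_param < start) | (arc_param >= start + ratio)
    return points[keep], keep
```

For example, calling `mask_occlusion(points, arc_param, 0.5, np.random.default_rng(0))` hides one contiguous half of the object's length, corresponding to the 50% occlusion level discussed in the experiments.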

Experimental Results

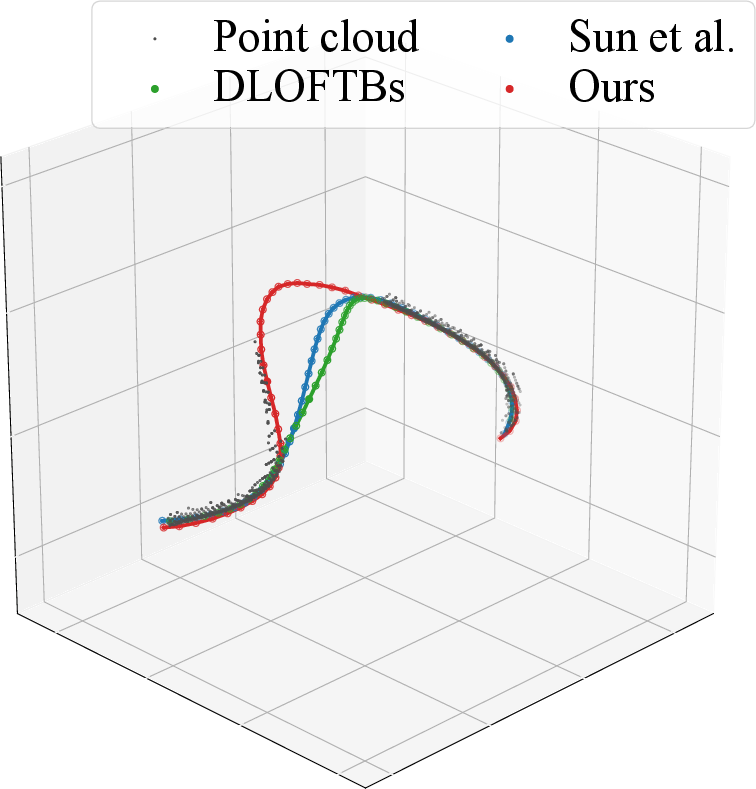

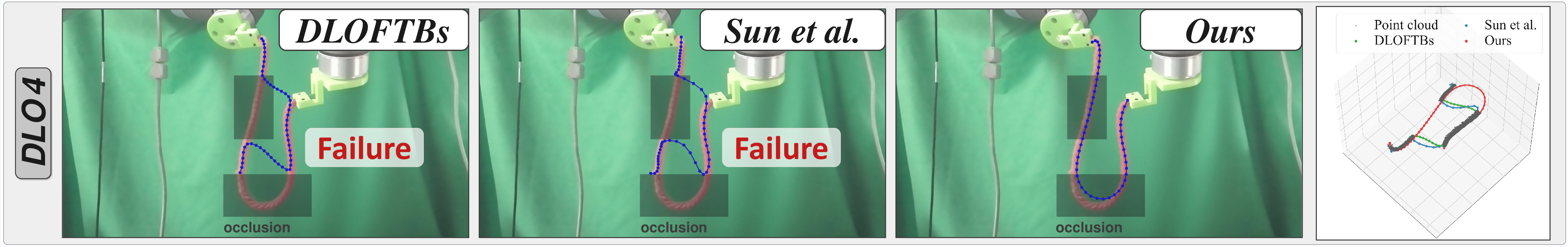

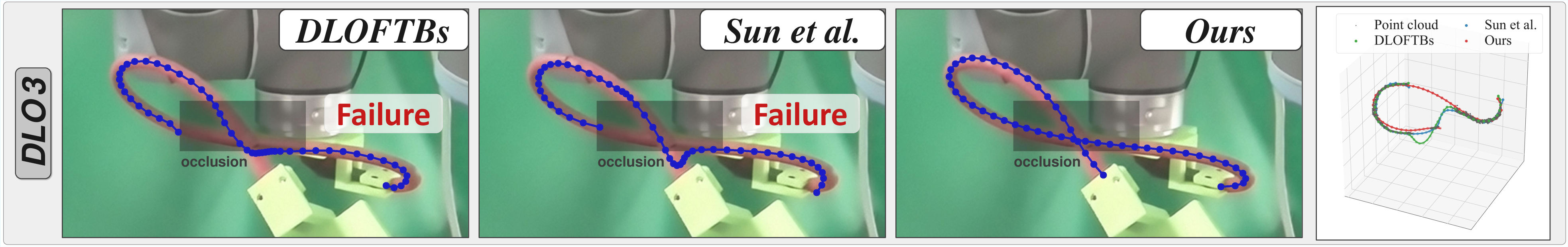

UniStateDLO outperforms baselines such as DLOFTBs, Sun et al., CDCPD2, and TrackDLO on the MPNE, PCN, and NSS metrics across synthetic and real data, including at occlusion levels of up to 50% (see the paper's main text for tabular data). Fusion via diffusion models surpasses MLP and registration-based approaches, especially in severe occlusion regimes.

Visualizations and Qualitative Analysis

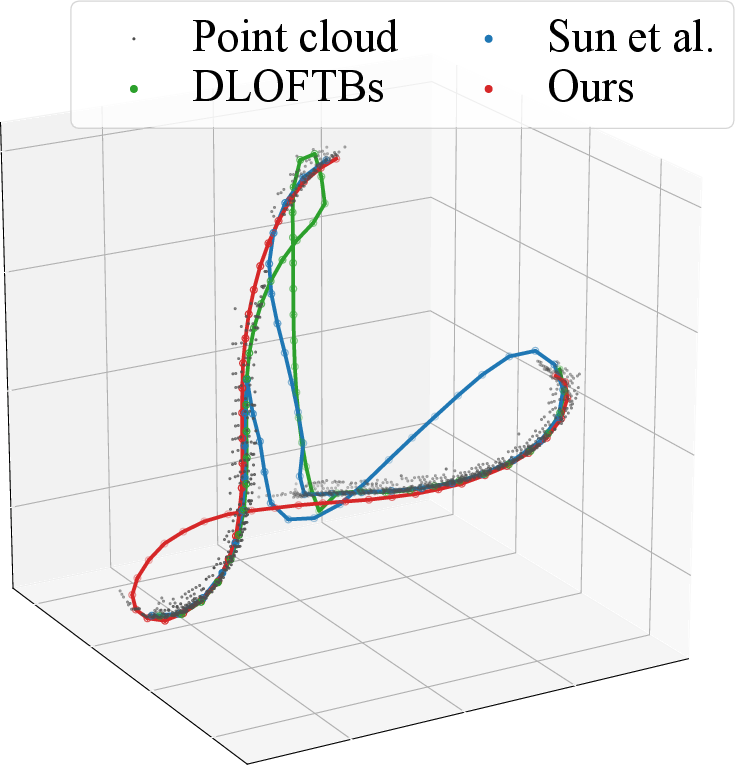

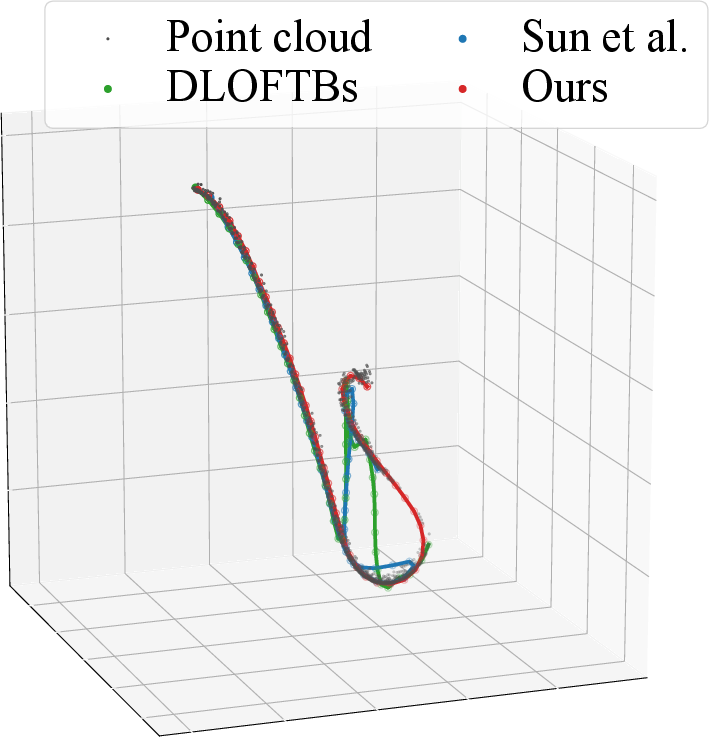

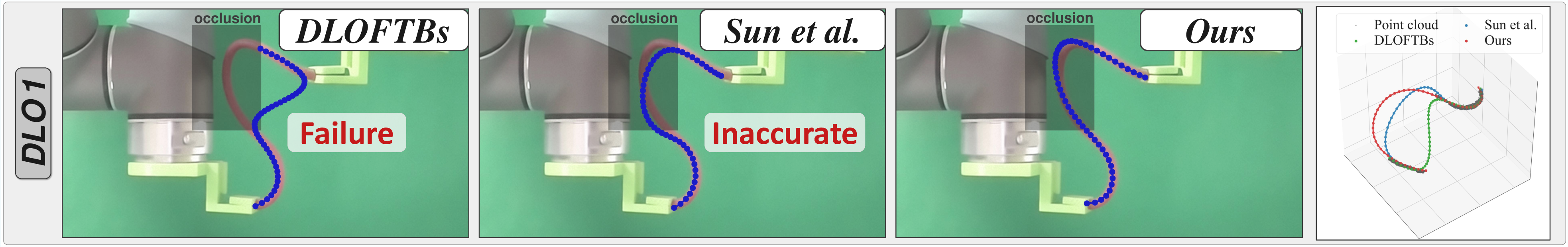

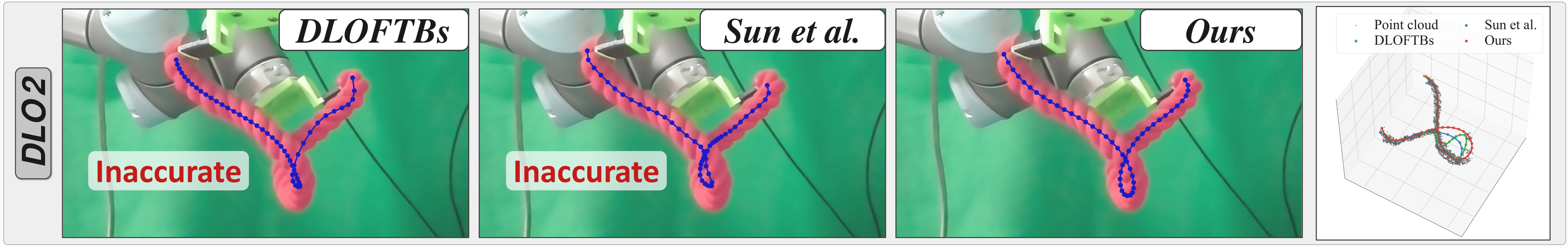

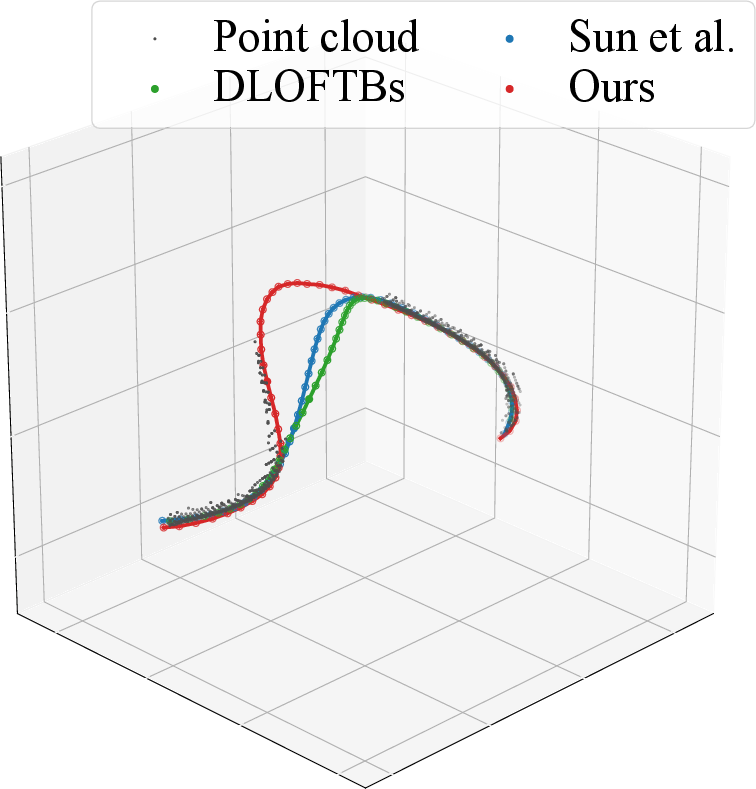

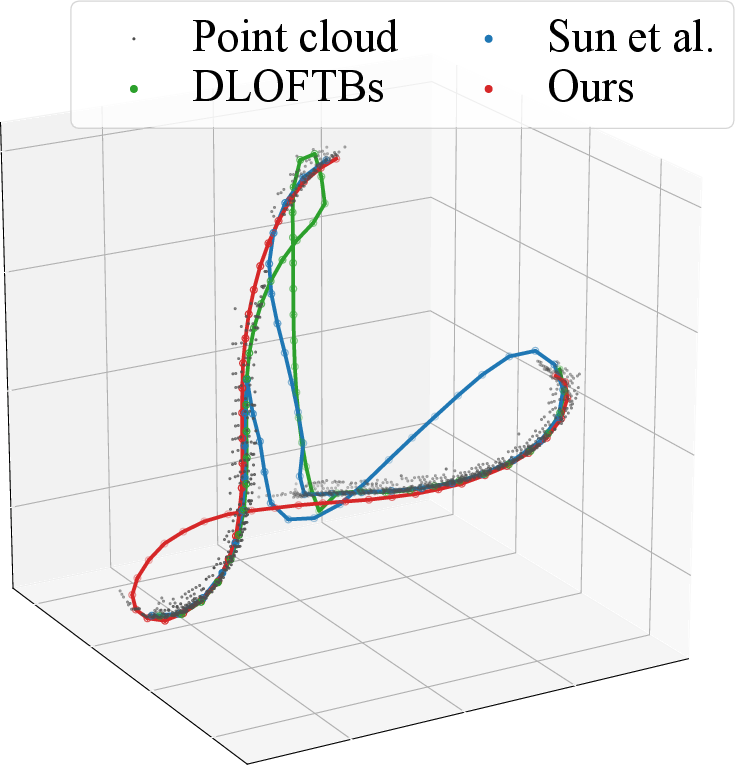

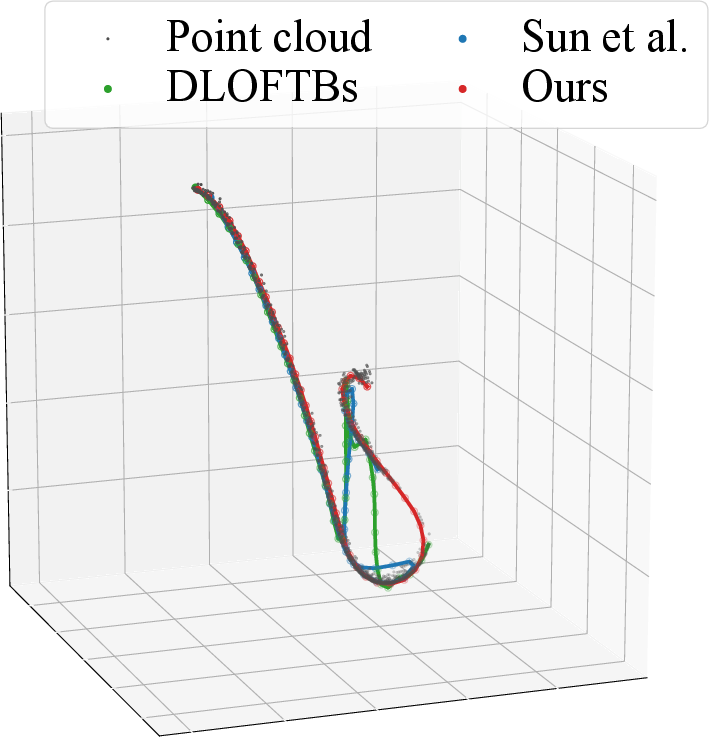

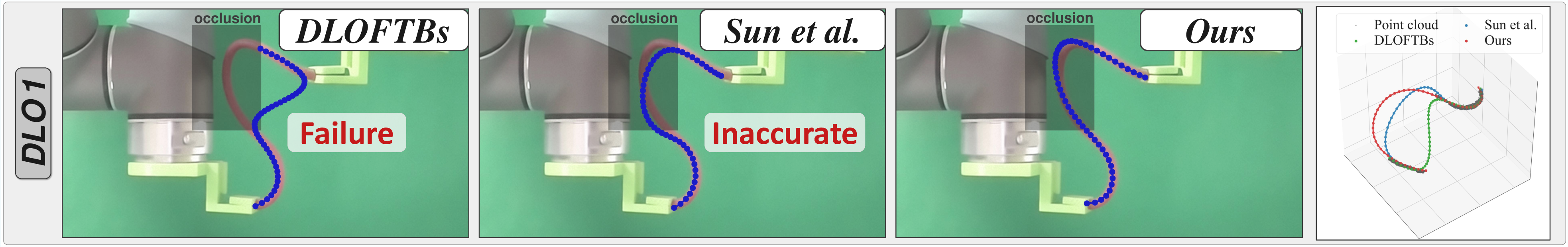

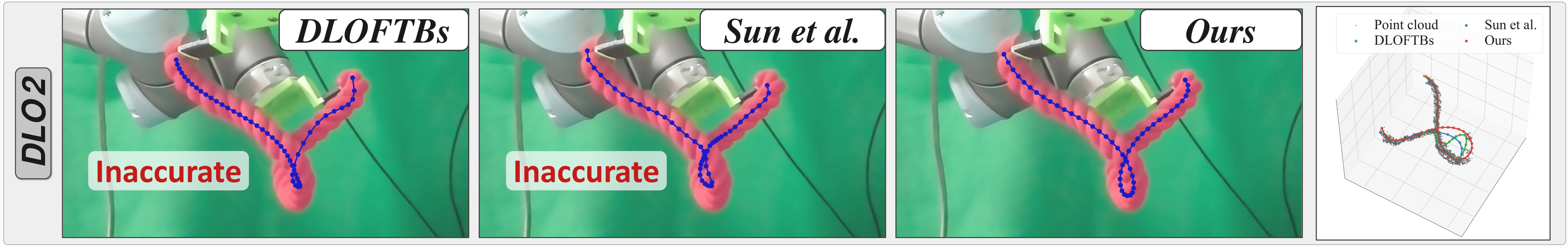

Figure 8: Comparative visualizations. UniStateDLO preserves both global structure and local correctness even under heavy occlusion, interpolating and connecting unobserved segments more reliably than the baselines.

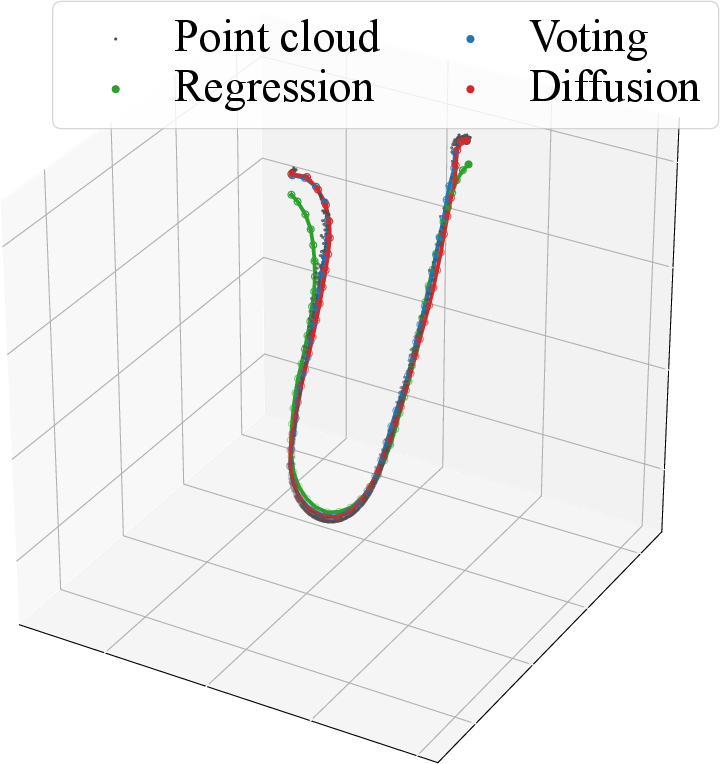

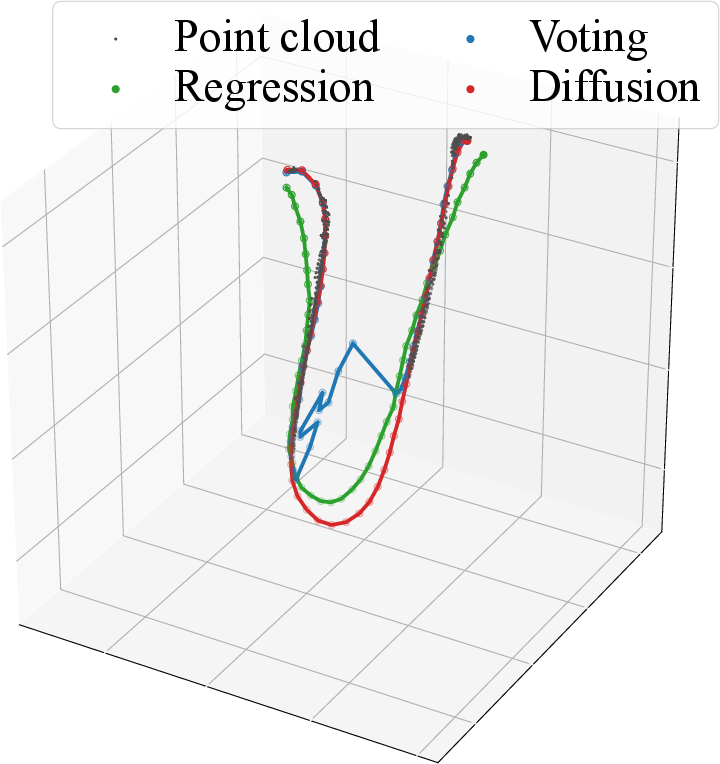

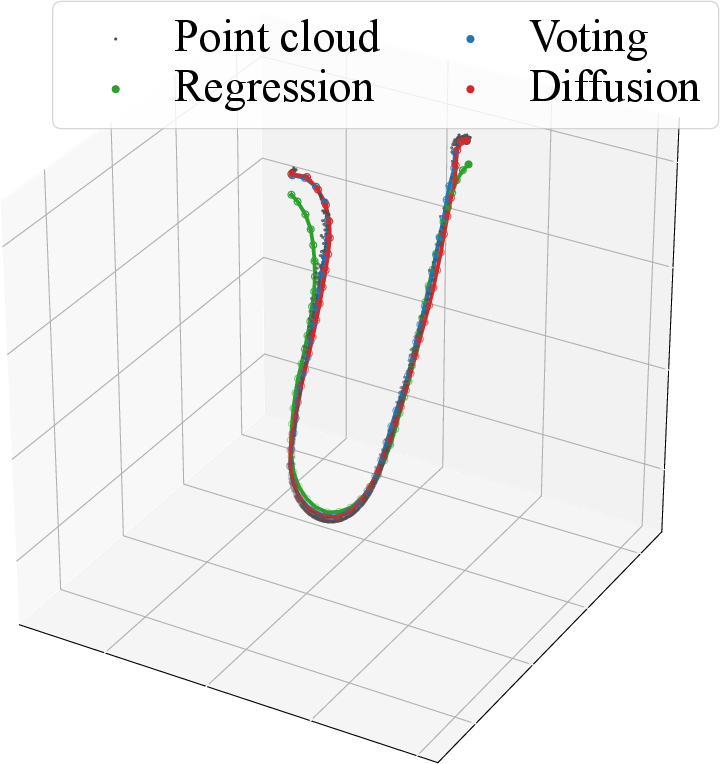

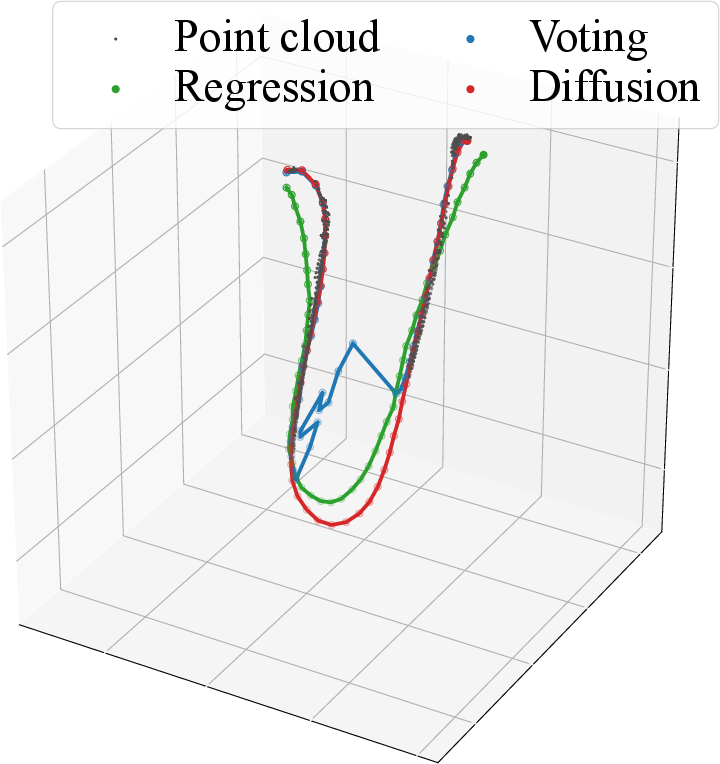

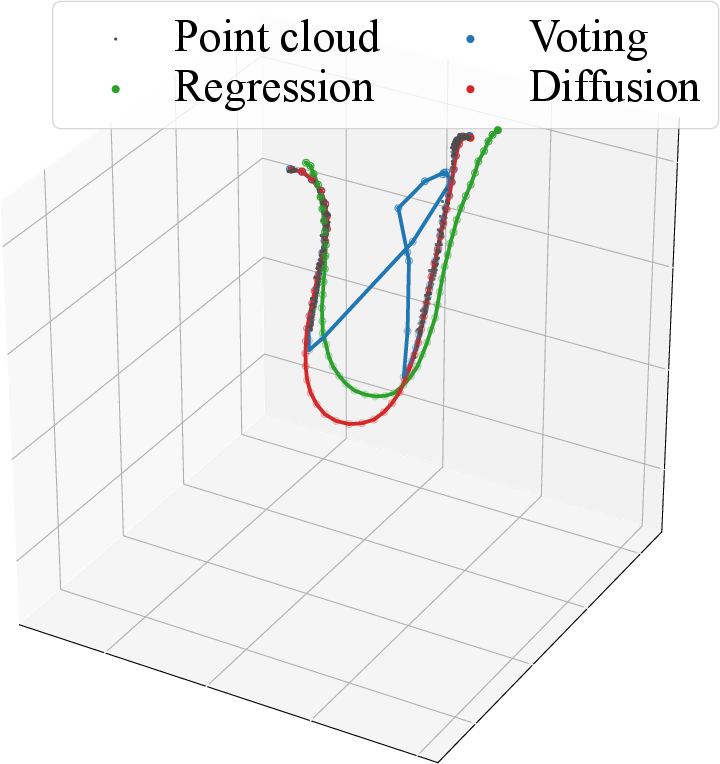

Figure 9: Node estimation at increasing occlusion: diffusion-based fusion corrects and interpolates the outputs of both the regression and voting branches to recover the full shape.

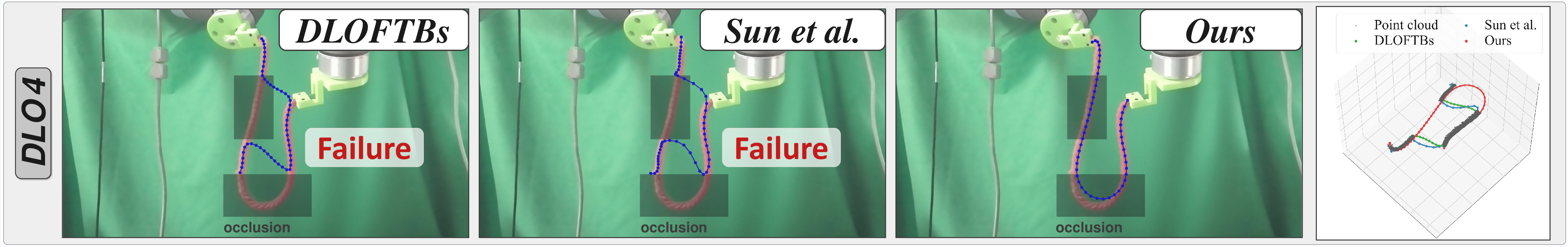

Figure 10: Robustness to occlusion and material variation: UniStateDLO generalizes from synthetic to real-world DLOs, maintaining high node accuracy.

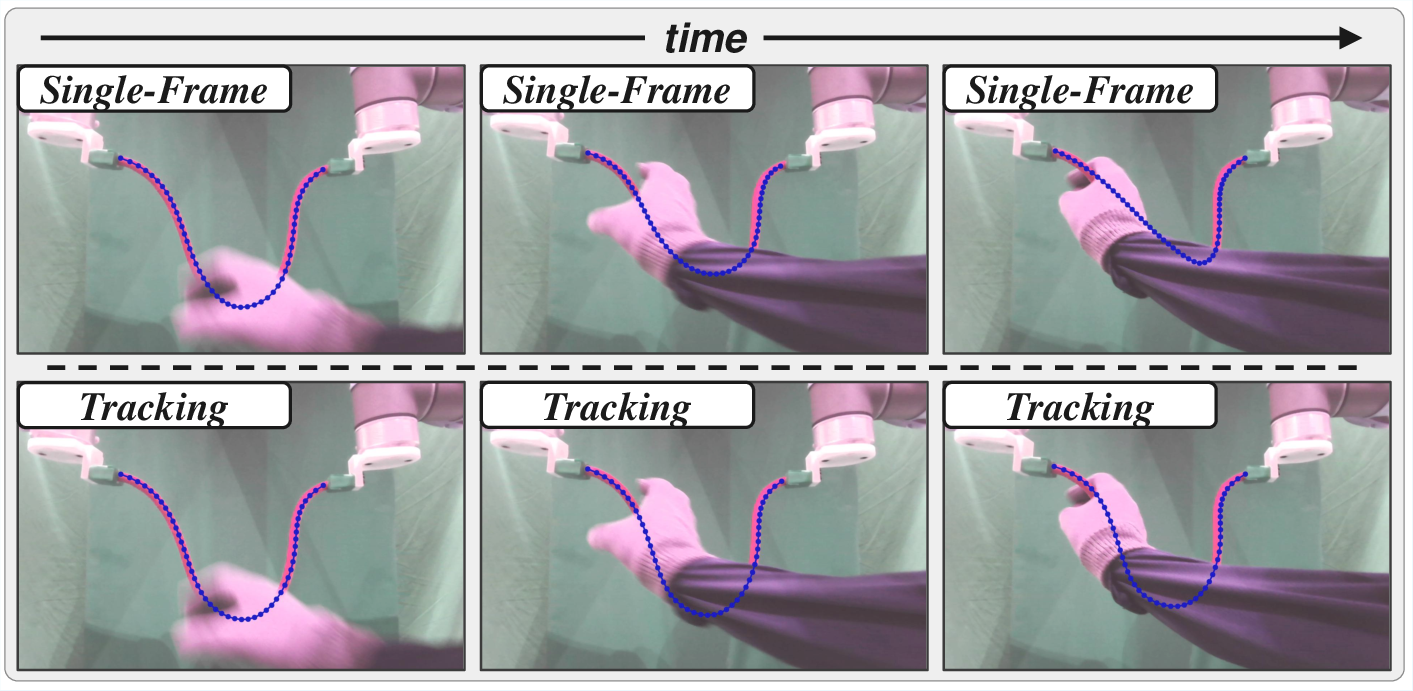

Figure 11: Temporal smoothness: cross-frame tracking preserves continuity, avoiding abrupt configuration changes seen in single-frame estimation methods.

Integration and Applications

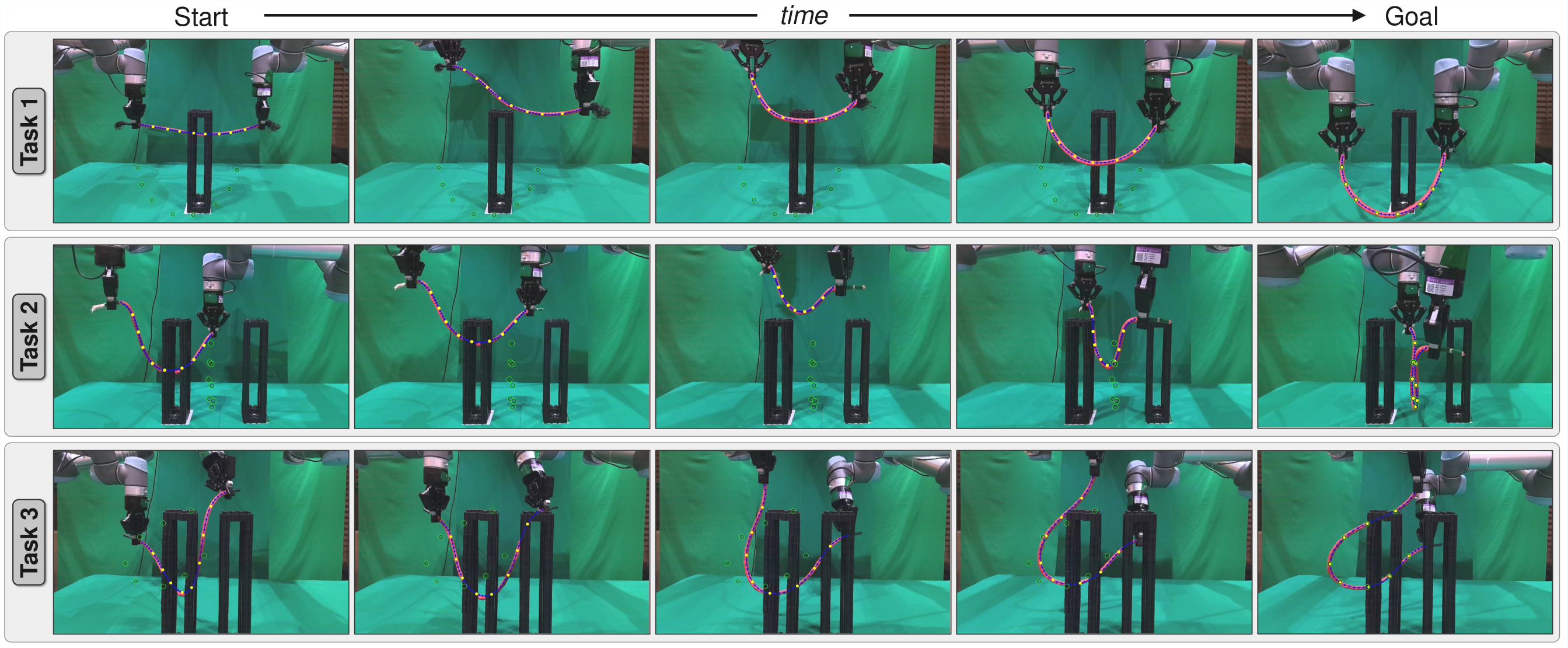

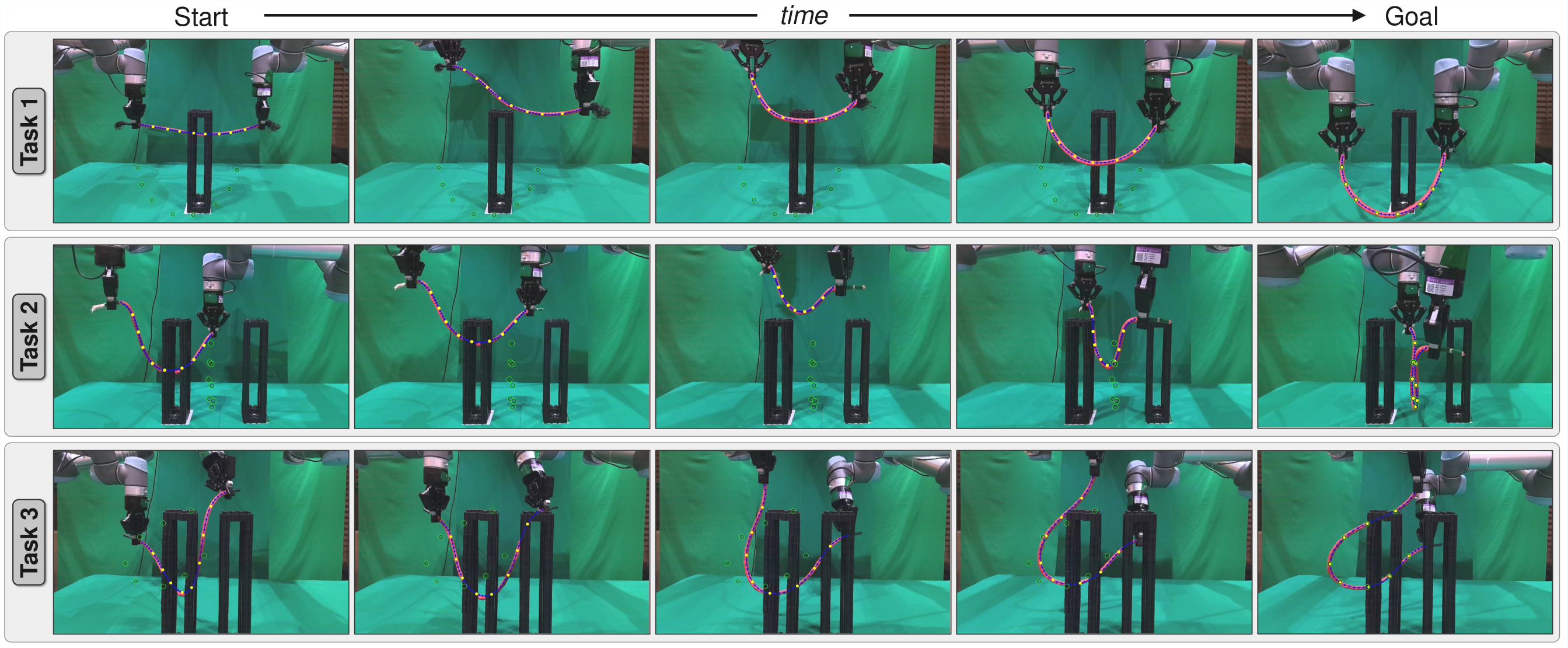

Integration with a dual-arm robot for closed-loop DLO manipulation is showcased (Figure 12), where UniStateDLO supports feedback control, obstacle avoidance, and long-horizon manipulation under dynamically changing occlusion patterns. Real-world tasks involve large deformations, continuous feedback, and endpoint occlusion regimes.

Figure 12: Demonstration of complex shape control tasks where UniStateDLO enables stable and precise feedback for constrained environments.

Limitations and Prospects

While UniStateDLO sets a new standard for DLO perception under occlusion, several limitations persist:

- Segmentation is assumed as input; real-world deployments in clutter require integration with advanced instance segmentation systems.

- Extremely soft/knotted DLO behaviors are outside the current simulation/training distribution.

- Endpoint occlusion is handled implicitly rather than reasoned about explicitly, which may limit resilience.

- Pipeline complexity could impede deployment; future work may streamline architecture and accelerate inference.

Theoretical implications include the value of generative modeling for high-dimensional, ambiguous state estimation in robot perception. Practical ramifications extend to broader deformable object manipulation settings, where zero-shot generalization and robust temporal tracking are critical.

Conclusion

UniStateDLO establishes a comprehensive, unified approach to deformable linear object perception under severe occlusion, combining dual-branch features with generative diffusion models for both initialization and tracking. Empirical results demonstrate high accuracy, robustness, and real-world viability for feedback-driven robotic manipulation in constrained environments. Future directions include segmentation integration, extension to highly deformable DLOs, uncertainty propagation, and system simplification. The framework offers a solid foundation for further robotic manipulation research.