- The paper introduces EcoSplat, a feed-forward 3D Gaussian Splatting method that gives explicit control over the number of output Gaussian primitives through a two-stage pipeline: pixel-aligned training followed by importance-aware finetuning.

- The pipeline pairs a ViT-based encoder with progressive learning and an importance-aware opacity loss. It compacts the output to as few as 5% of the pixel-aligned primitives without significant quality loss.

- Experiments show substantial PSNR, SSIM, and LPIPS gains over baselines under tight primitive budgets. Rendering runs at 1042 FPS with 78K primitives, which suits scalable real-time applications.

Efficiency-Controllable Feed-Forward 3D Gaussian Splatting: EcoSplat

Introduction

EcoSplat ("Efficiency-controllable Feed-forward 3D Gaussian Splatting from Multi-view Images") (2512.18692) is a feed-forward framework for dense-view 3D Gaussian Splatting (3DGS) that explicitly controls the number of output primitives at inference time. Conventional feed-forward 3DGS methods predict one pixel-aligned primitive per input pixel. The primitive count therefore grows with the number of views and becomes highly redundant in dense multi-view settings. EcoSplat addresses both this scalability problem and the lack of count control, using importance-aware learning and adaptive primitive compaction.

Framework Architecture

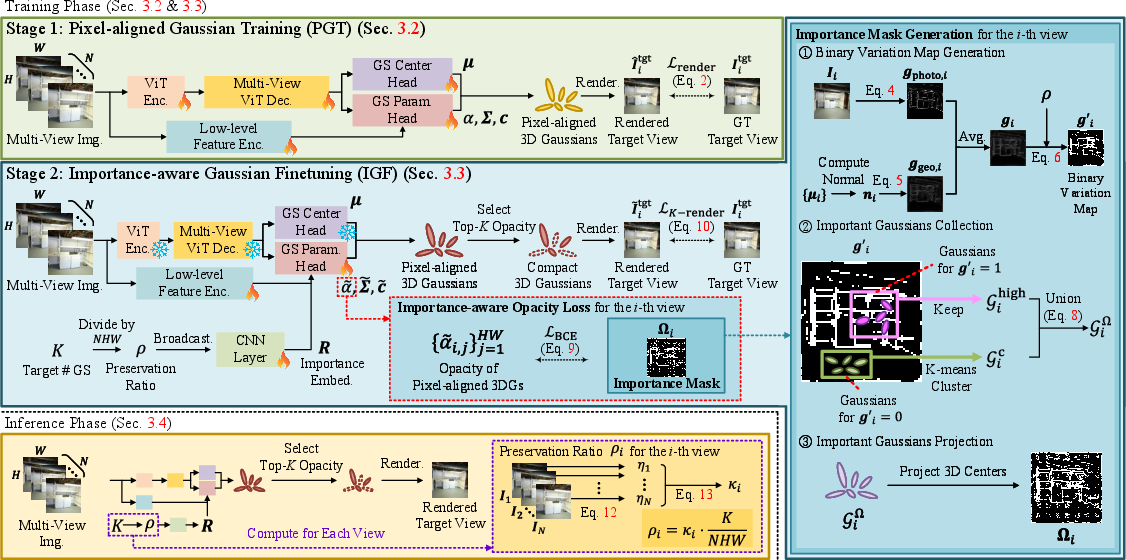

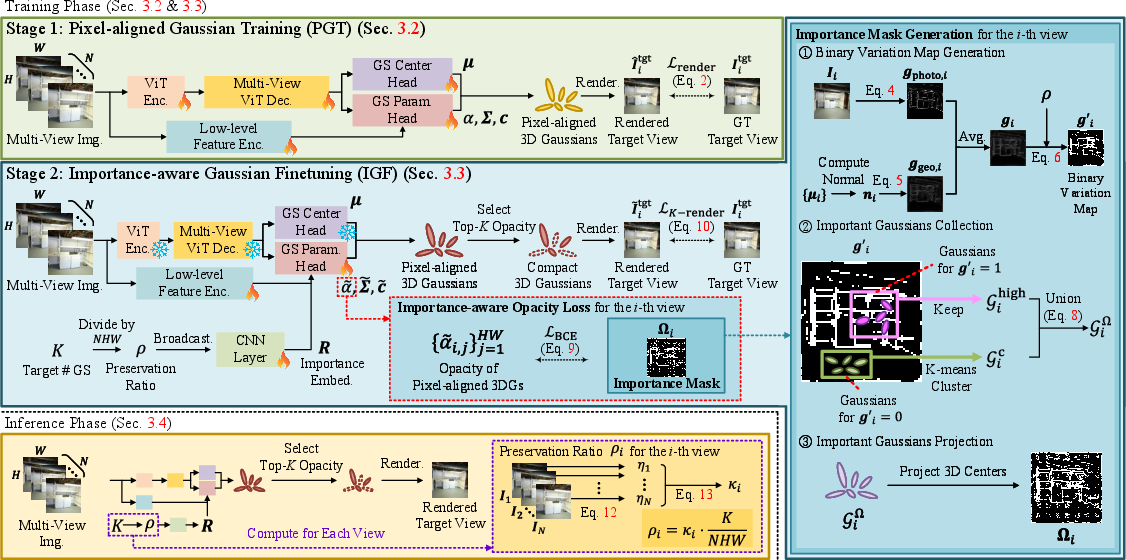

EcoSplat utilizes a two-stage pipeline:

- Pixel-aligned Gaussian Training (PGT): The first stage trains a ViT-based encoder-decoder to predict one 3D Gaussian primitive per pixel from the multi-view inputs. Each pixel of an input image is mapped to a parameterized Gaussian (center, opacity, covariance, color) using features aggregated across views.

- Importance-aware Gaussian Finetuning (IGF): The second stage injects an explicit target primitive count, K, conditioning the model to predict primitive opacities and parameters adaptively. An importance embedding derived from K is fused with Gaussian parameters, allowing the model to suppress the visibility (opacity) of less informative primitives, retaining only the K most significant Gaussians for rendering.
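The final selection step can be shown with a minimal sketch. It assumes that predicted opacity alone serves as the importance score, so keeping the K most significant Gaussians reduces to a top-K over opacities. The helper `top_k_indices` is hypothetical and does not come from the paper. In PGT the candidate set would hold one Gaussian per pixel, which gives V × H × W candidates for V views of H × W pixels.

```python
import heapq
from typing import List, Sequence


def top_k_indices(opacities: Sequence[float], k: int) -> List[int]:
    """Hypothetical helper: indices of the k highest-opacity Gaussians.

    Assumes opacity is the importance score. Indices come back in ascending
    order, so the survivors keep their original (pixel) order.
    """
    k = max(0, min(k, len(opacities)))  # clamp k to a valid range
    idx = heapq.nlargest(k, range(len(opacities)), key=lambda i: opacities[i])
    return sorted(idx)


# Five candidate Gaussians, budget K = 3.
print(top_k_indices([0.9, 0.05, 0.7, 0.01, 0.6], 3))
```

Returning sorted indices makes it easy to gather the other parameters (center, covariance, color) of the surviving Gaussians from the same arrays.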

Figure 1: Overview of EcoSplat, illustrating training via pixel-aligned Gaussian prediction and efficiency-controlled importance ranking for target primitive counts.

The IGF stage adds an importance-aware opacity loss, L_io, and a progressive learning strategy (PLGC) that keeps compaction robust across a wide range of K values. K is an input to the model rather than a fixed design choice. A single trained model can therefore produce different levels of compression at inference, which enables resource-adaptive deployment on client devices.
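The summary does not give the formula for L_io. One possible form, offered only as an assumption, supervises opacities with a binary cross-entropy against a keep mask. The mask marks which Gaussians should survive under budget K, for example the top-K entries of a pseudo ground-truth importance map. The name `importance_opacity_loss` is hypothetical.

```python
import math
from typing import Sequence


def importance_opacity_loss(opacities: Sequence[float],
                            keep_mask: Sequence[int],
                            eps: float = 1e-6) -> float:
    """Assumed form of L_io: mean binary cross-entropy that pushes kept
    Gaussians (mask 1) toward opacity 1 and suppressed ones (mask 0) toward 0.
    This is an illustration, not the paper's definition."""
    assert len(opacities) == len(keep_mask)
    if not opacities:
        return 0.0
    total = 0.0
    for o, m in zip(opacities, keep_mask):
        o = min(max(o, eps), 1.0 - eps)  # clamp to avoid log(0)
        total += -(m * math.log(o) + (1 - m) * math.log(1.0 - o))
    return total / len(opacities)
```

Under this assumption, the loss is near zero when the opacities already match the mask and grows sharply when a Gaussian meant to be kept is predicted transparent, or the reverse.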

Importance Analysis and Loss Design

EcoSplat derives pseudo ground-truth importance masks for each view by combining photometric and geometric gradients. The masks mark detail-rich regions whose Gaussians should be preserved and low-variation regions that can be covered compactly by fewer, redundant-free Gaussians. Using these masks to supervise opacity prediction ties the retained 3D Gaussians closely to how scene complexity is distributed.
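The following is a minimal sketch of such a mask. It assumes finite-difference gradients on a grayscale image (photometric) and a depth map (geometric), a hypothetical mixing weight `alpha`, and a top-K threshold. The paper's exact gradient operators, normalization, and weighting may differ, and both function names are illustrative.

```python
from typing import List

Grid = List[List[float]]


def grad_magnitude(img: Grid) -> Grid:
    """Forward-difference gradient magnitude; the last row/column use zero difference."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            dx = img[y][x + 1] - img[y][x] if x + 1 < w else 0.0
            dy = img[y + 1][x] - img[y][x] if y + 1 < h else 0.0
            out[y][x] = (dx * dx + dy * dy) ** 0.5
    return out


def importance_mask(gray: Grid, depth: Grid, k: int, alpha: float = 0.5) -> List[List[int]]:
    """Hypothetical pseudo-GT mask: score = alpha * photometric gradient
    + (1 - alpha) * geometric gradient, then mark the k highest-scoring pixels."""
    g_photo, g_geo = grad_magnitude(gray), grad_magnitude(depth)
    h, w = len(gray), len(gray[0])
    scores = [(alpha * g_photo[y][x] + (1 - alpha) * g_geo[y][x], y, x)
              for y in range(h) for x in range(w)]
    scores.sort(key=lambda s: s[0], reverse=True)  # stable: ties keep row-major order
    mask = [[0] * w for _ in range(h)]
    for _, y, x in scores[:k]:
        mask[y][x] = 1
    return mask


# A vertical intensity edge on flat depth: the pixels just left of the edge are marked.
gray = [[0.0, 0.0, 1.0], [0.0, 0.0, 1.0], [0.0, 0.0, 1.0]]
depth = [[0.0] * 3 for _ in range(3)]
print(importance_mask(gray, depth, k=3))
```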

PLGC progressively anneals the K sampling range during training, enabling stable adaptation and effective compaction from the full primitive set down to highly compact representations (<5% of initial primitives) without catastrophic degradation.
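Annealing the K sampling range could work as follows, sketched under assumptions. The lower bound of the sampled keep ratio moves linearly from 1.0 to a floor `r_final`, set here to 0.05 to match the roughly 5% regime. Each training step then draws K uniformly from the current range. The linear schedule, uniform sampling, and function names are all hypothetical.

```python
import random
from typing import Tuple


def k_range(step: int, anneal_steps: int, r_final: float = 0.05) -> Tuple[float, float]:
    """Hypothetical schedule: widen the keep-ratio range from [1.0, 1.0]
    to [r_final, 1.0] linearly over anneal_steps."""
    t = min(max(step / max(anneal_steps, 1), 0.0), 1.0)
    r_min = 1.0 - t * (1.0 - r_final)
    return r_min, 1.0


def sample_k(step: int, anneal_steps: int, n_total: int,
             rng: random.Random, r_final: float = 0.05) -> int:
    """Draw a target primitive count K from the current ratio range."""
    lo, hi = k_range(step, anneal_steps, r_final)
    ratio = rng.uniform(lo, hi)
    return max(1, round(ratio * n_total))


rng = random.Random(0)
for step in (0, 500, 1000):
    print(step, k_range(step, 1000), sample_k(step, 1000, 10_000, rng))
```

Early steps train only near the full primitive set. Later steps expose the model to increasingly aggressive budgets, which matches the stated goal of stable adaptation before heavy compaction.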

Inference and Adaptive Primitive Allocation

During inference, EcoSplat divides the primitive budget among the input views according to their high-frequency content. A softmax over per-view high-frequency scores assigns more primitives to views with more detail, maximizing rendering quality under a global primitive budget.
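The allocation rule can be sketched under several assumptions. The high-frequency score is taken as the mean absolute Laplacian, a softmax temperature `tau` is introduced, and largest-remainder rounding makes the per-view counts sum exactly to the global budget. None of these specifics come from the paper, and the function names are hypothetical.

```python
import math
from typing import List

Grid = List[List[float]]


def high_freq_score(img: Grid) -> float:
    """Assumed score: mean absolute 4-neighbour Laplacian over interior pixels."""
    h, w = len(img), len(img[0])
    total, count = 0.0, 0
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            lap = (img[y - 1][x] + img[y + 1][x] + img[y][x - 1] + img[y][x + 1]
                   - 4.0 * img[y][x])
            total += abs(lap)
            count += 1
    return total / count if count else 0.0


def allocate_budget(views: List[Grid], budget: int, tau: float = 1.0) -> List[int]:
    """Split a global primitive budget across views by a softmax of their scores."""
    scores = [high_freq_score(v) / tau for v in views]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]  # subtract max for numerical stability
    z = sum(exps)
    shares = [budget * e / z for e in exps]
    alloc = [math.floor(s) for s in shares]
    # Hand leftover primitives to the views with the largest fractional parts.
    leftover = budget - sum(alloc)
    order = sorted(range(len(views)), key=lambda i: shares[i] - alloc[i], reverse=True)
    for i in order[:leftover]:
        alloc[i] += 1
    return alloc


flat = [[0.0] * 4 for _ in range(4)]
checker = [[float((x + y) % 2) for x in range(4)] for y in range(4)]
print(allocate_budget([flat, checker], 100))
```

With one flat view and one checkerboard view, almost the whole budget goes to the textured view. Lowering `tau` sharpens that preference, and raising it moves the split toward uniform.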

Experimental Results

EcoSplat demonstrates substantial improvements over prior feed-forward and post-optimization-based 3DGS methods under aggressive primitive constraints:

- At 5% of pixel-aligned primitives, EcoSplat achieves PSNR of 24.72 dB, SSIM of 0.822, and LPIPS of 0.183, outperforming all baselines by wide margins (Table 1).

- The framework maintains high fidelity with extremely compact outputs, in regimes where existing methods collapse or show severe artifacts.

- EcoSplat renders at 1042 FPS with only 78K primitives. This is roughly an order of magnitude faster and more storage-efficient than the other feed-forward models tested.
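The budget and speed figures relate through simple arithmetic. Consider V input views of H × W pixels; V, H, W, and ρ are notation introduced here rather than taken from the paper. A pixel-aligned model predicts N primitives, and a keep ratio ρ gives the budget K. The 1042 FPS figure corresponds to a per-frame time just under 1 ms:

```latex
\begin{aligned}
N &= V \cdot H \cdot W, \qquad K = \rho N \quad (\rho = 0.05 \text{ for the 5\% budget}) \\
t_{\text{frame}} &= \frac{1}{1042}\,\mathrm{s} \approx 0.96\,\mathrm{ms} \\
78 \times 10^{3} \times 1042 &\approx 8.1 \times 10^{7}\ \text{Gaussians rendered per second}
\end{aligned}
```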

These results support EcoSplat's central claim: explicit, reliable control of the primitive count with a favorable rendering quality-efficiency trade-off across the whole budget range. Cross-domain evaluation on ACID further demonstrates generalization. There, EcoSplat matches or surpasses models that use far more primitives, even with a drastically reduced primitive set.

Ablation and Design Validation

Ablation studies confirm that both PGT and IGF are necessary. Omitting importance-aware finetuning causes a catastrophic quality collapse, and removing PLGC or the opacity loss leads to brittle or suboptimal compaction.

(Figure 2)

Figure 2: Visual results of ablation highlighting the impact of the PGT stage, importance-aware opacity loss, and PLGC strategy on rendering quality under aggressive primitive constraints.

(Figure 3)

Figure 3: Distribution of predicted Gaussian opacities for compact and non-compact primitive budgets, showing effective suppression of redundancy and retention of informative components.

Implications and Future Directions

EcoSplat offers practical flexibility for deployments where compute, memory, or latency constraints dictate a variable representation size. It paves the way for streamable 3DGS on heterogeneous devices, improved real-time NVS experiences, and scalable remote rendering.

On the theoretical front, EcoSplat’s approach demonstrates the advantage of learned, importance-aware compaction strategies over ad-hoc pruning and post-hoc compression pipelines.

Future work could extend the framework to dynamic scene NVS, integrating temporal consistency learning and dynamic/static Gaussian partitioning, as suggested by recent advances in 4D scene reconstruction. The model could further benefit from self-supervised consistency constraints or optimized training for object deformation.

Conclusion

EcoSplat advances the field of feed-forward 3DGS by enabling direct, explicit, and continuous control over primitive efficiency, maintaining state-of-the-art rendering quality under stringent constraints. The principled integration of importance-aware learning and progressive compaction establishes a robust, scalable foundation for downstream rendering tasks in real-world VR, AR, and graphics applications.