- The paper introduces a statistical-causal framework that treats AI explanations as parameters inferred from computational traces with uncertainty quantification.

- It demonstrates that popular interpretability methods can yield false discoveries, reminiscent of dead salmon artifacts, due to non-identifiability.

- The study emphasizes rigorous hypothesis testing against randomized null models to establish reliability and clarity in AI interpretability.

The Statistical Challenges in AI Interpretability

Introduction

The paper "The Dead Salmons of AI Interpretability" (2512.18792) examines methodological weaknesses in AI interpretability, drawing a parallel to the dead salmon study in neuroscience, which exposed how uncorrected statistical analyses can produce spurious findings. It argues for a statistical reframing of interpretability methods to prevent analogous false discoveries, with particular attention to non-identifiability in interpretability queries. The study proposes a statistical-causal framework that treats explanations as parameters inferred from computational traces and calls for rigorous hypothesis testing against meaningful null models.

The Dead Salmon Analogy and Statistical Fragility

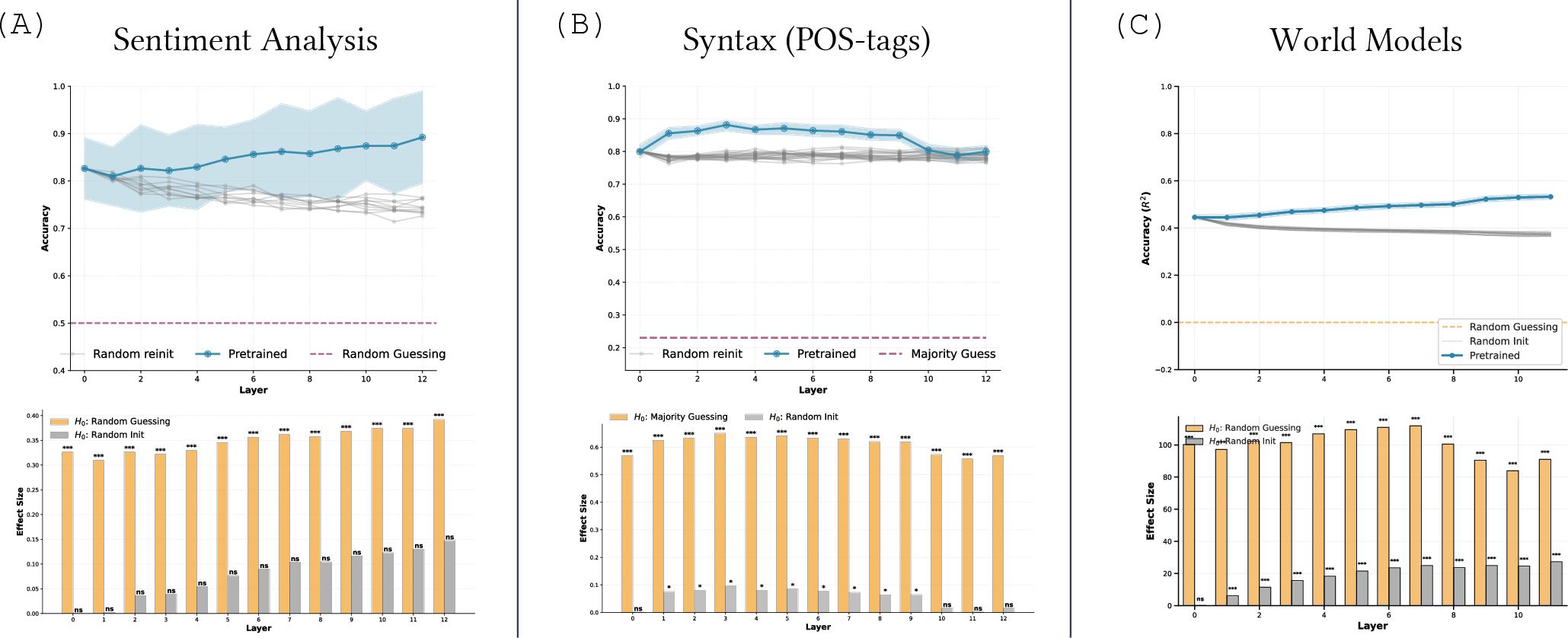

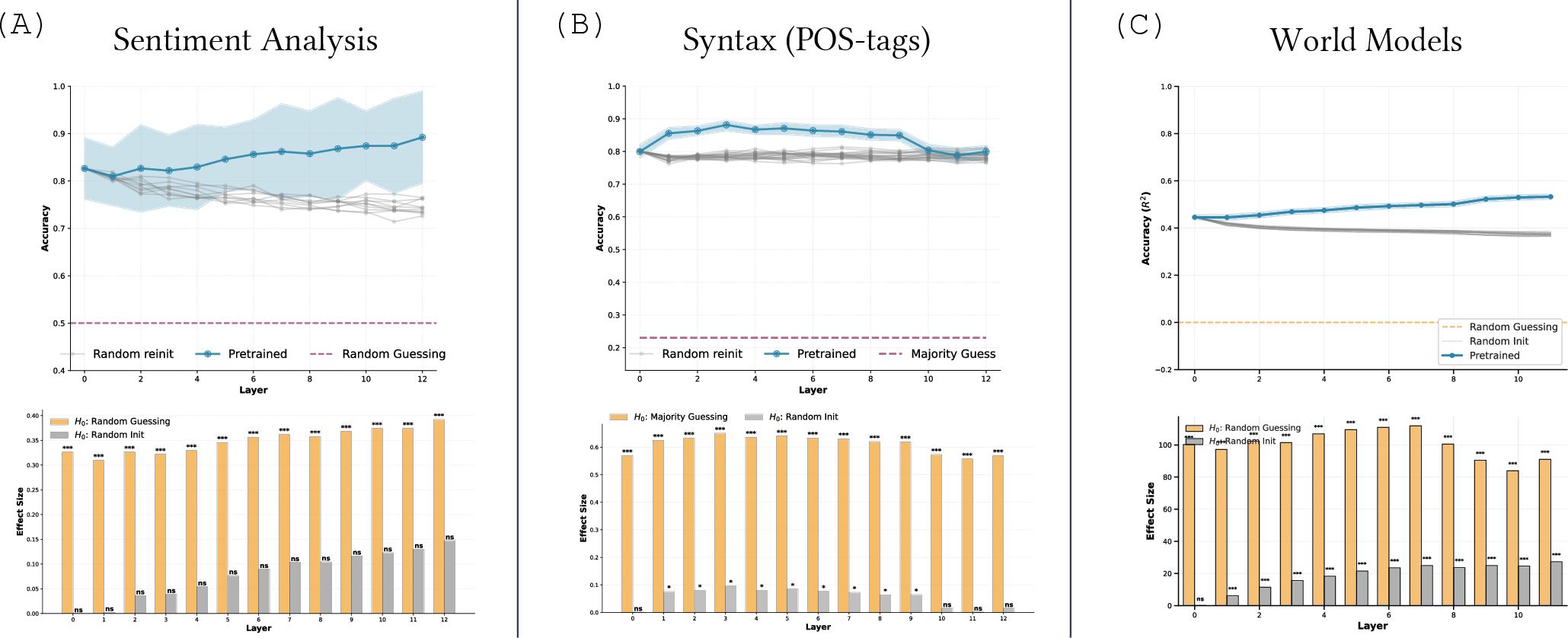

The dead salmon study from neuroscience, in which an fMRI analysis without multiple-comparison correction reported apparently significant brain activity in a dead fish, serves as an analogy for the pitfalls of AI interpretability: statistical missteps can yield plausible-looking but incorrect explanations. In AI, methods such as feature attribution, probing classifiers, and sparse autoencoders can produce explanations even for randomly initialized networks, which exposes the statistical fragility of interpretability. These artifacts are not mere curiosities but systemic issues that challenge the validity of interpretability approaches, especially in high-stakes applications where transparency and reliability are paramount.
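To make the random-network artifact concrete, here is a minimal sketch in which a linear probe decodes a label from the activations of a network that was never trained. Everything in it is an illustrative assumption rather than the paper's setup: the synthetic data, the one-layer `tanh` network, the least-squares probe, and all variable names.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic inputs and a simple binary label (hypothetical stand-in for a real task).
n, d, width = 2000, 10, 64
x = rng.normal(size=(n, d))
y = x[:, 0] > 0

# "Network": a single randomly initialized hidden layer that is never trained.
w_random = rng.normal(size=(d, width)) / np.sqrt(d)
hidden = np.tanh(x @ w_random)

# Linear probe: least squares on the first half, evaluated on the second half.
half = n // 2
design = np.c_[hidden, np.ones(n)]          # add a bias column
targets = np.where(y, 1.0, -1.0)
coef, *_ = np.linalg.lstsq(design[:half], targets[:half], rcond=None)
accuracy = float(np.mean((design[half:] @ coef > 0) == y[half:]))

print(f"probe accuracy on untrained features: {accuracy:.2f} (chance is about 0.50)")
```

The probe scores well above chance because random projections preserve much of the input information, not because the network learned anything. A high probe accuracy on its own is therefore weak evidence about what a trained model represents.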

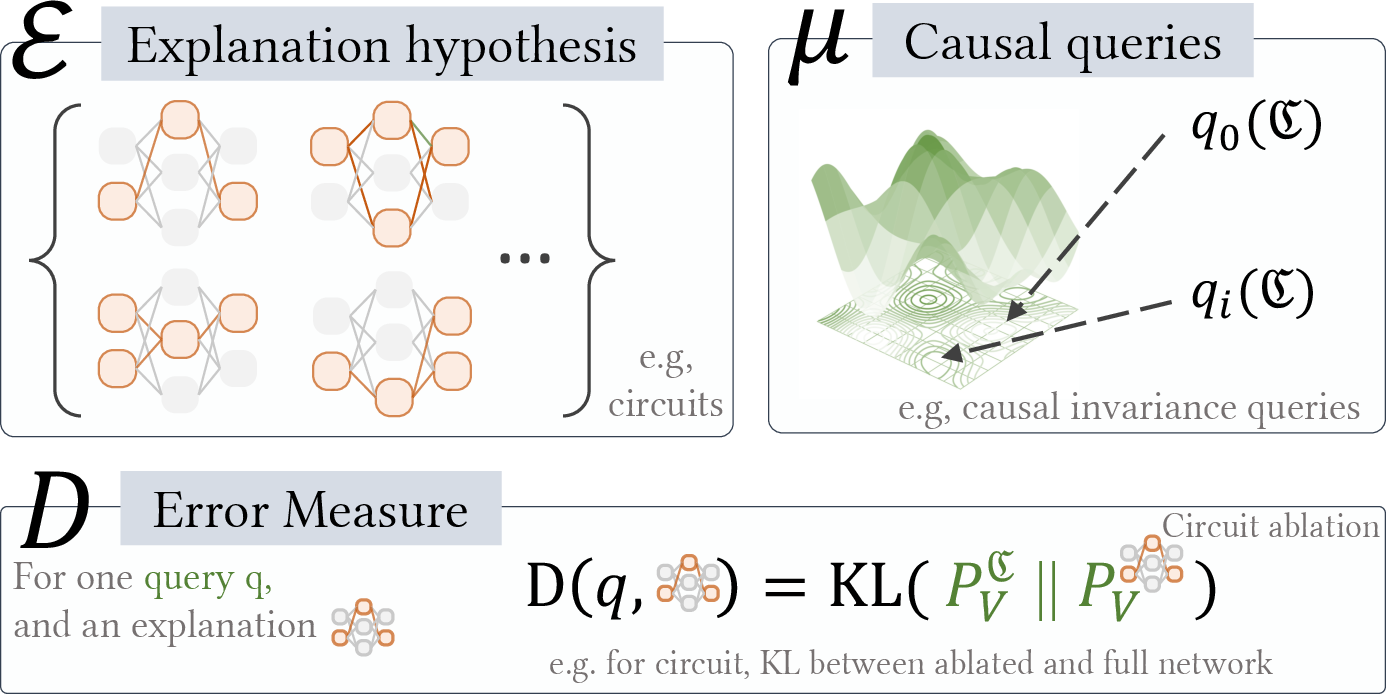

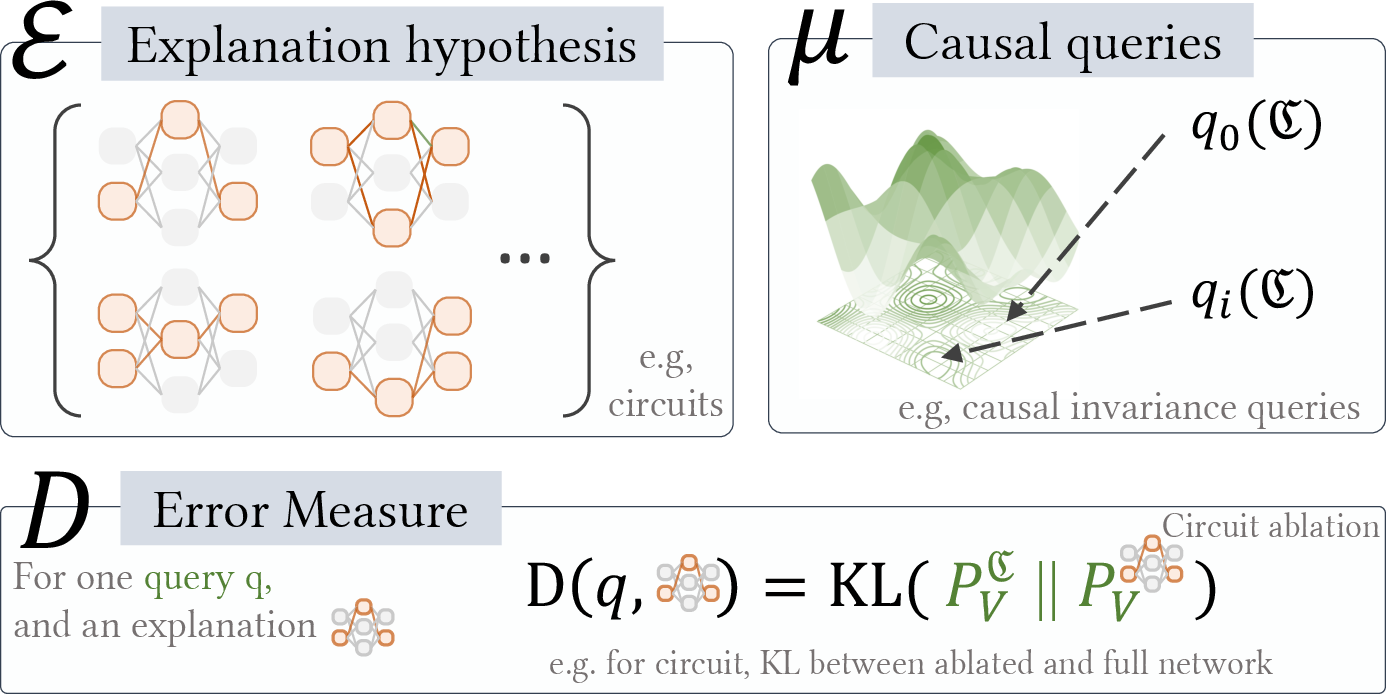

Figure 1: An interpretability task is defined by three elements: E....

Statistical-Causal Reframing

The authors propose a shift towards a statistical-causal inference perspective to address these issues. Rather than treating interpretability as the mere production of explanations, this reframing treats an explanation as a parameter of a statistical model, inferred from computational traces and reported with quantified uncertainty. The approach also foregrounds identifiability: many current methods cannot provide unique and reliable explanations because the explanatory tasks they address are inherently non-identifiable.
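One way to read "explanations as inferred parameters" is that an explanatory quantity, such as a probe's accuracy, should be reported as an estimate with an interval rather than as a single number. The sketch below uses a percentile bootstrap over traces; the bootstrap is a stand-in chosen for illustration, not necessarily the estimator used in the paper. The function name `bootstrap_interval` and the synthetic per-trace correctness values are hypothetical.

```python
import numpy as np

def bootstrap_interval(per_trace_values, n_boot=2000, alpha=0.05, seed=0):
    """Point estimate and percentile bootstrap interval for a mean over traces."""
    rng = np.random.default_rng(seed)
    values = np.asarray(per_trace_values, dtype=float)
    # Resample traces with replacement and recompute the mean each time.
    idx = rng.integers(0, values.size, size=(n_boot, values.size))
    estimates = values[idx].mean(axis=1)
    lower, upper = np.quantile(estimates, [alpha / 2, 1 - alpha / 2])
    return float(values.mean()), float(lower), float(upper)

# Synthetic stand-in: 1.0 where a probe was correct on a trace, 0.0 otherwise.
rng = np.random.default_rng(1)
correct = (rng.random(500) < 0.6).astype(float)

estimate, lower, upper = bootstrap_interval(correct)
print(f"probe accuracy: {estimate:.3f}, 95% interval: [{lower:.3f}, {upper:.3f}]")
```

Reporting the interval shows whether two explanations, or an explanation and a baseline, are distinguishable given the number of traces observed.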

Practical Implications and Methodological Guardrails

A key implication of this work is the call to align AI interpretability with robust statistical practices. By framing interpretability within statistical inference, the paper suggests that methods should be judged on their ability to withstand rigorous hypothesis testing against suitably randomized null models. This would not only curb the emergence of dead salmon artifacts but also ensure that interpretability becomes a more rigorous scientific discipline. The paper advocates for explicit uncertainty quantification, recognizing that many explanatory claims made by interpretability methods might be fundamentally ambiguous without it.

Figure 2: (A) Sentiment analysis experiment where probes on pretrained BERT are compared against probes trained on random computation. (B) The same experiment with syntactic labels (POS tags) as the prediction target. (C) Reproduction of the first experiment of Table 2 in [gurnee2024language].
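The comparison against randomized null models can be sketched as an empirical p-value: score the model under test, score many randomized counterparts, and ask how often the null does at least as well. The code below is a hedged illustration on synthetic data, not a reproduction of the experiments in Figure 2. The helper names `random_network_features`, `probe_accuracy`, and `empirical_p_value` are hypothetical, and the "model under test" is deliberately just another random network.

```python
import numpy as np

def random_network_features(x, seed, width=32):
    """Activations of a randomly initialized, untrained one-layer network."""
    rng = np.random.default_rng(seed)
    w = rng.normal(size=(x.shape[1], width)) / np.sqrt(x.shape[1])
    return np.tanh(x @ w)

def probe_accuracy(features, labels, n_train):
    """Held-out accuracy of a least-squares linear probe."""
    design = np.c_[features, np.ones(len(features))]
    targets = np.where(labels, 1.0, -1.0)
    coef, *_ = np.linalg.lstsq(design[:n_train], targets[:n_train], rcond=None)
    predictions = design[n_train:] @ coef > 0
    return float(np.mean(predictions == labels[n_train:]))

def empirical_p_value(observed, null_scores):
    """Share of null scores at least as large as the observed one (+1 correction)."""
    null_scores = np.asarray(null_scores, dtype=float)
    return float((1 + np.sum(null_scores >= observed)) / (1 + null_scores.size))

rng = np.random.default_rng(0)
x = rng.normal(size=(1000, 8))
y = x[:, 0] > 0

# Stand-in for the model under test (here, by construction, also random).
observed = probe_accuracy(random_network_features(x, seed=1), y, n_train=500)

# Null distribution: the same probe on 50 independently randomized networks.
null_scores = [probe_accuracy(random_network_features(x, seed=s), y, n_train=500)
               for s in range(100, 150)]

p_value = empirical_p_value(observed, null_scores)
print(f"observed accuracy: {observed:.3f}, empirical p-value: {p_value:.3f}")
```

In practice the first call would use activations from the trained model. The claim "the model encodes this label" is supported only if the observed score clearly exceeds what the randomized networks achieve.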

Identifiability and Future Directions

Identifiability, a cornerstone of statistical inference, emerges as a critical concern. When an interpretability task is not identifiable, several distinct explanations fit the same observations equally well, which leads to poor generalization, sensitivity to methodological choices, and potential false discoveries. The paper argues that interpretability tasks should be explicitly formulated as identifiable statistical problems, which would open avenues for methodologically sound advances in the field. It acknowledges that this statistical-causal perspective is still at an early stage, but presents it as a foundational framework that can be refined and extended to achieve reliable interpretability in AI systems.
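A small example of what non-identifiability looks like: when two features carry the same information, very different weight vectors, and hence very different attributions, produce exactly the same behavior. The data and weights below are made up for illustration and are not from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)
z = rng.normal(size=200)

# Columns 0 and 1 are exact copies, so the feature matrix is rank-deficient.
features = np.c_[z, z, rng.normal(size=200)]

# Three candidate "explanations" that credit the redundant features differently.
w_a = np.array([1.0, 0.0, 0.5])    # all credit to feature 0
w_b = np.array([0.0, 1.0, 0.5])    # all credit to feature 1
w_c = np.array([3.0, -2.0, 0.5])   # opposite signs on the two copies

out_a, out_b, out_c = features @ w_a, features @ w_b, features @ w_c
same_behavior = bool(np.allclose(out_a, out_b) and np.allclose(out_a, out_c))
rank = int(np.linalg.matrix_rank(features))

print(f"identical outputs: {same_behavior}, rank {rank} of {features.shape[1]} columns")
```

No amount of data from this setup can tell the three weight vectors apart, so any method that reports one of them as "the" explanation is making an arbitrary choice unless the task adds constraints that restore identifiability.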

Conclusion

"The Dead Salmons of AI Interpretability" underscores the need for methodological rigor in AI interpretability and advocates a shift towards statistical-causal frameworks. By positioning interpretability within statistical inference and emphasizing identifiability, the paper lays out a roadmap for turning AI interpretability into a discipline grounded in scientific rigor and reliability. This approach holds promise for more accurate and actionable explanations of complex AI systems, which matters most where AI is deployed in sensitive and consequential domains.