- The paper introduces two novel trifocal tensor solvers using IMU-derived vertical direction to reduce degrees of freedom in pose estimation.

- The four-point solver offers a linear closed-form solution while the three-point solver employs Gröbner basis methods for minimal correspondence estimation.

- Empirical evaluations on synthetic and KITTI data demonstrate improved computational efficiency and accuracy over traditional multi-view methods.

Trifocal Tensor and Relative Pose Estimation with Known Vertical Direction: Technical Essay

Estimating the relative pose across multiple views is foundational in computer vision, underpinning simultaneous localization and mapping (SLAM) and structure from motion (SfM) systems. Standard two-view pose estimation techniques, such as 5pt-Nister, leverage epipolar geometry but require at least five point correspondences and often struggle with degenerate configurations. Three-view estimation, built upon trifocal tensor constraints, offers tighter geometric structure and the potential to work with fewer point correspondences.

This paper introduces two novel solvers for the multi-view pose estimation problem that exploit knowledge of the vertical direction, which is typically available from onboard IMUs. Knowing the vertical direction reduces the degrees of freedom of each view: only the yaw angle and translation vector remain unknown, which simplifies estimation and improves computational efficiency. The authors present a linear closed-form solution using four points across three views and a minimal solution using only three points, leveraging state-of-the-art Gröbner basis solvers.

Mathematical Approach and Algorithmic Contributions

Trifocal Tensor Structure with Vertical Direction

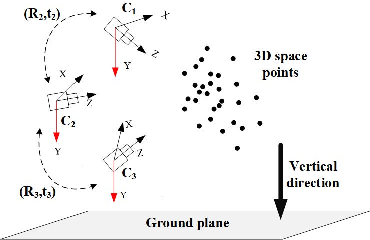

The classic trifocal tensor formalism ties three calibrated camera poses via a set of tensor constraints, each encoding the linkage between multiple feature correspondences. By aligning camera coordinate frames using the gravity vector from IMU readings, roll and pitch angles are resolved, leaving only yaw as an unknown for rotation matrices Rk.
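The alignment step can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes the vertical direction is mapped onto the camera y-axis (a convention chosen here), and `g_measured` is a hypothetical accelerometer reading. After alignment, the only rotation left unknown is a yaw about that axis.

```python
import numpy as np

def rotation_aligning(g, target=(0.0, 1.0, 0.0)):
    """Rotation R with R @ (g / |g|) == target, via the Rodrigues formula."""
    a = np.asarray(g, dtype=float)
    a = a / np.linalg.norm(a)
    b = np.asarray(target, dtype=float)
    b = b / np.linalg.norm(b)
    v = np.cross(a, b)
    c = float(np.dot(a, b))
    if np.isclose(c, -1.0):
        # Antiparallel case: rotate by pi about any axis perpendicular to a.
        axis = np.cross(a, [1.0, 0.0, 0.0])
        if np.linalg.norm(axis) < 1e-8:
            axis = np.cross(a, [0.0, 0.0, 1.0])
        axis = axis / np.linalg.norm(axis)
        return 2.0 * np.outer(axis, axis) - np.eye(3)
    K = np.array([[0.0, -v[2], v[1]],
                  [v[2], 0.0, -v[0]],
                  [-v[1], v[0], 0.0]])
    return np.eye(3) + K + (K @ K) / (1.0 + c)

def yaw_rotation(theta):
    """Rotation by theta about the (assumed) vertical y-axis."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, 0.0, s],
                     [0.0, 1.0, 0.0],
                     [-s, 0.0, c]])

g_measured = np.array([0.3, 9.7, -0.5])  # hypothetical IMU gravity reading
R_align = rotation_aligning(g_measured)
```

Any yaw rotation leaves the aligned vertical axis fixed, which is why roll and pitch drop out of the unknowns once `R_align` has been applied to each view.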

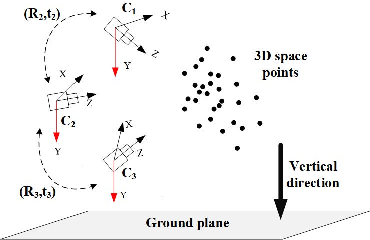

Camera alignment via IMU readings is illustrated in Figure 1.

Figure 1: Camera alignment with known vertical direction, enabling reduction in rotational DoF per view.

With this reduction, the three-view pose estimation problem requires estimation of only two yaw angles (θ2, θ3) and two translation vectors. This allows the formulation of linear constraints and tractable polynomial systems for three-point and four-point cases.

For the four-point scenario, the authors substitute aligned camera models into trifocal tensor equations. Linear relationships between point correspondences and pose parameters are derived, resulting in a system Aq=0 with 17 unknowns, solvable via SVD. Explicit expressions for yaw angles and translation vectors are provided, guaranteeing a closed-form solution.
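The SVD step for a homogeneous system Aq = 0 can be sketched generically. The matrix below is a random stand-in with a planted null vector, not the coefficient matrix derived in the paper, and its 20 rows are an arbitrary choice for the demonstration; only the 17-column width follows the text.

```python
import numpy as np

def null_vector(A):
    """Unit vector q minimizing ||A q||: the right singular vector
    associated with the smallest singular value of A."""
    _, _, Vt = np.linalg.svd(A)
    return Vt[-1]

# Stand-in system: project a random matrix so that q_true lies in its null space.
rng = np.random.default_rng(0)
q_true = rng.standard_normal(17)
q_true /= np.linalg.norm(q_true)
A = rng.standard_normal((20, 17))
A = A - np.outer(A @ q_true, q_true)  # now A @ q_true is (numerically) zero

q_est = null_vector(A)
# A homogeneous solution is defined only up to sign and scale.
agreement = abs(float(q_est @ q_true))
```

With noisy data the smallest singular value is no longer exactly zero, and the same vector gives the least-squares solution under the unit-norm constraint.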

Three-Point Minimal Solver Using Gröbner Basis

The minimal solver, requiring only three point correspondences, parameterizes each rotation using the Cayley transform. The constraints reduce to polynomials of degree up to 12 in the two yaw parameters, s2 = tan(θ2/2) and s3 = tan(θ3/2). The resulting system is solved with an automatic Gröbner basis solver, and all real roots are kept as candidate solutions.
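To make the parameterization concrete, the sketch below builds a yaw rotation from s = tan(θ/2) through the Cayley transform and checks it against the trigonometric form. It assumes the vertical axis is the y-axis (a convention chosen here, not stated in the source). The benefit is that every rotation entry becomes a rational function of s, so the constraints turn into polynomials after multiplying through by 1 + s².

```python
import numpy as np

def yaw_from_cayley(s):
    """Rotation about the (assumed) vertical y-axis from s = tan(theta / 2),
    computed with the Cayley transform R = (I - S)^-1 (I + S)."""
    S = s * np.array([[0.0, 0.0, 1.0],
                      [0.0, 0.0, 0.0],
                      [-1.0, 0.0, 0.0]])  # s times the skew matrix of the y-axis
    I = np.eye(3)
    return np.linalg.solve(I - S, I + S)

def yaw_closed_form(s):
    """Same rotation written out: cos = (1 - s^2)/(1 + s^2), sin = 2s/(1 + s^2)."""
    d = 1.0 + s * s
    return np.array([[1.0 - s * s, 0.0, 2.0 * s],
                     [0.0, d, 0.0],
                     [-2.0 * s, 0.0, 1.0 - s * s]]) / d

theta = 0.4
s = np.tan(theta / 2.0)
R_cayley = yaw_from_cayley(s)
theta_back = 2.0 * np.arctan(s)  # recover the yaw angle from a root s
```

One caveat of this parameterization is that a yaw of exactly 180° corresponds to s going to infinity, so it cannot be represented by a finite root.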

Both solvers enforce trifocal tensor structural constraints post-estimation, utilizing null-vector and epipolar relationships to refine results.

Empirical Evaluation: Synthetic and Real-World Data

Efficiency and Numerical Stability

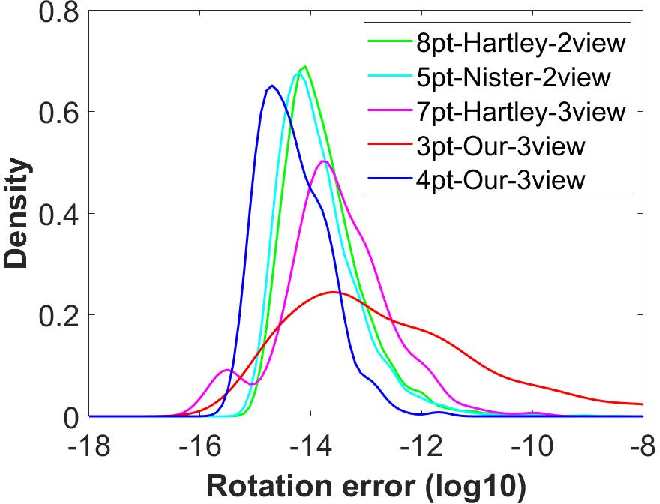

Runtime comparisons demonstrated competitive performance for the new methods. Notably, the linear four-point solver achieves 0.95 ms per instance, outperforming other 3-view solvers (such as 7pt-Hartley-3view at 4.24 ms) and being more efficient than two-view baselines when normalized by number of estimated poses.

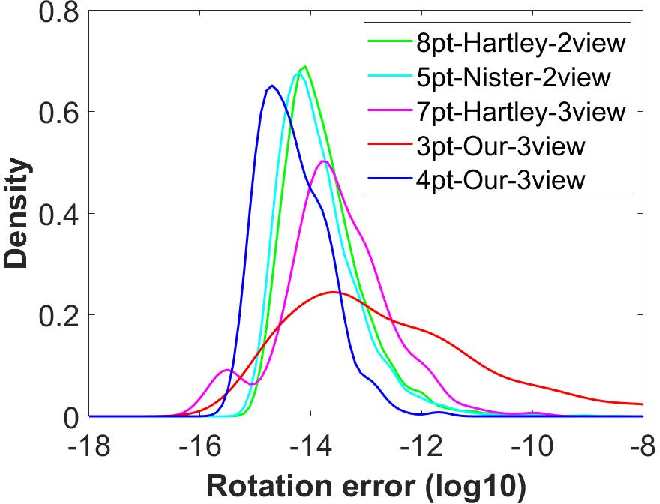

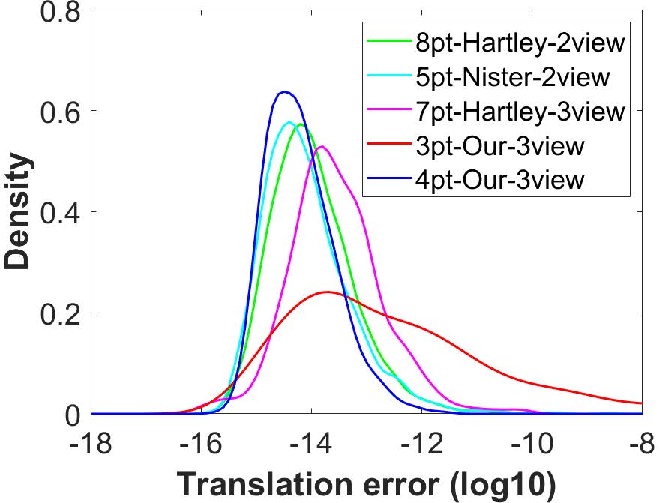

Probability density over pose errors in noiseless conditions highlights the methods' numerical stability, with the four-point approach exhibiting minimal variance.

Figure 2: Probability density functions of rotation/translation estimation errors; four-point solver shows tight distribution.
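The source does not spell out how the rotation and translation errors are defined. A common convention, assumed here, measures the rotation error as the angle of the residual rotation and the translation error as the angle between translation directions, since scale is not observable from images alone.

```python
import numpy as np

def rotation_error_deg(R_gt, R_est):
    """Angle (degrees) of the residual rotation R_gt^T R_est."""
    cos_angle = (np.trace(R_gt.T @ R_est) - 1.0) / 2.0
    return float(np.degrees(np.arccos(np.clip(cos_angle, -1.0, 1.0))))

def translation_error_deg(t_gt, t_est):
    """Angle (degrees) between two translation directions (scale-free)."""
    t_gt = np.asarray(t_gt, dtype=float)
    t_est = np.asarray(t_est, dtype=float)
    cos_angle = t_gt @ t_est / (np.linalg.norm(t_gt) * np.linalg.norm(t_est))
    return float(np.degrees(np.arccos(np.clip(cos_angle, -1.0, 1.0))))

# Toy check: a 5 degree yaw offset and a 45 degree direction offset.
a = np.radians(5.0)
R_est = np.array([[np.cos(a), 0.0, np.sin(a)],
                  [0.0, 1.0, 0.0],
                  [-np.sin(a), 0.0, np.cos(a)]])
rot_err = rotation_error_deg(np.eye(3), R_est)
trans_err = translation_error_deg([1.0, 0.0, 0.0], [1.0, 1.0, 0.0])
```

The clipping guards against cosines that drift slightly outside [-1, 1] through floating-point rounding, which matters most in the noiseless regime where errors are tiny.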

Sensitivity to Image and IMU Noise

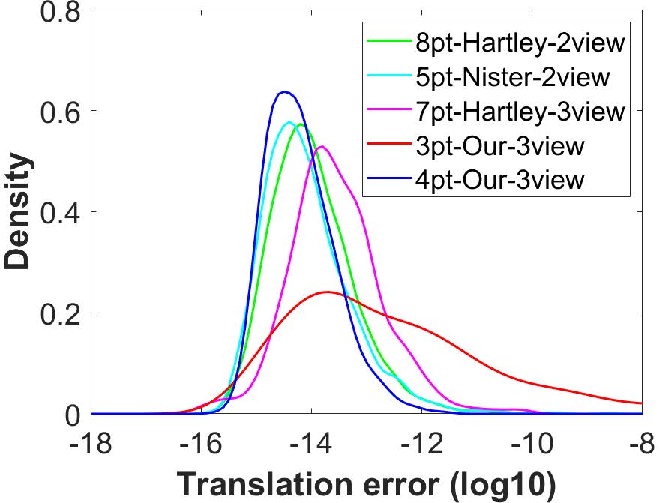

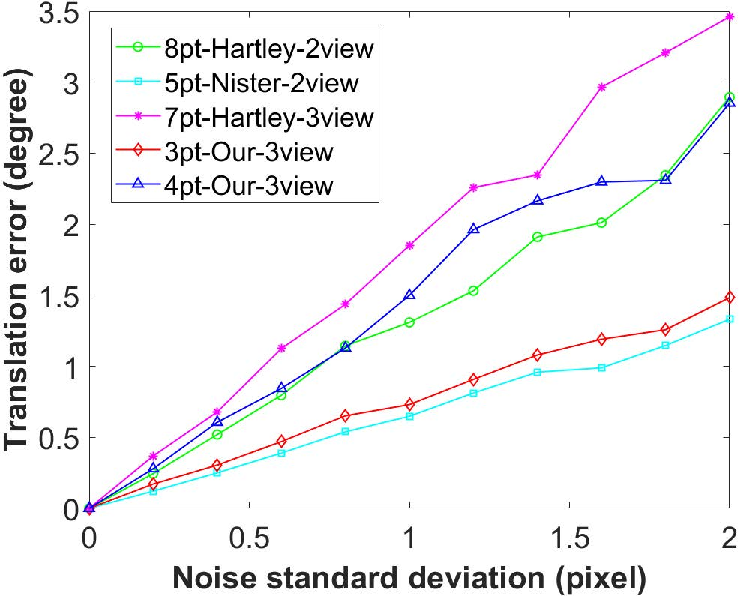

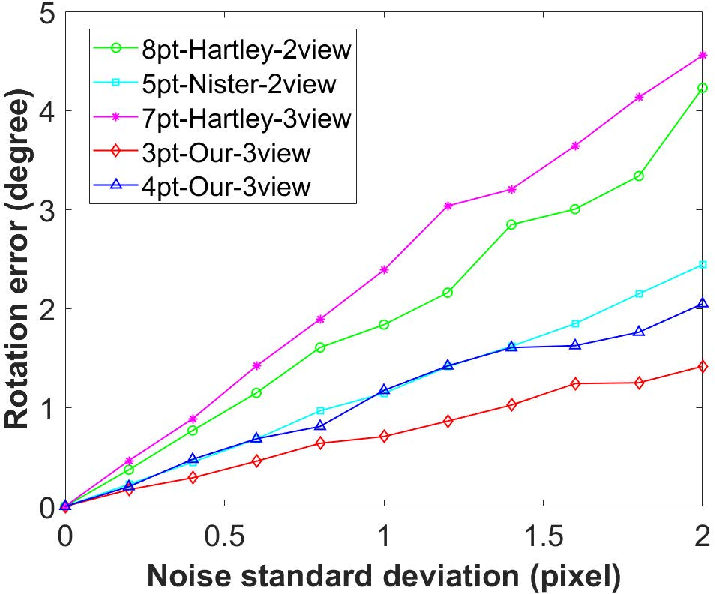

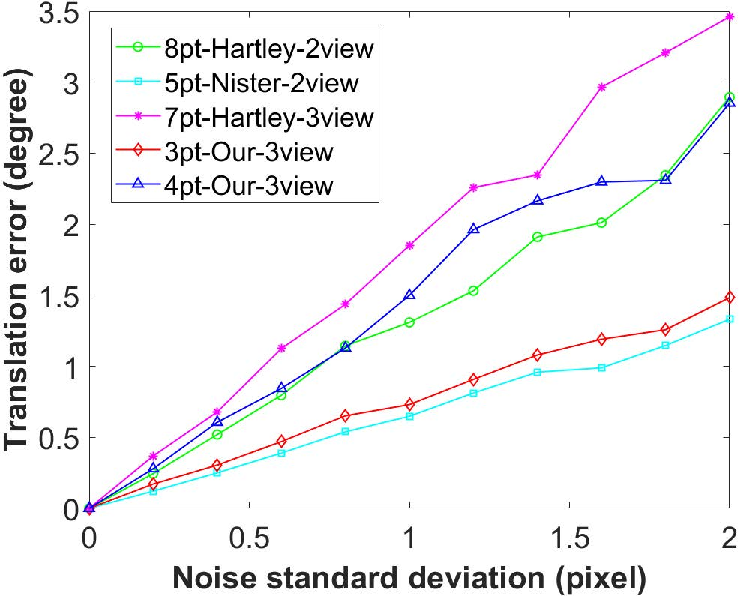

Synthetic experiments examined resilience against image noise and errors in IMU vertical alignment. The three-point solver maintained low rotation errors even under moderate noise, matching or exceeding the precision of traditional two-view methods.

Figure 3: Accuracy degradation with increasing image noise; three-point solver excels in rotation estimation robustness.

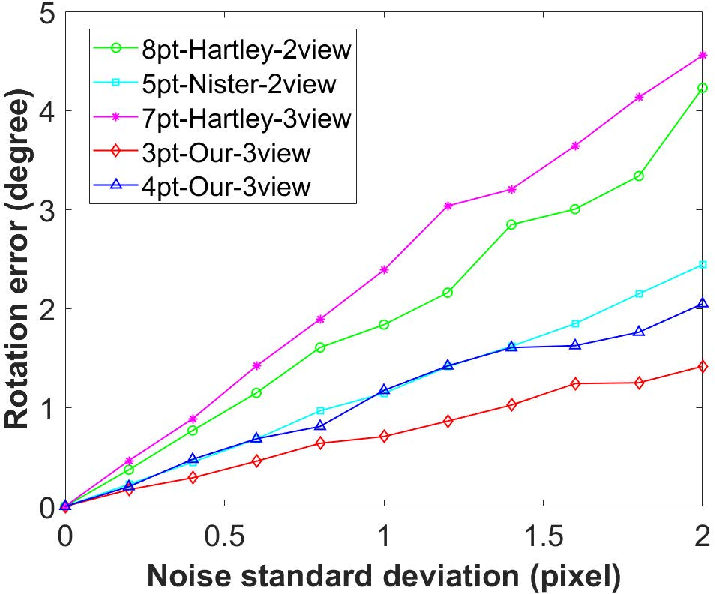

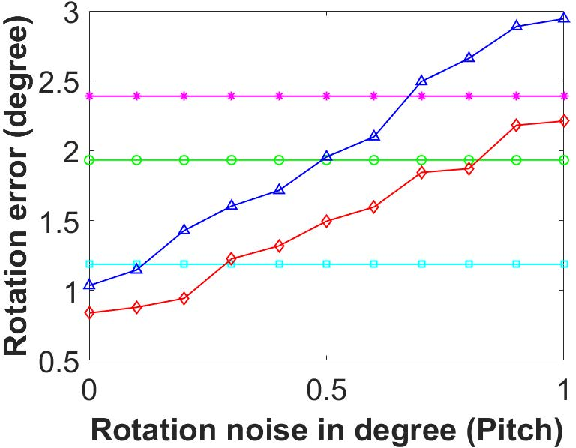

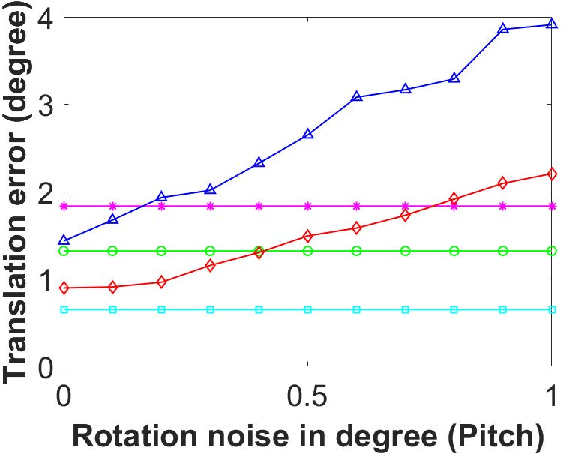

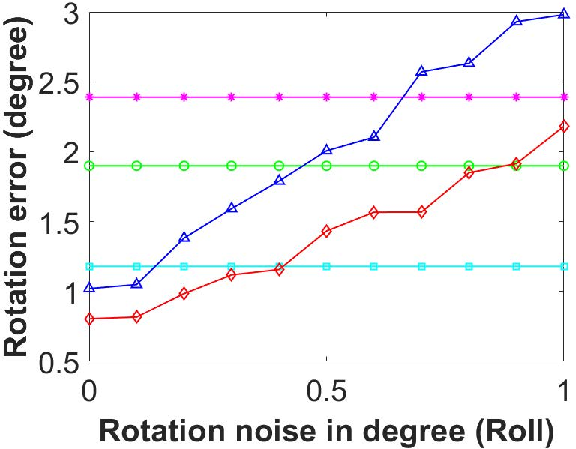

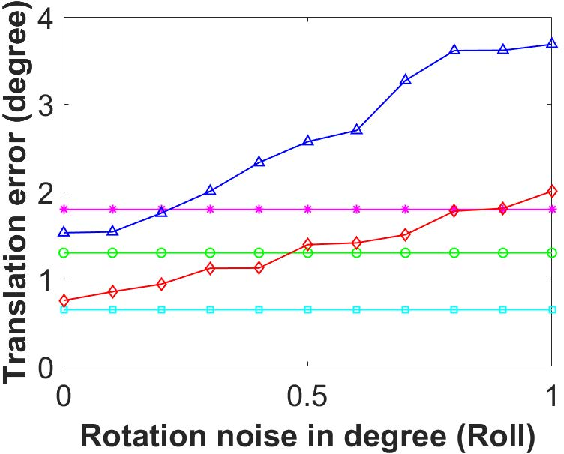

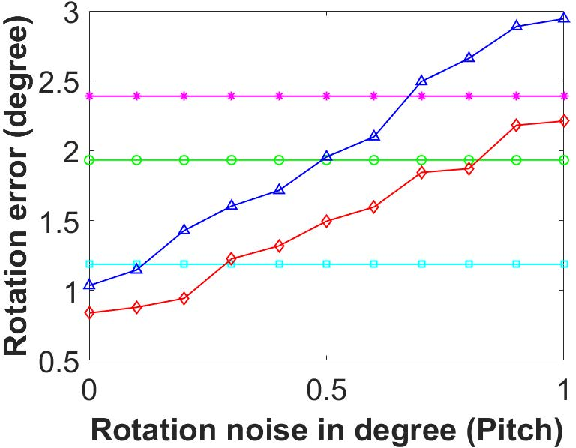

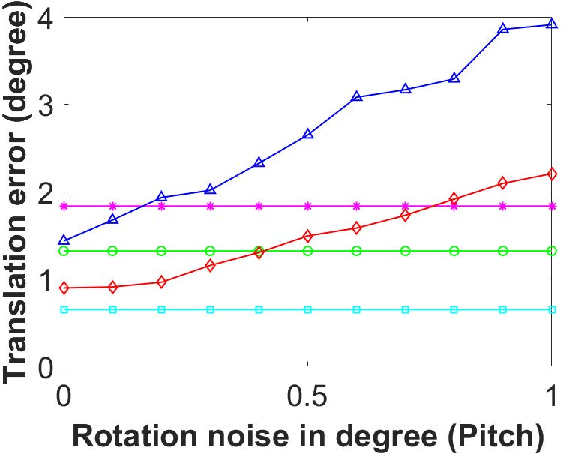

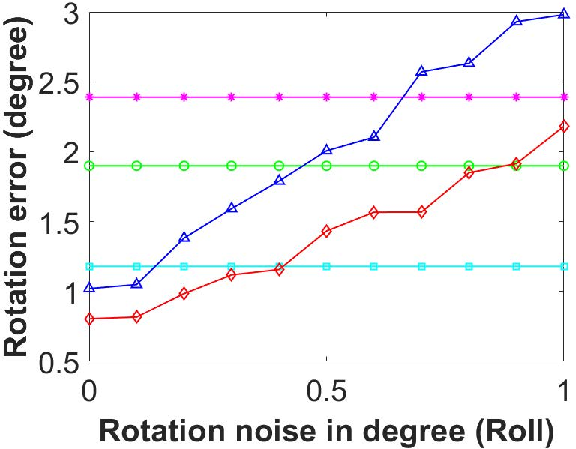

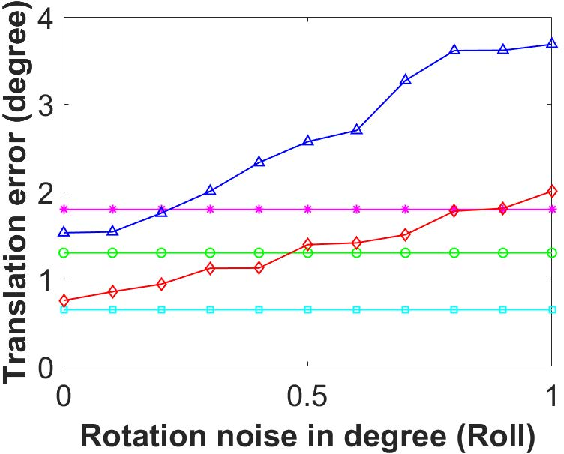

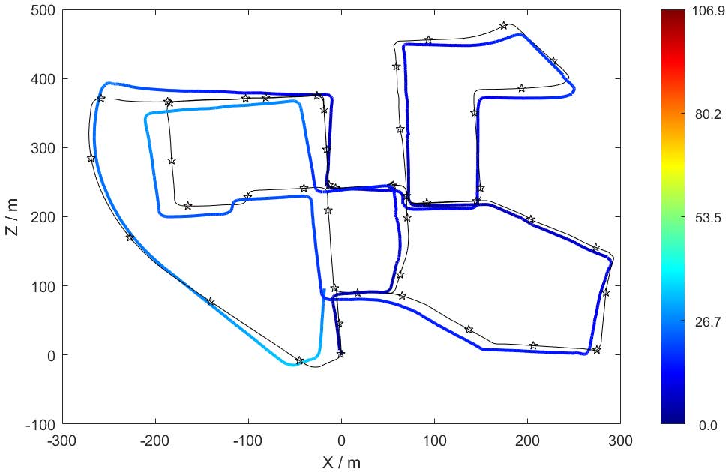

Under IMU pitch/roll angle noise, both proposed solvers retained competitive accuracy provided angular error did not exceed typical consumer IMU bounds (i.e., <0.5°). Translation estimates were more affected, but remained within acceptable margins.

Figure 4: Algorithm accuracy in presence of IMU angle noise for rotation and translation estimation.
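One way to picture this experiment is to perturb the vertical direction by small pitch and roll angles and measure the resulting misalignment. The sketch below is an illustration only: it assumes the vertical is the y-axis, pitch is a rotation about x, and roll is a rotation about z, none of which is specified in the source.

```python
import numpy as np

def rot_x(a):
    c, s = np.cos(a), np.sin(a)
    return np.array([[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]])

def rot_z(a):
    c, s = np.cos(a), np.sin(a)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

def perturbed_vertical(pitch_deg, roll_deg):
    """Vertical direction after applying pitch/roll errors to the y-axis."""
    R = rot_x(np.radians(pitch_deg)) @ rot_z(np.radians(roll_deg))
    return R @ np.array([0.0, 1.0, 0.0])

def angle_deg(u, v):
    cos_angle = u @ v / (np.linalg.norm(u) * np.linalg.norm(v))
    return float(np.degrees(np.arccos(np.clip(cos_angle, -1.0, 1.0))))

vertical = np.array([0.0, 1.0, 0.0])
tilt_pitch_only = angle_deg(vertical, perturbed_vertical(0.5, 0.0))
tilt_both = angle_deg(vertical, perturbed_vertical(0.5, 0.5))
```

For small angles the two errors combine roughly in quadrature, so simultaneous pitch and roll errors tilt the assumed vertical by more than either error alone.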

KITTI Benchmark Evaluation

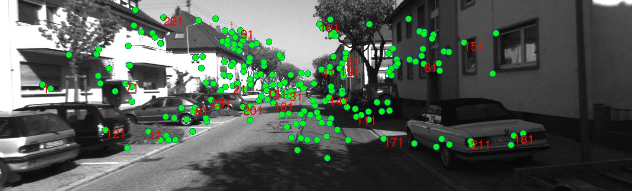

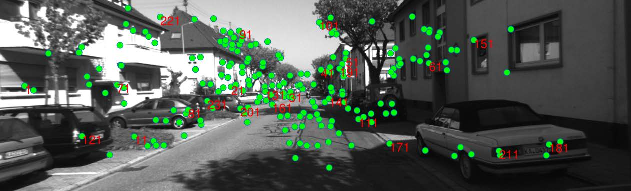

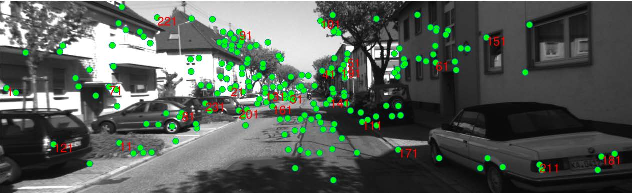

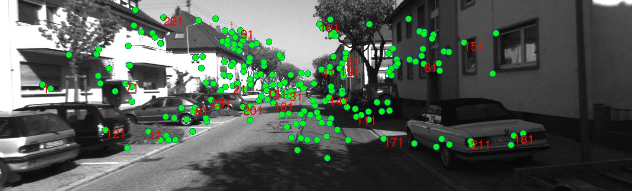

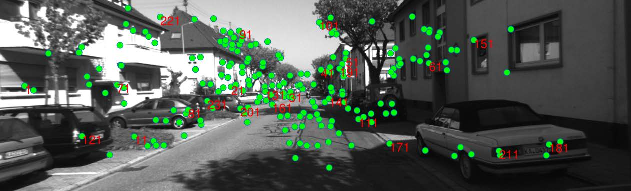

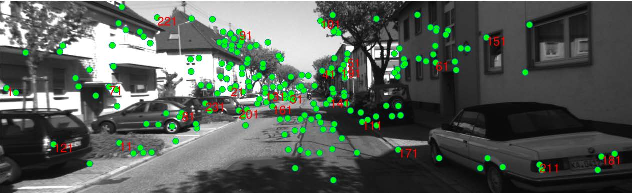

Evaluation on KITTI sequences validated performance in real-world driving scenarios. SIFT was employed for correspondences, and robust RANSAC filtering mitigated outlier effects. Triple-point correspondences are illustrated in Figure 5.

Figure 5: Example of point correspondences across three sequential frames in KITTI sequence.
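Smaller sample sizes matter in this setting because the number of RANSAC iterations needed for a given confidence grows quickly with the sample size. The sketch below uses the standard iteration-count formula; the inlier ratio of 0.5 and confidence of 0.99 are illustrative assumptions, not values reported in the source, and the ratio refers to three-view correspondences for the three-view solvers.

```python
import math

def ransac_iterations(sample_size, inlier_ratio, confidence=0.99):
    """Iterations needed so that, with the given confidence, at least one
    sample consists only of inliers: N = log(1 - p) / log(1 - w^s)."""
    p_all_inliers = inlier_ratio ** sample_size
    if p_all_inliers >= 1.0:
        return 1
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - p_all_inliers))

# Compare sample sizes of 3, 4, 5, and 7 correspondences.
iterations = {s: ransac_iterations(s, inlier_ratio=0.5) for s in (3, 4, 5, 7)}
```

Under these assumptions the required iteration count rises steadily from the three-point to the seven-point sample size, which is one reason minimal solvers pair well with RANSAC even when each individual solve is more expensive.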

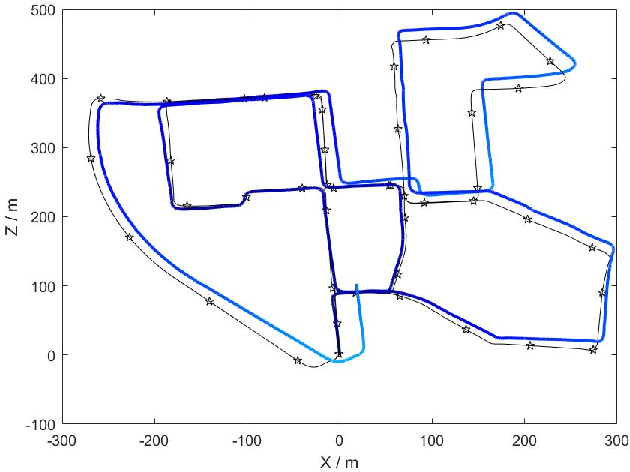

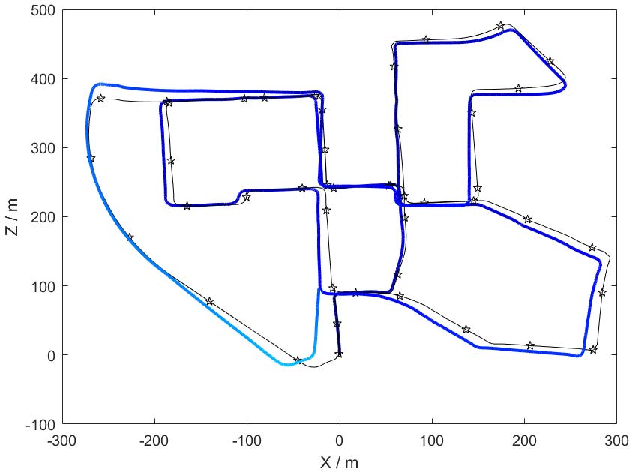

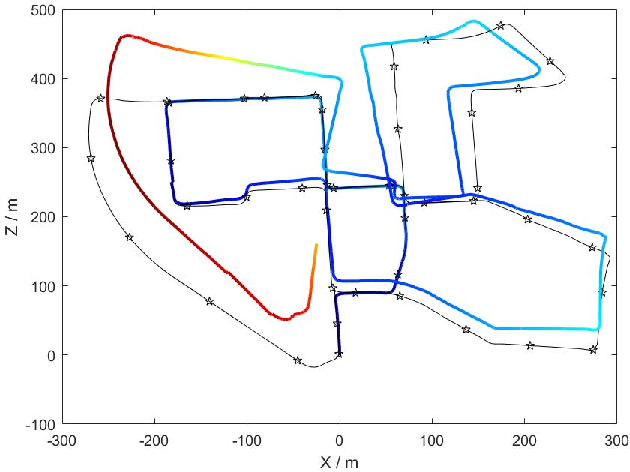

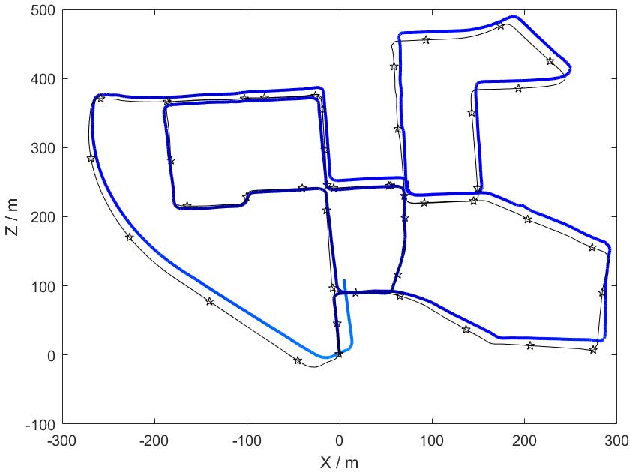

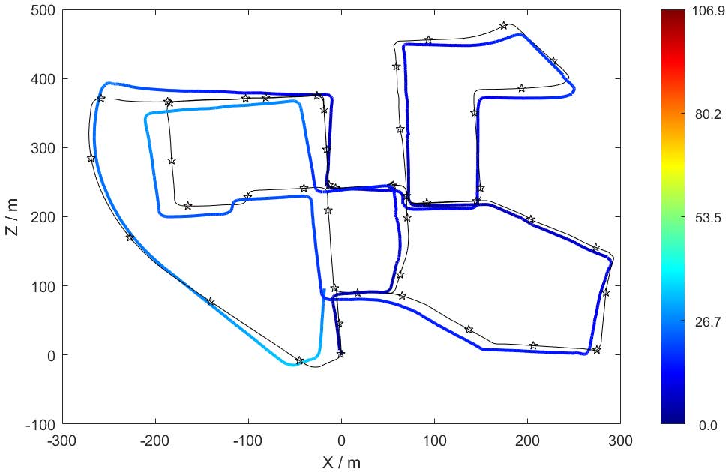

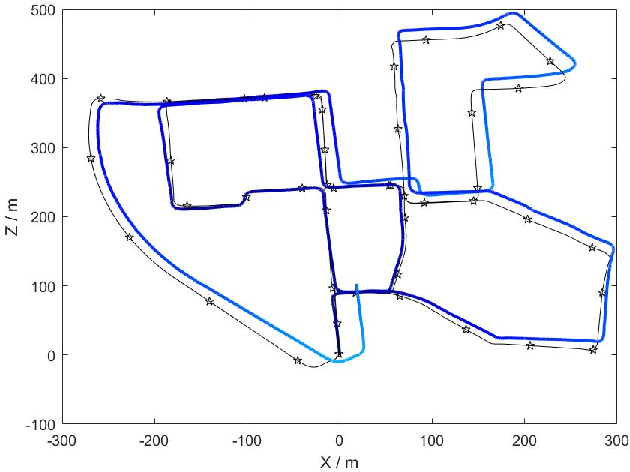

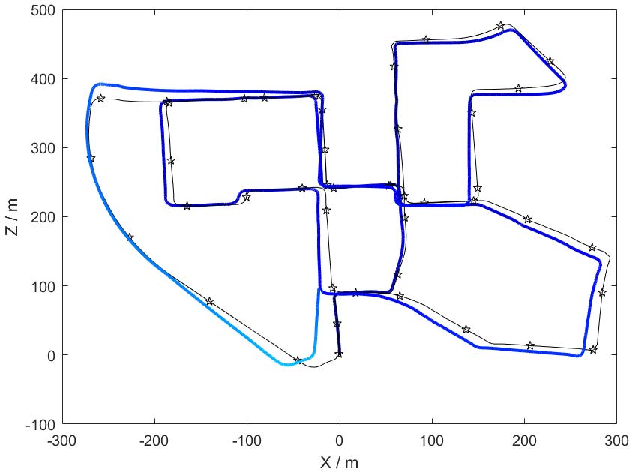

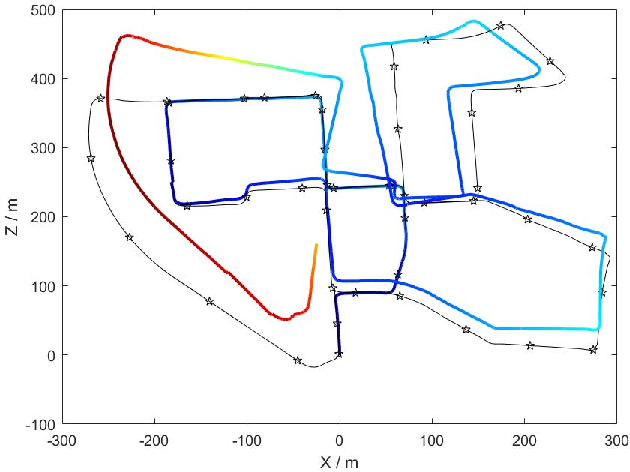

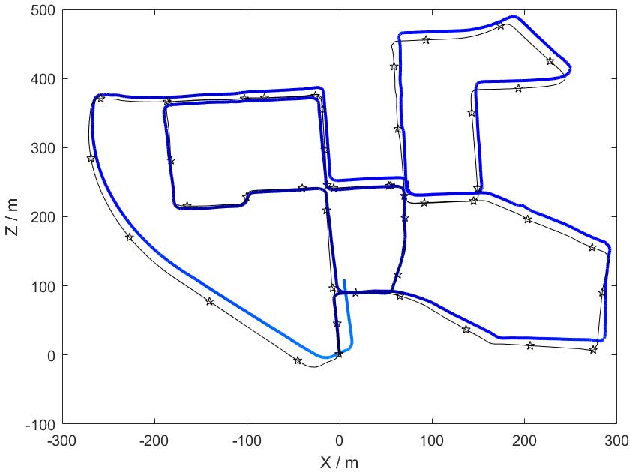

Trajectory estimates demonstrate that both new solvers produce lower absolute trajectory errors than 3-view or 2-view linear baselines, particularly for rotational accuracy.

Figure 6: Visual odometry trajectories on KITTI-00; four-point and three-point methods align closely with ground truth.

Quantitative results show that the three-point solver achieved the lowest median rotation error across all sequences, reducing error two- to threefold relative to standard approaches. Translation errors were comparable to or better than those of traditional methods in most cases.

Implications and Future Outlook

The integration of IMU vertical direction information into trifocal tensor-based relative pose estimation provides a principled mechanism for reducing correspondence requirements and improving both computational efficiency and accuracy. These gains have important practical implications for deployment in resource-constrained robotics or autonomous systems, where real-time operation and robust failure modes are essential.

The proposed three-point and four-point solvers offer fallback pose recovery when conventional two-view estimation yields degenerate solutions, a critical property for robust navigation pipelines. The reliance on low-complexity polynomial solvers, instead of GPU-accelerated homotopy-continuation approaches, further facilitates portable real-time implementation.

Theoretically, the formulation highlights the importance of sensor fusion in geometric vision and opens avenues for further reductions in sample complexity for multi-view estimation problems. The use of algebraic solvers like Gröbner basis methods may stimulate future research into faster or more scalable polynomial system solvers for computer vision.

Open questions remain regarding joint optimization with additional sensor cues (e.g., magnetometers or wheel odometry) and adaptation to non-vertical scenarios or general 3D sensor pose estimation tasks.

Conclusion

This work presents highly efficient and accurate trifocal tensor solvers for three-view pose estimation leveraging IMU-derived vertical direction. Both a linear four-point closed-form algorithm and a minimal three-point Gröbner-basis solver achieve significant reductions in point requirements and computational demands, demonstrated through synthetic benchmarks and automotive datasets. The advances enable robust multi-view pose estimation even in challenging or degenerate conditions, promising practical impact in autonomous navigation and real-time SLAM systems.