Over++: Generative Video Compositing for Layer Interaction Effects

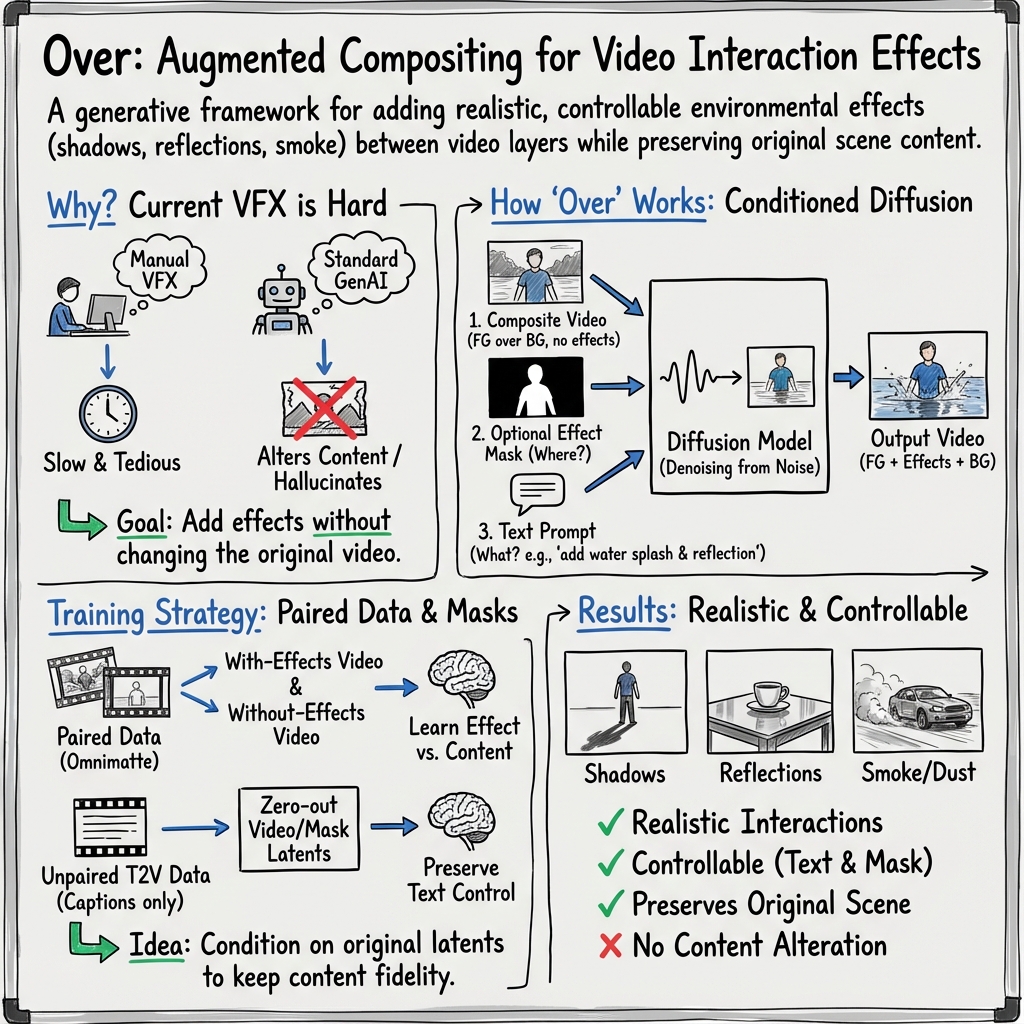

Abstract: In professional video compositing workflows, artists must manually create environmental interactions (such as shadows, reflections, dust, and splashes) between foreground subjects and background layers. Existing video generative models struggle to preserve the input video while adding such effects, and current video inpainting methods either require costly per-frame masks or yield implausible results. We introduce augmented compositing, a new task that synthesizes realistic, semi-transparent environmental effects conditioned on text prompts and input video layers, while preserving the original scene. To address this task, we present Over++, a video effect generation framework that makes no assumptions about camera pose, scene stationarity, or depth supervision. We construct a paired effect dataset tailored for this task and introduce an unpaired augmentation strategy that preserves text-driven editability. Our method also supports optional mask control and keyframe guidance without requiring dense annotations. Despite training on limited data, Over++ produces diverse and realistic environmental effects and outperforms existing baselines in both effect generation and scene preservation.

Explain it Like I'm 14

What is this paper about?

This paper introduces Over++ (read “Over-plus-plus”), a tool that helps add realistic “environment” effects to videos, such as shadows, reflections, dust, smoke, wakes, and splashes, between a foreground subject and a background. The goal is to make two video layers look like they truly belong together, without changing how the original people or places look or move.

What questions are the authors trying to answer?

- How can we automatically add believable effects (like shadows and splashes) that connect a moving subject to its surroundings?

- How can we do this while keeping the original video almost unchanged?

- How can artists control where the effects go and what they look like, using simple tools like a mask (a stencil) and a short text prompt?

- Can we train a system to do this even if we don’t have many “before-and-after” training videos?

- Does this approach work better than current video editing or inpainting tools?

How does the method work?

Think of video compositing like layering transparent stickers: you place a person (foreground) over a scene (background). If you just stick them together, you miss the “glue” that makes it look real, such as a shadow on the ground or water splashing at their feet. Over++’s job is to generate that “glue” automatically and realistically.

Here’s the approach, explained simply:

- Input

  - A composite video without effects (the person over the background, but no shadow/splash yet).

  - An optional mask video (a stencil that says where effects may appear).

  - A short text prompt (for example, “soft shadow” or “red smoke”).

- Output

  - The same video, but now with the requested effects added in the right places, while the original look and motion stay intact.
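As a rough illustration of how the effect-free composite in the input could be assembled, the sketch below applies the standard Porter–Duff "over" blend with straight (non-premultiplied) alpha in NumPy. The function name `over`, the array shapes, and the toy values are assumptions for illustration and are not taken from the paper's code.

```python
import numpy as np

def over(fg_rgb, alpha, bg_rgb):
    """Blend a foreground over a background using a per-pixel alpha matte.

    fg_rgb, bg_rgb: float arrays of shape (frames, H, W, 3) in [0, 1]
    alpha:          float array of shape (frames, H, W) in [0, 1]
    """
    a = alpha[..., None]  # add a channel axis so alpha broadcasts over RGB
    return a * fg_rgb + (1.0 - a) * bg_rgb

# Toy example: a light-grey square "subject" over a black background.
fg = np.full((2, 4, 4, 3), 0.8)
bg = np.zeros((2, 4, 4, 3))
alpha = np.zeros((2, 4, 4))
alpha[:, 1:3, 1:3] = 1.0  # subject occupies the central 2x2 pixels

comp = over(fg, alpha, bg)  # composite with no shadow or splash yet
```

A composite built this way contains the subject and the background but none of the interaction effects, which is exactly the gap the generator is asked to fill.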

The core idea: a guided “denoising” generator

Over++ is based on a video diffusion model. Imagine starting with TV static and “unfuzzing” it step by step into a video. During this process, Over++ is guided by:

- The composite video (so it knows what must be preserved).

- The mask (if provided) to localize effects.

- The text prompt (what kind of effect to create).

Unlike some inpainting tools that blank out masked regions and then fill them in, Over++ keeps the full scene info during generation. This helps avoid weird guesses and keeps the original scene consistent.

Building training data (even when paired videos are rare)

To learn this skill, the model needs examples. But videos that come in pairs—one version without effects and the same video with effects—are hard to find. The authors built a clever pipeline:

- They use existing tools to split a real video with effects into layers:

  - Foreground (subject plus effects) and

  - A clean background (as if no effect happened).

- Then they re-composite just the subject over the clean background to make a “without effects” version.

- By comparing the “with” and “without” versions, they create an effect mask that shows where effects happen. They clean this mask with simple image operations to remove noise.
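The mask-extraction step described above can be sketched as follows. This is a minimal NumPy-only illustration for a single frame, assuming Otsu thresholding of the absolute difference followed by 3x3 erosion, dilation, and median filtering; the helper names, the kernel size, and the toy data are assumptions rather than the authors' settings.

```python
import numpy as np

def otsu_threshold(values, bins=256):
    """Pick the threshold that maximises between-class variance (Otsu)."""
    hist, edges = np.histogram(values, bins=bins, range=(0.0, 1.0))
    centers = (edges[:-1] + edges[1:]) / 2.0
    w0 = np.cumsum(hist)                      # pixel count at or below each bin
    w1 = w0[-1] - w0                          # pixel count above each bin
    m0 = np.cumsum(hist * centers)
    mu0 = m0 / np.maximum(w0, 1)              # mean of the lower class
    mu1 = (m0[-1] - m0) / np.maximum(w1, 1)   # mean of the upper class
    return centers[np.argmax(w0 * w1 * (mu0 - mu1) ** 2)]

def _neighbourhood(mask):
    """Stack the zero-padded 3x3 neighbourhood of every pixel: shape (9, H, W)."""
    padded = np.pad(mask, 1, mode="constant", constant_values=False)
    h, w = mask.shape
    return np.stack([padded[dy:dy + h, dx:dx + w]
                     for dy in range(3) for dx in range(3)])

def effect_mask(with_fx, without_fx):
    """Binary mask of where one frame differs between the two versions."""
    diff = np.abs(with_fx - without_fx).mean(axis=-1)  # per-pixel difference
    mask = diff > otsu_threshold(diff)
    mask = _neighbourhood(mask).all(axis=0)        # erosion removes specks
    mask = _neighbourhood(mask).any(axis=0)        # dilation restores extent
    mask = _neighbourhood(mask).sum(axis=0) >= 5   # 3x3 median on a binary image
    return mask

# Toy frame: a 4x4 shadow-like region plus one noisy pixel.
without_fx = np.zeros((8, 8, 3))
with_fx = without_fx.copy()
with_fx[2:6, 2:6] = 0.5
with_fx[0, 7] = 0.5
mask = effect_mask(with_fx, without_fx)
```

In this toy case the isolated pixel is dropped while the main region survives with slightly rounded corners. A real pipeline would apply the same steps per frame, likely with a library such as OpenCV and tuned kernel sizes.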

They collect:

- 54 real paired videos (with and without effects),

- 573 synthetic paired videos (made with 3D tools and datasets),

- 460 unpaired text-to-video clips (only “with effects,” used to keep the model good at following text).

This combination helps the model learn realism, control, and prompt-following without needing thousands of expensive, hand-annotated examples.

Controlling effects: masks and prompts

- Mask control: A mask acts like a stencil for where effects are allowed. Over++ is robust even to rough, hand-drawn masks.

- Text control: Prompts like “soft shadow,” “turbulent wake,” or “red smoke” nudge the style, intensity, and color. The authors also use a technique called classifier-free guidance (CFG), which is like turning a dial to make the effect more or less pronounced.

- Keyframes: You don’t have to mask every frame. You can mark a few key frames, and Over++ fills in the rest smoothly.
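The "dial" behaviour of classifier-free guidance mentioned above can be illustrated with the standard CFG combination rule. The sketch below is generic: `eps_uncond` and `eps_cond` stand in for the denoiser's predictions without and with conditioning, and how Over++ actually batches its text and mask conditions is not specified in this summary.

```python
import numpy as np

def cfg_combine(eps_uncond, eps_cond, scale):
    """Standard classifier-free guidance: extrapolate from the unconditional
    prediction toward the conditional one by a factor of `scale`."""
    return eps_uncond + scale * (eps_cond - eps_uncond)

# Stand-in noise predictions (a real model would produce these per step).
eps_uncond = np.array([0.0, 1.0])
eps_cond = np.array([1.0, 1.0])

weak = cfg_combine(eps_uncond, eps_cond, 1.0)    # equals the conditional prediction
strong = cfg_combine(eps_uncond, eps_cond, 3.0)  # pushes further along the same direction
```

With `scale` equal to 1 the result is just the conditional prediction; larger values exaggerate whatever the prompt or mask contributes, which is why a higher setting tends to give a more pronounced effect.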

What did they find?

- Quality and preservation: Over++ adds realistic interaction effects while keeping the original subject and background consistent. It outperforms several strong baselines and is comparable to a commercial system on overall quality, with stronger preservation and control.

- Control works: Over++ responds to both masks (where) and prompts (what/how), and it can be steered to produce subtle or strong versions of the same effect.

- Works with limited data: Despite relatively small training sets, Over++ generalizes to many scenes and effects, including shadows, splashes, smoke, dust, and reflections.

- New evaluation twist: Effects can be subtle and hard to score. The authors introduce a “directional” CLIP metric that checks whether the change you made (from no-effect to effect) matches the change in a ground-truth example. Over++ scores best on this measure, which supports the claim that it adds the “right” kind of change.

- User study: In pairwise comparisons (with both VFX pros and non-experts), Over++ was preferred most of the time for matching the prompt, respecting the mask, and preserving original video content.
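One plausible reading of the directional CLIP metric described above is a cosine similarity between two "change" vectors in embedding space. The sketch below assumes the CLIP embeddings of the input, the generated output, and the ground truth are already computed and passed in as plain vectors; the function name and the exact formulation are assumptions, and the paper's definition may differ.

```python
import numpy as np

def clip_dir(e_input, e_output, e_gt, eps=1e-8):
    """Cosine similarity between the predicted edit direction and the
    ground-truth edit direction, both measured from the no-effect input."""
    d_pred = e_output - e_input   # change introduced by the model
    d_gt = e_gt - e_input         # change present in the ground truth
    denom = np.linalg.norm(d_pred) * np.linalg.norm(d_gt) + eps
    return float(np.dot(d_pred, d_gt) / denom)

# Stand-in 2-D "embeddings" (real CLIP embeddings have hundreds of dimensions).
e_in = np.array([0.0, 0.0])
e_gt = np.array([2.0, 0.0])
same_direction = clip_dir(e_in, np.array([1.0, 0.0]), e_gt)
opposite = clip_dir(e_in, np.array([-1.0, 0.0]), e_gt)
```

A score near 1 means the edit moved the video in the same direction as the reference edit, even if the magnitude differs; a score near -1 means it moved the opposite way.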

Why is this important?

- Saves time for artists: Instead of hand-painting shadows or simulating water splashes frame by frame, artists can guide Over++ with a quick mask and a short prompt.

- Keeps the director’s intent: Because it preserves the original subject and background, it fits professional workflows where continuity and identity matter.

- Flexible and accessible: Works with or without masks, supports keyframes, and can be prompted to produce different styles and intensities of effects.

- Broader impact: This approach, called “augmented compositing,” could make high-quality video effects more accessible to small studios, creators, and educators, not just big-budget productions.

Limitations and future directions

- Not pixel-perfect yet: Small differences can appear due to how videos are encoded and decoded. Future work could sharpen fidelity even more.

- Rare tricky cases: The model may occasionally add odd effects in hard scenes. Training with stronger base models and more diverse data could help.

- Complementary tasks: Color matching and relighting are out of scope here but could be combined in future systems for an even more complete solution.

In short, Over++ is a controllable, effect-focused video generator that adds the missing “glue” between layers (shadows, splashes, smoke, dust), making composites look real while keeping the original video intact.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The paper proposes Over++ for generative video compositing of environmental effects. While promising, several concrete gaps remain for future work:

- Dataset scale and diversity

- Training relies on a very small paired dataset (54 real-world, 573 synthetic) with limited effect types and scene conditions; coverage of complex volumetric effects (e.g., fire, fog, heavy smoke), multi-object interactions, indoor lighting, and extreme camera motion is unclear.

- Paired “with/without effects” videos are derived via Omnimatte decomposition and recompositing, not true ground-truth physical captures; this introduces bias and artifacts that may mis-train the model.

- Lack of long-duration, high-resolution, high-motion videos in training; generalization to 4K/feature-film pipelines is untested.

- The paper states the rendered dataset/code “will be released,” but clarity on releasing real-world pairs (licensing, reproducibility) is missing.

- Ground-truth masks and supervision

- Effect masks are computed as thresholded differences with morphological cleanup; these are noisy approximations (particularly for real data) and not pixel-accurate supervision.

- No quantitative analysis connecting mask quality to effect quality; how sensitive is Over++ to mask misalignment, over-/under-segmentation, or temporal mask jitter?

- The “tri-mask” (gray for unknown frames) is a heuristic; there is no probabilistic treatment of uncertainty or mask confidence learning.

- Effect representation and compositing outputs

- Over++ outputs a full composited video; it does not produce separate, physically meaningful effect layers (e.g., RGBA/alpha-residual passes) that VFX pipelines typically require for downstream manipulation.

- Semi-transparency constraints (crucial for shadows/reflections/smoke) are not enforced during training; there is no explicit loss encouraging “effect-only” residuals.

- Lack of channel/AOV outputs (e.g., shadow-only pass, reflection-only pass, masks for effect alphas) limits use in professional compositing tools.

- Physical plausibility and scene constraints

- No explicit modeling or conditioning on depth, surface normals, light direction/intensity, or material properties; shadows/reflections may be misaligned with scene lighting/geometry.

- Occlusion handling is implicit; without depth-aware conditioning, effects may spill over occlusion boundaries or fail under complex layering.

- No evaluation or constraints on conservation-like properties (e.g., splash trajectories), fluid/volumetric dynamics, or contact mechanics.

- Control and editability

- Control is limited to binary masks and text prompts; there are no continuous effect strength maps, directional vector fields (e.g., wind), or parametric controls (e.g., light azimuth/elevation for shadows, splash intensity).

- Prompt-driven edits depend on unpaired T2V augmentation; there is no principled solution to language drift or a study of failure modes when prompts conflict with scene physics.

- No mechanisms for style locking or consistent effect appearance across multiple shots in a sequence (e.g., same dust color/density across scenes).

- Determinism and reproducibility across iterative edits—critical in VFX workflows—are not addressed (e.g., seed management, edit locking).

- Preservation of original content

- VAE encoding/decoding degrades pixel fidelity; while acknowledged, there is no preservation loss (e.g., mask-weighted identity loss) or test-time optimization to guarantee identity/texture stability outside effect regions.

- No quantitative, mask-weighted metric reporting content preservation outside the edit region (e.g., LPIPS/PSNR computed outside mask only).

- Temporal consistency and long sequences

- For >85 frames, temporal multidiffusion (chunking) is used; potential seam artifacts at chunk boundaries are not evaluated.

- No explicit temporal consistency losses or alignment mechanisms targeting effect flicker, temporal drift, or long-horizon coherence.

- Lack of effect-specific temporal metrics (e.g., optical flow consistency of shadow boundaries or splash lifecycles).

- Evaluation methodology

- Benchmarks use only 24 videos; generalization across datasets, domains, and longer sequences remains unclear.

- The proposed CLIP_dir metric is not validated against expert perceptions of effect realism or physical correctness; correlation with user studies is not established.

- No localized metrics focusing on masked regions, and no physics-aware metrics (e.g., shadow orientation vs. estimated sun direction, reflection geometry consistency).

- Comparisons omit physics-based simulation baselines or artist-created ground-truth effect passes, which are standard in VFX evaluation.

- Robustness and failure cases

- Robustness to scenes already containing similar effects (risk of doubling effects) is untested.

- Effects in highly dynamic or low-light backgrounds, rolling shutter, strong motion blur, or heavy lens effects (bokeh, flare, film grain) are not analyzed.

- Failure cases are briefly noted (hallucinations) but not systematically categorized, quantified, or linked to scene attributes.

- Integration with professional pipelines

- Color management, relighting, harmonization, motion blur, grain/noise matching, lens distortion, and camera metadata are not integrated; the paper defers these as “orthogonal,” yet they are essential for deployment.

- Runtime, memory footprint, latency, and scalability (e.g., 4K/8K frames) are not reported; no discussion of distillation or acceleration for interactive use in Nuke/After Effects.

- No plugin/UI or iterative refinement interface, and no guarantees about edit stability under iterative changes (a core workflow requirement).

- Training design and objectives

- Standard L2 diffusion loss with no compositing-aware objectives (e.g., residual/alpha consistency, mask-weighted regularization, or cycle consistency between with- and without-effect variants).

- No ablation of the tri-mask design or study of alternative learning strategies (e.g., mask confidence modeling, curriculum from coarse to fine masks).

- Unpaired T2V augmentation relies on LLM-generated prompts; reproducibility and bias introduced by LLMs are not discussed.

- Scope of supported effects

- Demonstrations focus on smoke, dust, water splashes, shadows, and reflections; other common effects (fire, rain, snow, fog, sparks, debris, footprints, wetness/dryness transitions) are not evaluated.

- Multi-effect interactions (e.g., shadow plus reflection; wake plus spray plus foam) and multiple light sources (multiple shadows) are out of scope.

- Multi-layer and multi-subject interactions

- Compositing scenarios with multiple foreground layers, multiple moving subjects, and interacting effects (e.g., crossing shadows, overlapping splashes) are not addressed.

- No support for multi-layer outputs that allow downstream re-timing or re-ordering typical in compositing stacks.

- Mask-free use and placement accuracy

- In mask-free mode, how accurately does the model localize effects? There is no metric or study of effect placement accuracy without masks, nor guidance on controlling placement via prompts alone.

- Continuity across shots

- Continuity across multiple shots (consistent effect look, direction, and intensity) is not discussed; no techniques for cross-shot conditioning or sequence-level constraints.

- Ethical and misuse concerns

- Risks of creating misleading composites are not discussed; no watermarking or detection strategies for synthetic effect insertion are proposed.
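One of the gaps listed above is the absence of a preservation metric restricted to pixels outside the edit region. A minimal sketch of such a metric, here PSNR computed only where the effect mask is off, is shown below. The function name, the `[0, 1]` value range, and the toy frames are assumptions for illustration; the paper does not report this metric.

```python
import numpy as np

def psnr_outside_mask(original, edited, effect_mask, max_val=1.0):
    """PSNR over pixels where no effect is expected (effect_mask is False).

    original, edited: float arrays of shape (H, W, 3) in [0, max_val]
    effect_mask:      bool array of shape (H, W), True inside the edit region
    """
    keep = ~effect_mask                   # pixels that should stay unchanged
    err = (original - edited)[keep]       # shape (num_kept_pixels, 3)
    mse = float(np.mean(err ** 2))
    if mse == 0.0:
        return float("inf")               # perfectly preserved
    return 10.0 * np.log10(max_val ** 2 / mse)

# Toy frame: edits confined to the mask leave the outside untouched.
original = np.zeros((4, 4, 3))
effect_mask = np.zeros((4, 4), dtype=bool)
effect_mask[1:3, 1:3] = True

inside_only = original.copy()
inside_only[1:3, 1:3] = 0.7               # change only inside the mask
drifted = original + 0.1                  # uniform drift everywhere

psnr_clean = psnr_outside_mask(original, inside_only, effect_mask)
psnr_drift = psnr_outside_mask(original, drifted, effect_mask)
```

Because changes inside the mask are ignored, the score isolates unintended drift (for example from VAE encoding and decoding) from the intended effect.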

Practical Applications

Immediate Applications

Below are actionable use cases that can be deployed with the paper’s current method (Over++) and training/data strategy, assuming access to the released weights or a comparable fine-tune and standard GPU resources.

- Film/TV/VFX post-production (VFX sector)

- Use cases: Auto-generate interaction effects (shadows, reflections, dust, splashes, wakes) between prepped foreground/background layers; mask- or keyframe-guided “effect passes” that preserve actor/plate fidelity; rapid variations via prompts/CFG (soft vs harsh shadows, mild vs turbulent wake); quick alternates when swapping backgrounds while maintaining context-aware effects.

- Tools/products/workflows: Nuke/After Effects plugin or node; batch command-line tool; Over++-powered “effects layer” track in compositing; shot-prep assistant that proposes effect regions via difference maps; preview passes for director reviews.

- Assumptions/dependencies: Foreground/background layers and alpha are available; color space/HDR/bit-depth handled elsewhere (e.g., OCIO); separate harmonization/relighting/motion-alignment tools remain in the pipeline; GPU availability; legal rights to source material.

- Advertising/marketing and e-commerce product videos (Advertising/Retail sectors)

- Use cases: Scale product video variants by swapping backplates while generating consistent shadows/reflections; add subtle dust/smoke trails for lifestyle shots; prompt-driven style/intensity for A/B testing.

- Tools/products/workflows: Web API for creative automation; Shopify/Adobe Commerce integrations; template-driven batch render.

- Assumptions/dependencies: Clean product alpha; brand safety review; background licensing; GPU/cloud rendering costs.

- Broadcast graphics and virtual sets (Broadcast/Live events)

- Use cases: Add plausible shadows/reflections for on-field/studio AR graphics and lower-thirds over live video; enhance virtual set composites to feel “grounded.”

- Tools/products/workflows: Integration with Vizrt/Unreal broadcast pipelines; effect track that follows tracked graphics; keyframe mask for critical beats.

- Assumptions/dependencies: Tight latency budgets (use smaller distilled models or server-side acceleration); robust tracking/masks from vision systems.

- Social media and creator tools (Consumer software/Creator economy)

- Use cases: Mobile/desktop creation of stylized or realistic interaction effects with coarse, hand-drawn masks; “prompt + mask” filters for splashes/smoke/shadows tied to subject motion.

- Tools/products/workflows: TikTok/Snap/Instagram effects; desktop app plug-ins; prompt presets (e.g., “cinematic shadow,” “misty ground fog”).

- Assumptions/dependencies: On-device models or cloud inference; safety filters for harmful prompts; battery/latency constraints.

- Pre-visualization and animatics (Production/design)

- Use cases: Director/DP can preview practical vs CG choices with rapid, scene-preserving interaction effects; quick beats for storyboards/animatics that respond to prompt changes.

- Tools/products/workflows: Previz assistants in ShotGrid/Storyboard Pro; batch passes for sequence reviews.

- Assumptions/dependencies: Accept approximate realism; modest GPU access during preproduction.

- Education in compositing and cinematography (Education sector)

- Use cases: Interactive teaching of where/why effects occur; “what-if” exercises with prompts/masks to explore style/intensity; difference maps to visualize effect placement.

- Tools/products/workflows: Classroom kits; tutorial projects; prompt libraries.

- Assumptions/dependencies: Educational licenses; commodity GPUs or cloud credits.

- Vision dataset augmentation (Computer vision/ML)

- Use cases: Add realistic/environmentally grounded shadows/reflections/smoke to diversify training data and stress-test perception models under illumination/occlusion shifts.

- Tools/products/workflows: Data augmentation SDK using Over++ to preserve labels in non-edited regions; scripted prompt/mask generators.

- Assumptions/dependencies: Layered or synthetically composited inputs to maintain GT fidelity; audit for label drift.

- Compositing QA assistant (Quality control tooling)

- Use cases: Flag “missing” interactions by comparing no-effect input vs Over++ output; difference maps for shot review checklists.

- Tools/products/workflows: Dailies panel that overlays suggested effect regions; human-in-the-loop acceptance.

- Assumptions/dependencies: False positives/negatives expected; maintains creative discretion.

- Academic benchmarking utilities (Research)

- Use cases: Evaluate subtle, localized edits with the paper’s directional metric (CLIP_dir); study robustness to coarse masks and keyframe-only supervision.

- Tools/products/workflows: Metrics library; masked/keyframe control baselines; reproducible scripts once the dataset/code are released.

- Assumptions/dependencies: Availability of the paired/unpaired data and reference implementations; CLIP versioning for metric stability.

Long-Term Applications

These use cases need further research, scaling, engineering, or policy alignment (e.g., model distillation, live performance, physics grounding, provenance).

- Real-time on-set and live broadcast overlays (Virtual production/Broadcast)

- Vision: Live, low-latency generation of context-aware interaction effects on LED volumes or during sports broadcasts.

- Needed advances: Model distillation/acceleration; streaming-friendly inference; robust on-the-fly masks/tracking.

- AR/VR/XR filters with physically plausible interactions (AR/VR)

- Vision: Wearable/mobile AR that adds shadows/reflections/smoke that “stick” to real-world geometry without full 3D recon.

- Needed advances: On-device efficiency; depth/geometry priors; latency-safe mask estimation; battery-aware scheduling.

- Hybrid simulation + generative effects (Software/Graphics/Gaming)

- Vision: Blend graphics simulation (e.g., rigid/soft-body) with generative volumetrics (dust/smoke/foam) for realism at game/runtime scales.

- Needed advances: Interface specs for sim-to-gen conditioning; temporal stability guarantees; editor tooling for art direction and reproducibility.

- End-to-end augmented compositing suite (VFX/Post)

- Vision: Unified pipeline that adds interaction effects plus motion alignment, relighting, color harmonization, and HDR fidelity in one controllable model.

- Needed advances: Multi-task training; HDR/OCIO-accurate VAEs; scene-scale temporal consistency; failure case safeguards.

- Multi-shot/sequence-aware direction (VFX/Editorial)

- Vision: Maintain directorial continuity of interactions across shots/sequences with shared control tokens and shot-to-shot constraints.

- Needed advances: Long-range temporal training; cross-shot conditioning; continuity-aware metrics.

- Provenance, watermarking, and disclosure (Policy/Standards)

- Vision: Automatic labeling and cryptographic provenance (e.g., C2PA) for AI-generated interaction effects in broadcast/ads/cinema.

- Needed advances: Standardized effect-layer tagging; robust watermarking that survives post pipelines; compliance workflows.

- Safety and misuse detection (Policy/Trust & safety)

- Vision: Tools to detect/flag synthetic environmental effects in forensic analysis (e.g., faked puddles/smoke/shadows in disinformation).

- Needed advances: Detectors for generative interaction cues; public datasets of benign/malicious edits; policy guidelines for disclosure.

- Scalable synthetic data generation for robotics/perception (Robotics/Autonomy)

- Vision: Domain randomization at scale by adding physically plausible interactions to improve robustness to shadows, reflections, and particulate occlusions.

- Needed advances: Label-preserving protocols; causal stress-test suites; automated mask/prompt curricula tied to task metrics.

- Commerce-grade asset marketplaces (Creative tooling/Platforms)

- Vision: “Interaction effect packs” and promptable presets for brands/agencies; standardized APIs for batch creative ops.

- Needed advances: IP/licensing frameworks for generated content; SLA-backed rendering services; brand safety filters.

- Edge deployment and green compute (Sustainability/Systems)

- Vision: Efficient Over++-like models on consumer hardware with energy-aware scheduling.

- Needed advances: Quantization/distillation; spatiotemporal caching; memory-lean VAEs and attention kernels.

Cross-cutting assumptions and dependencies (impacting feasibility)

- Layered inputs: Most applications assume access to foreground/background layers and reasonable segmentation masks; tri-mask/keyframe control alleviates but does not remove this need.

- Compute and latency: Current training uses multi-GPU servers; real-time or mobile use will require significant optimization/distillation.

- Color and fidelity: VAE encoding/decoding may limit pixel-perfect fidelity and HDR accuracy; high-end post requires color-managed pipelines and possibly EXR/16-bit+ support.

- Scope boundaries: Relighting/harmonization/motion alignment are out-of-scope and must be handled by complementary tools for production use.

- Generalization and safety: Limited paired data can cause domain-shift or hallucinations in complex scenes; human-in-the-loop review and conservative defaults are recommended.

- Legal/policy: Rights to source footage and backgrounds; disclosure/provenance for AI-generated content; brand safety for ads; moderation of harmful prompts.

Glossary

- Alpha matte: A per-pixel opacity value used to blend foreground and background layers in compositing. "where denotes the per-pixel alpha matte,"

- Augmented compositing: A generative task that adds semi-transparent environmental effects to a composite while preserving the original scene. "We introduce augmented compositing, a new task that synthesizes realistic, semi-transparent environmental effects conditioned on text prompts and input video layers, while preserving the original scene."

- Attention: A mechanism in transformers that conditions generation on multiple inputs by focusing on relevant features. "via attention~\cite{vaswani_attention_2023}."

- CLIP score: A metric that measures alignment between visual content and text using CLIP embeddings. "CLIP score~\cite{hessel2021clipscore}"

- CLIP_dir: A directional CLIP metric assessing whether generated changes align with ground-truth changes.

- Classifier-free guidance (CFG): A sampling technique to strengthen conditioning signals (e.g., text or mask) during diffusion. "and leverage classifier-free guidance (CFG) at inference, enabling both mask- and prompt-conditioned effect generation."

- DDIM inversion: A process that reverses diffusion to obtain latent representations of an input for editing. "per-frame DDIM~\cite{song_denoising_2022} inversion"

- DreamBooth: A fine-tuning method that personalizes generative models to a specific subject. "DreamBooth~\cite{ruiz_dreambooth_2023_fixed}"

- Force-Prompting: A conditioning strategy that uses force-based inputs to simulate object–environment interactions. "Force-Prompting~\cite{gillman_force_2025} extends this line by fine-tuning an I2V backbone with force-based conditioning"

- Fréchet Video Distance (FVD): A distributional metric for video quality that measures distance between feature distributions. "debiased FVD~\cite{ge2024content}"

- image+video-to-video (I+V2V): A control mode that edits videos using both an image and a video as references. "a tuning-free image+video-to-video (I+V2V) framework"

- image-to-video (I2V): A generation mode that animates a single image into a video conditioned on text or other controls. "image-to-video (I2V)~\cite{wang2025dreamvideo, ren2024consisti2v, singer2022make}"

- Inpainting: Filling or editing regions of visual content, often guided by masks, to add or modify details. "Recent inpainting-based approaches (e.g.,~\cite{vace}) allow users to specify regions for modification via masks,"

- Latents: Encoded representations in a model’s latent space used for conditioning or generation. "we pass through the fully-encoded latents to preserve scene context and suppress hallucinations."

- LoRA: Low-Rank Adaptation; a parameter-efficient method to fine-tune large models. "LoRA~\cite{hu2022lora}"

- LPIPS: A perceptual similarity metric evaluating visual differences aligned with human judgments. "SSIM, PSNR, and LPIPS across all frames."

- Mask pruning: Cleaning a binary mask by removing noise and artifacts to better localize effect regions. "this mask pruning process effectively removes noise from the initial effect mask, especially for real-world data."

- Morphological operations: Pixel-level image processing steps (e.g., erosion, dilation, median filtering) to refine masks. "through a sequence of morphological operations---erosion, dilation, and median filtering---"

- Motion transfer: Techniques to transfer movement patterns or dynamics from one source to another. "prior work on motion transfer~\cite{gu_diffusion_2025}"

- Omnimatte: A decomposition approach that separates video into RGBA layers containing objects and their associated effects. "Omnimatte~\cite{lu_omnimatte_2021} and related methods aim to decompose a visual input into RGBA matte layers, each containing an object and its associated effects."

- Otsu’s thresholding: An automatic method to binarize images by selecting an optimal threshold. "Otsu’s thresholding~\cite{4310076}."

- Over operator: A Porter–Duff compositing operator that blends a foreground over a background using alpha. "defining the “over” operator to combine image elements with a pre-multiplied alpha channel."

- Pre-multiplied alpha: A representation where color channels are multiplied by alpha to simplify compositing math. "pre-multiplied alpha channel."

- PSNR: Peak Signal-to-Noise Ratio; a fidelity metric indicating reconstruction quality. "SSIM, PSNR, and LPIPS across all frames."

- SSIM: Structural Similarity Index; measures image similarity based on luminance, contrast, and structure. "SSIM"

- Temporal multidiffusion: A strategy to generate long sequences by combining overlapping temporal windows in diffusion. "we apply temporal multidiffusion~\cite{Zhang_2024_CVPR} for videos longer than 85 frames,"

- Text-to-video (T2V): Generating video content directly from textual descriptions. "text-to-video (T2V) diffusion transformer model, CogVideoX-5B~\cite{yang_cogvideox_2024}"

- Textual Inversion: A technique that learns a token representing a specific concept for text-driven generation. "Textual Inversion~\cite{gal_image_2022}"

- Tri-mask design: A training scheme that mixes annotated, unannotated, and uncertain mask regions to improve robustness. "we introduce a tri-mask design that supports training under both masked and unmasked conditions."

- VAE reconstructions: Outputs from a Variational Autoencoder that may introduce artifacts or misalignments. "due to imperfections in the subject segmentation mask, VAE reconstructions, and video decomposition"

- VBench: A benchmark assessing video generation consistency and quality across multiple dimensions. "VBench (overall consistency)~\cite{huang_vbench_2023_fixed}"

- VFX generation: Creating visual effects (e.g., smoke, splashes) for film or video, often requiring realism and control. "Visual effects (VFX) generation plays a crucial role in modern video production."

- VMAF: Video Multimethod Assessment Fusion; a perceptual metric for video quality. "VMAF~\cite{blog_toward_2017}"

- Video harmonization: Adjusting inserted or edited content to match the color and lighting of the target scene. "video harmonization~\cite{Harmonizer}"

- Visual concept composition: Combining multiple reference inputs to synthesize coherent content and style. "Visual concept composition aims to merge multiple reference inputs into a coherent output."
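The temporal multidiffusion entry in the glossary above can be illustrated with a small windowing sketch: a long video is covered by overlapping temporal windows, each window is processed separately, and overlapping frames are averaged. The window length of 85 follows the frame limit quoted above, while the stride, the helper names, and the plain averaging are assumptions. In an actual diffusion sampler this blending would be applied to latents at every denoising step, not once to finished outputs.

```python
import numpy as np

def temporal_windows(num_frames, window=85, stride=64):
    """Return (start, end) frame ranges that cover the video with overlap."""
    if num_frames <= window:
        return [(0, num_frames)]
    starts = list(range(0, num_frames - window, stride))
    starts.append(num_frames - window)   # final window ends on the last frame
    return [(s, s + window) for s in starts]

def blend_windows(window_outputs, windows, num_frames):
    """Average per-window results wherever windows overlap in time."""
    feature_shape = window_outputs[0].shape[1:]
    acc = np.zeros((num_frames,) + feature_shape)
    count = np.zeros(num_frames)
    for out, (start, end) in zip(window_outputs, windows):
        acc[start:end] += out
        count[start:end] += 1
    return acc / count.reshape((-1,) + (1,) * len(feature_shape))

# Toy example: 100 frames, two windows returning constant values 1 and 3.
windows = temporal_windows(100)
outputs = [np.full((end - start, 2), value)
           for (start, end), value in zip(windows, [1.0, 3.0])]
blended = blend_windows(outputs, windows, 100)
```

Frames covered by both windows receive the average of the two results, which is the mechanism meant to smooth transitions at chunk boundaries.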
