LightTact: A Visual-Tactile Fingertip Sensor for Deformation-Independent Contact Sensing

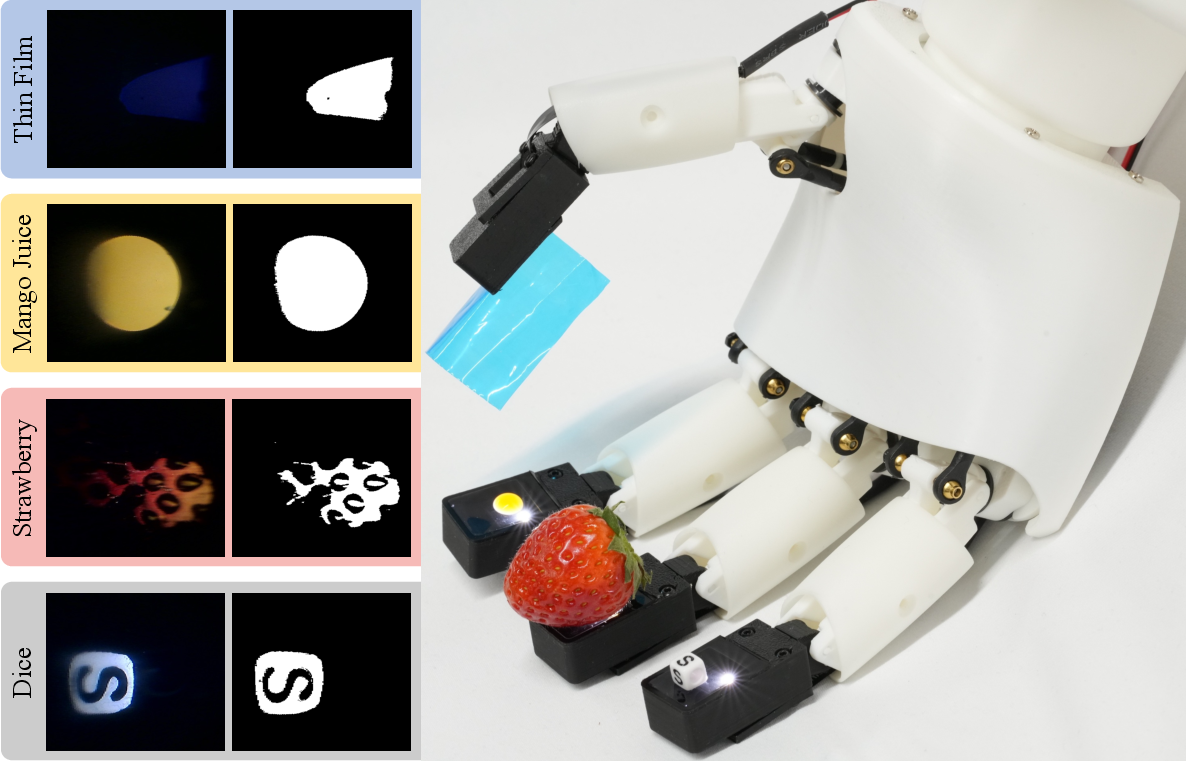

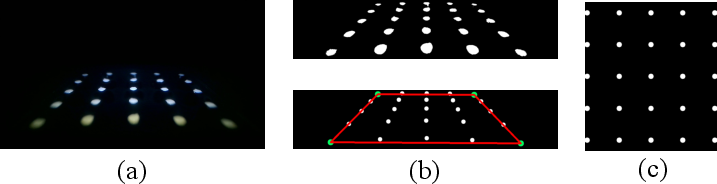

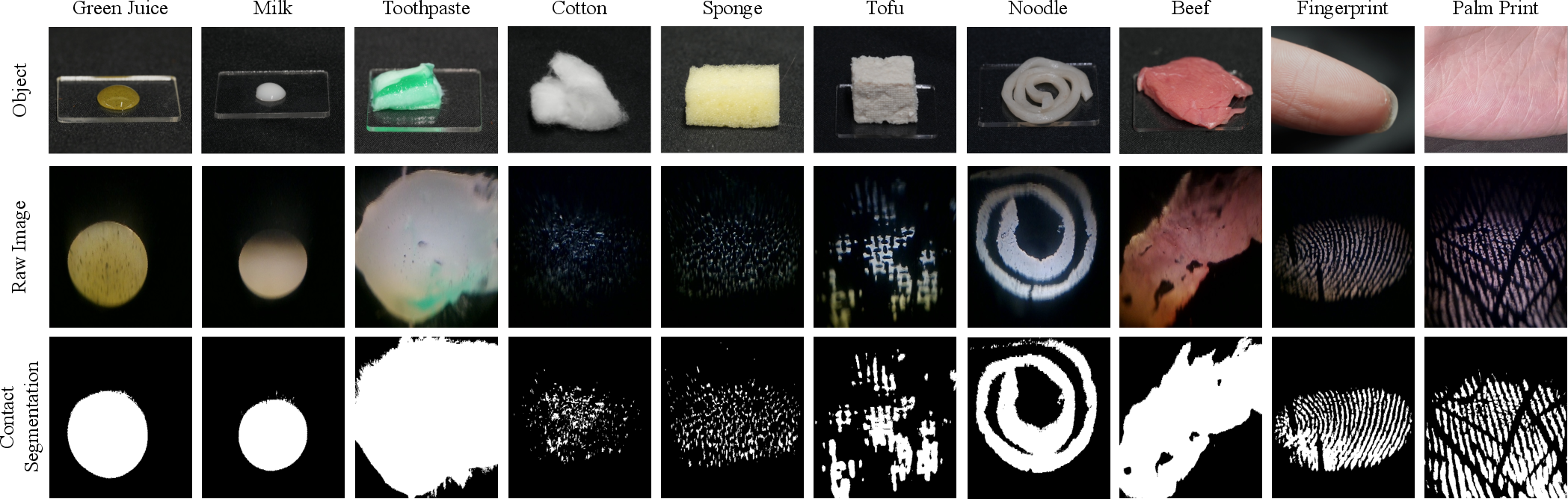

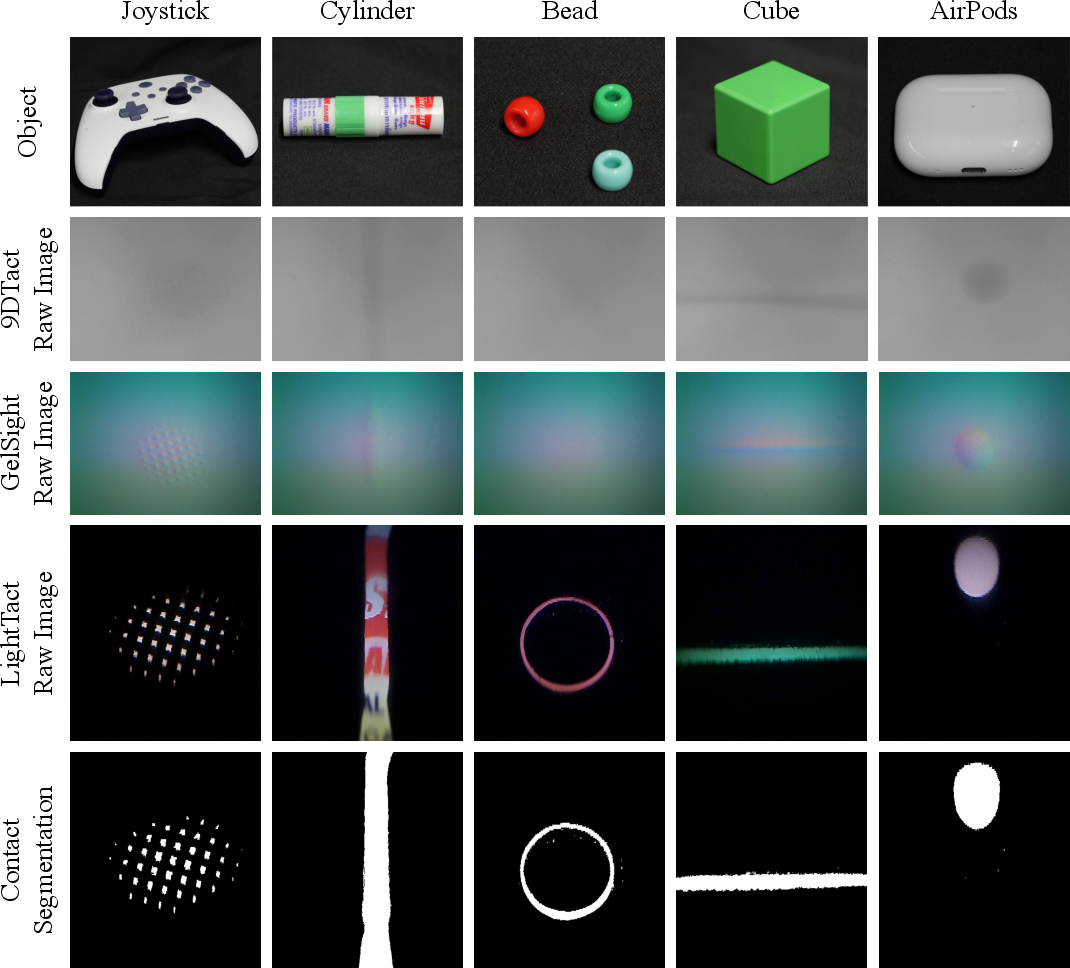

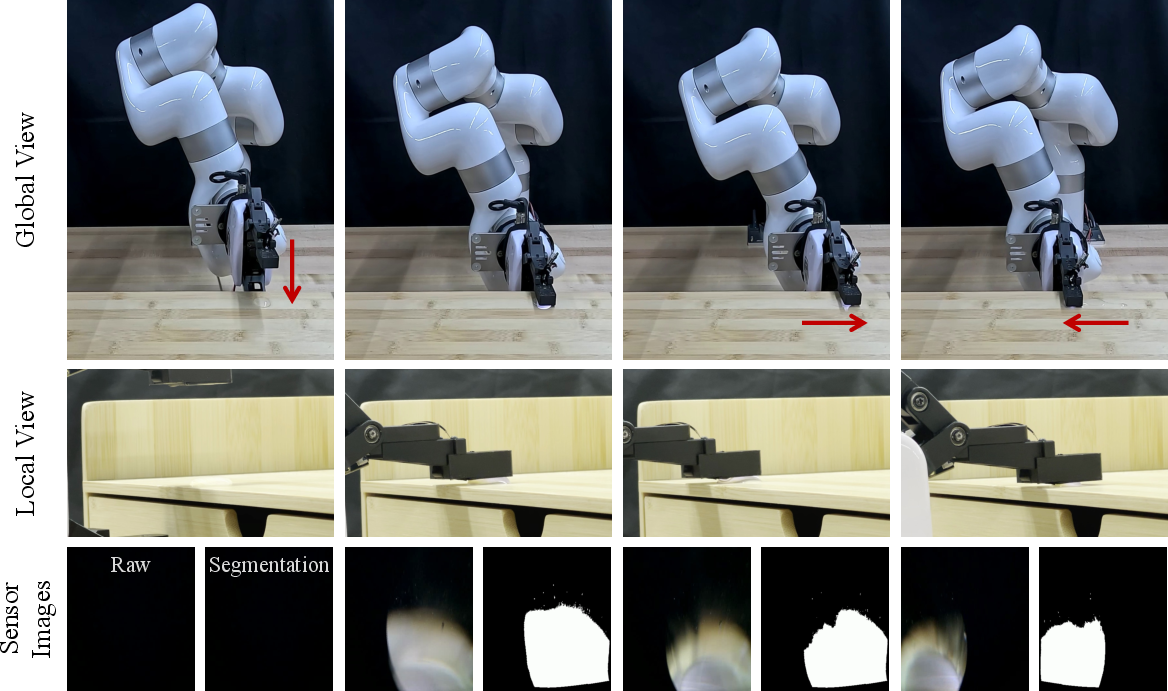

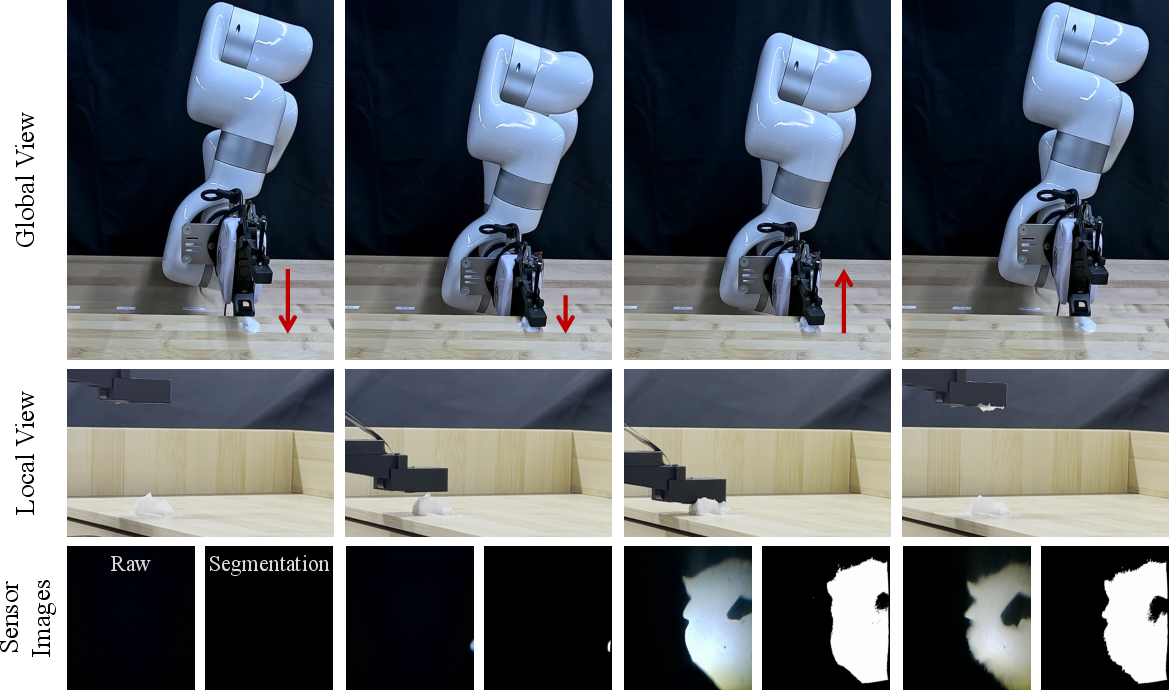

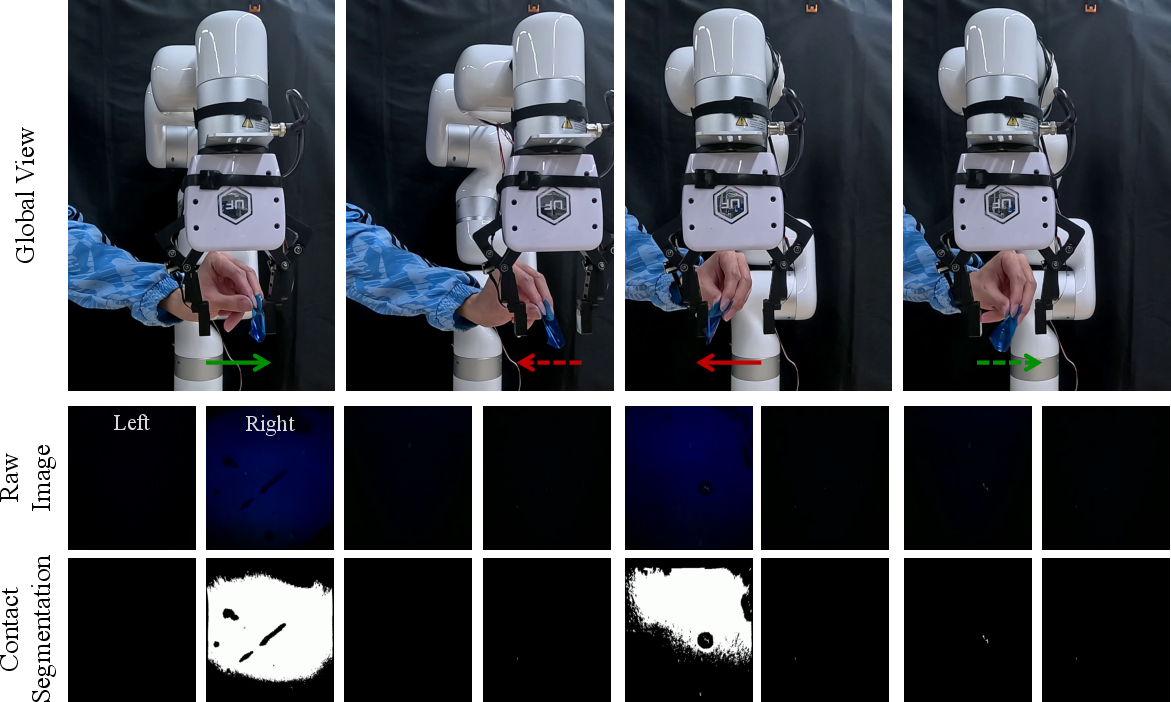

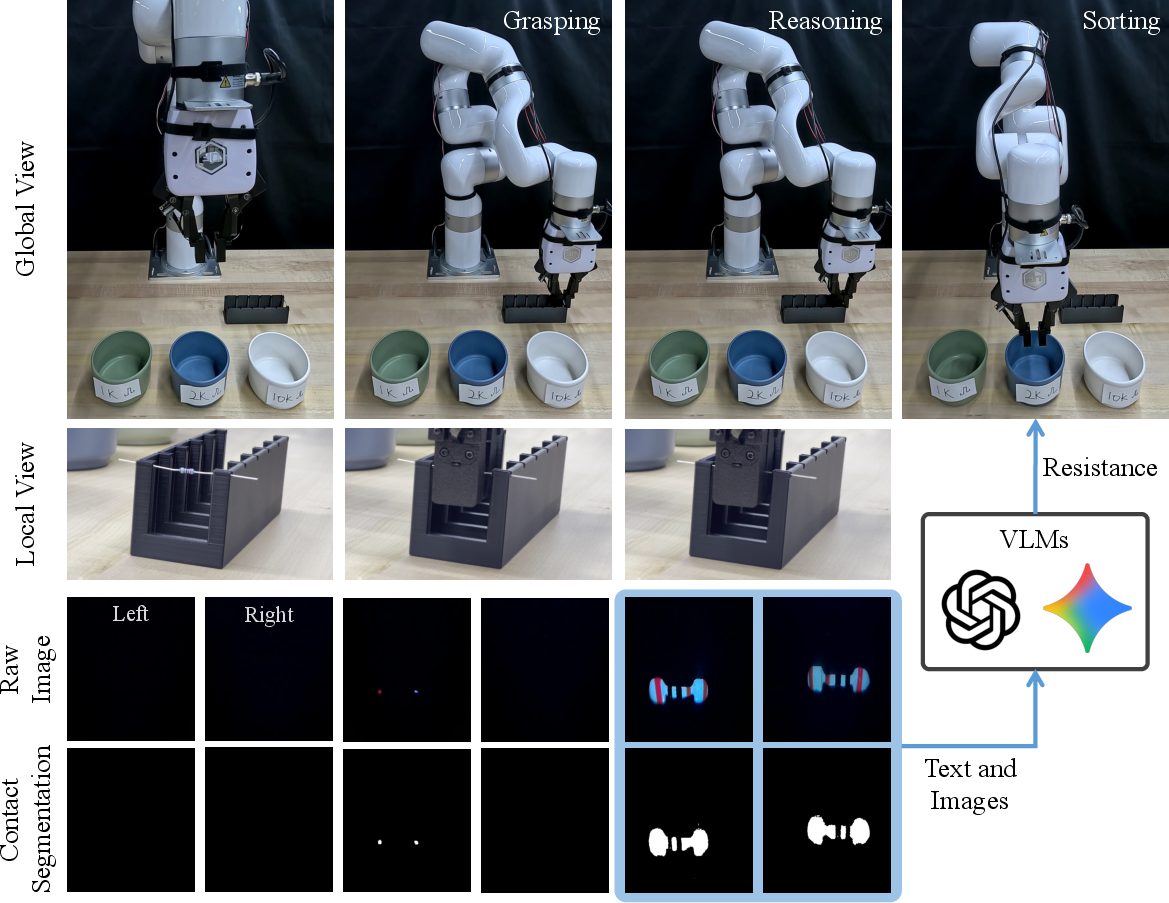

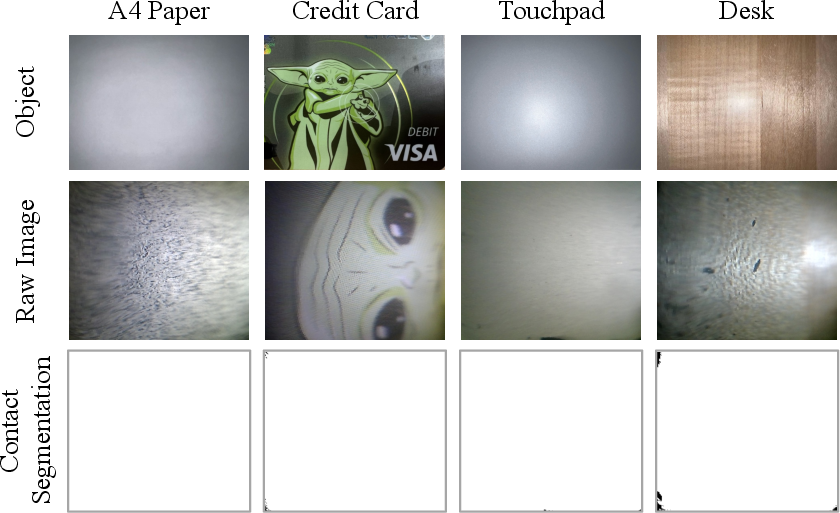

Abstract: Contact often occurs without macroscopic surface deformation, such as during interaction with liquids, semi-liquids, or ultra-soft materials. Most existing tactile sensors rely on deformation to infer contact, making such light-contact interactions difficult to perceive robustly. To address this, we present LightTact, a visual-tactile fingertip sensor that makes contact directly visible via a deformation-independent, optics-based principle. LightTact uses an ambient-blocking optical configuration that suppresses both external light and internal illumination at non-contact regions, while transmitting only the diffuse light generated at true contacts. As a result, LightTact produces high-contrast raw images in which non-contact pixels remain near-black (mean gray value < 3) and contact pixels preserve the natural appearance of the contacting surface. Built on this, LightTact achieves accurate pixel-level contact segmentation that is robust to material properties, contact force, surface appearance, and environmental lighting. We further integrate LightTact on a robotic arm and demonstrate manipulation behaviors driven by extremely light contact, including water spreading, facial-cream dipping, and thin-film interaction. Finally, we show that LightTact's spatially aligned visual-tactile images can be directly interpreted by existing vision-language models, enabling resistor value reasoning for robotic sorting.

Explain it Like I'm 14

What is this paper about?

This paper introduces LightTact, a small fingertip sensor that lets robots “see” when they touch something, even if the touch is extremely gentle (like touching water or a thin plastic film). Unlike most touch sensors that need the surface to squish or bend to detect contact, LightTact uses clever optics (how light travels) so it can detect contact without relying on deformation.

What questions does the paper try to answer?

To make the idea clear, the paper focuses on three simple questions:

- Can we design a fingertip sensor that shows contact directly in the camera image—even when there’s no visible squishing?

- Will this work reliably with many kinds of materials (liquids, gels, soft stuff, hard objects) and in normal room lighting?

- What new robot skills become possible if the robot can sense very light touch this way?

How does LightTact work?

Think of LightTact as a tiny, blacked‑out box with a clear tip. Inside the box are:

- a small LED (a light source), and

- a tiny camera.

The clear tip has two surfaces:

- the touching surface (where the object meets the sensor), and

- the viewing surface (where light exits toward the camera).

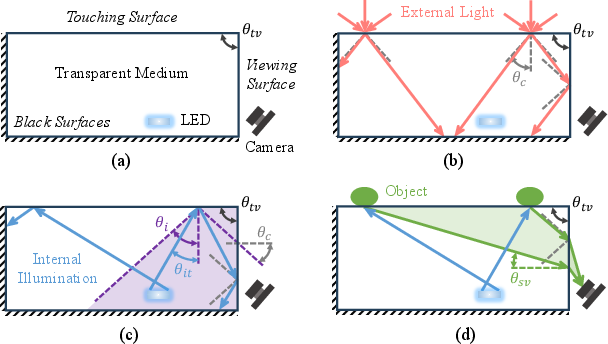

The trick is the shape and coating of the parts:

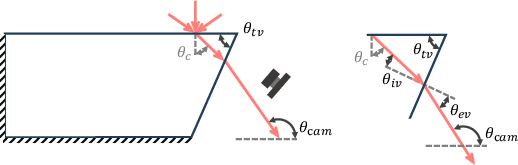

- The touching and viewing surfaces aren’t parallel; they’re tilted relative to each other (like a thin wedge).

- The inside walls are painted matte black so stray light gets absorbed.

- Only the area being touched lets the right kind of light escape to the camera.

Here’s the idea in everyday terms:

- When nothing touches the sensor, light coming from outside or bouncing around inside hits the surfaces at glancing angles that make it bounce back inside (total internal reflection, the same effect that keeps light inside a fiber‑optic cable). Because of the wedge shape and black walls, that light never reaches the camera. Result: the camera sees a nearly all‑black image.

- When something actually touches the tip, the tiny air gap disappears. The LED light scatters off the object in many directions, and some of that scattered light exits through the viewing surface at just the right angles to reach the camera. Result: the camera sees bright pixels exactly where the contact happens—and those pixels also show the object’s natural appearance (its color and texture).

So LightTact acts like a “light trap” that stays dark unless there is real contact. That’s why the sensor can detect extremely light touches without needing the material to squish.

How they built it

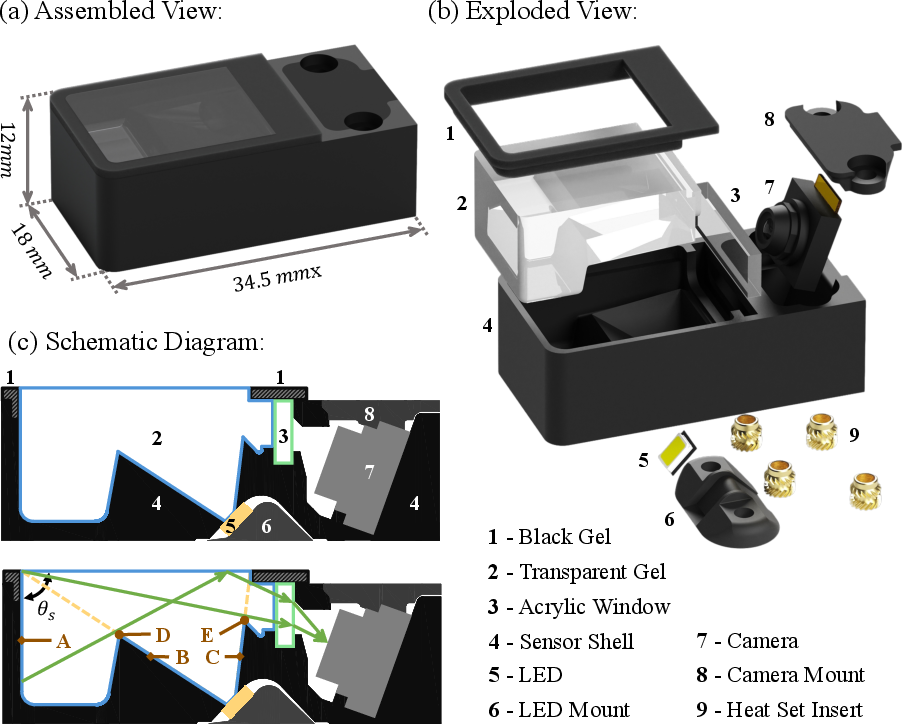

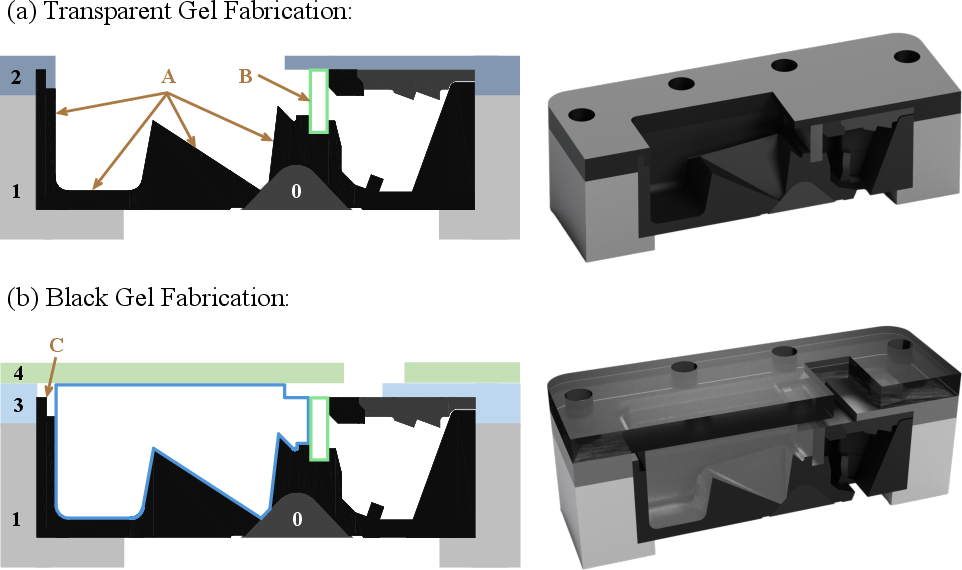

To make the sensor small, stable, and safe to touch:

- The touching surface is a soft, clear silicone gel (comfortable for contact).

- The viewing surface is a thin, rigid acrylic window (keeps the image sharp).

- The LED is angled for even lighting, and baffles (little walls) block unwanted light paths.

- A ring of soft black gel around the clear tip keeps the transition smooth and blocks side light leaks.

How the images are processed

Because non‑contact pixels are almost pure black and contact pixels are bright, the software can segment contact very simply:

- Record a short “no‑contact” reference image.

- For each new frame, subtract the reference image.

- Mark pixels that get brighter in a consistent way as “contact.”

This is a fast, simple method because the optics already did most of the hard work.
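A minimal sketch of the three steps above, assuming 8-bit grayscale frames held as NumPy arrays. The function names and the fixed brightness margin (`threshold=10.0`) are illustrative assumptions; the summary does not give the paper's actual consistency criterion or parameters.

```python
import numpy as np


def build_reference(no_contact_frames):
    """Average a short stack of no-contact frames, shape (N, H, W), into one reference image."""
    return np.mean(np.asarray(no_contact_frames, dtype=np.float32), axis=0)


def segment_contact(frame, reference, threshold=10.0):
    """Mark pixels that are brighter than the reference by more than `threshold` gray levels.

    `threshold` is a hypothetical margin, not a value taken from the paper.
    """
    diff = np.asarray(frame, dtype=np.float32) - reference
    return diff > threshold


def contact_coverage(mask):
    """Fraction of pixels marked as contact, in [0, 1]."""
    return float(np.mean(mask))


# Synthetic example: near-black background (gray values 0..3) with one bright contact patch.
rng = np.random.default_rng(0)
reference = build_reference(rng.integers(0, 4, size=(5, 100, 100)))
frame = rng.integers(0, 4, size=(100, 100))
frame[40:60, 30:60] = 120  # simulated contact region, 20 x 30 pixels
mask = segment_contact(frame, reference)
print(mask.sum(), contact_coverage(mask))
```

Because the background stays within a few gray levels of the reference, a single fixed margin separates contact from non-contact in this synthetic case. Real frames would likely need the consistency check the authors mention rather than a bare threshold.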

What did they find?

In brief, LightTact does what they hoped—and more.

- High‑contrast contact: Non‑contact pixels stay near black (mean gray value < 3), while contact pixels pop out clearly. This holds even under bright room lighting.

- Works across many materials: It detects contact with liquids (water, milk, juice), semi‑liquids (toothpaste, facial cream), very soft items (cotton, sponge, tofu, noodles, meat, skin), and also hard objects (beads, plastic, gadgets). It does not need a minimum force to detect contact.

- Pixel‑level segmentation: The sensor can precisely outline where contact happens, not just say “touch/no touch.”

- Natural appearance: The bright contact pixels preserve what the object looks like (color, pattern, texture). This makes the image useful for higher‑level recognition.

- Robot demos:

  - Water spreading: The robot can keep gentle contact with a puddle and spread it smoothly without scraping the surface.

  - Cream dipping: The robot can dip into facial cream until a certain contact coverage is reached, then lift off cleanly.

  - Thin film interaction: With two LightTact sensors, the robot can respond to a delicate touch from ultra-thin food film (about 0.1 mm thick) with practically zero force.

- Vision‑language reasoning: Because the contact image shows natural appearance, they can crop it and ask a vision-language model (VLM) to read resistor color bands. The robot then sorts resistors by value (about 80% success in their trials).
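The water-spreading and cream-dipping demos regulate the fingertip using contact coverage rather than force. A minimal sketch of a PD height regulator driven by coverage follows. The class name, gains, target coverage, and sign convention (z pointing up, output as a vertical velocity command) are all assumptions for illustration; the summary does not state the values used in the paper.

```python
from dataclasses import dataclass


@dataclass
class CoveragePD:
    """PD regulator that turns a contact-coverage error into a vertical velocity command."""

    target: float            # desired contact coverage in [0, 1] (hypothetical value)
    kp: float                # proportional gain (hypothetical)
    kd: float                # derivative gain (hypothetical)
    prev_error: float = 0.0  # error from the previous step

    def step(self, coverage: float, dt: float) -> float:
        """Return a z-velocity command; negative means move down toward the surface."""
        error = self.target - coverage
        d_error = (error - self.prev_error) / dt
        self.prev_error = error
        # Too little contact (error > 0) should lower the fingertip, hence the minus sign.
        return -(self.kp * error + self.kd * d_error)


pd = CoveragePD(target=0.3, kp=0.02, kd=0.001)
for coverage in (0.0, 0.1, 0.25, 0.35):
    print(round(pd.step(coverage, dt=0.05), 5))
```

The first call sees a derivative kick because `prev_error` starts at zero; a real controller would likely initialize it from the first coverage measurement.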

Why is this important?

- Gentler, safer manipulation: Robots can handle delicate items—liquids, gels, thin films, soft foods—without needing to press hard or rely on deformation.

- Better sensing in normal environments: Unlike past optics‑based tactile sensors that need dark rooms or controlled lighting, LightTact works in everyday indoor light.

- Smarter robot perception: Because contact pixels preserve real appearance, general‑purpose AI models (like vision-language models) can understand the images without special training, enabling tasks such as reading labels, codes, or color bands right at the fingertip.

- New applications: This could help household robots wipe surfaces, apply creams, handle thin materials in packaging, sort small parts in factories, assist in food preparation, or support gentle medical/assistive tasks.

Overall, LightTact shows that making “light itself” indicate contact—rather than waiting for squishing—can unlock a new class of precise, gentle, and intelligent robot behaviors.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, actionable list of unresolved issues that future work could address:

- Quantify the maximum ambient illumination under which non-contact pixels remain reliably “near-black” and segmentation remains error-free (e.g., direct sunlight >10,000 lux, outdoor specular highlights, strong backlighting), and establish robustness margins.

- Characterize failure modes with optically smooth, specular, or transparent objects at contact (e.g., glass, polished metals, clear films) where diffuse reflection may be minimal; test index-matched contacts (silicone–acrylic, PDMS–glass) that could undermine the assumed scattering behavior.

- Assess the impact of persistent wet residues (water films, creams, oils) and particulate contamination on the touching and viewing surfaces: how do thin films alter optical paths, increase false positives, or demand recalibration; develop cleaning protocols and protective coatings (hydrophobic/oleophobic).

- Provide quantitative spatial performance metrics: mm/pixel resolution, contact localization error, repeatability across materials and lighting, and segmentation precision/recall versus annotated ground truth.

- Measure detection thresholds in physical units (e.g., minimum normal force, contact pressure, or applied impulse) across object classes; determine whether brightness or contact area correlates with force to enable force/pressure estimation.

- Evaluate dynamic performance: latency, end-to-end frame rate, motion blur, and segmentation stability during rapid sliding, slip onset, rolling contacts, and impacts.

- Analyze long-term stability and drift: LED aging, temperature-induced index changes, mechanical creep of silicone, and paint/gel wear that increase internal reflections; propose auto-recalibration strategies beyond fixed reference-frame differencing.

- Study sensitivity to fabrication tolerances: acceptable bounds for wedge angle, gel thickness, baffle heights, and refractive-index variations; perform tolerance analysis to predict and prevent light leakage.

- Investigate mechanical durability: gel tearing, delamination at the gel–acrylic interface, black paint abrasion, and shell impacts; develop accelerated life tests and service/repair procedures.

- Define the operating envelope (temperature, humidity, chemicals/solvents, UV exposure): how do environmental factors affect silicone swelling, refractive index, and critical angle, and what mitigations are needed.

- Optimize internal illumination: LED placement, emission pattern, and wavelength (e.g., monochromatic, IR) to improve uniformity and ambient rejection; compare single vs. multi-LED designs and PWM control for power/thermal constraints.

- Refine optical shielding: baffle geometry and “black surface” materials/coatings with measured reflectance over relevant angles/wavelengths; quantify how internal surface reflectivity growth (due to wear or residue) degrades ambient blocking.

- Validate alternative camera placements and wedge geometries (mentioned in the appendix) experimentally, including trade-offs in size, manufacturability, and robustness to non-contact light leakage.

- Enhance segmentation beyond fixed thresholds: adaptive, learning-based methods that handle variable ambient conditions, sensor noise, and contamination while preserving low compute cost; quantify gains vs. simple differencing.

- Extend sensing capabilities beyond binary contact: infer contact topology (curvature), shear/friction indicators from temporal brightness patterns, and material properties (e.g., viscosity for liquids, texture descriptors).

- Benchmark comprehensively against TIR-based and deformation-based sensors on standardized tasks and metrics (contact detection under light force, dynamic slip detection, appearance fidelity, robustness to ambient light).

- Examine multi-contact and large-area contact cases: overlapping contact regions, occlusions, and interactions among multiple objects; assess segmentation accuracy and stability when contact spans most of the sensor.

- Evaluate performance with luminous or emissive objects (LEDs, displays) that violate the “non-luminous” assumption; determine if emissive light at contact causes false positives or can be leveraged.

- Quantify and correct visual–tactile alignment errors after rectification (parallax, lens distortion) and report residual pixel-to-mm mapping error across the field of view.

- Report system-level resource use: camera throughput, CPU/GPU load, achievable real-time rates on embedded controllers, and power draw/thermal rise from the LED during prolonged operation.

- Address integration in dexterous hands and arrays: cross-sensor synchronization, mutual optical interference, and mechanical mounting constraints; explore array-level calibration and joint segmentation across multiple fingertips.

- Improve VLM-driven manipulation evaluation: expand tasks beyond resistors, compare different VLMs, analyze failure causes (color confusions, lighting variations), and test prompt engineering or finetuning to boost reliability.

- Provide datasets and code for quantitative evaluation (raw/rectified images, contact masks, ambient conditions, object categories), enabling reproducible benchmarking and community-driven improvement.

- Investigate safety and human-contact considerations: LED brightness near skin, thermal comfort, biocompatibility of gels/coatings, and sanitation for frequent human–robot interactions.
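One of the gaps above asks for segmentation precision and recall against annotated ground truth. A minimal sketch of those metrics for binary contact masks, plus intersection-over-union, is shown here. The function name and the choice to return NaN for undefined ratios are assumptions, not part of the paper.

```python
import numpy as np


def mask_metrics(pred, truth):
    """Compare a predicted contact mask with a ground-truth mask of the same shape."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    if pred.shape != truth.shape:
        raise ValueError("masks must have the same shape")
    tp = int(np.sum(pred & truth))   # contact predicted and annotated
    fp = int(np.sum(pred & ~truth))  # contact predicted but not annotated
    fn = int(np.sum(~pred & truth))  # annotated contact that was missed

    def ratio(num, den):
        # Undefined ratios (empty denominator) are reported as NaN.
        return num / den if den else float("nan")

    return {
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),
        "iou": ratio(tp, tp + fp + fn),
    }


print(mask_metrics([[1, 1, 0, 0]], [[0, 1, 1, 0]]))
```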

Practical Applications

Below is a structured analysis of practical, real-world applications enabled by the LightTact visual–tactile fingertip sensor. Each item specifies the sector, what can be done now versus later, and the tools/products/workflows it could enable, alongside key assumptions or dependencies.

Immediate Applications

- Gentle liquid interaction for cleaning and process control (Robotics; Facilities; Consumer)

  - Robots detect, spread, and regulate contact with liquids (e.g., wiping spills, spreading water/cleaners) using contact-coverage control and PD height regulation.

  - Tools/workflows: LightTact-equipped grippers; ROS node publishing pixel-level contact masks; closed-loop “coverage controller”; fixed exposure camera driver.

  - Assumptions/dependencies: Typical indoor ambient light (<~2000 lux), non-luminous liquids, adherence to wedge-angle and LED placement constraints; regular cleaning of gel to avoid surface contamination.

- Controlled application of semi-liquids (adhesives, creams, greases) (Manufacturing; Cosmetics; Maintenance)

  - Robots precisely detect and regulate dipping or deposition depth by contact coverage without relying on force thresholds.

  - Tools/products: “Contact-percentage” stop rules; station for cream/adhesive pickup with LightTact; minimal-contact dip routines.

  - Assumptions/dependencies: Stable LED power (e.g., ~430 lux at touch), fixed exposure camera settings, compliant gel durability in oily/chemically active media.

- Handling ultra-thin, ultra-soft materials (films, foils, fibers) (Manufacturing; Packaging; Food; Textiles)

  - Responsive manipulation (left/right tracking, centering, pick-and-place) with minimal force; reliable binary and pixel-level contact on thin films, tofu, noodles, sponges, cotton.

  - Tools/workflows: Dual-LightTact reflex controller; “return-to-center” behavior; segmentation-driven alignment in pick-and-place.

  - Assumptions/dependencies: Proper calibration of pixel–surface mapping; maintenance of matte-black, absorbing boundaries; routine gel inspection to prevent scratches/clouding.

- VLM-guided fine-grained sorting and classification by tactile appearance (Electronics; Logistics)

  - Sorting small parts whose visual features are only clear at touch (e.g., resistors by color bands) with out-of-the-box VLM prompting.

  - Tools/products: VLM prompt templates; crop-and-prompt pipeline; automatic gripper closure criteria (min contact pixels); part bins linked to VLM outputs.

  - Assumptions/dependencies: VLM availability and reliable color interpretation; controlled exposure to avoid over/underexposure; sufficient compute (local or cloud) and network latency tolerances.

- Safety-critical touch detection in occlusions and confined spaces (Robotics; HRI; Industrial Safety)

  - Detect first contact when wrist cameras are occluded, preventing collisions by halting or modulating motion without requiring measurable force.

  - Tools/workflows: Contact-triggered soft-stop; “no-force touch” approach routines; perimeter black gel to preserve optics in enclosure.

  - Assumptions/dependencies: Correct wedge angle ($\theta_{tv} > 2\theta_c$) and black coatings to suppress ambient/internal light at non-contact; fixed exposure and LED positioning; mechanical integration in grippers.

- Multimodal dataset collection for robot learning (Academia; AI/ML)

  - Create spatially aligned visual–tactile images for contact segmentation and multimodal reasoning without custom labels (near-black background enables simple thresholding).

  - Tools/workflows: Automated calibration jig (5×5 bump array); rectification pipeline; frame differencing segmentation; dataset export scripts.

  - Assumptions/dependencies: Repeatable calibration; fixed exposure; consistent LED intensity; care in maintaining gel clarity.

- Education/lab teaching modules (STEM Education)

  - Hands-on teaching of optics (TIR vs refractive paths), tactile sensing, and multimodal robotics; low-cost fingertip sensors for undergraduate labs.

  - Tools/workflows: Assembly kits; visualization demos comparing TIR-based vs LightTact optics; ROS exercises for contact segmentation.

  - Assumptions/dependencies: Access to silicone casting and acrylic machining; adherence to design constraints (LED baffles, matte coatings).

- Process monitoring for coverage/contact area (Quality Assurance)

  - Real-time verification of surface coverage during application steps (e.g., cream/grease spread, label seating) using contact masks.

  - Tools/workflows: Coverage metrics; pass/fail thresholds; logging to MES/QMS systems.

  - Assumptions/dependencies: Reliable segmentation under process lighting; compatible materials (non-luminous, diffuse reflection at contact); stable mounting and camera calibration.
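For the VLM-guided resistor-sorting workflow listed above, a deterministic decoder can cross-check or bin whatever the VLM reports. This sketch applies the standard resistor color code and assumes the VLM output has already been parsed into a list of band color names in reading order. That parsing step and the function name are hypothetical, and the paper's own pipeline may ask the VLM for the value directly.

```python
DIGITS = {
    "black": 0, "brown": 1, "red": 2, "orange": 3, "yellow": 4,
    "green": 5, "blue": 6, "violet": 7, "grey": 8, "gray": 8, "white": 9,
}
FRACTIONAL_MULTIPLIERS = {"gold": 0.1, "silver": 0.01}


def resistor_ohms(bands):
    """Decode a 4-band or 5-band resistor into ohms; the final (tolerance) band is ignored."""
    names = [b.strip().lower() for b in bands]
    if len(names) not in (4, 5):
        raise ValueError("expected 4 or 5 color bands")
    # Everything before the tolerance band: significant digits, then one multiplier band.
    *digit_names, multiplier_name = names[:-1]
    value = 0
    for name in digit_names:
        if name not in DIGITS:
            raise ValueError(f"not a digit color: {name}")
        value = value * 10 + DIGITS[name]
    if multiplier_name in FRACTIONAL_MULTIPLIERS:
        return value * FRACTIONAL_MULTIPLIERS[multiplier_name]
    if multiplier_name not in DIGITS:
        raise ValueError(f"not a multiplier color: {multiplier_name}")
    return value * 10 ** DIGITS[multiplier_name]


print(resistor_ohms(["yellow", "violet", "red", "gold"]))
print(resistor_ohms(["brown", "black", "black", "red", "brown"]))
```

A sorting routine could map the returned value to a bin and flag parts where the decoded value and the VLM's stated value disagree.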

Long-Term Applications

- Dexterous household assistants for hygiene and personal care (Consumer; Healthcare)

  - Robots that can wipe spills, apply lotions, and perform hygiene tasks safely using minimal-contact sensing.

  - Tools/products: LightTact arrays on multi-finger hands; policy libraries for liquid/cream manipulation; washable, replaceable gel cartridges.

  - Assumptions/dependencies: Regulatory approval for healthcare contexts; durable, biocompatible materials; sealed designs for repeated cleaning/disinfection.

- Surgical and medical robotics with ultra-gentle contact sensing (Healthcare)

  - Instruments that detect tissue contact without compression, reducing trauma during palpation, dressing application, or graft placement.

  - Tools/products: Sterilizable LightTact variants; surgical tooltips with optical isolation; OR-integrated vision–tactile pipelines.

  - Assumptions/dependencies: Medical-grade materials and sterilization protocols; proof of safety and reliability; integration with surgical navigation systems; robust performance on wet/biological surfaces.

- Industrial micro-assembly and fragile component handling at scale (Electronics; Energy; Photonics)

  - Automated manipulation of thin membranes, separator films (batteries), solar wafers, and delicate optics with pixel-level contact maps.

  - Tools/workflows: High-throughput grippers fitted with arrays of LightTact; factory calibration and QC routines; segmentation-driven alignment in micro-assembly cells.

  - Assumptions/dependencies: Scalable manufacturing of sensors; long-term gel stability and scratch resistance; adaptation to cleanroom environments; ESD-safe integration.

- Standardized “soft-touch” safety skins and compliance sensors for robots (Policy; Industrial Safety)

  - Establish contact detection standards that do not depend on force thresholds, enabling certification of “gentle approach” behaviors in collaborative robots.

  - Tools/products: Conformance tests based on pixel-level contact detection; safety controllers that gate motion on contact masks.

  - Assumptions/dependencies: Industry consensus on metrics; durability and self-test features; interoperability with existing safety PLCs and standards (e.g., ISO/TS 15066).

- Tactile–visual foundation models and generalist robotic policies (AI/ML; Software)

  - Training foundation models on aligned visual–tactile images to improve manipulation of deformable, semi-liquid, and visually challenging objects.

  - Tools/workflows: Large-scale collection pipelines; self-supervised pretraining recipes using near-black background; multimodal policy distillation with VLM guidance.

  - Assumptions/dependencies: Sufficient data and compute; standard APIs for sensor streaming; robust data hygiene across diverse lighting/materials; improvements in on-device reasoning.

- Consumer prosthetic fingertips with appearance-preserving touch (Healthcare; Assistive Tech)

  - Prostheses that “see at touch,” enabling gentle contact detection and object identification by local appearance.

  - Tools/products: Battery-efficient LightTact modules; haptic feedback mapping from contact masks; companion mobile apps for object recognition at touch.

  - Assumptions/dependencies: Miniaturization and power constraints; ergonomic integration and robustness; user safety and comfort; long-term clarity of gels.

- Autonomous lab automation for wet/soft materials (R&D; Biotech)

  - Pipetting, gel handling, and reagent spreading guided by pixel-level contact segmentation to avoid sample damage.

  - Tools/workflows: Lab-grade LightTact modules; sterile enclosures; task libraries for soft material manipulation.

  - Assumptions/dependencies: Chemical compatibility of gel and coatings; sterilization; precision calibration; reliability on translucent/biological media.

- Inspection and QA of surface finish and contamination at touch (Automotive; Aerospace)

  - Detect residue/film on parts by contact appearance and segmented regions, enabling in-line rework decisions.

  - Tools/workflows: Inspection fixtures with LightTact; anomaly detection models over segmented contact patches.

  - Assumptions/dependencies: Repeatable contact conditions; domain-specific thresholds; material-dependent reflection characteristics; maintenance of optical surfaces.

- Multi-sensor tactile arrays for 3D contact reconstruction (Robotics)

  - Arrays of LightTact modules enabling richer contact geometry estimation and coordinated manipulation of deformable objects.

  - Tools/workflows: Array calibration/synchronization; 3D fusion of contact masks; cooperative gripper control.

  - Assumptions/dependencies: Mechanical packaging; synchronization across cameras; real-time fusion algorithms; thermal management for LEDs.

Notes on feasibility and dependencies across applications:

- Optical constraints must be preserved ($\theta_{tv} > 2\theta_c$ for non-contact suppression, LED placement to ensure TIR at non-contact) and cameras must run with fixed exposure to keep non-contact pixels near-black.

- Performance degrades under extremely bright ambient or poor internal light absorption; robust matte-black coatings and baffles are critical.

- Gels and acrylic windows require maintenance for clarity and scratch resistance; chemical compatibility and sterilization pathways are pivotal in medical/biotech contexts.

- VLM-based workflows depend on reliable color/appearance interpretation and may require fine-tuning for domain-specific palettes or contamination.

- Scaling to industry requires ruggedization (sealed housings, replaceable gel layers), standardized calibration, and integration with existing control and safety systems.
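The wedge-angle constraint in the first note (wedge angle greater than twice the critical angle) can be checked numerically from Snell's law. This is a sketch: the refractive index `n = 1.5` is a placeholder rather than the paper's value, which the summary does not give, and the function names are illustrative.

```python
import math


def critical_angle_deg(n_medium, n_outside=1.0):
    """Critical angle for light travelling from the denser medium toward the outside medium."""
    if n_medium <= n_outside:
        raise ValueError("total internal reflection needs n_medium > n_outside")
    return math.degrees(math.asin(n_outside / n_medium))


def satisfies_wedge_constraint(wedge_angle_deg, n_medium):
    """True when the wedge angle exceeds twice the critical angle."""
    return wedge_angle_deg > 2.0 * critical_angle_deg(n_medium)


n = 1.5  # placeholder refractive index, not taken from the paper
theta_c = critical_angle_deg(n)
print(f"critical angle: {theta_c:.2f} deg, minimum wedge angle: {2 * theta_c:.2f} deg")
```

A tolerance study of the kind suggested under Knowledge Gaps could sweep `n_medium` and the wedge angle over their fabrication ranges and report where the check fails.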

Glossary

- Ambient-blocking optical configuration: An optical layout designed to suppress external and internal light at non-contact regions while passing only contact-generated light; example: "LightTact uses an ambient-blocking optical configuration that suppresses both external light and internal illumination at non-contact regions"

- Auto-exposure: A camera feature that automatically adjusts exposure time based on scene brightness; example: "The camera is set with auto-exposure disabled and uses a fixed exposure time of during operation."

- Critical angle: The minimum angle of incidence beyond which total internal reflection occurs at an interface; example: "critical angle of "

- Diffuse reflection: Light scattering from a surface into many directions, preserving appearance under contact; example: "the contacting surface produces diffuse reflection."

- Field of view: The angular extent of the observable scene from a camera; example: "with a field of view"

- Force-torque (FT) sensor: A sensor that measures forces and torques applied to a robot’s end-effector; example: "force-torque (FT) sensor"

- Frustrated total internal reflection (TIR): A phenomenon where TIR is disrupted by a contacting medium, allowing light to escape and highlight contact; example: "frustrated total internal reflection (TIR)"

- Lux: A unit of illuminance indicating luminous flux per unit area; example: "As LED brightness increases to ,"

- PD controller: A proportional-derivative feedback controller used to regulate robot motion; example: "We regulate the end-effector height using a PD controller to maintain approximately contact coverage."

- Pixel-level contact segmentation: Determining which image pixels correspond to physical contact regions; example: "LightTact achieves accurate pixel-level contact segmentation that is robust to material properties, contact force, surface appearance, and environmental lighting."

- Refractive index: A measure of how much light bends when entering a material; example: "Both materials have similar refractive indices ()"

- Shore A hardness: A scale for measuring the hardness of elastomers and soft plastics; example: "shore A27"

- Specular reflection: Mirror-like reflection where light reflects at a single angle; example: "reflect specularly off non-contact regions of the touching surface"

- Total internal reflection (TIR): Reflection of light entirely within a medium when the incidence angle exceeds the critical angle; example: "total internal reflection (TIR)"

- UVC camera: A USB Video Class compliant camera that streams video without specialized drivers; example: "Imaging is performed by a compact UVC camera (based on OV5693) with a field of view"

- Vision-based tactile sensors (VBTSs): Tactile sensors that use cameras to capture visualized contact signals; example: "Vision-based tactile sensors (VBTSs) have gained prominence in recent years due to their high spatial resolution and low cost"

- Vision-language models (VLMs): Models that jointly process visual and textual inputs for reasoning; example: "vision-language models (VLMs)"

- Warping map: A pixel mapping used to geometrically transform images into a rectified view; example: "compute a warping map to rectify and crop each raw image to a top-down view"

- Wedge angle: The angle between the touching and viewing surfaces in the sensor’s nonparallel geometry; example: "By choosing a sufficiently large wedge angle ()"

- Wyman–White fingerprint imager: An optical device for fingerprint capture that inspired aspects of the sensor’s light management; example: "Wyman–White fingerprint imager"
