Learning Factors in AI-Augmented Education: A Comparative Study of Middle and High School Students

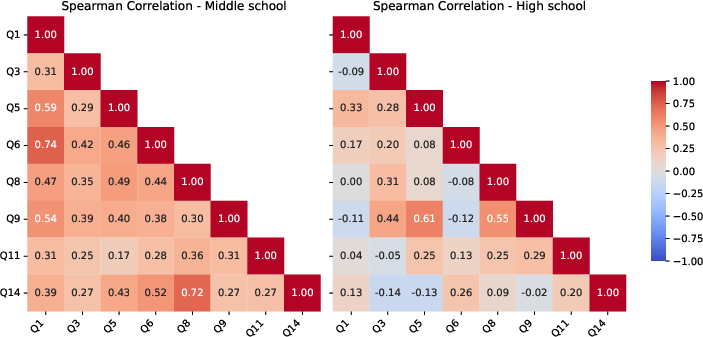

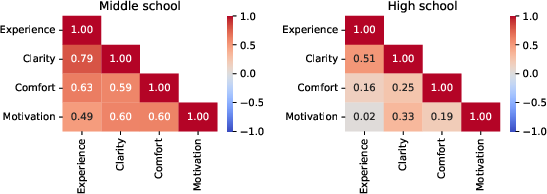

Abstract: The increasing integration of AI tools in education has led prior research to explore their impact on learning processes. Nevertheless, most existing studies focus on higher education and conventional instructional contexts, leaving open questions about how key learning factors are related in AI-mediated learning environments and how these relationships may vary across different age groups. Addressing these gaps, our work investigates whether four critical learning factors, experience, clarity, comfort, and motivation, maintain coherent interrelationships in AI-augmented educational settings, and how the structure of these relationships differs between middle and high school students. The study was conducted in authentic classroom contexts where students interacted with AI tools as part of programming learning activities to collect data on the four learning factors and students' perceptions. Using a multimethod quantitative analysis, which combined correlation analysis and text mining, we revealed markedly different dimensional structures between the two age groups. Middle school students exhibit strong positive correlations across all dimensions, indicating holistic evaluation patterns whereby positive perceptions in one dimension generalise to others. In contrast, high school students show weak or near-zero correlations between key dimensions, suggesting a more differentiated evaluation process in which dimensions are assessed independently. These findings reveal that perception dimensions actively mediate AI-augmented learning and that the developmental stage moderates their interdependencies. This work establishes a foundation for the development of AI integration strategies that respond to learners' developmental levels and account for age-specific dimensional structures in student-AI interactions.

Explain it Like I'm 14

Overview

This paper looks at how middle school and high school students feel and think about using AI tools while learning programming. The authors focus on four important parts of learning—experience, clarity, comfort, and motivation—and ask whether these parts connect to each other in the same way when AI is involved, and whether those connections change with age.

What questions did the researchers ask?

The study asks two simple questions:

- Do the four learning factors (experience, clarity, comfort, motivation) still relate to each other when students use AI tools in class?

- Are these relationships different for middle school students compared to high school students?

How did they study it?

The researchers ran programming activities that included AI tools in real classrooms:

- Middle schoolers used Teachable Machine to try out things like image or sound classification and chatted with simple chatbots.

- High schoolers built Python apps with help from AI tools like Gemini and Claude, and learned basic prompting (how to ask AI the right way).

They then gave students a survey with two parts:

- Number ratings (called Likert scales) where students picked options like 1–5 for experience or 1–4 for comfort. Think of this like rating a movie: 1 = bad, 5 = great.

- Open-ended questions where students wrote freely about what they liked, found helpful, or struggled with.

To understand the results, they used:

- Correlation analysis: This checks how two things move together. For example, if students who say the AI is clear also say the experience is good, those two scores “go together.”

- Mann-Whitney U tests: A way to compare two groups (middle school vs. high school) that works on the rank order of the ratings, so it does not assume the scores follow a bell curve.

- Text mining: Counting the most common words students wrote and seeing which words often appear together. Imagine making a word cloud and linking words that are frequently used in the same sentence.
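As a rough illustration of the correlation step, the sketch below computes a Spearman rank correlation in plain Python. The summary does not say which correlation statistic the authors used, so Spearman is an assumption here, chosen because Likert ratings are ordinal. The function names and the ratings are made up for illustration and are not data from the study.

```python
from statistics import mean


def average_ranks(values):
    """Rank values from 1 to n; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1  # mean of the 1-based positions i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman rho: the Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical 1-5 ratings from eight students (NOT data from the study).
clarity = [5, 4, 4, 3, 5, 2, 4, 3]
experience = [5, 4, 5, 3, 4, 2, 4, 2]
print(f"rho = {spearman(clarity, experience):.2f}")
```

A value near +1 means students who rate clarity highly also tend to rate the experience highly; a value near 0 means the two ratings move independently.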

They also checked that the survey questions worked well together using a reliability measure (Cronbach’s alpha). The scores were strong, which means the questions were consistent.
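For readers curious how that reliability number is obtained, here is a minimal sketch of the standard Cronbach's alpha formula, alpha = k/(k-1) × (1 − sum of item variances / variance of total scores). The function name and the ratings are hypothetical; the study's actual item scores are not reproduced here.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a scale.

    item_scores: one list per survey item, each holding one score per
    respondent (all lists the same length, respondents in the same order).
    """
    k = len(item_scores)
    if k < 2:
        raise ValueError("need at least two items")
    # Total score per respondent across all items.
    totals = [sum(scores) for scores in zip(*item_scores)]
    item_var_sum = sum(variance(item) for item in item_scores)
    return k / (k - 1) * (1 - item_var_sum / variance(totals))


# Hypothetical ratings: 3 items answered by 5 students (NOT study data).
items = [
    [4, 5, 3, 4, 2],
    [4, 4, 3, 5, 2],
    [5, 5, 2, 4, 3],
]
print(f"alpha = {cronbach_alpha(items):.2f}")
```

Values closer to 1 indicate that the items rise and fall together across respondents, which is what "consistent" means in this context.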

What did they find and why does it matter?

Here are the main results:

- Both groups generally had positive attitudes toward using AI in class.

- Ease of use showed a clear age difference: high school students found the AI tools easier to use than middle school students.

- The big discovery was how the four learning factors connect:

- Middle school: Strong positive links across all four factors. If something felt clear, students also felt more comfortable, more motivated, and rated the experience higher. This is a “holistic” pattern—one good thing spreads to other areas.

- High school: Weaker or near-zero links between some factors. For example, a good experience didn’t necessarily mean higher motivation. This is a “differentiated” pattern—older students judge each factor separately and more critically.
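The ease-of-use difference between the two groups is the kind of comparison a Mann-Whitney U test makes. The sketch below computes the U statistics and an approximate two-sided p-value using the normal approximation with a tie correction. The approximation is rough for small groups, the function name is an assumption, and the ratings are invented for illustration.

```python
import math
from collections import Counter


def mann_whitney_u(x, y):
    """Return (U_x, U_y, approximate two-sided p-value).

    U_x counts the (x, y) pairs where the x value is larger; ties count 0.5.
    The p-value uses the normal approximation with a tie correction and no
    continuity correction.
    """
    n1, n2 = len(x), len(y)
    u_x = sum(1.0 if a > b else 0.5 if a == b else 0.0 for a in x for b in y)
    u_y = n1 * n2 - u_x
    n = n1 + n2
    # Tie correction: sum of (t^3 - t) over groups of tied values.
    tie_term = sum(t**3 - t for t in Counter(list(x) + list(y)).values())
    sigma = math.sqrt(n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1))))
    if sigma == 0:  # every value identical, so no evidence of a difference
        return u_x, u_y, 1.0
    z = (u_x - n1 * n2 / 2) / sigma
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return u_x, u_y, p


# Hypothetical 1-5 ease-of-use ratings (NOT data from the study).
middle_school = [3, 2, 4, 3, 3, 2, 4, 3]
high_school = [4, 5, 4, 3, 5, 4, 4, 5]
u_ms, u_hs, p = mann_whitney_u(middle_school, high_school)
print(f"U_middle = {u_ms}, U_high = {u_hs}, p = {p:.4f}")
```

Because the test only uses which ratings are larger or smaller, it suits Likert-style answers where the distance between "3" and "4" is not guaranteed to equal the distance between "4" and "5".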

From the written answers:

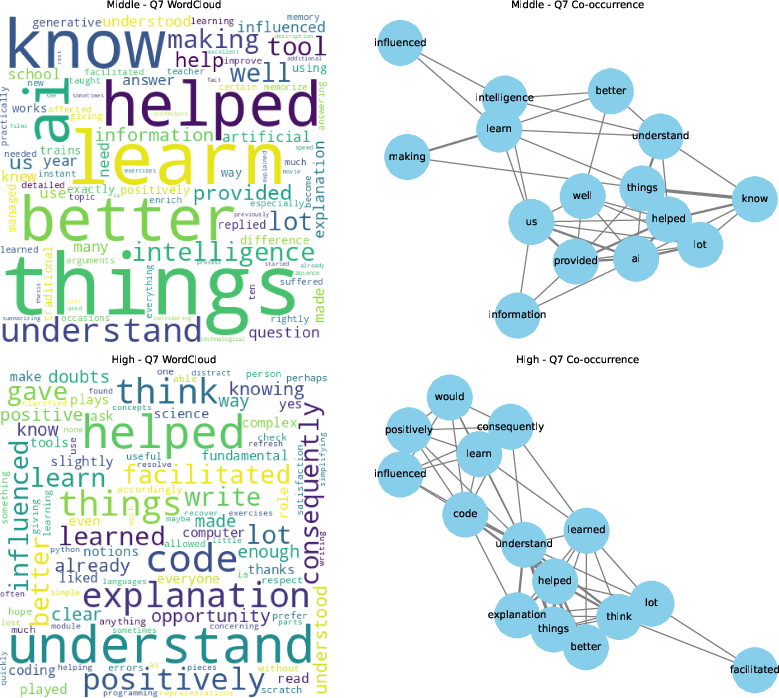

- Middle school students often used words like “learn,” “understand,” “helped,” and “better.” They saw AI mainly as a tool that helps them understand more easily.

- High school students used words like “understand,” “explanation,” “think,” “code,” and “positively.” They focused on AI helping with reasoning, explanations, and coding tasks.
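Word lists like these come from counting words and word pairs in the written answers. A minimal sketch of that idea follows, assuming that two words "co-occur" when they appear in the same response; the paper's actual window, stopword list, and preprocessing are not described in this summary, so the names and choices below are illustrative only.

```python
import re
from collections import Counter
from itertools import combinations

# Tiny illustrative stopword list (an assumption, not the study's list).
STOPWORDS = {"the", "a", "me", "it", "to", "and", "i", "my", "of", "was", "with"}


def tokenize(text):
    """Lowercase the text and keep alphabetic words that are not stopwords."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def word_and_pair_counts(responses):
    """Count word frequencies and how often two words share a response."""
    word_counts = Counter()
    pair_counts = Counter()
    for response in responses:
        tokens = tokenize(response)
        word_counts.update(tokens)
        # Each unordered pair is counted once per response.
        pair_counts.update(combinations(sorted(set(tokens)), 2))
    return word_counts, pair_counts


# Made-up answers in the style of the open-ended questions (NOT study data).
responses = [
    "AI helped me understand the code",
    "It helped me learn",
    "I understand the code better",
]
words, pairs = word_and_pair_counts(responses)
print(words.most_common(3))
print(pairs.most_common(3))
```

The word counts are what a word cloud visualizes, and the pair counts are the edge weights of a co-occurrence network.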

Why it matters:

- For younger learners, making AI responses very clear and easy tends to raise comfort, motivation, and overall experience all at once.

- For older learners, you can’t assume that clarity or ease automatically raises motivation—you need to support each factor directly.

What does this mean for schools and teachers?

- Design AI activities by age:

- For middle school, prioritize clarity, step-by-step guidance, and simple interactions. Clear AI explanations can lift everything else.

- For high school, also work directly on motivation (make tasks meaningful, challenging, and relevant), not just usability.

- Teach prompting skills: Students struggled with asking AI the right way. Helping them write better prompts can improve outcomes.

- Support teachers: Professional development should include AI literacy and practical strategies for using AI well in class.

- Introduce AI gradually:

- Use templates and structured prompts for younger students.

- Offer more freedom and complex tasks for older students.

Limitations to keep in mind:

- The students were from extracurricular computer science programs, so they may already be more motivated and tech-savvy than average.

- Different AI tools and task types were used across age groups, which could influence results.

Overall, the study suggests that the core parts of learning—experience, clarity, comfort, and motivation—still matter when AI is involved. But the way these parts connect changes as students grow older. Understanding those differences can help schools use AI in smarter, fairer, and more effective ways.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following list highlights what remains missing, uncertain, or unexplored in the paper and suggests concrete directions for future research.

- Limited sample size (N=53; 25 middle school, 28 high school) likely underpowers detection of nuanced inter-group differences and correlation structures; conduct a priori power analysis and replicate with larger cohorts.

- Convenience sampling from extracurricular CS schools introduces selection bias toward motivated, tech-interested students; test generalizability in formal school settings and non-CS classes.

- Cross-sectional design prevents causal inference and obscures novelty effects; implement longitudinal studies to track how dimensional structures evolve with AI literacy and continued exposure.

- Confounding differences in AI tools and tasks between age groups (Teachable Machine vs LLMs; classification vs coding) make developmental comparisons ambiguous; use identical AI tools, tasks, and scaffolds across groups in controlled experiments.

- Dimensional measurement validity is not established beyond Cronbach’s alpha; perform EFA/CFA and test measurement invariance across age groups to ensure constructs (Experience, Clarity, Comfort, Motivation) are comparable.

- Aggregation of items into dimensions is not fully specified (scoring, weighting, normalization); provide transparent scoring protocols and validate composite scale reliability per dimension.

- Motivation is operationalized via “future usage intentions” and “felt motivation,” potentially conflating adoption intent with motivational constructs; use validated motivation scales (e.g., IMI, AMS) and distinguish intrinsic vs extrinsic motivation.

- Comfort dimension combines general comfort and comprehension difficulty without parsing technological, social, and spatial facets; measure and analyze these subcomponents separately.

- Prior AI exposure, digital literacy, and programming experience—likely moderating factors—were not measured or controlled; include these covariates to disentangle developmental from experience effects.

- Demographics (gender, SES, school type) are not reported; examine subgroup differences and equity implications in perception and AI-mediated learning.

- Instructor and classroom effects (authors as implementers) may bias perceptions; adopt blinded data collection and multi-instructor designs to control for demand characteristics.

- No behavioral or outcome measures (learning gains, code quality, task completion, cognitive load) are linked to perceptions; integrate objective performance metrics and cognitive load measures to validate the predictive power of perception dimensions.

- Correlation differences between groups are interpreted descriptively; formally test group differences in correlations (e.g., Fisher r-to-z) and adjust for multiple comparisons.

- Treatment of Likert data (ordinal vs interval) and the chosen correlation statistic are not specified; use appropriate ordinal methods (e.g., polychoric correlations) and report robustness checks.

- Multiple testing corrections are applied to U tests but not to correlations; control family-wise error or false discovery rates in correlation analyses.

- Open-ended text analysis relies on WordClouds and co-occurrence networks without qualitative coding or reliability; conduct thematic analysis with coder agreement and triangulate with quantitative findings.

- Language handling of open-ended responses (collection language, translation procedures) is not described; document translation protocols and assess potential semantic drift.

- Missing data handling, item nonresponse rates, and data cleaning procedures are not reported; provide transparency and sensitivity analyses.

- Age ranges are broad (HS includes up to 20-year-olds); analyze within-group heterogeneity (grade-level, age bands) to refine developmental inferences.

- The term “vibe coding” is not defined, hindering replicability; specify instructional modalities, materials, and task designs in sufficient detail to reproduce the study.

- Trust in AI, perceived accuracy, and ethical/privacy concerns—known to shape student perceptions—were not measured; incorporate validated scales for trust, perceived reliability, and ethics.

- Prompt engineering is highlighted as important but not experimentally manipulated; test whether prompt training causally shifts clarity, comfort, and motivation, and whether effects differ by age.

- Potential novelty and social desirability effects were not controlled; include repeated measures and anonymous/blinded protocols to mitigate these biases.

- Cultural context is limited to Italy; assess cross-cultural generalizability by replicating in diverse educational systems and languages.

- Domain generality is unknown; verify whether holistic vs differentiated perception patterns persist in non-CS subjects (e.g., math, language arts, science).

- The role of cognitive load in mediating Clarity’s effects is assumed but unmeasured; include cognitive load instruments (e.g., NASA-TLX) and test mediation models.

- Structural relationships among dimensions (e.g., Clarity mediating Experience→Motivation) are inferred from correlations; use SEM to test causal pathways and moderation by developmental stage.

- Behavioral usage traces are not collected; integrate log data (prompt quality, interaction frequency, error rates) to link perceptions with interaction patterns.

- Data and instruments (questionnaire, code, stimuli) are not declared as available; share materials and analysis scripts to enable replication and meta-analysis.
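One of the suggestions above, formally testing whether a correlation differs between the two groups, can be sketched with the Fisher r-to-z test plus a Bonferroni-adjusted threshold. The group sizes (25 and 28) come from the list above; the correlation values are hypothetical, the choice of six tests (one per pair of the four dimensions) is an assumption, and the test itself assumes Pearson correlations from independent samples.

```python
import math


def fisher_r_to_z(r1, n1, r2, n2):
    """Two-sided z test for the difference between two independent correlations."""
    z1, z2 = math.atanh(r1), math.atanh(r2)  # Fisher transformation
    se = math.sqrt(1 / (n1 - 3) + 1 / (n2 - 3))
    z = (z1 - z2) / se
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return z, p


def bonferroni_alpha(alpha, n_tests):
    """Per-test significance threshold under a Bonferroni correction."""
    return alpha / n_tests


N_MIDDLE, N_HIGH = 25, 28        # group sizes reported for the study
r_middle, r_high = 0.70, 0.10    # HYPOTHETICAL correlations, for illustration

z, p = fisher_r_to_z(r_middle, N_MIDDLE, r_high, N_HIGH)
threshold = bonferroni_alpha(0.05, 6)  # assumes 6 pairwise correlations tested
print(f"z = {z:.2f}, p = {p:.4f}, below corrected threshold: {p < threshold}")
```

With groups this small the standard error is large, so even a visibly different pair of correlations may not clear a corrected threshold, which is why the list also calls for larger samples.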

Practical Applications

Immediate Applications

The following applications can be deployed now. They build on the paper’s key finding that middle school students evaluate AI holistically (clarity correlates strongly with experience, comfort, and motivation), whereas high school students evaluate the dimensions more independently (e.g., ease of use is rated higher, but motivation is decoupled from the other dimensions).

- Age-sensitive AI classroom integration strategies

- Sector: Education (K–12, CS education)

- Use case: Design lessons where middle school students use structured templates and clarity-first activities (e.g., step-by-step prompts, simplified outputs), while high school students use autonomy-driven tasks (e.g., code explanation, reasoning prompts, debugging).

- Tools/workflows: Prompt templates library for grades 6–8; LLM “clarity mode” settings; assignment scaffolds and rubrics that explicitly target clarity and comfort for younger learners, and motivation/challenge for older learners.

- Assumptions/dependencies: Teacher capacity and PD; model safety/accuracy; access to reliable infrastructure; generalizability beyond extracurricular CS contexts (sample size N=53, Italy).

- Perception-based learning analytics dashboards in LMS

- Sector: Education technology, Learning analytics

- Use case: Embed the study’s 17-item instrument into LMS or class apps to compute dimension-level correlations by age group and automatically recommend interventions (e.g., increase scaffolding when “clarity ↔ experience” correlation weakens).

- Tools/workflows: Lightweight survey modules (e.g., Wooclap-like forms), correlation heatmap dashboards, rule-based triggers for teacher alerts.

- Assumptions/dependencies: Privacy and consent (GDPR/FERPA); small-sample stability; student survey fatigue; instrument reliability holds across subjects (Cronbach’s alpha was strong in the study).

- Targeted teacher professional development in AI literacy and prompt engineering

- Sector: Education, Workforce training

- Use case: PD tracks tailored by grade level—middle school modules emphasize clarity-building prompts and safety rules; high school modules emphasize technical prompting, evaluating AI limitations, and motivational strategies.

- Tools/workflows: Micro-credential courses; practice labs with Gemini/Claude; classroom case studies; prompt design playbooks.

- Assumptions/dependencies: PD time/budget; alignment with curriculum; evolving vendor policies and model capabilities.

- Grade-appropriate CS programming activities with AI support

- Sector: Education (CS), EdTech

- Use case: Middle school: Teachable Machine classification exercises and guided chatbot interactions focusing on comprehension. High school: LLM-assisted code explanation, debugging, and reasoning tasks.

- Tools/workflows: Curated assignments; “explain-then-try” cycles; structured reflection prompts (e.g., Q7-style “How did AI influence your learning?”).

- Assumptions/dependencies: Tool reliability; safety configurations; task complexity matched to developmental stage.

- District-level guidance for age-differentiated AI use

- Sector: Policy, School administration

- Use case: Policies that mandate stronger scaffolding and clarity monitoring for middle school, while encouraging autonomy and critical evaluation in high school; standardize opt-in consent and data-protection procedures.

- Tools/workflows: Policy templates; implementation checklists; periodic perception audits using the four dimensions.

- Assumptions/dependencies: Legal compliance frameworks; stakeholder consensus; capacity to monitor and report.

- Age-aware UX features in EdTech products

- Sector: Software/EdTech

- Use case: Product toggles that adjust interface complexity, reading level, and feedback style based on class level (e.g., “Clarity-first Mode” for younger learners, “Challenge Mode” for older learners).

- Tools/workflows: Teacher-set class-level profiles; readability controls; scaffolded UI patterns; limited autonomy for younger users.

- Assumptions/dependencies: Accurate age-level configuration (or teacher override); model controllability; UX testing across diverse contexts.

- Parent/guardian guides for at-home AI use

- Sector: Daily life (home learning)

- Use case: Brief guides encouraging clarity-first, short interactions and reflection for middle schoolers; independent evaluation and critical thinking for high schoolers.

- Tools/workflows: One-page tips; suggested prompts; family rules for safe use.

- Assumptions/dependencies: Accessibility/translation; parental buy-in; alignment with school policies.

- Replication studies using the study’s survey instrument

- Sector: Academia (Education research)

- Use case: Researchers replicate the study across subjects (e.g., math, language arts), school systems, and cultures to examine whether dimensional structures hold and to refine age-sensitive integration.

- Tools/workflows: Open instrument repository; shared analysis scripts (correlations, text mining); cross-institutional data sharing.

- Assumptions/dependencies: IRB approvals; broader sampling; instrument validity across domains.
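The dashboard idea above (alert the teacher when the "clarity ↔ experience" correlation weakens) could be prototyped along the following lines. This is only a sketch: the 0.3 threshold, the data layout, and the function names are all assumptions, and with class-sized samples a single correlation is noisy, so any real trigger would need more careful validation.

```python
from statistics import mean


def pearson(x, y):
    """Pearson correlation, or None when either variable has no variance."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return None
    return cov / (var_x * var_y) ** 0.5


def scaffolding_alerts(classes, threshold=0.3):
    """List (class name, r) for classes whose clarity-experience r is low.

    classes: dict mapping a class name to {"clarity": [...], "experience": [...]}.
    threshold: hypothetical cut-off below which a teacher alert is raised.
    """
    alerts = []
    for name, scores in classes.items():
        r = pearson(scores["clarity"], scores["experience"])
        if r is not None and r < threshold:
            alerts.append((name, round(r, 2)))
    return alerts


# Hypothetical survey ratings for two classes (NOT data from the study).
classes = {
    "class_A": {"clarity": [1, 2, 3, 4, 5], "experience": [1, 2, 3, 4, 5]},
    "class_B": {"clarity": [1, 2, 3, 4, 5], "experience": [3, 1, 4, 2, 3]},
}
print(scaffolding_alerts(classes))
```

Classes where every student gives the same rating are skipped rather than flagged, because a correlation cannot be computed without variation in the scores.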

Long-Term Applications

The following applications require further research, scaling, or development (e.g., larger datasets, integration work, model-level features, or multi-stakeholder governance).

- Adaptive AI tutors driven by perception signals (experience, clarity, comfort, motivation)

- Sector: EdTech, AI

- Use case: Real-time detection of low clarity/comfort and dynamic adaptation (e.g., switch to examples, analogies, slower pacing for younger learners; increase challenge and autonomy for older learners).

- Tools/workflows: Embedded perception sensing (surveys + interaction telemetry), sentiment and text-mining of reflections, reinforcement learning to optimize dimension scores.

- Assumptions/dependencies: Reliable measurement and low-latency adaptation; ethical safeguards; longitudinal validation across diverse schools.

- Cross-age AI policy frameworks and product accreditation

- Sector: Policy, Standards, Quality assurance

- Use case: National/state standards that require age-sensitive deployment and minimum “clarity thresholds” for younger learners; accreditation labels for compliant products.

- Tools/workflows: Standardized benchmarks of clarity/comfort/motivation; third-party audits; reporting pipelines.

- Assumptions/dependencies: Consensus on metrics; governance mechanisms; sustained funding for audits.

- Longitudinal learning analytics platforms combining perception and behavior

- Sector: Analytics, Research

- Use case: Track how correlations among dimensions evolve as AI literacy grows; run A/B tests on scaffolding types; identify when holistic vs differentiated evaluation emerges or shifts.

- Tools/workflows: Secure data warehouses; instrumented LMS; analysis notebooks; teacher-facing insight reports.

- Assumptions/dependencies: Strong data governance; interoperability; robust causal inference designs.

- Model-level pedagogical control knobs for LLMs

- Sector: AI platform providers

- Use case: Expose explicit parameters for clarity, cognitive load, autonomy, and reading level, enabling age-tailored outputs via API (e.g., “Explain like 6th grade,” “Encourage reflection,” “Reduce ambiguity”).

- Tools/workflows: Meta-prompt frameworks; evaluation harnesses; developer SDKs for educational settings.

- Assumptions/dependencies: Model architecture supports fine-grained control; standardized evaluation sets; ongoing calibration.

- Teacher co-pilot that auto-synthesizes interventions from class heatmaps

- Sector: EdTech

- Use case: AI assistant that ingests perception dashboards and suggests differentiated lesson adjustments, activities, or prompts to improve clarity for younger groups or motivation for older groups.

- Tools/workflows: Integration with LMS and class rosters; interpretable recommendations; versioned lesson plans.

- Assumptions/dependencies: Teacher trust and oversight; explainability; generalization beyond CS.

- Certification and benchmarking tools for AI-supported curricula

- Sector: Assessment/QA

- Use case: Independent tools that test AI modules against age-specific outcomes (clarity, comfort, motivation) and publish scorecards for districts to inform procurement.

- Tools/workflows: Multisite trials; validated instruments; public dashboards.

- Assumptions/dependencies: Wide participation; stable metrics; funding for evaluations.

- Equity-focused AI deployment at scale

- Sector: Education

- Use case: Tailor AI integration for schools with lower digital literacy or limited resources, ensuring scaffolding that compensates for disparities and expanding beyond CS into other subjects.

- Tools/workflows: Tiered support packages; translated materials; local capacity-building.

- Assumptions/dependencies: Resource allocation; culturally responsive design; community engagement.

- Extension to workforce training and adult education

- Sector: Corporate learning, Vocational training

- Use case: Map the study’s developmental insights onto novice vs. experienced learners in workplaces (holistic vs. differentiated evaluation), tuning AI training flows accordingly.

- Tools/workflows: Adaptive onboarding coaches; skill-level toggles; reflection-based prompts for novices and autonomy-driven tasks for experienced staff.

- Assumptions/dependencies: Validity of analogies between age and experience; domain-specific customization; organizational buy-in.

Glossary

- Adaptive interventions: Data-driven educational strategies that tailor support or content based on learner characteristics or developmental stage. "adaptive interventions that account for age-specific dimensional structures in student-AI interactions"

- Bonferroni correction: A multiple-comparison adjustment that lowers the significance threshold to control the family-wise error rate. "Bonferroni~\cite{dunn1964multiple} correction ($\alpha_{\text{Bonferroni}} = 0.05/8 = 0.00625$)"

- Claude: An LLM developed by Anthropic, used for AI-assisted tasks. "Claude \cite{claude}"

- Co-occurrence network analysis: A text mining technique that maps how terms co-appear to reveal semantic relationships. "Word frequency analysis and co-occurrence network analysis."

- Cognitive load: The mental effort required to process information; reducing it can improve learning outcomes. "Clarity reduces cognitive load and enhances achievement"

- Computer-assisted instruction: The use of computers to deliver or support instructional content and activities. "Applied computing → Computer-assisted instruction"

- Correlation matrix: A table showing pairwise correlation coefficients among variables to reveal patterns of association. "Correlation matrices, and Mann-Whitney U tests~\cite{nachar2008mann}"

- Cronbach’s alpha: A reliability coefficient measuring internal consistency of a set of survey or test items. "Cronbach’s alpha \cite{cronbach1951coefficient}"

- Cross-sectional survey design: A study that collects data from participants at a single point in time to analyze current relationships. "We adopted a cross-sectional survey design to examine student perceptions"

- Gemini: An LLM from Google, used for generative AI tasks in the study. "Gemini \cite{gemini}"

- Human-AI Interaction: The study of how humans work with and evaluate AI systems, including usability and trust. "Human-AI Interaction"

- Internal consistency: The extent to which items within a scale measure the same construct. "indicating internal consistency and supporting the adequacy of the instrument."

- LLMs: Generative AI models trained on vast text corpora to perform language tasks such as generation and reasoning. "LLMs"

- Learning Analytics: The collection and analysis of learner data to understand and optimize learning processes and environments. "Learning Analytics"

- Likert-scale: A psychometric scale using ordered response options to measure attitudes or perceptions. "Likert-scale~\cite{likert1932technique}"

- Mann-Whitney U test: A nonparametric statistical test for comparing differences between two independent groups. "Mann-Whitney U tests~\cite{nachar2008mann}"

- Multimethod quantitative analysis: An approach combining multiple quantitative techniques to triangulate findings. "Using a multimethod quantitative analysis, which combined correlation analysis and text mining"

- Natural language generation: AI methods that produce human-like text from data or prompts. "Computing methodologies → Natural language generation"

- Prompt engineering: The practice of designing and refining inputs to elicit better outputs from generative AI models. "prompt engineering into the CSE AI module curriculum"

- Scaffolding: Instructional supports provided to learners to help them accomplish tasks they could not do independently. "rules, safety, and scaffolding in AI-supported activities"

- Teachable Machine: A web-based tool that lets users create simple machine learning models through interactive examples. "Teachable Machine \cite{teachablemachine}"

- Text mining: The computational analysis of textual data to extract patterns, topics, or relationships. "combined correlation analysis and text mining"

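- Vibe coding: A term the paper uses without defining it. In common usage it refers to building software by describing the desired behavior to an LLM in natural language and iterating on the code it generates. "vibe coding"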

- Wooclap: An audience response system used to deliver and collect questionnaire data. "The questionnaire was delivered through Wooclap \cite{wooclap}."
