Uncertainty in security: managing cyber senescence

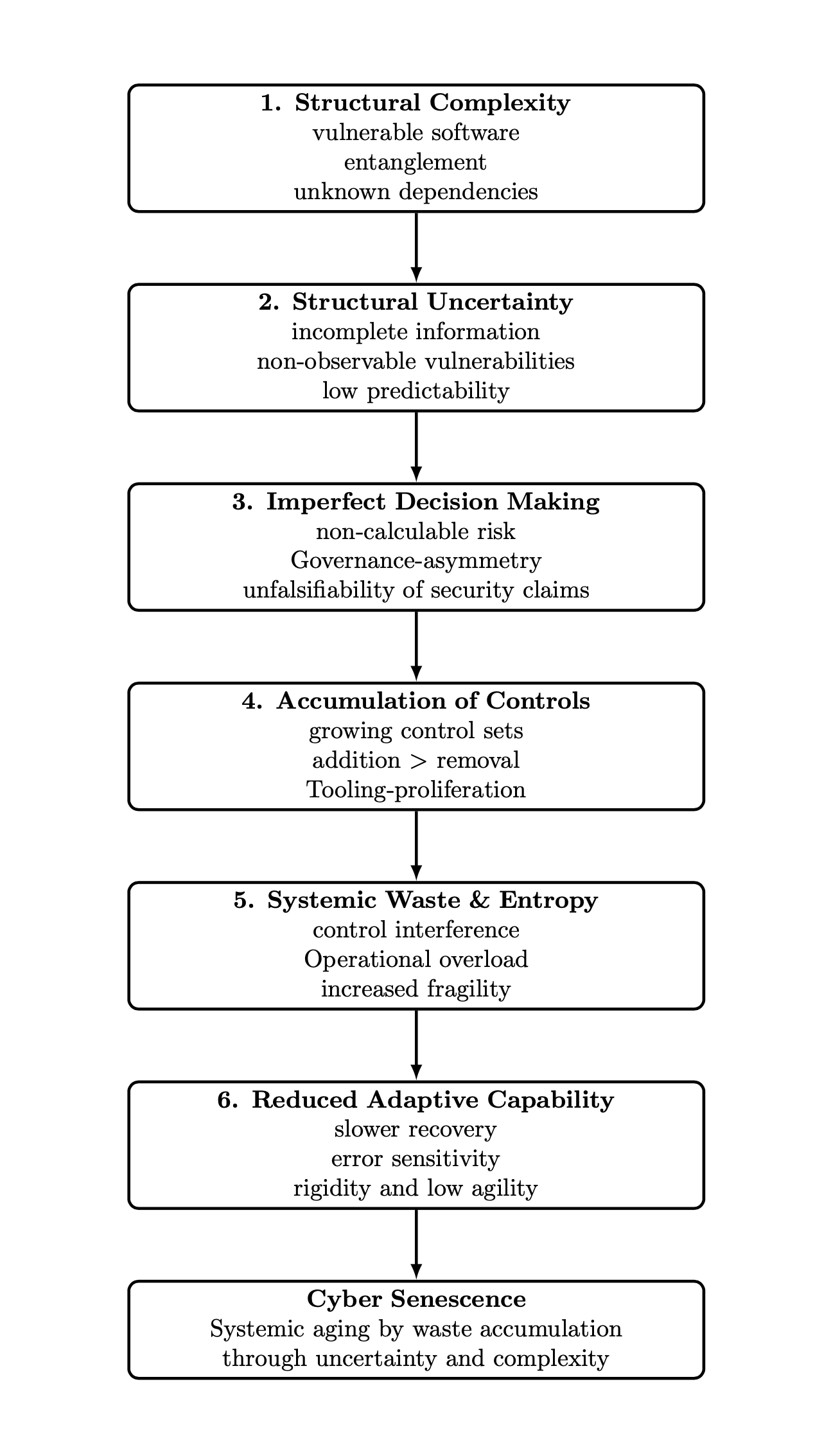

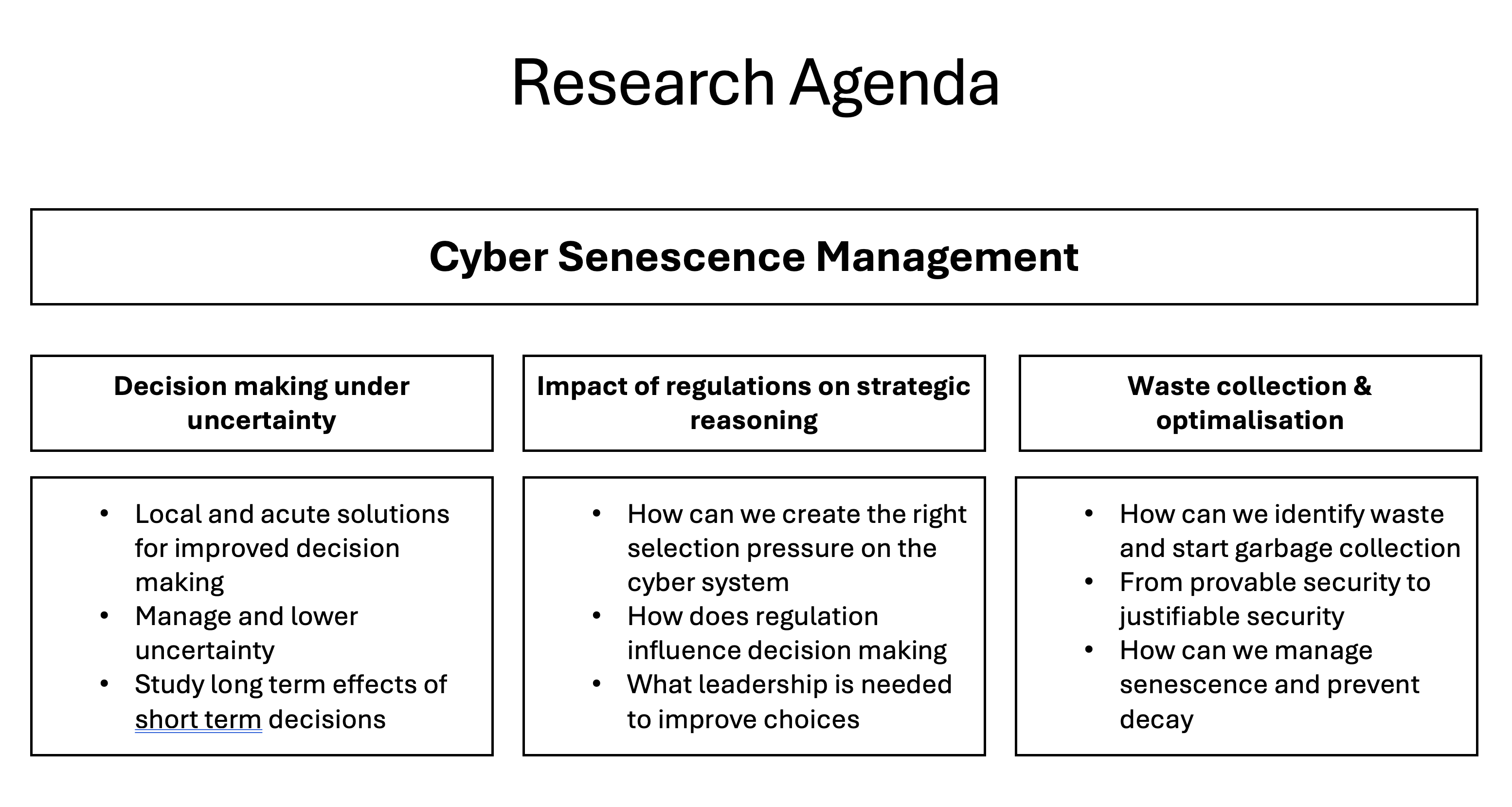

Abstract: My main worry, and the core of my research, is that our cybersecurity ecosystem is slowly but surely aging, and that this aging is becoming an operational risk. This is happening not only because of growing complexity but, more importantly, because of the accumulation of controls and measures whose effectiveness is uncertain. I introduce a new term for this aging phenomenon: cyber senescence. I will begin my lecture with a short historical overview in which I sketch the development over time that led to this worry about the future of cybersecurity. It is this worry that determined my research agenda and its central theme: the role of uncertainty in cybersecurity. My worry is that waste is accumulating in cyberspace. This waste consists of a multitude of overlapping controls whose risk reductions are uncertain. Unless we start pruning these control frameworks, this accumulation of waste will age cyberspace and could ultimately lead to a system collapse.

Explain it Like I'm 14

What is this paper about?

This paper warns that our digital world is “aging” in a harmful way. The author calls this cyber senescence: over time, we keep adding more and more security rules, tools, and settings, but we’re not sure how well many of them really work. Because they’re hard to remove safely, they pile up like digital junk. This growing complexity can make systems slower, harder to fix, and more likely to fail—sometimes on a massive scale.

What questions does the paper ask?

The paper explores simple but important questions:

- Why is cybersecurity getting so complex and fragile?

- Why do security tools and rules keep piling up, even when their benefits are unclear?

- What makes it so hard to make good security decisions?

- How can we stop (or at least manage) this “digital aging” so the internet remains safe and reliable long term?

How did the author study the problem?

Instead of lab experiments, the author takes a big-picture approach that combines:

- Real-world stories: For example, a CrowdStrike update in 2024 caused about 8.5 million computers to crash, and a Cloudflare update in 2025 broke services worldwide. Ironically, both incidents were caused by security products meant to protect us.

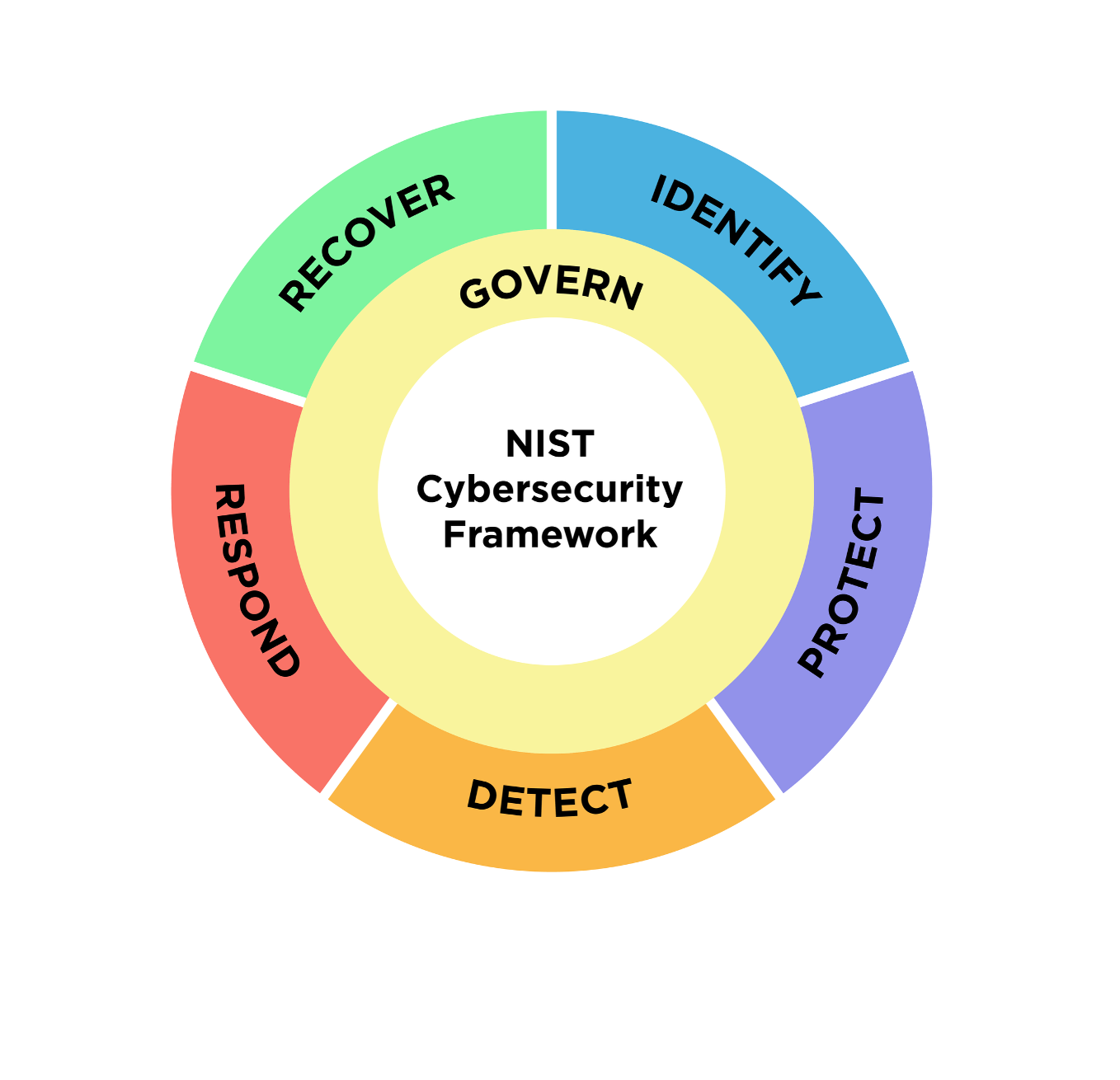

- History and standards: He looks at how security frameworks like NIST’s Cybersecurity Framework grew from about 400 controls to around 1200, and how new areas like “governance” were added as security became everyone’s responsibility—not just the IT team’s.

- Ideas from math and science: He explains why it’s often impossible to know if a system is truly secure (you can’t prove something will “never” go wrong in the future). This is similar to how, in math, not everything true can be proven. He also uses “resilience engineering” to show that when a system collects too much “waste” (unnecessary or overlapping stuff), it becomes weaker.

- Laws and incentives: He examines how laws like NIS2 and DORA push companies to test their security, share information with partners and suppliers, and involve top leaders—because bad security can break whole supply chains.

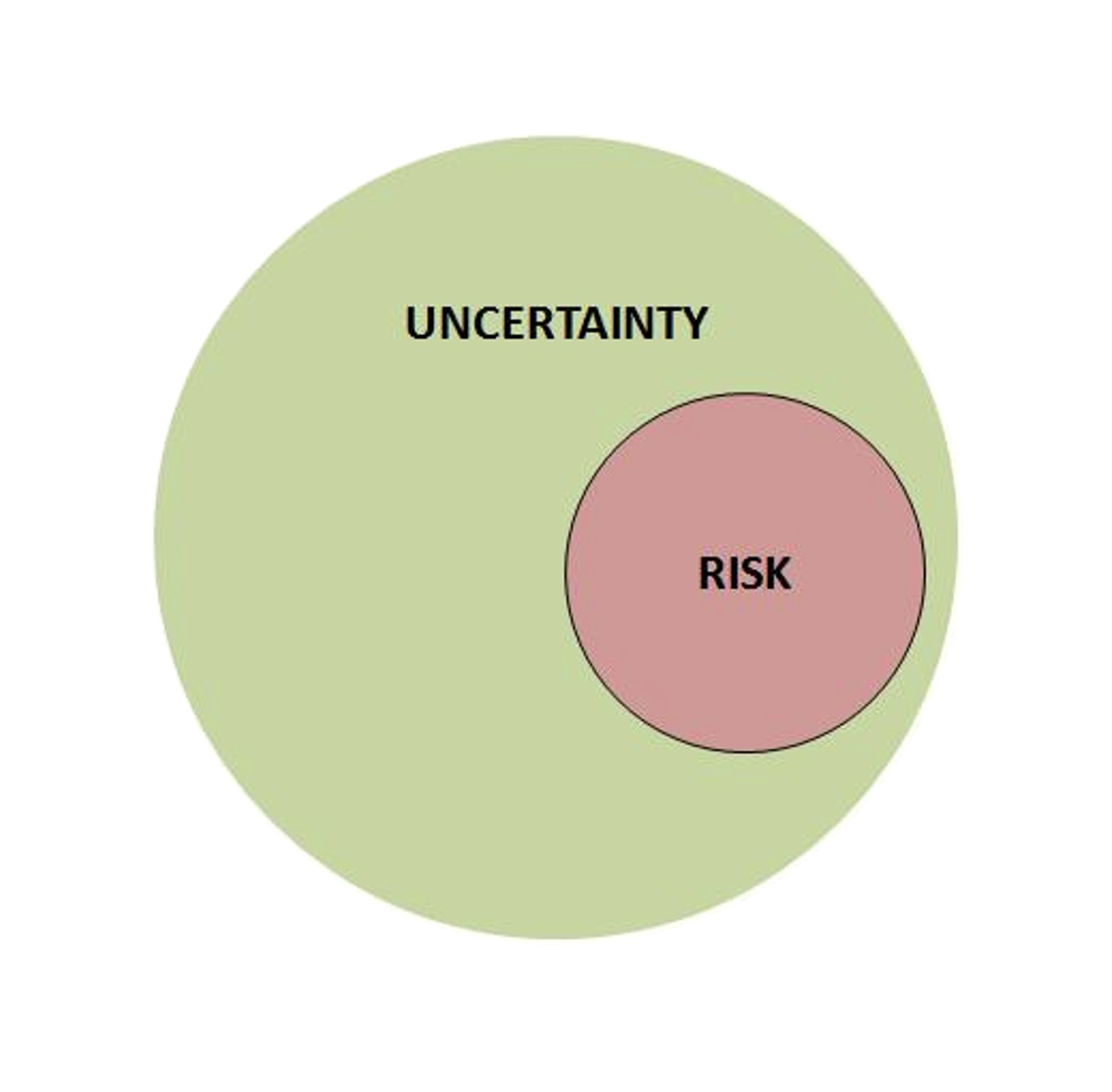

To explain a key limitation, he contrasts risk and uncertainty. Classical risk is calculated as Risk = P × I, where P is the probability of an attack and I is its impact. But in cybersecurity, we often know neither P (how likely an attack is) nor I (how bad it will be), so the result of the calculation can be shaky.
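
One way to see how shaky the calculation becomes is to carry ranges instead of single numbers through the risk formula. The sketch below is illustrative only and is not taken from the paper: the `Interval` class, the `risk` helper, and the example figures are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interval:
    """A closed range [low, high] for a quantity we cannot pin down (hypothetical helper)."""
    low: float
    high: float

    def __post_init__(self) -> None:
        if self.low > self.high:
            raise ValueError("low must not exceed high")

    def __mul__(self, other: "Interval") -> "Interval":
        # Interval multiplication: the result spans the smallest and the
        # largest of the four endpoint products.
        products = (
            self.low * other.low,
            self.low * other.high,
            self.high * other.low,
            self.high * other.high,
        )
        return Interval(min(products), max(products))


def risk(probability: Interval, impact: Interval) -> Interval:
    """Risk = Probability x Impact, evaluated on ranges instead of point values."""
    if probability.low < 0.0 or probability.high > 1.0:
        raise ValueError("probability must lie within [0, 1]")
    return probability * impact


if __name__ == "__main__":
    # Assumed figures: attack likelihood somewhere between 1% and 20% per year,
    # impact somewhere between 100 thousand and 5 million (any currency).
    estimate = risk(Interval(0.01, 0.20), Interval(100_000, 5_000_000))
    print(f"risk lies between {estimate.low:,.0f} and {estimate.high:,.0f}")
```

With these assumed inputs the upper bound of the risk estimate is a thousand times the lower bound, which illustrates why a single risk score can suggest more precision than the inputs support.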

What did the author find, and why is it important?

Main findings:

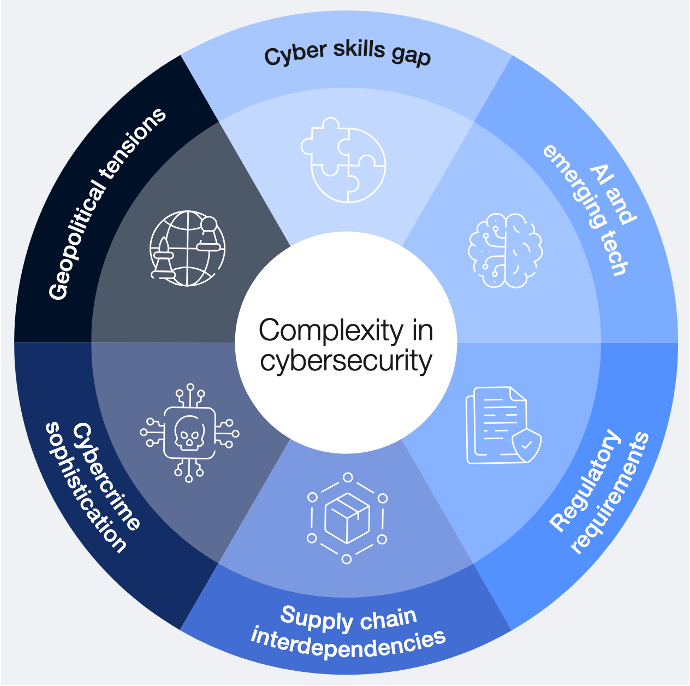

- Complexity is exploding: Software, cloud services, and long supplier chains make everything tightly connected. A mistake in one place can ripple across the globe.

- Vulnerabilities are normal: Software always has flaws. Patching is constant, and attackers may trade secret (“zero-day”) flaws. So uncertainty is built in.

- Security tools can cause outages: The very tools meant to protect us can break things at massive scale when updates go wrong (CrowdStrike, Cloudflare).

- Controls keep accumulating: It’s easy to argue “add one more control for safety,” but almost impossible to prove that removing a control is safe. Because you can’t prove a system will stay secure, people avoid pruning. This creates “security waste”: overlapping, outdated, or low-value measures.

- Waste makes systems fragile: From resilience engineering, systems that collect waste become less able to adapt and recover. That increases the chance of big failures.

- Incentives are misaligned: Software makers ship fast and fix later; customers bear much of the risk; the security industry profits from ongoing uncertainty. This encourages more tools and controls—not smarter, simpler systems.

- Regulation helps, but isn’t enough alone: Laws like NIS2 and DORA push testing, information-sharing, and leadership accountability. They reduce uncertainty and force attention at the top. Still, they don’t automatically remove waste.

Why it matters: If we don’t change course, our defense systems can “age” like a body that’s overloaded and fragile. The paper uses the octopus as a metaphor: it’s amazingly capable but has a short life because of built-up genetic “waste.” Likewise, cybersecurity might look advanced but can decay if we just keep adding complexity without pruning.

What are the implications?

The author proposes a three-part plan to manage cyber senescence and keep our digital world trustworthy:

- Make better local decisions under uncertainty

  - Improve detection and analysis (for example, using AI to sift huge amounts of security signals).

  - Use threat intelligence more smartly to choose controls that actually matter.

- Use smart regulation to shape the whole ecosystem

  - Ensure suppliers share security information.

  - Require realistic testing (ethical hacking, recovery drills).

  - Put responsibility on leaders so security isn’t ignored.

- Learn to prune and recycle “security waste”

  - Identify outdated or overlapping controls.

  - Move from “provable security” (which isn’t realistic) to “justifiable security” (clear reasons and evidence for what we keep).

  - When removal isn’t possible, find ways to simplify or combine controls to create new value.

The big takeaway: Security isn’t just about adding more locks. It’s about understanding uncertainty, choosing wisely, and regularly cleaning up. If we do this, we can slow or manage cyber senescence and build a digital future that’s safer, more reliable, and easier to repair.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, actionable list of what remains missing, uncertain, or unexplored in the paper that future researchers could address:

- A formal, operational definition of “cyber senescence” with quantifiable indicators (e.g., control growth rate, configuration entropy, mean time-to-deprecate controls) and a validated index for longitudinal tracking.

- Empirical evidence that accumulation of controls causally reduces resilience or increases incident likelihood/severity, beyond anecdotal cases (CrowdStrike, Cloudflare); design of longitudinal, cross-organizational studies controlling for confounders.

- Methods to measure the real-world effectiveness of individual controls and their interactions (synergies, antagonisms), including quasi-experimental designs, natural experiments, or controlled “A/B” security trials.

- A principled “garbage collection” framework for security controls: pruning criteria, sunset clauses, deprecation workflows, reversibility safeguards, and audit trails to justify and govern removal decisions.

- Decision-making frameworks for uncertainty management beyond classical risk quantification (e.g., imprecise probabilities, Dempster–Shafer theory, info-gap decision theory): when to use which, and how to integrate them in governance.

- Practical approaches to handle unfalsifiability of security claims in decision processes (e.g., counterfactual risk assessments, adversarial red-team testing protocols, confidence bounds and evidence grading).

- Measurement and mitigation of supply-chain entanglement and concentration risk: metrics for dependency centrality, standards for transparency (e.g., dependency/asset graphs), and incentive-compatible mechanisms to reduce systemic nodes.

- Systematic evaluation of NIS2/DORA’s net effect on selection pressure and senescence (do they inadvertently increase control accumulation?); development of metrics and natural experiments to assess regulatory impact and unintended consequences.

- Incentive-alignment mechanisms among software vendors, security providers, and end-users (e.g., liability regimes, warranties, secure-by-default mandates, patch quality KPIs, SBOM quality scores) and empirical testing of their effects.

- Early-warning indicators and thresholds for impending system fragility or collapse due to senescence; resilience metrics, stress-testing protocols, and criteria for triggering pruning or architectural refactoring.

- Concrete, evidence-based guidelines for using AI to reduce uncertainty without exacerbating complexity (false positive/negative trade-offs, interpretability requirements, minimal viable telemetry sets, complexity budgets).

- Governance models to counter liability-driven overcontrol: decision support tools, bias-aware processes, and training interventions; measurement of whether personal liability increases control accumulation and risk aversion.

- Standardized, privacy-preserving datasets that link control inventories, vulnerabilities, updates, incidents, and outcomes; common schemas and trusted repositories to enable reproducible research on control effectiveness and senescence.

- Comparative analyses across sectors and countries (e.g., vendor monoculture vs. diversification; regions unaffected by major vendor outages) to test hypotheses about centralization and resilience differences.

- Optimal update cadence models balancing uncertainty reduction versus update-induced outage risk; control-theoretic or operations-research formulations to determine safe frequencies by context.

- Metrics and remediation strategies for “control debt” (complexity and maintenance burden of overlapping/obsolete controls); architectural refactoring patterns and case studies for “lean security.”

- Mechanisms for “security-by-design” at ecosystem scale where no central designer exists: distributed governance protocols, shared standards, and stewardship arrangements for joint risk management.

- Empirical validation of the “law of stretched systems” in cyber contexts: does added capability get fully consumed, and how can slack be preserved (policy levers, caps, or complexity budgets)?

- Adaptation of safety/assurance case methodologies to cybersecurity for control removal decisions (claims, evidence, arguments) and criteria regulators would accept as “justifiable security.”

- Field evidence for Herley’s unfalsifiability implications in practice: rates of successful/failed challenges to “secure” claims, acceptance criteria for removal proposals, and observed decision patterns.

- A structured, stepwise method for justifying and piloting control removal under uncertainty (e.g., canary deployments, circuit breakers, reversible toggles), including safeguards and performance thresholds.

- Taxonomy and quantitative measures of “waste” in security (redundant, obsolete, low-efficacy, high-cost controls); cost–benefit analysis methodologies and tooling to inventory and score waste.

- System-level models (agent-based or system dynamics) to simulate incentive structures, regulation, control accumulation, and the evolution of senescence; calibration with real-world data.

- Evaluation of advanced cloud defense tooling (graph-based detection, asset algebra) for net impact on senescence and resilience; prerequisites for effectiveness and strategies to avoid complexity spirals.

- Ethical, organizational, and societal implications of aggressive pruning (stakeholder risk tolerance, transparency, consent); frameworks for communicating and governing risk trade-offs.

- Outcome evaluation of cybersecurity leadership education interventions: do trained leaders measurably reduce senescence (e.g., fewer obsolete controls, better pruning decisions, improved resilience metrics)?

- Concrete strategies to “create new value from waste” (repurposing telemetry, consolidating controls, deriving meta-controls); feasibility and benefit measurement frameworks.

- Operational definitions of “collapse” in cybersecurity (availability, integrity, safety thresholds), recovery pathways, and design of minimum viable security baselines for degraded modes.

- Standards and audit criteria that reward simplicity and justified control removal (simplicity/index metrics, proportionality tests, decomplexification audits) and analysis of adoption barriers.

- Quantification of vendor monoculture risks and design of diversification strategies (multi-vendor redundancy, graceful degradation); modeling of insurance pricing and market incentives to encourage diversification.
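
The first gap in the list above asks for quantifiable indicators such as control growth rate and configuration entropy. The paper does not define either, so the following is only one possible reading: growth rate as a compound annual rate over a control count, and entropy as the Shannon entropy of how controls are spread across categories. The function names and sample numbers are hypothetical.

```python
import math
from collections import Counter
from typing import Iterable


def compound_growth_rate(start_count: int, end_count: int, years: float) -> float:
    """Compound annual growth rate of a control inventory (assumed indicator)."""
    if start_count <= 0 or end_count <= 0 or years <= 0:
        raise ValueError("counts and years must be positive")
    return (end_count / start_count) ** (1.0 / years) - 1.0


def shannon_entropy_bits(labels: Iterable[str]) -> float:
    """Shannon entropy, in bits, of the distribution of labels.

    Here the labels stand for the category of each control; a higher value
    means controls are spread more evenly over more categories.
    """
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


if __name__ == "__main__":
    # Hypothetical inventory: 100 controls growing to 400 over two years.
    print(f"growth per year: {compound_growth_rate(100, 400, 2):.0%}")
    # Hypothetical category labels, one per control.
    categories = ["identify", "protect", "protect", "detect", "respond", "recover"]
    print(f"category entropy: {shannon_entropy_bits(categories):.2f} bits")
```

Tracking such numbers over time would only give a crude proxy for senescence; validating any index against real incident data remains the open question the list describes.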

Practical Applications

Immediate Applications

The paper highlights concrete practices organizations and regulators can adopt now to reduce uncertainty, curb control sprawl, and improve resilience without waiting for new science or tools.

- Security control lifecycle management and “garbage collection” of controls

  - Use case: Create and maintain a control register with purpose, cost, dependencies, and evidence-of-effectiveness to identify redundant/overlapping controls for deprecation.

  - Sectors: Finance, healthcare, energy, government, software.

  - Tools/workflows: Add “sunset clauses” and removal criteria to every new control; run periodic “control pruning sprints”; require “justifiable security” memos that document reasoning and uncertainty bands rather than single risk scores; add a Control Change Advisory Board gate for additions/removals.

  - Assumptions/dependencies: Executive buy-in; GRC tooling to track lineage and dependencies; agreement on decision criteria despite unfalsifiability of “less secure” claims.

- Safer change management for security tooling to prevent “defender-caused” outages

  - Use case: Stage and canary-rollout updates for EDR/AV, WAF, bot management, and cloud security policies with kill switches and automatic rollbacks.

  - Sectors: Software/SaaS, cloud, telecom, critical infrastructure, hospitals.

  - Tools/workflows: Canary rings, feature flags, pre-prod testbeds, chaos/negative testing of security agents, vendor SLAs for ringed deployments; incident runbooks to disable or quarantine faulty updates.

  - Assumptions/dependencies: Vendor cooperation; operational telemetry to detect regressions; tolerance for slower update adoption in exchange for safety.

- Supply-chain security posture exchange and concentration risk management (NIS2/DORA implementation)

  - Use case: Contractual clauses to share security posture, SBOM/VEX, TLPT/red-team results, and incident data; dashboards for third-party exposure and concentration risk.

  - Sectors: Finance (DORA), energy/water/transport/healthcare (NIS2), public sector.

  - Tools/workflows: Standardized questionnaires (e.g., SIG), SBOM ingestion, continuous vendor monitoring, critical supplier risk thresholds and diversification plans.

  - Assumptions/dependencies: Legal readiness, standard formats, privacy/IP constraints, data quality, enforcement by procurement and legal.

- Threat-led testing and resilience-by-design (accepting unavoidable incidents)

  - Use case: Threat-led penetration testing (TLPT), purple teaming, tabletop/technical recovery drills; emphasize response and recovery functions in line with NIST CSF 2.0.

  - Sectors: All regulated sectors; cloud-native enterprises.

  - Tools/workflows: MITRE ATT&CK-driven testing, recovery time objectives (RTO), mean time to recover (MTTR) metrics on executive dashboards; “switch-off playbooks” for high-risk services (e.g., remote-access gateways).

  - Assumptions/dependencies: Testing capacity and safe testing windows; board acceptance of resilience metrics alongside prevention metrics.

- Uncertainty-aware risk reporting and governance

  - Use case: Replace single-point risk scores with scenario ranges and confidence levels; capture assumptions explicitly; embed cognitive-bias checklists into governance.

  - Sectors: Boards and risk functions across industries.

  - Tools/workflows: Decision briefs with uncertainty bands; pre-mortem and red team reviews; bias-awareness checklists drawn from governance research.

  - Assumptions/dependencies: Cultural shift in risk committees; training to interpret uncertainty ranges; tolerance for decisions without “exact” numbers.

- AI-enhanced threat intelligence and telemetry triage (now with existing SOC stacks)

  - Use case: Automate CTI ingestion, normalization, TTP mapping, prioritization of patching and detections using AI-enhanced pipelines described by the author.

  - Sectors: Any organization with SIEM/SOAR/UEBA/SOC.

  - Tools/workflows: CTI parsers, enrichment, ATT&CK mapping, deduplication, confidence scoring; feedback loops from incident outcomes to improve prioritization.

  - Assumptions/dependencies: Sufficient telemetry coverage; curated data feeds; staff to tune models; MLOps for model drift.

- Procurement and contracting to align vendor incentives with resilience

  - Use case: Require vendors to provide staged-update capabilities, pre-production artifacts, rollback plans, and outage liability clauses; mandate minimum secure development and patch processes.

  - Sectors: Large buyers in finance, healthcare, government; cloud customers.

  - Tools/workflows: Security addenda, secure-by-design questionnaires, evidence of ringed releases; procurement scorecards that penalize concentration and opaque update strategies.

  - Assumptions/dependencies: Market power to negotiate; legal alignment with local jurisdictions.

- Cloud and identity complexity control

  - Use case: Keep IAM and policy sprawl within “complexity budgets”; prioritize attack-path reduction using existing CSPM/CIEM tools while awaiting more mature graph/algebra capabilities.

  - Sectors: Cloud-first enterprises; software.

  - Tools/workflows: Periodic attack-path reviews, policy-linting, least-privilege sprints; “complexity budgets” as SRE-like guardrails.

  - Assumptions/dependencies: Current tools’ limitations vs. promised future graph-based methods; skilled cloud security engineers.

- Individual and small-organization resilience practices

  - Use case: Staggered auto-updates for critical endpoints; offline/backup access to core workflows (e.g., payments, patient care); avoid single points of failure in critical security agents.

  - Sectors: SMEs, clinics, local governments, households.

  - Tools/workflows: Backup/restore drills; dual-vendor or layered protection where feasible; documented fallback procedures.

  - Assumptions/dependencies: Cost constraints; simplicity vs. redundancy trade-offs.
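
The control register with sunset clauses described in the first application above can be sketched as a small data structure plus a review query. This is a minimal illustration under stated assumptions: the `Control` fields, the `pruning_candidates` function, and the three flagging rules are hypothetical choices for this example, not a method given in the paper.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Control:
    """One entry in a hypothetical control register."""
    control_id: str
    purpose: str
    annual_cost: float
    depends_on: list[str] = field(default_factory=list)  # ids of controls this one relies on
    evidence: list[str] = field(default_factory=list)    # pointers to effectiveness evidence
    sunset: Optional[date] = None                        # date by which removal must be reviewed


def pruning_candidates(register: list[Control], today: date) -> list[tuple[str, list[str]]]:
    """Return (control_id, reasons) for every control that merits a removal review."""
    needed_by_others = {dep for c in register for dep in c.depends_on}
    ids_by_purpose: dict[str, list[str]] = {}
    for c in register:
        ids_by_purpose.setdefault(c.purpose, []).append(c.control_id)

    candidates = []
    for c in register:
        reasons = []
        if c.sunset is not None and c.sunset <= today:
            reasons.append("sunset date passed")
        if not c.evidence:
            reasons.append("no evidence of effectiveness recorded")
        if len(ids_by_purpose[c.purpose]) > 1:
            reasons.append("overlaps with another control of the same purpose")
        # A flagged control that others depend on needs extra care before removal.
        if reasons and c.control_id in needed_by_others:
            reasons.append("caution: other controls depend on it")
        if reasons:
            candidates.append((c.control_id, reasons))
    return candidates
```

The output is a list of candidates for human review rather than an automatic removal decision, which matches the “justifiable security” idea of documenting the reasoning behind each keep-or-remove choice.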

Long-Term Applications

The paper proposes a research and policy agenda that, once matured, can reshape how cyber risk is governed and how complexity and waste are managed across ecosystems.

- A discipline and toolkit for managing “cyber senescence”

  - Use case: Establish methods to identify, measure, and remove “security waste” (redundant/ineffective controls) without compromising resilience.

  - Sectors: Cross-sector; GRC vendors; standards bodies.

  - Tools/products/workflows:

    - Cyber Senescence Index to quantify control sprawl, coupling, and maintenance drag.

    - “Control Portfolio Manager” modules in GRC stacks for lifecycle, dependency graphs, and ablation testing in sandboxes.

    - Standard “control kill-switch” interfaces for safe deactivation and rollback.

  - Assumptions/dependencies: Agreement on metrics; safe experimentation environments; cultural acceptance that removal can be responsible.

- Standards and assurance for control deprecation and “justifiable security”

  - Use case: Formalize evidence templates and audit practices that justify control removal under uncertainty, acknowledging unfalsifiability of “less secure” claims.

  - Sectors: Regulators, auditors, critical infrastructure, finance.

  - Tools/workflows: Standards for deprecation tests, acceptance criteria, attestations; integration into ISO/IEC/NIST profiles and sectoral supervision.

  - Assumptions/dependencies: Regulator and auditor adoption; consensus across industries; case law development.

- Regulatory and market mechanisms to realign incentives

  - Use case: Incentivize secure-by-design and reduce vulnerability debt; disincentivize unsafe rapid changes in security agents.

  - Sectors: Software/cloud vendors; policymakers.

  - Tools/workflows: Software liability regimes, update-safety transparency, SBOM+VEX mandates, concentration risk caps for critical functions, shared incident data repositories.

  - Assumptions/dependencies: Political feasibility; global harmonization; avoiding perverse incentives.

- Ecosystem-level change control for security updates

  - Use case: Sector-coordinated “safety-critical” release rings for defensive controls (e.g., EDR, DDoS, bot management) akin to aviation-grade change control.

  - Sectors: Telecom, finance, healthcare, government, cloud.

  - Tools/workflows: National/sector CERT-facilitated canary cohorts, cross-vendor rollback protocols, pre-release conformance testing labs.

  - Assumptions/dependencies: Cross-competitor cooperation; shared funding; liability frameworks.

- Advanced AI for uncertainty estimation and causal impact of controls

  - Use case: Move beyond detection to quantify uncertainty and causal effects (did this control reduce incidents?) to inform keep/remove decisions.

  - Sectors: Academia, SOC vendors, large enterprises.

  - Tools/workflows: Bayesian/causal inference over SOC telemetry, active learning CTI pipelines, counterfactual simulations in cyber digital twins.

  - Assumptions/dependencies: High-quality labeled data; privacy-preserving data sharing; methodological advances.

- Cyber ecosystem digital twins for resilience and “what-if” pruning

  - Use case: Simulate systemic effects of adding/removing controls across supply chains and cloud dependencies.

  - Sectors: Critical infrastructure, finance, government emergency planning.

  - Tools/workflows: Attack-graph and dependency-graph simulators, stress tests of control portfolios, regulatory “resilience exercises.”

  - Assumptions/dependencies: Accurate dependency mapping; modeling fidelity; shared data across organizations.

- Governance innovations to manage complexity growth

  - Use case: Institutionalize “complexity budgets,” routine sunset clauses, and “safety cases” for major security changes.

  - Sectors: Enterprise governance across industries.

  - Tools/workflows: Policy frameworks capping policy/IAM/agent count vs. staff capacity; safety-case dossiers modeled on high-reliability industries.

  - Assumptions/dependencies: Organizational discipline; monitoring automation; acceptance by auditors.

- Workforce development at the strategy–security interface

  - Use case: Scale programs like the Cybersecurity Leadership Academy to train leaders in uncertainty-aware decision-making and bias mitigation.

  - Sectors: Academia, professional education, large enterprises.

  - Tools/workflows: Cross-disciplinary curricula (security, economics, decision science, regulation); board training modules.

  - Assumptions/dependencies: Funding; time-to-competency; measurable outcomes for governance quality.

- Diversity-in-defense and concentration risk reduction at scale

  - Use case: Policy and design patterns that ensure diversity of critical defensive components and reduce single-vendor dependencies.

  - Sectors: Critical infrastructure, cloud, finance.

  - Tools/workflows: Dual-control architectures, vendor diversity thresholds, automatic failover to alternative defensive stacks.

  - Assumptions/dependencies: Interoperability standards; cost of redundancy; performance impacts.

- Sector-wide data exchanges for incidents, effectiveness, and near-misses

  - Use case: Confidential sharing of control effectiveness and outage data to lower uncertainty and counteract unfalsifiability with collective evidence.

  - Sectors: All critical sectors; ISACs/ISAO networks.

  - Tools/workflows: Trusted data enclaves, clean-room analytics, standardized effectiveness metrics.

  - Assumptions/dependencies: Legal safe harbors; anonymization; mutual trust.

These applications flow directly from the paper’s core insights: (1) today’s cyber ecosystem is complex and unpredictable; (2) risk quantification alone is insufficient under deep uncertainty; (3) unfalsifiability creates an asymmetry that favors adding controls, accumulating “security waste”; and (4) regulation and governance can and should create selection pressure for resilience, including justified removal of controls.
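
Staged release rings with kill switches and rollback appear in both the immediate and the long-term applications above. The sketch below shows the general idea only; `staged_rollout`, its callback parameters, and the failure-rate threshold are hypothetical names and choices for illustration, not an interface of any real EDR or deployment product.

```python
from typing import Callable, Sequence


def staged_rollout(
    rings: Sequence[Sequence[str]],
    apply_update: Callable[[str], None],
    is_healthy: Callable[[str], bool],
    rollback: Callable[[str], None],
    max_failure_rate: float = 0.0,
) -> bool:
    """Apply an update ring by ring and halt on the first unhealthy ring.

    Returns True if every ring was updated, or False if the rollout was
    stopped and all already-updated hosts were rolled back.
    """
    updated: list[str] = []
    for ring in rings:
        for host in ring:
            apply_update(host)
            updated.append(host)
        failures = sum(1 for host in ring if not is_healthy(host))
        if ring and failures / len(ring) > max_failure_rate:
            # Kill switch: undo the update on every host touched so far,
            # most recent first, and do not proceed to later rings.
            for host in reversed(updated):
                rollback(host)
            return False
    return True
```

Ordering the rings from a small canary group to the full fleet limits how many hosts a faulty update can reach before it is caught, at the cost of slower adoption of each update.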

Glossary

- AI (Artificial Intelligence): Techniques enabling machines to perform tasks that typically require human intelligence, such as learning and pattern recognition; "like artificial intelligence"

- ARPANET: Early packet-switching network funded by the U.S. DoD that preceded and influenced the development of the modern Internet; "ARPANET"

- asset-graphs: Graph-based representations of systems and their components (assets) used to model relationships and risks for security analysis; "asset-graphs and algebras driven by AI"

- Azure tenant: A dedicated, isolated instance of Microsoft Azure services associated with a specific organization, used for identity and resource management; "protect azure tenants"

- bot-management system: Security tooling that detects and mitigates automated bot traffic to protect online services; "Their bot-management system received a faulty update"

- calculemus: Leibniz’s motto advocating that reasoning can be reduced to calculation; "“calculemus!”"

- cyber resilience: The ability of an organization to prepare for, respond to, and recover from cyber incidents while maintaining essential operations; "we rather talk about cyber resilience instead of cyber security."

- cyber risk quantification: Methods and tools to express cyber risk in numerical terms to support decision-making; "“cyber risk quantification” products"

- cyber senescence: Proposed term describing the aging and decay of cybersecurity ecosystems due to accumulating, uncertain-effect controls and complexity; "“cyber senescence”"

- DDoS attacks (Distributed Denial of Service): Attacks that overwhelm services with traffic from multiple sources to make them unavailable; "DDoS attacks (distributes denial of service)"

- Digital Operational Resilience Act (DORA): EU regulation for the financial sector mandating operational resilience, including cybersecurity and ICT risk management; "Digital Operational Resiliency Act (DORA)"

- Falcon Sensor: CrowdStrike’s endpoint security agent that detects and responds to cyber threats on hosts; "Falcon Sensor is software designed to protect computer systems against cyber-attacks."

- governance (NIST CSF category): Organizational controls and processes for directing and overseeing cybersecurity; "This new category, called “governance”, contains controls needed to govern security"

- ISO/IEC 15408 (Common Criteria): International standard for evaluating the security of IT products and systems; "which later became ISO/IEC 15408 in 1999."

- law of stretched systems: Safety engineering principle asserting that systems use any new capacity until they are again operating at their limits; "the law of stretched systems (that states that any system will consume any new capability completely until it reached full capacity again)"

- lex specialis: Legal principle where a more specific law governs over a general one; "is a lex specialis of NIS2"

- Network and Information Security 2 (NIS2) directive: EU-wide cybersecurity legislation imposing requirements on essential and important entities; "Network and Information Security 2 (NIS2) directive"

- NIST Cybersecurity Framework (CSF): A standardized set of cybersecurity controls and practices published by NIST; "in the latest version of the NIST CSF"

- Orange Book: U.S. DoD Trusted Computer System Evaluation Criteria, a seminal security assurance standard; "the so-called Orange Book"

- operational risk: The risk of loss resulting from inadequate or failed internal processes, people, and systems, or from external events; "aging is becoming an operational risk."

- penetration tests (threat led): Intelligence-driven security tests simulating realistic threat scenarios to assess defenses; "“threat led penetration tests”"

- resilience engineering: Discipline focusing on designing systems that sustain operations under stress and recover from failures; "From the theory of resilience engineering, we know that a system that accumulates waste, becomes less resilient"

- security by design: Practice of embedding security requirements and protections into systems from the outset of development; "Security by design is not a new notion"

- security posture: An organization’s overall security status, including controls, processes, and readiness; "their security posture"

- telemetry: Automatically collected data from systems and security tools used for monitoring and analysis; "analyse the increasing volumes of telemetry"

- unfalsifiability: The property of a claim being impossible to disprove, applied here to security claims about “being secure”; "unfalsifiability and waste"

- vulnerability patching: Applying updates or fixes to software to remediate known security flaws; "spending significant amounts of time on patching them."
