- The paper introduces kooplearn, a library that unifies kernel, deep, and linear operator-learning estimators, with reported gains over traditional methods in spectral analysis.

- It implements reduced rank kernel regression and randomized solvers, which the paper reports improve eigenfunction approximation over kernel DMD and reduce fit times.

- Empirical results show enhanced scalability and sample-efficiency on both deterministic and stochastic systems, validated on benchmarks like Lorenz 63.

Introduction

The modeling, prediction, and spectral analysis of dynamical systems are central challenges across physics, engineering, and computational sciences. The operator-theoretic approach, and in particular the theory of Koopman and transfer operators, provides a linear, interpretable framework for analyzing nonlinear stochastic and deterministic dynamical systems. "kooplearn: A Scikit-Learn Compatible Library of Algorithms for Evolution Operator Learning" (2512.21409) introduces a comprehensive open-source library that unifies state-of-the-art algorithms for learning evolution operators, facilitating integration into mainstream machine learning workflows.

Evolution Operator Learning: Principles and Scope

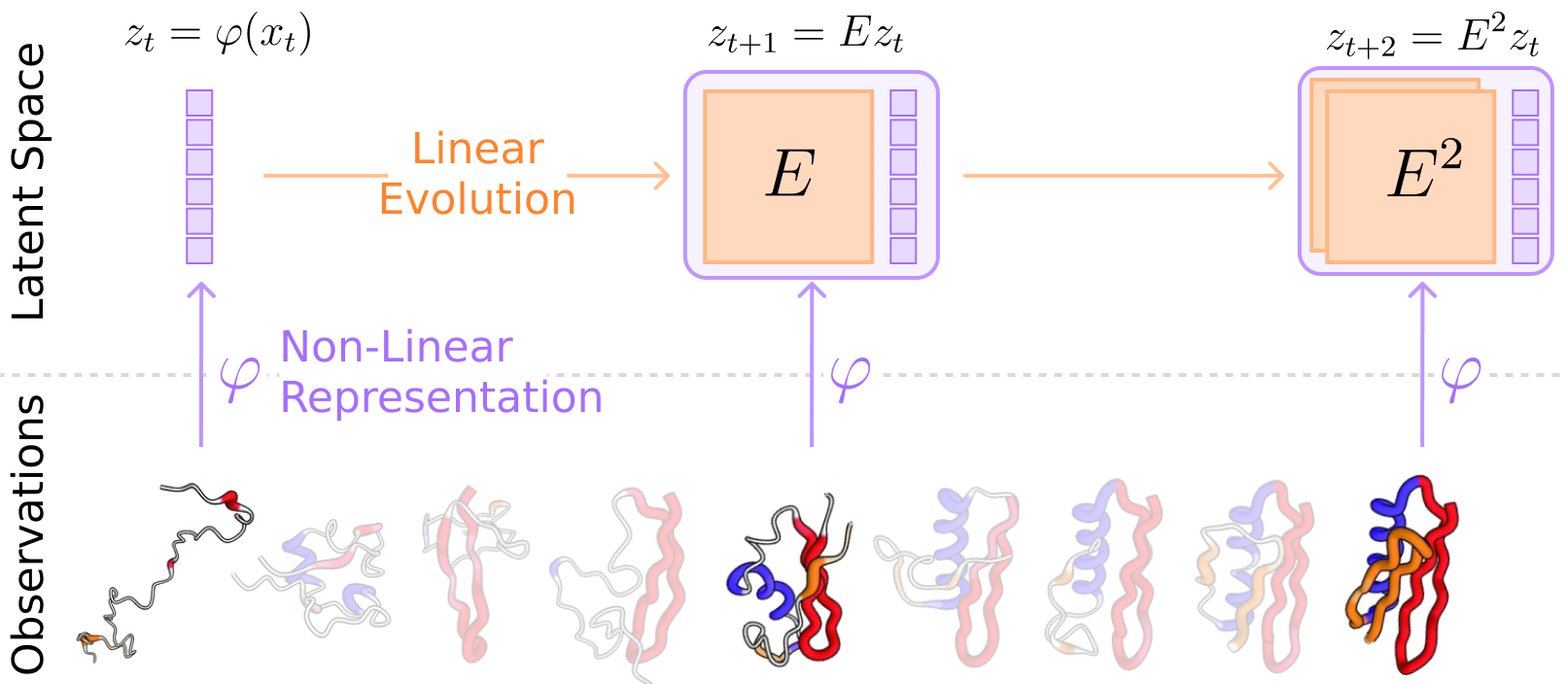

kooplearn centers on the data-driven learning of evolution operators E, encompassing both Koopman operators (for deterministic flows) and transfer operators (for stochastic Markov processes). The central idea is to approximate the linear evolution of observables or latent functions as a proxy for the potentially highly nonlinear underlying dynamics. The modeling framework consists of a fixed or learnable, possibly nonlinear representation φ that lifts the system state into a latent space, and a linear evolution map E acting on that space; together, the pair (φ, E) approximates the spectral and predictive properties of the true evolution operator. This setup enables spectral methods, reduced-order modeling, and forecasting on a broad range of systems.

Figure 1: Schematic of the application of an evolution operator, where nonlinear state trajectories are encoded via φ and advanced linearly with E.
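The (φ, E) construction can be illustrated with a minimal NumPy sketch. It does not use kooplearn's API: the toy map `step`, the hand-picked dictionary `phi`, and the parameter values are assumptions, chosen so that the dictionary spans a Koopman-invariant subspace and the least-squares estimate of E can be checked against known eigenvalues.

```python
import numpy as np

def phi(x):
    """Hypothetical dictionary lifting (x1, x2) to (x1, x2, x1^2)."""
    return np.column_stack([x[:, 0], x[:, 1], x[:, 0] ** 2])

def step(x, lam=0.9, mu=0.5):
    """Toy nonlinear map whose Koopman operator is closed on phi."""
    x1, x2 = x[:, 0], x[:, 1]
    return np.column_stack([lam * x1, mu * x2 + (lam**2 - mu) * x1**2])

rng = np.random.default_rng(0)
X = rng.uniform(-1.0, 1.0, size=(200, 2))  # current states
Y = step(X)                                # states one step later

# Least-squares evolution map in feature space: phi(Y) ~= phi(X) @ E
E, *_ = np.linalg.lstsq(phi(X), phi(Y), rcond=None)

# For this system the eigenvalues of E are mu, lam^2 and lam
eigvals = np.sort(np.linalg.eigvals(E).real)
print(eigvals)
```

For a dictionary that is not invariant under the dynamics, the same regression only approximates the operator, which is where learned representations and kernel estimators become useful.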

kooplearn distinguishes itself from alternative operator-learning platforms by supporting kernel, linear, and deep learning estimators for both discrete-time operators and continuous-time infinitesimal generators. The interface is designed for maximal compatibility with the scikit-learn estimator protocol, thereby streamlining adoption by the scientific and engineering communities.
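To make the estimator protocol concrete, here is a minimal sketch of a scikit-learn-style estimator for a linear evolution operator. The class name `LinearEvolutionOperator`, its `reg` parameter, and the `eigenvalues` helper are hypothetical and are not taken from kooplearn; only `BaseEstimator` and `clone` are actual scikit-learn objects.

```python
import numpy as np
from sklearn.base import BaseEstimator, clone

class LinearEvolutionOperator(BaseEstimator):
    """Hypothetical ridge-regularized estimator of a linear evolution map."""

    def __init__(self, reg=1e-8):
        self.reg = reg  # hyperparameters are stored unchanged, as scikit-learn expects

    def fit(self, X, Y):
        X, Y = np.asarray(X, dtype=float), np.asarray(Y, dtype=float)
        gram = X.T @ X + self.reg * len(X) * np.eye(X.shape[1])
        self.operator_ = np.linalg.solve(gram, X.T @ Y)  # fitted attribute ends with "_"
        return self

    def predict(self, X):
        return np.asarray(X, dtype=float) @ self.operator_

    def eigenvalues(self):
        return np.linalg.eigvals(self.operator_)

# Toy data: a damped rotation acting on row vectors, Y = X @ A
theta = 0.3
A = 0.95 * np.array([[np.cos(theta), np.sin(theta)],
                     [-np.sin(theta), np.cos(theta)]])
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
Y = X @ A

model = LinearEvolutionOperator().fit(X, Y)
fresh_copy = clone(model)  # unfitted copy with the same hyperparameters
print(np.abs(model.eigenvalues()))
```

Following the `fit`/`predict` convention and storing constructor arguments unchanged is what lets such an object work with utilities like `clone`, and by extension with scikit-learn's model-selection tools.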

Algorithmic Innovations

Reduced Rank Kernel Regression

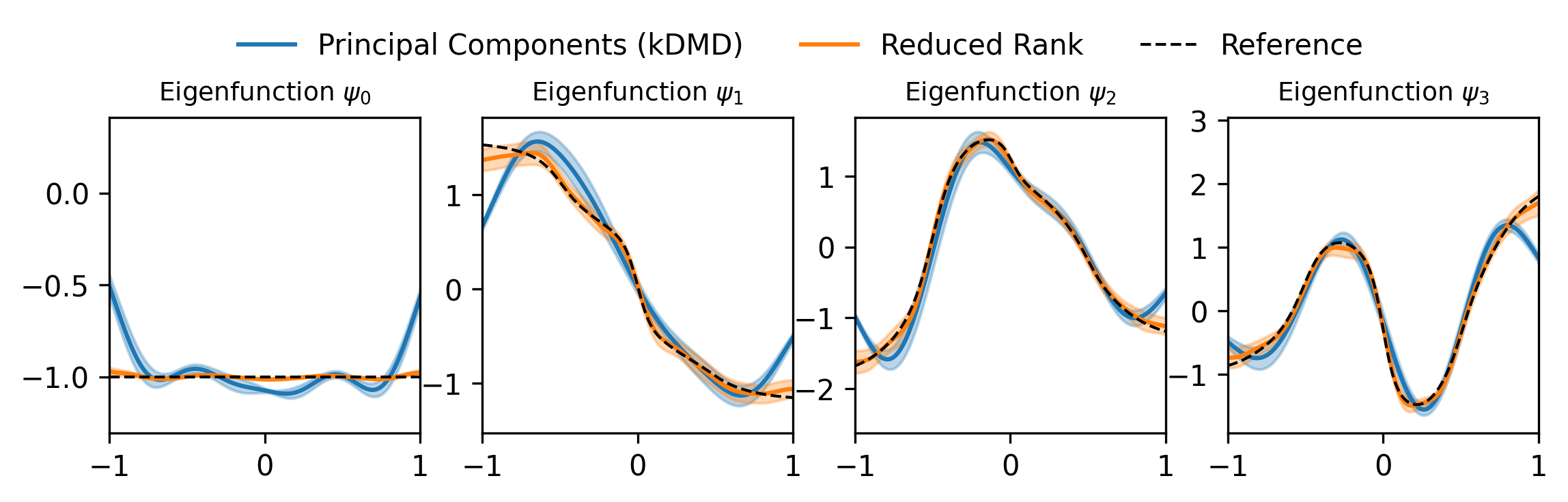

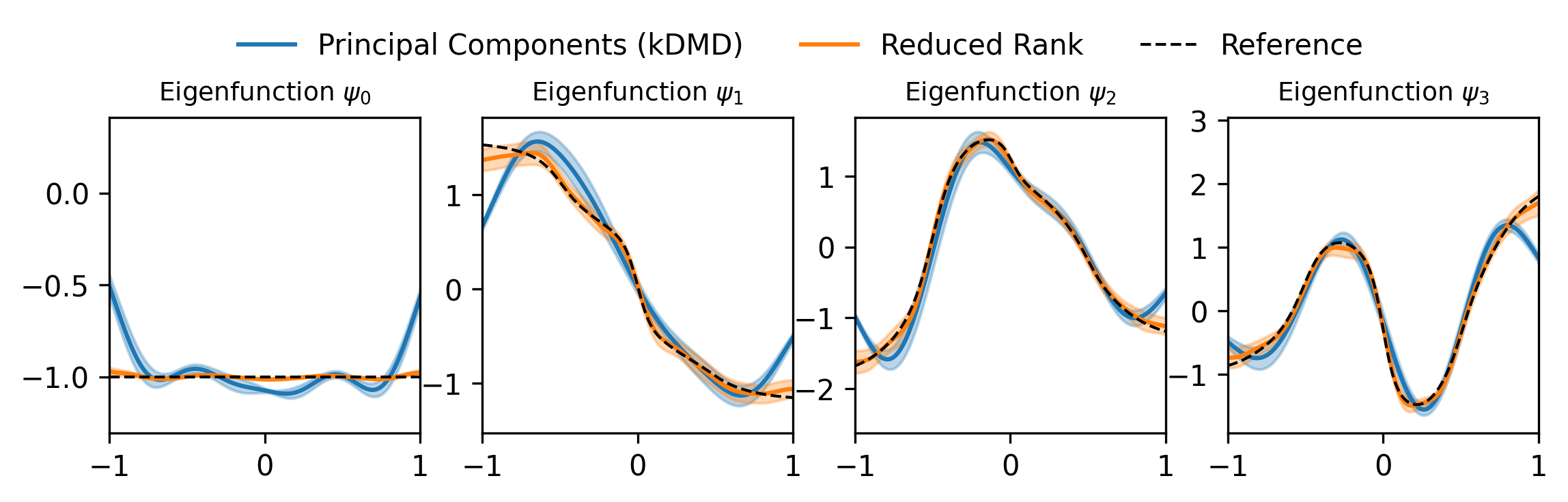

A core contribution is the implementation of kernel-based reduced rank regression for operator estimation. This estimator offers consistent spectral approximation, outperforming classical kernel Dynamic Mode Decomposition (kDMD) in both accuracy and sample efficiency, especially in approximating the leading eigendecomposition of the evolution operator for systems such as overdamped Langevin dynamics.

Figure 2: The reduced rank kernel estimator provides enhanced accuracy in identifying leading eigenfunctions compared to traditional kDMD for stochastic systems.
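The difference between the two estimators can be sketched in a finite-dimensional feature space. The code below implements classical (unregularized) reduced rank regression and compares it with principal component regression, the low-rank estimator that kernel DMD is often identified with in the operator-regression literature. It is an illustration under assumed synthetic data, not kooplearn's kernel implementation; all function and variable names are hypothetical.

```python
import numpy as np

def reduced_rank_regression(X, Y, rank):
    """Minimize ||Y - X W||_F over matrices W of rank <= `rank`."""
    W_ols, *_ = np.linalg.lstsq(X, Y, rcond=None)
    # Project onto the top right singular vectors of the fitted values X @ W_ols
    _, _, Vt = np.linalg.svd(X @ W_ols, full_matrices=False)
    return W_ols @ Vt[:rank].T @ Vt[:rank]

def principal_component_regression(X, Y, rank):
    """Regress Y on the top `rank` principal directions of X."""
    U, s, Vt = np.linalg.svd(X, full_matrices=False)
    return Vt[:rank].T @ ((U[:, :rank].T @ Y) / s[:rank, None])

rng = np.random.default_rng(0)
n, d, rank = 500, 10, 3
# Anisotropic inputs: the high-variance directions need not be the predictive ones
X = rng.normal(size=(n, d)) * np.linspace(3.0, 0.1, d)
W_true = rng.normal(size=(d, rank)) @ rng.normal(size=(rank, d))
Y = X @ W_true + 0.1 * rng.normal(size=(n, d))

W_rrr = reduced_rank_regression(X, Y, rank)
W_pcr = principal_component_regression(X, Y, rank)
err_rrr = np.linalg.norm(Y - X @ W_rrr)
err_pcr = np.linalg.norm(Y - X @ W_pcr)
print(err_rrr, err_pcr)
```

Principal component regression selects its subspace from the inputs alone, whereas reduced rank regression selects it from the fitted outputs, so its training error can never exceed that of any other estimator of the same rank.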

Efficient Large-Scale Estimation

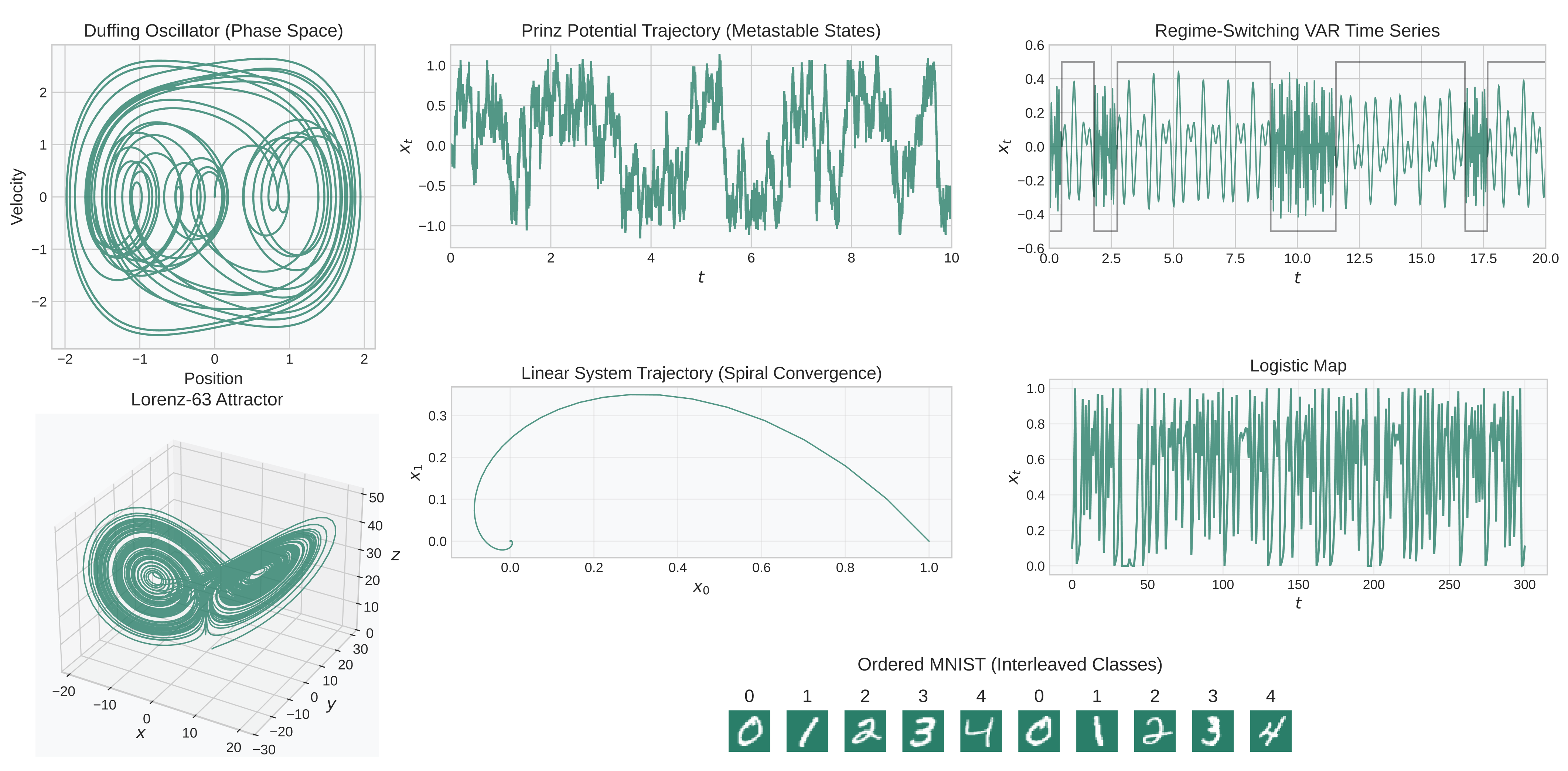

Scalability is addressed via randomized and Nyström-based kernel estimators, enabling operator learning on datasets encompassing thousands of high-dimensional trajectories. These advances make kernel operator regression feasible for problems where standard methods are computationally intractable. On benchmarks such as the Lorenz 63 system, kooplearn demonstrates competitive fit times, attributable to optimized kernel solvers and scalable matrix sketching techniques.

Figure 3: kooplearn leverages randomized solvers for efficient kernel model fitting on large-scale Lorenz 63 data.
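The idea behind Nyström-based estimators can be sketched with plain kernel ridge regression of the next state on the current state. Instead of an n × n kernel matrix, only an n × m block against m landmark points is formed. The toy map, the Gaussian kernel, uniform landmark sampling, and all names below are assumptions for illustration; this is not kooplearn's solver.

```python
import numpy as np

def gaussian_kernel(A, B, length_scale=0.5):
    sq_dists = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq_dists / (2.0 * length_scale**2))

def nystroem_fit(X, Y, num_centers=50, reg=1e-6, seed=0):
    """Kernel ridge regression restricted to the span of `num_centers` landmarks."""
    rng = np.random.default_rng(seed)
    centers = X[rng.choice(len(X), size=num_centers, replace=False)]
    K_nm = gaussian_kernel(X, centers)        # n x m block, never n x n
    K_mm = gaussian_kernel(centers, centers)  # m x m block
    # Normal equations of the landmark-restricted ridge problem
    A = K_nm.T @ K_nm + len(X) * reg * K_mm
    coef, *_ = np.linalg.lstsq(A, K_nm.T @ Y, rcond=None)
    return centers, coef

def nystroem_predict(X_new, centers, coef):
    return gaussian_kernel(X_new, centers) @ coef

# Toy one-step data: x_next = sin(2 x) plus small observation noise
rng = np.random.default_rng(1)
X = rng.uniform(-2.0, 2.0, size=(2000, 1))
Y = np.sin(2.0 * X) + 0.01 * rng.normal(size=X.shape)

centers, coef = nystroem_fit(X, Y)
X_test = np.linspace(-1.8, 1.8, 200)[:, None]
rmse = np.sqrt(np.mean((nystroem_predict(X_test, centers, coef) - np.sin(2.0 * X_test)) ** 2))
print(rmse)
```

With m landmarks the dominant cost is forming and multiplying the n × m block, roughly O(n m²) instead of the O(n³) of an exact kernel solve, which is what makes larger datasets tractable.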

Deep Representation Learning

kooplearn interfaces with PyTorch and JAX, providing modular loss functions for learning representations φ with structural (e.g., graph-based) priors. Both encoder-decoder (based on the Koopman autoencoder approach) and encoder-only (VAMPnets, spectral contrastive) paradigms are supported, enhancing flexibility for capturing system-specific invariants and nonlinearities.
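As an illustration of an encoder-only objective, the following sketch computes the VAMP-2 score used by VAMPnets-style training: the squared Frobenius norm of the whitened cross-covariance between features at time t and features one lag later. It is written in plain NumPy to stay dependency-free and is not kooplearn's loss implementation; the function names and the `eps` floor are assumptions. In practice the negative score would be minimized with automatic differentiation in PyTorch or JAX.

```python
import numpy as np

def inv_sqrt(C, eps=1e-8):
    """Inverse square root of a symmetric PSD matrix, with eigenvalues floored at eps."""
    w, V = np.linalg.eigh(C)
    w = np.maximum(w, eps)
    return (V / np.sqrt(w)) @ V.T

def vamp2_score(F0, Ft):
    """VAMP-2 score of mean-centered feature batches F0 (time t) and Ft (time t + lag)."""
    F0 = F0 - F0.mean(axis=0)
    Ft = Ft - Ft.mean(axis=0)
    n = len(F0)
    C00 = F0.T @ F0 / n
    Ctt = Ft.T @ Ft / n
    C0t = F0.T @ Ft / n
    K = inv_sqrt(C00) @ C0t @ inv_sqrt(Ctt)  # whitened cross-covariance
    return np.linalg.norm(K, "fro") ** 2

rng = np.random.default_rng(0)
F = rng.normal(size=(1000, 4))
score_same = vamp2_score(F, F)                            # perfectly predictable features
score_indep = vamp2_score(F, rng.normal(size=(1000, 4)))  # unrelated features
print(score_same, score_indep)
```

Features that are perfectly predictable across the lag reach the maximum score (the feature dimension, here 4), while statistically independent features score close to zero, so maximizing the score pushes the encoder toward slowly varying, predictable observables.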

Infinitesimal Generator Learning

For continuous-time SDEs, kooplearn also implements learning of the infinitesimal generator L. Building on recent kernel and neural algorithms for generator estimation, the library enables kinetic modeling directly from equilibrium data, reducing the need for trajectory data sampled at an explicit lag time. According to the paper, these generator-based techniques improve sample efficiency and avoid spurious spectral artifacts in the analysis of diffusive systems.
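The link between the generator and the lag-time operator can be stated explicitly. The relations below are standard semigroup facts rather than formulas quoted from the paper; the symbols Δt (lag time), V (potential), β (inverse temperature), and (μ_i, ψ_i) (generator eigenpairs) are notation introduced here for illustration, with overdamped Langevin dynamics as the example SDE.

$$
\begin{aligned}
(E_{\Delta t} f)(x) &= \mathbb{E}\left[ f(X_{t+\Delta t}) \mid X_t = x \right],
\qquad
L f = \lim_{\Delta t \to 0^{+}} \frac{E_{\Delta t} f - f}{\Delta t},
\qquad
E_{\Delta t} = e^{\Delta t \, L}, \\
L \psi_i = \mu_i \psi_i \;&\Longrightarrow\; E_{\Delta t} \psi_i = e^{\mu_i \Delta t} \, \psi_i, \\
L f &= -\nabla V \cdot \nabla f + \beta^{-1} \nabla^{2} f
\quad \text{for} \quad
dX_t = -\nabla V(X_t) \, dt + \sqrt{2 \beta^{-1}} \, dW_t .
\end{aligned}
$$

Because the eigenvalues μ_i do not depend on Δt, an estimate of L gives relaxation timescales −1/Re(μ_i) directly, without the choice of lag time that an estimate of E requires.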

Data and Benchmarking

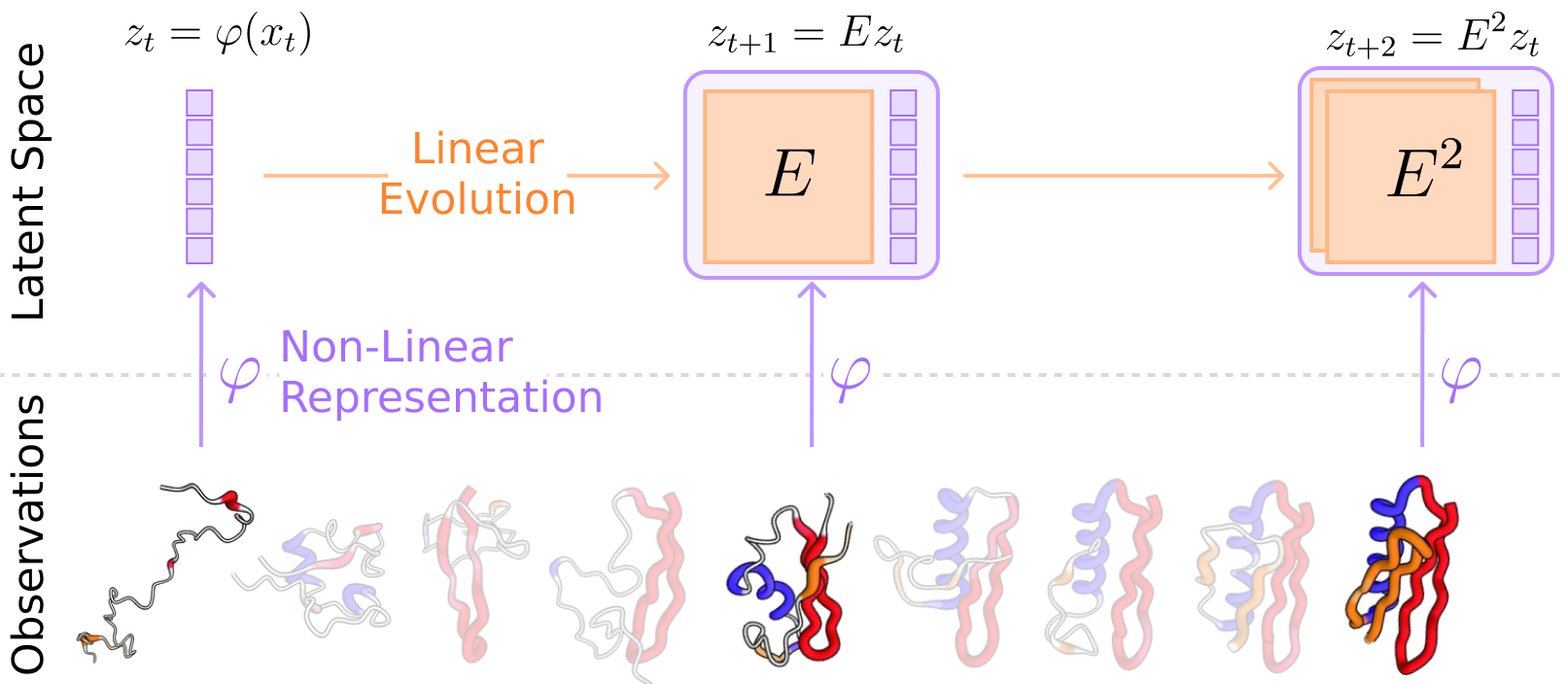

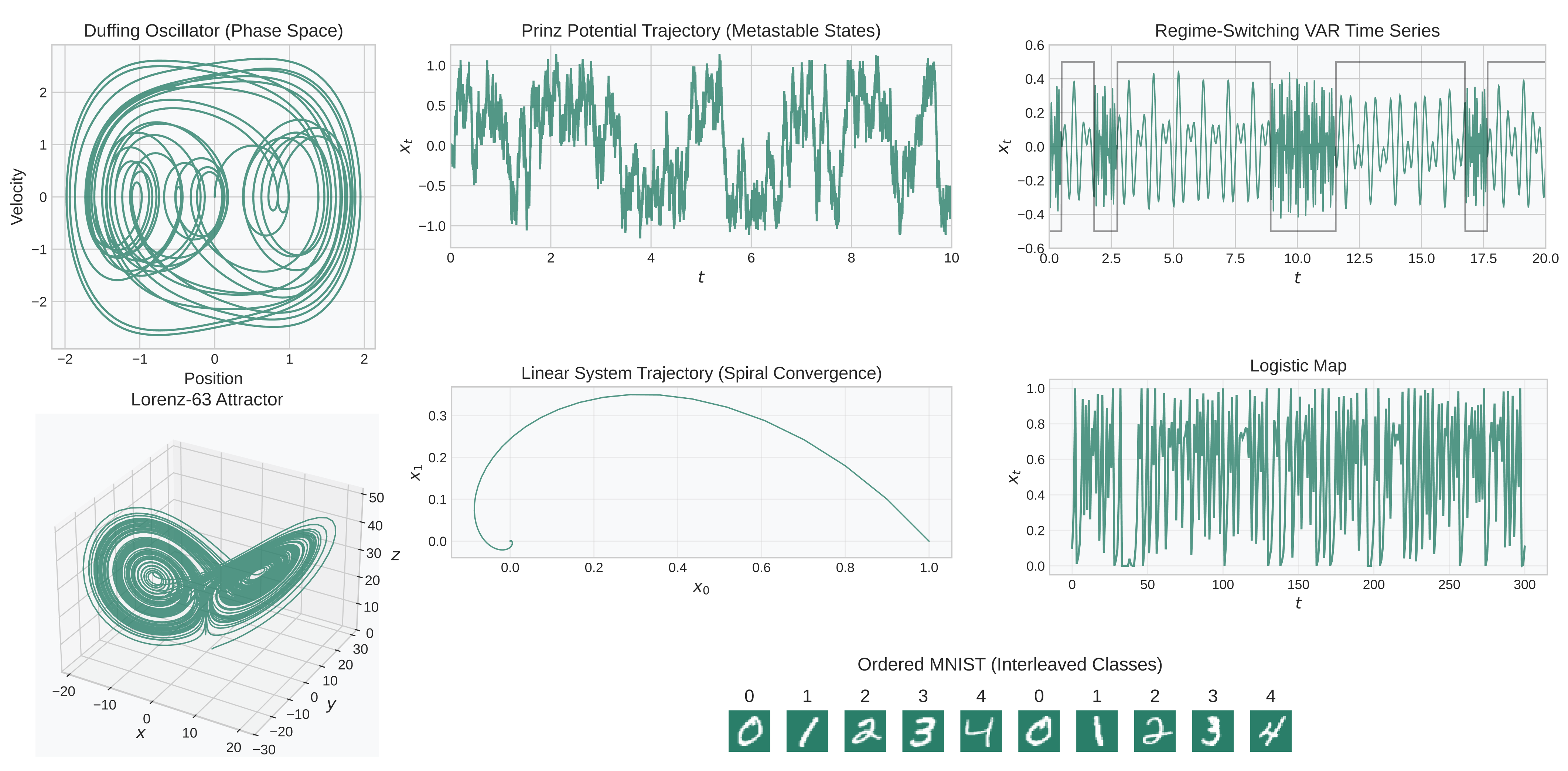

To ensure reproducibility and facilitate algorithm benchmarking, kooplearn ships with a curated suite of synthetic and real-world datasets. These span low-dimensional chaotic maps, metastable diffusions, and high-dimensional structured sequences (e.g., Ordered MNIST), along with readily accessible code for ground-truth spectral quantities.

Figure 4: kooplearn provides benchmark datasets, including systems with known ground-truth spectral decompositions, supporting rigorous evaluation of operator learning algorithms.

This framework supports robust comparisons, enabling quantitative evaluation of operator spectrum identification and forecasting performance.

Empirical Results and Claims

The paper presents strong empirical claims:

1. Superior spectral approximation: Reduced rank kernel estimators systematically outperform classical kernel and linear DMD for the computation of dominant eigenfunctions and eigenvalues.

2. State-of-the-art scalability: Randomized and Nyström-based strategies make kernel operator estimation fast in moderate to large data regimes, which the paper substantiates quantitatively with fit-time benchmarks on canonical chaotic systems such as Lorenz 63.

3. Enhanced data efficiency for SDEs: Generator-based learning approaches implemented in kooplearn achieve improved sample complexity and reduced bias compared to methods relying on trajectory data with explicit lag times.

Practical and Theoretical Implications

From a practical standpoint, kooplearn provides a unified, production-ready platform for evolution operator learning that integrates seamlessly into the scikit-learn/PyTorch/JAX ecosystem. This enables practitioners in fluid dynamics, molecular dynamics, and beyond to rapidly prototype and benchmark data-driven dynamical models, perform operator spectral analysis, and deploy reduced-order models.

On the research side, kooplearn’s modular design and benchmarking capacity could accelerate progress in operator-theoretic learning, particularly for high-dimensional nonlinear systems where kernel and deep learning methods are essential. The availability of infinitesimal generator estimation routines lowers the barrier for physically regularized and equilibrium-based kinetic modeling, with direct implications for computational chemistry, climate modeling, and materials science.

Looking forward, kooplearn’s extensible architecture is well-positioned to incorporate advances in operator learning, such as equivariant Gaussian processes, neural differential operators, and graph-based encoders, and to support systematic ablation studies and comparison with future state-of-the-art methods.

Conclusion

kooplearn establishes a new standard for reproducible, efficient, and extensible evolution operator learning. By systematically implementing and unifying linear, kernel, and deep operator estimators, including recent advances in reduced-rank regression and generator-based models, the platform provides robust tools for both theoretical algorithm development and practical scientific discovery. Its scikit-learn compatibility, computational optimizations, and curated benchmarks ensure broad impact in both machine learning and dynamical systems communities.