Geometric decomposition of information flow for overdamped Langevin systems and optimal transport in subsystems

Abstract: Information flow between subsystems is a central concept in information thermodynamics, which provides the second-law-like inequalities for subsystems. This paper discusses the geometric decomposition of information flow, which was introduced for Markov jump systems [Y Maekawa, R Nagayama, K Yoshimura and S Ito, arXiv:2509.21985 (2025)], and applies it to overdamped Langevin systems. For overdamped Langevin systems, the geometric decomposition of information flow into excess and housekeeping contributions is related to the conventional definition of the $2$-Wasserstein distance between marginal distributions in optimal transport theory. This formulation offers an optimal-transport interpretation of subsystem dynamics, and this optimal-transport formulation is simpler for overdamped Langevin systems than for general Markov jump systems. It is also possible to handle features that are specific to overdamped Langevin systems, such as representations based on the Koopman mode decomposition, as well as their relationship with the Fisher information matrix. As with the results for Markov jump systems, we generalize the second law of information thermodynamics using housekeeping and excess information flow, leading to the concept of excess and housekeeping demons. We also derive a thermodynamic uncertainty relation and an information-thermodynamic speed limit incorporating excess information flow. These results are illustrated for the Gaussian case, and we discuss the conditions under which the excess and housekeeping demons emerge.

Explain it Like I'm 14

Overview

This paper looks at how “information” moves between two parts of a noisy physical system and how that movement affects energy and entropy (disorder). The authors split this information movement into two types—excess and housekeeping—and show how each one matters for the rules of thermodynamics in small, noisy systems. They focus on a common model of random motion called the overdamped Langevin equation, and they connect their decomposition to optimal transport, a way of measuring the most efficient way to move probability around. They also introduce simple ways to understand when “Maxwell’s demons” appear—special situations where one part seems to reduce entropy by using information from the other part.

Key Questions

The paper asks, in simple terms:

- How can we divide the “information flow” between two interacting parts of a system into pieces that change correlations (excess) and pieces that keep them going (housekeeping)?

- How does this division show up in systems described by the overdamped Langevin equation (think: particles jiggling in water with strong friction)?

- Can this division be linked to optimal transport (the most efficient way to move probability) and used to sharpen thermodynamic rules for subsystems?

- What new limits does this give—like uncertainty relations or speed limits on how fast things can change?

- When do different kinds of “demons” (housekeeping vs. excess) appear, and what makes them possible?

What Methods Did They Use?

To keep the ideas approachable, here’s what the methods mean in everyday language:

- Overdamped Langevin dynamics: Imagine a tiny bead in thick honey, constantly shaken by random jitters. Because the honey is thick, inertia doesn’t matter; friction dominates. The bead’s position changes due to a force plus random noise. The whole system can be split into two parts, X and Y, that influence each other.

- Probability flows: Instead of tracking one bead, the authors track the probability of where the bead is. That probability flows like dye in water. This is described by the Fokker–Planck equation, a continuity equation for probability.

- Information flow: “Information” here measures how knowing the state of X helps you predict the state of Y (and vice versa). The rate at which this mutual information changes because of X or Y’s dynamics is called information flow. Think of it as “who is influencing whom” over time.

- Geometric decomposition: The authors split the “thermodynamic force” (which drives probability flow) into two parts:

- A conservative part (like a downhill push from a potential landscape), which they call “excess.”

- A nonconservative part (a circulation or swirl that can keep things moving even in a steady state), which they call “housekeeping.”

- This split uses a mathematical “projection” onto gradient fields and is solved by a function whose gradient gives the conservative part.

- Optimal transport and the 2-Wasserstein distance: Imagine moving a pile of sand from one shape to another with minimal effort. The 2-Wasserstein distance measures the least “work” to reshuffle probability from one distribution to another. The authors show the excess part of information flow is closely related to this optimal reshuffling between marginal distributions of X and Y. This gives a clean geometric picture: excess flow steers you along the most efficient path in “probability space.”

- Fisher information and Koopman modes:

- Fisher information tells you how sensitive the distribution of Y is to changes in X. It sets bounds on how much information can flow.

- Koopman mode decomposition breaks complex dynamics into simpler “patterns of motion,” helping analyze the housekeeping part.
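The overdamped Langevin picture in the list above (position changes due to a force plus random noise, with independent noise on X and Y) can be sketched numerically. The following is a minimal Euler–Maruyama sketch, not the paper's method: the linear force matrix `A`, the diagonal diffusion matrix `D`, and the function name `simulate_bipartite_langevin` are illustrative assumptions, with mobility and temperature absorbed into `A` and `D`.

```python
import numpy as np

def simulate_bipartite_langevin(A, D, n_traj=20000, n_steps=2000, dt=0.005, seed=0):
    """Euler-Maruyama integration of dz = A z dt + sqrt(2 D) dW for z = (x, y).

    D is assumed diagonal, so the noise acting on x is independent of the
    noise acting on y (the bipartite assumption).
    """
    rng = np.random.default_rng(seed)
    noise_scale = np.sqrt(2.0 * np.diag(D) * dt)   # per-coordinate noise amplitude
    z = np.zeros((n_traj, 2))                      # every trajectory starts at the origin
    for _ in range(n_steps):
        drift = z @ A.T                            # linear force (hypothetical model)
        z = z + drift * dt + noise_scale * rng.standard_normal(z.shape)
    return z

# Hypothetical example: the antisymmetric off-diagonal coupling makes the
# force nonconservative (it has a nonzero curl).
A = np.array([[-1.0, 0.5],
              [-0.5, -1.0]])
D = np.eye(2)

samples = simulate_bipartite_langevin(A, D)
empirical_cov = np.cov(samples.T)   # sample covariance of (x, y) at the final time
```

For this particular choice of `A` and `D`, the stationary covariance solving `A S + S A^T + 2 D = 0` is the identity matrix, so `empirical_cov` should be close to it up to sampling and time-discretization error.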

Main Findings

Here are the most important results and why they matter:

- A clear split of information flow: The information moving from X to Y (and from Y to X) can be separated into excess (which can change correlations) and housekeeping (which sustains correlations, especially in steady states).

- A simpler, continuous-state formulation: For systems with continuous states (like positions), this split is more straightforward than for discrete jump systems, and it connects naturally to optimal transport theory via the 2-Wasserstein distance.

- Generalized second law for subsystems: The usual second law says entropy production is nonnegative. For subsystems, apparent violations (negative entropy change) can happen—but they must be balanced by information flow. The authors refine this law by showing how the excess and housekeeping information flows enter separately, leading to sharper, subsystem-specific inequalities.

- Thermodynamic uncertainty relations (TURs) and speed limits: They derive new bounds that link how much a subsystem’s quantities can fluctuate (uncertainty) or how fast they can change (speed limits) to the excess information flow and Wasserstein geometry. This ties “how fast you can move” in probability space to “how much information you use.”

- Demons clarified:

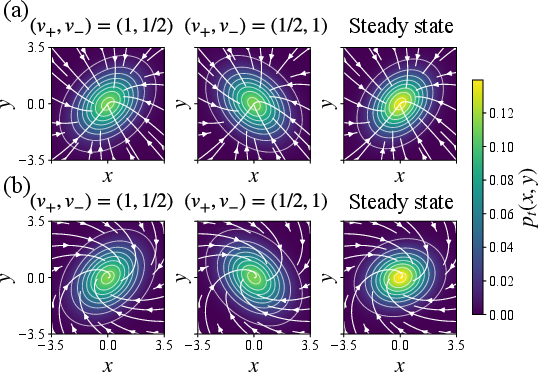

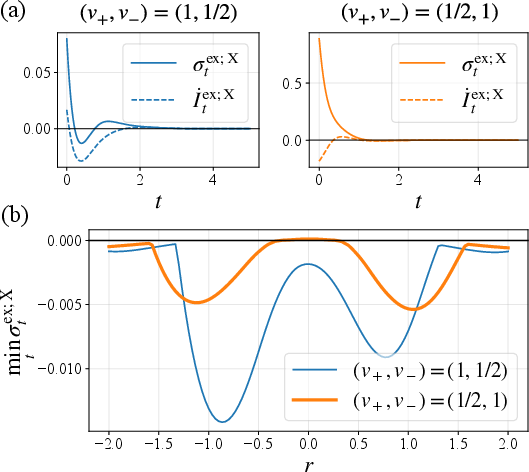

- Excess demon: Appears during transient (changing) dynamics; it uses excess information flow to make a subsystem’s apparent entropy change negative.

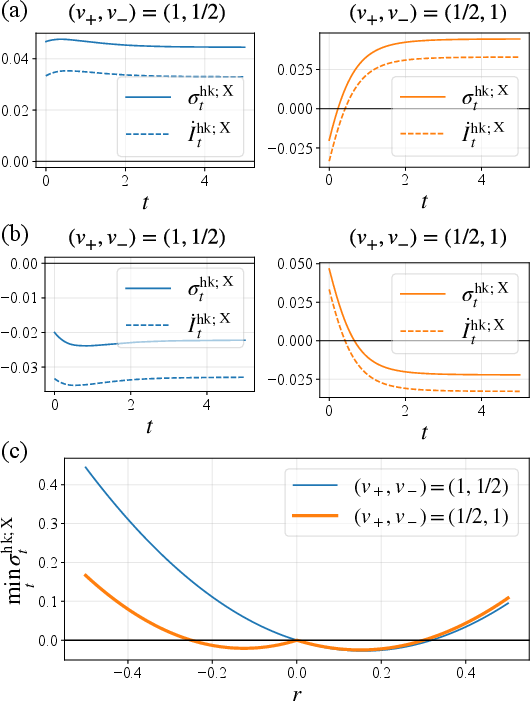

- Housekeeping demon: Appears when nonconservative forces are present; it uses housekeeping information flow and can operate even in steady states.

- The paper shows conditions for when each demon can exist, and illustrates them in the Gaussian case, where formulas simplify.

- Fisher-information bounds: They show that information flow is limited by Fisher information. Put simply, you can’t have unlimited influence; how “tunable” Y is by X sets a cap on usable information flow and on how much the second law can be “beaten” in appearance.

- Koopman decomposition for housekeeping: The housekeeping part (the sustained circulation) can be broken into clean signal-like modes, making it easier to analyze and interpret steady-state influences.
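To make the Gaussian case mentioned in the findings above more concrete, here is a small sketch that computes the two information flows at the steady state of a linear two-variable system. It assumes the model `dz = A z dt + sqrt(2 D) dW` with diagonal `D`, and defines the flow due to each variable as the average of its local mean velocity times the gradient of `ln[p(x,y) / (p_X(x) p_Y(y))]`. Sign conventions differ between references, so this convention, the matrices, and the function names are assumptions for illustration rather than the paper's notation.

```python
import numpy as np

def steady_state_covariance(A, D):
    """Solve the Lyapunov equation A S + S A^T + 2 D = 0 for S."""
    n = A.shape[0]
    eye = np.eye(n)
    lhs = np.kron(A, eye) + np.kron(eye, A)   # acts on the row-major vec of S
    S = np.linalg.solve(lhs, (-2.0 * D).reshape(-1)).reshape(n, n)
    return 0.5 * (S + S.T)                    # symmetrize against round-off

def gaussian_information_flows(A, D):
    """Information flows due to X and Y for a zero-mean Gaussian steady state."""
    S = steady_state_covariance(A, D)
    B = A + D @ np.linalg.inv(S)              # local mean velocity is nu(z) = B z
    BS = B @ S
    # flow_i = E[ nu_i * d/dz_i ln( p(x,y) / (p_X(x) p_Y(y)) ) ]
    flows = np.array([-B[i, i] + BS[i, i] / S[i, i] for i in range(2)])
    return flows, S

# Hypothetical example: Y evolves on its own and X is driven by Y.
A = np.array([[-1.0, 1.0],
              [0.0, -2.0]])
D = np.eye(2)

flows, S = gaussian_information_flows(A, D)
flow_x, flow_y = flows
```

In the steady state the mutual information is constant, so the two flows cancel (`flow_x + flow_y` is zero up to round-off). In this example `flow_x` is positive, consistent with X acting as the subsystem that tracks Y.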

Why This Is Important

This work builds a bridge between several powerful ideas:

- It gives a geometric, optimal-transport view of information and thermodynamics in interacting subsystems, making complex dynamics feel like efficient routes through probability space.

- It sharpens the second law for parts of a system, showing exactly how information can offset apparent entropy reductions, and how much is possible given sensitivity (Fisher information).

- It unifies tools—information flow, Wasserstein geometry, uncertainty relations, speed limits, and Koopman modes—into one framework for continuous systems.

- It clarifies when different “demons” can appear and how they use information, helping us understand autonomous information engines in physics, biology, and engineered nanosystems.

In short, the paper offers a cleaner, more intuitive way to understand and control how information drives thermodynamics in coupled, noisy systems—making it easier to design small devices or interpret living systems that use information to do useful work.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, consolidated list of what remains missing, uncertain, or unexplored in the paper. Each item is stated concretely to aid follow-up research.

- Scope restricted to overdamped Langevin dynamics: generalization of the housekeeping–excess information-flow decomposition to underdamped dynamics, colored/multiplicative noise, position-dependent mobility, and non-isothermal environments is not addressed.

- Bipartite noise assumption: the block-diagonal mobility/diffusion matrix excludes cross-correlated noise between subsystems; extending the framework to correlated baths or hydrodynamically coupled noise remains open.

- Two-subsystem setting: the decomposition and bounds are formulated for exactly two subsystems; extending to multiple interacting subsystems and networked architectures (including hierarchical or overlapping partitions) is left unexplored.

- Mathematical existence/uniqueness and regularity of the geometric potential φt: rigorous conditions under which the equation defining the geometric potential admits unique, well-posed solutions (including boundary conditions, domain regularity, and growth constraints) need to be formalized, especially in high dimensions.

- Computational methods for φt in practice: fast, scalable numerical PDE solvers (and error guarantees) for computing φt and the conservative projection in high-dimensional, non-Gaussian systems are not provided.

- Optimal transport link beyond constant diffusion: the relation to the 2-Wasserstein geometry is presented for constant diffusion; how the results adapt to state-dependent diffusion (weighted/warped Wasserstein metrics) or anisotropic, spatially varying noise is unclear.

- Monge–Kantorovich formulation: the asserted connection to Monge maps lacks precise conditions (absolute continuity, cyclic monotonicity, uniqueness) under which the subsystem excess term equals the OT cost; a rigorous treatment is needed.

- Discrete/continuous bridge: while the continuous case is said to be “simpler” than Markov jump systems, a formal mapping and tight equivalence of decompositions (including discrete Benamou–Brenier extensions) is not fully developed.

- General non-Gaussian dynamics: analytic and numerical results focus on Gaussian cases; the emergence and quantification of excess/housekeeping demons for nonlinear, non-Gaussian, and multimodal distributions are not studied.

- Tightness and attainability of TUR and speed-limit bounds: conditions under which the proposed bounds (incorporating excess information flow) are tight or saturable, and the classes of protocols achieving equality, are not characterized.

- Finite-time versus rate formulations: integrated finite-time bounds and their relation to rate inequalities (including boundary terms and time discretization effects) are not explicitly analyzed.

- Koopman mode decomposition: the Koopman-mode decomposition of the partial housekeeping entropy production (EP) rate is introduced but lacks algorithmic procedures, robustness analyses under noise, and guarantees for modal identification in nonlinear stochastic systems.

- Data-driven estimation: practical estimators (with bias–variance characterization) for information flow, conditional Fisher information, excess/housekeeping EP rates, and Wasserstein distances from finite time-series data are missing.

- Experimental validation: no experimental protocols or target systems (e.g., colloids, active matter, electronic circuits) are proposed to directly measure and validate the housekeeping/excess information flow, TURs, and speed limits at the subsystem level.

- Role of nonconservative forces: the emergence of the housekeeping demon is illustrated for Gaussian settings; general conditions (force topology, curl structure, and spectral properties) for housekeeping/excess demons in non-Gaussian, nonlinear systems remain uncharacterized.

- Causal interpretation and transfer entropy: while connections to transfer entropy and backward transfer entropy are noted, a precise causal framework (e.g., directed information, Granger causality) reconciling these quantities with the geometric decomposition is not established.

- Partial observation and hidden variables: the impact of unobserved degrees of freedom (coarse-graining) on the decomposition, bounds, and demon emergence is not analyzed; robustness to model misspecification and hidden coupling remains open.

- Steady-state antisymmetry reliance: the antisymmetric steady-state relation for information flows hinges on bipartite, Markovian assumptions; extensions to delayed coupling, time-dependent protocols, or non-Markovian memory kernels are not provided.

- Local excess/housekeeping rates: the definitions and variational characterizations of local rates lack discussion of gauge freedoms, coordinate dependence, and physical measurability (e.g., how to operationalize local rates in experiments).

- Boundary-term assumptions: the blanket assumption that boundary terms vanish (fast decay of densities) lacks a treatment for confined domains, reflecting boundaries, or absorbing boundaries; impacts on the decomposition and inequalities are unknown.

- Multiple heat baths and temperature heterogeneity: the theory assumes a single uniform temperature; generalization to distinct baths per subsystem (with different temperatures) and the resulting modifications to information-thermodynamic inequalities is absent.

- Interaction strength and coupling structure: sensitivity of the decomposition and bounds to strong coupling, time-dependent coupling, and non-potential interactions is not quantified.

- Entropic optimal transport vs. Wasserstein: the role of entropy-regularized OT (Schrödinger bridges) versus pure Wasserstein geometry in defining excess subsystem dynamics is not examined; potential advantages for noisy, data-driven settings remain unexplored.

- Protocol design: strategies to engineer or suppress excess/housekeeping demons via control (feedback, protocol shaping) and optimization over force fields are not provided.

- Uncertainty quantification: confidence intervals or probabilistic guarantees for estimated information flows and Fisher influences are not developed, limiting empirical application.

- Extension to quantum and hybrid systems: whether the decomposition and bounds have quantum analogues (e.g., Lindbladian dynamics, quantum Fisher information) or hybrid classical–quantum systems is left open.

- Generalization to pathwise formulations: pathwise (trajectory-level) definitions of excess/housekeeping information flow and EP, and their fluctuation theorems, are not discussed.

- Relationship to curvature and gradient-flow structures: the connection to entropic curvature, EVI (Evolution Variational Inequalities), and JKO (Jordan–Kinderlehrer–Otto) schemes in the subsystem setting needs formalization to clarify geometric control of nonequilibrium coupling.

Practical Applications

Immediate Applications

Below are actionable use cases that can be deployed with today’s methods and data, derived from the paper’s decomposition of information flow (excess vs housekeeping), optimal-transport interpretation (2‑Wasserstein), generalized second laws for subsystems, TURs, speed limits, Koopman-mode links, and Fisher-information bounds.

- Analysis toolkit for coupled stochastic experiments (e.g., optical tweezers, single-molecule biophysics)

- Sectors: healthcare/biotech, materials science, soft matter

- What to do: From trajectory data, estimate diffusion matrices and drift, compute excess/housekeeping information flow, partial entropy production, and test the generalized second-law inequalities; use Gaussian-case formulas where appropriate to rapidly screen regimes with “excess” versus “housekeeping” demons.

- Tools/workflows: SDE parameter estimation + OT solvers (POT/GeomLoss/OTT-JAX), Benamou–Brenier variational solvers, mutual-information and Fisher-information estimators, pykoopman for Koopman modes.

- Assumptions/dependencies: Overdamped Langevin regime; bipartite (block-diagonal noise); sufficient data to estimate p_t, fluxes, and gradients; negligible boundary terms; known/estimable diffusion.

- Energy-aware feedback control in micromanipulation and micro-robotics

- Sectors: robotics, precision instrumentation

- What to do: Design feedback protocols that exploit excess information flow in transients and minimize housekeeping costs in steady operation; plan distributions along Wasserstein geodesics to reduce dissipation while achieving target marginals.

- Tools/workflows: Real-time OT-based trajectory planning; controller objectives that penalize housekeeping entropy; online estimation of information flow; integration with optical/magnetic tweezer controllers.

- Assumptions/dependencies: Accurate online inference of marginals and diffusion; real-time solvability of OT subproblems; system approximated by overdamped Langevin dynamics.

- Certification and auditing of sensing/IoT energy budgets via information-thermodynamic bounds

- Sectors: energy, IoT, embedded systems

- What to do: Use Fisher-information bounds and the subsystem second-law to compute lower bounds on energy per bit of information extracted; certify whether devices operate near limits or are over-spending energy to sustain correlations (housekeeping).

- Tools/workflows: On-device telemetry to estimate mutual information rates, diffusion surrogates from calibration; dashboards showing the partial entropy production rate σX, the information flow İX, and Fisher-based lower bounds; regression to detect nonconservative forcing.

- Assumptions/dependencies: Ability to estimate conditional/marginal densities; stationary vs transient phases identified; measurement noise characterized.

- Causal-influence diagnostics that separate transient and steady coupling

- Sectors: neuroscience, econometrics/finance, climate science

- What to do: Decompose directional information exchange into excess (transient, correlation-changing) versus housekeeping (steady, correlation-maintaining) components to distinguish shock propagation from persistent driving.

- Tools/workflows: Continuous-time info-flow estimators; Wasserstein distance between marginals across time; Koopman-mode analysis to attribute steady driving; false-discovery control via TUR-based uncertainty bounds.

- Assumptions/dependencies: Time series admit diffusion approximations; sufficiently smooth densities; block-diagonalizable noise at subsystem level or justified approximation.

- Uncertainty quantification using Wasserstein-based TURs in forecasting and control

- Sectors: finance, operations, climate/energy systems

- What to do: Bound estimator variance and control performance using TURs that incorporate excess information flow and 2‑Wasserstein geometry between marginals; use speed limits to assess minimum time to achieve distributional targets.

- Tools/workflows: Add TUR and speed-limit (SL) constraints to forecasting/risk engines and model predictive control (MPC) solvers; scenario analysis that flags infeasible performance promises.

- Assumptions/dependencies: Models provide access to fluxes or surrogate drifts; calibrated diffusion; reliable estimation windows.

- Diagnostics for learned SDEs and generative models

- Sectors: software, machine learning

- What to do: Post-train audits that compute housekeeping vs excess entropy production and information flow to check if learned dynamics respect the generalized second laws and TUR/SL bounds; detect spurious nonconservative couplings.

- Tools/workflows: Apply decomposition to diffusion models, neural SDEs; penalty terms during training for excessive housekeeping cost; monitoring dashboards.

- Assumptions/dependencies: Model yields differentiable log-density or score; access to synthetic trajectories; ergodicity/overdamped approximation acceptable.

- Protocol design for information engines in tabletop experiments

- Sectors: physics labs, education

- What to do: Design measurement/feedback schedules to create “excess demons” during transients and suppress/enable “housekeeping demons” via controlled nonconservative forcing; demonstrate apparent second-law violations compensated by information flow.

- Tools/workflows: Controlled potentials (e.g., optical traps), feedback timing synchronized to predicted excess windows; evaluate entropy-accounting equality with the measured information flow (İ) and entropy production rate (σ).

- Assumptions/dependencies: Accurate control of nonconservative forces; robust estimation of mutual information dynamics; Gaussian approximations for rapid prototyping.

- Curriculum modules and visualization of information–energy trade-offs

- Sectors: education, training

- What to do: Use the optimal-transport geometry and Koopman perspective to teach nonequilibrium thermodynamics of subsystems; lab modules that visualize Wasserstein geodesics and info-flow decomposition.

- Tools/workflows: Notebooks combining POT/pykoopman/mutual-information estimators; interactive demos.

- Assumptions/dependencies: Access to compute and data; simplified 2D Gaussian examples.
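As a rough illustration of the first workflow step listed above (estimating drift and diffusion from trajectory data), the sketch below fits a linear drift matrix by least squares and reads the diffusion matrix off the residual increments. It assumes the model `dz = A z dt + sqrt(2 D) dW` with constant `D`. The synthetic trajectory, the parameter values, and the function name `estimate_linear_drift_and_diffusion` are hypothetical, and real data would need a check that a linear, overdamped model is adequate.

```python
import numpy as np

def estimate_linear_drift_and_diffusion(traj, dt):
    """Estimate A and D for dz = A z dt + sqrt(2 D) dW from one sampled trajectory.

    traj has shape (n_samples, dim). A linear drift and a constant diffusion
    matrix are assumed.
    """
    z = traj[:-1]
    dz = np.diff(traj, axis=0)
    # Least-squares fit of dz/dt ~ z A^T (first Kramers-Moyal coefficient, linear ansatz)
    coeffs, *_ = np.linalg.lstsq(z, dz / dt, rcond=None)
    A_hat = coeffs.T
    # Residual increments carry the noise: Cov(residual) ~ 2 D dt
    resid = dz - (z @ A_hat.T) * dt
    D_hat = resid.T @ resid / (2.0 * dt * len(resid))
    return A_hat, D_hat

# Synthetic test data generated with Euler-Maruyama (hypothetical parameters).
rng = np.random.default_rng(1)
A_true = np.array([[-1.0, 1.0],
                   [0.0, -2.0]])
D_true = np.eye(2)
dt, n_steps = 0.02, 50000
noise = np.sqrt(2.0 * np.diag(D_true) * dt) * rng.standard_normal((n_steps, 2))
traj = np.zeros((n_steps + 1, 2))
for k in range(n_steps):
    traj[k + 1] = traj[k] + A_true @ traj[k] * dt + noise[k]

A_hat, D_hat = estimate_linear_drift_and_diffusion(traj, dt)
```

The estimated matrices could then be passed to whatever routine computes information flows or entropy production for the fitted model. The accuracy of `A_hat` improves with total observation time, while `D_hat` mainly depends on the number of samples.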

Long-Term Applications

These rely on further research, scaling, or technology maturation (e.g., robust real-time OT solvers, nanoscale actuation, broader model classes).

- Autonomous information engines and low-power computation at the nanoscale

- Sectors: nanoelectronics, synthetic biology, lab-on-chip

- What to build: Devices that harvest/work with correlations by targeting excess information flow in transients and sustaining function via minimal housekeeping; logic or memory elements that use nonconservative forces to maintain correlations at low energy cost.

- Dependencies: Reliable nanoscale actuation/sensing; robust estimation of information flow in situ; materials enabling precise nonconservative driving; integration with biochemical/CMOS platforms.

- Energy-aware AI and data-center policy based on information–thermodynamic auditing

- Sectors: policy, cloud computing

- What to do: Operational policies that cap or price “housekeeping” information maintenance across services; SL/TUR-informed SLAs for streaming/edge inference; procurement standards referencing minimal entropy budgets.

- Dependencies: Standardized telemetry for information-flow metrics; accepted benchmarks; regulatory alignment; scalable estimators for large distributed systems.

- Swarm and multi-agent robotics coordinating via minimal housekeeping costs

- Sectors: robotics, logistics

- What to build: Coordination protocols that maintain necessary correlations among agents at minimal energetic housekeeping cost, using Wasserstein-based planning for transient phases and Koopman diagnostics for steady coordination.

- Dependencies: Fast distributed OT solvers; accurate diffusion approximations of agent interactions; resilience to non-ideal noise correlations.

- Smart-grid and infrastructure control with speed limits

- Sectors: energy, transportation

- What to do: Use information-thermodynamic speed limits to design safe, minimal-energy transitions between operating points; identify when persistent nonconservative couplings (housekeeping) are required for stability.

- Dependencies: Stochastic state estimation at system scale; validated diffusion surrogates; policy acceptance of physics-based operational bounds.

- Clinical and physiological subsystem monitoring

- Sectors: healthcare, digital health

- What to do: Detect pathological nonconservative couplings (e.g., cardio-respiratory, neurovascular) by tracking housekeeping vs excess information flows; guide interventions that reduce harmful housekeeping costs.

- Dependencies: High-fidelity continuous biosignals; validated diffusion models for physiology; clinical trials linking decomposition metrics to outcomes.

- Standards for thermodynamic compliance and green sensing

- Sectors: standards bodies, manufacturing

- What to do: ISO-like frameworks that certify devices against TUR/SL-informed energy–information metrics; product labels indicating minimal energy per unit of actionable information.

- Dependencies: Consensus test protocols; reference datasets; cross-industry buy-in; tooling for third-party audits.

- Quantum generalizations for open-system control and certification

- Sectors: quantum technologies

- What to build: Quantum analogues of the housekeeping/excess decomposition for Lindbladian dynamics; certification of quantum controllers against information–thermodynamic speed limits.

- Dependencies: Theoretical extension beyond overdamped Langevin; experimental access to quantum mutual information rates; robust tomography/estimation.

- Active matter and programmable materials with embedded information processing

- Sectors: materials, soft robotics

- What to do: Design microstructures where nonconservative forces maintain desired correlations (housekeeping) to yield macroscopic functions; exploit excess phases for reconfiguration.

- Dependencies: Material platforms with tunable active driving; multiscale modeling to map decomposition metrics to bulk properties; in situ measurement of subsystem marginals.

- Industrial process monitoring via Koopman-mode housekeeping metrics

- Sectors: manufacturing, process engineering

- What to do: Use Koopman decomposition of housekeeping entropy in sensor networks to detect persistent exogenous driving vs transient disturbances; trigger maintenance or control actions accordingly.

- Dependencies: Stable Koopman operator identification; dense time-series; validated mappings from modes to physical drivers.

Notes on cross-cutting assumptions and feasibility

- Model class: Results assume overdamped Langevin (Markov diffusion), bipartite subsystems with uncorrelated noise blocks, smooth densities, and vanishing boundary terms. Extensions to underdamped, non-Markovian, or correlated-noise systems need care.

- Estimation: Many applications require reliable estimation of marginal/conditional densities, fluxes, and Fisher information from finite data; bias/variance trade-offs can limit fidelity.

- Computation: Solving Benamou–Brenier problems and geometric potentials in high dimensions is computationally demanding; scalable approximations (entropic OT, low-rank maps, score models) are often needed.

- Experiment–model mismatch: Deviations from diffusion approximations (jumps, delays) and unknown nonconservative forces can confound attributions; robustness analyses are advisable.

Glossary

- 2-Wasserstein distance: A metric on probability distributions in optimal transport measuring minimal quadratic transport cost between distributions. "For overdamped Langevin systems, the geometric decomposition of information flow into excess and housekeeping contributions is related to the conventional definition of the $2$-Wasserstein distance between marginal distributions in optimal transport theory."

- Affinities: Thermodynamic driving forces that sustain nonequilibrium currents, often associated with cycles in networked systems. "Such asymmetries can be detected using probability fluxes~\cite{schnakenberg1976, sekimoto2010stochastic,de2013non, seifert2025stochastic}, affinities~\cite{schnakenberg1976, sekimoto2010stochastic,de2013non,seifert2025stochastic}, cross-correlation functions~\cite{casimir1945, ohga2023}, or response functions~\cite{Onsager1931,Onsager1931-2, Owen2020}, and reflect a violation of the detailed balance condition."

- Autonomous Maxwell's demon: A steady-state information-processing subsystem that induces apparent second-law violations without external measurement-feedback control. "Since then, it has provided a theoretical explanation for the phenomenon of the autonomous Maxwell's demon~\cite{mandal2012,strasberg2013}, often referred to as the autonomous demon, which can work in the steady state."

- Backward transfer entropy: An information-theoretic measure of reverse-time influence, used to characterize non-Markovian and feedback systems. "This representation can also be rewritten as a contribution involving simple simultaneous mutual information, the transfer entropy, and the backward transfer entropy (see Refs.~\cite{ito2015maxwell,ito2016backward})."

- Benamou–Brenier formula: The dynamic optimal transport formulation expressing Wasserstein distance via minimal kinetic energy under a continuity constraint. "Unlike discrete-state formulations based on extensions of the Benamou–Brenier formula~\cite{maas2011gradient,yoshimura2023housekeeping}, the continuous-state approach based on the Benamou-Brenier formula~\cite{benamou2000computational} considered here is also related to the Monge–Kantorovich formulation of optimal transport~\cite{villani2008optimal}."

- Bipartite systems: Stochastic systems partitioned into two subsystems with block-diagonal noise, enabling partial thermodynamic quantities and information flows. "Among these formulations, a specific expression~\cite{Allahverdyan_2009,ito2013,Hartich_2014,horowitzesposito2014} defined for a restricted class of Markovian dynamics, known as bipartite systems, has attracted attention in recent years~\cite{amano2022insights,Ryota2022,Leignton2024}."

- Clausius heat theorem: The thermodynamic relation linking entropy change to heat exchanged divided by temperature. "This expression corresponds to the Clausius heat theorem in classical thermodynamics."

- Conditional Fisher information matrix: A matrix quantifying sensitivity of the conditional distribution p(Y|X) to changes in X, used to bound information flow. "with the conditional Fisher information matrix"

- Cross-correlation functions: Statistical tools measuring correlations between two signals at different times, used to detect directional asymmetries. "Such asymmetries can be detected using probability fluxes~\cite{schnakenberg1976, sekimoto2010stochastic,de2013non, seifert2025stochastic}, affinities~\cite{schnakenberg1976, sekimoto2010stochastic,de2013non,seifert2025stochastic}, cross-correlation functions~\cite{casimir1945, ohga2023}, or response functions~\cite{Onsager1931,Onsager1931-2, Owen2020}, and reflect a violation of the detailed balance condition."

- Detailed balance condition: The equilibrium constraint ensuring microscopic reversibility and zero net probability currents. "Such asymmetries can be detected using probability fluxes~\cite{schnakenberg1976, sekimoto2010stochastic,de2013non, seifert2025stochastic}, affinities~\cite{schnakenberg1976, sekimoto2010stochastic,de2013non,seifert2025stochastic}, cross-correlation functions~\cite{casimir1945, ohga2023}, or response functions~\cite{Onsager1931,Onsager1931-2, Owen2020}, and reflect a violation of the detailed balance condition."

- Dirac delta function: A generalized function representing an idealized point mass, used to describe white-noise correlations. "where stands for the expected value, stands for the Dirac delta function, and is the identity matrix."

- Differential entropy: The continuous analogue of Shannon entropy, defined as the integral of −p log p over state space. "This entropy can be regarded as the differential entropy "

- Fisher information matrix: A measure of the sensitivity of a distribution to parameter changes; here related to conditional influences and bounds. "as well as their relationship with the Fisher information matrix."

- Fokker-Planck equation: A partial differential equation describing the time evolution of probability densities for diffusion processes. "Its time evolution corresponding to Eq.~\eqref{Langevineq} can be described by the Fokker-Planck equation, which is given by the following continuity equation"

- Housekeeping demon: A conceptual agent that sustains nonconservative steady-state correlations to cause apparent entropy reductions in a subsystem. "The housekeeping demon and the excess demon are introduced to provide apparent violations of the generalized second law of thermodynamics via housekeeping information flow and excess information flow, respectively (see also Fig.~\ref{exhkdemon}(b))."

- Housekeeping entropy production rate: The nonconservative part of entropy production associated with maintaining steady currents or correlations. "We further develop a Koopman-mode decomposition of the partial housekeeping entropy production rate (Sec.~\ref{sec3c})"

- Housekeeping information flow: The nonconservative component of information flow that maintains correlations in steady operation. "The housekeeping demon in system uses housekeeping information flow to make the apparent housekeeping entropy change rate in system negative."

- Itô calculus: The framework for stochastic integration under the Itô interpretation, governing the differentials of stochastic differential equations (SDEs). "where we used Ito calculus "

- Koopman mode decomposition: A spectral analysis of dynamical systems via the Koopman operator acting on observables, yielding modal structure. "such as representations based on the Koopman mode decomposition"

- Mobility matrix: A positive-definite matrix mapping forces to velocities in overdamped dynamics, related to diffusion via Einstein relation. "and is the mobility matrix that is assumed to be positive definite."

- Monge–Kantorovich formulation: The static optimal transport framework minimizing cost over couplings between distributions. "related to the Monge–Kantorovich formulation of optimal transport~\cite{villani2008optimal}."

- Onsager matrix: The linear-response coefficient matrix relating thermodynamic forces to fluxes, here $p_t \mathsf{D}$ in Langevin systems. "quantity can be regarded as the Onsager matrix."

- Optimal transport theory: The mathematical theory of transporting probability mass optimally under a cost, connected to thermodynamic bounds. "Restricting our analysis to continuous-state Langevin systems clarifies the connection to optimal transport theory~\cite{villani2008optimal,ito2024geometric}, within which a natural decomposition into housekeeping and excess contributions~\cite{ito2024geometric,dechant2022geometric,dechant2022geometric2,yoshimura2023housekeeping,nagayama2025geometric} emerges."

- Overdamped Langevin equation: A stochastic differential equation describing friction-dominated dynamics with Gaussian noise. "We now consider the -dimensional overdamped Langevin equation"

- Partial entropy production rate: The contribution to entropy production attributable to a subsystem in a bipartite system. "the total entropy production rate can be decomposed into the partial entropy production rates of the subsystems $\dot{\Sigma}_t^{\rm X} :=\langle \boldsymbol{f}^{\rm X}_t, \boldsymbol{f}^{\rm X}_t\rangle_{p_t \mathsf{D}^{\rm X}}$ and $\dot{\Sigma}_t^{\rm Y} :=\langle \boldsymbol{f}^{\rm Y}_t, \boldsymbol{f}^{\rm Y}_t\rangle_{p_t \mathsf{D}^{\rm Y}}$"

- Probability fluxes: Vector fields representing the flow of probability in state space, indicating nonequilibrium asymmetries. "Such asymmetries can be detected using probability fluxes~\cite{schnakenberg1976, sekimoto2010stochastic,de2013non, seifert2025stochastic}, affinities~\cite{schnakenberg1976, sekimoto2010stochastic,de2013non,seifert2025stochastic}, cross-correlation functions~\cite{casimir1945, ohga2023}, or response functions~\cite{Onsager1931,Onsager1931-2, Owen2020}, and reflect a violation of the detailed balance condition."

- Response functions: Quantities characterizing how systems respond to perturbations, central to linear response theory. "Such asymmetries can be detected using probability fluxes~\cite{schnakenberg1976, sekimoto2010stochastic,de2013non, seifert2025stochastic}, affinities~\cite{schnakenberg1976, sekimoto2010stochastic,de2013non,seifert2025stochastic}, cross-correlation functions~\cite{casimir1945, ohga2023}, or response functions~\cite{Onsager1931,Onsager1931-2, Owen2020}, and reflect a violation of the detailed balance condition."

- Stratonovich discretization: A midpoint interpretation of stochastic integrals yielding chain-rule-consistent calculus. "where stands for the Stratonovich discretization."

- Thermodynamic force: The effective driving field combining physical force and probability gradient, appearing in flux–force relations. "where is the diffusion matrix, is the thermodynamic force."

- Thermodynamic speed limit: A bound on the minimal time required for state transformation given dissipation/metrics, here via Wasserstein geometry. "We also derive a thermodynamic uncertainty relation and an information-thermodynamic speed limit incorporating excess information flow."

- Thermodynamic uncertainty relation: A universal trade-off between current precision and entropy production in nonequilibrium systems. "We also derive a thermodynamic uncertainty relation and an information-thermodynamic speed limit incorporating excess information flow."

- Transfer entropy: A directional measure of information flow capturing how one process influences another’s future. "This representation can also be rewritten as a contribution involving simple simultaneous mutual information, the transfer entropy, and the backward transfer entropy (see Refs.~\cite{ito2015maxwell,ito2016backward})."
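
Three of the entries above (overdamped Langevin equation, mobility matrix, differential entropy) can be tied together in a small numerical sketch. The example below is not taken from the paper: the one-dimensional harmonic potential, the parameter values, the function names, and the choice of units with $k_{\rm B} = 1$ are all assumptions made for illustration. It integrates $dx = -\mu k x\,dt + \sqrt{2\mu T}\,dW$ with the Euler-Maruyama scheme, using the Einstein relation $D = \mu T$, and compares the closed-form differential entropy of a Gaussian with a direct numerical integral of $-p \log p$.

```python
import math
import random


def simulate_overdamped_langevin(k=1.0, mu=1.0, temperature=1.0,
                                 dt=0.01, n_steps=200_000, seed=0):
    """Euler-Maruyama integration of dx = -mu*k*x dt + sqrt(2*mu*T) dW.

    Assumed setup (not from the paper): 1D harmonic potential U = k x^2 / 2,
    scalar mobility mu, and units with k_B = 1.
    """
    rng = random.Random(seed)
    diffusion = mu * temperature  # Einstein relation D = mu * T
    noise_scale = math.sqrt(2.0 * diffusion * dt)
    x = 0.0
    samples = []
    for _ in range(n_steps):
        x += -mu * k * x * dt + noise_scale * rng.gauss(0.0, 1.0)
        samples.append(x)
    return samples


def sample_variance(samples):
    """Plain (biased) variance estimate of a list of floats."""
    mean = sum(samples) / len(samples)
    return sum((s - mean) ** 2 for s in samples) / len(samples)


def gaussian_differential_entropy(variance):
    """Closed form: -integral of p log p for a 1D Gaussian, in nats."""
    return 0.5 * math.log(2.0 * math.pi * math.e * variance)


def numerical_differential_entropy(variance, half_width=12.0, n_grid=20001):
    """Riemann-sum approximation of -integral p log p for a zero-mean Gaussian."""
    sigma = math.sqrt(variance)
    dx = 2.0 * half_width * sigma / (n_grid - 1)
    total = 0.0
    for i in range(n_grid):
        x = -half_width * sigma + i * dx
        p = math.exp(-x * x / (2.0 * variance)) / math.sqrt(2.0 * math.pi * variance)
        if p > 0.0:
            total -= p * math.log(p) * dx
    return total


if __name__ == "__main__":
    trajectory = simulate_overdamped_langevin()
    # Drop a short initial transient before estimating the stationary variance.
    var = sample_variance(trajectory[1000:])
    print("estimated stationary variance:", var)  # theory: T / k
    print("entropy from estimated variance:", gaussian_differential_entropy(var))
    print("entropy for variance T / k = 1:", gaussian_differential_entropy(1.0))
```

For this assumed potential the stationary distribution is Gaussian with variance $T/k$, so the estimated variance should sit near 1 for the default parameters, up to sampling noise and a small time-step bias of the Euler-Maruyama scheme.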
