GaMO: Geometry-aware Multi-view Diffusion Outpainting for Sparse-View 3D Reconstruction

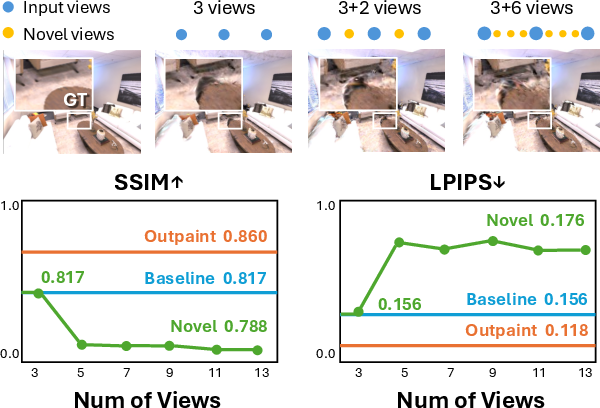

Abstract: Recent advances in 3D reconstruction have achieved remarkable progress in high-quality scene capture from dense multi-view imagery, yet struggle when input views are limited. Various approaches, including regularization techniques, semantic priors, and geometric constraints, have been implemented to address this challenge. Latest diffusion-based methods have demonstrated substantial improvements by generating novel views from new camera poses to augment training data, surpassing earlier regularization and prior-based techniques. Despite this progress, we identify three critical limitations in these state-of-the-art approaches: inadequate coverage beyond known view peripheries, geometric inconsistencies across generated views, and computationally expensive pipelines. We introduce GaMO (Geometry-aware Multi-view Outpainter), a framework that reformulates sparse-view reconstruction through multi-view outpainting. Instead of generating new viewpoints, GaMO expands the field of view from existing camera poses, which inherently preserves geometric consistency while providing broader scene coverage. Our approach employs multi-view conditioning and geometry-aware denoising strategies in a zero-shot manner without training. Extensive experiments on Replica and ScanNet++ demonstrate state-of-the-art reconstruction quality across 3, 6, and 9 input views, outperforming prior methods in PSNR and LPIPS, while achieving a $25\times$ speedup over SOTA diffusion-based methods with processing time under 10 minutes. Project page: https://yichuanh.github.io/GaMO/

Explain it Like I'm 14

Overview: What is this paper about?

This paper is about building 3D models of a place (like a room) from only a few photos. That’s hard because the camera doesn’t see everything, so the 3D model often has holes, blurry edges, or weird “ghosting” effects. The authors introduce a new method called GaMO that “outpaints” each photo—meaning it carefully extends the image beyond its original edges—so the 3D model has more complete and consistent information to work with.

Think of it like finishing a jigsaw puzzle when you don’t have all the pieces: instead of guessing whole new pictures from different angles, GaMO expands the edges of the pieces you already have so they fit together better.

What questions are the researchers trying to answer?

The paper focuses on three easy-to-understand goals:

- How can we fix missing parts around the edges of photos so the 3D scene has fewer holes and blurry areas?

- How can we keep the geometry (the shape and layout of things) consistent across all the photos, so there’s less ghosting or misalignment?

- How can we make this process faster and simpler than methods that generate lots of new camera views?

How did they do it? (Methods explained simply)

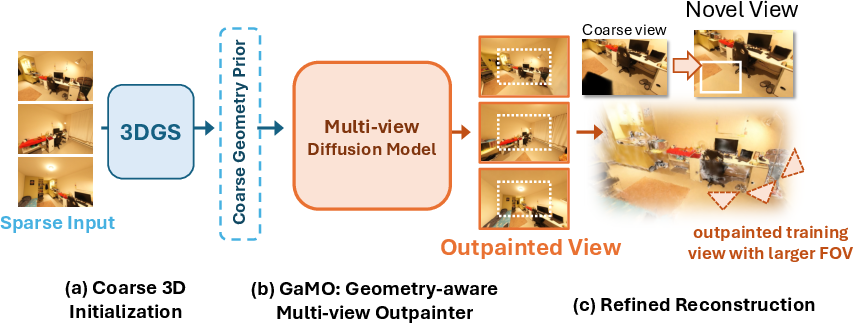

The method works in three main stages. Here’s the big picture:

- Coarse 3D setup

- The system first creates a rough 3D model from the few input photos. Imagine placing lots of tiny, see-through “paint blobs” (called 3D Gaussians) in space to roughly shape the scene.

- From this rough model, it makes:

- An opacity mask: a map showing where the model is solid and where it’s empty. Empty areas at the photo edges are the places that need outpainting.

- A coarse color render: a low-detail image of what the scene might look like from each camera, used as a guide.

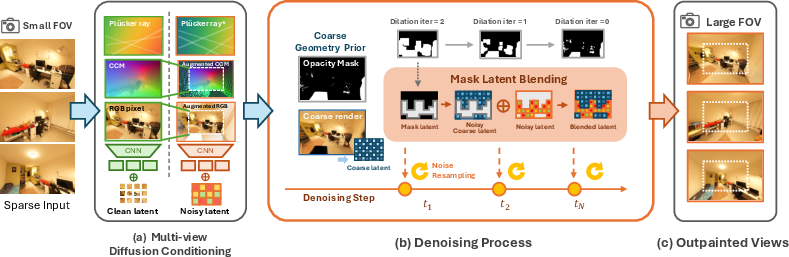

- Geometry-aware multi-view outpainting

- Instead of inventing totally new camera angles, GaMO expands the existing photos’ field of view (FOV). Think of the camera “zooming out” to see more around the edges.

- It uses a diffusion model (a type of AI that starts with noisy images and repeatedly “cleans” them to produce realistic pictures) to fill in the missing parts.

- To keep everything aligned and believable, GaMO adds geometry cues:

- Camera ray info (a way to describe where each pixel looks into the scene) so the AI knows the 3D directions from the camera.

- Warped copies of the original image and its internal coordinates, so the center stays true to the input and the edges follow the scene’s structure.

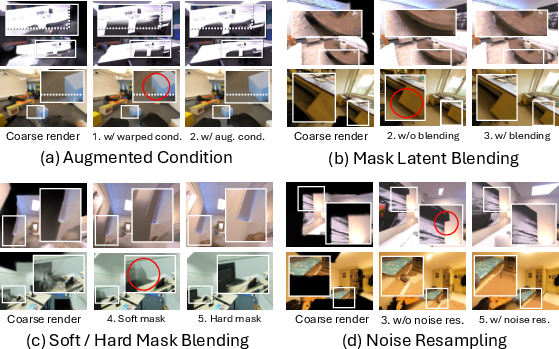

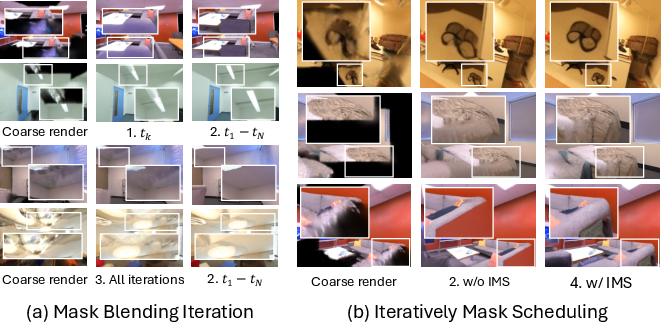

- While denoising, it blends in the coarse render only in the empty regions (guided by the opacity mask). This happens at a few carefully chosen steps and gradually shrinks the masked area, so the outpainted content blends smoothly with known parts.

- It also resamples noise near the boundaries to avoid sharp seams—like gently smudging lines so two patches match.

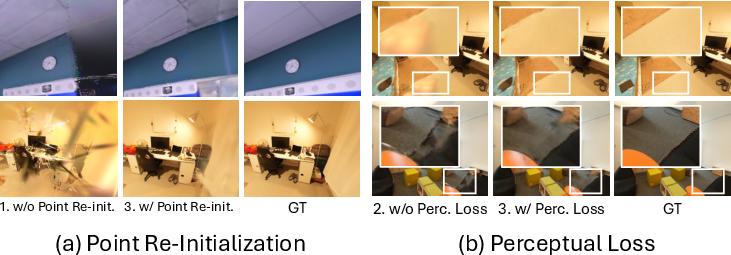

- Refine the 3D model

- Finally, the improved, outpainted images are used to retrain the 3D Gaussians. This fills holes and sharpens geometry, making the final 3D scene look better from new viewpoints.
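The opacity mask and the mask-guided blending described in the stages above can be illustrated with a minimal sketch. This is not the authors' implementation: it operates on small nested lists rather than diffusion latents, the names `opacity_mask`, `shrink_mask`, and `blend` are hypothetical, the threshold value is arbitrary, and one-pixel erosion is only one plausible way to shrink a mask gradually. Which source fills the masked region at each denoising step is a detail of the paper that is not reproduced here.

```python
def opacity_mask(opacity, eta):
    """Return 1 where rendered opacity is below eta (candidate outpaint region), else 0."""
    return [[1 if o < eta else 0 for o in row] for row in opacity]


def shrink_mask(mask):
    """Erode the mask by one pixel: a cell stays 1 only if it and all of its
    in-bounds 4-neighbours are 1 (image borders themselves do not erode it)."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            neighbours = [(y, x), (y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)]
            out[y][x] = int(all(
                mask[ny][nx] == 1
                for ny, nx in neighbours
                if 0 <= ny < h and 0 <= nx < w
            ))
    return out


def blend(inside, outside, mask):
    """Take values from `inside` where mask == 1 and from `outside` where mask == 0."""
    return [
        [i if m == 1 else o for i, o, m in zip(row_i, row_o, row_m)]
        for row_i, row_o, row_m in zip(inside, outside, mask)
    ]


# Toy 1x6 opacity row: the centre is well covered, the edges are empty.
opacity = [[0.0, 0.1, 0.9, 1.0, 0.2, 0.0]]
mask = opacity_mask(opacity, eta=0.5)   # hypothetical threshold
smaller = shrink_mask(mask)             # masked area after one shrink step
mixed = blend([["g"] * 6], [["c"] * 6], mask)
```

Here `mask` marks the four edge cells, `smaller` keeps only the two outermost ones, and `mixed` shows how two sources are combined per pixel once a mask is fixed.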

Simple analogies for the technical bits

- 3D Gaussian Splatting: Imagine building a 3D scene out of thousands of tiny, soft marbles of color. When you view the scene from a camera, these marbles blend together to form the image.

- Diffusion model: Picture an artist starting with a very noisy sketch and repeatedly erasing noise while adding details until the image looks realistic.

- Outpainting: Extending a photo beyond its borders, like imagining what’s just off the edge of a picture and drawing it in.

- Field of View (FOV): How wide the camera can see—narrow FOV is like tunnel vision; a wide FOV shows more of the surroundings.
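To make the FOV analogy concrete, the sketch below computes how much wider a pinhole camera sees when its focal length is reduced. It assumes an ideal pinhole camera with the principal point at the image centre and an unchanged output resolution. The camera values and the scaling factor of 0.5 are hypothetical, chosen only for illustration, and the function names are not from the paper.

```python
import math


def horizontal_fov_deg(image_width, focal_length):
    """Horizontal field of view of a pinhole camera, in degrees."""
    return math.degrees(2.0 * math.atan(image_width / (2.0 * focal_length)))


def scaled_focal(focal_length, scale):
    """Reduce the focal length by `scale` (0 < scale <= 1) to widen the FOV."""
    if not 0.0 < scale <= 1.0:
        raise ValueError("scale must be in (0, 1]")
    return focal_length * scale


width, focal = 640, 320.0   # hypothetical camera, in pixels
scale = 0.5                 # hypothetical focal-length scaling ratio

original_fov = horizontal_fov_deg(width, focal)
expanded_fov = horizontal_fov_deg(width, scaled_focal(focal, scale))

# At the same output resolution, the original picture now occupies only the
# central `scale` fraction of the width; the remainder must be outpainted.
known_width = width * scale
```

With these numbers the horizontal FOV grows from 90 degrees to roughly 127 degrees, and the original photo fills only the central 320 of 640 pixels.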

What did they find, and why does it matter?

Key results:

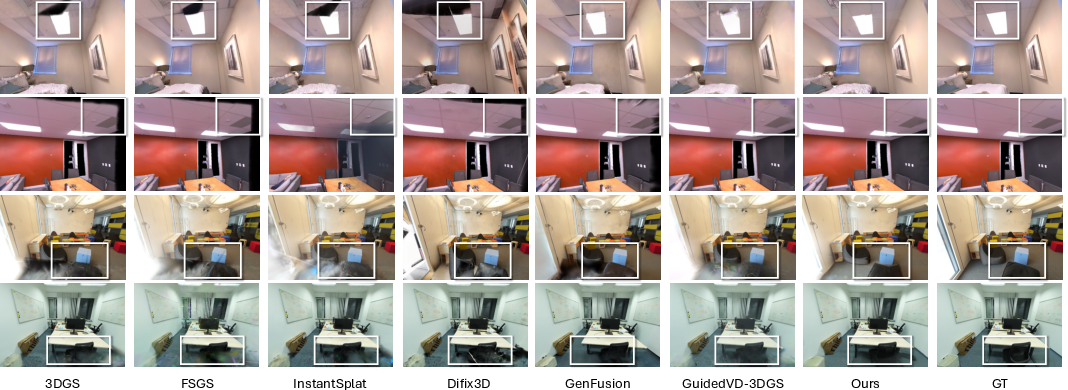

- Better image quality: On two standard datasets (Replica and ScanNet++), GaMO produced sharper, more accurate images with fewer holes and less ghosting. It beat other top methods on metrics like PSNR, SSIM, and LPIPS (scores that measure clarity, structural accuracy, and how close the images look to real photos).

- Much faster: GaMO is about 25 times faster than some diffusion-based methods. It can process a scene in under 10 minutes.

- More consistent geometry: Because GaMO expands existing views rather than inventing new camera angles, the 3D shapes line up better across images.
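For readers unfamiliar with PSNR, the sketch below shows the standard definition on flat lists of pixel values. It is a generic illustration, not the paper's evaluation code. The function name `psnr` and the assumption that pixel values lie in [0, 1] are choices made for this example.

```python
import math


def psnr(reference, rendered, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two equally sized pixel sequences."""
    if len(reference) != len(rendered) or not reference:
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((a - b) ** 2 for a, b in zip(reference, rendered)) / len(reference)
    if mse == 0.0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


# Every pixel is off by 0.1, so the mean squared error is 0.01 (about 20 dB).
score = psnr([0.5, 0.5], [0.6, 0.4])
```

Higher PSNR means the rendered image is closer to the reference. LPIPS and SSIM follow the same compare-to-reference pattern but use learned features and local structure, respectively.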

Why it matters:

- With only a few photos, current 3D tools often struggle. GaMO makes the most of the photos you already have, improving coverage and consistency without complicated camera planning or slow pipelines.

- This can help in real-world tasks like virtual property tours, gaming, AR/VR, and telepresence—any situation where you want a clean 3D representation but only have limited views.

What’s the bigger impact?

- Practical use: Faster, cleaner 3D reconstructions from fewer photos mean easier content creation for apps, museums, real estate, and more.

- Smarter strategy: The paper suggests a shift in thinking—from generating lots of new views (which can conflict and slow things down) to outpainting the views you have (which tends to keep geometry correct and is simpler).

- Limitations and future ideas: GaMO can’t see through objects; if something is hidden in every photo, it can’t guess it perfectly. Also, if the few photos are all taken from the same spot or badly aligned, results get worse. Future work could adapt how much to expand the FOV per scene and combine outpainting with other methods for tricky cases.

In short

GaMO is a geometry-aware, multi-view outpainting approach that expands the edges of the photos you have, keeps the 3D shapes consistent, and refines the 3D model—leading to better quality and much faster results than methods that try to generate lots of new viewpoints.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following points highlight what remains missing, uncertain, or unexplored in the paper and suggest concrete directions for future research:

- Sensitivity to coarse 3D initialization quality: The pipeline relies on DUSt3R and a coarse 3DGS render for masks and priors, but the paper does not quantify how errors in initial point clouds or camera poses propagate to outpainting artifacts and final 3D reconstruction. Systematic experiments varying DUSt3R accuracy, pose noise, and point density are needed.

- Dependence on input view distribution: Performance is said to degrade for clustered or misaligned views, yet no analysis characterizes what distributions (baselines, overlap, spacing) are sufficient. Establishing minimal view coverage, baseline thresholds, and pose diversity requirements would make the method more predictable.

- Outpaint scale selection and mask thresholding: The focal-length scaling ratio ($S_k$) and opacity threshold ($\eta_{\text{mask}}$) are manually set without a principled selection strategy. An adaptive policy (per-scene or per-view) and sensitivity curves for these parameters would reduce trial-and-error.

- Blending schedule and resampling hyperparameters: The latent blending timesteps and the resampling count are heuristic. A comprehensive study to optimize or learn these schedules (potentially conditioned on scene geometry or uncertainty) is missing.

- Lack of explicit 3D consistency metrics: Evaluations focus on PSNR/SSIM/LPIPS/FID for novel views, but do not measure geometric fidelity (e.g., multi-view reprojection error, depth/normal consistency, surface deviation from ground truth meshes, epipolar consistency). Incorporating such metrics would directly validate the claimed geometric improvements.

- Hallucination vs. correctness of outpainted content: Outpainting can generate plausible but semantically incorrect content in unobserved regions. The paper lacks semantic consistency assessments (e.g., object-level correctness, human studies) or uncertainty estimates to prevent diffusion artifacts from dominating 3D optimization.

- Pseudo-supervision risks during refinement: Using generated outpainted views as training targets can lock in diffusion biases. Methods to modulate their influence (e.g., confidence-weighted losses, uncertainty-aware masking, consistency checks against input views) and ablations on that influence are needed.

- Robustness to camera calibration errors: The method assumes accurate intrinsics/extrinsics and pinhole cameras. Sensitivity to calibration noise, lens distortion, and rolling-shutter effects is not evaluated; robustness strategies or correction modules could be investigated.

- Domain generalization beyond indoor scenes: Main results are on Replica and ScanNet++ (indoor). Systematic testing on outdoor scenes, large-scale environments, texture-poor settings, strong parallax, and challenging lighting (HDR, specular/transparent surfaces) is needed to establish generality.

- Dynamic scenes and view-dependent effects: The pipeline assumes static scenes; it is unclear how it handles motion, temporal inconsistencies, or strong view-dependent appearance (specularities, transparency). Extensions for dynamics and explicit handling of non-Lambertian effects are open.

- Coverage limits with single-pass outpainting: A single outpainting pass may still leave gaps in peripheral regions. Investigating multi-pass or cross-view coordinated outpainting (with a variable outpaint scale per view) to maximize coverage while maintaining consistency is an open direction.

- Alternative conditioning and geometry constraints: Conditioning uses Plücker embeddings and CCM, but not explicit epipolar attention or 3D feature volumes. Evaluating whether stronger geometric constraints inside diffusion (epipolar/voxel attention, differentiable rasterization) further reduce inconsistencies would clarify design choices.

- End-to-end joint optimization: The current pipeline is sequential (coarse 3D → outpainting → refine) and zero-shot. Studying light finetuning of the diffusion backbone with 3D constraints, or end-to-end joint optimization with 3DGS, could improve cross-view consistency and reduce heuristic scheduling.

- Minimum viable views: Results focus on 3/6/9 views; the method’s behavior for extremely sparse settings (e.g., 1–2 views) is not reported. Establishing limits and designing safeguards for ultra-sparse cases would broaden applicability.

- Scaling to high resolution and large scenes: Runtime and memory versus image resolution, number of views, and scene size are not detailed (e.g., 4K inputs, building-scale scenes). Profiling and optimization for high-res, large-scale scenarios are needed.

- Fairness and standardization of comparisons: Baselines use different initializations (e.g., MASt3R vs. DUSt3R) and settings. A standardized, common initialization and protocol would isolate the contribution of outpainting more cleanly.

- Quantifying multi-view consistency gains: While qualitative improvements are shown, quantitative multi-view consistency metrics (e.g., cycle consistency, triangulation residuals, cross-view LPIPS on overlapping regions) would substantiate claims that outpainting preserves geometry better than novel view generation.

- VAE latent compression effects: The method operates in VAE latent space; the impact of VAE reconstruction bias/artifacts on outpainted detail and subsequent 3D fidelity is not analyzed. Testing different VAE backbones or latent resolutions could reveal trade-offs.

- Integration with alternative 3D representations: The approach is tailored to 3DGS. Exploring meshes, NeRF variants, or hybrid representations for refinement (and whether outpainting benefits transfer) remains open.

- Failure mode characterization: Beyond the stated limitations (occlusion and view distribution), the paper lacks a taxonomy and quantitative frequency of failures (e.g., seams at mask boundaries, texture drift, geometry warping), which would guide targeted improvements.

Practical Applications

Immediate Applications

Below are actionable applications that can be deployed now, leveraging GaMO’s geometry-aware multi-view diffusion outpainting and 3DGS refinement pipeline. Each item notes sector(s), potential tools/workflows, and key assumptions/dependencies.

- Sparse-to-complete virtual tours from a handful of photos (Real Estate, PropTech)

- Tools/workflows: Mobile capture app or web upload that accepts 3–9 photos per room, runs GaMO server-side, returns a navigable 3DGS or mesh; plugins for Matterport/Immersive view platforms.

- Dependencies: Accurate or auto-estimated camera poses (e.g., DUSt3R); mostly static indoor scenes; a GPU backend; user consent for cloud processing; licensing for pre-trained diffusion models.

- Feasibility note: Processing in under ~10 minutes, a 25× speedup vs. prior diffusion pipelines, makes fast-turnaround listing preparation practical.

- Rapid scene capture for set extension and previsualization (Media/VFX, Game Dev)

- Tools/workflows: DCC plugin (Unreal/Unity/Nuke) to outpaint plate edges and convert Gaussians-to-mesh; shot prep from 3–6 reference photos; asset scouting with minimal on-location capture.

- Dependencies: Stable lighting across sparse views; static or minimally dynamic sets; conversion tools from 3DGS to meshes for downstream rendering.

- Low-footprint indoor mapping for robotics and AR (Robotics, Software)

- Tools/workflows: Robot/operator collects a few snapshots per room; GaMO generates wide-FOV views and refined 3DGS; export to point clouds/meshes for navigation and occlusion-aware AR.

- Dependencies: Posed images or reliable self-calibration; static layout; 3DGS integration with SLAM/VIO maps; GPU availability on base station.

- Site documentation and facilities management with minimal capture (AEC/FM)

- Tools/workflows: Field teams take sparse images; GaMO outpaints coverage for digital twins or as-builts; compare to BIM for discrepancy detection.

- Dependencies: Indoor bias (method validated on Replica/ScanNet++); tolerance for plausible (not guaranteed literal) completion in unobserved regions; alignment to BIM.

- Claims and damage assessment from sparse evidence (Insurance)

- Tools/workflows: Adjusters collect a few images; GaMO creates a navigable 3D reconstruction for triage and remote review.

- Dependencies: Clearly communicated uncertainty and provenance marking for outpainted regions; policy guidelines around synthetic completion; static scenes.

- Try-before-you-buy room staging (E-commerce, Home Improvement)

- Tools/workflows: Customers upload a few photos; retailer app reconstructs room, enabling AR placement of furniture/fixtures; fast “good-enough” geometry via GaMO.

- Dependencies: Indoor scenes; calibration/resectioning quality; clear labeling of synthesized content.

- Heritage rooms and small exhibits digitization under access constraints (Cultural Heritage)

- Tools/workflows: Capture limited viewpoints due to ropes/fragility; GaMO fills coverage gaps for viewing and curation.

- Dependencies: Outpainted content is plausible but not a substitute for scientific ground truth; metadata to flag inferred areas.

- Education and research baselines for sparse-view 3D (Academia)

- Tools/workflows: Course labs using GaMO to demonstrate outpainting vs. novel-view generation; benchmark suite for sparse-view reconstruction with 3/6/9 views.

- Dependencies: Access to MV diffusion backbones (e.g., MVGenMaster); reproducible pipelines and GPU time.

- API/SaaS: “Sparse-to-3D” service (Software)

- Tools/workflows: REST API accepting 3–9 images + intrinsics; returns outpainted views, 3DGS, and optional mesh; dashboard for quality checks (LPIPS/SSIM).

- Dependencies: GPU inference (diffusion + 3DGS); usage-based pricing; observability and rate limiting; model licensing and updates.

- Data augmentation for 3D perception models (Academia, Software)

- Tools/workflows: Use GaMO to widen FOV and synthesize peripheral content to expand datasets for monocular depth/segmentation/relighting.

- Dependencies: Proper labeling of synthesized regions; careful use to avoid bias or reinforce hallucinations in downstream models.

- Telepresence/remote walkthroughs with minimal setup (Communications)

- Tools/workflows: Capture a few angles of a meeting space or lab; GaMO reconstructs for remote participants to navigate.

- Dependencies: Privacy and consent; static scenes; device guidance to capture diverse orientations.

- Quality assurance for photogrammetry gaps (Software, AEC)

- Tools/workflows: Integrate GaMO into RealityCapture/Metashape as a pre-fill step when coverage is insufficient; validates coverage masks and suggests additional shots.

- Dependencies: Interoperability with existing photogrammetry workflows; clear flagging of synthesized peripheries.

- Forensics visualization with explicit uncertainty overlays (Public Safety)

- Tools/workflows: Render outpainted views with mask overlays and confidence maps for exploratory visualization only.

- Dependencies: Strict disclaimers; chain-of-custody policies; separation of exploratory visuals from evidentiary material.

Long-Term Applications

The following opportunities require further research, scaling, or validation (e.g., outdoor generalization, dynamic scenes, regulatory standards, on-device performance).

- Online mapping with live outpainting in SLAM loops (Robotics, AR)

- Vision: Integrate GaMO-style FOV expansion into SLAM to “complete” maps in real time for occlusion handling, path planning, and semantic tasks.

- Dependencies: Real-time or near-real-time diffusion on edge devices; dynamic-scene robustness; active view planning fused with outpainting; uncertainty-aware fusion into maps.

- Outdoor and large-scale environments (Mapping, Smart Cities)

- Vision: Apply geometry-aware outpainting to streetscapes/campuses to reduce capture passes and fill coverage gaps.

- Dependencies: New training/backbones tuned to outdoor lighting/geometry; handling of reflective/translucent surfaces; scalable pose estimation across long baselines.

- Certified reconstructions with calibrated uncertainty and provenance (Policy, Public Safety, Legal Tech)

- Vision: Standards and audits for synthetic completion, with pixel-level provenance and confidence measures used in evidence handling and inspections.

- Dependencies: Uncertainty quantification for outpainted regions; cryptographic provenance (e.g., C2PA); regulatory frameworks and best practices.

- Edge/on-device deployment for mobile and drones (Mobile, UAS)

- Vision: Run GaMO-like pipelines on-device for field operations (inspection, emergency response, logistics) with latency targets under a few minutes.

- Dependencies: Efficient diffusion backbones, quantization, and accelerator support; memory-constrained 3DGS optimization; thermal/power limits.

- Hybrid planning: adaptive outpaint scale and minimal active capture (Software, Robotics)

- Vision: Algorithms that pick optimal focal-length scaling S_k and recommend the few extra shots that maximally reduce uncertainty.

- Dependencies: Confidence-driven planning; multi-objective optimization (time, coverage, accuracy); closed-loop user guidance UX.

- Infrastructure inspection and asset management with sparse drone shots (Energy, Utilities, Transportation)

- Vision: Reconstruct substations, bridges, or cell towers from limited or flight-restricted angles, filling gaps for digital twin maintenance.

- Dependencies: Domain-tuned diffusion models for specular/metallic surfaces; safety regulations; verifiable boundaries between real vs. synthesized geometry.

- Data-centric training pipelines: outpaint-then-reconstruct for robust 3D models (Academia, Foundation Models)

- Vision: Use GaMO to augment sparse-view datasets at scale, pretraining 3DGS/NeRF variants or 3D diffusion models with improved coverage and consistency.

- Dependencies: Bias assessment; large-scale compute; methods to prevent overfitting to synthetic peripheries.

- Interactive capture assistants (Consumer, Enterprise)

- Vision: Real-time HUD that visualizes uncovered peripheries and suggests minimal camera motions; “good-enough” capture certification for non-experts.

- Dependencies: Fast opacity-mask prediction and coverage scoring; UX research; device capability variance.

- Virtual production: live set reconstruction and occlusion-aware compositing (Media)

- Vision: On-set outpainting to extend volumes and optimize camera blocking; consistent geometry for live previews.

- Dependencies: Sub-minute latency; integration with LED wall workflows; precise color management.

- Medical and industrial endoscopy-like recon with coverage completion (Healthcare, Manufacturing QA)

- Vision: Assistive reconstruction from sparse viewpoints within constrained spaces (pipes, ducts, borescopes), improving operator awareness.

- Dependencies: Domain-specific training; safety validation; robust handling of non-Lambertian surfaces and severe lens distortion.

- Compliance-ready digital twins for permitting and code checks (Policy, AEC)

- Vision: Use sparse captures + outpainting to accelerate twin creation, paired with automated checks, while carrying provenance for synthesized regions.

- Dependencies: Standards for synthetic disclosure; inspectors’ acceptance criteria; mesh conversion fidelity and scale accuracy.

Notes on Assumptions and Dependencies Across Applications

- Camera poses and calibration: Accuracy is critical; DUSt3R initialization or equivalent is assumed. Misaligned or clustered views degrade performance.

- Scene characteristics: Best for static, indoor scenes (validated on Replica/ScanNet++). Fully occluded regions cannot be “recovered” with ground-truth fidelity; outpainting is plausible, not guaranteed accurate.

- Compute and latency: Sub-10-minute runtime assumes a modern GPU; on-device or edge deployment needs model optimization and hardware acceleration.

- Model licensing and updates: Access to pre-trained multi-view diffusion backbones (e.g., MVGenMaster) and 3DGS toolchains; adherence to licenses.

- Provenance and ethics: Synthetic peripheries should be clearly marked; applications in forensics, compliance, or safety-critical domains need uncertainty visualization and policy alignment.

- Interoperability: Downstream conversion (3DGS → mesh) and integration with existing pipelines (photogrammetry/SLAM/game engines) may be required.

- Generalization: Outdoor, dynamic scenes, reflective surfaces, and textureless regions remain open challenges and may need domain-specific finetuning or hybrid methods.

Glossary

- 3D Gaussian Splatting (3DGS): A real-time scene representation that renders radiance fields using anisotropic 3D Gaussian primitives blended along viewing rays. "3D Gaussian Splatting (3DGS)~\cite{kerbl20233d} uses a collection of anisotropic 3D Gaussian primitives to present a scene."

- Alpha-blending: A compositing method that blends ordered semi-transparent layers based on their opacities to compute pixel colors. "The color of a pixel is computed via $\alpha$-blending of ordered Gaussians:"

- Canonical Coordinate Map (CCM): A per-pixel coordinate encoding used as a conditioning signal to provide spatial structure to the diffusion model. "original and augmented Canonical Coordinate Map (CCM) and RGB"

- DDIM (Denoising Diffusion Implicit Models) sampling: A deterministic sampling procedure for diffusion models that accelerates generation while preserving quality. "using the DDIM~\cite{song2021denoising} sampling process."

- Diffusion models: Generative models that synthesize data by reversing a progressive noising process through learned denoising steps. "Diffusion Models generate samples through a learned denoising process that reverses a forward noising process."

- DUSt3R: A method for producing geometrically consistent point clouds and relative poses from images, used to initialize 3D reconstruction. "we use DUSt3R~\cite{wang2024dust3r} to generate an initial point cloud"

- Epipolar attention: An attention mechanism that enforces multi-view geometric consistency by constraining correspondences along epipolar lines. "epipolar attention~\cite{huang2024epidiff}"

- Field of view (FOV): The angular extent of the scene captured by a camera; expanding FOV increases coverage beyond the original image boundaries. "We enlarge the FOV by reducing the focal lengths with a scaling ratio."

- Fréchet Inception Distance (FID): A distribution-level metric that measures the realism of generated images by comparing feature statistics. "Evaluation uses standard metrics: PSNR, SSIM~\cite{wang2004image}, LPIPS~\cite{zhang2018unreasonable}, and FID~\cite{heusel2017gans}."

- Gaussian bundle adjustment: Joint optimization that refines Gaussian scene parameters and camera poses to improve reconstruction accuracy. "Gaussian bundle adjustment~\cite{fan2024instantsplat}"

- Gaussian unpooling: A depth-guided operation that expands or refines Gaussian primitives to better cover sparse observations. "depth-guided Gaussian unpooling~\cite{zhu2024fsgs}"

- Iterative Mask Scheduling (IMS): A progressive blending schedule that adjusts the outpainting mask over timesteps to smoothly integrate generated content. "To gradually integrate generated content with the existing geometric structure, Iterative Mask Scheduling progressively adjusts $\mathcal{M}_{\text{latent}}^{(k)}$ over iterations to control the ratio between outpainting and known coarse regions."

- LPIPS (Learned Perceptual Image Patch Similarity): A perceptual similarity metric that correlates with human judgment of image quality. "Evaluation uses standard metrics: PSNR, SSIM~\cite{wang2004image}, LPIPS~\cite{zhang2018unreasonable}, and FID~\cite{heusel2017gans}."

- Multi-view diffusion: Diffusion models conditioned on multiple synchronized views to generate geometrically consistent images across cameras. "Using multi-view diffusion models~\cite{cao2025mvgenmaster}, adding more diffusion-generated novel views degrades reconstruction quality, suggesting that sparse views alone can yield robust results."

- Noise resampling: Re-noising a latent at a given timestep during denoising to smooth transitions and reduce boundary artifacts after blending. "we perform noise resampling after each blending operation."

- Noise schedule: The predefined sequence of noise levels controlling the forward and reverse diffusion processes. "where is a predefined noise schedule."

- Novel view generation: Synthesizing images from new, unseen camera poses to increase viewpoint coverage for reconstruction. "novel view generation mainly focuses on enhancing angular coverage of existing geometry and often overlooks the extension beyond the periphery"

- Opacity mask: A binary mask derived from rendered opacity that marks low-confidence regions to be outpainted. "The opacity mask is then obtained by thresholding the opacity map with $\mathcal{M} = \mathbb{I}(\mathcal{O} < \eta_{\text{mask}})$"

- Outpainting: Extending an image beyond its original boundaries by generating plausible new content consistent with the existing scene. "We observe that outpainting, rather than novel view generation, offers a more suitable paradigm for enhancing sparse-view 3D reconstruction."

- Plücker ray embeddings: A 6D representation of camera rays (the ray direction together with its moment, the cross product of the ray origin and direction) used to encode geometry-aware conditioning for diffusion. "we employ Plücker ray embeddings~\cite{xu2024dmv3d} that provide dense 6D ray parameterizations for each pixel"

- Quaternion: A four-parameter representation for 3D rotations used to define Gaussian orientations. "The covariance matrix is decomposed into a scaling vector and rotation quaternion "

- Score Distillation Sampling (SDS): A technique that transfers gradients from a diffusion model to optimize 3D representations without paired data. "Diffusion models provide learned priors through Score Distillation Sampling~\cite{poole2022dreamfusion,wang2023prolificdreamer,liang2024luciddreamer}."

- Spherical harmonic coefficients: Parameters of basis functions used to model view-dependent color on Gaussians. "and spherical harmonic coefficients for view-dependent color."

- SSIM (Structural Similarity Index): A perceptual metric assessing structural fidelity between images. "Quantitative metrics (SSIM, LPIPS) show that adding novel views degrades quality due to inconsistencies"

- Variational Autoencoder (VAE): A probabilistic encoder–decoder used to map images to and from latent space for diffusion conditioning. "The input RGB images are encoded through a variational autoencoder (VAE) to obtain clean latent features."

- Warping (unprojection/reprojection): Geometric mapping of pixels across views by lifting to 3D and projecting into another camera. "we warp input RGB images and Canonical Coordinate Maps (CCM) to align with the expanded FOV by unprojecting pixels to 3D and reprojecting onto the outpainted camera plane"
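The alpha-blending entry in this glossary can be illustrated with the standard front-to-back compositing rule, in which each depth-ordered sample contributes its color weighted by its own opacity and by the transmittance left over from the samples in front of it. The sketch below is a simplified, assumed illustration and not the paper's renderer: it uses scalar colors, takes per-sample opacities as given, and the function name `composite` is hypothetical.

```python
def composite(colors, alphas):
    """Front-to-back alpha blending of depth-ordered samples.

    Returns (color, accumulated_opacity), where
    color = sum_i c_i * a_i * prod_{j<i} (1 - a_j).
    """
    color, transmittance = 0.0, 1.0
    for c, a in zip(colors, alphas):
        color += c * a * transmittance   # contribution seen through earlier samples
        transmittance *= 1.0 - a         # light remaining after this sample
    return color, 1.0 - transmittance


# Two half-transparent samples: a bright one in front of a dark one.
pixel_color, pixel_opacity = composite([1.0, 0.0], [0.5, 0.5])
```

The second return value is the accumulated opacity along the ray. A per-pixel map of that quantity is the kind of opacity map that the opacity mask entry thresholds to find poorly covered regions.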
