Pat-DEVAL: Chain-of-Legal-Thought Evaluation for Patent Description

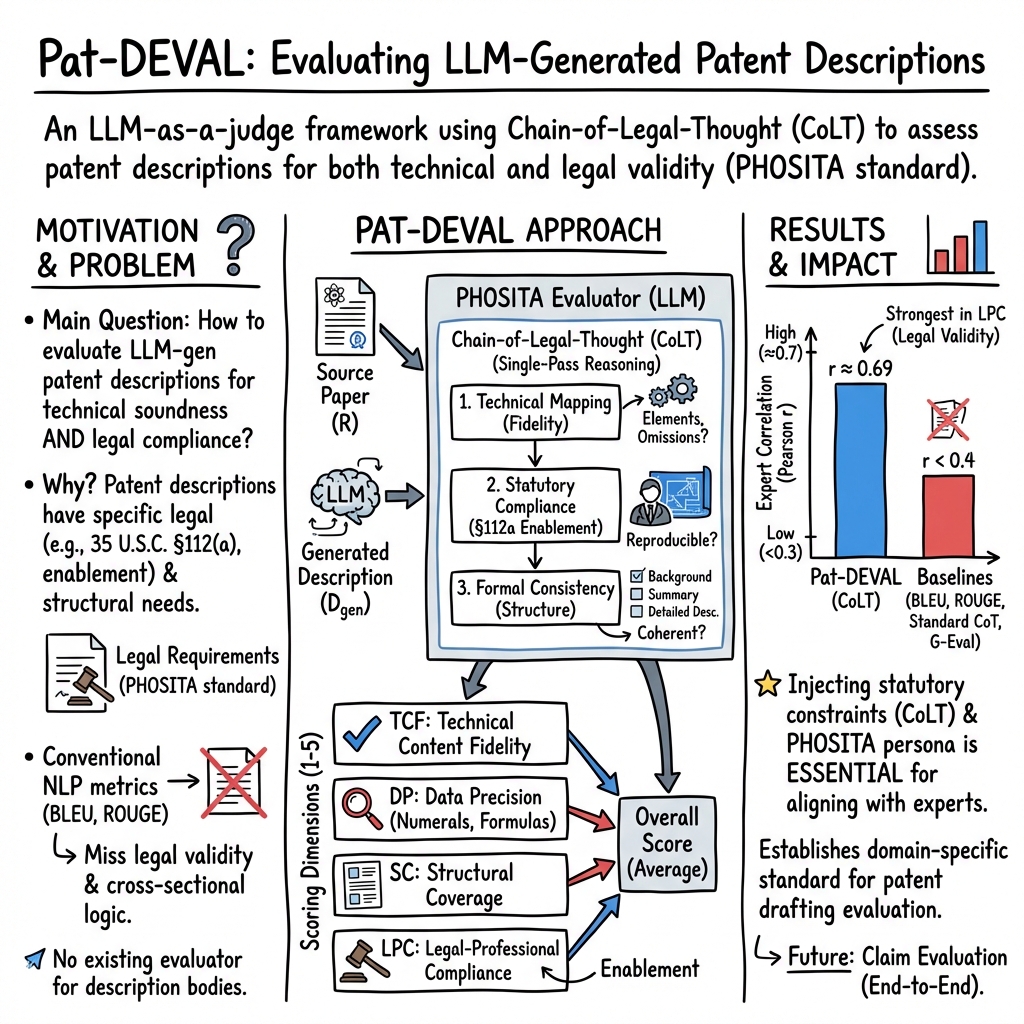

Abstract: Patent descriptions must deliver comprehensive technical disclosure while meeting strict legal standards such as enablement and written description requirements. Although LLMs have enabled end-to-end automated patent drafting, existing evaluation approaches fail to assess long-form structural coherence and statutory compliance specific to descriptions. We propose Pat-DEVAL, the first multi-dimensional evaluation framework dedicated to patent description bodies. Leveraging the LLM-as-a-judge paradigm, Pat-DEVAL introduces Chain-of-Legal-Thought (CoLT), a legally-constrained reasoning mechanism that enforces sequential patent-law-specific analysis. Experiments validated by patent expert on our Pap2Pat-EvalGold dataset demonstrate that Pat-DEVAL achieves a Pearson correlation of 0.69, significantly outperforming baseline metrics and existing LLM evaluators. Notably, the framework exhibits a superior correlation of 0.73 in Legal-Professional Compliance, proving that the explicit injection of statutory constraints is essential for capturing nuanced legal validity. By establishing a new standard for ensuring both technical soundness and legal compliance, Pat-DEVAL provides a robust methodological foundation for the practical deployment of automated patent drafting systems.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

A simple explanation of “Pat-DEVAL: Chain-of-Legal-Thought Evaluation for Patent Description”

Overview

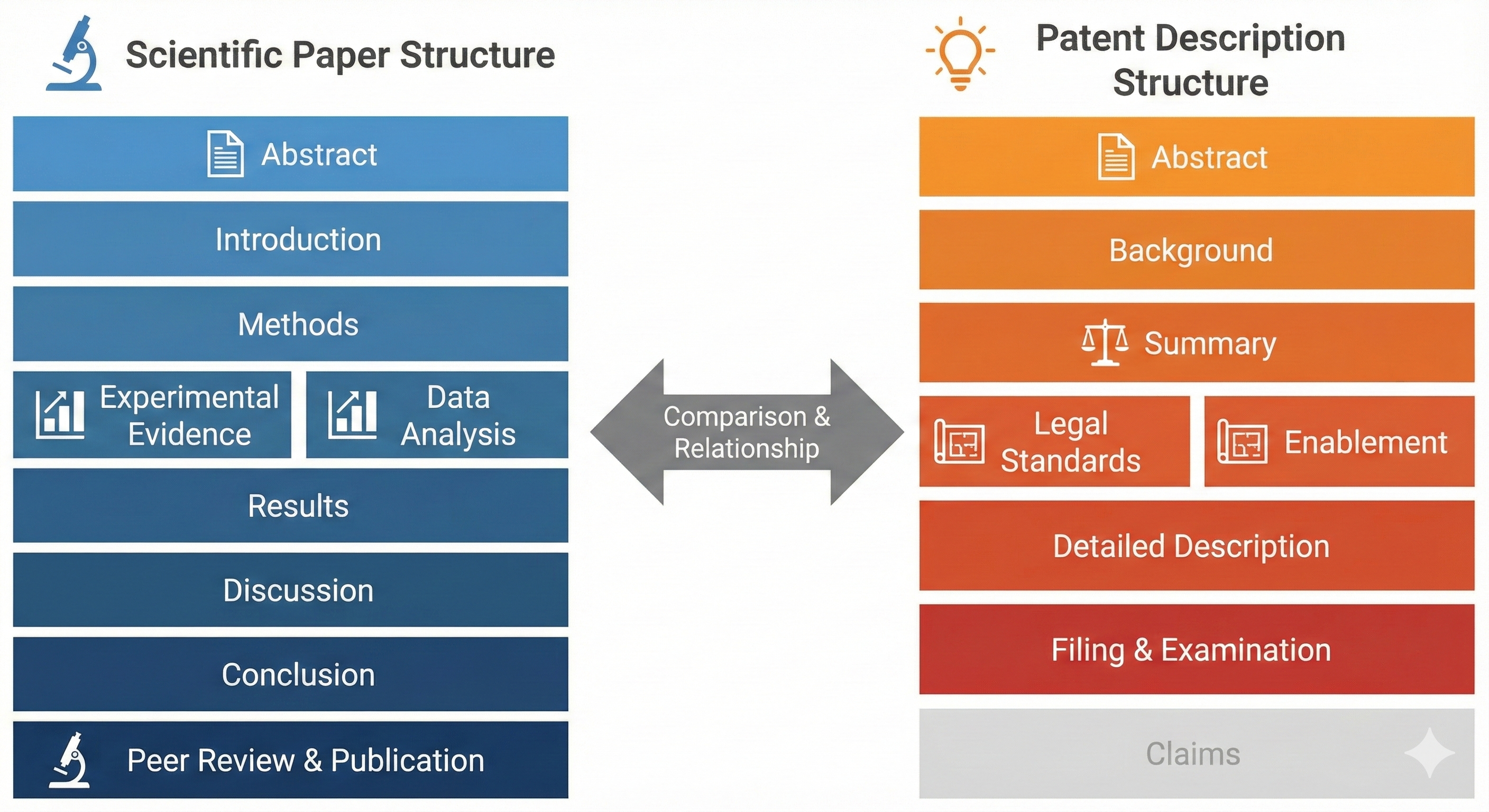

This paper is about making sure AI-written patent descriptions are both technically accurate and legally correct. A patent description is the part of a patent that explains how an invention works in enough detail that a skilled person could build or use it. The authors created a new way, called Pat-DEVAL, to carefully judge these AI-written descriptions so they are clear, complete, and follow important patent laws.

What questions does the paper try to answer?

The paper asks, in simple terms:

- Does the AI’s description match the real invention and explain its key ideas correctly?

- Are important facts and numbers exact and not made up?

- Is the description organized like a proper patent (with all the right sections)?

- Does it meet legal rules, especially the requirement to explain the invention well enough for someone skilled in the field to reproduce it?

How did they do it?

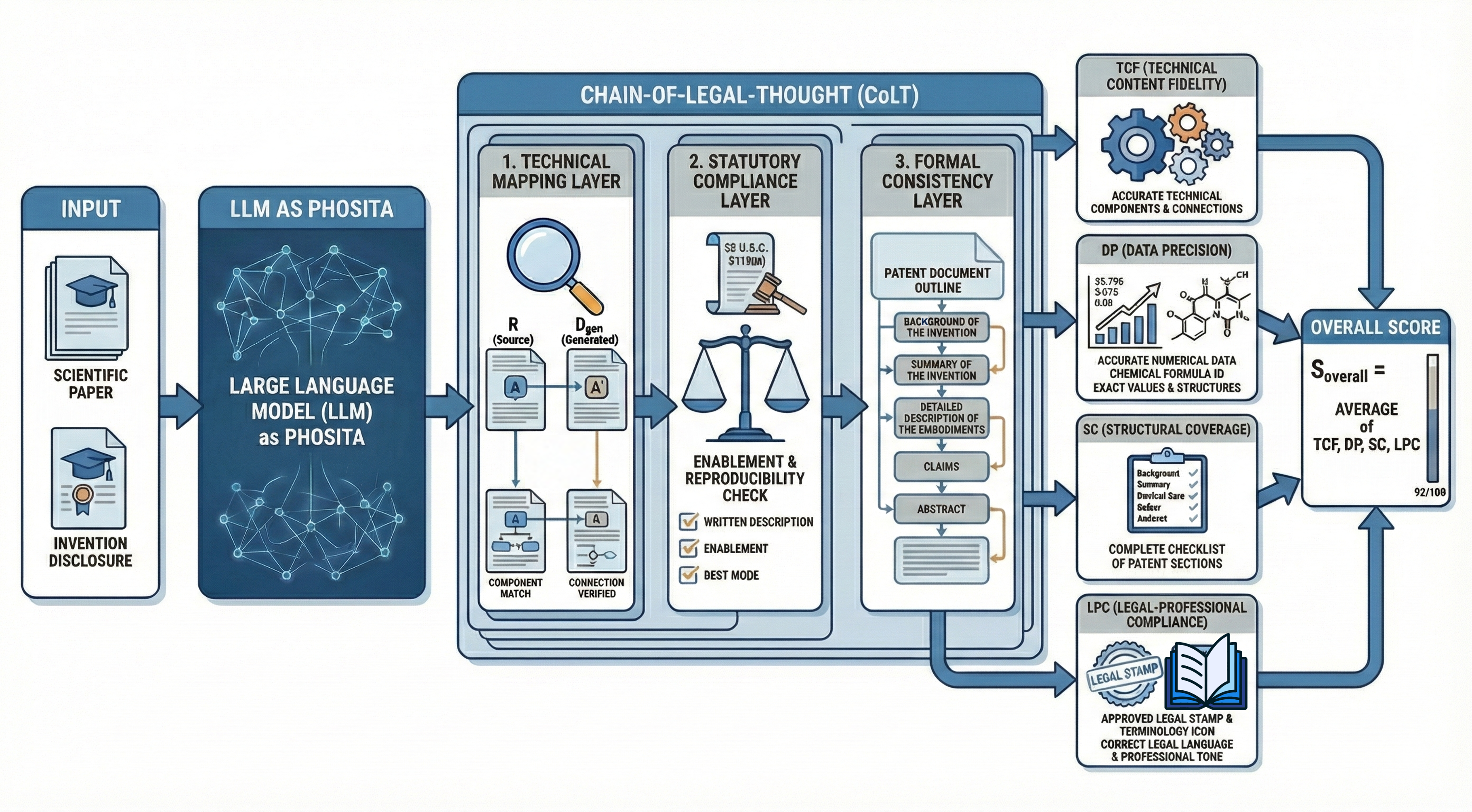

The authors use a LLM—a powerful AI that understands text—as a smart “judge.” They give it a professional role: think like a typical expert in the field (called a PHOSITA, short for “Person Having Ordinary Skill in the Art”). They also guide the AI to follow patent law rules while evaluating.

To make the AI judge think in the right order, they created a step-by-step reasoning process called Chain-of-Legal-Thought (CoLT). You can imagine CoLT like a careful checklist a teacher uses to grade a science project:

- First, compare the invention’s real details to the AI’s description (no missing parts or fake facts).

- Next, check legal requirements, especially a U.S. rule called 35 U.S.C. § 112, which says the description must teach others how to make and use the invention without too much guesswork.

- Finally, check that the document is properly structured (background, summary, detailed description, etc.) and uses professional, clear language.

From this process, the AI judge gives four scores (each from 1 to 5). These scores cover:

- Technical Content Fidelity: Does it explain the core idea correctly?

- Data Precision: Are numbers, steps, and details accurate?

- Structural Coverage: Are all the needed sections present and complete?

- Legal-Professional Compliance: Does it meet legal standards and use proper patent style?

The researchers also built a trusted test set by pairing real scientific papers with their related patents, then had certified patent professionals score the AI-generated descriptions. They compared Pat-DEVAL’s scores to the experts’ ratings to see how well Pat-DEVAL matches human judgment.

What did they find, and why does it matter?

The key result: Pat-DEVAL matched human expert judgments much better than other methods. On average, it reached a correlation of 0.69 (on a scale where 1.0 is perfect agreement), which is strong. It did especially well on judging legal compliance, with a correlation of 0.73—meaning it’s very good at checking whether a description truly meets the law’s requirements.

Other common text scoring methods (like BLEU or ROUGE that look at word overlap) did poorly because good patent descriptions don’t always use the same words—they must be complete, precise, and legally sound, not just similar in wording. Even general AI-evaluation tools were not as good because they didn’t focus on patent law.

The team also ran tests to see which parts of Pat-DEVAL matter most:

- Removing the legal step-by-step thinking (CoLT) made performance drop a lot. This shows that legal reasoning is essential.

- Not telling the AI to act as a PHOSITA reduced accuracy.

- Scoring everything as one big score (instead of four focused parts) made results worse. Breaking the task into clear pieces helps the AI judge more carefully.

What’s the impact?

This research helps make AI-written patent descriptions safer and more trustworthy. That means:

- Faster, higher-quality patent drafting for inventors and companies.

- Fewer legal mistakes that could cause patents to be rejected or challenged.

- A clearer path for using AI to handle complex legal documents in real-world settings.

In the future, this approach could become a standard tool for checking AI-generated patents, could be combined with systems that evaluate patent claims, and might help patent offices and businesses review drafts quickly while maintaining legal quality.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The paper leaves the following concrete gaps and open questions for future research to address:

- Jurisdictional generalizability: Extend CoLT beyond 35 U.S.C. §112(a) to EPC (Art. 83/84), PCT, CN, JP, and KR requirements; compare correlations with practitioners across jurisdictions.

- Description–claims alignment: Add evaluators that check whether claims are fully supported by the description (e.g., antecedent basis, §112(b) definiteness, means-plus-function support) and quantify alignment.

- Figure and drawings consistency: Incorporate multimodal evaluation (OCR/vision) to verify that figures, reference numerals, and Brief Description of Drawings are accurately described and cross-referenced in the Detailed Description.

- Numeric and unit robustness: Replace exact-match-only Data Precision checks with tool-augmented verification allowing ranges, uncertainty, unit conversions, and significant figures; report effects on DP correlation.

- Backbone dependency: Replicate Pat-DEVAL across diverse evaluator LLMs (sizes and families) to quantify sensitivity to backbone choice, including smaller open models for cost-constrained settings.

- Test–retest reliability: Measure evaluator stability across seeds, temperatures, and prompt paraphrases; report intra-model ICC and self-consistency metrics.

- Adversarial robustness and gaming: Stress-test against outputs optimized to the rubric (Goodhart’s Law), adversarially obfuscated omissions, and stylistic overfitting; report failure modes and mitigation.

- Length scaling: Evaluate performance as description length grows (e.g., 5k–20k tokens), including chunking/long-context strategies and their impact on CoLT reasoning fidelity.

- Reference scarcity: Assess Pat-DEVAL when the source technology is not a full paper (e.g., invention disclosures, lab notes) or missing entirely; design reference-light or reference-free variants.

- Dataset scope and diversity: Expand Pap2Pat-EvalGold beyond 146 pairs, stratified by CPC/IPC fields (biotech, chem, EE, software, mechanical) to analyze domain-wise performance and PHOSITA variance.

- External validity: Correlate Pat-DEVAL scores with real-world outcomes (office actions, §112 rejections, time to allowance, RCE rate, litigation outcomes) to test predictive utility.

- Weighting scheme: Learn or elicit legally grounded weights for TCF/DP/SC/LPC (rather than unweighted averaging) and validate against expert preferences and outcome-based objectives.

- Decision thresholds: Calibrate pass/fail or “filing readiness” cutoffs; report sensitivity/specificity, ROC/AUC, and error costs for operational deployment.

- Generator diversity: Evaluate robustness across multiple generators and decoding strategies (model families, temperatures, nucleus sampling) to avoid generator-specific overfitting.

- Tool-augmented judging: Integrate symbolic/numeric checkers, citation verifiers, section parsers, and glossary/definition matchers to reduce LLM judge hallucinations; quantify gains over pure LLM judging.

- Multilingual coverage: Extend to non-English patents/papers (JP, CN, DE, KR), including translation-aware CoLT or native-language evaluators; report cross-lingual reliability.

- Expanded legal scope: Pilot CoLT modules for additional statutory issues relevant to descriptions (best mode, enablement across claim breadth, undue experimentation factors) and for patent-eligibility (§101) where description content is implicated.

- PHOSITA calibration: Parameterize the PHOSITA persona by art unit/technology domain and experience level; measure how domain-specific PHOSITA settings affect LPC judgments.

- Evaluator error analysis: Publish fine-grained error taxonomy (especially for LPC) and analyze systematic LLM-judge vs human disagreements to target prompt/model/tool fixes.

- Statistical reporting: Complement Pearson r with Spearman ρ, Kendall τ, confidence intervals, and calibration/error analysis (e.g., Brier score for pass/fail) to characterize reliability more fully.

- Contamination and bias checks: Audit potential training-data overlap between evaluator LLMs and the evaluation corpus (papers/patents), and measure bias against non-native English or discipline-specific styles.

- Cost and latency: Report tokens processed, runtime, and monetary cost for single-pass CoLT at different lengths/backbones; benchmark against multi-turn variants to justify the single-pass design.

- Continuous updating: Establish procedures for legal-drift resilience (e.g., Guidelines updates), versioned constraints, and regression tests to maintain validity over time.

- Human-in-the-loop loops: Use Pat-DEVAL disagreements to prioritize human review and data acquisition; study active learning strategies that most improve evaluator reliability per unit expert time.

- Improving generators with feedback: Use CoLT-derived signals as rewards or constraints (RLHF/RLAIF) to train drafting models; measure end-to-end improvements and risk of reward hacking.

- Multi-source grounding: Extend CoLT to reason over multiple heterogeneous sources (papers, prior patents, lab notebooks), resolving conflicts and provenance to tighten fidelity checks.

Practical Applications

Practical, Real-World Applications of Pat-DEVAL

The following applications translate the paper’s findings and innovations (Pat-DEVAL, Chain-of-Legal-Thought, PHOSITA simulation, four-dimensional scoring, and reference-free evaluation) into actionable use cases across industry, academia, policy, and daily life.

Immediate Applications

- Pre-filing patent description quality check for law firms and corporate IP teams

- Sectors: Legal services, software/semiconductors, healthcare/medical devices, energy, manufacturing

- Tools/Workflows: An API or plugin in patent drafting editors (e.g., Microsoft Word/DOCX, web-based patent SaaS) that produces TCF/DP/SC/LPC scores and CoLT-driven rationale; redlining suggestions for enablement gaps and formal inconsistencies before filing

- Assumptions/Dependencies: Access to the invention’s source materials (“R”), secure handling of confidential data, evaluator LLM (e.g., Qwen3-32B or equivalent) availability; jurisdiction-specific adaptations beyond 35 U.S.C. §112(a)

- LLM drafting guardrails and revision loop for automated patent generation

- Sectors: Software (AI tooling), LegalTech

- Tools/Workflows: Integrate Pat-DEVAL as a “judge-in-the-loop” to automatically flag omissions (e.g., hyperparameters, embodiments) and cross-sectional inconsistencies; auto-rewrite prompts until target LPC and SC thresholds are met

- Assumptions/Dependencies: Reliable linkage between generator and evaluator models; compute/latency budget; domain-specific PHOSITA persona prompts

- Vendor acceptance testing and procurement standards for AI patent drafting tools

- Sectors: Enterprise IP, LegalTech procurement

- Tools/Workflows: Use Pap2Pat-EvalGold and Pat-DEVAL scores as a benchmark to assess vendors; set minimum correlation-to-human targets or dimensional thresholds for Legal-Professional Compliance

- Assumptions/Dependencies: Agreement on evaluation protocols; coverage expansion beyond the 146-sample dataset for broader trust

- Examiner assistance and internal training aids (first-pass enablement screening)

- Sectors: Patent offices, in-house IP departments

- Tools/Workflows: Triage dashboards where descriptions with low LPC or SC are queued for deeper review; CoLT rationale used in examiner/onboarding training to illustrate statutory deficiencies

- Assumptions/Dependencies: Policy clearance for AI-assisted screening; data privacy; clarity on non-binding nature of AI outputs

- Academic benchmarking and reproducible evaluation in NLP/patent research

- Sectors: Academia, research labs

- Tools/Workflows: Standardized evaluation suite using Pat-DEVAL to compare LLM drafting systems; publish dimensional results alongside BLEU/ROUGE/BERTScore to reflect legal validity

- Assumptions/Dependencies: Dataset licensing and expansion; open prompts and rubrics; consistent PHOSITA role definitions across technical domains

- Training for junior patent drafters and inventors with structured feedback

- Sectors: Legal education, STEM education

- Tools/Workflows: Interactive training modules where learners receive CoLT step-by-step critiques (Technical Mapping, Statutory Compliance, Formal Consistency) and practice fixing enablement gaps

- Assumptions/Dependencies: Access to realistic drafting cases; professional supervision to contextualize AI feedback

- SMB and independent inventor “preflight” checker

- Sectors: Daily life/startups, small businesses

- Tools/Workflows: Lightweight web tool that ingests an invention summary and drafted description to return Pat-DEVAL scores and prioritized fixes before engaging counsel

- Assumptions/Dependencies: Simplified UX; disclaimers that it is not legal advice; careful scoping for complex biotech/chemistry disclosures

- Compliance reporting and IP risk dashboards for portfolio management

- Sectors: Enterprise IP management, finance (IP-backed assets)

- Tools/Workflows: Portfolio-level SC/LPC risk heatmaps; alerts for filings with low enablement confidence; integration with IP management platforms

- Assumptions/Dependencies: Scalable batch evaluation; continuous updates to legal rubrics; secure data pipelines

- MLOps evaluation harness for RAG-based patent drafting systems

- Sectors: Software/AI ops

- Tools/Workflows: CI/CD gates where new drafting models must meet specified TCF/DP/SC/LPC metrics on held-out sets (e.g., Pap2Pat-EvalGold) before deployment

- Assumptions/Dependencies: Stable test sets; compute resources; approval workflows tied to model performance

Long-Term Applications

- End-to-end, closed-loop patent drafting systems with automatic enablement remediation

- Sectors: LegalTech, AI software

- Tools/Workflows: Generators that iteratively incorporate CoLT feedback to add missing embodiments, parameter ranges, and cross-sectional consistency; one-click “file-ready” drafts

- Assumptions/Dependencies: Stronger domain-specific knowledge bases; robust multi-turn refinement with low latency; human-in-the-loop sign-off

- Patent office policy integration for AI-assisted pre-screening and “quality certificates”

- Sectors: Public policy, government

- Tools/Workflows: Optional pre-submission Pat-DEVAL “enablement compliance” reports; examiner triage queues weighted by LPC/SC; standardized evaluation protocols across jurisdictions

- Assumptions/Dependencies: Regulatory approval; transparency and auditability; alignment with EPO/JPO/KIPO rules; safeguards against over-reliance on AI

- Industry-wide quality benchmarks and certification (“Pat-DEVAL Certified”)

- Sectors: LegalTech, standards bodies

- Tools/Workflows: Certification programs for AI drafting tools and workflows based on minimum dimensional scores and correlation-to-human targets; public disclosures for trust

- Assumptions/Dependencies: Community consensus on thresholds; third-party audit mechanisms; periodic rubric updates

- Portfolio-wide enablement audits and litigation risk prediction

- Sectors: Finance (IP valuation, M&A), legal risk analytics

- Tools/Workflows: Cross-sectional scoring of thousands of patents to identify enablement vulnerabilities; link LPC/SC signals to predicted litigation or validity-challenge risk

- Assumptions/Dependencies: Historical outcome labels; domain calibration (biotech vs. software); robust confidentiality handling

- Jurisdiction- and domain-specific evaluator expansions (biotech, chemistry, robotics)

- Sectors: Healthcare/biotech, chemicals, robotics, energy

- Tools/Workflows: CoLT variants encoding domain norms (e.g., sequence listings, dosage ranges, materials specs) and local statutory requirements; specialized PHOSITA personas

- Assumptions/Dependencies: Expert-curated rubrics per field; integration of multimodal evidence (drawings, spectra, sequences)

- Multimodal and claims-integrated evaluation for holistic patent quality

- Sectors: LegalTech, AI research

- Tools/Workflows: Extend Pat-DEVAL to figures, flowcharts, and claims to assess antecedent basis, scope clarity, and description-claim alignment

- Assumptions/Dependencies: Reliable OCR/vision models; claims-specific legal reasoning modules (e.g., antecedent basis, means-plus-function)

- Global harmonization and multilingual evaluation frameworks

- Sectors: International IP systems

- Tools/Workflows: CoLT prompts adapted to EPO/JPO standards; multilingual evaluators for cross-border filings; consistent scoring across languages

- Assumptions/Dependencies: High-quality multilingual LLMs; localized legal corpora; cultural/terminology normalization

- Due diligence accelerators for licensing and tech transfer

- Sectors: Technology licensing, university TTOs, venture capital

- Tools/Workflows: Rapid enablement and structural integrity checks to screen patents for licensing; prioritize remediation work before deal closure

- Assumptions/Dependencies: Access to full specs and supporting R&D documentation; confidentiality agreements; domain calibrations

- Education and professional development platforms with simulated PHOSITA feedback

- Sectors: Legal education, professional training

- Tools/Workflows: Interactive courses that use CoLT traces to teach enablement, written description, and cross-sectional structure; scenario-based drafting exercises with AI feedback

- Assumptions/Dependencies: Curriculum alignment with jurisdictional standards; educator oversight; continual rubric refinement

- Continuous documentation improvement for R&D reproducibility

- Sectors: Industrial R&D, academia

- Tools/Workflows: Use CoLT feedback to identify missing implementation details in technical writeups, feeding better source materials (“R”) into drafting pipelines and improving scientific reproducibility

- Assumptions/Dependencies: Willingness to modify internal documentation practices; integration with ELNs and lab data systems

Notes on feasibility across all applications:

- Strong performance in Legal-Professional Compliance (correlation ) supports immediate use for enablement checks, but broader generalization requires larger and more diverse datasets.

- Assumes access to the invention’s source technology and secure model deployment; confidentiality and auditability are critical.

- Statutory alignment is currently U.S.-centric (35 U.S.C. §112(a)); international deployment needs localized legal constraints.

- Human-in-the-loop review remains essential, particularly for high-stakes filings and domain-complex cases (biotech/chemistry).

Glossary

- Ablation study: An experimental method that removes or alters components to assess their individual contributions to overall performance. "we conducted an ablation study using the Qwen3-32B backbone model."

- Abstractive summarization: A text generation approach that produces novel phrasing and reworded summaries rather than extracting exact sentences. "to enhance abstractive summarization performance for long documents,"

- Antecedent basis: A patent drafting requirement ensuring that claim elements are properly introduced and supported elsewhere in the specification. "deductive logical rigor and the consistency of the antecedent basis,"

- BERTScore: An embedding-based evaluation metric that compares generated text to references using contextualized BERT embeddings. "embedding-based metrics like BERTScore~\citep{zhang2019bertscore}"

- BigBird: A transformer architecture designed for long sequences using sparse attention patterns to scale efficiently. "the introduction of BigBird~\citep{zaheer2020bigbird}, which utilizes a sparse attention mechanism to efficiently process contexts spanning thousands of tokens,"

- BLEU: A classic n-gram overlap metric for machine translation and text generation quality assessment. "n-gram overlap metrics such as BLEU, ROUGE, and METEOR~\citep{papineni2002bleu, lin2004rouge, banerjee2005meteor}."

- Chain-of-Legal-Thought (CoLT): A legally constrained reasoning process that enforces sequential patent-law-specific analysis before scoring. "Pat-DEVAL introduces Chain-of-Legal-Thought (CoLT), a legally-constrained reasoning mechanism that enforces sequential patent-law-specific analysis."

- Chain-of-Thought (CoT): A prompting technique that induces step-by-step reasoning in LLMs to improve evaluation or problem-solving. "We propose a legally-constrained reasoning strategy that extends standard CoT by injecting statutory constraints"

- Data Precision (DP): An evaluation dimension measuring the exactness of experimental values, numerals, and formulas in generated text. "Data Precision (DP) to verify technical integrity,"

- Earth Mover's Distance: A metric that quantifies the distance between probability distributions, often used in comparing embeddings. "which utilize contextualized embeddings and Earth Mover's Distance, respectively,"

- Enablement Requirement: A legal standard under 35 U.S.C. §112(a) requiring that a patent’s disclosure enables a skilled person to make and use the invention. "Evaluate whether satisfies the Enablement Requirement (35 U.S.C. \S 112(a))."

- Formal Consistency Layer: A CoLT component that checks cross-sectional coherence and adherence to professional patent drafting conventions. "3. Formal Consistency Layer: Assess the structural integrity of the document (e.g., Background, Summary, Detailed Description) and its adherence to professional patent drafting conventions and legal terminology."

- G-Eval: An LLM-based evaluation approach that uses Chain-of-Thought prompting to align better with human judgments. "G-Eval demonstrated that employing Chain-of-Thought (CoT) prompting can achieve higher alignment with human experts in summarization tasks~\citep{liu-etal-2023-geval}."

- Gumbel-Softmax: A differentiable approximation for sampling from categorical distributions, enabling discrete choices within neural networks. "Proposed a "Dynamic Gating Module" using Gumbel-Softmax for sparsity."

- Intraclass Correlation Coefficient (ICC): A reliability statistic quantifying the consistency of ratings across multiple evaluators. "We validated the reliability of these judgments using Krippendorff's Alpha and the Intraclass Correlation Coefficient (ICC)."

- Krippendorff's Alpha: A measure of inter-rater agreement accounting for chance, used to validate annotation reliability. "We validated the reliability of these judgments using Krippendorff's Alpha and the Intraclass Correlation Coefficient (ICC)."

- Legal-Professional Compliance (LPC): An evaluation dimension verifying adherence to legal standards like enablement and appropriate legal phrasing. "Legal-Professional Compliance (LPC)"

- Likert scale: An ordered rating scale used for subjective evaluations, often ranging from 1 to 5. "Each dimension is evaluated on a 1-to-5 Likert scale,"

- LLM-as-a-Judge: A paradigm where LLMs act as evaluators to assess the quality or validity of generated content. "Recently, the paradigm has shifted toward LLM-as-a-Judge, leveraging the reasoning capabilities of LLMs to act as surrogate evaluators."

- METEOR: A machine translation evaluation metric that incorporates stemming and synonyms to improve correlation with human judgments. "n-gram overlap metrics such as BLEU, ROUGE, and METEOR~\citep{papineni2002bleu, lin2004rouge, banerjee2005meteor}."

- MoverScore: An embedding-based text evaluation metric that leverages Earth Mover’s Distance to compare semantic content. "MoverScore~\citep{zhao2019moverscore}, which utilize contextualized embeddings and Earth Mover's Distance, respectively,"

- Pap2Pat-EvalGold: A curated benchmark of paper–patent pairs annotated by experts for evaluating patent description quality. "We constructed Pap2Pat-EvalGold, a high-precision dataset derived from the Pap2Pat~\citep{knappich2025pap2pat} corpus."

- PatentScore: An LLM-driven framework for evaluating patent claims on legal consistency and semantic fidelity. "PatentScore~\citep{yoo2025patentscore}, have advanced the evaluation of patent claims"

- Person Having Ordinary Skill in the Art (PHOSITA): A legal persona used as the reference standard for enablement and reproducibility in patent law. "Person Having Ordinary Skill in the Art (PHOSITA)"

- Prometheus-2: A fine-tuned LLM specialized for evaluation tasks to improve feedback reliability. "Prometheus-2 recorded a correlation of 0.46,"

- Reference-Free Evaluation Paradigm: An assessment approach that evaluates outputs against source technology without relying on fixed references. "Reference-Free Evaluation Paradigm: We establish a source-anchored framework that evaluates descriptions directly against the source technology, accommodating the ``one-to-many'' nature of valid patent drafting where conventional reference-based metrics fail."

- ROUGE-L: A summarization metric based on the longest common subsequence between system and reference texts. "Traditional n-gram metrics such as BLEU and ROUGE-L exhibit negligible correlation with human experts, yielding coefficients of 0.09 or lower."

- Sparse attention mechanism: An attention strategy that reduces computational complexity by limiting attention connections, enabling long-context processing. "utilizes a sparse attention mechanism to efficiently process contexts spanning thousands of tokens,"

- Statutory Compliance Layer: A CoLT component that evaluates satisfaction of legal requirements like enablement and written description. "2. Statutory Compliance Layer: Evaluate whether satisfies the Enablement Requirement (35 U.S.C. \S 112(a))."

- Structural Coverage (SC): An evaluation dimension measuring completeness of mandatory patent sections. "Structural Coverage (SC): Determines the completeness of mandatory sections required by patent statutes."

- Technical Content Fidelity (TCF): An evaluation dimension assessing distortion or omission of core technical mechanisms. "Technical Content Fidelity (TCF): Measures the distortion or omission of the source technology's core mechanisms."

- Undue experimentation: Excessive effort required by a PHOSITA to reproduce an invention due to insufficient disclosure, violating enablement. "without \"undue experimentation.\""

- Written Description Requirement: A legal criterion ensuring the inventor possessed the claimed invention as evidenced by the specification. "Also, check for the Written Description Requirement to ensure the inventor had possession of the invention."

Collections

Sign up for free to add this paper to one or more collections.