The CoinAlg Bind: Profitability-Fairness Tradeoffs in Collective Investment Algorithms

Abstract: Collective Investment Algorithms (CoinAlgs) are increasingly popular systems that deploy shared trading strategies for investor communities. Their goal is to democratize sophisticated, often AI-based, investing tools. We identify and demonstrate a fundamental profitability-fairness tradeoff in CoinAlgs that we call the CoinAlg Bind: CoinAlgs cannot ensure economic fairness without losing profit to arbitrage. We present a formal model of CoinAlgs, with definitions of privacy (incomplete algorithm disclosure) and economic fairness (value extraction by an adversarial insider). We prove two complementary results that together demonstrate the CoinAlg Bind. First, privacy in a CoinAlg is a precondition for insider attacks on economic fairness. Conversely, in a game-theoretic model, lack of privacy, i.e., transparency, enables arbitrageurs to erode the profitability of a CoinAlg. Using data from Uniswap, a decentralized exchange, we empirically study both sides of the CoinAlg Bind. We quantify the impact of arbitrage against transparent CoinAlgs. We show the risks posed by a private CoinAlg: Even low-bandwidth covert-channel information leakage enables unfair value extraction.

Explain it Like I'm 14

Overview

This paper studies “CoinAlgs,” which are shared trading algorithms that make investment decisions for a group of users. Think of a CoinAlg like a team coach that decides what moves everyone on the team makes. The paper’s big idea is called the “CoinAlg Bind”: CoinAlgs face a fundamental choice. If they keep their strategy secret, insiders can use hidden knowledge to take unfair advantage. If they make their strategy public, outsiders can copy or exploit it, reducing profit. In short, you can’t have both perfect fairness and maximum profit at the same time.

Key Questions

The paper asks three simple questions:

- What counts as “privacy” and “fairness” for a shared trading algorithm?

- Why do secret strategies risk unfair insider behavior?

- Why do public strategies lose money to copycats and market “ambushers” (arbitrageurs)?

How They Studied It

The authors use three approaches:

1) A clear model of how CoinAlgs work

They build a simple framework to describe:

- The market and its state (who owns what)

- Trades (who buys or sells which asset)

- The algorithm’s behavior (how it chooses trades)

- What the public knows about the algorithm

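“Privacy” means people can’t accurately predict the algorithm’s next moves. “Transparency” means they can. They measure privacy as a statistical distance (total variation distance) between the public’s best guess about the algorithm’s trades and what the algorithm actually does: the bigger the gap, the more private the algorithm.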
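
To make the privacy measure concrete, here is a minimal Python sketch, assuming that both the public’s belief and the algorithm’s behavior are probability distributions over a small, finite set of trades. The trade labels, the `total_variation` helper, and the uniform “asset-only” belief are illustrative assumptions that mirror the kind of partial disclosure the paper considers; the paper’s formal definition of ε-privacy may differ in its details. A distance of 0 means the public predicts the algorithm perfectly (transparency); larger values mean more privacy.

```python
def total_variation(p: dict[str, float], q: dict[str, float]) -> float:
    """Total variation distance between two distributions over trades.

    Each argument maps a trade label to its probability; labels missing
    from one distribution are treated as having probability 0 there."""
    support = set(p) | set(q)
    return 0.5 * sum(abs(p.get(t, 0.0) - q.get(t, 0.0)) for t in support)


# The CoinAlg's actual next move (deterministic here, for simplicity).
actual = {"buy ETH": 1.0}

# Full disclosure: the public can predict the move exactly.
transparent_view = {"buy ETH": 1.0}

# Asset-only disclosure: the public knows the asset but not the direction,
# so it treats each feasible trade as equally likely.
asset_only_view = {"buy ETH": 0.5, "sell ETH": 0.5}

print(total_variation(actual, transparent_view))  # 0.0
print(total_variation(actual, asset_only_view))   # 0.5
```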

2) A fairness game

They define a “game” to test fairness. Imagine two people:

- An insider who knows the algorithm’s plan

- A regular user who only knows public info

Both try to place trades around the algorithm’s trade. If the insider can consistently end up with more money than the regular user beyond some threshold, the CoinAlg is considered unfair. This captures “insider value extraction” — using secret info to take advantage.

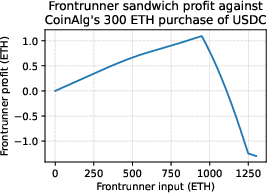

A key idea here is “sandwiching”: someone jumps in front of your trade to move the price, then sells after you to pocket the difference. It’s like cutting in front of you in line to make you pay more, then turning around and cashing in when the price goes up because of your purchase.
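
The fairness game and the sandwiching at its core can be illustrated together with a small simulation. This is a minimal sketch under strong simplifying assumptions, not the paper’s formal game: a single fee-less constant-product pool starting at 1,000 units of each asset (so the starting price is 1 and profits in either asset are roughly comparable), a CoinAlg that buys or sells with equal probability, and hypothetical trade sizes. The insider always frontruns in the CoinAlg’s true direction; the regular player, knowing only that both directions are equally likely, has to guess. The function names and the `ALPHA` threshold are hypothetical.

```python
import random


def swap(x: float, y: float, amount_in: float, sell_x: bool):
    """Trade against a fee-less constant-product pool (x * y = k).

    sell_x=True pays `amount_in` of X and receives Y; sell_x=False pays
    `amount_in` of Y and receives X. Returns (new_x, new_y, amount_out)."""
    if sell_x:
        out = y * amount_in / (x + amount_in)
        return x + amount_in, y - out, out
    out = x * amount_in / (y + amount_in)
    return x - out, y + amount_in, out


def sandwich_profit(x, y, victim_in, victim_sells_x, front_in, front_sells_x):
    """Frontrun in a chosen direction, let the victim trade, then unwind.

    Profit is denominated in the asset the attacker started with."""
    x, y, held = swap(x, y, front_in, front_sells_x)     # frontrun
    x, y, _ = swap(x, y, victim_in, victim_sells_x)      # CoinAlg's trade
    x, y, back = swap(x, y, held, not front_sells_x)     # backrun (unwind)
    return back - front_in


def insider_advantage(trials=10_000, alg_size=100.0, front_size=50.0, seed=0):
    """Average profit of an insider who knows the CoinAlg's trade direction,
    minus that of a regular player who only knows each direction is equally
    likely and therefore guesses."""
    rng = random.Random(seed)
    gap = 0.0
    for _ in range(trials):
        alg_sells_x = rng.random() < 0.5   # CoinAlg's private decision
        guess = rng.random() < 0.5         # regular player's coin flip
        insider = sandwich_profit(1000.0, 1000.0, alg_size, alg_sells_x,
                                  front_size, alg_sells_x)
        regular = sandwich_profit(1000.0, 1000.0, alg_size, alg_sells_x,
                                  front_size, guess)
        gap += insider - regular
    return gap / trials


ALPHA = 1.0  # hypothetical unfairness threshold
print(round(sandwich_profit(1000.0, 1000.0, 100.0, True, 100.0, True), 4))  # 18.0328
print(insider_advantage() > ALPHA)  # True
```

Because the regular player’s wrong guesses lose money, its average profit stays close to zero, while the insider profits every round, so the estimated gap easily exceeds the illustrative threshold. A more cautious regular player might abstain entirely; that yields zero profit and leaves the insider’s lead intact.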

3) Real data experiments

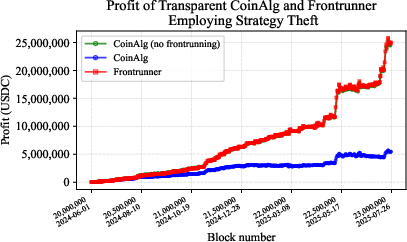

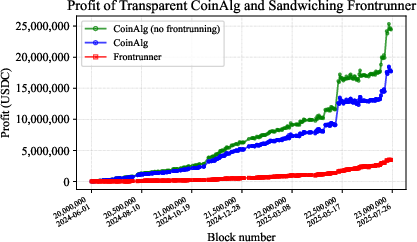

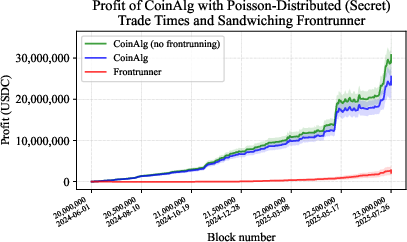

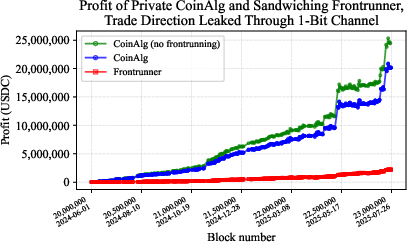

They simulate CoinAlgs using actual trading data from Uniswap (a popular decentralized exchange on Ethereum) over more than a year (June 2024–July 2025). They test both sides:

- Private CoinAlgs: even small leaks of information can let insiders extract value

- Transparent CoinAlgs: public strategies get copied or sandwiched, which reduces profit

Main Findings and Why They Matter

Here are the core results:

- If a CoinAlg is unfair (insiders can get extra profit), then it must be private in some meaningful way. In other words, unfairness needs secrecy to exist.

- If a CoinAlg is transparent (public strategy), then arbitrageurs can exploit it. They either copy trades (strategy theft) or “sandwich” them to skim profit. This creates a “cost of transparency,” meaning the algorithm makes less money because others use its signals against it.

- In experiments, both problems show up in real markets. Even tiny leaks by a private CoinAlg let insiders take advantage. And when the strategy is public, attempts to defend against attackers still reduce profit.
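
The “cost of transparency” can be sketched as the gap between what a CoinAlg earns when nobody anticipates its trade and what it earns when an adversary who knows the trade buys first. This is a minimal sketch under stated assumptions: a single fee-less pool, a correctly predicted future price of 1.5 X per Y that is treated as fixed (not moved by later trades), and hypothetical trade sizes. Measuring the cost as a raw profit difference is a simplification, and the helper names are hypothetical.

```python
def buy_y(x: float, y: float, dx: float):
    """Pay dx of X into a fee-less x * y = k pool; return (x', y', dy)."""
    dy = y * dx / (x + dx)
    return x + dx, y - dy, dy


def coinalg_profit(x, y, spend, future_price, frontrun=0.0):
    """Profit, in X, of a CoinAlg that buys Y now and values it at a price
    it correctly predicts. `frontrun` is the X an adversary spends buying Y
    first (the copycat's trade, or a sandwich's front leg)."""
    if frontrun > 0:
        x, y, _ = buy_y(x, y, frontrun)
    _, _, received = buy_y(x, y, spend)
    return received * future_price - spend


private = coinalg_profit(1000.0, 1000.0, spend=100.0, future_price=1.5)
public = coinalg_profit(1000.0, 1000.0, spend=100.0, future_price=1.5,
                        frontrun=100.0)
print(round(private - public, 4))  # 22.7273
```

Because any backrun happens after the CoinAlg’s fill, it does not change the CoinAlg’s execution price in this toy model, so strategy theft and sandwiching impose the same cost here; they differ only in what the adversary does with its position afterward.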

This matters because CoinAlgs are growing fast — from robo-advisors in traditional finance to AI-powered “investment DAOs” in crypto. The paper shows that whether you choose secrecy or openness, there is a real risk to users’ money.

Implications and Impact

The “CoinAlg Bind” means designers and users must choose which risk to accept:

- Keep strategies private: better profits, but risk insider unfairness.

- Make strategies public: fairer access, but expect lower profits due to exploitation.

In traditional finance, rules and oversight help reduce insider abuse when strategies are private. In crypto and other non-custodial settings, those protections often don’t exist, so the risks are more direct.

The authors suggest adding practical guardrails for private CoinAlgs (for example, limiting how information can leak or using protected trade routes), but they emphasize that the tradeoff is fundamental. If you want perfect fairness, you sacrifice some profit. If you want maximum profit, you accept some fairness risk.

Bottom line

There’s no perfect design for shared trading algorithms. To protect everyday investors, we need to be honest about the tradeoffs, use strong safeguards, and, where possible, bring in protections like those in traditional finance — especially as AI-driven collective investing becomes more common.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, focused list of concrete gaps and unresolved questions that future work could address to strengthen, generalize, and operationalize the paper’s claims.

- Validity of the “grim-trigger invalidation” model: The transparency analysis relies on an ultimatum game where an adversary can invalidate the CoinAlg’s transactions via a trigger strategy. How can such invalidation be realized on mainstream blockchains (e.g., Ethereum PoS) without impractical reorg capabilities or collusion with block producers? What realistic on-chain mechanisms (if any) support this assumption, and how do conclusions change if invalidation is infeasible?

- Constant-product AMM modeling vs Uniswap V3 reality: The sandwich model assumes a single constant-product AMM, but Uniswap V3 uses concentrated liquidity, with non-uniform price curves, multiple fee tiers, and position-specific liquidity ranges. How do results (cost of transparency, sandwich profitability) change under V3-specific microstructure, and under multi-hop routes and routing optimizers?

- Missing slippage tolerance and execution constraints: The model omits user-set slippage tolerances, fee tiers, gas costs, and order routing decisions that critically bound sandwich profitability. What is the cost of transparency when realistic slippage settings, gas auctions, and MEV-bundle protections are included?

- Single-venue assumption: The analysis focuses on one AMM pair; real trading is cross-venue (multiple AMMs, aggregators, CEX-DEX arbitrage). How does transparency cost scale across multi-venue markets with cross-pool price impact and external liquidity?

- Competition among arbitrageurs and builders: The adversary is modeled as a single actor; in practice there are many searchers, builders, relays, and private orderflow networks competing. How does adversary competition, builder-relay market structure, and auction dynamics (e.g., MEV-Boost) affect equilibrium profits, sandwich feasibility, and transparency costs?

- Calibrating the public-information function L: Privacy is defined via statistical distance between a “public” distribution and the true trade distribution, but the paper assumes uniform distributions over feasible trades under partial disclosure (e.g., asset-only, direction-only). How should L be calibrated to represent realistic beliefs (non-uniform priors, learned patterns), and how can epsilon-privacy be estimated empirically from real leakage?

- Quantitative linkage between privacy and unfairness: Theorem 1 shows unfairness implies privacy, but does not bound epsilon-privacy in terms of observed unfairness (alpha, t). Can we derive actionable, tight quantitative relationships (bounds or tradeoff curves) between privacy parameters and adversary advantage for realistic markets?

- Measuring economic utility of leaked information: Theorem 2 assumes “economically useful” leakage but does not operationalize utility. What metrics and methodologies can quantify the minimum leakage bandwidth (or types of hints) that enable meaningful insider extraction under realistic costs and constraints?

- Detecting insider value extraction: The paper claims private CoinAlgs enable potentially undetectable insider extraction. What detection frameworks (statistical tests, audit trails, on-chain forensics) can identify sandwich-like or preferential orderflow patterns caused by insiders, and what false-positive/false-negative rates are achievable?

- Experimental realism of the “profitable predictor”: Simulations assume a CoinAlg that correctly predicts future prices over a year-long window. How robust are results when using out-of-sample tests, realistic forecasting models with finite accuracy, transaction costs, and model drift? What is the cost of transparency under noisy signals?

- Reproducibility and release details: The experiments on Uniswap V3 are summarized but do not specify datasets, code, parameter choices (fees, liquidity snapshots, slippage, routing). Can the authors release code and detailed configs to enable replication and sensitivity analyses?

- Alternative adversarial strategies beyond sandwiching: The study focuses on strategy theft and sandwiching; other MEV forms (e.g., just-in-time liquidity provision, path-dependent arbitrage, time-bandit attacks, backrun-only strategies, cross-domain arbitrage) are not analyzed. How do these affect the profitability-fairness tradeoff?

- Internal fairness among CoinAlg participants: Fairness is defined as adversary value extraction vs a baseline player, not investor welfare within the CoinAlg (e.g., allocation of gains/losses across participants, queue fairness, fee rebates). How should “economic fairness” be defined and enforced at the participant level?

- Risk-adjusted profitability: The “cost of transparency” is framed in raw profit terms; risk (variance, drawdowns, tail losses) is not considered. Does transparency reduce or increase risk, and how does the tradeoff look under risk-adjusted metrics (Sharpe, Sortino, CVaR)?

- Time dynamics and adaptive strategies: The adversary and CoinAlg strategies are static across repeated rounds; real systems adapt (e.g., randomized timing, batching, TWAPs, adaptive slippage). How does the bind evolve when both sides dynamically learn and respond over time?

- Defensive design space not evaluated: The paper mentions two guardrails but does not analyze their efficacy. What is the quantitative effectiveness and cost of defenses such as:

- Private orderflow networks (Flashbots Protect, OFNs), RFQs, batch auctions (e.g., frequent batch auctions), decentralized crossing, commit-reveal schemes

- Cryptographic approaches (TEE/MPC/FHE) for private strategy execution while minimizing insider access

- Randomization of trade timing and sizing to muddle predictability

- Transparency with private execution: The paper implies transparency forecloses sandwich protections, but transparent strategies could still use private orderflow or commit-reveal inclusion to conceal exact timing/size. Under what conditions can “transparent logic, private execution details” mitigate the transparency cost?

- Governance and regulatory considerations: TradFi mitigates fairness risks via regulation; the paper does not explore which on-chain governance or compliance mechanisms (audits, attestations, disclosures, fiduciary standards, enforcement) could reduce insider extraction in non-custodial CoinAlgs.

- Alternative market mechanisms: The analysis assumes continuous-time AMM markets. How would frequent batch auctions, call markets, or other mechanism designs change the bind by reducing exploitability of predictable orderflow?

- Multi-asset and portfolio-level effects: The model focuses on single-pair trades. How does transparency affect cross-asset portfolio rebalancing, multi-legged trades, and correlated execution that may amplify or dampen arbitrage opportunities?

- Parameter sensitivity and regime analysis: The transparency cost likely depends on liquidity depth, volatility, trade size, fee tiers, and network congestion. Can we produce sensitivity maps (across these variables) to understand when transparency is most/least costly?

- Insider knowledge pathways: The claim that “low-bandwidth covert channels” enable extraction is not tied to concrete channels (e.g., public signals, governance posts, time-of-day execution). What typical leakage paths exist in practice, and how can they be measured or curtailed?

- Formalizing investor-protection requirements for CoinAlgs: The conclusion notes TradFi protections matter but does not specify what minimum standards CoinAlgs need (disclosure, auditing, conflict-of-interest policies, execution controls). What concrete policy frameworks and technical standards should be adopted?

- Extending the fairness game to real sequencing control: The fairness game abstracts block sequencing. How do different sequencing regimes (builder-controlled ordering, user-specified inclusion, private mempools) alter adversary advantage and fairness outcomes?

- Approaches to quantifying epsilon-privacy from on-chain behavior: The privacy definition is theoretical; how can epsilon be estimated from observed trade patterns and public communications, enabling empirical privacy-fairness assessments of live CoinAlgs?

- Reconciling transparency with community accountability: Many CoinAlgs market themselves on openness. What disclosure practices preserve community trust without revealing exploitable execution details (e.g., delayed reporting, hashed commitments, audited but non-public logic)?
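
As a starting point for the detection question above (“Detecting insider value extraction”), here is a minimal sketch of the common frontrun/victim/backrun pattern match over the ordered swaps in one block. This heuristic is not taken from the paper: it ignores trade amounts, realized profits, and gas, so it will produce false positives (for example, market makers that trade both sides) and will miss sandwiches split across addresses or pools. `Swap`, `find_sandwiches`, and the placeholder addresses and pool name are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Swap:
    sender: str     # address that submitted the swap
    pool: str       # pool identifier
    token_in: str
    token_out: str


def find_sandwiches(block: list[Swap]) -> list[tuple[int, int, int]]:
    """Return (front, victim, back) index triples within one block's swaps.

    A hit is: sender A swaps token_in -> token_out, a different sender then
    swaps in the same direction on the same pool, and A later reverses."""
    hits = []
    n = len(block)
    for i in range(n):
        front = block[i]
        for j in range(i + 1, n):
            victim = block[j]
            if (victim.pool != front.pool or victim.sender == front.sender
                    or victim.token_in != front.token_in):
                continue
            for k in range(j + 1, n):
                back = block[k]
                if (back.sender == front.sender and back.pool == front.pool
                        and back.token_in == front.token_out
                        and back.token_out == front.token_in):
                    hits.append((i, j, k))
                    break
    return hits


block = [
    Swap("0xA", "USDC/WETH", "USDC", "WETH"),   # frontrun
    Swap("0xV", "USDC/WETH", "USDC", "WETH"),   # victim
    Swap("0xA", "USDC/WETH", "WETH", "USDC"),   # backrun
    Swap("0xB", "USDC/WETH", "WETH", "USDC"),   # unrelated trade
]
print(find_sandwiches(block))  # [(0, 1, 2)]
```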

Practical Applications

Immediate Applications

The following applications leverage the paper’s formal model, empirical results, and design insights to improve how collective investment algorithms (CoinAlgs) are built, audited, and used today.

- Cost-of-Transparency dashboards for CoinAlgs

- Description: Quantify expected profit erosion from arbitrage when a strategy is publicly known or can be simulated; benchmark against private routing baselines.

- Sectors: Finance (DeFi, quant funds, robo-advisors), Software analytics.

- Tools/products/workflows: Simulation modules using Uniswap-style AMM data; per-strategy “transparency tax” scores; alerts when cost exceeds tolerance.

- Assumptions/dependencies: Access to historical trade data; realistic market-impact models; adversary can simulate strategy behavior.

- Insider-leakage red-teaming and covert-channel audits

- Description: Structured tests to detect low-bandwidth information leaks that enable insider value extraction, as evidenced by the paper’s experiments.

- Sectors: Finance (DeFi platforms, robo-advisors), Security auditing.

- Tools/products/workflows: Transaction pattern analysis; correlation tests between insider access and pre/post-trade behavior; controlled “leak” experiments; continuous monitoring.

- Assumptions/dependencies: Granular transaction logs; ability to label insider vs public actors; statistical power to detect small leakage.

- Protected order-flow routing as default operational guardrail

- Description: Route trades via private mempools or batch auctions to reduce sandwiching/MEV (e.g., Flashbots Protect, MEV Blocker, CoWSwap-style solvers).

- Sectors: Finance (DeFi execution), Software/infrastructure.

- Tools/products/workflows: RPC configuration playbooks; venue-selection policies; execution policies that avoid public mempool exposure; slippage and timing controls.

- Assumptions/dependencies: Availability and reliability of private routing; validator/builder participation; residual MEV risks remain in fragmented ecosystems.

- Strategy-obfuscation in execution (without increasing leak risk)

- Description: Randomize trade sizes/timing within acceptable bounds to reduce predictability while monitoring that obfuscation does not create new covert channels.

- Sectors: Finance (quant execution, DeFi trading).

- Tools/products/workflows: TWAP/VWAP variants with randomized partitions; multi-venue splitting; adaptive noise calibrated to liquidity.

- Assumptions/dependencies: Liquidity sufficient to absorb randomization; careful design to avoid predictable patterns; obfuscation may reduce raw alpha.

- CoinAlg fairness audits and investor disclosures

- Description: Include “fairness risk” and “privacy posture” sections in offering documents; disclose insider access, simulation capabilities, and protective routing used.

- Sectors: Policy/compliance (SEC/CFTC-aligned disclosures), Finance (funds, DAOs).

- Tools/products/workflows: Standardized audit templates derived from the paper’s fairness game; attestations on insider knowledge boundaries; periodic updates.

- Assumptions/dependencies: Willingness to adopt voluntary practices; third-party auditors with domain expertise; regulatory guidance may evolve.

- On-chain fairness monitors for DAOs

- Description: Public dashboards tracking realized slippage, pre/post-trade patterns, and suspected sandwiching against DAO trades; community alert systems.

- Sectors: Finance (DeFi DAOs), Civic tech.

- Tools/products/workflows: Data pipelines from DEXes; heuristics for frontrun/backrun detection; threshold-based alerting; governance incident reviews.

- Assumptions/dependencies: Data availability; false-positive handling; clear governance process for responses.

- Execution playbooks for retail users of AI trading bots

- Description: Practical guidance to reduce personal exposure: smaller orders, protected routing, avoiding predictable schedules, monitoring realized execution quality.

- Sectors: Daily life (retail investing), Education.

- Tools/products/workflows: Checklist UIs inside consumer platforms; slippage/MEV exposure scorecards; simple toggles for safer routing.

- Assumptions/dependencies: Platforms integrate protective options; users can trade off convenience vs protection.

- Exchange/venue policies to dampen sandwich profitability

- Description: Fee structures, minimum tick size, or batch matching windows that reduce immediate arbitrage gains from transparent flows.

- Sectors: Finance (DEX/CEX operators), Market design.

- Tools/products/workflows: Frequent batch auctions; randomized inclusion; solver competitions that internalize price impact costs.

- Assumptions/dependencies: Venue-level control; user acceptance of slightly different UX; possible competition from venues without protections.

- Strategy-simulation access policies

- Description: Limit black-box simulation access (rate limits, delayed outputs) to reduce exploitable advance knowledge, while still enabling research/backtesting.

- Sectors: Software (API design), Finance (quant platforms).

- Tools/products/workflows: API governance; query auditing; watermarking outputs; tiered access with contractual constraints.

- Assumptions/dependencies: Balance research openness vs adversary misuse; enforceable terms; logging and review.

- Governance charters defining conflict-of-interest boundaries

- Description: Explicit rules barring insiders from trading ahead of or around CoinAlg transactions; slashing/penalties in DAOs; cooling-off periods.

- Sectors: Policy (self-regulation), Finance (DAOs, funds).

- Tools/products/workflows: Smart-contract enforced policies; role-based access; code-of-conduct; transparent breach procedures.

- Assumptions/dependencies: Strong community governance; enforceability in decentralized settings; legal clarity for sanctions.

- Education modules for quant, DeFi, and policy practitioners

- Description: Training on the “CoinAlg Bind,” privacy-fairness tradeoffs, and operational safeguards.

- Sectors: Academia, Professional education.

- Tools/products/workflows: Short courses; case studies replicating Uniswap findings; lab exercises on sandwich detection.

- Assumptions/dependencies: Curriculum adoption; access to datasets and tooling.

- Insurance-like risk scoring for CoinAlgs

- Description: Provide indicative premiums or risk scores based on strategy transparency and fairness posture.

- Sectors: Finance (risk, insurance), Compliance.

- Tools/products/workflows: Scoring models using the paper’s definitions; underwriting guidelines; incident reporting pipelines.

- Assumptions/dependencies: Market appetite; reliable detection and claims triggers; potential moral hazard concerns.
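
The obfuscation idea in the “Strategy-obfuscation in execution” item can be sketched as a TWAP whose child-order sizes and times are randomized. This is a minimal sketch with hypothetical parameters; as that item notes, obfuscation may reduce raw alpha and must not itself create a new covert channel, and the sketch checks neither property.

```python
import random


def randomized_twap(total: float, slices: int, jitter: float,
                    horizon_s: float, rng: random.Random):
    """Split `total` into `slices` child orders with randomized sizes/times.

    Each slice gets a weight of 1 + U(-jitter, jitter); weights are then
    rescaled so the sizes sum to `total`. Each child order is placed at a
    uniformly random time inside its own equal-width window, so orders stay
    spread across the horizon. Returns a list of (time_s, size) pairs."""
    if not 0 <= jitter < 1:
        raise ValueError("jitter must be in [0, 1) to keep sizes positive")
    weights = [1 + rng.uniform(-jitter, jitter) for _ in range(slices)]
    scale = total / sum(weights)
    window = horizon_s / slices
    return [(i * window + rng.uniform(0, window), w * scale)
            for i, w in enumerate(weights)]


# Seeded only so the example is reproducible; a live system would use an
# unpredictable source such as random.SystemRandom().
orders = randomized_twap(1000.0, slices=5, jitter=0.3, horizon_s=3600.0,
                         rng=random.Random(42))
for t, size in orders:
    print(f"t={t:7.1f}s  size={size:7.2f}")
```

Keeping each child order inside its own window preserves the even pacing of a plain TWAP while hiding exact timing, and bounding `jitter` below 1 keeps every slice positive.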

Long-Term Applications

The following applications require further research, infrastructure changes, or ecosystem coordination to fully realize the paper’s implications.

- MEV-resistant market designs at scale

- Description: Broad adoption of frequent batch auctions, sealed-bid mechanisms, or solver-based matching that neutralizes sandwiching against known flows.

- Sectors: Finance (DEX/CEX redesign), Market infrastructure.

- Tools/products/workflows: Protocol upgrades; L2-specific auction mechanisms; standardized solver APIs.

- Assumptions/dependencies: Ecosystem consensus; performance and UX parity; integration across chains/venues.

- Order-flow privacy as a public good

- Description: End-to-end encrypted order flow (e.g., threshold encryption, SUAVE-like systems) generalized across DeFi to mitigate public leakage.

- Sectors: Finance (DeFi infra), Cryptography.

- Tools/products/workflows: Builder networks supporting encryption; attestation of privacy procedures; open standards.

- Assumptions/dependencies: Trust in cryptographic protocols/hardware; latency and reliability tradeoffs.

- Verifiable private strategy execution

- Description: TEEs/MPC + zero-knowledge proofs to demonstrate strategy adherence while hiding sensitive logic and preventing insider misuse.

- Sectors: Finance (quant funds, DAOs), Advanced cryptography.

- Tools/products/workflows: Remote attestation; zk-proofs of constraint satisfaction; audit trails proving “no unauthorized leaks.”

- Assumptions/dependencies: Secure hardware supply chains; scalable zk systems; formal specifications of “fairness constraints.”

- Standardized Fair CoinAlg certification

- Description: Industry standards and audits for privacy budgets, insider-access controls, and empirical fairness metrics (based on the paper’s definitions).

- Sectors: Policy/compliance, Finance.

- Tools/products/workflows: Certification bodies; continuous monitoring requirements; incident disclosure norms.

- Assumptions/dependencies: Multi-stakeholder governance; international harmonization; alignment with regulators.

- Incentive-compatible DAO governance for fairness

- Description: Tokenomics and slashing conditions that discourage insider value extraction and reward adherence to fairness policies.

- Sectors: Finance (DAOs), Mechanism design.

- Tools/products/workflows: On-chain enforcement; oracle-driven breach detection; staking rules tied to fairness outcomes.

- Assumptions/dependencies: Robust detection; avoiding false positives; game-theoretic stability.

- Parametric insurance for fairness breaches

- Description: Coverage that triggers payouts upon detected sandwiching/insider extraction beyond thresholds.

- Sectors: Finance (insurance), Risk management.

- Tools/products/workflows: On-chain metrics; auditors/oracles; premium pricing based on transparency cost.

- Assumptions/dependencies: Reliable trigger definitions; capital adequacy; avoiding manipulation of triggers.

- Curriculum integration and research programs on the CoinAlg Bind

- Description: Academic centers focused on the profitability–fairness tradeoff, extending the game-theoretic models and empirical measurements across venues.

- Sectors: Academia, Education.

- Tools/products/workflows: Benchmark datasets; reproducible notebooks; theory-plus-systems courses.

- Assumptions/dependencies: Funding; access to diverse market data; collaboration with industry.

- Developer toolkits for “leak-safe” AI trading agents

- Description: Libraries that constrain information release (privacy budgets), simulate adversarial arbitrage, and verify compliance with fairness constraints before deployment.

- Sectors: Software/AI, Finance.

- Tools/products/workflows: Static/dynamic analyzers for covert channels; adversarial simulation harnesses; CI pipelines for fairness checks.

- Assumptions/dependencies: Formal APIs for strategies; shared metrics; community adoption.

- Public fairness scoreboards for consumer protection

- Description: Regulator- or NGO-run portals ranking CoinAlgs by realized fairness and transparency costs.

- Sectors: Policy, Civic tech, Daily life.

- Tools/products/workflows: Data ingestion from chains/venues; standardized scoring; dispute resolution processes.

- Assumptions/dependencies: Data reliability; buy-in from platforms; avoiding gaming of metrics.

- Cross-venue anti-arbitrage coordination

- Description: Agreements among exchanges/solvers/builders to reduce exploitability of known flows (e.g., synchronized batch timings).

- Sectors: Finance (market operators).

- Tools/products/workflows: Inter-venue APIs; shared cryptographic primitives; compliance frameworks.

- Assumptions/dependencies: Competitive dynamics; antitrust considerations; technical synchronization.

- Formal “grim-trigger” mitigation strategies

- Description: Strategy designs that neutralize the ultimatum-style leverage highlighted by the paper (e.g., invalidate-on-detection with minimal collateral damage).

- Sectors: Finance (quant research), Game theory.

- Tools/products/workflows: Detection algorithms; fallback execution modes; proofs of equilibrium outcomes under new rules.

- Assumptions/dependencies: Reliable detection; avoidance of self-inflicted losses; validator cooperation.

- Privacy-preserving co-investment clubs for retail

- Description: Consumer-facing pooled investment tools that cryptographically hide strategy signals while offering verifiable protections against insider extraction.

- Sectors: Daily life (retail investing), Fintech.

- Tools/products/workflows: Managed private routing; attestations; simplified fairness reports for non-experts.

- Assumptions/dependencies: Usable cryptographic UX; regulatory clarity; platform scalability.
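
The ultimatum-style leverage behind the “Formal grim-trigger mitigation strategies” item can be illustrated with the textbook grim-trigger calculation for an infinitely repeated game. This is a minimal sketch under assumptions, not the paper’s model: the CoinAlg earns a fixed profit P per round, the adversary demands a fixed cut s per round (for example, via sandwiching), future rounds are discounted by a factor δ, and refusing triggers permanent invalidation of the CoinAlg’s transactions, whose practical feasibility the Knowledge Gaps section flags as an open question. Names and numbers are hypothetical.

```python
def prefers_to_comply(profit: float, demand: float, delta: float) -> bool:
    """Grim-trigger check in an infinitely repeated game.

    Complying: the CoinAlg keeps profit - demand every round, discounted by
    delta per round. Deviating: it keeps the full profit once, after which
    the adversary invalidates all of its later transactions (payoff 0)."""
    if not 0 <= delta < 1:
        raise ValueError("delta must be in [0, 1)")
    comply = (profit - demand) / (1 - delta)  # (P - s)(1 + d + d^2 + ...)
    deviate = profit                          # P now, then 0 forever
    return comply >= deviate


def max_sustainable_demand(profit: float, delta: float) -> float:
    """Largest per-round cut the CoinAlg still accepts: s <= delta * P."""
    return delta * profit


P, DELTA = 10.0, 0.95  # hypothetical per-round profit and discount factor
print(max_sustainable_demand(P, DELTA))
print(prefers_to_comply(P, 9.0, DELTA), prefers_to_comply(P, 9.9, DELTA))  # True False
```

Since the CoinAlg complies whenever s ≤ δP, a patient CoinAlg (δ close to 1) can be pushed to surrender nearly all of its per-round profit, which is one way to see how a credible invalidation threat erodes the profitability of a transparent CoinAlg.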

These applications operationalize the paper’s core findings: private CoinAlgs invite insider unfairness if information is economically useful, while transparent CoinAlgs suffer profit erosion via arbitrage. Effective deployment depends on realistic adversary models, robust data, market-structure support (private routing, batch auctions), and evolving standards that encode fairness and privacy expectations.

Glossary

- AMM (Automated Market Maker): A smart-contract-based trading mechanism that prices assets via a formula and enables swaps against a liquidity pool. "a single, constant-product AMM."

- Arbitrage: Profit-seeking by exploiting predictable trades or price differences, often at the expense of other actors. "expose it to arbitrage and degraded profits—what we term a cost of transparency."

- Arbitrageur: A trader who performs arbitrage, capitalizing on publicly known strategies or price gaps. "arbitrageurs to erode the profitability of a CoinAlg."

- Assets under management (AUM): The total value of assets a platform or fund manages for clients. "assets under management (AUM)"

- Backrunning: Executing a trade immediately after a known trade to profit from its price impact. "paired frontrunning and backrunning trades."

- Black-box access: The ability to query an algorithm’s inputs/outputs without visibility into its internal logic. "white- or black-box access to "

- CoinAlg: An algorithm that drives collective investment actions for a community of users. "CoinAlgs are algorithms that drive collective investment actions."

- CoinAlg Bind: The fundamental tradeoff that CoinAlgs face between ensuring fairness and maintaining profitability. "We call this tension the CoinAlg Bind"

- Constant-product AMM: An AMM whose invariant is the product of reserves, maintaining x·y=k pricing. "a single, constant-product AMM."

- Cost of transparency: The profitability loss incurred when a strategy is public, enabling others to arbitrage it. "a cost of transparency."

- Covert channel: An unintended or hidden communication pathway that leaks sensitive information. "covert-channel information leakage"

- Custodial: A setup where a financial entity holds and manages users’ funds on their behalf. "TradFi are custodial"

- DAO (Decentralized Autonomous Organization): A blockchain-governed organization operating via smart contracts and collective rules. "Decentralized Autonomous Organizations (DAOs)"

- Decentralized exchange (DEX): An on-chain trading venue without centralized intermediaries. "a decentralized exchange"

- DeFi (Decentralized Finance): Blockchain-based financial systems operating without traditional intermediaries. "decentralized finance (DeFi)"

- Fiduciary: A duty to act in investors’ best interests, often imposing fairness obligations. "collectivity introduces fiduciary and thus fairness obligations"

- Flashbots Protect: A transaction-routing service designed to mitigate MEV and sandwich attacks. "Flashbots Protect"

- Frontrunning: Executing a trade just before a known pending trade to profit from its expected price impact. "paired frontrunning and backrunning trades."

- Game-theoretic model: A formal framework analyzing strategic interactions between agents. "in a game-theoretic model"

- Grim-trigger strategy: A strategy that punishes deviations indefinitely after one defection to enforce cooperation. "grim-trigger strategy (the threat of invalidating the CoinAlg's transactions)"

- High-frequency trading (HFT): Algorithmic trading executing many fast, frequent trades to exploit short-term opportunities. "high-frequency trading (HFT) firms"

- Mempool: The pool of pending transactions awaiting inclusion in a block. "public or private mempools"

- Memecoin: A speculative crypto token whose value is largely meme-driven rather than fundamentals-based. "“memecoin”"

- MEV (Maximal Extractable Value): The maximum profit miners/validators or others can extract by reordering or inserting transactions. "“miner / maximal extractable value” (MEV)"

- Non-custodial: A setup where users retain control over their assets rather than entrusting them to a custodian. "are typically non-custodial"

- Prediction oracle: An information source that forecasts an algorithm’s trades, enabling strategic exploitation. "which is a prediction oracle"

- Repeated game: A strategic interaction played across multiple periods where history-dependent strategies apply. "a repeated game where intentional transaction invalidation forms the basis of a trigger strategy."

- Sandwiching: Combining a frontrun and a backrun around a target trade to siphon value and degrade its profitability. "Sandwiching strategies are more amenable to analytic study than strategy theft."

- Stablecoin: A cryptoasset pegged to a stable value (e.g., USD) to minimize volatility. "USD stablecoin"

- Statistical distance: A measure of how different two probability distributions are, used to quantify privacy. "non-negligible statistical distance"

- TEE (Trusted Execution Environment): A secure hardware enclave that executes code privately, hiding internal randomness or logic. "sampled inside a TEE"

- Total variation distance: A specific statistical metric quantifying the maximal difference between two distributions. "where denotes total variation distance."

- TradFi (Traditional Finance): Conventional, regulated financial systems and institutions. "a.k.a TradFi"

- Trigger strategy: A contingent strategy that changes future behavior based on past actions (e.g., punishment after deviation). "forms the basis of a trigger strategy."

- Ultimatum game: A bargaining game where one party proposes a split and the other accepts or rejects, modeling strategic leverage. "as an ultimatum game"

- White-box access: Full visibility into an algorithm’s internal code or decision logic. "white- or black-box access"
