- The paper presents a method converting amplitude-damping errors into heralded erasures via dual-rail encoding, boosting fault-tolerance thresholds.

- It combines theoretical analysis with experimental demonstrations to show that erasure-dominated noise significantly improves QEC efficiency while reducing hardware overhead.

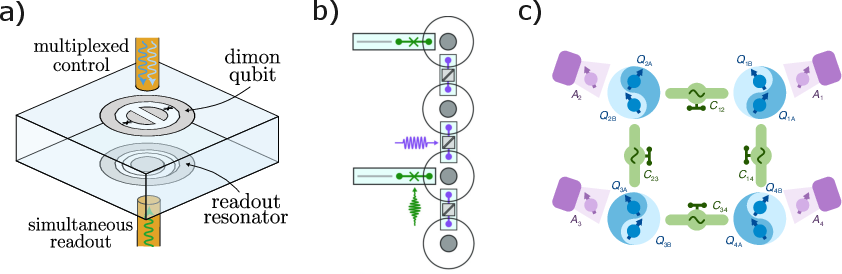

- The work highlights practical implementations using coupled transmons, multimode (dimon) qubits, and cavity QED systems to bridge current hardware limitations with scalable quantum computing.

Developments in Superconducting Erasure Qubits for Hardware-Efficient Quantum Error Correction

Overview

The paper "Developments in superconducting erasure qubits for hardware-efficient quantum error correction" (2601.02183) offers an in-depth Perspective on the emergence, theoretical underpinnings, and practical advancements of erasure qubits in superconducting quantum computing hardware. By focusing on the dual-rail encoding paradigm, the authors systematically analyze how the intentional engineering of erasure-dominated noise profiles leads to substantially higher noise thresholds and lower hardware overheads for quantum error correction (QEC) codes. Integrating recent theoretical developments with hardware demonstrators, the work presents erasure qubits as a credible path to scalable, fault-tolerant quantum computation.

Motivation: Hardware-Efficient Quantum Error Correction

A central challenge in realizing fault-tolerant quantum computers is the gap between the physical error rates of today's qubits and the error rates that logical operations require. Standard QEC protocols such as the surface code require orders of magnitude more physical qubits than are currently available, which significantly impedes scalability. This work motivates a hardware-software co-design philosophy: by tailoring hardware error processes to match QEC protocols, one can drastically reduce logical error rates and, consequently, the overall physical qubit overhead.

Superconducting qubits, while amenable to fabrication and high-fidelity control, are predominantly limited by T1 (amplitude damping) and T2 (dephasing) processes, and frequently suffer from leakage errors. Standard approaches treat noise as randomly occurring, unknown-location Pauli errors. Erasure qubits, in contrast, convert dominant noise—specifically amplitude damping—into detectable, heralded events with known locations, leveraging this structure for more efficient error correction.

Erasure Noise and Dual-Rail Encoding

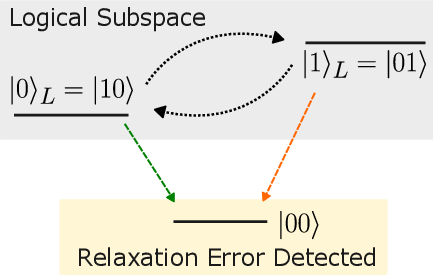

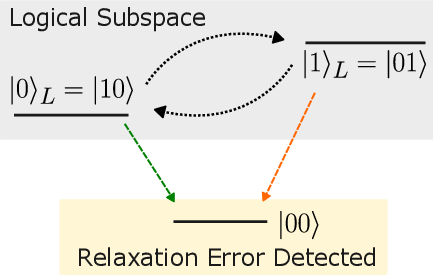

Erasure qubits are constructed such that their dominant error channel leads to an easily identifiable escape from the computational subspace. Key to this approach is the dual-rail encoding, wherein logical information is encoded in states ∣10⟩ and ∣01⟩ across two physical qubits. Spontaneous de-excitation drives these to the ground state ∣00⟩, which is outside the encoded subspace and can be efficiently flagged via tailored measurements termed erasure checks.

Figure 1: Logical encoding and erasure error conversion in dual-rail superconducting qubits, highlighting how amplitude damping translates into detectable erasure events.

The intentional conversion of amplitude-damping (or other noise) into erasures means that the QEC code's decoder receives both syndrome information and precise error locations, which dramatically increases the fault-tolerance threshold.
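The conversion of amplitude damping into a flagged erasure can be checked numerically. The following is a minimal sketch, assuming both rails suffer independent amplitude damping with the same probability `gamma` and no other noise; the function names and the basis ordering are illustrative choices, not taken from the paper.

```python
import numpy as np

def amplitude_damping_kraus(gamma):
    """Single-qubit amplitude-damping Kraus operators for decay probability gamma."""
    k0 = np.array([[1.0, 0.0], [0.0, np.sqrt(1.0 - gamma)]])  # no decay
    k1 = np.array([[0.0, np.sqrt(gamma)], [0.0, 0.0]])        # |1> -> |0>
    return [k0, k1]

def dual_rail_after_damping(alpha, beta, gamma):
    """Apply equal amplitude damping to both rails of alpha|01> + beta|10>.

    Returns (p_erasure, p_keep, fidelity), where p_erasure is the population
    of |00>, p_keep is the population left in the dual-rail subspace, and
    fidelity compares the post-selected state with the input state.
    Assumption: identical gamma on both rails, no dephasing or leakage.
    """
    # Basis order: |00>, |01>, |10>, |11>
    psi = np.zeros(4, dtype=complex)
    psi[1] = alpha
    psi[2] = beta
    psi /= np.linalg.norm(psi)
    rho = np.outer(psi, psi.conj())

    kraus = amplitude_damping_kraus(gamma)
    out = np.zeros((4, 4), dtype=complex)
    for ka in kraus:
        for kb in kraus:
            k = np.kron(ka, kb)
            out += k @ rho @ k.conj().T

    p_erasure = out[0, 0].real            # population that fell to |00>
    sub = out[1:3, 1:3]                   # block on span{|01>, |10>}
    p_keep = np.trace(sub).real
    rho_cond = sub / p_keep               # state given "no erasure detected"
    fidelity = (psi[1:3].conj() @ rho_cond @ psi[1:3]).real
    return p_erasure, p_keep, fidelity

p_erasure, p_keep, fidelity = dual_rail_after_damping(0.6, 0.8j, 0.1)
```

Under these assumptions the erasure probability equals `gamma`, and the state conditioned on passing an ideal erasure check is undisturbed. Unequal damping rates on the two rails would skew the surviving amplitudes, so this last property should not be read as general.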

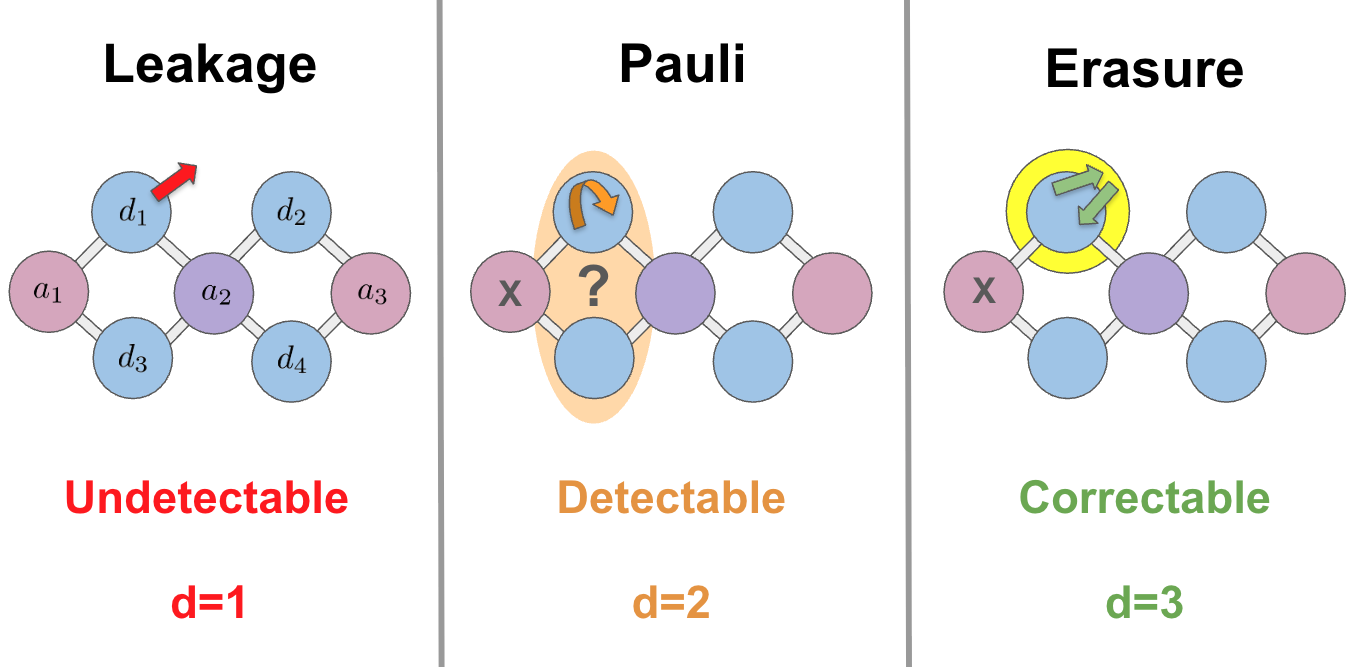

Hierarchy and Correction of Errors

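The theoretical analysis establishes a clear hierarchy among error types: heralded erasures, whose locations are known, are the easiest to correct, whereas Pauli errors at unknown locations and undetected leakage are more damaging. A distance-d code can correct up to d−1 erasures but only ⌊(d−1)/2⌋ Pauli errors. By engineering erasure errors to be the dominant noise source (with suppressed rates of Pauli and leakage errors), codes can therefore correct a greater number of errors per code distance, gaining substantial overhead and threshold benefits.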

Theoretical Advances and Scaling Laws

Theoretical analysis and numerical simulations show orders-of-magnitude improvements in logical error probabilities and substantial increases in error thresholds when location information is incorporated. For instance, surface codes with erasure-dominated noise achieve theoretical thresholds up to 50% in the code-capacity regime, far surpassing the ∼19% depolarizing threshold [see refs. in (2601.02183)]. Under simulation, the threshold for practical protocols is increased by factors of 2–5, depending on code and bias ratio. The logical error rate $p_L$ under erasure noise of rate $e$ and threshold $e^*$ scales as $p_L \propto (e/e^*)^{d}$, compared with $p_L \propto (p/p^*)^{\lceil d/2 \rceil}$ for Pauli noise of rate $p$ and threshold $p^*$, where $d$ is the code distance.
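To get a feel for what the two scaling laws imply, the sketch below finds the smallest code distance that reaches a target logical error rate under each model. It assumes a proportionality constant of 1 and, for simplicity, the same noise-to-threshold ratio in both cases; the function names and the example numbers are hypothetical and not taken from the paper.

```python
import math

def logical_error_erasure(ratio, d):
    """Erasure scaling (e/e*)^d, with the prefactor assumed to be 1."""
    return ratio ** d

def logical_error_pauli(ratio, d):
    """Pauli scaling (p/p*)^ceil(d/2), with the prefactor assumed to be 1."""
    return ratio ** math.ceil(d / 2)

def min_distance(model, ratio, target, d_max=1001):
    """Smallest distance d with model(ratio, d) <= target, searched up to d_max."""
    for d in range(1, d_max + 1):
        if model(ratio, d) <= target:
            return d
    raise ValueError("target not reached within d_max")

ratio = 0.2     # illustrative: noise rate at one fifth of threshold
target = 1e-9   # illustrative logical error target
d_erasure = min_distance(logical_error_erasure, ratio, target)  # 13
d_pauli = min_distance(logical_error_pauli, ratio, target)      # 25
```

With these illustrative numbers the erasure model reaches the target at roughly half the distance of the Pauli model. In practice the thresholds $e^*$ and $p^*$ differ and the prefactors are not 1, so the sketch indicates the trend rather than a resource estimate.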

Crucially, the applicability of these gains is not limited to the surface code. High-rate quantum LDPC codes and even certain logical gate protocols (such as magic-state distillation and cultivation) similarly benefit, enabling early fault-tolerant applications at near-term device fidelities.

Implementation: Superconducting Erasure Qubits

Three main experimental approaches have been demonstrated for superconducting erasure qubits:

- Coupled Transmons: Logical qubits are dual-railed across two transmons; amplitude damping in either transmon triggers a detectable erasure. This approach maintains compatibility with standard architectures and has been demonstrated by groups at Caltech, AWS, and elsewhere.

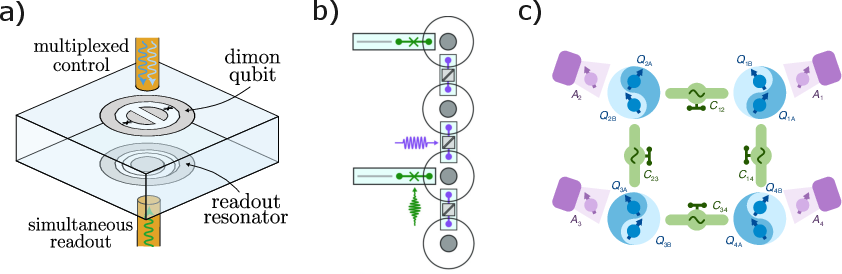

- Multimode (Dimon) Qubits: A coaxial "dimon" qubit leverages two transmon-like modes, encoding the erasure qubit in their single-excitation subspace. OQC has demonstrated improved coherence in this setting, and the approach is compatible with minimal infrastructure changes.

- Cavity QED Systems: Dual-rail qubits encoded in cavity-QED setups exhibit superior coherence properties and have enabled mid-circuit erasure detection and two-qubit operations that preserve the erasure-biased hierarchy (Yale, Quantum Circuits, and others).

Figure 3: Examples of hardware implementations: multimode coaxial dimon qubits, cavity QED dual-rail qubits, and coupled-transmon architectures.

Alternative designs (e.g., exploiting qutrits or more complex inner codes) are under active exploration, but dual-rail structures offer a favorable trade-off between hardware complexity and error detectability.

Practical and Theoretical Implications

The implications of this paradigm shift toward erasure-biased, hardware-efficient QEC are multifold:

- Thresholds and Resource Reduction: Substantially higher error thresholds and improved scaling enable logical error rates to reach target levels with fewer physical qubits.

- Mitigation and NISQ Applications: Even without full fault tolerance, heralded erasure detection enables effective error mitigation via post-selection on error-free runs. These primitives provide immediate practical utility for noisy intermediate-scale quantum (NISQ) devices.

- Decoding Advances: Decoders exploiting erasure-location information (e.g., modified Union-Find and MWPM algorithms) significantly outperform standard approaches, especially when erasure noise is prevalent.
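The benefit of location information for decoding can be illustrated with a toy example. The sketch below uses a classical repetition code rather than the Union-Find or MWPM decoders mentioned above, and it assumes that erased bits are read out as arbitrary values; both function names are hypothetical.

```python
def decode_majority(bits):
    """Majority vote over all received bits, ignoring erasure flags."""
    return int(sum(bits) * 2 > len(bits))

def decode_with_erasures(bits, erased):
    """Majority vote over unflagged bits only.

    Returns None when every bit is flagged, since no information survives.
    If erasures are the only errors, all unflagged bits agree and the vote
    is trivially correct.
    """
    kept = [b for b, e in zip(bits, erased) if not e]
    if not kept:
        return None
    return int(sum(kept) * 2 > len(kept))

# Logical 1 sent on a distance-5 repetition code; three bits are erased
# and happen to read out as 0.
received = [0, 0, 0, 1, 1]
erased = [True, True, True, False, False]

blind = decode_majority(received)                 # 0: wrong
aware = decode_with_erasures(received, erased)    # 1: correct
```

Three corrupted bits defeat the flag-blind majority vote at distance 5, while the flag-aware decoder still recovers the logical value and fails only when all five bits are erased.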

The theoretical framework suggests that these systems may soon reach operational regimes where logical error rates and hardware overheads become compatible with large-scale quantum algorithms, providing a path beyond the NISQ era.

Open Questions and Outlook

Despite these advances, several directions merit continued investigation:

- Optimizing Erasure Checks: Balancing frequency, false positives/negatives, and reset protocols in erasure circuits.

- Decoder and Simulation Tooling: Adapting design, simulation, and resource estimation tools—most of which are tailored to Pauli noise—to properly benchmark and exploit erasure-based QEC architectures.

- Exploring Code and Hardware Synergy: Characterizing which outer codes gain most from erasure-biased inner codes, and engineering noise profiles for optimal overall performance.
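The trade-off between false positives and false negatives in erasure checks can be made concrete with Bayes' rule. The sketch below is a simplified single-check model with hypothetical parameter names and illustrative values: `e` is the prior erasure probability, `fp` the probability that the check flags a healthy qubit, and `fn` the probability that it misses a real erasure.

```python
def erasure_check_posteriors(e, fp, fn):
    """Return (P(erased | flag), P(erased | no flag)) for one erasure check.

    e  : prior probability that the qubit has been erased
    fp : false-positive rate of the check
    fn : false-negative rate of the check
    Assumption: a single check with outcome-independent error rates.
    """
    p_flag = (1 - fn) * e + fp * (1 - e)
    p_noflag = 1 - p_flag
    if p_flag == 0 or p_noflag == 0:
        raise ValueError("degenerate check: one outcome never occurs")
    true_given_flag = (1 - fn) * e / p_flag
    missed_given_noflag = fn * e / p_noflag
    return true_given_flag, missed_given_noflag

# Illustrative numbers only, not measured values.
true_given_flag, missed_given_noflag = erasure_check_posteriors(
    e=0.01, fp=0.001, fn=0.02
)
```

In this model, false positives reduce how much a flag can be trusted, so the decoder discards good qubits, while false negatives leave residual errors at unknown locations that the code must treat like Pauli or leakage errors.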

Addressing these practical and theoretical challenges will determine the extent to which erasure qubits catalyze the transition to scalable, fault-tolerant quantum computing.

Conclusion

Superconducting erasure qubits, enabled by dual-rail encoding and hardware error engineering, represent a technically compelling route for achieving hardware-efficient QEC. By transforming the dominant physical error mechanism into a detectable erasure process, logical error probabilities and code thresholds are dramatically improved with minimal hardware overhead. Recent theoretical and experimental progress points to near-term realization of logical qubits with error rates and overheads compatible with early quantum advantage, bridging the gap between current hardware and scalable architectures. The results establish erasure qubits as a central paradigm for advancing both the theory and practical implementation of fault-tolerant quantum computation.