- The paper presents a novel deep visual odometry approach using distortion-aware feature extraction and omnidirectional bundle adjustment.

- It introduces SphereResNet and DAS-Feat to mitigate spherical projection distortions and enhance feature reliability in 360° imagery.

- Experimental results demonstrate significant improvements in tracking accuracy and robustness across both synthetic and real-world datasets.

360DVO: Deep Visual Odometry for Monocular 360-Degree Cameras

Introduction

360DVO presents the first deep learning-based omnidirectional visual odometry (OVO) framework specifically designed for monocular 360-degree cameras. This method addresses major limitations in legacy OVO pipelines that rely on handcrafted features and photometric objectives, which suffer significant performance degradation in challenging real-world environments exhibiting nontrivial scene dynamics, rapid illumination changes, aggressive camera motion, and omnidirectional image distortion. The core advances in 360DVO include the introduction of a distortion-aware spherical feature extractor (DAS-Feat) based on a novel SphereResNet architecture and the derivation of an omnidirectional differentiable bundle adjustment (ODBA) module, enabling efficient, robust, and precise pose and geometry estimation in monocular 360-degree imagery.

360DVO Framework Overview

The 360DVO system consists of two interconnected components: the DAS-Feat feature extraction pipeline and the ODBA optimization backend. Sequential 360-degree RGB frames are fed to the system, where DAS-Feat extracts both matching and context features using the proposed SphereResNet, which is explicitly constructed to accommodate projection-induced distortions in spherical images. Patches are selected at local maxima of the gradient magnitude, then tracked and registered across frames by the ODBA module, which jointly optimizes camera poses and patch depths by enforcing spherical reprojection consistency under the unified spherical camera model.

Figure 1: Framework overview of 360DVO, depicting sequential 360-degree input frames, distortion-aware feature extraction via DAS-Feat, patchification, optical flow estimation, and omnidirectional differentiable bundle adjustment in a unified pipeline.

A distinguishing aspect of 360DVO is the learned projection-aware feature representation, in contrast to prior methods that either ignore projection distortion or employ geometrically naive CNN encoders. The system's sparse-patch formulation further targets computational efficiency and robustness compared to dense grid-based approaches.

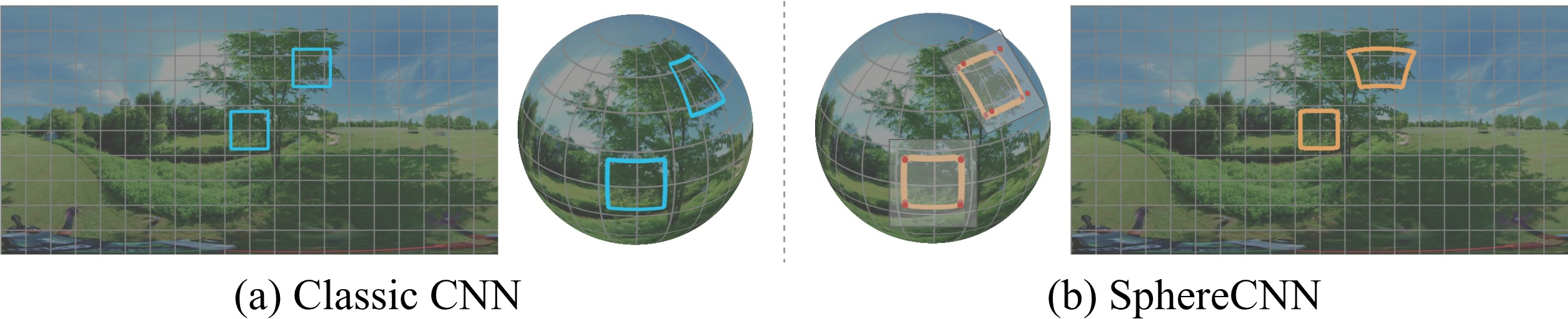

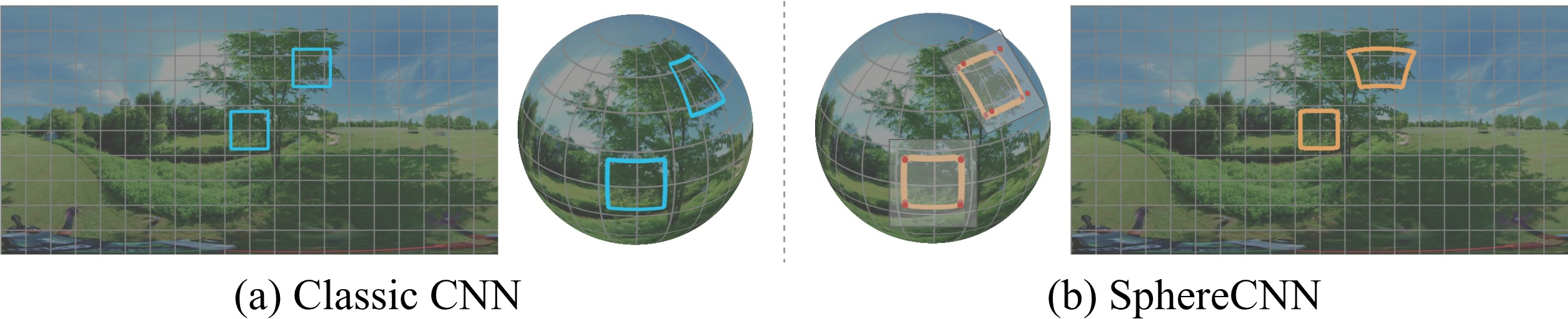

Distortion-Aware Spherical Feature Extraction

Omnidirectional images in equirectangular projection suffer severe nonlinear distortion that grows with latitude. This undermines the translation equivariance that standard CNNs rely on, so conventional kernels produce unreliable features. 360DVO addresses this with a hybrid network, SphereResNet, that combines the spherical convolution of SphereNet with deep residual connections. The spherical convolution kernel uses tangent-plane sampling to adapt its sampling pattern to each latitude, so that learned features remain consistent across the sphere.

Figure 2: Comparison of feature extraction between the classic CNN and the SphereCNN [SphereNet], highlighting how distortion-aware convolution kernels (SphereCNN) adapt pixel sampling across latitudes for omnidirectional imagery.
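The tangent-plane sampling idea can be illustrated with a short sketch. This is not 360DVO's or SphereNet's code. It makes the following assumptions:

- The input is an equirectangular image of width `W` and height `H`.
- The kernel is 3×3.
- The tangent-plane step is chosen so that one step spans roughly one pixel at the equator.

`sphere_kernel_locations` is a hypothetical helper. It takes a regular grid on the plane tangent to the sphere at the kernel center, maps it back onto the sphere (inverse gnomonic projection), and then converts the result to pixel coordinates.

```python
import numpy as np


def sphere_kernel_locations(u0, v0, W, H, k=3):
    """Fractional equirectangular pixel locations sampled by a k x k spherical kernel.

    A regular k x k grid on the plane tangent to the sphere at the kernel center
    (u0, v0) is mapped back onto the sphere with the inverse gnomonic projection,
    then converted to equirectangular pixel coordinates.
    """
    # Pixel -> sphere: longitude in [-pi, pi), latitude in (-pi/2, pi/2], north at row 0.
    theta0 = (u0 / W - 0.5) * 2.0 * np.pi
    phi0 = (0.5 - v0 / H) * np.pi
    # Assumed tangent-plane step: one step spans ~one pixel of longitude at the equator.
    step = np.tan(2.0 * np.pi / W)
    offsets = (np.arange(k) - (k - 1) / 2.0) * step
    x, y = np.meshgrid(offsets, -offsets)  # x points east; y points north (row 0 = north)

    rho = np.hypot(x, y)
    c = np.arctan(rho)  # angular distance from the kernel center
    safe_rho = np.where(rho == 0.0, 1.0, rho)  # avoid 0/0 at the center (y is 0 there)
    sin_phi = np.cos(c) * np.sin(phi0) + y * np.sin(c) * np.cos(phi0) / safe_rho
    phi = np.arcsin(np.clip(sin_phi, -1.0, 1.0))
    theta = theta0 + np.arctan2(x * np.sin(c),
                                rho * np.cos(phi0) * np.cos(c) - y * np.sin(phi0) * np.sin(c))
    theta = np.where(rho == 0.0, theta0, theta)  # the center samples itself

    # Sphere -> pixel, wrapping longitude across the left/right image seam.
    u = np.mod((theta / (2.0 * np.pi) + 0.5) * W, W)
    v = (0.5 - phi / np.pi) * H
    return u, v


# Equator vs. high latitude on a 512 x 256 panorama.
u_eq, v_eq = sphere_kernel_locations(256, 128, 512, 256)
u_hi, v_hi = sphere_kernel_locations(256, 20, 512, 256)
print(np.round(u_eq[1], 2))  # middle kernel row near the equator
print(np.round(u_hi[1], 2))  # middle kernel row near the north pole: wider horizontal spread
```

Near the equator the sampled locations form an almost regular 3×3 grid. Toward the poles they spread horizontally, matching how the equirectangular projection stretches content there. A layer built on this idea would bilinearly interpolate the feature map at these fractional locations instead of using fixed integer offsets.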

SphereResNet outputs both matching features (low-level, high-gradient for tracking) and context features (higher-level for association and flow estimation). Critically, the extracted feature maps allow direct extraction of square, undistorted patches, simplifying subsequent geometric processing and increasing data fidelity. Dense feature map extraction is avoided to reduce computational burden, and a gradient-magnitude-driven patchification strategy ensures that patches cover the most information-rich regions in the image.
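A gradient-magnitude patchification step can be sketched with NumPy. This minimal sketch rests on several assumptions:

- Gradients are taken on a single-channel `score_map`; the summary does not say whether the image or a feature map is used.
- Local maxima are found by greedy non-maximum suppression with a hypothetical `nms_radius`.
- Square patches are cut from a `(C, H, W)` feature map without horizontal wrap-around.

`select_patches` is a hypothetical name.

```python
import numpy as np


def select_patches(score_map, features, num_patches=8, patch_size=3, nms_radius=4):
    """Greedy selection of patch centers at local maxima of gradient magnitude.

    score_map: (H, W) map used to rank locations by gradient magnitude.
    features:  (C, H, W) feature map from which square patches are cut.
    Returns centers (N, 2) as (row, col) and patches (N, C, patch_size, patch_size).
    """
    gy, gx = np.gradient(score_map.astype(np.float64))  # derivatives along rows, cols
    mag = np.hypot(gx, gy)
    H, W = mag.shape
    r = patch_size // 2
    # Zero a border so every patch fits (a 360-degree version could wrap columns instead).
    mag[:r, :] = 0.0
    mag[H - r:, :] = 0.0
    mag[:, :r] = 0.0
    mag[:, W - r:] = 0.0

    centers = []
    for _ in range(num_patches):
        row, col = np.unravel_index(np.argmax(mag), mag.shape)
        if mag[row, col] <= 0.0:
            break  # no informative locations left
        centers.append((int(row), int(col)))
        # Non-maximum suppression: clear a window so the next pick is a different local maximum.
        mag[max(0, row - nms_radius):row + nms_radius + 1,
            max(0, col - nms_radius):col + nms_radius + 1] = 0.0

    C = features.shape[0]
    if not centers:
        return np.zeros((0, 2), dtype=int), np.zeros((0, C, patch_size, patch_size))
    patches = np.stack([features[:, y - r:y + r + 1, x - r:x + r + 1] for y, x in centers])
    return np.array(centers), patches


rng = np.random.default_rng(0)
image = np.zeros((32, 48))
image[10:20, 15:30] = 1.0  # a bright rectangle: strong gradients along its edges
feats = rng.standard_normal((4, 32, 48))
centers, patches = select_patches(image, feats, num_patches=5)
print(centers.shape, patches.shape)
```

The selected centers sit on the rectangle's edges and corners, where the gradient is strongest, while flat regions contribute nothing.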

Omnidirectional Differentiable Bundle Adjustment

360DVO introduces an ODBA module that performs bundle adjustment under a spherical camera model. ODBA jointly optimizes camera poses and patch depths. Its objective is the squared error between two sets of patch positions: those predicted by a recurrent optical-flow update, and those obtained by reprojection under the current pose and depth estimates. Jacobians with respect to the SE(3) pose parameters and depths are derived analytically, enabling fast and stable Gauss–Newton optimization.
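The core of such an update can be sketched as a spherical reprojection residual minimized by Gauss–Newton with analytic Jacobians. This is a simplified illustration, not the paper's ODBA:

- **Camera model.** It assumes an equirectangular parameterization with longitude and latitude mapped linearly to pixels, rather than the exact unified spherical camera model.
- **Units.** It uses single points instead of patches.
- **Free variables.** Inverse depths are held fixed and only one relative pose is refined. Jointly solving one pose and all depths from a single pair of views would be rank-deficient because monocular scale is unobservable.
- **Pose update.** It uses an SO(3)×R³ update instead of a full SE(3) exponential.

All function names are hypothetical.

```python
import numpy as np


def skew(w):
    """3x3 matrix [w]_x such that skew(w) @ p == np.cross(w, p)."""
    return np.array([[0.0, -w[2], w[1]], [w[2], 0.0, -w[0]], [-w[1], w[0], 0.0]])


def so3_exp(w):
    """Rodrigues' formula: rotation matrix for the axis-angle vector w."""
    theta = np.linalg.norm(w)
    if theta < 1e-12:
        return np.eye(3) + skew(w)
    K = skew(w / theta)
    return np.eye(3) + np.sin(theta) * K + (1.0 - np.cos(theta)) * K @ K


def pixel_to_bearing(uv, W, H):
    """Equirectangular pixels (N, 2) -> unit bearings (N, 3); x right, y down, z forward."""
    lon = (uv[:, 0] / W - 0.5) * 2.0 * np.pi
    lat = (0.5 - uv[:, 1] / H) * np.pi
    return np.stack([np.cos(lat) * np.sin(lon), -np.sin(lat), np.cos(lat) * np.cos(lon)], axis=1)


def project(X, W, H):
    """Camera-frame points (N, 3) -> pixels (N, 2) and analytic Jacobians d(uv)/dX (N, 2, 3)."""
    x, y, z = X[:, 0], X[:, 1], X[:, 2]
    rho2 = x ** 2 + z ** 2
    rho = np.sqrt(rho2)
    n2 = rho2 + y ** 2
    lon, lat = np.arctan2(x, z), np.arctan2(-y, rho)
    uv = np.stack([(lon / (2.0 * np.pi) + 0.5) * W, (0.5 - lat / np.pi) * H], axis=1)
    su, sv = W / (2.0 * np.pi), -H / np.pi  # du/dlon and dv/dlat
    J = np.zeros((len(X), 2, 3))
    J[:, 0, 0], J[:, 0, 2] = su * z / rho2, -su * x / rho2
    J[:, 1, 0] = sv * x * y / (rho * n2)
    J[:, 1, 1] = -sv * rho / n2
    J[:, 1, 2] = sv * y * z / (rho * n2)
    return uv, J


def gauss_newton_pose(bearings, inv_depth, obs_uv, W, H, iters=10):
    """Refine (R, t) so that project(R @ (b / d) + t) matches obs_uv; depths stay fixed."""
    X = bearings / inv_depth[:, None]  # 3D points in the reference frame
    R, t = np.eye(3), np.zeros(3)
    for _ in range(iters):
        Xc = X @ R.T + t  # points in the target frame
        uv, Jp = project(Xc, W, H)
        r = obs_uv - uv
        r[:, 0] = (r[:, 0] + W / 2.0) % W - W / 2.0  # wrap the longitude residual at the seam
        # Left perturbation Xc -> exp(w) Xc + v has dXc/d(v, w) = [I, -[Xc]_x].
        Jx = np.concatenate([np.broadcast_to(np.eye(3), (len(X), 3, 3)),
                             -np.stack([skew(p) for p in Xc])], axis=2)
        J = (Jp @ Jx).reshape(-1, 6)
        delta = np.linalg.solve(J.T @ J, J.T @ r.reshape(-1))  # normal equations
        dR = so3_exp(delta[3:])
        R, t = dR @ R, dR @ t + delta[:3]
    return R, t


# Synthetic check: recover a known relative pose from noise-free observations.
rng = np.random.default_rng(0)
W, H = 640, 320
uv_ref = np.stack([rng.uniform(0, W, 50), rng.uniform(0.2 * H, 0.8 * H, 50)], axis=1)
bearings = pixel_to_bearing(uv_ref, W, H)
inv_depth = rng.uniform(0.2, 0.5, 50)  # depths between 2 and 5 units
R_true, t_true = so3_exp(np.array([0.05, -0.1, 0.08])), np.array([0.2, -0.05, 0.1])
obs_uv, _ = project((bearings / inv_depth[:, None]) @ R_true.T + t_true, W, H)
R_est, t_est = gauss_newton_pose(bearings, inv_depth, obs_uv, W, H)
print(np.abs(R_est - R_true).max(), np.abs(t_est - t_true).max())
```

The longitude residual is wrapped so that a point crossing the left/right image seam does not produce a spurious jump of nearly `W` pixels. A full system would add depth columns to the Jacobian, weight the residuals, and solve over many frames at once.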

ODBA exploits sparsity through a patch-graph structure in which patches are associated and optimized only across a fixed-radius neighborhood of frames, balancing long-term consistency against computational cost. The spherical reprojection model is essential for correct geometric registration in omnidirectional imagery: an ablation shows that naively extending pinhole BA significantly degrades performance.
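A fixed-radius patch graph can be sketched as an explicit edge list. This is an illustrative assumption about the bookkeeping, not 360DVO's data structure. `build_patch_graph` and its parameters are hypothetical.

```python
def build_patch_graph(num_frames, patches_per_frame, radius):
    """List (patch_id, source_frame, target_frame) edges within a fixed frame radius.

    Patch ids are assigned frame by frame: patch k of frame i gets id i * patches_per_frame + k.
    Each patch is linked to every other frame j with |i - j| <= radius, so for a fixed radius
    the edge count grows linearly with the number of frames instead of quadratically.
    """
    edges = []
    for i in range(num_frames):
        lo, hi = max(0, i - radius), min(num_frames - 1, i + radius)
        for k in range(patches_per_frame):
            pid = i * patches_per_frame + k
            edges.extend((pid, i, j) for j in range(lo, hi + 1) if j != i)
    return edges


edges = build_patch_graph(num_frames=5, patches_per_frame=2, radius=1)
print(len(edges))  # -> 16
```

With five frames and a radius of one, the two end frames each have one neighbor and the middle three have two. Each of the 2 patches per frame therefore yields 1 + 2 + 2 + 2 + 1 = 8 edges, or 16 in total. Widening `radius` trades more cross-frame constraints for more computation.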

Experimental Evaluation

360DVO was evaluated on synthetic benchmarks (360VO, TartanAirV2) and a newly proposed real-world OVO dataset. Performance was measured with three metrics: absolute trajectory error (ATE), translational relative pose error (RPE-t), and rotational RPE (RPE-r). Across all evaluated sequences and all three metrics, 360DVO surpasses state-of-the-art baselines. On the 360VO dataset, 360DVO reduces ATE by 10% relative to the previous best method. On challenging real-world data, it improves robustness by 50% and accuracy by 37.5% over prior baselines, providing strong empirical support for the method's effectiveness.
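The trajectory metrics named above can be computed roughly as follows. This sketch makes three assumptions, since the paper's exact evaluation protocol is not stated here:

- **Alignment.** Before ATE is computed, the monocular trajectory is aligned to ground truth with a similarity (Sim(3)) transform estimated by Umeyama's method. This is a common convention.
- **Poses.** RPE is computed on 4×4 camera-to-world poses that already share the ground-truth scale.
- **Aggregation.** RPE uses consecutive frame pairs (`delta=1`), with RMSE for translation and mean for rotation.

Function names are hypothetical.

```python
import numpy as np


def umeyama_sim3(src, dst):
    """Least-squares similarity (s, R, t) with dst ~= s * R @ src + t (Umeyama's method)."""
    mu_s, mu_d = src.mean(axis=0), dst.mean(axis=0)
    xs, xd = src - mu_s, dst - mu_d
    cov = xd.T @ xs / len(src)
    U, D, Vt = np.linalg.svd(cov)
    S = np.eye(3)
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        S[2, 2] = -1.0  # avoid returning a reflection
    R = U @ S @ Vt
    s = np.trace(np.diag(D) @ S) / ((xs ** 2).sum() / len(src))
    t = mu_d - s * R @ mu_s
    return s, R, t


def ate_rmse(est_xyz, gt_xyz):
    """RMSE of positions after Sim(3) alignment of the estimate to ground truth."""
    s, R, t = umeyama_sim3(est_xyz, gt_xyz)
    aligned = s * est_xyz @ R.T + t
    return float(np.sqrt(np.mean(np.sum((aligned - gt_xyz) ** 2, axis=1))))


def rpe(est_poses, gt_poses, delta=1):
    """Translational RPE (RMSE) and rotational RPE (mean, degrees) over pairs (i, i + delta)."""
    t_err, r_err = [], []
    for i in range(len(gt_poses) - delta):
        gt_rel = np.linalg.inv(gt_poses[i]) @ gt_poses[i + delta]
        est_rel = np.linalg.inv(est_poses[i]) @ est_poses[i + delta]
        E = np.linalg.inv(gt_rel) @ est_rel  # residual relative motion
        t_err.append(np.linalg.norm(E[:3, 3]))
        cos_angle = np.clip((np.trace(E[:3, :3]) - 1.0) / 2.0, -1.0, 1.0)
        r_err.append(np.degrees(np.arccos(cos_angle)))
    return float(np.sqrt(np.mean(np.square(t_err)))), float(np.mean(r_err))


rng = np.random.default_rng(1)
gt_xyz = rng.standard_normal((50, 3))
c, s = np.cos(0.3), np.sin(0.3)
Rz = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
est_xyz = 0.5 * gt_xyz @ Rz.T + np.array([1.0, 2.0, 3.0])  # same shape, other scale and frame
print(ate_rmse(est_xyz, gt_xyz))  # ~0: the alignment absorbs a pure similarity transform


def translation_pose(x):
    T = np.eye(4)
    T[0, 3] = x
    return T


gt_poses = [translation_pose(1.0 * i) for i in range(5)]
est_poses = [translation_pose(1.1 * i) for i in range(5)]
print(rpe(est_poses, gt_poses))  # every 1-frame step overshoots by 0.1, with no rotation error
```

Sim(3) alignment matters for monocular methods because their trajectories are only recovered up to scale. Without it, ATE would mostly measure the arbitrary scale rather than the shape of the trajectory.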

The performance gains are consistent across both "Easy" (simple, static) and "Hard" (with complex rotations, dynamic objects, and illumination changes) data. Additional ablation studies confirm the necessity of projection-aware feature design and the efficacy of patch-based sparse BA. Notably, learning-based methods consistently outperform classical pipelines, demonstrating the importance of end-to-end representation learning in OVO.

Dataset and Benchmarks

A significant contribution of this work is the 360DVO dataset, a real-world benchmark comprising 20 sequences of 360-degree video recorded in a wide variety of settings, including wild, indoor, urban, and aerial environments. Sequences are divided into Easy and Hard subsets for stratified evaluation. Pseudo-ground-truth camera trajectories are reconstructed with Agisoft Metashape, whose accuracy is verified against synthetic benchmarks. The dataset exposes the limitations of prior OVO methods under real-world perturbations and provides a challenging testbed for future research.

Numerical Results and Claims

360DVO consistently outperforms both learning-based and classical methods on all trajectory accuracy metrics (ATE, RPE-t, RPE-r). It improves on the strongest prior learning-based baseline by 56% on Easy data (DPV-SLAM) and by 37% on Hard data (DPVO). It maintains a 100% tracking success rate on the new real-world dataset, whereas traditional methods and learning-based systems trained on synthetic data frequently lose track or drift severely.

The ablations show that (1) replacing classic CNNs with a distortion-aware encoder is essential for stability; (2) increasing the patch count or feature-map resolution can degrade performance because of ambiguous correspondences in highly repetitive or low-entropy scenes; and (3) fast variants of 360DVO can achieve competitive accuracy at substantially higher FPS, suggesting suitability for edge deployment.

Implications and Future Directions

360DVO substantively advances monocular 360-degree visual odometry by removing the limitations imposed by handcrafted features and pinhole-centric convolutional architectures. The proposed SphereResNet and ODBA modules are likely extensible to related dense mapping and 3D scene understanding tasks in omnidirectional settings. The modularity and analytic tractability of the proposed backend suggest compatibility with future progress in lightweight spherical feature extractors, efficient optimization solvers, and broader integration with differentiable rendering techniques.

Practically, 360DVO's robustness and efficiency position it as a strong candidate for deployment in robotics and mobile AR applications, where 360-degree awareness and real-time inference are increasingly necessary. The real-world dataset will likely serve as a reference for subsequent work aiming at even more challenging operational regimes, including dynamic and cluttered scenes.

Conclusion

360DVO unifies projection-aware deep feature learning and spherical geometry-based BA, yielding a state-of-the-art OVO framework that addresses the major limitations of current pipelines. The results on synthetic and real-world data, the rigorous ablation, and the introduction of a meaningful new benchmark indicate that its methodological advances can serve as a foundation for continued developments in omnidirectional perception and localization.