- The paper introduces a unified, type-safe architecture for LLM agent orchestration that ensures provider-agnostic integration and reproducible workflows.

- The paper argues that a synchronous execution model, combined with tool schemas generated from Python type hints, provides explicit control flow and simpler debugging.

- Its modular design with pre- and post-execution hooks offers enhanced security, cost control, and transparent context management for robust research applications.

Authoritative Analysis of "Orchestral AI: A Framework for Agent Orchestration" (2601.02577)

Introduction and Landscape Position

The "Orchestral AI" framework addresses the fragmentation and operational complexity of contemporary LLM agent systems, especially in environments that require provider-agnostic integration, reproducibility, and simple deployment. Orchestral explicitly responds to limitations in frameworks such as LangChain, CrewAI, AutoGPT, n8n, and provider-specific SDKs by employing a unified, type-safe architecture. It targets use cases that require both production robustness and research agility, prioritizing explicit control flow and modular extensibility over multi-tiered asynchronous event handling and orchestration logic hidden from the developer.

Architecture Overview

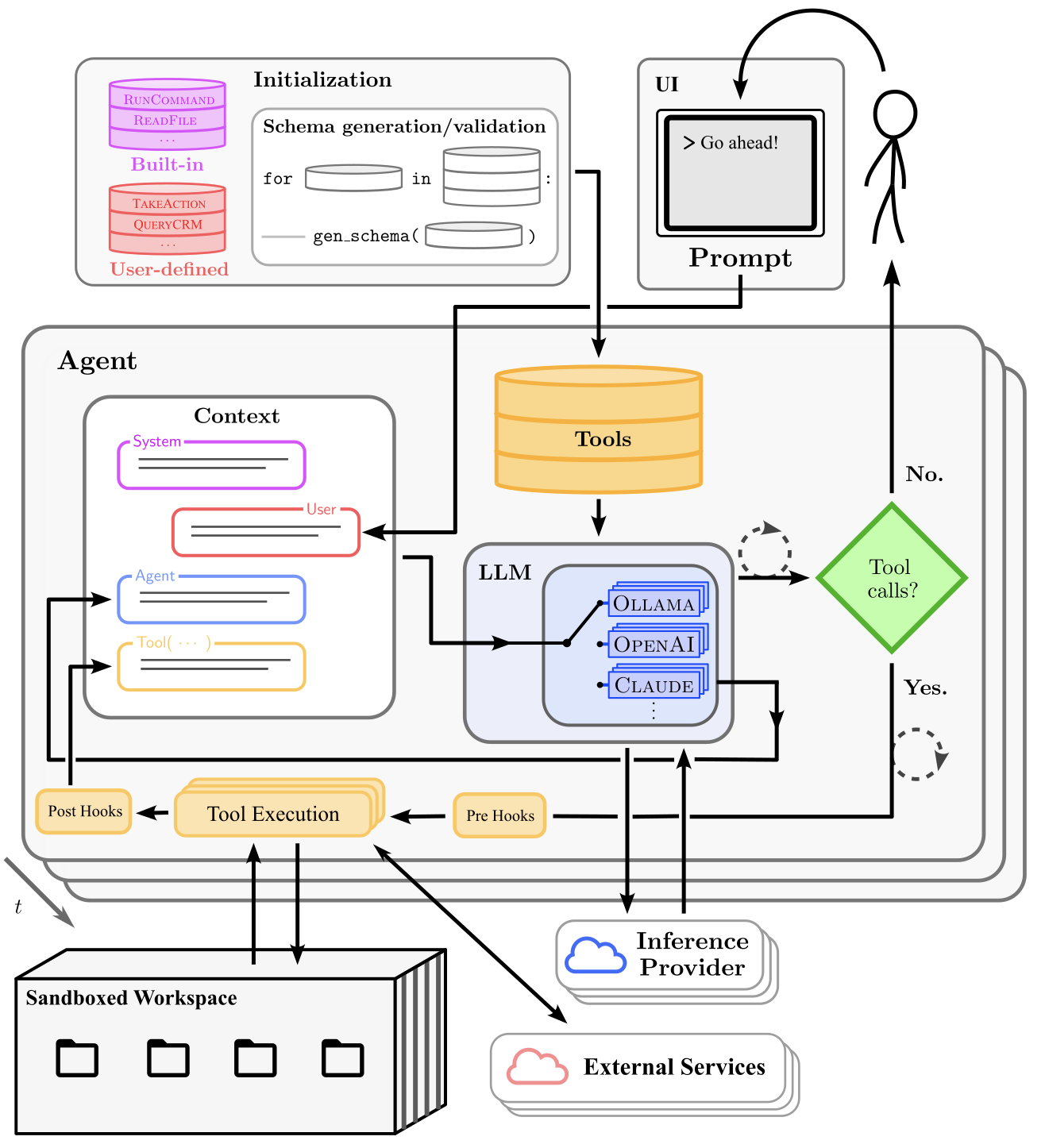

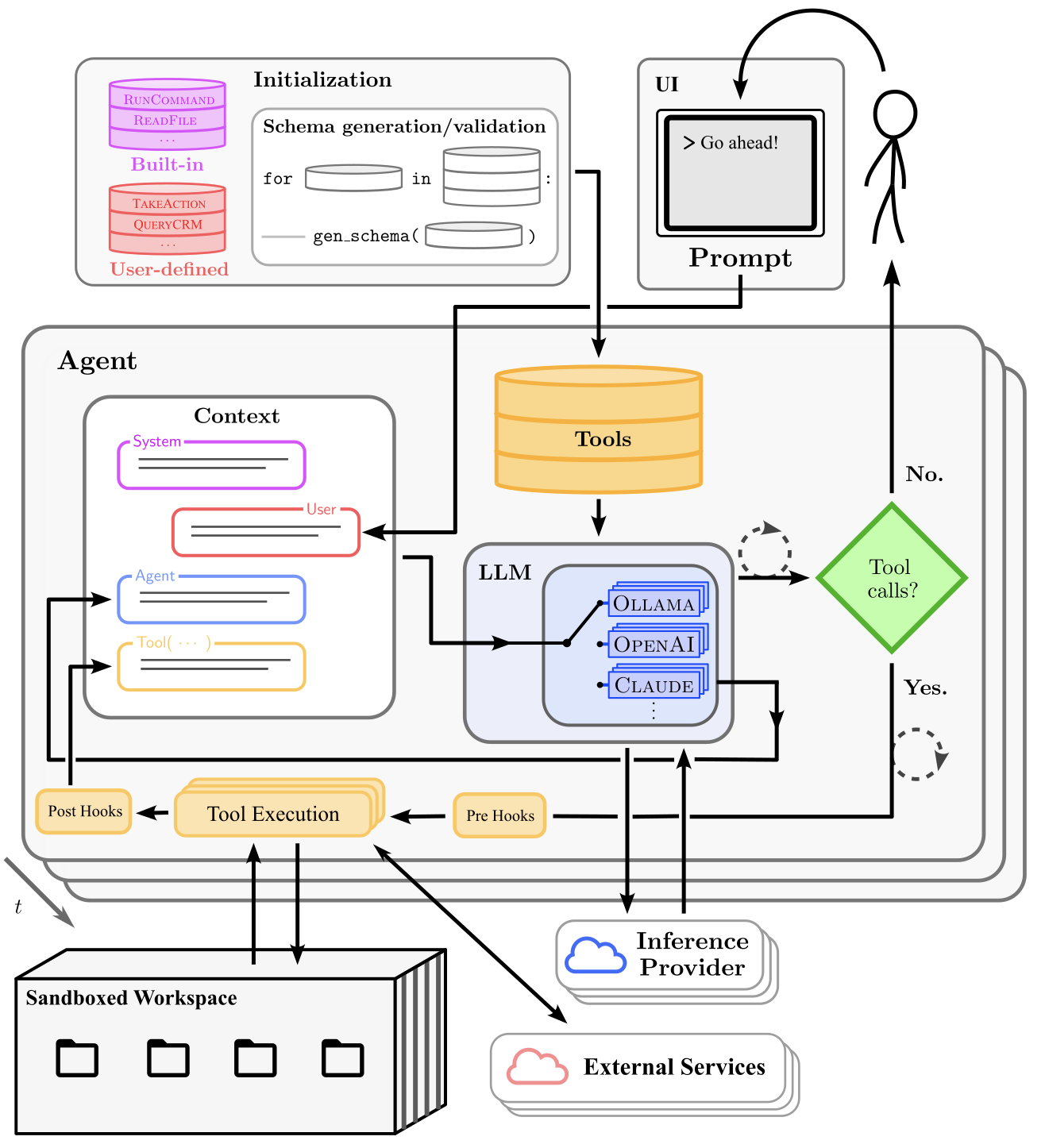

Central to Orchestral is the Agent object, which encapsulates the provider-agnostic LLM interface, a tool execution engine, and a persistent, validated conversation context. Provider integration is abstracted through a pluggable base class, supporting major vendors such as OpenAI, Anthropic, Google, Groq, Mistral, AWS Bedrock, and local deployments via Ollama. Because the Agent depends only on this base interface, switching providers requires no architectural changes on the developer’s side.
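The pluggable-provider idea can be illustrated with a minimal sketch. The names `BaseProvider`, `EchoProvider`, `UpperProvider`, and this toy `Agent` are hypothetical stand-ins chosen for illustration and are not Orchestral's actual classes; the two "providers" are fake backends so the example runs without network access.

```python
from abc import ABC, abstractmethod


class BaseProvider(ABC):
    """Hypothetical provider interface; not Orchestral's actual base class."""

    @abstractmethod
    def complete(self, messages: list[dict]) -> str:
        """Return the assistant reply for a provider-neutral message list."""


class EchoProvider(BaseProvider):
    """Stand-in backend that repeats the last user message."""

    def complete(self, messages: list[dict]) -> str:
        return "echo: " + messages[-1]["content"]


class UpperProvider(BaseProvider):
    """Second stand-in backend, used to show a provider swap."""

    def complete(self, messages: list[dict]) -> str:
        return messages[-1]["content"].upper()


class Agent:
    """Toy agent that owns the context and delegates generation to a provider."""

    def __init__(self, provider: BaseProvider):
        self.provider = provider
        self.context: list[dict] = []

    def run(self, prompt: str) -> str:
        self.context.append({"role": "user", "content": prompt})
        reply = self.provider.complete(self.context)
        self.context.append({"role": "assistant", "content": reply})
        return reply


agent = Agent(EchoProvider())
first = agent.run("hello")
agent.provider = UpperProvider()  # swap the backend; the context is kept
second = agent.run("again")
```

In this sketch the conversation context lives in the agent rather than in the provider, which is what lets the backend change mid-conversation without losing history.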

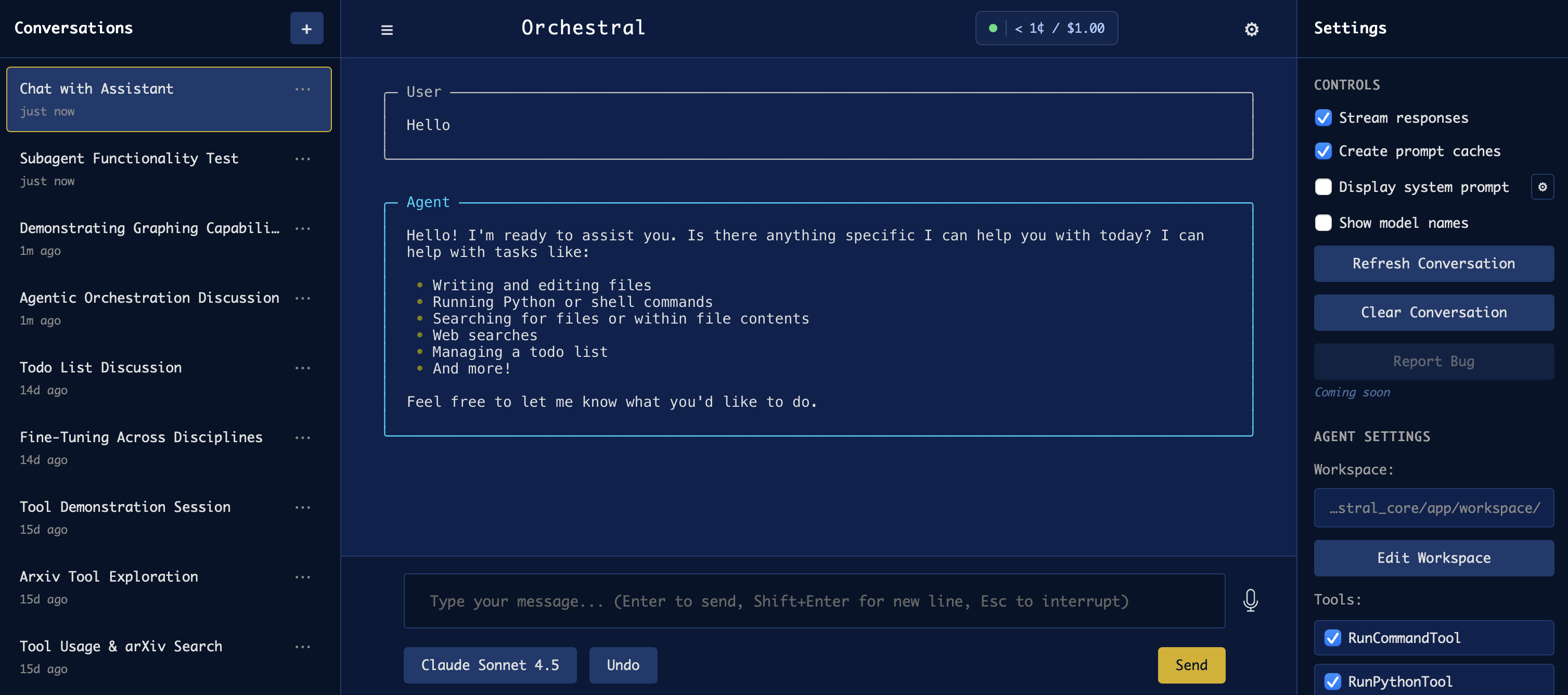

Figure 1: Orchestral architecture centered on the Agent object, which contains the LLM, Tools, and Context. The Agent manages tool execution flow through pre/post hooks, makes tool calling decisions, and updates the conversation context. External components include the sandboxed workspace, UI, and external services.

The tool system uses Python type hints and decorators to generate validated schemas dynamically, eliminating hand-written tool descriptors while enforcing strict type safety across all provider boundaries. Stateful and stateless tools are supported via distinct object models, and runtime execution is tightly integrated with a two-layer hook mechanism (pre- and post-execution). Hooks support security checks, approval, output shaping, cost control, and auditing.
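As a rough illustration of schema generation from type hints, the sketch below derives a JSON-Schema-like description from a function signature and validates arguments before the call. The decorator name `define_tool`, the helper `call_tool`, and the schema layout are assumptions made for this example, not Orchestral's API; only a handful of primitive types are mapped, and every parameter is assumed to carry an annotation.

```python
import inspect
from typing import get_type_hints

# Mapping from Python annotations to JSON Schema type names (illustrative subset).
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def define_tool(func):
    """Hypothetical decorator: attach a JSON-Schema-like description to func."""
    hints = get_type_hints(func)
    hints.pop("return", None)
    properties, required = {}, []
    for name, param in inspect.signature(func).parameters.items():
        properties[name] = {"type": _JSON_TYPES[hints[name]]}
        if param.default is inspect.Parameter.empty:
            required.append(name)  # no default value means the model must supply it
    func.schema = {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }
    return func


def call_tool(func, arguments: dict):
    """Validate model-supplied arguments against the hints, then call func."""
    hints = get_type_hints(func)
    for name, value in arguments.items():
        if not isinstance(value, hints[name]):
            raise TypeError(f"{name} must be {hints[name].__name__}")
    return func(**arguments)


@define_tool
def add(a: int, b: int = 0) -> int:
    """Add two integers."""
    return a + b
```

The function signature is the single source of truth here: the schema sent to a provider and the runtime check both come from the same annotations, so they cannot drift apart.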

Context management in Orchestral applies validation that many contemporary frameworks lack: orphaned tool results and mismatched message sequences are detected and corrected automatically before every provider API call. Persistent conversation state and provider-independent serialization allow handoff between agents and providers, supporting both reproducibility and portability.
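One form of this validation, removing orphaned tool results, might look like the following sketch. The message layout (`role`, `tool_calls`, `tool_call_id`) is an assumption modelled on common chat-API conventions, and `drop_orphaned_tool_results` is a hypothetical helper rather than a function from the framework.

```python
def drop_orphaned_tool_results(messages: list[dict]) -> list[dict]:
    """Return a copy of messages without tool results that no call requested."""
    seen_call_ids: set[str] = set()
    cleaned = []
    for msg in messages:
        if msg["role"] == "assistant":
            # Record every tool call the assistant has announced so far.
            for call in msg.get("tool_calls", []):
                seen_call_ids.add(call["id"])
        if msg["role"] == "tool" and msg["tool_call_id"] not in seen_call_ids:
            continue  # orphan: no earlier assistant message requested this call
        cleaned.append(msg)
    return cleaned


history = [
    {"role": "user", "content": "list files"},
    {"role": "tool", "tool_call_id": "t0", "content": "stale result"},
    {"role": "assistant", "content": "", "tool_calls": [{"id": "t1"}]},
    {"role": "tool", "tool_call_id": "t1", "content": "a.txt"},
]
valid_history = drop_orphaned_tool_results(history)
```

Running a check of this kind before each request keeps a truncated or hand-edited history from being rejected by a provider that requires every tool result to follow its call.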

Execution Model and Front-End Integration

Orchestral employs a synchronous execution paradigm, which keeps control flow explicit and reduces debugging to reading standard Python stack traces. Streaming outputs are handled via generators, avoiding async machinery while still supporting real-time feedback and interruption of running computations.
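Generator-based streaming without `async` can be sketched as follows. `stream_reply` is a hypothetical stand-in for a provider stream that yields one word at a time, and `collect` is an illustrative consumer that stops early; neither name comes from the framework.

```python
from collections.abc import Generator


def stream_reply(text: str) -> Generator[str, None, None]:
    """Hypothetical stand-in for a provider stream: yield one word at a time."""
    for word in text.split():
        yield word


def collect(stream: Generator[str, None, None], max_chunks: int) -> list[str]:
    """Consume a stream synchronously, stopping early after max_chunks."""
    chunks = []
    for chunk in stream:
        chunks.append(chunk)  # a UI would render the chunk here
        if len(chunks) >= max_chunks:
            stream.close()  # interrupt: the generator stops producing
            break
    return chunks


partial = collect(stream_reply("streaming works without async"), max_chunks=2)
full = collect(stream_reply("short reply"), max_chunks=10)
```

Because the consumer is an ordinary `for` loop, an exception raised while handling a chunk surfaces in a normal stack trace at the point of iteration.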

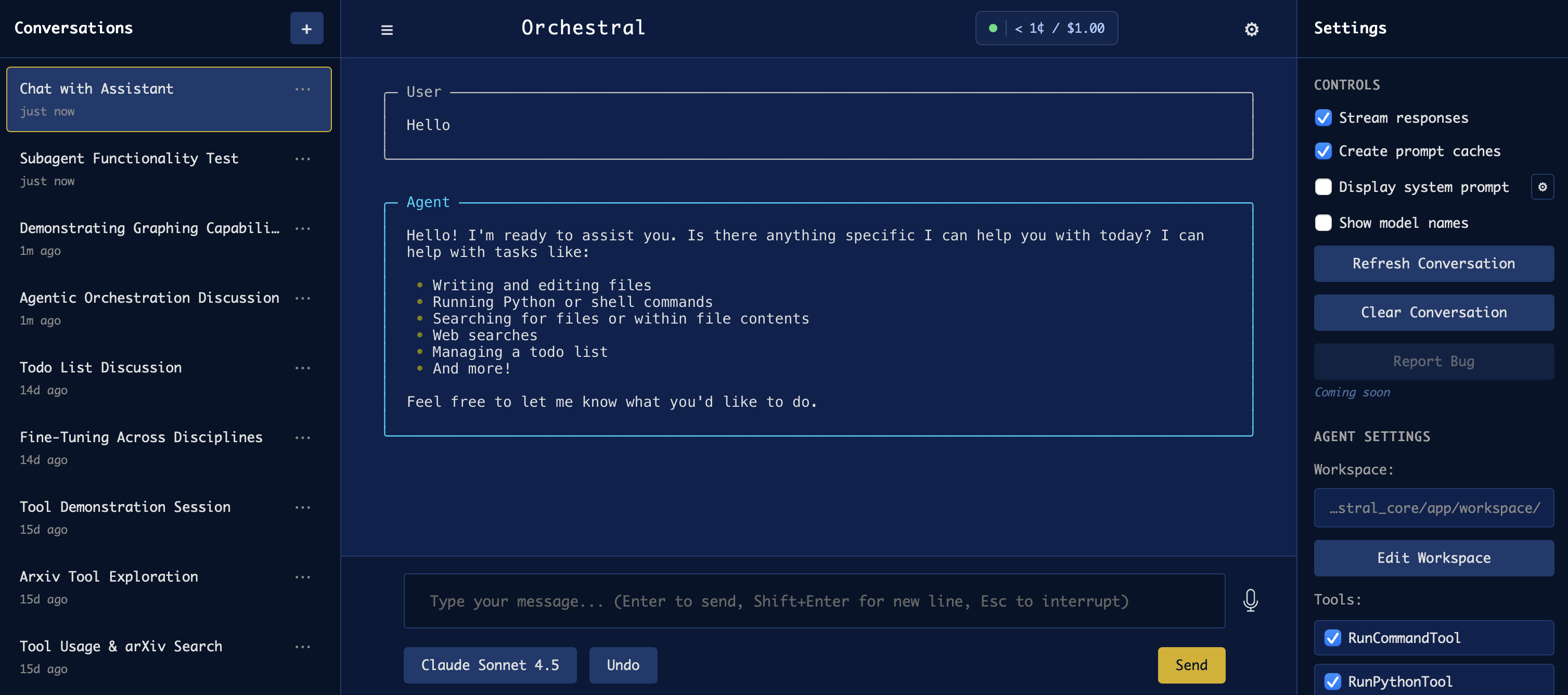

Figure 2: The Orchestral web UI showing a conversation with tool execution and streaming responses.

The UI component is decoupled, supporting both local web applications and CLI-based interaction modules. The Agent object can be embedded into third-party applications or run standalone for interactive exploration.

Security and Safety Controls

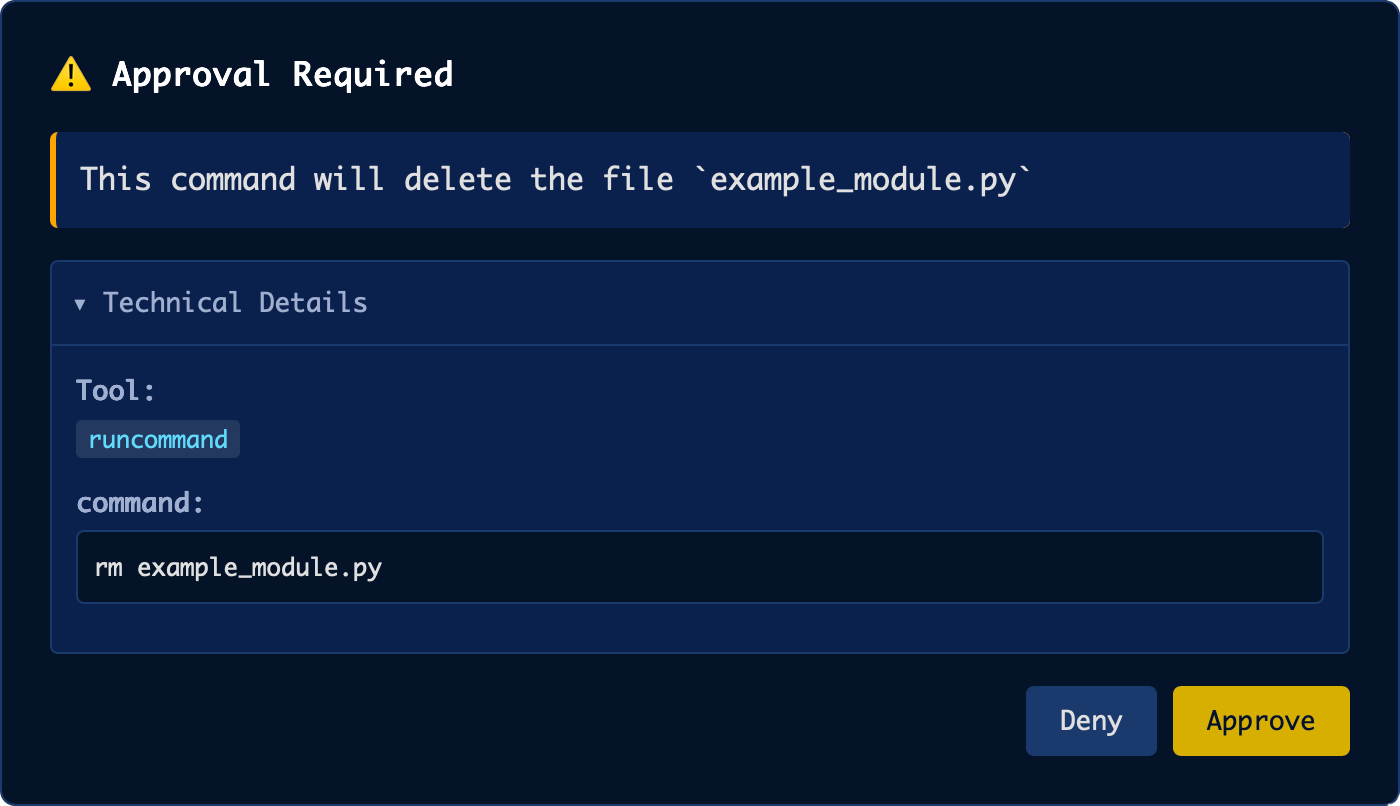

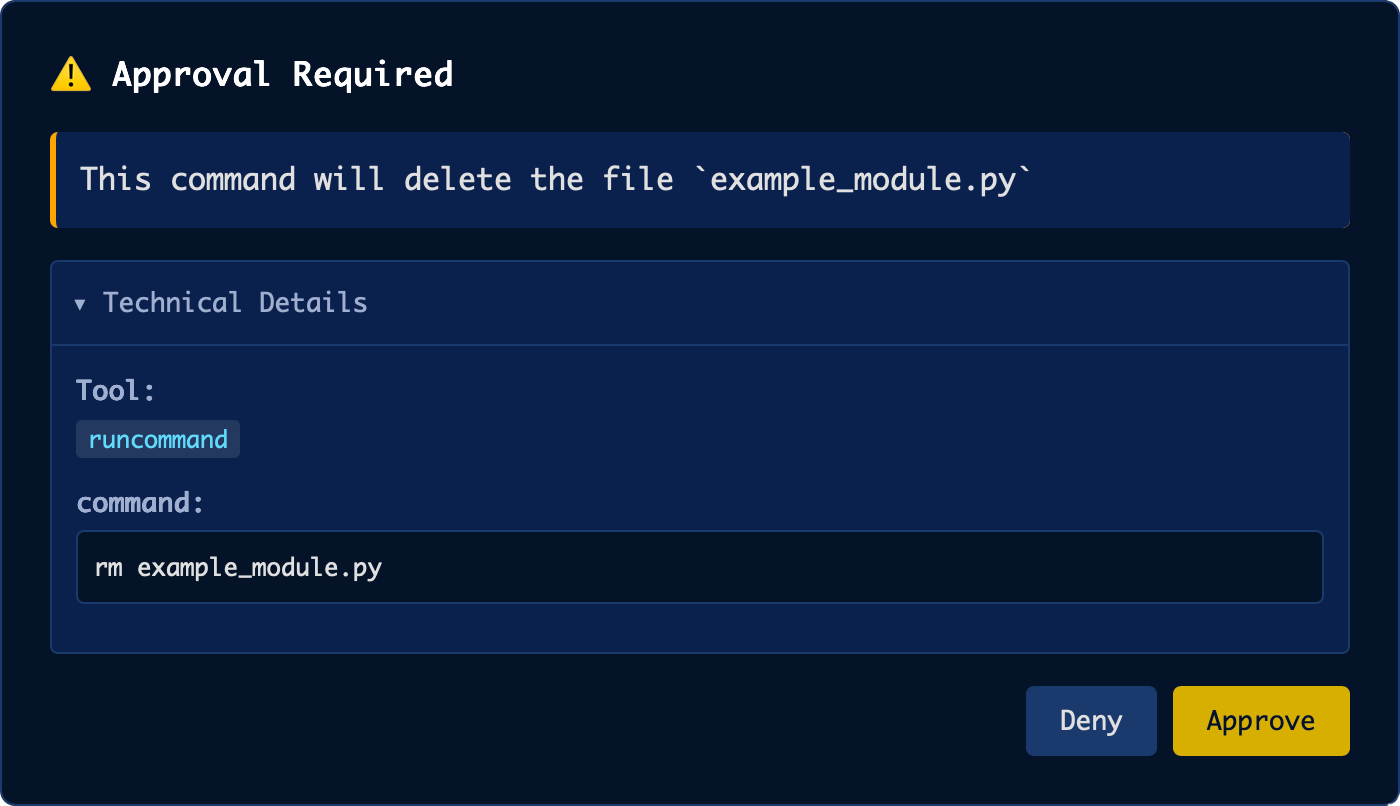

The framework’s multi-layered security approach incorporates pre-execution hooks such as pattern blockers, LLM-based safety analysis, and interactive user approval systems. The UserApprovalHook implements a triage system for potentially hazardous actions, mediating execution via explicit user consent.

Figure 3: User Approval Hook prompting the user to approve a potentially dangerous file deletion command.
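The layered pre-execution checks described in this section can be approximated with a short sketch. `PatternBlockHook`, `ApprovalHook`, and `run_with_hooks` are hypothetical names, and the approval callback is hard-coded to decline so the example runs non-interactively; the paper's UserApprovalHook is not reproduced here.

```python
import re
from typing import Callable


class PatternBlockHook:
    """Hypothetical pre-execution hook: reject commands matching a pattern."""

    def __init__(self, patterns: list[str]):
        self._patterns = [re.compile(p) for p in patterns]

    def __call__(self, command: str) -> bool:
        return not any(p.search(command) for p in self._patterns)


class ApprovalHook:
    """Hypothetical approval hook: ask a callback before risky commands run."""

    def __init__(self, is_risky: Callable[[str], bool], ask: Callable[[str], bool]):
        self._is_risky = is_risky
        self._ask = ask

    def __call__(self, command: str) -> bool:
        return self._ask(command) if self._is_risky(command) else True


def run_with_hooks(command: str, hooks, execute: Callable[[str], str]) -> str:
    """Run execute(command) only if every hook returns True."""
    for hook in hooks:
        if not hook(command):
            return f"blocked by {type(hook).__name__}"
    return execute(command)


def fake_execute(command: str) -> str:
    """Stand-in executor; nothing is actually run on the system."""
    return "ran: " + command


hooks = [
    PatternBlockHook([r"\brm\s+-rf\s+/"]),
    ApprovalHook(is_risky=lambda c: c.startswith("rm "), ask=lambda c: False),
]
```

Ordering the hooks from cheapest to most intrusive means a hard pattern block fires before the user is ever prompted, and a prompt appears only for commands flagged as risky.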

Additionally, domain-specific tools such as EditFileTool enforce "read-before-edit" protocols, while consistency checks based on file hashes preempt race conditions when files are externally modified. These mechanisms are executed transparently and do not require specialized prompting.
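A read-before-edit rule with a hash-based staleness check could be sketched as below. `GuardedEditor` is a hypothetical class written for this illustration, and the choice of SHA-256 and of the exception types is an assumption; the source does not specify how EditFileTool implements these checks.

```python
import hashlib
import tempfile
from pathlib import Path


class GuardedEditor:
    """Hypothetical editor enforcing read-before-edit with content hashes."""

    def __init__(self):
        self._seen: dict[Path, str] = {}  # path -> content hash at last read

    @staticmethod
    def _digest(path: Path) -> str:
        return hashlib.sha256(path.read_bytes()).hexdigest()

    def read(self, path: Path) -> str:
        self._seen[path] = self._digest(path)
        return path.read_text()

    def edit(self, path: Path, old: str, new: str) -> None:
        if path not in self._seen:
            raise PermissionError("file must be read before it is edited")
        if self._digest(path) != self._seen[path]:
            raise RuntimeError("file changed on disk since last read")
        path.write_text(path.read_text().replace(old, new, 1))
        self._seen[path] = self._digest(path)  # the edit itself is now "seen"


with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp) / "notes.txt"
    target.write_text("alpha beta")
    editor = GuardedEditor()
    try:
        editor.edit(target, "alpha", "gamma")
        edit_without_read = "allowed"
    except PermissionError:
        edit_without_read = "rejected"
    editor.read(target)
    target.write_text("changed elsewhere")  # simulate an external modification
    try:
        editor.edit(target, "changed", "edited")
        stale_edit = "allowed"
    except RuntimeError:
        stale_edit = "rejected"
    editor.read(target)  # re-reading refreshes the stored hash
    editor.edit(target, "changed", "edited")
    final_text = target.read_text()
```

Since the checks live inside the tool, the model receives an error message it can act on (re-read, then retry) rather than relying on prompt instructions to behave safely.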

Advanced and Research-Specific Capabilities

Orchestral provides a curated library of production-ready tools tailored for agentic workflows in scientific domains, including robust filesystem, execution, and web research functions. Subagent support enables hierarchical reasoning, wherein tools themselves can instantiate autonomous Agents with specialized prompts and toolsets.
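The subagent pattern, in which a tool instantiates another agent, can be sketched in a few lines. `MiniAgent`, `fake_model`, and `literature_search` are hypothetical, and the "model" is a stub that tags each task with the system prompt it ran under, so the delegation path is visible without calling an LLM.

```python
def fake_model(system_prompt: str, task: str) -> str:
    """Stand-in for an LLM call: tag the task with the prompt it ran under."""
    return f"[{system_prompt}] {task}"


class MiniAgent:
    """Hypothetical agent holding a system prompt and a dict of named tools."""

    def __init__(self, system_prompt: str, tools=None):
        self.system_prompt = system_prompt
        self.tools = tools or {}

    def run(self, task: str) -> str:
        # A real agent would let the model choose a tool; here the task names it.
        tool_name, _, argument = task.partition(":")
        if tool_name in self.tools:
            return self.tools[tool_name](argument.strip())
        return fake_model(self.system_prompt, task)


def literature_search(query: str) -> str:
    """Tool that delegates to a subagent with its own prompt and toolset."""
    subagent = MiniAgent("You search papers", tools={})
    return subagent.run(query)


parent = MiniAgent("You coordinate research", tools={"search": literature_search})
delegated = parent.run("search: dark matter")
direct = parent.run("summarize the plan")
```

From the parent's point of view the subagent is just another tool call, so the same hooks and validation that apply to tools also apply to delegation.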

Integration with the Model Context Protocol (MCP) ensures Orchestral’s extensibility into broader AI ecosystems, with standardized tool sharing and interface adaptation.

LaTeX export is built into the UI to fit scientific publication workflows. The orchestral.tex module generates colored, environment-specific TeX code from conversation logs, so conversational exchanges, tool invocations, and agent reasoning can be reproduced directly in academic documents.

Persistent terminal sessions are supported, enabling stateful shell interaction consistent with human usage paradigms and facilitating complex, multi-step analyses in computational environments.

Empirical Results and Deployed Use Cases

The framework is actively deployed in high-energy physics (HEPTAPOD) and exoplanet atmosphere retrieval (ASTER), supporting agentic orchestration of Monte Carlo simulations, validation workflows, and integrative analysis. These deployments attest to the framework’s suitability for rigorous, auditable, and scalable agent workflows in research-oriented settings.

Limitations and Future Developments

Current limitations include the lack of automatic context compaction or summarization, sequential rather than parallel tool execution, and the absence of native multi-agent orchestration primitives. Visual and multimodal functionality remains rudimentary, limited primarily to image analysis.

Planned advancements include hierarchical multi-agent coordination (via manager-worker decomposition), context summarization via tool abstraction, deeper MCP integration, and extended lightweight deployment across edge and serverless architectures.

Conclusion

Orchestral AI exemplifies a disciplined approach to agent framework design, privileging modularity, synchronous execution, provider abstraction, and transparent schema handling. It delivers a deterministic, auditable environment suitable for both production and research workflows. Key features such as provider-agnostic tool execution, reproducible and cost-aware workflows, explicit security modeling, and robust context management set a benchmark for agentic frameworks that avoid architectural bloat and vendor lock-in.

Future developments will expand support for multi-agent systems, automated context compaction, and ecosystem interoperation without sacrificing the clarity, debuggability, and portability central to Orchestral’s design. The framework constitutes a versatile backbone for agent-based scientific computing and offers a reproducible, transparent foundation for next-generation AI orchestration.