- The paper introduces a novel Swin Transformer U-Net 3D framework that integrates transformer-based attention with U-Net skip connections for accurate lesion segmentation in FDG-PET/CT scans.

- It employs a robust preprocessing pipeline and hierarchical attention mechanisms to capture both local details and global context, achieving a Dice score of 0.88 on the AutoPET III dataset.

- The approach demonstrates enhanced detection of small and irregular lesions with reduced false positives, promising streamlined integration into clinical oncology workflows.

Introduction

The paper "Lesion Segmentation in FDG-PET/CT Using Swin Transformer U-Net 3D: A Robust Deep Learning Framework" (2601.02864) introduces the Swin Transformer U-Net 3D framework (SwinUNet3D) for lesion segmentation in Fluorodeoxyglucose Positron Emission Tomography / Computed Tomography (FDG-PET/CT) scans. PET/CT imaging plays a pivotal role in oncology because it combines functional and anatomical information in a single scan. Traditional lesion segmentation relies on manual delineation, which is labor-intensive and varies between experts. SwinUNet3D aims to automate segmentation accurately by combining the shifted-window self-attention of Swin Transformers with U-Net-style skip connections.

Methodology

Preprocessing Pipeline

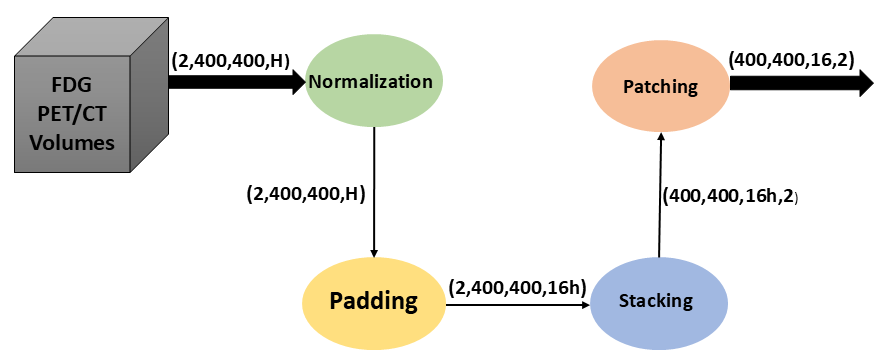

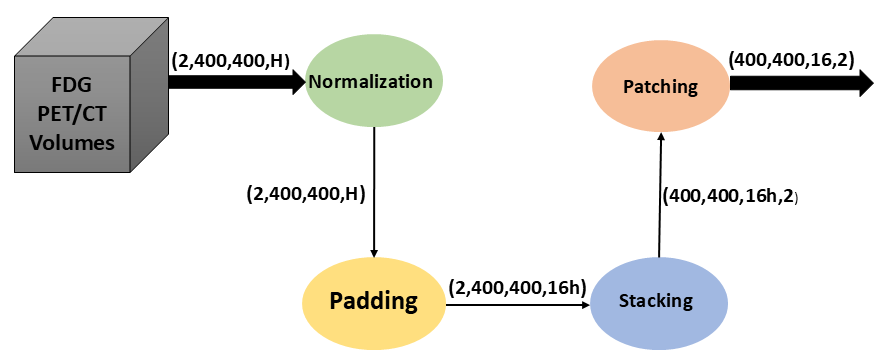

The preprocessing workflow standardizes PET/CT data before it reaches SwinUNet3D. It comprises intensity normalization, zero-padding, and patching, so that the network receives inputs of consistent dimensions and can make efficient use of 3D context.

Figure 1: Preprocessing workflow applied to PET/CT data. Raw inputs undergo intensity normalization, zero-padding, and patching before being fed into the SwinUNet3D network. This ensures consistent input dimensions and efficient utilization of 3D context.
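The three preprocessing steps can be sketched with NumPy as follows. This is a minimal illustration, not the paper's pipeline: the z-score normalization, the end-of-axis padding, the non-overlapping 32×32×32 patches, and all function names are assumptions, since the summary does not specify them.

```python
import numpy as np

def normalize_intensity(volume, eps=1e-8):
    """Z-score normalization (assumed scheme; the source does not name one)."""
    volume = volume.astype(np.float32)
    return (volume - volume.mean()) / (volume.std() + eps)

def pad_to_multiple(volume, patch_size):
    """Zero-pad the end of each axis so its length is a multiple of the patch size."""
    pad_width = [(0, (-dim) % p) for dim, p in zip(volume.shape, patch_size)]
    return np.pad(volume, pad_width, mode="constant", constant_values=0)

def extract_patches(volume, patch_size):
    """Split an already padded volume into non-overlapping 3D patches."""
    d, h, w = volume.shape
    pd, ph, pw = patch_size
    blocks = volume.reshape(d // pd, pd, h // ph, ph, w // pw, pw)
    # Move the three block indices to the front, then flatten them into one axis.
    return blocks.transpose(0, 2, 4, 1, 3, 5).reshape(-1, pd, ph, pw)

def preprocess(volume, patch_size=(32, 32, 32)):
    """Normalize, zero-pad, and patch a single 3D volume."""
    normalized = normalize_intensity(volume)
    padded = pad_to_multiple(normalized, patch_size)
    return extract_patches(padded, patch_size)

# Hypothetical stand-in for a PET volume; real scans are far larger.
rng = np.random.default_rng(0)
pet = rng.random((50, 70, 70))
patches = preprocess(pet)
print(patches.shape)  # (18, 32, 32, 32)
```

The volume is padded from 50×70×70 to 64×96×96, which gives 2×3×3 = 18 patches. Because normalization runs before padding in this sketch, the padded zeros correspond to the mean intensity of the normalized volume; a real pipeline may order or parameterize these steps differently.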

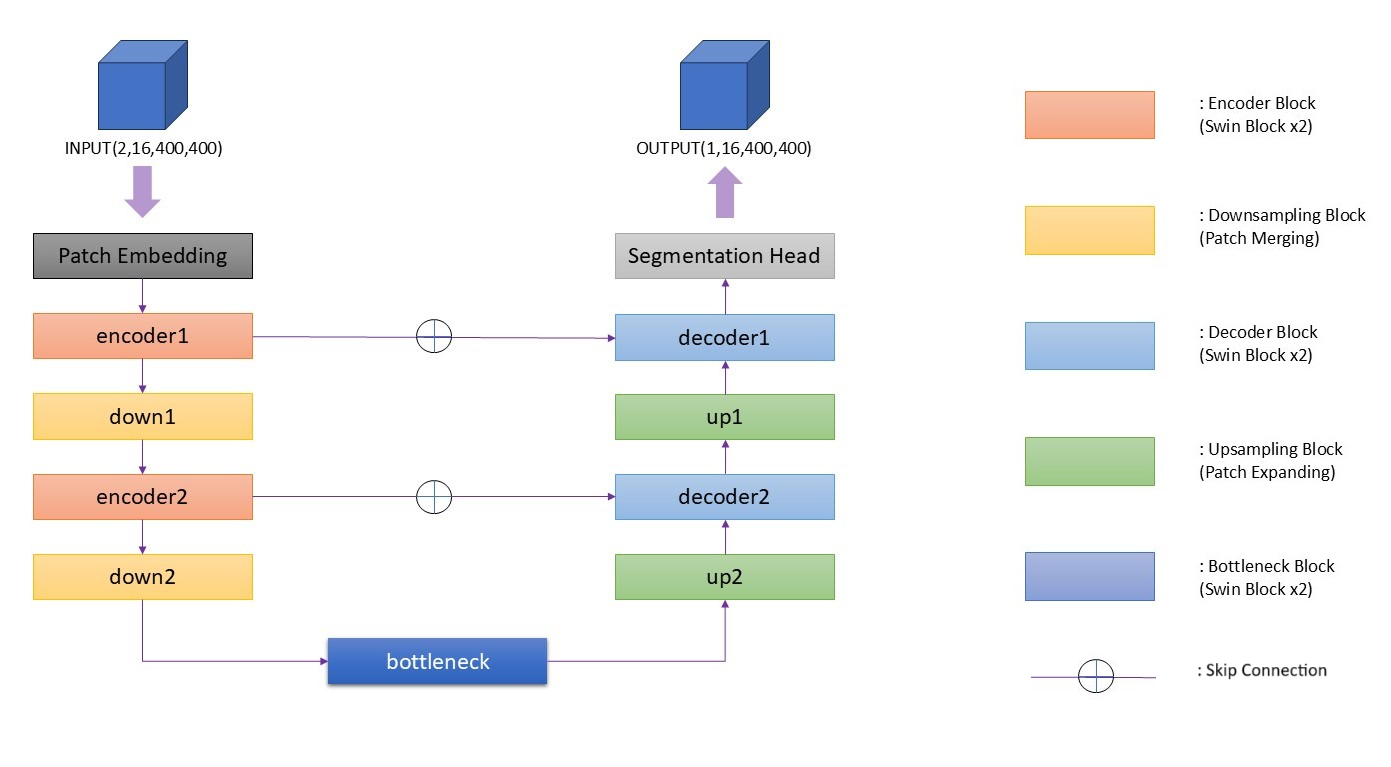

Model Architecture

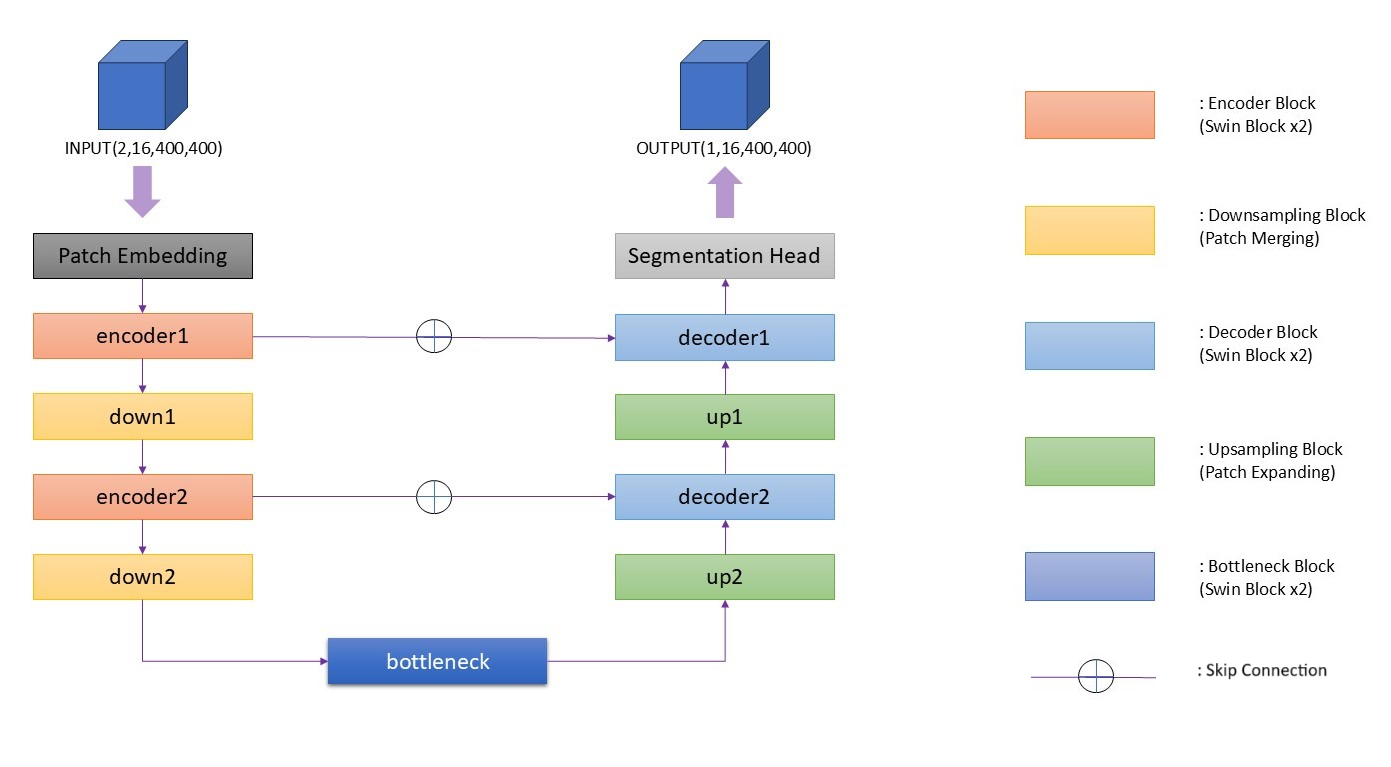

SwinUNet3D integrates Swin Transformer blocks within a U-Net framework, preserving local detail while modeling long-range spatial dependencies.

Figure 2: Overview of the proposed SwinUNet3D architecture. The model adopts a U-Net-like encoder-decoder design with hierarchical Swin Transformer blocks, patch embedding, bottleneck, and skip connections.

Key Components

- Patch Embedding: Converts 3D input volumes into feature tokens for transformer processing.

- Encoder Blocks: Employ Swin Transformer blocks to model spatial dependencies efficiently.

- Bottleneck: Captures global context and high-level semantics.

- Decoder Blocks: Recover segmentation maps using upsampling and skip connections.

- Hierarchical Attention: Reduces the computational cost of full self-attention by restricting attention to localized windows.

The architecture of SwinUNet3D represents an efficient and robust approach for PET/CT lesion segmentation tasks, enabling the model to capture complex and irregular lesion boundaries accurately.
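To make the windowed-attention idea concrete, the sketch below partitions a 3D token grid into local windows, applies the half-window cyclic shift used by Swin-style blocks, and counts query-key pairs with and without windowing. The grid size, window size, channel count, and function names are illustrative assumptions rather than the paper's configuration, and the attention mask that a real shifted-window block applies after the cyclic shift is omitted.

```python
import numpy as np

def window_partition(tokens, window):
    """Group a (D, H, W, C) token grid into non-overlapping cubic windows.

    Returns an array of shape (num_windows, window**3, C).
    """
    d, h, w, c = tokens.shape
    x = tokens.reshape(d // window, window, h // window, window, w // window, window, c)
    return x.transpose(0, 2, 4, 1, 3, 5, 6).reshape(-1, window ** 3, c)

def shifted_window_partition(tokens, window):
    """Cyclically shift the grid by half a window, then partition it."""
    shift = window // 2
    shifted = np.roll(tokens, shift=(-shift, -shift, -shift), axis=(0, 1, 2))
    return window_partition(shifted, window)

def attention_pairs(grid, window=None):
    """Count query-key pairs: global attention, or windowed if `window` is set."""
    n = grid[0] * grid[1] * grid[2]
    if window is None:
        return n * n
    return n * window ** 3  # each token attends only within its own window

# Hypothetical 8x8x8 token grid with 4 feature channels.
tokens = np.arange(8 * 8 * 8 * 4, dtype=np.float32).reshape(8, 8, 8, 4)
windows = window_partition(tokens, window=4)
print(windows.shape)                     # (8, 64, 4)
print(attention_pairs((8, 8, 8)))        # 262144
print(attention_pairs((8, 8, 8), 4))     # 32768
```

For this small grid, windowing cuts the number of attention pairs by a factor of eight. The saving grows with volume size, because global attention scales quadratically with the token count while windowed attention scales linearly for a fixed window.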

Results

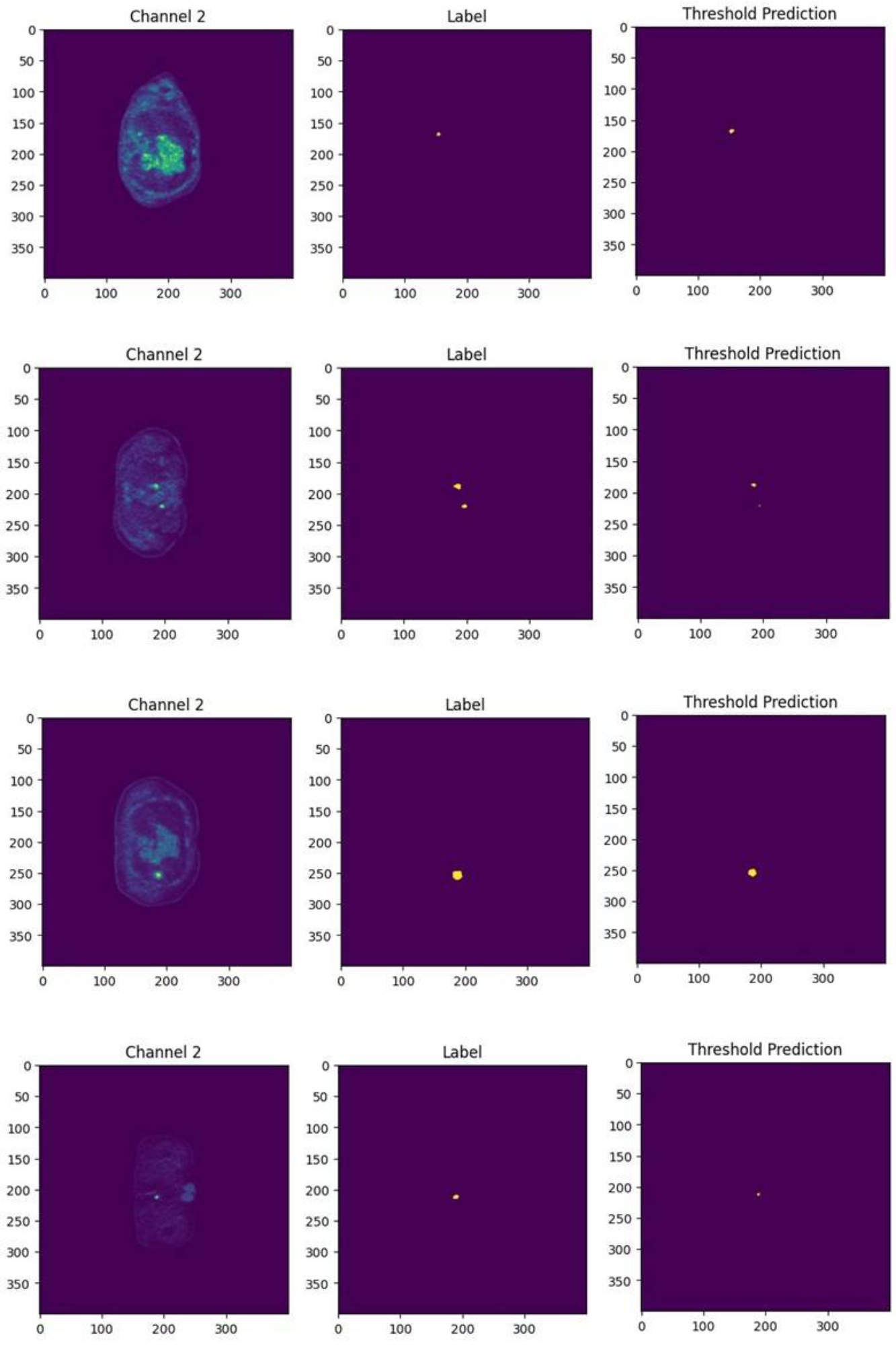

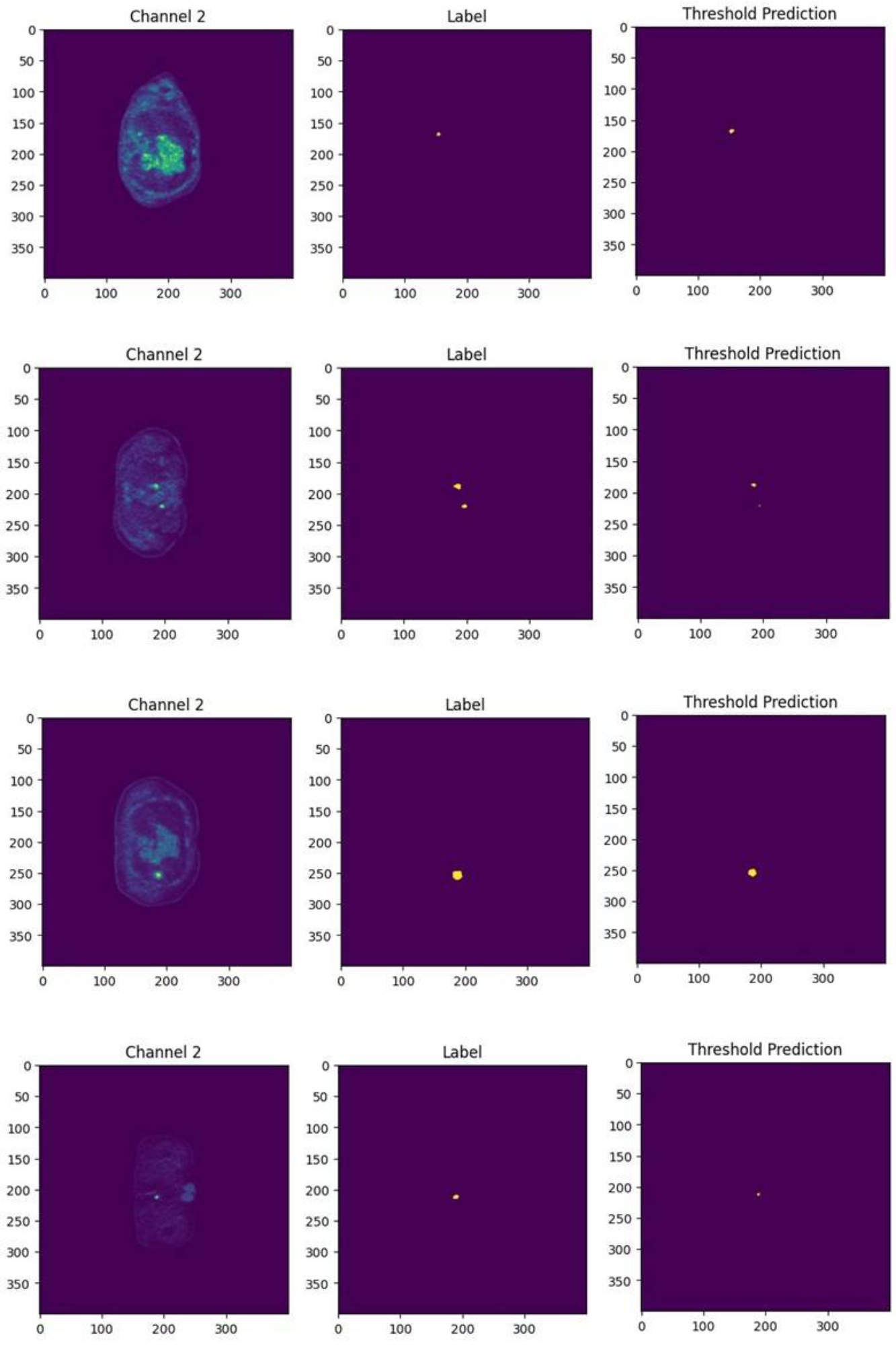

The SwinUNet3D model significantly outperforms traditional 3D U-Net implementations on the AutoPET III dataset, achieving a Dice score of 0.88 compared to 3D U-Net's 0.48. The qualitative results illustrate SwinUNet3D's proficiency in capturing small and irregular lesions more effectively than CNN-based approaches.

Figure 3: Qualitative segmentation results comparing 3D U-Net and SwinUNet3D, demonstrating a reduction in false negatives and more precise lesion boundary delineation.
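For reference, the Dice score measures overlap between a predicted mask P and a ground-truth mask G as 2|P ∩ G| / (|P| + |G|). The sketch below computes it on binary 3D masks; the handling of two empty masks and the toy volumes are assumptions, and the paper's exact evaluation protocol (for example, per-case averaging) is not described in this summary.

```python
import numpy as np

def dice_score(pred, target):
    """Dice coefficient between two binary masks of the same shape."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    intersection = np.logical_and(pred, target).sum()
    total = pred.sum() + target.sum()
    if total == 0:
        return 1.0  # assumed convention: two empty masks count as a perfect match
    return float(2.0 * intersection / total)

# Toy example: a 4x4x4 "lesion" and a prediction shifted by one voxel along one axis.
target = np.zeros((8, 8, 8), dtype=bool)
target[2:6, 2:6, 2:6] = True
pred = np.zeros((8, 8, 8), dtype=bool)
pred[3:7, 2:6, 2:6] = True

print(dice_score(pred, target))  # 0.75
```

The two masks each contain 64 voxels and share 48, so the score is 2·48 / 128 = 0.75. A one-voxel shift costs this small lesion a quarter of its score, which illustrates why small lesions weigh heavily on Dice-based comparisons.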

Discussion

Strengths and Implications

The SwinUNet3D framework exhibits several advantages:

- Robust Detection Capability: Improved identification of small and irregular lesion structures.

- Reduced False Positives: Hierarchical feature processing stabilizes predictions.

- Efficiency in Clinical Workflows: Faster inference times facilitate integration into real-time oncology imaging systems.

The model's success underscores the potential of transformer-based architectures to achieve higher segmentation accuracy in medical imaging, impacting both clinical and research settings.

Limitations and Future Directions

While SwinUNet3D offers substantial improvements, several limitations are noted:

- Single-Tracer Evaluation: Current evaluations are limited to FDG-PET/CT scans. Expanding to multi-tracer datasets is necessary.

- Hardware Constraints: The model's capabilities are limited by available hardware, and further optimization strategies are needed to exploit them fully.

- Comparative Analysis: Broader benchmarking against other transformer-based models is recommended.

Future research pathways include extending the approach to incorporate different imaging tracers, scaling experiments for robustness, and validating clinical applicability with radiologists.

Conclusion

The introduction of SwinUNet3D marks a significant advancement in automated lesion segmentation, harnessing the power of Swin Transformers to enhance segmentation accuracy and computational efficiency. By excelling in both numerical and qualitative evaluations, the framework demonstrates promise for streamlining radiology workflows and addressing variability in lesion delineation through automation. Future work aims to expand the scalability and applicability of this framework across diverse imaging contexts, supporting the broader integration of transformers in clinical oncology imaging.