Who can compete with quantum computers? Lecture notes on quantum inspired tensor networks computational techniques

Abstract: This is a set of lectures on tensor networks with a strong emphasis on the core algorithms involving Matrix Product States (MPS) and Matrix Product Operators (MPO). Compared to other presentations, particular care has been given to disentangle aspects of tensor networks from the quantum many-body problem: MPO/MPS algorithms are presented as a way to deal with linear algebra on extremely (exponentially) large matrices and vectors, regardless of any particular application. The lectures include well-known algorithms to find eigenvectors of MPOs (the celebrated DMRG), solve linear problems, and recent learning algorithms that allow one to map a known function into an MPS (the Tensor Cross Interpolation, or TCI, algorithm). The lectures end with a discussion of how to represent functions and perform calculus with tensor networks using the "quantics" representation. They include the detailed analytical construction of important MPOs such as those for differentiation, indefinite integration, convolution, and the quantum Fourier transform. Three concrete applications are discussed in detail: the simulation of a quantum computer (either exactly or with compression), the simulation of a quantum annealer, and techniques to solve partial differential equations (e.g. Poisson, diffusion, or Gross-Pitaevskii) within the "quantics" representation. The lectures have been designed to be accessible to a first-year PhD student and include detailed proofs of all statements.

Explain it Like I'm 14

Overview

This paper is a set of clear, hands-on lecture notes about a powerful idea in computing called tensor networks. The authors focus on two special kinds of tensor networks—Matrix Product States (MPS) and Matrix Product Operators (MPO)—and show how they let ordinary (classical) computers handle problems that look “impossibly big” at first glance, like simulating certain quantum computers or solving complex equations from physics. The big message is: even though quantum computers work with huge spaces of possibilities, many useful cases have hidden structure that smart classical methods can exploit.

Key Objectives and Questions

The lectures aim to answer simple but important questions:

- How can we store and compute with gigantic vectors and matrices (with sizes like 2^N) using much less memory?

- Which classical algorithms can match or compete with what quantum computers promise to do?

- How do we turn real problems—like simulating a quantum circuit or solving a differential equation—into a form that tensor networks can handle?

- Can we “learn” a good tensor network (like an MPS) from only a small number of samples of a large object?

Methods and Approach (explained simply)

To make the ideas friendly, think of the following:

- A tensor is like a multi-dimensional table of numbers (a vector is a 1D table, a matrix is 2D, a tensor can be 3D, 4D, and so on).

- A tensor network connects these tables by “wires” (shared indices), forming a circuit of small building blocks. Instead of one giant table, you store many small ones and only connect them when needed.
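As a minimal sketch of the "tables connected by wires" picture, the NumPy snippet below contracts three small tensors over their shared indices. The tensor names, shapes, and index labels are illustrative assumptions, not taken from the lecture notes.

```python
import numpy as np

rng = np.random.default_rng(0)
A = rng.normal(size=(2, 3))      # indices (i, a)
B = rng.normal(size=(3, 2, 4))   # indices (a, j, b)
C = rng.normal(size=(4, 2))      # indices (b, k)

# Contract the shared "wires" a and b; the open indices i, j, k remain.
T = np.einsum("ia,ajb,bk->ijk", A, B, C)

# The same contraction written as explicit sums over the shared indices.
T_loops = np.zeros((2, 2, 2))
for a in range(3):
    for b in range(4):
        T_loops += np.einsum("i,j,k->ijk", A[:, a], B[a, :, b], C[b, :])

print(T.shape, np.allclose(T, T_loops))
```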

Here are the main tools the paper teaches:

Matrix Product States (MPS)

- Imagine a very long list of numbers with 2^N entries (one entry for each possible outcome of N coin flips). An MPS represents this enormous list as a chain of small matrices linked together.

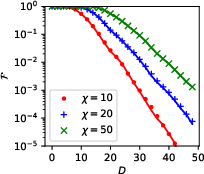

- The “bond dimension” (often called rank) measures how much “stuff” needs to pass through each link. If the list has simple structure (low entanglement), the bonds can stay small, so the whole chain is compact and fast to use.
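To make the chain picture concrete, here is a hedged sketch that splits a length-2^N vector into an MPS by repeated SVDs and then rebuilds it. The function names (`vector_to_mps`, `mps_to_vector`), the relative tolerance, and the GHZ-like test vector are assumptions chosen for illustration; this is one standard way to build an MPS, not necessarily the construction used in the notes.

```python
import numpy as np

def vector_to_mps(vec, n_sites, tol=1e-12):
    """Split a length-2**n_sites vector into 3-index tensors (left, physical, right)."""
    tensors = []
    rest = np.asarray(vec, dtype=float).reshape(1, -1)  # (left bond, all remaining bits)
    for _ in range(n_sites - 1):
        left = rest.shape[0]
        mat = rest.reshape(left * 2, -1)                # peel off one bit
        u, s, vh = np.linalg.svd(mat, full_matrices=False)
        keep = max(1, int(np.sum(s > tol * s[0])))      # drop negligible singular values
        tensors.append(u[:, :keep].reshape(left, 2, keep))
        rest = s[:keep, None] * vh[:keep]               # carry the remainder to the right
    tensors.append(rest.reshape(rest.shape[0], 2, 1))
    return tensors

def mps_to_vector(tensors):
    """Contract the chain back into the full vector (only feasible for small N)."""
    out = tensors[0]
    for t in tensors[1:]:
        out = np.tensordot(out, t, axes=([out.ndim - 1], [0]))
    return out.reshape(-1)

n = 10
ghz = np.zeros(2**n)
ghz[0] = ghz[-1] = 1 / np.sqrt(2)       # only "all 0" and "all 1" have weight

mps = vector_to_mps(ghz, n)
bonds = [t.shape[2] for t in mps[:-1]]
n_params = sum(t.size for t in mps)
print(bonds, n_params, 2**n)
```

For this structured vector every bond dimension is 2, so the chain stores far fewer numbers than the 2^N entries of the full list. A random vector would instead need bond dimensions growing up to 2^(N/2).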

Matrix Product Operators (MPO)

- MPOs are the same idea but for matrices (things that act on vectors). They let you apply huge matrices to huge vectors by only manipulating small pieces at a time.
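The sketch below illustrates applying an MPO to an MPS one site at a time and checks the result against the dense matrix-vector product. The index ordering of the tensors, the helper names, and the random test data are assumptions for illustration. The naive product shown here multiplies bond dimensions and does no compression.

```python
import numpy as np

def apply_mpo(mpo, mps):
    """Site-by-site MPO-MPS product; the new bond dimension is the product of the two."""
    out = []
    for w, a in zip(mpo, mps):
        # w[x, s, t, y]: left bond, output bit, input bit, right bond
        # a[l, t, r]:    left bond, input bit, right bond
        t = np.einsum("xsty,ltr->xlsyr", w, a)
        x, l, s, y, r = t.shape
        out.append(t.reshape(x * l, s, y * r))
    return out

def mps_to_vector(mps):
    out = mps[0]
    for t in mps[1:]:
        out = np.tensordot(out, t, axes=([out.ndim - 1], [0]))
    return out.reshape(-1)

def mpo_to_matrix(mpo):
    n = len(mpo)
    out = mpo[0]
    for w in mpo[1:]:
        out = np.tensordot(out, w, axes=([out.ndim - 1], [0]))
    out = out.reshape(out.shape[1:-1])                  # drop the size-1 boundary bonds
    perm = list(range(0, 2 * n, 2)) + list(range(1, 2 * n, 2))
    return out.transpose(perm).reshape(2**n, 2**n)      # (all outputs, all inputs)

rng = np.random.default_rng(0)
n, chi_state, chi_op = 4, 2, 3
sd = [1] + [chi_state] * (n - 1) + [1]
od = [1] + [chi_op] * (n - 1) + [1]
mps = [rng.normal(size=(sd[i], 2, sd[i + 1])) for i in range(n)]
mpo = [rng.normal(size=(od[i], 2, 2, od[i + 1])) for i in range(n)]

result = apply_mpo(mpo, mps)
dense = mpo_to_matrix(mpo) @ mps_to_vector(mps)
print(np.allclose(mps_to_vector(result), dense), result[1].shape)
```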

Core Linear Algebra Tricks

- QR and SVD: ways to factor a matrix into simpler parts. Think of “cleanly rotating and stretching” data to find the most important directions. This is the backbone of compression (keep the important stuff, drop the tiny details).

- DMRG (Density Matrix Renormalization Group): a famous method to find the lowest-energy state (ground state) of quantum systems by walking along the MPS chain and improving one piece at a time.

- MPO–MPS product: the “matrix-times-vector” step done in the compressed MPS/MPO format.
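The "keep the important stuff, drop the tiny details" step can be sketched with a truncated SVD. The matrix sizes, noise level, and function name below are illustrative assumptions. The check at the end uses the standard fact that the Frobenius-norm error of a truncated SVD equals the norm of the discarded singular values.

```python
import numpy as np

def truncated_svd(mat, rank):
    """Best rank-`rank` approximation of `mat` and the weight that was thrown away."""
    u, s, vh = np.linalg.svd(mat, full_matrices=False)
    approx = (u[:, :rank] * s[:rank]) @ vh[:rank]
    discarded = np.sqrt(np.sum(s[rank:] ** 2))
    return approx, discarded

rng = np.random.default_rng(1)
# A rank-3 "signal" plus a small amount of noise (the tiny details).
signal = rng.normal(size=(60, 3)) @ rng.normal(size=(3, 40))
mat = signal + 1e-3 * rng.normal(size=(60, 40))

approx, discarded = truncated_svd(mat, rank=3)
err = np.linalg.norm(mat - approx)
print(err, discarded)
```

In MPS algorithms the same truncation is applied bond by bond, which is why the discarded singular values give a handle on the compression error.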

Learning a Tensor Network: TCI

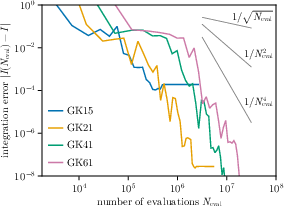

- Tensor Cross Interpolation (TCI) is a learning algorithm. If you can ask a giant object “what’s your value at these few positions?”, TCI figures out an MPS/MPO that matches it, without ever building the whole thing in memory. It’s like guessing a massive table by tasting only carefully chosen bites.
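The "tasting only a few bites" idea can be sketched on a plain matrix. The code below uses adaptive cross approximation with partial pivoting, a matrix-level relative of TCI that swaps in for the full algorithm here. It is not the TCI algorithm of the notes, and the function name, the test function, and the stopping tolerance are assumptions for illustration.

```python
import numpy as np

def cross_approximation(f, n_rows, n_cols, max_rank, tol=1e-10):
    """Build sum_k outer(us[k], vs[k]) from a few rows and columns of f(i, j)."""
    us, vs = [], []
    used_rows = set()
    i = 0
    for _ in range(max_rank):
        # Residual of row i: true entries minus the current approximation.
        row = np.array([f(i, j) for j in range(n_cols)])
        row = row - sum(u[i] * v for u, v in zip(us, vs))
        j = int(np.argmax(np.abs(row)))
        if abs(row[j]) < tol:
            break                                   # nothing left to learn on this row
        # Residual of column j.
        col = np.array([f(k, j) for k in range(n_rows)])
        col = col - sum(u * v[j] for u, v in zip(us, vs))
        us.append(col / row[j])
        vs.append(row)
        used_rows.add(i)
        cand = np.abs(col)
        cand[list(used_rows)] = -1.0                # never reuse a pivot row
        i = int(np.argmax(cand))
    return us, vs

x = np.linspace(0.0, 1.0, 200)
y = np.linspace(0.0, 1.0, 300)
calls = 0

def f(i, j):
    global calls
    calls += 1
    return np.sin(x[i] + y[j])                      # an exactly rank-2 "giant table"

us, vs = cross_approximation(f, len(x), len(y), max_rank=5)
approx = sum(np.outer(u, v) for u, v in zip(us, vs))
exact = np.sin(x[:, None] + y[None, :])
max_err = np.max(np.abs(approx - exact))
print(len(us), calls, exact.size)
```

Only a handful of rows and columns are ever evaluated, yet the table is reproduced to rounding error because its rank is low. TCI extends this pivot-based idea from matrices to chains of tensors.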

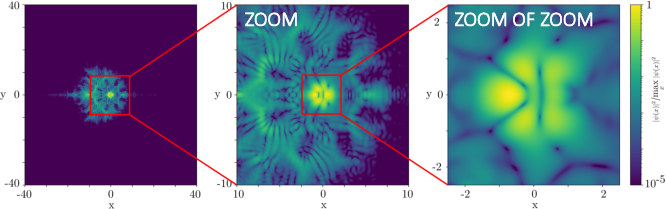

The “Quantics” (QTT) Representation

- Many functions live on grids with a huge number of points (like a million or more). Quantics reshapes one long list into many small dimensions using binary digits (like unpacking a number into its bits). In this form, calculus tasks—differentiation, integration, convolution, even the Fourier transform—become simple MPO/MPS operations. In the right cases, these can be much faster than standard methods.
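A quick way to see why the bit-by-bit reshaping helps is to measure the rank across every cut of the bit string. The sketch below does this for three functions on a grid of 2^12 points. The grid on [0, 1), the test functions, the tolerance, and the function name are assumptions for illustration.

```python
import numpy as np

def quantics_ranks(values, n_bits, tol=1e-10):
    """Numerical rank across each cut between the first k bits and the remaining ones."""
    ranks = []
    for k in range(1, n_bits):
        mat = values.reshape(2**k, 2**(n_bits - k))   # rows: coarse bits, cols: fine bits
        s = np.linalg.svd(mat, compute_uv=False)
        ranks.append(int(np.sum(s > tol * s[0])))
    return ranks

n_bits = 12
x = np.arange(2**n_bits) / 2**n_bits                  # x = 0.b1b2...b12 in binary

ranks_exp = quantics_ranks(np.exp(-3.0 * x), n_bits)  # factorizes bit by bit
ranks_cos = quantics_ranks(np.cos(7.3 * x), n_bits)   # sum of two exponentials
ranks_rnd = quantics_ranks(np.random.default_rng(0).normal(size=2**n_bits), n_bits)

print(max(ranks_exp), max(ranks_cos), max(ranks_rnd))
```

The exponential has rank 1 at every cut and the cosine rank 2, so both fit in a tiny MPS regardless of grid size, while unstructured random data needs the full rank of 2^6 at the middle cut.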

Quantum Circuits as Tensor Networks

- A quantum circuit (a sequence of gates on qubits) can be drawn as a tensor network. Contracting (multiplying and summing) that network simulates the circuit.

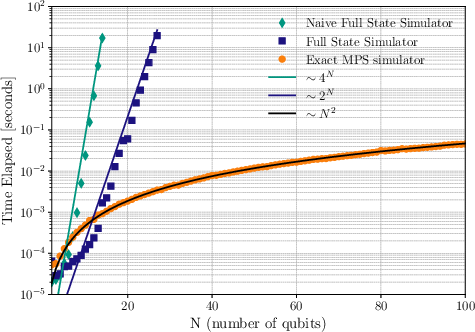

- The notes give two emulators:

- A full “state vector” simulator (simple but memory-hungry; good for few qubits).

- An exact MPS simulator for shallow circuits (often much faster; cost grows with how tangled—entangled—the circuit becomes, not just with qubit count).
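As a rough sketch of the first kind of emulator, the code below stores the full state as a tensor with one index per qubit and applies gates by contraction. It builds a GHZ state with one Hadamard gate followed by a chain of C-NOT gates. The function names and the qubit ordering are assumptions for illustration, not the notes' implementation.

```python
import numpy as np

H = np.array([[1.0, 1.0], [1.0, -1.0]]) / np.sqrt(2)
# C-NOT as a 4-index tensor [out_control, out_target, in_control, in_target].
CNOT = np.array([[1, 0, 0, 0],
                 [0, 1, 0, 0],
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float).reshape(2, 2, 2, 2)

def apply_1q(state, gate, q):
    """Contract a 2x2 gate with qubit q and put the new index back in place."""
    state = np.tensordot(gate, state, axes=([1], [q]))
    return np.moveaxis(state, 0, q)

def apply_2q(state, gate, q1, q2):
    """Contract a two-qubit gate with qubits q1 (control) and q2 (target)."""
    state = np.tensordot(gate, state, axes=([2, 3], [q1, q2]))
    return np.moveaxis(state, [0, 1], [q1, q2])

def ghz_state(n):
    state = np.zeros((2,) * n)
    state[(0,) * n] = 1.0                 # start in |00...0>
    state = apply_1q(state, H, 0)
    for q in range(n - 1):
        state = apply_2q(state, CNOT, q, q + 1)
    return state

psi = ghz_state(3)
print(psi[0, 0, 0], psi[1, 1, 1], np.sum(psi**2))
```

Memory grows as 2^n here, which is why this approach is limited to a few tens of qubits and why the MPS emulator is attractive when entanglement stays low.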

Main Findings and Why They Matter

Here are the main takeaways and results, stated simply:

- Not all “exponentially big” problems are equally hard. If the data has structure (low entanglement/low rank), MPS/MPO compressions make them manageable.

- Classical algorithms can rival quantum computers on some tasks:

- Exact or compressed simulation of many quantum circuits (especially shallow or structured ones) is feasible using MPS/MPO.

- Quantum annealers (another kind of quantum machine) can also be simulated in this framework.

- The lectures provide complete, build-it-yourself recipes:

- How to add, compress, and orthogonalize MPS.

- How to multiply MPOs, apply them to MPS, solve Ax = b, and find ground states (DMRG).

- How to use TCI to learn an MPS/MPO from queries, not full data.

- Powerful “calculus as tensor networks”:

- The authors show explicit MPOs for differentiation, indefinite integration, convolution, and even the quantum Fourier transform.

- In quantics form, key operations (including the Fourier transform) reduce to MPO–MPS products, which can be drastically faster than standard fast algorithms when the function is compressible in this representation.

- Real applications:

- Simulating quantum computers “exactly” or with controlled compression.

- Simulating quantum annealers.

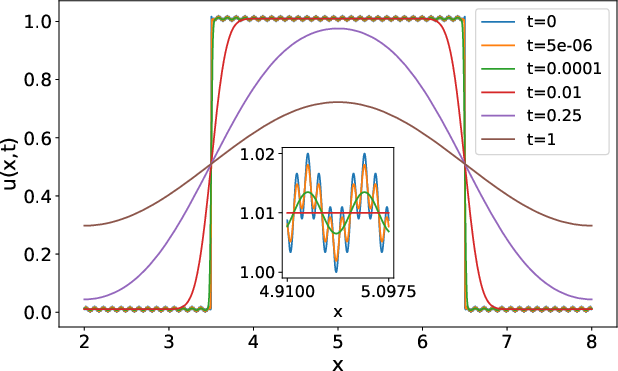

- Solving partial differential equations (like Poisson, diffusion, Gross–Pitaevskii) using quantics, which opens a path to extremely efficient solvers for large grids.

Why this is important:

- It shows where classical methods can still shine against near-term quantum devices, which face challenges like noise (decoherence) and limited readout. This helps separate hype from realistic expectations.

- It gives students and researchers practical, tested algorithms they can implement.

Implications and Potential Impact

In plain terms:

- For quantum computing: These methods set a moving target. If a quantum advantage is claimed, one must check whether MPS/MPO or related techniques already cover that case efficiently. The notes remind us that quantum computers output only a small amount of information (N measured bits), and noise limits circuit depth—both are real-world hurdles.

- For classical computing: Tensor networks are a versatile toolbox for crushing big problems by using structure. They let you do:

- Efficient simulations of many-body quantum physics.

- Learning and compressing massive datasets from limited samples (via TCI).

- Fast numerical calculus on huge grids (via quantics).

- For education and practice: The lectures are designed so a first-year PhD student (or a motivated learner) can code the algorithms, understand the proofs, and apply them to real problems. This widens access to state-of-the-art simulation and computation tools.

In short, the paper shows that with clever representations (MPS/MPO) and smart learning/compression (SVD, DMRG, TCI, quantics), classical computers can tackle many “too big to store” problems, sometimes matching or even beating what early quantum machines can do—especially when the underlying data has structure.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a focused list of gaps and unresolved questions that emerge from the paper’s scope and claims; each item is framed to enable concrete follow-up work.

- Formal bounds on bond-dimension growth for MPS/MPO simulations across circuit families (random, shallow/deep, structured), and resulting runtime/memory complexity as a function of depth, topology, and gate set.

- End-to-end error control for approximate simulations: rigorous bounds on observable errors induced by SVD/QR truncations and successive compressions during MPO–MPS products.

- Practical and theoretical conditions under which the “quantics” FFT (and QFT-as-MPO) yields exponential speedups over classical FFT; explicit assumptions on structure, rank, and compressibility, with empirical benchmarks and stability analyses.

- Robustness and convergence guarantees of Tensor Cross Interpolation (TCI): sample complexity, stopping criteria, worst-case error bounds, and performance on non-smooth or highly oscillatory functions.

- Automated contraction-order planning for quantum-circuit tensor networks with guarantees (e.g., treewidth-aware heuristics), and integration with circuit graph structure to minimize intermediate tensor sizes.

- Efficient handling of mid-circuit measurements, classical control flow, and adaptive branching within MPS/MPO frameworks; impact on bond-dimension growth and error accumulation.

- Incorporation of noise and decoherence (non-unitary channels) as MPOs: accuracy–compression trade-offs, stability, and error propagation in long circuits.

- Systematic exploitation of symmetries (U(1), SU(2), parity, translational) to reduce ranks and costs in all presented algorithms; generalized canonical forms with symmetry blocks and proofs of correctness.

- Extension to fermionic and bosonic systems: mappings (e.g., Jordan–Wigner, Bravyi–Kitaev), sign structures, and compression efficacy; compatibility with the linear-algebra-first approach.

- Canonical gauge selection strategies: globally consistent gauge fixing to improve numerical stability and truncation quality; diagnostics to detect ill-conditioned gauges during simulations.

- Sampling from compressed MPS: exact vs approximate sampling algorithms after truncation, with quantified bias/variance and guarantees for estimated observables.

- Comparative benchmarks across realistic workloads (chemistry circuits, arithmetic/QFT circuits, random circuits at various depths): state-vector vs exact MPS vs compressed MPS vs TCI-based pipelines; scalability on modern HPC.

- Quantics-PDE solver generalization: variable coefficients, nonlinearities (e.g., stiff Gross–Pitaevskii), higher dimensions, irregular domains/meshes, and diverse boundary conditions; accuracy and preconditioning needs.

- Preconditioning and iterative solvers within quantics/MPO linear systems (Ax=b): design of MPO-compatible preconditioners, Krylov/DMRG convergence guarantees, and robustness to ill-conditioning.

- Automated construction (“compiler”) of MPOs from symbolic expressions for general differential operators (advection, variable-coefficient elliptic, fractional derivatives), including boundary condition handling.

- Mixed-state simulations via matrix product density operators (MPDO): compression strategies, observable evaluation, and noise modeling in density-matrix form.

- Parallel and distributed implementations of MPO–MPS products and TCI: communication-optimal algorithms, memory-layout strategies, and performance on GPUs/accelerators.

- Circuit-class detection: fast heuristics to identify area-law entanglement regimes amenable to classical simulation vs volume-law regimes requiring fallback strategies.

- SWAP-based vs MPO-based handling of non-neighbor gates: quantitative overhead comparisons, conditions where one dominates, and hybrid strategies.

- Empirical validation of claims about simulating quantum annealers: mapping annealing schedules/Hamiltonians to MPOs, accuracy under noise, and comparisons to hardware outputs.

- Quantitative study of the I/O bottleneck: strategies to recover global features from limited samples (compressive measurement, shadow tomography) and their integration with MPS-based emulators.

- Integration of advanced tensor networks (PEPS, MERA, tree TNs): when 2D/critical circuits benefit over MPS/MPO, and practical algorithms/gauges for QC emulation tasks.

- Formal link between the “O(ND)-dimensional” circuit manifold and classical tensor-network manifolds: metrics for “relevance,” compressibility, and barren-plateau avoidance in variational settings.

- Tooling gaps: reproducible, open-source implementations with standardized APIs for MPO libraries (symbolic MPO generator, contraction planners, TCI modules), and robust test suites covering edge cases.

Practical Applications

Immediate Applications

The paper’s techniques (MPS/MPO linear algebra, DMRG-like solvers, Tensor Cross Interpolation, and quantics tensor-train calculus) can be deployed today in the following concrete settings:

- Classical emulation and verification of quantum circuits

- Sectors: software, quantum hardware, academia, policy

- What you can do now:

- Run exact or compressed MPS/MPO simulators for shallow/structured circuits to verify hardware runs, reproduce distributions, and sanity-check claimed “quantum advantage.”

- Integrate an MPS/MPO backend into Qiskit/Cirq-like SDKs for pre-deployment testing, regression, and compiler feedback (e.g., entanglement growth and bond-dimension budgets).

- Use MPO “zip-up” products and gate factorizations (e.g., low-rank CNOT) for faster transpilation-time estimates and design-space exploration of circuits.

- Potential tools/products/workflows: an MPS-based circuit simulator plugin; compiler pass to predict bond-dimension growth; “TN-aware” benchmarking suite that contrasts depth/entanglement with simulability.

- Assumptions/dependencies: circuits remain relatively shallow or have favorable structure (limited entanglement growth, locality); access to sufficient RAM/compute; accurate gate factorization and ordering; measurement sampling from MPS when needed.

- Simulation and benchmarking of quantum annealers and Ising-type models

- Sectors: quantum hardware, optimization in industry, academia

- What you can do now:

- Use MPS/MPO to simulate transverse-field Ising schedules and annealing dynamics on modest system sizes and topologies to benchmark hardware (e.g., D-Wave) or test problem embeddings.

- Potential tools/products/workflows: annealer “digital twins” for schedule design and parameter-sensitivity analysis.

- Assumptions/dependencies: graph/topology with limited treewidth or 1D/weakly entangling couplings; compressed time-evolution manageable in MPS.

- Operator-aware linear algebra on extremely large arrays

- Sectors: computational physics, materials, electronics, energy

- What you can do now:

- Solve Ax = b and lowest-eigenpair problems using MPO/MPS (DMRG-like) routines when the operator and states are compressible.

- Potential tools/products/workflows: MPO Krylov/DMRG solvers for large structured operators; preconditioners tailored to MPO structure.

- Assumptions/dependencies: operator admits low MPO rank; solution has low bond dimension; numerics require rank/adaptive truncation control.

- PDE solvers in the quantics tensor-train representation

- Sectors: engineering, physics (fluids, electromagnetics), quantum matter

- What you can do now:

- Build MPOs for differentiation, indefinite integration, convolution, and apply FFT via MPO–MPS products to solve Poisson, diffusion, or Gross–Pitaevskii equations.

- Exploit the bitwise quantics grid mapping to reduce memory and time when fields/operators are compressible.

- Potential tools/products/workflows: TT-based PDE solvers; “TT-FFT” operators; operator pipelines that alternate in real/Fourier space using MPOs.

- Assumptions/dependencies: solution/operator compressibility in quantics; suitable boundary conditions/grids (often powers of two); robust truncation/error control.

- Tensor Cross Interpolation (TCI) for black-box model compression and surrogate modeling

- Sectors: scientific computing, CFD, energy systems, finance

- What you can do now:

- Learn MPS surrogates of high-dimensional response surfaces (functions accessible via an evaluation oracle), reducing sampling budgets and enabling UQ/optimization.

- Potential tools/products/workflows: TCI wrappers around legacy solvers; active-learning loops picking informative tensor entries; deployment of TT surrogates in pipelines.

- Assumptions/dependencies: function is sufficiently low TT-rank; evaluations are feasible at queried multi-indices; noise-aware TCI variants if simulator outputs are noisy.

- Scientific data compression and I/O acceleration

- Sectors: HPC centers, climate, astrophysics, materials, manufacturing

- What you can do now:

- Compress massive simulation snapshots or parameter sweeps as MPS/TT with controlled error to reduce storage, I/O, and enable in-situ analysis.

- Potential tools/products/workflows: TT-aware file formats; postprocessing pipelines that operate directly on compressed TT data (e.g., inner products, filtering).

- Assumptions/dependencies: data admits low TT rank at required accuracy; error metrics acceptable to stakeholders.

- Circuit transpilation and scheduling heuristics informed by tensor ranks

- Sectors: quantum software, compilers

- What you can do now:

- Use gate factorizations (e.g., CNOT rank-2 decomposition) and MPO-based estimates to reduce entanglement growth, optimize SWAP insertion, and minimize bond dimensions.

- Potential tools/products/workflows: “TN-cost” objective in transpilers; rank-aware gate reordering; MPO path planners for nonlocal interactions.

- Assumptions/dependencies: compiler access to hardware topology; cost model correlates with actual runtime/fidelity; rank estimates remain predictive under noise.

- Evidence-based assessment of “quantum advantage”

- Sectors: policy, standards, scientific publishing, R&D management

- What you can do now:

- Use MPS/MPO simulability analyses to design fair benchmarks, detect inflated claims, and set procurement/validation criteria.

- Potential tools/products/workflows: benchmark suites reporting depth, entanglement, MPO rank profiles, and classical runtime; guidelines for reporting inaccessible vs. accessible subspaces.

- Assumptions/dependencies: transparent circuit disclosures; consensus metrics (e.g., depth, two-qubit density, entanglement proxies) adopted by the community.

- Education and workforce training in tensor networks and quantum-inspired computing

- Sectors: education, R&D organizations

- What you can do now:

- Adopt the lecture notes’ algorithms (MPS/MPO basics, DMRG, TCI, quantics calculus) for courses and internal upskilling; run hands-on labs in which learners build the algorithms from scratch.

- Potential tools/products/workflows: course modules; coding exercises based on ITensor or equivalent; open-source examples aligned with tensornetwork.org and tensor4all.org.

- Assumptions/dependencies: basic linear algebra and Python/C++ skills; access to modest compute.

Long-Term Applications

These require further algorithmic maturation, scaling, robust software engineering, or domain validation before broad deployment:

- General-purpose TT/quantics PDE frameworks with industrial robustness

- Sectors: multiphysics CAE, energy, electromagnetics, acoustics

- What could emerge:

- Production-grade TT solvers for complex boundary conditions, heterogeneous media, and multi-domain coupling; end-to-end operator libraries (discretize → MPO build → solve → postprocess) that consistently outperform traditional methods on compressible instances.

- Dependencies: robust preconditioning in TT; adaptive rank control; integration with existing meshing and finite-element/volume stacks; certification of accuracy and stability.

- High-dimensional finance and risk analytics via TT surrogates and TT-PDE solvers

- Sectors: finance, insurance

- What could emerge:

- TT-based multi-asset option pricing (PDE or operator splitting) and fast scenario generation; UQ at portfolio scale using TCI surrogates.

- Dependencies: strict error bounds; regulatory acceptance; handling discontinuities and path dependencies (which challenge low rank).

- Real-time digital twins and control with TT surrogates

- Sectors: robotics, advanced manufacturing, energy grids, aerospace

- What could emerge:

- Online TCI/TT models updating from sensor streams; TT operators for model predictive control in high-dimensional spaces.

- Dependencies: fast incremental TCI; robustness to noisy/partial observations; guarantees on latency and stability.

- Medical and geophysical imaging with MPO operator pipelines

- Sectors: healthcare, energy (subsurface), defense

- What could emerge:

- MPO-based accelerations for forward/adjoint PDEs (e.g., wave, diffusion) in large inverse problems; TT-compressed iterative reconstructions.

- Dependencies: mapping domain-specific operators to compressible MPOs; handling complex geometries; clinical/regulatory validation.

- Quantum-classical co-design and resource estimation at scale

- Sectors: quantum hardware and software, policy

- What could emerge:

- Standardized TN-based tooling to predict entanglement, error-correction overheads, and classical-simulation backstops for entire algorithm stacks; policy frameworks that require TN-based baselines for “advantage” claims.

- Dependencies: community standards for reporting TN metrics; shared benchmark corpora; transparent hardware noise models.

- Hardware-accelerated tensor-network computing

- Sectors: semiconductors, HPC, cloud

- What could emerge:

- GPUs, TPUs, and FPGAs with kernels tuned for SVD/QR with truncation, MPO–MPS contractions, and TCI; cloud services exposing TN primitives as managed services.

- Dependencies: memory bandwidth and tiling strategies; mixed-precision stability; wide adoption to amortize engineering costs.

- Differentiable TN stacks for machine learning and scientific ML

- Sectors: AI/ML, scientific computing

- What could emerge:

- PyTorch/JAX-native TT/MPO layers with auto-diff, enabling neural operators and physics-informed models that exploit operator MPOs (d/dx, convolution, FFT) and trainable TT ranks.

- Dependencies: stable gradient flow with rank adaptation; efficient backward-pass for truncated SVD/QR; ML tooling integration.

- Standards for TT data formats, error-bounded scientific compression, and reproducibility

- Sectors: HPC, publishing, archives

- What could emerge:

- Community-agreed TT container formats with metadata for ranks, truncation errors, and operator provenance; FAIR-compliant repositories storing compressed fields and operators.

- Dependencies: consensus on metadata and error metrics; converters to/from legacy formats; long-term preservation guarantees.

- Broadening MPO calculus to complex geometries and multi-physics coupling

- Sectors: engineering, materials, climate

- What could emerge:

- Automated compilation from high-level PDE specs to MPOs (including boundary operators and coupling terms), enabling plug-and-play TT solvers for coupled systems.

- Dependencies: symbolic-to-numeric operator synthesis; domain decomposition in TT; rank-aware coupling strategies.

- Scalable classical competition with quantum algorithms in new regimes

- Sectors: quantum algorithms, optimization

- What could emerge:

- TN methods that keep pace with increases in qubit counts for specific algorithm families (e.g., QFT-heavy, local-depth circuits), redefining the practical boundary of “quantum advantage.”

- Dependencies: advances in contraction ordering, low-rank learning (TCI) under noise, and hybrid TN–Monte Carlo schemes.

Notes on cross-cutting assumptions and dependencies

- Compressibility is key: success hinges on low bond dimension/TT rank of states, operators, and functions; worst-case instances still scale exponentially.

- Discretization and mapping matter: quantics assumes bitwise grid structures (often powers of two) and boundary operators that keep ranks low.

- Truncation/error control: rigorous, automated criteria for SVD/QR truncation are needed to balance accuracy and performance.

- Integration and UX: adoption depends on clean APIs, interoperability with existing stacks (finite elements, ML frameworks, QC SDKs), and accessible diagnostics (rank growth, error budgets).

- Benchmark transparency: for policy and scientific validation, circuits/problems should be disclosed sufficiently to permit TN-based baseline comparisons.

Glossary

- barren plateaus: Regions in the optimization landscape of variational quantum algorithms where gradients vanish, making training difficult. "For instance, the problem of the 'barren plateaus' in the variational quantum eigensolver (VQE) algorithm has been traced back to the fact that most of the states in the $O(ND)$-dimensional subspace manifold are essentially chaotic, hence irrelevant"

- bond dimension: The size of a virtual index in a tensor network; controls expressiveness and entanglement capacity of MPS/MPO. "we take all the bond dimensions to be equal to "

- canonical form: A gauge choice for MPS where tensors are orthonormalized to simplify computations and measurements. "We will later use this freedom to work with a very convenient gauge known as the 'canonical form'"

- C-NOT (controlled NOT) gate: A two-qubit gate that flips the target qubit if and only if the control qubit is in state 1. "and the controlled NOT (or C-NOT) two-qubit gate"

- Cross Interpolation: A numerical method that approximates large matrices/tensors by selecting informative rows/columns (or fibers) via cross indices. "including the Singular Value Decomposition (SVD), the Cross Interpolation, and the associated partial-rank-revealing LU decomposition"

- decoherence: Loss of quantum coherence due to unwanted coupling to the environment, degrading quantum computation fidelity. "the phenomenon of decoherence, the other Achilles' heel of quantum computing"

- Density Matrix Renormalization Group (DMRG): A variational algorithm leveraging MPS to find low-energy eigenstates of many-body Hamiltonians. "including classic material (matrix product states and operators, Density Matrix Renormalization Group (DMRG) algorithm, etc.)"

- density matrix: An operator describing mixed or pure quantum states, enabling computation of observables and fidelities. "the density matrix "

- Einstein’s implicit sum notation: Convention that repeated indices in tensor expressions are summed over without writing the summation explicitly. "Any connection between two tensors implies that the involved indices take the same values and are summed over; this is Einstein's implicit sum notation."

- fidelity: Overlap measure quantifying how close an actual quantum state is to a target state. "the fidelity of the state $F(n) = \langle \Psi^{(n)} | \rho^{(n)} | \Psi^{(n)} \rangle$ between the targeted state $|\Psi^{(n)}\rangle$ and the density matrix actually obtained decreases exponentially"

- flattening tensor: A “combiner” tensor that maps multiple indices into a single composite index to reshape tensors into matrices or vectors. "Another special tensor is the flattening tensor (the flattening tensor is also sometimes called combiner)"

- Fourier transform: Linear transform mapping a function to frequency space; in quantics it becomes a simple MPO–MPS product. "We discuss how the Fourier transform translates into a simple MPO-MPS product in this context and can therefore be performed exponentially faster than the regular Fast Fourier Transform."

- GHZ (Greenberger-Horne-Zeilinger) state: A maximally entangled multi-qubit state of the form (|00…0> + |11…1>)/√2. "Let us consider an explicit quantum circuit that builds a GHZ (Greenberger-Horne-Zeilinger) state:"

- gauge freedom: The non-uniqueness in MPS/MPO factorizations allowing insertion of invertible matrices without changing the represented tensor. "there is what is known as 'gauge freedom':"

- Gross–Pitaevskii: Nonlinear wave equation describing Bose–Einstein condensates; a PDE solvable in the quantics framework. "e.g. Poisson, diffusion, or Gross–Pitaevskii"

- Hadamard gate: A single-qubit unitary that creates superposition by mapping |0>→(|0>+|1>)/√2 and |1>→(|0>−|1>)/√2. "It creates the state ... using a Hadamard gate and two C-NOT gates"

- Hamiltonian: The operator governing the time evolution of a quantum system. "where is the time-ordering operator and is the Hamiltonian of the system."

- Hilbert space: The vector space of quantum states, whose dimension grows exponentially with the number of qubits. "It is very important to realize that the largest part of the Hilbert space of dimension $2^N$ will remain forever inaccessible"

- iDMRG: Infinite-size DMRG algorithm used for translationally invariant or thermodynamic-limit systems. "and many other tensor network techniques such as belief propagation, iDMRG, etc."

- I/O bottleneck: Limitation that only limited classical information can be read out from a quantum computation relative to the full state. "This is one of the two Achilles' heels of quantum computing, which we dub the I/O bottleneck"

- Kronecker tensor (copy): A multi-leg identity/copy tensor enforcing equality of connected indices; entries are products of Kronecker deltas. "An important one is the Kronecker tensor , also known as copy and defined for any number of legs as "

- logical qubit: An error-protected qubit encoded across multiple physical qubits using quantum error correction. "quantum error correction uses several physical qubits to build one 'logical' qubit of better quality than the physical ones."

- LU decomposition (partial rank-revealing): Matrix factorization into lower and upper triangular factors with pivoting that exposes numerical rank. "and the associated partial-rank-revealing LU decomposition."

- Matrix Product Operator (MPO): A tensor-network representation of exponentially large matrices/operators as chains of local tensors. "Matrix Product Operators (MPO) that are representations of exponentially large matrices."

- Matrix Product State (MPS): A tensor-network representation of exponentially large vectors/states as chains of local tensors. "Matrix Product States (MPS) that are seen as representations of exponentially large vectors."

- MERA: Multiscale Entanglement Renormalization Ansatz; a hierarchical tensor network for scale-invariant systems. "More advanced tensor networks such as PEPS, PEPO, MERA, or tree tensor networks."

- MPO-MPS product: The tensor-network analog of a matrix–vector multiplication, applying an MPO to an MPS. "to apply the gate, we need to perform an MPO-MPS product"

- Pauli matrices: The set of 2×2 Hermitian matrices X, Y, Z forming a basis for single-qubit operations. "Typical examples include the one-qubit gates (the first three are the Pauli matrices)"

- PEPO: Projected Entangled Pair Operator; a higher-dimensional generalization of MPO for operators. "More advanced tensor networks such as PEPS, PEPO, MERA, or tree tensor networks."

- PEPS: Projected Entangled Pair State; a two-dimensional tensor-network ansatz for quantum states. "More advanced tensor networks such as PEPS, PEPO, MERA, or tree tensor networks."

- quantum annealer: A device that performs optimization via quantum annealing/adiabatic evolution rather than gate-based circuits. "the simulation of a quantum annealer"

- quantum error correction: Techniques to protect quantum information by encoding it redundantly and performing syndrome measurements. "The hope of quantum computing is to use quantum error correction to address this problem"

- quantum Fourier transform: The unitary version of the discrete Fourier transform used in quantum algorithms. "and the quantum Fourier transform."

- QR factorization: Matrix decomposition into an orthonormal matrix Q and an upper-triangular matrix R. "we will use three different types that we will explain in detail in turn: ... The QR factorization"

- Schrödinger equation: Fundamental equation describing unitary time evolution of quantum states. "according to the Schrödinger equation"

- Singular Value Decomposition (SVD): Matrix factorization into singular values and orthonormal factors; used for compression and orthogonalization. "including the Singular Value Decomposition (SVD)"

- SWAP gate: A two-qubit gate that exchanges the states of two qubits; can be constructed from C-NOTs. "the so-called SWAP 2-qubit gate."

- TDVP: Time-Dependent Variational Principle; method for time evolution within variational manifolds like MPS. "advanced time-evolution techniques such as TDVP"

- Tensor Cross Interpolation (TCI): A learning algorithm that builds MPO/MPS from function evaluations by selecting pivot indices. "recent learning algorithms that allow one to map a known function into an MPS (the Tensor Cross Interpolation, or TCI, algorithm)"

- tensor network: A graph of tensors with contracted edges representing high-dimensional data or operations compactly. "A tensor network is simply a collection of tensors where some of the indices are contracted."

- tensor-train representation (quantics): A tensor-network (TT/MPS) format for functions on high-dimensional grids within the quantics framework. "the quantics tensor-train representation, which allows one to use the above algorithms to solve partial differential equations."

- transverse field Ising model: A spin system with nearest-neighbor interactions and a transverse magnetic field; a benchmark problem. "the simulation of the transverse field Ising model"

- tree tensor networks: Hierarchical, acyclic tensor-network structures offering efficient representations for some systems. "More advanced tensor networks such as PEPS, PEPO, MERA, or tree tensor networks."

- variational Monte Carlo: A stochastic method optimizing variational parameters of wavefunctions via sampling. "we could have also included e.g. variational Monte Carlo"

- variational quantum eigensolver (VQE): A hybrid quantum-classical algorithm to approximate eigenstates using parameterized circuits. "as a classical counterpart to the variational quantum eigensolver popular in quantum computing"

- virtual index: An internal index connecting neighboring tensors in MPS/MPO that gets summed over in contractions. "where is the rank of the virtual (vertical line in the drawing) index."

- zip-up algorithm: A specific strategy to contract an MPO with an MPS sequentially from one end, splitting at each step. "Here we present the so-called 'zip-up' algorithm:"
