- The paper introduces a higher-order PCA-like framework using central moments and polynomial representations to encode detailed, rotation-invariant shape descriptors.

- Key innovations include the use of Gaussian-modulated Hermite polynomial bases and systematic tensor contractions to achieve robust invariance under rotations.

- The method enables efficient shape similarity matching and scalability for applications in chemoinformatics, computational vision, and 3D scan analysis.

Higher Order PCA-like Rotation-Invariant Features for Detailed Shape Descriptors Modulo Rotation

Motivation and Background

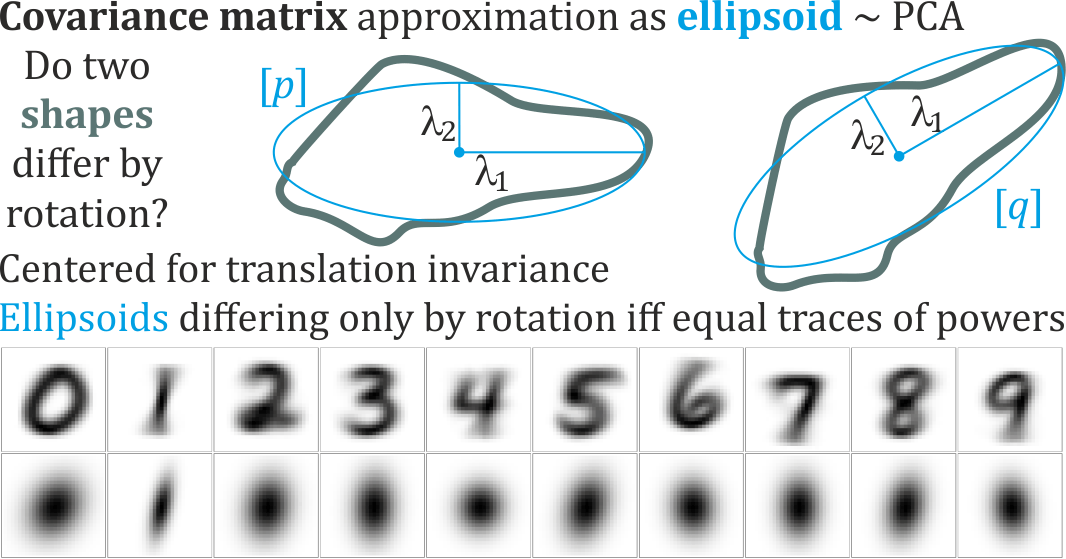

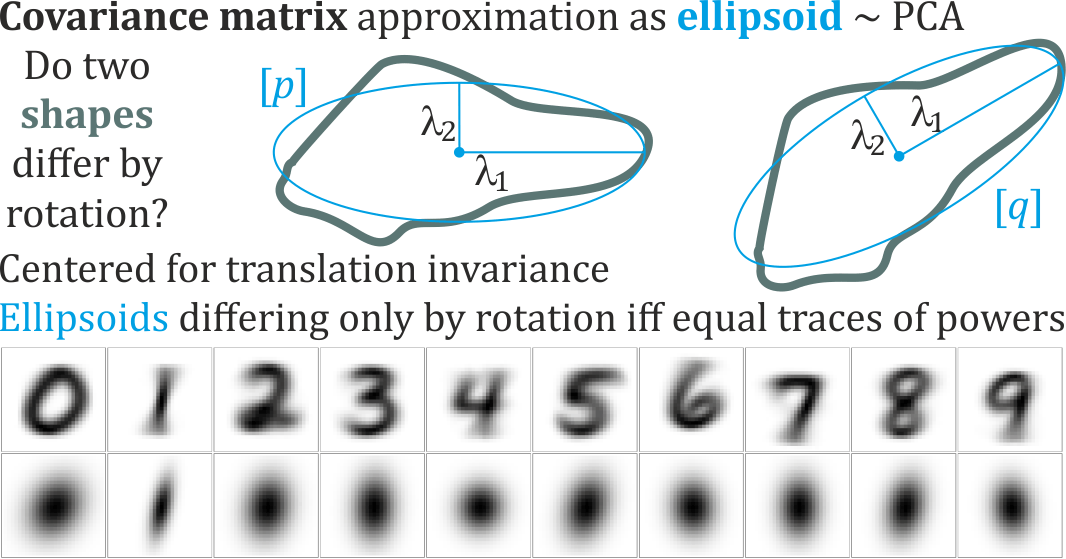

Principal Component Analysis (PCA) is a canonical technique for extracting rotation-invariant features from data, utilizing the covariance matrix to approximate shapes as ellipsoids. This representation, however, fails to capture the intricacy of most natural objects, whose geometry extends far beyond second-order correlations. The paper "Higher order PCA-like rotation-invariant features for detailed shape descriptors modulo rotation" (2601.03326) introduces a systematic extension to higher-order tensors (central moments), as well as polynomial representations (modulated by a Gaussian envelope), which enable the generation of detailed, decodable, and rotation-invariant features for complex shapes in arbitrary dimension.

The practical implications of this framework are clear for fields such as chemoinformatics, computational vision, and 3D scan analysis, where rotation- and possibly scale-invariant shape descriptors underlie robust recognition and efficient cross-object comparison. By leveraging high-order algebraic structures, the approach generalizes ellipsoid-based PCA to multi-dimensional central moments, encapsulating global and local geometric information, and providing fine-grained descriptors that remain stable under SO(d) transformations.

Polynomial and Tensor Representation of Shape

A core proposition is the representation of a shape or density as expectations of polynomial functions over the domain—a generalization that encompasses densities, volumes, and point clouds (e.g., atomic positions or pixel lattices). Starting from

$$E[f(x)] = \int f(x)\,\rho(x)\,dx$$

the paper systematically constructs central moments of order r using symmetric tensors:

$$p_{a_1 a_2 \ldots a_r} = E\big[(x_{a_1} - E[x_{a_1}]) \cdots (x_{a_r} - E[x_{a_r}])\big]$$

These moments encode increasingly detailed shape information. While the order-2 tensor—the covariance matrix—underpins standard PCA, higher-order tensors and their associated polynomial representations allow for continuous, high-accuracy encoding, especially when multiplied by a spherically symmetric, decaying envelope such as a Gaussian.
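As a minimal sketch of the definition above, the following NumPy snippet estimates the order-r central moment tensor of a point cloud, with the expectation replaced by a sample mean. The function name `central_moment` and the synthetic data are illustrative assumptions, not taken from the paper.

```python
import numpy as np

def central_moment(points, order):
    """Order-`order` central moment tensor of an (n, d) point cloud.

    Returns an array of shape (d,) * order; the expectation is a sample mean.
    """
    centered = points - points.mean(axis=0)
    n, d = centered.shape
    product = np.ones(n)
    for _ in range(order):
        # Append one tensor index per factor (x_a - E[x_a]).
        factor = centered.reshape((n,) + (1,) * (product.ndim - 1) + (d,))
        product = product[..., None] * factor
    return product.mean(axis=0)

# Skewed synthetic data (hypothetical), so that odd moments are non-zero.
rng = np.random.default_rng(0)
cloud = rng.exponential(size=(1000, 3)) * np.array([2.0, 1.0, 0.5])

p2 = central_moment(cloud, 2)   # covariance matrix used by standard PCA
p3 = central_moment(cloud, 3)   # third-order tensor, sensitive to asymmetry
print(p2.shape, p3.shape)
```

The intermediate array holds n·d^r numbers, so this direct form is only practical for small orders and dimensions.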

Figure 1: Visual intuition: averaging over a shape or density yields a second-order polynomial (the covariance) that approximates the shape as an ellipsoid, which motivates the generalization to higher moments for a richer shape representation.

The use of polynomials multiplied by a Gaussian envelope, with orthonormal Hermite polynomial bases in d dimensions,

$$f_{(j_1,\ldots,j_d)}(x) = h_{j_1}(x_1) \cdots h_{j_d}(x_d)\, e^{-\|x\|^2/2}$$

supports decodable shape encoding and reconstruction.
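The encode/decode idea can be sketched in two dimensions as follows. The snippet assumes the h_j are normalized physicists' Hermite polynomials, so that h_j(x) e^{-x²/2} are the standard orthonormal Hermite functions; the paper's exact normalization may differ. The names `hermite_functions`, `encode`, and `decode`, the grid, and the test density are all hypothetical.

```python
import numpy as np
from math import factorial, pi, sqrt
from numpy.polynomial.hermite import hermval

def hermite_functions(x, max_degree):
    """Rows are the orthonormal Hermite functions psi_0..psi_max_degree on grid x."""
    rows = []
    for j in range(max_degree + 1):
        coeffs = np.zeros(j + 1)
        coeffs[j] = 1.0                      # select the single polynomial H_j
        norm = 1.0 / sqrt(2.0**j * factorial(j) * sqrt(pi))
        rows.append(norm * hermval(x, coeffs) * np.exp(-x**2 / 2))
    return np.array(rows)

def encode(density, x, max_degree):
    """Coefficients c[j1, j2] = integral of density * psi_j1(x1) * psi_j2(x2)."""
    psi = hermite_functions(x, max_degree)
    dx = x[1] - x[0]                         # uniform grid spacing (Riemann sum)
    return psi @ density @ psi.T * dx * dx

def decode(coeffs, x):
    """Reconstruct the density on the grid from its coefficients."""
    psi = hermite_functions(x, coeffs.shape[0] - 1)
    return psi.T @ coeffs @ psi

# Hypothetical test density: an off-center Gaussian blob on a square grid.
x = np.linspace(-8.0, 8.0, 321)
X, Y = np.meshgrid(x, x, indexing="ij")
density = np.exp(-((X - 0.5) ** 2 + (Y + 0.3) ** 2) / (2 * 0.7 ** 2))

for degree in (2, 6, 10):
    recon = decode(encode(density, x, degree), x)
    print(degree, np.abs(recon - density).max())
```

Here `max_degree` bounds the degree along each axis separately, so the basis has (max_degree + 1)² functions. Because the basis is orthonormal, decoding is just a weighted sum of basis functions, which is what makes the encoding decodable.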

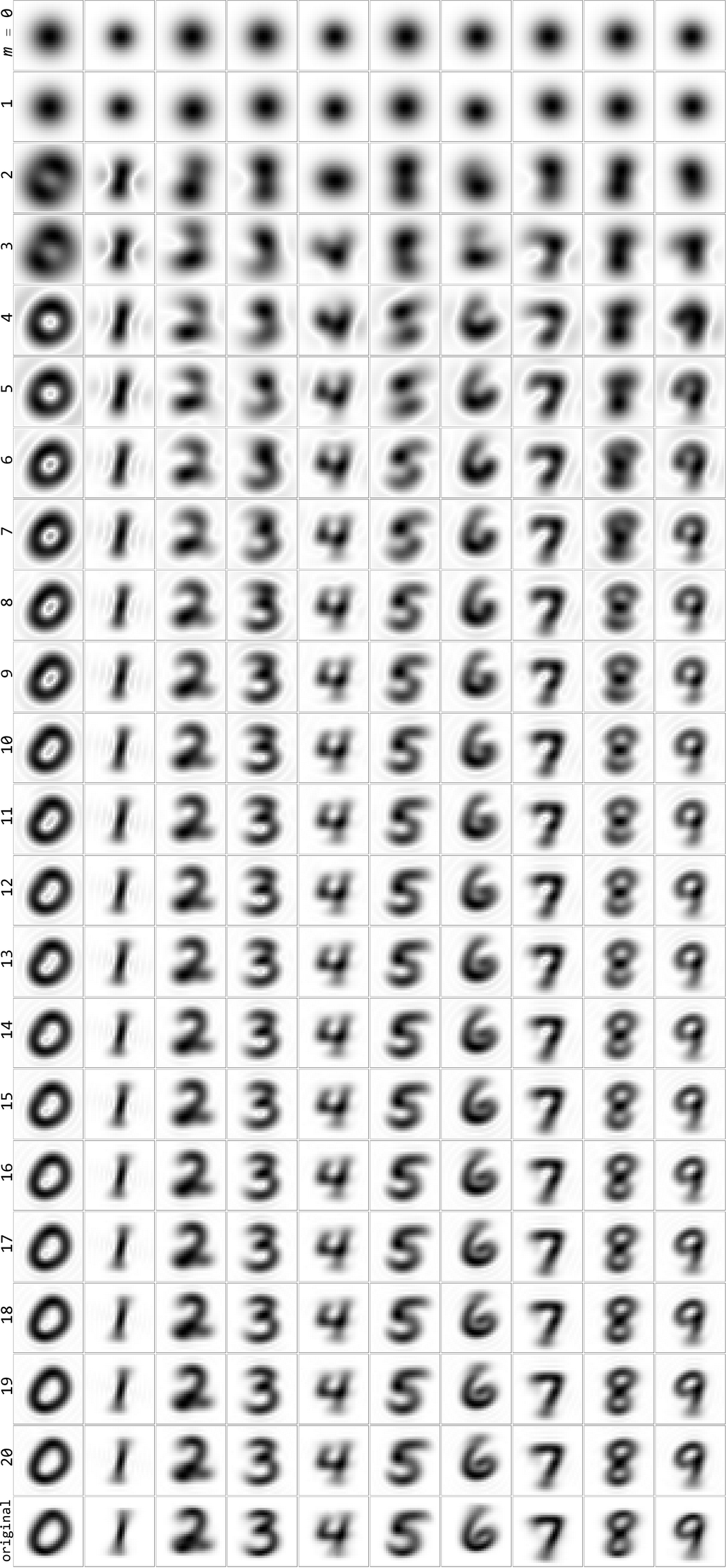

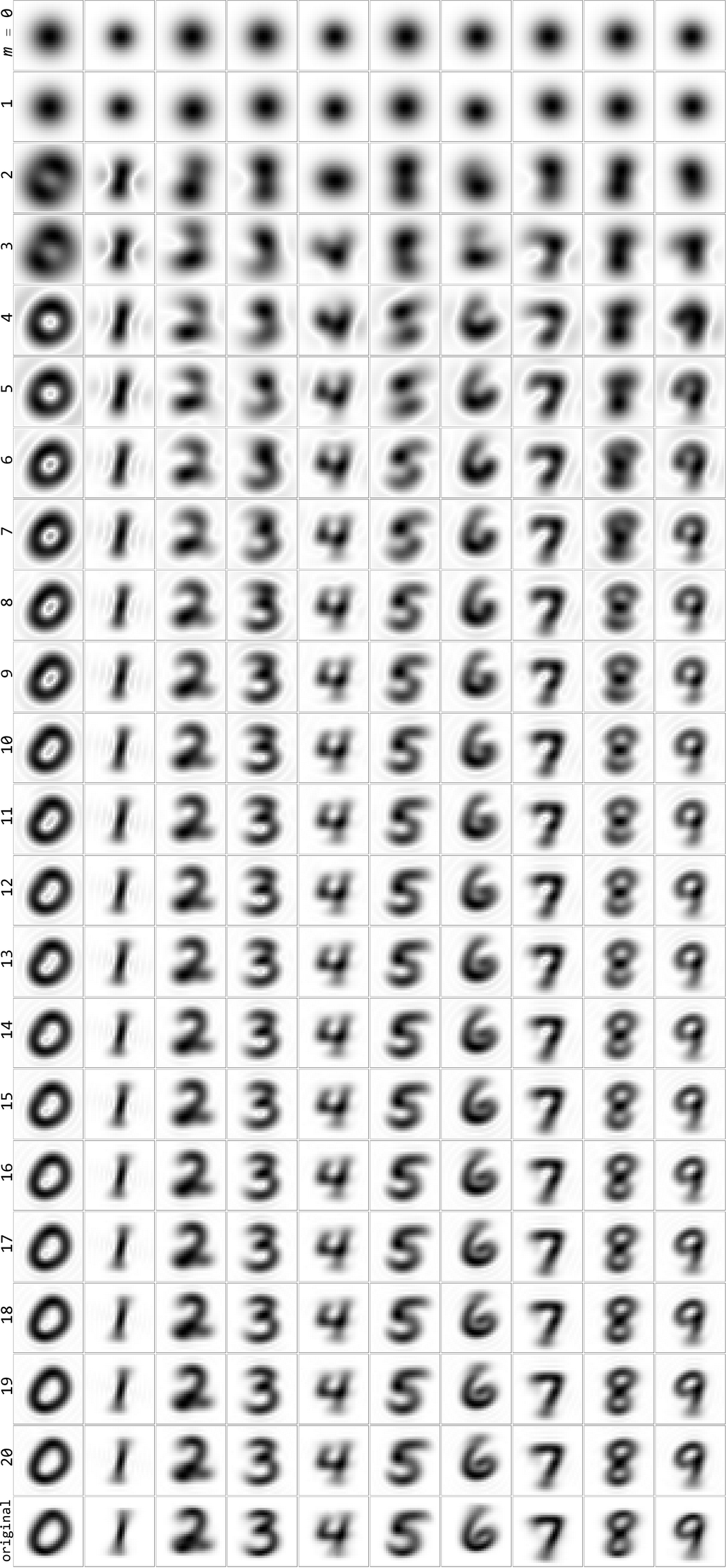

Figure 2: MNIST digit reconstructions in the Gaussian-modulated polynomial basis. Reconstruction accuracy converges rapidly as the polynomial degree increases, with the highest degrees giving the most faithful results.

Construction of Rotation-Invariant Features

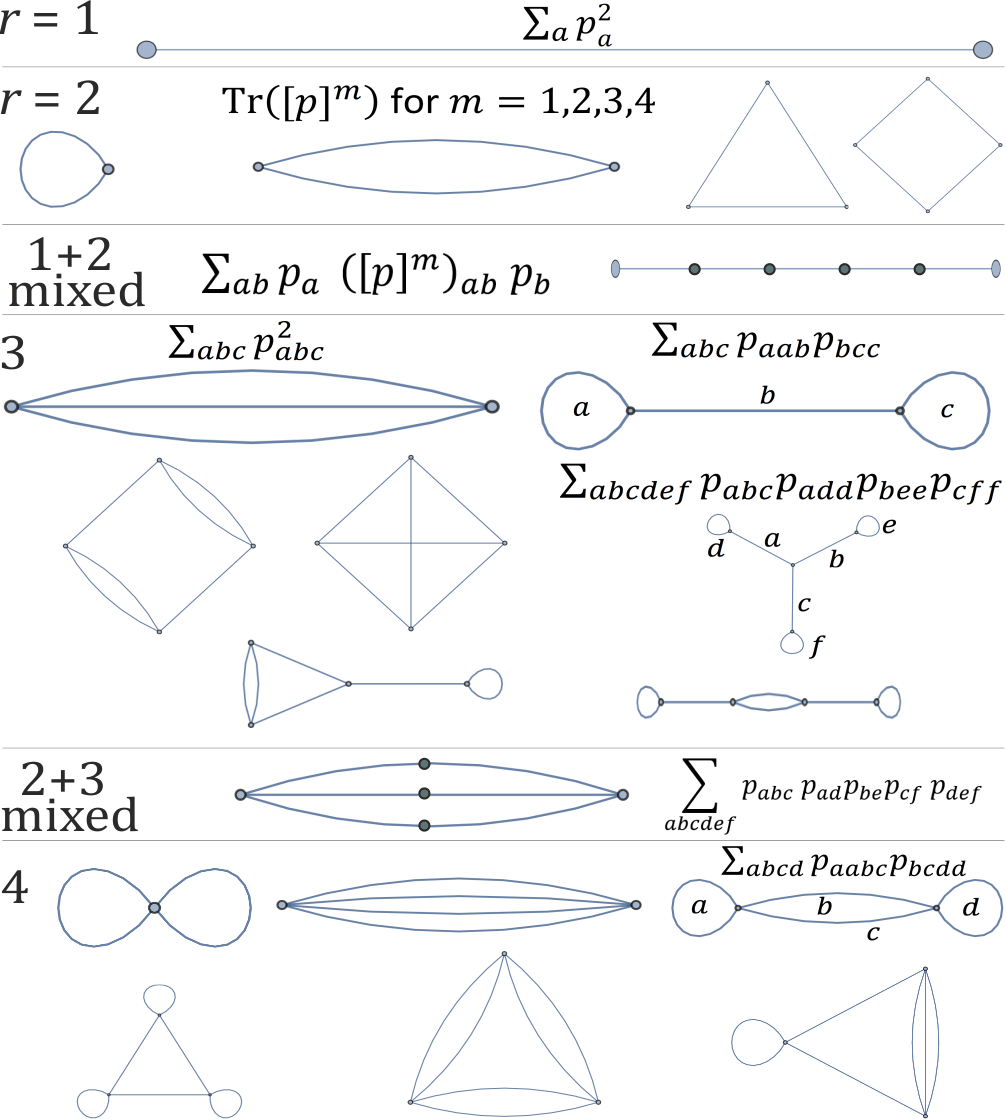

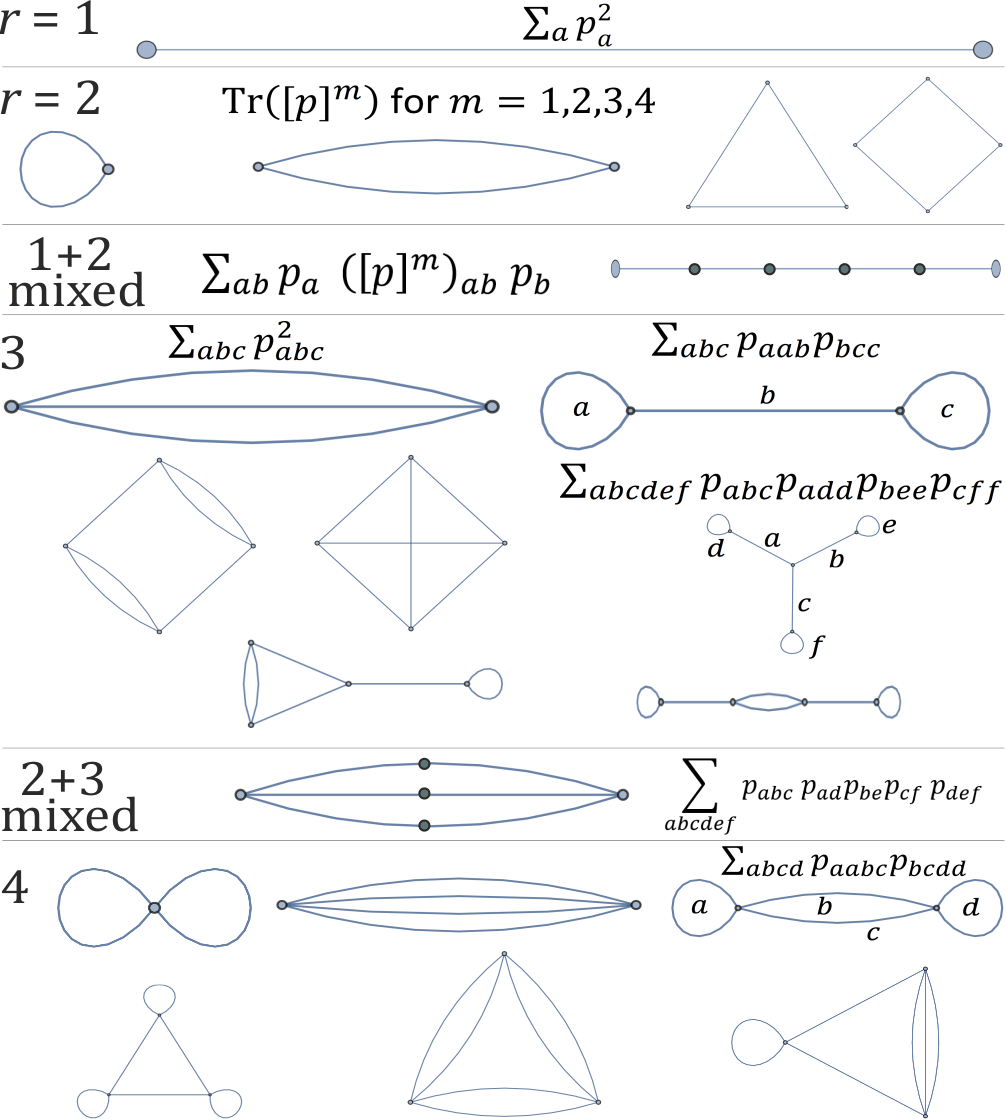

Rotation invariance is achieved by designing features that do not change under orthogonal transformations. For lower-order tensors, classical invariants such as traces of powers of the covariance matrix

$$\mathrm{Tr}\left([p]^i\right)$$

and vector norms are sufficient. The extension to higher-order tensors relies on diagrammatic and algebraic constructs: invariants are generated by summing over tensor contractions, with each contraction pattern encoded as a graph-based diagram. A full contraction is invariant because every contracted index pair contributes a factor $O^\top O = I$ under a rotation $O$. For example, degree-r tensor invariants correspond to summations over all permutations of indices, and products of contracted tensors yield graph-topology-derived invariants.
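To make the contraction idea concrete, the sketch below computes a few fully contracted quantities from the second and third central moments of a point cloud. The specific contractions chosen, and the helper names `random_rotation` and `invariants`, are illustrative assumptions rather than the paper's list of invariants.

```python
import numpy as np

def random_rotation(d, rng):
    """Random element of SO(d) from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.normal(size=(d, d)))
    q = q * np.sign(np.diag(r))          # fix column signs
    if np.linalg.det(q) < 0:
        q[:, 0] = -q[:, 0]               # force determinant +1
    return q

def invariants(points):
    """A few full contractions of the order-2 and order-3 central moments."""
    c = points - points.mean(axis=0)
    n = len(c)
    p2 = np.einsum('na,nb->ab', c, c) / n
    p3 = np.einsum('na,nb,nc->abc', c, c, c) / n
    v = np.einsum('aab->b', p3)          # partial trace of p3: a vector
    return np.array([
        np.trace(p2),                    # Tr(p)
        np.trace(p2 @ p2),               # Tr(p^2)
        np.trace(p2 @ p2 @ p2),          # Tr(p^3)
        np.einsum('abc,abc->', p3, p3),  # p_abc p_abc
        v @ v,                           # p_aab p_ccb
        v @ p2 @ v,                      # p_aab p_bd p_ccd
    ])

rng = np.random.default_rng(0)
cloud = rng.exponential(size=(500, 3)) * np.array([3.0, 1.0, 0.5])
rotated = cloud @ random_rotation(3, rng).T

print(invariants(cloud))
print(invariants(rotated))   # should agree up to floating-point error
```

Each einsum string is the index pattern of one diagram: every letter appears exactly twice, so no free index remains to be rotated.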

Figure 3: Diagrammatic representation of rotation invariants for homogeneous polynomials of varying degree, encoding summation patterns over tensor indices that remain unchanged under SO(d).

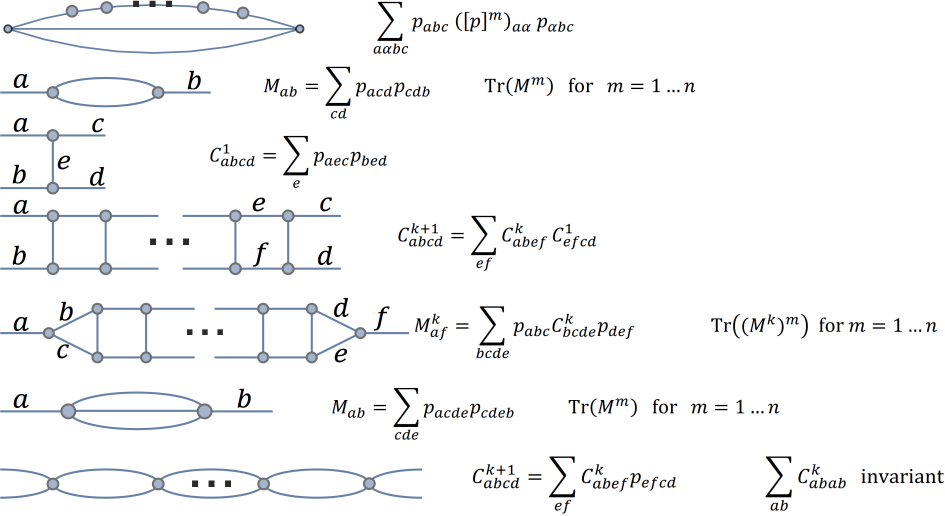

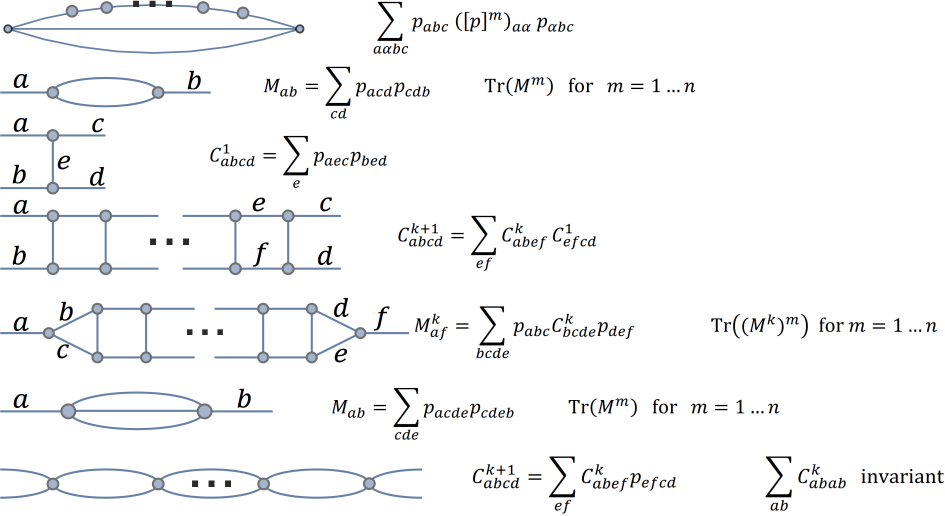

Further, systematic procedures for generating large numbers of invariants, such as forming higher-dimensional matrices from tensor products and computing traces of their powers, ensure redundancy and stability even in the presence of deformations.

Figure 4: Strategies for automatically generating rotation invariants from high-order tensors via matrix construction and trace invariants, potentially covering the $d(d-1)/2$ dimensions of SO(d).
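One possible reading of the matrix-construction strategy is sketched below: unfold a tensor into a d × d^(r−1) matrix M, form the d × d Gram matrix M Mᵀ (which transforms as O·(M Mᵀ)·Oᵀ under a rotation O), and take traces of its powers. This particular construction and the function names are assumptions for illustration; the paper's constructions may differ.

```python
import numpy as np

def unfolded_gram(tensor):
    """Contract all but the first index of a tensor with itself: a d x d matrix."""
    d = tensor.shape[0]
    m = tensor.reshape(d, -1)
    return m @ m.T

def trace_invariants(tensor, max_power=3):
    """Traces of powers of the unfolded Gram matrix."""
    a = unfolded_gram(tensor)
    return np.array([np.trace(np.linalg.matrix_power(a, k))
                     for k in range(1, max_power + 1)])

def rotate_tensor(tensor, rotation):
    """Apply the same orthogonal matrix to every index of the tensor."""
    for axis in range(tensor.ndim):
        contracted = np.tensordot(rotation, tensor, axes=(1, axis))
        tensor = np.moveaxis(contracted, 0, axis)   # restore the index order
    return tensor

rng = np.random.default_rng(0)
t = rng.normal(size=(3, 3, 3))                      # arbitrary order-3 tensor
q, _ = np.linalg.qr(rng.normal(size=(3, 3)))        # random orthogonal matrix

print(trace_invariants(t))
print(trace_invariants(rotate_tensor(t, q)))        # should agree up to rounding
```

Raising `max_power` or unfolding along different index groupings produces more invariants mechanically, which is how a redundant set can be generated without enumerating diagrams by hand.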

Constructing a sufficient set of independent invariants for higher-order tensors remains a hard problem, comparable to graph isomorphism. Even so, it is feasible to build highly redundant, distortion-resistant descriptors suitable for practical applications.

Efficient Shape Similarity Metrics and Modulo-Rotation Comparison

The framework enables defining shape similarity metrics by forming feature vectors from rotation-invariant quantities, such as generalized means of eigenvalues from various order tensors, then computing the distance in the invariant feature space. This avoids computationally intensive optimization over rotation groups:

$$\min_{O \in SO(d)} \| p - q \circ O \|$$

Instead, matching of invariants suffices to cluster and compare shapes modulo rotation—a critical benefit for large-scale data scenarios, e.g., molecular database searches or fast image retrieval.
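A minimal sketch of such a comparison, assuming the feature vector consists of power means (one kind of generalized mean) of the eigenvalues of the covariance matrix and of a Gram matrix built from the third central moment. The choice of features, the unweighted Euclidean distance, and the names `descriptor` and `shape_distance` are hypothetical.

```python
import numpy as np

def power_means(eigenvalues, powers=(1, 2, 3)):
    """Generalized (power) means of non-negative eigenvalues."""
    lam = np.clip(eigenvalues, 0.0, None)    # guard against tiny negative rounding
    return np.array([np.mean(lam ** k) ** (1.0 / k) for k in powers])

def descriptor(points):
    """Rotation- and translation-invariant feature vector of an (n, d) point cloud."""
    c = points - points.mean(axis=0)
    n, d = c.shape
    p2 = c.T @ c / n
    p3 = np.einsum('na,nb,nc->abc', c, c, c) / n
    m = p3.reshape(d, -1)
    return np.concatenate([
        power_means(np.linalg.eigvalsh(p2)),
        power_means(np.linalg.eigvalsh(m @ m.T)),
    ])

def shape_distance(points_a, points_b):
    """Distance in invariant feature space; no search over rotations is needed."""
    return np.linalg.norm(descriptor(points_a) - descriptor(points_b))

rng = np.random.default_rng(0)
shape = rng.exponential(size=(400, 3)) * np.array([2.0, 1.0, 0.5])
q, _ = np.linalg.qr(rng.normal(size=(3, 3)))         # random orthogonal matrix
moved = shape @ q.T + np.array([5.0, -2.0, 1.0])     # same shape, new pose
other = rng.normal(size=(400, 3))                    # a different shape

print(shape_distance(shape, moved))
print(shape_distance(shape, other))
```

Each descriptor is computed once per shape, so a database query reduces to nearest-neighbor search over fixed-length vectors. In practice the features would likely need rescaling or weighting, since they have different units and magnitudes.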

The approach also accommodates scale invariance by normalizing coordinates, which is relevant for semantic recognition tasks where an object's scale affects how it is interpreted.
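One simple way to normalize coordinates, shown here as an assumption rather than the paper's prescription, is to center the points and divide by their root-mean-square distance from the centroid. The function name `normalize_scale` is hypothetical.

```python
import numpy as np

def normalize_scale(points):
    """Center an (n, d) point cloud and rescale it to unit RMS radius."""
    centered = points - points.mean(axis=0)
    rms_radius = np.sqrt((centered ** 2).sum(axis=1).mean())
    return centered / rms_radius

rng = np.random.default_rng(0)
cloud = rng.normal(size=(200, 3)) * np.array([2.0, 1.0, 0.5])
small = normalize_scale(cloud)
large = normalize_scale(3.7 * cloud + 2.0)   # scaled and shifted copy

print(np.abs(small - large).max())           # should be at rounding level
```

After this step the trace of the covariance matrix equals 1, so any invariants computed afterwards describe proportions of the shape rather than its absolute size.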

Inclusion of Shape Variability

Dynamic shapes, such as those arising in molecular dynamics or object tracking, can be encoded as distributions over invariant features. This supports statistical comparisons, e.g., between the conformational ensembles of molecules and protein binding sites, through the estimation of density functions in feature space.
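As a rough sketch of comparing ensembles, each frame of a trajectory can be mapped to an invariant feature vector, and the two resulting samples compared with a two-sample statistic. The energy distance used here stands in for the density estimation mentioned above and is a swapped-in technique, not the paper's method; the per-frame descriptor (covariance eigenvalues) and all names are hypothetical.

```python
import numpy as np

def frame_descriptor(points):
    """Rotation-invariant features of one frame: covariance eigenvalues."""
    c = points - points.mean(axis=0)
    return np.linalg.eigvalsh(c.T @ c / len(c))

def ensemble_features(frames):
    """Stack per-frame descriptors into an (n_frames, n_features) sample."""
    return np.array([frame_descriptor(f) for f in frames])

def energy_distance(x, y):
    """Energy distance between two samples of feature vectors (V-statistic form)."""
    def mean_dist(a, b):
        return np.linalg.norm(a[:, None, :] - b[None, :, :], axis=-1).mean()
    return 2 * mean_dist(x, y) - mean_dist(x, x) - mean_dist(y, y)

rng = np.random.default_rng(0)
base = rng.normal(size=(50, 3)) * np.array([2.0, 1.0, 0.5])

# Ensemble A: small random deformations of the base shape.
frames_a = [base + 0.1 * rng.normal(size=base.shape) for _ in range(20)]
# Ensemble B: the same frames, each in an arbitrary orientation.
frames_b = [f @ np.linalg.qr(rng.normal(size=(3, 3)))[0].T for f in frames_a]
# Ensemble C: a uniformly enlarged variant of the shape.
frames_c = [1.5 * f for f in frames_a]

fa, fb, fc = map(ensemble_features, (frames_a, frames_b, frames_c))
print(energy_distance(fa, fb))   # orientation does not matter
print(energy_distance(fa, fc))   # a genuinely different ensemble
```

Because every frame is reduced to invariants first, no alignment between frames or between ensembles is required before the statistical comparison.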

Implications and Future Directions

The proposed higher-order PCA-like invariant feature framework offers a continuum between coarse, global descriptors (ellipsoid/PCA) and arbitrarily detailed, decodable shape encodings. The rotation invariants derived from polynomial and tensor representations support robust, efficient shape comparison and retrieval, with immediate transferability to chemoinformatics, biometric recognition, and object matching workflows. Challenges remain regarding the exhaustive characterization of independent invariants for high-order tensors, selection and weighting of features for application-specific metrics, and the extension to dynamic and noisy datasets.

Further theoretical inquiry into the completeness of invariant sets and their connection with combinatorial problems (such as graph isomorphism) is warranted. Tuning and evaluating the method on benchmark tasks in molecular shape analysis and image recognition would help establish its utility and generality.

Conclusion

In summary, the extension of PCA-based rotation-invariant feature extraction to higher-order central moments and polynomial representations, augmented by Gaussian modulation, offers a mathematically rigorous, flexible, and decodable system for constructing detailed shape descriptors modulo rotation. This facilitates efficient similarity computation and robust recognition, and lays the foundation for future exploration of comprehensive invariant systems, with broad applicability in scientific and engineering disciplines.