Challenges and Research Directions for Large Language Model Inference Hardware

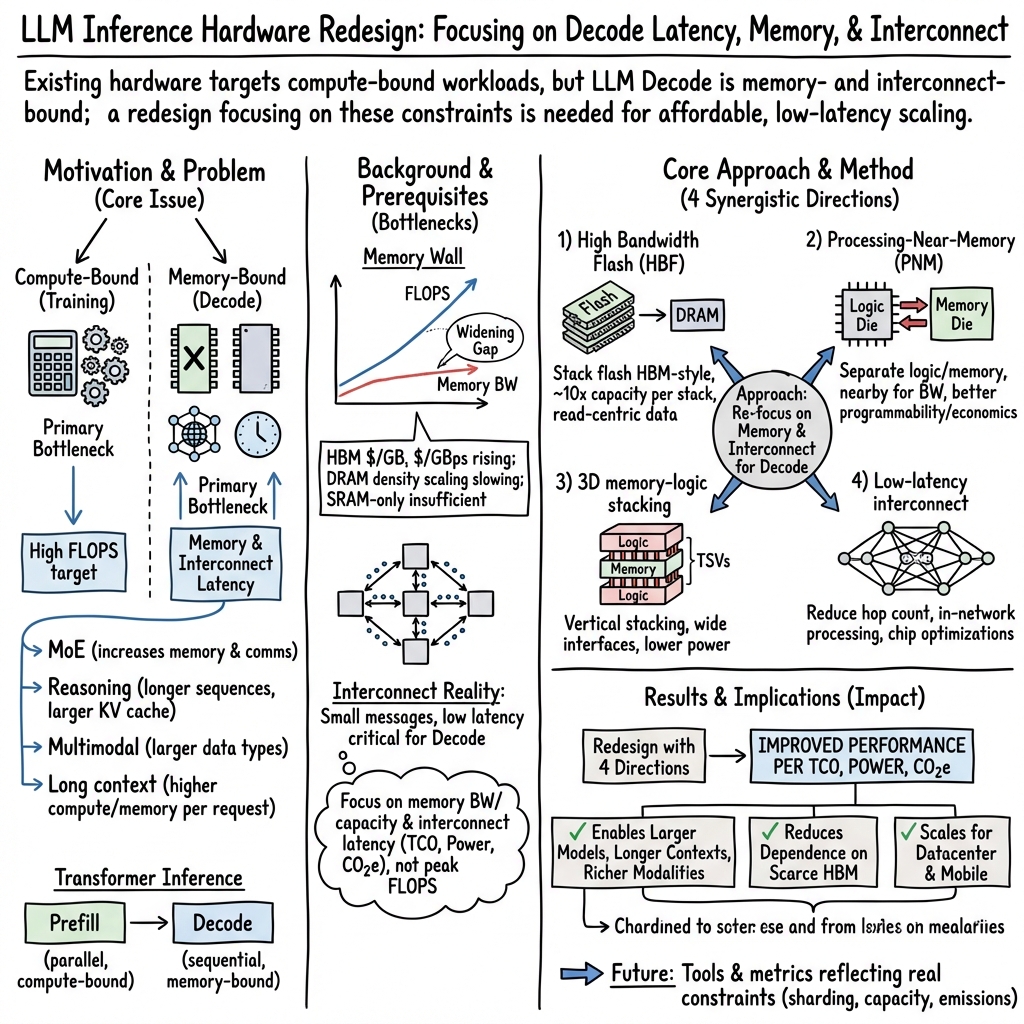

Abstract: LLM inference is hard. The autoregressive Decode phase of the underlying Transformer model makes LLM inference fundamentally different from training. The primary challenges, exacerbated by recent AI trends, are memory and interconnect rather than compute. To address them, we highlight four architecture research opportunities: High Bandwidth Flash for 10X memory capacity with HBM-like bandwidth; Processing-Near-Memory and 3D memory-logic stacking for high memory bandwidth; and low-latency interconnect to speed up communication. While our focus is datacenter AI, we also review their applicability for mobile devices.

Explain it Like I'm 14

Overview: What this paper is about

This paper explains why running LLMs for users is getting hard and expensive, and suggests better ways to build computer hardware to make it faster and cheaper. The authors focus on the “inference” part of AI (when the model answers your question), not the “training” part (when the model learns). They argue that for modern LLMs, the biggest problems to solve are memory and communication between chips, not raw computing power.

Key questions in simple terms

The paper asks a few clear questions:

- Why does serving answers from big AI models feel slow and cost so much?

- Which parts of today’s computer chips and networks are holding LLMs back?

- What new hardware ideas could make LLMs respond quickly while using less power and money?

- How do trends like reasoning, long context, multimodal data (text + images + audio), and retrieval make the problem harder?

- What should researchers focus on to help both datacenters and mobile devices?

How the authors approached the problem

Instead of running experiments, the authors analyze how LLMs work and how current hardware is built. They break down LLM inference into two phases and use everyday analogies:

- Prefill phase: The model reads your whole prompt at once. Think of this like scanning an entire page so you can understand it. This part is “parallel” and mostly limited by compute.

- Decode phase: The model writes its answer one token (small piece of text) at a time, looking back at what it already wrote and at your prompt via a “KV cache.” This is like writing an essay word-by-word while constantly checking your notes. This part is “sequential” and mostly limited by memory and communication speed.
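The difference between the two phases can be put into rough numbers. The sketch below is a back-of-the-envelope model, assuming a dense Transformer whose weights are all read from memory once per Decode step and a standard KV cache that stores one key and one value vector per layer, KV head, and token. The `ModelShape` fields and all numbers are hypothetical illustrations, not figures from the paper.

```python
from dataclasses import dataclass


@dataclass
class ModelShape:
    # Hypothetical dense-model shape, chosen for illustration only.
    n_params: float       # total number of weights
    n_layers: int
    n_kv_heads: int       # key/value heads per layer
    head_dim: int
    bytes_per_value: int  # e.g. 2 for 16-bit weights and KV entries


def kv_cache_bytes(m: ModelShape, context_tokens: int, batch: int) -> int:
    """Keys plus values (factor 2) for every layer, KV head, and cached token."""
    return 2 * m.n_layers * m.n_kv_heads * m.head_dim * m.bytes_per_value * context_tokens * batch


def decode_step_floor_s(m: ModelShape, context_tokens: int, batch: int, mem_bw_bytes_s: float) -> float:
    """Lower bound on one Decode step: all weights and all cached K/V entries are
    read from memory once. Compute and communication time are ignored."""
    weight_bytes = m.n_params * m.bytes_per_value
    return (weight_bytes + kv_cache_bytes(m, context_tokens, batch)) / mem_bw_bytes_s


def weight_arithmetic_intensity(tokens_per_weight_read: int, bytes_per_value: int) -> float:
    """FLOPs per byte of weights: about 2 FLOPs (multiply + add) per weight per token.
    Prefill reuses each weight for prompt_len * batch tokens; Decode only for batch tokens."""
    return 2 * tokens_per_weight_read / bytes_per_value


if __name__ == "__main__":
    model = ModelShape(n_params=70e9, n_layers=80, n_kv_heads=8, head_dim=128, bytes_per_value=2)
    bw = 3.0e12  # hypothetical 3 TB/s memory bandwidth
    print(f"KV cache, 32k tokens: {kv_cache_bytes(model, 32_000, 1) / 1e9:.1f} GB")
    print(f"Decode floor: {decode_step_floor_s(model, 32_000, 1, bw) * 1e3:.1f} ms/token")
    print(f"Prefill intensity (2048-token prompt): {weight_arithmetic_intensity(2048, 2):.0f} FLOP/B")
    print(f"Decode intensity (batch 1): {weight_arithmetic_intensity(1, 2):.0f} FLOP/B")
```

Because Prefill reuses each weight for every prompt token while Decode reuses it only across the batch, Decode's arithmetic intensity is lower by roughly the prompt length, and its step time is set by bytes moved rather than by FLOPs.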

They then compare:

- Today’s chips (GPUs/TPUs) that are great for training but not ideal for the decode phase of inference.

- Different kinds of memory:

  - Bandwidth (how wide the road is for data)

  - Capacity (how big the parking lot is for data)

  - Latency (how long it takes to start moving data)

- Network designs inside datacenters (how quickly small messages move between many chips).

- Two ways to place compute near memory:

  - PIM (Processing-in-Memory): putting small processors directly inside memory chips.

  - PNM (Processing-Near-Memory): putting compute very close to memory, but on separate chips.

- 3D stacking: building chips in layers (like a stack of apartments) to get fast, dense connections.

- Practical metrics beyond speed, like total cost, power, and carbon impact.
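Why small messages care more about hops than about bandwidth can be seen with a simple latency model. This is a sketch assuming a cut-through network in which each hop adds a fixed delay and the payload is serialized once at the link rate; queuing, congestion, and software overhead are ignored, and the per-hop latency and link bandwidth below are hypothetical.

```python
def message_time_s(size_bytes: float, hops: int, per_hop_latency_s: float, link_bw_bytes_s: float) -> float:
    """Cut-through model: a fixed delay per hop plus one serialization of the
    payload at the link rate. Queuing, congestion, and software costs are ignored."""
    return hops * per_hop_latency_s + size_bytes / link_bw_bytes_s


def latency_fraction(size_bytes: float, hops: int, per_hop_latency_s: float, link_bw_bytes_s: float) -> float:
    """Share of the total message time spent on per-hop latency rather than bandwidth."""
    total = message_time_s(size_bytes, hops, per_hop_latency_s, link_bw_bytes_s)
    return hops * per_hop_latency_s / total


if __name__ == "__main__":
    hop_latency = 1e-6  # hypothetical 1 us per hop
    link_bw = 100e9     # hypothetical 100 GB/s per link
    for size in (4 * 1024, 1024 ** 2, 1024 ** 3):
        t = message_time_s(size, 5, hop_latency, link_bw)
        frac = latency_fraction(size, 5, hop_latency, link_bw)
        print(f"{size:>13,d} B over 5 hops: {t * 1e6:12.2f} us, {frac:6.1%} from hop latency")
```

For kilobyte-sized messages almost all of the time is per-hop latency, so removing hops helps far more than adding link bandwidth; for gigabyte transfers the opposite holds.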

Main findings and why they matter

Here are the paper’s most important points, explained simply:

- The decode phase is the bottleneck. Training may show off huge compute numbers, but serving answers is often slowed by memory and network latency. Decode needs fast access to a lot of stored information and must send many small messages across chips, which current systems don’t handle well.

- New LLM trends make decode harder:

  - Mixture of Experts (MoE): The model has many specialized “experts” inside, and each token uses only a few of them. It improves accuracy but increases the memory footprint and chip-to-chip communication.

  - Reasoning models: They “think” step-by-step, creating long chains of internal tokens, which adds time and memory pressure.

  - Multimodal: Handling images, audio, and video alongside text means bigger data and more bandwidth.

  - Long context and RAG: Looking at longer prompts and pulling in extra facts from a database improves answers but increases memory use and latency.

  - Diffusion (for images): This works differently from text decode and mainly adds compute, not memory or network stress.

- Current GPUs/TPUs aren’t built for this. They emphasize compute and memory bandwidth, not low-latency communication for many small messages, and they rely heavily on expensive high-bandwidth memory (HBM), whose cost and capacity aren’t scaling as fast as needed.

- Four hardware directions could help a lot:

  1) High Bandwidth Flash (HBF): Stack flash dies the way HBM stacks DRAM dies, aiming for HBM-like bandwidth with around 10x more memory capacity per node. Great for storing big, mostly read-only data (like model weights). Flash is slower for tiny reads and wears out with frequent writes, so it must be paired with DRAM/HBM.

  2) Processing-Near-Memory (PNM): Keep compute close to memory but on separate dies. This avoids cramming logic into memory chips and lets software work with large chunks of data instead of complex “sharding” into many tiny memory banks. PNM is more practical for datacenters than PIM; PIM might still be useful on mobile devices, where energy budgets and models are smaller.

  3) 3D memory-logic stacking: Layer memory and compute vertically with dense connections to boost bandwidth and reduce power. Two flavors: “compute on the HBM base die” and fully custom stacks. Challenges include heat (cooling stacked chips) and agreeing on standard interfaces.

  4) Low-latency interconnect: Redesign networks for fewer hops (like choosing roads with fewer stoplights), handle small messages faster, and add “processing-in-network” so switches help with collective operations (like all-reduce). Also, place compute close to network interfaces and use reliability tricks that avoid waiting for stragglers.

- Use smarter metrics. Don’t just count FLOPS. Measure practical performance per dollar, per watt, and per unit of carbon emissions (CO2e). Datacenters are limited by power, space, and sustainability.

- A good simulator would accelerate research. A roofline-like tool that models memory capacity, bandwidth, sharding, and latency (not just compute) could guide better designs.
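A minimal sketch of the kind of roofline-like model described here, extended with memory capacity and a per-step communication cost. It assumes even sharding across identical chips, perfect overlap of compute and memory traffic (the classic roofline `max`), and a fixed, non-overlapped communication latency per Decode step whenever more than one chip is used. `Chip`, `decode_step_time_s`, and all numbers are hypothetical, not the paper's simulator.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Chip:
    # Hypothetical accelerator parameters.
    peak_flops: float    # FLOP/s
    mem_bw: float        # bytes/s
    mem_capacity: float  # bytes


def roofline_flops(chip: Chip, intensity: float) -> float:
    """Classic roofline: attainable FLOP/s for a kernel with the given FLOP/byte."""
    return min(chip.peak_flops, intensity * chip.mem_bw)


def chips_needed(chip: Chip, footprint_bytes: float) -> int:
    """Capacity constraint: how many chips are needed just to hold the data."""
    return math.ceil(footprint_bytes / chip.mem_capacity)


def decode_step_time_s(chip: Chip, footprint_bytes: float, flops_per_step: float,
                       comm_latency_s: float, read_fraction: float = 1.0) -> Tuple[int, float]:
    """Estimate one Decode step on the minimum number of chips.

    read_fraction is the share of the footprint read per step (1.0 for a dense
    model, less for MoE, where only the routed experts are read); reads and FLOPs
    are assumed to be spread evenly over the chips."""
    n = chips_needed(chip, footprint_bytes)
    memory_t = footprint_bytes * read_fraction / n / chip.mem_bw
    compute_t = flops_per_step / (n * chip.peak_flops)
    comm_t = comm_latency_s if n > 1 else 0.0  # assumed not overlapped with compute
    return n, max(memory_t, compute_t) + comm_t


if __name__ == "__main__":
    chip = Chip(peak_flops=1e15, mem_bw=3e12, mem_capacity=80e9)
    n, t = decode_step_time_s(chip, footprint_bytes=400e9, flops_per_step=4e11, comm_latency_s=20e-6)
    print(f"{n} chips, {t * 1e3:.2f} ms per Decode step")
```

Even this toy version shows the coupling the paper points to: a model that does not fit in one chip's memory forces sharding, and every Decode step then pays a communication latency on top of the memory time.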

Why this matters: If we don’t fix memory and network issues, serving advanced LLMs will stay too slow and too expensive. These directions could make AI more affordable, faster, and greener.

Implications and potential impact

- For datacenters: Prioritize memory capacity, bandwidth-per-watt, and low-latency networks over chasing maximum compute. Combining HBF, PNM, 3D stacking, and better interconnects could shrink system size, cut costs, reduce power, and speed up responses.

- For mobile devices: Smaller models and tighter energy budgets may make PIM and tailored flash solutions more practical on phones and laptops.

- For researchers and industry: Focus on memory and network co-design, not just bigger compute. Build simulators that reflect real inference bottlenecks. Adopt metrics that include total cost, power, and carbon footprint.

- For users: Faster time-to-first-token, quicker full completion, and cheaper access to high-quality AI, including better reasoning and longer contexts.

In short, the paper says: Serving LLMs is mainly a memory and communication problem. If hardware teams aim at those targets—using high-capacity flash stacks, near-memory compute, 3D stacking, and low-latency networks—we can deliver quicker, more affordable, and more sustainable AI.

Knowledge gaps, limitations, and open questions

Below is a single, concrete list of what the paper leaves unaddressed, uncertain, or unexplored, framed so that future researchers can act on it.

- Lack of validated, open performance/power/TCO simulators for LLM Decode: no roofline-style, memory/latency-centric model with sharding-aware scheduling, network latency, KV-cache behavior, and modern metrics (TCO, CO2e) integrated and calibrated against real systems.

- No quantitative workload characterization for inference: missing distributions of input/output lengths, batch sizes, MoE routing fan-out, message sizes/hop counts, multimodal data mixes, and temporal locality that would drive memory hierarchies and interconnect design.

- Absence of empirical evaluation or prototypes for the proposed hardware directions (HBF, PNM, 3D stacking, low-latency interconnect): no measurements for bandwidth-per-watt, read latency profiles, reliability/yield, cost, or thermal behavior across realistic LLM inference workloads.

- Unspecified data placement and management policies for HBF: how to partition weights, slow-changing context, and hot KV-cache data across HBM/DRAM/HBF; when to replicate vs shard; and how to pin, prefetch, and evict to hide flash page-level latencies.

- How to mitigate HBF’s page-based, high-latency reads: concrete controller designs (read-ahead, sub-page access, caching tiers), attention-aware prefetching, and software APIs to align access granularity without degrading effective bandwidth.

- Strategies to manage HBF write endurance under realistic inference operations: quantifying write patterns from KV updates and context changes; endurance-aware placement; wear-leveling policies tuned for weights/context; and failure prediction/monitoring.

- Optimal DRAM/HBM/HBF capacity and bandwidth ratios per node for different model classes (MoE vs dense, long-context vs short, multimodal vs text-only), including sensitivity to cost, power, and latency targets.

- Integration of HBF with RAG corpora at datacenter scale: indexing, consistency/refresh cycles, security/isolation, and query pipelines that exploit flash density without violating latency SLAs.

- Mobile-device feasibility of HBF-like concepts: packaging constraints, LPDDR interfaces, energy/thermal budgets, intermittent connectivity, and on-device RAG memory sizes and refresh policies.

- Clear programming model and runtime support for PNM: kernel partitioning, memory-affinity APIs, compiler analysis for near-memory placement, synchronization primitives, and autotuning of shard sizes without bank-level constraints.

- Quantitative comparison of PIM vs PNM under LLM inference kernels: actual achievable compute in DRAM processes, end-to-end bandwidth-per-watt gains vs logic PPA penalties, and software overheads for bank-level sharding.

- Consistency, coherence, and scheduling policies across multiple PNM stacks: how to orchestrate attention/KV accesses, MoE dispatch, and reductions while minimizing cross-stack traffic and tail latency.

- Standardization gaps for 3D memory-logic stacking interfaces: defining inter-die protocols (signals, timing, reliability, ECC), interoperability across vendors, and composability with existing HBM/CXL ecosystems.

- Thermal management strategies for 3D stacks in memory-bound decode: concrete heat extraction designs, safe operating points (voltage/frequency caps), and performance impacts of throttling under real workloads.

- Reliability and yield implications of TSV-heavy 3D stacking: failure modes, testability, binning strategies, redundancy at stack/chip level, and their effects on latency, throughput, and TCO/CO2e.

- Software adaptation to altered bandwidth-to-capacity and bandwidth-to-FLOPS ratios in 3D stacks: attention algorithm variants, operator fusion/scheduling, and memory tiling schemes tailored to ultra-wide, low-power interfaces.

- Efficient communication between multiple memory-logic stacks and the main accelerator: topology choices, flow control, collective primitives, and minimizing serialization points for autoregressive decode.

- Concrete latency-oriented network designs for inference: quantified hop-count vs bisection trade-offs for tree/dragonfly/high-D torus; routing, congestion control, and NIC features tuned to small packets and frequent collectives.

- In-network processing for LLM collectives beyond basic all-reduce: APIs, correctness guarantees for MoE dispatch/collect, security/isolation in switches, and compiler/runtime integration to offload collectives automatically.

- Acceptable-quality approximations for latency reduction: rigorous evaluation of “fake data” or prior-result timeouts on model output quality, user experience, and robustness across tasks (reasoning, code, multimodal).

- Latency-aware accelerator microarchitecture: quantified benefits of SRAM-first packet buffering, compute engines co-located with NICs, and scheduling to avoid DRAM round-trips; plus area/power trade-offs.

- QoS, multi-tenancy, and interference management for inference networks: admission control, isolation, and tail-latency mitigation when many small, latency-sensitive jobs share the fabric.

- Detailed TCO and CO2e accounting frameworks for the proposed architectures: embodied vs operational carbon, yield impacts, packaging-related emissions, and datacenter capacity constraints included in design-space exploration.

- Security and privacy implications of large on-node persistent context (HBF): encryption, access control, data retention/erasure policies, tenant isolation, and side-channel risk in near-memory compute contexts.

- End-to-end partitioning of Prefill vs Decode across heterogeneous hardware: concrete architectures that split stages across different nodes/chips, with scheduling, dataflow, and interconnect requirements validated for latency.

- Targeted hardware support for KV-cache: compression/quantization schemes, streaming attention hardware, hierarchical KV placement across SRAM/DRAM/HBM/HBF, and prefetch/eviction tuned to attention patterns.

- MoE-specific systems issues: expert placement and replication, gating load balance, cold-start penalties, expert caching strategies, and interconnect patterns that minimize dispatch/collect stalls.

- Long-context handling across heterogeneous memories: ring/sliding-window attention mapping, prefetch distance tuning, address translation costs, and virtualization of context across HBM/HBF with predictable latency.

- Pricing and supply-chain uncertainty: sensitivity analyses of HBM/DRAM/flash cost trends (including spikes), availability risks, and implications for architecture choices and capacity planning.

- Standard programming abstractions for low-latency interconnect and in-network compute: portable APIs across Ethernet/InfiniBand/NVLink, vendor-neutral collective semantics, and compiler/runtime support.

- Concrete methodologies to evaluate proposed topologies under failures and stragglers: resilience mechanisms (local spares, rapid failover), their latency impact, and recovery policies compatible with inference SLAs.
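As one illustration of the HBF data-placement question, here is a hypothetical greedy policy, not one proposed by the paper: objects rewritten often relative to how often they are read (such as the KV cache) stay in HBM to protect flash endurance, and read-mostly objects are ranked by reads per GB so the hottest fill the remaining HBM and the rest go to HBF. The `Item` fields, the write-to-read threshold, and the capacities are all assumptions.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Item:
    # Hypothetical description of one object that must live in node memory.
    name: str
    size_gb: float
    reads_per_s: float   # full-object reads per second
    writes_per_s: float  # full-object rewrites per second


def place(items: List[Item], hbm_gb: float, hbf_gb: float,
          max_write_to_read: float = 0.01) -> Dict[str, str]:
    """Hypothetical greedy HBM/HBF placement.

    Items rewritten more than `max_write_to_read` times per read are kept in HBM
    to spare flash endurance; read-mostly items are sorted by read density
    (reads per GB) and the densest take the remaining HBM, the rest go to HBF."""
    free = {"HBM": hbm_gb, "HBF": hbf_gb}
    placement: Dict[str, str] = {}

    def put(item: Item, tiers: List[str]) -> None:
        for tier in tiers:
            if item.size_gb <= free[tier]:
                free[tier] -= item.size_gb
                placement[item.name] = tier
                return
        raise MemoryError(f"{item.name} ({item.size_gb} GB) does not fit in {tiers}")

    write_heavy = [i for i in items if i.writes_per_s > max_write_to_read * i.reads_per_s]
    read_mostly = [i for i in items if i.writes_per_s <= max_write_to_read * i.reads_per_s]
    for item in write_heavy:
        put(item, ["HBM"])
    for item in sorted(read_mostly, key=lambda i: i.reads_per_s / i.size_gb, reverse=True):
        put(item, ["HBM", "HBF"])
    return placement


if __name__ == "__main__":
    items = [
        Item("kv_cache", 40, reads_per_s=1.0, writes_per_s=1.0),
        Item("cold_weights", 400, reads_per_s=10.0, writes_per_s=0.0),
        Item("rag_corpus", 200, reads_per_s=0.5, writes_per_s=0.001),
        Item("hot_experts", 20, reads_per_s=10.0, writes_per_s=0.0),
    ]
    print(place(items, hbm_gb=80, hbf_gb=1000))
```

A realistic policy would also have to account for flash page granularity, prefetching, and replication versus sharding, which this sketch ignores.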

Practical Applications

Immediate Applications

Below are near-term, deployable applications that leverage the paper’s findings about memory- and latency-centric bottlenecks in LLM inference, using today’s components and practices.

- Industry (Datacenter AI): Latency-first network configurations for Decode

- Application: Reconfigure cluster topologies and placement policies to minimize hop count and small-message latency for Decode (e.g., tree/dragonfly-like overlays on existing fabrics, rack-local scheduling).

- Tools/Products/Workflows: Use InfiniBand/Ethernet in-network compute features (e.g., NVIDIA SHARP, programmable switches) to accelerate collectives (broadcast, all-reduce) and MoE routing.

- Assumptions/Dependencies: Workload has frequent, small messages during Decode; orchestration stack can place shards closer; switch/NIC features are available and supported by frameworks.

- Industry (Datacenter AI): Interconnect-aware inference scheduling and sharding

- Application: Modify inference schedulers to co-locate experts/shards to reduce inter-node traffic, preferring fewer, shorter hops over peak bandwidth.

- Tools/Products/Workflows: Topology-aware placement (Kubernetes/Slurm plugins), framework-level MoE router hints, decode-time microbatch tuning.

- Assumptions/Dependencies: Accurate network topology discovery; the inference framework exposes controls for mapping shards and experts to devices.

- Industry (Software/Platforms): Graceful degradation to cut tail latency

- Application: Implement timeout policies that use cached/fake data or prior results for straggling messages to bound time-to-first-token and time-to-completion.

- Tools/Products/Workflows: Inference middleware that supports partial/fallback responses, per-hop timeouts, and speculative execution.

- Assumptions/Dependencies: Product owners accept small quality trade-offs for latency; quality guardrails and monitoring are in place.

- Industry (Datacenter AI): Memory tiering for KV cache and context

- Application: Tier KV cache and slow-changing context across HBM → DDR → NVMe to relieve HBM pressure in Decode; pin only hot KV blocks in HBM.

- Tools/Products/Workflows: Paged KV caches (e.g., vLLM-like paging) with prefetch at page granularity; DDR-heavy nodes to back long-context and RAG payloads.

- Assumptions/Dependencies: Access locality is sufficient for prefetch/paging; frameworks support heterogeneous memory pools.

- Industry (Semiconductors/Systems): Early PNM via CXL-attached near-memory compute

- Application: Attach compute-capable memory expansion (e.g., Marvell Structera-class) for filter/transform operations near DDR to reduce host-memory traffic during Prefill and Decode.

- Tools/Products/Workflows: CXL memory-expander + runtime operators offloaded to near-memory devices (e.g., layernorm, embedding lookups, dequant).

- Assumptions/Dependencies: Operator set matches device capabilities; software stack supports heterogeneous offload; power/thermal within rack budgets.

- Academia: LLM inference roofline simulators with cost/CO2e

- Application: Build open-source simulators that model Decode memory capacity/bandwidth, interconnect latency, sharding choices, and report performance/TCO/CO2e.

- Tools/Products/Workflows: Roofline models, traffic generators for MoE/long-context/RAG, standardized workload traces.

- Assumptions/Dependencies: Access to representative traces; consensus on evaluation metrics and reporting.

- Policy (Gov/Cloud Procurement): Metrics that prioritize latency, memory, and CO2e

- Application: Update RFPs and SLAs to include time-to-first-token, per-token latency, memory bandwidth per watt (GB/s per watt), and performance/CO2e, not only FLOPS.

- Tools/Products/Workflows: Procurement scorecards weighting latency-centric metrics; carbon accounting per inference.

- Assumptions/Dependencies: Providers can measure and attest to these metrics; auditors/regulators define acceptable methodologies.

- Daily Life (End-user Apps: healthcare, finance, education, software dev): Faster, more reliable assistants

- Application: Deploy latency-optimized inference backends for chat, coding, clinical summarization, and tutoring that reduce perceived lag and timeouts.

- Tools/Products/Workflows: Backend routing to latency-tuned clusters; UX that streams early tokens quickly and masks stragglers.

- Assumptions/Dependencies: App stacks support streaming; regulatory requirements (e.g., in healthcare/finance) allow partial/streaming outputs.
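The KV-cache tiering idea can be sketched as a paged cache with two tiers. This is a hypothetical, simplified sketch in the spirit of paged KV caches, not vLLM's actual implementation or API: fixed-size blocks of tokens live in a small fast tier (standing in for HBM), least-recently-used blocks are demoted to a larger slow tier (standing in for DDR or NVMe), and touching a demoted block promotes it back.

```python
from collections import OrderedDict
from typing import Any, Dict, Hashable, List, Tuple

BlockKey = Tuple[Hashable, int]  # (sequence id, block index)


class TieredKVCache:
    """Hypothetical two-tier paged KV cache (a sketch, not vLLM's implementation).

    Tokens are grouped into fixed-size blocks. The fast tier (standing in for HBM)
    holds at most `fast_blocks` blocks in LRU order; evicted blocks move to the
    slow tier (standing in for DDR/NVMe) and are promoted back when touched."""

    def __init__(self, block_tokens: int, fast_blocks: int):
        assert block_tokens >= 1 and fast_blocks >= 1
        self.block_tokens = block_tokens
        self.fast_blocks = fast_blocks
        self.fast: "OrderedDict[BlockKey, List[Any]]" = OrderedDict()
        self.slow: Dict[BlockKey, List[Any]] = {}
        self.seq_len: Dict[Hashable, int] = {}
        self.evictions = 0
        self.promotions = 0

    def append_token(self, seq_id: Hashable, kv: Any) -> None:
        """Store the KV entry of the next token of `seq_id` in its block."""
        pos = self.seq_len.get(seq_id, 0)
        self._touch((seq_id, pos // self.block_tokens), create=True).append(kv)
        self.seq_len[seq_id] = pos + 1

    def read_sequence(self, seq_id: Hashable) -> List[Any]:
        """Return every cached entry of `seq_id`, as one attention step would read them."""
        n_blocks = -(-self.seq_len.get(seq_id, 0) // self.block_tokens)  # ceiling division
        out: List[Any] = []
        for b in range(n_blocks):
            out.extend(self._touch((seq_id, b)))
        return out

    def _touch(self, key: BlockKey, create: bool = False) -> List[Any]:
        if key in self.fast:               # hit: mark as most recently used
            self.fast.move_to_end(key)
            return self.fast[key]
        if key in self.slow:               # miss: promote from the slow tier
            block = self.slow.pop(key)
            self.promotions += 1
        elif create:                       # first token of a new block
            block = []
        else:
            raise KeyError(key)
        while len(self.fast) >= self.fast_blocks:  # demote LRU blocks to make room
            old_key, old_block = self.fast.popitem(last=False)
            self.slow[old_key] = old_block
            self.evictions += 1
        self.fast[key] = block
        return block


if __name__ == "__main__":
    cache = TieredKVCache(block_tokens=4, fast_blocks=2)
    for t in range(10):                  # 10 tokens fill 3 blocks; the fast tier holds 2
        cache.append_token("seq-0", t)   # an int stands in for a real (key, value) pair
    assert cache.read_sequence("seq-0") == list(range(10))
    print(f"evictions={cache.evictions}, promotions={cache.promotions}")
```

In the usage example, a sequential scan over more blocks than the fast tier holds misses on every block, which is why tiering relies on access locality and prefetching rather than LRU replacement alone.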

Long-Term Applications

These require further research, scale-up, standardization, or new manufacturing capabilities aligned with the paper’s proposed directions (HBF, PNM/3D stacking, low-latency interconnect).

- Industry (Semiconductors/Cloud): High Bandwidth Flash (HBF) for 10× capacity per node

- Application: Stacked, HBM-like flash to host read-mostly weights and slow-changing context, shrinking system size and network footprint for MoE, long-context, and RAG.

- Tools/Products/Workflows: HBF-enabled accelerator cards; runtime page-prefetchers; compiler/runtime policies for flash page granularity.

- Assumptions/Dependencies: Manage flash endurance (read-mostly usage); amortize page-level latency via prefetch and batching; supply chain maturity.

- Industry (Search/Software/EdTech): Massive in-memory corpora on HBF

- Application: Serve web/code/papers corpora directly from HBF for RAG and retrieval, enabling larger, fresher knowledge bases with lower TCO.

- Tools/Products/Workflows: RAG pipelines co-designed for flash page sizes; background refresh pipelines respecting write budgets.

- Assumptions/Dependencies: Effective caching and prefetch; corpora update rates compatible with flash endurance.

- Industry (Semiconductors): 3D memory-logic stacking for Decode-optimized bandwidth/watt

- Application: Custom 3D stacks or compute-on-HBM-base-die accelerators that deliver higher bandwidth at lower power for memory-bound Decode.

- Tools/Products/Workflows: New accelerator SKUs tuned for low-clock, low-voltage operation; thermal solutions (e.g., direct liquid cooling).

- Assumptions/Dependencies: Thermal feasibility; interface standardization between logic and memory dies; yield and reliability at scale.

- Industry (AI Systems): Processing-Near-Memory (PNM) platforms for easier sharding

- Application: Separate logic + memory dies (stacked or side-by-side) to allow GB-scale shards with high bandwidth, simplifying LLM partitioning vs PIM.

- Tools/Products/Workflows: PNM-aware partitioners; operator libraries mapped to near-memory tiles.

- Assumptions/Dependencies: Software ecosystem adopts new partitioning models; bandwidth gains outweigh off-chip coupling costs.

- Mobile/Edge (Consumer Devices): PIM-based on-device LLMs with compute-enabled flash

- Application: Energy-efficient, read-mostly inference on models with tens of billions of parameters, using LPDDR plus compute-enabled flash for Decode.

- Tools/Products/Workflows: Mobile runtime schedulers that pin hot KV in LPDDR and stream weights from on-package flash; thermal-aware governors.

- Assumptions/Dependencies: Workloads fit mobile thermal/power envelopes; limited write patterns; handset OEM integration timelines.

- Industry (Networking): New interconnects optimized for small-message latency

- Application: High-connectivity topologies (dragonfly, high-D tori, trees) and NIC/switch ASICs that prioritize sub-μs latency over peak bandwidth for Decode traffic.

- Tools/Products/Workflows: Latency-first routing; QoS for Decode flows; NICs that place packets directly to on-chip SRAM and schedule compute near the NIC.

- Assumptions/Dependencies: Acceptance of lower peak bandwidth; co-design between frameworks and network stacks.

- Academia/Standards: Open interfaces for 3D memory-logic stacking

- Application: Define standardized die-to-die memory interfaces and reliability/thermal specs to enable multi-vendor ecosystems for stacked designs.

- Tools/Products/Workflows: Consortia specifications; compliance test suites; reference designs.

- Assumptions/Dependencies: Cross-industry collaboration; IP licensing and interoperability agreements.

- Policy: Incentives for memory-centric AI and supply diversification

- Application: Grants/tax credits for HBF/PNM/3D-stack R&D and domestic fabrication; standards for reporting performance/CO2e; resilience plans for HBM shortages.

- Tools/Products/Workflows: Public–private partnerships; procurement preferences for memory-efficient designs.

- Assumptions/Dependencies: Legislative support; measurable impact on energy/carbon intensity.

- Industry (Cloud Architecture): Memory disaggregation with pooled HBF/DDR/CXL

- Application: Rack-scale memory pools that expose tiers (HBM/HBF/DDR) to inference services, right-sizing capacity per model/version without overprovisioning.

- Tools/Products/Workflows: CXL memory fabrics; tier-aware allocators; autoscaling policies based on sequence length and MoE activation patterns.

- Assumptions/Dependencies: CXL ecosystem maturity; latency penalties acceptable for targeted tiers.

- Cross-sector (Healthcare, Finance, Robotics, Media): Larger, cheaper models in production

- Application: MoE and long-context deployments enabled by higher capacity per node (HBF/PNM/3D) and lower latency networks, improving reasoning and multimodal quality.

- Tools/Products/Workflows: Domain-tuned MoE experts resident in HBF; long-context EHR/transaction/history processing with lower TCO.

- Assumptions/Dependencies: Validation for safety/compliance; data governance for large corpora; thermal/power within facility limits.

- Academia/Benchmarks: Decode-centric evaluation suites

- Application: Community benchmarks that emphasize KV-cache pressure, small-message collectives, and latency metrics for autoregressive Decode.

- Tools/Products/Workflows: Open traces for MoE/long-context/RAG; scorecards with time-to-first-token and per-token latency distributions.

- Assumptions/Dependencies: Broad community adoption; alignment with industry needs.

- Daily Life (Consumer Apps): Longer, richer contexts with stable latency

- Application: User-facing assistants that handle larger histories (documents, chats, codebases) without degraded responsiveness, enabled by memory-centric hardware.

- Tools/Products/Workflows: Client settings that request longer contexts; server policies that allocate HBF-backed context windows.

- Assumptions/Dependencies: Backends upgraded with memory-centric tiers; pricing reflects reduced TCO.
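The hop-count side of the topology trade-off can be estimated from the textbook diameter of a k-ary n-dimensional torus: with wraparound links, the worst case is floor(k/2) hops in each of the n dimensions. The sketch compares tori of different dimensionality for a hypothetical 4,096-node system; dragonfly and tree topologies need their own formulas and are not modeled here.

```python
from typing import Optional


def torus_diameter(k: int, n: int) -> int:
    """Worst-case hop count of a k-ary n-dimensional torus with wraparound links:
    at most floor(k/2) hops in each of the n dimensions."""
    return n * (k // 2)


def torus_ports(k: int, n: int) -> int:
    """Network links per node: two neighbours per dimension (only one when k == 2)."""
    return n * (2 if k > 2 else 1)


def integer_root(nodes: int, n: int) -> Optional[int]:
    """Return k with k**n == nodes, or None if no such integer k >= 2 exists."""
    guess = round(nodes ** (1.0 / n))
    for k in (guess - 1, guess, guess + 1):
        if k >= 2 and k ** n == nodes:
            return k
    return None


if __name__ == "__main__":
    nodes = 4096  # hypothetical system size
    for n in (1, 2, 3, 4, 6, 12):
        k = integer_root(nodes, n)
        if k is None:
            continue
        print(f"{n:2d}-D torus, k={k:4d}: diameter {torus_diameter(k, n):4d} hops, "
              f"{torus_ports(k, n):2d} ports per node")
```

Higher dimensionality cuts the worst-case hop count sharply but needs more ports per node, which is the cost side of latency-first, high-connectivity topologies.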

Glossary

- 3D memory-logic stacking: Vertical integration of compute and memory dies to achieve much higher on-package bandwidth and lower power than 2D arrangements. "3D memory-logic stacking has two versions:"

- All-reduce: A communication collective that aggregates values (e.g., sums) across devices and distributes the result back to all. "broadcast, all-reduce, MoE dispatch and collect"

- Arithmetic intensity: The ratio of compute operations to memory traffic; low values indicate memory-bound workloads. "LLM Decode inference already has low arithmetic intensity."

- ASIC: An application-specific integrated circuit; a custom chip optimized for particular tasks. "connect several HBM stacks to a single monolithic accelerator ASIC (Figure 1 and Table 1)."

- Autoregressive: A generation method where each output token depends on previously generated tokens, forcing sequential decoding. "The autoregressive Decode makes inference inherently memory bound, with new software trends heightening this challenge."

- Bank parallelism: Parallel access across multiple independent memory banks to increase throughput. "Bank parallelism needs sharding workloads to banks (e.g., 32-64 MB)"

- Base die: The bottom die in a stacked memory package that interfaces logic and stacked memory dies. "Compute-on-HBM-base-die reuses HBM designs by inserting the compute logic into the HBM base die."

- CO2e: Carbon dioxide equivalent; a metric that standardizes the climate impact of different greenhouse gases. "carbon dioxide equivalent emissions (CO2e)"

- Compute-on-HBM-base-die: A 3D stacking approach placing compute logic on the HBM base die for bandwidth at lower power. "Compute-on-HBM-base-die reuses HBM designs by inserting the compute logic into the HBM base die."

- Context window: The span of input tokens an LLM can attend to when generating outputs. "A context window refers to the amount of information the LLM model can look at when generating an answer."

- CXL: Compute Express Link; a cache-coherent interconnect standard for attaching accelerators and memory. "leveraged the CXL interface"

- Decode: The sequential inference phase that generates one token at a time and is typically memory-bound. "Decode is inherently sequential, as each step generates one output token ("autoregressive"), making it memory bound."

- DIMM: Dual In-line Memory Module; a standardized memory module format used in servers/desktops. "integrated compute logic in the DIMM buffer chip."

- Diffusion: A generative technique that starts from noise and iteratively denoises to produce outputs, emphasizing compute over memory. "the novel diffusion method generates all tokens (e.g., an entire image) in one step and then iteratively denoises the image to reach desired quality."

- Disaggregated inference: Running different inference phases on separate servers to better utilize resources. "Disaggregated inference allows software optimizations like batching to make Decode be less memory bound."

- Dragonfly (topology): A high-radix, low-diameter network topology designed to reduce hop count (latency). "such as tree, dragonfly, and high-dimensional tori"

- Embodied CO2e: The lifecycle carbon emissions associated with manufacturing and deploying hardware. "Manufacturing yield and life cycle set embodied CO2e."

- FLOPS: Floating-point operations per second; a measure of compute throughput. "memory bandwidth improves more slowly than compute FLOPS."

- High Bandwidth Flash (HBF): A proposed flash-based stacked memory offering HBM-like bandwidth with much higher capacity. "High Bandwidth Flash (HBF) combines HBM bandwidth with flash capacity"

- High Bandwidth Memory (HBM): Stacked DRAM connected via wide interfaces to deliver very high bandwidth. "Current datacenter GPUs/TPUs rely on High Bandwidth Memory (HBM)"

- High-dimensional tori: Multi-dimensional torus interconnects that can reduce path lengths at some bandwidth cost. "such as tree, dragonfly, and high-dimensional tori"

- Hop count: The number of network links a message traverses; fewer hops generally mean lower latency. "by increasing the hop count."

- Interconnect latency: The time delay for data to travel across the network fabric between chips/nodes. "Interconnect latency outweighs bandwidth."

- KV Cache: The key-value cache that stores the attention keys and values of earlier tokens so that each Decode step reuses them instead of recomputing them. "The Key Value (KV) Cache connects the two phases"

- Long context: Extended input windows that allow models to reference far more tokens at once. "Long context. A context window refers to the amount of information the LLM model can look at when generating an answer."

- Memory Wall: The growing gap between processor speed and memory bandwidth/latency that limits performance. "AI processors face a Memory Wall."

- Mixture of Experts (MoE): A model architecture that routes tokens to a subset of specialized subnetworks (“experts”) to scale model capacity. "Mixture of Experts (MoE). Rather than a single dense feedforward block, MoE uses tens to hundreds of experts (256 for DeepSeekv3) invoked selectively."

- Multimodal: Models that process or generate across multiple data types (text, image, audio, video). "Multimodal. LLMs have evolved from text to image, audio, and video generation."

- NVLink: NVIDIA’s high-speed interconnect used in multi-GPU systems for low-latency, high-bandwidth communication. "NVIDIA NVLink and Infiniband switches that support in-switch reduction, and multicast acceleration through SHARP."

- Page-based reads: Flash access mode that retrieves data in page units with relatively high read latency. "Page-based reads with high latency."

- PIM (Processing-in-Memory): Embedding compute inside memory dies to minimize data movement and maximize bandwidth. "Processing-in-Memory (PIM), conceived by the 1990s [8], obtains high bandwidth by augmenting memory dies with small, low-power processors attached to memory banks."

- PNM (Processing-Near-Memory): Placing compute on separate but closely coupled logic dies near memory to gain bandwidth while easing software constraints. "Processing-Near-Memory (PNM) is a technique that places memory and logic nearby but still uses separate dies."

- Prefill: The parallel inference phase that processes the entire input sequence at once, similar to training. "Prefill is similar to training by processing all tokens of the input sequence simultaneously"

- Processing-in-network: Executing parts of communication collectives inside the network fabric to reduce latency and traffic. "Processing-in-network. Communication collectives used by LLMs (broadcast, all-reduce, MoE dispatch and collect) are well suited for in-network acceleration"

- Retrieval-Augmented Generation (RAG): Augmenting prompts with retrieved external knowledge to improve responses. "Retrieval-Augmented Generation (RAG). RAG accesses a user-specific knowledge database to obtain relevant information as extra context to improve LLM results, increasing resource demands."

- Reticle: The maximum photolithography field size; “full reticle” often denotes very large chips near lithography limits. "full reticle chips"

- Roofline-based performance simulator: A modeling tool grounded in the roofline model to estimate performance given compute and memory ceilings. "a roofline-based performance simulator could be useful"

- SHARP: An in-network aggregation capability (by Mellanox/NVIDIA) that accelerates collectives. "and multicast acceleration through SHARP."

- Sharding: Partitioning model parameters or data across devices/nodes to fit memory and parallelize work. "software sharding that implies frequent communication."

- Silicon interposer: A passive silicon layer providing dense wiring between dies in advanced packages. "Silicon Interposer"

- Standby spare: A pre-provisioned redundant node to take over immediately upon failure, reducing disruption. "A local standby spare reduces system failures"

- Time-to-completion: The latency until all output tokens have been generated. "Time-to-completion challenge."

- Time-to-first-token: The latency until the first output token appears. "Time-to-first-token challenge."

- TCO (Total Cost of Ownership): A holistic cost metric including purchase, power, cooling, space, and operational expenses. "Total Cost of Ownership (TCO)"

- TSV (Through-silicon via): Vertical electrical connections passing through silicon dies to enable dense 3D stacking. "through silicon vias (TSVs)"

- Wafer-scale integration: Building a compute system spanning (most of) a full wafer rather than cutting it into separate dies. "(Cerebras even used wafer scale integration.)"

- Write endurance: The finite number of program/erase cycles flash can tolerate before wearing out. "Limited write endurance."
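To connect the All-reduce and Hop count entries: the paper does not prescribe an all-reduce algorithm, but the common ring algorithm illustrates why latency matters. The sketch below simulates a ring all-reduce in-process (a reduce-scatter phase followed by an all-gather phase) on plain Python lists. Each of its 2(p-1) steps is a neighbour-to-neighbour message, so for the small messages typical of Decode the per-step latency, not bandwidth, dominates; this is one motivation for processing-in-network.

```python
from typing import List, Tuple


def ring_allreduce(vectors: List[List[float]]) -> Tuple[List[List[float]], int]:
    """Simulate ring all-reduce (sum) over p ranks; rank r holds vectors[r].

    Each vector is split into p equal chunks. In every step each rank sends one
    chunk to its right-hand neighbour, all sends happening simultaneously.
    Returns the per-rank results and the number of sequential steps."""
    p = len(vectors)
    n = len(vectors[0])
    if any(len(v) != n for v in vectors) or n % p != 0:
        raise ValueError("vectors must have equal length divisible by the rank count")
    size = n // p
    data = [list(v) for v in vectors]
    steps = 0

    def chunk(c: int) -> slice:
        return slice(c * size, (c + 1) * size)

    # Phase 1, reduce-scatter: at step s, rank r sends chunk (r - s) mod p and the
    # receiver adds it. Afterwards rank r owns the full sum of chunk (r + 1) mod p.
    for s in range(p - 1):
        sends = [(r, (r - s) % p, data[r][chunk((r - s) % p)]) for r in range(p)]
        for r, c, payload in sends:  # payloads were copied before any update
            dst = (r + 1) % p
            data[dst][chunk(c)] = [a + b for a, b in zip(data[dst][chunk(c)], payload)]
        steps += 1

    # Phase 2, all-gather: at step s, rank r forwards chunk (r + 1 - s) mod p and
    # the receiver overwrites its copy with the fully reduced values.
    for s in range(p - 1):
        sends = [(r, (r + 1 - s) % p, data[r][chunk((r + 1 - s) % p)]) for r in range(p)]
        for r, c, payload in sends:
            data[(r + 1) % p][chunk(c)] = payload
        steps += 1

    return data, steps


if __name__ == "__main__":
    result, steps = ring_allreduce([[1, 2, 3], [10, 20, 30], [100, 200, 300]])
    print(result, steps)
```

With p ranks the ring needs 2(p-1) sequential message steps, so the latency term grows linearly with the number of chips even as each message shrinks.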
