- The paper introduces a unified Bayesian optimization framework that reduces experimental iterations by 40–60% in materials discovery.

- It employs a modular architecture integrating multiple surrogate models and acquisition functions to handle both single- and multi-objective tasks.

- Empirical benchmarks on TPMS structures, HEAs, and high-strength steels demonstrate Bgolearn’s superiority over traditional search techniques.

Bgolearn: Unified Bayesian Optimization for Accelerated Materials Discovery

Overview

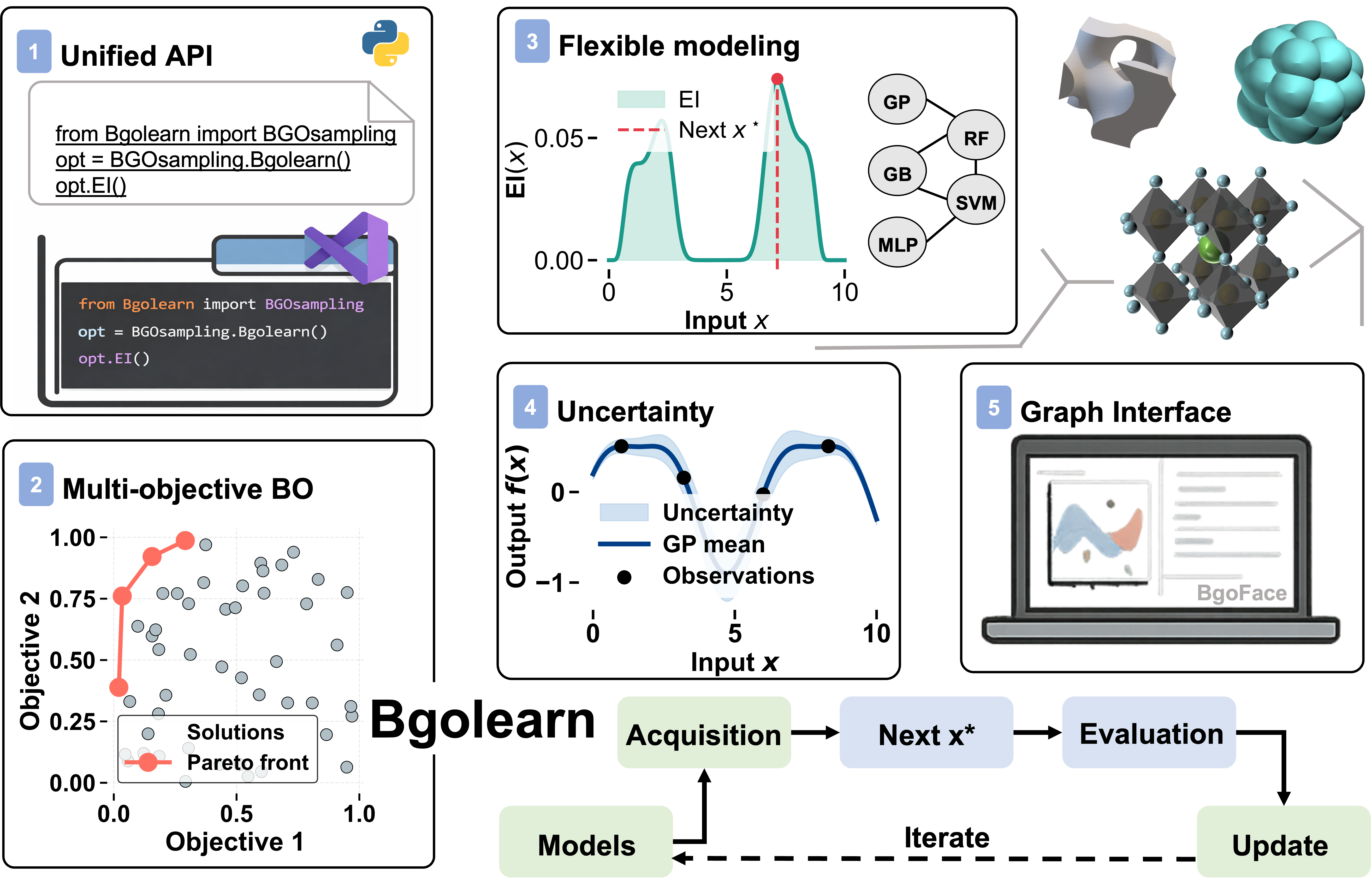

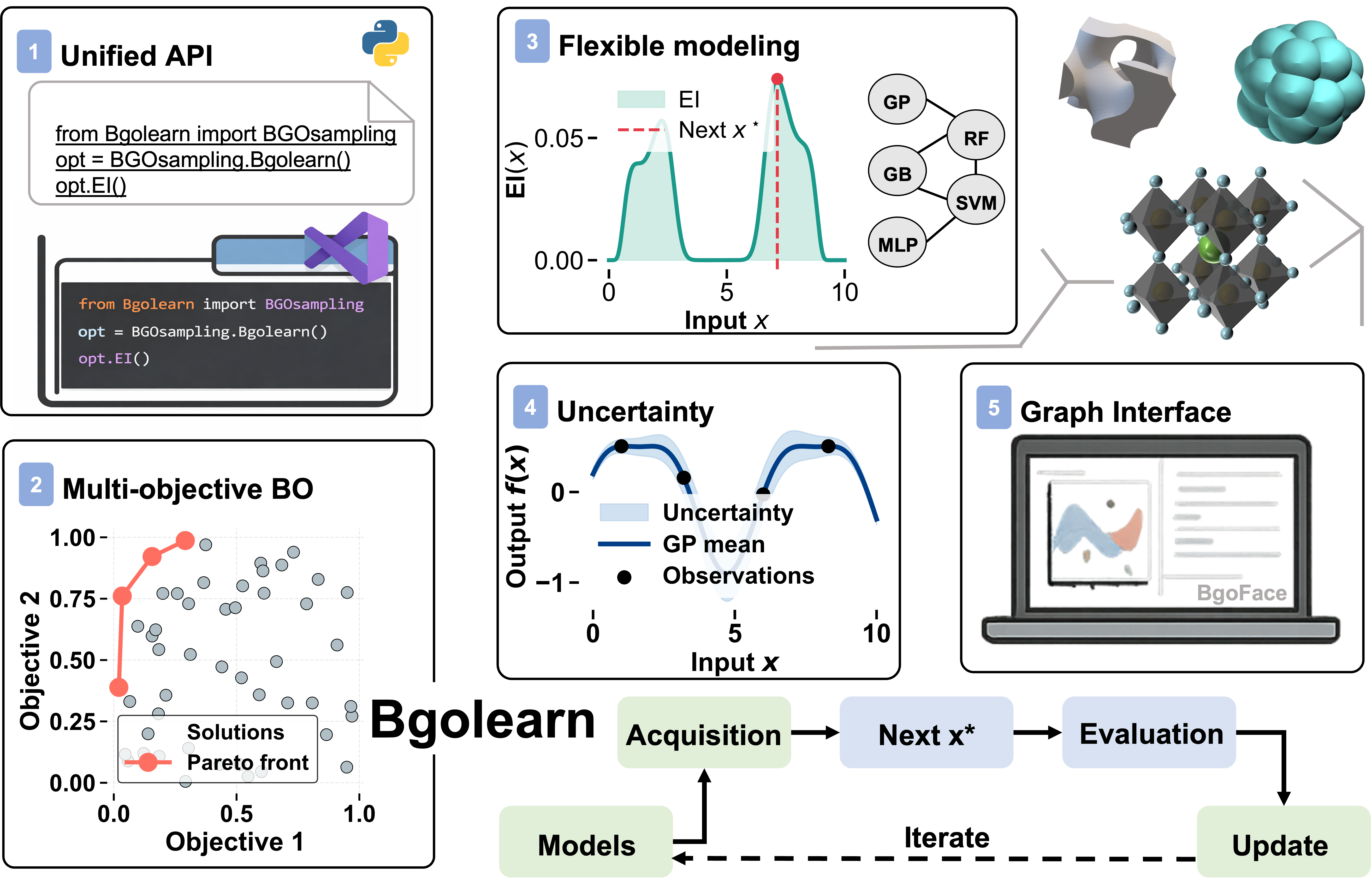

"Bgolearn: a Unified Bayesian Optimization Framework for Accelerating Materials Discovery" (2601.06820) presents a full-featured Python toolkit for efficiently exploring high-dimensional, multi-objective design spaces in materials science with Bayesian optimization (BO). The framework is designed to substantially lower the technical barrier to entry for materials researchers by pairing rigorous algorithmic implementations with an intuitive API and GUI. Bgolearn natively supports single- and multi-objective optimization, multiple acquisition functions, and a suite of surrogate models with bootstrap-based uncertainty quantification, and it integrates with both automated and traditional experimental workflows.

The authors provide extensive benchmarks on canonical numerical functions and diverse real-world materials discovery tasks, including the optimization of triply periodic minimal surface (TPMS) structures, high-entropy alloys (HEAs), and advanced high-strength steels. The empirical evidence shows that Bgolearn consistently reduces the number of required experiments by 40–60% relative to random, grid, or evolutionary search without compromising solution quality. The platform’s open, modular architecture facilitates extensibility, reproducibility, and adoption by the broader materials community.

Figure 1: The components and workflow of Bgolearn for materials discovery.

Architectural Innovations and Implementation

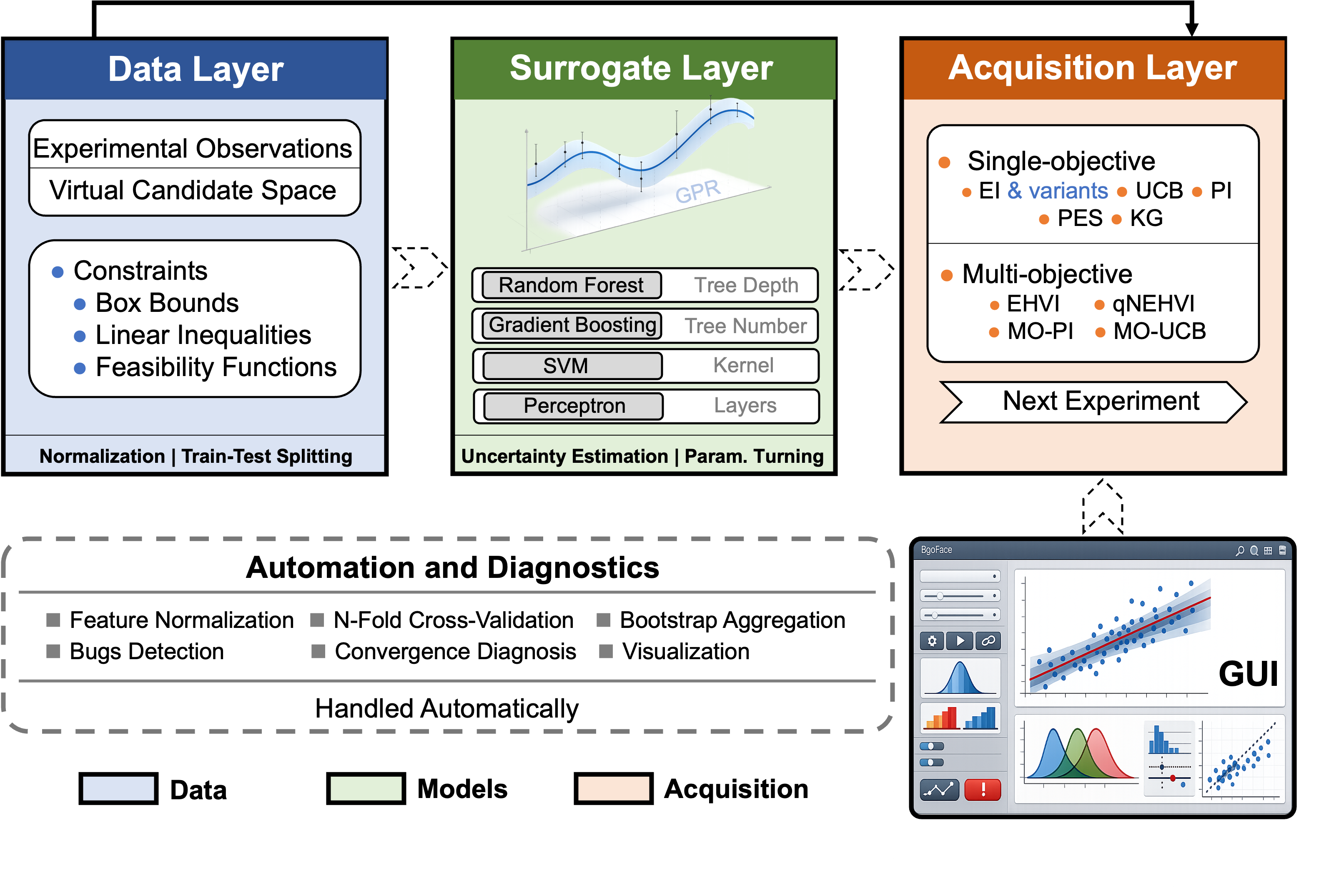

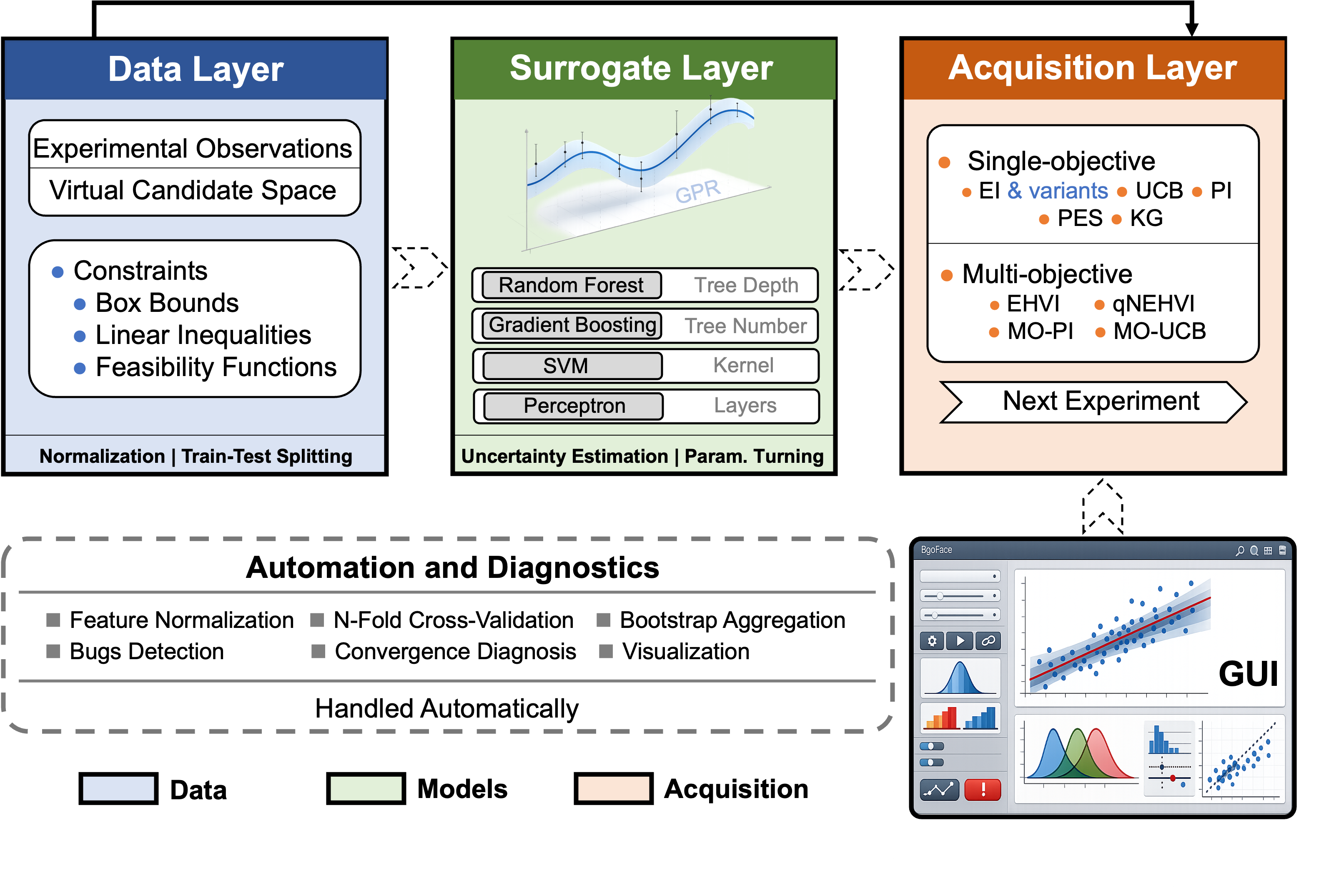

Bgolearn implements a modular, three-layer software architecture covering data ingestion and preprocessing, surrogate modeling, and acquisition optimization. Its surrogate layer includes Gaussian Processes (GP), Random Forests (RF), Gradient Boosting (GB), Support Vector Regression (SVR), and neural networks, each with cross-validated hyperparameter selection. Notably, Bgolearn equips the non-GP models with bootstrap-based uncertainty estimates, so surrogates that lack a native predictive variance can still drive uncertainty-aware acquisition functions. This makes cheaper, more scalable surrogates practical choices for BO.
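To make the bootstrap idea concrete, here is a minimal, dependency-free sketch: the same base regressor is fit on many resampled copies of the training set, and the spread of their predictions serves as the uncertainty estimate. It is not Bgolearn's implementation. The k-nearest-neighbor base model (standing in for RF, GB, or SVR), the function names, and the default of 50 resamples are all assumptions made for illustration.

```python
import random
import statistics
from typing import List, Sequence, Tuple

Point = Sequence[float]


def knn_predict(X: List[Point], y: List[float], x: Point, k: int = 3) -> float:
    """Hypothetical base surrogate: mean target of the k nearest training points."""
    ranked = sorted(
        (sum((a - b) ** 2 for a, b in zip(xi, x)), yi) for xi, yi in zip(X, y)
    )
    nearest = [yi for _, yi in ranked[:k]]
    return sum(nearest) / len(nearest)


def bootstrap_predict(
    X: List[Point], y: List[float], x: Point, n_boot: int = 50, seed: int = 0
) -> Tuple[float, float]:
    """Return (mean, std) of base-model predictions across bootstrap resamples."""
    rng = random.Random(seed)  # fixed seed keeps the estimate reproducible
    n = len(X)
    preds = []
    for _ in range(n_boot):
        # Resample the training set with replacement and refit the base model.
        idx = [rng.randrange(n) for _ in range(n)]
        preds.append(knn_predict([X[i] for i in idx], [y[i] for i in idx], x))
    # The spread of the ensemble is the uncertainty an acquisition function consumes.
    return statistics.fmean(preds), statistics.stdev(preds)
```

The returned standard deviation plays the role a GP's predictive variance would otherwise play, which is what lets non-GP surrogates feed EI- or UCB-style criteria.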

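The acquisition layer supports five single-objective and four multi-objective acquisition functions, including expected improvement (EI), upper confidence bound (UCB), probability of improvement (PI), expected hypervolume improvement (EHVI), and its parallel, noise-aware variant qNEHVI. The multi-objective backbone is built on hypervolume indicator maximization and supports both batch (parallel) and noisy optimization.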
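For reference, the three classic single-objective criteria have closed forms when the surrogate's prediction at a point is Gaussian with mean `mu` and standard deviation `sigma`. The sketch below assumes maximization; the exploration parameters `xi` and `kappa` and their defaults are illustrative assumptions, and Bgolearn's own function names and parameters may differ.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal N(0, 1)


def expected_improvement(mu: float, sigma: float, f_best: float, xi: float = 0.01) -> float:
    """EI for maximization: E[max(f - f_best - xi, 0)] with f ~ N(mu, sigma^2)."""
    improvement = mu - f_best - xi
    if sigma <= 0.0:
        return max(improvement, 0.0)  # no uncertainty: deterministic gain
    z = improvement / sigma
    return improvement * _STD_NORMAL.cdf(z) + sigma * _STD_NORMAL.pdf(z)


def probability_of_improvement(mu: float, sigma: float, f_best: float, xi: float = 0.01) -> float:
    """PI for maximization: P(f > f_best + xi)."""
    improvement = mu - f_best - xi
    if sigma <= 0.0:
        return 1.0 if improvement > 0.0 else 0.0
    return _STD_NORMAL.cdf(improvement / sigma)


def upper_confidence_bound(mu: float, sigma: float, kappa: float = 2.0) -> float:
    """UCB: optimistic estimate mu + kappa * sigma."""
    return mu + kappa * sigma
```

Larger `xi` or `kappa` shifts the search toward exploration; with `sigma = 0`, EI and PI collapse to deterministic improvement checks.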

For accessibility, the BgoFace GUI (built with PyQt) allows full end-to-end workflow configuration, experiment tracking, and interactive visualization, circumventing the need for programming expertise and generating equivalent Python code for reproducibility.

Figure 2: Overview of the Bgolearn software architecture, data flow, and the integrated BgoFace ecosystem interface.

Numerical Benchmarking: Optimization Efficiency

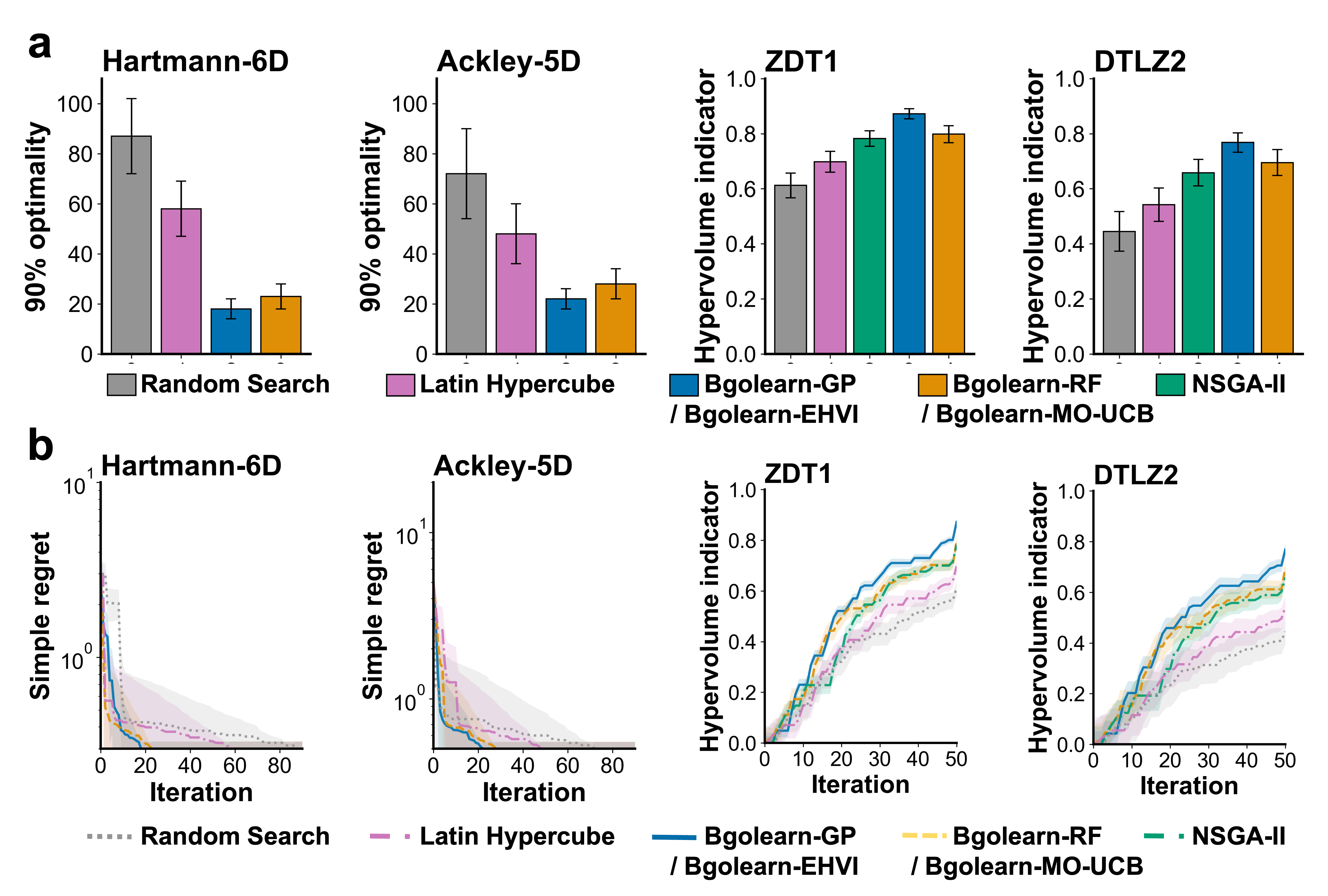

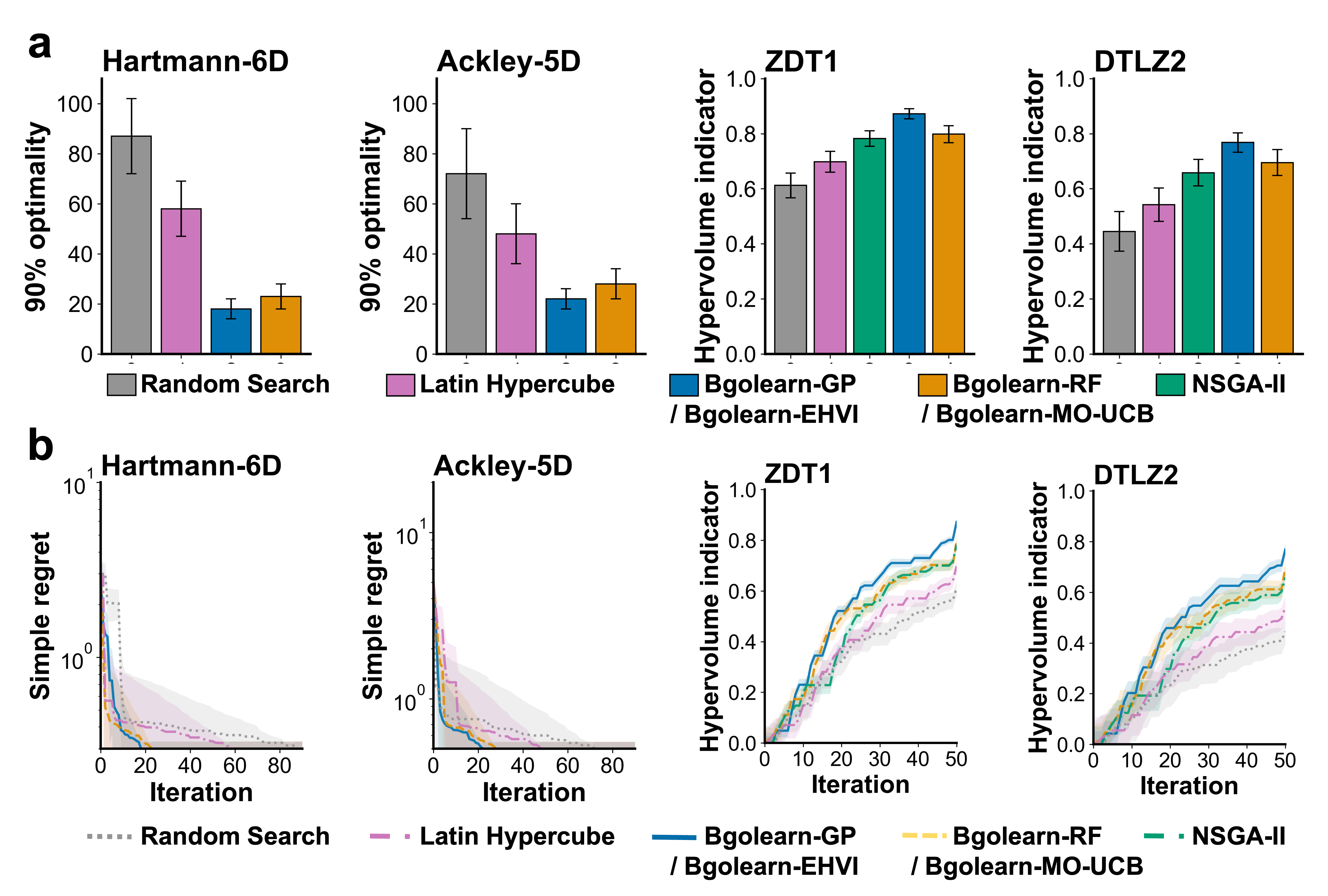

Bgolearn’s numerical performance was benchmarked on canonical single- and multi-objective test functions (Hartmann-6D, Ackley-5D, ZDT1, DTLZ2) against standard baselines: random search, Latin hypercube sampling (LHS), and NSGA-II. The results indicate:

- Single-objective tasks: GP/EI-based Bgolearn achieves 90% optimality in only 20–31% of the iterations required by random or grid search, and at a lower computational cost than LHS.

- Multi-objective scenarios: Bgolearn-EHVI consistently outperforms both random search and NSGA-II in final Pareto hypervolume, e.g., achieving a hypervolume of 0.768±0.035 on DTLZ2 compared to 0.658±0.048 for NSGA-II.

- Computational efficiency: RF-based surrogates with bootstrap uncertainty achieve optimization efficiency comparable to GPs at only 61% of the GP computational overhead.
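The hypervolume indicator behind the multi-objective comparisons measures the region of objective space that a Pareto set dominates, bounded by a reference point. For two objectives it reduces to an area that a single sort-and-sweep computes exactly. This is a minimal sketch assuming two maximized objectives; the function name is hypothetical, and the reference points used in the paper are not stated here.

```python
from typing import Iterable, Tuple

Point2D = Tuple[float, float]


def hypervolume_2d(points: Iterable[Point2D], ref: Point2D) -> float:
    """Area dominated by `points` and bounded below by `ref` (both objectives maximized)."""
    # Only points that strictly improve on the reference in both objectives contribute.
    pts = sorted(
        (p for p in points if p[0] > ref[0] and p[1] > ref[1]),
        key=lambda p: p[0],
        reverse=True,
    )
    area = 0.0
    best_f2 = ref[1]  # highest second objective seen so far in the sweep
    for f1, f2 in pts:
        # Sweeping from largest to smallest f1, a point adds a strip of width
        # (f1 - ref_f1) above the current best f2; dominated points add nothing.
        if f2 > best_f2:
            area += (f1 - ref[0]) * (f2 - best_f2)
            best_f2 = f2
    return area
```

For minimization benchmarks such as ZDT1, negate both objectives and the reference point before calling it.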

Bgolearn’s scalable batch-mode and parallelization capabilities are especially suited for high-throughput and noisy experimental regimes.

Figure 3: (a) Optimization efficiency of Bgolearn compared to baselines on benchmark functions. (b) Simple regret trajectories showing rapid convergence for Bgolearn-based methods.
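Simple regret, as usually defined, is the gap between the known optimum of a test function and the best value observed so far, so a curve that drops quickly indicates fast convergence. A minimal sketch, assuming maximization and a known optimum `f_opt` (as for analytic benchmarks); both helper names are hypothetical:

```python
from itertools import accumulate
from typing import List, Optional


def simple_regret(observed: List[float], f_opt: float) -> List[float]:
    """Regret after each evaluation (maximization): f_opt minus the best value so far."""
    return [f_opt - best for best in accumulate(observed, max)]


def first_iteration_within(regret: List[float], tol: float) -> Optional[int]:
    """1-based index of the first evaluation whose regret is <= tol, or None if never."""
    for i, r in enumerate(regret, start=1):
        if r <= tol:
            return i
    return None
```

Because the running maximum never decreases, the regret trace is non-increasing, which is why such plots show monotone convergence curves.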

Real-World Materials Discovery Applications

Optimization of TPMS Structures

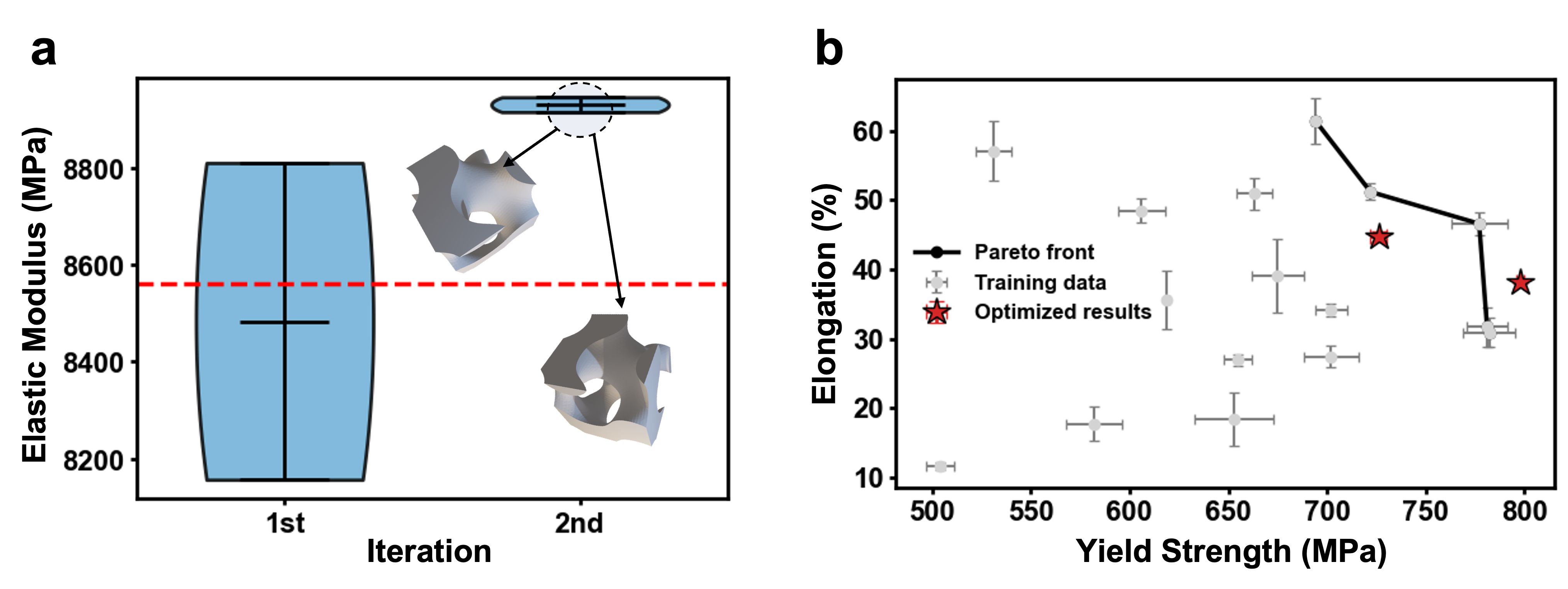

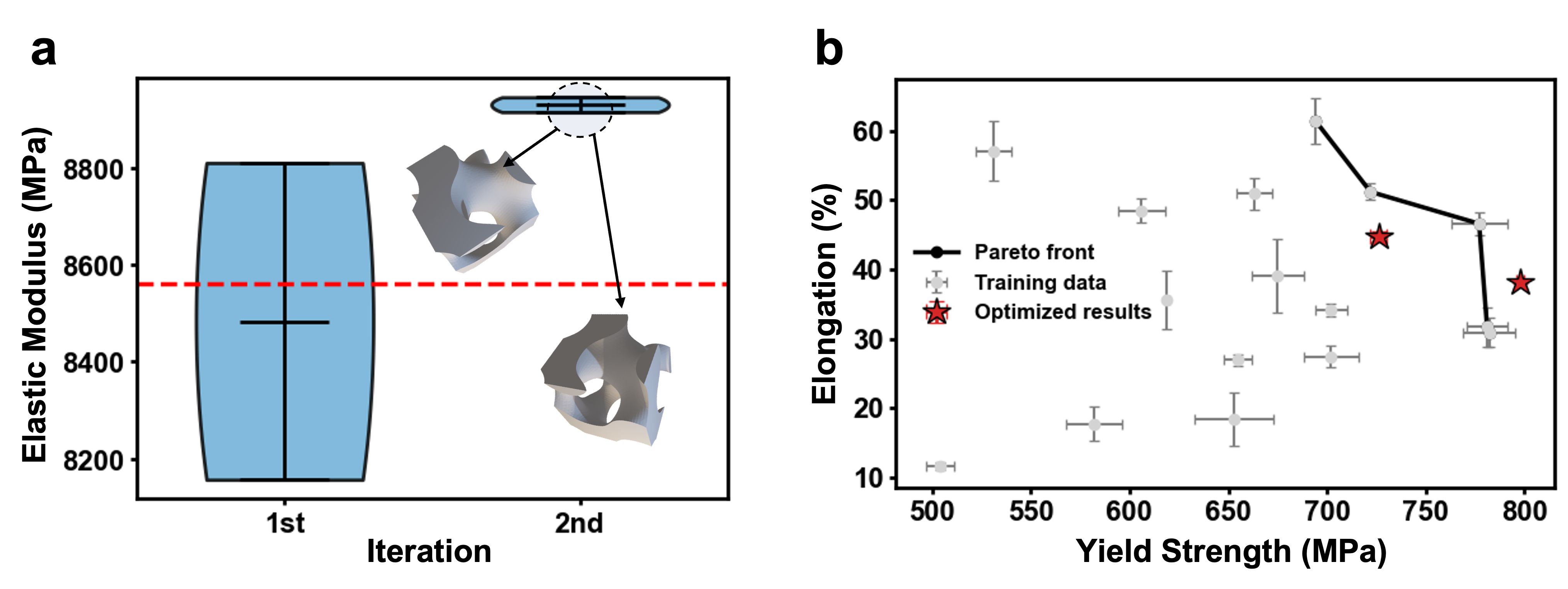

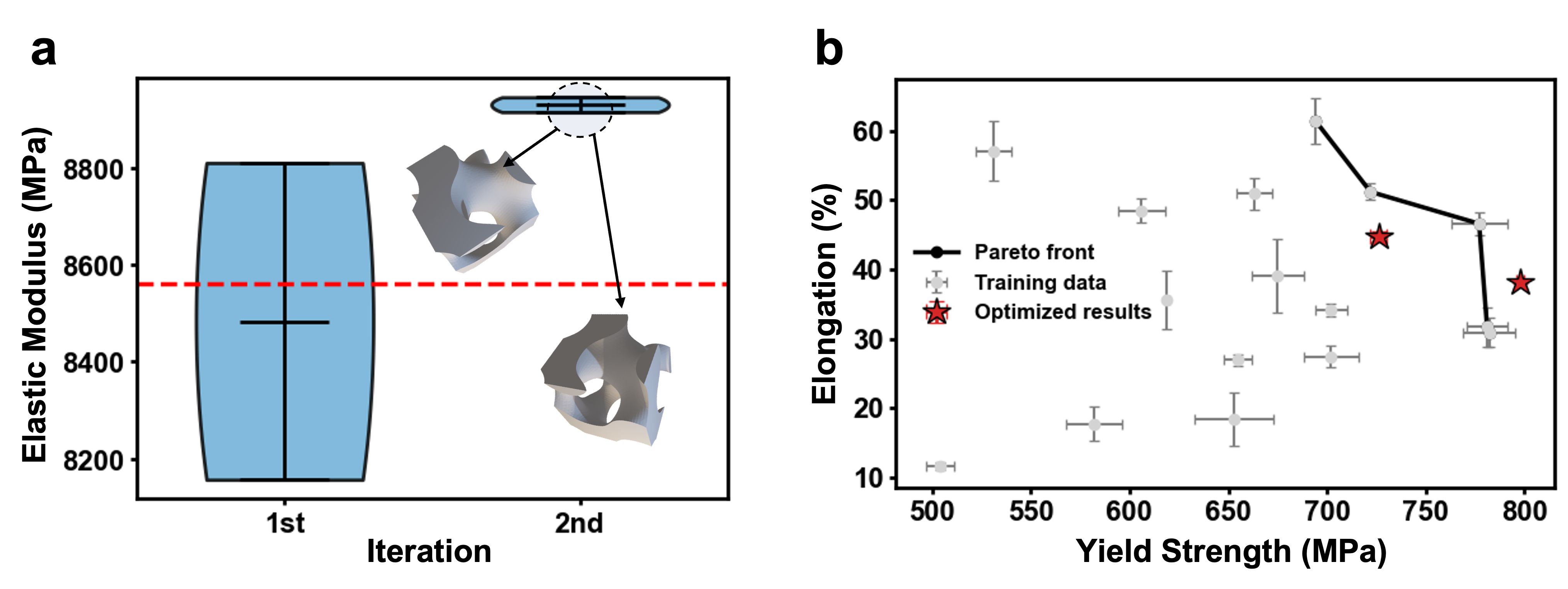

Bgolearn was applied to the high-dimensional parametric space of TPMS structures to maximize elastic modulus. Using GB surrogates with EI, the framework identified configurations with moduli of up to 8945 MPa, exceeding the best configuration in the initial training set, within only two active-learning iterations.
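The loop described here (fit a surrogate, score untested candidates with EI, run the best one, repeat) can be sketched in a few dozen lines. This is a toy, dependency-free illustration under stated assumptions, not Bgolearn's API: a bootstrapped k-nearest-neighbor regressor stands in for the GB surrogate, the candidate pool is a finite list of parameter tuples, and `run_experiment` is a hypothetical user-supplied oracle standing in for a simulation or measurement.

```python
import random
import statistics
from statistics import NormalDist
from typing import Callable, List, Sequence, Tuple

Point = Tuple[float, ...]
_STD_NORMAL = NormalDist()


def knn_mean(X: Sequence[Point], y: Sequence[float], x: Point, k: int = 3) -> float:
    """Toy base regressor: mean target of the k nearest training points."""
    ranked = sorted(
        (sum((a - b) ** 2 for a, b in zip(xi, x)), yi) for xi, yi in zip(X, y)
    )
    top = [yi for _, yi in ranked[:k]]
    return sum(top) / len(top)


def surrogate(X, y, x, n_boot: int = 30, seed: int = 0) -> Tuple[float, float]:
    """Stand-in for a bootstrapped surrogate: (mean, std) over resampled kNN fits."""
    rng = random.Random(seed)
    n = len(X)
    preds = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        preds.append(knn_mean([X[i] for i in idx], [y[i] for i in idx], x))
    return statistics.fmean(preds), statistics.stdev(preds)


def expected_improvement(mu: float, sigma: float, best: float) -> float:
    """EI for maximization under a Gaussian prediction N(mu, sigma^2)."""
    gain = mu - best
    if sigma <= 0.0:
        return max(gain, 0.0)
    z = gain / sigma
    return gain * _STD_NORMAL.cdf(z) + sigma * _STD_NORMAL.pdf(z)


def active_learning(
    X: List[Point],
    y: List[float],
    pool: List[Point],
    run_experiment: Callable[[Point], float],
    n_iter: int = 2,
) -> Tuple[List[Point], List[float]]:
    """Pool-based loop: score candidates by EI, test the best, grow the data, repeat."""
    X, y, pool = list(X), list(y), list(pool)  # copies; caller's lists stay unchanged
    for _ in range(n_iter):
        best = max(y)
        # The surrogate is recomputed from the current data for every candidate.
        scores = [expected_improvement(*surrogate(X, y, c), best) for c in pool]
        pick = pool.pop(max(range(len(pool)), key=scores.__getitem__))
        X.append(pick)
        y.append(run_experiment(pick))  # hypothetical oracle: simulation or lab test
    return X, y
```

Two iterations here mirror the two active-learning rounds reported for the TPMS case; in practice, each call to `run_experiment` would be a finite-element simulation or a physical test.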

Figure 4: (a) Evolution of the elastic modulus during Bgolearn-accelerated optimization of TPMS structures. The dotted line shows the best pre-optimization configuration.

Ultra-High-Hardness High-Entropy Alloy Discovery

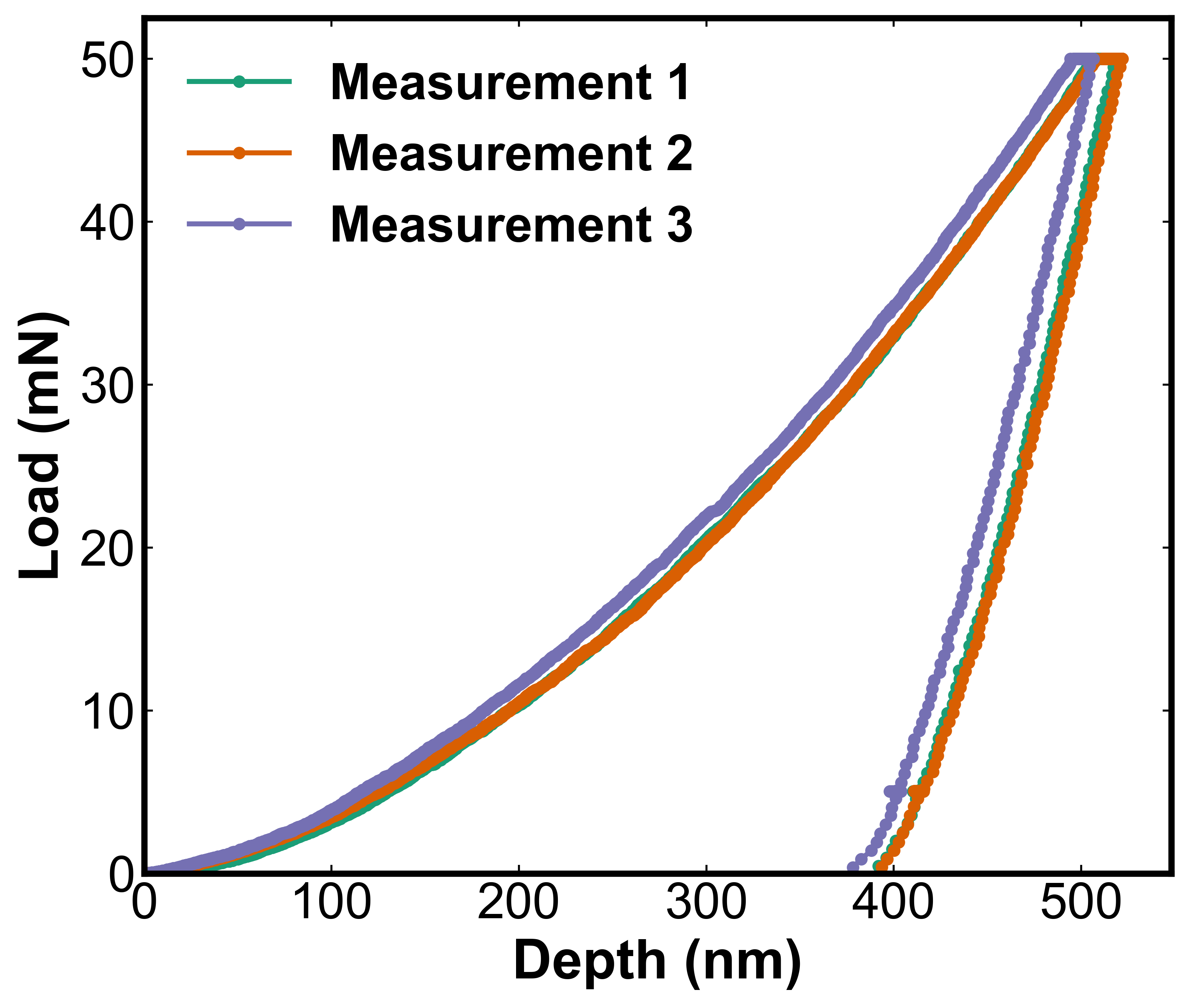

When Bgolearn’s active-learning loop was applied to the Al–Co–Cr–Fe–Cu–Ni HEA system, it recommended a previously unreported composition, Al46.47Co9.16Cr23.47Cu7.22Fe8.10Ni5.58, whose Vickers hardness of over 1000 HV was experimentally validated and exceeds the hardness ceiling of all literature-reported alloys in the benchmark set. This demonstrates Bayesian optimization’s ability to move beyond empirically established property limits in complex compositional landscapes.

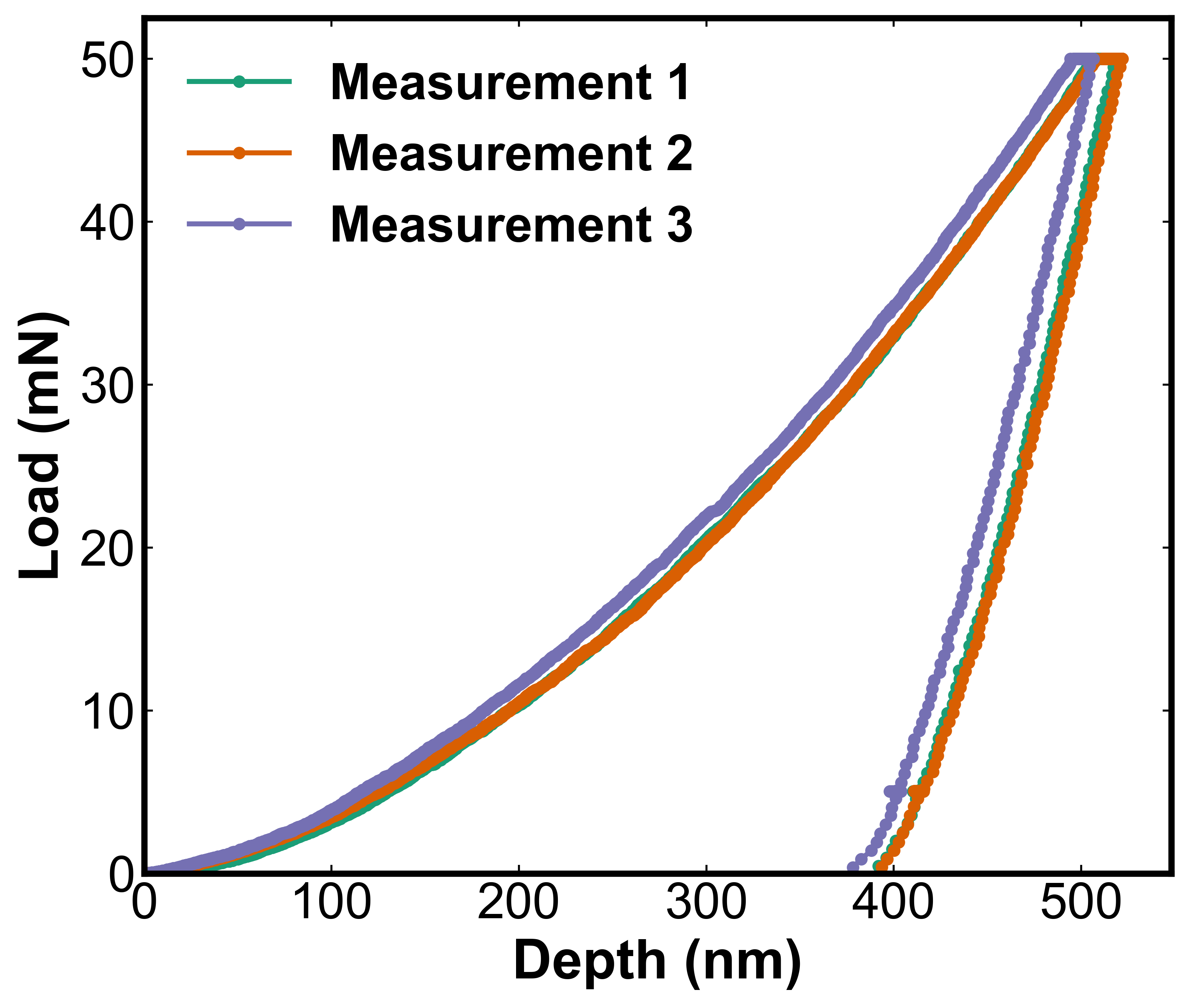

Figure 5: Depth–load curves from three independent nanoindentation experiments on the Bgolearn-discovered high-hardness HEA, demonstrating reproducible mechanical performance.

Multi-Objective Process Optimization for Medium-Mn Steels

In the search for medium-Mn steels that combine high yield strength with high ductility, Bgolearn’s multi-objective BO (MOBO) functionality identified processing schedules that pushed beyond the experimentally observed Pareto front; one recommended schedule achieved a yield strength–elongation combination lying entirely outside the original trade-off curve. This demonstrates the framework’s effectiveness at improving multiple target properties simultaneously under process variability.
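Whether a new result lies beyond an existing Pareto front comes down to a dominance check. The sketch below assumes both objectives (yield strength, elongation) are maximized; the helper names are hypothetical, and the numbers in the example are made up rather than taken from the paper.

```python
from typing import List, Tuple

Obj = Tuple[float, float]  # (yield strength, elongation); both maximized


def dominates(a: Obj, b: Obj) -> bool:
    """True if a is at least as good as b in both objectives and strictly better in one."""
    return a[0] >= b[0] and a[1] >= b[1] and (a[0] > b[0] or a[1] > b[1])


def pareto_front(points: List[Obj]) -> List[Obj]:
    """Points not dominated by any other point in the set."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


def beyond_front(existing: List[Obj], candidates: List[Obj]) -> List[Obj]:
    """New candidates that no point on the existing front dominates (duplicates excluded)."""
    front = pareto_front(existing)
    return [
        c for c in candidates
        if c not in front and not any(dominates(q, c) for q in front)
    ]
```

For instance, with (800, 20), (900, 15), and (1000, 10) as the existing front (MPa, %), a candidate at (950, 16) is reported because it dominates (900, 15), while (700, 12) is rejected as dominated by (800, 20).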

Figure 4: (b) Yield strength and elongation outcomes for medium-Mn steels. Bgolearn’s recommended schedules (red stars) surpass the original experimental Pareto boundary (black line).

Theoretical and Practical Implications

Bgolearn establishes a practical and extensible reference platform for applying Bayesian optimization to nontrivial materials discovery tasks. Its unified support for multiple surrogate types, batch and multi-objective optimization, domain-specific constraint handling, and robust uncertainty quantification addresses persistent barriers to adoption in the field. The platform's empirical advantage over brute-force, evolutionary, and grid strategies, in both simulated and laboratory settings, supports the claim that modern BO, once made accessible, can bring data-efficient experimentation to metallic alloys, functional materials, and industrial processes alike.

Bgolearn's compatibility with closed-loop and self-driving laboratory systems further positions it as a critical infrastructural asset for autonomous scientific discovery.

Future Directions

Research directions stemming from Bgolearn’s design include the integration of reinforcement learning for sequential decision-making under delayed/sparse observations or evolving acquisition objectives, incorporation of multi-fidelity modeling, and hybrid human-in-the-loop strategies. The modularization of surrogate and acquisition function selection, along with BO workflow automation, offers fertile ground for cross-domain generalization and adaptation to biological, chemical, and engineering design challenges. The open-source nature of Bgolearn will facilitate cross-community contributions and benchmarking, accelerating methodological progress in data-driven experimentation.

Conclusion

Bgolearn delivers a robust, scalable, and pragmatically accessible Bayesian optimization platform, setting a new standard for data-driven materials discovery. Its systematic reduction of experimental expenditure, theoretical rigor in uncertainty management, seamless extensibility, and empirical prowess across simulated and real-world scenarios substantiate its claim as a unified, production-ready framework for the materials informatics community. The infrastructure laid by Bgolearn will underpin future developments in autonomous experiment planning and intelligent design of materials and related systems.