3AM: 3egment Anything with Geometric Consistency in Videos

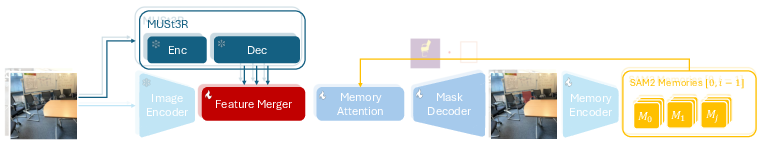

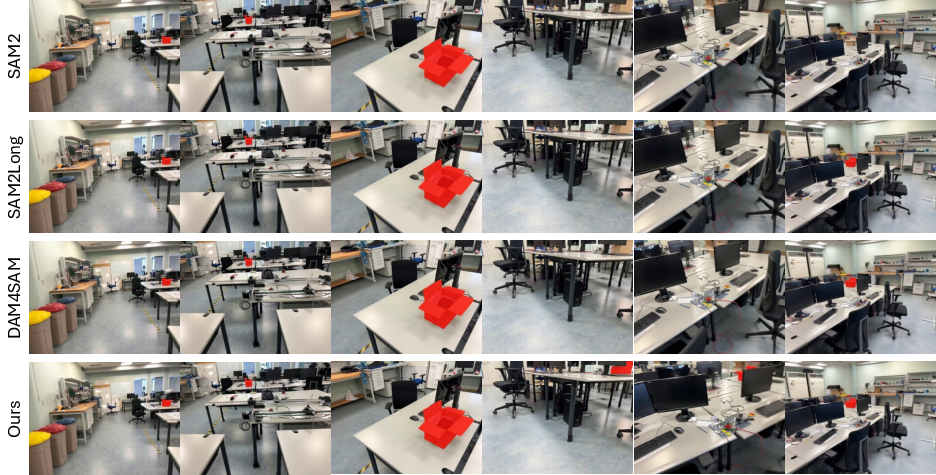

Abstract: Video object segmentation methods like SAM2 achieve strong performance through memory-based architectures but struggle under large viewpoint changes due to reliance on appearance features. Traditional 3D instance segmentation methods address viewpoint consistency but require camera poses, depth maps, and expensive preprocessing. We introduce 3AM, a training-time enhancement that integrates 3D-aware features from MUSt3R into SAM2. Our lightweight Feature Merger fuses multi-level MUSt3R features that encode implicit geometric correspondence. Combined with SAM2's appearance features, the model achieves geometry-consistent recognition grounded in both spatial position and visual similarity. We propose a field-of-view aware sampling strategy ensuring frames observe spatially consistent object regions for reliable 3D correspondence learning. Critically, our method requires only RGB input at inference, with no camera poses or preprocessing. On challenging datasets with wide-baseline motion (ScanNet++, Replica), 3AM substantially outperforms SAM2 and extensions, achieving 90.6% IoU and 71.7% Positive IoU on ScanNet++'s Selected Subset, improving over state-of-the-art VOS methods by +15.9 and +30.4 points. Project page: https://jayisaking.github.io/3AM-Page/

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

Overview

This paper introduces 3AM, a smarter way to track and cut out objects in videos, even when the camera moves a lot or the object looks very different from one angle to another. It builds on SAM2 (a popular tool that lets you segment “anything” with clicks, boxes, or masks) by adding “3D awareness,” so the model doesn’t just remember how things look—it also learns where they are in space.

What questions is the paper trying to answer?

Here are the simple goals the researchers had:

- How can we keep tracking the same object across a video when the camera view changes a lot?

- Can we make 2D tools like SAM2 more reliable by teaching them about 3D geometry, but without needing special 3D inputs (like camera positions or depth) at test time?

- Is it possible to get consistent results across very different viewpoints quickly enough for real-world use?

How did they do it?

Think of tracking an object like keeping your eye on the same toy while you walk around a room. From each new angle, the toy looks different. A tool that only uses how the toy looks in 2D can get confused. But if the tool also knows the toy’s place in 3D space, it can tell it’s the same toy, even from a new angle.

Here’s the approach in everyday terms:

- SAM2 is good at recognizing how things look and remembering them across frames (like a short-term memory for a video).

- MUSt3R is a model that learns 3D structure from multiple images—it’s good at understanding how different views relate in space.

- 3AM blends both worlds. It takes SAM2’s “appearance features” (how the object looks) and MUSt3R’s “geometry-aware features” (where the object sits in space) and merges them using a small, efficient module called the Feature Merger.

- During training, they use a smart frame-picking strategy called “field-of-view aware sampling.” In simple terms: when teaching the model, they pick sets of frames that actually look at overlapping physical parts of the object, not totally different sides. This helps the model learn consistent 3D correspondence rather than getting mixed signals.

- At test time, 3AM only needs regular RGB video frames and a user prompt (a point, box, or mask). No camera poses, no depth maps, no heavy 3D preprocessing.

What did they find?

On challenging datasets with big camera motion and lots of viewpoint changes (like ScanNet++ and Replica), 3AM did much better than existing video object segmentation methods:

- On the hard “Selected Subset” of ScanNet++ (designed to test reappearance and wide viewpoint changes), 3AM reached about 90.6% IoU and 71.7% Positive IoU, beating strong SAM2-based baselines by +15.9 and +30.4 points.

- On the Replica dataset, 3AM also achieved the best scores, especially when the object was visible and correctly found.

- 3AM even showed strong 3D results without using 3D ground truth at test time: by keeping 2D masks consistent across views, you can project them into 3D and get reliable 3D instances. This suggests you don’t always need heavy 3D merging if your 2D tracking is geometry-aware.

Why this is important:

- It means we can get stable, consistent object masks across a video even when the camera moves a lot or the scene is cluttered.

- It keeps the best part of SAM2—promptable, interactive segmentation—while making it robust to difficult camera motion.

What’s the impact?

If you can track objects consistently across big viewpoint changes without needing special 3D inputs, lots of applications get better:

- Video editing: cleaner cut-outs even in tricky shots.

- Robotics and AR: more reliable understanding of objects in real spaces, as robots or phones move around.

- Autonomous driving and embodied AI: better scene understanding across changing angles.

- Photo collections: consistent object tracking across casual multi-view images, not just videos.

Big picture: 3AM shows that adding lightweight 3D awareness to 2D segmentation makes tracking much more robust, while staying interactive and efficient. It’s a step toward tools that understand both what things look like and where they are in space—without needing complicated 3D data at test time.

Collections

Sign up for free to add this paper to one or more collections.