Learning from Brain Topography: A Hierarchical Local-Global Graph-Transformer Network for EEG Emotion Recognition

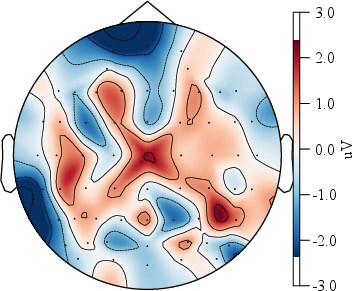

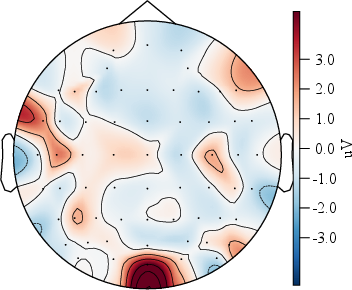

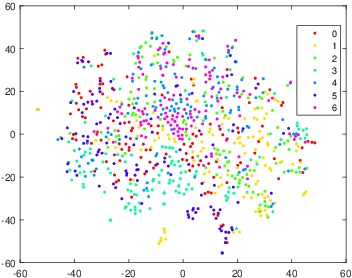

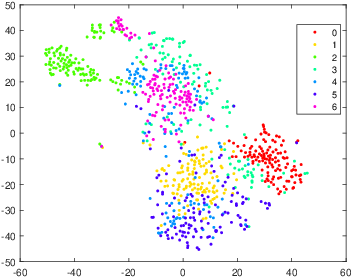

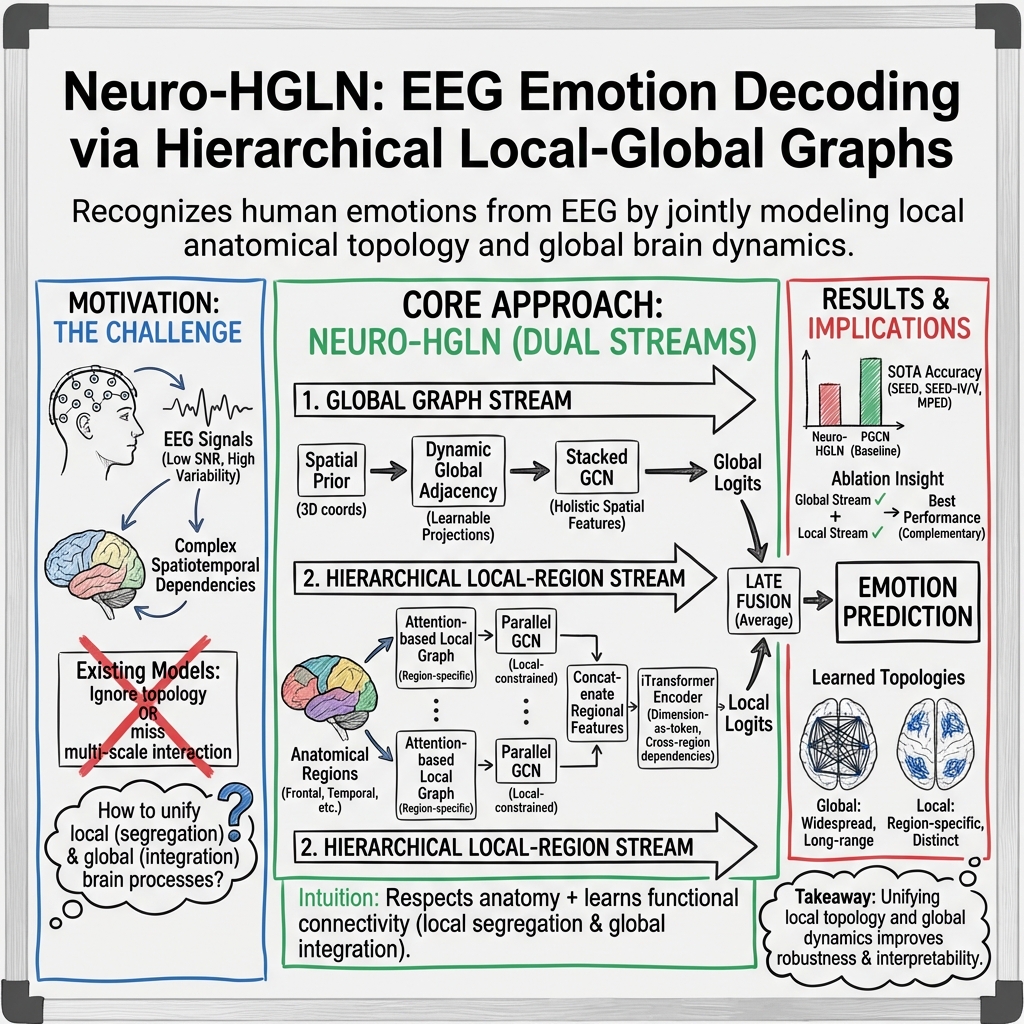

Abstract: Understanding how local neurophysiological patterns interact with global brain dynamics is essential for decoding human emotions from EEG signals. However, existing deep learning approaches often overlook the brain's intrinsic spatial organization, failing to simultaneously capture local topological relations and global dependencies. To address these challenges, we propose Neuro-HGLN, a Neurologically-informed Hierarchical Graph-Transformer Learning Network that integrates biologically grounded priors with hierarchical representation learning. Neuro-HGLN first constructs a spatial Euclidean prior graph based on physical electrode distances to serve as an anatomically grounded inductive bias. A learnable global dynamic graph is then introduced to model functional connectivity across the entire brain. In parallel, to capture fine-grained regional dependencies, Neuro-HGLN builds region-level local graphs using a multi-head self-attention mechanism. These graphs are processed synchronously through local-constrained parallel GCN layers to produce region-specific representations. Subsequently, an iTransformer encoder aggregates these features to capture cross-region dependencies under a dimension-as-token formulation. Extensive experiments demonstrate that Neuro-HGLN achieves state-of-the-art performance on multiple benchmarks, providing enhanced interpretability grounded in neurophysiological structure. These results highlight the efficacy of unifying local topological learning with cross-region dependency modeling for robust EEG emotion recognition.
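Here is a minimal sketch of the first step. It assumes the prior takes the form A_ij = exp(-d_ij² / τ), where d_ij is the Euclidean distance between electrodes i and j. The kernel form, the `tau` value, dropping self-loops, and row normalization are illustrative assumptions, not the paper's published settings. `spatial_prior_graph` is a hypothetical helper, and the coordinates are toy values rather than a real 10–10 montage.

```python
import numpy as np

def spatial_prior_graph(coords: np.ndarray, tau: float = 1.0) -> np.ndarray:
    """Build a Gaussian-kernel prior adjacency from electrode coordinates.

    coords: (N, 3) electrode positions in any consistent unit.
    tau:    kernel temperature; the form exp(-d^2 / tau) is an assumption.
    Returns an (N, N) row-normalized adjacency (each row sums to 1).
    """
    diff = coords[:, None, :] - coords[None, :, :]   # (N, N, 3) pairwise offsets
    dist_sq = np.sum(diff ** 2, axis=-1)            # squared Euclidean distances
    adj = np.exp(-dist_sq / tau)                    # closer electrodes -> larger weight
    np.fill_diagonal(adj, 0.0)                      # drop self-loops (assumption)
    adj /= adj.sum(axis=1, keepdims=True)           # row-normalize to a distribution
    return adj

# Four hypothetical electrode positions (not a real montage).
coords = np.array([[0.0, 0.0, 0.0],
                   [1.0, 0.0, 0.0],
                   [0.0, 1.0, 0.0],
                   [3.0, 3.0, 0.0]])
A_prior = spatial_prior_graph(coords, tau=1.0)
print(A_prior.round(3))
```

Row normalization is one way to make each row a valid probability distribution. That matters if the prior is later compared to learned graphs with a KL divergence.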


Explain it Like I'm 14

1) What this paper is about (big-picture overview)

This paper is about teaching a computer to recognize a person’s emotions (like happy, sad, or neutral) by looking at their brainwave signals, called EEG. The authors build a new AI model, called Neuro-HGLN, that learns from the “map” of the brain: it pays attention to both nearby activity in small brain regions and long-distance connections across the whole brain. This helps the model read emotions more accurately and explain its decisions in a way that matches how the brain is organized.

2) What questions the researchers asked

The paper focuses on a few simple questions:

- How can we use the real layout of the brain (where sensors are placed) to help a computer understand emotions from EEG?

- Can we build a model that learns both “local” patterns (what’s happening in a small brain area) and “global” patterns (how different brain areas work together over time)?

- Will combining these local and global views make emotion recognition more accurate and easier to interpret?

3) How the researchers approached the problem (methods, explained simply)

Think of the brain like a city:

- Each EEG sensor is like a neighborhood.

- Roads between neighborhoods represent how strongly they are connected.

- Emotions are like events that involve both local neighborhoods and city-wide coordination.

The model has two “streams,” like two teams looking at the city map from different angles, then combining their insights:

- Global stream (whole-city view):

- The model starts with a map that connects neighborhoods based on how physically close the sensors are (nearby = stronger road).

- Then it learns to adjust these “roads” to reflect how the brain actually communicates during emotions (some far neighborhoods still talk a lot).

- This is done using a graph convolutional network (GCN), a type of graph neural network that works like passing messages along the road network to understand overall flow.

- Local stream (neighborhood view):

- The brain is split into meaningful regions (like frontal, temporal, occipital), similar to districts in a city.

- For each region, the model builds its own small map and learns the best local connections using attention (like asking, “which nearby spots matter most right now?”).

- Each region is processed in parallel so their unique patterns don’t get mixed too early.

- Then, the model uses a Transformer (specifically, an iTransformer) to learn how these regions interact—like coordinating between districts. Here, each EEG channel is treated as a “token” so the model can learn cross-region relationships.
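The following NumPy sketch shows the local stream in code, under several stated assumptions. It uses a hypothetical two-region split of six electrodes and scaled dot-product attention per head, with ReLU applied to the scores. The paper also mentions Batch Normalization, which is omitted here. The head outputs are averaged, and each region gets one GCN layer. The region mask keeps each regional graph intra-regional. Sharing the attention projections across regions while giving each region its own GCN weights is a design assumption, not a detail taken from the paper.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def region_local_graph(x, mask, wq, wk):
    """Propose a region-restricted adjacency with multi-head attention.

    x:      (N, F) node features, e.g. band features per electrode
    mask:   (N, N) boolean, True only for electrode pairs in the same region
    wq, wk: (H, F, D) per-head query/key projections
    """
    heads = []
    for q_w, k_w in zip(wq, wk):
        q, k = x @ q_w, x @ k_w                   # (N, D) each
        scores = q @ k.T / np.sqrt(q.shape[1])    # scaled dot-product scores
        heads.append(relu(scores))                # non-negative edges (BN omitted here)
    adj = np.mean(heads, axis=0)                  # aggregate across heads
    return np.where(mask, adj, 0.0)               # zero every edge that leaves the region

def gcn_layer(adj, x, w):
    """One GCN step: add self-loops, symmetric-normalize, propagate, ReLU."""
    a_hat = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    a_norm = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return relu(a_norm @ x @ w)

rng = np.random.default_rng(0)
N, F, D, H, F_OUT = 6, 5, 4, 2, 8
regions = [[0, 1, 2], [3, 4, 5]]              # hypothetical two-region partition
x = rng.standard_normal((N, F))
wq = rng.standard_normal((H, F, D))
wk = rng.standard_normal((H, F, D))

outputs = []
for idx in regions:                           # regions are processed independently
    mask = np.zeros((N, N), dtype=bool)
    mask[np.ix_(idx, idx)] = True
    adj_k = region_local_graph(x, mask, wq, wk)
    w_k = rng.standard_normal((F, F_OUT))     # separate GCN weights per region
    outputs.append(gcn_layer(adj_k, x, w_k)[idx])
print([h.shape for h in outputs])             # [(3, 8), (3, 8)]
```

Because the masked adjacency has no entries outside a region, each region's rows aggregate only from nodes in that same region. Nothing from neighboring regions leaks in before the iTransformer stage.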

Feature basics (kept simple):

- Each 1-second slice of EEG is turned into a few summary numbers per sensor (differential entropy values), one for each of five frequency bands from slow to fast rhythms, so the model doesn’t have to handle raw, noisy signals.
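The “summary numbers” are differential entropy (DE) features. For a signal assumed to be Gaussian with variance σ², DE has the closed form ½·ln(2πeσ²). A common recipe is therefore to band-pass each channel and plug the band-limited variance into that formula. The sketch below does this for one 1-second window. The 200 Hz sampling rate, the band edges, and the crude FFT-mask filter are assumptions chosen for illustration.

```python
import numpy as np

# Assumed band edges in Hz (a common convention for DE features).
BANDS = {"delta": (1, 4), "theta": (4, 8), "alpha": (8, 14),
         "beta": (14, 31), "gamma": (31, 50)}

def band_de(window: np.ndarray, fs: float) -> np.ndarray:
    """Differential entropy per channel and band for one window.

    window: (C, T) EEG segment, one row per channel.
    Returns a (C, 5) array of DE values.
    """
    T = window.shape[1]
    spec = np.fft.rfft(window, axis=1)
    freqs = np.fft.rfftfreq(T, d=1.0 / fs)
    feats = []
    for lo, hi in BANDS.values():
        keep = (freqs >= lo) & (freqs < hi)                 # crude FFT band-pass
        band_sig = np.fft.irfft(spec * keep, n=T, axis=1)
        var = band_sig.var(axis=1)
        feats.append(0.5 * np.log(2 * np.pi * np.e * var))  # Gaussian DE formula
    return np.stack(feats, axis=1)

fs = 200                                   # assumed sampling rate (Hz)
t = np.arange(fs) / fs                     # one non-overlapping 1-second window
rng = np.random.default_rng(0)
eeg = 0.1 * rng.standard_normal((2, fs))   # two synthetic channels
eeg[0] += np.sin(2 * np.pi * 10 * t)       # channel 0 gets a strong 10 Hz (alpha) rhythm
de = band_de(eeg, fs)
print(de.shape)                            # (2, 5)
```

Channel 0 ends up with a much larger alpha-band DE than channel 1, which is the kind of contrast these per-band features are meant to expose.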

Training with helpful rules:

- The model is taught to keep its learned maps close to the real sensor layout (so it doesn’t invent unrealistic brain connections).

- It’s also encouraged to make different regions learn different things (so they don’t all copy each other).

- Finally, the two streams each make their own prediction, and the final answer is the average of the two (their raw output scores, or logits); averaging often makes predictions more stable.
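The three training rules can be written as loss terms. This sketch rests on several explicit assumptions:

- Both graphs are row-normalized into probability distributions before the KL divergence. The normalization the paper actually uses is not stated here.
- The KL direction is KL(prior ‖ learned).
- Regional overlap is measured as the Frobenius norm of the element-wise product of two regional graphs.
- Each stream gets its own cross-entropy term.
- `lam_kl` and `lam_div` are placeholder weights, not the paper's values.

```python
import numpy as np

def row_normalize(a, eps=1e-8):
    """Turn each adjacency row into a probability distribution (assumption)."""
    a = np.maximum(a, 0.0) + eps
    return a / a.sum(axis=1, keepdims=True)

def kl_geometric_loss(a_prior, a_learned):
    """Mean over rows of KL(prior row || learned row)."""
    p, q = row_normalize(a_prior), row_normalize(a_learned)
    return float(np.mean(np.sum(p * np.log(p / q), axis=1)))

def diversity_loss(region_graphs):
    """Sum of pairwise overlaps ||A_i * A_j||_F (element-wise product) over regions."""
    total = 0.0
    for i in range(len(region_graphs)):
        for j in range(i + 1, len(region_graphs)):
            total += np.linalg.norm(region_graphs[i] * region_graphs[j], "fro")
    return total

def cross_entropy(logits, labels):
    z = logits - logits.max(axis=1, keepdims=True)       # numerically stable log-softmax
    log_probs = z - np.log(np.exp(z).sum(axis=1, keepdims=True))
    return float(-np.mean(log_probs[np.arange(len(labels)), labels]))

rng = np.random.default_rng(0)
a_prior, a_global = rng.random((4, 4)), rng.random((4, 4))
region_graphs = [rng.random((4, 4)), rng.random((4, 4))]
logits_global = rng.standard_normal((3, 3))            # 3 samples, 3 classes
logits_local = rng.standard_normal((3, 3))
labels = np.array([0, 2, 1])

lam_kl, lam_div = 0.1, 0.01                            # placeholder loss weights
loss = (cross_entropy(logits_global, labels)           # each stream is supervised
        + cross_entropy(logits_local, labels)
        + lam_kl * kl_geometric_loss(a_prior, a_global)
        + lam_div * diversity_loss(region_graphs))
fused = (logits_global + logits_local) / 2             # late fusion: average the logits
pred = fused.argmax(axis=1)
print(round(loss, 4), pred)
```

The diversity term is zero when two regional graphs share no edges and grows as their supports overlap. This also shows the trade-off raised later: legitimately shared edges are penalized too.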

4) What they found and why it matters

Main findings:

- Neuro-HGLN achieved better accuracy than previous methods on several well-known emotion datasets (SEED, SEED-IV, SEED-V, MPED).

- It also held up well when trained and tested on different recording sessions, which is hard because brain signals change over time.

- It often beat even “domain adaptation” methods, which are allowed to see unlabeled test-session data during training; Neuro-HGLN didn’t need that.

- It is designed to be easier to interpret: because it uses the real positions of sensors and separates local from global learning, its decisions can be linked to actual brain regions.
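The two evaluation settings behind these results are cross-session testing and leave-one-subject-out (LOSO) cross-validation, and both come down to how samples are split. The sketch below builds both splits from per-sample subject and session labels. The toy metadata is hypothetical. Training on sessions 1 and 2 and testing on session 3 is an assumption, not the exact protocol used in the paper.

```python
import numpy as np

def loso_folds(subjects):
    """Yield (held_out_subject, train_idx, test_idx), one fold per subject."""
    subjects = np.asarray(subjects)
    for s in np.unique(subjects):
        test = np.flatnonzero(subjects == s)
        train = np.flatnonzero(subjects != s)
        yield s, train, test

def cross_session_split(sessions, train_sessions, test_session):
    """Train on some recording sessions and test on a different one."""
    sessions = np.asarray(sessions)
    train = np.flatnonzero(np.isin(sessions, train_sessions))
    test = np.flatnonzero(sessions == test_session)
    return train, test

# Toy metadata: 3 hypothetical subjects x 3 sessions x 2 samples each.
subjects = np.repeat([1, 2, 3], 6)
sessions = np.tile(np.repeat([1, 2, 3], 2), 3)
folds = list(loso_folds(subjects))
train, test = cross_session_split(sessions, train_sessions=[1, 2], test_session=3)
print(len(folds), len(train), len(test))   # 3 12 6
```

In both cases the key property is that no test sample shares a subject (LOSO) or a session (cross-session) with the training data.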

Why this matters:

- Emotions involve both local brain activity and long-distance coordination. Models that only look at one or the other miss important clues.

- By respecting the brain’s physical layout and learning functional connections, the model becomes both more accurate and more trustworthy.

5) What this could mean for the future (implications)

- Smarter emotion-aware technology: Better emotion recognition can improve mental health tools, personalized learning, and human–computer interaction (like making games or apps respond to how you feel).

- More trustworthy AI in neuroscience: Because the model’s design matches how the brain is organized, scientists can better understand and trust what it learns.

- A general blueprint: The same “local + global + attention” idea could be used for other brain-related tasks (like detecting stress, fatigue, or disorders), not just emotions.

Key takeaways (in plain terms)

- The brain is organized like neighborhoods and highways; emotions involve both.

- This model learns from both local neighborhoods and global highways—and connects them.

- It uses the real sensor map as a guide, then fine-tunes connections based on actual brain activity.

- Result: better, more explainable emotion recognition from EEG signals.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise list of unresolved issues and missing analyses that future work could address:

- Lack of end-to-end temporal modeling: The pipeline relies on 1-second, non-overlapping DE/STFT features and treats frequency bands as static node features; it does not explicitly model long-range temporal dynamics across windows or inter-window dependencies.

- Sensitivity to spatial prior hyperparameters: The choice of Gaussian kernel temperature (τ), distance metric (Euclidean vs. geodesic on scalp), and adjacency normalization for the spatial prior is not analyzed; robustness to these design choices remains unknown.

- Prior mismatch and KL divergence validity: The KL loss assumes probability distributions, but it is unclear whether A_prior and A_local^k are row- or degree-normalized to valid distributions; the impact of different normalization schemes or divergences (e.g., JS, Wasserstein) is not evaluated.

- Overparameterized global graph projection: Using dense learnable P, Q, b ∈ ℝ^{N×N} scales quadratically with the number of channels, risks overfitting, and lacks low-rank or sparsity constraints; complexity and generalization with higher-density EEG (e.g., 128–256 channels) are unexamined.

- Symmetry, directionality, and signed connectivity: The learned graphs are forced to be non-negative via ReLU, with no explicit symmetry constraint and no explicit modeling of direction; the effect of signed (inhibitory vs. excitatory) and directed (lagged) connectivity measures (e.g., Granger causality, transfer entropy (TE), partial directed coherence (PDC)) is not explored.

- Ambiguity in region-restricted graphs: The region-level A_k is defined as N×N but described as intra-regional; it is unclear how non-regional nodes are masked or prevented from leaking into local GCNs; a clear masking strategy and its impact are not provided.

- No alternative parcellations: The effect of different anatomical/functional parcellations (lobar, hemispheric, networks such as DMN/salience, data-driven community detection) and the choice/number of regions K on performance and interpretability is not studied.

- Frequency-specific topology: Although five bands are used, the model does not learn band-specific graphs or cross-frequency couplings; how frequency-resolved connectivity (e.g., per-band graphs, CFC) affects recognition is unexplored.

- Fusion strategy is fixed and unlearned: Late fusion uses a simple average of logits; no investigation of learned, adaptive, or uncertainty-aware fusion (e.g., gating, temperature scaling, confidence weighting) is provided.

- Missing ablations: The paper does not report ablations isolating contributions of (i) the spatial prior, (ii) dynamic global graph, (iii) local attention-based graph proposal, (iv) iTransformer, (v) KL/diversity regularizers, or (vi) the number of attention heads/layers.

- Hyperparameter robustness: No sensitivity analyses for α, β, γ, δ (loss weights), number of iTransformer layers/heads, FFN size, or regional GCN depth; stability across datasets and sessions is unknown.

- Interpretability claims not validated: While interpretability is claimed, there is no quantitative or qualitative validation (e.g., attention/edge saliency maps, frontal asymmetry, hemispheric lateralization) or alignment with known emotion neurophysiology.

- Artifact handling and preprocessing: The approach assumes clean signals and standard 62-channel montages; robustness to artifacts (EOG/EMG), referencing schemes, re-referencing, filtering choices, and differences in cap placement or head geometry is not evaluated.

- Robustness to missing/noisy channels: No experiments on channel dropout, sensor noise, or montage shifts (e.g., low-density headsets) to assess real-world resilience or graceful degradation.

- Cross-dataset and subject-independent generalization: Only SEED-family and MPED are used; cross-dataset transfer (train on SEED, test on MPED) and comprehensive LOSO results (truncated in text) are absent; the ability to generalize to new devices/populations is uncertain.

- Comparison with domain adaptation under identical constraints: Although domain adaptation baselines are included, Neuro-HGLN is not evaluated with unsupervised adaptation or test-time adaptation; whether the proposed priors/regularizers can complement adaptation remains open.

- Computational efficiency and latency: Parameter counts, training/inference time, memory footprint, and feasibility for real-time/online settings are not reported; scalability to mobile/edge devices is unclear.

- Class imbalance and metrics: Beyond accuracy and STD, no analysis of class imbalance, per-class performance, macro-F1, AUROC, or calibration is provided, especially important for 7-class MPED.

- Data modality constraints: The approach uses handcrafted band features; whether end-to-end raw-signal front-ends (learnable spectral encoders) improve performance or interpretability is untested.

- Stability across session gaps: While cross-session results are reported for SEED, systematic analysis of temporal drift magnitude, session spacing, and adaptation strategies (e.g., lightweight calibration) is missing.

- Regularizer side effects: The functional diversity loss may suppress legitimately shared edges across regions; the trade-off between diversity and needed overlap, and sparsity controls on learned graphs, are not studied.

- Individualized anatomy: The spatial prior uses standard coordinates; subject-specific head geometry/cap placement is ignored; integrating individualized electrode positions or head models and quantifying benefits is left unexplored.

- Ethical and demographic coverage: Demographic variability (age, sex, cultural context), fairness analyses, and privacy considerations for affective EEG are not discussed.
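To put the quadratic-scaling concern in numbers: three dense N×N matrices (P, Q, b) hold 3N² parameters. The sketch compares that with a hypothetical rank-r factorization of each matrix as U Vᵀ with U, V ∈ ℝ^{N×r}, which needs 2Nr parameters per matrix. The factorization and r = 8 are illustrative assumptions, not something the paper proposes.

```python
import numpy as np

def dense_params(n: int) -> int:
    """Parameters in three dense N x N matrices (P, Q, b)."""
    return 3 * n * n

def low_rank_params(n: int, r: int) -> int:
    """Hypothetical alternative: each N x N matrix factored as U @ V.T, U and V of shape (N, r)."""
    return 3 * 2 * n * r

for n in (62, 128, 256):
    print(n, dense_params(n), low_rank_params(n, r=8))
# 62 11532 2976
# 128 49152 6144
# 256 196608 12288

# The factored form is still an N x N matrix, but its rank is at most r.
rng = np.random.default_rng(0)
u, v = rng.standard_normal((62, 8)), rng.standard_normal((62, 8))
p_low = u @ v.T
print(p_low.shape)   # (62, 62)
```

Going from 62 to 256 channels multiplies the dense count by about 17, while the rank-8 count grows only linearly with N.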

Glossary

- Affective computing: A field that designs systems and devices that can recognize, interpret, and process human emotions. "Electroencephalography (EEG)–based emotion recognition has attracted increasing attention due to its potential applications in affective computing, human–computer interaction, and mental health assessment"

- Affective decoding: Inferring or classifying emotional states from physiological or neural signals. "These results highlight the importance of unifying local topological learning with cross-region dependency modeling for robust and interpretable affective decoding from EEG signals."

- Anatomically grounded inductive bias: A prior that encodes anatomical structure to guide model learning toward biologically plausible solutions. "Neuro-HGLN first constructs a spatial Euclidean prior graph based on the physical distances between EEG electrodes, which serves as an anatomically grounded inductive bias."

- Attention-based Graph Proposal mechanism: A method that leverages attention to dynamically propose and refine graph topology. "we propose an Attention-based Graph Proposal mechanism that directly learns the optimal connectivity structure from the spatial prior to dynamically construct Region-level Local Graphs."

- Attention-based Multiple Dimensions EEG Transformer (AMDET): A transformer architecture tailored for EEG, focusing attention across multiple dimensions to capture global context. "Xu et al. proposed the Attention-based Multiple Dimensions EEG Transformer (AMDET) to capture the global context of EEG signals"

- Batch Normalization (BN): A technique that normalizes intermediate activations to stabilize and accelerate training. "we apply Batch Normalization (BN) and ReLU activation to the attention scores, followed by aggregating across heads:"

- Brain Topography: The spatial organization and mapping of brain regions or electrode locations. "EEG emotion recognition, Brain Topography, Local–Global Feature Learning, Graph iTransformer."

- Continuous Wavelet Transform (CWT): A time–frequency analysis method that decomposes signals into wavelets to capture nonstationary patterns. "by converting raw signals into 2D time-frequency images via Continuous Wavelet Transform (CWT)."

- Cross-session validation protocol: An evaluation setting where models are trained on one recording session and tested on a different session to assess robustness. "we followed a strict cross-session validation protocol."

- Differential Entropy (DE): A measure of entropy for continuous random variables, used as EEG features across frequency bands. "Following standard preprocessing protocols, we extract Differential Entropy (DE) features from the raw signals across five distinct frequency bands"

- Dimension-as-token formulation: A modeling approach that treats each feature dimension (e.g., channel) as a token for attention-based architectures. "which captures cross-region dependencies and structured high-level feature interactions under the dimension-as-token formulation."

- Domain adaptation: Techniques that leverage target-domain data (often unlabeled) to reduce distribution shifts between training and test domains. "It is worth noting that Neuro-HGLN even surpasses domain adaptation methods (e.g., RGNN and TANN)"

- Dynamical Graph Convolutional Neural Network (DGCNN): A GCN variant that learns adjacency dynamically from data rather than using fixed priors. "Song et al. introduced a Dynamical Graph Convolutional Neural Network (DGCNN) to overcome the limitations of static geometric graphs."

- Electrode montage: The standardized spatial arrangement of EEG electrodes on the scalp. "corresponding to the 62-channel electrode montage provided by the ESI NeuroScan System."

- Feed-Forward Networks (FFN): The position-wise multilayer perceptron blocks in transformer architectures. "each comprising Multi-Head Self-Attention (MSA) and Feed-Forward Networks (FFN)."

- Frobenius norm: A matrix norm computed as the square root of the sum of squared entries; used to quantify adjacency overlap. "where ⊙ denotes the element-wise product and ‖·‖_F is the Frobenius norm."

- Functional connectivity: Statistical dependencies among brain regions reflecting coordinated activity. "model functional connectivity patterns across the entire brain"

- Functional coupling: The dynamic interaction strength between brain regions that varies with cognitive or emotional states. "physical distance alone is insufficient to capture the complex, non-linear functional coupling associated with varying emotional states."

- Functional diversity regularization: A constraint encouraging different learned regional graphs to be distinct to avoid redundancy. "functional diversity regularization ;"

- Functional segregation and integration: The neuroscientific principle that the brain balances specialized local processing with global information integration. "functional segregation and integration—where the brain processes information both within localized regions and through global communication across regions."

- Gaussian kernel function: A radial basis function that converts distances into weights decaying exponentially with squared distance. "we employ a Gaussian kernel function."

- Geometric Constraint (KL Divergence): A loss term that penalizes deviation of learned graphs from spatial priors using KL divergence. "This significant improvement can be attributed to the proposed Geometric Constraint (KL Divergence)."

- GELU activation: A smooth activation function based on the Gaussian error linear unit, often used in transformers. "we utilize GELU activation to introduce non-linearity."

- Graph Convolutional Network (GCN): A neural network that performs convolution-like operations over graph structures to aggregate neighborhood information. "a two-layer graph convolutional network is employed to extract global spatial representations."

- Hierarchical modularity: The organization of brain networks into modules at multiple scales, from local regions to global networks. "neglects the intrinsic hierarchical modularity of the brain"

- International 10–10 System: A dense EEG electrode placement standard providing 3D coordinates for scalp locations. "according to the International 10–10 System"

- International 10–20 system: A widely used EEG electrode placement standard defining relative positions on the scalp. "based on the international 10–20 system."

- iTransformer encoder: A transformer variant that treats dimensions (e.g., channels) as tokens to model multivariate correlations. "fed into an iTransformer encoder, which captures cross-region dependencies and structured high-level feature interactions under the dimension-as-token formulation."

- Kullback-Leibler (KL) Divergence: A measure of divergence between probability distributions, used to align learned and prior graphs. "We introduce a Geometric Constraint Loss using the Kullback-Leibler (KL) Divergence"

- Leave-One-Subject-Out (LOSO) cross-validation strategy: A subject-independent evaluation where each fold holds out one subject for testing. "we employed a Leave-One-Subject-Out (LOSO) cross-validation strategy"

- Local-constrained Parallel GCN Layers: Multiple GCNs operating in parallel, each restricted to a brain region to preserve local topology. "we design Local-constrained Parallel GCN Layers."

- Multi-Head Self-Attention (MSA): An attention mechanism with multiple parallel heads to capture diverse relationships. "each comprising Multi-Head Self-Attention (MSA) and Feed-Forward Networks (FFN)."

- Non-Euclidean nature of brain topology: The property that brain spatial relationships are irregular and not well-represented on regular grids. "which essentially ignores the non-Euclidean nature of brain topology."

- Power Spectral Density (PSD): A representation of signal power across frequencies, used as a feature in EEG analysis. "Power Spectral Density (PSD)"

- Short-Time Fourier Transform (STFT): A time–frequency representation computed over short windows to capture temporal changes in spectra. "we extracted 256-point Short-Time Fourier Transform (STFT) features"

- Spatial Euclidean Prior Graph: A graph constructed from physical distances between electrodes to encode anatomical proximity. "we construct a spatial Euclidean prior graph based on the physical distances between EEG electrodes"

- Vision Transformers (ViT): Transformer architectures adapted for image analysis with global receptive fields. "Arjun et al. applied Vision Transformers (ViT) to EEG analysis"

- Warm-up strategy: A learning rate schedule that gradually increases the rate at the start of training to stabilize optimization. "we employ a dynamic learning rate schedule with a warm-up strategy"
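The dimension-as-token idea can be illustrated by transposing the usual layout. Each channel's whole feature vector is embedded as one token, so the attention matrix relates channels to channels. This is a single-head NumPy sketch. The linear embedding, the width `D`, and the random weights are assumptions, and the encoder described in the paper additionally uses multi-head attention and FFN blocks.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def inverted_attention(x, w_embed, wq, wk, wv):
    """Single-head self-attention with channels as tokens.

    x: (F, N) array. A conventional Transformer would read F tokens of size N;
    here x.T gives N tokens (one per channel), each of size F.
    """
    tokens = x.T @ w_embed                         # (N, D): one embedded token per channel
    q, k, v = tokens @ wq, tokens @ wk, tokens @ wv
    attn = softmax(q @ k.T / np.sqrt(q.shape[1]))  # (N, N): channel-to-channel weights
    return attn @ v, attn

rng = np.random.default_rng(0)
F, N, D = 5, 62, 16                # 5 band features, 62 channels, hypothetical width
x = rng.standard_normal((F, N))
w_embed = rng.standard_normal((F, D))
wq, wk, wv = (rng.standard_normal((D, D)) for _ in range(3))
out, attn = inverted_attention(x, w_embed, wq, wk, wv)
print(out.shape, attn.shape)       # (62, 16) (62, 62)
```

Because each row of `attn` is a distribution over all 62 channels, a single layer can mix information between electrodes in distant regions. This is the cross-region modeling the glossary entry describes.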

Practical Applications

Immediate Applications

The following items can be deployed with current lab-grade EEG hardware and standard analysis pipelines, especially in controlled environments.

- Affective UX and media testing in labs — Use Neuro-HGLN to label moment-by-moment emotional responses to interfaces, ads, and content with improved robustness and interpretability.

- Sectors: software, media, advertising, market research

- Potential tools/products/workflows: turnkey analysis pipeline (DE feature extraction + spatial prior graph + Neuro-HGLN inference), cloud batch processing for panel studies, dashboard mapping regional contributions

- Assumptions/dependencies: 32–62 channel EEG with known 10–10/10–20 coordinates; controlled stimulus presentation; offline or near–real-time inference; participant consent and artifact control

- Prototype emotion-adaptive VR/AR content — Drive content difficulty, pacing, or scene transitions based on decoded valence/arousal in immersive experiences.

- Sectors: gaming, entertainment, EdTech

- Potential tools/products/workflows: Unity/Unreal plugins that stream EEG to a Neuro-HGLN microservice; rule-based adaptors using logits; developer SDK for event triggers

- Assumptions/dependencies: tethered/mid-density EEG; motion artifact mitigation; latency budgets of 100–300 ms; non-medical use claims

- Driver state research (stress/fatigue proxies) — Analyze in-vehicle EEG sessions to quantify affect under workload or monotony, informing HMI and alerting policies.

- Sectors: automotive (R&D, HMI/UX labs)

- Potential tools/products/workflows: instrumented test rigs, offline labeling workflows, region-wise interpretability to refine stimuli and UI cues

- Assumptions/dependencies: limited movement; high-quality electrodes; model retraining on domain-specific labels (stress, load); not intended for production safety use yet

- Non-clinical biofeedback and wellness sessions — Provide users and coaches with real-time dashboards indicating regional engagement and affect trends during mindfulness or performance training.

- Sectors: corporate wellness, sports coaching

- Potential tools/products/workflows: session-based visualizations (local–global graphs), open-loop recommendations, exportable reports

- Assumptions/dependencies: non-diagnostic use; supervised, controlled environments; minimal motion; clear consent and data privacy controls

- Clinical and neuroscience research tool — Support studies on affect with anatomically grounded interpretability (local–global connectivity) and stronger cross-session stability.

- Sectors: healthcare research, academia

- Potential tools/products/workflows: reproducible scripts for spatial prior construction, region partitioning, KL-constrained training; LOSO and cross-session evaluation templates

- Assumptions/dependencies: IRB approvals; research-grade EEG; no clinical claims; cohort diversity and ecological validity considerations

- Affective tutoring pilots (controlled classrooms) — Post-hoc analysis to estimate engagement/frustration and iterate instructional design; limited real-time pilots in small groups.

- Sectors: education

- Potential tools/products/workflows: classroom session recordings → batch inference → teacher dashboards; content A/B tests informed by regional patterns

- Assumptions/dependencies: student consent; headset logistics; privacy-preserving workflows; small-scale, controlled trials

- Cross-task extension (cognitive load, drowsiness) — Reuse the architecture to model related mental states by retraining on appropriate labels.

- Sectors: transportation, safety, human factors

- Potential tools/products/workflows: task-specific datasets, transfer learning checkpoints, evaluation suites

- Assumptions/dependencies: availability and quality of labeled datasets; domain shift handling; validation against ground truth

- Developer SDK/library for topology-aware EEG modeling — Package modules for spatial prior graphs, region-level GCNs, and iTransformer for broader biosignal R&D.

- Sectors: software/AI platforms

- Potential tools/products/workflows: PyTorch/TensorFlow implementations, MLOps templates (training with geometric and diversity regularizers), ONNX export for inference

- Assumptions/dependencies: permissive licensing; maintenance; documentation; compute for training

Long-Term Applications

These require further research, scaling, domain adaptation, hardware maturation, or regulatory approval before deployment.

- Real-time, subject-independent affect decoding on consumer headsets — Calibration-free emotion-aware interfaces for apps and devices.

- Sectors: consumer electronics, software

- Potential tools/products/workflows: compressed/quantized Neuro-HGLN variants, on-device inference, continual learning for drift

- Assumptions/dependencies: generalization to low-channel dry electrodes; robustness to motion/noise; battery and compute constraints; privacy-by-design

- Clinical decision support for mood disorder screening/monitoring — Use decoded affect dynamics and regional patterns to aid assessment and longitudinal tracking.

- Sectors: healthcare

- Potential tools/products/workflows: EMR-integrated dashboards, digital phenotyping modules, clinician-facing explainability

- Assumptions/dependencies: multi-site clinical validation; regulatory clearance (e.g., FDA/CE); population diversity; safety and false-positive management

- Closed-loop neurofeedback therapy targeting region-specific circuits — Adjust stimuli or neurostimulation based on local–global brain state estimates.

- Sectors: mental health, neurorehabilitation

- Potential tools/products/workflows: therapy protocols incorporating Neuro-HGLN outputs; adaptive feedback engines; therapist consoles

- Assumptions/dependencies: controlled trials demonstrating efficacy; human oversight; integration with other modalities (fNIRS/ECG); safety frameworks

- Production-grade driver monitoring and adaptive ADAS/HMI — Live detection of stress/affect to adapt assistance levels and interfaces.

- Sectors: automotive

- Potential tools/products/workflows: embedded inference stacks; multi-sensor fusion (EEG, camera, steering); fleet MLOps for updates

- Assumptions/dependencies: ruggedized hardware; stringent artifact handling; regulatory/human factors validation; user acceptance

- Adaptive learning platforms with emotion/engagement-aware personalization — Real-time content pacing and difficulty adjustment at scale.

- Sectors: EdTech

- Potential tools/products/workflows: LMS integration; teacher/student dashboards; policy-controlled personalization knobs

- Assumptions/dependencies: cost and wearability of headsets; ethical safeguards; bias/fairness audits; data governance in schools

- Workplace safety in high-risk sectors — Monitoring stress/fatigue in operators (e.g., energy, manufacturing) with alerts and intervention workflows.

- Sectors: energy, manufacturing, logistics

- Potential tools/products/workflows: PPE-integrated EEG, control room dashboards, escalation protocols

- Assumptions/dependencies: hardware ruggedization; union and policy compliance; clear ROI; strong privacy protections

- Standards and policy frameworks for emotion-AI from neurodata — Benchmarks and procurement specs grounded in anatomical priors and LOSO robustness.

- Sectors: government, standards bodies, compliance

- Potential tools/products/workflows: reference test suites (cross-session/subject), montage metadata requirements, interpretability reporting standards

- Assumptions/dependencies: multi-stakeholder consensus; alignment with privacy laws and medical device regulations

- Multi-modal fusion and transfer to other neuro/bio signals — Extend local–global graph-transformer to MEG/fNIRS or fuse with ECG/EDA/eye tracking for robust affect decoding.

- Sectors: healthcare, wearables, research

- Potential tools/products/workflows: modality-specific prior graphs, cross-modal attention blocks, synchronized acquisition toolchains

- Assumptions/dependencies: availability of large multi-modal datasets; synchronization and calibration; model complexity management

- Explainable AI products for clinicians and scientists — Visualize segregated/integrated brain dynamics underlying affect for hypothesis generation and education.

- Sectors: healthcare, academia

- Potential tools/products/workflows: interactive regional graphs over time, saliency maps aligned to 10–10 montage, report generators

- Assumptions/dependencies: validated interpretability metrics; user-centered design; training and trust calibration

- AutoML for topology-aware biosignal and sensor-network analytics — Generalize the hierarchical local–global approach to tactile arrays, IoT sensor grids, or industrial monitoring.

- Sectors: robotics, IoT, industrial analytics

- Potential tools/products/workflows: automated region partitioners, prior-graph builders from physical layouts, graph-transformer templates

- Assumptions/dependencies: well-defined spatial priors; labeled datasets; domain-specific evaluation criteria

- Regulatory test protocols and ethical governance for neuro-emotion systems — Define safety, accuracy, fairness, and transparency thresholds for deployment.

- Sectors: policy, defense, public sector

- Potential tools/products/workflows: pre-certification evaluations (LOSO, cross-device tests), bias audits across demographics, incident response playbooks

- Assumptions/dependencies: interdisciplinary collaboration; evolving norms around neurodata rights and consent

Cross-cutting assumptions and dependencies

- Data and hardware: Many applications assume access to mid/high-density EEG with known electrode coordinates; performance on low-channel consumer headsets requires adaptation and may degrade.

- Generalization: Reported gains are on benchmark datasets with film stimuli; additional validation is needed in naturalistic, ambulatory contexts and diverse populations.

- Real-time constraints: Latency, compute, and power budgets must be met for live applications; model compression and edge deployment are required.

- Labeling and ground truth: Emotion labels are culturally and contextually sensitive; careful experimental design and multimodal corroboration are advised.

- Privacy and ethics: Neurodata are sensitive; robust consent, on-device processing when possible, minimization, and governance are essential.

- Regulatory status: Clinical and safety-critical uses require rigorous validation and regulatory approvals; current results should be treated as research-grade evidence.
