- The paper demonstrates that instructed retrieval models improve ranking relevance in exploratory search, as shown by NDCG@20 metrics.

- It reveals that models with strong ranking performance often exhibit near-zero p-MRR, indicating limited ability to follow nuanced instructions.

- The study highlights significant differences across aspects, underscoring the need for joint optimization of relevance and instruction sensitivity.

Can Instructed Retrieval Models Really Support Exploration? A Technical Summary

Introduction

The study "Can Instructed Retrieval Models Really Support Exploration?" (2601.10936) evaluates the capacity of instructed retrieval models—retrievers conditioned via natural language instructions—to support exploratory search scenarios, specifically aspect-conditional seed-guided exploration. In contrast to standard retrieval tasks, exploratory search sessions are characterized by under-specified, evolving information needs where users often require retrieval based on nuanced aspects of lengthy queries. The work fills a gap in current evaluation by probing not only ranking relevance but also instruction following fidelity using expert-annotated multi-faceted test collections.

Background and Motivation

Instruction-following models have seen rapid adoption following their success in generative tasks. Building on this, retrieval systems (instructed retrievers) have been proposed to enable user-guided control over ranking via explicit instructions. Recent benchmarks (e.g., MAIR, BRIGHT, CRUMB) have focused on complex retrieval phenomena, but their analysis has not extended robustly to exploratory search scenarios characterized by seed-guided aspect-based exploration. In such settings, users issue queries based on a lengthy seed (e.g., an academic abstract) and wish to retrieve results relevant to specific aspects such as motivation, methodology, or results.

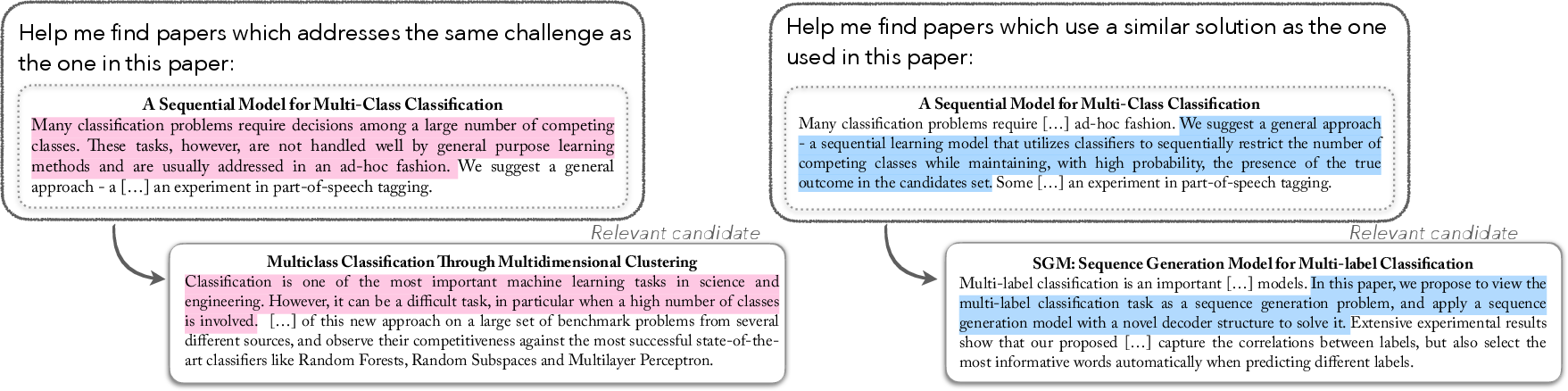

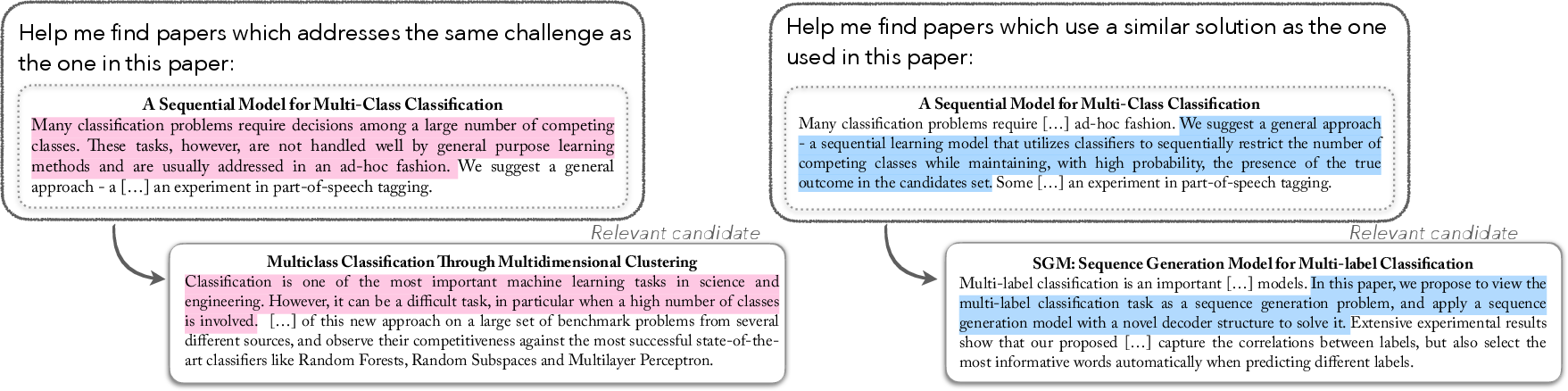

The introduction of the CSFCube test collection, which contains expert annotations for queries with respect to multiple aspects under different instructions (Figure 1), uniquely enables evaluation not just of ranking relevance but also of a model’s factual compliance with specified instructions.

Figure 1: CSFCube enables concurrent evaluation of ranking relevance and instruction following for instructed retrievers via multi-instruction per-query expert annotations.

Experimental Framework

Corpus and Annotations:

CSFCube consists of 50 seed queries (scientific abstracts) and over 800,000 documents. Each seed query is paired with multiple aspects ("Background", "Method", "Result"), and relevance is annotated by at least two experts per aspect, with some seeds annotated across two aspects, facilitating robust instruction-following evaluation.

Models:

The evaluation includes:

- Instruction-agnostic dense retrievers (Specter2, SciNCL)

- Multi-vector aspect-conditional models (otAspire)

- Embedding-based instructed retrievers (GritLM-7B)

- Commercial and open LLMs with Pairwise Ranking Prompting (PRP; including GPT-4o, Llama-3, Mistral-7B)

Retrievers are employed as re-rankers over top-200 documents preselected by SciNCL.

Metrics:

Two axes are evaluated:

- Ranking Relevance: NDCG@20, to measure overall ranking quality.

- Instruction Following: p-MRR [weller2025followir], a pairwise metric comparing result list reordering aligned to changes in instructions/aspects. Values near 0 indicate indifference to instructions; positive (or negative) values signal correct (or counter-intuitive) instruction-following.

Results

Ranking Relevance

GritLM-7B and GPT-4o-PRP outperform instruction-agnostic and baseline aspect-conditional models (NDCG@20 ≈ 42–53 for background aspects). Notably, standard LLMs (e.g., Llama-3, Mistral-7B) as rankers underperform compared to dedicated retrievers. However, the ranking performance of all models remains far from expert annotator upper bounds (NDCG@20 ≈ 77–82).

Instruction Following

Instruction adherence (p-MRR) exhibits a weak alignment with improvements in ranking. Certain models with strong instruction following (e.g., Llama-3-70B, otAspire) display inferior ranking relevance, while high NDCG do not guarantee instruction compliance (GPT-4o-PRP, GritLM-7B p-MRR values near 0 to +2). This signals that advances in overall ranking do not address the core challenge of following nuanced user instructions—critical for exploratory and recall-oriented sessions.

Aspect and Instructional Sensitivity

Performance differences are substantial across aspects—background aspects are consistently handled best, while methodology aspects lag, reflecting the underlying difficulty in matching beyond shallow textual similarity.

Systematic evaluation of instruction variants on GritLM-7B reveals that moving from aspect-specific to generic instructions reduces p-MRR to near 0, confirming some benefit from detailed instructions. However, extensive variations (e.g., paraphrasing, long-form definitions) have minimal effect on both NDCG@20 and p-MRR, underscoring model insensitivity to fine-grained changes in instruction formulation. Using aspect-specific text from queries (aspect subset) markedly improves instruction adherence, at the cost of lower ranking relevance likely due to context loss.

Implications and Future Directions

The analysis demonstrates that while instructed retrievers present clear improvements in ranking relevance for exploratory search, their ability to robustly and intuitively follow detailed instructions remains limited and inconsistent across models, instructions, and aspects. For long, iterative exploratory sessions—where precise user control via aspectual instructions matters—current models exhibit near instruction-agnostic behavior.

These findings point to critical research avenues:

- Architecture and Training: Approaches that jointly optimize for both relevance and instruction sensitivity. Mechanisms to truly "read" and operationalize nuanced instructions in retrieval (potentially via multi-sequence attention or explicit aspect representations) are lacking.

- Benchmarking and Metrics: Requirement for datasets with fine-grained, multi-instruction expert-labeled judgments (as CSFCube offers), and robust instruction-following measures (like p-MRR) to drive model development.

- Interaction Design: Since models are insensitive to paraphrases and minor instruction structure, design burden on end-users may be reduced, but exposes a ceiling in model adaptivity.

- Downstream Applications: The current generation may already benefit search where aspectual context is coarse or closely tied to surface semantic similarity, but cannot yet support dynamic research literature review or iterative exploration requiring precise control (as in science-oriented search platforms or RAG systems for ideation).

Conclusion

The study establishes a comprehensive, empirical baseline for the application of instructed retrievers in exploratory, aspect-conditioned retrieval—a substantially under-explored but practically relevant setup. While modern instructed models enhance baseline relevance, they fail to deliver robust, fine-grained instruction following required to realize the true promise of natural-language steered search in open-ended, long-running settings. These insights motivate further research in both architecture and evaluation for next-generation interactive search systems.