Artificial Intelligence and the US Economy: An Accounting Perspective on Investment and Production

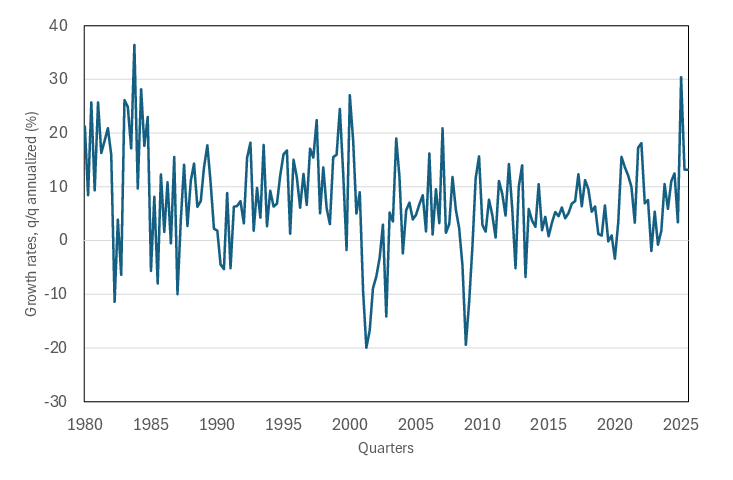

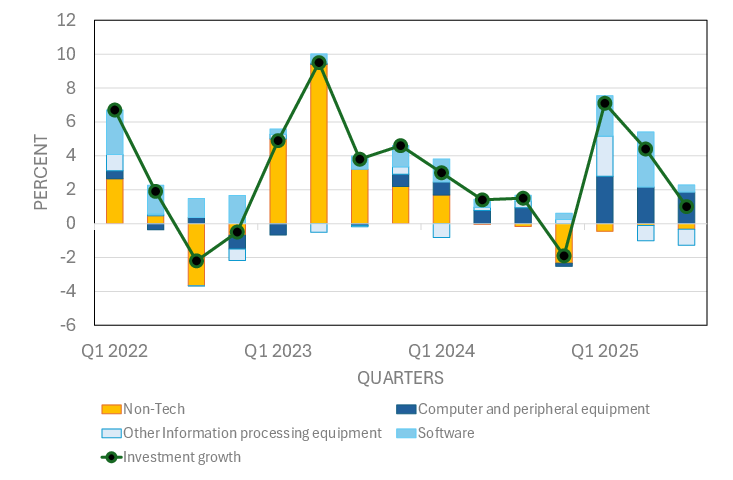

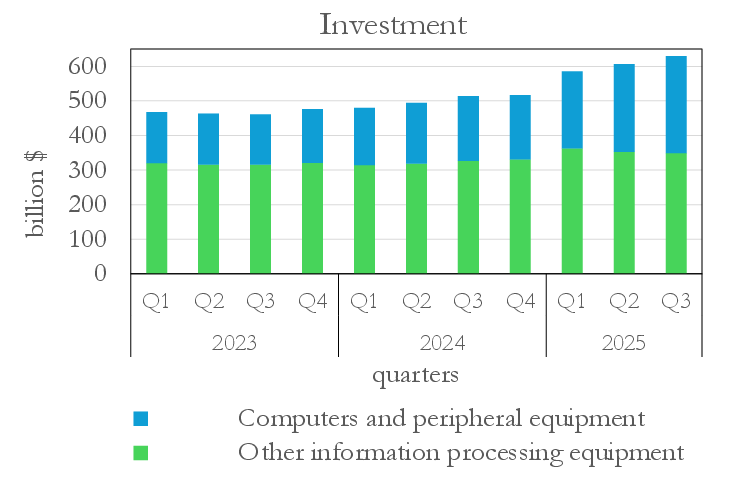

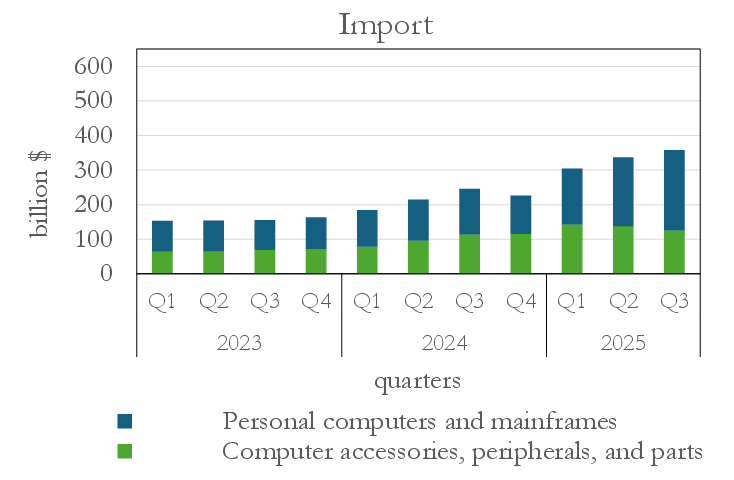

Abstract: AI has moved to the center of policy, market, and academic debates, but its macroeconomic footprint is still only partly understood. This paper provides an overview of how the current AI wave is captured in US national accounts, combining a simple macro-accounting framework with a stylized description of the AI production process. We highlight the crucial role played by data centers, which constitute the backbone of the AI ecosystem and have attracted formidable investment in 2025, as they are indispensable for meeting the rapidly increasing worldwide demand for AI services. We document that the boom in IT and AI-related capital expenditure in the first three quarters of the year has given an outsized boost to aggregate demand, while its contribution to GDP growth is smaller once the high import content of AI hardware is netted out. Furthermore, simple calculations suggest that, at current utilization rates and pricing, the production of services originating in new AI data centers could contribute to GDP over the next few quarters on a scale comparable to that of investment spending to date. Short reinvestment cycles and uncertainty about future AI demand, while not currently acting as a macroeconomic drag, can nevertheless fuel macroeconomic risks over the medium term.

Explain it Like I'm 14

Overview

This paper looks at how the current wave of AI is showing up in the U.S. economy right now. The authors focus on what can be measured today in official statistics, especially GDP, investment, imports, and the value created by data centers. Their big message: data centers are the backbone of AI, and while AI-related spending is giving a strong boost to demand, the part that actually lifts U.S. GDP is smaller than it looks because a lot of the hardware is imported. As new data centers start selling AI and cloud services, their revenues can add to GDP roughly on the same scale as the recent investment—at least in the near term. Over the medium term, fast upgrade cycles and uncertainty about demand could bring risks.

Key questions the paper asks

- How is today’s AI boom (especially the surge in data centers) showing up in U.S. GDP?

- Which parts of the AI supply chain add income inside the U.S. versus abroad?

- Beyond building data centers, how do AI services (like cloud and chatbots) show up in GDP?

- How big is the current boost to demand, and how might that change soon?

How the authors studied it (in simple terms)

Think of the economy like a big scoreboard with rules for what counts where:

- GDP by spending: We add up what people buy (consumption), what businesses buy to invest (investment), what the government buys, and what the world buys from us (exports), then subtract what we buy from abroad (imports).

- GDP by production/value added: We add up what each industry actually produces in income terms (wages, profits, etc.).
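The two scoreboard rules above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' method: the function names, the industry labels, and every number below are hypothetical and chosen only so that both approaches add up to the same total, as they do in principle in national accounts.

```python
def gdp_by_expenditure(consumption, investment, government, exports, imports):
    """Spending approach: GDP = C + I + G + X - M."""
    return consumption + investment + government + exports - imports


def gdp_by_value_added(industries):
    """Production approach: sum of (gross output - intermediate inputs) per industry."""
    return sum(output - inputs for output, inputs in industries.values())


# Hypothetical toy economy (illustrative numbers, not taken from the paper).
spending_gdp = gdp_by_expenditure(
    consumption=70, investment=20, government=15, exports=10, imports=15
)

toy_industries = {
    # name: (gross output, intermediate inputs)
    "hardware": (40, 25),
    "cloud_services": (50, 20),
    "everything_else": (90, 35),
}
production_gdp = gdp_by_value_added(toy_industries)

print(spending_gdp, production_gdp)
```

In real data the two measures differ by a statistical discrepancy; the toy numbers are constructed so that they match exactly.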

What they did:

- Mapped the AI ecosystem to see who does what and where:

  - Hardware makers design chips and build servers.

  - Cloud companies own data centers and rent computing power (“pay by the hour”).

  - AI labs train models and sell AI tools (via APIs or subscriptions).

- Looked at official U.S. data for 2025 (first three quarters) on investment, imports, and industry value added, plus trade data to see where servers are coming from.

- Used a simple “accounting” approach (no fancy forecasting) that shows where AI spending appears in the national accounts today.

- Cross-checked with company reports (big tech capex) and market prices for cloud GPU rentals to estimate how quickly data centers can earn back their costs (payback time).

Helpful translations:

- Data center: a giant “computer factory” that powers AI training and AI services.

- GPU/AI chip: the “muscle” that does the heavy math for AI.

- Capex: big spending by companies to build or buy long-lasting things like buildings, servers, and networks.

- Imports subtract from GDP: If you buy a server made abroad, U.S. “demand” goes up, but U.S. GDP doesn’t rise by the full amount because the income from making that server happened in another country.
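The "imports subtract from GDP" point can be made concrete with a small worked example. The sketch below assumes a single investment outlay and a known imported share; the function name and the numbers are hypothetical and are not figures from the paper.

```python
def import_adjusted_contribution(investment_spending, imported_share):
    """Split an investment outlay into its demand boost and its net GDP boost.

    imported_share is the fraction of the outlay spent on foreign-made goods.
    """
    if not 0.0 <= imported_share <= 1.0:
        raise ValueError("imported_share must be between 0 and 1")
    imports = investment_spending * imported_share
    return {
        "demand_boost": investment_spending,        # what buyers spend
        "imports": imports,                         # income earned abroad
        "gdp_boost": investment_spending - imports, # what stays in domestic GDP
    }


# Hypothetical example: 100 of server purchases, three quarters of it imported.
result = import_adjusted_contribution(investment_spending=100.0, imported_share=0.75)
print(result)
```

Demand rises by the full outlay, while the GDP boost is only the part that is not imported.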

What they found

- Investment is booming—but a lot is imported, so the GDP bump is smaller than the headlines

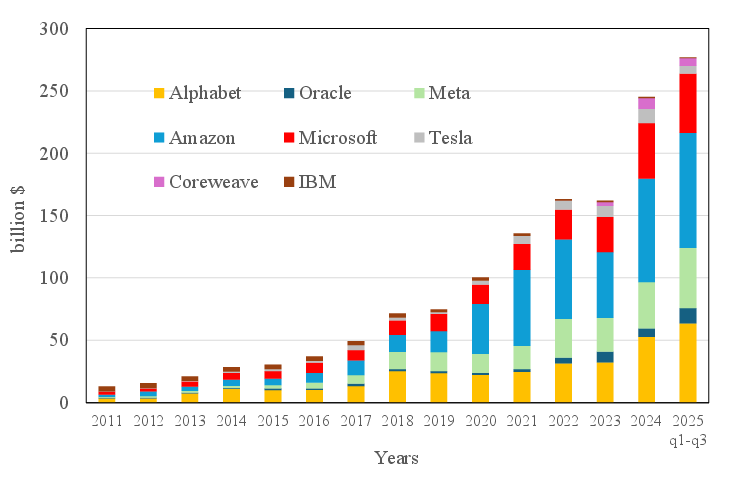

- In early–mid 2025, U.S. companies massively increased spending on tech equipment and software, especially servers for AI. Big tech firms are on track for roughly $300–350 billion in 2025 capex, mostly for AI/data centers.

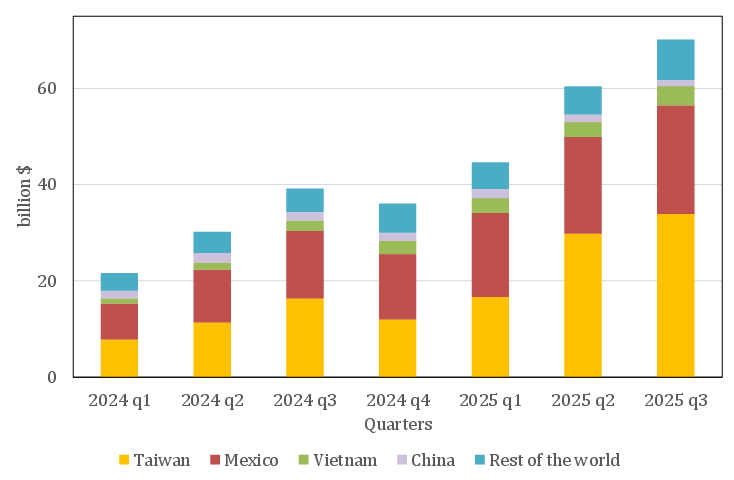

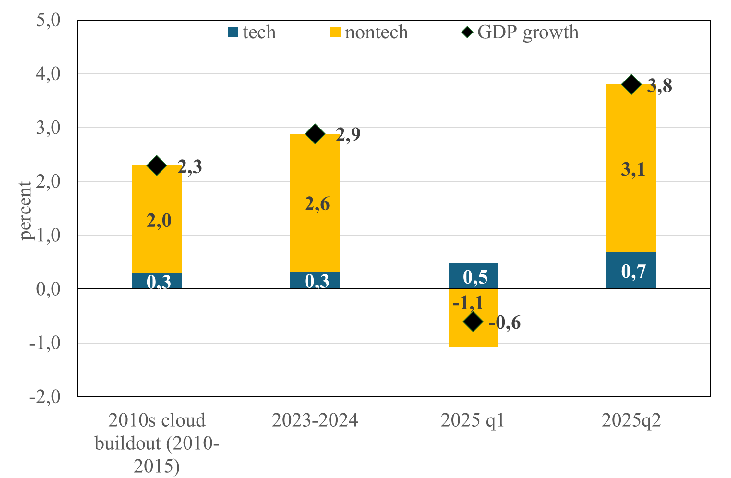

- Much of the hardware (servers and parts) is imported, notably from Mexico and Vietnam. Because imports are subtracted in GDP accounting, the net boost to U.S. GDP is smaller than the raw investment numbers suggest.

- After accounting for imports, tech investment still helped GDP, but its contribution was a minority share of total GDP growth, smaller than that of strong consumer spending.

- Data centers are the backbone of AI—and a key channel into GDP

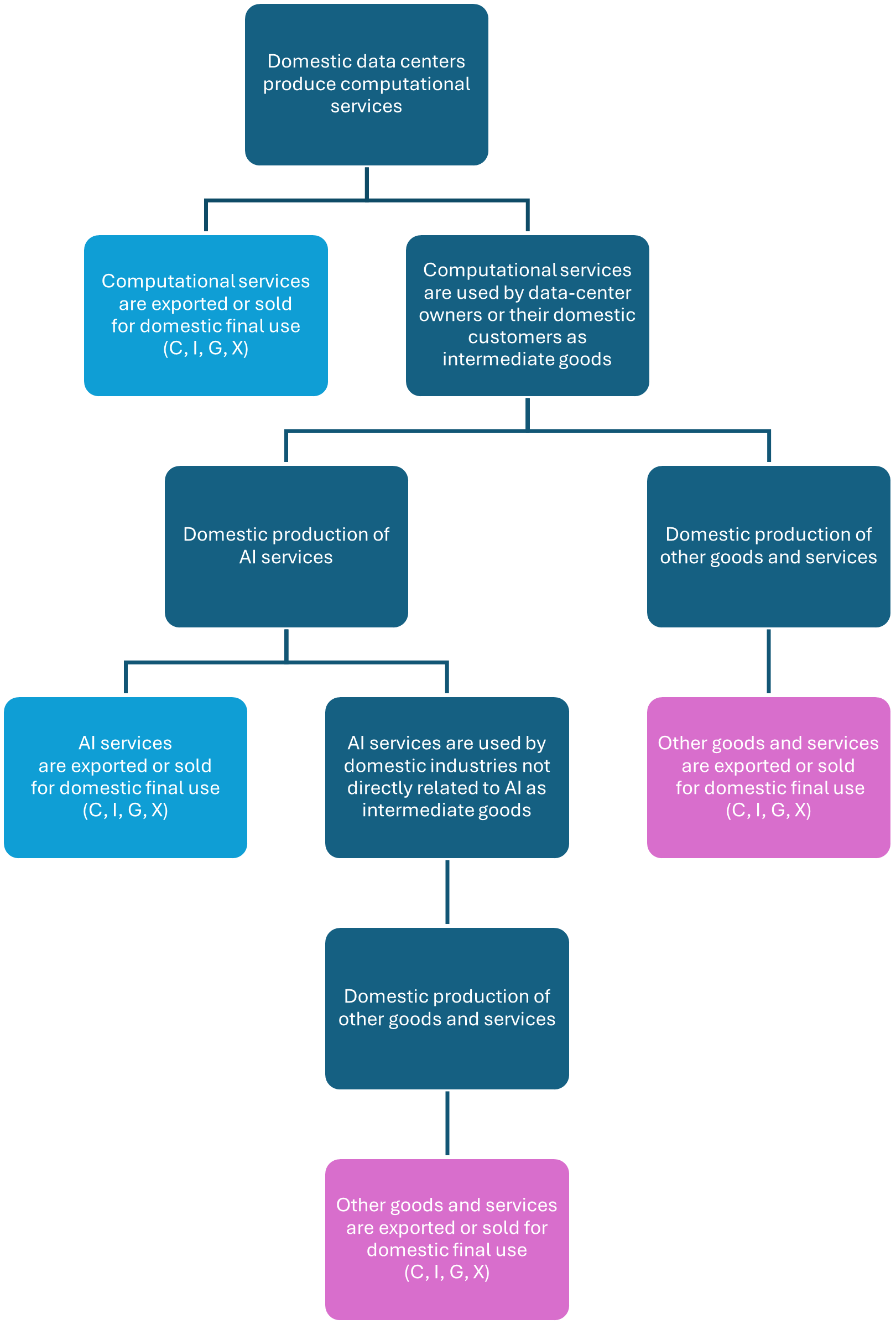

- Data centers turn big capex into real services: they rent out computing power for AI training and running models (inference), plus regular cloud services.

- AI services show up in GDP in two ways:

  - Direct final use: for example, a company paying for AI training (counted as R&D investment), a consumer’s chatbot subscription (consumption), the government’s cloud spending (government consumption), or foreign customers using U.S.-based cloud (exports).

  - Intermediate use: when other industries buy AI services as inputs to make their own products. This doesn’t show up as “final demand” directly, but it changes costs and productivity.

- Right now, evidence suggests data centers are running near full capacity and renting GPUs at high prices. Simple calculations imply a short payback time (about a year) for new AI racks that are fully used. That means the revenue from selling AI services over the next few quarters could be as large as the recent investment outlays.

- The “revenue channel” is already visible in industry numbers and exports

- Value-added data (the production side of GDP) show strong contributions from tech-heavy sectors like computer/electronic manufacturing, data processing, and computer systems design.

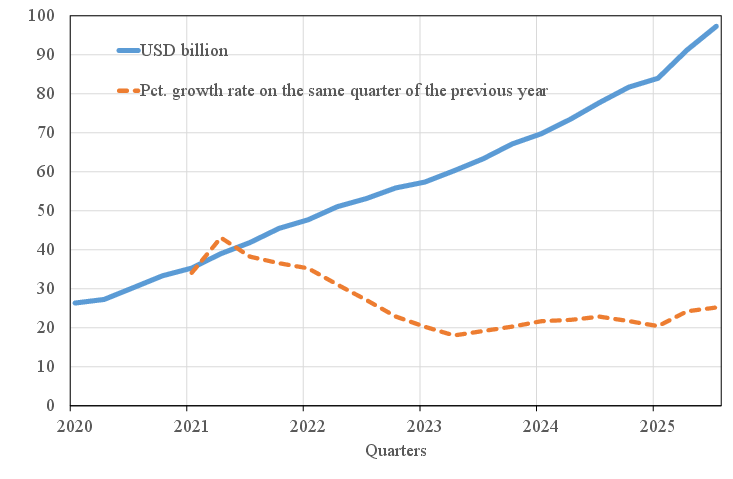

- The three biggest U.S. cloud providers’ data-center revenues keep growing above 20% year over year, and they represent only part of the total market (there are also big private clouds and smaller providers).

- U.S. “exports of computer services” (which include cloud) are rising, suggesting AI/cloud is increasingly important for foreign sales.

- Medium-term risks: fast replacement cycles and demand uncertainty

- AI hardware can become obsolete quickly and may wear out under heavy use, which means companies might need to reinvest often. That can squeeze free cash flow over time, even if revenues are high.

- However, short payback periods help—many facilities can earn back their cost within a year at current prices and utilization. Older GPUs can also be reused for lighter tasks.

- If the hardware supply chain remains global, a sizable chunk of future capex will keep flowing abroad, limiting the U.S. income boost from the spending alone.

- The biggest unknown is the future pace of AI demand. Adoption has been extraordinarily fast compared with past tech waves, but if demand slows unexpectedly, it could create financial and macro risks.
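The payback reasoning in the findings above (up-front cost divided by yearly rental income) can be sketched as follows. This is a rough illustration under stated assumptions, not the paper's calculation: the rack cost and the GPU-hour price are hypothetical placeholders picked to keep the arithmetic readable, and operating costs default to zero. Only the count of 72 GPUs per rack comes from the NVL72 product name.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_rental_revenue(gpus, price_per_gpu_hour, utilization):
    """Yearly revenue from renting GPUs by the hour at a given utilization (0 to 1)."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return gpus * price_per_gpu_hour * HOURS_PER_YEAR * utilization


def payback_years(capex, gpus, price_per_gpu_hour, utilization, annual_opex=0.0):
    """Years needed for net rental revenue to cover the up-front cost."""
    net_revenue = annual_rental_revenue(gpus, price_per_gpu_hour, utilization) - annual_opex
    if net_revenue <= 0:
        return float("inf")  # the rack never pays for itself
    return capex / net_revenue


# Hypothetical placeholders (NOT figures from the paper).
RACK_CAPEX = 3_000_000.0   # assumed cost of one rack, in dollars
GPUS_PER_RACK = 72         # an NVL72 rack holds 72 GPUs
PRICE_PER_GPU_HOUR = 5.0   # assumed rental price, in dollars

for utilization in (1.0, 0.75, 0.5):
    years = payback_years(RACK_CAPEX, GPUS_PER_RACK, PRICE_PER_GPU_HOUR, utilization)
    print(f"utilization {utilization:.0%}: payback {years:.2f} years")
```

Payback time scales inversely with utilization, so halving utilization doubles the payback period. That is why the short payback described above depends on data centers staying close to fully used.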

Why this matters

- For policymakers (like the Federal Reserve), it clarifies why the economy can look strong (big spending on AI) without a proportionally large jump in U.S. GDP—because imports are large—and helps them judge inflation and labor-market pressures more accurately.

- For industry and workers, it shows where the money is going now (data centers and cloud) and how AI services are entering everyday production and consumption.

- For financial stability, it helps separate real, income-generating growth from growth mainly tied to asset prices or investment that benefits foreign producers.

- For the growth outlook, the near term looks supported by both continued capex and rising AI-service revenues; the medium-term path depends on how quickly costs fall, how broadly AI boosts productivity, and whether demand keeps up with the massive build-out.

Bottom line

- Today’s AI boom is being felt first as a spending surge on data centers and servers.

- Because much of the hardware is imported, the net lift to U.S. GDP is smaller than the raw investment totals.

- As new data centers go live and sell AI services, their revenues can add to GDP on a scale similar to the recent investment—especially while utilization and prices stay high.

- Over time, the big question is whether AI drives lasting productivity gains. Meanwhile, short hardware cycles and uncertain demand create risks that are important to watch.

Knowledge Gaps

Below is a concise list of the paper’s unresolved knowledge gaps, limitations, and open questions. Each item is framed to be actionable for future research.

- Quantify the split of AI services between final demand (consumption, investment, exports, government) and intermediate use across industries; develop sector-level estimates using input–output tables and firm surveys.

- Construct a firm-level mapping from large tech companies’ AI/data-center capex to National Income and Product Accounts (NIPA) categories (structures vs equipment vs IP products), and quantify the domestic vs foreign location of those investments.

- Develop hedonic price indices for GPU accelerators and AI server racks to correct real investment measures for rapid quality and price-performance changes; validate whether current deflators understate real AI investment.

- Resolve misclassification risks for GPU servers (capital vs intermediate inputs) in trade and investment statistics; create a standardized classification (HS/NIPA/BLS) that cleanly separates servers from “personal computers/mainframes.”

- Decompose the domestic value added embedded in AI hardware supply chains (chip design, fabrication, packaging, server assembly) using Trade in Value Added (TiVA) or firm-level cost/profit data; quantify the US share vs trading partners (e.g., Mexico, Vietnam, Taiwan, Korea).

- Identify how cross-border intra-group transfer pricing for cloud and AI services affects measured US exports, profits, and value added; reconcile national accounts with multinational reporting structures.

- Measure data-center capacity utilization rates (US vs non-US) using operational metrics (GPU-hours sold, queue times) and validate payback-period assumptions under varying utilization and pricing scenarios.

- Analyze pricing dispersion and contract structures for enterprise cloud compute (committed spend, dedicated capacity) vs public list prices; incorporate these into revenue/payback models.

- Quantify the share of hyperscalers’ cloud revenues attributable specifically to AI workloads vs traditional cloud services; establish a defensible AI revenue split to avoid over/under-attribution.

- Disentangle public cloud vs private cloud (e.g., Apple, Meta, Tesla) output: estimate how much private-cloud AI production is sold to final users (recorded as output) vs internally consumed (affecting intermediate inputs and value added).

- Improve measurement of “Exports of computer services” by isolating AI-specific exports (e.g., GPU rental, AI APIs, LLM inference) and distinguishing exports originating from US-based vs foreign data centers.

- Standardize national accounts treatment of AI model training expenditures: when and how training compute, data acquisition, and model development are capitalized as R&D IP vs expensed; document firm heterogeneity and its macro measurement impact.

- Estimate energy and operating cost shares at scale for AI data centers (electricity, cooling, networking), and assess their pass-through to sectoral value added, prices, and regional infrastructure investment needs.

- Model medium-term dynamics of short reinvestment cycles: quantify replacement investment profiles, free cash flow trajectories, and ROIC under different AI demand/pricing scenarios; assess implications for macro volatility and investment-led growth.

- Provide empirical evidence on actual GPU failure rates, thermal stress, and hardware lifetimes in AI data centers; align accounting depreciation schedules with observed longevity and redeployment of older chips.

- Validate the claim that near-term AI service revenues from new data centers can match capex: construct cohort analyses of new capacity (commissioning dates, realized revenue, utilization) and compare against forecasted payback.

- Map the geography of data-center capacity, revenues, and profit booking (physical location vs legal domicile) to the recording of GDP by country/industry; quantify discrepancies from profit shifting and corporate structuring.

- Assess cannibalization and complementarities between AI workloads and traditional cloud services; estimate net effects on sectoral value added rather than gross revenue growth.

- Measure the training vs inference compute shares over time and their distinct revenue models; link these shares to final vs intermediate demand classification in national accounts.

- Investigate the productivity and labor-market effects of AI adoption (the acknowledged “second-order” impacts): quantify changes in total factor productivity, labor demand by occupation, and the substitution of labor with AI services across industries.

- Quantify the public sector’s AI investment and consumption (federal/state/local), including defense and administrative uses; estimate contributions to government expenditures and public-sector productivity.

- Address potential mismeasurement in imports via re-exports/transshipment and component bundling; refine server import proxies using granular HS codes and supplier shipment data to separate AI servers from other ICT goods.

Practical Applications

Immediate Applications

These applications can be deployed now using the paper’s accounting framework, sectoral evidence, and operational insights about data centers, imports, and AI service revenues.

- Import‑adjusted GDP attribution for AI investment; sector: finance/policy; tool/workflow: “AI Investment Impact Calculator” that nets tech imports from IT investment to estimate domestic GDP contribution using BEA accounts and Trade Monitor data; assumptions/dependencies: timely trade data, correct classification of servers/GPUs as capital goods, stable import patterns (notably Mexico/Vietnam).

- Data‑center capex planning based on payback and utilization; sector: cloud/software/infrastructure; tool/workflow: “Data Center ROI Planner” that calibrates rack‑level payback using current GPU‑hour prices and expected utilization (paper’s ~1‑year payback for fully utilized GB200 NVL72 racks); assumptions/dependencies: sustained high utilization, current pricing, power availability, cooling capacity, supply lead times.

- Real‑time GPU pricing and capacity management; sector: cloud providers/hyperscalers; tool/workflow: “GPU Pricing Telemetry & Capacity Allocator” that ingests public GPU‑hour price pages and committed‑spend contracts to optimize pricing, reservations, and enterprise SLAs; assumptions/dependencies: continued transparency of on‑demand prices, committed‑spend contract mix.

- Server import monitoring and procurement risk management; sector: hardware vendors/IT procurement/logistics; tool/workflow: “Server Import Tracker” that flags origin concentration (Mexico/Vietnam), lead‑time risks, and customs constraints for GPU server racks; assumptions/dependencies: USMCA rules, port throughput, geopolitical stability, supplier diversification.

- Monetary policy dashboards that separate AI’s demand vs domestic value added; sector: central banks; tool/workflow: “AI‑Adjusted Demand Dashboard” linking investment, imports, value‑added sectors (data processing, computer systems design) to parse growth and inflation signals; assumptions/dependencies: BEA release cadence, accurate sector mapping, persistence of current AI capex boom.

- Financial stability monitoring of reinvestment cycles and free cash flow pressure; sector: finance/banking/regulators; tool/workflow: “AI Reinvestment Cycle Monitor” tracking depreciation assumptions, ROIC, capex‑to‑revenue ratios, hyperscaler exposures; assumptions/dependencies: disclosure of capex/revenue by firms, short hardware lifetimes offset by quick payback, secondary market for GPUs.

- Energy and grid planning for high‑density data centers; sector: energy/utilities; tool/workflow: “Power Density & Cooling Planner” for substation upgrades, demand response, liquid cooling retrofits, and siting; assumptions/dependencies: permitting timelines, local grid capacity, water availability, utility–hyperscaler coordination.

- Corporate accounting policies for AI compute in R&D; sector: enterprise finance/accounting; tool/workflow: “AI R&D Capitalization Workflow” to classify training compute as investment (intellectual property) vs operating expense, aligned with national accounts; assumptions/dependencies: GAAP/IFRS interpretation, audit comfort, internal cost tracking.

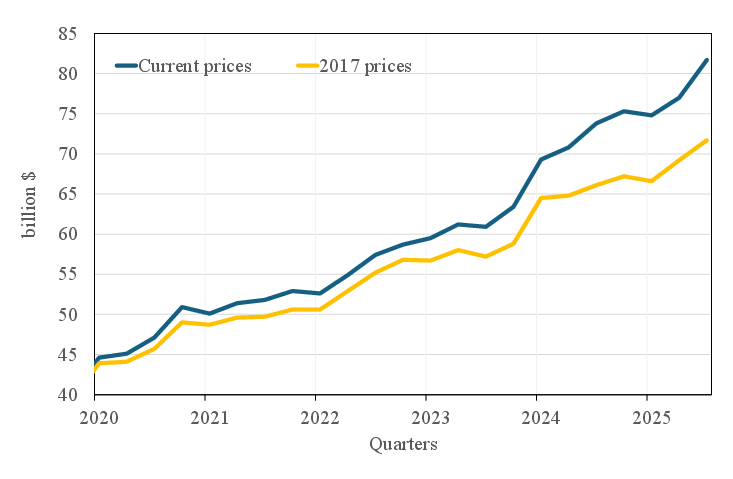

- Export promotion for US cloud/AI services; sector: trade/commerce; tool/workflow: “Cloud Services Export Playbook” targeting growth in “Exports of computer services” (paper’s annualized ~$82b) via cross‑border data strategies and enterprise deals; assumptions/dependencies: data transfer regimes, privacy/compliance, cross‑border tax treatment.

- Industrial policy targeting domestic value added; sector: policy/industrial strategy; tool/workflow: “AI Hardware Localization Roadmap” that coordinates Chips Act incentives, server assembly and memory module capacity to raise domestic share; assumptions/dependencies: cost competitiveness, workforce availability, supplier ecosystems.

- Statistical agency pilots for AI satellite accounts; sector: statistics/academia/policy; tool/workflow: “AI Satellite Account & Classification Guide” to separate AI from broader IT, ensure GPU servers are treated as capital, and capture cloud AI revenues and exports; assumptions/dependencies: firm reporting, survey response rates, price index development for GPUs/services.

- Enterprise procurement budgeting for AI services; sector: corporate IT/operations; tool/workflow: “AI Procurement Playbook” to decide final vs intermediate use, allocate ChatGPT/Copilot/AIaaS spend, and track cost substitution (labor vs intermediate inputs); assumptions/dependencies: usage telemetry, adoption stage (paper suggests much AI is still sold to final users).

- Academic replication and teaching modules; sector: academia/economics; tool/workflow: “AI Macro‑Accounting Replication Pack” using BEA sectoral value added (e.g., data processing, computer systems design), import‑adjusted investment contributions, and cloud revenue indicators; assumptions/dependencies: data availability, reproducible pipelines.
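As one illustration of the list above, the reinvestment-cycle monitoring idea could start from a few simple ratios. The sketch below assumes a steady-state fleet that is replaced evenly over its lifetime; the function names and all numbers are hypothetical and do not describe any real firm or any figure in the paper.

```python
def replacement_capex_per_year(fleet_cost, hardware_life_years):
    """Yearly spending needed to keep a fleet constant if it is replaced evenly."""
    if hardware_life_years <= 0:
        raise ValueError("hardware_life_years must be positive")
    return fleet_cost / hardware_life_years


def free_cash_flow(operating_cash_flow, capex):
    """Cash left after capital spending."""
    return operating_cash_flow - capex


def capex_to_revenue(capex, revenue):
    """Share of revenue absorbed by capital spending."""
    return capex / revenue


# Hypothetical firm (illustrative units, not from the paper).
FLEET_COST = 120.0
OPERATING_CASH_FLOW = 50.0
REVENUE = 80.0

for life in (6, 4, 2):
    capex = replacement_capex_per_year(FLEET_COST, life)
    fcf = free_cash_flow(OPERATING_CASH_FLOW, capex)
    ratio = capex_to_revenue(capex, REVENUE)
    print(f"life {life}y: capex {capex:.0f}, free cash flow {fcf:.0f}, capex/revenue {ratio:.2f}")
```

With revenue held fixed, a shorter hardware life raises yearly replacement spending and lowers free cash flow, which is the squeeze described in the medium-term risks.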

Long‑Term Applications

These applications require further research, scaling, data collection, regulation, or infrastructure development before broad deployment.

- Updated input‑output tables with an explicit AI services sector; sector: academia/statistics/policy; tool/workflow: “AI Input‑Output Extension” tracing intermediate vs final use of AI services and spillovers to non‑AI industries; assumptions/dependencies: firm surveys on AI usage, granular revenue splits from hyperscalers and labs.

- Hedonic price indices for GPUs and cloud AI services; sector: statistics/academia; tool/workflow: “GPU/AI Service PPI Methodology” capturing rapid price‑performance changes and contract heterogeneity; assumptions/dependencies: detailed hardware specs, service bundles, negotiated contract access.

- Energy co‑optimization and waste‑heat recovery from data centers; sector: energy/municipal planning; tool/workflow: “District Heating & Cooling Integration for DCs” to reuse heat, reduce water use, and stabilize grids; assumptions/dependencies: local regulations, technical feasibility, cost‑sharing models.

- Domestic capacity expansion for AI hardware fabrication and server assembly; sector: industrial policy/manufacturing; tool/workflow: “US AI Hardware Capacity Builder” aligning fabs, memory, and ODM assembly near data‑center hubs; assumptions/dependencies: capital intensity (tens of billions), supply chain partnerships, long lead times.

- Standardized disclosures separating AI vs traditional cloud revenues; sector: capital markets/regulation; tool/workflow: “AI Revenue Split Reporting Standard” for hyperscalers/labs to improve macro measurement and investor transparency; assumptions/dependencies: SEC/IASB guidance or investor pressure.

- International transfer‑pricing and taxation frameworks for cross‑border AI services; sector: policy/international tax; tool/workflow: “BEPS for AI Services” to align revenue recognition across jurisdictions and reflect data center locations; assumptions/dependencies: OECD consensus, enforcement capacity.

- Macroprudential stress testing of AI demand uncertainty and reinvestment cycles; sector: central banks/regulators; tool/workflow: “AI Capex Stress Test Module” embedding boom‑bust demand scenarios, obsolescence shocks, and global supply chain disruptions; assumptions/dependencies: bank exposure mapping to hyperscalers, scenario design.

- Workforce development pipelines for AI infrastructure roles; sector: education/workforce; tool/workflow: “AI Infrastructure Talent Pathways” (power systems, thermal engineering, high‑speed networking, DC operations); assumptions/dependencies: curriculum updates, apprenticeship funding, industry partnerships.

- Regional siting and permitting frameworks for sustainable data centers; sector: local government/urban planning; tool/workflow: “Data Center Siting Guidelines” balancing land, water, emissions, and community benefits; assumptions/dependencies: stakeholder engagement, environmental standards, timelines.

- Secondary markets and lifecycle management for GPUs; sector: hardware/resale/SMEs; tool/workflow: “GPU Lifecycle Marketplace” to redeploy older accelerators for less demanding tasks, extending amortization; assumptions/dependencies: dependable performance tiers, logistics, quality assurance.

- National surveys to measure final vs intermediate use shares of AI services; sector: statistics/academia; tool/workflow: “AI Usage Survey Instrument” integrated with economic censuses to quantify enterprise use cases and cost substitution; assumptions/dependencies: survey design quality, response rates, confidentiality protections.

- Rigorous productivity and employment studies on second‑round AI effects; sector: academia/policy; tool/workflow: “AI Adoption Impact Lab” using linked firm‑level data, randomized pilots, and sectoral case studies to estimate durable growth impacts; assumptions/dependencies: data access, multi‑year horizons, causal identification.

Glossary

- Aggregate demand: The total demand for goods and services within an economy. "has given an outsized boost to aggregate demand"

- AI accelerators: Specialized processors designed to speed up AI workloads, typically GPUs or TPUs. "AI workloads rely on clusters built around large arrays of costly AI accelerators (GPUs and TPUs)."

- AI as a Service (AIaaS): Delivery of AI model capabilities over the internet, often via APIs and priced by usage. "AI as a Service (AIaaS): AI laboratories expose their models through Application Programming Interfaces (APIs)"

- Amortizing: Allocating the cost of a capital asset over its useful life. "most critically, amortizing the substantial physical investment in data centres"

- Annualized: Expressing a rate (e.g., growth) as if it applied for a full year. "measured as annualized quarterly growth over the previous quarters"

- Application Programming Interfaces (APIs): Interfaces that allow software to interact programmatically with services or models. "Application Programming Interfaces (APIs), enabling organizations to submit queries programmatically and receive model‑generated responses."

- Capital expenditures (capex): Long-term investments in physical or intangible assets. "capital expenditures (capex henceforth)"

- Chips Act: U.S. legislation providing incentives for domestic semiconductor manufacturing. "public financial incentives provided by the Chips Act"

- Cloud infrastructure providers: Companies that own and operate data centers and lease computing capacity. "Cloud infrastructure providers own and operate globally distributed data centers."

- Conjunctural: Relating to short‑term cyclical economic conditions. "More recently, a conjunctural issue attracting widespread attention from the media and market analysts is the extent to which AI‑related capital investment is currently contributing to US GDP dynamics."

- Data centers: Industrial facilities housing compute, storage, and networking systems that provide cloud and AI services. "data centers are clearly the backbone of the AI ecosystem."

- Expenditure approach: A method of calculating GDP by summing consumption, investment, government spending, and net exports. "notably more than the figure recovered through the expenditure approach that only captures investment."

- Fabs: Semiconductor fabrication plants where chips are manufactured. "semiconductor fabrication plants (so‑called fabs)."

- Final demand: Purchases of goods and services for final use (consumption, investment, exports, government). "Examples of direct final demand contributions of data center and AI services"

- Free cash flow: Cash generated after operating expenses and capital expenditures, available for distribution or reinvestment. "depress free cash flow, limiting the share of operating profits that can ultimately be distributed to investors"

- GB200 NVL72 architecture: A specific NVIDIA high‑density GPU rack configuration used in AI data centers. "current cloud rental prices for NVIDIA’s GB200 NVL72 architecture."

- GPU‑hour: A billing unit representing one hour of GPU usage in cloud pricing. "AI compute is billed per GPU‑hour (or per second/minute), with separate charges for storage and network usage."

- High‑throughput storage: Storage systems capable of sustaining very high data rates to feed AI workloads. "high‑throughput storage."

- Hyperscalers: The largest cloud providers operating massive global data center fleets. "The largest providers — often referred to as hyperscalers — are Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP)."

- IaaS: A cloud computing model providing virtualized infrastructure resources on demand. "(Infrastructure as a Service - IaaS)."

- Import content: The share of imported components embedded in domestic spending or investment. "its contribution to GDP growth is smaller once the high import content of AI hardware is netted out."

- Information processing equipment: A national accounts category covering IT hardware and software investment. "private Fixed investment in information processing, equipment and software"

- Inference: The process of running trained AI models to generate outputs in production. "AI‑model training and inference."

- Intermediate inputs: Goods or services used in the production process but not counted as final demand. "computational capacity and AI services can be used as intermediate inputs by domestic producers"

- Intellectual property products: Intangible assets (e.g., software) treated as investment in national accounts. "Software (from the intellectual property products category)."

- Model training: The process of optimizing an AI model’s parameters using data. "model training and inference."

- Moore’s law: The empirical observation that the number of transistors on a chip doubles roughly every two years; the pace has been slowing. "Although replacement cycles may nowadays be longer due to the slowing down of Moore’s law."

- National Income and Product Accounts (NIPA): The U.S. statistical framework for measuring national economic activity. "Gross investment in intellectual property (NIPA 5.2.5U.19,47)"

- Original Design Manufacturer (ODM): A firm that designs and produces products that are branded and sold by another company. "Original Design Manufacturers, ODM"

- Original Equipment Manufacturer (OEM): A firm that manufactures products sold under its own brand. "Original Equipment Manufacturers, OEM"

- Oligopolies: Market structures dominated by a few firms with high barriers to entry. "the main segments of the hardware industry described above function as oligopolies."

- Pay‑as‑you‑go: A pricing model where customers pay only for the resources they use. "A common pricing model of cloud‑infrastructure services is pay‑as‑you‑go"

- Payback period: The time needed for an investment’s cumulative returns to equal its initial cost. "consistent with a payback period close to one year for fully utilised facilities."

- Power density: Electrical power per unit area, critical for AI‑focused data center design. "These AI‑focused facilities require much higher power density"

- Quasi‑hyperscaler: A company with hyperscale‑like data center capacity primarily supporting its own services. "Meta is sometimes classified as a “quasi‑hyperscaler” because its global data‑center footprint is comparable, although its infrastructure primarily supports its own applications."

- R&D: Research and development spending, treated as investment in national accounts. "AI‑related R&D (for example, related to chip design and model development)"

- Return on invested capital (ROIC): A measure of profitability relative to the capital invested in the business. "returns on invested capital (ROIC)."

- Software‑as‑a‑Service (SaaS): Software delivered via subscriptions over the internet. "Software‑as‑a‑Service (SaaS): AI models are integrated into user‑facing applications"

- Tensor Processing Units (TPUs): Specialized AI accelerators designed for tensor operations. "Tensor Processing Units (TPUs)."

- Trade Monitor data: A dataset providing granular breakdowns of international trade flows. "Trade Monitor data, providing a more granular breakdown of trade flows."

- Transfer pricing: Pricing of transactions within a multinational group, affecting cross‑border reported profits. "cross‑border intra‑group transfer pricing."

- Utilisation rates: The degree to which available capacity is actively used. "high utilisation rates of new data centers"

- Value added: Output minus intermediate inputs; the basis for GDP by industry measures. "value added in the Manufacturing of computer and electronic products"

- Yield levels: The proportion of manufactured chips meeting quality and performance standards. "achieve economically viable yield levels."
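The "Annualized" entry in the glossary above can be made concrete with a short calculation. The sketch assumes the usual compound convention for annualizing quarterly growth (four quarters of the same growth rate); the function name and the example levels are hypothetical.

```python
def annualized_quarterly_growth(current_level, previous_level):
    """Annualize one quarter's growth by compounding it over four quarters."""
    if previous_level <= 0:
        raise ValueError("previous_level must be positive")
    quarterly_ratio = current_level / previous_level
    return quarterly_ratio ** 4 - 1


# Hypothetical example: a series rises from 100 to 101 in one quarter.
rate = annualized_quarterly_growth(current_level=101.0, previous_level=100.0)
print(f"{rate:.2%}")
```

A 1% quarterly gain annualizes to slightly more than 4% because of compounding.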
