Universality of Neural Network Field Theory

Abstract: We prove that any quantum field theory, or more generally any probability distribution over tempered distributions in $\mathbb{R}^d$, admits a neural network description with a countable infinity of parameters. As an example, we realize the $2d$ Liouville theory as a neural network and numerically compute the three-point function of vertex operators, finding agreement with the DOZZ formula.

Explain it Like I'm 14

What is this paper about?

This paper shows a surprising and powerful connection between two big ideas:

- Quantum field theory (QFT), the math physicists use to describe particles and fields (like the electromagnetic field).

- Neural networks (NNs), the math tools behind modern AI.

The main message: any quantum field theory can be described using a neural network with an infinite-but-listable set of numbers (think an endless checklist: 1st, 2nd, 3rd, …). Even more, in a very formal sense, one single random number between 0 and 1 can carry enough information to generate any such theory.

The authors also test the idea on a famous 2D theory called Liouville theory and show their neural-network version matches exact known results.

What questions are the authors asking?

In simple terms, the paper asks:

- Can every quantum field theory be written as a neural network with a manageable number of “knobs” (parameters) to tune?

- Can we do this not just in 1D (time only), but in higher dimensions where fields are more like “rough objects” than ordinary smooth functions?

- Does this neural-network view help us compute real quantities in an actual theory (like Liouville theory), and do the numbers come out right?

How did they approach the problem?

To make the ideas approachable, here are the key concepts in everyday language:

- Field: Imagine temperature assigned to every point in space—this is a field. In QFT, fields are more complicated and “wigglier” than smooth functions; they are often so rough they’re best treated as “generalized functions” (called distributions), like an idealized spike for a point source.

- Random field: In quantum physics, we don’t fix one exact field; instead, we allow randomness and talk about the probability of different field configurations.

- Neural network for fields: A neural network is like a recipe that takes inputs (like a position in space) and uses a set of numbers (parameters) to produce an output (the field value at that point). The “architecture” is the shape of the recipe (how inputs are combined), and the “parameter density” tells us how we randomly choose the parameters.

- Countably infinite parameters: This means we need an infinite list of parameters, but a list you could enumerate: parameter 1, 2, 3, and so on. It’s “infinite” but still ordered like the counting numbers.

- The math backbone: The authors use a deep result from measure theory (the Borel isomorphism theorem) that, roughly speaking, lets you “relabel” complicated spaces cleanly. In this context, it says: if your field lives in a nice enough space (which it does), then there’s a way to match every possible field configuration to a unique infinite list of real numbers (or even to a single real number in [0, 1]). This guarantees a neural network description exists—because you can feed those numbers into the network definition to reconstruct the field.

- Practical, constructive version: Beyond this abstract existence proof, you can also build actual examples by expanding the field in a known basis (like musical notes for sound, or spherical harmonics for patterns on a sphere). The expansion coefficients are the NN parameters.
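The "single real number" claim can be made concrete with a toy version of the digit-dealing idea. The sketch below is an illustration only, not the paper's construction: it deals the binary digits of one number round-robin into a fixed, finite number of new parameters, whereas the countable case would need a diagonal-style enumeration. The function names `bits_of` and `split_parameter` are hypothetical.

```python
from fractions import Fraction


def bits_of(u, n_bits):
    """Return the first n_bits binary digits of u in [0, 1), computed exactly."""
    u = Fraction(u)
    out = []
    for _ in range(n_bits):
        u *= 2
        bit = int(u >= 1)
        out.append(bit)
        u -= bit
    return out


def split_parameter(u, n_params, bits_each):
    """Deal the bits of u round-robin into n_params new numbers in [0, 1)."""
    bits = bits_of(u, n_params * bits_each)
    params = []
    for k in range(n_params):
        own = bits[k::n_params]  # every n_params-th bit, starting at position k
        params.append(sum(Fraction(b, 2 ** (i + 1)) for i, b in enumerate(own)))
    return params


u = Fraction(0b110110, 2 ** 6)  # binary 0.110110
theta = split_parameter(u, n_params=2, bits_each=3)
print(theta)  # [Fraction(5, 8), Fraction(3, 4)], i.e. binary 0.101 and 0.110
```

A double-precision float has a 53-bit significand, so in floating point this trick can supply at most 53 bits in total across all parameters. That is one reason the single-parameter form is best read as a formal statement rather than a practical recipe.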

The Liouville theory test

- Liouville theory: A 2D field theory that shows up in 2D gravity and string theory. It’s special because some of its answers are known exactly, including a key three-point function (a basic measurement of how the field interacts), given by the DOZZ formula.

- What they did:

  - They represented the field on a sphere using spherical harmonics (like decomposing a map into smooth “wave patterns”).

  - They split the field into a “zero mode” (a single overall shift) and “non-zero modes” (the patterns).

  - They randomly chose the pattern coefficients from a Gaussian distribution tuned to mimic a free (non-interacting) field.

  - They then added interactions by changing how the zero mode is chosen, in a way that imitates a refined random weighting known as Gaussian multiplicative chaos (this is a precise way to handle exponentials of a noisy field).

  - Finally, they computed the three-point function from this neural-network model and compared it to the exact DOZZ formula.
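The free-field step and the need for care with exponentials of the field can be sketched numerically. The code below is a minimal illustration under stated assumptions, not the authors' implementation: the mode variance 1/(ℓ(ℓ+1)) is an assumed normalization, `gamma` is a hypothetical coupling, and the zero-mode and interaction steps are not modelled. It uses the addition theorem for spherical harmonics to get the variance of the truncated field at a point, which grows roughly like log L, and then checks that the "normal-ordered" exponential has mean 1 at the cutoff L = 30.

```python
import numpy as np


def pointwise_variance(L):
    """Variance of the truncated free field at any point of the sphere.

    Assumes independent Gaussian coefficients with variance 1/(l(l+1));
    the addition theorem gives sum_m |Y_lm(x)|^2 = (2l+1)/(4 pi).
    """
    ell = np.arange(1, L + 1)
    mode_var = 1.0 / (ell * (ell + 1))  # assumed normalization
    return float(np.sum((2 * ell + 1) / (4 * np.pi) * mode_var))


def sample_coefficients(L, rng):
    """Draw the non-zero-mode coefficients: 2l+1 Gaussians for each l >= 1."""
    return {
        l: rng.normal(0.0, np.sqrt(1.0 / (l * (l + 1))), size=2 * l + 1)
        for l in range(1, L + 1)
    }


rng = np.random.default_rng(0)
coeffs = sample_coefficients(30, rng)

# The variance keeps growing as the cutoff is raised (log-correlated field).
var_30 = pointwise_variance(30)
var_300 = pointwise_variance(300)

# Normal-ordered exponential at one point: subtracting gamma^2 * var / 2
# keeps the mean at 1 regardless of the cutoff.
gamma = 1.0  # hypothetical value, for illustration only
x = rng.normal(0.0, np.sqrt(var_30), size=200_000)
normal_ordered = np.exp(gamma * x - 0.5 * gamma**2 * var_30)
print(var_30, var_300, normal_ordered.mean())
```

Without the variance subtraction, the mean of the exponential would drift upward as L grows, which is the divergence that normal ordering and Gaussian multiplicative chaos are designed to control.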

What did they find and why is it important?

Main findings:

- Universality: Any quantum field theory (more generally, any probability distribution over “tempered distributions,” the mathematical home for rough fields) can be described by a neural network with a countably infinite set of parameters. Formally, you can even compress everything into a single parameter in [0, 1].

- Constructive hints: In practice, you can build such networks by expanding fields in standard bases (like Hermite functions or spherical harmonics) and using the expansion coefficients as parameters.

- Real-world test: Using this approach on 2D Liouville theory, they computed the three-point function and found agreement with the exact DOZZ formula to within a few percent. That’s a strong check that the neural-network description is not only possible but useful.
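The "expansion coefficients as parameters" idea can be sketched in one dimension with Hermite functions. This is a minimal sketch, assuming a truncated basis of 11 functions and a standard-normal parameter density chosen purely for illustration; the names `hermite_functions` and `field` are hypothetical. It builds the orthonormal Hermite functions by their three-term recurrence, uses random coefficients as the network parameters, and then recovers those parameters by projecting the field back onto the basis.

```python
import numpy as np


def hermite_functions(n_max, x):
    """Orthonormal Hermite functions h_0..h_{n_max} on the 1D grid x."""
    x = np.asarray(x, dtype=float)
    h = np.empty((n_max + 1, x.size))
    h[0] = np.pi ** -0.25 * np.exp(-0.5 * x**2)
    if n_max >= 1:
        h[1] = np.sqrt(2.0) * x * h[0]
    for n in range(1, n_max):
        # Stable three-term recurrence for the normalized functions.
        h[n + 1] = np.sqrt(2.0 / (n + 1)) * x * h[n] - np.sqrt(n / (n + 1)) * h[n - 1]
    return h


def field(theta, x):
    """Architecture: the parameters theta are the expansion coefficients."""
    return theta @ hermite_functions(len(theta) - 1, x)


x = np.linspace(-12.0, 12.0, 4801)
dx = x[1] - x[0]
h = hermite_functions(10, x)

# Numerical check that the basis is orthonormal on this grid.
gram = h @ h.T * dx

rng = np.random.default_rng(1)
theta = rng.normal(size=11)   # illustrative parameter density
phi = field(theta, x)         # one sampled field configuration
recovered = h @ phi * dx      # project back onto the basis
print(np.max(np.abs(recovered - theta)))
```

Because the basis is orthonormal, the map from parameters to field is invertible on the truncated space, which is the finite-dimensional shadow of the one-to-one matching described above.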

Why it matters:

- It bridges modern AI tools and fundamental physics by showing any QFT can be “encoded” as a neural network.

- It suggests new ways to simulate and compute in hard quantum theories using flexible NN machinery, potentially speeding up or enabling calculations that are otherwise difficult.

What could this change in the future?

- Better simulations of quantum fields: Because the neural-network approach is so general, it could offer new numerical methods—especially in tricky cases where traditional methods struggle.

- Training networks to learn theories: Instead of hand-designing every detail, we might train neural networks to match data from a target QFT (like fitting a model to known measurements), opening a path to “learning” quantum field theories.

- Deeper theoretical insights: The NN view might help us understand which neural-network architectures produce physically sensible QFTs (for example, those that respect key properties needed for real-world physics).

- Applying the method to other theories: The authors suggest trying this for 3D φ⁴ theory (well-understood mathematically) or integrable models like sine-Gordon, where more exact results are available for comparison.

Key takeaways in plain language

- Any quantum field theory can be represented by a neural network with an infinite, but orderly, list of parameters.

- This isn’t just a math trick: when tested on a real, nontrivial theory (Liouville theory), the neural-network method reproduced known exact results.

- This opens doors to new computational tools that blend physics and machine learning, potentially making tough calculations easier or more flexible.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The paper establishes an existence theorem for NN-FT and demonstrates a Liouville-theory example, but it leaves several concrete issues unresolved. Future researchers could address the following:

- Non-constructive universality: The Borel-isomorphism-based proof guarantees existence but not explicit, computable architectures. Develop constructive mappings from sequences in ℝ^ℕ to tempered distributions in S′(ℝᵈ) that are continuous, local, symmetry-preserving, and practical for computation.

- Physical axioms in d ≥ 2: Specify conditions on architectures and parameter measures that ensure the Osterwalder–Schrader axioms (reflection positivity, Euclidean invariance, clustering) hold, and prove unitarity after analytic continuation to Minkowski space for NN-FTs beyond 1d.

- Continuity and regularity of the isomorphism: The guaranteed Borel isomorphism need not be continuous. Determine when one can choose a continuous (or suitably regular) isomorphism and quantify its impact on numerical stability, sampling, and convergence.

- Canonical countable representations: Unlike NN-QM (e.g., Karhunen–Loève-type decompositions), no canonical countable decomposition is provided for QFTs. Devise “preferred” expansions that exploit covariance, spectral data, or symmetries to yield interpretable and efficient NN-FT architectures.

- Finite-parameter feasibility vs. infinite precision: The formal single-parameter construction relies on infinite-precision sampling. Quantify minimal parameter counts/bits needed to approximate a given QFT to a prescribed tolerance and develop practically sampleable finite-parameter schemes with provable error bounds.

- Symmetry and constraint enforcement: Provide explicit mechanisms in NN-FTs to enforce gauge invariance, BRST constraints, global symmetries, locality, and cluster decomposition, and study whether invertible/bijective architectures can respect these structures.

- Training-based construction: Outline and test training protocols (losses, datasets, optimization) that learn architectures and parameter measures from target correlators or Schwinger functions; analyze overparameterization effects and generalization in the NN-FT context.

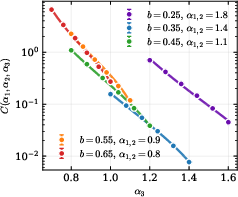

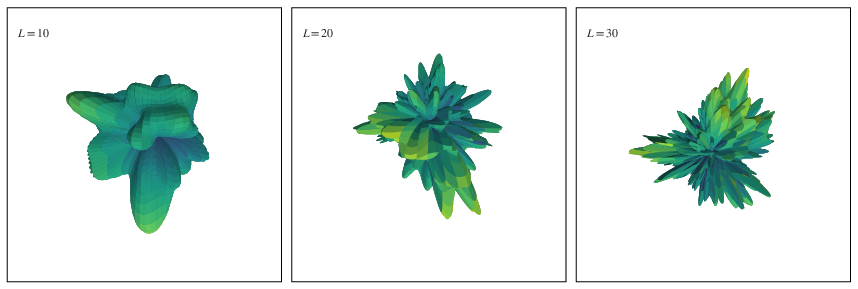

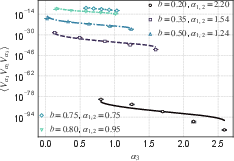

- Liouville numerics scope: The validation targets only the three-point function on S² with fixed insertion points, truncated harmonics (L = 30), and pixelation. Extend to:

  - Convergence studies in L → ∞ and grid refinement.

  - Variation over insertion locations and verification of conformal covariance/crossing.

  - Four-point functions (conformal blocks), higher-genus surfaces, and broader parameter ranges (b, μ), including heavy–light limits.

- Zero-mode treatment robustness: The conditional zero-mode density P(t | aℓm) and numerical normalization may introduce bias. Compare Monte Carlo sampling with exact analytic zero-mode integration, quantify errors from GMC estimation, and assess sensitivity to the normalization procedure.

- Regulator dependence and renormalization: The approach relies on normal-ordering and UV regulators (sphere pixelation). Establish regulator-independence (continuum) limits, specify a rigorous renormalization scheme, and provide uncertainty budgets tied to UV/IR cutoffs.

- Extension to other QFTs: Construct and validate NN-FTs for interacting higher-dimensional theories (e.g., 3d φ⁴, sine-Gordon), gauge theories, and fermions, demonstrating agreement with known rigorous or exact results.

- Lorentzian properties and spectral structure: Show that NN-FTs admit analytic continuation with causal Wightman functions, spectral positivity, and Källén–Lehmann representations; identify architectural conditions that guarantee these properties.

- Identifiability in non-invertible architectures: Many practical architectures are many-to-one. Analyze identifiability of parameter densities from correlators and implications for inference, training, and model comparison.

- Basis selection and locality: Assess alternatives to spherical harmonics/Hermite bases (e.g., wavelets, localized frames) to improve locality, sparsity, and numerical efficiency, and develop criteria for basis choice aligned with target theory features.

- Computational scalability: Benchmark computational costs versus lattice Monte Carlo, derive scaling laws with dimension, cutoff L, and accuracy, and optimize sampling of interacting parameter densities (e.g., copula/inverse Rosenblatt implementations) for efficiency.

- Scope of standard Borel assumption: Clarify the boundaries of the universality theorem for systems with constraints, non-tempered distributions, or non-standard measurable spaces, and explore extensions beyond standard Borel settings.

- Systematic uncertainty and reproducibility: Provide comprehensive error quantification (statistical, truncation, discretization, normalization), convergence diagnostics, and release reproducible code and datasets to enable independent verification.
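The inverse Rosenblatt transformation mentioned under computational scalability can be illustrated on a toy target. The sketch below is an assumption-laden example, not anything taken from the paper: the target is a bivariate standard Gaussian with correlation `rho`, chosen because its conditional distributions are known in closed form, and the function name is hypothetical. Independent uniforms are mapped through the first marginal's inverse CDF and then through the conditional inverse CDF of the second variable given the first.

```python
import random
from statistics import NormalDist

STD_NORMAL = NormalDist()


def inverse_rosenblatt_gaussian(u1, u2, rho):
    """Map two independent Uniform(0,1) draws to a correlated Gaussian pair.

    x1 = F_1^{-1}(u1); x2 = F_{2|1}^{-1}(u2 | x1), where X2 | X1 = x1
    is Normal(rho * x1, 1 - rho^2) for this toy target.
    """
    eps = 1e-12  # keep inputs strictly inside (0, 1) for inv_cdf
    u1 = min(max(u1, eps), 1.0 - eps)
    u2 = min(max(u2, eps), 1.0 - eps)
    x1 = STD_NORMAL.inv_cdf(u1)
    x2 = rho * x1 + (1.0 - rho**2) ** 0.5 * STD_NORMAL.inv_cdf(u2)
    return x1, x2


rng = random.Random(0)
rho = 0.8
samples = [
    inverse_rosenblatt_gaussian(rng.random(), rng.random(), rho)
    for _ in range(50_000)
]

# Empirical correlation of the generated pairs.
n = len(samples)
m1 = sum(a for a, _ in samples) / n
m2 = sum(b for _, b in samples) / n
cov = sum((a - m1) * (b - m2) for a, b in samples) / n
v1 = sum((a - m1) ** 2 for a, _ in samples) / n
v2 = sum((b - m2) ** 2 for _, b in samples) / n
corr = cov / (v1 * v2) ** 0.5
print(corr)
```

For an interacting parameter density the conditional inverse CDFs are generally not available in closed form, which is where the efficiency question raised above comes in.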

Practical Applications

Immediate Applications

Below are practical, deployable uses that leverage the paper’s findings and methods, organized by sector. Each item notes assumptions or dependencies affecting feasibility.

- Physics and applied mathematics (academia)

- NN-FT simulators for known QFTs to compute observables

- Use the paper’s constructive recipe: basis expansions (e.g., spherical harmonics on S², Hermite functions in ℝᵈ), Gaussian priors for free-field modes, and conditional parameter densities for interactions (e.g., Gaussian multiplicative chaos for Liouville).

- Tools/products/workflow: Python/JAX/Julia libraries that implement x = φ_θ ∘ Θ; modules for spherical harmonics (e.g., SHTools), Monte Carlo sampling of coefficients aℓm, conditional sampling of the zero mode t = e^{2bc}, normal ordering and UV regulators via pixelation, validation against exact formulas (e.g., DOZZ).

- Assumptions/dependencies: truncation level L must be high enough for convergence; careful treatment of normal-ordering and GMC; computational cost grows with L and resolution; architecture choices should reflect the target theory’s covariance.

- Rapid validation of constructive field-theory results

- Benchmark NN-FT against exact results (e.g., Liouville three-point functions), using the paper’s workflow to quantify error bars across experiments.

- Assumptions/dependencies: availability of analytic benchmarks; robust numerical integration/variance estimation.

- Software and machine learning

- General-purpose neural representation of stochastic processes valued in standard Borel spaces

- Implement the factorization of random variables through countable parameter spaces using the Borel isomorphism idea. Create reusable “measurable adapters” to convert complex measures into NN parameterizations.

- Tools/products/workflow: probabilistic programming (Pyro, TensorFlow Probability, Stan), normalizing flows; libraries for inverse Rosenblatt/Copula transforms to realize the “single-Uniform(0,1)” generator for complex joint distributions.

- Assumptions/dependencies: non-constructive universality requires explicit isomorphisms or basis choices for practice; numerical stability of measure transforms.

- Energy, climate, and geophysical modeling

- Surrogate modeling of turbulence and multifractal fields

- Use NN-FT with GMC-inspired parameter densities to generate multifractal random fields for wind resource modeling, pollutant dispersion, and ocean/atmosphere turbulence.

- Tools/products/workflow: domain-specific surrogates that parameterize random fields on spheres (Earth) or meshes; calibration to empirical spectra.

- Assumptions/dependencies: mapping from physics-informed spectra to NN priors; validation data; correct handling of long-range correlations and boundary conditions.

- Finance

- Multifractal volatility and risk scenario generators

- Employ GMC-like NN parameter densities to simulate multifractal measures consistent with empirical scaling of returns; integrate with risk engines to stress-test portfolios.

- Tools/products/workflow: plug-in stochastic field module for scenario generation; calibration via maximum-likelihood or method-of-moments on market data.

- Assumptions/dependencies: identification of appropriate multifractal model; robustness to regime changes; regulatory validation of generative models.

- Scientific computing and data engineering

- Compression and reproducibility of complex stochastic simulations

- Use countable-parameter or single-parameter (Uniform[0,1]-seed) representations to reproduce entire random fields deterministically, aiding storage, reproducibility, and audit trails.

- Tools/products/workflow: PRNG-seed management; deterministic simulators exporting compact parameter sets; reproducibility dashboards for large simulations.

- Assumptions/dependencies: precise control of PRNGs and transformations; consistent software stack across environments.

- Education and training

- Interactive teaching modules for QFT via NN architectures

- Visualize how field theories map to architectures; let students change parameter densities and observe impact on correlators (e.g., Liouville on S²).

- Tools/products/workflow: browser-based notebooks with live harmonic truncation, conditional sampling, and correlator computation.

- Assumptions/dependencies: didactic simplifications; computational resources for classroom-scale demos.
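The reproducibility use case above can be sketched with an ordinary seeded generator. This is a minimal sketch under assumptions: the stored record format, the function name `coefficients_from_seed`, and the mode variance 1/(ℓ(ℓ+1)) are all hypothetical choices for illustration. Storing only the seed and the cutoff is enough to regenerate every coefficient of a truncated field.

```python
import numpy as np


def coefficients_from_seed(seed, L):
    """Regenerate all coefficients for 1 <= l <= L from a single seed."""
    rng = np.random.default_rng(seed)
    return np.concatenate([
        # Assumed free-field variance 1/(l(l+1)); 2l+1 coefficients per l.
        rng.normal(0.0, 1.0 / np.sqrt(l * (l + 1)), size=2 * l + 1)
        for l in range(1, L + 1)
    ])


# Hypothetical compact record: two integers instead of 960 floats.
stored_record = {"seed": 12345, "L": 30}
first = coefficients_from_seed(**stored_record)
second = coefficients_from_seed(**stored_record)
print(first.size, np.array_equal(first, second))
```

Bit-for-bit agreement is only expected when the same library version and generator are used, which matches the dependency on a consistent software stack noted above.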

Long-Term Applications

These uses need further research, scaling, or development before they can be broadly deployed.

- Physics and applied mathematics (academia)

- NN-FT as an alternative to lattice field theory for interacting QFTs

- Extend the Liouville demonstration to super-renormalizable models (e.g., 3d ϕ⁴), integrable models (e.g., sine-Gordon), gauge theories, and string worldsheet theories. Use NN architectures to compute scattering amplitudes and nonperturbative observables.

- Potential tools/products/workflow: NN-FT libraries with model catalogs, HPC backends, automated observable pipelines; hybrid with normalizing flows and importance sampling.

- Assumptions/dependencies: engineering of Osterwalder–Schrader conditions (reflection positivity, etc.); reliable error control; scaling to high-dimensional fields and large system sizes.

- Machine learning theory and practice

- Training overparameterized NN-FTs to learn target QFTs or stochastic fields from data

- Exploit many-to-one architectures (overparameterization) and double-descent phenomena to improve training and generalization when fitting observed correlators or spectra.

- Tools/products/workflow: end-to-end training pipelines that optimize parameter densities and architecture layers to match target observables; meta-learning across families of field theories.

- Assumptions/dependencies: differentiable estimators for correlators; stable optimization over measures; identifiability and regularization for non-invertible architectures.

- Quantum technologies

- Variational quantum circuits inspired by NN-FT parameterizations

- Map countable parameter NN-FT representations to quantum circuit ansätze for simulating field-theoretic states or sampling field configurations, potentially enhancing quantum algorithms for field theory.

- Assumptions/dependencies: hardware noise and depth constraints; expressivity and trainability of circuit families; efficient encodings of distributional fields.

- Climate and Earth systems

- Physics-informed generative climate surrogates

- Build NN-FT surrogates with GMC-like components to emulate subgrid stochastic processes in climate models, improving efficiency and fidelity of long-term climate projections.

- Assumptions/dependencies: rigorous validation against observational data; coupling with deterministic PDE cores; policy/regulatory acceptance of AI surrogates in decision pipelines.

- Industrial simulation (materials, CFD, acoustics)

- Unified field-based generative engines

- Create product-grade engines that generate physically plausible random fields for materials microstructure, flow fields, and acoustic environments, accelerating design and uncertainty quantification.

- Assumptions/dependencies: domain calibration; integration with existing CAD/CAE tools; certification and performance guarantees.

- Software ecosystems

- Standardized NN-FT libraries and measure-theoretic adapters

- Develop open-source frameworks encapsulating measurable factorization x = φ_θ ∘ Θ; built-in basis libraries (Hermite, spherical/spheroidal harmonics), GMC modules, and OS checks.

- Assumptions/dependencies: community standards for measure-theoretic APIs; performance portability (CPU/GPU/TPU); sustained maintenance.

- Policy and governance of AI-for-Science

- Reproducibility, standards, and funding for NN-FT research

- Encourage reproducible pipelines (single-parameter seed protocols), open benchmarks, and cross-disciplinary collaborations connecting ML, probability, and theoretical physics.

- Assumptions/dependencies: sustained funding, community buy-in, and clear documentation tying mathematical guarantees (standard Borel, Lusin spaces) to software implementations.

Glossary

- Architecture: The specific functional form mapping inputs to outputs in a neural network representation of a system. "The functional form of […] is known as the architecture, which depends on a set of parameters […] and a time […],"

- Borel isomorphism theorem: A result stating that any two uncountable standard Borel spaces are Borel-isomorphic (there exists a measurable bijection with measurable inverse). "The proof relies on the Borel isomorphism theorem, which guarantees the existence of an architecture for the construction."

- Borel σ-algebra: The σ-algebra generated by the open sets of a topological space, used to define measurability. "Let […] be the Borel σ-algebra on […],"

- Cantor-diagonalization: A technique that constructs countably many sequences from one infinite sequence by interleaving digits, akin to Cantor’s diagonal argument. "by a Cantor-diagonalization-like procedure."

- Conformal defects: Localized modifications in a conformal field theory that preserve conformal symmetry in a reduced sense. "including conformal defects \cite{Capuozzo:2025ozt}"

- Conformal field theory (CFT): A quantum field theory invariant under conformal transformations, important in many areas of theoretical physics. "conformal field theories (CFTs) \cite{Halverson:2024axc}"

- Copula: A function that couples univariate marginal distributions into a multivariate joint distribution, enabling dependence modeling. "using Copula-like methods or an inverse Rosenblatt transformation."

- DOZZ formula: The exact expression for Liouville CFT three-point structure constants in terms of special functions. "finding agreement with the DOZZ formula."

- Evaluation map: The map that takes a function and returns its value at a specific index in the domain. "Let […] be the evaluation map which acts as […] on any function […]."

- Free field: A field with quadratic action leading to Gaussian statistics and no interactions. "we first endow […] with the architecture of a free field on […],"

- Gaussian multiplicative chaos (GMC): A probabilistic construction producing random measures by exponentiating log-correlated Gaussian fields. "using the theory of Gaussian multiplicative chaos (GMC)."

- Generalized quantum system (GQS): A stochastic process valued in an uncountable standard Borel space, abstracting quantum-like random systems. "it will be convenient to define an abstract notion of generalized quantum system (GQS), which is a random variable taking values in a space obeying a certain topological condition."

- Hermite functions: An orthonormal basis of functions related to Hermite polynomials, commonly used in analysis and quantum mechanics. "choosing a basis of Hermite functions on […],"

- Kinetic term: The part of a field theory action involving derivatives of the field, controlling fluctuations and dynamics. "If the field has a kinetic term […], its contributions to the action scale as"

- Liouville theory: A 2D conformal field theory describing a scalar field with exponential interaction, central in 2D gravity and string theory. "We illustrate the NN-FT approach by simulating Liouville theory on a sphere using a NN representation."

- Lorentzian time: Time coordinate in spacetime with Lorentzian signature, relevant after analytic continuation from Euclidean formulations. "which guarantee that the theory can be analytically continued to yield a physical (e.g. unitary) theory in Lorentzian time."

- Lusin space: A topological space that is a continuous image of a Polish space, ensuring good measurability properties. "It is well-known (see, for instance, \cite{hida2013white}) that […] is a Lusin space,"

- Normal-ordered: An operator ordering that moves creation operators to the left of annihilation operators, removing certain divergences. "we evaluate an average of appropriate normal-ordered three-point functions of vertex operators (\ref{three_point_vertex}),"

- Osterwalder-Schrader (OS) axioms: Conditions for Euclidean field theories that ensure reconstruction of a unitary Lorentzian quantum theory. "These two assumptions are satisfied by any Euclidean QM model that obeys the Osterwalder-Schrader (OS) axioms, which guarantee that the theory can be analytically continued to yield a physical (e.g. unitary) theory in Lorentzian time."

- Parameter map: The measurable map from the probability space to the parameter space in a neural network representation. "We refer to […] as the parameter map and […] as the architecture."

- Parameter measure: The pushforward probability measure on the parameter space induced by the parameter map. "The pushforward of the measure […] on […], along the measurable map […], defines a measure […]"

- Path space: The space of all functions from an index set to a state space, representing entire sample paths. "An equivalent definition involves the path space"

- Polish space: A separable completely metrizable topological space, foundational in descriptive set theory and probability. "it is sufficient (but not necessary) to assume that […] is a Polish space,"

- Product σ-algebra: The σ-algebra on a product space generated by measurable rectangles, enabling measurability in path spaces. "we may endow […] with the product σ-algebra […]"

- Pushforward (measure): The measure obtained by mapping a measure through a measurable function. "The pushforward of the measure […] on […], along the measurable map […], defines a measure […]"

- Ricci scalar: A scalar curvature invariant derived from the Ricci tensor, appearing in gravitational actions. "and is the Ricci scalar of the surface on which the theory is defined."

- Schwartz distributions: Generalized functions acting on test functions in Schwartz space, capturing distributional fields. "space of tempered Schwartz distributions."

- Sine-Gordon theory: An integrable field theory with a sine-type potential, known for exact solutions. "such as sine-Gordon theory, since some of their observables admit exact expressions that can be compared to numerics."

- Spherical harmonics: Orthonormal basis functions on the sphere, labeled by angular momentum indices. "a term involving real spherical harmonics,"

- Standard Borel space: A measurable space arising from a Borel σ-algebra of a Polish space (or its Borel subsets), supporting strong structural theorems. "Suppose that the process takes values almost surely in an uncountable subspace […] such that […] is a standard Borel space."

- Stochastic process: A collection of random variables indexed by a set, describing random evolution over the index. "Such an assignment of a joint PDF to any finite collection of times is a special case of a stochastic process."

- Super-renormalizability: A property of a quantum field theory where only finitely many divergences need renormalization, ensuring constructive existence. "by virtue of its super-renormalizability \cite{Glimm:1987ng},"

- Tempered distributions: Distributions that grow at most polynomially at infinity, forming the dual of Schwartz space. "any probability distribution over tempered distributions in $\mathbb{R}^d$, admits a neural network description"

- Three-point function: A correlator involving three operator insertions, encoding interaction data in CFTs. "and numerically compute the three-point function of vertex operators,"

- UV regulator: A regularization device controlling short-distance (high-energy) divergences. "A UV regulator is introduced by pixelating the sphere at $100$ points of latitude and $200$ points of longitude."

- Vertex operator: An exponential of the field (often normal-ordered) representing primary operators in Liouville/CFT. "We are interested in correlation functions involving the vertex operator […]."

- Virasoro symmetry: The infinite-dimensional conformal symmetry in 2D encoded by the Virasoro algebra. "and Virasoro symmetry \cite{Robinson:2025ybg};"

- Worldsheet string theory: A formulation of string theory in terms of a 2D field theory living on the string’s worldsheet. "and worldsheet string theory \cite{Frank:2026bui}."

- Zero mode: The spatially constant component of a field, often treated separately in path integrals. "We begin by splitting the field into a zero mode contribution […] and a term […]"
