- The paper presents an AI-driven fuzz testing framework based on NSGA-II to uncover vulnerabilities in 5G traffic steering algorithms.

- It demonstrates a 34.3% increase in vulnerability detection and 25% faster convergence compared to traditional testing methods.

- The study validates multi-objective evolutionary optimization as a robust approach for enhancing network resilience and guiding algorithm improvements.

AI-Driven Fuzzing for Vulnerability Assessment of 5G Traffic Steering Algorithms

Introduction

The proliferation of 5G networks introduces increased complexity in traffic management, particularly in dynamically allocating User Equipment (UE) across base stations (gNodeBs) via Traffic Steering (TS) algorithms. While these mechanisms enhance Quality of Experience (QoE), spectrum efficiency, and network load balancing, they can fail under adversarial conditions such as abrupt interference, handover storms, and localized outages. The paper "AI-Driven Fuzzing for Vulnerability Assessment of 5G Traffic Steering Algorithms" (2601.18690) addresses this exposure by presenting an AI-driven fuzz testing framework based on the Non-Dominated Sorting Genetic Algorithm II (NSGA-II), aiming to systematically reveal rare and critical failures in TS.

Methodology

The core methodological innovation lies in reconceptualizing vulnerability assessment as a multi-objective search problem over diverse network states. The 5G system is modeled with sets of gNodeBs and UEs, encapsulated in a state vector encompassing UE positions, cell loads, association matrices, and channel qualities. Vulnerabilities are defined by three objectives: instability (handover rate variance), lower-tail QoE (5th-percentile user throughput), and fairness (Jain’s index).
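The three objectives named above can be sketched in a few lines. This is a minimal illustration, not the paper's implementation: the function names, the use of population variance, the linear-interpolation percentile, and the handling of an all-zero allocation are all assumptions made here.

```python
from statistics import pvariance

def handover_rate_variance(handover_rates):
    """Instability: population variance of per-interval handover rates."""
    return pvariance(handover_rates)

def fifth_percentile(throughputs):
    """Lower-tail QoE: 5th-percentile throughput (linear interpolation)."""
    xs = sorted(throughputs)
    pos = 0.05 * (len(xs) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(xs) - 1)
    return xs[lo] + (pos - lo) * (xs[hi] - xs[lo])

def jain_index(throughputs):
    """Fairness: Jain's index (sum x)^2 / (n * sum x^2), ranging from 1/n to 1."""
    total = sum(throughputs)
    squares = sum(x * x for x in throughputs)
    # Assumption: an all-zero allocation is treated as perfectly equal.
    return (total * total) / (len(throughputs) * squares) if squares else 1.0

def objective_vector(handover_rates, throughputs):
    """Bundle the three objectives for one simulated network state."""
    return (
        handover_rate_variance(handover_rates),
        fifth_percentile(throughputs),
        jain_index(throughputs),
    )
```

A fuzzer searching for adversarial states would presumably push the first value up and the other two down; the optimization directions are not spelled out in this summary.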

The proposed NSGA-II-based fuzzing engine efficiently evolves candidate configurations by leveraging tournament selection, blend crossover, and adaptive mutation, generating adversarial network states that are systematically evaluated in a highly parameterized Sionna-based physical-layer simulator. The framework supports plug-and-play evaluation on various TS algorithms and scenarios, including both standard (A3 baseline, utility-based, load-aware, random) and RL-based (Q-learning) policies.
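The operators mentioned above can be sketched as follows. This is a simplified illustration under stated assumptions: genes are normalized to [0, 1], all objectives are minimized, the tournament compares candidates by Pareto dominance only (full NSGA-II also uses front rank and crowding distance), and the linear step-size decay in `adaptive_mutation` is a hypothetical schedule, since the paper's adaptation rule is not given here.

```python
import random

def dominates(a, b):
    """True if objective vector a Pareto-dominates b (all objectives minimized)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def non_dominated_front(objs):
    """Indices of candidates that no other candidate dominates."""
    return [i for i, a in enumerate(objs)
            if not any(dominates(b, a) for j, b in enumerate(objs) if j != i)]

def tournament(pop, objs, rng, k=2):
    """Sample k candidates; keep the first one unless a later one dominates it."""
    idx = rng.sample(range(len(pop)), k)
    best = idx[0]
    for i in idx[1:]:
        if dominates(objs[i], objs[best]):
            best = i
    return pop[best]

def blend_crossover(p1, p2, rng, alpha=0.5, low=0.0, high=1.0):
    """BLX-alpha: draw each gene from the parents' interval widened by alpha."""
    child = []
    for a, b in zip(p1, p2):
        lo, hi = min(a, b), max(a, b)
        span = hi - lo
        gene = rng.uniform(lo - alpha * span, hi + alpha * span)
        child.append(min(max(gene, low), high))  # clip to the valid range
    return child

def adaptive_mutation(ind, rng, gen, max_gen, sigma0=0.2, rate=0.1,
                      low=0.0, high=1.0):
    """Gaussian mutation whose step size shrinks linearly with the generation."""
    sigma = sigma0 * (1.0 - gen / max_gen)
    return [min(max(g + rng.gauss(0.0, sigma), low), high)
            if rng.random() < rate else g
            for g in ind]

# Usage: breed one child from a toy population of normalized network states.
rng = random.Random(0)
pop = [[rng.random() for _ in range(4)] for _ in range(6)]
objs = [(sum(ind), max(ind)) for ind in pop]  # placeholder objectives
child = adaptive_mutation(
    blend_crossover(tournament(pop, objs, rng), tournament(pop, objs, rng), rng),
    rng, gen=3, max_gen=20)
```

In the framework described above, the placeholder objectives would be replaced by values returned from the simulator for each candidate state.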

Experimental Design

The evaluation consists of 300 runs per method across six canonical network scenarios—ranging from stable mobility and high load to load imbalance, coverage hole, high interference, and congestion crisis—each reflecting real-world manifestations of stress in 5G RANs. Each scenario is paired with five diverse TS algorithms, producing comprehensive coverage of operational states. The primary metrics include vulnerability counts, critical failure incidences, detection efficiency, and diversity coverage.

Results

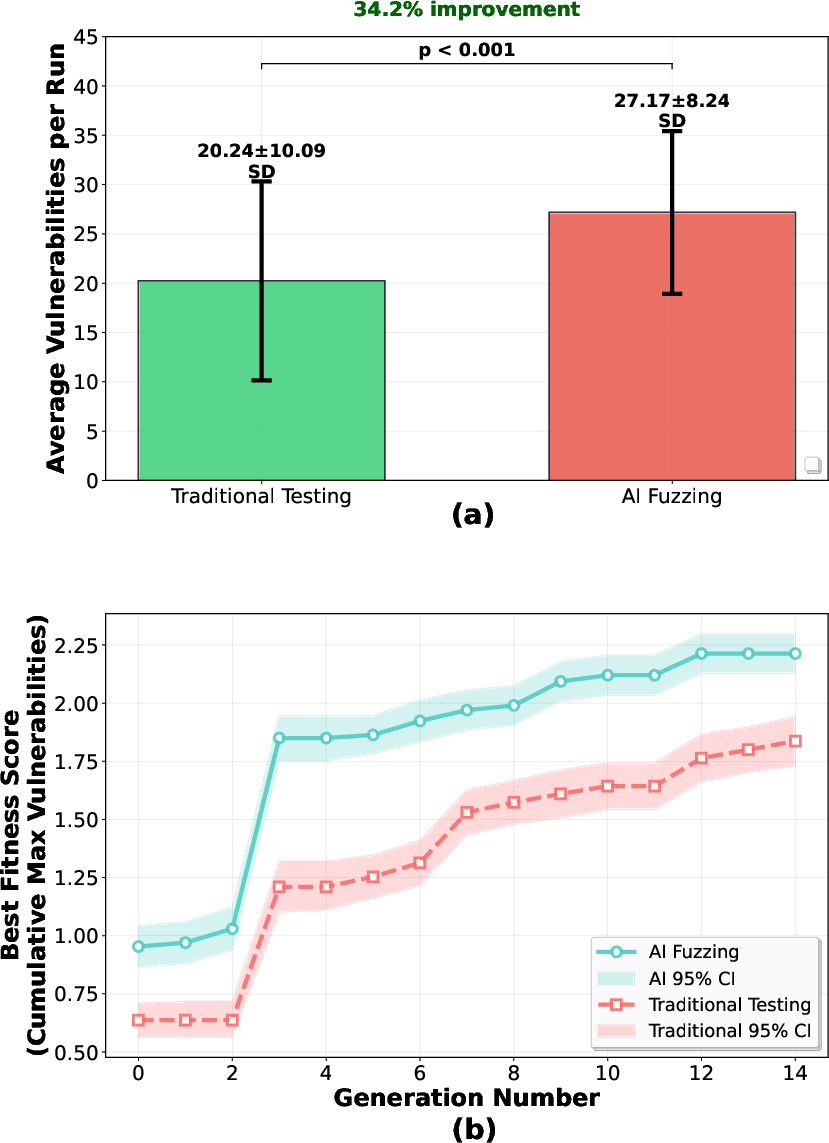

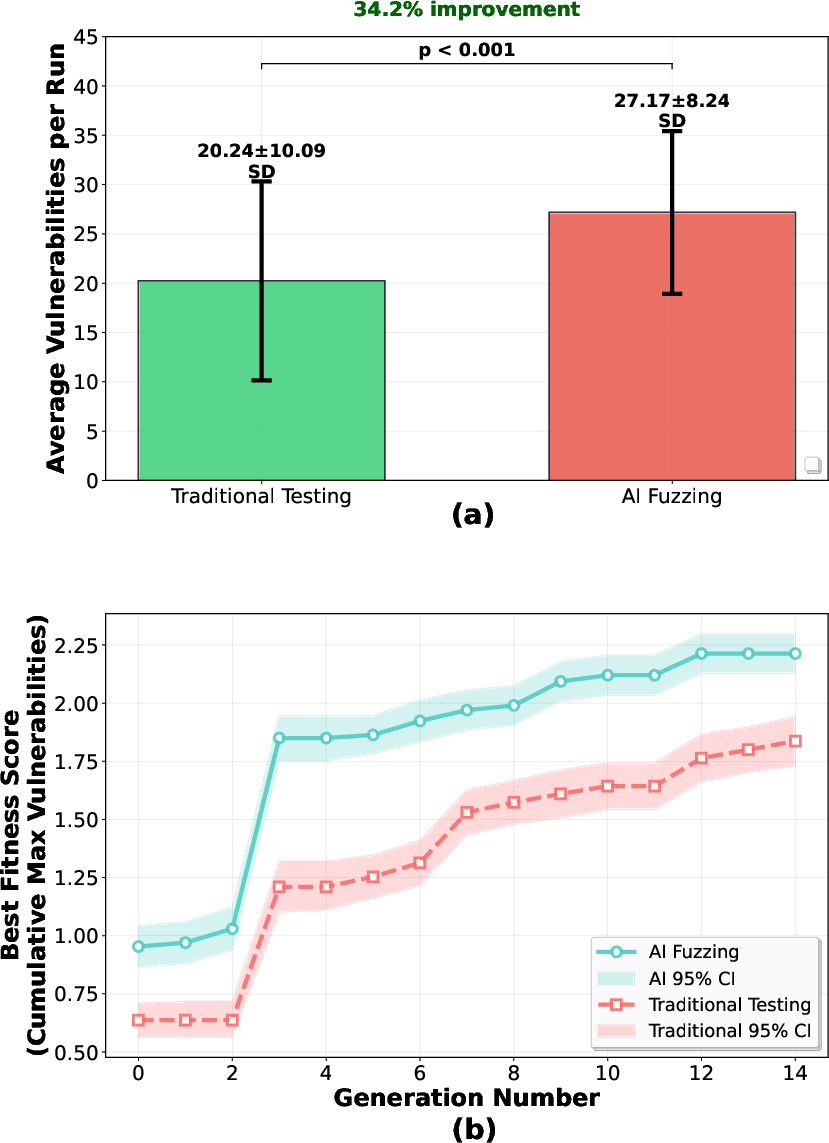

The AI-driven fuzzing approach discovers more vulnerabilities than traditional scenario-based testing, detecting 34.3% more per run (27.36±8.60 vs. 20.37±10.98, p<0.00001) with a medium-to-large effect size by Cohen’s conventions (d=0.708). The convergence analysis further shows a 25% improvement in efficiency: NSGA-II reaches 90% of its optimal detection rate by generation 9, whereas traditional testing requires 12 generations, a gain consistent with the diversity that Pareto-based search maintains.

Figure 1: AI-Fuzzing yields 34.3% higher vulnerabilities-per-run and converges 25% faster versus traditional testing.
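The headline figures can be re-derived from the reported summary statistics. The sketch below assumes equal group sizes and the pooled-standard-deviation form of Cohen's d; the paper may use a slightly different variant, which would explain small rounding differences.

```python
from math import sqrt

def relative_gain(new, base):
    """Fractional improvement of new over base."""
    return (new - base) / base

def cohens_d(mean_a, sd_a, mean_b, sd_b):
    """Cohen's d with a pooled SD, assuming equal group sizes."""
    pooled = sqrt((sd_a ** 2 + sd_b ** 2) / 2.0)
    return (mean_a - mean_b) / pooled

gain = relative_gain(27.36, 20.37)        # about 0.343, i.e. 34.3%
d = cohens_d(27.36, 8.60, 20.37, 10.98)   # about 0.71, close to the reported 0.708
speedup = (12 - 9) / 12                   # 0.25: generation 9 vs. generation 12
```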

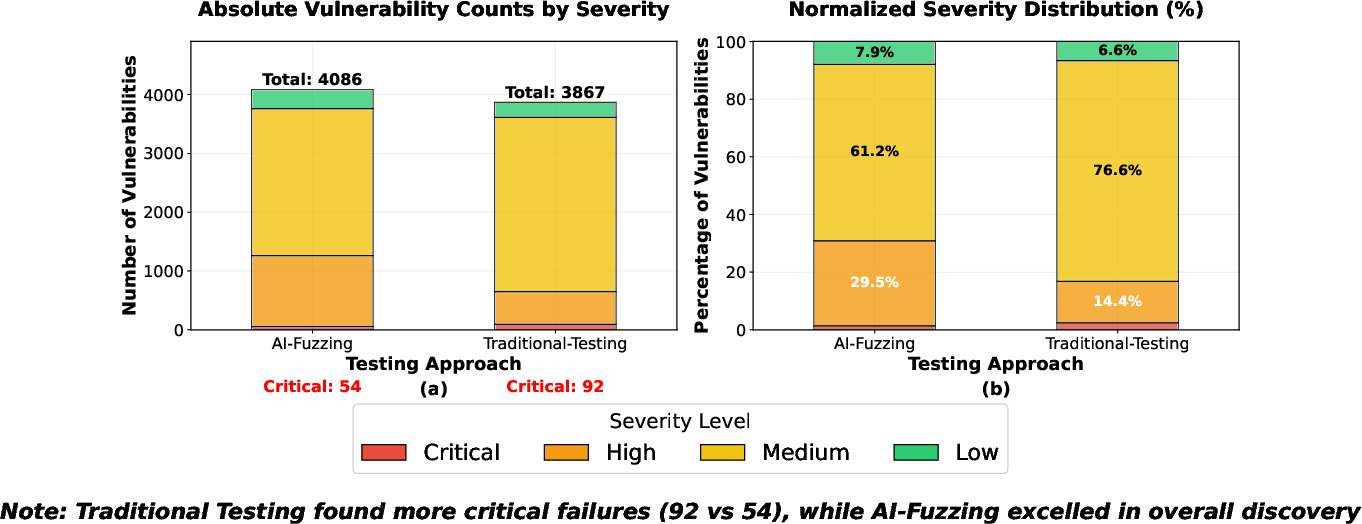

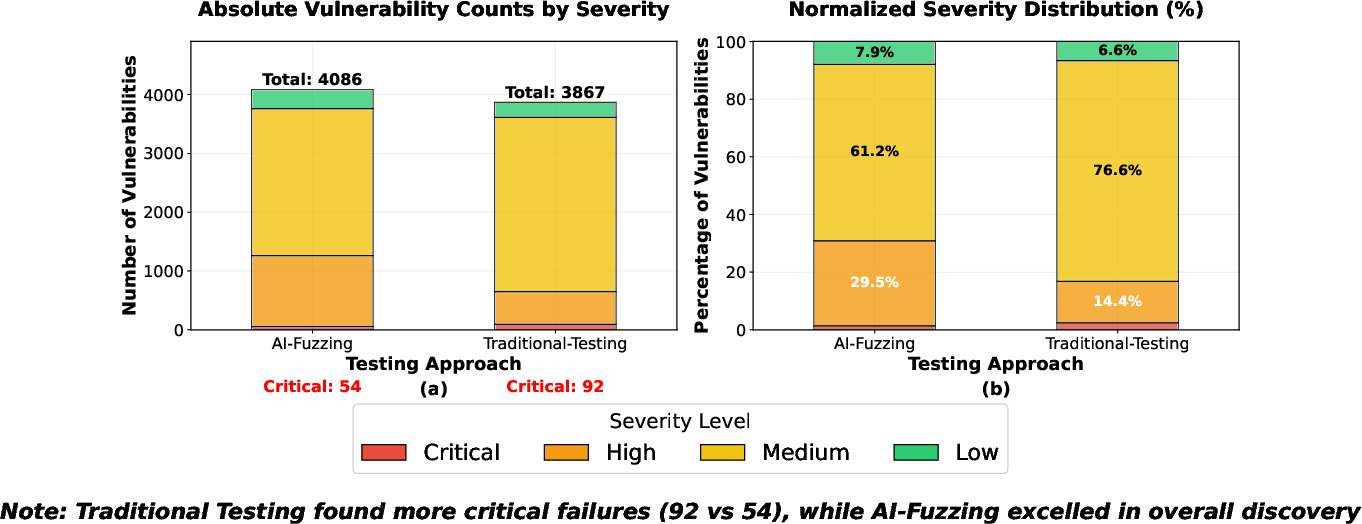

Further, AI-Fuzzing identifies a greater total number of vulnerabilities (8207 vs. 6112, p<0.00001) and uncovers 5.8% more critical failures, despite high variance and right-skewed discovery distributions. The Shannon diversity index confirms the method’s effectiveness in covering a wide range of failure severities (0.924 for AI-Fuzzing vs. 0.777 for traditional), demonstrating robust breadth in both typical and rare event detection.

Figure 2: AI-Fuzzing enhances both total and critical vulnerability counts and increases severity/diversity coverage across all scenarios.
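For reference, a Shannon diversity index over severity categories can be computed as below. The log base and any normalization behind the reported 0.924 and 0.777 are not stated in this summary, so the natural-log, unnormalized form is an assumption, and the counts in the usage lines are hypothetical.

```python
from math import log

def shannon_index(counts):
    """Shannon diversity H = -sum(p_i * ln p_i) over non-empty categories."""
    total = sum(counts)
    probs = [c / total for c in counts if c > 0]
    return -sum(p * log(p) for p in probs)

# Hypothetical severity counts (low, medium, high, critical), for illustration only.
even_spread = shannon_index([25, 25, 25, 25])
skewed = shannon_index([85, 10, 4, 1])
```

A more even spread of findings across severity levels yields a higher index, which is the sense in which the metric measures coverage breadth.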

Algorithm-specific analysis shows that traditional rule-based mechanisms, especially the A3 baseline, are highly susceptible to adversarial fuzzing: AI-Fuzzing detects 96.2% more vulnerabilities on A3. Adaptive RL-based policies are more resilient but not immune, particularly against stochastic, rare edge cases. Scenario-specific breakdowns reinforce the pattern: AI-Fuzzing outperforms traditional testing in every scenario, most notably under load imbalance (45.4% improvement) and high interference (42.3%).

Statistical and Technical Analysis

The statistical analysis relies on confidence intervals and non-parametric Mann-Whitney U tests, which accommodate skewed data and outliers. This matters most for critical failures, where most runs return zero but a few heavy-tailed events dominate overall impact. The results indicate that AI-Fuzzing’s performance advantage holds across the tested TS algorithms and scenarios, which supports its use in production-scale validation.
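To make the test concrete, here is a dependency-free sketch of a two-sided Mann-Whitney U test using the normal approximation with tie correction and no continuity correction. It is illustrative only; in practice a library routine such as `scipy.stats.mannwhitneyu` would be the usual choice, and the sample values in the usage line are hypothetical.

```python
from math import erfc, sqrt

def mann_whitney_u(a, b):
    """Return (U for sample a, two-sided p-value) via the normal approximation."""
    n1, n2 = len(a), len(b)
    pooled = sorted([(v, 0) for v in a] + [(v, 1) for v in b])
    ranks = [0.0] * len(pooled)
    tie_term = 0.0
    i = 0
    while i < len(pooled):
        j = i
        while j < len(pooled) and pooled[j][0] == pooled[i][0]:
            j += 1
        avg_rank = (i + 1 + j) / 2.0      # mean of 1-based ranks i+1 .. j
        for k in range(i, j):
            ranks[k] = avg_rank
        t = j - i
        tie_term += t ** 3 - t            # tie correction accumulator
        i = j
    rank_sum_a = sum(r for r, (_, g) in zip(ranks, pooled) if g == 0)
    u_a = rank_sum_a - n1 * (n1 + 1) / 2.0
    n = n1 + n2
    mean_u = n1 * n2 / 2.0
    var_u = n1 * n2 / 12.0 * ((n + 1) - tie_term / (n * (n - 1)))
    if var_u == 0:
        return u_a, 1.0                   # all values tied: no evidence of a shift
    z = (u_a - mean_u) / sqrt(var_u)
    return u_a, erfc(abs(z) / sqrt(2.0))

# Hypothetical zero-heavy critical-failure counts per run, for illustration only.
u, p = mann_whitney_u([0, 0, 1, 0, 7, 0, 2, 0], [0, 0, 0, 0, 1, 0, 0, 0])
```

Because the test works on ranks rather than raw values, a single extreme run shifts the result far less than it would shift a mean-based test.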

Practical and Theoretical Implications

The findings substantiate AI-driven multi-objective fuzzing as a primary methodology for resilience validation in 5G (and prospective 6G) TS systems. Practically, the tool supports back-office and R&D validation teams in systematic, scalable identification of vulnerabilities that static, deterministic methods inevitably miss. The revealed architectural patterns guide the enhancement of TS design, emphasizing the need for robustness-aware, adaptive (potentially adversarially trained) control policies. The high variance in critical failure detection implies that ensemble or multi-run test strategies are necessary for reliable characterization of rare network failures.
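The case for multi-run strategies follows from simple probability. As a rough sketch, assume runs are independent and that each run surfaces a given rare failure with probability `p` (a hypothetical value, not taken from the paper); the number of runs needed to see it at least once with a chosen confidence is then:

```python
from math import ceil, log

def runs_needed(p, confidence):
    """Smallest n with 1 - (1 - p)**n >= confidence, for independent runs."""
    return ceil(log(1.0 - confidence) / log(1.0 - p))

# With a hypothetical 5% per-run hit rate, 95% confidence needs 59 runs.
n = runs_needed(0.05, 0.95)
```

A single run, or a handful of runs, would therefore often report zero critical failures even when the weakness exists.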

Theoretically, this research demonstrates the efficacy of multi-objective evolutionary optimization in cybersecurity for complex, stochastic cyber-physical networks, where it is reported to outperform both formal verification (which is intractable for large state spaces) and static simulation. The approach is extensible to Open RAN, real-time hardware-in-the-loop testbeds, and vendor-specific traffic management pipelines.

Conclusion

Systematic AI-driven fuzzing grounded in NSGA-II multi-objective optimization enables comprehensive and statistically validated vulnerability discovery for 5G TS algorithms. This method not only enhances algorithmic robustness and network resilience but also reveals architectural weaknesses, guiding future algorithm development and validation. Prospective research directions include large-scale field validation on Open RAN testbeds and the integration of GPU-accelerated fuzzing to further scale coverage and runtime efficiency.