Schema-based active inference supports rapid generalization of experience and frontal cortical coding of abstract structure

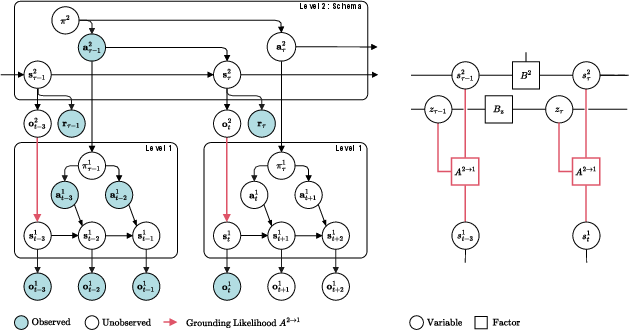

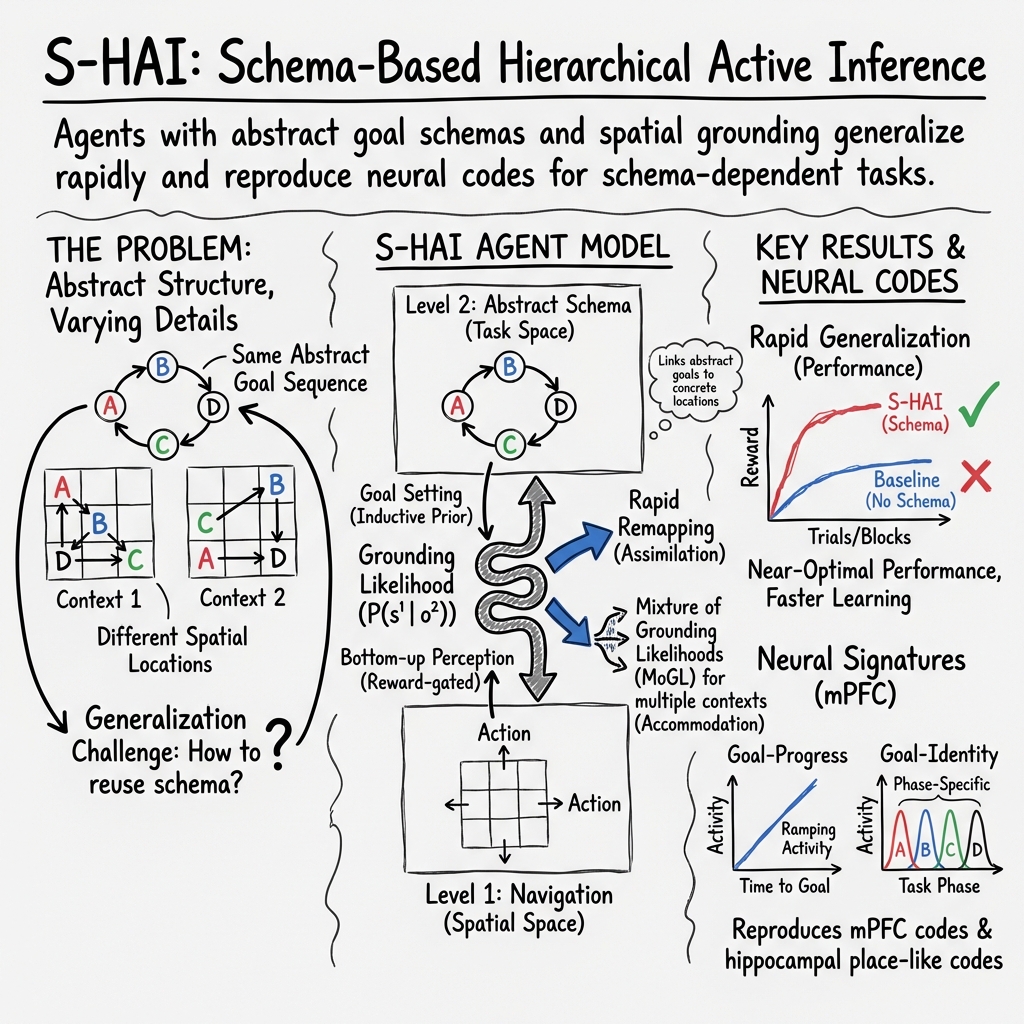

Abstract: Schemas -- abstract relational structures that capture the commonalities across experiences -- are thought to underlie humans' and animals' ability to rapidly generalize knowledge, rebind new experiences to existing structures, and flexibly adapt behavior across contexts. Despite their central role in cognition, the computational principles and neural mechanisms supporting schema formation and use remain elusive. Here, we introduce schema-based hierarchical active inference (S-HAI), a novel computational framework that combines predictive processing and active inference with schema-based mechanisms. In S-HAI, a higher-level generative model encodes abstract task structure, while a lower-level model encodes spatial navigation, with the two levels linked by a grounding likelihood that maps abstract goals to physical locations. Through a series of simulations, we show that S-HAI reproduces key behavioral signatures of rapid schema-based generalization in spatial navigation tasks, including the ability to flexibly remap abstract schemas onto novel contexts, resolve goal ambiguity, and balance reuse versus accommodation of novel mappings. Crucially, S-HAI also reproduces prominent neural codes reported in rodent medial prefrontal cortex during a schema-dependent navigation and decision task, including task-invariant goal-progress cells, goal-identity cells, and goal-and-spatially conjunctive cells, as well as place-like codes at the lower level. Taken together, these results provide a mechanistic account of schema-based learning and inference that bridges behavior, neural data, and theory. More broadly, our findings suggest that schema formation and generalization may arise from predictive processing principles implemented hierarchically across cortical and hippocampal circuits, enabling the generalization of experience.

Explain it Like I'm 14

Overview: What this paper is about

This paper asks how people and animals can learn a rule in one situation and then use it almost immediately in a new situation that looks different. The authors focus on “schemas,” which you can think of as blueprints or recipes that capture the pattern behind many experiences. They build a computer model called S-HAI (Schema-based Hierarchical Active Inference) that learns an abstract rule (the schema) and then quickly “rebinds” it to new places or contexts. They test it in virtual maze tasks and show that it can generalize fast, handle tricky cases, and even produce activity patterns similar to those recorded in the frontal cortex of rodents.

What questions did the researchers ask?

- Can a computer model learn an abstract task structure (a schema) and then reuse it in new environments where the details change?

- How can a model decide when to reuse an old mapping versus create a new one (like choosing between “this is the same pattern” vs. “this is a new pattern”)?

- Can the model’s internal activity look like the neural signals found in animal brains during these kinds of tasks?

How did they study it? (Methods in simple terms)

The authors built a two-level thinking machine that plans and acts:

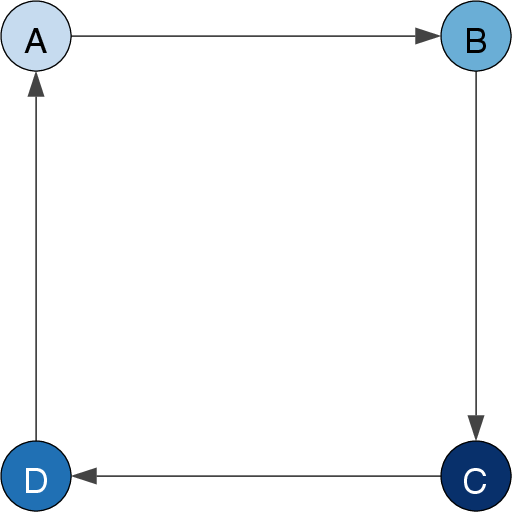

- Level 2: The “big-picture planner” learns the abstract rule of the task. Imagine a recipe that says “go to A, then B, then C, then D, then repeat.” This is the schema. It ignores the visual details and only remembers the order of goals.

- Level 1: The “navigator” moves around a grid-like maze. It knows how to step up/down/left/right and learns the maze’s layout (in the paper, this level is trained in advance).

To connect these two levels, the model learns a “grounding likelihood.” Think of this as an address book that says, “Abstract goal A lives at this location in this maze; goal B lives over there,” and so on. When the maze changes (the goals swap places), the abstract recipe stays the same, but the address book gets updated quickly.
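The “recipe + address book” idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' implementation: the class name `GroundingLikelihood`, the cell numbering 0 to 8, the uniform prior of 0.1, and the `reset` step are all hypothetical choices made for the example. The only detail taken from the paper is that the mapping is learned with conjugate (count-based) updates.

```python
GOALS = ["A", "B", "C", "D"]   # abstract schema states (Level 2)
N_LOCATIONS = 9                 # cells of a 3x3 maze, numbered 0..8 (assumed layout)


class GroundingLikelihood:
    """Hypothetical 'address book': counts behind P(location | abstract goal)."""

    def __init__(self, n_goals=len(GOALS), n_locations=N_LOCATIONS, prior=0.1):
        self.prior = prior
        # Small uniform pseudo-counts: no idea yet where any goal lives.
        self.counts = [[prior] * n_locations for _ in range(n_goals)]

    def update(self, goal, location, weight=1.0):
        # Conjugate (Dirichlet-categorical) update: add evidence to one cell.
        self.counts[goal][location] += weight

    def probabilities(self, goal):
        # Normalise one row into a distribution over locations.
        total = sum(self.counts[goal])
        return [c / total for c in self.counts[goal]]

    def most_likely_location(self, goal):
        row = self.counts[goal]
        return max(range(len(row)), key=row.__getitem__)

    def reset(self):
        # Maze changed (assumed signal): wipe the addresses, keep the schema.
        self.counts = [[self.prior] * len(row) for row in self.counts]


def next_goal(goal):
    # The schema itself never changes: A -> B -> C -> D -> A ...
    return (goal + 1) % len(GOALS)


book = GroundingLikelihood()
book.update(GOALS.index("A"), 4)            # reward for goal A found in cell 4
print(book.most_likely_location(0))         # 4
book.reset()                                # new block, same recipe
print(GOALS[next_goal(GOALS.index("D"))])   # A
```

The point of the split is that `next_goal` (the recipe) is untouched when the maze changes; only the small table of counts has to be relearned.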

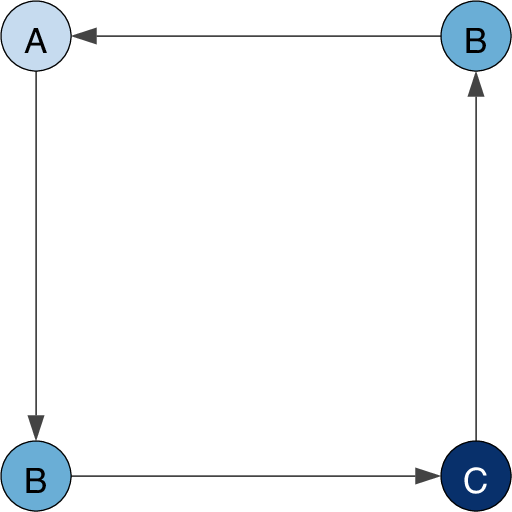

They tested the model in two spatial tasks:

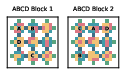

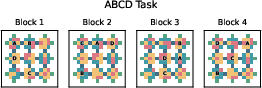

- ABCD task: The agent must visit four goals in order A→B→C→D, but the actual locations of A–D change across blocks. The structure (the order) stays the same.

- ABCB task: Like ABCD, but the second and fourth goals (both “B”) are physically the same location. That means the agent must remember whether it arrived at B from A or from C to know what comes next. This creates ambiguity that confuses simpler models.

They compared several agents:

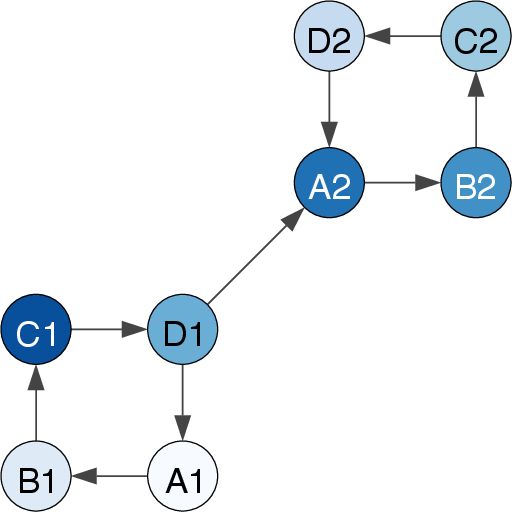

- S-HAI with schemas learned offline (before acting) or online (while acting).

- A version without schemas (which tends to relearn each block separately).

- A random-choice baseline.

- A “clone” version for the tricky ABCB task, which creates two internal copies of the ambiguous state “B” to remember context—like labeling them “B-coming-from-A” and “B-coming-from-C.”
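To see why clones help in the ABCB task, the toy Python below compares two ways of learning “what comes next.” It is a hand-built simplification: a real clone-structured graph learns its clones from data, whereas here each clone is simply labelled with the previous observation. The function names are hypothetical.

```python
def first_order_successors(sequence):
    """Learn 'what follows what' from observations alone (no clones)."""
    table = {}
    for current, nxt in zip(sequence, sequence[1:]):
        table.setdefault(current, set()).add(nxt)
    return table


def cloned_successors(sequence):
    """Same idea, but each state is a clone: (observation, previous observation)."""
    states = [(obs, prev) for prev, obs in zip(sequence, sequence[1:])]
    table = {}
    for current, nxt in zip(states, states[1:]):
        table.setdefault(current, set()).add(nxt[0])
    return table


laps = list("ABCB" * 3)            # three laps of the ABCB task
plain = first_order_successors(laps)
cloned = cloned_successors(laps)

print(sorted(plain["B"]))          # ['A', 'C']  -> ambiguous: what follows B?
print(cloned[("B", "A")])          # {'C'}       -> B reached from A leads to C
print(cloned[("B", "C")])          # {'A'}       -> B reached from C leads to A
```

Without clones, “B” has two possible successors and the agent cannot tell which applies. With the two copies “B-coming-from-A” and “B-coming-from-C,” each state has exactly one successor.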

They also added a “mixture of mappings” feature (a growing library of address books). This lets the model:

- Reuse a known mapping when it sees a familiar maze.

- Create a new mapping when it encounters a maze it hasn’t seen before.
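The reuse-or-create decision can be sketched as follows. This is a simplified stand-in for the paper's mixture model (which uses a truncated Dirichlet process): here each mapping is a plain dictionary, the score is an average log-probability, and the smoothing value `eps` is an assumption. The threshold of −5 is the example value mentioned for expected log likelihood in the limitations list; whether it applies per observation, as assumed here, is not stated.

```python
import math

NOVELTY_THRESHOLD = -5.0   # example value from the text; its exact use is assumed


def average_log_likelihood(mapping, observations, eps=1e-3):
    """Average log-probability of observed (goal, location) pairs under a mapping.

    mapping: dict goal -> location, treated as near-deterministic (eps smoothing).
    """
    total = 0.0
    for goal, location in observations:
        p = 1.0 - eps if mapping.get(goal) == location else eps
        total += math.log(p)
    return total / len(observations)


def select_or_create(library, observations, threshold=NOVELTY_THRESHOLD):
    """Return (index of mapping to use, whether a new mapping was created)."""
    scores = [average_log_likelihood(m, observations) for m in library]
    if scores and max(scores) >= threshold:
        return scores.index(max(scores)), False      # familiar maze: reuse
    library.append(dict(observations))               # novel maze: new address book
    return len(library) - 1, True


library = []
maze_1 = [("A", 0), ("B", 4), ("C", 8), ("D", 2)]
maze_2 = [("A", 1), ("B", 3), ("C", 5), ("D", 7)]

print(select_or_create(library, maze_1))   # (0, True)   first maze, nothing to reuse
print(select_or_create(library, maze_2))   # (1, True)   no stored mapping fits
print(select_or_create(library, maze_1))   # (0, False)  maze 1 returns, mapping reused
```

In this sketch a completely wrong mapping scores about −6.9 per observation, which falls below the threshold and triggers a new entry, while a matching one scores close to 0 and is reused.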

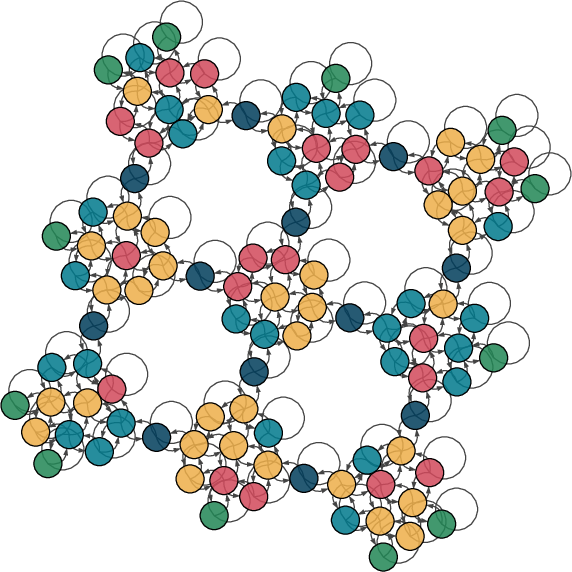

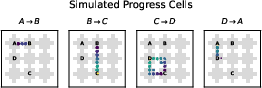

Finally, they simulated “neural activity” in the model to see if it showed patterns like those recorded in rodent medial prefrontal cortex (mPFC). A key signal they looked for is like a progress bar in your brain—cells that fire more as you get closer to any goal (“goal-progress cells”).

What did they find, and why does it matter?

Here are the main results, and what they mean:

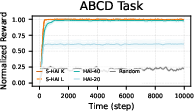

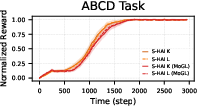

- Fast generalization with schemas (ABCD task)

  - The schema-based model quickly adapted to new blocks where the goal locations were different but the order A→B→C→D stayed the same.

  - It outperformed models that didn’t use schemas (even if those models had been trained longer) because it reused the abstract rule and only relearned the mapping (address book), not the whole task.

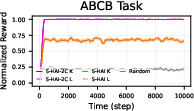

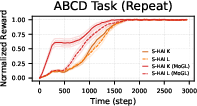

- Handling ambiguous goals (ABCB task)

  - In the ABCB task, two goals share the same spot, so the agent must remember how it got there. The schema-based model with “clones” (two versions of the B state) handled this well, reaching near-optimal performance.

  - This shows the model can deal with tasks where the same place can mean different things depending on the recent past.

- Reusing vs. creating mappings (mixture model)

  - With a library of mappings, the model started a bit slower (because it had to choose which mapping to use), but then learned to reuse the right one when a familiar maze returned, saving time later.

  - When it met a brand-new maze, it created a new mapping and added it to its library.

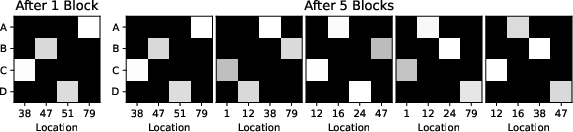

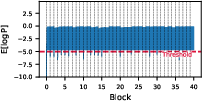

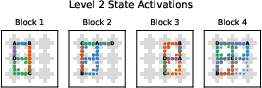

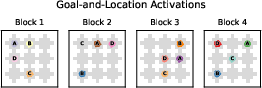

- Brain-like signals (simulated neural activity)

  - Inside the model, activity patterns looked similar to signals recorded in rodent frontal cortex:

    - “Goal-progress cells”: signals increased smoothly as the agent approached any goal, like a ramping progress bar. This ramping adjusted to the layout, not just the distance, matching rodent data.

    - Other codes: the model also showed signals for which goal it was aiming for (goal identity), combinations of goal and location, and place-like signals at the lower level (similar to place cells in spatial navigation areas of the brain).
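The “progress bar” idea can be illustrated with a small Python sketch. This is not how the model produces the signal (the paper derives it from an inductive cost); as a stand-in, the example simply reports the fraction of a shortest path already covered. The 3×3 grid with cells numbered 0 to 8 and the function names are assumptions made for the example.

```python
from collections import deque

SIZE = 3   # 3x3 grid, cells numbered row by row from 0 to 8 (assumed layout)


def neighbours(cell):
    row, col = divmod(cell, SIZE)
    for d_row, d_col in ((-1, 0), (1, 0), (0, -1), (0, 1)):
        n_row, n_col = row + d_row, col + d_col
        if 0 <= n_row < SIZE and 0 <= n_col < SIZE:
            yield n_row * SIZE + n_col


def shortest_path(start, goal):
    """Breadth-first search; returns the list of cells from start to goal."""
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            break
        for nxt in neighbours(cell):
            if nxt not in parents:
                parents[nxt] = cell
                queue.append(nxt)
    path, cell = [], goal
    while cell is not None:          # walk back from goal to start
        path.append(cell)
        cell = parents[cell]
    return path[::-1]


def goal_progress(path):
    """Fraction of the way to the goal at each cell of the path (0.0 to 1.0)."""
    steps = len(path) - 1
    if steps == 0:
        return [1.0]
    return [i / steps for i in range(len(path))]


far = shortest_path(0, 8)     # corner to opposite corner: 4 steps
near = shortest_path(0, 1)    # adjacent cells: 1 step
print(goal_progress(far))     # [0.0, 0.25, 0.5, 0.75, 1.0]
print(goal_progress(near))    # [0.0, 1.0]
```

Both journeys ramp from 0 to 1 even though one is four times longer, which is the sense in which such a signal tracks progress toward a goal rather than raw distance.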

Why this matters: It’s a single, working explanation that links behavior (fast generalization), computation (schemas + mappings), and brain signals (those goal-progress patterns), showing how all three can come from the same underlying principles.

What’s the bigger impact?

- For neuroscience: The results support the idea that the brain uses hierarchical predictive models: higher areas (like frontal cortex) learn abstract structure (schemas), while hippocampal and related systems manage spatial details. The “grounding” links them together. This helps explain how animals learn quickly and flexibly.

- For AI and robotics: Today’s AI often needs lots of training for each new task. This work shows a way to learn a general blueprint once and then rapidly apply it to new environments—by just updating a mapping. That could make robots and AI systems better at adapting to new places and problems with very little extra experience.

- For learning and development: The model naturally captures two classic learning ideas:

  - Assimilation: Fit new experiences into an old schema by remapping details.

  - Accommodation: When a new problem doesn’t fit, create a new schema or mapping (as with the clone trick or the mixture library).

In short, this paper shows how a “recipe + address book” approach—implemented with hierarchical predictive processing—can explain fast learning in animals, match brain activity patterns, and point the way toward smarter, more adaptable AI.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise list of what remains missing, uncertain, or unexplored, formulated to guide future research.

- Scalability and complexity: The model is only tested in a 3×3 “wells within grid” maze with deterministic transitions. It is unclear how S-HAI scales to larger, continuous, dynamic, or stochastic environments with obstacles, non-grid topologies, and 3D spaces.

- Concurrent schema and map learning: Level 1 navigation dynamics are pre-trained offline. The paper does not address joint online learning of spatial maps and schemas, nor how co-learning affects assimilation/accommodation, stability, and sample efficiency.

- True accommodation of new schemas: The framework learns a single abstract schema (ABCD or ABCB) and multiple groundings. It does not discover, create, or modify schemas de novo when the latent task structure changes (e.g., new sequences, branching structures, hidden rules), nor specify criteria for forming, merging, or retiring schemas.

- Mixture over schemas vs. groundings: The non-parametric extension maintains a mixture of grounding likelihoods, but not a mixture of schemas. There is no mechanism to infer which abstract schema applies, or to maintain multiple schemas and select among them.

- Unsupervised context boundary detection: Mixture priors are reset at block boundaries that are externally given. The model does not infer event boundaries from data (e.g., surprise signals), nor evaluate reliability and timeliness of boundary detection under uncertainty.

- Heuristic mixture expansion: The threshold (e.g., −5 expected log likelihood) used to add mixture components is ad hoc. There is no sensitivity analysis, principled selection, or adaptive criterion for component creation and pruning to avoid proliferation or underfitting.

- Clone number selection and aliasing: The ABCB solution hard-codes two clones. There is no method to infer the optimal number of clones online, handle more complex aliasing patterns, or prevent overfitting/underfitting in clone-structured cognitive graph (CSCG) state cloning.

- Robustness to noise and partial observability: The paper does not test performance under observation noise, missing rewards, distractors, occlusions, ambiguous cues, or nonstationary transition/reward processes.

- Exploration strategy: The action selection hinges on “inductive cost” preferences. The paper does not analyze exploration–exploitation trade-offs, planning horizon choices, or how uncertainty guides exploration in S-HAI.

- Computational efficiency and latency: There is no reporting of time/space complexity, convergence rates, or latency of inference/planning per step; scalability with number of states, goals, and mixture components remains unknown.

- Hyperparameter sensitivity: No ablation or sensitivity analysis is provided (e.g., priors, learning rates, horizon, cost scaling, Dirichlet parameters, mixture truncation), leaving robustness of results unclear.

- Fairness and breadth of baselines: Comparisons are limited to HAI (with/without CSCG) and a random goal chooser. Stronger baselines (model-based RL, meta-RL, successor features, hierarchical options/skills, graph neural planning, CSCG with learned groundings) are not evaluated for sample efficiency or asymptotic performance.

- Negative transfer and mis-binding: The risk and detection of incorrect groundings (catastrophic schema mis-binding) are not quantified; there is no procedure to recover from or avoid entrenched wrong mappings.

- Multi-goal ambiguity beyond ABCB: Beyond a single aliased goal (B), the model is not tested on tasks with multiple overlapping goals, interleaved sequences, branching, or context-dependent transitions.

- Generalization beyond spatial tasks: The framework is only validated in spatial navigation. Its applicability to non-spatial relational domains (e.g., rules, algorithms, language templates) is asserted but not demonstrated.

- Multi-level hierarchies: S-HAI uses two levels. It is unknown how additional hierarchical layers (e.g., meta-schemas, task families) can be learned, coordinated, and grounded.

- Policy formation at Level 2: Level 2 seems to select next goals rather than optimizing full action sequences under uncertainty. The decision-making formalism (planning horizon, expected free energy decomposition) is not fully specified or evaluated.

- Integration with hippocampal/entorhinal computations: Although motivated by grid/place/conjunctive cells, Level 1 does not model grid-like codes or continuous attractor dynamics; interactions (e.g., replay, consolidation) are not implemented or tested.

- Neural validation breadth and quantification: The mPFC “neural codes” are qualitatively illustrated (goal-progress, identity, conjunctive), but quantitative fits to empirical firing statistics, invariance across remappings, and cross-condition generalization are not provided.

- Physiological interpretability of “inductive cost”: The link between model’s inductive cost and neural variables (e.g., ramping potentials, dopaminergic prediction errors) remains speculative; testable neurophysiological predictions are not formalized.

- Timescale alignment: The model does not relate learning and inference timescales to biological processes (trial-by-trial, block-wise, sleep-dependent consolidation), nor probe memory interference across blocks.

- Event segmentation and surprise: While surprise is mentioned, the paper does not implement or evaluate a principled event segmentation mechanism to trigger schema updates, mixture selection, or consolidation.

- Evaluation metrics: Performance uses normalized reward and steps/trial; there is no metric for one-shot grounding speed, remapping latency, sample efficiency per block, or error rates in schema/grounding inference.

- Lifelong learning and forgetting: The framework lacks mechanisms for memory management (component pruning, consolidation, regularization), resisting interference, and maintaining stable reuse across long sequences of tasks.

- Safety and constraints in grounding: No constraints or priors (e.g., spatial topology, goal locality) are exploited to regularize grounding learning; the impact of structure-informed priors on speed and accuracy is unknown.

- Code and reproducibility: Full methodological details (equations, hyperparameters, code) are not included here; reproducibility and robustness across implementations are not established.

These gaps suggest concrete next steps: scale up environments and tasks; enable online co-learning of maps and schemas; add mixture-of-schemas with unsupervised boundary detection; introduce principled nonparametric component management; strengthen baselines and ablations; test robustness to noise and aliasing; extend to non-spatial relational domains; incorporate hippocampal/entorhinal dynamics and quantitative neural fits; and formalize metrics and procedures for rapid, safe, and lifelong schema grounding.

Practical Applications

Immediate Applications

- Warehouse and factory mobile robots — Robotics, Logistics

- Use S-HAI to reuse abstract pick/pack schemas (e.g., “A→B→C→D” station sequence) and rapidly remap them to new aisle/slot layouts via a learned grounding likelihood; handle repeated/aliased stations with clone-structured graphs (ABCB analog).

- Tools/products/workflows: ROS2 planning module implementing S-HAI; “Mixture-of-Groundings” memory for different warehouse zones; WMS integration to set goals and rewards.

- Assumptions/dependencies: Reliable localization/SLAM and a prelearned Level-1 navigation model; clear reward/done signals; safe motion stack; adequate compute for online Bayesian updates.

- Hospital service and hospitality robots (rounds, delivery) — Healthcare, Robotics

- Remap abstract service rounds schemas (e.g., supplies→meds→labs→waste) to different wards/floors; resolve room aliasing (same docking bay for multiple rounds) with clones; reuse groundings across shifts with a mixture model.

- Tools/products/workflows: “Rounds Schema Planner” with S-HAI and a mixture of grounding likelihoods (MoGL); EHR/task system interface for goal sequencing; audit trail of schema-to-floor mappings.

- Assumptions/dependencies: Accurate floor maps and access constraints; human-in-the-loop override; compliance and safety approval.

- RPA and back-office workflow automation — Software, Finance/Operations

- Treat approval or data-entry workflows as abstract schemas; learn a grounding likelihood per application instance (e.g., SAP vs. Oracle UI); MoGL reuses mappings across clients; clones disambiguate repeated steps that share the same screen.

- Tools/products/workflows: “Grounding Likelihood Learner” for UI elements; schema library of common enterprise flows; explainable logs showing schema states and progress.

- Assumptions/dependencies: Stable UI element detection (CV/DOM hooks); access to APIs or robust screen scraping; change detection triggers for accommodation.

- Personal productivity assistants and cross-app automations — Software, Daily life

- Encode multi-step routines (“draft→review→publish→share”) as schemas; ground to different apps (Google Docs, Notion, WordPress) and accounts using learned mappings; MoGL supports quick context switching (work vs. personal).

- Tools/products/workflows: Assistant SDK with schema planner; grounding profiles per app/account; progress indicator derived from inductive cost for user feedback.

- Assumptions/dependencies: App APIs/permissions; reliable tool-use; clear success/reward signals (e.g., publish confirmation).

- Game AI that generalizes across levels — Software, Gaming

- NPCs reuse quest schemas (fetch→craft→deliver) and remap to new maps/levels without retraining; clones handle ambiguous objectives; MoGL stores per-level remappings to speed replays.

- Tools/products/workflows: Unity/Unreal plugin for S-HAI planning; level-specific grounding caches; debug overlays for goal-progress.

- Assumptions/dependencies: Level graph exposure or learnable navigation model; tractable POMDP state.

- Campus/courier micro-mobility routing — Logistics, Mobility

- Plan multi-stop pickups/deliveries as schema sequences and remap to new building layouts; clones disambiguate repeated pickup points; MoGL accelerates recurring routes.

- Tools/products/workflows: Route planner with schema layer over map graph; “event-boundary” detector to switch groundings when context changes.

- Assumptions/dependencies: Up-to-date maps; curb/entrance access signals; safe navigation constraints.

- Neuroscience experiment design and data analysis — Academia, Healthcare

- Fit S-HAI to rodent/human sequential tasks to generate testable predictions (goal-progress cells, conjunctive codes); use expected log-likelihood dips to mark event boundaries; compare inferred beliefs to neural firing.

- Tools/products/workflows: Open-source S-HAI modeling toolbox; pipelines to align latent beliefs with neural timeseries; hypothesis generators for mPFC/hippocampal coding.

- Assumptions/dependencies: Time-locked behavioral and neural data; model identifiability; parameter recovery studies.

- Adaptive learning/exam practice sequences — Education technology

- Capture abstract skill sequences (e.g., “concept→application→transfer”) and remap to new content sets or LMSs; MoGL reuses mappings across courses/programs; progress signal provides interpretable student-phase feedback.

- Tools/products/workflows: Schema-based tutor backend; content-to-schema grounding builder; dashboards showing per-learner progress phase.

- Assumptions/dependencies: Well-tagged content; outcome signals (grades/attempts); institutional LMS integration.

- Explainable planning dashboards for adaptive systems — Cross-sector

- Expose the high-level schema, active grounding, mixture component chosen, and goal-progress signal to operators for debugging, safety, and audits.

- Tools/products/workflows: “Schema Inspector” UI; alerts when surprise/event-boundary triggers accommodation.

- Assumptions/dependencies: Telemetry hooks; operator training; privacy/compliance controls.

Long-Term Applications

- Generalist home robots with rapid task remapping — Robotics, Consumer

- Maintain libraries of household schemas (tidy→load→wash→unload; cook→plate→serve) and ground them to new homes; MoGL manages per-home mappings; clones resolve ambiguous locations (same counter used in different tasks).

- Tools/products/workflows: Schema engine integrated with perception/affordances and language grounding; cloud-based mixture memory across sessions; safety sandboxes for accommodation.

- Assumptions/dependencies: Robust perception and manipulation; safe exploration; regulatory approval; reliable reward/goal detection from natural language or sensors.

- Multi-stop autonomous delivery and inspection — Mobility, Energy, Infrastructure

- Use schemas to plan inspection/repair sequences and remap across facilities (substations, wind farms); MoGL accelerates recurring sites; accommodate novel layouts online.

- Tools/products/workflows: Fleet planner with S-HAI; digital twin interfaces; evidence logs for certification.

- Assumptions/dependencies: High-fidelity maps/digital twins; adverse-weather robustness; safety and airspace regulations.

- Disaster response and search-and-rescue — Public safety, Defense

- Apply incident-response schemas (triage→stabilize→transport) and remap to unknown buildings; event-boundary detection triggers accommodation when floor plans change; clones handle repeated chokepoints.

- Tools/products/workflows: SAR robot stack with schema planner; operator-in-the-loop schema selection; after-action schema replay analytics.

- Assumptions/dependencies: Communications resilience; partial observability handling; ethical and legal frameworks.

- Clinical neurotechnology and cognitive training — Healthcare

- Use model-derived goal-progress signals as biomarkers for executive function; training regimens to strengthen schema formation/transfer; closed-loop neurostimulation timed to event boundaries or progress phases.

- Tools/products/workflows: Digital therapeutics built on schema tasks; EEG/MEG/fMRI alignment with S-HAI beliefs; clinician dashboards.

- Assumptions/dependencies: Clinical validation; individualized models; safety/efficacy trials and regulatory clearance.

- Brain-inspired generalist AI agents — Software, AI

- Combine S-HAI with foundation models to ground language-described schemas into action across tools/robots; build schema libraries transferable across domains; mixture-of-groundings for client/site personalization at scale.

- Tools/products/workflows: “SchemaOS” SDK; cross-domain schema marketplace; safety layer using surprise-triggered accommodation checks.

- Assumptions/dependencies: Scalable hierarchical inference under long horizons; tool-use reliability; guardrails for rapid generalization.

- Industrial changeover and flexible manufacturing — Manufacturing

- Encode SOPs as schemas and rapidly remap to new product variants/machines; MoGL preserves mappings per line; digital twins validate accommodation before deployment.

- Tools/products/workflows: MES/PLC integration; “Schema-to-PLC Grounder”; changeover optimizer.

- Assumptions/dependencies: Accurate equipment state models; human oversight; safety interlocks.

- Smart grid restoration and operations — Energy

- Represent restoration sequences as schemas; remap to different feeder topologies; event-boundary detection highlights topology shifts requiring accommodation.

- Tools/products/workflows: Grid ops assistant with schema planner; SCADA/DMS integration; explainable restoration progress indicators.

- Assumptions/dependencies: Real-time observability; strict safety constraints; regulator-approved decision support.

- Education policy and curriculum design for transfer — Policy, Education

- Promote schema-centered curricula and assessments that measure generalization; use model insights to scaffold assimilation vs. accommodation (introduce near/far transfer with controlled surprise).

- Tools/products/workflows: Curriculum authoring guides; analytics on progress-phase distributions across cohorts.

- Assumptions/dependencies: Teacher training; content tagging standards; data privacy.

- Standards, auditing, and certification for adaptive systems — Policy, Cross-sector

- Require logging of schema selection, grounding likelihoods, and event-boundary triggers to audit adaptive behavior; develop safety tests for rapid remapping.

- Tools/products/workflows: “Schema Audit Log” standard; conformance test suites with novel-context challenges.

- Assumptions/dependencies: Industry consensus; regulatory adoption; interoperability frameworks.

- Human digital twins for workload and UX — Software, HCI

- Model user task schemas and goal-progress to predict workload, detect friction points, and redesign interfaces; reuse groundings across user segments.

- Tools/products/workflows: UX analytics powered by S-HAI; “progress-phase” heatmaps; A/B testing guided by event-boundary detections.

- Assumptions/dependencies: Ethical data collection; accurate user modeling; generalization beyond lab tasks.

Glossary

- ABCB task: A sequential navigation task where two goals share the same spatial location, requiring alternation based on context. "We also address a more challenging variant, the ABCB task, in which two goals (the B goals) occupy the same spatial location (Figure \ref{fig:taskABCB})."

- ABCD task: A sequential navigation task requiring visiting four goals in a fixed order across changing layouts. "The main experimental task we adopt to evaluate our model is the ABCD task of \citet{el2024cellular}."

- Active inference: A Bayesian framework for perception, learning, and action that minimizes expected surprise through generative models. "We address the ABCD and ABCB tasks using a novel schema-based hierarchical active inference (S-HAI) agent"

- Accommodation: The process of creating or modifying schemas when new information does not fit existing structures. "there is a second process—called accommodation—which involves creating new schemas or modifying existing ones when new information does not fit."

- Assimilation: Integrating new experiences into existing schemas by mapping sensory details onto abstract structure. "This type of learning—or assimilation—requires only mapping the low-level sensory details of a novel experience onto the abstract relational structure of an existing schema"

- Bayesian brain hypothesis: The idea that neural activity encodes probabilistic beliefs rather than simple stimulus responses. "According to the Bayesian brain hypothesis, neurons do not simply fire in response to stimuli; rather, their activations encode probabilistic beliefs about relevant quantities in the environment"

- Clone-structured causal graphs (CSCG): A model that uses cloned latent states to represent abstract structure separate from observations, enabling rapid rebinding. "Another related computational account of schema and rebinding in the hippocampus has been developed based on clone-structured causal graphs (CSCG) \citep{George2021,guntupalli2023graph,swaminathan2023schema, raju2024space}."

- Clone-structured cognitive graph (CSCG): An extension of HMMs that allows multiple clones per state to disambiguate aliased observations. "we endowed Level 2 of the HAI agent with a more expressive clone-structured cognitive graph (CSCG) mechanism \citep{George2021}"

- Cognitive map: An internal representation of spatial or abstract relational structure supporting navigation and inference. "grid cells in the entorhinal cortex have been implicated in cognitive map formation and schema learning"

- Conjugate updates: Bayesian parameter updates using conjugate priors for efficient online learning. "The parameters are initialized randomly and updated using conjugate updates"

- Dirichlet process (truncated): A non-parametric prior enabling flexible mixture expansion with a bounded number of components. "which maintains a mixture of grounding likelihoods that expand over time using a truncated Dirichlet process (Section~\ref{sect:methods})."

- Emission matrix: The observation model in a probabilistic graph that maps latent states to observed symbols. "Learning the grounding likelihoods is similar to learning the emission matrix of a schema as in \citep{guntupalli2023graph} and \citep{swaminathan2023schema}."

- Entorhinal cortex: A brain region containing grid cells that provide a reusable spatial scaffold for maps and schemas. "grid cells in the entorhinal cortex have been implicated in cognitive map formation and schema learning"

- Generative model: A probabilistic model that predicts observations and dynamics, used for planning and inference. "a higher-level generative model encodes abstract task structure, while a lower-level model encodes spatial navigation, with the two levels linked by a grounding likelihood"

- Grid cells: Neurons that code space in a periodic lattice, supporting path integration and abstract mapping. "grid cells provide a periodic, low-dimensional representation of space that is believed to support path integration and map-like computations"

- Grounding likelihood: The probabilistic mapping between abstract schema states (task space) and concrete spatial states (navigation space). "Crucially, the S-HAI agent also includes a grounding likelihood: a probabilistic mapping between the abstract schema representing transitions between goals in task space (i.e., A, B, C, and D) and the concrete locations of the goals in navigation space"

- Goal-and-spatially conjunctive cells: Neurons tuned jointly to abstract goal identity and specific spatial location. "including task-invariant goal-progress cells, goal-identity cells, and goal-and-spatially conjunctive cells"

- Goal-identity cells: Neurons selective for the identity of the current abstract goal, independent of location. "including task-invariant goal-progress cells, goal-identity cells, and goal-and-spatially conjunctive cells"

- Goal-progress cells: Neurons that ramp activity with progress toward a goal, independent of goal identity or distance. "the most frequent are 'goal-progress cells,' or cells that are tuned (mainly) to progress (e.g., early, mid, and late phases) toward abstract goals, independently of goal identity or physical distance."

- Hippocampal replay: Reinstatement of sequential neural activity during rest/sleep, linked to consolidation and schema abstraction. "as evidenced by hippocampal replay and reactivation patterns during rest and sleep"

- Hippocampus: A brain region crucial for encoding experiences and interacting with entorhinal grid systems to form schemas. "three interconnected brain structures—the hippocampus, the entorhinal cortex, and the frontal cortex—may play a crucial role in schema-based rapid learning and systems consolidation"

- HMM-like architectures: Models based on Hidden Markov Models that can struggle with aliased states without state cloning. "Standard HMM-like architectures, such as the one used by the HAI agent in the first simulation, struggle with this task because they confuse the two instances of the B goal."

- Inductive cost: A planning objective that penalizes distance from intended states according to latent dynamics. "The level 1 goal is specified as an intention over future state, which triggers the model to associate each state with an inductive cost~\citep{friston2023active}."

- Latent states: Hidden variables that capture underlying structure not directly observed, used for abstraction and generalization. "new task-structure schemas have to [be] learned that [use] the latent states of the spatial structure that was previously learned."

- Medial prefrontal cortex (mPFC): Frontal cortical area implicated in coding abstract task structure and schema-related signals. "neural codes reported in the medial prefrontal cortex (mPFC) of rodents performing the ABCD task"

- Mixture model: A probabilistic framework combining multiple components to flexibly select mappings or hypotheses. "we implement a mixture model over grounding likelihoods that allows the S-HAI agent to flexibly infer which of its existing grounding likelihoods is most useful in the current maze"

- Mixture of grounding likelihoods (MoGL): A model maintaining multiple schema-to-environment mappings and selecting among them. "we implemented a non-parametric extension of the S-HAI agent, called the S-HAI MoGL agent"

- Non-parametric extension: A model class whose complexity can grow with data, e.g., by adding mixture components. "we implemented a non-parametric extension of the S-HAI agent, called the S-HAI MoGL agent"

- Observation likelihood: The probability of observations given latent states in a generative model. "At Level 2, the grounding likelihood is combined with the observation likelihood."

- Partially Observable Markov Decision Process (POMDP): A decision-making model with hidden states and stochastic observations/actions. "comprises two levels implemented as two interconnected Partially Observable Markov Decision Processes (POMDPs)."

- Path integration: The computation of position by integrating movement cues, supported by grid-cell dynamics. "support path integration and map-like computations"

- Place-like codes: Spatially tuned representations resembling place cells, emerging in lower-level navigation models. "as well as place-like codes at the lower level."

- Predictive processing: A theory where the brain minimizes prediction errors via hierarchical generative models. "combines predictive processing and active inference with schema-based mechanisms."

- Schema: An abstract relational structure capturing commonalities across experiences to support rapid generalization. "Schemas -- abstract relational structures that capture the commonalities across experiences -- are thought to underlie humans’ and animals’ ability to rapidly generalize knowledge"

- Schema-based hierarchical active inference (S-HAI): The proposed two-level model combining schema inference with navigation via grounding likelihoods. "Here, we introduce schema-based hierarchical active inference (S-HAI), a novel computational framework"
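The grounding likelihood defined above can be pictured as a table of probabilities P(location | goal). The following is a minimal sketch under stated assumptions, not the paper's implementation: the 3x3 maze, the goal-to-cell assignment, the `noise` parameter, and the function names `make_grounding` and `most_likely_cell` are all hypothetical choices made for illustration.

```python
# Hypothetical illustration: maze size, goal positions, and noise level
# are assumptions, not values taken from the paper.
GOALS = ["A", "B", "C", "D"]
N_LOCATIONS = 9  # cells of an assumed 3x3 maze, indexed 0..8


def make_grounding(goal_to_cell, noise=0.05):
    """Build P(location | goal): one probability row per abstract goal."""
    grounding = {}
    for goal in GOALS:
        # Spread a small amount of probability over all other cells.
        row = [noise / (N_LOCATIONS - 1)] * N_LOCATIONS
        # Put the remaining mass on the cell this goal is grounded to.
        row[goal_to_cell[goal]] = 1.0 - noise
        grounding[goal] = row
    return grounding


def most_likely_cell(grounding, goal):
    """Return the maze cell with the highest probability for a goal."""
    row = grounding[goal]
    return max(range(len(row)), key=row.__getitem__)


grounding = make_grounding({"A": 0, "B": 4, "C": 8, "D": 2})
print(most_likely_cell(grounding, "B"))
```

In this picture, moving to a new maze leaves the abstract A, B, C, D sequence untouched and only requires a new table, which is one way to read the idea of remapping a schema onto a novel context.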

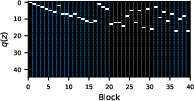

- Selector variable (z): A latent variable indicating which mixture component (e.g., grounding likelihood) is active. "The approximate posterior over z (the selector variable of the grounding likelihood) during the ABCD task with repeated blocks"

- Spatial alternation: A task structure requiring alternating choices at a shared location based on previous state. "This setup is similar to spatial alternation tasks commonly used in rodents \citep{jadhav2012awake}"

- Systems consolidation: The process by which memory representations become stabilized and reorganized across brain systems. "may play a crucial role in schema-based rapid learning and systems consolidation"

- Task-invariant goal-progress cells: Goal-progress cells whose tuning to progress holds across tasks, regardless of the specific goal or layout. "including task-invariant goal-progress cells, goal-identity cells, and goal-and-spatially conjunctive cells"

- Transition matrix: A structured representation of state-to-state dynamics learned by the model at different levels. "Transition matrix learned at Level 1 by the S-HAI model (trained on Block 1), encoding the spatial structure of the environment."

- Variational posterior: An approximate posterior distribution used in variational inference to represent beliefs about latent variables. "the normalized expected inductive cost, under the variational posterior over the level 1 state"
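The mixture-model, MoGL, and selector-variable entries above all rest on one computation: inferring which stored grounding likelihood best explains the current maze. The sketch below shows that inference as a plain Bayesian update over z, assuming a uniform prior and observations given as (goal, cell) pairs. The helper names `one_hot_row` and `update_selector_posterior`, the two example mappings, and the noise level are hypothetical. The paper's agent uses variational inference and a non-parametric extension that can add components, neither of which is reproduced here.

```python
import math


def one_hot_row(cell, n_cells=9, noise=0.05):
    """Assumed P(location | goal): most mass on one cell, the rest spread out."""
    row = [noise / (n_cells - 1)] * n_cells
    row[cell] = 1.0 - noise
    return row


def update_selector_posterior(prior, groundings, observations):
    """Return q(z) proportional to p(z) * prod_t P(cell_t | goal_t, z)."""
    log_post = [math.log(p) for p in prior]
    for goal, cell in observations:
        for k, grounding in enumerate(groundings):
            log_post[k] += math.log(grounding[goal][cell])
    # Subtract the maximum before exponentiating for numerical stability.
    peak = max(log_post)
    weights = [math.exp(lp - peak) for lp in log_post]
    total = sum(weights)
    return [w / total for w in weights]


# Two hypothetical stored mappings from abstract goals to maze cells.
maze1 = {"A": one_hot_row(0), "B": one_hot_row(4),
         "C": one_hot_row(8), "D": one_hot_row(2)}
maze2 = {"A": one_hot_row(6), "B": one_hot_row(1),
         "C": one_hot_row(3), "D": one_hot_row(5)}

# Goal A was found at cell 6 and goal B at cell 1, as maze2 predicts.
posterior = update_selector_posterior(
    [0.5, 0.5], [maze1, maze2], [("A", 6), ("B", 1)]
)
print(posterior)
```

With these assumed numbers, two consistent observations are enough to put almost all of the posterior mass on the second mapping, which illustrates how reusing an existing grounding can be fast when the maze has been seen before.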
