- The paper presents SINA, an AI framework that integrates deep learning, OCR, and vision-language models to achieve a 99.6% component detection F1 and an overall netlist accuracy 2.72x that of the prior open-source method, Masala-CHAI.

- The paper employs a modular pipeline—including YOLOv11-based detection and connected-component analysis—to accurately infer electrical connectivity and map reference designators within schematics.

- The paper’s approach automates schematic digitization, reducing manual transcription errors and enabling scalable dataset creation for AI-driven electronic design automation workflows.

SINA: Automated Circuit Schematic Image-to-Netlist Translation via AI

Motivation and Context

The conversion of circuit schematic images into machine-readable netlists is a longstanding bottleneck in EDA workflows. Despite substantial prior work, existing methods for schematic recognition suffer persistent challenges: low component detection fidelity, unreliable extraction of electrical connectivity, and inaccurate mapping of reference designators. Such deficiencies curtail the reusability of schematic knowledge present in the literature and hinder the development of large-scale datasets needed for AI-driven circuit synthesis and analysis. SINA directly addresses these constraints by integrating deep learning, connected-component analysis, OCR, and vision-language models (VLMs) within a unified and fully automated pipeline.

SINA Architecture and Workflow

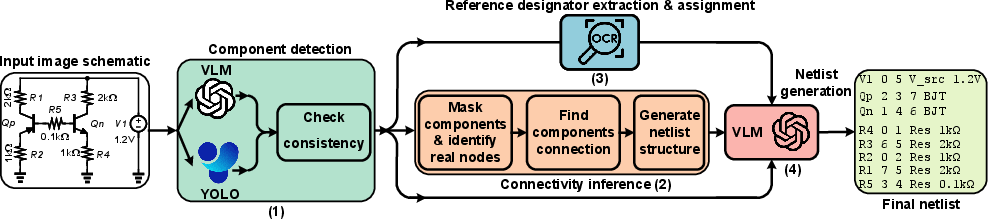

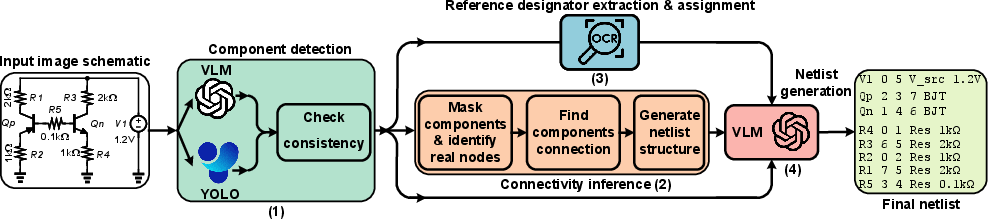

SINA’s workflow (Figure 1) consists of four modular stages: component detection, connectivity inference, reference designator extraction and assignment, and netlist generation.

Figure 1: The SINA workflow integrates deep learning, computer vision, OCR, and vision-language modeling for image-to-netlist conversion.

Component Detection

Leveraging a YOLOv11-based object detector, SINA achieves high-precision identification of schematic components from diverse image sources, including scanned, drawn, and computer-generated images. The detector outputs bounding boxes and component types, which are independently verified with a GPT-4o VLM. An explicit concordance check between YOLO and VLM results enables the quantification of detection confidence and systematic flagging of discrepancies.
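The concordance check can be pictured as a comparison of per-class component counts from the two sources. The sketch below is only an illustration under assumptions: the function name `concordance`, the count-overlap score, and the sample labels are hypothetical and are not taken from the paper, which does not spell out its exact agreement metric here.

```python
from collections import Counter

def concordance(yolo_labels, vlm_labels):
    """Compare per-class component counts from two detection sources.

    Returns (score, discrepancies). The score is the overlap of the two
    count histograms divided by their union (an assumed metric), and
    discrepancies maps each disagreeing class to (yolo_count, vlm_count).
    """
    yolo_counts = Counter(yolo_labels)
    vlm_counts = Counter(vlm_labels)
    classes = sorted(set(yolo_counts) | set(vlm_counts))
    # A Counter returns 0 for classes it has never seen.
    agreed = sum(min(yolo_counts[c], vlm_counts[c]) for c in classes)
    total = sum(max(yolo_counts[c], vlm_counts[c]) for c in classes)
    score = agreed / total if total else 1.0
    discrepancies = {
        c: (yolo_counts[c], vlm_counts[c])
        for c in classes
        if yolo_counts[c] != vlm_counts[c]
    }
    return score, discrepancies

# Hypothetical outputs for one schematic: the sources disagree on one transistor.
yolo = ["resistor", "resistor", "capacitor", "nmos"]
vlm = ["resistor", "resistor", "capacitor", "pmos"]
score, flagged = concordance(yolo, vlm)
print(score)    # 0.6
print(flagged)  # {'nmos': (1, 0), 'pmos': (0, 1)}
```

A count-based comparison ignores where each component sits in the image; a stricter variant could match detections by location before comparing types.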

Connectivity Inference

After detection, component regions are masked, and the wiring network is isolated. SINA utilizes Connected-Component Labeling (CCL) for robust segmentation and node extraction—rejecting artifacts and merging semantically equivalent nodes. By calculating intersections between masked component hotspots and node clusters, SINA constructs a precise component-to-node map, enabling faithful reproduction of circuit structure in the netlist.
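To make the masking and labeling steps concrete, here is a minimal pure-Python sketch of 4-connected component labeling on a toy grid, followed by a lookup of which wire labels touch each masked component box. The grid encoding, the helper names `label_wires` and `nodes_touching`, and the one-pixel ring used to find intersections are all assumptions for illustration; artifact rejection and node merging are not modeled.

```python
from collections import deque

def label_wires(grid):
    """4-connected component labeling on a binary grid (1 = wire pixel)."""
    rows, cols = len(grid), len(grid[0])
    labels = [[0] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != 1 or labels[r][c]:
                continue
            count += 1
            labels[r][c] = count
            queue = deque([(r, c)])
            while queue:  # breadth-first flood fill of one wire segment
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and grid[ny][nx] == 1 and not labels[ny][nx]):
                        labels[ny][nx] = count
                        queue.append((ny, nx))
    return labels, count

def nodes_touching(labels, box):
    """Wire labels found in the one-pixel ring around an inclusive box
    given as (top, left, bottom, right)."""
    top, left, bottom, right = box
    rows, cols = len(labels), len(labels[0])
    found = set()
    for y in range(top - 1, bottom + 2):
        for x in range(left - 1, right + 2):
            inside = top <= y <= bottom and left <= x <= right
            if not inside and 0 <= y < rows and 0 <= x < cols and labels[y][x]:
                found.add(labels[y][x])
    return found

# Toy schematic: '#' is wire, letters are component pixels, '.' is background.
schematic = [
    "...........",
    "##AAA##BBB#",
    "...........",
]
# Keeping only '#' pixels masks out the component regions.
grid = [[1 if ch == "#" else 0 for ch in row] for row in schematic]
boxes = {"A": (1, 2, 1, 4), "B": (1, 7, 1, 9)}

labels, node_count = label_wires(grid)
component_nodes = {name: nodes_touching(labels, box) for name, box in boxes.items()}
print(node_count)       # 3
print(component_nodes)  # {'A': {1, 2}, 'B': {2, 3}}
```

Components A and B share wire label 2, which is how a common electrical node shows up in the component-to-node map.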

Reference Designator Extraction and Assignment

Textual annotations are extracted using EasyOCR, then mapped to associated component regions by spatial proximity. Subsequently, the VLM (GPT-4o) interprets designator context and formalizes assignments, separating purely visual detection from semantic resolution. This division facilitates generalization across schematic genres and text placements.
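The proximity step can be sketched as a nearest-center assignment. Everything below is a hypothetical illustration: the `(x1, y1, x2, y2)` box format, the component names, and the sample texts are assumptions, and the `(text, box)` tuples are a simplified stand-in rather than EasyOCR's actual return format. The VLM's semantic resolution (deciding which string is the designator and which is the value) is not modeled.

```python
import math

def center(box):
    """Center point of an (x1, y1, x2, y2) box."""
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2, (y1 + y2) / 2)

def assign_text(components, texts):
    """Attach each OCR text to the component whose center is nearest."""
    assignment = {name: [] for name in components}
    for text, text_box in texts:
        text_center = center(text_box)
        nearest = min(
            components,
            key=lambda name: math.dist(text_center, center(components[name])),
        )
        assignment[nearest].append(text)
    return assignment

# Hypothetical detector boxes and OCR results, in pixel coordinates.
components = {
    "resistor_0": (10, 10, 50, 30),
    "capacitor_0": (200, 10, 230, 50),
}
texts = [
    ("R1", (15, 0, 35, 8)),
    ("10k", (15, 32, 45, 40)),
    ("C1", (235, 20, 255, 30)),
]
assignment = assign_text(components, texts)
print(assignment)  # {'resistor_0': ['R1', '10k'], 'capacitor_0': ['C1']}
```

Pure nearest-center matching can misassign labels in dense schematics, which is one reason a separate semantic pass over the candidates is useful.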

Netlist Generation

Utilizing OCR outputs, connectivity maps, and the original schematic image, the VLM synthesizes a SPICE-compatible netlist. The process not only assigns correct values and designators but, drawing on visual and contextual cues, maintains semantic integrity and consistency in output structure.
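For readers unfamiliar with the target format, the following sketch shows what a SPICE-compatible netlist looks like once designators, nodes, and values are known. It is a deterministic formatter written for illustration only, not the VLM-based synthesis described above; the function name `to_spice` and the RC example circuit are assumptions.

```python
def to_spice(title, elements):
    """Format (designator, nodes, value) triples as a minimal SPICE deck."""
    lines = [f"* {title}"]
    for designator, nodes, value in elements:
        # One element per line: name, then its nodes, then its value.
        lines.append(" ".join([designator, *nodes, value]))
    lines.append(".end")
    return "\n".join(lines)

# Hypothetical RC low-pass filter; node "0" is ground by SPICE convention.
elements = [
    ("V1", ("1", "0"), "5"),
    ("R1", ("1", "2"), "10k"),
    ("C1", ("2", "0"), "1u"),
]
netlist = to_spice("RC low-pass filter", elements)
print(netlist)
```

Each element line pairs a reference designator with the node labels from the connectivity map and the value read from the schematic text.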

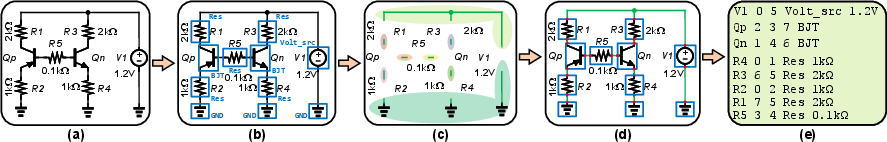

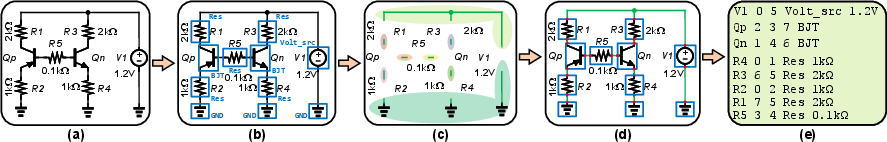

Figure 2: SINA's pipeline—from raw schematic input to detection, node clustering, wiring inference, and final netlist generation.

Quantitative Evaluation

SINA’s component detection model, fine-tuned on 700 annotated schematics with extensive data augmentation, achieves an F1 score of 96.47% (precision: 94%; recall: 99%; weighted mAP: 98%), substantiating robust recognition across schematic types.

In direct comparison to Masala-CHAI, the sole open-source competitor, SINA demonstrates superior performance on 40 representative test circuits:

- Text extraction accuracy: 97.55% vs. 95.09%

- Component detection F1: 99.6% vs. 62.4%

- Circuit structure accuracy: 99.3% vs. 59.8%

- Overall netlist accuracy: 96.47% vs. 35.5%

Overall netlist accuracy is thus roughly 2.72x that of Masala-CHAI (96.47% vs. 35.5%), with the largest gains in component detection and circuit structure recovery rather than in text extraction.
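The headline figures can be checked with a few lines of arithmetic. The sketch below recomputes the F1 score from the rounded precision and recall quoted for the detector, and the accuracy ratio against Masala-CHAI; the function and variable names are illustrative.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)

# Detector metrics as quoted (rounded to whole percentages in the text).
detector_f1 = f1_score(0.94, 0.99)

# Overall netlist accuracy: SINA vs. Masala-CHAI.
accuracy_ratio = 96.47 / 35.5

print(f"{detector_f1:.4f}")     # 0.9644
print(f"{accuracy_ratio:.2f}")  # 2.72
```

The recomputed F1 of about 96.4% is close to the reported 96.47%; the small gap is what one would expect from precision and recall being quoted as rounded values.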

Implications and Prospective Developments

Practically, SINA enables the automated digitization and repurposing of circuit designs from diverse sources, significantly reducing manual transcription overhead and error rates. Its modular architecture permits rapid adaptation to new schematic styles and novel component families, supporting scalable dataset creation for circuit-focused LLM pretraining and benchmarking.

Theoretically, SINA illustrates the synergy between discriminative vision models and multimodal generative engines in symbolic AI tasks. Its decoupled approach to detection and semantic reasoning reflects a trend toward hybrid, context-aware automation in engineering workflows.

Avenues for future work include:

- Incorporation of retrieval-augmented generation for improved component type discrimination.

- Extension to hierarchical schematics and non-SPICE domains (e.g., PCB layouts).

- Integration with active learning protocols for continual improvement.

- Exploration of joint schematic-to-netlist-to-simulation pipelines for closed-loop circuit design automation.

Conclusion

SINA establishes a robust framework for circuit schematic image-to-netlist translation, integrating state-of-the-art deep learning, OCR, and vision-language modeling. With 96.47% overall netlist accuracy, 2.72x that of Masala-CHAI, and a 99.6% component detection F1 on the test circuits, SINA substantially enhances the quality and scalability of circuit data curation. Its architecture enables consistent performance across diverse image sources and systematic assignment of semantic labels, advancing both the automation of EDA workflows and the prospects for AI-driven circuit design methodologies.