- The paper presents Pixel MeanFlow (pMF), a model that performs one-step image generation directly in pixel space, without a latent space.

- The method maps noisy inputs directly to image pixels and uses a transformation between image space and velocity space to define the loss, reaching FID scores as low as 2.22 on ImageNet at 256×256.

- The results suggest that removing the latent space may improve computational efficiency and training speed while maintaining image diversity on challenging datasets like ImageNet.

One-step Latent-free Image Generation with Pixel Mean Flows

Introduction

The paper "One-step Latent-free Image Generation with Pixel Mean Flows" (2601.22158) presents an approach to image generation that builds on diffusion and flow-based models. Such models typically rely on multi-step sampling and operate in a latent space, two design choices that break the complex problem of image generation into simpler subproblems. This paper proposes Pixel MeanFlow (pMF), which aims to generate images in a single step directly in pixel space, without relying on latent representations.

Methodology

The Pixel MeanFlow (pMF) model separates the network's output space from the loss space. The network outputs an image-space prediction (referred to as x-prediction), which is expected to lie on a low-dimensional image manifold, while the loss is defined in velocity space using the MeanFlow framework. A transformation between these two spaces connects the prediction to the loss and lets the network perform one-step image generation efficiently.
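
The exact transformation is not spelled out in this summary. As a minimal sketch, assume the common linear interpolation z_t = (1 - t) * x + t * eps between a clean image x and noise eps, and assume the average velocity u is tied to the image prediction by x = z_t - t * u. Under those assumptions the conversion is a simple rescaling in each direction. The function names are hypothetical, and plain Python lists stand in for image tensors.

```python
def interpolate(x, eps, t):
    """Noisy sample on the assumed linear path: z_t = (1 - t) * x + t * eps."""
    return [(1.0 - t) * xi + t * ei for xi, ei in zip(x, eps)]


def x_to_u(z_t, x_pred, t):
    """Map an image-space prediction to velocity space: u = (z_t - x) / t.

    Assumed form of the transformation; requires t > 0.
    """
    if t <= 0.0:
        raise ValueError("t must be positive")
    return [(zi - xi) / t for zi, xi in zip(z_t, x_pred)]


def u_to_x(z_t, u, t):
    """Inverse map back to image space: x = z_t - t * u."""
    return [zi - t * ui for zi, ui in zip(z_t, u)]


# Toy two-pixel example.
x_clean = [0.2, -0.5]
noise = [1.0, 0.3]
t = 0.8

z_t = interpolate(x_clean, noise, t)
u = x_to_u(z_t, x_clean, t)   # velocity-space quantity a loss could be defined on
x_back = u_to_x(z_t, u, t)    # round trip recovers the image-space prediction
```

With a perfect image prediction on this straight path, `u` matches `noise - x_clean` element-wise, so a loss defined on velocities can be computed even though the network itself outputs pixels.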

In Pixel MeanFlow, the network learns to map noisy inputs directly to image pixels, so its output has a "what-you-see-is-what-you-get" property. Because the output is itself an image, a perceptual loss can be applied to it directly, which improves generation quality.
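
One-step generation with an image-space output can be sketched as a single network call on pure noise. The snippet below is only an illustration: `toy_network` is a hypothetical stand-in for a trained pMF network, and the time convention (noise at t = 1, clean image at r = 0) is an assumption rather than something stated in this summary.

```python
import math
import random


def toy_network(z, r, t):
    """Hypothetical stand-in for a trained network.

    A real model would be a neural network conditioned on (r, t); this one
    squashes each noisy value into [-1, 1] so the sketch stays runnable.
    """
    return [math.tanh(v) for v in z]


def sample_one_step(network, num_pixels, seed=0):
    """Draw Gaussian noise and map it to pixels with one network evaluation."""
    rng = random.Random(seed)
    noise = [rng.gauss(0.0, 1.0) for _ in range(num_pixels)]
    # Assumed convention: start at t = 1 (pure noise), target r = 0 (image).
    # The return value is already the image: no decoder, no iterative solver.
    return network(noise, 0.0, 1.0)


image = sample_one_step(toy_network, num_pixels=12, seed=1)
```

Since the returned values are pixels rather than latents or velocities, any image-space criterion, such as a perceptual loss, could be evaluated on them without a further decoding step.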

Results

The experimental results show that pMF generates high-quality images in a single step without relying on a latent space. On ImageNet, the model achieves an FID of 2.22 at 256×256 resolution and 2.48 at 512×512, indicating strong image fidelity and diversity compared with existing methods.
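
For reference, FID is the Fréchet distance between two Gaussians fitted to Inception-network features of real and generated images, and lower values are better. The subscripts r (real) and g (generated) are notation chosen here, not taken from the paper.

$$
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2 + \mathrm{Tr}\!\left( \Sigma_r + \Sigma_g - 2 \left( \Sigma_r \Sigma_g \right)^{1/2} \right)
$$

Roughly, the mean term penalizes a shift in the feature distribution and the covariance term penalizes a mismatch in its spread, which is why a single FID number is read as reflecting both fidelity and diversity.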

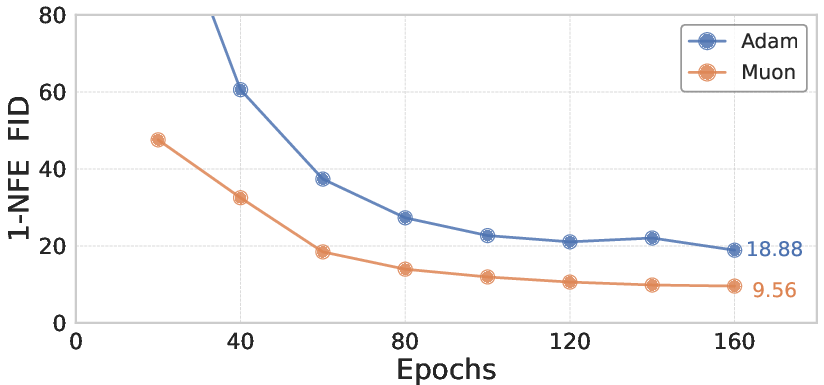

Figure 1: Training with the Muon optimizer converges faster and achieves a better FID than Adam. At 320 epochs, Adam reaches 11.86 FID, while Muon achieves 8.71 FID. (Settings: pMF-B/16, MSE loss).

Implications

This research offers a viable path toward simplifying the generative process by removing the need for latent representations. The reduced complexity may improve computational efficiency and shorten training times.

Furthermore, pMF's approach is in line with the manifold hypothesis, suggesting that images can be effectively modeled as lying on a low-dimensional manifold. This perspective may inspire further exploration into end-to-end learning architectures that leverage pixel-based representations directly.

Conclusion

The Pixel MeanFlow (pMF) model presents a promising direction for image generation, demonstrating that a one-step, latent-free approach can achieve competitive performance. The study provides useful insight into how generative models can be simplified, in line with the broader goal of building efficient and effective deep learning frameworks. Future research may extend this work to more complex datasets and further optimize the network architecture for different applications.