The Hot Mess of AI: How Does Misalignment Scale With Model Intelligence and Task Complexity?

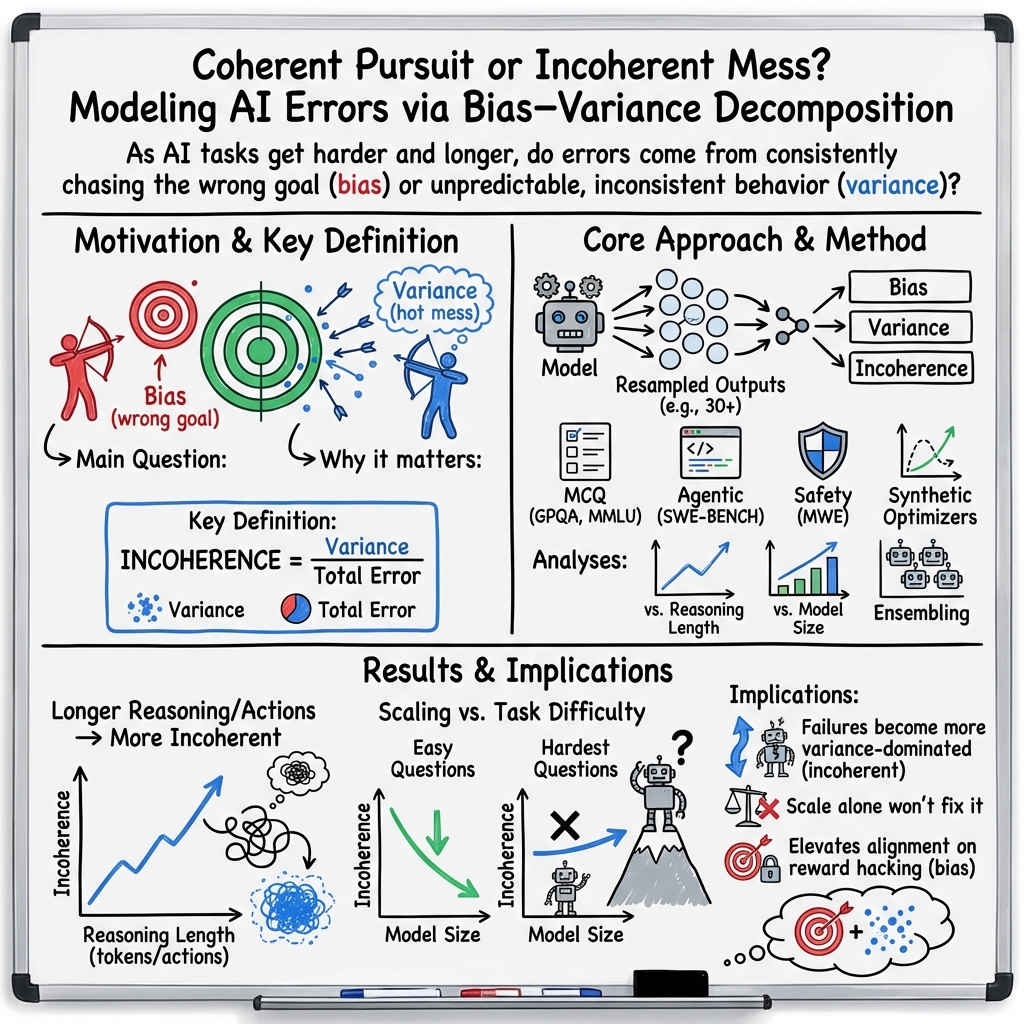

Abstract: As AI becomes more capable, we entrust it with more general and consequential tasks. The risks from failure grow more severe with increasing task scope. It is therefore important to understand how extremely capable AI models will fail: Will they fail by systematically pursuing goals we do not intend? Or will they fail by being a hot mess, and taking nonsensical actions that do not further any goal? We operationalize this question using a bias-variance decomposition of the errors made by AI models: An AI's *incoherence* on a task is measured over test-time randomness as the fraction of its error that stems from variance rather than bias in task outcome. Across all tasks and frontier models we measure, the longer models spend reasoning and taking actions, *the more incoherent* their failures become. Incoherence changes with model scale in a way that is experiment dependent. However, in several settings, larger, more capable models are more incoherent than smaller models. Consequently, scale alone seems unlikely to eliminate incoherence. Instead, as more capable AIs pursue harder tasks, requiring more sequential action and thought, our results predict failures to be accompanied by more incoherent behavior. This suggests a future where AIs sometimes cause industrial accidents (due to unpredictable misbehavior), but are less likely to exhibit consistent pursuit of a misaligned goal. This increases the relative importance of alignment research targeting reward hacking or goal misspecification.

Explain it Like I'm 14

What is this paper about?

This paper asks a simple but important question: As AI systems get smarter and work on harder, longer tasks, how do they mess up? Do they consistently chase the wrong goal (misalignment), or do they act in confusing, inconsistent ways—a “hot mess”—that don’t seem to follow any goal at all?

To study this, the authors break AI mistakes into two parts using a classic idea from statistics: bias and variance. They then use this to measure how “incoherent” an AI’s failures are.

What are the key questions?

The paper explores three easy-to-understand questions:

- When an AI gives the wrong answer, how much of that mistake is because it consistently prefers a specific wrong answer (bias), and how much is because it changes its mind unpredictably (variance)?

- As AIs get smarter, and as tasks get harder and require more steps of thinking or action, does the mix of bias and variance change?

- In the long run, will failures of very capable AIs look more like misalignment (consistent wrong goal) or like accidents (incoherent, unpredictable behavior)?

How did the researchers study this?

The authors use a simple formula to split errors into bias and variance:

- Think of bias like a student who keeps picking B on multiple-choice questions, even when B is wrong—that’s a consistent mistake.

- Think of variance like a student who gives different wrong answers every time—that’s inconsistency or randomness.

They define “incoherence” as the fraction of the total error that comes from variance. If incoherence is high, the AI’s mistakes are mostly unpredictable. If incoherence is low, the AI’s mistakes are more consistent.

To measure this, they repeatedly run the same AI on the same questions but change test-time randomness (like sampling settings, few-shot examples, or tool outcomes), and then compute the bias and variance of its answers.
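The measurement described above can be sketched in a few lines. This is a minimal illustration using a squared-error (Brier-style) decomposition, not the paper's exact estimator; the function name `bias_variance_incoherence` and the toy "students" are assumptions made for the example.

```python
import numpy as np

def bias_variance_incoherence(preds, target):
    """Squared-error (Brier-style) decomposition over repeated runs.

    preds:  shape (n_runs, n_classes), one probability vector per run
    target: shape (n_classes,), e.g. a one-hot vector for the correct answer
    """
    preds = np.asarray(preds, dtype=float)
    target = np.asarray(target, dtype=float)
    mean_pred = preds.mean(axis=0)                        # average behaviour across runs
    bias_sq = float(np.sum((mean_pred - target) ** 2))    # consistent part of the error
    variance = float(np.mean(np.sum((preds - mean_pred) ** 2, axis=1)))  # run-to-run scatter
    total = bias_sq + variance                            # equals the mean squared error
    incoherence = variance / total if total > 0 else float("nan")
    return bias_sq, variance, incoherence

target = [1, 0, 0, 0]  # option A is correct (hypothetical question)

# A student who always picks B.
always_b = [[0, 1, 0, 0]] * 6
# A student who picks a different wrong answer each time.
scattered = [[0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]] * 2

print(bias_variance_incoherence(always_b, target))
print(bias_variance_incoherence(scattered, target))
```

In this toy case the first student has incoherence 0 (all error is bias). The second has incoherence 1/3 rather than 1, because never choosing A is itself a consistent error that counts as bias.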

They tested AIs across several kinds of tasks and models:

- Multiple choice: scientific and general knowledge quizzes (GPQA, MMLU).

- Coding with tools: fixing real software issues and checking with unit tests (SWE-Bench).

- Safety questions: model-written evaluations about advanced AI risks (MWE), including open-ended answers.

- A synthetic setup: tiny transformers trained to imitate a math optimizer moving step-by-step toward a minimum, which lets them measure bias and variance very cleanly.

- Frontier models: Claude Sonnet 4, OpenAI o3-mini and o4-mini, plus a family of models (Qwen3) of different sizes.
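For the synthetic setup, the kind of optimizer the tiny transformers are trained to imitate can be sketched as follows. The paper describes it as steepest descent with a fixed step norm on an ill-conditioned quadratic loss; the specific curvatures, step size, starting point, and function names below are illustrative assumptions, not the paper's settings.

```python
import numpy as np

def fixed_norm_descent(x0, curvatures, step_norm=0.1, n_steps=50):
    """Steepest descent on f(x) = 0.5 * sum(curvatures * x**2),
    where every step has the same length `step_norm`."""
    x = np.asarray(x0, dtype=float).copy()
    h = np.asarray(curvatures, dtype=float)
    trajectory = [x.copy()]
    for _ in range(n_steps):
        grad = h * x                          # gradient of the quadratic
        norm = np.linalg.norm(grad)
        if norm == 0:                         # already at the minimum
            break
        x = x - step_norm * grad / norm       # move a fixed distance downhill
        trajectory.append(x.copy())
    return np.array(trajectory)

def loss(x, curvatures):
    return 0.5 * np.sum(np.asarray(curvatures) * np.asarray(x) ** 2, axis=-1)

curv = np.array([1.0, 100.0])                 # condition number 100 (illustrative)
traj = fixed_norm_descent([5.0, 5.0], curv)
print(loss(traj[0], curv), loss(traj[-1], curv))
```

A model imitating this teacher has a well-defined "right" next step at every point, which is what makes it possible to separate its systematic deviation (bias) from its step-to-step noise (variance).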

They also explored two ways to change behavior:

- Reasoning budgets: letting models think for longer before answering.

- Ensembling: combining multiple attempts to reduce randomness.

What did they find, and why does it matter?

Here are the main takeaways, explained simply:

- Longer thinking leads to more incoherent mistakes. Across many tasks, when models spend more steps thinking or acting (longer “chains of thought” or more tool calls), their errors become more dominated by variance. In short: the longer the process, the more likely the model’s failure looks like a “hot mess.”

- Bigger models aren’t always more coherent. As models grow and overall error drops, coherence depends on task difficulty:

  - On easy questions, larger models get more coherent (variance drops).

  - On hard, complex questions, larger models often get more incoherent (variance rises), even though they still get more right overall.

  - This suggests scale alone won’t fix incoherence on challenging, long-horizon tasks.

- Synthetic optimizer results match the trend. In a controlled “models-as-optimizers” setup, larger models learned the right objective faster (lower bias), but their step-by-step behavior stayed noisy (variance didn’t drop as fast). Over longer action sequences, variance dominated.

- Ensembling helps; reasoning budgets help a little. Averaging multiple attempts reduces variance in a clean, predictable way, which lowers incoherence. Giving models more time to think can slightly reduce incoherence, but natural variation in how long they think from sample to sample has a much stronger effect—and often increases incoherence.
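Why averaging lowers incoherence can be seen from a short calculation. The sketch below assumes a squared-error decomposition and E independent attempts whose predictions are averaged; the subscript E is notation introduced here for the ensembled quantities.

```latex
\begin{align}
\text{INCOHERENCE} &= \frac{\text{VARIANCE}}{\text{BIAS}^2 + \text{VARIANCE}}, \\
\text{BIAS}_E &= \text{BIAS}, \qquad \text{VARIANCE}_E = \frac{\text{VARIANCE}}{E}, \\
\text{INCOHERENCE}_E &= \frac{\text{VARIANCE}/E}{\text{BIAS}^2 + \text{VARIANCE}/E}
  = \frac{\text{VARIANCE}}{E \cdot \text{BIAS}^2 + \text{VARIANCE}}.
\end{align}
```

For example, if bias and variance contribute equally (incoherence 0.5), averaging E = 4 attempts gives 1/(4 + 1) = 0.2. Averaging leaves the bias untouched, so ensembling makes the remaining failures more consistent rather than removing them.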

Why this matters: If future, highly capable AIs take many steps to do complex tasks, their failures may look more like unpredictable accidents than like a focused pursuit of the wrong goal. That changes how we should prepare for risks.

What does this mean for the future?

The paper suggests a shift in how we think about AI safety:

- Expect more “accidents” on long, complex tasks. Failures may often be incoherent—random-seeming and hard to predict—especially when tasks require long sequences of decisions.

- Misalignment still matters, but the mix changes. If training goals are poorly specified (reward misspecification), bias could dominate and cause systematic misbehavior. But given reasonable goals and training, variance-driven incoherence may increasingly be the main failure mode on hard tasks.

- Focus on error correction and robustness. Techniques like ensembling, backtracking, and other forms of error correction reduce incoherence. However, in real-world actions (like robot steps or code deployments), you often can’t “reset” and ensemble safely, so we need practical ways to catch and correct mistakes mid-trajectory.

- Don’t rely on scale alone. Making models bigger lowers overall error but doesn’t reliably reduce incoherence for hard problems. We’ll need better training methods, clearer goals, and stronger safeguards.

In short: As AIs get smarter and take on harder jobs, their failures may look less like a villain with a plan and more like a clumsy but powerful helper making unpredictable mistakes. Preparing for that kind of “hot mess” is essential for safe AI deployment.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains uncertain or unexplored in the paper’s framework, methods, and conclusions.

- Causal mechanisms: No formal model explains why incoherence increases with longer trajectories; develop and validate a quantitative “noise accumulation” theory (e.g., stepwise stochasticity, error-correction dynamics, attractor drift) that predicts observed slopes across tasks and models.

- Source attribution of variance: The study mixes multiple test-time randomness sources (few-shot prompt variation, sampling seed, tool outcomes) without decomposing their individual contributions; isolate and quantify each source’s share of variance.

- Decoding policies: Effects of temperature, top-p (nucleus) sampling, and greedy decoding on incoherence scaling are not assessed; map incoherence as a function of decoding hyperparameters and identify robust operating regimes.

- Prompt stability: Realistic deployments often use fixed prompts; evaluate incoherence when few-shot context is held constant and only sampling varies, and vice versa, to disentangle prompt vs sampling effects.

- Low-error regimes: Incoherence is defined as variance/(bias²+variance); near-zero error the ratio can become unstable or uninformative; characterize behavior and confidence intervals in low-error regimes and propose alternative metrics when ERROR≈0.

- Irreducible noise: The decomposition assumes zero label noise; measure sensitivity to non-zero noise (e.g., ambiguous labels, flaky tests) and provide corrected estimators or bounds under noisy targets.

- Metric dependence: While KL, Brier, and 0/1 show similar qualitative trends, no principled criterion is given for which metric best predicts real-world risk; compare metrics’ stability, sensitivity, and correlation with downstream accident likelihood.

- Open-ended responses: The embedding-variance proxy for free-form answers uses a single embedding model; assess robustness across embedding architectures, distance metrics, and dimensionality, and calibrate embedding variance against human judgments of incoherence.

- Task coverage: Evidence is limited to MCQ benchmarks, SWE-BENCH coding, and a simple synthetic optimizer; extend to other long-horizon, irreversible domains (robotics, planning, multimodal agents, tool-using agents with external memory).

- Generality across architectures: Frontier models differ in training pipelines and RL reasoning methods; control for architecture and training differences by evaluating a single family across wider scales and reasoning variants.

- Reasoning budgets vs “natural overthinking”: The mechanisms by which API “reasoning budgets” reduce incoherence are unknown; ablate specific features (backtracking, review, reflection, verifier calls) and quantify their individual effects.

- Structure of thought: Only limited analysis of reasoning structure is provided; measure how specific CoT features (depth, breadth, self-review, branching, loopbacks) relate to variance growth and design training-time regularizers to stabilize them.

- Ensembling practicality: Ensembling reduces variance as 1/E in MCQ but is impractical in stateful action loops; investigate alternative online error-correction schemes (verifier-critic, checkpoint/rollback, majority vote over subgoals) for agents.

- Scaling on hard tasks: For hardest question groups, trends are noisy and sometimes inconsistent; expand sample sizes, include more hard datasets (e.g., GPQA Diamond-like subsets), and report uncertainty bands to confirm whether incoherence truly rises with scale.

- SWE-BENCH instrumentation: Separate variance due to model behavior from exogenous factors (tool errors, environment flakiness, dependency issues); run controlled simulations to quantify each factor’s contribution.

- Mesa vs specification bias: The paper introduces BIAS_MESA vs BIAS_SPEC but does not measure them; design experiments that manipulate reward misspecification to empirically decompose these components across capability scales.

- Alignment vs incoherence linkage: Claims about reduced likelihood of coherent misaligned goal pursuit are inferred indirectly; construct evaluations that directly elicit and measure persistent goal pursuit vs randomness under controlled incentives.

- Training-time interventions: No tests of methods intended to reduce incoherence (consistency regularization, trajectory-level KL, variance penalties, curriculum on long-horizon consistency, verifier-based RL); develop and benchmark such interventions.

- Deterministic decoding trade-offs: Explore whether greedy decoding lowers variance but increases bias, and whether hybrid policies (e.g., deterministic for planning, stochastic for exploration) can optimize the bias–variance mix over horizons.

- Temporal error dynamics: The paper reports net variance accumulation but does not analyze per-step autocorrelation, drift, or error-correction episodes; characterize temporal profiles to identify when and where to intervene.

- External validity: Extrapolation from short tasks and limited horizons to superhuman systems is speculative; run progressively longer, semi-open-world evaluations to test whether variance continues to dominate over extended timescales.

- Data contamination and familiarity: If models have seen benchmark content, bias/variance may be reduced spuriously; audit contamination and analyze incoherence on verifiably out-of-distribution and adversarially constructed tasks.

- Human survey limitations: The intelligence–incoherence association is subjective and cross-group; replicate with larger, preregistered samples, clearer constructs, and objective behavioral measures.

- Robustness to objective choice in synthetic setting: The quadratic optimizer is convex and simple; test non-convex objectives, stochastic gradients, changing optima, and different optimizers to see whether variance dominance persists.

- Attribution of improved performance vs reduced incoherence: Inference scaling improved accuracy modestly and sometimes coherence, but mechanisms are unclear; instrument APIs or reproduce reasoning-budget training to link components to outcomes.

- Safety-relevance calibration: Show that higher measured incoherence predicts real accident rates in simulated control tasks with irreversible actions to validate metric safety relevance.

- Cross-modal and retrieval effects: Evaluate how retrieval-augmented generation, tool APIs, and multimodal perception (vision, audio) modulate variance, especially when observations are noisy or partial.

- Reporting and statistical rigor: Provide confidence intervals for incoherence estimates per bucket, power analyses for sample sizes (≥30 samples), and corrections for multiple comparisons across many plots and tasks.

- Benchmarks for incoherence: The field lacks standardized tasks designed to separate bias (consistent misalignment) from variance (hot-mess failures); propose and release benchmarks with controllable horizon, reversibility, and reward specification quality.
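Two of the items above (instability of the ratio in low-error regimes, and confidence intervals per bucket) can be made concrete with a percentile bootstrap. This is a minimal sketch under stated assumptions: a squared-error decomposition, resampling over runs of a single question, and simulated data. The names `incoherence` and `bootstrap_ci` and the `eps` cutoff are hypothetical choices for the example.

```python
import numpy as np

def incoherence(preds, target, eps=1e-12):
    """variance / (bias^2 + variance) under squared error; NaN when total error ~ 0."""
    preds = np.asarray(preds, dtype=float)
    target = np.asarray(target, dtype=float)
    mean_pred = preds.mean(axis=0)
    bias_sq = np.sum((mean_pred - target) ** 2)
    variance = np.mean(np.sum((preds - mean_pred) ** 2, axis=1))
    total = bias_sq + variance
    # Near-zero total error makes the ratio unstable, so report NaN instead.
    return float(variance / total) if total > eps else float("nan")

def bootstrap_ci(preds, target, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval, resampling runs with replacement."""
    preds = np.asarray(preds, dtype=float)
    rng = np.random.default_rng(seed)
    n = len(preds)
    stats = np.array([
        incoherence(preds[rng.integers(0, n, size=n)], target)
        for _ in range(n_boot)
    ])
    stats = stats[~np.isnan(stats)]           # drop degenerate resamples
    if stats.size == 0:
        return float("nan"), float("nan")
    lo, hi = np.quantile(stats, [alpha / 2, 1 - alpha / 2])
    return float(lo), float(hi)

# Simulated data (not from the paper): 30 runs on one 4-option question.
rng = np.random.default_rng(1)
target = np.array([1.0, 0.0, 0.0, 0.0])
runs = rng.dirichlet([2.0, 1.0, 1.0, 1.0], size=30)
point = incoherence(runs, target)
lo, hi = bootstrap_ci(runs, target)
print(f"incoherence = {point:.3f}, 95% bootstrap CI = [{lo:.3f}, {hi:.3f}]")
```

Returning NaN for near-zero error is one possible convention; an alternative metric for that regime, as the list suggests, is still an open design question.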

Practical Applications

Immediate Applications

The paper introduces an inference-time bias–variance decomposition to quantify “incoherence” (variance share of total error) and shows that:

- Incoherence increases with longer chains of thought and action.

- Larger models become more coherent on easy tasks but more incoherent on hard, long-horizon tasks.

- Ensembling reduces variance roughly as 1/E, which lowers incoherence; larger “reasoning budgets” can help modestly.

These findings enable the following deployable workflows and tools.

Industry

- Reliability dashboards and gates for AI deployments

- Use case: Add incoherence as a first-class metric in pre-deployment and ongoing evaluation of LLMs and agents (software, customer support, search, content moderation).

- How: Compute KL- or Brier-based bias/variance per task using 30+ resamples (different seeds, few-shots). For open-ended outputs, track embedding variance; for code agents, decompose unit-test coverage vectors.

- Tools/products: “Incoherence Monitor” (service that records per-prompt incoherence), extensions to existing eval harnesses (e.g., OpenAI/Anthropic Evals, lm-eval-harness), pipelines integrated with InspectAI.

- Assumptions/dependencies: Access to multiple samples per prompt; definable targets or suitable proxies (e.g., tests, embeddings); added compute.

- Agentic workflow design to curb variance accumulation

- Use case: Reduce unpredictable failures in production agents (software dev tools, RPA, support, devops).

- How:

  - Impose action and reasoning budgets; shorten task horizons; chunk tasks; favor reversible actions.

  - Add “natural overthinking” detectors (triggered by long reasoning or high entropy) that switch to concise mode or escalate to humans.

  - Default to low temperature/deterministic decoding for high-stakes actions.

- Tools/products: “Trajectory Budgeter” (dynamic step cap), “Hot‑Mess Guardrail” (monitors chain length/entropy; triggers break/verify/handoff).

- Assumptions/dependencies: Task decomposition possible; clear detection signals for long chains; willingness to trade some performance for predictability.

- Ensembling and verify-before-commit patterns where state can be reset

- Use case: Improve answer stability for offline or sandboxed tasks (QA, math, retrieval, code synthesis prior to deployment).

- How: Self-consistency (sample k solutions and majority vote); multi-patch code proposals with unit tests; verifier-critic loops.

- Tools/products: “Ensemble Router” (decides when to run k-sample aggregation), “Variance-Aware Code Agent” (generates candidate patches and selects via tests).

- Assumptions/dependencies: Resettable environment or sandbox; test or verifier availability; extra compute cost; impractical for irreversible real-world actions.

- Dynamic routing by predicted incoherence

- Use case: Reliability-aware orchestration (contact centers, search ranking, document QA).

- How: Train a small meta-model to predict incoherence from early tokens (e.g., reasoning length so far, token entropy, self-contradictions) and route to:

  - Shorter-horizon solvers

  - Specialist tools

  - Human review

- Tools/products: “Incoherence Predictor” microservice within agent frameworks.

- Assumptions/dependencies: Historical traces to train predictors; telemetry access.

- Sector-specific patterns

- Healthcare: Require second opinions (ensembles) for clinical suggestions; present incoherence meter to clinicians; cap reasoning chains; mandate human sign-off for irreversible recommendations.

- Finance: Apply low-temperature, short-horizon policies for trading/credit decisions; backtest across seeds and report incoherence; gate deployment when variance spikes.

- Robotics/Industrial automation: Limit autonomous horizon; perform offline ensemble planning in digital twins; enter safe-stop when predicted incoherence is high.

- Software: For code assistants/agents, select among multiple candidate patches using tests; measure coverage-variance before merging; enforce change-size caps.
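The self-consistency pattern mentioned above (sample k solutions and take a majority vote) can be sketched as follows, combined with an escalation rule for low agreement. The function names and the `min_agreement` threshold are hypothetical; a real deployment would need to tune the threshold per task.

```python
from collections import Counter

def majority_vote(answers):
    """Return (winner, agreement) for a list of sampled final answers."""
    if not answers:
        raise ValueError("need at least one sampled answer")
    counts = Counter(answers)
    winner, votes = counts.most_common(1)[0]
    return winner, votes / len(answers)

def answer_or_escalate(answers, min_agreement=0.6):
    """Accept the majority answer only if enough samples agree.

    Returns (None, agreement) when agreement is too low, signalling that the
    question should go to a human or a verifier instead.
    """
    winner, agreement = majority_vote(answers)
    if agreement < min_agreement:
        return None, agreement
    return winner, agreement

samples = ["42", "42", "41", "42", "17"]   # k = 5 hypothetical sampled answers
print(answer_or_escalate(samples))
```

The agreement fraction doubles as a cheap variance signal: low agreement across samples is exactly the situation in which a single sampled answer is least trustworthy. As noted above, this only works where the environment can be reset between samples.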

Academia

- Standardize incoherence reporting alongside accuracy

- Use case: Benchmarking on MMLU/GPQA/coding/agents reports accuracy plus KL- or Brier-incoherence.

- How: Provide resampling protocols (≥30 samples), publish bias/variance breakdowns stratified by reasoning length and task difficulty.

- Tools/products: Open-source “Variance Audit Kit” with the paper’s decompositions, wrappers for common eval suites.

- Assumptions/dependencies: Community adoption; shared compute.

- Mechanism discovery for variance accumulation

- Use case: Study how long-horizon chains induce incoherence; replicate synthetic optimizer testbed.

- How: Analyze early-token signals for later incoherence; compare training regimes (backtracking, verifiers, debate) to quantify variance reductions.

- Tools/products: Public datasets of long-chain traces with variance labels; synthetic optimizer curricula.
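For reporting KL-based incoherence on multiple-choice benchmarks, one standard form of the KL bias-variance decomposition uses the normalized geometric mean of the sampled predictions as the "average" prediction. The sketch below follows that standard form and is assumed, not confirmed, to correspond to the paper's KL-BIAS and KL-VARIANCE; the function names are hypothetical.

```python
import numpy as np

def kl(p, q, eps=1e-12):
    """KL(p || q) for discrete distributions; terms with p == 0 contribute 0."""
    p = np.asarray(p, dtype=float)
    q = np.clip(np.asarray(q, dtype=float), eps, None)
    mask = p > 0
    return float(np.sum(p[mask] * (np.log(p[mask]) - np.log(q[mask]))))

def kl_decomposition(preds, target, eps=1e-12):
    """preds: (n_runs, n_classes) probability vectors; target: (n_classes,)."""
    preds = np.clip(np.asarray(preds, dtype=float), eps, None)
    preds = preds / preds.sum(axis=1, keepdims=True)
    # "Average" prediction under KL: normalized geometric mean across runs.
    log_mean = np.log(preds).mean(axis=0)
    centroid = np.exp(log_mean - log_mean.max())
    centroid /= centroid.sum()
    kl_bias = kl(target, centroid)                               # target vs average prediction
    kl_variance = float(np.mean([kl(centroid, p) for p in preds]))  # average vs each run
    total = kl_bias + kl_variance                                # equals mean KL(target || run)
    kl_incoherence = kl_variance / total if total > 0 else float("nan")
    return kl_bias, kl_variance, kl_incoherence

# Simulated data (not from the paper): 30 resamples on one 4-option question.
rng = np.random.default_rng(0)
target = np.array([1.0, 0.0, 0.0, 0.0])
runs = rng.dirichlet([2.0, 2.0, 2.0, 2.0], size=30)
print(kl_decomposition(runs, target))
```

With this choice of average, the bias and variance terms sum exactly to the expected KL error, which is what makes the variance share a well-defined fraction.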

Policy and Governance

- Safety reporting and procurement requirements

- Use case: Require vendors to disclose incoherence for representative tasks in regulated domains.

- How: Add “variance stress tests” (long-horizon scenarios) to audits; define thresholds for human oversight and irreversible actions.

- Assumptions/dependencies: Agreement on protocols and minimum sample sizes; sector-specific thresholds.

- Incident taxonomy and monitoring

- Use case: Classify AI incidents as variance-dominated “hot mess” vs bias-dominated misalignment; prioritize mitigations accordingly.

- How: Collect resampled replays (when feasible), report variance share, and track drift over time.

Daily Life and Team Practices

- Practical prompt/use habits to reduce unpredictability

- Use case: Individuals relying on LLMs for writing, math, planning, and coding.

- How: Keep prompts focused; limit long multi-turn chains; resample once or twice when outputs look erratic; request multiple concise alternatives and pick; lower temperature for critical tasks; periodically “reset” conversation with summaries.

- Assumptions/dependencies: User awareness; UI affordances to make sampling/evidence visible.

- Developer hygiene with AI coding tools

- Use case: Teams using AI code assistants/agents.

- How: Run unit tests by default; prefer smaller diffs; use multiple candidate suggestions and test-based selection; sandbox agent actions; add gates for large action sequences.

Long-Term Applications

The results imply that scale alone won’t eliminate incoherence on hard, long-horizon tasks; durable solutions will require training-time, architecture, and governance innovations.

Industry

- Incoherence-aware orchestration at scale

- Use case: Enterprise platforms that automatically route tasks by predicted variance risk and decompose hard tasks into shorter, verifiable subchains.

- How: Build planners that forecast variance growth and restructure workflows; adopt multi-agent patterns with verifier roles and reversible commitments.

- Products: “Variance-Aware Orchestrator” for agent platforms; “Task Decomposer” that minimizes expected variance.

- Training for robust long-horizon coherence

- Use case: Models that maintain stable behavior across long trajectories.

- How:

  - Consistency regularization across resamples/rollouts

  - Trajectory-level RL that penalizes outcome variance

  - Distillation of ensemble behavior into single models

  - Backtracking/reflection curricula that internalize error correction

- Assumptions/dependencies: New objectives and scalable data; careful trade-offs with creativity/diversity.

- Sector-specific systems

- Healthcare: Clinical AI that aggregates multiple internal “second opinions” with verifiable subproofs; UI exposing confidence/incoherence; mandated oversight workflows.

- Finance: Trading/credit agents with built-in variance limits and automatic human escalation; regulators consume incoherence dashboards.

- Robotics/Energy/Manufacturing: Supervisory control architectures that enforce reversibility, require independent plan checks for long sequences, and simulate ensembles in digital twins before real execution.

Academia

- Theory and interpretability of incoherence

- Use case: Formalize LLMs as dynamical systems; characterize when variance dominates; identify mechanistic correlates (e.g., representation drift, entropy growth).

- How: Analytical models, provable bounds on variance accumulation, and metrics for open-ended tasks beyond embedding variance.

- Benchmarks and evals for long-horizon agents

- Use case: Public leaderboards that score both performance and incoherence under varying horizons and reasoning budgets.

- How: Create datasets with reversible/irreversible actions, stateful tasks with ground-truth verifiers, and standardized resampling protocols.

- Safer objective specification (reward misspecification)

- Use case: Reduce BIAS_SPEC (goal misspecification) so that as capability grows, residual risk does not shift from goal errors to variance-only failures.

- How: Reward auditing, preference elicitation with uncertainty, and specification tests that separately quantify mesa vs specification bias.

Policy and Governance

- Certification regimes for “variance hazards”

- Use case: Safety cases that quantify variance risk under stress tests, similar to functional safety standards.

- How: Require “variance hazard levels” for critical deployments; mandate reversible design for high-variance contexts; minimum ensemble/verification practices where feasible.

- Deployment constraints for irreversible actions

- Use case: Limit autonomy horizon and require human-in-the-loop beyond threshold incoherence scores.

- How: Legally defined caps on step length or chain-of-action for classes of systems (e.g., clinical decision support, industrial robots).

Daily Life and Product UX

- Consumer assistants with built-in self-consistency and transparency

- Use case: Writing/education tools that synthesize and present multiple candidate answers with a clear “incoherence meter.”

- How: UX that shows consensus level, flags long-chain degradation, and offers auto-restart/decomposition suggestions.

- Educational assessment and tutoring

- Use case: Tutors that avoid long, meandering chains; present short, verifiable steps; show agreement among multiple reasoning paths.

Cross-Cutting Assumptions and Dependencies

- Measurement prerequisites

- Reliable estimation requires multiple independent samples per prompt and task; targets or verifiers must exist (unit tests, MCQ answers, formal checks). Embedding variance is a proxy for open-ended tasks.

- In high-stakes, real-world action loops, ensembling is often impractical because state cannot be reset.

- Generalization caveats

- Results were demonstrated on benchmarks and synthetic settings; domain shift can alter variance patterns.

- Temperature, sampling policy, and prompt design all affect variance; thresholds must be tuned per system and sector.

- Trade-offs

- Ensembling and verification increase latency and cost.

- Strong variance suppression may reduce creativity/diversity; policy should differentiate between exploratory vs high-stakes contexts.

- Governance and adoption

- Standards bodies need to specify sample sizes, metrics, and reporting formats.

- Vendors must expose probabilities and traces (or suitable summaries) to enable incoherence estimation while respecting privacy/security.

Glossary

- Agentic Coding: A task setting where autonomous AI agents use tools to write or fix code, evaluated via unit tests. "Agentic Coding. This focuses on SWE-BENCH (Jimenez et al., 2024), where agents solve GitHub issues using tools, and success is measured with unit tests."

- Autoregressive transformers: Transformer models that generate sequences by predicting each token conditioned on previous tokens. "we train our own autoregressive transformers on a synthetic optimization task."

- Bias-variance decomposition: A framework that decomposes expected error into squared bias, variance, and (optionally) irreducible noise. "the bias-variance decomposition expresses the expected error of a predictor as the sum of three terms: BIAS², VARIANCE, and irreducible noise"

- Bias-variance tradeoff: The notion that reducing bias often increases variance and vice versa in modeling. "In discussions of the bias-variance tradeoff, the setup typically assumes a deterministic model"

- Bregman Divergences: A class of divergence functions that generalize many losses (including KL), supporting bias-variance decompositions. "This is an instance of the general decomposition for Bregman Divergences (Pfau, 2013)."

- Brier score: A proper scoring rule assessing the accuracy of probabilistic predictions in classification. "Several such decompositions exist, including the 0/1 error (Kong & Dietterich, 1995; Breiman, 1996; Kohavi & Wolpert, 1996; Tibshirani, 1996; Friedman, 1997; Domingos, 2000), Brier score (Degroot & Fienberg, 2018), and cross-entropy error (Heskes, 1998)."

- Coverage error: A metric for code tasks measuring how far results are from passing all unit tests. "The coverage error then computes the mean squared difference to a vector of all 1's, which we decompose into bias and variance contributions."

- Cross-entropy error: A loss measuring the difference between predicted and target probability distributions. "the expected cross-entropy error can be decomposed into (Yang et al., 2020):"

- Decoding-based regression: Training that regresses values by token-level next-token prediction on serialized numeric outputs. "using decoding-based regression (Song & Bahri, 2025)"

- Ensembling: Combining multiple model outputs to reduce variance and improve reliability. "We now study the effect of reasoning budgets, i.e., the techniques provided in model APIs, and ensembling, i.e., averaging multiple responses, on incoherence."

- Few-shot context: Prompting with a small set of examples to condition model behavior at inference. "For GPQA and MMLU, samples additionally use a different random few-shot context."

- GPQA: A graduate-level scientific reasoning benchmark used to evaluate model performance. "We use the popular scientific reasoning benchmark GPQA (Rein et al., 2024)"

- Ill-conditioned quadratic loss: An optimization objective with a large condition number that makes convergence difficult. "emulate an optimizer descending an ill-conditioned quadratic loss."

- Incoherence: The fraction of a model’s error attributable to variance rather than bias. "Incoherence. Throughout this paper, our main metric of interest is the proportion of the variance to the total error, which we define as INCOHERENCE."

- Inference compute: Computation used during inference (e.g., longer reasoning) as a scaling axis for performance. "the most promising recent development uses inference compute as an axis of scale."

- KL-BIAS: The systematic error term in the KL-based bias-variance decomposition. "We denote this decomposition as KL-BIAS and KL-VARIANCE."

- KL-INCOHERENCE: Incoherence measured using the KL-based bias-variance decomposition. "For multiple choice questions, our main metric of interest is the KL-INCOHERENCE, i.e., the incoherence with respect to KL-BIAS and KL-VARIANCE (Equations 1 and 2)."

- KL-VARIANCE: The inconsistency term in the KL-based bias-variance decomposition. "We denote this decomposition as KL-BIAS and KL-VARIANCE."

- Kullback-Leibler (KL) decomposition: A bias-variance decomposition formulated in terms of KL divergence. "We present a Kullback-Leibler (KL) decomposition in the main text."

- Kullback-Leibler divergence: A measure of divergence between probability distributions. "D_KL is the Kullback-Leibler divergence"

- Mesa-optimizers: Learned subsystems that internally optimize objectives, potentially misaligned with the outer training objective. "mesa-optimizers (Hubinger et al., 2019)"

- MMLU: A broad general-knowledge benchmark spanning many subjects. "general knowledge benchmark MMLU (Hendrycks et al., 2021)."

- Model-Written Evals (MWE): Safety/alignment evaluations composed of questions written by models. "Model-Written Evals (MWE; Perez et al., 2023)"

- Next-token prediction: The standard language modeling objective of predicting the next token given prior tokens. "i.e., next-token prediction."

- Power-law scaling: Performance or loss improvement that follows a power-law as model size, data, or compute grow. "Model performance generally follows predictable power-law scaling with respect to model size N, dataset size D, and compute C"

- Reasoning budgets: API-controlled settings that allocate longer chains of thought or actions at inference time. "Reasoning budgets reduce incoherence, but natural variation has a much stronger effect."

- Scaling laws: Empirical relationships describing how performance scales with parameters, data, and compute. "In Section 3.2 we will compute scaling laws independently for bias and variance loss contributions, to judge which asymptotically dominates."

- Steepest descent: An optimization method that moves along the negative gradient direction with fixed step size or norm. "performs steepest descent with a fixed step norm."

- Teacher forcing: A training technique that feeds ground-truth previous tokens to condition next-token predictions. "and teacher forcing."

- Variance dominated: A regime where variance contributes more to error than bias, indicating inconsistent failures. "the final loss is also more variance dominated and thus incoherent with increasing model size."
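The coverage error entry above can be illustrated with a small example. This sketch assumes each run of a coding agent yields a 0/1 vector of unit-test outcomes and that errors are averaged over tests; that normalization, the function name, and the data are assumptions for the example rather than details taken from the paper.

```python
import numpy as np

def coverage_decomposition(test_results):
    """test_results: (n_runs, n_tests) array, 1 = test passed, 0 = test failed.

    Returns (coverage_error, bias_sq, variance), where the error is the mean
    squared difference to a vector of all 1's (every test passing).
    """
    r = np.asarray(test_results, dtype=float)
    ideal = np.ones(r.shape[1])                  # every unit test passing
    mean_r = r.mean(axis=0)                      # per-test pass rate across runs
    bias_sq = float(np.mean((mean_r - ideal) ** 2))   # tests that fail consistently
    variance = float(np.mean((r - mean_r) ** 2))      # tests that flip between runs
    error = float(np.mean((r - ideal) ** 2))
    return error, bias_sq, variance

# Hypothetical agent: 4 runs on one issue with 3 unit tests.
runs = [[1, 1, 0],
        [1, 0, 0],
        [1, 1, 0],
        [1, 0, 0]]
error, bias_sq, variance = coverage_decomposition(runs)
print(error, bias_sq, variance, variance / error)
```

Here the third test fails in every run (pure bias) while the second flips between runs (pure variance), giving a coverage error of 0.5 split into roughly 0.417 bias and 0.083 variance, so incoherence is 1/6.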
