Learning Rate Matters: Vanilla LoRA May Suffice for LLM Fine-tuning

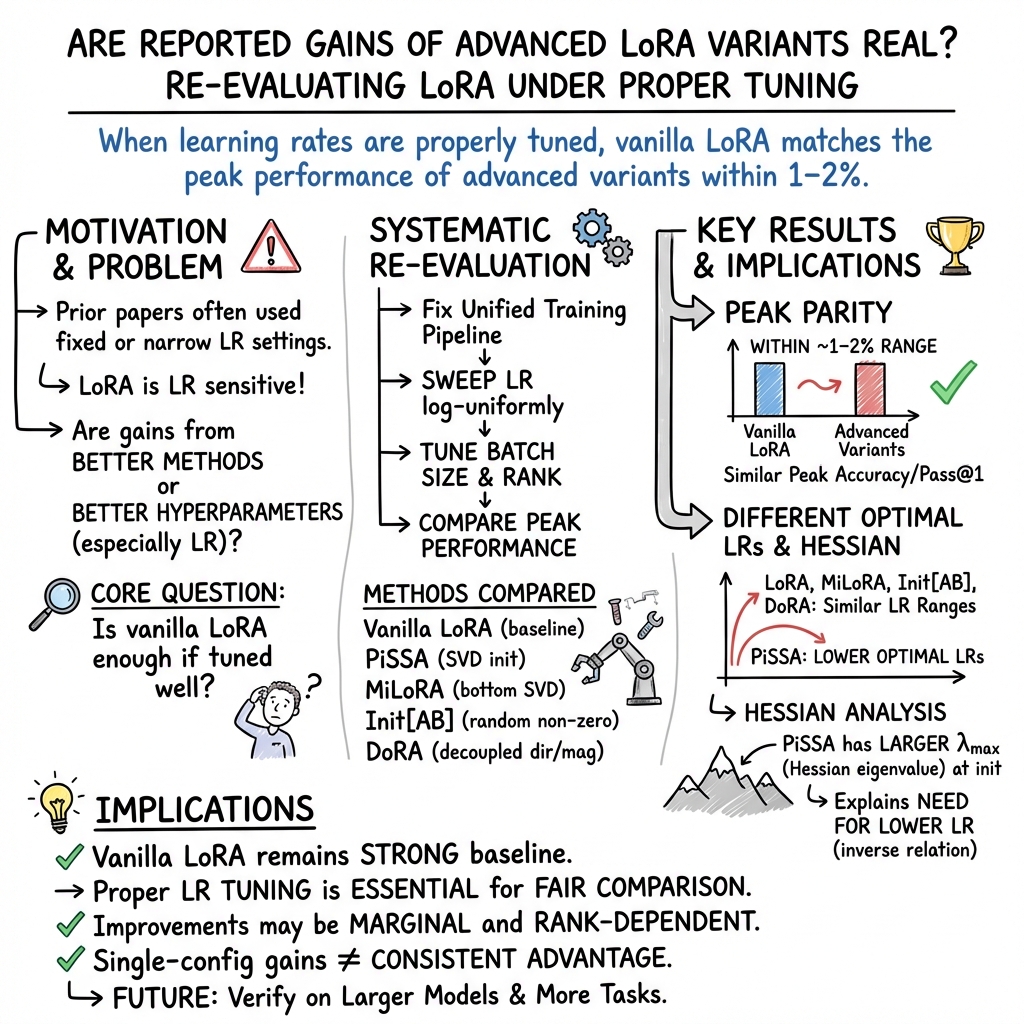

Abstract: Low-Rank Adaptation (LoRA) is the prevailing approach for efficient LLM fine-tuning. Building on this paradigm, recent studies have proposed alternative initialization strategies and architectural modifications, reporting substantial improvements over vanilla LoRA. However, these gains are often demonstrated under fixed or narrowly tuned hyperparameter settings, despite the known sensitivity of neural networks to training configurations. In this work, we systematically re-evaluate four representative LoRA variants alongside vanilla LoRA through extensive hyperparameter searches. Across mathematical and code generation tasks on diverse model scales, we find that different LoRA methods favor distinct learning rate ranges. Crucially, once learning rates are properly tuned, all methods achieve similar peak performance (within 1-2%), with only subtle rank-dependent behaviors. These results suggest that vanilla LoRA remains a competitive baseline and that improvements reported under a single training configuration may not reflect consistent methodological advantages. Finally, a second-order analysis attributes the differing optimal learning rate ranges to variations in the largest Hessian eigenvalue, aligning with classical learning theories.


Explain it Like I'm 14

Overview

This paper looks at a popular way to “teach” big LLMs new skills without changing all their parts, called LoRA (Low-Rank Adaptation). Many new versions of LoRA claim to be better. The authors ask a simple question: are these newer methods really better, or do they just use a better learning rate (the “speed” of training)? Their main message is that if you carefully pick the learning rate, plain, original LoRA can work just as well as the fancy versions.

Key questions the paper asks

- When we fine-tune LLMs, do newer LoRA variants truly beat vanilla (original) LoRA?

- Or are the reported gains mostly because they used a better training setup—especially the learning rate?

- Do different LoRA methods need different learning rates to do their best?

- Can we explain why some methods prefer smaller or larger learning rates?

How did they test it?

To make a fair comparison, the authors ran many controlled experiments:

- Models: They fine-tuned three well-known LLMs of different sizes: Qwen3-0.6B, Gemma-3-1B, and Llama-2-7B.

- Tasks: They trained the models to solve math problems and write code, then tested on standard benchmarks (like GSM8K and HumanEval).

- Methods compared:

- Vanilla LoRA (the original method)

- PiSSA and MiLoRA (two smarter ways to start LoRA’s extra weights)

- Init[AB] (randomly initializes both of LoRA's extra matrices instead of only one)

- DoRA (a small architecture change that separates the “direction” and “magnitude” of updates)

- Training setup: They tried a wide range of learning rates (from very small to quite large), plus several batch sizes (how many examples the model sees at once) and ranks (how big the LoRA adapters are).

- Explaining the “why”: They analyzed something called the Hessian’s largest eigenvalue. In everyday terms, this measures how “steep” or “curvy” the training landscape is near the starting point. Steeper areas usually need smaller steps (smaller learning rates) to avoid tripping.

Simple analogy: Imagine learning is like hiking down a mountain to reach the lowest point (best performance). The learning rate is your step size. If the slope is gentle, big steps are fine. If it’s steep, big steps can make you fall, so you need smaller steps. Different LoRA methods start you on hills with different steepness.
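To make the hiking picture concrete, here is a tiny Python sketch (an illustration, not code from the paper) of plain gradient descent on a one-dimensional bowl f(x) = ½·c·x². The curvature c plays the role of the largest Hessian eigenvalue. Each step multiplies x by (1 − η·c), so the walk settles only when the step size η is below 2/c. The curvature values and step sizes are made-up numbers.

```python
def gd_on_quadratic(curvature: float, lr: float, steps: int = 50, x0: float = 1.0) -> float:
    """Run plain gradient descent on f(x) = 0.5 * curvature * x**2; return |x| after `steps` steps."""
    x = x0
    for _ in range(steps):
        grad = curvature * x  # f'(x) for the quadratic bowl
        x = x - lr * grad     # equivalent to x <- (1 - lr * curvature) * x
    return abs(x)


if __name__ == "__main__":
    lr = 0.3
    for name, curvature in [("gentle", 1.0), ("steep", 10.0)]:
        final = gd_on_quadratic(curvature, lr)
        outcome = "settles" if final < 1.0 else "blows up"
        print(f"{name} bowl (c={curvature}): step {lr} {outcome}; threshold 2/c = {2 / curvature:.2f}")
```

With η = 0.3, the gentle bowl (c = 1) is easy to descend. The steep bowl (c = 10) has a threshold of 2/10 = 0.2, so the same step size overshoots and blows up. This is the same reasoning behind the paper's explanation of why PiSSA prefers smaller learning rates: it starts with a larger top Hessian eigenvalue.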

What did they find?

- Tuning the learning rate matters a lot. When they picked the best learning rate for each method, all methods reached nearly the same top performance—usually within 1–2% of each other.

- Different methods prefer different learning rate ranges:

- PiSSA tends to need a smaller learning rate to hit its best performance, but interestingly it also stays stable at some higher learning rates where other methods crash.

- The others (MiLoRA, Init[AB], DoRA) usually prefer learning rate ranges similar to vanilla LoRA's.

- Rank-dependent behavior:

- DoRA and MiLoRA often look better when the rank (adapter size) is small, but the advantage can shrink or even reverse as rank gets bigger.

- PiSSA sometimes trails at very low ranks but slightly pulls ahead at higher ranks.

- Init[AB] can be a bit better at medium ranks but not consistently at low or high ranks.

- Learning rate is more critical than batch size. Changing the batch size helped less than picking the right learning rate. When batch size increased, the best learning rate also needed to increase (a known rule of thumb).

- Why the learning rate preferences differ: PiSSA showed a much larger “steepness” (largest Hessian eigenvalue) at the start. That explains why it needs smaller steps (lower learning rates) to do best—matching classic training theory.
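The summary says the best learning rate rose with batch size, but it does not say by how much. A common starting heuristic is to rescale a tuned learning rate either linearly or by the square root of the batch-size ratio, then re-run a small sweep around that value. The sketch below shows both options. Treat it as an assumption, not as the paper's rule. The function name and the example numbers are hypothetical.

```python
import math


def scale_learning_rate(base_lr: float, base_batch: int, new_batch: int, rule: str = "linear") -> float:
    """Rescale a tuned learning rate when the batch size changes (heuristic starting point only).

    rule="linear": lr grows in proportion to the batch-size ratio.
    rule="sqrt":   lr grows with the square root of the batch-size ratio.
    """
    if base_batch <= 0 or new_batch <= 0:
        raise ValueError("batch sizes must be positive")
    ratio = new_batch / base_batch
    if rule == "linear":
        return base_lr * ratio
    if rule == "sqrt":
        return base_lr * math.sqrt(ratio)
    raise ValueError(f"unknown rule: {rule!r}")


if __name__ == "__main__":
    # Hypothetical: a learning rate of 2e-4 was tuned at batch size 16; we now train at 64.
    print(scale_learning_rate(2e-4, 16, 64), scale_learning_rate(2e-4, 16, 64, rule="sqrt"))
```

Moving from batch size 16 to 64 multiplies the learning rate by 4 under the linear rule and by 2 under the square-root rule. Either result is only the center of a new sweep, not a final value.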

Why this is important

- Fair testing matters: Many past papers reported improvements without carefully tuning key settings like learning rate for each method. This work shows those gains may come from nicer training settings, not fundamentally better methods.

- Practical advice for users:

- Start with vanilla LoRA—it’s simple and strong.

- Spend your time tuning the learning rate first. It gives the biggest boost.

- Pick rank based on your compute budget. If you must use low rank, DoRA or MiLoRA can be slightly better; if you can afford higher rank, vanilla LoRA is often just as good.

- If your method struggles, try adjusting the learning rate range rather than switching methods.

- Research impact: Future papers should compare methods with thorough hyperparameter tuning, especially learning rate, to show true improvements. The Hessian-based analysis gives a reasoned way to predict which learning rates make sense for different LoRA variants.
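Picking a rank to fit a compute budget, and weighing DoRA's extra magnitude vector, both come down to simple counting. The sketch assumes one adapted weight of shape d × k, with B ∈ ℝ^{d×r} and A ∈ ℝ^{r×k}, and that DoRA adds one magnitude entry per column. The 4096 × 4096 size is a hypothetical example, not a model from the paper.

```python
def lora_trainable_params(d: int, k: int, r: int) -> int:
    """Trainable parameters of one LoRA adapter on a d x k weight: B is d x r, A is r x k."""
    return r * (d + k)


def dora_trainable_params(d: int, k: int, r: int) -> int:
    """DoRA keeps the LoRA factors and adds a magnitude vector with one entry per column."""
    return lora_trainable_params(d, k, r) + k


if __name__ == "__main__":
    d = k = 4096  # hypothetical square projection matrix
    full = d * k
    for r in (8, 64, 256):
        lora = lora_trainable_params(d, k, r)
        dora = dora_trainable_params(d, k, r)
        print(f"r={r:>3}: LoRA {lora:,} ({lora / full:.2%} of full), DoRA {dora:,}")
```

With d = k = 4096 and r = 8, LoRA trains 65,536 values for this matrix, compared with 16,777,216 in the full matrix. DoRA's extra 4,096 magnitude entries add about 6% on top of that. At r = 256, the same 4,096 entries are a much smaller share.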

In short: The speed of training (learning rate) can make a bigger difference than the choice of fancy LoRA variant. With well-chosen learning rates, the original LoRA is usually enough.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper.

- Scalability beyond 7B decoder-only LLMs: It remains unknown whether the learning-rate parity across LoRA variants holds for larger models (e.g., 13B–70B+), multi-modal LLMs (e.g., Flamingo-like), and encoder–decoder architectures.

- Task generalization: The study focuses on math and code; it does not test common NLP tasks (summarization, QA, translation), dialog, retrieval-augmented generation, or safety/alignment settings (e.g., DPO/PPO/RLHF), leaving generality unverified.

- Full fine-tuning comparisons: The paper reaffirms LoRA’s gap to Full FT but does not include tuned Full FT baselines; it is unclear if well-tuned vanilla LoRA can close the gap under matched compute and optimization.

- Quantized fine-tuning (QLoRA) interplay: The sensitivity of LoRA variants to learning rate under quantization (4–8 bit), and how quantization affects Hessian curvature and optimal learning rates, is unexplored.

- Broader variant coverage: Many LoRA-family methods (e.g., VeRA, BOFT, GraLoRA, RandLoRA, Hira, KronA, Aurora, Sulora, Mora) are not evaluated; whether the “vanilla LoRA suffices when LR is tuned” conclusion extends to them is unknown.

- Optimizer choice and hyperparameters: The analysis frames updates via SGD theory but does not assess optimizer differences (AdamW vs. SGD/momentum), weight decay, betas/momentum, gradient clipping, or warmup/schedulers; the joint impact on optimal LR and stability is unstudied.

- Learning rate schedules: Only a fixed scheduler is used; the effect of cosine, step, linear warmup, and adaptive schedules on peak performance, rank-dependent behavior, and divergence thresholds is not assessed.

- Scaling factor and dropout: The LoRA scaling factor is fixed (γ_r = 1) and dropout is fixed; the influence of scaling, dropout rate/location, and their interaction with optimal LR across variants and ranks remains uncharacterized.

- Adapter placement: Adapters are placed in fixed locations; how layer-wise placement (Q/K/V/O/FFN) and selective insertion alter Hessian sharpness, LR ranges, and performance is not investigated.

- Rank search boundaries and granularity: Ranks are limited to 4–256 with uniform global ranks; extremely low (r≤2) or high (r≥512) ranks, and heterogeneous per-layer rank allocation, are not explored.

- Theoretical explanation of rank effects: The observed rank-dependent performance reversals (e.g., MiLoRA better at low r, PiSSA improving at high r) lack a formal analysis linking rank to Hessian spectra and method initialization.

- Hessian analysis coverage: Sharpness is estimated block-wise at initialization (primarily reported for Query projections); global cross-layer curvature, other matrices (K/V/O/FFN) in the main text, and how Hessian eigenvalues evolve during training are not analyzed.

- DoRA curvature dynamics: DoRA’s different architecture may alter Hessian evolution, but its Hessian analysis is deferred; understanding how magnitude/direction decoupling impacts sharpness and learning-rate needs is an open problem.

- Predictive LR selection: While larger top eigenvalues correlate with lower optimal LRs, the paper does not provide a procedure to estimate λ_max efficiently and select LR per method/layer, nor validate predictive accuracy across tasks/models.

- Batch size nuances: The batch-size “scaling rule” is observed but not systematically studied; effects of small vs. large batches under joint optimizer tuning and gradient-noise-scale analysis remain open.

- Stability and divergence characterization: Beyond noting divergence at high LRs for some methods, the paper does not quantify stability regions, gradient norm dynamics, or “catapult regime” presence across variants.

- Catastrophic forgetting and retention: Claims about MiLoRA’s retention are not tested here; multi-task fine-tuning and retention of base-model capabilities (e.g., general instruction-following) after task-specific tuning are unexamined.

- Data dependence and sample efficiency: Sensitivity to training data quality/size, broader datasets (beyond MetaMathQA/CodeFeedback), and pass@k metrics for code (vs. only pass@1) is limited; statistical significance tests across more seeds are absent.

- Cost–efficiency trade-offs: Compute/memory overheads, throughput, wall-clock time, and the parameter overhead of variants (e.g., DoRA’s magnitude vector at low ranks) are not quantified against realized performance gains.

- Per-layer/adaptive LRs: Only a single global LR is tuned; whether per-layer or curvature-aware LRs (e.g., via layer-wise Hessian or preconditioning) yield consistent improvements across variants is unexplored.

- Deployment and inference considerations: Post-merge latency and serving behavior across methods, especially under different adapter placements or ranks, remain unstudied.

- Cross-lingual robustness: Performance and LR sensitivity on non-English or multilingual datasets are not evaluated.

Glossary

- Adapter: A small trainable module inserted into a model to enable task-specific updates without modifying all parameters. "these learned low-rank adapters can be merged into the original backbone, thereby incurring no additional inference latency."

- Adapter placement: The choice of which layers or components of a model receive adapters for fine-tuning. "Other configurations, such as epoch, adapter placement, and learning rate scheduler, remain fixed across all experiments"

- Adapter rank: The dimensionality r of the low-rank update in LoRA that controls the capacity of the adapter. "varying adapter ranks for Gemma-3-1B"

- Broadcasting: Automatically expanding tensor dimensions during element-wise operations to match shapes. "while ⊙ denotes element-wise multiplication with broadcasting across columns."

- Catapult learning regime: A regime where learning rates above the classical stability threshold (2/λ_max) still yield good performance in modern architectures. "identified a "catapult" learning regime characterized by 2/λ_max < η* < 12/λ_max"

- Catastrophic forgetting: The tendency of a model to lose previously learned knowledge when fine-tuned on new tasks. "MiLoRA achieves superior downstream performance with less catastrophic forgetting."

- Column-wise norm: The vector of norms computed for each column of a matrix. "Note that ‖·‖_c denotes taking the column-wise norm of a matrix"

- DoRA: A LoRA variant that decouples direction and magnitude of weight updates by learning them separately. "DoRA has been shown to consistently outperform LoRA, especially in regimes where the rank is small."

- Full fine-tuning (Full FT): Updating all parameters of a pretrained model during adaptation to a new task. "making full-parameter fine-tuning (Full FT) prohibitively expensive in terms of memory and computation."

- Gauss-Newton method: An optimization approach related to second-order methods, informing relationships between curvature and learning rate. "originates from the Gauss-Newton method for convex optimization"

- Hessian: The matrix of second derivatives of the loss function with respect to model parameters, capturing curvature. "compute the Hessian matrix of the loss function"

- Hessian-vector Products: Efficient computations of the product between the Hessian and a vector without explicitly forming the Hessian. "Hessian-vector Products are utilized to estimate the top eigenvalue without explicitly forming H."

- Hyperparameter search: Systematic exploration of training settings (e.g., learning rate, batch size, rank) to find optimal performance. "conduct a large-scale hyperparameter search"

- Init[AB]: A LoRA initialization scheme where both low-rank matrices A and B are randomly initialized rather than only A. "Init[AB] initializes both matrices as B_0 ∼ N(0, σ²) and A_0 ∼ N(0, σ²)."

- Kaiming initialization: A weight initialization method designed for rectified linear units to maintain signal variance. "i.e., Kaiming initialization (He et al., 2015)"

- Lanczos algorithm: An iterative method for approximating extreme eigenvalues of large symmetric matrices. "The Lanczos algorithm (Lanczos, 1950) and Hessian-vector Products are utilized to estimate the top eigenvalue"

- Learning rate scheduler: A strategy to adjust the learning rate during training according to a predefined schedule. "Other configurations, such as epoch, adapter placement, and learning rate scheduler, remain fixed across all experiments"

- Lipschitz smoothness: A property describing bounded changes in gradients, affecting optimization stability and sensitivity. "Theories regarding LoRA's lack of Lipschitz smoothness"

- LoRA: Low-Rank Adaptation; a method that fine-tunes LLMs by injecting low-rank trainable matrices into selected layers. "Low-Rank Adaptation (LoRA) is the prevailing approach for efficient LLM fine-tuning."

- Magnitude vector: An additional trainable vector in DoRA that controls the scale of weight updates separately from direction. "while m […] is an additional trainable magnitude vector initialized with m_0 = ‖W_pre‖_c."

- Maximum Hessian eigenvalue: The largest eigenvalue of the Hessian, indicating the sharpest curvature direction of the loss landscape. "variations in the largest Hessian eigenvalue"

- MiLoRA: A LoRA initialization variant that uses bottom-r minor components to retain pretrained knowledge during adaptation. "MiLoRA initializes the low-rank adapters using bottom-r minor components"

- Parameter-efficient fine-tuning (PEFT): Techniques that adapt models to new tasks by training only a small subset of parameters. "developing parameter-efficient fine-tuning (PEFT) methods"

- pass@1: An evaluation metric for code generation measuring whether the first generated solution passes tests. "mean accuracy (equivalently, pass@1 for code generation)"

- PiSSA: A LoRA variant that initializes adapters using top-r principal components of the pretrained weights to speed convergence. "Meng et al. (2024a) proposed to initialize BA with the top-r principal components of the pretrained weight matrix"

- Principal components: Directions corresponding to the largest singular values that capture most variance in a matrix. "top-r principal components of the pretrained weight matrix"

- Query projection matrix: The parameter matrix in a Transformer’s attention mechanism that projects inputs into query vectors. "the Hessian for the Query projection matrix in the i-th layer"

- Residual matrix: The component of the pretrained weights that remains after subtracting the initialized low-rank adapter contribution. "the residual matrix is defined as"

- Scaling factor: A multiplier (often rank-dependent) applied to LoRA updates to control their effect during training. "serves as a rank-dependent scaling factor"

- SGD (Stochastic Gradient Descent): An optimization algorithm that updates parameters using gradients computed on minibatches. "the update rule of Stochastic Gradient Descent (SGD) at step t is:"

- Sharpness: The curvature magnitude of the loss landscape, often measured by the top Hessian eigenvalue. "commonly referred to as sharpness"

- Singular value decomposition (SVD): A matrix factorization that expresses a matrix in terms of singular values and orthogonal vectors. "leverage the singular value decomposition (SVD) of W_pre"

- Spurious loss landscape: A problematic optimization surface with misleading local minima or saddle points that hinder training. "and its spurious loss landscape (Liu et al., 2025)"

- Transformer layers: Stacked components of the Transformer architecture responsible for sequence modeling. "across Transformer layers"
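The glossary entries for vanilla LoRA, PiSSA, MiLoRA, Init[AB], and DoRA's magnitude vector can be compared side by side in a short NumPy sketch. It makes the following assumptions:

- W_pre has shape (d, k), B has shape (d, r), and A has shape (r, k).
- The scaling factor γ_r defaults to 1, as in the paper's setup.
- The frozen residual is W_pre − γ_r·B·A, so every method starts from the same function. Applying this to Init[AB] is an assumption.
- Vanilla LoRA's A uses a simplified Kaiming-style normal initialization, which is also an assumption.

The function names are illustrative, not any library's API.

```python
import numpy as np


def lora_init(w_pre, r, method="lora", sigma=0.02, gamma=1.0, seed=0):
    """Return (B, A, W_res) so that the adapted weight is W_res + gamma * B @ A.

    Shapes (assumed): w_pre is (d, k), B is (d, r), A is (r, k).
    """
    rng = np.random.default_rng(seed)
    d, k = w_pre.shape
    if method == "lora":
        # Vanilla LoRA: A random (simplified Kaiming-style normal), B zero, so BA = 0 at start.
        a = rng.normal(0.0, np.sqrt(2.0 / k), size=(r, k))
        b = np.zeros((d, r))
    elif method in ("pissa", "milora"):
        u, s, vt = np.linalg.svd(w_pre, full_matrices=False)
        # PiSSA uses the top-r singular triplets; MiLoRA uses the bottom-r (minor) ones.
        idx = slice(0, r) if method == "pissa" else slice(len(s) - r, len(s))
        root_s = np.sqrt(s[idx])
        b = u[:, idx] * root_s            # (d, r): column i scaled by sqrt(s_i)
        a = root_s[:, None] * vt[idx, :]  # (r, k): row i scaled by sqrt(s_i)
    elif method == "init_ab":
        # Init[AB]: both factors drawn from N(0, sigma^2).
        b = rng.normal(0.0, sigma, size=(d, r))
        a = rng.normal(0.0, sigma, size=(r, k))
    else:
        raise ValueError(f"unknown method: {method!r}")
    # Frozen residual chosen so the initial adapted weight equals W_pre exactly.
    w_res = w_pre - gamma * b @ a
    return b, a, w_res


def column_norm(w):
    """Column-wise L2 norm ||W||_c as a (1, k) row vector."""
    return np.linalg.norm(w, axis=0, keepdims=True)


def dora_weight(w_pre, b, a, m):
    """DoRA-style weight m * (W_pre + B A) / ||W_pre + B A||_c; column j is rescaled by m[0, j]."""
    v = w_pre + b @ a
    return m * v / column_norm(v)


if __name__ == "__main__":
    w_pre = np.random.default_rng(1).normal(size=(8, 6))
    for method in ("lora", "pissa", "milora", "init_ab"):
        b, a, w_res = lora_init(w_pre, r=2, method=method)
        same_start = np.allclose(w_res + b @ a, w_pre)
        print(f"{method:8s} starts at W_pre: {same_start}, ||W_res||_F = {np.linalg.norm(w_res):.3f}")
    # DoRA with vanilla-LoRA factors (B = 0) and m_0 = ||W_pre||_c also reproduces W_pre at step 0.
    b, a, _ = lora_init(w_pre, r=2, method="lora")
    print("DoRA starts at W_pre:", np.allclose(dora_weight(w_pre, b, a, column_norm(w_pre)), w_pre))
```

In this sketch the variants differ only in how the initial weight is split between the trainable B·A term and the frozen residual. For example, PiSSA moves the largest singular directions into the adapter, so its residual has a smaller norm than MiLoRA's.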

Practical Applications

Summary

This paper’s central finding is practical: with proper learning-rate (LR) tuning, vanilla LoRA achieves similar peak performance (within ~1–2%) to several advanced LoRA variants across math and code-generation tasks and multiple model scales. Different variants prefer different LR ranges (e.g., PiSSA typically needs a lower LR, explained by larger top Hessian eigenvalues), and small rank-dependent advantages exist but are modest. LR is more critical than batch size, and the optimal LR scales with batch size. These insights translate into concrete changes to fine‑tuning workflows, evaluation protocols, and tooling.

Below are actionable applications grouped by deployment horizon.

Immediate Applications

These can be deployed now using existing libraries (e.g., Hugging Face PEFT, DeepSpeed, Ray Tune/Optuna, Weights & Biases Sweeps) and common LLMs (up to ~7B parameters, decoder-only).

- Industry-wide “LR-first” PEFT playbook

- What: Adopt a standardized fine-tuning workflow that prioritizes LR sweeps (log-scale across 10⁻⁶–10⁻³ with several points per decade) before exploring other hyperparameters or switching LoRA variants. Scale LR with batch size.

- Sectors: Software (code assistants), Healthcare (clinical text tools), Finance (report/chat workflows), Education (tutoring agents).

- Tools/workflows:

- Automate LR grid/random/Bayesian sweeps with early stopping.

- Start with vanilla LoRA; only consider variants if strict rank/latency constraints persist after LR tuning.

- Track divergence and stability to bracket viable LR ranges quickly.

- Assumptions/dependencies:

- Findings validated on math/code tasks and decoder-only LLMs up to 7B; other tasks/models may require confirmation.

- Optimizer and schedule interactions (e.g., AdamW, cosine) can shift optimal LR slightly; still, LR remains the primary driver.

- Cost and complexity reduction via “vanilla-first”

- What: Default to vanilla LoRA for fine-tuning projects and internal baselines; avoid adopting advanced variants without LR-robust comparisons.

- Sectors: Startups, SMEs, enterprises with strict time-to-market or compliance constraints.

- Tools/workflows: Baseline templates in MLOps that instantiate vanilla LoRA + LR sweeps; report tuned baselines in model cards.

- Assumptions/dependencies: Some low-rank regimes or niche tasks may still benefit slightly from specific variants; verify under tuned LRs.

- MLOps integration for LR sweeps

- What: Build CI/CD pipelines that automatically launch LR sweeps for every fine-tuning job and scale LR with batch size.

- Sectors: Any organization operating ML platforms.

- Tools/workflows:

- Job templates with default LR ranges and per-variant brackets (e.g., include a lower-LR bracket for PiSSA).

- Early termination policies for unstable runs; caching and resume for failed sweeps.

- Assumptions/dependencies: Requires modest extra compute for sweeps; often offset by fewer subsequent iterations and fewer abandoned variant explorations.

- Fair benchmarking and internal governance

- What: Require tuned LR comparisons across methods before declaring improvements; maintain tuned vanilla LoRA as a reference.

- Sectors: Industry R&D, academia, government labs.

- Tools/workflows: Reproducibility checklists that include LR sweeps and rank sweeps; shared result dashboards.

- Assumptions/dependencies: Organizational buy-in to slower but fairer benchmarking; compute budget for sweeps.

- Rank-aware, LR-tuned method selection

- What: Choose variants conditionally on rank regime, always with LR tuning:

- Very low ranks (e.g., r ≤ 8–16): DoRA or MiLoRA may offer small gains.

- Higher ranks (e.g., r ≥ 128): PiSSA may slightly edge out vanilla LoRA.

- Sectors: Edge/embedded (robotics, mobile), latency-constrained serving.

- Tools/workflows: Rank–variant–LR lookup tables in internal playbooks.

- Assumptions/dependencies: Gains are small and not universal; verify under the target task/model and constraints.

- Batch-size/LR coupling guidelines

- What: As batch size increases, increase LR proportionally; reevaluate performance if batch size changes.

- Sectors: All.

- Tools/workflows: Auto-scaling LR with batch size in configuration; trigger retuning when batch size changes.

- Assumptions/dependencies: May interact with optimizer settings and warmup; test on a short schedule before committing.

- Lightweight sharpness-informed LR bracketing (optional)

- What: Use quick curvature proxies (e.g., gradient norm trends, small-batch Hessian-vector-product (HVP) probes on a subset of layers) to set initial LR brackets; anticipate lower LRs for PiSSA-like initializations.

- Sectors: Teams looking to reduce sweep size.

- Tools/workflows: Scripts that compute approximate top Hessian eigenvalues (block-wise) on a small validation set.

- Assumptions/dependencies: Exact Hessian estimation is expensive; use approximations sparingly. Benefit is bracketing, not precise LR.

- Practitioner-facing quick-start recipes

- What: Provide prebuilt configs per base model/task that:

- Start with vanilla LoRA and a broad LR sweep.

- Include variant-specific LR brackets (lower for PiSSA; similar ranges for LoRA/DoRA/Init[AB]/MiLoRA).

- Sectors: Open-source, education, individual developers.

- Tools/workflows: YAML configs and Hydra bundles; example Colab notebooks.

- Assumptions/dependencies: Must be maintained per model release; not a substitute for project-specific tuning.

- Procurement and compliance checklists

- What: For model selection/vendor evaluation, require evidence of LR tuning when reporting gains over tuned vanilla LoRA.

- Sectors: Healthcare/finance regulators, enterprise procurement.

- Tools/workflows: Documentation templates that log LR ranges, ranks, batch sizes, and stability.

- Assumptions/dependencies: Organizational policy updates and auditor training.
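The "LR-first" workflow above can be sketched as a log-spaced grid plus a sweep that records diverged runs. This is a minimal illustration under several assumptions:

- `train_eval` is a hypothetical callback. It fine-tunes with a given learning rate and returns a validation score, or `None`/NaN when training diverges.
- The default 10⁻⁶–10⁻³ range mirrors the playbook item.
- Stopping at the first divergence assumes that every larger learning rate will also diverge. This is a heuristic, not a guarantee.

```python
import math
from typing import Callable, Dict, List, Optional


def log_lr_grid(lo: float = 1e-6, hi: float = 1e-3, points_per_decade: int = 3) -> List[float]:
    """Log-spaced learning rates from lo to hi inclusive, `points_per_decade` steps per factor of 10."""
    n_steps = int(round(math.log10(hi / lo) * points_per_decade))
    return [lo * 10 ** (i / points_per_decade) for i in range(n_steps + 1)]


def lr_sweep(
    train_eval: Callable[[float], Optional[float]],
    lrs: List[float],
    stop_after_divergence: bool = True,
) -> Dict[str, object]:
    """Evaluate learning rates in ascending order and return the best one plus the diverged ones.

    `train_eval(lr)` is a hypothetical callback returning a score (higher is better),
    or None / NaN if the run diverged.
    """
    scores: Dict[float, float] = {}
    diverged: List[float] = []
    for lr in sorted(lrs):
        score = train_eval(lr)
        if score is None or math.isnan(score):
            diverged.append(lr)
            if stop_after_divergence:
                break  # heuristic: assume every larger lr also diverges
            continue
        scores[lr] = score
    if not scores:
        raise RuntimeError("every run diverged; lower the learning-rate bracket")
    best_lr = max(scores, key=scores.get)
    return {"best_lr": best_lr, "best_score": scores[best_lr], "diverged": diverged}


if __name__ == "__main__":
    # Toy stand-in for a real fine-tuning run: peaks near 1e-4 and diverges above 5e-4.
    def fake_train_eval(lr: float) -> Optional[float]:
        return None if lr > 5e-4 else -(math.log10(lr) + 4) ** 2

    print(lr_sweep(fake_train_eval, log_lr_grid()))
```

For a PiSSA-style initialization, the same sweep would start from a lower `lo`. The size of that shift is not given here, so any chosen value should itself be checked.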

Long-Term Applications

These require further research, scaling, or development beyond the paper’s tested scope.

- LR- and sharpness-aware PEFT optimizers

- What: Auto-LR selection using top Hessian eigenvalue estimates; per-layer/per-block LR schedules; trust-region or second-order variants tailored for LoRA.

- Sectors: Platform MLOps, foundational model providers.

- Tools/products: “AutoLR for LoRA” modules integrated into PEFT libraries; optional HVP-based diagnostics.

- Assumptions/dependencies: Efficient and stable Hessian estimation at scale; validation on larger models and diverse tasks.

- PEFT-as-a-Service with audit-ready tuning

- What: Managed services that deliver tuned LoRA fine-tuning with reproducibility reports, cost/performance trade-offs, and hyperparameter audit trails.

- Sectors: Healthcare, finance, government, regulated industries.

- Tools/products: Dashboards with LR sweep outcomes, divergence maps, and rank/variant comparisons.

- Assumptions/dependencies: Standardized benchmarks and governance frameworks; privacy/security for sensitive data.

- Benchmark and policy standards for fair comparisons

- What: Community and venue guidelines that mandate method-specific LR sweeps and tuned vanilla LoRA baselines for PEFT claims.

- Sectors: Academic conferences/journals, public sector AI evaluations.

- Tools/products: Benchmark suites with built-in sweep protocols and result normalizers.

- Assumptions/dependencies: Consensus among reviewers and organizers; compute credits or shared resources.

- Systems and hardware co-design for fast hyperparameter search

- What: Scheduling, early-stopping, and multi-fidelity HPO tailored to PEFT LR sweeps; cluster support for short, parallel runs; bandit-style resource allocation.

- Sectors: Cloud/HPC providers, large ML teams.

- Tools/products: “Sweep-first” orchestrators; resource-aware autoscaling policies.

- Assumptions/dependencies: Integration with training frameworks; support for distributed short jobs.

- LR profile registries for base models and tasks

- What: Public registries capturing LR ranges and stability bands by base model, task family, and rank regime (including quantized settings like QLoRA).

- Sectors: Open-source ecosystems, platform providers.

- Tools/products: Searchable catalogs; API endpoints for auto-configuration.

- Assumptions/dependencies: Continuous community contributions; versioning and curation.

- Extension and validation across scales and modalities

- What: Verify LR-centric conclusions on larger models (e.g., 13B–70B+), multimodal models, and broader task sets (dialogue, retrieval, RLHF).

- Sectors: Foundation model labs, applied research groups.

- Tools/workflows: Large-scale experimental campaigns; unified evaluation harnesses.

- Assumptions/dependencies: Significant compute; careful dataset/eval design.

- Low-rank-aware optimizers and schedules

- What: Optimizers that incorporate rank, scaling factors, and initialization into LR schedules; adaptive scaling with rank and Hessian curvature.

- Sectors: Research, library maintainers.

- Tools/products: New optimizer classes in PEFT libraries; papers on theory-validated schedules.

- Assumptions/dependencies: Theoretical development; robust empirical validation.

- Model risk management and regulatory guidance

- What: Guidelines requiring documented search over LR ranges as part of model risk assessments before deployment in high-stakes settings.

- Sectors: Healthcare, finance, public services.

- Tools/products: Compliance checklists and audit artifacts incorporating hyperparameter search practices.

- Assumptions/dependencies: Regulatory acceptance and standardization; clear audit procedures.

- Energy/carbon-aware tuning policies

- What: Use LR-first strategies and early-stopping to reduce unnecessary training cycles and energy usage; publish energy metrics alongside performance.

- Sectors: Sustainability-conscious organizations, cloud providers.

- Tools/products: Energy dashboards linked to sweep outcomes; budgeted HPO methods.

- Assumptions/dependencies: Reliable energy metering and attribution; organizational incentives.

- Theory and diagnostics for architecture-specific sharpness (e.g., DoRA)

- What: Extend Hessian-based analysis to architectures like DoRA to design principled LR bands and schedules.

- Sectors: Research groups, optimizer designers.

- Tools/workflows: New analyses and diagnostics integrated into training loops.

- Assumptions/dependencies: Tractable estimation methods; alignment between theory and practice.
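Several long-term items above, such as auto-LR selection and sharpness diagnostics, depend on estimating the largest Hessian eigenvalue λ_max without forming the Hessian. According to the glossary, the paper uses the Lanczos algorithm with Hessian-vector products. The NumPy sketch below swaps in simpler power iteration and a finite-difference Hessian-vector product, which is enough to show the idea on a small parameter block. Its assumptions:

- `grad_fn` is a hypothetical callback that returns the loss gradient for that block.
- In a real model, an autograd-based Hessian-vector product would replace the finite difference.
- The 2/λ_max and 12/λ_max bands are the classical stability bound and the catapult-regime bound quoted in the glossary. They are rough brackets, not recommended learning rates.

```python
from typing import Callable, Dict

import numpy as np

GradFn = Callable[[np.ndarray], np.ndarray]


def hvp_fd(grad_fn: GradFn, w: np.ndarray, v: np.ndarray, eps: float = 1e-4) -> np.ndarray:
    """Approximate H v with a central finite difference of the gradient around w."""
    return (grad_fn(w + eps * v) - grad_fn(w - eps * v)) / (2.0 * eps)


def top_hessian_eigenvalue(grad_fn: GradFn, w: np.ndarray, iters: int = 200,
                           tol: float = 1e-10, seed: int = 0) -> float:
    """Power iteration on v -> H v (a simpler stand-in for Lanczos).

    Returns the eigenvalue of largest magnitude; at a non-convex point this can be a
    large negative eigenvalue rather than the largest positive one.
    """
    rng = np.random.default_rng(seed)
    v = rng.normal(size=w.shape)
    v /= np.linalg.norm(v)
    lam = 0.0
    for _ in range(iters):
        hv = hvp_fd(grad_fn, w, v)
        new_lam = float(np.vdot(v, hv))  # Rayleigh quotient v^T H v (v has unit norm)
        norm = np.linalg.norm(hv)
        if norm == 0.0:
            return 0.0  # degenerate case: v lies in the Hessian's null space
        v = hv / norm
        converged = abs(new_lam - lam) <= tol * max(1.0, abs(new_lam))
        lam = new_lam
        if converged:
            break
    return lam


def lr_bands(lam_max: float) -> Dict[str, float]:
    """Classical GD stability bound 2/lam_max and the catapult upper bound 12/lam_max."""
    return {"stable_below": 2.0 / lam_max, "catapult_below": 12.0 / lam_max}


if __name__ == "__main__":
    # Toy quadratic loss 0.5 * w^T H w: its Hessian is H, so lambda_max is known to be 5.0.
    h = np.diag([5.0, 2.0, 0.5])
    lam = top_hessian_eigenvalue(lambda w: h @ w, np.zeros(3))
    print(f"lambda_max ~ {lam:.4f}", lr_bands(lam))
```

A larger λ_max shrinks both bands. This matches the paper's observation that the variant with the sharper initialization (PiSSA) prefers lower learning rates. The estimate costs a few gradient evaluations per iteration, so in practice it would be run sparingly and on selected blocks.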

Notes on feasibility and scope:

- The reported evidence covers decoder-only LLMs up to 7B and math/code tasks. Applying the same conclusions in other settings (e.g., instruction-following, multilingual, multimodal, very large models, and quantized fine-tuning like QLoRA) requires validation.

- The paper fixed certain hyperparameters (e.g., LoRA scaling set so that the effective factor was 1). Different scaling choices may interact with LR.

- Hessian-based methods are informative but computationally nontrivial; approximate proxies may suffice for bracketing LRs in practice.
