Computing Diffusion Geometry

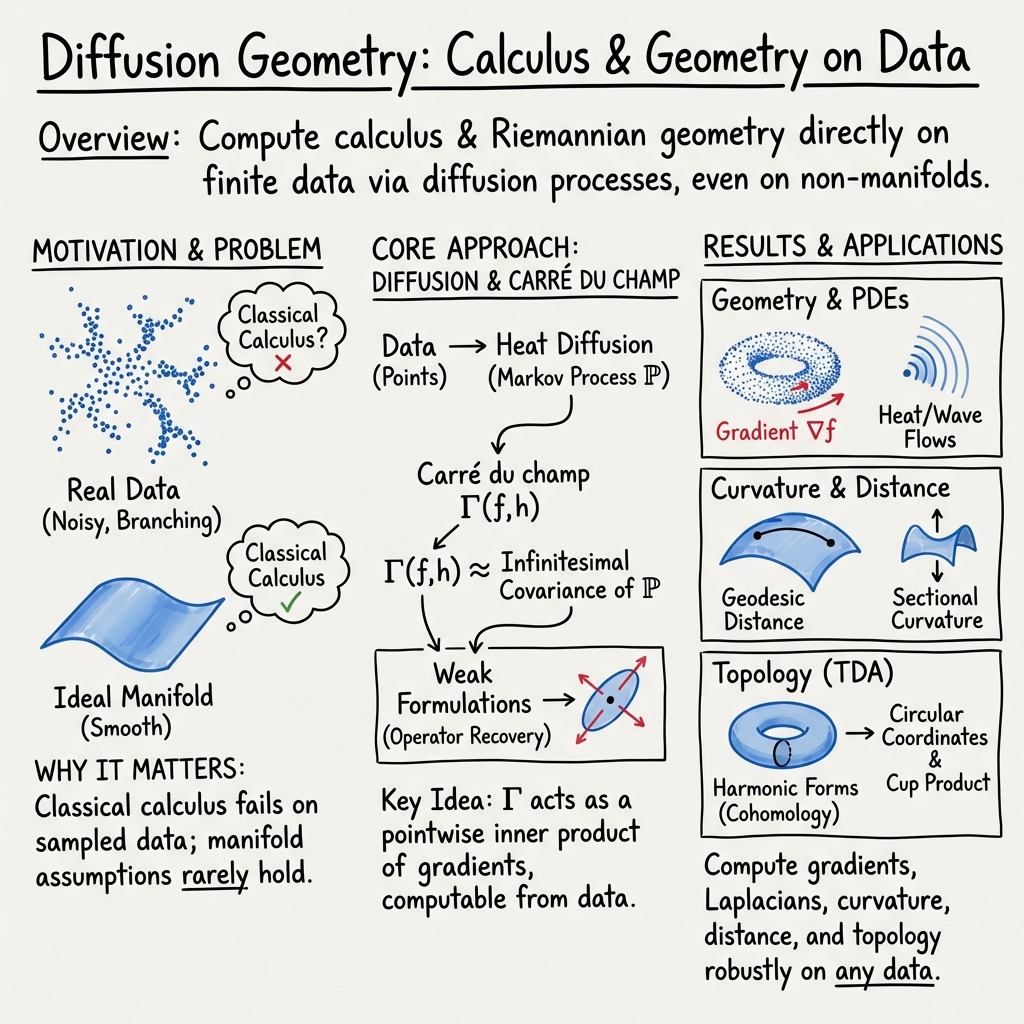

Abstract: Calculus and geometry are ubiquitous in the theoretical modelling of scientific phenomena, but have historically been very challenging to apply directly to real data as statistics. Diffusion geometry is a new theory that reformulates classical calculus and geometry in terms of a diffusion process, allowing these theories to generalise beyond manifolds and be computed from data. This work introduces a new computational framework for diffusion geometry that substantially broadens its practical scope and improves its precision, robustness to noise, and computational complexity. We present a range of new computational methods, including all the standard objects from vector calculus and Riemannian geometry, and apply them to solve spatial PDEs and vector field flows, find geodesic (intrinsic) distances, curvature, and several new topological tools like de Rham cohomology, circular coordinates, and Morse theory. These methods are data-driven, scalable, and can exploit highly optimised numerical tools for linear algebra.

Explain it Like I'm 14

Overview

This paper is about a new way to do calculus and geometry directly on messy, real-world data. Instead of assuming your data lies on a perfectly smooth surface (a “manifold”), the authors use heat diffusion—the way heat spreads out—to rebuild the tools of calculus and geometry from the data itself. This lets you measure things like gradients (directions of fastest change), distances along curved shapes, and how “bendy” a space is, even when the data has noise, branches, or mixed dimensions.

Key Objectives

The paper aims to:

- Create a general, practical method to compute calculus and geometry from data using diffusion (heat flow).

- Work on real datasets that may not live on nice, smooth manifolds.

- Provide tools to solve equations that describe change (like the heat and wave equations), analyze vector fields (like wind or flow), measure intrinsic distances and curvature, and do topological analysis (finding holes and loops) much faster and more robustly than older methods.

Methods and Approach

The core idea: From heat diffusion to geometry

Think of dropping a tiny bit of heat at a point and watching it spread. How the heat spreads tells you about the shape of the space. If the space is curved or has varying thickness, heat spreads differently. The authors use this spreading pattern to define geometry.

Technically, they use:

- The heat kernel: a function that says how likely heat (or a random walker) goes from point x to point y in time t.

- A Markov chain: a matrix built from the heat kernel that describes the probabilities of moving from one data point to another. This turns your dataset into a “random walk” structure.

- The carré du champ (pronounced “car-ray do shahmp”): a quantity that measures how two functions change together at a very tiny scale. You can think of it like a “local covariance” of the diffusion process. It acts like a pointwise inner product and becomes the engine behind calculus and geometry.

In everyday terms: by watching how nearby points “exchange heat,” you learn how the space is shaped and how to measure change on it.
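
For readers who want the formula behind the "local covariance" description, the standard definition of the carré du champ for a diffusion generator is written out below. The symbols L (the generator) and Δ (the Laplacian) are notation assumed for this note, since the summary above does not fix any. The second identity follows from the product rule Δ(fh) = fΔh + hΔf + 2∇f·∇h.

```latex
\Gamma(f, h) \;=\; \tfrac{1}{2}\bigl( L(fh) - f\,L h - h\,L f \bigr),
\qquad
L = \Delta \;\Longrightarrow\; \Gamma(f, h) = \nabla f \cdot \nabla h .
```

This is why Γ can stand in for a pointwise inner product of gradients: on a manifold with heat diffusion it is exactly that inner product, while the left-hand definition still makes sense when no manifold structure is available.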

Building the Markov chain from data

- Use a kernel (often a Gaussian) to measure “closeness” or affinity between points: closer points share more heat.

- Normalize rows to get probabilities, making a Markov chain P where each row sums to 1.

- Use a variable bandwidth so the kernel adapts to dense vs. sparse regions, improving robustness on uneven data.
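
The three steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the paper's implementation: the function name `markov_chain`, the use of the k-th nearest-neighbour distance as the bandwidth ρ, and the kernel form exp(−d²/(ρᵢρⱼ)) are all choices made for the example.

```python
import numpy as np
from scipy.spatial import cKDTree
from scipy.sparse import csr_matrix


def markov_chain(X, k=16):
    """Sparse row-stochastic matrix P from a variable-bandwidth Gaussian kernel.

    Assumption (not from the paper): the bandwidth rho_i of point i is its
    distance to its k-th nearest neighbour, and points are distinct.
    """
    n = X.shape[0]
    # k + 1 neighbours because each point is returned as its own nearest neighbour.
    dist, idx = cKDTree(X).query(X, k=k + 1)
    rho = dist[:, -1]                                   # local scale per point
    # Gaussian affinity; dense regions get small bandwidths, sparse regions large ones.
    W = np.exp(-dist**2 / (rho[:, None] * rho[idx]))
    W /= W.sum(axis=1, keepdims=True)                   # each row now sums to 1
    rows = np.repeat(np.arange(n), k + 1)
    return csr_matrix((W.ravel(), (rows, idx.ravel())), shape=(n, n))


rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
P = markov_chain(X, k=10)
```

Restricting each row to k neighbours keeps P sparse, which is what lets the later steps lean on sparse linear algebra.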

Computing the carré du champ as a local covariance

For functions f and h defined on your data points, the carré du champ Γ(f, h) at a point is computed from how f and h co-vary under one diffusion step of the Markov chain. Intuitively, it tells you “how much f and h tend to rise/fall together at that point when you look very locally.”

This Γ becomes the pointwise inner product that powers:

- Gradients: directions of fastest increase.

- Metrics: how long/big vectors are.

- Geometric operators used throughout calculus and geometry.
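
A minimal sketch of the local-covariance idea, assuming Γ(f, h) at point i is half the covariance of f and h under row i of P. The function name `carre_du_champ` is hypothetical, and any rescaling by the kernel bandwidth that the paper may apply is deliberately left out.

```python
import numpy as np


def carre_du_champ(P, f, h):
    """Half the covariance of f and h under one diffusion step from each point.

    P is a row-stochastic matrix (dense or scipy sparse); f and h are 1-D arrays.
    Assumption: no bandwidth rescaling is applied.
    """
    Pf, Ph = P @ f, P @ h
    return 0.5 * (P @ (f * h) - Pf * Ph)


# Tiny three-point chain, used only to exercise the function.
P = np.array([[0.50, 0.50, 0.00],
              [0.25, 0.50, 0.25],
              [0.00, 0.50, 0.50]])
f = np.array([0.0, 1.0, 2.0])
gamma_ff = carre_du_champ(P, f, f)   # Γ(f, f) is a local variance, so it is non-negative
```

Because the estimate is a covariance, Γ(f, h) is symmetric in its arguments and vanishes whenever either function is constant, matching the behaviour expected of an inner product of gradients.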

Making computation efficient: A smooth function basis

To keep things fast and stable, the authors compress the function space:

- They take the top eigenvectors of the Markov chain P. These are the smoothest “patterns” on the data (like gentle waves before sharper ones).

- Represent functions and vector fields using a small number n₀ of these smooth basis functions. This reduces memory and speeds up calculations, while filtering noise.
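
One common way to obtain such a basis is sketched below. It assumes a symmetric kernel matrix K, so that P = D⁻¹K shares its spectrum with the symmetric matrix D^(-1/2) K D^(-1/2) and a symmetric eigensolver can be used. The name `smooth_basis` and the dense solver are choices for this example; a large dataset would need a sparse eigensolver instead.

```python
import numpy as np


def smooth_basis(K, n0):
    """Top-n0 eigenpairs of P = D^{-1} K for a symmetric, positive kernel K.

    Returns eigenvalues in descending order and the matching right
    eigenvectors of P as columns of Phi.
    """
    d = K.sum(axis=1)
    S = K / np.sqrt(np.outer(d, d))          # symmetric, same eigenvalues as P
    evals, V = np.linalg.eigh(S)             # eigh returns ascending eigenvalues
    top = np.argsort(evals)[::-1][:n0]
    Phi = V[:, top] / np.sqrt(d)[:, None]    # convert back to eigenvectors of P
    return evals[top], Phi


rng = np.random.default_rng(0)
X = rng.normal(size=(60, 2))
K = np.exp(-((X[:, None] - X[None]) ** 2).sum(-1))   # dense Gaussian kernel
evals, Phi = smooth_basis(K, n0=5)
coeffs = Phi.T @ (K.sum(axis=1) * X[:, 0])           # compress one function to 5 numbers
```

The first basis function is constant (eigenvalue 1), and later ones oscillate more, which is why truncating at n₀ acts as a low-pass filter.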

Representing vector fields from coordinates

Vector fields assign a direction at each data point. The authors build them from the gradients of coordinate functions (like x, y, z) multiplied by smooth coefficients from the function basis. This is flexible, data-driven, and doesn’t require a mesh or a perfect manifold.
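
To make the role of coordinate gradients concrete, the sketch below expresses ∇f at each point as a combination Σⱼ aⱼ ∇xⱼ by solving a small linear system built from Γ. This is an illustration under assumptions, not the paper's construction: it computes pointwise coefficients only (the paper additionally expands coefficients in the smooth function basis), it uses the unscaled covariance form of Γ, and the name `gradient_coefficients` is hypothetical.

```python
import numpy as np


def gradient_coefficients(P, X, f):
    """Per-point coefficients a with grad f ≈ sum_j a[:, j] * grad x_j.

    g[i] holds Γ(x_j, x_k) at point i and b[i] holds Γ(f, x_j) at point i,
    both estimated as half a local covariance under row i of P.
    """
    n, d = X.shape
    PX, Pf = P @ X, P @ f
    XX = (X[:, :, None] * X[:, None, :]).reshape(n, d * d)
    g = 0.5 * ((P @ XX).reshape(n, d, d) - PX[:, :, None] * PX[:, None, :])
    b = 0.5 * (P @ (X * f[:, None]) - PX * Pf[:, None])
    # The pseudo-inverse tolerates rank-deficient g, which occurs when the
    # data are lower-dimensional than the ambient coordinates.
    return np.einsum("ijk,ik->ij", np.linalg.pinv(g), b)


rng = np.random.default_rng(1)
X = rng.normal(size=(50, 2))
K = np.exp(-((X[:, None] - X[None]) ** 2).sum(-1))
P = K / K.sum(axis=1, keepdims=True)
f = 2.0 * X[:, 0] - 3.0 * X[:, 1]          # linear test function
a = gradient_coefficients(P, X, f)         # rows should be close to (2, -3)
```

Any overall scale in Γ cancels between g and b, so the recovered coefficients do not depend on how the covariance is normalized.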

A simple recipe used throughout

The paper follows a repeatable recipe:

- Define the object you want (gradient, distance, curvature, etc.) in terms of functions and the carré du champ Γ.

- Build a Markov chain on the data using a kernel.

- Compute Γ(f, h) as the local covariance of the diffusion process.

- Plug Γ into the formula for your object and compute.

This unified approach lets them tackle many tasks with the same toolkit.

Main Findings and Why They Matter

The authors show that their diffusion-based framework can:

- Compute gradients, Hessians, and vector field flows from raw point clouds.

- Solve spatial partial differential equations (PDEs) like the heat and wave equations without a mesh.

- Recover geodesic distances (true shortest paths along the shape) and the intrinsic metric of the data.

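- Estimate classical curvature (how bendy the space is) via the Levi-Civita connection, even on non-manifold data. The authors describe this as the first time a classical curvature tensor has been estimated from non-manifold data.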

- Perform topological data analysis using smooth tools:

- de Rham cohomology (detects “holes” using harmonic forms),

- circular coordinates (like angles around loops),

- Morse theory (studies peaks, valleys, and saddles to infer shape).

- Achieve major speedups and memory savings compared to popular methods like Vietoris–Rips persistent homology, while being more robust to noise and outliers.
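
As a rough illustration of mesh-free PDE solving, the sketch below evolves a discrete heat flow on a point cloud by decaying spectral coefficients. It substitutes a simpler technique for the paper's solver: repeated diffusion steps u ← Pu, evaluated in the eigenbasis of a symmetric-kernel Markov chain. The name `heat_flow` and the dense eigensolver are assumptions made for the example, and the wave equation is not covered.

```python
import numpy as np


def heat_flow(K, u0, steps, n0):
    """Apply `steps` diffusion steps of P = D^{-1} K to u0 using n0 spectral modes.

    K must be a symmetric kernel matrix with positive row sums. With n0 equal
    to the number of points this reproduces P^steps @ u0; a smaller n0
    discards the roughest modes.
    """
    d = K.sum(axis=1)
    S = K / np.sqrt(np.outer(d, d))
    evals, V = np.linalg.eigh(S)               # ascending eigenvalues
    evals, V = evals[-n0:], V[:, -n0:]         # keep the n0 largest
    Phi = V / np.sqrt(d)[:, None]              # eigenvectors of P, orthonormal w.r.t. weights d
    c = Phi.T @ (d * u0)                       # d-weighted projection of u0 onto the basis
    return Phi @ (evals**steps * c)            # each mode decays by its eigenvalue per step


rng = np.random.default_rng(0)
X = rng.normal(size=(80, 2))
K = np.exp(-((X[:, None] - X[None]) ** 2).sum(-1))
u0 = np.zeros(80)
u0[0] = 1.0                                    # unit of heat dropped at one point
u = heat_flow(K, u0, steps=3, n0=20)
```

Because the constant mode has eigenvalue 1 and is always retained, the d-weighted total of u stays fixed while the higher modes decay, which is the discrete counterpart of heat being conserved as it spreads.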

Why it’s important:

- It brings the powerful language of calculus and geometry to real-world data that is messy, branched, and noisy.

- It unifies many tasks under one simple, scalable method based on diffusion.

- It opens the door to trustworthy geometric and topological analysis in statistics and machine learning without heavy assumptions.

Implications and Potential Impact

This work could reshape how we analyze complex datasets:

- In science and engineering, you can study waves, flows, and stresses on shapes sampled by sensors without building detailed meshes.

- In medicine, you could measure how signals propagate over real anatomical surfaces extracted from scans.

- In machine learning, you can use intrinsic distances, curvature, and topology-aware features to improve understanding and performance on structured data.

- In topological data analysis, you get faster, more noise-robust tools to detect loops and holes in data.

Overall, the paper shows that “watching heat spread” is a surprisingly powerful lens. It turns advanced geometry into practical, data-driven computations that scale to large datasets and work even when the underlying space isn’t perfectly smooth. The authors also release a Python package, making these methods accessible to researchers and practitioners.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The paper introduces a promising, general-purpose computational framework for diffusion geometry using a covariance-based carré du champ and weak formulations. However, several theoretical, methodological, and practical aspects remain open. The following list highlights concrete gaps and questions to guide future work:

- Convergence guarantees for discrete operators:

- Prove that the discrete covariance-based carré du champ converges to the continuum Γ on manifolds (and identify what it converges to on non-manifold spaces); provide finite-sample error rates in terms of sample size n, ambient dimension d, neighborhood size k, and bandwidth function ρ.

- Establish consistency and rates for downstream objects (gradients, divergence, Laplacian, Levi-Civita connection, curvature, geodesic distances) derived from the discrete Γ.

- Dependence on sampling density and kernel normalization:

- Quantify the bias introduced by row-normalized Markov chains that do not enforce density-invariant normalization; characterize when geometry estimates are sampling-density invariant and when they are not.

- Compare the proposed normalization to Berry–Coifman and related diffusion-maps normalizations; develop principled debiasing if needed.

- Bandwidth and neighborhood selection:

- Provide theoretically grounded and task-adaptive procedures to choose bandwidths ρ (or t) and k (e.g., via risk bounds, cross-validation, multi-scale criteria).

- Analyze sensitivity of Γ and all downstream operators to mis-specified bandwidths, especially on heterogeneous/anisotropic and noisy data.

- Boundaries and boundary conditions:

- Characterize the bias of the covariance estimator near boundaries; develop corrections for Neumann/Dirichlet/Robin boundary conditions in PDE solvers and geometric operators.

- Provide diagnostics to detect boundary effects and prescribe remedies.

- Geometry on non-manifold and singular spaces:

- Precisely define which “geometric” quantities (e.g., metric, connection, curvature, geodesic distance) are well-defined on stratified or singular spaces via Γ-calculus, and at which scales.

- For branching points and variable dimension regions, clarify interpretability and uniqueness of geodesics and curvature; formalize the notion of sectional curvature and Levi-Civita connection in this setting.

- Morphisms and immersions in diffusion geometry:

- Formalize morphisms between Markov triples and precise definitions of immersions, embeddings, and isometries in diffusion geometry (explicitly acknowledged as open in the paper), and specify conditions under which coordinate functions generate a faithful vector-field module.

- Function space not closed under multiplication:

- Quantify the projection error from multiplying functions in the compressed space and reprojecting; bound its impact on operators relying on products (e.g., Lie bracket, wedge product, Hodge star, nonlinear PDEs).

- Develop alternatives that preserve more algebraic structure (e.g., larger or multiresolution bases, adaptive enrichment, or compressed tensorized representations) with controlled computational cost.

- Stability and coercivity of weak formulations:

- Provide analytical conditions ensuring well-posedness, coercivity, and conditioning of the weak formulations for all differential operators used (including the connection and curvature).

- Analyze preconditioning strategies and error propagation through composite operators (e.g., metric → connection → curvature).

- Curvature estimation:

- Give error bounds, scale dependence, and robustness analysis for estimated sectional curvature; establish when results are meaningful on non-manifolds and the relation to classical curvature in manifold regions.

- Compare systematically with discrete Ricci notions (Ollivier, Forman) and clarify complementarities and differences.

- Geodesic distance recovery:

- Prove consistency of solving the geodesic distance equation from discrete Γ; specify conditions ensuring uniqueness and stability, especially in presence of singularities and multiple geodesics.

- Compare to Isomap/heat method/fast marching in accuracy, runtime, and robustness, with clear regimes of superiority.

- Topological computations via differential tools:

- State precise conditions under which harmonic forms computed from Γ recover de Rham cohomology; clarify applicability on non-manifold or singular spaces where classical de Rham theory may not hold.

- Provide correctness guarantees and failure modes compared to Vietoris–Rips persistent homology; characterize what topology (if any) is computed off-manifolds.

- Directed and anisotropic diffusions:

- Extend the framework to non-reversible Markov chains (with drift) for anisotropic or directed data; analyze how Γ, operators, and geometry change and which objects remain well-defined.

- Design and evaluate anisotropic kernels capturing tangent anisotropy beyond scalar bandwidths.

- Multi-scale geometry:

- Develop a scale-space approach linking geometry across diffusion scales t (or ρ); address the ambiguity of “the” correct scale on heterogeneous data and propose scale selection or aggregation methods.

- Robustness and uncertainty quantification:

- Provide theoretical robustness results under additive noise, outliers, and sampling anisotropy; characterize breakdown points.

- Introduce uncertainty quantification for Γ and induced geometric quantities (e.g., bootstrap confidence intervals, Bayesian models) to report estimation uncertainty.

- Out-of-sample extension:

- Formalize and evaluate Nyström-like schemes to extend operators, fields, and geometric quantities to new points without recomputing the full model; analyze stability.

- Graph connectivity and spectral properties:

- Characterize how graph connectivity (e.g., disconnected or nearly reducible graphs) affects eigenfunctions used for the function space and the induced operators; propose remedies (e.g., per-component processing, regularization).

- High-dimensional neighbor search and scalability:

- Address the degradation of KD-trees in high d; benchmark and integrate ANN methods (e.g., HNSW, FAISS) and quantify their impact on Γ accuracy and run time.

- Provide out-of-core, randomized, or streaming eigen-solvers and Γ estimators for n ≫ 10⁶, including memory–time trade-offs and GPU implementations.

- Computational complexity and memory footprint:

- Give detailed end-to-end complexity (time and memory) for each operator (metric, divergence, Laplacian, connection, curvature, PDE solvers, cohomology), including dependence on n, k, d, n₀, n₁.

- Analyze and mitigate the cost of two-hop expectations in the covariance Γ estimator and the implicit formation of large tensors (e.g., per-point metric matrices).

- Parameterization of vector-field bases:

- Assess sensitivity of results to the choice of immersion/coordinates used to span vector fields; provide invariance diagnostics and guidelines for smoothing/projection of coordinates or for learning reduced coordinates.

- PDE solvers on data:

- Validate accuracy and stability of meshless solvers for heat/wave equations and vector-field flows; specify CFL-like conditions, mass/energy conservation properties, and boundary handling.

- Compare with established graph-PDE discretizations (e.g., graph Laplacians, finite elements on point clouds).

- Handling non-Euclidean or heterogeneous input data:

- Generalize kernel construction to non-Euclidean inputs (e.g., strings, graphs, general metric spaces) and learn metrics when Euclidean distances are inappropriate; analyze effects on Γ and operators.

- Stationary distribution and measure choice:

- Clarify the role of the empirical measure μ (from row sums) versus the stationary distribution of P; analyze when these coincide, how choices affect inner products and operators, and how to correct for sampling measures.

- Validation on real data and benchmarks:

- Provide comprehensive empirical evaluations against baselines across tasks (gradients, geodesics, curvature, PDEs, topology), including ablations (covariance vs. generator-based Γ), sensitivity analyses, and standardized datasets.

- Theoretical scope of Γ-calculus on general spaces:

- Identify the minimal structural assumptions on spaces (e.g., metric-measure spaces with a strongly local Dirichlet form, RCD(K,N) conditions) under which the proposed computations correspond to well-defined geometric objects.

- Numerical regularization:

- Develop strategies for handling near-singular local metric matrices g_p (e.g., Tikhonov regularization, truncated SVD) and analyze the impact on downstream operators and visualization.

- Practical guidance for n₀ and n₁:

- Provide data-driven rules or error-controlled procedures for selecting the number of basis functions for the function and vector-field spaces, balancing bias–variance and computational cost.
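
The numerical-regularization item above names Tikhonov regularization and truncated SVD as candidate strategies for near-singular local metric matrices g_p. The sketch below shows what each would look like for a stack of symmetric matrices. It is illustrative only: the function name `regularized_inverse`, the parameter `alpha`, and the relative truncation threshold are assumptions, and the paper does not state which strategy, if any, it adopts.

```python
import numpy as np


def regularized_inverse(g, alpha=1e-3, mode="tikhonov"):
    """Stabilized inverse of a stack of symmetric matrices g with shape (n, d, d).

    mode="tikhonov": returns (g + alpha * I)^{-1}.
    any other mode:  pseudo-inverse that drops eigenvalues not exceeding
                     alpha times the largest eigenvalue of each matrix.
    """
    w, U = np.linalg.eigh(g)                       # batched eigendecomposition
    if mode == "tikhonov":
        inv_w = 1.0 / (w + alpha)
    else:
        keep = w > alpha * w.max(axis=-1, keepdims=True)
        inv_w = np.where(keep, 1.0 / np.where(keep, w, 1.0), 0.0)
    # Reassemble U diag(inv_w) U^T for every matrix in the stack.
    return np.einsum("...ij,...j,...kj->...ik", U, inv_w, U)


g = np.array([[[1.0, 0.0], [0.0, 1e-12]]])         # one nearly rank-1 metric
g_inv_tik = regularized_inverse(g, alpha=1e-3, mode="tikhonov")
g_inv_cut = regularized_inverse(g, alpha=1e-3, mode="truncate")
```

Tikhonov damping keeps every direction but bounds its gain by 1/alpha, while truncation discards weak directions entirely. Which is preferable would depend on whether those directions are noise or genuine low-dimensional structure, which is exactly the open question raised in the list.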

Practical Applications

Overview

This paper introduces a practical, data-driven toolkit for computing calculus, geometry, and topology directly on point clouds and other non-manifold data by leveraging diffusion processes (via a Markov chain) and the carré du champ operator. It delivers scalable, noise-robust methods to compute gradients, vector fields, geodesic distances (intrinsic metrics), curvature (via the Levi-Civita connection), solve PDEs (heat/wave), and derive topological features (de Rham cohomology, circular coordinates, Morse theory). A Python package is available: github.com/Iolo-Jones/DiffusionGeometry.

Below are actionable applications grouped by immediacy, with sectors, potential tools/workflows, and key assumptions/dependencies.

Immediate Applications

These can be deployed now using the released software with standard data engineering and numerical linear algebra stacks.

- Geodesic distance (intrinsic metric) on point clouds

- Sectors: software/graphics (3D shape analysis, retrieval), manufacturing (metrology/QC), AR/VR, medical imaging (organ morphometrics).

- Tools/workflows: compute intrinsic geodesic distances with DiffusionGeometry and integrate with Open3D/PCL for shape matching, alignment, and retrieval; replace graph-shortest-path or mesh-based geodesics on unmeshed scans.

- Assumptions/dependencies: sufficiently dense sampling; an immersion/embedding (e.g., scanner coordinates); kernel bandwidth tuning; scalable nearest-neighbor search.

- Curvature and intrinsic geometry estimation on non-manifold data

- Sectors: quality inspection (detecting dents/warping), materials science (microstructure analysis), neuroscience/biomechanics (cortical folding metrics, cartilage surfaces).

- Tools/workflows: estimate sectional curvature via the Levi-Civita connection on point clouds (no mesh needed) to flag geometric anomalies or quantify local shape.

- Assumptions/dependencies: local sampling adequacy; noise levels; robustness depends on variable-bandwidth kernels and appropriate basis size.

- Fast, noise-robust topological data analysis (TDA) via de Rham cohomology and circular coordinates

- Sectors: single-cell genomics (cell cycle/trajectories), time-series/IoT (seasonality, operational cycles), robotics (loop detection), finance (cyclical regimes).

- Tools/workflows: replace Vietoris–Rips persistent homology with harmonic-form-based cohomology and circular coordinates for orders-of-magnitude faster, more robust TDA; integrate with Scanpy/scikit-learn.

- Assumptions/dependencies: adequate coverage of loops in data; basis dimension (n₀) controls resolution; results depend on diffusion operator quality and sampling.

- Gradient and vector-field estimation on irregular domains

- Sectors: IoT/environmental sensing (temperature/pressure gradients), transportation (traffic potential fields), geoscience (terrain-derived flows).

- Tools/workflows: compute ∇f and visualize vector fields on point clouds via the carré du champ; build gradient-based alerts or controllers on arbitrary geometries.

- Assumptions/dependencies: scalar field availability on points; immersion coordinates or smoothed coordinates (P x); bandwidth selection.

- Meshless PDE solving (heat/wave equations, vector-field flows) on data geometries

- Sectors: graphics (denoising/smoothing via heat flow), acoustics in AR/VR (approximate wave propagation on scanned scenes), additive manufacturing (thermal analysis from scans), geoscience (diffusion on terrains).

- Tools/workflows: solve PDEs directly on point clouds without meshing; incorporate boundary conditions; run “what-if” simulations for design/ops.

- Assumptions/dependencies: PDE specification and boundary conditions; stability/time-step selection; resolution set by basis size; performance depends on sparse kernels and iterative solvers.

- Geometry-aware ML regularization and features

- Sectors: ML across domains (recommendation, NLP, vision, tabular).

- Tools/workflows: use bandlimited bases and diffusion operators for smoothness regularization; add features like intrinsic geodesic distances, circular coordinates, or curvature; integrate with a scikit-learn/PyTorch pipeline (e.g., fit on embeddings).

- Assumptions/dependencies: requires eigen-decomposition for basis; differentiable implementation for end-to-end training is feasible but may need custom autograd.

- Non-manifold–aware embeddings and segmentation

- Sectors: recommendation, e-commerce, vision (3D recognition), GIS (terrain segmentation).

- Tools/workflows: compute intrinsic distances on heterogeneous (branched, variable-dimension) data for Isomap-like embeddings; segment data into Morse basins; pipeline: compute Morse functions, gradients, and critical points for topology-aware clustering/segmentation.

- Assumptions/dependencies: enough sampling near junctions/branches; Morse function choice; computational scaling via sparse kernels and approximate nearest neighbors.

- Cycle detection and topological monitoring in mobility/ops data

- Sectors: policy/public health (mobility cycles, epidemic waves), operations/IT (load cycles), energy (demand cycles).

- Tools/workflows: derive circular coordinates and harmonic forms from time-aggregated point clouds or state embeddings to track emergent loops; dashboard “topological alerts.”

- Assumptions/dependencies: stationarity over the aggregation window; privacy constraints; correct mapping from trajectories/logs to point-cloud/state embeddings.

Long-Term Applications

These require further research, validation, scaling, or systems integration (e.g., online/real-time, domain-specific modeling, or regulatory-grade assurance).

- Real-time SLAM and navigation enriched with intrinsic geometry

- Sectors: robotics/autonomy.

- Vision: online estimation of geodesic distances, curvature, and potential fields from streaming LiDAR/vision point clouds for path planning and localization.

- Dependencies: incremental/online diffusion operators; hardware acceleration (GPU/TPU); latency constraints; robust bandwidth adaptation.

- Data-driven digital twins using meshless PDEs on sensor-derived point clouds

- Sectors: infrastructure, manufacturing, energy, process industries.

- Vision: simulate diffusion/transport/elasticity on complex assets without meshing; feed control and predictive maintenance.

- Dependencies: governing equations, boundary/initial conditions, validation data; stable solvers for more complex PDEs; scalable basis updates as geometry evolves.

- Physics-informed ML on non-manifold geometries

- Sectors: scientific ML, climate, materials, biophysics.

- Vision: embed diffusion-geometry operators (grad, div, Laplacian, Lie bracket) as differentiable layers to impose physical/geometric priors on arbitrary data geometries.

- Dependencies: differentiable implementations of Γ and PDE solvers; training stability; integration with JAX/PyTorch; theoretical guarantees.

- Curvature-driven diagnostics and design optimization

- Sectors: medical imaging (brain cortical folding, cardiac surfaces), CAD/CAE (shape optimization), materials (microstructure design).

- Vision: use non-manifold curvature estimates to inform diagnosis or guide shape/material optimization without meshing.

- Dependencies: clinical/engineering validation; sensitivity to noise and sampling; standardized workflows and QA.

- Intrinsic geometry in foundation-model embedding spaces

- Sectors: search/retrieval, recommendation, NLP, vision.

- Vision: compute geodesic distances and topology in high-dimensional embeddings (e.g., sentence/image embeddings) to improve retrieval, clustering, debiasing, and novelty detection beyond cosine/Euclidean metrics.

- Dependencies: scalable construction of P in very high dimensions (ANN, memory); batch/online updates; interpretability and fairness considerations.

- Large-scale topology-aware monitoring for resilience and risk

- Sectors: finance (market regimes), supply chains, critical infrastructure.

- Vision: continuous monitoring of cohomology/circular coordinates as signals of phase transitions or cyclic stress; topology-based early warnings.

- Dependencies: robust streaming estimates; false-positive control; regulatory validation; handling non-stationarity.

- PDE-constrained control and planning on learned state spaces

- Sectors: robotics, energy systems, smart grids.

- Vision: learn state-space geometry from telemetry and solve control-relevant PDEs (e.g., potential fields) directly on that geometry for safe, efficient control.

- Dependencies: reliable state embeddings; stable online solvers; safety guarantees.

- Standardization and governance for diffusion-geometry analytics

- Sectors: policy, enterprise analytics.

- Vision: establish data/model standards (choice of kernels, bandwidths, basis size, QC) and assurance frameworks for deploying geometry/topology-based analytics in regulated settings.

- Dependencies: benchmarks, documentation, interpretability tools; bias/sampling-bias analyses; model risk management.

Cross-Cutting Assumptions and Dependencies

- Sampling and scale: methods assume data are sufficiently dense locally relative to chosen bandwidths; variable-bandwidth kernels mitigate heterogeneity but require tuning.

- Immersion/coordinates: vector-field constructions assume available immersion/embedding coordinates (raw coordinates, smoothed P x, or reduced dimensions); choice encodes inductive bias but does not change underlying Γ.

- Markov chain quality: symmetry/normalization and nearest-neighbor graphs affect Γ; robustness improves with sparse, well-conditioned kernels and accurate density estimates.

- Basis size and computation: compressed function space dimension (n₀) controls resolution/accuracy vs. compute; requires sparse eigensolvers and ANN for scalability.

- Noise/outliers: the covariance-based Γ is empirically robust; nonetheless, extreme noise or severe sampling bias can degrade curvature/geodesic estimates.

- PDE specifics: boundary conditions and time stepping are required for PDEs; stability depends on operator discretization and basis choice.

- Interpretability and validation: geometric/topological quantities on non-manifolds are mathematically well-defined here but may require domain-specific interpretation and empirical validation before high-stakes use.

Glossary

- Algebra: A vector space equipped with a multiplication operation on its elements (here, functions), making it an algebraic structure supporting products. "A is an algebra: it is a vector space that also has a notion of multiplication (because, if f and h are functions, then so is fh)."

- Bandlimited functions: Functions restricted to low-frequency content with respect to a chosen spectral basis, yielding smoothness and numerical stability. "we can think of as a space of bandlimited functions on the data."

- Bandwidth function: A positive function controlling the local scale of a kernel per point, enabling adaptive neighborhood sizes. "for some bandwidth function ρ."

- Brownian motion: A continuous-time stochastic process modeling random movement; its transition density underlies the heat kernel. "which we can interpret as the probability of a Brownian motion transitioning from x to y after time t."

- Carré du champ: A bilinear form associated with a diffusion generator that plays the role of a pointwise inner product of gradients, central in diffusion geometry. "the terms are called the carré du champ [bakry2014analysis] of f and h, denoted Γ(f, h)."

- Circular coordinates: Topological coordinates mapping data to the circle, often derived from cohomology and used in topological data analysis. "including de Rham cohomology, circular coordinates, and Morse theory."

- de Rham cohomology: A cohomology theory built from differential forms capturing topological invariants of a space. "such as de Rham cohomology via harmonic forms, circular coordinates, and Morse theory."

- Dirichlet energy: A functional measuring the smoothness of a function via gradients; here, a discrete analogue controls numerical stability. "Since is a diffusion operator, $1 - E$ is a discrete analogue of the Dirichlet energy."

- Differential forms: Antisymmetric tensor fields generalizing functions and vector fields, integrated over manifolds. "The fundamental objects of calculus and geometry are tensors, such as functions, vector fields, and differential forms."

- Differential topology: The study of topological properties via differentiable structures and smooth functions. "we introduce methods for topological data analysis based on differential topology, including de Rham cohomology, circular coordinates and Morse theory."

- Diffusion geometry: A framework reformulating calculus and geometry in terms of diffusion processes, enabling computation from data beyond manifolds. "Diffusion geometry [jones2024diffusion, jones2024manifold] is a new theory that simultaneously overcomes both of these obstacles by reformulating calculus and geometry in terms of the heat diffusion on the underlying space."

- Exterior derivative: An operator on differential forms generalizing differentiation and encoding grad/curl/div structures. "The interesting information in calculus and geometry is captured by differential operators that map between these spaces, such as the exterior derivative and Lie bracket"

- Geodesic distance: The intrinsic shortest-path distance induced by a Riemannian metric on a space. "We solve the geodesic distance equation to recover the intrinsic metric of point cloud data."

- Gram matrix: A matrix of inner products defining the global inner product structure of a vector space in a chosen basis. "We can compute the Gram matrix of vector fields as"

- Graph Laplacian: A discrete Laplacian operator on graphs used to approximate continuous differential operators from data. "This approach is equivalent to computing the carré du champ with a graph Laplacian, which has previously been applied in [lin2010ricci, lin2011ricci, berry2020spectral, jones2024diffusion]."

- Heat equation: A partial differential equation governing the diffusion of heat (or probability) over time. "Heat diffusion (as described by the classical heat equation) may appear to be an extremely specific dynamical process"

- Heat flow: The evolution semigroup generated by the heat equation, capturing diffusion on a space. "The specific diffusion process that captures the geometry of Euclidean space and manifolds is the heat flow."

- Heat kernel: The fundamental solution of the heat equation describing heat propagation and transition densities of diffusions. "First, the heat diffusion can be expressed using the heat kernel, which measures how heat spreads from one point to another over time."

- Hessian: The matrix of second derivatives of a function, encoding local curvature and expansion/contraction directions. "We compute the Hessian, which measures the expansion and contraction of in a matrix at each point."

- Immersion: A smooth map whose differential is injective, providing local coordinates or embeddings of the data. "define an immersion ."

- Kernel density estimate: A nonparametric estimate of a probability density obtained by averaging a kernel over data points. "is a kernel density estimate of the function ."

- Laplace-Beltrami operator: The intrinsic Laplacian on a Riemannian manifold, governing spectral geometry. "then the eigenfunctions converge to the eigenfunctions of the Laplace-Beltrami operator on a manifold as "

- Levi-Civita connection: The unique torsion-free, metric-compatible connection enabling covariant differentiation on Riemannian manifolds. "We compute the sectional curvature via the Levi-Civita connection, which is the first time a classical curvature tensor has been estimated from non-manifold data."

- Lie bracket: A bilinear operator on vector fields measuring the noncommutativity of flows. "such as the exterior derivative and Lie bracket, and these are described in Section \ref{sec: differential_operators}."

- Manifold: A space that locally resembles Euclidean space of fixed dimension and satisfies smoothness constraints. "the classical theory of Riemannian geometry only applies when the space is a manifold."

- Manifold hypothesis: The assumption that high-dimensional data lie near a low-dimensional manifold; here critiqued as misnamed. "Confusingly, the reasonable and broadly applicable assumption that the data are low-dimensional has been mislabelled the 'manifold hypothesis'"

- Markov chain: A discrete-time memoryless stochastic process described by a transition matrix whose rows sum to one, used here to model diffusion on data. "so defines a discrete diffusion process or Markov chain."

- Markov process: A memoryless stochastic process encompassing continuous-time diffusions used to define geometry. "These can be computed from any Markov process, and we offer a simple data-driven solution based on the heat kernel."

- Measure: A mathematical object specifying how to integrate functions and assign weights/probabilities over a space. "provides us with a measure "

- Module: An algebraic structure where a set (here, vector fields) is scaled by elements of an algebra (function coefficients). "a spanning set for the space of vector fields as a module over the function algebra "

- Morse theory: A framework relating topology to the critical points of smooth functions on a manifold. "including de Rham cohomology, circular coordinates, and Morse theory."

- Non-manifold: A space that fails manifold criteria (e.g., variable dimension or branching), yet is still analyzable via diffusion geometry. "We can directly apply it to non-manifold data that has noise, variable density, variable dimension, and singularities"

- Pullback: The operation of inducing functions or vector fields on a subspace via restriction or projection. "a process which is called the pullback."

- Riemannian geometry: The study of smooth manifolds endowed with a metric, enabling calculus on curved spaces. "and the ways in which this geometry can be inferred from calculus are studied in Riemannian geometry."

- Riemannian metric: A pointwise inner product on tangent spaces that defines lengths, angles, and gradients. "called the Riemannian metric and denoted ."

- Sectional curvature: The curvature of two-dimensional sections of a Riemannian manifold, derived from the connection. "We compute the sectional curvature via the Levi-Civita connection"

- Spectral Exterior Calculus: A computational framework mapping exterior calculus and geometry to expansions in Laplacian eigenfunctions. "we computed diffusion geometry objects by expanding on the Spectral Exterior Calculus framework [berry2020spectral]"

- Tangent space: The vector space of directions of curves through a point on a manifold. "tangent space "

- Tensor: A multilinear object generalizing scalars, vectors, matrices, and forms used throughout geometry and physics. "The fundamental objects of calculus and geometry are tensors"

- Variable bandwidth: A kernel scheme where bandwidth adapts to local density or heterogeneity for robust neighborhood estimation. "In practice, it is standard to use a variable bandwidth kernel"

- Vietoris-Rips persistent homology: A combinatorial method detecting multiscale topological features from pairwise distances. "These can be computed in several orders of magnitude less time and space than Vietoris-Rips persistent homology"

- Weak formulations: Variational integral formulations of differential operators or PDEs used for stable numerical computation. "We represent all the major differential operators from calculus and geometry by solving weak formulations, and study their stability."
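Several of the entries above (heat kernel, Markov chain, graph Laplacian, and the carré du champ mentioned in the graph Laplacian quote) fit together in a few lines of linear algebra. The following is a minimal sketch under stated assumptions, not the paper's implementation: it uses a fixed-bandwidth Gaussian kernel rather than a variable bandwidth one, and the names `markov_matrix`, `graph_laplacian`, `carre_du_champ`, and `sigma` are hypothetical, chosen only for illustration.

```python
import numpy as np

def markov_matrix(points, sigma):
    """Row-normalised Gaussian kernel: a discrete diffusion (Markov chain) on the data.
    Assumption: fixed bandwidth sigma, used here only for simplicity."""
    diffs = points[:, None, :] - points[None, :, :]
    sq_dists = np.sum(diffs ** 2, axis=-1)
    kernel = np.exp(-sq_dists / (2.0 * sigma ** 2))
    return kernel / kernel.sum(axis=1, keepdims=True)

def graph_laplacian(P, sigma):
    """Graph Laplacian L = 2 (P - I) / sigma^2, scaled so that it
    approximates the Laplacian for small sigma."""
    return 2.0 * (P - np.eye(P.shape[0])) / sigma ** 2

def carre_du_champ(L, f, h):
    """Carre du champ: Gamma(f, h) = (L(fh) - f L(h) - h L(f)) / 2.
    On a manifold this plays the role of the pointwise inner product of gradients."""
    return 0.5 * (L @ (f * h) - f * (L @ h) - h * (L @ f))

# Toy data (illustrative only): 400 evenly spaced points on the unit circle.
theta = np.linspace(0.0, 2.0 * np.pi, 400, endpoint=False)
points = np.stack([np.cos(theta), np.sin(theta)], axis=1)

sigma = 0.1
P = markov_matrix(points, sigma)
L = graph_laplacian(P, sigma)

# For f = x restricted to the circle, |grad f|^2 = sin(theta)^2.
f = points[:, 0]
gamma_ff = carre_du_champ(L, f, f)
expected = np.sin(theta) ** 2
max_error = np.max(np.abs(gamma_ff - expected))
```

On this toy circle, `gamma_ff` should track `sin(theta)**2` closely, with a small bias that shrinks as `sigma` decreases (provided the sampling stays dense relative to `sigma`). The dense `n × n` kernel is only reasonable for small point clouds; a sparse nearest-neighbour kernel would be the natural substitute at scale.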
