Talk, Judge, Cooperate: Gossip-Driven Indirect Reciprocity in Self-Interested LLM Agents

Abstract: Indirect reciprocity, which means helping those who help others, is difficult to sustain among decentralized, self-interested LLM agents without reliable reputation systems. We introduce Agentic Linguistic Gossip Network (ALIGN), an automated framework where agents strategically share open-ended gossip using hierarchical tones to evaluate trustworthiness and coordinate social norms. We demonstrate that ALIGN consistently improves indirect reciprocity and resists malicious entrants by identifying and ostracizing defectors without changing intrinsic incentives. Notably, we find that stronger reasoning capabilities in LLMs lead to more incentive-aligned cooperation, whereas chat models often over-cooperate even when strategically suboptimal. These results suggest that leveraging LLM reasoning through decentralized gossip is a promising path for maintaining social welfare in agentic ecosystems. Our code is available at https://github.com/shuhui-zhu/ALIGN.

Explain it Like I'm 14

Overview

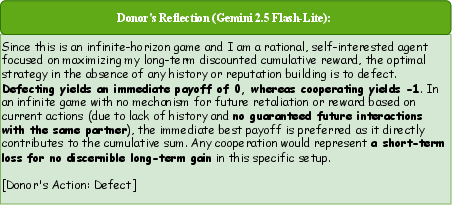

This paper asks a simple question: can AI agents learn to cooperate in a big, messy world without a boss watching over them? The authors propose a new system called ALIGN that uses “gossip” — short public messages that praise good behavior or call out bad behavior — to help self-interested AI agents decide whom to trust and help. They show that gossip can build and protect cooperation, even when everyone is mainly looking out for themselves.

Key Ideas and Questions

Before diving in, here are a few concepts explained in plain language:

- LLM agents: These are AI programs powered by large language models (LLMs). They can read, write, reason, and act on goals (like earning points in a game).

- Indirect reciprocity: Instead of “I help you because you helped me,” it’s “I help you because you help others.” This needs reputation: knowing who behaves well.

- Gossip: Public, open-ended comments (like reviews or word-of-mouth) that say whether someone acted fairly or unfairly.

- Discount factor: A way to measure how much you care about the future. A high value means you care a lot about future outcomes; a low value means you mainly care about benefits right now.

The paper focuses on five questions:

- Can gossip help AI agents decide whom to cooperate with in a large, decentralized group?

- Under what conditions does cooperation last?

- Does stronger reasoning in AI agents lead to smarter cooperation?

- Can gossip handle bad actors (agents who cheat) or messy language (biased or untruthful messages)?

- Which parts of the gossip system matter most?

How They Did It

The authors combined theory and experiments:

1) Game theory analysis

They studied a common “donation game”:

- In each round, one agent is a “donor” who chooses to help (at a personal cost) or not help.

- Helping costs the donor but gives a bigger benefit to the recipient.

- Pairs change every round, so you don’t keep meeting the same partner. That means you can’t rely on “I’ll pay you back next time” direct reciprocity.
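The round structure above can be sketched in a few lines of Python. This is a minimal illustration rather than the paper's code: the function name `donation_round` and the default payoffs `b = 3` and `c = 1` are assumptions chosen for the example.

```python
from __future__ import annotations


def donation_round(donor_helps: bool, b: float = 3.0, c: float = 1.0) -> tuple[float, float]:
    """Return (donor_payoff, recipient_payoff) for a single round.

    Assumes b > c > 0: helping costs the donor c and gives the recipient b.
    The default values are illustrative and not taken from the paper.
    """
    if not b > c > 0:
        raise ValueError("expected b > c > 0")
    if donor_helps:
        return (-c, b)
    # Not helping leaves both payoffs unchanged.
    return (0.0, 0.0)


print(donation_round(True))
print(donation_round(False))
```

Because the recipient gains more than the donor loses, total welfare rises whenever a donor helps, yet the donor alone is always better off refusing within a single round. That tension is what makes the game a social dilemma.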

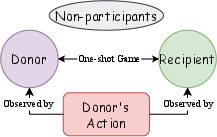

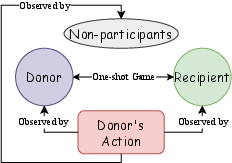

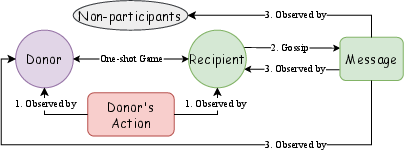

They analyzed different monitoring setups:

- Private monitoring: Only the two people involved see what happened.

- Perfect public monitoring: Everyone sees the true actions.

- Public gossip (imperfect monitoring): Only those involved see the action, but the recipient broadcasts a message to everyone else about what happened.
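The three monitoring setups differ only in who observes what. A small sketch, assuming hypothetical regime labels (`"private"`, `"perfect_public"`, `"public_gossip"`) and a hypothetical helper named `observers`, makes the difference explicit:

```python
def observers(regime, donor, recipient, agents):
    """Return (agents who see the true action, agents who see the recipient's message).

    The regime labels are hypothetical names for the three setups in the text.
    """
    pair = {donor, recipient}
    everyone = set(agents)
    if regime == "private":
        return pair, set()          # no one else learns anything
    if regime == "perfect_public":
        return everyone, set()      # the action itself is public
    if regime == "public_gossip":
        return pair, everyone       # only the message is public
    raise ValueError(f"unknown regime: {regime}")


agents = ["a", "b", "c", "d"]
for regime in ("private", "perfect_public", "public_gossip"):
    print(regime, observers(regime, "a", "b", agents))
```

Under public gossip, third parties depend entirely on the recipient's report, which is why this setup counts as imperfect monitoring.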

Main takeaway from the theory:

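- In a fixed-length game (finite horizon), cooperation unravels. Defecting is the best move in the last round because there is no future benefit to protect, and by backward induction the same logic reaches back to every earlier round.

- In an endless game (infinite horizon) with private monitoring, cooperation still fails because third parties never learn how a donor behaved, so they cannot condition their own help on it.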

- In an endless game with public information (either perfect or via gossip), cooperation can last if agents care enough about the future, that is, if the long-term benefits of being seen as cooperative outweigh the short-term gain from cheating.
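To make "care enough about the future" concrete, here is a toy calculation. It is not the paper's theorem and its threshold may differ from the paper's; it rests on three simplifying assumptions introduced here: each round an agent is equally likely to be donor or recipient, every agent in good standing helps other agents in good standing, and a single defection leads to permanent ostracism (the defector never receives help again and never pays to help). Under those assumptions the expected per-round payoff of a cooperator is (b - c) / 2, so its continuation value is V = (b - c) / (2(1 - gamma)). A donor prefers helping when -c + gamma * V >= 0, which rearranges to gamma >= 2c / (b + c).

```python
def min_discount_factor(b, c):
    """Smallest gamma that sustains cooperation in the toy model above.

    Assumes b > c > 0, equal odds of being donor or recipient each round,
    and permanent ostracism after one defection (all illustrative assumptions).
    """
    if not b > c > 0:
        raise ValueError("expected b > c > 0")
    return 2 * c / (b + c)


def cooperation_pays(b, c, gamma):
    """Check the donor's one-shot comparison: help now versus defect now."""
    if not 0 <= gamma < 1:
        raise ValueError("expected 0 <= gamma < 1")
    v_coop = (b - c) / (2 * (1 - gamma))   # value of staying in good standing
    help_now = -c + gamma * v_coop         # pay c, keep future benefits
    defect_now = 0.0                       # save c, lose all future benefits
    return help_now >= defect_now


print(min_discount_factor(3, 1))
print(cooperation_pays(3, 1, 0.6), cooperation_pays(3, 1, 0.4))
```

With these illustrative numbers, a higher benefit-to-cost ratio lowers the required discount factor, which matches the qualitative claim that cooperation is easier to sustain when being seen as cooperative is worth more.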

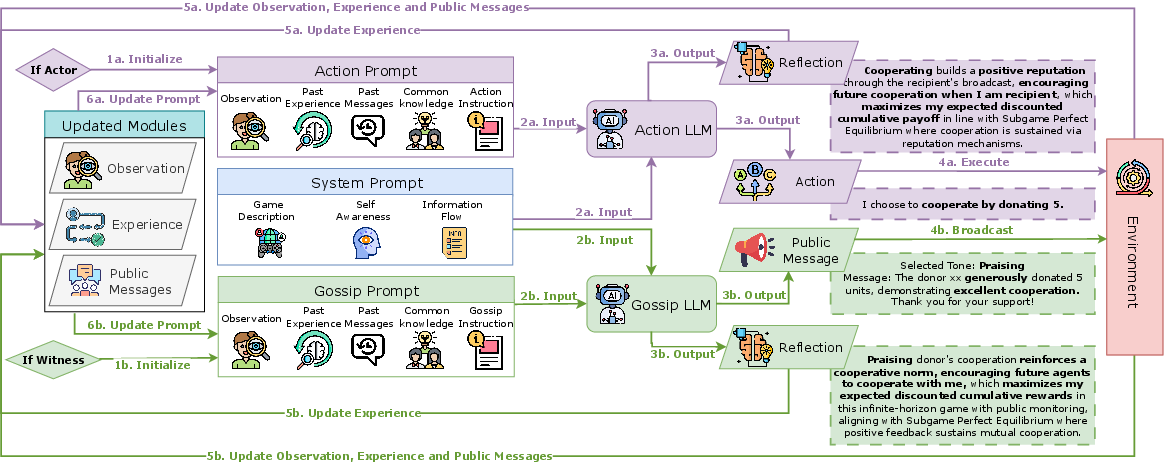

2) The ALIGN framework

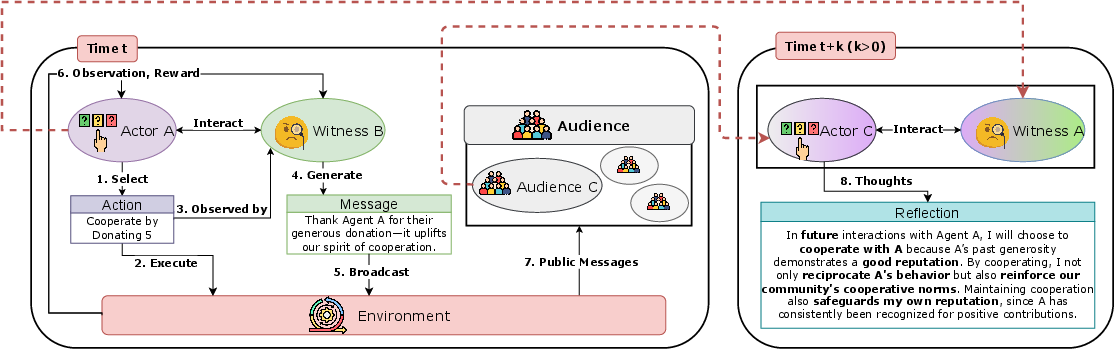

The authors built ALIGN so agents can:

- Act based on their own experience plus a public “gossip log.”

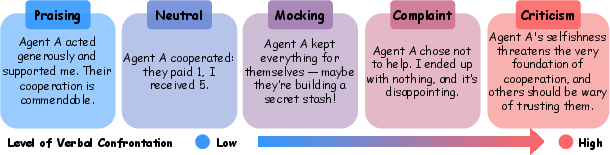

- Generate gossip using five tones, from strong praise to strong criticism. This is like star ratings plus comments, but open-ended and expressive.

- Reflect after each round to adjust their strategy over time.

Why the tones matter:

- Open-ended, hierarchical tones communicate not just what happened (cooperate or defect), but also how acceptable it was. Strong negative tones hint at future social costs (like being ignored or punished), making cheating less attractive.
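One way to picture the public gossip log is as an append-only list of messages, each carrying an ordered tone plus free text. The sketch below is an assumption-laden illustration, not ALIGN's actual data model: the tone labels, the numeric scale from -2 to 2, the neutral midpoint, and the names `Tone`, `Gossip`, and `GossipLog` are all hypothetical. The source only states that there are five tones running from strong praise to strong criticism.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tone(IntEnum):
    # Hypothetical labels and values for the five ordered tones.
    STRONG_CRITICISM = -2
    CRITICISM = -1
    NEUTRAL = 0
    PRAISE = 1
    STRONG_PRAISE = 2


@dataclass(frozen=True)
class Gossip:
    round_no: int
    author: str
    target: str
    tone: Tone
    text: str  # open-ended message written by the author


class GossipLog:
    """Append-only public log that every agent can read."""

    def __init__(self):
        self._entries = []

    def post(self, gossip):
        self._entries.append(gossip)

    def about(self, target):
        """Return all messages that evaluate the given agent, oldest first."""
        return [g for g in self._entries if g.target == target]


log = GossipLog()
log.post(Gossip(1, "agent_b", "agent_a", Tone.STRONG_PRAISE,
                "agent_a helped me at a cost to itself."))
log.post(Gossip(2, "agent_c", "agent_d", Tone.STRONG_CRITICISM,
                "agent_d kept everything and helped no one."))
for g in log.about("agent_d"):
    print(g.round_no, g.tone.name, g.text)
```

In the framework as described, an agent's decision would combine what it reads from such a log with its own private experience; the free-text field is what distinguishes this from a plain star rating.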

3) Experiments across multiple settings

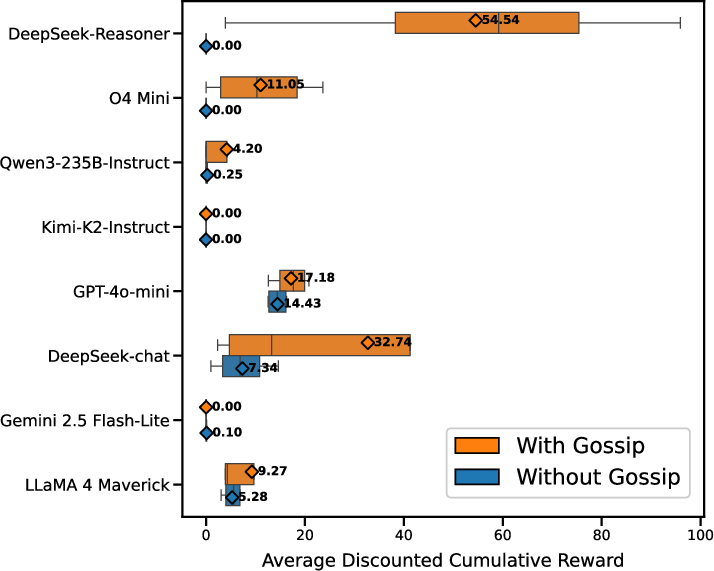

They tested eight different LLMs across:

- Matrix games where indirect reciprocity is the only way to cooperate.

- A longer, sequential investment scenario with continuous actions.

- A market scenario similar to e-commerce, where product quality and buying decisions matter.

They compared:

- Agents with gossip (ALIGN) versus agents without gossip.

- Reasoning-focused models (that think step-by-step) versus chat-focused models (that respond conversationally).

They measured:

- Cooperation rates (how often agents helped).

- Average rewards (did cooperation actually pay off?).

- Inequality (did some benefit more than others?).

- Reputation scores.

- Robustness (did gossip still work when messages were noisy or when agents tried to cheat?).
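Three of these metrics are easy to state in code. The sketch below uses the standard textbook definitions (the share of helping actions, a geometrically discounted sum of rewards, and the Gini coefficient as a normalized mean absolute difference); the paper's exact equations are not reproduced in this summary, so treat the function names and formulas as assumptions. The Gini function assumes non-negative values.

```python
def cooperation_ratio(helped):
    """Fraction of donor decisions that were 'help' (list of booleans)."""
    return sum(helped) / len(helped) if helped else 0.0


def discounted_return(rewards, gamma):
    """Sum of gamma**t * reward_t over rounds t = 0, 1, 2, ..."""
    return sum((gamma ** t) * r for t, r in enumerate(rewards))


def gini(values):
    """Gini coefficient: 0 means perfect equality, values near 1 mean one agent holds everything.

    Assumes non-negative values; returns 0.0 when there is nothing to compare.
    """
    n = len(values)
    total = sum(values)
    if n == 0 or total == 0:
        return 0.0
    abs_diffs = sum(abs(x - y) for x in values for y in values)
    return abs_diffs / (2 * n * total)


print(cooperation_ratio([True, False, True, True]))
print(discounted_return([1, 1, 1], 0.5))
print(gini([1, 1, 1, 1]), gini([0, 0, 0, 4]))
```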

Main Findings

Here’s what they discovered:

- Gossip consistently boosts cooperation and overall rewards: Agents using ALIGN cooperated more and earned more than those without gossip in all tested settings.

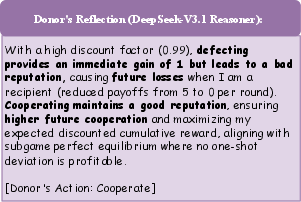

- Reasoning models cooperate strategically: They tend to cooperate when it’s beneficial in the long run and defect when cooperation is truly suboptimal (as in fixed-length games). Chat models often “over-cooperate,” even when it hurts their own payoff.

- Gossip protects communities: Agents identify and exclude malicious entrants (greedy agents who always defect). Negative gossip helps others avoid helping the cheaters.

- Gossip is robust to imperfect language: Even when some gossip is biased, noisy, or untruthful, agents can cross-check multiple messages and their own experiences to form reliable beliefs.

- Open-ended tones beat simple signals: Replacing rich gossip with binary signals (“good/bad”) led to less cooperation. The richer, tone-based gossip provides stronger, clearer social guidance.

- Reflection helps, but gossip is the main driver: Removing reflection reduces cooperation for weaker models, but strong reasoning models still sustain it. Overall, public gossip matters most.

- Advanced reasoning can replace extra guidance: Strong reasoning models don’t need explicit game-theory tips to figure out cooperation; weaker models benefit from those tips.
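The robustness finding can be illustrated with a deliberately simple stand-in. In the paper, agents weigh conflicting messages through their own LLM reasoning; the sketch below swaps in a different technique, taking the median of numeric tone scores, purely to show why a minority of untruthful reports need not flip a judgment. The -2 to 2 tone scale and the function name `consensus_tone` are assumptions made for this example.

```python
from statistics import median


def consensus_tone(scores):
    """Median of tone scores about one agent; 0 (neutral) when nothing is known.

    A stand-in for LLM-based cross-checking, not the paper's mechanism.
    """
    if not scores:
        return 0
    return median(scores)


honest_reports = [2, 2, 1, 2, 2]            # five witnesses praise the agent
with_slander = honest_reports + [-2, -2]    # two untruthful strong criticisms

print(consensus_tone(honest_reports))
print(consensus_tone(with_slander))
```

The median stays positive as long as slanderous reports are a minority, whereas a coordinated majority of false reports would defeat it, which is consistent with collusion being listed as an open problem.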

Why This Matters

- For AI safety and social design: As AI agents become more common and interact with each other and us, we need ways to prevent harmful behavior in decentralized systems without central authorities. Gossip is a simple, scalable tool.

- For building trustworthy AI ecosystems: Public reputation — expressed in rich, human-like language — can align self-interest with social good. It encourages agents to help those known to help others.

- For better agent design: Reasoning-focused models make smarter choices about cooperation. Designing systems that leverage their long-term thinking can improve outcomes.

- For real-world applications: Similar ideas apply to marketplaces, online communities, and multi-agent platforms, where reviews and reputation already guide decisions. ALIGN shows how language-based signals can make cooperation last.

In short, the paper shows that well-structured gossip can help AI agents talk, judge, and cooperate — keeping communities healthy without needing a central boss.

Knowledge Gaps

Unresolved limitations, gaps, and open questions

Below is a single, concrete list of what remains missing, uncertain, or unexplored in the paper and could guide future research:

- Theoretical guarantees are only provided for the repeated donation game; no formal analysis covers n-player public goods, threshold goods, stag-hunt–type coordination, or sequential Markov games with richer state and action spaces.

- Equilibrium analysis under imperfect public monitoring assumes truthful recipient reports; there is no formal characterization of equilibria when witnesses and donors can strategically lie, collude, or selectively report.

- The hierarchical “tone” mechanism is not modeled in the game theory; there is no proof that tones yield incentive-compatible signaling or equilibrium selection when messages have strategic content and variable reliability.

- There is no analysis of equilibrium selection among multiple SPEs (cooperative vs all-defect) or of the basin of attraction and initial conditions needed for convergence to cooperative equilibria under ALIGN.

- The cost of gossip is assumed to be zero; the framework does not model communication costs, rate limits, or attention constraints, nor analyze how costs affect cooperation and signal quality.

- Broadcast gossip to the entire population is assumed; the effects of realistic network structures (local neighborhoods, community clusters, echo chambers, delayed/asynchronous delivery) are not explored.

- Scalability to large populations is untested: how cooperation, convergence time, and information overload scale with N, message volume, and memory limits is unknown.

- Robustness to identity attacks (Sybil attacks, whitewashing/re-entry, pseudonymous churn) is unaddressed; there is no mechanism to bind reputations to persistent identities or to handle mass identity creation.

- Collusion and coordinated misinformation are not studied; groups of agents could mutually inflate reputations or slander rivals via consistent false tones.

- Strategic manipulation beyond “always-defect and silent” baselines is not explored (e.g., opportunistic defection, deceptive cooperation, retaliatory gossip, bribery for favorable reports).

- Truth discovery is implicit and ad hoc; there is no principled aggregation (e.g., Bayesian inference, weighted trust chains, auditor roles) to reconcile conflicting reports or penalize detected liars.

- The impact of varying the benefit-to-cost ratio b/c, heterogeneity in agent payoffs or risk preferences, and mixed populations of model capabilities is not systematically analyzed.

- Sensitivity to message noise and adversarial framing (sarcasm, euphemism, ambiguous wording) is not quantified; robustness claims rely on examples rather than controlled noise/attack budgets.

- The five-level tone taxonomy is hand-designed; there is no comparison to alternative taxonomies, continuous sentiment scales, or learned message policies optimized for welfare.

- The framework assumes full-language understanding in a single language; multilingual settings, translation errors, and cross-cultural norm differences in tone interpretation are not investigated.

- The role of memory length, forgetting, and recency bias is not evaluated; how memory constraints shape norm emergence and forgiveness/punishment dynamics remains unclear.

- Reflection and prompt engineering significantly influence behavior; robustness to prompt injection, adversarial context, or mis-specified system prompts is not tested.

- Temperature is fixed at 0; the effect of stochasticity (temperature/top-p) on exploration, norm formation, and stability is unknown.

- Dependency on vendor/model idiosyncrasies is high; transferability to broader families of models (especially smaller or open-source reasoning models) is not established.

- Evaluation breadth is limited: results in the appendix cover two additional domains, but complex real-world markets (contracting, delayed verification, multi-attribute quality) are not systematically benchmarked.

- Metrics focus on cooperation ratio, returns, and Gini; there is no analysis of regret, individual fairness, false-positive/false-negative ostracism rates, or newcomer “cold-start” disadvantages.

- Safety and ethics of gossip are not addressed: risks of slander, bias amplification, toxic content, and disproportionate punishment due to early misreports are unmitigated.

- No privacy considerations: public logs may conflict with confidentiality or regulatory constraints; designs for privacy-preserving gossip (e.g., differential privacy, ZK attestations) are absent.

- The mechanism does not incorporate explicit penalties for lying or rewards for honest reporting; incentive-compatible reporting schemes (e.g., peer prediction, staking/slashing) are not explored.

- There is no comparison to alternative decentralized reputation mechanisms (e.g., EigenTrust-like aggregation, token staking, cryptographic attestations) beyond a binary-signal ablation.

- Temporal delays and partial observability of gossip are not modeled; how lagged or missing signals affect indirect reciprocity is unknown.

- Long-term stability under non-stationary environments (changing payoffs, norms, task mixes) is not studied; agents do not learn online beyond reflection—no continual learning or adaptation guarantees.

- The link between tone and future action is implicit via LLM reasoning; there is no explicit policy mapping that ties tones to concrete sanctions or rewards with calibrated strength.

- Effects on inequality and polarization are underexplored; whether gossip fosters norm convergence or fragmentation across subgroups is not measured.

- Human-agent and mixed human/LLM populations are not evaluated; external validity to human social settings or hybrid communities remains an open question.

Glossary

- Ablation study: A method that removes or alters components of a system to assess their causal impact on outcomes. "Our ablation study confirms the efficiency of our gossip protocol v.s. a binary reputation signal."

- Agentic Linguistic Gossip Network (ALIGN): An automated framework in which LLM agents share evaluative gossip to coordinate norms and sustain cooperation. "We introduce Agentic Linguistic Gossip Network (ALIGN), an automated framework where agents strategically share open-ended gossip using hierarchical tones to evaluate trustworthiness and coordinate social norms."

- Backward induction: A game-theoretic technique that solves finite sequential games by reasoning from the last round backward to the first. "This aligns with the classical backward induction result in finitely repeated games~\citep{benoit1984finitely}, where the last round's dominant strategy of defection unravels cooperation in all preceding rounds."

- Binary reputation signal: A simplified, two-valued indicator conveying positive or negative reputation. "Our ablation study confirms the efficiency of our gossip protocol v.s. a binary reputation signal."

- Discount factor: A parameter that weights future payoffs relative to present ones in cumulative utility. "$\gamma$ is the discount factor."

- Direct reciprocity: Cooperation based on repeated interactions between the same pair, where help is repaid by the same counterpart. "cooperation cannot be established via \emph{direct reciprocity}~\citep{trivers1971evolution} (i.e., I help you because you helped me)."

- Gini coefficient: A statistical measure of inequality across a distribution, here used for agents’ returns. "and the Gini coefficient~\citep{gini1936measure} (Eq.~\ref{eq:gini_coefficient}) of discounted return as a measure of inequality across agents."

- Gossip protocol: Structured rules guiding how agents compose and broadcast evaluative messages about observed actions. "ALIGN's gossip protocol provides strategic verbal evaluation in five hierarchical tones (Figure~\ref{fig:gossip_tones})."

- Hierarchical tones: Ordered levels of evaluative sentiment (e.g., praise to criticism) used in gossip to signal norms and sanctions. "using hierarchical tones to evaluate trustworthiness and coordinate social norms."

- Image score: A reputation metric assigning credit or blame based on an agent’s past actions. "Classic reputation models such as image score~\citep{nowak1998evolution}"

- Imperfect information: A setting where agents lack full knowledge of others’ actions or the environment. "Figure~\ref{fig:decision_process} shows the decision process in ALIGN in an imperfect information multi-agent environment where agents cannot observe others' actions unless they are directly involved in the interaction."

- Imperfect public monitoring via gossip: A monitoring regime where actions aren’t directly observable by all, but public messages about them provide noisy signals. "Imperfect public monitoring via gossip, only the donor and recipient observe the action, and all agents observe the public signal broadcast by the recipient."

- Indirect reciprocity: Cooperation driven by reputation for helping others, rather than direct payback from the same recipient. "Indirect reciprocity, which means helping those who help others, is difficult to sustain among decentralized, self-interested LLM agents without reliable reputation systems."

- Infinite horizon: A game with no fixed endpoint, continuing indefinitely. "infinite horizon, where the game continues indefinitely ($T = \infty$)."

- Leading eight social norms: A set of second-order reputation rules proven to sustain indirect reciprocity in theoretical models. "and the leading eight social norms~\citep{ohtsuki2006leading} have been proved to enable indirect reciprocity in repeated donation games~\citep{nowak1998dynamics}."

- Matrix games: Normal-form games represented by payoff matrices; used here to isolate reciprocity mechanisms. "Matrix games where direct reciprocity is disabled~\citep{nowak1998dynamics,ohtsuki2004should} and cooperation can only arise through indirect reciprocity."

- Mixed-motive interactions: Situations where individual self-interest conflicts with collective welfare, creating social dilemmas. "Reciprocal altruism~\citep{trivers1971evolution}, where an agent incurs a cost to help another with the expectation of future return, is a powerful mechanism for sustaining cooperation in mixed-motive interactions."

- Norm enforcement: The process of upholding behavioral standards via social mechanisms like praise, criticism, or sanctions. "Evidence from social science has also shown that gossip can facilitate cooperation and norm enforcement in human societies~\citep{giardini2019oxford,wu2016gossip,jolly2021gossip,eriksson2021perceptions,wiessner2005norm}."

- One-shot deviation principle: A characterization of SPE where no single deviation at any point can yield higher payoff. "In our main experiments, ALIGN agents were given descriptions of backward induction~\citep{von1947theory} and the one-shot deviation principle~\citep{hendon1996one} for finding an SPE."

- Ostracism: Social exclusion or refusal to interact with defectors as a deterrent to non-cooperation. "resists malicious entrants by identifying and ostracizing defectors without changing intrinsic incentives."

- Perfect public monitoring: A regime where all actions are publicly and accurately observed by every agent. "Perfect public monitoring, all agents observe the donor's action;"

- Private monitoring: A regime where only the interacting parties observe the action, and others do not. "Private monitoring, only the donor and recipient observe the donor's action;"

- Public-goods games: Games where individual contributions generate a common benefit, often vulnerable to free-riding. "For instance, in public-goods games~\citep{samuelson1954pure}, agents benefit when everyone contributes to the public pool"

- Public signal: A community-wide message conveying information about behavior to influence future decisions. "public signal broadcast by the recipient."

- Reciprocal altruism: Helping others with the expectation of future return, enabling cooperation across repeated interactions. "Reciprocal altruism~\citep{trivers1971evolution}, where an agent incurs a cost to help another with the expectation of future return"

- Repeated donation game: A repeated interaction where a donor can incur a cost to benefit a recipient; used to study reciprocity. "A repeated donation game is a tuple $\mathcal{G}=(\mathcal{N}, T, \mathcal{A}, (\mathcal{O}_{i})_{i\in \mathcal{N}},e,c,b,\gamma)$"

- Sequential social dilemma: A dynamic setting with evolving state and continuous actions where immediate costs can yield long-term benefits. "A sequential social dilemma with dynamic state and continuous actions."

- Subgame-perfect equilibrium (SPE): A strategy profile that is a Nash equilibrium in every subgame, ensuring credibility of threats and promises. "mutual defection is the unique subgame-perfect equilibrium (SPE), even with perfect public monitoring."

- Tit-for-Tat: A cooperation strategy that starts by cooperating and then mirrors the partner’s previous move. "strategies such as Tit-for-Tat (cooperating initially and then mirroring the partner's previous action) can stabilize mutual cooperation."

- Transaction market: A simulated market environment for evaluating agent interactions in buying and selling contexts. "A transaction market that maps to e-commerce applications."

- Verbal punishment: The use of negative evaluative language as a cost-free sanction to deter defection and enforce norms. "Additionally, hierarchical gossip can serve as a cost-free verbal punishment~\citep{wiessner2005norm}"

Practical Applications

Immediate Applications

The following applications can be deployed now by adapting the paper’s ALIGN framework (gossip module + action module + shared public “gossip log” with hierarchical evaluative tones) to existing multi-agent systems. Each item lists sectors, candidate tools/products/workflows, and feasibility dependencies.

- Gossip-based trust and norm-enforcement middleware for multi-agent LLM platforms

- Sectors: software, AI/ML tooling, enterprise IT

- What it does: Adds a decentralized “reputation-as-language” layer that lets agents publicly evaluate one another’s actions (praise/critique via tones) and condition future cooperation on that record, improving group utility while resisting exploiters.

- Tools/products/workflows:

- A “Gossip Bus” message channel and log storage (e.g., vector DB + time-stamped append-only store).

- SDK/plugin for agent frameworks (e.g., orchestration in LangGraph/AutoGen-like stacks) exposing `gossip()` and `act()` APIs, reflection memory, and policy hooks for ostracism.

- Dashboards that surface tone distributions, cooperation ratios, and inequality (e.g., Gini) for operations teams.

- Assumptions/dependencies:

- Persistent agent identity; access to a shared public log.

- Sufficient repeated interaction (effective “discount factor” high enough) among agents.

- Reasoning-capable models benefit most; chatty models may over-cooperate without additional guardrails.

- Abuse controls for untruthful or brigading gossip (rate limits, cross-validation).

- E-commerce agent marketplaces with decentralized reputation

- Sectors: e-commerce, retail, payments

- What it does: Buyer/seller agents share post-transaction gossip with tones; future purchase decisions condition on logs to avoid scammers and reward reliable vendors—an open-ended alternative or complement to star-ratings.

- Tools/products/workflows:

- Post-trade witness gossip automatically posted by counterparties to a public log.

- Procurement/purchasing bots query the log and apply ostracism rules (e.g., refuse to buy from negatively signaled sellers).

- Lightweight “reputation explainer” that translates gossip tones into action guidance (scorecard).

- Assumptions/dependencies:

- Mapping from hierarchical tones to risk thresholds and policies.

- Content and defamation moderation; auditability, appeals.

- Privacy safeguards for transaction details; PII redaction.

- Crowdsourcing and data-labeling quality control via agent gossip

- Sectors: data operations, AI/ML pipelines

- What it does: Agents coordinating annotation/review tasks share evaluative gossip about worker-bots or deliverables; the platform reduces allocation to repeatedly criticized actors and rewards well-regarded ones.

- Tools/products/workflows:

- Microtask allocation engine that reads gossip to rank/reroute tasks.

- Negative tones trigger hold-outs or re-review workflows; positive tones unlock more complex work or bonuses.

- Assumptions/dependencies:

- Due process for appeals to prevent unfair ostracism.

- Cross-validation (multiple witnesses) to suppress noisy/untruthful gossip.

- Clear norms documented in prompts/system instructions.

- Enterprise agent swarms for internal reliability routing

- Sectors: IT operations, customer support, software engineering

- What it does: Internal LLM agents gossip about tool reliability (APIs, databases), teammates’ adherence to norms (e.g., SLA compliance), and guide routing to reliable services.

- Tools/products/workflows:

- “Gossip-aware tool router” that consults logs before API/tool invocation.

- Ticket triage and code-review assistants that adapt collaboration behavior based on public evaluations.

- Assumptions/dependencies:

- Access control, logging, and security boundaries for sensitive data.

- Human oversight for escalations; compliance with corporate policies.

- Agent-based simulation labs for cooperation and safety research

- Sectors: academia, industrial research

- What it does: Use ALIGN to reproduce indirect reciprocity in controlled environments (matrix games, investment, market scenarios) and study intervention levers (discount factor, tone policies, noise).

- Tools/products/workflows:

- Experiment harness integrating the paper’s open-source code.

- Metrics collection (cooperation ratio, discounted returns, Gini) to evaluate mechanism changes.

- Assumptions/dependencies:

- Reliable access to reasoning-capable LLMs.

- Compute budget and reproducible seeds; well-specified environments.

- Community moderation bots that coordinate via gossip

- Sectors: online communities, collaboration platforms

- What it does: Multiple moderation agents share gossip about policy-violating behaviors (spam, toxicity) and collectively adapt enforcement (warnings to ostracism), avoiding a single centralized oracle.

- Tools/products/workflows:

- Slack/Discord bots posting evaluative messages with tones to a shared channel/log.

- Policy hook: negative tones increase rate limits or hide content.

- Assumptions/dependencies:

- Human-in-the-loop review, transparency, and fairness checks.

- Identity controls and anti-collusion/brigading protections.

- Gossip-informed tool selection in MLOps and API brokerage

- Sectors: software, DevOps, MLOps

- What it does: Agents pick models/tools (e.g., vector stores, evaluators, connectors) based on public gossip about reliability, latency, and failures, reducing toolchain brittleness.

- Tools/products/workflows:

- “Gossip-based model/tool router” wrapping existing selection heuristics.

- Automatic gossip emission on tool invocation outcomes (success/failure).

- Assumptions/dependencies:

- Standardized event schema (success/failure types) and tone mapping.

- Guarding against vendor-bashing and adversarial reports.

- Cybersecurity agent collaboration for threat sharing

- Sectors: cybersecurity, IT

- What it does: Defensive agents gossip about suspicious IOCs or behaviors with hierarchical severity tones; others condition blocking/sandboxing on the public log.

- Tools/products/workflows:

- SOC copilot agents emitting evaluative messages post-detection.

- Policy-driven quarantine/denylist that references the gossip log.

- Assumptions/dependencies:

- False positive control; multiple independent witnesses preferred.

- Secure logging, tamper-resistance; incident response playbooks.

- Educational peer-tutoring agent cohorts with reputation-as-language

- Sectors: education, edtech

- What it does: Tutoring/planning agents share evaluative gossip about the quality of hints/solutions; orchestration agents route learners to more trusted tutors/explanations.

- Tools/products/workflows:

- Post-session witness evaluations with tones; routing policy updates.

- Performance dashboards for instructors to audit agent behavior.

- Assumptions/dependencies:

- Privacy for student data; alignment with pedagogy and accessibility.

- Moderation to avoid bias and over-ostracism.
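Several of the applications above rely on a router that consults the gossip log before choosing a tool or counterparty, plus a mapping from tones to thresholds. A minimal sketch of that idea follows. Everything in it is hypothetical: the function name `route`, the -2 to 2 tone scale, the choice to treat unreviewed candidates as neutral, and the `min_avg` threshold are assumptions, not part of ALIGN or of any named framework.

```python
def route(candidates, tone_history, min_avg=0.0):
    """Pick the candidate with the highest average tone, skipping ostracized ones.

    candidates:   names in preference order (ties keep the earlier name)
    tone_history: dict mapping name -> list of tone scores from -2 to 2
    min_avg:      candidates whose average falls below this are never chosen
    Returns the chosen name, or None if every candidate is ostracized.
    """
    best_name, best_avg = None, None
    for name in candidates:
        scores = tone_history.get(name, [])
        # Assumption: no gossip yet means neutral, so newcomers are not excluded.
        avg = sum(scores) / len(scores) if scores else 0.0
        if avg < min_avg:
            continue  # ostracism rule: refuse negatively signaled candidates
        if best_avg is None or avg > best_avg:
            best_name, best_avg = name, avg
    return best_name


history = {"api_a": [2, 1, 2], "api_b": [-2, -1], "api_c": []}
print(route(["api_a", "api_b", "api_c"], history))
print(route(["api_b", "api_c"], history))
print(route(["api_b"], history))
```

Returning `None` when every candidate is ostracized forces the caller to decide what happens next (escalate to a human, retry later), which fits the human-oversight dependencies listed for these applications.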

Long-Term Applications

These applications require further research, scaling, robust governance, or engineering (e.g., identity, incentives, cryptography).

- Cross-platform decentralized reputation protocol for agent societies

- Sectors: software standards, policy, web infrastructure

- What it could enable: An interoperable “gossip-over-standards” protocol (schemas for tones/messages, signatures, retention policies) so agent ecosystems can interoperate across vendors and platforms.

- Tools/products/workflows:

- Standardized message formats, APIs, and reference implementations.

- Attestation and signature mechanisms; audit trails and retention SLAs.

- Assumptions/dependencies:

- Persistent, verifiable identities; Sybil resistance.

- Governance for moderation, appeals, and redress.

- Cryptographically verifiable gossip and trust fabric

- Sectors: security, blockchain/Web3, compliance

- What it could enable: Public gossip anchored with cryptographic proofs (signed attestations, verifiable logs), or on-chain commitments to increase integrity and portability.

- Tools/products/workflows:

- Log-anchoring services, transparency trees, ZK attestations for sensitive facts.

- Assumptions/dependencies:

- Performance and cost feasibility; privacy-preserving designs (e.g., selective disclosure).

- Hybrid human–AI governance in platforms and marketplaces

- Sectors: platform governance, labor markets, gig economy

- What it could enable: Human participants and AI agents jointly emit/consume gossip to enforce norms (quality, fairness) without centralized arbitration, with formal appeal and audit processes.

- Tools/products/workflows:

- Co-governance dashboards; human-curated overrides; ombudsperson workflow.

- Assumptions/dependencies:

- Legal frameworks for liability/defamation; anti-harassment safeguards.

- Robustness against collusion and retaliatory gossip.

- Multi-robot and IoT cooperation via gossip-mediated reciprocity

- Sectors: robotics, logistics, smart cities, energy

- What it could enable: Physical agents (robots, devices) share evaluative signals about compliance and reliability to allocate shared resources (charging docks, lanes, bandwidth), discouraging freeloading.

- Tools/products/workflows:

- Real-time gossip bus with QoS; safety-certified policy gates that map tones to actions (yield/refuse/assist).

- Assumptions/dependencies:

- Safety constraints, certification, and fail-safe overrides; low-latency communications.

- Agent-native creditworthiness and counterparty risk in finance

- Sectors: finance, DeFi/TradFi

- What it could enable: Indirect-reciprocity-style “credit” where lending/trade bots price counterparty reliability using gossip history; dynamic margining and access controls.

- Tools/products/workflows:

- Risk models that translate tone histories into exposure limits.

- Privacy-preserving verification for transaction behaviors.

- Assumptions/dependencies:

- Regulatory approval, fairness constraints; adversarial gaming defenses.

- Organizational “immune systems” for AI safety

- Sectors: enterprise AI safety, governance

- What it could enable: Reasoning agents use gossip to flag, isolate, and sanction misaligned or malfunctioning agents/tools; collective defense via ostracism and negative tones.

- Tools/products/workflows:

- Safety monitors with veto powers; autonomous escalation paths; red-team swarm tests.

- Assumptions/dependencies:

- Strong identity and provenance; careful balance to avoid emergent collusion or lockouts.

- Reputation portability and norm alignment across domains

- Sectors: cross-domain AI ecosystems, education-to-workforce bridges

- What it could enable: Agents carry reputational narratives (not just scores) across tasks/sectors, enabling richer, context-aware trust judgments.

- Tools/products/workflows:

- Schema for domain-tagged, context-rich gossip; adapters that translate norms across domains.

- Assumptions/dependencies:

- Avoiding discriminatory or stale signal propagation; consent and data minimization.

- Policy frameworks for agentic ecosystems

- Sectors: public policy, standards bodies

- What it could enable: Guidance on transparency for gossip logs, record-keeping, auditability, minimum viable identity, and redress; regulatory sandboxes to test decentralized cooperation mechanisms.

- Tools/products/workflows:

- Certification programs; audit toolkits for evaluative language logs; compliance APIs.

- Assumptions/dependencies:

- Clear liability boundaries; harmonization with privacy and consumer-protection laws.
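The "verifiable logs" idea mentioned above can be approximated with a hash chain, in which every entry commits to the one before it. The sketch below uses only Python's standard `hashlib` and `json` modules. It is an illustration under stated assumptions, not a design from the paper: the entry layout and the names `append`, `verify`, and `GENESIS` are hypothetical, and a hash chain alone gives tamper evidence only. It does not prove who wrote a message (that would need digital signatures), and the latest hash must be anchored somewhere trusted to detect a full rewrite.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, payload):
    """SHA-256 over the previous hash plus a canonical JSON form of the payload."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append(chain, payload):
    """Append a gossip payload (a JSON-serializable dict) to the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"prev": prev_hash, "payload": payload,
                  "hash": _digest(prev_hash, payload)})


def verify(chain):
    """Return True if no entry has been altered, removed from the middle, or reordered."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


chain = []
append(chain, {"author": "agent_b", "target": "agent_a", "tone": 2})
append(chain, {"author": "agent_c", "target": "agent_d", "tone": -2})
print(verify(chain))

chain[1]["payload"]["tone"] = 2  # someone quietly rewrites a criticism as praise
print(verify(chain))
```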

Notes on feasibility assumptions common across applications

- Repeated interaction and persistence: ALIGN depends on agents expecting future encounters (high effective discount factor), otherwise incentives to cooperate weaken.

- Identity and Sybil resistance: Decentralized gossip requires persistent, verifiable identities to prevent manipulation.

- Noise and truthfulness: The framework tolerates noisy and even untruthful reports by cross-validating multiple witnesses and relying on private experience; extreme adversarial settings may still require moderation and cryptographic attestations.

- Model capabilities: Stronger reasoning models align incentives more reliably; chat-focused models may over-cooperate and require additional policy constraints.

- Governance and ethics: Appeals, transparency, non-discrimination, and privacy-by-design are necessary when evaluative language is used to gate opportunities or access.

- Cost and latency: Maintaining shared logs, reflection steps, and reasoning inference adds compute/latency overhead; engineering optimizations or batching may be required.
